Update README.md

README.md

CHANGED

@@ -13,34 +13,46 @@ pipeline_tag: image-text-to-text

-

[**\[🤗 HF Demo\]**](https://huggingface.co/spaces/khang119966/Vintern-v2-Demo)

-

| Vintern-1B-v2 | [InternViT-300M-448px](https://huggingface.co/OpenGVLab/InternViT-300M-448px) | [Qwen2-0.5B-Instruct](https://huggingface.co/Qwen/Qwen2-0.5B-Instruct) |

-

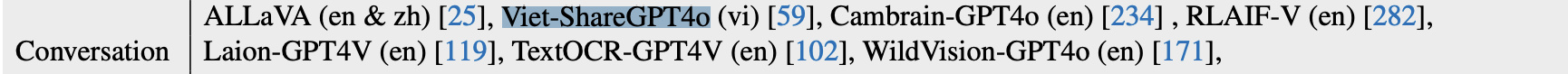

The fine-tuning dataset was meticulously sampled in part from the following datasets:

-

[Viet-OCR-VQA 📚](https://huggingface.co/datasets/5CD-AI/Viet-OCR-VQA), [Viet-Doc-VQA 📄](https://huggingface.co/datasets/5CD-AI/Viet-Doc-VQA), [Viet-Doc-VQA-II 📑](https://huggingface.co/datasets/5CD-AI/Viet-Doc-VQA-II), [Vista 🖼️](https://huggingface.co/datasets/Vi-VLM/Vista), [Viet-Receipt-VQA 🧾](https://huggingface.co/datasets/5CD-AI/Viet-Receipt-VQA), [Viet-Sketches-VQA ✏️](https://huggingface.co/datasets/5CD-AI/Viet-Sketches-VQA), [Viet-Geometry-VQA 📐](https://huggingface.co/datasets/5CD-AI/Viet-Geometry-VQA), [Viet-Wiki-Handwriting ✍️](https://huggingface.co/datasets/5CD-AI/Viet-Wiki-Handwriting), [Viet-ComputerScience-VQA 💻](https://huggingface.co/datasets/5CD-AI/Viet-ComputerScience-VQA), [Viet-Handwriting-gemini-VQA 🖋️](https://huggingface.co/datasets/5CD-AI/Viet-Handwriting-gemini-VQA), [Viet-Menu-gemini-VQA 🍽️](https://huggingface.co/datasets/5CD-AI/Viet-Menu-gemini-VQA), [Viet-Vintext-gemini-VQA 📜](https://huggingface.co/datasets/5CD-AI/Viet-Vintext-gemini-VQA), [Viet-OpenViVQA-gemini-VQA 🧠](https://huggingface.co/datasets/5CD-AI/Viet-OpenViVQA-gemini-VQA), [Viet-Resume-VQA 📃](https://huggingface.co/datasets/5CD-AI/Viet-Resume-VQA), [Viet-ViTextVQA-gemini-VQA 📑](https://huggingface.co/datasets/5CD-AI/Viet-ViTextVQA-gemini-VQA)

## Benchmarks 📈

## Examples

+

# Vintern-1B-v3.5 ❄️ (Viet-InternVL2-1B-v3.5) - The Ultimate Multimodal Solution 🌏

+

We are thrilled to announce **Vintern-1B-v3.5**, the latest version in the Vintern series, offering significant improvements over v2 across all evaluation benchmarks. This model has been fine-tuned from **InternVL-1B-2.5**, which is already good at Vietnamese tasks because the InternVL 2.5 [1] team used [Viet-ShareGPT-4o-Text-VQA](https://huggingface.co/datasets/5CD-AI/Viet-ShareGPT-4o-Text-VQA) data during its fine-tuning process.

+

To further enhance its performance in Vietnamese while maintaining robust capabilities on existing English datasets, **Vintern-1B-v3.5** has been fine-tuned on a large amount of Vietnamese-specific data. The result is a model that is particularly strong at text recognition, OCR, and understanding Vietnam-specific documents.

+

Key Features 🌟

+

- Top Quality for Vietnamese Texts

+

Vintern-1B-v3.5 is one of the best models in its class (1B parameters) for understanding and processing Vietnamese documents.

+

- Better Extraction and Understanding

+

The model is great at handling invoices, legal texts, handwriting, and tables.

+

- Runs on Affordable Hardware

+

You can run the model on Google Colab with a T4 GPU, making it easy to use without expensive devices.

+

- Easy to Fine-tune

+

The model can be customized for specific tasks with minimal effort.
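The claim about affordable hardware can be sanity-checked with a rough estimate. The sketch below is not a measurement: it takes the "1B parameters" figure at face value and assumes a T4 with 16 GB of memory, then computes how much space the weights alone would need. Activations, image tiles, and generation caches add overhead on top of this.

```python
# Back-of-the-envelope weight-memory estimate (not a measurement).
NOMINAL_PARAMS = 1_000_000_000  # "1B" taken at face value; the exact count is an assumption
T4_MEMORY_GB = 16               # assumed T4 memory; Colab typically exposes slightly less


def weight_memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Return the memory needed to hold the weights alone, in GB (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


fp16_gb = weight_memory_gb(NOMINAL_PARAMS, 2)  # half precision: 2 bytes per weight
fp32_gb = weight_memory_gb(NOMINAL_PARAMS, 4)  # full precision: 4 bytes per weight

print(f"fp16 weights: {fp16_gb:.1f} GB, fp32 weights: {fp32_gb:.1f} GB")
print(f"fits in fp16 with headroom: {fp16_gb < T4_MEMORY_GB}")
```

Under these assumptions the weights take only a small fraction of the card's memory in either precision, which is consistent with the statement that a T4 is sufficient.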

## Benchmarks 📈

+

| Benchmark     | InternVL2_5 1B | Vintern-1B-v2 | Vintern-1B-v3.5 |
|:-------------:|:--------------:|:-------------:|:---------------:|
| vi-MTVQA      | 24.8           | 37.4          | 41.9            |
| DocVQAtest    | 84.8           | 72.5          | 78.8            |
| MMMUval       | 40.9           | 31.3          | 32.4            |
| InfoVQAtest   | 56.0           | 38.9          | 46.4            |
| TextVQAval    | 72.0           | 64.0          | 68.2            |
| ChartQAtest   | 75.9           | 34.1          | 60.0            |
| OCRBench      | 785            | 628           | 706             |
| MMEsum        | 1950           | 1185          | 1346            |
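To make the table easier to read, the short sketch below computes the gain of v3.5 over v2 on each benchmark and lists the benchmarks where v3.5 also exceeds the InternVL2_5 1B base. The scores are copied from the table; the helper names `gains_over_v2` and `beats_base` are illustrative and not part of any released tooling.

```python
# Scores copied from the benchmark table:
# (InternVL2_5 1B, Vintern-1B-v2, Vintern-1B-v3.5)
SCORES = {
    "vi-MTVQA": (24.8, 37.4, 41.9),
    "DocVQAtest": (84.8, 72.5, 78.8),
    "MMMUval": (40.9, 31.3, 32.4),
    "InfoVQAtest": (56.0, 38.9, 46.4),
    "TextVQAval": (72.0, 64.0, 68.2),
    "ChartQAtest": (75.9, 34.1, 60.0),
    "OCRBench": (785, 628, 706),
    "MMEsum": (1950, 1185, 1346),
}


def gains_over_v2(scores):
    """Absolute score difference between v3.5 and v2 for each benchmark."""
    return {name: round(v35 - v2, 1) for name, (_, v2, v35) in scores.items()}


def beats_base(scores):
    """Benchmarks on which v3.5 scores higher than the InternVL2_5 1B base."""
    return [name for name, (base, _, v35) in scores.items() if v35 > base]


gains = gains_over_v2(SCORES)
for name, gain in gains.items():
    print(f"{name}: +{gain}")
print("v3.5 above base on:", beats_base(SCORES))
```

Read this way, the table shows v3.5 ahead of v2 on every row, with the largest jump on ChartQAtest, while the base model remains ahead on every row except the Vietnamese vi-MTVQA benchmark.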

## Examples
