Upload weights, notebooks, sample images

README.md (changed)

Previous version:

license: mit
tags:
- image-to-image
- reflection-removal
- highlight-removal
- computer-vision
- dinov3
- surgical-imaging
---

# UnReflectAnything: RGB-Only Highlight Removal

[arXiv](https://arxiv.org/abs/2512.09583)

---

## Available Weights

The following checkpoints are provided for different integration needs:

| Checkpoint | Description |
| :--- | :--- |
| **`fullmodel_896.pt`** | Full model checkpoint; recommended for standard inference. |
| `decoder_896.pth` | Decoder weights optimized for 896px resolution. |
| `token_inpainter.pth` | Weights for the specialized token inpainter module. |
| `rgb_decoder.pth` | Standard RGB decoder weights. |
| `rgb_film_decoder.pth` | RGB FiLM (Feature-wise Linear Modulation) decoder weights. |

---

## Installation

### 1. Install via pip

```bash
pip install unreflectanything
```

### 2. Download Weights

```bash
# The aliases 'ura' and 'unreflect' also work
unreflectanything download --weights
```

```python
import unreflectanything
import torch

unreflect_model = unreflectanything.model(pretrained=True)

# Simple file-based inference
unreflectanything.inference("input_with_highlights.png", output="diffuse_result.png")
```

* **Verification**: `ura verify --dataset /path/to/dataset`

If you use UnReflectAnything in your research or pipeline, please cite our paper:

```
@misc{rota2025unreflectanythingrgbonlyhighlightremoval,
      title={UnReflectAnything: RGB-Only Highlight Removal by Rendering Synthetic Specular Supervision},
      author={Alberto Rota and Mert Kiray and Mert Asim Karaoglu and Patrick Ruhkamp and Elena De Momi and Nassir Navab and Benjamin Busam},
      eprint={2512.09583},
      archivePrefix={arXiv},
      primaryClass={cs.CV},
      url={
}
```

---

**License**: This project is licensed under the [MIT License](https://mit-license.org/).

New version:
# UnReflectAnything

[Project Page](https://alberto-rota.github.io/UnReflectAnything/)
[arXiv](https://arxiv.org/abs/2512.09583)
[HuggingFace Space](https://huggingface.co/spaces/AlbeRota/UnReflectAnything)
[HuggingFace Model](https://huggingface.co/AlbeRota/UnReflectAnything)
[Wiki](https://github.com/alberto-rota/UnReflectAnything/wiki)
[License: MIT](https://mit-license.org/)

### RGB-Only Highlight Removal by Rendering Synthetic Specular Supervision


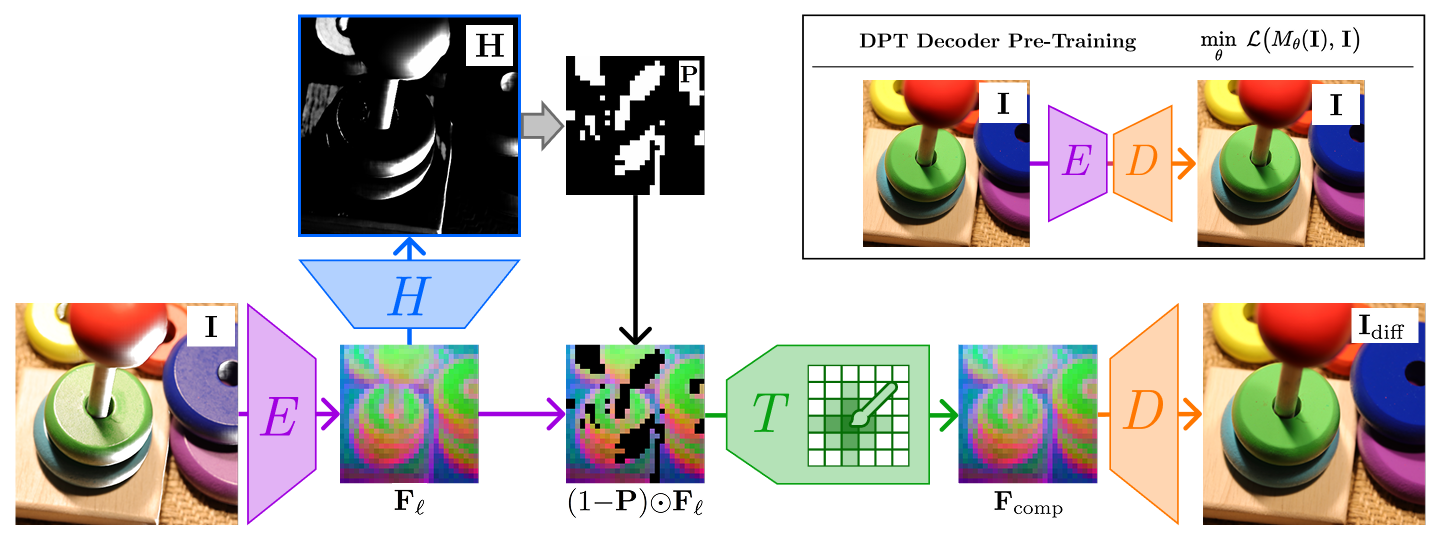

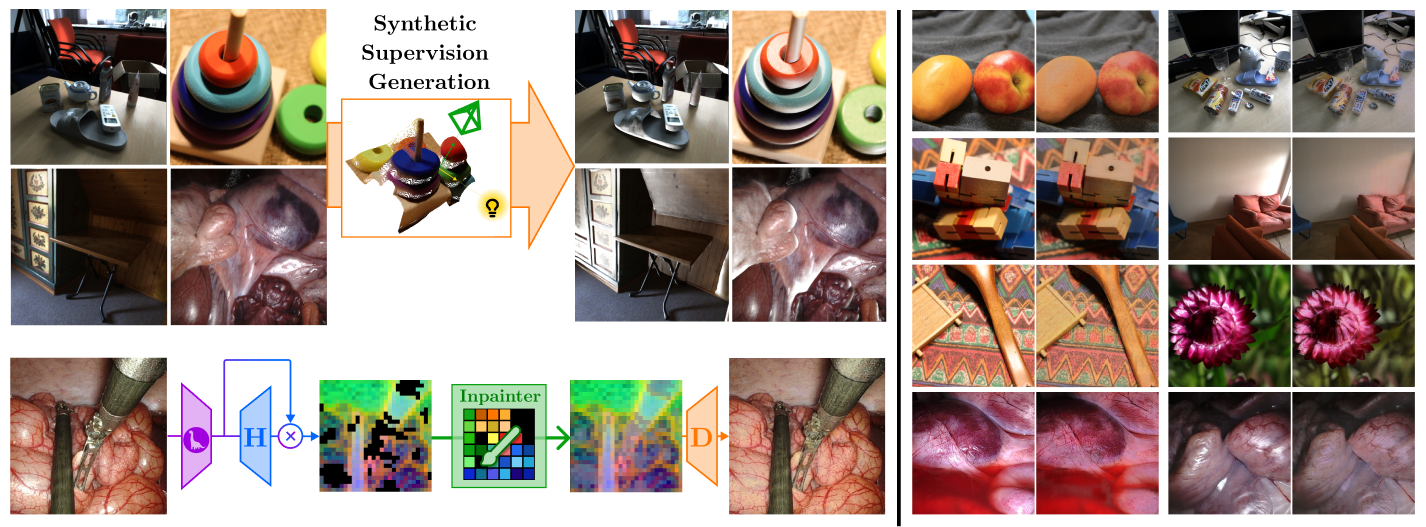

UnReflectAnything takes any RGB image as input and removes specular highlights, returning a clean, diffuse-only output. We trained UnReflectAnything by synthesizing specularities and supervising in DINOv3 feature space.

UnReflectAnything works on both natural indoor images and surgical/endoscopic data.

---

## Installation

Install UnReflectAnything as a Python package:

```bash
pip install unreflectanything
```

The minimum required Python version is 3.11, but development and all experiments were done with **Python 3.12**.

For GPU support, make sure your PyTorch installation is built with a CUDA version that matches your system (see [PyTorch Get Started](https://pytorch.org/get-started/locally/)).
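Both requirements above can be checked from the interpreter you plan to use. The following is a minimal sketch that uses only the standard library and, if it is installed, PyTorch's `torch.cuda.is_available()` and `torch.version.cuda`. The helper `check_environment` is hypothetical and not part of the `unreflectanything` package.

```python
import sys


def check_environment(min_python=(3, 11)):
    """Hypothetical helper: report Python / PyTorch / CUDA readiness as a dict."""
    info = {
        "python": ".".join(str(v) for v in sys.version_info[:3]),
        # The README states 3.11 as the minimum supported Python version.
        "python_ok": sys.version_info[:2] >= tuple(min_python),
        "torch_installed": False,
        "torch_cuda_build": None,  # CUDA version PyTorch was built against, if any
        "cuda_available": False,   # whether a CUDA device is usable right now
    }
    try:
        import torch
    except ImportError:
        # PyTorch is not installed: report what we know so far.
        return info
    info["torch_installed"] = True
    info["torch_cuda_build"] = torch.version.cuda  # None for CPU-only builds
    info["cuda_available"] = torch.cuda.is_available()
    return info


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```

`torch_cuda_build` is `None` for CPU-only PyTorch builds; in that case, reinstall PyTorch using the selector on the PyTorch Get Started page.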

## Setting up

After installing with pip, you can use the `unreflectanything` CLI command, which is also aliased to `unreflect` and `ura`; all three commands are equivalent.

With the CLI, you can download the model weights with

```bash
unreflectanything download --weights
```


and some sample images with

```bash
unreflectanything download --images
```

Weights are stored by default in `~/.cache/unreflectanything/weights` (or `$XDG_CACHE_HOME/unreflectanything/weights` if that variable is set, and `%LOCALAPPDATA%\unreflectanything` on Windows). Use `--output-dir` to choose another location.
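When scripting around the CLI (for example, to check whether `ura download --weights` has already been run), it can help to resolve the same default location in Python. The sketch below encodes the lookup order described above; it is an assumption based on this README's prose, not the package's own code, and `default_weights_dir` is a hypothetical name. If you downloaded with `--output-dir`, use that path instead.

```python
import os
import sys
from pathlib import Path


def default_weights_dir(env=None, platform=None):
    """Return the default weights directory as described in the README prose.

    Assumed precedence (a reading of the text, not the package's source):
      * Windows with LOCALAPPDATA set -> <LOCALAPPDATA>/unreflectanything
      * XDG_CACHE_HOME set and non-empty -> <XDG_CACHE_HOME>/unreflectanything/weights
      * otherwise -> ~/.cache/unreflectanything/weights
    """
    env = os.environ if env is None else env
    platform = sys.platform if platform is None else platform

    if platform.startswith("win") and env.get("LOCALAPPDATA"):
        return Path(env["LOCALAPPDATA"]) / "unreflectanything"

    # An empty XDG_CACHE_HOME is treated as unset.
    xdg_cache = env.get("XDG_CACHE_HOME")
    base = Path(xdg_cache) if xdg_cache else Path.home() / ".cache"
    return base / "unreflectanything" / "weights"


if __name__ == "__main__":
    weights_dir = default_weights_dir()
    print(weights_dir, "exists" if weights_dir.is_dir() else "missing")
```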


Both the weights and images are stored on the [HuggingFace Model Repo](https://huggingface.co/spaces/AlbeRota/UnReflectAnything).


## Enable shell completion


Shell completion is available for the `bash` and `zsh` shells. Run

```bash
unreflectanything completion bash
```

and execute the printed `echo ...` command.


## Command Line Interface


Get an overview of the available CLI endpoints with

```bash
unreflectanything --help  # equivalently: unreflect --help, ura --help
```

Refer to the [Wiki](https://github.com/alberto-rota/UnReflectAnything/wiki) for detailed documentation of each endpoint. The available subcommands are summarized below; remember that `ura` is an alias of the `unreflectanything` command.

| Subcommand | Description | Command |
|------------|-------------|---------|
| `inference` | Run inference on an image directory | `ura inference --input /path/to/images --output /path/to/unref_images` |
| `train` | Run training | `ura train --config config_train.yaml` |
| `test` | Run evaluation on a trained model | `ura test --config config_test.yaml` |
| `download` | Download checkpoint weights, sample images, notebooks | `ura download --weights` |
| `verify` | Verify the weights installation and compatibility, as well as the dataset directory structure | `ura verify --dataset /path/to/dataset` |
| `evaluate` | Compute metrics on output data | `ura evaluate --output /path/to/unref_images --gt /path/to/groundtruth_images/` |
| `completion` | Print shell completion setup (bash/zsh) | `ura completion bash` |
| `cite` | Print the citation entry for the paper | `ura cite --bibtex` |
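Once `ura` is on your `PATH`, the `inference` row above can also be driven from a Python script, for example as one step of a larger pipeline. The sketch below shells out with the standard-library `subprocess` module and uses only the `--input` and `--output` flags shown in the table; the helper names `inference_command` and `run_inference_cli` are hypothetical. For in-process use, the Python API is the more direct route.

```python
import shutil
import subprocess


def inference_command(input_dir, output_dir, executable="ura"):
    """Build the argv for `ura inference`, using only the flags from the table."""
    return [executable, "inference", "--input", str(input_dir), "--output", str(output_dir)]


def run_inference_cli(input_dir, output_dir, executable="ura"):
    """Run the CLI; raises CalledProcessError if it exits with a non-zero status."""
    if shutil.which(executable) is None:
        raise FileNotFoundError(f"'{executable}' not found on PATH; is unreflectanything installed?")
    cmd = inference_command(input_dir, output_dir, executable)
    # Passing a list (no shell=True) avoids quoting problems with spaces in paths.
    subprocess.run(cmd, check=True)
```

Any of the three aliases can be passed as `executable`, since they are equivalent.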


## Python API

The endpoints above are also exposed as a Python API. Refer to the [Wiki](https://github.com/alberto-rota/UnReflectAnything/wiki) for detailed documentation of each endpoint. A few examples follow:

```python
import torch
import unreflectanything as ura

device = "cuda" if torch.cuda.is_available() else "cpu"

# Get the model class (e.g. for custom setup or training)
ModelClass = ura.model()

# Get a pretrained model (torch.nn.Module) and run it on a batch of RGB images
uramodel = ura.model(pretrained=True)  # uses cached weights; run 'ura download --weights' first
uramodel = uramodel.to(device).eval()
images = torch.rand(2, 3, 448, 448, device=device)  # [B, 3, H, W], values in [0, 1]
with torch.no_grad():
    model_out = uramodel(images)  # [B, 3, H, W] diffuse tensor

# File-based or tensor-based inference (one-shot, no model handle)
ura.inference("input.png", output="output.png")
result = ura.inference(images)  # tensor input returns a tensor

# Run training or testing
ura.run_pipeline(mode="train")  # or mode="test"

# Run inference from options
options = ura.InferenceOptions(
    weights_path="path/to/full_model_weights.pt",
    input_dir="path/to/input/images",
    output_dir="path/to/output/diffuse",
)
ura.run_inference(options)
```
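The pretrained model expects a float tensor of shape `[B, 3, H, W]` with values in `[0, 1]`, whereas image libraries usually return `H x W x 3` `uint8` arrays. A minimal conversion sketch with NumPy follows, assuming RGB channel order; the helper names `to_model_input` and `to_uint8_image` are hypothetical and not part of `unreflectanything`.

```python
import numpy as np


def to_model_input(image_hwc):
    """HxWx3 uint8 RGB array -> float32 array of shape [1, 3, H, W] in [0, 1]."""
    image = np.asarray(image_hwc)
    if image.ndim != 3 or image.shape[2] != 3:
        raise ValueError(f"expected an HxWx3 RGB array, got shape {image.shape}")
    # Move channels first (HWC -> CHW) and rescale 0..255 to 0..1.
    chw = image.astype(np.float32).transpose(2, 0, 1) / 255.0
    return chw[np.newaxis]  # add the batch dimension, B = 1


def to_uint8_image(batch_bchw, index=0):
    """[B, 3, H, W] float array in [0, 1] -> HxWx3 uint8 RGB array for one sample."""
    chw = np.clip(np.asarray(batch_bchw)[index], 0.0, 1.0)
    # Channels last again, then round back to 8-bit integers.
    return np.round(chw.transpose(1, 2, 0) * 255.0).astype(np.uint8)
```

For example, `torch.from_numpy(to_model_input(rgb)).to("cuda")` produces a batch that can be passed to `uramodel`, and `to_uint8_image(model_out.detach().cpu().numpy())` turns the first diffuse output back into an image array.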

## Citation

If you use UnReflectAnything in your pipeline or research, please cite our work.

Get the citation entry with

```bash
unreflectanything cite --bibtex
```

or copy it from below:

```bibtex
@misc{rota2025unreflectanythingrgbonlyhighlightremoval,
      title={UnReflectAnything: RGB-Only Highlight Removal by Rendering Synthetic Specular Supervision},
      author={Alberto Rota and Mert Kiray and Mert Asim Karaoglu and Patrick Ruhkamp and Elena De Momi and Nassir Navab and Benjamin Busam},
      year={2025},
      eprint={2512.09583},
      archivePrefix={arXiv},
      primaryClass={cs.CV},
      url={https://arxiv.org/abs/2512.09583},
}
```
