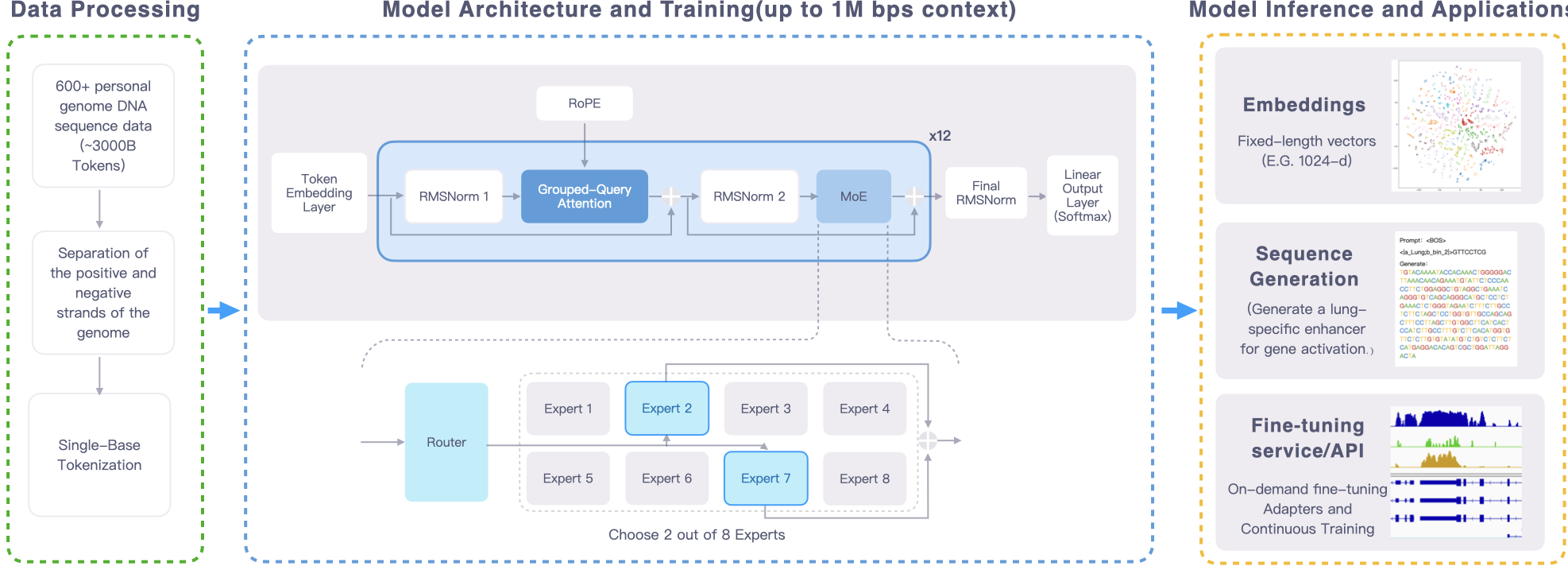

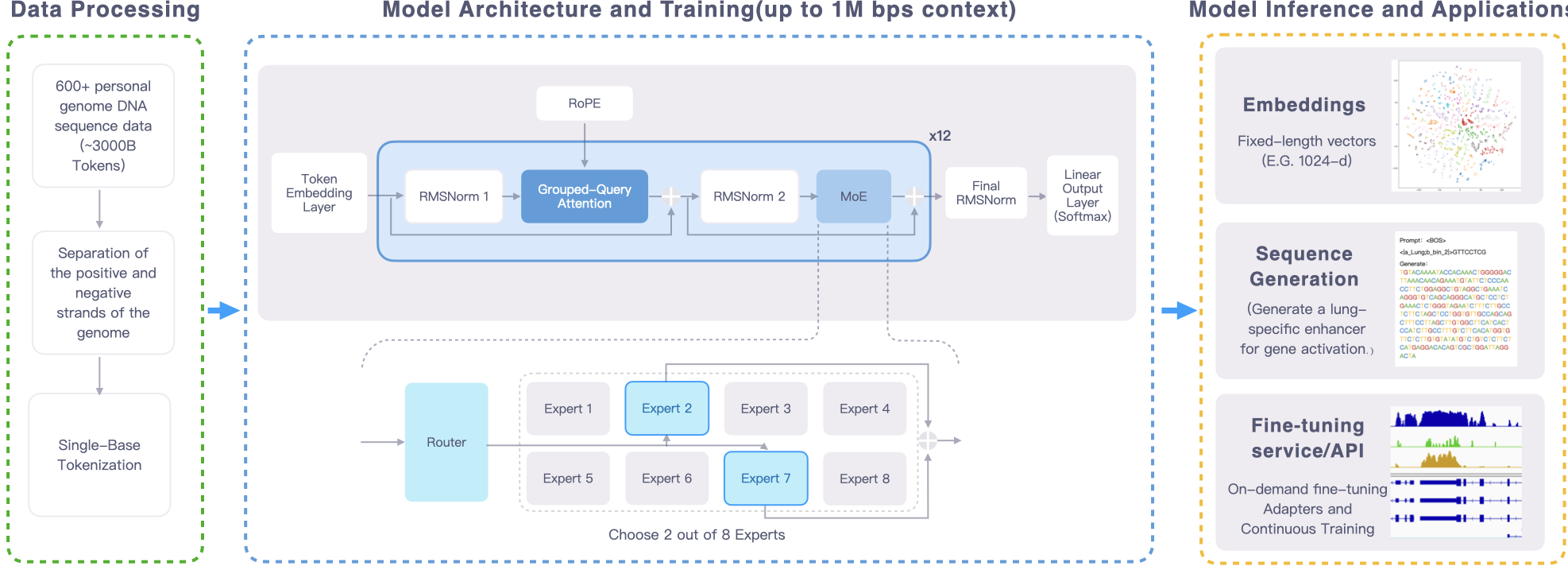

| Model Specification | Genos 1.2B | Genos 10B |
| --- | --- | --- |
| **Model Scale** | | |
| Total Parameters | 1.2B | 10B |
| Activated Parameters | 0.33B | 2.87B |
| Trained Tokens | 1600B | 2200B |
| **Architecture** | | |
| Architecture Type | MoE | MoE |
| Number of Experts | 8 | 8 |
| Selected Experts per Token | 2 | 2 |
| Number of Layers | 12 | 12 |
| Attention Hidden Dimension | 1024 | 4096 |
| Number of Attention Heads | 16 | 16 |
| MoE Hidden Dimension (per Expert) | 4096 | 8192 |
| Vocabulary Size | 128 (padded) | 256 (padded) |
| Context Length | up to 1M | up to 1M |
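
The gap between total and activated parameters follows from the MoE design: each token is routed to 2 of the 8 experts, so only a fraction of the expert weights take part in any one forward step. The sketch below illustrates top-2 routing in plain Python. It is a generic illustration, not the Genos router: the function name `top_k_route`, the example logits, and the choice to renormalize with a softmax over only the selected experts are all assumptions made for this example.

```python
import math

NUM_EXPERTS = 8  # "Number of Experts" in the table above
TOP_K = 2        # "Selected Experts per Token" in the table above


def top_k_route(router_logits, k=TOP_K):
    """Pick the k highest-scoring experts and return (expert_index, weight) pairs.

    Hypothetical helper: weights are a softmax over the selected logits only,
    which is one common convention and not necessarily what Genos uses.
    """
    # Indices of the k largest router logits, highest first.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i],
                 reverse=True)[:k]
    # Numerically stable softmax restricted to the selected experts.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Made-up router scores for a single token, one score per expert.
logits = [0.1, 2.0, -1.0, 0.5, 1.0, 0.0, -0.3, 1.2]
routes = top_k_route(logits)

for expert, weight in routes:
    print(f"expert {expert}: weight {weight:.3f}")

# Share of experts that run for this token.
print(f"active experts: {len(routes)}/{NUM_EXPERTS}")
```

Only the selected experts' feed-forward weights are evaluated for a token, while attention and embedding weights are always used. This is why the activated parameter count is a fraction of the total.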

Genos 1.2B and 10B checkpoints are available here:

- [Genos-1.2B](https://huggingface.co/BGI-HangzhouAI/Genos-1.2B)

- [Genos-10B](https://huggingface.co/BGI-HangzhouAI/Genos-10B)

We also provide checkpoints trained under the [Megatron-LM](https://github.com/NVIDIA/Megatron-LM) framework:

- [Genos-Megatron-1.2B](https://huggingface.co/BGI-HangzhouAI/Genos-Megatron-1.2B)

- [Genos-Megatron-10B](https://huggingface.co/BGI-HangzhouAI/Genos-Megatron-10B)