Image-to-Image

Diffusers

Safetensors

StableDiffusionPipeline

controlnet

remote-sensing

openstreetmap

Instructions to use BiliSakura/controlearth with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Diffusers

How to use BiliSakura/controlearth with Diffusers:

pip install -U diffusers transformers accelerate

from diffusers import ControlNetModel, StableDiffusionControlNetPipeline controlnet = ControlNetModel.from_pretrained("BiliSakura/controlearth") pipe = StableDiffusionControlNetPipeline.from_pretrained( "fill-in-base-model", controlnet=controlnet ) - Notebooks

- Google Colab

- Kaggle

Add files using upload-large-folder tool

Browse files- README.md +51 -0

- controlnet/config.json +52 -0

- controlnet/diffusion_pytorch_model.safetensors +3 -0

- feature_extractor/preprocessor_config.json +20 -0

- inference_demo.py +78 -0

- model_index.json +28 -0

- scheduler/scheduler_config.json +13 -0

- text_encoder/config.json +25 -0

- text_encoder/model.safetensors +3 -0

- text_encoder/pytorch_model.fp16.bin +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +24 -0

- tokenizer/tokenizer_config.json +34 -0

- tokenizer/vocab.json +0 -0

- unet/config.json +36 -0

- unet/diffusion_pytorch_model.bin +3 -0

- unet/diffusion_pytorch_model.fp16.bin +3 -0

- unet/diffusion_pytorch_model.non_ema.safetensors +3 -0

- unet/diffusion_pytorch_model.safetensors +3 -0

- vae/config.json +29 -0

- vae/diffusion_pytorch_model.bin +3 -0

- vae/diffusion_pytorch_model.fp16.bin +3 -0

- vae/diffusion_pytorch_model.fp16.safetensors +3 -0

- vae/diffusion_pytorch_model.safetensors +3 -0

README.md

ADDED

|

@@ -0,0 +1,51 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

---

|

| 4 |

+

# ControlEarth

|

| 5 |

+

|

| 6 |

+

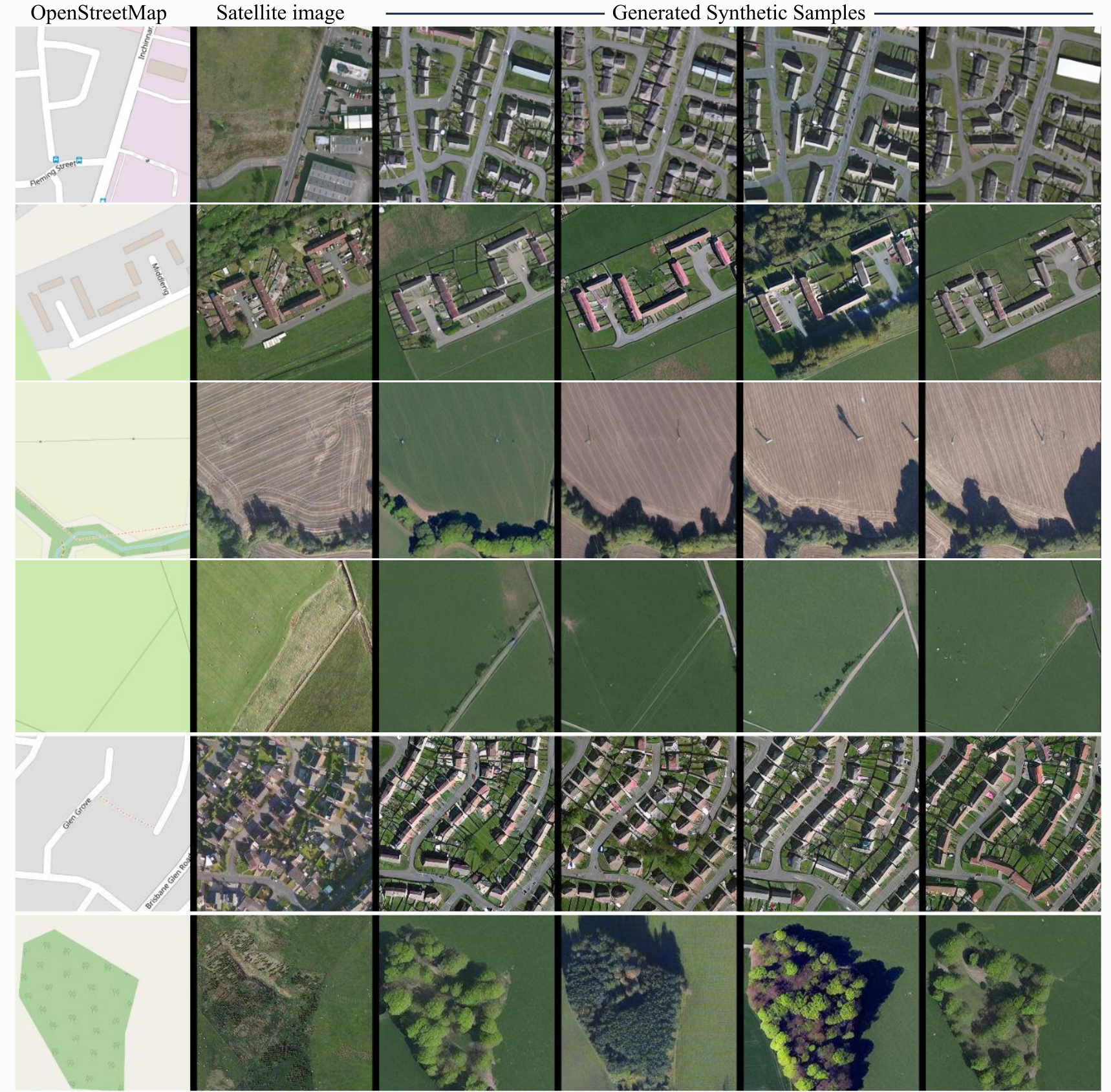

ControlNet model conditioned on OpenStreetMaps (OSM) to generate the corresponding satellite images.

|

| 7 |

+

|

| 8 |

+

Trained on the region of the Central Belt.

|

| 9 |

+

|

| 10 |

+

## Repo structure

|

| 11 |

+

|

| 12 |

+

This repo is self-contained and includes:

|

| 13 |

+

|

| 14 |

+

- **controlnet/** — ControlNet weights (OSM → satellite)

|

| 15 |

+

- **text_encoder/**, **unet/**, **vae/**, **scheduler/**, **tokenizer/** — Stable Diffusion v1-5 base

|

| 16 |

+

- **demo_images/** — Placeholder for input OSM images

|

| 17 |

+

- **inference_demo.py** — Full diffusers inference script (uses only this repo, no external downloads)

|

| 18 |

+

|

| 19 |

+

## Dataset used for training

|

| 20 |

+

|

| 21 |

+

The dataset used for the training procedure is the

|

| 22 |

+

[WorldImagery Clarity dataset](https://www.arcgis.com/home/item.html?id=ab399b847323487dba26809bf11ea91a).

|

| 23 |

+

|

| 24 |

+

The code for the dataset construction can be accessed in https://github.com/miquel-espinosa/map-sat.

|

| 25 |

+

|

| 26 |

+

## Usage

|

| 27 |

+

|

| 28 |

+

```bash

|

| 29 |

+

# From the repo root

|

| 30 |

+

python inference_demo.py

|

| 31 |

+

```

|

| 32 |

+

|

| 33 |

+

Or load programmatically:

|

| 34 |

+

|

| 35 |

+

```python

|

| 36 |

+

from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, UniPCMultistepScheduler

|

| 37 |

+

import torch

|

| 38 |

+

|

| 39 |

+

repo = "/path/to/controlearth" # or "." when run from repo root

|

| 40 |

+

controlnet = ControlNetModel.from_pretrained(f"{repo}/controlnet", torch_dtype=torch.float16)

|

| 41 |

+

pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

| 42 |

+

repo, controlnet=controlnet, torch_dtype=torch.float16,

|

| 43 |

+

safety_checker=None, requires_safety_checker=False

|

| 44 |

+

)

|

| 45 |

+

pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

|

| 46 |

+

pipe.enable_model_cpu_offload()

|

| 47 |

+

|

| 48 |

+

image = pipe("convert this openstreetmap into its satellite view", num_inference_steps=50, image=control_image).images[0]

|

| 49 |

+

```

|

| 50 |

+

|

| 51 |

+

|

controlnet/config.json

ADDED

|

@@ -0,0 +1,52 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "ControlNetModel",

|

| 3 |

+

"_diffusers_version": "0.37.0",

|

| 4 |

+

"_name_or_path": "/root/worksapce/models/mespinosami/controlearth",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"addition_embed_type": null,

|

| 7 |

+

"addition_embed_type_num_heads": 64,

|

| 8 |

+

"addition_time_embed_dim": null,

|

| 9 |

+

"attention_head_dim": 8,

|

| 10 |

+

"block_out_channels": [

|

| 11 |

+

320,

|

| 12 |

+

640,

|

| 13 |

+

1280,

|

| 14 |

+

1280

|

| 15 |

+

],

|

| 16 |

+

"class_embed_type": null,

|

| 17 |

+

"conditioning_channels": 3,

|

| 18 |

+

"conditioning_embedding_out_channels": [

|

| 19 |

+

16,

|

| 20 |

+

32,

|

| 21 |

+

96,

|

| 22 |

+

256

|

| 23 |

+

],

|

| 24 |

+

"controlnet_conditioning_channel_order": "rgb",

|

| 25 |

+

"cross_attention_dim": 768,

|

| 26 |

+

"down_block_types": [

|

| 27 |

+

"CrossAttnDownBlock2D",

|

| 28 |

+

"CrossAttnDownBlock2D",

|

| 29 |

+

"CrossAttnDownBlock2D",

|

| 30 |

+

"DownBlock2D"

|

| 31 |

+

],

|

| 32 |

+

"downsample_padding": 1,

|

| 33 |

+

"encoder_hid_dim": null,

|

| 34 |

+

"encoder_hid_dim_type": null,

|

| 35 |

+

"flip_sin_to_cos": true,

|

| 36 |

+

"freq_shift": 0,

|

| 37 |

+

"global_pool_conditions": false,

|

| 38 |

+

"in_channels": 4,

|

| 39 |

+

"layers_per_block": 2,

|

| 40 |

+

"mid_block_scale_factor": 1,

|

| 41 |

+

"mid_block_type": "UNetMidBlock2DCrossAttn",

|

| 42 |

+

"norm_eps": 1e-05,

|

| 43 |

+

"norm_num_groups": 32,

|

| 44 |

+

"num_attention_heads": null,

|

| 45 |

+

"num_class_embeds": null,

|

| 46 |

+

"only_cross_attention": false,

|

| 47 |

+

"projection_class_embeddings_input_dim": null,

|

| 48 |

+

"resnet_time_scale_shift": "default",

|

| 49 |

+

"transformer_layers_per_block": 1,

|

| 50 |

+

"upcast_attention": false,

|

| 51 |

+

"use_linear_projection": false

|

| 52 |

+

}

|

controlnet/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a0bb55bcbb4dc2e279a0b0c61051e992769acb3748874efd389c5da1a41cabda

|

| 3 |

+

size 1445157120

|

feature_extractor/preprocessor_config.json

ADDED

|

@@ -0,0 +1,20 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"crop_size": 224,

|

| 3 |

+

"do_center_crop": true,

|

| 4 |

+

"do_convert_rgb": true,

|

| 5 |

+

"do_normalize": true,

|

| 6 |

+

"do_resize": true,

|

| 7 |

+

"feature_extractor_type": "CLIPFeatureExtractor",

|

| 8 |

+

"image_mean": [

|

| 9 |

+

0.48145466,

|

| 10 |

+

0.4578275,

|

| 11 |

+

0.40821073

|

| 12 |

+

],

|

| 13 |

+

"image_std": [

|

| 14 |

+

0.26862954,

|

| 15 |

+

0.26130258,

|

| 16 |

+

0.27577711

|

| 17 |

+

],

|

| 18 |

+

"resample": 3,

|

| 19 |

+

"size": 224

|

| 20 |

+

}

|

inference_demo.py

ADDED

|

@@ -0,0 +1,78 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

#!/usr/bin/env python3

|

| 2 |

+

"""

|

| 3 |

+

Full diffusers-style inference demo for ControlEarth.

|

| 4 |

+

Uses only this repo's checkpoints (base + controlnet), no external downloads.

|

| 5 |

+

"""

|

| 6 |

+

from pathlib import Path

|

| 7 |

+

|

| 8 |

+

import torch

|

| 9 |

+

from diffusers import (

|

| 10 |

+

ControlNetModel,

|

| 11 |

+

StableDiffusionControlNetPipeline,

|

| 12 |

+

UniPCMultistepScheduler,

|

| 13 |

+

)

|

| 14 |

+

from PIL import Image

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

def main():

|

| 18 |

+

repo = Path(__file__).resolve().parent

|

| 19 |

+

controlnet_path = repo / "controlnet"

|

| 20 |

+

demo_images = repo / "demo_images"

|

| 21 |

+

out_dir = repo / "outputs"

|

| 22 |

+

out_dir.mkdir(exist_ok=True)

|

| 23 |

+

|

| 24 |

+

print("Loading ControlNet...")

|

| 25 |

+

controlnet = ControlNetModel.from_pretrained(

|

| 26 |

+

str(controlnet_path), torch_dtype=torch.float16

|

| 27 |

+

)

|

| 28 |

+

|

| 29 |

+

print("Loading pipeline (base + controlnet)...")

|

| 30 |

+

pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

| 31 |

+

str(repo),

|

| 32 |

+

controlnet=controlnet,

|

| 33 |

+

torch_dtype=torch.float16,

|

| 34 |

+

safety_checker=None,

|

| 35 |

+

requires_safety_checker=False,

|

| 36 |

+

)

|

| 37 |

+

pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

|

| 38 |

+

pipe.enable_model_cpu_offload()

|

| 39 |

+

|

| 40 |

+

prompt = "convert this openstreetmap into its satellite view"

|

| 41 |

+

num_inference_steps = 50

|

| 42 |

+

|

| 43 |

+

# Find OSM images in demo_images

|

| 44 |

+

image_exts = {".png", ".jpg", ".jpeg", ".webp"}

|

| 45 |

+

image_paths = [

|

| 46 |

+

p for p in demo_images.iterdir()

|

| 47 |

+

if p.is_file() and p.suffix.lower() in image_exts

|

| 48 |

+

]

|

| 49 |

+

|

| 50 |

+

if not image_paths:

|

| 51 |

+

print(f"No images found in {demo_images}. Creating a placeholder run.")

|

| 52 |

+

# Create minimal 512x512 RGB placeholder for demo

|

| 53 |

+

placeholder = Image.new("RGB", (512, 512), color=(200, 200, 200))

|

| 54 |

+

image_paths = [None]

|

| 55 |

+

control_images = [placeholder]

|

| 56 |

+

else:

|

| 57 |

+

control_images = [

|

| 58 |

+

Image.open(p).convert("RGB") for p in sorted(image_paths)

|

| 59 |

+

]

|

| 60 |

+

|

| 61 |

+

for idx, control_image in enumerate(control_images):

|

| 62 |

+

name = image_paths[idx].stem if image_paths[idx] else "placeholder"

|

| 63 |

+

print(f"Generating for {name}...")

|

| 64 |

+

for i in range(3):

|

| 65 |

+

result = pipe(

|

| 66 |

+

prompt,

|

| 67 |

+

num_inference_steps=num_inference_steps,

|

| 68 |

+

image=control_image,

|

| 69 |

+

).images[0]

|

| 70 |

+

out_path = out_dir / f"{name}-{i}.png"

|

| 71 |

+

result.save(out_path)

|

| 72 |

+

print(f" Saved {out_path}")

|

| 73 |

+

|

| 74 |

+

print(f"Done. Outputs in {out_dir}")

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

if __name__ == "__main__":

|

| 78 |

+

main()

|

model_index.json

ADDED

|

@@ -0,0 +1,28 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "StableDiffusionPipeline",

|

| 3 |

+

"_diffusers_version": "0.6.0",

|

| 4 |

+

"feature_extractor": [

|

| 5 |

+

"transformers",

|

| 6 |

+

"CLIPImageProcessor"

|

| 7 |

+

],

|

| 8 |

+

"scheduler": [

|

| 9 |

+

"diffusers",

|

| 10 |

+

"PNDMScheduler"

|

| 11 |

+

],

|

| 12 |

+

"text_encoder": [

|

| 13 |

+

"transformers",

|

| 14 |

+

"CLIPTextModel"

|

| 15 |

+

],

|

| 16 |

+

"tokenizer": [

|

| 17 |

+

"transformers",

|

| 18 |

+

"CLIPTokenizer"

|

| 19 |

+

],

|

| 20 |

+

"unet": [

|

| 21 |

+

"diffusers",

|

| 22 |

+

"UNet2DConditionModel"

|

| 23 |

+

],

|

| 24 |

+

"vae": [

|

| 25 |

+

"diffusers",

|

| 26 |

+

"AutoencoderKL"

|

| 27 |

+

]

|

| 28 |

+

}

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,13 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "PNDMScheduler",

|

| 3 |

+

"_diffusers_version": "0.6.0",

|

| 4 |

+

"beta_end": 0.012,

|

| 5 |

+

"beta_schedule": "scaled_linear",

|

| 6 |

+

"beta_start": 0.00085,

|

| 7 |

+

"num_train_timesteps": 1000,

|

| 8 |

+

"set_alpha_to_one": false,

|

| 9 |

+

"skip_prk_steps": true,

|

| 10 |

+

"steps_offset": 1,

|

| 11 |

+

"trained_betas": null,

|

| 12 |

+

"clip_sample": false

|

| 13 |

+

}

|

text_encoder/config.json

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "openai/clip-vit-large-patch14",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CLIPTextModel"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"dropout": 0.0,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "quick_gelu",

|

| 11 |

+

"hidden_size": 768,

|

| 12 |

+

"initializer_factor": 1.0,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 3072,

|

| 15 |

+

"layer_norm_eps": 1e-05,

|

| 16 |

+

"max_position_embeddings": 77,

|

| 17 |

+

"model_type": "clip_text_model",

|

| 18 |

+

"num_attention_heads": 12,

|

| 19 |

+

"num_hidden_layers": 12,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"projection_dim": 768,

|

| 22 |

+

"torch_dtype": "float32",

|

| 23 |

+

"transformers_version": "4.22.0.dev0",

|

| 24 |

+

"vocab_size": 49408

|

| 25 |

+

}

|

text_encoder/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d008943c017f0092921106440254dbbe00b6a285f7883ec8ba160c3faad88334

|

| 3 |

+

size 492265874

|

text_encoder/pytorch_model.fp16.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:05eee911f195625deeab86f0b22b115d7d8bc3adbfc1404f03557f7e4e6a8fd7

|

| 3 |

+

size 246187076

|

tokenizer/merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

tokenizer/special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<|startoftext|>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "<|endoftext|>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<|endoftext|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer/tokenizer_config.json

ADDED

|

@@ -0,0 +1,34 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"add_prefix_space": false,

|

| 3 |

+

"bos_token": {

|

| 4 |

+

"__type": "AddedToken",

|

| 5 |

+

"content": "<|startoftext|>",

|

| 6 |

+

"lstrip": false,

|

| 7 |

+

"normalized": true,

|

| 8 |

+

"rstrip": false,

|

| 9 |

+

"single_word": false

|

| 10 |

+

},

|

| 11 |

+

"do_lower_case": true,

|

| 12 |

+

"eos_token": {

|

| 13 |

+

"__type": "AddedToken",

|

| 14 |

+

"content": "<|endoftext|>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": true,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false

|

| 19 |

+

},

|

| 20 |

+

"errors": "replace",

|

| 21 |

+

"model_max_length": 77,

|

| 22 |

+

"name_or_path": "openai/clip-vit-large-patch14",

|

| 23 |

+

"pad_token": "<|endoftext|>",

|

| 24 |

+

"special_tokens_map_file": "./special_tokens_map.json",

|

| 25 |

+

"tokenizer_class": "CLIPTokenizer",

|

| 26 |

+

"unk_token": {

|

| 27 |

+

"__type": "AddedToken",

|

| 28 |

+

"content": "<|endoftext|>",

|

| 29 |

+

"lstrip": false,

|

| 30 |

+

"normalized": true,

|

| 31 |

+

"rstrip": false,

|

| 32 |

+

"single_word": false

|

| 33 |

+

}

|

| 34 |

+

}

|

tokenizer/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

unet/config.json

ADDED

|

@@ -0,0 +1,36 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "UNet2DConditionModel",

|

| 3 |

+

"_diffusers_version": "0.6.0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"attention_head_dim": 8,

|

| 6 |

+

"block_out_channels": [

|

| 7 |

+

320,

|

| 8 |

+

640,

|

| 9 |

+

1280,

|

| 10 |

+

1280

|

| 11 |

+

],

|

| 12 |

+

"center_input_sample": false,

|

| 13 |

+

"cross_attention_dim": 768,

|

| 14 |

+

"down_block_types": [

|

| 15 |

+

"CrossAttnDownBlock2D",

|

| 16 |

+

"CrossAttnDownBlock2D",

|

| 17 |

+

"CrossAttnDownBlock2D",

|

| 18 |

+

"DownBlock2D"

|

| 19 |

+

],

|

| 20 |

+

"downsample_padding": 1,

|

| 21 |

+

"flip_sin_to_cos": true,

|

| 22 |

+

"freq_shift": 0,

|

| 23 |

+

"in_channels": 4,

|

| 24 |

+

"layers_per_block": 2,

|

| 25 |

+

"mid_block_scale_factor": 1,

|

| 26 |

+

"norm_eps": 1e-05,

|

| 27 |

+

"norm_num_groups": 32,

|

| 28 |

+

"out_channels": 4,

|

| 29 |

+

"sample_size": 64,

|

| 30 |

+

"up_block_types": [

|

| 31 |

+

"UpBlock2D",

|

| 32 |

+

"CrossAttnUpBlock2D",

|

| 33 |

+

"CrossAttnUpBlock2D",

|

| 34 |

+

"CrossAttnUpBlock2D"

|

| 35 |

+

]

|

| 36 |

+

}

|

unet/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c7da0e21ba7ea50637bee26e81c220844defdf01aafca02b2c42ecdadb813de4

|

| 3 |

+

size 3438354725

|

unet/diffusion_pytorch_model.fp16.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:30eb3dc47c90e4a55476332b284b2331774c530edbbb83b70cacdd9e7b91af92

|

| 3 |

+

size 1719327893

|

unet/diffusion_pytorch_model.non_ema.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cd1b6db09a81cb1d39fbd245a89c1e3db9da9fe8eba5e8f9098ea6c4994221d3

|

| 3 |

+

size 3438167536

|

unet/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:19da7aaa4b880e59d56843f1fcb4dd9b599c28a1d9d9af7c1143057c8ffae9f1

|

| 3 |

+

size 3438167540

|

vae/config.json

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "AutoencoderKL",

|

| 3 |

+

"_diffusers_version": "0.6.0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"block_out_channels": [

|

| 6 |

+

128,

|

| 7 |

+

256,

|

| 8 |

+

512,

|

| 9 |

+

512

|

| 10 |

+

],

|

| 11 |

+

"down_block_types": [

|

| 12 |

+

"DownEncoderBlock2D",

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D"

|

| 16 |

+

],

|

| 17 |

+

"in_channels": 3,

|

| 18 |

+

"latent_channels": 4,

|

| 19 |

+

"layers_per_block": 2,

|

| 20 |

+

"norm_num_groups": 32,

|

| 21 |

+

"out_channels": 3,

|

| 22 |

+

"sample_size": 512,

|

| 23 |

+

"up_block_types": [

|

| 24 |

+

"UpDecoderBlock2D",

|

| 25 |

+

"UpDecoderBlock2D",

|

| 26 |

+

"UpDecoderBlock2D",

|

| 27 |

+

"UpDecoderBlock2D"

|

| 28 |

+

]

|

| 29 |

+

}

|

vae/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1b134cded8eb78b184aefb8805b6b572f36fa77b255c483665dda931fa0130c5

|

| 3 |

+

size 334707217

|

vae/diffusion_pytorch_model.fp16.bin

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b7643b3e40b9f128eda5fe174fea73c3ef3903562651fb344a79439709c2e503

|

| 3 |

+

size 167405651

|

vae/diffusion_pytorch_model.fp16.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4fbcf0ebe55a0984f5a5e00d8c4521d52359af7229bb4d81890039d2aa16dd7c

|

| 3 |

+

size 167335342

|

vae/diffusion_pytorch_model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a2b5134f4dbc140d9c11f11cba3233099e00af40f262f136c691fb7d38d2194c

|

| 3 |

+

size 334643276

|