insightface-000 of frvt | 97.760 | 93.358 | 98.850 | 99.372 | 99.058 | 87.694 | 97.481 | - | - | - |

+

+

+(MS1M-V2 means MS1M-ArcFace, MS1M-V3 means MS1M-RetinaFace).

+

+Inference time in above table was evaluated on Tesla V100 GPU, using onnxruntime-gpu==1.6.

+

+## Rules

+

+1. We have two tracks, academic and unconstrained.

+2. Please **DO NOT** register the account with messy or random characters(for both username and organization).

+3. **For academic submissions, we recommend to set the username as the name of your proposed paper or method. Orgnization hiding is not allowed(or the score will be banned) for this track but you can set the submission as private. You can also create multiple accounts, one account for one method.**

+4. Right now we only support 112x112 input, so make sure that the submission model accepts the correct input shape(['*',3,112,112]), in RGB order. Add an interpolate operator into the first layer of the submission model if you need a different input resolution.

+5. Participants submit onnx model, then get scores by our online evaluation.

+6. Matching score is measured by cosine similarity.

+7. **Online evaluation server uses onnxruntime-gpu==1.8, cuda==11.1, cudnn==8.0.5, GPU is RTX3090.**

+8. Any float-16 model weights is prohibited, as it will lead to incorrect model size estimiation.

+9. Please use ``onnx_helper.py`` to check whether the model is valid.

+10. Leaderboard is now ordered in terms of highest scores across two datasets: **TAR@Mask** and **TAR@MR-All**, by the formula of ``0.25 * TAR@Mask + 0.75 * TAR@MR-All``.

+

+

+

+## Submission Guide

+

+1. Participants must package the onnx model for submission using ``zip xxx.zip model.onnx``.

+2. Each participant can submit three times a day at most.

+3. Please sign-up with the real organization name. You can hide the organization name in our system if you like(not allowed for academic track).

+4. You can decide which submission to be displayed on the leaderboard by clicking 'Set Public' button.

+5. Please click 'sign-in' on submission server if find you're not logged in.

diff --git a/insightface/detection/README.md b/insightface/detection/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..3139f812660603e612747c1af4d28b7e402ac807

--- /dev/null

+++ b/insightface/detection/README.md

@@ -0,0 +1,42 @@

+## Face Detection

+

+

+

+

+

+

+

+## Introduction

+

+These are the face detection methods of [InsightFace](https://insightface.ai)

+

+

+

+

+

+

+

+### Datasets

+

+ Please refer to [datasets](_datasets_) page for the details of face detection datasets used for training and evaluation.

+

+### Evaluation

+

+ Please refer to [evaluation](_evaluation_) page for the details of face recognition evaluation.

+

+

+## Methods

+

+

+Supported methods:

+

+- [x] [RetinaFace (CVPR'2020)](retinaface)

+- [x] [SCRFD (Arxiv'2021)](scrfd)

+- [x] [blazeface_paddle](blazeface_paddle)

+

+

+## Contributing

+

+We appreciate all contributions to improve the face detection model zoo of InsightFace.

+

+

diff --git a/insightface/detection/_datasets_/README.md b/insightface/detection/_datasets_/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..618227009d54f25c981afaeec007cf30fcdf8e04

--- /dev/null

+++ b/insightface/detection/_datasets_/README.md

@@ -0,0 +1,31 @@

+# Face Detection Datasets

+

+(Updating)

+

+## Training Datasets

+

+### WiderFace

+

+http://shuoyang1213.me/WIDERFACE/

+

+

+

+## Test Datasets

+

+### WiderFace

+

+http://shuoyang1213.me/WIDERFACE/

+

+### FDDB

+

+http://vis-www.cs.umass.edu/fddb/

+

+### AFW

+

+

+### PASCAL FACE

+

+

+### MALF

+

+http://www.cbsr.ia.ac.cn/faceevaluation/

diff --git a/insightface/detection/blazeface_paddle/README.md b/insightface/detection/blazeface_paddle/README.md

new file mode 120000

index 0000000000000000000000000000000000000000..13c4f964bb9063f28d6e08dfb8c6b828a81d2536

--- /dev/null

+++ b/insightface/detection/blazeface_paddle/README.md

@@ -0,0 +1 @@

+README_en.md

\ No newline at end of file

diff --git a/insightface/detection/blazeface_paddle/README_cn.md b/insightface/detection/blazeface_paddle/README_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..0762058e58ca17356418930ef7fe3643dce0442f

--- /dev/null

+++ b/insightface/detection/blazeface_paddle/README_cn.md

@@ -0,0 +1,355 @@

+简体中文 | [English](README_en.md)

+

+# 人脸检测模型

+

+* [1. 简介](#简介)

+* [2. 模型库](#模型库)

+* [3. 安装](#安装)

+* [4. 数据准备](#数据准备)

+* [5. 参数配置](#参数配置)

+* [6. 训练与评估](#训练与评估)

+ * [6.1 训练](#训练)

+ * [6.2 在WIDER-FACE数据集上评估](#评估)

+ * [6.3 推理部署](#推理部署)

+ * [6.4 推理速度提升](#推理速度提升)

+ * [6.5 人脸检测demo](#人脸检测demo)

+* [7. 参考文献](#参考文献)

+

+

+

+## 1. 简介

+

+`Arcface-Paddle`是基于PaddlePaddle实现的,开源深度人脸检测、识别工具。`Arcface-Paddle`目前提供了三个预训练模型,包括用于人脸检测的 `BlazeFace`、用于人脸识别的 `ArcFace` 和 `MobileFace`。

+

+- 本部分内容为人脸检测部分,基于PaddleDetection进行开发。

+- 人脸识别相关内容可以参考:[人脸识别](../../recognition/arcface_paddle/README_cn.md)。

+- 基于PaddleInference的Whl包预测部署内容可以参考:[Whl包预测部署](https://github.com/littletomatodonkey/insight-face-paddle)。

+

+

+

+

+## 2. 模型库

+

+### WIDER-FACE数据集上的mAP

+

+| 网络结构 | 输入尺寸 | 图片个数/GPU | epoch数量 | Easy/Medium/Hard Set | CPU预测时延 | GPU 预测时延 | 模型大小(MB) | 预训练模型地址 | inference模型地址 | 配置文件 |

+|:------------:|:--------:|:----:|:-------:|:-------:|:-------:|:---------:|:----------:|:---------:|:---------:|:--------:|

+| BlazeFace-FPN-SSH | 640 | 8 | 1000 | 0.9187 / 0.8979 / 0.8168 | 31.7ms | 5.6ms | 0.646 |[下载链接](https://paddledet.bj.bcebos.com/models/blazeface_fpn_ssh_1000e.pdparams) | [下载链接](https://paddle-model-ecology.bj.bcebos.com/model/insight-face/blazeface_fpn_ssh_1000e_v1.0_infer.tar) | [配置文件](https://github.com/PaddlePaddle/PaddleDetection/tree/release/2.1/configs/face_detection/blazeface_fpn_ssh_1000e.yml) |

+| RetinaFace | 480x640 | - | - | - / - / 0.8250 | 182.0ms | 17.4ms | 1.680 | - | - | - |

+

+

+**注意:**

+- 我们使用多尺度评估策略得到`Easy/Medium/Hard Set`里的mAP。具体细节请参考[在WIDER-FACE数据集上评估](#评估)。

+- 测量速度时我们使用640*640的分辨,在 Intel(R) Xeon(R) Gold 6148 CPU @ 2.40GHz cpu,CPU线程数设置为5,更多细节请参考[推理速度提升](#推理速度提升)。

+- `RetinaFace`的速度测试代码参考自:[../retinaface/README.md](../retinaface/README.md).

+- 测试环境为

+ - CPU: Intel(R) Xeon(R) Gold 6184 CPU @ 2.40GHz

+ - GPU: a single NVIDIA Tesla V100

+

+

+

+

+## 3. 安装

+

+请参考[安装教程](../../recognition/arcface_paddle/install_ch.md)安装PaddlePaddle以及PaddleDetection。

+

+

+

+## 4. 数据准备

+我们使用[WIDER-FACE数据集](http://shuoyang1213.me/WIDERFACE/)进行训练和模型测试,官方网站提供了详细的数据介绍。

+- WIDER-Face数据源:

+使用如下目录结构加载`wider_face`类型的数据集:

+

+ ```

+ dataset/wider_face/

+ ├── wider_face_split

+ │ ├── wider_face_train_bbx_gt.txt

+ │ ├── wider_face_val_bbx_gt.txt

+ ├── WIDER_train

+ │ ├── images

+ │ │ ├── 0--Parade

+ │ │ │ ├── 0_Parade_marchingband_1_100.jpg

+ │ │ │ ├── 0_Parade_marchingband_1_381.jpg

+ │ │ │ │ ...

+ │ │ ├── 10--People_Marching

+ │ │ │ ...

+ ├── WIDER_val

+ │ ├── images

+ │ │ ├── 0--Parade

+ │ │ │ ├── 0_Parade_marchingband_1_1004.jpg

+ │ │ │ ├── 0_Parade_marchingband_1_1045.jpg

+ │ │ │ │ ...

+ │ │ ├── 10--People_Marching

+ │ │ │ ...

+ ```

+

+- 手动下载数据集:

+要下载WIDER-FACE数据集,请运行以下命令:

+```

+cd dataset/wider_face && ./download_wider_face.sh

+```

+

+

+

+## 5. 参数配置

+

+我们使用 `configs/face_detection/blazeface_fpn_ssh_1000e.yml`配置进行训练,配置文件摘要如下:

+

+```yaml

+

+_BASE_: [

+ '../datasets/wider_face.yml',

+ '../runtime.yml',

+ '_base_/optimizer_1000e.yml',

+ '_base_/blazeface_fpn.yml',

+ '_base_/face_reader.yml',

+]

+weights: output/blazeface_fpn_ssh_1000e/model_final

+multi_scale_eval: True

+

+```

+

+`blazeface_fpn_ssh_1000e.yml` 配置需要依赖其他的配置文件,在该例子中需要依赖:

+

+```

+wider_face.yml:主要说明了训练数据和验证数据的路径

+

+runtime.yml:主要说明了公共的运行参数,比如是否使用GPU、每多少个epoch存储checkpoint等

+

+optimizer_1000e.yml:主要说明了学习率和优化器的配置

+

+blazeface_fpn.yml:主要说明模型和主干网络的情况

+

+face_reader.yml:主要说明数据读取器配置,如batch size,并发加载子进程数等,同时包含读取后预处理操作,如resize、数据增强等等

+```

+

+根据实际情况,修改上述文件,比如数据集路径、batch size等。

+

+基础模型的配置可以参考`configs/face_detection/_base_/blazeface.yml`;

+改进模型增加FPN和SSH的neck结构,配置文件可以参考`configs/face_detection/_base_/blazeface_fpn.yml`,可以根据需求配置FPN和SSH,具体如下:

+```yaml

+BlazeNet:

+ blaze_filters: [[24, 24], [24, 24], [24, 48, 2], [48, 48], [48, 48]]

+ double_blaze_filters: [[48, 24, 96, 2], [96, 24, 96], [96, 24, 96],

+ [96, 24, 96, 2], [96, 24, 96], [96, 24, 96]]

+ act: hard_swish # 配置backbone中BlazeBlock的激活函数,基础模型为relu,增加FPN和SSH时需使用hard_swish

+

+BlazeNeck:

+ neck_type : fpn_ssh # 可选only_fpn、only_ssh和fpn_ssh

+ in_channel: [96,96]

+```

+

+

+

+## 6. 训练与评估

+

+

+

+### 6.1 训练

+首先,下载预训练模型文件:

+```bash

+wget https://paddledet.bj.bcebos.com/models/pretrained/blazenet_pretrain.pdparams

+```

+PaddleDetection提供了单卡/多卡训练模式,满足用户多种训练需求

+* GPU单卡训练

+```bash

+export CUDA_VISIBLE_DEVICES=0 #windows和Mac下不需要执行该命令

+python tools/train.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml -o pretrain_weight=blazenet_pretrain

+```

+

+* GPU多卡训练

+```bash

+export CUDA_VISIBLE_DEVICES=0,1,2,3 #windows和Mac下不需要执行该命令

+python -m paddle.distributed.launch --gpus 0,1,2,3 tools/train.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml -o pretrain_weight=blazenet_pretrain

+```

+* 模型恢复训练

+

+ 在日常训练过程中,有的用户由于一些原因导致训练中断,用户可以使用-r的命令恢复训练

+

+```bash

+export CUDA_VISIBLE_DEVICES=0 #windows和Mac下不需要执行该命令

+python tools/train.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml -r output/blazeface_fan_ssh_1000e/100

+ ```

+* 训练策略

+

+`BlazeFace`训练是以每卡`batch_size=32`在4卡GPU上进行训练(总`batch_size`是128),学习率为0.002,并且训练1000epoch。

+

+

+**注意:** 人脸检测模型目前不支持边训练边评估。

+

+

+

+### 6.2 在WIDER-FACE数据集上评估

+- 步骤一:评估并生成结果文件:

+```shell

+python -u tools/eval.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml \

+ -o weights=output/blazeface_fpn_ssh_1000e/model_final \

+ multi_scale_eval=True BBoxPostProcess.nms.score_threshold=0.1

+```

+设置`multi_scale_eval=True`进行多尺度评估,评估完成后,将在`output/pred`中生成txt格式的测试结果。

+

+- 步骤二:下载官方评估脚本和Ground Truth文件:

+```

+wget http://mmlab.ie.cuhk.edu.hk/projects/WIDERFace/support/eval_script/eval_tools.zip

+unzip eval_tools.zip && rm -f eval_tools.zip

+```

+

+- 步骤三:开始评估

+

+方法一:python评估。

+

+```bash

+git clone https://github.com/wondervictor/WiderFace-Evaluation.git

+cd WiderFace-Evaluation

+# 编译

+python3 setup.py build_ext --inplace

+# 开始评估

+python3 evaluation.py -p /path/to/PaddleDetection/output/pred -g /path/to/eval_tools/ground_truth

+```

+

+方法二:MatLab评估。

+

+```bash

+# 在`eval_tools/wider_eval.m`中修改保存结果路径和绘制曲线的名称:

+pred_dir = './pred';

+legend_name = 'Paddle-BlazeFace';

+

+`wider_eval.m` 是评估模块的主要执行程序。运行命令如下:

+matlab -nodesktop -nosplash -nojvm -r "run wider_eval.m;quit;"

+```

+

+

+### 6.3 推理部署

+

+在模型训练过程中保存的模型文件是包含前向预测和反向传播的过程,在实际的工业部署则不需要反向传播,因此需要将模型进行导成部署需要的模型格式。

+在PaddleDetection中提供了 `tools/export_model.py`脚本来导出模型

+

+```bash

+python tools/export_model.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml --output_dir=./inference_model \

+ -o weights=output/blazeface_fpn_ssh_1000e/best_model BBoxPostProcess.nms.score_threshold=0.1

+```

+

+预测模型会导出到`inference_model/blazeface_fpn_ssh_1000e`目录下,分别为`infer_cfg.yml`, `model.pdiparams`, `model.pdiparams.info`,`model.pdmodel` 如果不指定文件夹,模型则会导出在`output_inference`

+

+* 这里将nms后处理`score_threshold`修改为0.1,因为mAP基本没有影响的情况下,GPU预测速度能够大幅提升。更多关于模型导出的文档,请参考[模型导出文档](https://github.com/PaddlePaddle/PaddleDetection/deploy/EXPORT_MODEL.md)

+

+ PaddleDetection提供了PaddleInference、PaddleServing、PaddleLite多种部署形式,支持服务端、移动端、嵌入式等多种平台,提供了完善的Python和C++部署方案。

+* 在这里,我们以Python为例,说明如何使用PaddleInference进行模型部署

+

+```bash

+python deploy/python/infer.py --model_dir=./inference_model/blazeface_fpn_ssh_1000e --image_file=demo/road554.png --use_gpu=True

+```

+* 同时`infer.py`提供了丰富的接口,用户进行接入视频文件、摄像头进行预测,更多内容请参考[Python端预测部署](https://github.com/PaddlePaddle/PaddleDetection/deploy/python.md)

+

+* 更多关于预测部署的文档,请参考[预测部署文档](https://github.com/PaddlePaddle/PaddleDetection/deploy/README.md) 。

+

+

+

+### 6.4 推理速度提升

+如果想要复现我们提供的速度指标,请修改预测模型配置文件`./inference_model/blazeface_fpn_ssh_1000e/infer_cfg.yml`中的输入尺寸,如下所示:

+```yaml

+mode: fluid

+draw_threshold: 0.5

+metric: WiderFace

+arch: Face

+min_subgraph_size: 3

+Preprocess:

+- is_scale: false

+ mean:

+ - 123

+ - 117

+ - 104

+ std:

+ - 127.502231

+ - 127.502231

+ - 127.502231

+ type: NormalizeImage

+- interp: 1

+ keep_ratio: false

+ target_size:

+ - 640

+ - 640

+ type: Resize

+- type: Permute

+label_list:

+- face

+```

+如果希望模型在cpu环境下更快推理,可安装[paddlepaddle_gpu-0.0.0](https://paddle-wheel.bj.bcebos.com/develop-cpu-mkl/paddlepaddle-0.0.0-cp37-cp37m-linux_x86_64.whl) (mkldnn的依赖)可开启mkldnn加速推理。

+

+```bash

+# 使用GPU测速:

+python deploy/python/infer.py --model_dir=./inference_model/blazeface_fpn_ssh_1000e --image_dir=./path/images --run_benchmark=True --use_gpu=True

+

+# 使用cpu测速:

+# 下载paddle whl包

+wget https://paddle-wheel.bj.bcebos.com/develop-cpu-mkl/paddlepaddle-0.0.0-cp37-cp37m-linux_x86_64.whl

+# 安装paddlepaddle_gpu-0.0.0

+pip install paddlepaddle-0.0.0-cp37-cp37m-linux_x86_64.whl

+# 推理

+python deploy/python/infer.py --model_dir=./inference_model/blazeface_fpn_ssh_1000e --image_dir=./path/images --enable_mkldnn=True --run_benchmark=True --cpu_threads=5

+```

+

+

+

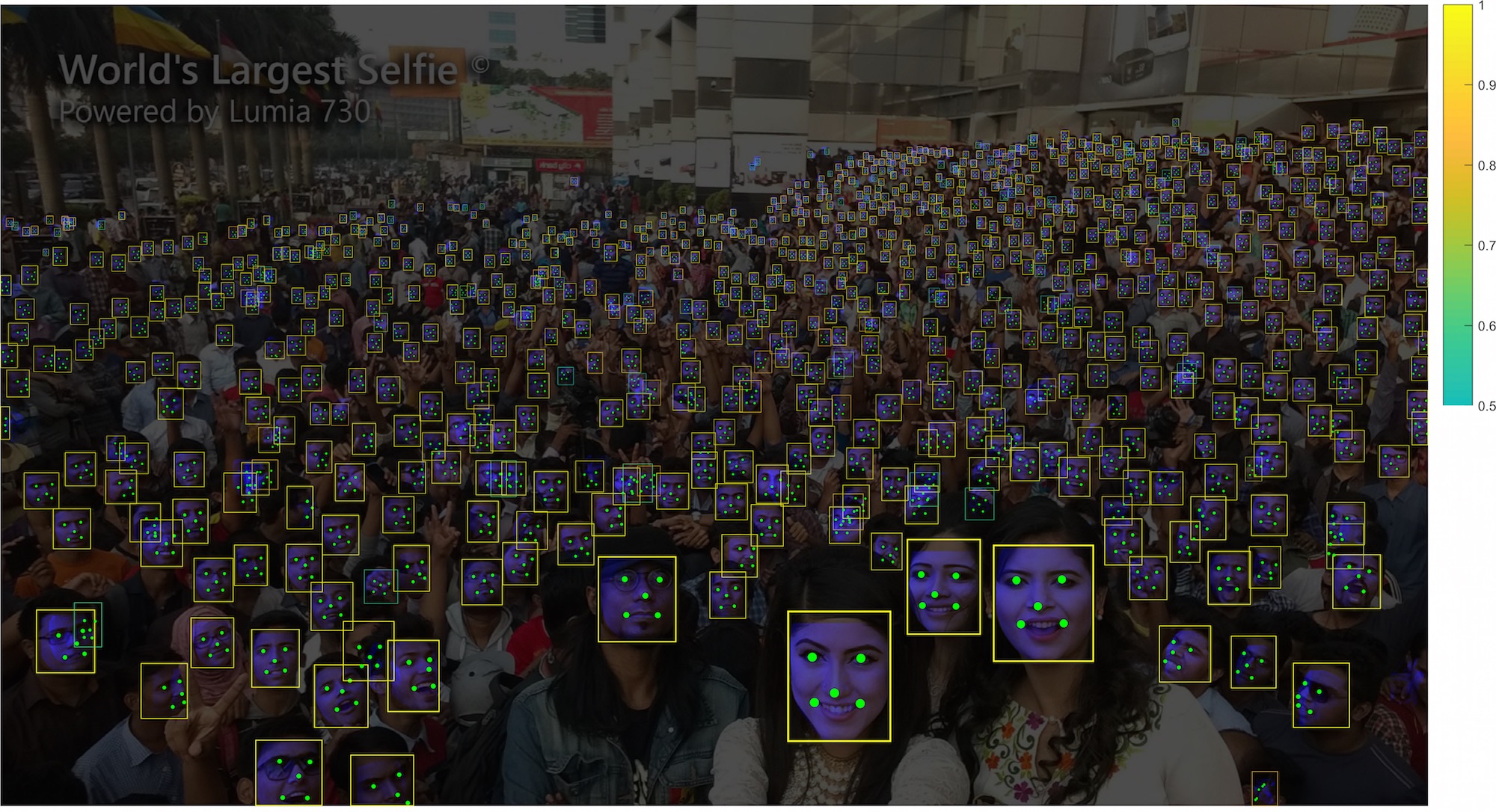

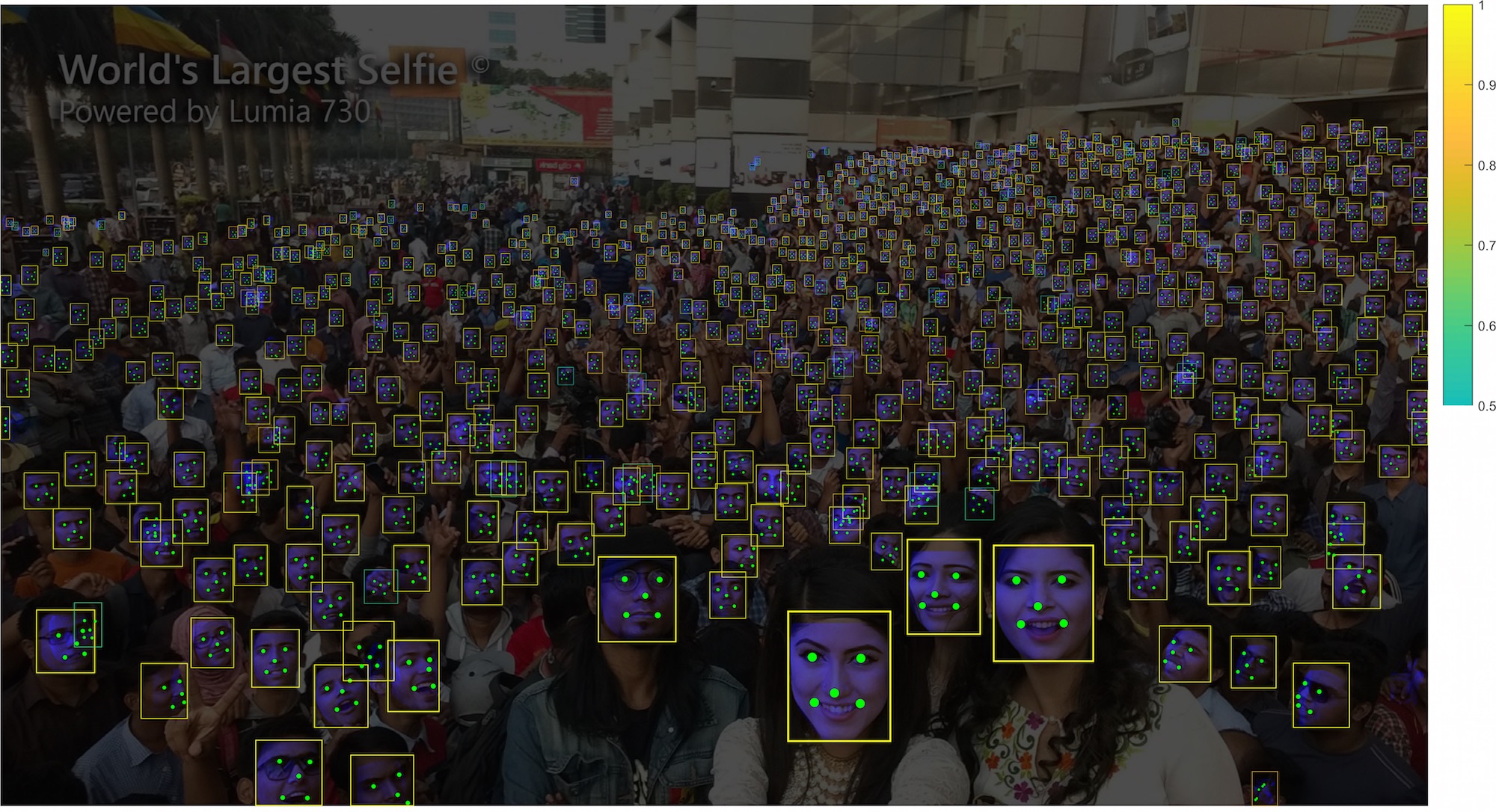

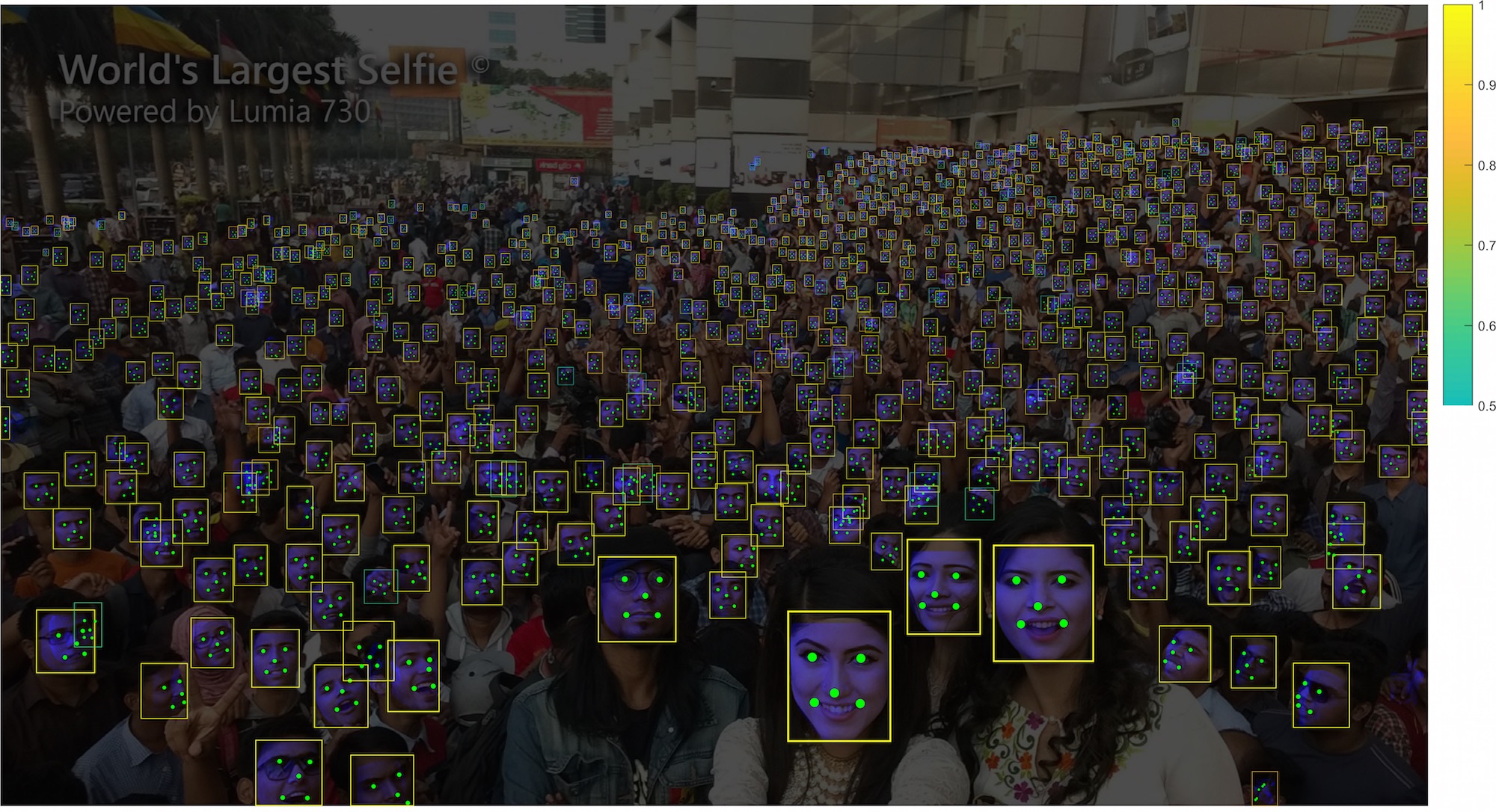

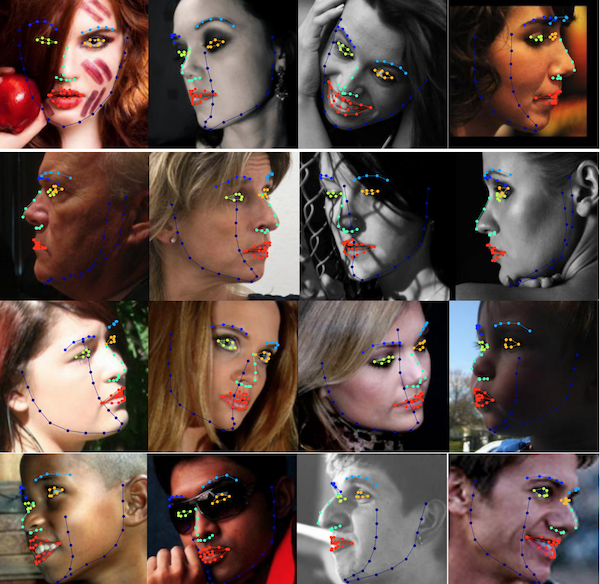

+### 6.5 人脸检测demo

+

+本节介绍基于提供的BlazeFace模型进行人脸检测。

+

+先下载待检测图像与字体文件。

+

+```bash

+# 下载用于人脸检测的示例图像

+wget https://raw.githubusercontent.com/littletomatodonkey/insight-face-paddle/main/demo/friends/query/friends1.jpg

+# 下载字体,用于可视化

+wget https://raw.githubusercontent.com/littletomatodonkey/insight-face-paddle/main/SourceHanSansCN-Medium.otf

+```

+

+示例图像如下所示。

+

+

+

+

+

+

+检测的示例命令如下。

+

+```shell

+python3.7 test_blazeface.py --input=friends1.jpg --output="./output"

+```

+

+最终可视化结果保存在`output`目录下,可视化结果如下所示。

+

+

+

+

+

+

+更多关于参数解释,索引库构建、人脸识别、whl包预测部署的内容可以参考:[Whl包预测部署](https://github.com/littletomatodonkey/insight-face-paddle)。

+

+

+

+## 7. 参考文献

+

+```

+@misc{long2020ppyolo,

+title={PP-YOLO: An Effective and Efficient Implementation of Object Detector},

+author={Xiang Long and Kaipeng Deng and Guanzhong Wang and Yang Zhang and Qingqing Dang and Yuan Gao and Hui Shen and Jianguo Ren and Shumin Han and Errui Ding and Shilei Wen},

+year={2020},

+eprint={2007.12099},

+archivePrefix={arXiv},

+primaryClass={cs.CV}

+}

+@misc{ppdet2019,

+title={PaddleDetection, Object detection and instance segmentation toolkit based on PaddlePaddle.},

+author={PaddlePaddle Authors},

+howpublished = {\url{https://github.com/PaddlePaddle/PaddleDetection}},

+year={2019}

+}

+@article{bazarevsky2019blazeface,

+title={BlazeFace: Sub-millisecond Neural Face Detection on Mobile GPUs},

+author={Valentin Bazarevsky and Yury Kartynnik and Andrey Vakunov and Karthik Raveendran and Matthias Grundmann},

+year={2019},

+eprint={1907.05047},

+ archivePrefix={arXiv}

+}

+```

diff --git a/insightface/detection/blazeface_paddle/README_en.md b/insightface/detection/blazeface_paddle/README_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..24fd17863fab2907465960087c6c905f3dfc78ec

--- /dev/null

+++ b/insightface/detection/blazeface_paddle/README_en.md

@@ -0,0 +1,354 @@

+[简体中文](README_cn.md) | English

+

+# FaceDetection

+

+* [1. Introduction](#Introduction)

+* [2. Model Zoo](#Model_Zoo)

+* [3. Installation](#Installation)

+* [4. Data Pipline](#Data_Pipline)

+* [5. Configuration File](#Configuration_File)

+* [6. Training and Inference](#Training_and_Inference)

+ * [6.1 Training](#Training)

+ * [6.2 Evaluate on the WIDER FACE](#Evaluation)

+ * [6.3 Inference deployment](#Inference_deployment)

+ * [6.4 Improvement of inference speed](#Increase_in_inference_speed)

+ * [6.4 Face detection demo](#Face_detection_demo)

+* [7. Citations](#Citations)

+

+

+

+## 1. Introduction

+

+`Arcface-Paddle` is an open source deep face detection and recognition toolkit, powered by PaddlePaddle. `Arcface-Paddle` provides three related pretrained models now, include `BlazeFace` for face detection, `ArcFace` and `MobileFace` for face recognition.

+

+- This tutorial is mainly about face detection based on `PaddleDetection`.

+- For face recognition task, please refer to: [Face recognition tuturial](../../recognition/arcface_paddle/README_en.md).

+- For Whl package inference using PaddleInference, please refer to [whl package inference](https://github.com/littletomatodonkey/insight-face-paddle).

+

+

+

+## 2. Model Zoo

+

+### mAP in WIDER FACE

+

+| Model | input size | images/GPU | epochs | Easy/Medium/Hard Set | CPU time cost | GPU time cost| Model Size(MB) | Pretrained model | Inference model | Config |

+|:------------:|:--------:|:----:|:-------:|:-------:|:---------:|:---------:|:----------:|:---------:|:--------:|:--------:|

+| BlazeFace-FPN-SSH | 640×640 | 8 | 1000 | 0.9187 / 0.8979 / 0.8168 | 31.7ms | 5.6ms | 0.646 |[download link](https://paddledet.bj.bcebos.com/models/blazeface_fpn_ssh_1000e.pdparams) | [download link](https://paddle-model-ecology.bj.bcebos.com/model/insight-face/blazeface_fpn_ssh_1000e_v1.0_infer.tar) | [config](https://github.com/PaddlePaddle/PaddleDetection/tree/release/2.1/configs/face_detection/blazeface_fpn_ssh_1000e.yml) |

+| RetinaFace | 480x640 | - | - | - / - / 0.8250 | 182.0ms | 17.4ms | 1.680 | - | - | - |

+

+

+**NOTE:**

+- Get mAP in `Easy/Medium/Hard Set` by multi-scale evaluation. For details can refer to [Evaluation](#Evaluate-on-the-WIDER-FACE).

+- Measuring the speed, we use the resolution of `640×640`, in Intel(R) Xeon(R) Gold 6148 CPU @ 2.40GHz environment, cpu-threads are set as 5. For more details, you can refer to [Improvement of inference speed](#Increase_in_inference_speed).

+- Benchmark code for `RetinaFace` is from: [../retinaface/README.md](../retinaface/README.md).

+- The benchmark environment is

+ - CPU: Intel(R) Xeon(R) Gold 6184 CPU @ 2.40GHz

+ - GPU: a single NVIDIA Tesla V100

+

+

+

+## 3. Installation

+

+Please refer to [installation tutorial](../../recognition/arcface_paddle/install_en.md) to install PaddlePaddle and PaddleDetection.

+

+

+

+

+## 4. Data Pipline

+We use the [WIDER FACE dataset](http://shuoyang1213.me/WIDERFACE/) to carry out the training

+and testing of the model, the official website gives detailed data introduction.

+- WIDER Face data source:

+Loads `wider_face` type dataset with directory structures like this:

+

+ ```

+ dataset/wider_face/

+ ├── wider_face_split

+ │ ├── wider_face_train_bbx_gt.txt

+ │ ├── wider_face_val_bbx_gt.txt

+ ├── WIDER_train

+ │ ├── images

+ │ │ ├── 0--Parade

+ │ │ │ ├── 0_Parade_marchingband_1_100.jpg

+ │ │ │ ├── 0_Parade_marchingband_1_381.jpg

+ │ │ │ │ ...

+ │ │ ├── 10--People_Marching

+ │ │ │ ...

+ ├── WIDER_val

+ │ ├── images

+ │ │ ├── 0--Parade

+ │ │ │ ├── 0_Parade_marchingband_1_1004.jpg

+ │ │ │ ├── 0_Parade_marchingband_1_1045.jpg

+ │ │ │ │ ...

+ │ │ ├── 10--People_Marching

+ │ │ │ ...

+ ```

+

+- Download dataset manually:

+To download the WIDER FACE dataset, run the following commands:

+```

+cd dataset/wider_face && ./download_wider_face.sh

+```

+

+

+

+## 5. Configuration file

+

+We use the `configs/face_detection/blazeface_fpn_ssh_1000e.yml` configuration for training. The summary of the configuration file is as follows:

+

+```yaml

+_BASE_: [

+ '../datasets/wider_face.yml',

+ '../runtime.yml',

+ '_base_/optimizer_1000e.yml',

+ '_base_/blazeface_fpn.yml',

+ '_base_/face_reader.yml',

+]

+weights: output/blazeface_fpn_ssh_1000e/model_final

+multi_scale_eval: True

+```

+

+`blazeface_fpn_ssh_1000e.yml` The configuration needs to rely on other configuration files, in this example it needs to rely on:

+

+```

+wider_face.yml:Mainly explains the path of training data and verification data

+

+runtime.yml:Mainly describes the common operating parameters, such as whether to use GPU, how many epochs to store checkpoints, etc.

+

+optimizer_1000e.yml:Mainly explains the configuration of learning rate and optimizer

+

+blazeface_fpn.yml:Mainly explain the situation of the model and the backbone network

+

+face_reader.yml:It mainly describes the configuration of the data reader, such as batch size, the number of concurrent loading subprocesses, etc., and also includes post-reading preprocessing operations, such as resize, data enhancement, etc.

+```

+

+According to the actual situation, modify the above files, such as the data set path, batch size, etc.

+

+For the configuration of the base model, please refer to `configs/face_detection/_base_/blazeface.yml`.

+The improved model adds the neck structure of FPN and SSH. For the configuration file, please refer to `configs/face_detection/_base_/blazeface_fpn.yml`. You can configure FPN and SSH if needed, which is as follows:

+

+```yaml

+BlazeNet:

+ blaze_filters: [[24, 24], [24, 24], [24, 48, 2], [48, 48], [48, 48]]

+ double_blaze_filters: [[48, 24, 96, 2], [96, 24, 96], [96, 24, 96],

+ [96, 24, 96, 2], [96, 24, 96], [96, 24, 96]]

+ act: hard_swish # Configure the activation function of BlazeBlock in backbone, the basic model is relu, hard_swish is required when adding FPN and SSH

+

+BlazeNeck:

+ neck_type : fpn_ssh # Optional only_fpn, only_ssh and fpn_ssh

+ in_channel: [96,96]

+```

+

+

+

+## 6. Training_and_Inference

+

+

+

+### 6.1 Training

+Firstly, download the pretrained model.

+```bash

+wget https://paddledet.bj.bcebos.com/models/pretrained/blazenet_pretrain.pdparams

+```

+PaddleDetection provides a single-GPU/multi-GPU training mode to meet the various training needs of users.

+* single-GPU training

+```bash

+export CUDA_VISIBLE_DEVICES=0 # Do not need to execute this command under windows and Mac

+python tools/train.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml -o pretrain_weight=blazenet_pretrain

+```

+

+* multi-GPU training

+```bash

+export CUDA_VISIBLE_DEVICES=0,1,2,3 # Do not need to execute this command under windows and Mac

+python -m paddle.distributed.launch --gpus 0,1,2,3 tools/train.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml -o pretrain_weight=blazenet_pretrain

+```

+* Resume training from Checkpoint

+

+ In the daily training process, if the training was be interrupted, using the -r command to resume training:

+

+```bash

+export CUDA_VISIBLE_DEVICES=0 # Do not need to execute this command under windows and Mac

+python tools/train.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml -r output/blazeface_fan_ssh_1000e/100

+ ```

+* Training hyperparameters

+

+`BlazeFace` training is based on each GPU `batch_size=32` training on 4 GPUs (total `batch_size` is 128), the learning rate is 0.002, and the total training epoch is set as 1000.

+

+

+**NOTE:** Not support evaluation during train.

+

+

+

+### 6.2 Evaluate on the WIDER FACE

+- Evaluate and generate results files:

+```shell

+python -u tools/eval.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml \

+ -o weights=output/blazeface_fpn_ssh_1000e/model_final \

+ multi_scale_eval=True BBoxPostProcess.nms.score_threshold=0.1

+```

+Set `multi_scale_eval=True` for multi-scale evaluation,after the evaluation is completed, the test result in txt format will be generated in `output/pred`.

+

+- Download the official evaluation script to evaluate the AP metrics:

+

+```bash

+wget http://mmlab.ie.cuhk.edu.hk/projects/WIDERFace/support/eval_script/eval_tools.zip

+unzip eval_tools.zip && rm -f eval_tools.zip

+```

+

+- Start evaluation:

+

+Method One: Python evaluation:

+

+```bash

+git clone https://github.com/wondervictor/WiderFace-Evaluation.git

+cd WiderFace-Evaluation

+# Compile

+python3 setup.py build_ext --inplace

+# Start evaluation

+python3 evaluation.py -p /path/to/PaddleDetection/output/pred -g /path/to/eval_tools/ground_truth

+```

+

+Method Two: MatLab evaluation:

+

+```bash

+# Modify the result path and the name of the curve to be drawn in `eval_tools/wider_eval.m`:

+pred_dir = './pred';

+legend_name = 'Paddle-BlazeFace';

+

+`wider_eval.m` is the main execution program of the evaluation module. The run command is as follows:

+matlab -nodesktop -nosplash -nojvm -r "run wider_eval.m;quit;"

+```

+

+

+### 6.3 Inference deployment

+

+The model file saved in the model training process includes forward prediction and back propagation. In actual industrial deployment, back propagation is not required. Therefore, the model needs to be exported into the model format required for deployment.

+The `tools/export_model.py` script is provided in PaddleDetection to export the model:

+

+```bash

+python tools/export_model.py -c configs/face_detection/blazeface_fpn_ssh_1000e.yml --output_dir=./inference_model \

+ -o weights=output/blazeface_fpn_ssh_1000e/best_model BBoxPostProcess.nms.score_threshold=0.1

+```

+The inference model will be exported to the `inference_model/blazeface_fpn_ssh_1000e` directory, which are `infer_cfg.yml`, `model.pdiparams`, `model.pdiparams.info`, `model.pdmodel` If no folder is specified, the model will be exported In `output_inference`.

+

+* `score_threshold` for nms is modified as 0.1 for inference, because it takes great speed performance improvement while has little effect on mAP. For more documentation about model export, please refer to: [export doc](https://github.com/PaddlePaddle/PaddleDetection/deploy/EXPORT_MODEL.md)

+

+ PaddleDetection provides multiple deployment forms of PaddleInference, PaddleServing, and PaddleLite, supports multiple platforms such as server, mobile, and embedded, and provides a complete deployment plan for Python and C++.

+* Here, we take Python as an example to illustrate how to use PaddleInference for model deployment:

+```bash

+python deploy/python/infer.py --model_dir=./inference_model/blazeface_fpn_ssh_1000e --image_file=demo/road554.png --use_gpu=True

+```

+* `infer.py` provides a rich interface for users to access video files and cameras for prediction. For more information, please refer to: [Python deployment](https://github.com/PaddlePaddle/PaddleDetection/deploy/python.md).

+

+* For more documentation on deployment, please refer to: [deploy doc](https://github.com/PaddlePaddle/PaddleDetection/deploy/README.md).

+

+

+

+### 6.4 Improvement of inference speed

+

+If you want to reproduce our speed indicators, you need to modify the input size of inference model in the `./inference_model/blazeface_fpn_ssh_1000e/infer_cfg.yml` configuration file. As follows:

+```yaml

+mode: fluid

+draw_threshold: 0.5

+metric: WiderFace

+arch: Face

+min_subgraph_size: 3

+Preprocess:

+- is_scale: false

+ mean:

+ - 123

+ - 117

+ - 104

+ std:

+ - 127.502231

+ - 127.502231

+ - 127.502231

+ type: NormalizeImage

+- interp: 1

+ keep_ratio: false

+ target_size:

+ - 640

+ - 640

+ type: Resize

+- type: Permute

+label_list:

+- face

+```

+

+If you want the model to be inferred faster in the CPU environment, install [paddlepaddle_gpu-0.0.0](https://paddle-wheel.bj.bcebos.com/develop-cpu-mkl/paddlepaddle-0.0.0-cp37-cp37m-linux_x86_64.whl) (dependency of mkldnn) and enable_mkldnn is set to True, when predicting acceleration.

+

+```bash

+# use GPU:

+python deploy/python/infer.py --model_dir=./inference_model/blazeface_fpn_ssh_1000e --image_dir=./path/images --run_benchmark=True --use_gpu=True

+

+# inference with mkldnn use CPU

+# downdoad whl package

+wget https://paddle-wheel.bj.bcebos.com/develop-cpu-mkl/paddlepaddle-0.0.0-cp37-cp37m-linux_x86_64.whl

+#install paddlepaddle_gpu-0.0.0

+pip install paddlepaddle-0.0.0-cp37-cp37m-linux_x86_64.whl

+python deploy/python/infer.py --model_dir=./inference_model/blazeface_fpn_ssh_1000e --image_dir=./path/images --enable_mkldnn=True --run_benchmark=True --cpu_threads=5

+```

+

+

+

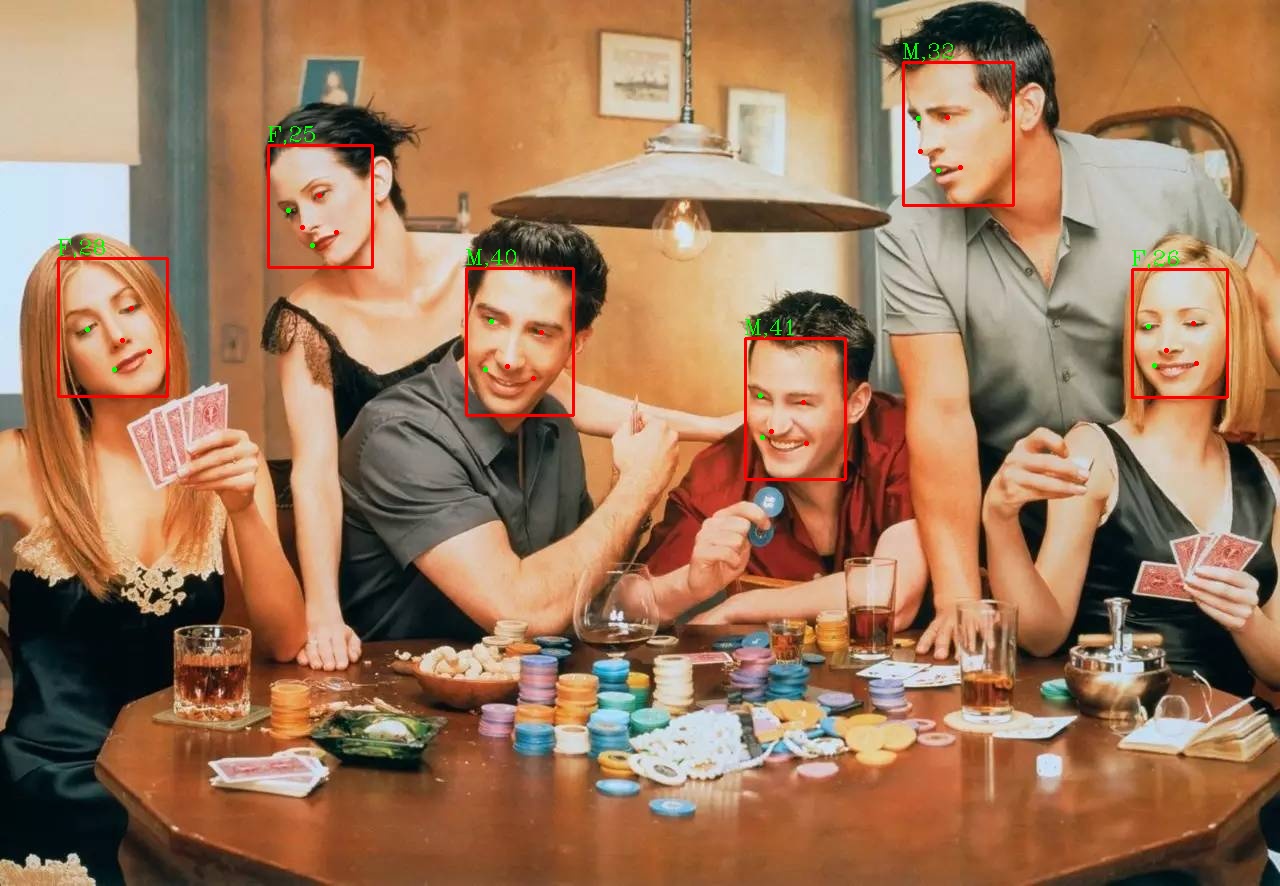

+### 6.5 Face detection demo

+

+This part talks about how to detect faces using BlazeFace model.

+

+Firstly, use the following commands to download the demo image and font file for visualization.

+

+

+```bash

+# Demo image

+wget https://raw.githubusercontent.com/littletomatodonkey/insight-face-paddle/main/demo/friends/query/friends1.jpg

+# Font file for visualization

+wget https://raw.githubusercontent.com/littletomatodonkey/insight-face-paddle/main/SourceHanSansCN-Medium.otf

+```

+

+The demo image is shown as follows.

+

+

+

+

+

+

+Use the following command to run the face detection process.

+

+```shell

+python3.7 test_blazeface.py --input=friends1.jpg --output="./output"

+```

+

+The final result is save in folder `output/`, which is shown as follows.

+

+

+

+

+

+

+For more details about parameter explanations, face recognition, index gallery construction and whl package inference, please refer to [Whl package inference tutorial](https://github.com/littletomatodonkey/insight-face-paddle).

+

+

+## 7. Citations

+

+```

+@misc{long2020ppyolo,

+title={PP-YOLO: An Effective and Efficient Implementation of Object Detector},

+author={Xiang Long and Kaipeng Deng and Guanzhong Wang and Yang Zhang and Qingqing Dang and Yuan Gao and Hui Shen and Jianguo Ren and Shumin Han and Errui Ding and Shilei Wen},

+year={2020},

+eprint={2007.12099},

+archivePrefix={arXiv},

+primaryClass={cs.CV}

+}

+@misc{ppdet2019,

+title={PaddleDetection, Object detection and instance segmentation toolkit based on PaddlePaddle.},

+author={PaddlePaddle Authors},

+howpublished = {\url{https://github.com/PaddlePaddle/PaddleDetection}},

+year={2019}

+}

+@article{bazarevsky2019blazeface,

+title={BlazeFace: Sub-millisecond Neural Face Detection on Mobile GPUs},

+author={Valentin Bazarevsky and Yury Kartynnik and Andrey Vakunov and Karthik Raveendran and Matthias Grundmann},

+year={2019},

+eprint={1907.05047},

+ archivePrefix={arXiv}

+}

+```

diff --git a/insightface/detection/blazeface_paddle/test_blazeface.py b/insightface/detection/blazeface_paddle/test_blazeface.py

new file mode 100644

index 0000000000000000000000000000000000000000..fa8a8f103be3e18c5de6e9d699b4bfdbb891d29a

--- /dev/null

+++ b/insightface/detection/blazeface_paddle/test_blazeface.py

@@ -0,0 +1,593 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+import os

+import argparse

+import requests

+import logging

+import imghdr

+import pickle

+import tarfile

+from functools import partial

+

+import cv2

+import numpy as np

+from sklearn.metrics.pairwise import cosine_similarity

+from tqdm import tqdm

+from prettytable import PrettyTable

+from PIL import Image, ImageDraw, ImageFont

+import paddle

+from paddle.inference import Config

+from paddle.inference import create_predictor

+

+__all__ = ["parser"]

+BASE_INFERENCE_MODEL_DIR = os.path.expanduser("~/.insightface/ppmodels/")

+BASE_DOWNLOAD_URL = "https://paddle-model-ecology.bj.bcebos.com/model/insight-face/{}.tar"

+

+

+def parser(add_help=True):

+ def str2bool(v):

+ return v.lower() in ("true", "t", "1")

+

+ parser = argparse.ArgumentParser(add_help=add_help)

+

+ parser.add_argument(

+ "--det_model",

+ type=str,

+ default="BlazeFace",

+ help="The detection model.")

+ parser.add_argument(

+ "--use_gpu",

+ type=str2bool,

+ default=True,

+ help="Whether use GPU to predict. Default by True.")

+ parser.add_argument(

+ "--enable_mkldnn",

+ type=str2bool,

+ default=True,

+ help="Whether use MKLDNN to predict, valid only when --use_gpu is False. Default by False."

+ )

+ parser.add_argument(

+ "--cpu_threads",

+ type=int,

+ default=1,

+ help="The num of threads with CPU, valid only when --use_gpu is False. Default by 1."

+ )

+ parser.add_argument(

+ "--input",

+ type=str,

+ help="The path or directory of image(s) or video to be predicted.")

+ parser.add_argument(

+ "--output", type=str, default="./output/", help="The directory of prediction result.")

+ parser.add_argument(

+ "--det_thresh",

+ type=float,

+ default=0.8,

+ help="The threshold of detection postprocess. Default by 0.8.")

+ return parser

+

+

+def print_config(args):

+ args = vars(args)

+ table = PrettyTable(['Param', 'Value'])

+ for param in args:

+ table.add_row([param, args[param]])

+ width = len(str(table).split("\n")[0])

+ print("{}".format("-" * width))

+ print("PaddleFace".center(width))

+ print(table)

+ print("Powered by PaddlePaddle!".rjust(width))

+ print("{}".format("-" * width))

+

+

+def download_with_progressbar(url, save_path):

+ """Download from url with progressbar.

+ """

+ if os.path.isfile(save_path):

+ os.remove(save_path)

+ response = requests.get(url, stream=True)

+ total_size_in_bytes = int(response.headers.get("content-length", 0))

+ block_size = 1024 # 1 Kibibyte

+ progress_bar = tqdm(total=total_size_in_bytes, unit="iB", unit_scale=True)

+ with open(save_path, "wb") as file:

+ for data in response.iter_content(block_size):

+ progress_bar.update(len(data))

+ file.write(data)

+ progress_bar.close()

+ if total_size_in_bytes == 0 or progress_bar.n != total_size_in_bytes or not os.path.isfile(

+ save_path):

+ raise Exception(

+ f"Something went wrong while downloading model/image from {url}")

+

+

+def check_model_file(model):

+ """Check the model files exist and download and untar when no exist.

+ """

+ model_map = {

+ "ArcFace": "arcface_iresnet50_v1.0_infer",

+ "BlazeFace": "blazeface_fpn_ssh_1000e_v1.0_infer",

+ "MobileFace": "mobileface_v1.0_infer"

+ }

+

+ if os.path.isdir(model):

+ model_file_path = os.path.join(model, "inference.pdmodel")

+ params_file_path = os.path.join(model, "inference.pdiparams")

+ if not os.path.exists(model_file_path) or not os.path.exists(

+ params_file_path):

+ raise Exception(

+ f"The specifed model directory error. The drectory must include 'inference.pdmodel' and 'inference.pdiparams'."

+ )

+

+ elif model in model_map:

+ storage_directory = partial(os.path.join, BASE_INFERENCE_MODEL_DIR,

+ model)

+ url = BASE_DOWNLOAD_URL.format(model_map[model])

+

+ tar_file_name_list = [

+ "inference.pdiparams", "inference.pdiparams.info",

+ "inference.pdmodel"

+ ]

+ model_file_path = storage_directory("inference.pdmodel")

+ params_file_path = storage_directory("inference.pdiparams")

+ if not os.path.exists(model_file_path) or not os.path.exists(

+ params_file_path):

+ tmp_path = storage_directory(url.split("/")[-1])

+ logging.info(f"Download {url} to {tmp_path}")

+ os.makedirs(storage_directory(), exist_ok=True)

+ download_with_progressbar(url, tmp_path)

+ with tarfile.open(tmp_path, "r") as tarObj:

+ for member in tarObj.getmembers():

+ filename = None

+ for tar_file_name in tar_file_name_list:

+ if tar_file_name in member.name:

+ filename = tar_file_name

+ if filename is None:

+ continue

+ file = tarObj.extractfile(member)

+ with open(storage_directory(filename), "wb") as f:

+ f.write(file.read())

+ os.remove(tmp_path)

+ if not os.path.exists(model_file_path) or not os.path.exists(

+ params_file_path):

+ raise Exception(

+ f"Something went wrong while downloading and unzip the model[{model}] files!"

+ )

+ else:

+ raise Exception(

+ f"The specifed model name error. Support 'BlazeFace' for detection. And support local directory that include model files ('inference.pdmodel' and 'inference.pdiparams')."

+ )

+

+ return model_file_path, params_file_path

+

+

+def normalize_image(img, scale=None, mean=None, std=None, order='chw'):

+ if isinstance(scale, str):

+ scale = eval(scale)

+ scale = np.float32(scale if scale is not None else 1.0 / 255.0)

+ mean = mean if mean is not None else [0.485, 0.456, 0.406]

+ std = std if std is not None else [0.229, 0.224, 0.225]

+

+ shape = (3, 1, 1) if order == 'chw' else (1, 1, 3)

+ mean = np.array(mean).reshape(shape).astype('float32')

+ std = np.array(std).reshape(shape).astype('float32')

+

+ if isinstance(img, Image.Image):

+ img = np.array(img)

+

+ assert isinstance(img, np.ndarray), "invalid input 'img' in NormalizeImage"

+ return (img.astype('float32') * scale - mean) / std

+

+

+def to_CHW_image(img):

+ if isinstance(img, Image.Image):

+ img = np.array(img)

+ return img.transpose((2, 0, 1))

+

+

+class ColorMap(object):

+ def __init__(self, num):

+ super().__init__()

+ self.get_color_map_list(num)

+ self.color_map = {}

+ self.ptr = 0

+

+ def __getitem__(self, key):

+ return self.color_map[key]

+

+ def update(self, keys):

+ for key in keys:

+ if key not in self.color_map:

+ i = self.ptr % len(self.color_list)

+ self.color_map[key] = self.color_list[i]

+ self.ptr += 1

+

+ def get_color_map_list(self, num_classes):

+ color_map = num_classes * [0, 0, 0]

+ for i in range(0, num_classes):

+ j = 0

+ lab = i

+ while lab:

+ color_map[i * 3] |= (((lab >> 0) & 1) << (7 - j))

+ color_map[i * 3 + 1] |= (((lab >> 1) & 1) << (7 - j))

+ color_map[i * 3 + 2] |= (((lab >> 2) & 1) << (7 - j))

+ j += 1

+ lab >>= 3

+ self.color_list = [

+ color_map[i:i + 3] for i in range(0, len(color_map), 3)

+ ]

+

+

+class ImageReader(object):

+ def __init__(self, inputs):

+ super().__init__()

+ self.idx = 0

+ if isinstance(inputs, np.ndarray):

+ self.image_list = [inputs]

+ else:

+ imgtype_list = {'jpg', 'bmp', 'png', 'jpeg', 'rgb', 'tif', 'tiff'}

+ self.image_list = []

+ if os.path.isfile(inputs):

+ if imghdr.what(inputs) not in imgtype_list:

+ raise Exception(

+ f"Error type of input path, only support: {imgtype_list}"

+ )

+ self.image_list.append(inputs)

+ elif os.path.isdir(inputs):

+ tmp_file_list = os.listdir(inputs)

+ warn_tag = False

+ for file_name in tmp_file_list:

+ file_path = os.path.join(inputs, file_name)

+ if not os.path.isfile(file_path):

+ warn_tag = True

+ continue

+ if imghdr.what(file_path) in imgtype_list:

+ self.image_list.append(file_path)

+ else:

+ warn_tag = True

+ if warn_tag:

+ logging.warning(

+ f"The directory of input contine directory or not supported file type, only support: {imgtype_list}"

+ )

+ else:

+ raise Exception(

+ f"The file of input path not exist! Please check input: {inputs}"

+ )

+

+ def __iter__(self):

+ return self

+

+ def __next__(self):

+ if self.idx >= len(self.image_list):

+ raise StopIteration

+

+ data = self.image_list[self.idx]

+ if isinstance(data, np.ndarray):

+ self.idx += 1

+ return data, "tmp.png"

+ path = data

+ _, file_name = os.path.split(path)

+ img = cv2.imread(path)

+ if img is None:

+ logging.warning(f"Error in reading image: {path}! Ignored.")

+ self.idx += 1

+ return self.__next__()

+ img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

+ self.idx += 1

+ return img, file_name

+

+ def __len__(self):

+ return len(self.image_list)

+

+

+class VideoReader(object):

+ def __init__(self, inputs):

+ super().__init__()

+ videotype_list = {"mp4"}

+ if os.path.splitext(inputs)[-1][1:] not in videotype_list:

+ raise Exception(

+ f"The input file is not supported, only support: {videotype_list}"

+ )

+ if not os.path.isfile(inputs):

+ raise Exception(

+ f"The file of input path not exist! Please check input: {inputs}"

+ )

+ self.capture = cv2.VideoCapture(inputs)

+ self.file_name = os.path.split(inputs)[-1]

+

+ def get_info(self):

+ info = {}

+ width = int(self.capture.get(cv2.CAP_PROP_FRAME_WIDTH))

+ height = int(self.capture.get(cv2.CAP_PROP_FRAME_HEIGHT))

+ fourcc = cv2.VideoWriter_fourcc(* 'mp4v')

+ info["file_name"] = self.file_name

+ info["fps"] = 30

+ info["shape"] = (width, height)

+ info["fourcc"] = cv2.VideoWriter_fourcc(* 'mp4v')

+ return info

+

+ def __iter__(self):

+ return self

+

+ def __next__(self):

+ ret, frame = self.capture.read()

+ if not ret:

+ raise StopIteration

+ return frame, self.file_name

+

+

+class ImageWriter(object):

+ def __init__(self, output_dir):

+ super().__init__()

+ if output_dir is None:

+ raise Exception(

+ "Please specify the directory of saving prediction results by --output."

+ )

+ if not os.path.exists(output_dir):

+ os.makedirs(output_dir)

+ self.output_dir = output_dir

+

+ def write(self, image, file_name):

+ path = os.path.join(self.output_dir, file_name)

+ cv2.imwrite(path, cv2.cvtColor(image, cv2.COLOR_RGB2BGR))

+

+

+class VideoWriter(object):

+ def __init__(self, output_dir, video_info):

+ super().__init__()

+ if output_dir is None:

+ raise Exception(

+ "Please specify the directory of saving prediction results by --output."

+ )

+ if not os.path.exists(output_dir):

+ os.makedirs(output_dir)

+ output_path = os.path.join(output_dir, video_info["file_name"])

+ fourcc = cv2.VideoWriter_fourcc(* 'mp4v')

+ self.writer = cv2.VideoWriter(output_path, video_info["fourcc"],

+ video_info["fps"], video_info["shape"])

+

+ def write(self, frame, file_name):

+ self.writer.write(frame)

+

+ def __del__(self):

+ if hasattr(self, "writer"):

+ self.writer.release()

+

+

+class BasePredictor(object):

+ def __init__(self, predictor_config):

+ super().__init__()

+ self.predictor_config = predictor_config

+ self.predictor, self.input_names, self.output_names = self.load_predictor(

+ predictor_config["model_file"], predictor_config["params_file"])

+

+ def load_predictor(self, model_file, params_file):

+ config = Config(model_file, params_file)

+ if self.predictor_config["use_gpu"]:

+ config.enable_use_gpu(200, 0)

+ config.switch_ir_optim(True)

+ else:

+ config.disable_gpu()

+ config.set_cpu_math_library_num_threads(self.predictor_config[

+ "cpu_threads"])

+

+ if self.predictor_config["enable_mkldnn"]:

+ try:

+ # cache 10 different shapes for mkldnn to avoid memory leak

+ config.set_mkldnn_cache_capacity(10)

+ config.enable_mkldnn()

+ except Exception as e:

+ logging.error(

+ "The current environment does not support `mkldnn`, so disable mkldnn."

+ )

+ config.disable_glog_info()

+ config.enable_memory_optim()

+ # use zero copy

+ config.switch_use_feed_fetch_ops(False)

+ predictor = create_predictor(config)

+ input_names = predictor.get_input_names()

+ output_names = predictor.get_output_names()

+ return predictor, input_names, output_names

+

+ def preprocess(self):

+ raise NotImplementedError

+

+ def postprocess(self):

+ raise NotImplementedError

+

+ def predict(self, img):

+ raise NotImplementedError

+

+

+class Detector(BasePredictor):

+ def __init__(self, det_config, predictor_config):

+ super().__init__(predictor_config)

+ self.det_config = det_config

+ self.target_size = self.det_config["target_size"]

+ self.thresh = self.det_config["thresh"]

+

+ def preprocess(self, img):

+ resize_h, resize_w = self.target_size

+ img_shape = img.shape

+ img_scale_x = resize_w / img_shape[1]

+ img_scale_y = resize_h / img_shape[0]

+ img = cv2.resize(

+ img, None, None, fx=img_scale_x, fy=img_scale_y, interpolation=1)

+ img = normalize_image(

+ img,

+ scale=1. / 255.,

+ mean=[0.485, 0.456, 0.406],

+ std=[0.229, 0.224, 0.225],

+ order='hwc')

+ img_info = {}

+ img_info["im_shape"] = np.array(

+ img.shape[:2], dtype=np.float32)[np.newaxis, :]

+ img_info["scale_factor"] = np.array(

+ [img_scale_y, img_scale_x], dtype=np.float32)[np.newaxis, :]

+

+ img = img.transpose((2, 0, 1)).copy()

+ img_info["image"] = img[np.newaxis, :, :, :]

+ return img_info

+

+ def postprocess(self, np_boxes):

+ expect_boxes = (np_boxes[:, 1] > self.thresh) & (np_boxes[:, 0] > -1)

+ return np_boxes[expect_boxes, :]

+

+ def predict(self, img):

+ inputs = self.preprocess(img)

+ for input_name in self.input_names:

+ input_tensor = self.predictor.get_input_handle(input_name)

+ input_tensor.copy_from_cpu(inputs[input_name])

+ self.predictor.run()

+ output_tensor = self.predictor.get_output_handle(self.output_names[0])

+ np_boxes = output_tensor.copy_to_cpu()

+ # boxes_num = self.detector.get_output_handle(self.detector_output_names[1])

+ # np_boxes_num = boxes_num.copy_to_cpu()

+ box_list = self.postprocess(np_boxes)

+ return box_list

+

+class FaceDetector(object):

+ def __init__(self, args, print_info=True):

+ super().__init__()

+ if print_info:

+ print_config(args)

+

+ self.font_path = os.path.join(

+ os.path.abspath(os.path.dirname(__file__)),

+ "SourceHanSansCN-Medium.otf")

+ self.args = args

+

+ predictor_config = {

+ "use_gpu": args.use_gpu,

+ "enable_mkldnn": args.enable_mkldnn,

+ "cpu_threads": args.cpu_threads

+ }

+

+ model_file_path, params_file_path = check_model_file(

+ args.det_model)

+ det_config = {"thresh": args.det_thresh, "target_size": [640, 640]}

+ predictor_config["model_file"] = model_file_path

+ predictor_config["params_file"] = params_file_path

+ self.det_predictor = Detector(det_config, predictor_config)

+ self.color_map = ColorMap(100)

+

+ def preprocess(self, img):

+ img = img.astype(np.float32, copy=False)

+ return img

+

+ def draw(self, img, box_list, labels):

+ self.color_map.update(labels)

+ im = Image.fromarray(img)

+ draw = ImageDraw.Draw(im)

+

+ for i, dt in enumerate(box_list):

+ bbox, score = dt[2:], dt[1]

+ label = labels[i]

+ color = tuple(self.color_map[label])

+

+ xmin, ymin, xmax, ymax = bbox

+

+ font_size = max(int((xmax - xmin) // 6), 10)

+ font = ImageFont.truetype(self.font_path, font_size)

+

+ text = "{} {:.4f}".format(label, score)

+ th = sum(font.getmetrics())

+ tw = font.getsize(text)[0]

+ start_y = max(0, ymin - th)

+

+ draw.rectangle(

+ [(xmin, start_y), (xmin + tw + 1, start_y + th)], fill=color)

+ draw.text(

+ (xmin + 1, start_y),

+ text,

+ fill=(255, 255, 255),

+ font=font,

+ anchor="la")

+ draw.rectangle(

+ [(xmin, ymin), (xmax, ymax)], width=2, outline=color)

+ return np.array(im)

+

+ def predict_np_img(self, img):

+ input_img = self.preprocess(img)

+ box_list = None

+ np_feature = None

+ if hasattr(self, "det_predictor"):

+ box_list = self.det_predictor.predict(input_img)

+ return box_list, np_feature

+

+ def init_reader_writer(self, input_data):

+ if isinstance(input_data, np.ndarray):

+ self.input_reader = ImageReader(input_data)

+ if hasattr(self, "det_predictor"):

+ self.output_writer = ImageWriter(self.args.output)

+ elif isinstance(input_data, str):

+ if input_data.endswith('mp4'):

+ self.input_reader = VideoReader(input_data)

+ info = self.input_reader.get_info()

+ self.output_writer = VideoWriter(self.args.output, info)

+ else:

+ self.input_reader = ImageReader(input_data)

+ if hasattr(self, "det_predictor"):

+ self.output_writer = ImageWriter(self.args.output)

+ else:

+ raise Exception(

+ f"The input data error. Only support path of image or video(.mp4) and dirctory that include images."

+ )

+

+ def predict(self, input_data, print_info=False):

+ """Predict input_data.

+

+ Args:

+ input_data (str | NumPy.array): The path of image, or the derectory including images, or the image data in NumPy.array format.

+ print_info (bool, optional): Wheather to print the prediction results. Defaults to False.

+

+ Yields:

+ dict: {

+ "box_list": The prediction results of detection.

+ "features": The output of recognition.

+ "labels": The results of retrieval.

+ }

+ """

+ self.init_reader_writer(input_data)

+ for img, file_name in self.input_reader:

+ if img is None:

+ logging.warning(f"Error in reading img {file_name}! Ignored.")

+ continue

+ box_list, np_feature = self.predict_np_img(img)

+ labels = ["face"] * len(box_list)

+ if box_list is not None:

+ result = self.draw(img, box_list, labels=labels)

+ self.output_writer.write(result, file_name)

+ if print_info:

+ logging.info(f"File: {file_name}, predict label(s): {labels}")

+ yield {

+ "box_list": box_list,

+ "features": np_feature,

+ "labels": labels

+ }

+ logging.info(f"Predict complete!")

+

+

+# for CLI

+def main(args=None):

+ logging.basicConfig(level=logging.INFO)

+

+ args = parser().parse_args()

+ predictor = FaceDetector(args)

+ res = predictor.predict(args.input, print_info=True)

+ for _ in res:

+ pass

+

+

+if __name__ == "__main__":

+ main()

diff --git a/insightface/detection/retinaface/Makefile b/insightface/detection/retinaface/Makefile

new file mode 100644

index 0000000000000000000000000000000000000000..66a3ed047a49124b921548dbc337946202fedbf7

--- /dev/null

+++ b/insightface/detection/retinaface/Makefile

@@ -0,0 +1,6 @@

+all:

+ cd rcnn/cython/; python setup.py build_ext --inplace; rm -rf build; cd ../../

+ cd rcnn/pycocotools/; python setup.py build_ext --inplace; rm -rf build; cd ../../

+clean:

+ cd rcnn/cython/; rm *.so *.c *.cpp; cd ../../

+ cd rcnn/pycocotools/; rm *.so; cd ../../

diff --git a/insightface/detection/retinaface/README.md b/insightface/detection/retinaface/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..6665d3b12fe6c5d500de9272c4ac564347c46da7

--- /dev/null

+++ b/insightface/detection/retinaface/README.md

@@ -0,0 +1,86 @@

+# RetinaFace Face Detector

+

+## Introduction

+

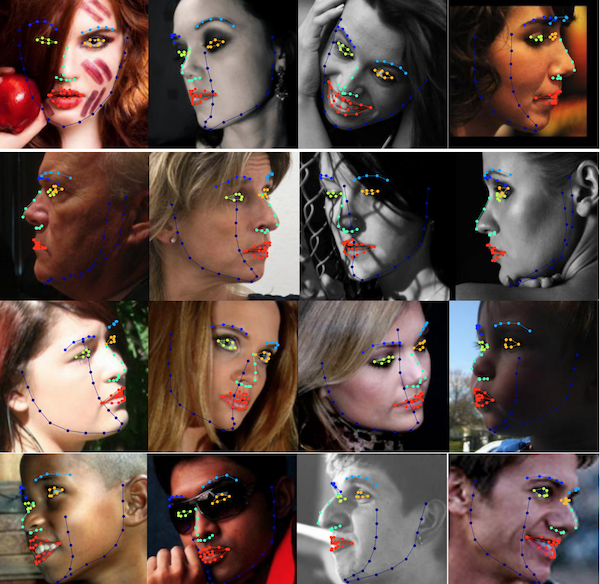

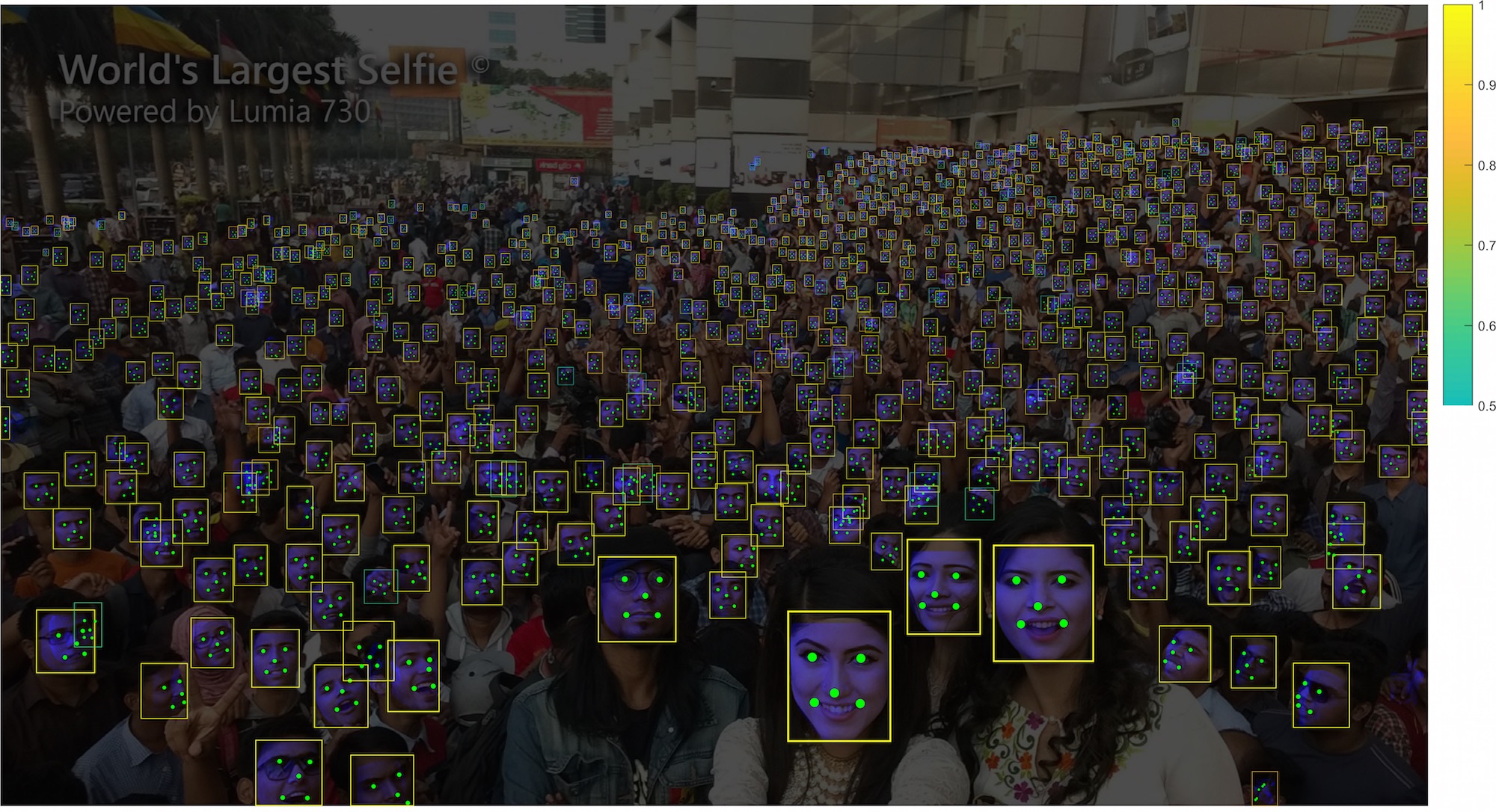

+RetinaFace is a practical single-stage [SOTA](http://shuoyang1213.me/WIDERFACE/WiderFace_Results.html) face detector which is initially introduced in [arXiv technical report](https://arxiv.org/abs/1905.00641) and then accepted by [CVPR 2020](https://openaccess.thecvf.com/content_CVPR_2020/html/Deng_RetinaFace_Single-Shot_Multi-Level_Face_Localisation_in_the_Wild_CVPR_2020_paper.html).

+

+

+

+

+

+## Data

+

+1. Download our annotations (face bounding boxes & five facial landmarks) from [baidu cloud](https://pan.baidu.com/s/1Laby0EctfuJGgGMgRRgykA) or [gdrive](https://drive.google.com/file/d/1BbXxIiY-F74SumCNG6iwmJJ5K3heoemT/view?usp=sharing)

+

+2. Download the [WIDERFACE](http://shuoyang1213.me/WIDERFACE/WiderFace_Results.html) dataset.

+

+3. Organise the dataset directory under ``insightface/RetinaFace/`` as follows:

+

+```Shell

+ data/retinaface/

+ train/

+ images/

+ label.txt

+ val/

+ images/

+ label.txt

+ test/

+ images/

+ label.txt

+```

+

+## Install

+

+1. Install MXNet with GPU support.

+2. Install Deformable Convolution V2 operator from [Deformable-ConvNets](https://github.com/msracver/Deformable-ConvNets) if you use the DCN based backbone.

+3. Type ``make`` to build cxx tools.

+

+## Training

+

+Please check ``train.py`` for training.

+

+1. Copy ``rcnn/sample_config.py`` to ``rcnn/config.py``

+2. Download ImageNet pretrained models and put them into ``model/``(these models are not for detection testing/inferencing but training and parameters initialization).

+

+ ImageNet ResNet50 ([baidu cloud](https://pan.baidu.com/s/1WAkU9ZA_j-OmzO-sdk9whA) and [googledrive](https://drive.google.com/file/d/1ibQOCG4eJyTrlKAJdnioQ3tyGlnbSHjy/view?usp=sharing)).

+

+ ImageNet ResNet152 ([baidu cloud](https://pan.baidu.com/s/1nzQ6CzmdKFzg8bM8ChZFQg) and [googledrive](https://drive.google.com/file/d/1FEjeiIB4u-XBYdASgkyx78pFybrlKUA4/view?usp=sharing)).

+

+3. Start training with ``CUDA_VISIBLE_DEVICES='0,1,2,3' python -u train.py --prefix ./model/retina --network resnet``.

+Before training, you can check the ``resnet`` network configuration (e.g. pretrained model path, anchor setting and learning rate policy etc..) in ``rcnn/config.py``.

+4. We have two predefined network settings named ``resnet``(for medium and large models) and ``mnet``(for lightweight models).

+

+## Testing

+

+Please check ``test.py`` for testing.

+

+## RetinaFace Pretrained Models

+

+Pretrained Model: RetinaFace-R50 ([baidu cloud](https://pan.baidu.com/s/1C6nKq122gJxRhb37vK0_LQ) or [googledrive](https://drive.google.com/file/d/1_DKgGxQWqlTqe78pw0KavId9BIMNUWfu/view?usp=sharing)) is a medium size model with ResNet50 backbone.

+It can output face bounding boxes and five facial landmarks in a single forward pass.

+

+WiderFace validation mAP: Easy 96.5, Medium 95.6, Hard 90.4.

+

+To avoid the confliction with the WiderFace Challenge (ICCV 2019), we postpone the release time of our best model.

+

+## Third-party

+

+[yangfly](https://github.com/yangfly): RetinaFace-MobileNet0.25 ([baidu cloud](https://pan.baidu.com/s/1P1ypO7VYUbNAezdvLm2m9w):nzof).

+WiderFace validation mAP: Hard 82.5. (model size: 1.68Mb)

+

+[clancylian](https://github.com/clancylian/retinaface): C++ version

+

+RetinaFace in [modelscope](https://modelscope.cn/models/damo/cv_resnet50_face-detection_retinaface/summary)

+

+## References

+

+```

+@inproceedings{Deng2020CVPR,

+title = {RetinaFace: Single-Shot Multi-Level Face Localisation in the Wild},

+author = {Deng, Jiankang and Guo, Jia and Ververas, Evangelos and Kotsia, Irene and Zafeiriou, Stefanos},

+booktitle = {CVPR},

+year = {2020}

+}

+```

+

+

diff --git a/insightface/detection/retinaface/rcnn/PY_OP/__init__.py b/insightface/detection/retinaface/rcnn/PY_OP/__init__.py

new file mode 100755

index 0000000000000000000000000000000000000000..e69de29bb2d1d6434b8b29ae775ad8c2e48c5391

diff --git a/insightface/detection/retinaface/rcnn/PY_OP/cascade_refine.py b/insightface/detection/retinaface/rcnn/PY_OP/cascade_refine.py

new file mode 100644

index 0000000000000000000000000000000000000000..e3c6556fab6f8ea13e4a214d88a04503d7c51540

--- /dev/null

+++ b/insightface/detection/retinaface/rcnn/PY_OP/cascade_refine.py

@@ -0,0 +1,518 @@

+from __future__ import print_function

+import sys

+import mxnet as mx

+import numpy as np

+import datetime

+from distutils.util import strtobool

+from ..config import config, generate_config

+from ..processing.generate_anchor import generate_anchors, anchors_plane

+from ..processing.bbox_transform import bbox_overlaps, bbox_transform, landmark_transform

+

+STAT = {0: 0}

+STEP = 28800

+

+

+class CascadeRefineOperator(mx.operator.CustomOp):

+ def __init__(self, stride=0, network='', dataset='', prefix=''):

+ super(CascadeRefineOperator, self).__init__()

+ self.stride = int(stride)

+ self.prefix = prefix

+ generate_config(network, dataset)

+ self.mode = config.TRAIN.OHEM_MODE #0 for random 10:245, 1 for 10:246, 2 for 10:30, mode 1 for default

+ stride = self.stride

+ sstride = str(stride)

+ base_size = config.RPN_ANCHOR_CFG[sstride]['BASE_SIZE']

+ allowed_border = config.RPN_ANCHOR_CFG[sstride]['ALLOWED_BORDER']

+ ratios = config.RPN_ANCHOR_CFG[sstride]['RATIOS']

+ scales = config.RPN_ANCHOR_CFG[sstride]['SCALES']

+ base_anchors = generate_anchors(base_size=base_size,

+ ratios=list(ratios),

+ scales=np.array(scales,

+ dtype=np.float32),

+ stride=stride,

+ dense_anchor=config.DENSE_ANCHOR)

+ num_anchors = base_anchors.shape[0]

+ feat_height, feat_width = config.SCALES[0][

+ 0] // self.stride, config.SCALES[0][0] // self.stride

+ feat_stride = self.stride

+

+ A = num_anchors

+ K = feat_height * feat_width

+ self.A = A

+

+ all_anchors = anchors_plane(feat_height, feat_width, feat_stride,

+ base_anchors)

+ all_anchors = all_anchors.reshape((K * A, 4))

+ self.ori_anchors = all_anchors

+ self.nbatch = 0

+ global STAT

+ for k in config.RPN_FEAT_STRIDE:

+ STAT[k] = [0, 0, 0]

+

+ def apply_bbox_pred(self, bbox_pred, ind=None):

+ box_deltas = bbox_pred

+ box_deltas[:, 0::4] = box_deltas[:, 0::4] * config.TRAIN.BBOX_STDS[0]

+ box_deltas[:, 1::4] = box_deltas[:, 1::4] * config.TRAIN.BBOX_STDS[1]

+ box_deltas[:, 2::4] = box_deltas[:, 2::4] * config.TRAIN.BBOX_STDS[2]

+ box_deltas[:, 3::4] = box_deltas[:, 3::4] * config.TRAIN.BBOX_STDS[3]

+ if ind is None:

+ boxes = self.ori_anchors

+ else:

+ boxes = self.ori_anchors[ind]

+ #print('in apply',self.stride, box_deltas.shape, boxes.shape)

+

+ widths = boxes[:, 2] - boxes[:, 0] + 1.0

+ heights = boxes[:, 3] - boxes[:, 1] + 1.0

+ ctr_x = boxes[:, 0] + 0.5 * (widths - 1.0)

+ ctr_y = boxes[:, 1] + 0.5 * (heights - 1.0)

+

+ dx = box_deltas[:, 0:1]

+ dy = box_deltas[:, 1:2]

+ dw = box_deltas[:, 2:3]

+ dh = box_deltas[:, 3:4]

+

+ pred_ctr_x = dx * widths[:, np.newaxis] + ctr_x[:, np.newaxis]

+ pred_ctr_y = dy * heights[:, np.newaxis] + ctr_y[:, np.newaxis]

+ pred_w = np.exp(dw) * widths[:, np.newaxis]

+ pred_h = np.exp(dh) * heights[:, np.newaxis]

+

+ pred_boxes = np.zeros(box_deltas.shape)

+ # x1

+ pred_boxes[:, 0:1] = pred_ctr_x - 0.5 * (pred_w - 1.0)

+ # y1

+ pred_boxes[:, 1:2] = pred_ctr_y - 0.5 * (pred_h - 1.0)

+ # x2

+ pred_boxes[:, 2:3] = pred_ctr_x + 0.5 * (pred_w - 1.0)

+ # y2

+ pred_boxes[:, 3:4] = pred_ctr_y + 0.5 * (pred_h - 1.0)

+ return pred_boxes

+

+ def assign_anchor_fpn(self,

+ gt_label,

+ anchors,

+ landmark=False,

+ prefix='face'):

+ IOU = config.TRAIN.CASCADE_OVERLAP

+

+ gt_boxes = gt_label['gt_boxes']

+ #_label = gt_label['gt_label']

+ # clean up boxes

+ #nonneg = np.where(_label[:] != -1)[0]

+ #gt_boxes = gt_boxes[nonneg]

+ if landmark:

+ gt_landmarks = gt_label['gt_landmarks']

+ #gt_landmarks = gt_landmarks[nonneg]

+ assert gt_boxes.shape[0] == gt_landmarks.shape[0]

+ #scales = np.array(scales, dtype=np.float32)

+ feat_strides = config.RPN_FEAT_STRIDE

+ bbox_pred_len = 4

+ landmark_pred_len = 10

+ num_anchors = anchors.shape[0]

+ A = self.A

+ total_anchors = num_anchors

+ feat_height, feat_width = config.SCALES[0][

+ 0] // self.stride, config.SCALES[0][0] // self.stride

+

+ #print('total_anchors', anchors.shape[0], len(inds_inside), file=sys.stderr)

+

+ # label: 1 is positive, 0 is negative, -1 is dont care

+ labels = np.empty((num_anchors, ), dtype=np.float32)

+ labels.fill(-1)

+ #print('BB', anchors.shape, len(inds_inside))

+ #print('gt_boxes', gt_boxes.shape, file=sys.stderr)

+ #tb = datetime.datetime.now()

+ #self._times[0] += (tb-ta).total_seconds()

+ #ta = datetime.datetime.now()

+

+ if gt_boxes.size > 0:

+ # overlap between the anchors and the gt boxes

+ # overlaps (ex, gt)

+ overlaps = bbox_overlaps(anchors.astype(np.float),

+ gt_boxes.astype(np.float))

+ argmax_overlaps = overlaps.argmax(axis=1)

+ #print('AAA', argmax_overlaps.shape)

+ max_overlaps = overlaps[np.arange(num_anchors), argmax_overlaps]

+ gt_argmax_overlaps = overlaps.argmax(axis=0)

+ gt_max_overlaps = overlaps[gt_argmax_overlaps,

+ np.arange(overlaps.shape[1])]

+ gt_argmax_overlaps = np.where(overlaps == gt_max_overlaps)[0]

+

+ if not config.TRAIN.RPN_CLOBBER_POSITIVES:

+ # assign bg labels first so that positive labels can clobber them

+ labels[max_overlaps < IOU[0]] = 0

+

+ # fg label: for each gt, anchor with highest overlap

+ if config.TRAIN.RPN_FORCE_POSITIVE:

+ labels[gt_argmax_overlaps] = 1

+

+ # fg label: above threshold IoU

+ labels[max_overlaps >= IOU[1]] = 1

+

+ if config.TRAIN.RPN_CLOBBER_POSITIVES:

+ # assign bg labels last so that negative labels can clobber positives

+ labels[max_overlaps < IOU[0]] = 0

+ else:

+ labels[:] = 0

+ fg_inds = np.where(labels == 1)[0]

+ #print('fg count', len(fg_inds))

+

+ # subsample positive labels if we have too many

+ if config.TRAIN.RPN_ENABLE_OHEM == 0:

+ fg_inds = np.where(labels == 1)[0]

+ num_fg = int(config.TRAIN.RPN_FG_FRACTION *

+ config.TRAIN.RPN_BATCH_SIZE)

+ if len(fg_inds) > num_fg:

+ disable_inds = npr.choice(fg_inds,

+ size=(len(fg_inds) - num_fg),

+ replace=False)

+ if DEBUG:

+ disable_inds = fg_inds[:(len(fg_inds) - num_fg)]

+ labels[disable_inds] = -1

+

+ # subsample negative labels if we have too many

+ num_bg = config.TRAIN.RPN_BATCH_SIZE - np.sum(labels == 1)

+ bg_inds = np.where(labels == 0)[0]

+ if len(bg_inds) > num_bg:

+ disable_inds = npr.choice(bg_inds,

+ size=(len(bg_inds) - num_bg),

+ replace=False)

+ if DEBUG:

+ disable_inds = bg_inds[:(len(bg_inds) - num_bg)]

+ labels[disable_inds] = -1

+

+ #fg_inds = np.where(labels == 1)[0]

+ #num_fg = len(fg_inds)

+ #num_bg = num_fg*int(1.0/config.TRAIN.RPN_FG_FRACTION-1)

+

+ #bg_inds = np.where(labels == 0)[0]

+ #if len(bg_inds) > num_bg:

+ # disable_inds = npr.choice(bg_inds, size=(len(bg_inds) - num_bg), replace=False)

+ # if DEBUG:

+ # disable_inds = bg_inds[:(len(bg_inds) - num_bg)]

+ # labels[disable_inds] = -1

+ else:

+ fg_inds = np.where(labels == 1)[0]

+ num_fg = len(fg_inds)

+ bg_inds = np.where(labels == 0)[0]

+ num_bg = len(bg_inds)

+

+ #print('anchor stat', num_fg, num_bg)

+

+ bbox_targets = np.zeros((num_anchors, bbox_pred_len), dtype=np.float32)

+ if gt_boxes.size > 0:

+ #print('GT', gt_boxes.shape, gt_boxes[argmax_overlaps, :4].shape)

+ bbox_targets[:, :] = bbox_transform(anchors,

+ gt_boxes[argmax_overlaps, :])

+ #bbox_targets[:,4] = gt_blur

+ #tb = datetime.datetime.now()

+ #self._times[1] += (tb-ta).total_seconds()

+ #ta = datetime.datetime.now()

+

+ bbox_weights = np.zeros((num_anchors, bbox_pred_len), dtype=np.float32)

+ #bbox_weights[labels == 1, :] = np.array(config.TRAIN.RPN_BBOX_WEIGHTS)

+ bbox_weights[labels == 1, 0:4] = 1.0

+ if bbox_pred_len > 4:

+ bbox_weights[labels == 1, 4:bbox_pred_len] = 0.1

+

+ if landmark:

+ landmark_targets = np.zeros((num_anchors, landmark_pred_len),

+ dtype=np.float32)

+ landmark_weights = np.zeros((num_anchors, landmark_pred_len),

+ dtype=np.float32)

+ #landmark_weights[labels == 1, :] = np.array(config.TRAIN.RPN_LANDMARK_WEIGHTS)

+ if landmark_pred_len == 10:

+ landmark_weights[labels == 1, :] = 1.0

+ elif landmark_pred_len == 15:

+ v = [1.0, 1.0, 0.1] * 5

+ assert len(v) == 15

+ landmark_weights[labels == 1, :] = np.array(v)

+ else:

+ assert False

+ #TODO here

+ if gt_landmarks.size > 0:

+ #print('AAA',argmax_overlaps)

+ a_landmarks = gt_landmarks[argmax_overlaps, :, :]

+ landmark_targets[:] = landmark_transform(anchors, a_landmarks)

+ invalid = np.where(a_landmarks[:, 0, 2] < 0.0)[0]

+ #assert len(invalid)==0

+ #landmark_weights[invalid, :] = np.array(config.TRAIN.RPN_INVALID_LANDMARK_WEIGHTS)

+ landmark_weights[invalid, :] = 0.0

+ #tb = datetime.datetime.now()

+ #self._times[2] += (tb-ta).total_seconds()

+ #ta = datetime.datetime.now()

+ bbox_targets[:,

+ 0::4] = bbox_targets[:, 0::4] / config.TRAIN.BBOX_STDS[0]

+ bbox_targets[:,

+ 1::4] = bbox_targets[:, 1::4] / config.TRAIN.BBOX_STDS[1]

+ bbox_targets[:,

+ 2::4] = bbox_targets[:, 2::4] / config.TRAIN.BBOX_STDS[2]

+ bbox_targets[:,

+ 3::4] = bbox_targets[:, 3::4] / config.TRAIN.BBOX_STDS[3]

+

+ #print('CC', anchors.shape, len(inds_inside))

+ label = {}

+ _label = labels.reshape(

+ (1, feat_height, feat_width, A)).transpose(0, 3, 1, 2)

+ _label = _label.reshape((1, A * feat_height * feat_width))

+ bbox_target = bbox_targets.reshape(

+ (1, feat_height * feat_width,

+ A * bbox_pred_len)).transpose(0, 2, 1)

+ bbox_weight = bbox_weights.reshape(

+ (1, feat_height * feat_width, A * bbox_pred_len)).transpose(

+ (0, 2, 1))

+ label['%s_label' % prefix] = _label[0]

+ label['%s_bbox_target' % prefix] = bbox_target[0]

+ label['%s_bbox_weight' % prefix] = bbox_weight[0]

+ if landmark:

+ landmark_target = landmark_target.reshape(

+ (1, feat_height * feat_width,

+ A * landmark_pred_len)).transpose(0, 2, 1)

+ landmark_target /= config.TRAIN.LANDMARK_STD

+ landmark_weight = landmark_weight.reshape(

+ (1, feat_height * feat_width,

+ A * landmark_pred_len)).transpose((0, 2, 1))

+ label['%s_landmark_target' % prefix] = landmark_target[0]

+ label['%s_landmark_weight' % prefix] = landmark_weight[0]

+

+ return label

+

+ def forward(self, is_train, req, in_data, out_data, aux):

+ self.nbatch += 1

+ ta = datetime.datetime.now()

+ global STAT

+ A = config.NUM_ANCHORS

+

+ cls_label_t0 = in_data[0].asnumpy() #BS, AHW

+ cls_score_t0 = in_data[1].asnumpy() #BS, C, AHW

+ cls_score = in_data[2].asnumpy() #BS, C, AHW

+ #labels_raw = in_data[1].asnumpy() #BS, ANCHORS

+ bbox_pred_t0 = in_data[3].asnumpy() #BS, AC, HW

+ bbox_target_t0 = in_data[4].asnumpy() #BS, AC, HW

+ cls_label_raw = in_data[5].asnumpy() #BS, AHW

+ gt_boxes = in_data[6].asnumpy() #BS, N, C=4+1

+ #imgs = in_data[7].asnumpy().astype(np.uint8)

+

+ batch_size = cls_score.shape[0]

+ num_anchors = cls_score.shape[2]

+ #print('in cas', cls_score.shape, bbox_target.shape)

+

+ labels_out = np.zeros(shape=(batch_size, num_anchors),

+ dtype=np.float32)

+ bbox_target_out = np.zeros(shape=bbox_target_t0.shape,

+ dtype=np.float32)

+ anchor_weight = np.zeros((batch_size, num_anchors, 1),

+ dtype=np.float32)

+ valid_count = np.zeros((batch_size, 1), dtype=np.float32)

+

+ bbox_pred_t0 = bbox_pred_t0.transpose((0, 2, 1))

+ bbox_pred_t0 = bbox_pred_t0.reshape(

+ (bbox_pred_t0.shape[0], -1, 4)) #BS, H*W*A, C

+ bbox_target_t0 = bbox_target_t0.transpose((0, 2, 1))

+ bbox_target_t0 = bbox_target_t0.reshape(

+ (bbox_target_t0.shape[0], -1, 4))

+

+ #print('anchor_weight', anchor_weight.shape)

+

+ #assert labels.shape[0]==1

+ #assert cls_score.shape[0]==1

+ #assert bbox_weight.shape[0]==1

+ #print('shape', cls_score.shape, labels.shape, file=sys.stderr)

+ #print('bbox_weight 0', bbox_weight.shape, file=sys.stderr)

+ #bbox_weight = np.zeros( (labels_raw.shape[0], labels_raw.shape[1], 4), dtype=np.float32)

+ _stat = [0, 0, 0]

+ SEL_TOPK = config.TRAIN.RPN_BATCH_SIZE

+ FAST = False

+ for ibatch in range(batch_size):

+ #bgr = imgs[ibatch].transpose( (1,2,0) )[:,:,::-1]

+

+ if not FAST:

+ _gt_boxes = gt_boxes[ibatch] #N, 4+1

+ _gtind = len(np.where(_gt_boxes[:, 4] >= 0)[0])

+ #print('gt num', _gtind)

+ _gt_boxes = _gt_boxes[0:_gtind, :]

+

+ #anchors_t1 = self.ori_anchors.copy()

+ #_cls_label_raw = cls_label_raw[ibatch] #AHW

+ #_cls_label_raw = _cls_label_raw.reshape( (A, -1) ).transpose( (1,0) ).reshape( (-1,) ) #HWA

+ #fg_ind_raw = np.where(_cls_label_raw>0)[0]

+ #_bbox_target_t0 = bbox_target_t0[ibatch][fg_ind_raw]

+ #_bbox_pred_t0 = bbox_pred_t0[ibatch][fg_ind_raw]

+ #anchors_t1_pos = self.apply_bbox_pred(_bbox_pred_t0, ind=fg_ind_raw)

+ #anchors_t1[fg_ind_raw,:] = anchors_t1_pos

+

+ anchors_t1 = self.apply_bbox_pred(bbox_pred_t0[ibatch])

+ assert anchors_t1.shape[0] == self.ori_anchors.shape[0]

+

+ #for i in range(_gt_boxes.shape[0]):

+ # box = _gt_boxes[i].astype(np.int)

+ # print('%d: gt%d'%(self.nbatch, i), box)

+ # #color = (0,0,255)

+ # #cv2.rectangle(img, (box[0], box[1]), (box[2], box[3]), color, 2)

+ #for i in range(anchors_t1.shape[0]):

+ # box1 = self.ori_anchors[i].astype(np.int)

+ # box2 = anchors_t1[i].astype(np.int)

+ # print('%d %d: anchorscompare %d'%(self.nbatch, self.stride, i), box1, box2)

+ #color = (255,255,0)

+ #cv2.rectangle(img, (box[0], box[1]), (box[2], box[3]), color, 2)

+ #filename = "./debug/%d_%d_%d.jpg"%(self.nbatch, ibatch, stride)

+ #cv2.imwrite(filename, img)

+ #print(filename)

+ #gt_label = {'gt_boxes': gt_anchors, 'gt_label' : labels_raw[ibatch]}

+ gt_label = {'gt_boxes': _gt_boxes}

+ new_label_dict = self.assign_anchor_fpn(gt_label,

+ anchors_t1,

+ False,

+ prefix=self.prefix)

+ labels = new_label_dict['%s_label' % self.prefix] #AHW

+ new_bbox_target = new_label_dict['%s_bbox_target' %

+ self.prefix] #AC,HW

+ #print('assign ret', labels.shape, new_bbox_target.shape)

+ _anchor_weight = np.zeros((num_anchors, 1), dtype=np.float32)

+ fg_score = cls_score[ibatch, 1, :] - cls_score[ibatch, 0, :]

+ fg_inds = np.where(labels > 0)[0]

+ num_fg = int(config.TRAIN.RPN_FG_FRACTION *

+ config.TRAIN.RPN_BATCH_SIZE)

+ origin_num_fg = len(fg_inds)

+ #continue

+ #print('cas fg', len(fg_inds), num_fg, file=sys.stderr)

+ if len(fg_inds) > num_fg:

+ if self.mode == 0:

+ disable_inds = np.random.choice(fg_inds,

+ size=(len(fg_inds) -

+ num_fg),

+ replace=False)

+ labels[disable_inds] = -1

+ else:

+ pos_ohem_scores = fg_score[fg_inds]

+ order_pos_ohem_scores = pos_ohem_scores.ravel(

+ ).argsort()

+ sampled_inds = fg_inds[order_pos_ohem_scores[:num_fg]]

+ labels[fg_inds] = -1

+ labels[sampled_inds] = 1

+

+ n_fg = np.sum(labels > 0)

+ fg_inds = np.where(labels > 0)[0]

+ num_bg = config.TRAIN.RPN_BATCH_SIZE - n_fg

+ if self.mode == 2:

+ num_bg = max(

+ 48, n_fg * int(1.0 / config.TRAIN.RPN_FG_FRACTION - 1))

+

+ bg_inds = np.where(labels == 0)[0]

+ origin_num_bg = len(bg_inds)

+ if num_bg == 0:

+ labels[bg_inds] = -1

+ elif len(bg_inds) > num_bg:

+ # sort ohem scores

+

+ if self.mode == 0:

+ disable_inds = np.random.choice(bg_inds,

+ size=(len(bg_inds) -

+ num_bg),

+ replace=False)

+ labels[disable_inds] = -1

+ else:

+ neg_ohem_scores = fg_score[bg_inds]

+ order_neg_ohem_scores = neg_ohem_scores.ravel(

+ ).argsort()[::-1]

+ sampled_inds = bg_inds[order_neg_ohem_scores[:num_bg]]

+ #print('sampled_inds_bg', sampled_inds, file=sys.stderr)

+ labels[bg_inds] = -1

+ labels[sampled_inds] = 0

+

+ if n_fg > 0:

+ order0_labels = labels.reshape((1, A, -1)).transpose(

+ (0, 2, 1)).reshape((-1, ))

+ bbox_fg_inds = np.where(order0_labels > 0)[0]

+ #print('bbox_fg_inds, order0 ', bbox_fg_inds, file=sys.stderr)

+ _anchor_weight[bbox_fg_inds, :] = 1.0

+ anchor_weight[ibatch] = _anchor_weight

+ valid_count[ibatch][0] = n_fg

+ labels_out[ibatch] = labels

+ #print('labels_out', self.stride, ibatch, labels)