diff --git a/README.md b/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..652ed8398d9a8e5eb6fc2ff05d9be05e27f38c48

--- /dev/null

+++ b/README.md

@@ -0,0 +1,15 @@

+

+---

+base_model: stabilityai/stable-diffusion-xl-base-1.0

+instance_prompt: a photo of sks dog

+tags:

+- text-to-image

+- diffusers

+- autotrain

+inference: true

+---

+

+# DreamBooth trained by AutoTrain

+

+Text encoder was not trained.

+

diff --git a/autotrain-advanced/.dockerignore b/autotrain-advanced/.dockerignore

new file mode 100644

index 0000000000000000000000000000000000000000..cd1d39f433137e1d62970ca6dfa338d60ae2189d

--- /dev/null

+++ b/autotrain-advanced/.dockerignore

@@ -0,0 +1,9 @@

+build/

+dist/

+logs/

+output/

+output2/

+test/

+test.py

+.DS_Store

+.vscode/

\ No newline at end of file

diff --git a/autotrain-advanced/.github/workflows/build_documentation.yml b/autotrain-advanced/.github/workflows/build_documentation.yml

new file mode 100644

index 0000000000000000000000000000000000000000..c43ccf01925ddf41669754ae0308686457f758a0

--- /dev/null

+++ b/autotrain-advanced/.github/workflows/build_documentation.yml

@@ -0,0 +1,19 @@

+name: Build documentation

+

+on:

+ push:

+ branches:

+ - main

+ - doc-builder*

+ - v*-release

+

+jobs:

+ build:

+ uses: huggingface/doc-builder/.github/workflows/build_main_documentation.yml@main

+ with:

+ commit_sha: ${{ github.sha }}

+ package: autotrain-advanced

+ package_name: autotrain

+ secrets:

+ token: ${{ secrets.HUGGINGFACE_PUSH }}

+ hf_token: ${{ secrets.HF_DOC_BUILD_PUSH }}

\ No newline at end of file

diff --git a/autotrain-advanced/.github/workflows/build_pr_documentation.yml b/autotrain-advanced/.github/workflows/build_pr_documentation.yml

new file mode 100644

index 0000000000000000000000000000000000000000..f5759a06761196262d0f69403bbddc39ddd1b4df

--- /dev/null

+++ b/autotrain-advanced/.github/workflows/build_pr_documentation.yml

@@ -0,0 +1,17 @@

+name: Build PR Documentation

+

+on:

+ pull_request:

+

+concurrency:

+ group: ${{ github.workflow }}-${{ github.head_ref || github.run_id }}

+ cancel-in-progress: true

+

+jobs:

+ build:

+ uses: huggingface/doc-builder/.github/workflows/build_pr_documentation.yml@main

+ with:

+ commit_sha: ${{ github.event.pull_request.head.sha }}

+ pr_number: ${{ github.event.number }}

+ package: autotrain-advanced

+ package_name: autotrain

diff --git a/autotrain-advanced/.github/workflows/code_quality.yml b/autotrain-advanced/.github/workflows/code_quality.yml

new file mode 100644

index 0000000000000000000000000000000000000000..9570ce96d9cfad0a3db247f60b7318b6ffebb38c

--- /dev/null

+++ b/autotrain-advanced/.github/workflows/code_quality.yml

@@ -0,0 +1,30 @@

+name: Code quality

+

+on:

+ push:

+ branches:

+ - main

+ pull_request:

+ branches:

+ - main

+ release:

+ types:

+ - created

+

+jobs:

+ check_code_quality:

+ name: Check code quality

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v2

+ - name: Set up Python 3.9

+ uses: actions/setup-python@v2

+ with:

+ python-version: 3.9

+ - name: Install dependencies

+ run: |

+ python -m pip install --upgrade pip

+ python -m pip install flake8 black isort

+ - name: Make quality

+ run: |

+ make quality

diff --git a/autotrain-advanced/.github/workflows/delete_doc_comment.yml b/autotrain-advanced/.github/workflows/delete_doc_comment.yml

new file mode 100644

index 0000000000000000000000000000000000000000..72801c856eb5155ccf321d63be37bd146aff260d

--- /dev/null

+++ b/autotrain-advanced/.github/workflows/delete_doc_comment.yml

@@ -0,0 +1,13 @@

+name: Delete doc comment

+

+on:

+ workflow_run:

+ workflows: ["Delete doc comment trigger"]

+ types:

+ - completed

+

+jobs:

+ delete:

+ uses: huggingface/doc-builder/.github/workflows/delete_doc_comment.yml@main

+ secrets:

+ comment_bot_token: ${{ secrets.COMMENT_BOT_TOKEN }}

\ No newline at end of file

diff --git a/autotrain-advanced/.github/workflows/delete_doc_comment_trigger.yml b/autotrain-advanced/.github/workflows/delete_doc_comment_trigger.yml

new file mode 100644

index 0000000000000000000000000000000000000000..5e39e253974df54fd284cf44bb1e52afbefecded

--- /dev/null

+++ b/autotrain-advanced/.github/workflows/delete_doc_comment_trigger.yml

@@ -0,0 +1,12 @@

+name: Delete doc comment trigger

+

+on:

+ pull_request:

+ types: [ closed ]

+

+

+jobs:

+ delete:

+ uses: huggingface/doc-builder/.github/workflows/delete_doc_comment_trigger.yml@main

+ with:

+ pr_number: ${{ github.event.number }}

\ No newline at end of file

diff --git a/autotrain-advanced/.github/workflows/tests.yml b/autotrain-advanced/.github/workflows/tests.yml

new file mode 100644

index 0000000000000000000000000000000000000000..2f6d41e65d6f8b067e954402c90fb83ebb908299

--- /dev/null

+++ b/autotrain-advanced/.github/workflows/tests.yml

@@ -0,0 +1,30 @@

+name: Tests

+

+on:

+ push:

+ branches:

+ - main

+ pull_request:

+ branches:

+ - main

+ release:

+ types:

+ - created

+

+jobs:

+ tests:

+ name: Run unit tests

+ runs-on: ubuntu-latest

+ steps:

+ - uses: actions/checkout@v2

+ - name: Set up Python 3.9

+ uses: actions/setup-python@v2

+ with:

+ python-version: 3.9

+ - name: Install dependencies

+ run: |

+ python -m pip install --upgrade pip

+ python -m pip install .[dev]

+ - name: Make test

+ run: |

+ make test

diff --git a/autotrain-advanced/.github/workflows/upload_pr_documentation.yml b/autotrain-advanced/.github/workflows/upload_pr_documentation.yml

new file mode 100644

index 0000000000000000000000000000000000000000..2bd49da63cc4d4ccec2a8730c2566585c2fb3e83

--- /dev/null

+++ b/autotrain-advanced/.github/workflows/upload_pr_documentation.yml

@@ -0,0 +1,16 @@

+name: Upload PR Documentation

+

+on:

+ workflow_run:

+ workflows: ["Build PR Documentation"]

+ types:

+ - completed

+

+jobs:

+ build:

+ uses: huggingface/doc-builder/.github/workflows/upload_pr_documentation.yml@main

+ with:

+ package_name: autotrain

+ secrets:

+ hf_token: ${{ secrets.HF_DOC_BUILD_PUSH }}

+ comment_bot_token: ${{ secrets.COMMENT_BOT_TOKEN }}

\ No newline at end of file

diff --git a/autotrain-advanced/.gitignore b/autotrain-advanced/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..9e10756026b04e5d579770041f0f71656b5b7e8e

--- /dev/null

+++ b/autotrain-advanced/.gitignore

@@ -0,0 +1,138 @@

+# Local stuff

+.DS_Store

+.vscode/

+test/

+test.py

+output/

+output2/

+logs/

+

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+pip-wheel-metadata/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

diff --git a/autotrain-advanced/Dockerfile b/autotrain-advanced/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..056d7f570ba8bfb7a6e2a298f585ab21f672b1eb

--- /dev/null

+++ b/autotrain-advanced/Dockerfile

@@ -0,0 +1,65 @@

+FROM nvidia/cuda:11.8.0-cudnn8-devel-ubuntu20.04

+

+ENV DEBIAN_FRONTEND=noninteractive \

+ TZ=UTC

+

+ENV PATH="${HOME}/miniconda3/bin:${PATH}"

+ARG PATH="${HOME}/miniconda3/bin:${PATH}"

+

+RUN mkdir -p /tmp/model

+RUN chown -R 1000:1000 /tmp/model

+RUN mkdir -p /tmp/data

+RUN chown -R 1000:1000 /tmp/data

+

+RUN apt-get update && \

+ apt-get upgrade -y && \

+ apt-get install -y \

+ build-essential \

+ cmake \

+ curl \

+ ca-certificates \

+ gcc \

+ git \

+ locales \

+ net-tools \

+ wget \

+ libpq-dev \

+ libsndfile1-dev \

+ git \

+ git-lfs \

+ libgl1 \

+ && rm -rf /var/lib/apt/lists/*

+

+

+RUN curl -s https://packagecloud.io/install/repositories/github/git-lfs/script.deb.sh | bash && \

+ git lfs install

+

+WORKDIR /app

+RUN mkdir -p /app/.cache

+ENV HF_HOME="/app/.cache"

+RUN chown -R 1000:1000 /app

+USER 1000

+ENV HOME=/app

+

+ENV PYTHONPATH=$HOME/app \

+ PYTHONUNBUFFERED=1 \

+ GRADIO_ALLOW_FLAGGING=never \

+ GRADIO_NUM_PORTS=1 \

+ GRADIO_SERVER_NAME=0.0.0.0 \

+ SYSTEM=spaces

+

+

+RUN wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-x86_64.sh \

+ && sh Miniconda3-latest-Linux-x86_64.sh -b -p /app/miniconda \

+ && rm -f Miniconda3-latest-Linux-x86_64.sh

+ENV PATH /app/miniconda/bin:$PATH

+

+RUN conda create -p /app/env -y python=3.9

+

+SHELL ["conda", "run","--no-capture-output", "-p","/app/env", "/bin/bash", "-c"]

+

+RUN conda install pytorch torchvision torchaudio pytorch-cuda=11.8 -c pytorch -c nvidia

+RUN pip install git+https://github.com/huggingface/peft.git

+COPY --chown=1000:1000 . /app/

+

+RUN pip install -e .

\ No newline at end of file

diff --git a/autotrain-advanced/LICENSE b/autotrain-advanced/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..7a4a3ea2424c09fbe48d455aed1eaa94d9124835

--- /dev/null

+++ b/autotrain-advanced/LICENSE

@@ -0,0 +1,202 @@

+

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

\ No newline at end of file

diff --git a/autotrain-advanced/Makefile b/autotrain-advanced/Makefile

new file mode 100644

index 0000000000000000000000000000000000000000..cc8e0146ceb1aae8fc271cd430678c96c26c800d

--- /dev/null

+++ b/autotrain-advanced/Makefile

@@ -0,0 +1,28 @@

+.PHONY: quality style test docker pip

+

+# Check that source code meets quality standards

+

+quality:

+ black --check --line-length 119 --target-version py38 .

+ isort --check-only .

+ flake8 --max-line-length 119

+

+# Format source code automatically

+

+style:

+ black --line-length 119 --target-version py38 .

+ isort .

+

+test:

+ pytest -sv ./src/

+

+docker:

+ docker build -t autotrain-advanced:latest .

+ docker tag autotrain-advanced:latest huggingface/autotrain-advanced:latest

+ docker push huggingface/autotrain-advanced:latest

+

+pip:

+ rm -rf build/

+ rm -rf dist/

+ python setup.py sdist bdist_wheel

+ twine upload dist/* --verbose

\ No newline at end of file

diff --git a/autotrain-advanced/README.md b/autotrain-advanced/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..6965d6c08c41bd72482bb7d087b2fe9b6cad1298

--- /dev/null

+++ b/autotrain-advanced/README.md

@@ -0,0 +1,13 @@

+# 🤗 AutoTrain Advanced

+

+AutoTrain Advanced: faster and easier training and deployments of state-of-the-art machine learning models

+

+## Installation

+

+You can install the AutoTrain Advanced Python package via pip. Please note that you will need Python >= 3.8 for AutoTrain Advanced to work properly.

+

+ pip install autotrain-advanced

+

+Please make sure that you have git lfs installed. Check out the instructions here: https://github.com/git-lfs/git-lfs/wiki/Installation

+

+## Coming Soon!

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/_toctree.yml b/autotrain-advanced/docs/source/_toctree.yml

new file mode 100644

index 0000000000000000000000000000000000000000..f00a96415d97f839239201d5c9dc51bb4c5e9e8f

--- /dev/null

+++ b/autotrain-advanced/docs/source/_toctree.yml

@@ -0,0 +1,28 @@

+- sections:

+ - local: index

+ title: 🤗 AutoTrain

+ - local: getting_started

+ title: Installation

+ - local: cost

+ title: How much does it cost?

+ - local: support

+ title: Get help and support

+ title: Get started

+- sections:

+ - local: model_choice

+ title: Model Selection

+ - local: param_choice

+ title: Parameter Selection

+ title: Selecting Models and Parameters

+- sections:

+ - local: text_classification

+ title: Text Classification

+ - local: llm_finetuning

+ title: LLM Finetuning

+ title: Text Tasks

+- sections:

+ - local: image_classification

+ title: Image Classification

+ - local: dreambooth

+ title: DreamBooth

+ title: Image Tasks

diff --git a/autotrain-advanced/docs/source/cost.mdx b/autotrain-advanced/docs/source/cost.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..bcfdad181a15514057310382a8e9239ab4f77ff6

--- /dev/null

+++ b/autotrain-advanced/docs/source/cost.mdx

@@ -0,0 +1,17 @@

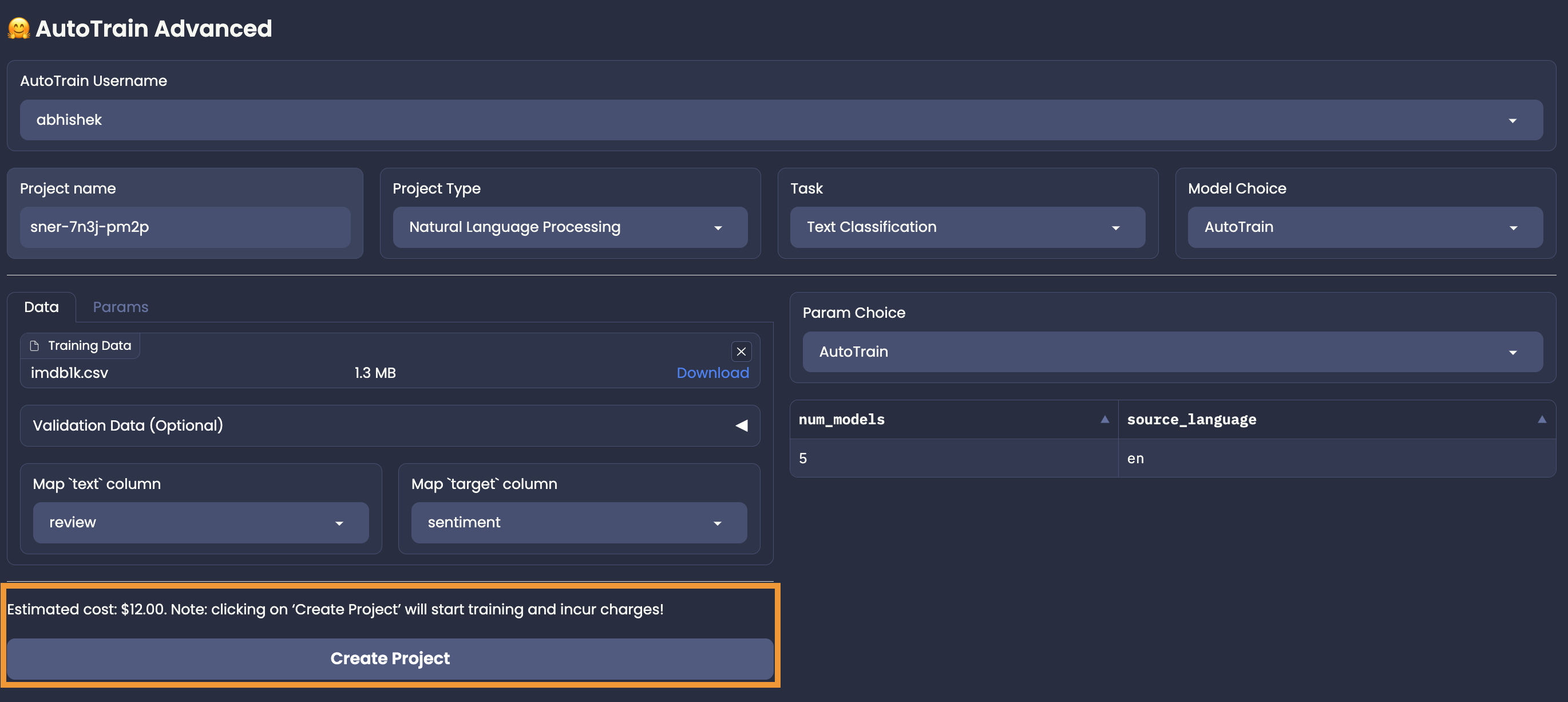

+# How much does it cost?

+

+AutoTrain provides you with the best models, which are deployable with just a few clicks.

+Unlike other services, we don't own your models. Once the training is done, you can download them and use them anywhere you want.

+

+Before you start training, you can see the estimated cost of training.

+

+A free tier is available for everyone. For a limited number of samples, you can train your models for free!

+If your dataset is larger, you will be presented with the estimated cost of training.

+Training will begin only after you confirm the payment.

+

+Please note that in order to use the paid tier of AutoTrain, you need to have a valid payment method on file.

+You can add your payment method in the [billing](https://huggingface.co/settings/billing) section.

+

+Estimated cost will be displayed in the UI as follows:

+

+

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/dreambooth.mdx b/autotrain-advanced/docs/source/dreambooth.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..f2f7232098c0c4d3a07fab1e9aa1623d15f3c9f8

--- /dev/null

+++ b/autotrain-advanced/docs/source/dreambooth.mdx

@@ -0,0 +1,18 @@

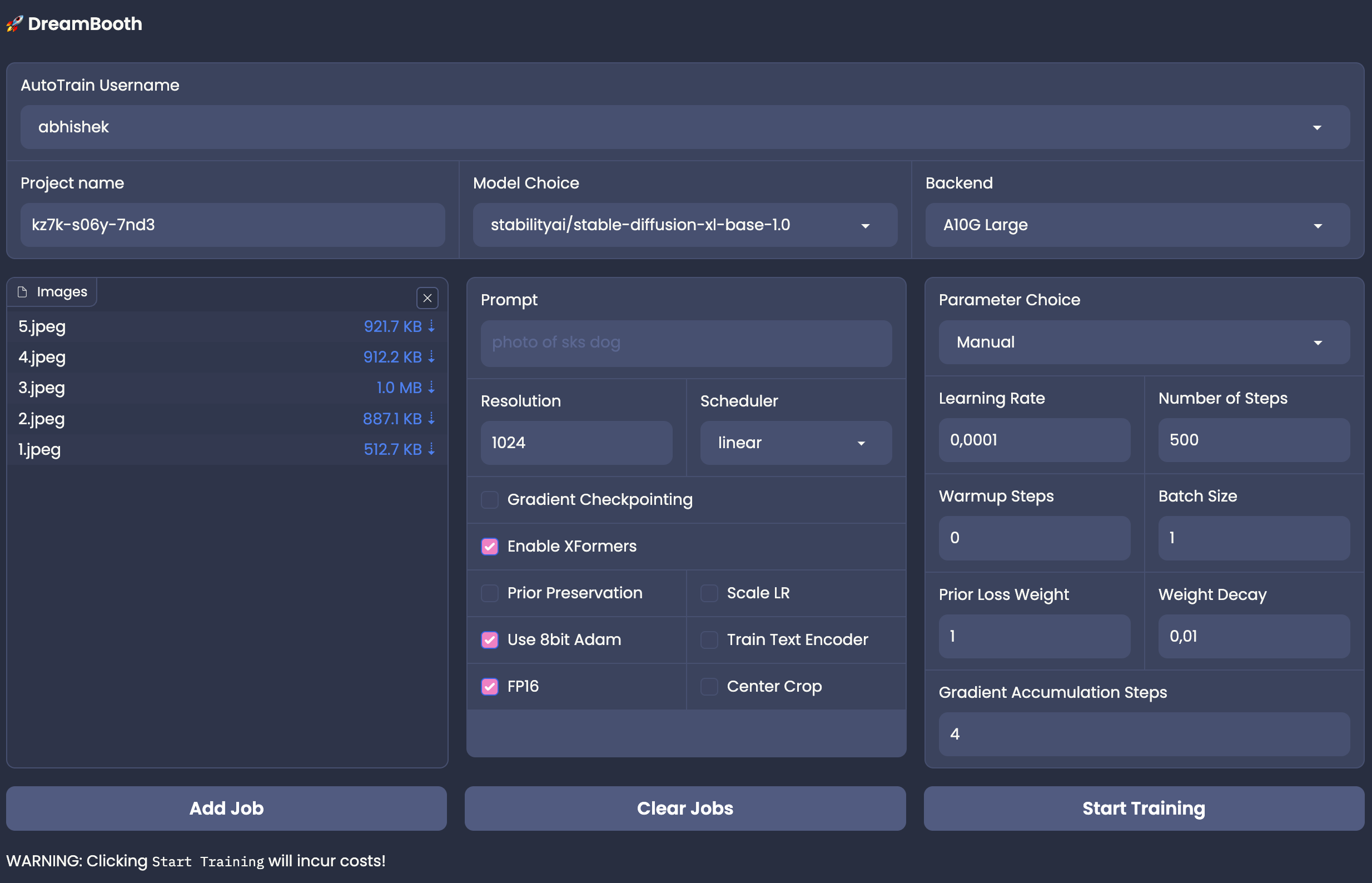

+# DreamBooth

+

+DreamBooth is a method to personalize text-to-image models like Stable Diffusion given just a few (3-5) images of a subject. It allows the model to generate contextualized images of the subject in different scenes, poses, and views.

+

+

+

+## Data Preparation

+

+The data format for DreamBooth training is simple. All you need is images of a concept (e.g. a person) and a concept token.

+

+

+

+To train a DreamBooth model, please select an appropriate model from the Hub. You can also let AutoTrain decide the best model for you!

+When choosing a model from the Hub, please make sure you select an image size that is compatible with the model.

+

+As with other tasks, you can select the parameters manually or let AutoTrain choose them automatically.

+

+For each concept that you want to train, you must have a concept token and concept images. A concept token is simply a word that does not appear in the dictionary.
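The pairing of a concept token with its concept images can be sketched in a few lines of Python. This is only an illustration and not part of AutoTrain: the helper name `build_concept`, the accepted extensions, and the prompt template (modeled on the `a photo of sks dog` instance prompt in the model card above) are all assumptions.

```python
from pathlib import Path

# Assumption: the same image types accepted for image classification.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def build_concept(token, class_noun, image_dir):
    """Hypothetical helper: bundle a concept token with its images.

    token      -- made-up word that identifies the concept, e.g. "sks"
    class_noun -- ordinary word describing the subject, e.g. "dog"
    image_dir  -- folder that holds the concept images
    """
    images = sorted(
        path
        for path in Path(image_dir).iterdir()
        if path.is_file() and path.suffix.lower() in IMAGE_EXTENSIONS
    )
    if not images:
        raise ValueError(f"no concept images found in {image_dir}")
    return {
        "token": token,
        "prompt": f"a photo of {token} {class_noun}",
        "images": images,
    }
```

Calling `build_concept("sks", "dog", "my_dog_photos")` on a folder of photos would yield the prompt `a photo of sks dog` together with the sorted list of image paths.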

diff --git a/autotrain-advanced/docs/source/getting_started.mdx b/autotrain-advanced/docs/source/getting_started.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..14d0a999c289ab4270531d8731813c9b1d8d72a8

--- /dev/null

+++ b/autotrain-advanced/docs/source/getting_started.mdx

@@ -0,0 +1,29 @@

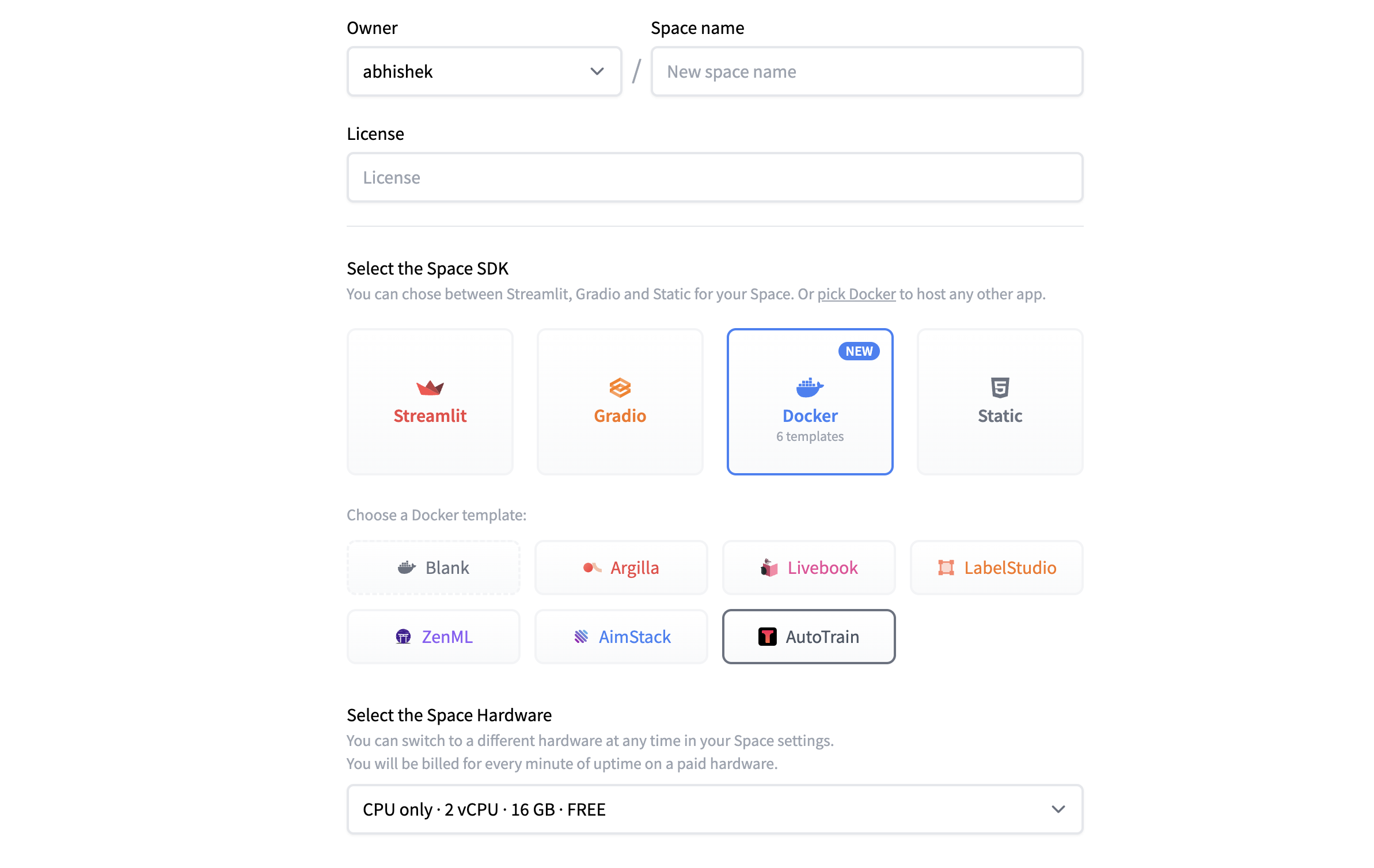

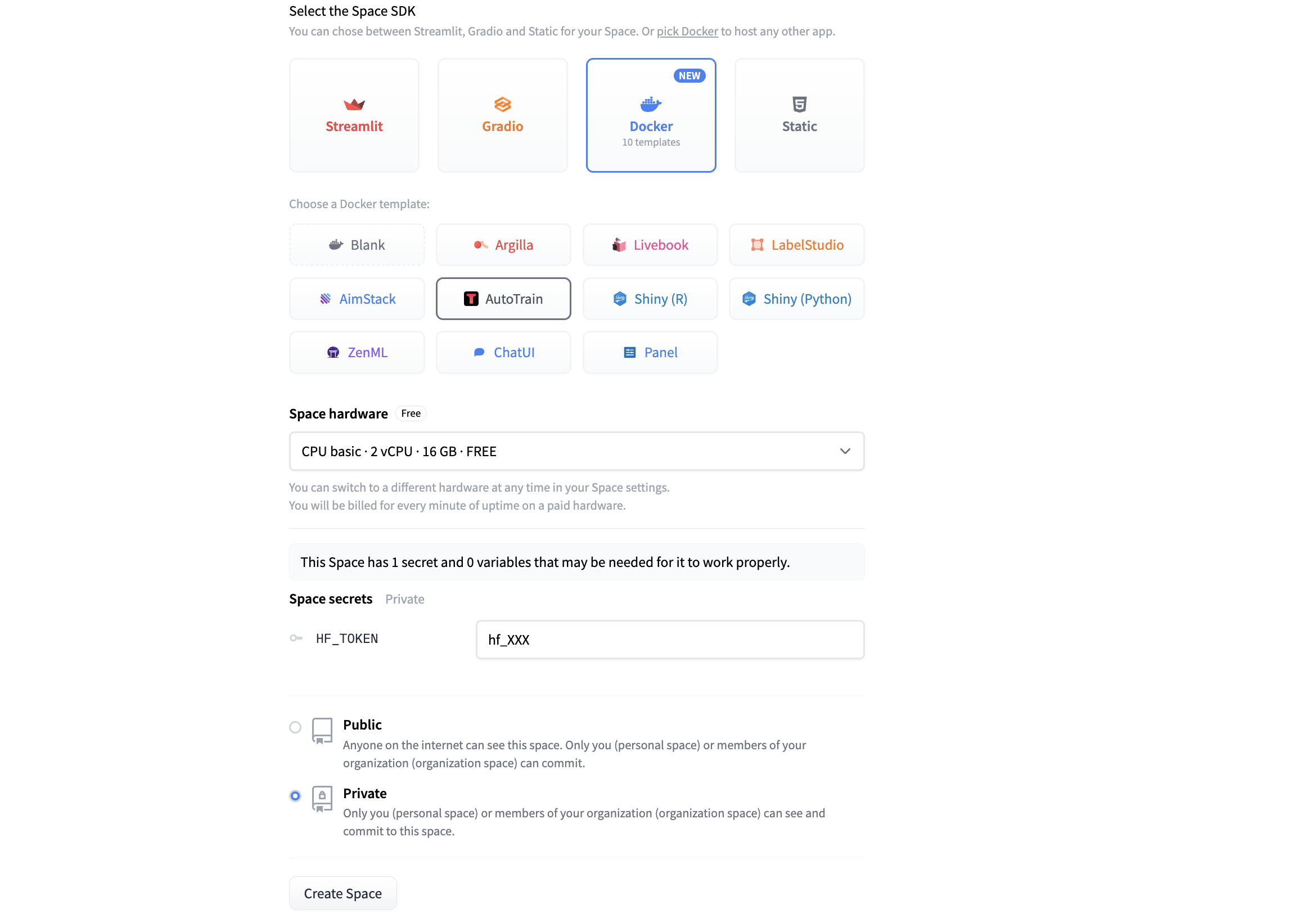

+# Installation

+

+There is no installation required! AutoTrain Advanced runs on Hugging Face Spaces. All you need to do is create a new space with the AutoTrain Advanced template: https://huggingface.co/new-space?template=autotrain-projects/autotrain-advanced. Please make sure you keep the space private.

+

+

+

+Once you have selected the Docker > AutoTrain template, you can click on "Create Space" and you will be redirected to your new space.

+

+

+

+Once the space is built, you will see this screen:

+

+

+

+You can find your token at https://huggingface.co/settings/tokens.

+

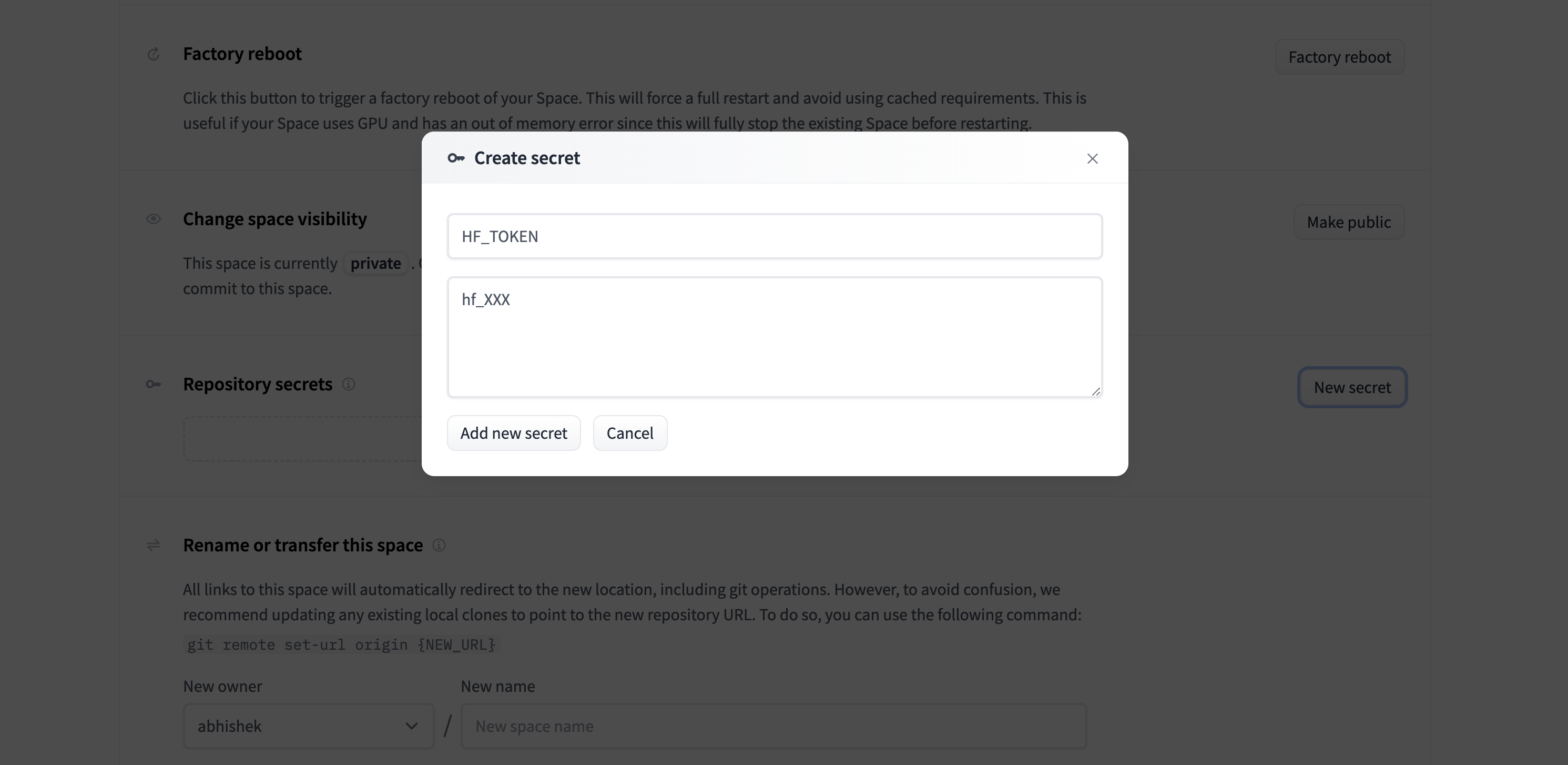

+Note: you have to add HF_TOKEN as an environment variable in your space settings. To do so, click on the "Settings" button in the top right corner of your space, then click on "New Secret" in the "Repository Secrets" section and add a new variable with the name HF_TOKEN and your token as the value as shown below:

+

+

+
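Code running inside the space can read the secret back as an ordinary environment variable. The snippet below is a minimal sketch of that lookup, assuming the secret is named `HF_TOKEN` as described above; the helper `get_hf_token` is a hypothetical name, not an AutoTrain function.

```python
import os


def get_hf_token(environ=None):
    """Return the HF_TOKEN value, or raise if it is missing or empty.

    `environ` defaults to os.environ; passing a plain dict is handy for testing.
    """
    env = os.environ if environ is None else environ
    token = env.get("HF_TOKEN", "").strip()
    if not token:
        raise RuntimeError(
            "HF_TOKEN is not set; add it as a secret in your space settings."
        )
    return token
```

Failing early with a clear message makes a missing secret easier to spot than a later authentication error.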

+# Updating AutoTrain Advanced to Latest Version

+

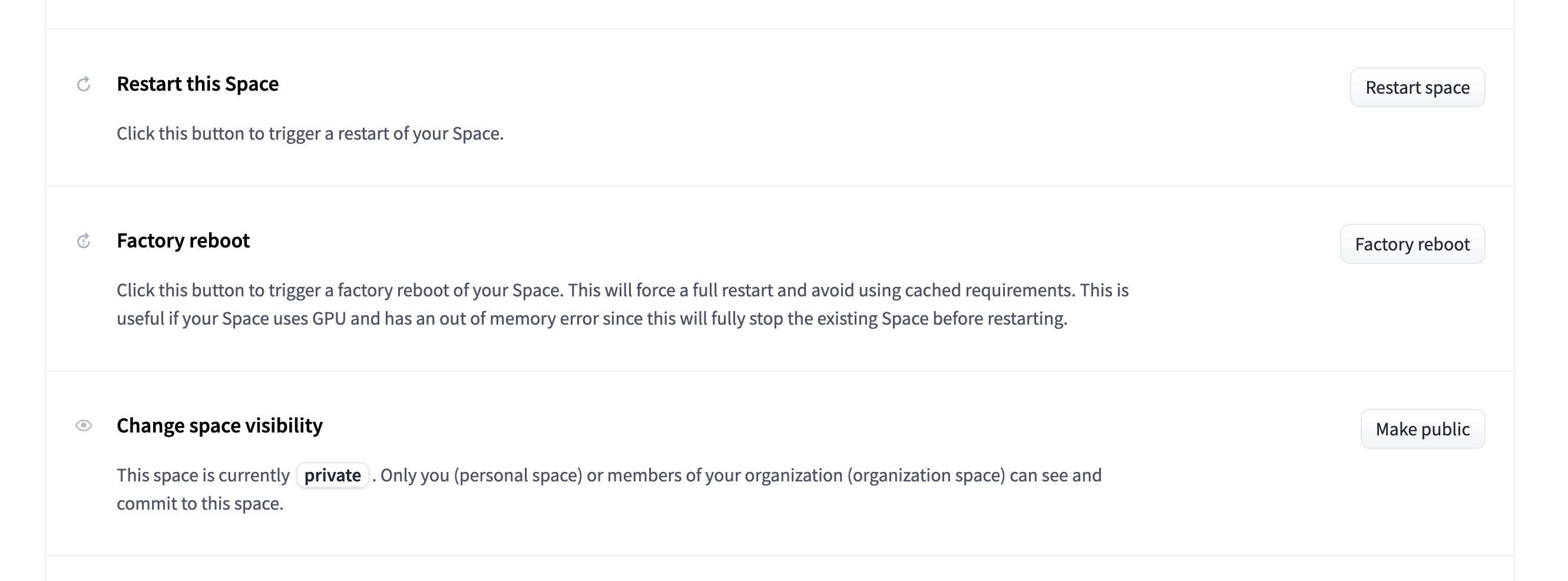

+We are constantly adding new features and tasks to AutoTrain Advanced. It's always a good idea to update your space to the latest version before starting a new project. An up-to-date version of AutoTrain Advanced will have the latest tasks, features and bug fixes! Updating is as easy as clicking on the "Factory reboot" button in the settings page of your space.

+

+

+

+Please note that "restarting" a space will not update it to the latest version. You need to "Factory reboot" the space to update it to the latest version.

+

+And now we are all set and we can start with our first project!

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/image_classification.mdx b/autotrain-advanced/docs/source/image_classification.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..d8f1ba1997d186cd58311d981a5a046a6a98fdeb

--- /dev/null

+++ b/autotrain-advanced/docs/source/image_classification.mdx

@@ -0,0 +1,40 @@

+# Image Classification

+

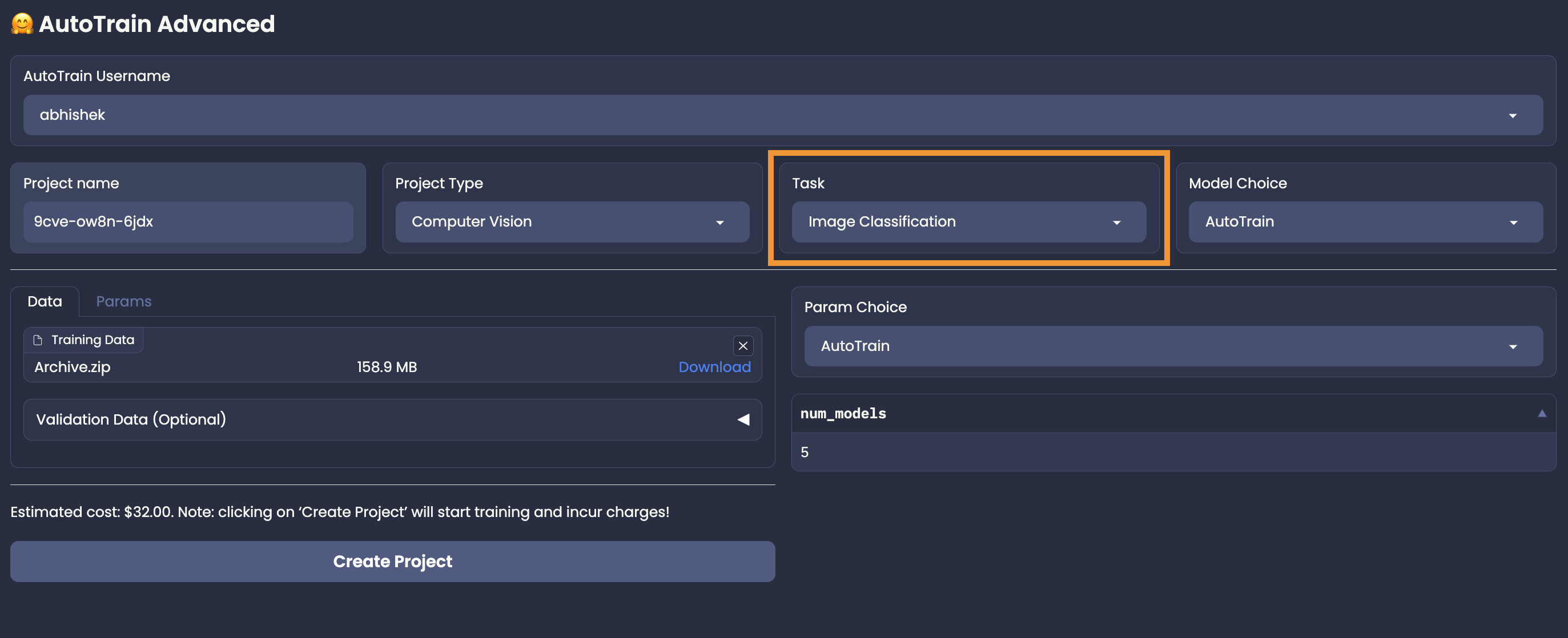

+Image classification is a supervised learning problem: define a set of target classes (objects to identify in images), and train a model to recognize them using labeled example photos.

+Using AutoTrain, it's super easy to train a state-of-the-art image classification model. Just upload a set of images, and AutoTrain will automatically train a model to classify them.

+

+## Data Preparation

+

+The data for image classification must be in zip format, with each class in a separate subfolder. For example, if you want to classify cats and dogs, your zip file should look like this:

+

+```

+cats_and_dogs.zip

+├── cats

+│ ├── cat.1.jpg

+│ ├── cat.2.jpg

+│ ├── cat.3.jpg

+│ └── ...

+└── dogs

+ ├── dog.1.jpg

+ ├── dog.2.jpg

+ ├── dog.3.jpg

+ └── ...

+```

+

+Some points to keep in mind:

+

+- The zip file should contain multiple folders (the classes), and each folder should contain images of a single class.

+- The name of the folder should be the name of the class.

+- The images must be jpeg, jpg or png.

+- There should be at least 5 images per class.

+- There should not be any other files in the zip file.

+- There should not be any other folders inside the zip folder.

+

+When cats_and_dogs.zip is decompressed, it creates two folders: cats and dogs. These are the two categories for classification. The images for both categories are in their respective folders. You can have as many categories as you want.
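Before uploading, the layout rules above can be checked locally. The following is a rough sketch using only the Python standard library; `check_image_zip` is a hypothetical helper, not part of AutoTrain, and it only inspects file names inside the archive (it does not open the images or detect empty nested folders).

```python
import zipfile
from collections import defaultdict
from pathlib import PurePosixPath

ALLOWED_EXTENSIONS = {".jpeg", ".jpg", ".png"}
MIN_IMAGES_PER_CLASS = 5


def check_image_zip(zip_path):
    """Return (images per class, list of problems) for a dataset zip."""
    counts = defaultdict(int)
    problems = []
    with zipfile.ZipFile(zip_path) as archive:
        for name in archive.namelist():
            if name.endswith("/"):
                continue  # directory entry, carries no image
            path = PurePosixPath(name)
            # Every file must sit exactly one level deep: <class>/<image>
            if len(path.parts) != 2:
                problems.append(f"{name}: expected <class>/<image>")
                continue
            if path.suffix.lower() not in ALLOWED_EXTENSIONS:
                problems.append(f"{name}: not a jpeg, jpg or png file")
                continue
            counts[path.parts[0]] += 1
    for class_name, count in sorted(counts.items()):
        if count < MIN_IMAGES_PER_CLASS:
            problems.append(
                f"{class_name}: only {count} image(s), need {MIN_IMAGES_PER_CLASS}"
            )
    if len(counts) < 2:
        problems.append("expected at least two class folders")
    return dict(counts), problems
```

An empty problem list means the archive matches the layout shown above; otherwise each entry names the offending file or class.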

+

+## Training

+

+Once you have your data ready, you can upload it to AutoTrain and select a model and parameters.

+If the estimate looks good, click the `Create Project` button to start training.

+

+

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/index.mdx b/autotrain-advanced/docs/source/index.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..faaeb22caf4bdf7001c0d7740f992add27071055

--- /dev/null

+++ b/autotrain-advanced/docs/source/index.mdx

@@ -0,0 +1,34 @@

+# AutoTrain

+

+🤗 AutoTrain is a no-code tool for training state-of-the-art models for Natural Language Processing (NLP), Computer Vision (CV), Speech, and even Tabular tasks. It is built on top of the awesome tools developed by the Hugging Face team, and it is designed to be easy to use.

+

+## Who should use AutoTrain?

+

+AutoTrain is for anyone who wants to train a state-of-the-art model for an NLP, CV, Speech or Tabular task, or for a custom dataset, without spending time on the technical details of training. Our goal is to make it easy for anyone to train a state-of-the-art model for any task, and our focus is not just on data scientists or machine learning engineers but also on non-technical users.

+

+## How to use AutoTrain?

+

+We offer several ways to use AutoTrain:

+

+- No-code users with a large number of data samples can use `AutoTrain Advanced` by creating a new Space with the AutoTrain Docker image: https://huggingface.co/new-space?template=autotrain-projects/autotrain-advanced. Please make sure you keep the Space private.

+

+- No-code users with a small number of data samples can use the AutoTrain UI located at: https://ui.autotrain.huggingface.co/projects. Please note that this UI won't be updated with new tasks and features as frequently as AutoTrain Advanced.

+

+- Developers can access and build on top of AutoTrain using the Python API or run the AutoTrain Advanced UI locally. The Python API is available in the `autotrain-advanced` package. You can install it using pip:

+

+```bash

+pip install autotrain-advanced

+```

+

+- Developers can also use the AutoTrain API directly. The API is available at: https://api.autotrain.huggingface.co/docs

+

+

+## What is AutoTrain Advanced?

+

+AutoTrain Advanced processes your data either in a Hugging Face Space or locally (if installed locally using pip). This saves time: because the data processing is not done by the AutoTrain backend, your job is not queued. AutoTrain Advanced also allows you to use your own hardware (better CPU and RAM) to process the data, which makes the data processing faster.

+

+Using AutoTrain Advanced, advanced users can also control the hyperparameters used for training per job. This allows you to train multiple models with different hyperparameters and compare the results.

+

+Everything else is the same as AutoTrain. You can use AutoTrain Advanced to train models for NLP, CV, Speech and Tabular tasks.

+

+We recommend using [AutoTrain Advanced](https://huggingface.co/new-space?template=autotrain-projects/autotrain-advanced) since it is faster, more flexible and will have more supported tasks and features in the future.

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/llm_finetuning.mdx b/autotrain-advanced/docs/source/llm_finetuning.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..6c41f715972f20ea630d425c5cd408a5c7c375f3

--- /dev/null

+++ b/autotrain-advanced/docs/source/llm_finetuning.mdx

@@ -0,0 +1,43 @@

+# LLM Finetuning

+

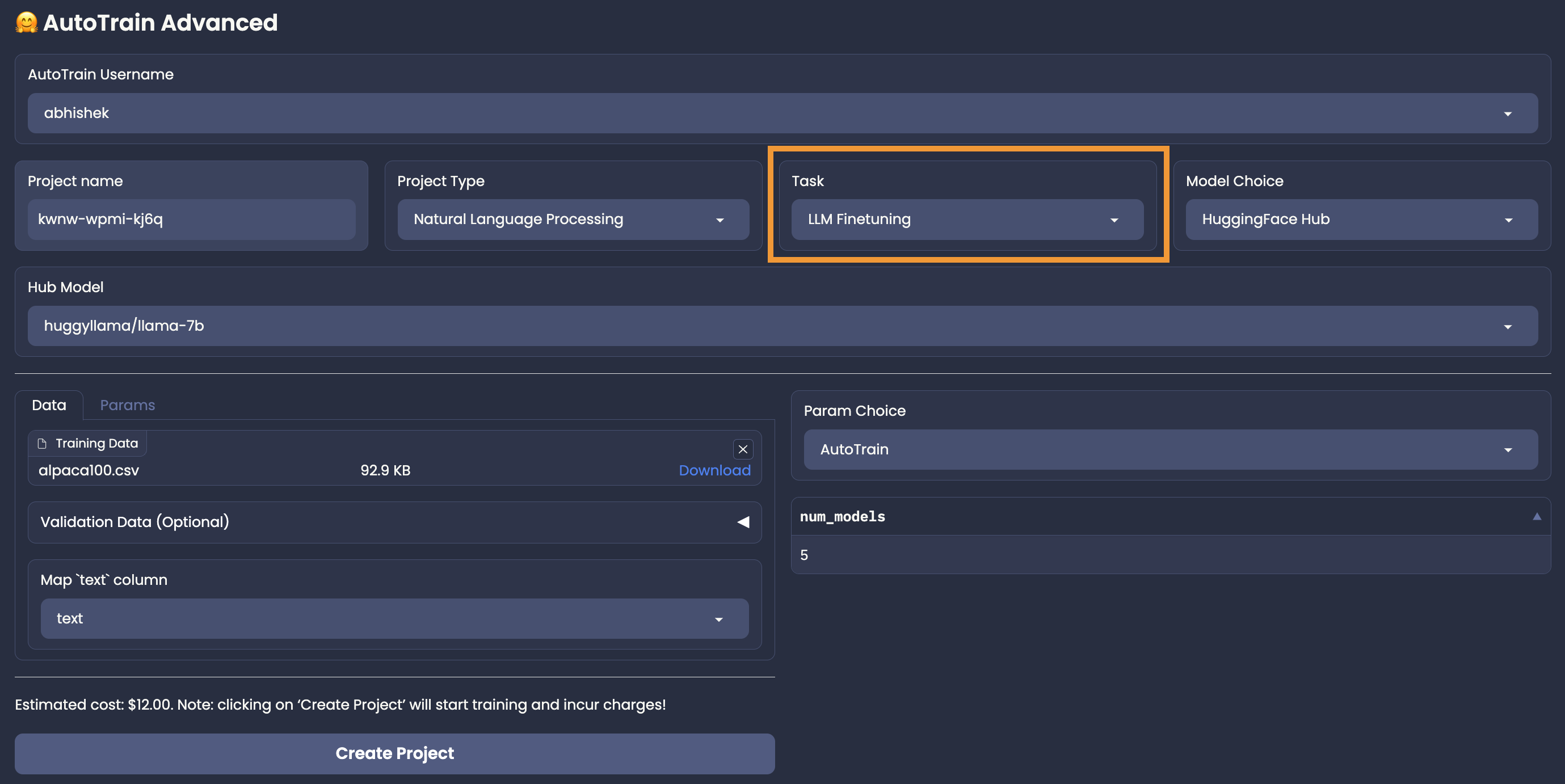

+With AutoTrain, you can easily finetune large language models (LLMs) on your own data!

+

+AutoTrain supports the following types of LLM finetuning:

+

+- Causal Language Modeling (CLM)

+- Masked Language Modeling (MLM) [Coming Soon]

+

+For LLM finetuning, only Hugging Face Hub model choice is available.

+Users need to select the model they want to finetune from the Hugging Face Hub and then either choose the parameters on their own (Manual Parameter Selection),

+or use AutoTrain's Auto Parameter Selection to automatically select the best parameters for the task.

+

+## Data Preparation

+

+LLM finetuning accepts data in CSV format.

+There are two modes for LLM finetuning: `generic` and `chat`.

+An example dataset with both formats in the same dataset can be found here: https://huggingface.co/datasets/tatsu-lab/alpaca

+

+### Generic

+

+In generic mode, only one column is required: `text`.

+How the data is formatted for the task is up to the user.

+A sample instance for this format is presented below:

+

+```

+Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

+

+### Instruction: Evaluate this sentence for spelling and grammar mistakes

+

+### Input: He finnished his meal and left the resturant

+

+### Response: He finished his meal and left the restaurant.

+```

+

+

+

+Please note that the above is the format for instruction finetuning. In `generic` mode, however, you can finetune on any other format you want. The data can be changed according to your requirements.
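One way to produce the single `text` column for `generic` mode is to render each record into the instruction template shown above and write the result to a CSV. This is a minimal sketch using only the standard library. The helper names (`format_example`, `write_generic_csv`) and the record keys are assumptions made for illustration, not part of AutoTrain.

```python
import csv
import io

# Template text copied from the sample instance above.
PROMPT_HEADER = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes the request."
)


def format_example(instruction, input_text, response):
    """Render one record into the instruction-finetuning layout."""
    return (
        f"{PROMPT_HEADER}\n\n"
        f"### Instruction: {instruction}\n\n"
        f"### Input: {input_text}\n\n"
        f"### Response: {response}"
    )


def write_generic_csv(records, handle):
    """Write records to a CSV with a single `text` column."""
    writer = csv.DictWriter(handle, fieldnames=["text"])
    writer.writeheader()
    for record in records:
        writer.writerow({"text": format_example(**record)})


records = [
    {
        "instruction": "Evaluate this sentence for spelling and grammar mistakes",
        "input_text": "He finnished his meal and left the resturant",
        "response": "He finished his meal and left the restaurant.",
    }
]

buffer = io.StringIO()
write_generic_csv(records, buffer)
print(buffer.getvalue())
```

To write to disk instead, open the target file with `newline=""` (as the `csv` module documentation recommends) and pass the file handle in place of `buffer`.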

+

+

+## Training

+

+Once your data is ready and the estimate is verified, you can start training your model by clicking the "Create Project" button.

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/model_choice.mdx b/autotrain-advanced/docs/source/model_choice.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..e7f3b26fb6dbde8fcd8548e100dcb81b9bde1df9

--- /dev/null

+++ b/autotrain-advanced/docs/source/model_choice.mdx

@@ -0,0 +1,24 @@

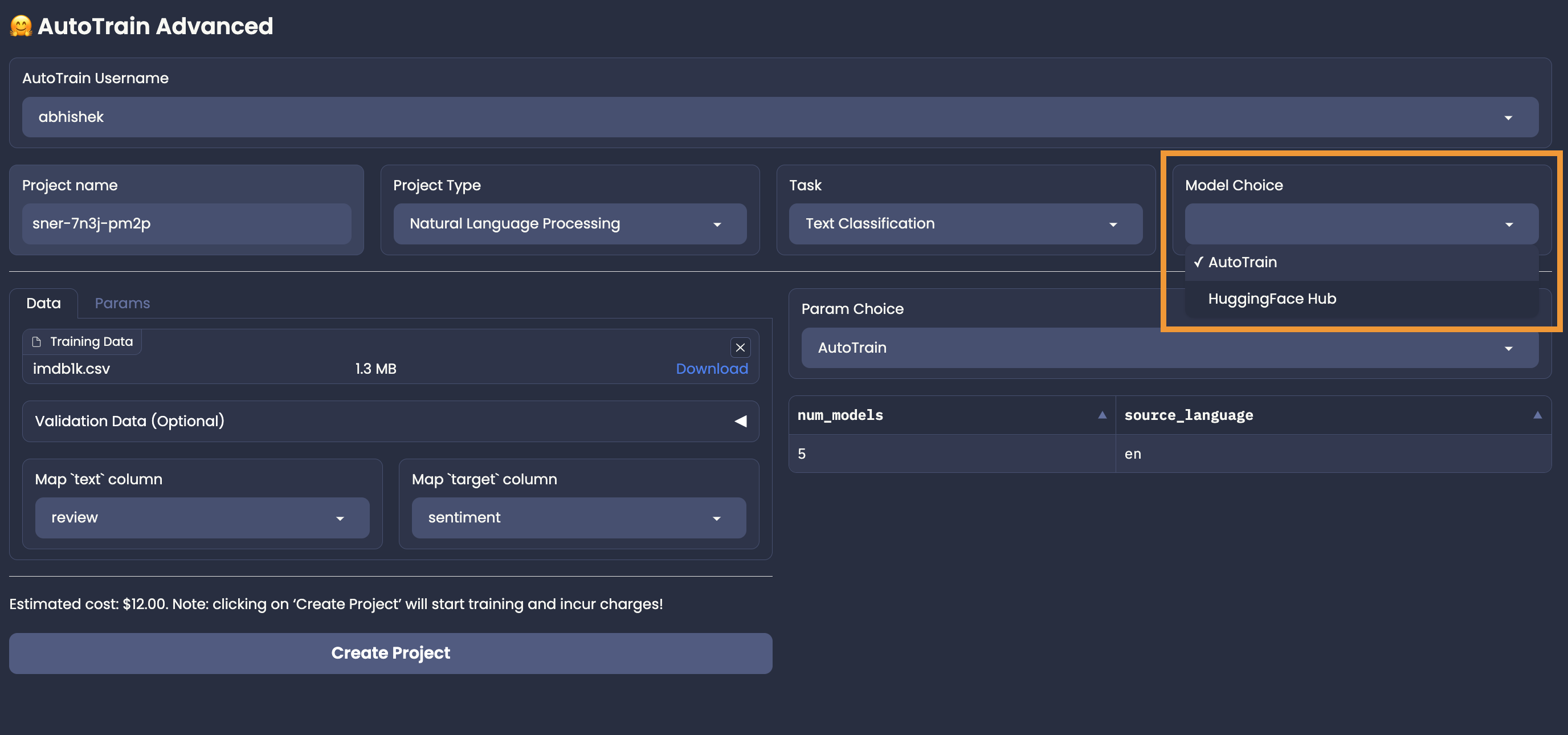

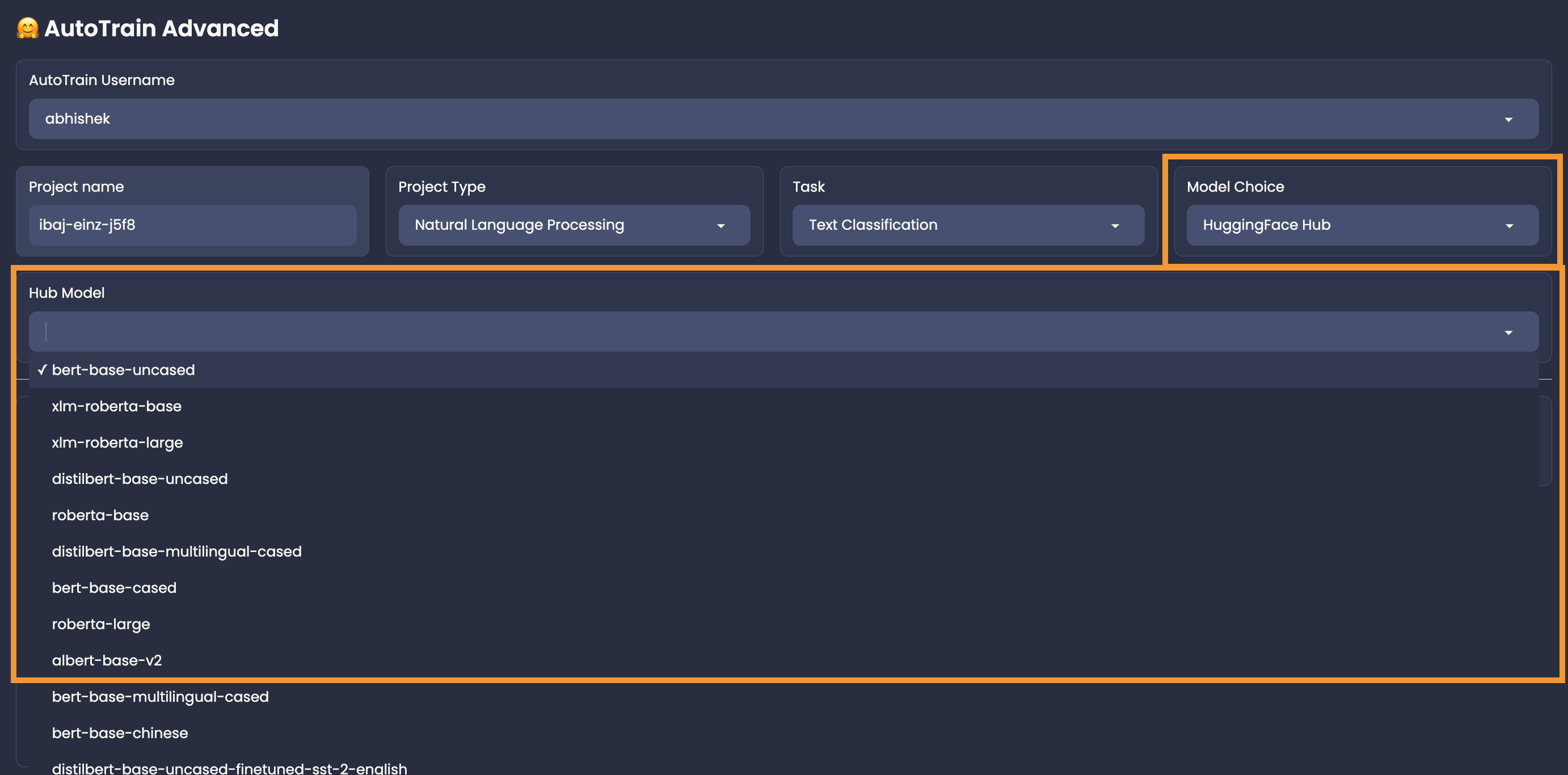

+# Model Choice

+

+AutoTrain can automagically select the best models for your task! However, you are also

+allowed to choose the models you want to use. You can choose the most appropriate models

+from the Hugging Face Hub.

+

+

+

+## AutoTrain Model Choice

+

+To let AutoTrain choose the best models for your task, select "AutoTrain"

+in the "Model Choice" section. Once you choose AutoTrain mode, you no longer need to worry about model and parameter selection.

+AutoTrain will automatically select the best models (and parameters) for your task.

+

+## Manual Model Choice

+

+To choose the models manually, select "HuggingFace Hub" in the "Model Choice" section.

+For example, if you are training a text classification model and want to use DeBERTa V3 Base

+from https://huggingface.co/microsoft/deberta-v3-base,

+choose "HuggingFace Hub" and then enter the model name `microsoft/deberta-v3-base` in the model name field.

+

+

+

+Please note that if you are selecting a hub model, you should make sure that it is compatible with your task, otherwise the training will fail.

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/param_choice.mdx b/autotrain-advanced/docs/source/param_choice.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..14439ab1e1742567a4600adfc9acd3836b37d271

--- /dev/null

+++ b/autotrain-advanced/docs/source/param_choice.mdx

@@ -0,0 +1,25 @@

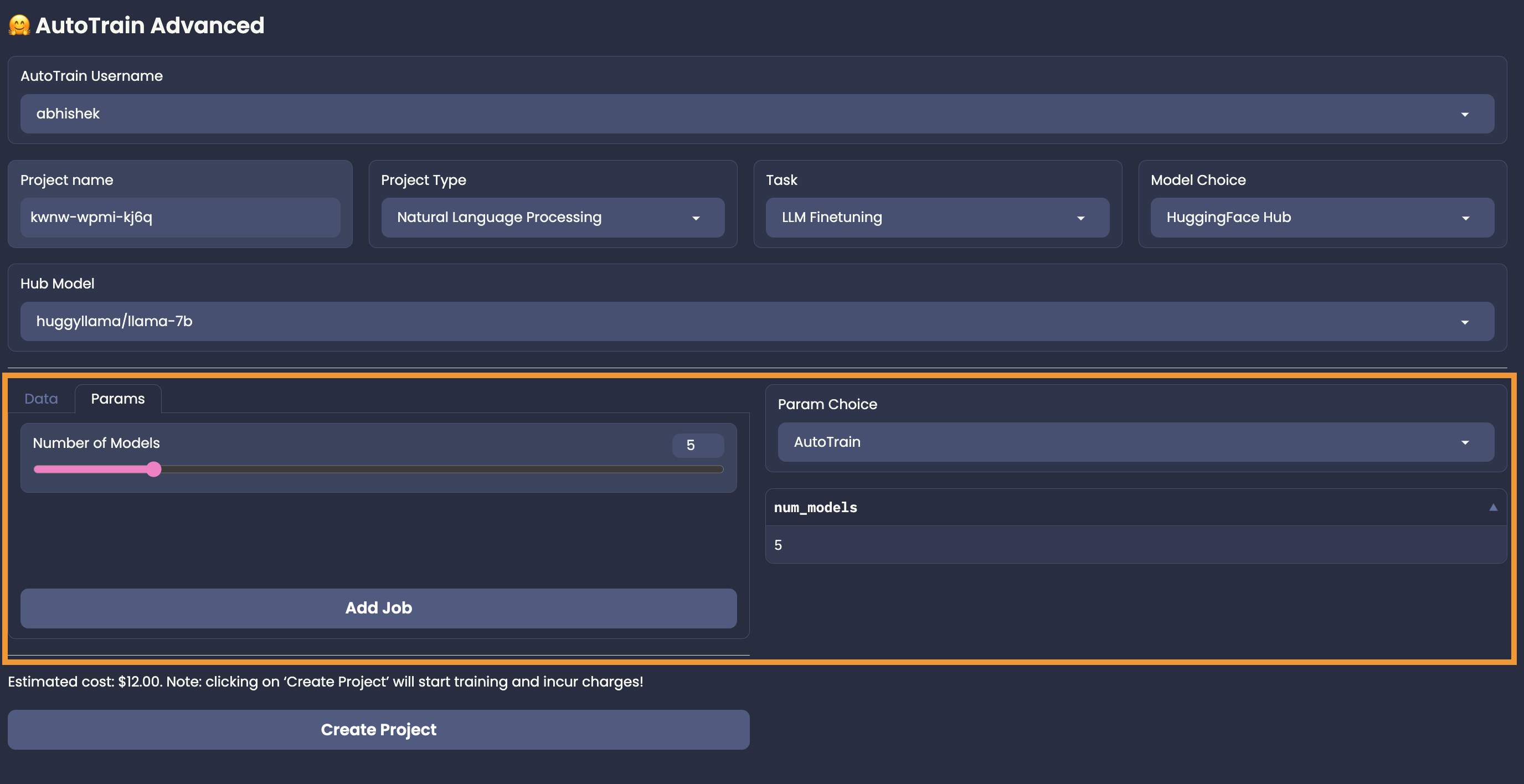

+# Parameter Choice

+

+Just like model choice, you can choose the parameters for your job in two ways: AutoTrain and Manual.

+

+## AutoTrain Mode

+

+In the AutoTrain mode, the parameters for your task-model pair will be chosen automagically.

+If you choose "AutoTrain" as the model choice, AutoTrain mode is the only option.

+If you choose "HuggingFace Hub" as the model choice, you get the option to choose between AutoTrain and Manual mode for parameter choice.

+

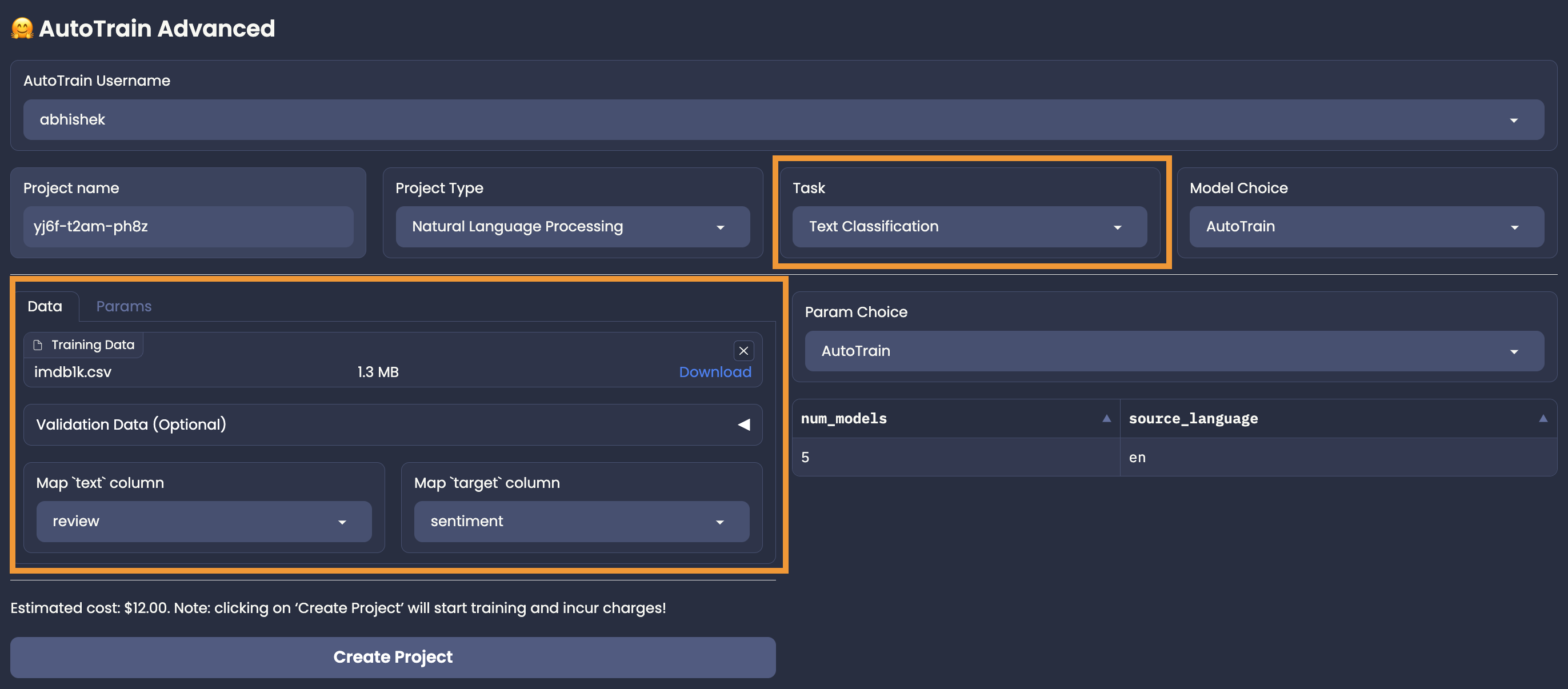

+An example of AutoTrain mode for a text classification task is shown below:

+

+

+

+For most tasks in AutoTrain parameter selection mode, "Number of Models" is the only parameter you choose. Some tasks, like text classification, might also ask for the language of the dataset.

+The more models you train, the better the final results might be, but the more expensive the project might be too!

+

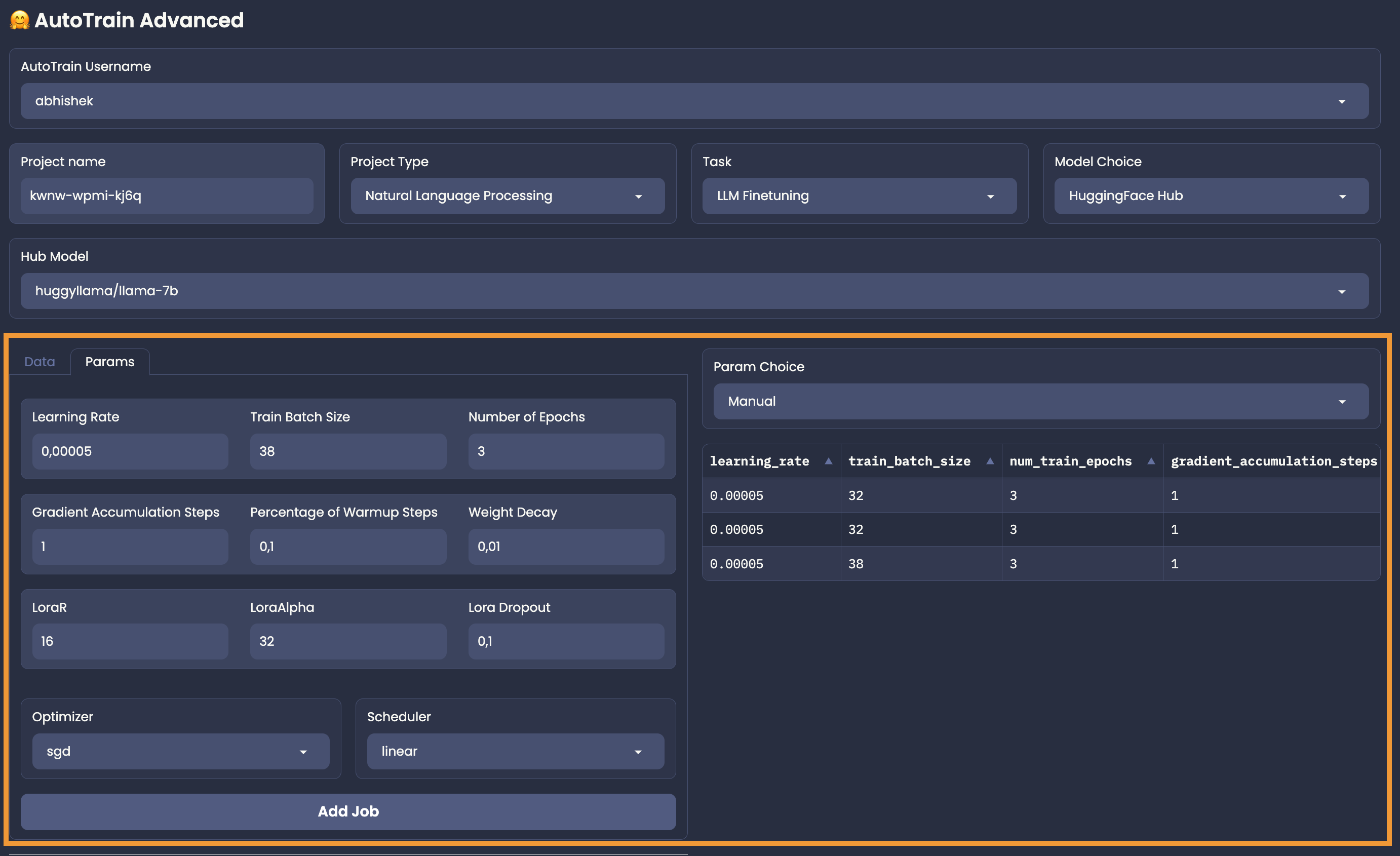

+## Manual Mode

+

+Manual mode can be used only when you choose "HuggingFace Hub" as the model choice. In this mode, you can choose the parameters for your task-model pair manually.

+An example of Manual mode for a text classification task is shown below:

+

+

+

+In the manual mode, you have to add the jobs on your own. So, carefully select your parameters, click on "Add Job" and 💥.

\ No newline at end of file

diff --git a/autotrain-advanced/docs/source/support.mdx b/autotrain-advanced/docs/source/support.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..0a180c3f8ee27dd748a665767556bae7a13f9ae5

--- /dev/null

+++ b/autotrain-advanced/docs/source/support.mdx

@@ -0,0 +1,12 @@

+# Help and Support

+

+There are three ways to get help and support for AutoTrain:

+

+- [Create an issue](https://github.com/huggingface/autotrain-advanced/issues/new) in the AutoTrain Advanced GitHub repository.

+

+- [Ask in the Hugging Face Forum](https://discuss.huggingface.co/c/autotrain/16).

+

+- [Email us](mailto:autotrain@hf.co) directly.

+

+

+Please don't forget to mention your username and project name if you have a specific question about your project.

diff --git a/autotrain-advanced/docs/source/text_classification.mdx b/autotrain-advanced/docs/source/text_classification.mdx

new file mode 100644

index 0000000000000000000000000000000000000000..6135db8867ea4deefbde365522061c5bcb660e89

--- /dev/null

+++ b/autotrain-advanced/docs/source/text_classification.mdx

@@ -0,0 +1,60 @@

+# Text Classification

+

+Training a text classification model with AutoTrain is super-easy! Get your data ready in

+the proper format and then, with just a few clicks, your state-of-the-art model will be ready to

+be used in production.

+

+## Data Format

+

+Let's train a model for classifying the sentiment of a movie review. The data should be

+in the following CSV format:

+

+```csv

+review,sentiment

+"this movie is great",positive

+"this movie is bad",negative

+.

+.

+.

+```

+

+As you can see, we have two columns in the CSV file. One column is the text and the other

+is the label. The label can be any string. In this example, we have two labels: `positive`

+and `negative`. You can have as many labels as you want.

+

+If your CSV is huge, you can divide it into multiple CSV files and upload them separately.

+Please make sure that the column names are the same in all CSV files.

+

+One way to divide the CSV file using pandas is as follows:

+

+```python

+import pandas as pd

+

+# Set the chunk size

+chunk_size = 1000

+i = 1

+

+# Open the CSV file and read it in chunks

+for chunk in pd.read_csv('example.csv', chunksize=chunk_size):

+    # Save each chunk to a new file

+    chunk.to_csv(f'chunk_{i}.csv', index=False)

+    i += 1

+```

+

+Once the data has been uploaded, you have to select the proper column mapping.

+

+## Column Mapping

+

+

+

+In our example, the text column is called `review` and the label column is called `sentiment`.

+Thus, we have to select `review` for the text column and `sentiment` for the label column.

+Please note that if the column mapping is not done correctly, the training will fail.
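Since a wrong mapping makes training fail, it can help to check the mapping against the CSV header before uploading. The following is a minimal sketch under stated assumptions: `check_column_mapping` is a hypothetical helper (not an AutoTrain function), and the mapping uses the `{"text": ..., "label": ...}` shape that the example scripts in this repository pass as `column_mapping`.

```python
import csv
import io


def check_column_mapping(csv_handle, column_mapping):
    """Return the CSV header, or raise ValueError if a mapped column is missing."""
    header = next(csv.reader(csv_handle), None)
    if header is None:
        raise ValueError("the CSV file is empty")
    missing = [column for column in column_mapping.values() if column not in header]
    if missing:
        raise ValueError(f"columns {missing} not found in CSV header {header}")
    return header


# Sample data matching the movie-review example above.
sample_csv = io.StringIO(
    'review,sentiment\n"this movie is great",positive\n"this movie is bad",negative\n'
)
header = check_column_mapping(sample_csv, {"text": "review", "label": "sentiment"})
print(header)
```

For a file on disk, open it with `open(path, newline="", encoding="utf-8")` and pass the handle in place of `sample_csv`.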

+

+

+## Training

+

+Once you have uploaded the data, selected the column mapping, and set the hyperparameters (AutoTrain or Manual mode), you can start the training.

+To start the training, please confirm the estimated cost and click on the `Create Project` button.

+

+

diff --git a/autotrain-advanced/examples/text_classification_binary.py b/autotrain-advanced/examples/text_classification_binary.py

new file mode 100644

index 0000000000000000000000000000000000000000..2cd6469968ab528065bc9807db80e45d3c3f8da2

--- /dev/null

+++ b/autotrain-advanced/examples/text_classification_binary.py

@@ -0,0 +1,77 @@

+import os

+from uuid import uuid4

+

+from datasets import load_dataset

+

+from autotrain.dataset import AutoTrainDataset

+from autotrain.project import Project

+

+

+RANDOM_ID = str(uuid4())

+DATASET = "imdb"

+PROJECT_NAME = f"imdb_{RANDOM_ID}"

+TASK = "text_binary_classification"

+MODEL = "bert-base-uncased"

+

+USERNAME = os.environ["AUTOTRAIN_USERNAME"]

+TOKEN = os.environ["HF_TOKEN"]

+

+

+if __name__ == "__main__":

+ dataset = load_dataset(DATASET)

+ train = dataset["train"]

+ validation = dataset["test"]

+

+ # convert to pandas dataframe

+ train_df = train.to_pandas()

+ validation_df = validation.to_pandas()

+

+ # prepare dataset for AutoTrain

+ dset = AutoTrainDataset(

+ train_data=[train_df],

+ valid_data=[validation_df],

+ task=TASK,

+ token=TOKEN,

+ project_name=PROJECT_NAME,

+ username=USERNAME,

+ column_mapping={"text": "text", "label": "label"},

+ percent_valid=None,

+ )

+ dset.prepare()

+

+ #

+ # How to get params for a task:

+ #

+ # from autotrain.params import Params

+ # params = Params(task=TASK, training_type="hub_model").get()

+ # print(params) to get full list of params for the task

+

+ # define params in proper format

+ job1 = {

+ "task": TASK,

+ "learning_rate": 1e-5,

+ "optimizer": "adamw_torch",

+ "scheduler": "linear",

+ "epochs": 5,

+ }

+

+ job2 = {

+ "task": TASK,

+ "learning_rate": 3e-5,

+ "optimizer": "adamw_torch",

+ "scheduler": "cosine",

+ "epochs": 5,

+ }

+

+ job3 = {

+ "task": TASK,

+ "learning_rate": 5e-5,

+ "optimizer": "sgd",

+ "scheduler": "cosine",

+ "epochs": 5,

+ }

+

+ jobs = [job1, job2, job3]

+ project = Project(dataset=dset, hub_model=MODEL, job_params=jobs)

+ project_id = project.create()

+ project.approve(project_id)
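The three job dictionaries in this example are written out by hand. When comparing more hyperparameter combinations, the same structure can be generated programmatically. This is a minimal sketch: `build_jobs` is a hypothetical helper, the keys mirror the ones used in `job1` to `job3` above, and whether every generated combination is accepted by the AutoTrain backend has not been verified here.

```python
from itertools import product


def build_jobs(task, learning_rates, schedulers, optimizer="adamw_torch", epochs=5):
    """Build one job dict per (learning_rate, scheduler) combination."""
    return [
        {
            "task": task,
            "learning_rate": learning_rate,
            "optimizer": optimizer,
            "scheduler": scheduler,
            "epochs": epochs,
        }
        for learning_rate, scheduler in product(learning_rates, schedulers)
    ]


jobs = build_jobs(
    task="text_binary_classification",
    learning_rates=[1e-5, 3e-5],
    schedulers=["linear", "cosine"],
)
for job in jobs:
    print(job)
```

The resulting list has the same shape as `jobs = [job1, job2, job3]`, so it could be passed as `job_params` in the same way. Each job is trained as a separate model.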

diff --git a/autotrain-advanced/examples/text_classification_multiclass.py b/autotrain-advanced/examples/text_classification_multiclass.py

new file mode 100644

index 0000000000000000000000000000000000000000..57b1b13fcfd6b78f7525c5ac81d8bd18f7e71c0a

--- /dev/null

+++ b/autotrain-advanced/examples/text_classification_multiclass.py

@@ -0,0 +1,77 @@

+import os

+from uuid import uuid4

+

+from datasets import load_dataset

+

+from autotrain.dataset import AutoTrainDataset

+from autotrain.project import Project

+

+

+RANDOM_ID = str(uuid4())

+DATASET = "amazon_reviews_multi"

+PROJECT_NAME = f"amazon_reviews_multi_{RANDOM_ID}"

+TASK = "text_multi_class_classification"

+MODEL = "bert-base-uncased"

+

+USERNAME = os.environ["AUTOTRAIN_USERNAME"]

+TOKEN = os.environ["HF_TOKEN"]

+

+

+if __name__ == "__main__":

+ dataset = load_dataset(DATASET, "en")

+ train = dataset["train"]

+ validation = dataset["test"]

+

+ # convert to pandas dataframe

+ train_df = train.to_pandas()

+ validation_df = validation.to_pandas()

+

+ # prepare dataset for AutoTrain

+ dset = AutoTrainDataset(

+ train_data=[train_df],

+ valid_data=[validation_df],

+ task=TASK,

+ token=TOKEN,

+ project_name=PROJECT_NAME,

+ username=USERNAME,

+ column_mapping={"text": "review_body", "label": "stars"},

+ percent_valid=None,

+ )

+ dset.prepare()

+

+ #

+ # How to get params for a task:

+ #

+ # from autotrain.params import Params

+ # params = Params(task=TASK, training_type="hub_model").get()

+ # print(params) to get full list of params for the task

+

+ # define params in proper format

+ job1 = {

+ "task": TASK,

+ "learning_rate": 1e-5,

+ "optimizer": "adamw_torch",

+ "scheduler": "linear",

+ "epochs": 5,

+ }

+

+ job2 = {

+ "task": TASK,

+ "learning_rate": 3e-5,

+ "optimizer": "adamw_torch",

+ "scheduler": "cosine",

+ "epochs": 5,

+ }

+

+ job3 = {

+ "task": TASK,

+ "learning_rate": 5e-5,

+ "optimizer": "sgd",

+ "scheduler": "cosine",

+ "epochs": 5,

+ }

+

+ jobs = [job1, job2, job3]

+ project = Project(dataset=dset, hub_model=MODEL, job_params=jobs)

+ project_id = project.create()

+ project.approve(project_id)

diff --git a/autotrain-advanced/requirements.txt b/autotrain-advanced/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..b5103740a6cab81568d6251088d50050dffb78ef

--- /dev/null

+++ b/autotrain-advanced/requirements.txt

@@ -0,0 +1,31 @@

+albumentations==1.3.1

+codecarbon==2.2.3

+datasets[vision]~=2.14.0

+evaluate==0.3.0

+ipadic==1.0.0

+jiwer==3.0.2

+joblib==1.3.1

+loguru==0.7.0

+pandas==2.0.3

+Pillow==10.0.0

+protobuf==4.23.4

+pydantic==1.10.11

+sacremoses==0.0.53

+scikit-learn==1.3.0

+sentencepiece==0.1.99

+tqdm==4.65.0

+werkzeug==2.3.6

+huggingface_hub>=0.16.4

+requests==2.31.0

+gradio==3.39.0

+einops==0.6.1

+invisible-watermark==0.2.0

+# latest versions

+tensorboard

+peft

+trl

+tiktoken

+transformers

+accelerate

+diffusers

+bitsandbytes

\ No newline at end of file

diff --git a/autotrain-advanced/setup.cfg b/autotrain-advanced/setup.cfg

new file mode 100644

index 0000000000000000000000000000000000000000..d69b03cda1889d4f3a52ece513782736a4cbdecb

--- /dev/null

+++ b/autotrain-advanced/setup.cfg

@@ -0,0 +1,24 @@

+[metadata]

+license_files = LICENSE

+version = attr: autotrain.__version__

+

+[isort]

+ensure_newline_before_comments = True

+force_grid_wrap = 0

+include_trailing_comma = True

+line_length = 119

+lines_after_imports = 2

+multi_line_output = 3

+use_parentheses = True

+

+[flake8]

+ignore = E203, E501, W503

+max-line-length = 119

+per-file-ignores =

+ # imported but unused

+ __init__.py: F401

+exclude =

+ .git,

+ .venv,

+ __pycache__,

+ dist

\ No newline at end of file

diff --git a/autotrain-advanced/setup.py b/autotrain-advanced/setup.py

new file mode 100644

index 0000000000000000000000000000000000000000..a8165e593380b4aeccb92d922dcc3393451a636e

--- /dev/null

+++ b/autotrain-advanced/setup.py

@@ -0,0 +1,71 @@

+# Lint as: python3

+"""

+HuggingFace / AutoTrain Advanced

+"""

+import os

+

+from setuptools import find_packages, setup

+

+

+DOCLINES = __doc__.split("\n")

+

+this_directory = os.path.abspath(os.path.dirname(__file__))

+with open(os.path.join(this_directory, "README.md"), encoding="utf-8") as f:

+ LONG_DESCRIPTION = f.read()

+

+# get INSTALL_REQUIRES from requirements.txt

+with open(os.path.join(this_directory, "requirements.txt"), encoding="utf-8") as f:

+ INSTALL_REQUIRES = f.read().splitlines()

+

+QUALITY_REQUIRE = [

+ "black",

+ "isort",

+ "flake8==3.7.9",

+]

+

+TESTS_REQUIRE = ["pytest"]

+

+

+EXTRAS_REQUIRE = {

+ "dev": INSTALL_REQUIRES + QUALITY_REQUIRE + TESTS_REQUIRE,

+ "quality": INSTALL_REQUIRES + QUALITY_REQUIRE,

+ "docs": INSTALL_REQUIRES

+ + [

+ "recommonmark",

+ "sphinx==3.1.2",

+ "sphinx-markdown-tables",

+ "sphinx-rtd-theme==0.4.3",

+ "sphinx-copybutton",

+ ],

+}

+

+setup(

+ name="autotrain-advanced",

+ description=DOCLINES[0],

+ long_description=LONG_DESCRIPTION,

+ long_description_content_type="text/markdown",

+ author="HuggingFace Inc.",

+ author_email="autotrain@huggingface.co",

+ url="https://github.com/huggingface/autotrain-advanced",

+ download_url="https://github.com/huggingface/autotrain-advanced/tags",

+ license="Apache 2.0",

+ package_dir={"": "src"},

+ packages=find_packages("src"),

+ extras_require=EXTRAS_REQUIRE,

+ install_requires=INSTALL_REQUIRES,

+ entry_points={"console_scripts": ["autotrain=autotrain.cli.autotrain:main"]},

+ classifiers=[

+ "Development Status :: 5 - Production/Stable",

+ "Intended Audience :: Developers",

+ "Intended Audience :: Education",

+ "Intended Audience :: Science/Research",

+ "License :: OSI Approved :: Apache Software License",

+ "Operating System :: OS Independent",

+ "Programming Language :: Python :: 3.8",

+ "Programming Language :: Python :: 3.9",

+ "Programming Language :: Python :: 3.10",

+ "Programming Language :: Python :: 3.11",

+ "Topic :: Scientific/Engineering :: Artificial Intelligence",

+ ],

+ keywords="automl autonlp autotrain huggingface",

+)

diff --git a/autotrain-advanced/src/autotrain/__init__.py b/autotrain-advanced/src/autotrain/__init__.py

new file mode 100644

index 0000000000000000000000000000000000000000..6d020a37e75d5d58ba706d21478da80aceec3e5d

--- /dev/null

+++ b/autotrain-advanced/src/autotrain/__init__.py

@@ -0,0 +1,24 @@

+# coding=utf-8

+# Copyright 2020-2021 The HuggingFace AutoTrain Authors

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+# Lint as: python3

+# pylint: enable=line-too-long

+import os

+

+

+# ignore bnb warnings

+os.environ["BITSANDBYTES_NOWELCOME"] = "1"

+# os.environ["HF_HUB_DISABLE_PROGRESS_BARS"] = "1"

+__version__ = "0.6.16.dev0"

diff --git a/autotrain-advanced/src/autotrain/app.py b/autotrain-advanced/src/autotrain/app.py

new file mode 100644

index 0000000000000000000000000000000000000000..24cd86021512f2bba7c3c7d676d54a5275cc3bc4

--- /dev/null

+++ b/autotrain-advanced/src/autotrain/app.py

@@ -0,0 +1,965 @@

+import json

+import os

+import random

+import string

+import zipfile

+

+import gradio as gr

+import pandas as pd

+from huggingface_hub import list_models

+from loguru import logger

+

+from autotrain.dataset import AutoTrainDataset, AutoTrainDreamboothDataset, AutoTrainImageClassificationDataset

+from autotrain.languages import SUPPORTED_LANGUAGES

+from autotrain.params import Params

+from autotrain.project import Project

+from autotrain.utils import get_project_cost, get_user_token, user_authentication

+

+

+APP_TASKS = {

+ "Natural Language Processing": ["Text Classification", "LLM Finetuning"],

+ # "Tabular": TABULAR_TASKS,

+ "Computer Vision": ["Image Classification", "Dreambooth"],

+}

+

+APP_TASKS_MAPPING = {

+ "Text Classification": "text_multi_class_classification",

+ "LLM Finetuning": "lm_training",

+ "Image Classification": "image_multi_class_classification",

+ "Dreambooth": "dreambooth",

+}

+

+APP_TASK_TYPE_MAPPING = {

+ "text_classification": "Natural Language Processing",

+ "lm_training": "Natural Language Processing",

+ "image_classification": "Computer Vision",

+ "dreambooth": "Computer Vision",

+}

+

+ALLOWED_FILE_TYPES = [

+ ".csv",

+ ".CSV",

+ ".jsonl",

+ ".JSONL",

+ ".zip",

+ ".ZIP",

+ ".png",

+ ".PNG",

+ ".jpg",

+ ".JPG",

+ ".jpeg",

+ ".JPEG",

+]

+

+

+def _login_user(user_token):

+ user_info = user_authentication(token=user_token)

+ username = user_info["name"]

+

+ user_can_pay = user_info["canPay"]

+ orgs = user_info["orgs"]

+

+ valid_orgs = [org for org in orgs if org["canPay"] is True]

+ valid_orgs = [org for org in valid_orgs if org["roleInOrg"] in ("admin", "write")]

+ valid_orgs = [org["name"] for org in valid_orgs]

+

+ valid_can_pay = [username] + valid_orgs if user_can_pay else valid_orgs

+ who_is_training = [username] + [org["name"] for org in orgs]

+ return user_token, valid_can_pay, who_is_training
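The filtering in `_login_user` can be tried in isolation. This is a minimal sketch: `payable_accounts` is a hypothetical standalone restatement of the `valid_can_pay` logic, and the sample payload is invented. Its shape is inferred only from the keys that `_login_user` reads (`name`, `canPay`, `orgs`, `roleInOrg`), not from the API's documented response.

```python
def payable_accounts(user_info):
    """Return account names that can be billed: the user (if able to pay)
    plus organizations that can pay and where the user is admin or writer."""
    username = user_info["name"]
    valid_orgs = [
        org["name"]
        for org in user_info["orgs"]
        if org["canPay"] is True and org["roleInOrg"] in ("admin", "write")
    ]
    return [username] + valid_orgs if user_info["canPay"] else valid_orgs


# Hypothetical payload for illustration only.
sample_user_info = {
    "name": "alice",
    "canPay": True,
    "orgs": [
        {"name": "acme", "canPay": True, "roleInOrg": "admin"},
        {"name": "readers", "canPay": True, "roleInOrg": "read"},
        {"name": "nopay", "canPay": False, "roleInOrg": "write"},
    ],
}

print(payable_accounts(sample_user_info))
```

Note that `who_is_training` in `_login_user` is not filtered this way: it lists the user and every organization, regardless of payment status or role.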

+

+

+def _update_task_type(project_type):

+ return gr.Dropdown.update(

+ value=APP_TASKS[project_type][0],

+ choices=APP_TASKS[project_type],

+ visible=True,

+ )

+

+

+def _update_model_choice(task, autotrain_backend):

+ # TODO: add tabular and remember, for tabular, we only support AutoTrain

+ if autotrain_backend.lower() != "huggingface internal":

+ model_choice = ["HuggingFace Hub"]

+ return gr.Dropdown.update(

+ value=model_choice[0],

+ choices=model_choice,

+ visible=True,

+ )

+

+ if task == "LLM Finetuning":

+ model_choice = ["HuggingFace Hub"]

+ else:

+ model_choice = ["AutoTrain", "HuggingFace Hub"]

+

+ return gr.Dropdown.update(

+ value=model_choice[0],

+ choices=model_choice,

+ visible=True,

+ )

+

+

+def _update_file_type(task):

+ task = APP_TASKS_MAPPING[task]

+ if task in ("text_multi_class_classification", "lm_training"):

+ return gr.Radio.update(

+ value="CSV",

+ choices=["CSV", "JSONL"],

+ visible=True,

+ )

+ elif task == "image_multi_class_classification":

+ return gr.Radio.update(

+ value="ZIP",

+ choices=["Image Subfolders", "ZIP"],

+ visible=True,

+ )

+ elif task == "dreambooth":

+ return gr.Radio.update(

+ value="ZIP",

+ choices=["Image Folder", "ZIP"],

+ visible=True,

+ )

+ else:

+ raise NotImplementedError

+

+

+def _update_param_choice(model_choice, autotrain_backend):

+ logger.info(f"model_choice: {model_choice}")

+ choices = ["AutoTrain", "Manual"] if model_choice == "HuggingFace Hub" else ["AutoTrain"]

+ choices = ["Manual"] if autotrain_backend != "HuggingFace Internal" else choices

+ return gr.Dropdown.update(

+ value=choices[0],

+ choices=choices,

+ visible=True,

+ )

+

+

+def _project_type_update(project_type, task_type, autotrain_backend):

+ logger.info(f"project_type: {project_type}, task_type: {task_type}")

+ task_choices_update = _update_task_type(project_type)

+ model_choices_update = _update_model_choice(task_choices_update["value"], autotrain_backend)

+ param_choices_update = _update_param_choice(model_choices_update["value"], autotrain_backend)

+ return [

+ task_choices_update,

+ model_choices_update,

+ param_choices_update,

+ _update_hub_model_choices(task_choices_update["value"], model_choices_update["value"]),

+ ]

+

+

+def _task_type_update(task_type, autotrain_backend):

+ logger.info(f"task_type: {task_type}")

+ model_choices_update = _update_model_choice(task_type, autotrain_backend)

+ param_choices_update = _update_param_choice(model_choices_update["value"], autotrain_backend)

+ return [

+ model_choices_update,

+ param_choices_update,

+ _update_hub_model_choices(task_type, model_choices_update["value"]),

+ ]

+

+

+def _update_col_map(training_data, task):

+ task = APP_TASKS_MAPPING[task]

+ if task == "text_multi_class_classification":

+ data_cols = pd.read_csv(training_data[0].name, nrows=2).columns.tolist()

+ return [

+ gr.Dropdown.update(visible=True, choices=data_cols, label="Map `text` column", value=data_cols[0]),

+ gr.Dropdown.update(visible=True, choices=data_cols, label="Map `target` column", value=data_cols[1]),

+ gr.Text.update(visible=False),

+ ]

+ elif task == "lm_training":

+ data_cols = pd.read_csv(training_data[0].name, nrows=2).columns.tolist()

+ return [

+ gr.Dropdown.update(visible=True, choices=data_cols, label="Map `text` column", value=data_cols[0]),

+ gr.Dropdown.update(visible=False),

+ gr.Text.update(visible=False),

+ ]

+ elif task == "dreambooth":

+ return [

+ gr.Dropdown.update(visible=False),

+ gr.Dropdown.update(visible=False),

+ gr.Text.update(visible=True, label="Concept Token", interactive=True),

+ ]

+ else:

+ return [

+ gr.Dropdown.update(visible=False),

+ gr.Dropdown.update(visible=False),

+ gr.Text.update(visible=False),

+ ]

+

+

+def _estimate_costs(

+ training_data, validation_data, task, user_token, autotrain_username, training_params_txt, autotrain_backend

+):

+ if autotrain_backend.lower() != "huggingface internal":

+ return [

+ gr.Markdown.update(

+ value="Cost estimation is not available for this backend",

+ visible=True,

+ ),

+ gr.Number.update(visible=False),

+ ]

+ try:

+ logger.info("Estimating costs....")

+ if training_data is None:

+ return [

+ gr.Markdown.update(

+ value="Could not estimate cost. Please add training data",

+ visible=True,

+ ),

+ gr.Number.update(visible=False),

+ ]

+ if validation_data is None:

+ validation_data = []

+

+ training_params = json.loads(training_params_txt)

+ if len(training_params) == 0:

+ return [

+ gr.Markdown.update(

+ value="Could not estimate cost. Please add at least one job",

+ visible=True,

+ ),

+ gr.Number.update(visible=False),

+ ]

+ elif len(training_params) == 1:

+ if "num_models" in training_params[0]:

+ num_models = training_params[0]["num_models"]

+ else:

+ num_models = 1

+ else:

+ num_models = len(training_params)

+ task = APP_TASKS_MAPPING[task]

+ num_samples = 0

+ logger.info("Estimating number of samples")

+ if task in ("text_multi_class_classification", "lm_training"):

+ for _f in training_data:

+ num_samples += pd.read_csv(_f.name).shape[0]

+ for _f in validation_data:

+ num_samples += pd.read_csv(_f.name).shape[0]

+ elif task == "image_multi_class_classification":

+ logger.info(f"training_data: {training_data}")

+ if len(training_data) > 1:

+ return [

+ gr.Markdown.update(

+ value="Only one training file is supported for image classification",

+ visible=True,

+ ),

+ gr.Number.update(visible=False),

+ ]

+ if len(validation_data) > 1:

+ return [

+ gr.Markdown.update(

+ value="Only one validation file is supported for image classification",

+ visible=True,

+ ),

+ gr.Number.update(visible=False),

+ ]

+ for _f in training_data:

+ zip_ref = zipfile.ZipFile(_f.name, "r")

+ for _ in zip_ref.namelist():

+ num_samples += 1

+ for _f in validation_data:

+ zip_ref = zipfile.ZipFile(_f.name, "r")

+ for _ in zip_ref.namelist():

+ num_samples += 1

+ elif task == "dreambooth":

+ num_samples = len(training_data)

+ else:

+ raise NotImplementedError

+

+ logger.info(f"Estimating costs for: num_models: {num_models}, task: {task}, num_samples: {num_samples}")

+ estimated_cost = get_project_cost(

+ username=autotrain_username,

+ token=user_token,

+ task=task,

+ num_samples=num_samples,

+ num_models=num_models,

+ )

+ logger.info(f"Estimated_cost: {estimated_cost}")

+ return [

+ gr.Markdown.update(

+ value=f"Estimated cost: ${estimated_cost:.2f}. Note: clicking on 'Create Project' will start training and incur charges!",

+ visible=True,

+ ),

+ gr.Number.update(visible=False),

+ ]

+ except Exception as e:

+ logger.error(e)

+ logger.error("Could not estimate cost, check inputs")

+ return [

+ gr.Markdown.update(

+ value="Could not estimate cost, check inputs",

+ visible=True,

+ ),

+ gr.Number.update(visible=False),

+ ]
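The rule `_estimate_costs` uses to derive the number of models from the jobs JSON can be isolated for testing. This is a minimal sketch: `count_models` is a hypothetical helper that mirrors the branch logic above, and it raises an exception where the UI code returns a message instead.

```python
import json


def count_models(training_params_txt):
    """Derive the number of models from a JSON list of job parameter dicts."""
    training_params = json.loads(training_params_txt)
    if len(training_params) == 0:
        raise ValueError("at least one job is required")
    if len(training_params) == 1:
        # A single job may carry `num_models` (AutoTrain mode); otherwise it is one model.
        return training_params[0].get("num_models", 1)
    # Several manually added jobs: one model per job.
    return len(training_params)


print(count_models('[{"num_models": 5}]'))
print(count_models('[{"learning_rate": 1e-5}, {"learning_rate": 3e-5}]'))
```

`_create_project` applies the same distinction: a single job containing `num_models` is treated as AutoTrain parameter selection, and everything else as manual.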

+

+

+def get_job_params(param_choice, training_params, task):

+ if param_choice == "autotrain":

+ if len(training_params) > 1:

+ raise ValueError("❌ Only one job parameter is allowed for AutoTrain.")

+ training_params[0].update({"task": task})

+ elif param_choice.lower() == "manual":

+ for i in range(len(training_params)):

+ training_params[i].update({"task": task})

+ if "hub_model" in training_params[i]:

+ # remove hub_model from training_params

+ training_params[i].pop("hub_model")

+ return training_params

+

+

+def _update_project_name():

+ random_project_name = "-".join(

+ ["".join(random.choices(string.ascii_lowercase + string.digits, k=4)) for _ in range(3)]

+ )

+ # check if training tracker exists

+ if os.path.exists(os.path.join("/tmp", "training")):

+ return [

+ gr.Text.update(value=random_project_name, visible=True, interactive=True),

+ gr.Button.update(interactive=False),

+ ]

+ return [

+ gr.Text.update(value=random_project_name, visible=True, interactive=True),

+ gr.Button.update(interactive=True),

+ ]
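The naming scheme in `_update_project_name` (three groups of four lowercase alphanumeric characters joined by hyphens) can be reproduced without Gradio. This is a minimal sketch: `random_project_name` is a hypothetical helper, and the `rng` parameter is an addition for reproducible testing that the original function does not have.

```python
import random
import string


def random_project_name(groups=3, group_length=4, rng=random):
    """Return a name such as 'ab12-cd34-ef56' built from lowercase letters and digits."""
    alphabet = string.ascii_lowercase + string.digits
    return "-".join(
        "".join(rng.choices(alphabet, k=group_length)) for _ in range(groups)
    )


# A seeded generator gives the same name on every run.
name = random_project_name(rng=random.Random(0))
print(name)
```

With the defaults there are 36 ** 12 possible names, so collisions are unlikely but not impossible, and nothing in this sketch checks for an existing project of the same name.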

+

+

+def _update_hub_model_choices(task, model_choice):

+ task = APP_TASKS_MAPPING[task]

+ logger.info(f"Updating hub model choices for task: {task}, model_choice: {model_choice}")

+ if model_choice.lower() == "autotrain":

+ return gr.Dropdown.update(

+ visible=False,

+ interactive=False,

+ )

+ if task == "text_multi_class_classification":

+ hub_models1 = list_models(filter="fill-mask", sort="downloads", direction=-1, limit=100)

+ hub_models2 = list_models(filter="text-classification", sort="downloads", direction=-1, limit=100)

+ hub_models = list(hub_models1) + list(hub_models2)

+ elif task == "lm_training":

+ hub_models = list(list_models(filter="text-generation", sort="downloads", direction=-1, limit=100))

+ elif task == "image_multi_class_classification":

+ hub_models = list(list_models(filter="image-classification", sort="downloads", direction=-1, limit=100))

+ elif task == "dreambooth":

+ hub_models = list(list_models(filter="text-to-image", sort="downloads", direction=-1, limit=100))

+ else:

+ raise NotImplementedError

+ # sort by number of downloads in descending order

+ hub_models = [{"id": m.modelId, "downloads": m.downloads} for m in hub_models if m.private is False]

+ hub_models = sorted(hub_models, key=lambda x: x["downloads"], reverse=True)

+

+ if task == "dreambooth":

+ choices = ["stabilityai/stable-diffusion-xl-base-1.0"] + [m["id"] for m in hub_models]

+ value = choices[0]

+ return gr.Dropdown.update(

+ choices=choices,

+ value=value,

+ visible=True,

+ interactive=True,

+ )

+

+ return gr.Dropdown.update(

+ choices=[m["id"] for m in hub_models],

+ value=hub_models[0]["id"],

+ visible=True,

+ interactive=True,

+ )

+

+

+def _update_backend(backend):

+ if backend.lower() != "huggingface internal":

+ return [

+ gr.Dropdown.update(

+ visible=True,

+ interactive=True,

+ choices=["HuggingFace Hub"],

+ value="HuggingFace Hub",

+ ),

+ gr.Dropdown.update(

+ visible=True,

+ interactive=True,

+ choices=["Manual"],

+ value="Manual",

+ ),

+ ]

+ return [

+ gr.Dropdown.update(

+ visible=True,

+ interactive=True,

+ ),

+ gr.Dropdown.update(

+ visible=True,

+ interactive=True,

+ ),

+ ]

+

+

+def _create_project(

+ autotrain_username,

+ valid_can_pay,

+ project_name,

+ user_token,

+ task,

+ training_data,

+ validation_data,

+ col_map_text,

+ col_map_label,

+ concept_token,

+ training_params_txt,

+ hub_model,

+ estimated_cost,

+ autotrain_backend,

+):

+ task = APP_TASKS_MAPPING[task]

+ valid_can_pay = valid_can_pay.split(",")

+ can_pay = autotrain_username in valid_can_pay

+ logger.info(f"🚨🚨🚨Creating project: {project_name}")

+ logger.info(f"🚨Task: {task}")

+ logger.info(f"🚨Training data: {training_data}")

+ logger.info(f"🚨Validation data: {validation_data}")

+ logger.info(f"🚨Training params: {training_params_txt}")

+ logger.info(f"🚨Hub model: {hub_model}")

+ logger.info(f"🚨Estimated cost: {estimated_cost}")

+ logger.info(f"🚨Can pay: {can_pay}")

+

+ if can_pay is False and estimated_cost > 0:

+ raise gr.Error("❌ You do not have enough credits to create this project. Please add a valid payment method.")

+

+ training_params = json.loads(training_params_txt)

+ if len(training_params) == 0:

+ raise gr.Error("Please add at least one job")

+ elif len(training_params) == 1:

+ if "num_models" in training_params[0]:

+ param_choice = "autotrain"

+ else:

+ param_choice = "manual"

+ else:

+ param_choice = "manual"

+

+ if task == "image_multi_class_classification":

+ training_data = training_data[0].name

+ if validation_data is not None:

+ validation_data = validation_data[0].name

+ dset = AutoTrainImageClassificationDataset(

+ train_data=training_data,

+ token=user_token,

+ project_name=project_name,

+ username=autotrain_username,

+ valid_data=validation_data,

+ percent_valid=None, # TODO: add to UI

+ )

+ elif task == "text_multi_class_classification":

+ training_data = [f.name for f in training_data]

+ if validation_data is None:

+ validation_data = []

+ else:

+ validation_data = [f.name for f in validation_data]

+ dset = AutoTrainDataset(

+ train_data=training_data,

+ task=task,

+ token=user_token,

+ project_name=project_name,

+ username=autotrain_username,

+ column_mapping={"text": col_map_text, "label": col_map_label},

+ valid_data=validation_data,

+ percent_valid=None, # TODO: add to UI

+ )

+ elif task == "lm_training":

+ training_data = [f.name for f in training_data]

+ if validation_data is None:

+ validation_data = []

+ else:

+ validation_data = [f.name for f in validation_data]

+ dset = AutoTrainDataset(

+ train_data=training_data,

+ task=task,

+ token=user_token,

+ project_name=project_name,

+ username=autotrain_username,

+ column_mapping={"text": col_map_text},

+ valid_data=validation_data,

+ percent_valid=None, # TODO: add to UI

+ )

+ elif task == "dreambooth":

+ dset = AutoTrainDreamboothDataset(

+ concept_images=training_data,

+ concept_name=concept_token,

+ token=user_token,

+ project_name=project_name,

+ username=autotrain_username,

+ )

+ else:

+ raise NotImplementedError

+

+ dset.prepare()

+ project = Project(

+ dataset=dset,

+ param_choice=param_choice,

+ hub_model=hub_model,

+ job_params=get_job_params(param_choice, training_params, task),

+ )

+ if autotrain_backend.lower() == "huggingface internal":

+ project_id = project.create()

+ project.approve(project_id)

+ return gr.Markdown.update(

+ value=f"Project created successfully. Monitor progress on the [dashboard](https://ui.autotrain.huggingface.co/{project_id}/trainings).",

+ visible=True,

+ )

+ else:

+ project.create(local=True)

+

+

+def get_variable_name(var, namespace):

+ for name in namespace:

+ if namespace[name] is var:

+ return name

+ return None

+

+

+def disable_create_project_button():

+ return gr.Button.update(interactive=False)

+

+

+def main():

+ with gr.Blocks(theme="freddyaboulton/dracula_revamped") as demo:

+ gr.Markdown("## 🤗 AutoTrain Advanced")

+ user_token = os.environ.get("HF_TOKEN", "")

+

+ if len(user_token) == 0:

+ user_token = get_user_token()

+

+ if user_token is None:

+ gr.Markdown(

+ """Please log in with a write [token](https://huggingface.co/settings/tokens).

+ Pass your HF token in an environment variable called `HF_TOKEN` and then restart this app.

+ """

+ )

+ return demo

+

+ user_token, valid_can_pay, who_is_training = _login_user(user_token)

+

+ if user_token is None or len(user_token) == 0:

+ raise gr.Error("Please log in with a write token.")

+

+ user_token = gr.Textbox(

+ value=user_token, type="password", lines=1, max_lines=1, visible=False, interactive=False

+ )

+ valid_can_pay = gr.Textbox(value=",".join(valid_can_pay), visible=False, interactive=False)

+ with gr.Row():

+ with gr.Column():

+ with gr.Row():

+ autotrain_username = gr.Dropdown(

+ label="AutoTrain Username",

+ choices=who_is_training,

+ value=who_is_training[0] if who_is_training else "",

+ )

+ autotrain_backend = gr.Dropdown(

+ label="AutoTrain Backend",

+ choices=["HuggingFace Internal", "HuggingFace Spaces"],

+ value="HuggingFace Internal",

+ interactive=True,

+ )

+ with gr.Row():