File size: 5,064 Bytes

4fba576 3a83bb1 187ecfd 4935118 c1e0f7d 4935118 ddf1bf0 c1e0f7d 4935118 4fba576 37e5936 4fba576 37e5936 97f4c39 37e5936 97f4c39 37e5936 97f4c39 37e5936 4fba576 37e5936 4fba576 37e5936 4fba576 | 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 | ---

license: mit

---

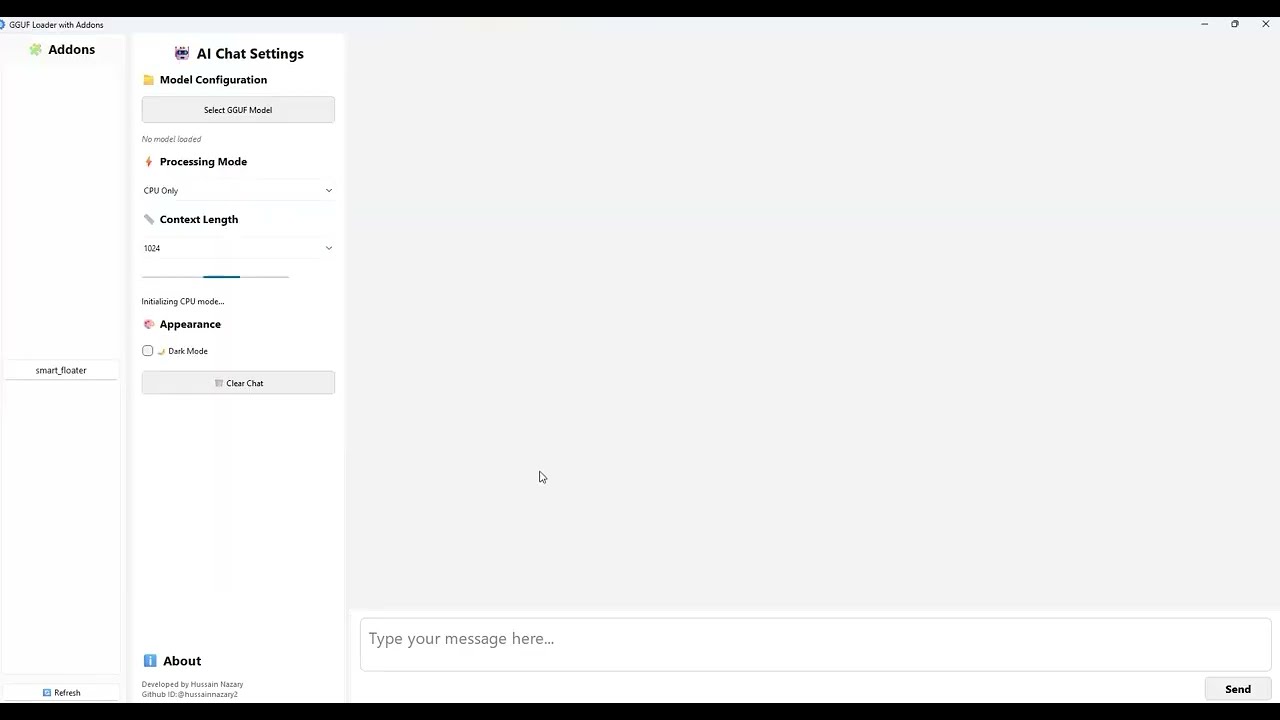

**GGUF Loader is a GUI-first, plugin-based launcher for running local LLMs — featuring a built-in floating assistant that gives you instant AI access on top of any window.**

---

## 🧠 Problem

Open-source LLMs are becoming faster and smarter, but the tools around them are still broken. Most setups require:

- Command-line knowledge

- Model management across multiple platforms

- No way to extend with features like summarization or RAG

- No native way to interact with AI while working

This makes local AI powerful in theory, but unusable for most people in practice.

---

## 💡 Solution

**GGUF Loader** brings everything together:

- One-click model loading with GGUF support

- A modern desktop interface anyone can use

- Built-in **floating button** that allows AI to follow your workflow

- A growing **plugin system** — inspired by Blender — so users can extend, customize, and share features

From loading a Mistral model to summarizing your documents or running agents over folders, GGUF Loader is designed to be your personal AI operating system.

---

## 🎯 Vision

> We believe the future of AI is **local**, **modular**, and **user-owned**.

> GGUF Loader is building the user interface layer for that future — a plug-and-play AI desktop engine that works fully offline, built around your workflows and privacy.

---

## 🔧 What’s Next

- Public plugin SDK

- Pro features: drag-drop RAG builder, context memory, advanced floating tools

- Addon marketplace (free and paid)

- Community system for sharing tools

---

## 🛠️ Dev Philosophy

- 💻 Local-first, no cloud dependency

- 🧩 Modular and hackable

- 🖱️ Usable by non-devs

- ⚡ Fast to launch, fast to load

---

> GGUF Loader is open-source and in active development.

> If you're an investor, contributor, or power user — [get in touch](mailto:hussainnazary2@gmail.com) or star the repo to follow progress.

Update (v2.0.1): GGUF Loader now includes a powerful floating assistant button that lets you interact with any text instantly, system-wide. Plus, it now supports an addon system, allowing developers to extend its capabilities just like a plugin-based platform. These features make GGUF Loader even more flexible and interactive for local AI workflows. Try the new version now!

# 🧠 GGUF Loader Quickstart

### 📦 1. Install GGUF Loader via pip

```bash

pip install ggufloader

```

### 🚀 2. Launch the App

After installation, run the following command in your terminal:

```bash

ggufloader

```

This will start the GGUF Loader interface. You can now load and chat with any GGUF model locally.

## 🧩 🎬 Demo Video: Addon System + Floating Tool in Local LLM (v2.0.1 Update)

[](https://www.youtube.com/watch?v=5lQui7EeUe0)

> Discover how to supercharge your local AI workflows using the new floating addon system! No coding needed. Works offline.

> Let me know if you want to support GUI launching, system tray, or shortcuts too.

---

## 🔽 Download GGUF Models

> ⚡ Click a link below to download the model file directly (no Hugging Face page in between).

### 🧠 GPT-OSS Models (Open Source GPTs)

> High-quality, Apache 2.0 licensed, reasoning-focused models for local/enterprise use.

#### 🧠 GPT-OSS 120B (Dense)

- [⬇️ Download Q4_K (46.2 GB)](https://huggingface.co/lmstudio-community/gpt-oss-120b-GGUF/resolve/main/gpt-oss-120b-MXFP4-00001-of-00002.gguf)

#### 🧠 GPT-OSS 20B (Dense)

- [⬇️ Download Q4_K (7.34 GB)](https://huggingface.co/lmstudio-community/gpt-oss-20b-GGUF/resolve/main/gpt-oss-20b-MXFP4.gguf)

---

### 🧠 Mistral-7B Instruct

- [⬇️ Download Q4_0 (4.23 GB)](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.1-GGUF/resolve/main/mistral-7b-instruct-v0.1.Q4_0.gguf)

- [⬇️ Download Q6_K (6.23 GB)](https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.1-GGUF/resolve/main/mistral-7b-instruct-v0.1.Q6_K.gguf)

### 🧠 Qwen 1.5-7B Chat

- [⬇️ Download Q4_K (4.88 GB)](https://huggingface.co/TheBloke/Qwen1.5-7B-Chat-GGUF/resolve/main/qwen1_5-7b-chat-q4_k.gguf)

- [⬇️ Download Q6_K (6.83 GB)](https://huggingface.co/TheBloke/Qwen1.5-7B-Chat-GGUF/resolve/main/qwen1_5-7b-chat-q6_k.gguf)

### 🧠 DeepSeek 7B Chat

- [⬇️ Download Q4_0 (4.87 GB)](https://huggingface.co/TheBloke/DeepSeek-7B-Chat-GGUF/resolve/main/deepseek-7b-chat.Q4_0.gguf)

- [⬇️ Download Q8_0 (9.33 GB)](https://huggingface.co/TheBloke/DeepSeek-7B-Chat-GGUF/resolve/main/deepseek-7b-chat.Q8_0.gguf)

### 🧠 LLaMA 3 8B Instruct

- [⬇️ Download Q4_0 (4.68 GB)](https://huggingface.co/TheBloke/Llama-3-8B-Instruct-GGUF/resolve/main/llama-3-8b-instruct.Q4_0.gguf)

- [⬇️ Download Q6_K (6.91 GB)](https://huggingface.co/TheBloke/Llama-3-8B-Instruct-GGUF/resolve/main/llama-3-8b-instruct.Q6_K.gguf)

---

### 🗂️ More Model Collections

- [🧠 TheBloke’s GGUF Model Collection](https://local-ai-zone.github.io)

- [🌍 GGUF Community Collection](https://local-ai-zone.github.io)

|