Upload 7 files

- .gitattributes +1 -0

- README.md +258 -3

- added_tokens.json +32 -0

- merges.txt +0 -0

- special_tokens_map.json +39 -0

- tokenizer.json +3 -0

- tokenizer_config.json +279 -0

- vocab.json +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text
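The added rule routes `tokenizer.json` through Git LFS. As a rough illustration of which file names the four patterns visible in this hunk would catch, the sketch below approximates the matching with Python's `fnmatch`. This is an assumption: real gitattributes matching follows gitignore-style rules, so the approximation only holds for simple basename patterns like these. The helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatchcase

# Patterns visible in the hunk above; the last one is the newly added rule.
LFS_PATTERNS = ["*.zip", "*.zst", "*tfevents*", "tokenizer.json"]


def is_lfs_tracked(filename: str) -> bool:
    """Return True if the basename matches any pattern (fnmatch approximation)."""
    return any(fnmatchcase(filename, pattern) for pattern in LFS_PATTERNS)


if __name__ == "__main__":
    for name in ["tokenizer.json", "vocab.json", "merges.txt", "archive.zip"]:
        print(f"{name}: {is_lfs_tracked(name)}")
```

Under this approximation only `tokenizer.json` among the uploaded tokenizer files is stored as an LFS pointer, which is consistent with the three-line pointer diff shown for that file.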

README.md

CHANGED

@@ -1,3 +1,258 @@
----
-license: apache-2.0
-

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

datasets:

|

| 4 |

+

- AIDC-AI/Ovis-dataset

|

| 5 |

+

library_name: transformers

|

| 6 |

+

tags:

|

| 7 |

+

- MLLM

|

| 8 |

+

pipeline_tag: image-text-to-text

|

| 9 |

+

language:

|

| 10 |

+

- en

|

| 11 |

+

- zh

|

| 12 |

+

---

|

| 13 |

+

|

| 14 |

+

# Ovis2-1B

|

| 15 |

+

<div align="center">

|

| 16 |

+

<img src=https://cdn-uploads.huggingface.co/production/uploads/637aebed7ce76c3b834cea37/3IK823BZ8w-mz_QfeYkDn.png width="30%"/>

|

| 17 |

+

</div>

|

| 18 |

+

|

| 19 |

+

## Introduction

|

| 20 |

+

[GitHub](https://github.com/AIDC-AI/Ovis) | [Paper](https://arxiv.org/abs/2405.20797)

|

| 21 |

+

|

| 22 |

+

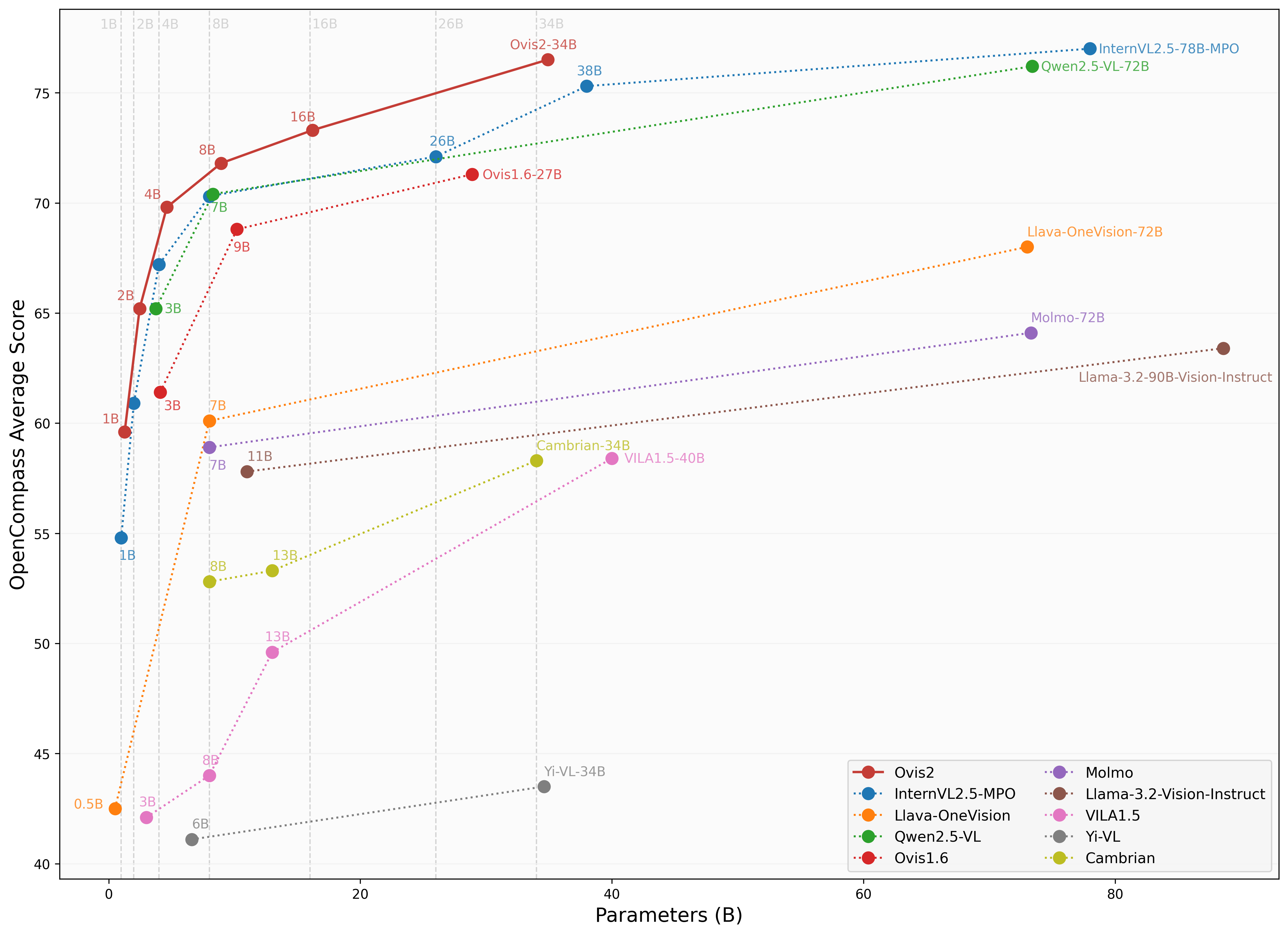

We are pleased to announce the release of **Ovis2**, our latest advancement in multi-modal large language models (MLLMs). Ovis2 inherits the innovative architectural design of the Ovis series, aimed at structurally aligning visual and textual embeddings. As the successor to Ovis1.6, Ovis2 incorporates significant improvements in both dataset curation and training methodologies.

|

| 23 |

+

|

+**Key Features**:
+
+- **Small Model Performance**: Optimized training strategies enable small-scale models to achieve higher capability density, demonstrating cross-tier leading advantages.
+
+- **Enhanced Reasoning Capabilities**: Significantly strengthens Chain-of-Thought (CoT) reasoning abilities through the combination of instruction tuning and preference learning.
+
+- **Video and Multi-Image Processing**: Video and multi-image data are incorporated into training to enhance the ability to handle complex visual information across frames and images.
+
+- **Multilingual Support and OCR**: Enhances multilingual OCR beyond English and Chinese and improves structured data extraction from complex visual elements like tables and charts.
+
+<div align="center">
+<img src="https://cdn-uploads.huggingface.co/production/uploads/637aebed7ce76c3b834cea37/XB-vgzDL6FshrSNGyZvzc.png" width="100%" />
+</div>
+

+## Model Zoo
+
+| Ovis MLLMs | ViT | LLM | Model Weights | Demo |
+|:-----------|:-----------------------:|:---------------------:|:-------------------------------------------------------:|:--------------------------------------------------------:|
+| Ovis2-1B | aimv2-large-patch14-448 | Qwen2.5-0.5B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-1B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-1B) |
+| Ovis2-2B | aimv2-large-patch14-448 | Qwen2.5-1.5B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-2B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-2B) |
+| Ovis2-4B | aimv2-huge-patch14-448 | Qwen2.5-3B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-4B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-4B) |
+| Ovis2-8B | aimv2-huge-patch14-448 | Qwen2.5-7B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-8B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-8B) |
+| Ovis2-16B | aimv2-huge-patch14-448 | Qwen2.5-14B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-16B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-16B) |
+| Ovis2-34B | aimv2-1B-patch14-448 | Qwen2.5-32B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-34B) | - |
+

+## Performance
+We use [VLMEvalKit](https://github.com/open-compass/VLMEvalKit), as employed in the OpenCompass [multimodal](https://rank.opencompass.org.cn/leaderboard-multimodal) and [reasoning](https://rank.opencompass.org.cn/leaderboard-multimodal-reasoning) leaderboard, to evaluate Ovis2.
+
+
+
+### Image Benchmark
+| Benchmark | Qwen2.5-VL-3B | SAIL-VL-2B | InternVL2.5-2B-MPO | Ovis1.6-3B | InternVL2.5-1B-MPO | Ovis2-1B | Ovis2-2B |
+|:-----------------------------|:---------------:|:------------:|:--------------------:|:------------:|:--------------------:|:----------:|:----------:|
+| MMBench-V1.1<sub>test</sub> | **77.1** | 73.6 | 70.7 | 74.1 | 65.8 | 68.4 | 76.9 |
+| MMStar | 56.5 | 56.5 | 54.9 | 52.0 | 49.5 | 52.1 | **56.7** |
+| MMMU<sub>val</sub> | **51.4** | 44.1 | 44.6 | 46.7 | 40.3 | 36.1 | 45.6 |
+| MathVista<sub>testmini</sub> | 60.1 | 62.8 | 53.4 | 58.9 | 47.7 | 59.4 | **64.1** |
+| HallusionBench | 48.7 | 45.9 | 40.7 | 43.8 | 34.8 | 45.2 | **50.2** |
+| AI2D | 81.4 | 77.4 | 75.1 | 77.8 | 68.5 | 76.4 | **82.7** |
+| OCRBench | 83.1 | 83.1 | 83.8 | 80.1 | 84.3 | **89.0** | 87.3 |
+| MMVet | 63.2 | 44.2 | **64.2** | 57.6 | 47.2 | 50.0 | 58.3 |
+| MMBench<sub>test</sub> | 78.6 | 77 | 72.8 | 76.6 | 67.9 | 70.2 | **78.9** |
+| MMT-Bench<sub>val</sub> | 60.8 | 57.1 | 54.4 | 59.2 | 50.8 | 55.5 | **61.7** |
+| RealWorldQA | 66.5 | 62 | 61.3 | **66.7** | 57 | 63.9 | 66.0 |
+| BLINK | **48.4** | 46.4 | 43.8 | 43.8 | 41 | 44.0 | 47.9 |
+| QBench | 74.4 | 72.8 | 69.8 | 75.8 | 63.3 | 71.3 | **76.2** |
+| ABench | 75.5 | 74.5 | 71.1 | 75.2 | 67.5 | 71.3 | **76.6** |
+| MTVQA | 24.9 | 20.2 | 22.6 | 21.1 | 21.7 | 23.7 | **25.6** |
+
+### Video Benchmark
+| Benchmark | Qwen2.5-VL-3B | InternVL2.5-2B | InternVL2.5-1B | Ovis2-1B | Ovis2-2B |
+| ------------------- |:-------------:|:--------------:|:--------------:|:---------:|:-------------:|
+| VideoMME(wo/w-subs) | **61.5/67.6** | 51.9 / 54.1 | 50.3 / 52.3 | 48.6/49.5 | 57.2/60.8 |
+| MVBench | 67.0 | **68.8** | 64.3 | 60.32 | 64.9 |
+| MLVU(M-Avg/G-Avg) | 68.2/- | 61.4/- | 57.3/- | 58.5/3.66 | **68.6**/3.86 |
+| MMBench-Video | **1.63** | 1.44 | 1.36 | 1.26 | 1.57 |
+| TempCompass | **64.4** | - | - | 51.43 | 62.64 |
+

+## Usage
+Below is a code snippet demonstrating how to run Ovis with various input types. For additional usage instructions, including inference wrapper and Gradio UI, please refer to [Ovis GitHub](https://github.com/AIDC-AI/Ovis?tab=readme-ov-file#inference).
+```bash
+pip install torch==2.4.0 transformers==4.46.2 numpy==1.25.0 pillow==10.3.0
+pip install flash-attn==2.7.0.post2 --no-build-isolation
+```

+```python
+import torch
+from PIL import Image
+from transformers import AutoModelForCausalLM
+
+# load model
+model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Ovis2-1B",
+                                             torch_dtype=torch.bfloat16,
+                                             multimodal_max_length=32768,
+                                             trust_remote_code=True).cuda()
+text_tokenizer = model.get_text_tokenizer()
+visual_tokenizer = model.get_visual_tokenizer()
+
+# single-image input
+image_path = '/data/images/example_1.jpg'
+images = [Image.open(image_path)]
+max_partition = 9
+text = 'Describe the image.'
+query = f'<image>\n{text}'
+
+## cot-style input
+# cot_suffix = "Provide a step-by-step solution to the problem, and conclude with 'the answer is' followed by the final solution."
+# image_path = '/data/images/example_1.jpg'
+# images = [Image.open(image_path)]
+# max_partition = 9
+# text = "What's the area of the shape?"
+# query = f'<image>\n{text}\n{cot_suffix}'
+
+## multiple-images input
+# image_paths = [
+#     '/data/images/example_1.jpg',
+#     '/data/images/example_2.jpg',
+#     '/data/images/example_3.jpg'
+# ]
+# images = [Image.open(image_path) for image_path in image_paths]
+# max_partition = 4
+# text = 'Describe each image.'
+# query = '\n'.join([f'Image {i+1}: <image>' for i in range(len(images))]) + '\n' + text
+
+## video input (require `pip install moviepy==1.0.3`)
+# from moviepy.editor import VideoFileClip
+# video_path = '/data/videos/example_1.mp4'
+# num_frames = 12
+# max_partition = 1
+# text = 'Describe the video.'
+# with VideoFileClip(video_path) as clip:
+#     total_frames = int(clip.fps * clip.duration)
+#     if total_frames <= num_frames:
+#         sampled_indices = range(total_frames)
+#     else:
+#         stride = total_frames / num_frames
+#         sampled_indices = [min(total_frames - 1, int((stride * i + stride * (i + 1)) / 2)) for i in range(num_frames)]
+#     frames = [clip.get_frame(index / clip.fps) for index in sampled_indices]
+#     frames = [Image.fromarray(frame, mode='RGB') for frame in frames]
+# images = frames
+# query = '\n'.join(['<image>'] * len(images)) + '\n' + text
+
+## text-only input
+# images = []
+# max_partition = None
+# text = 'Hello'
+# query = text
+
+# format conversation
+prompt, input_ids, pixel_values = model.preprocess_inputs(query, images, max_partition=max_partition)
+attention_mask = torch.ne(input_ids, text_tokenizer.pad_token_id)
+input_ids = input_ids.unsqueeze(0).to(device=model.device)
+attention_mask = attention_mask.unsqueeze(0).to(device=model.device)
+if pixel_values is not None:
+    pixel_values = pixel_values.to(dtype=visual_tokenizer.dtype, device=visual_tokenizer.device)
+pixel_values = [pixel_values]
+
+# generate output
+with torch.inference_mode():
+    gen_kwargs = dict(
+        max_new_tokens=1024,
+        do_sample=False,
+        top_p=None,
+        top_k=None,
+        temperature=None,
+        repetition_penalty=None,
+        eos_token_id=model.generation_config.eos_token_id,
+        pad_token_id=text_tokenizer.pad_token_id,
+        use_cache=True
+    )
+    output_ids = model.generate(input_ids, pixel_values=pixel_values, attention_mask=attention_mask, **gen_kwargs)[0]
+    output = text_tokenizer.decode(output_ids, skip_special_tokens=True)
+    print(f'Output:\n{output}')
+```
+
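The commented video and multi-image branches of the README snippet above build their inputs with two small pieces of logic: midpoint frame sampling and a numbered `<image>` prompt. The sketch below lifts both into standalone functions so they can be checked without a model or a video file. The function names `sample_frame_indices` and `build_multi_image_query` are hypothetical; the arithmetic and the string format are copied from the README code.

```python
from __future__ import annotations


def sample_frame_indices(total_frames: int, num_frames: int) -> list[int]:
    """Pick up to num_frames indices, one at the midpoint of each equal stride."""
    if total_frames <= num_frames:
        # Short clip: use every frame.
        return list(range(total_frames))
    stride = total_frames / num_frames
    return [
        min(total_frames - 1, int((stride * i + stride * (i + 1)) / 2))
        for i in range(num_frames)
    ]


def build_multi_image_query(num_images: int, text: str) -> str:
    """Prefix the question with one numbered <image> placeholder per image."""
    placeholders = "\n".join(f"Image {i + 1}: <image>" for i in range(num_images))
    return placeholders + "\n" + text


if __name__ == "__main__":
    print(sample_frame_indices(120, 12))
    print(build_multi_image_query(3, "Describe each image."))
```

Sampling at stride midpoints rather than stride starts avoids always selecting frame 0 and keeps the picks centered within each segment of the clip.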

+<details>
+<summary>Batch Inference</summary>
+
+```python
+import torch
+from PIL import Image
+from transformers import AutoModelForCausalLM
+
+# load model
+model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Ovis2-1B",
+                                             torch_dtype=torch.bfloat16,
+                                             multimodal_max_length=32768,
+                                             trust_remote_code=True).cuda()
+text_tokenizer = model.get_text_tokenizer()
+visual_tokenizer = model.get_visual_tokenizer()
+
+# preprocess inputs
+batch_inputs = [
+    ('/data/images/example_1.jpg', 'What colors dominate the image?'),
+    ('/data/images/example_2.jpg', 'What objects are depicted in this image?'),
+    ('/data/images/example_3.jpg', 'Is there any text in the image?')
+]
+
+batch_input_ids = []
+batch_attention_mask = []
+batch_pixel_values = []
+
+for image_path, text in batch_inputs:
+    image = Image.open(image_path)
+    query = f'<image>\n{text}'
+    prompt, input_ids, pixel_values = model.preprocess_inputs(query, [image], max_partition=9)
+    attention_mask = torch.ne(input_ids, text_tokenizer.pad_token_id)
+    batch_input_ids.append(input_ids.to(device=model.device))
+    batch_attention_mask.append(attention_mask.to(device=model.device))
+    batch_pixel_values.append(pixel_values.to(dtype=visual_tokenizer.dtype, device=visual_tokenizer.device))
+
+batch_input_ids = torch.nn.utils.rnn.pad_sequence([i.flip(dims=[0]) for i in batch_input_ids], batch_first=True,
+                                                  padding_value=0.0).flip(dims=[1])
+batch_input_ids = batch_input_ids[:, -model.config.multimodal_max_length:]
+batch_attention_mask = torch.nn.utils.rnn.pad_sequence([i.flip(dims=[0]) for i in batch_attention_mask],
+                                                       batch_first=True, padding_value=False).flip(dims=[1])
+batch_attention_mask = batch_attention_mask[:, -model.config.multimodal_max_length:]
+
+# generate outputs
+with torch.inference_mode():
+    gen_kwargs = dict(
+        max_new_tokens=1024,
+        do_sample=False,
+        top_p=None,
+        top_k=None,
+        temperature=None,
+        repetition_penalty=None,
+        eos_token_id=model.generation_config.eos_token_id,
+        pad_token_id=text_tokenizer.pad_token_id,
+        use_cache=True
+    )
+    output_ids = model.generate(batch_input_ids, pixel_values=batch_pixel_values, attention_mask=batch_attention_mask,
+                                **gen_kwargs)
+
+for i in range(len(batch_inputs)):
+    output = text_tokenizer.decode(output_ids[i], skip_special_tokens=True)
+    print(f'Output {i + 1}:\n{output}\n')
+```
+</details>
+
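The batch snippet above left-pads its sequences with a flip, `pad_sequence`, flip idiom and then keeps only the last `multimodal_max_length` positions. The plain-Python sketch below shows the same effect on lists, so the result is easy to inspect without tensors. The helper name `left_pad` is hypothetical, and this is an illustration of the idea rather than the code the model runs.

```python
from __future__ import annotations


def left_pad(sequences: list[list[int]], pad_value: int, max_length: int | None = None) -> list[list[int]]:
    """Pad every sequence on the left to the longest length, then keep the tail."""
    width = max(len(seq) for seq in sequences)
    padded = [[pad_value] * (width - len(seq)) + list(seq) for seq in sequences]
    if max_length is not None:
        # Mirrors the [:, -max_length:] slice: drop the oldest positions first.
        padded = [row[-max_length:] for row in padded]
    return padded


if __name__ == "__main__":
    batch = [[11, 12, 13], [21]]
    print(left_pad(batch, pad_value=0))
    print(left_pad(batch, pad_value=0, max_length=2))
```

Left padding keeps the final prompt token of every row in the last column, which is the position a decoder-only model continues from during batched generation.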

+## Citation
+If you find Ovis useful, please consider citing the paper
+```
+@article{lu2024ovis,
+  title={Ovis: Structural Embedding Alignment for Multimodal Large Language Model},
+  author={Shiyin Lu and Yang Li and Qing-Guo Chen and Zhao Xu and Weihua Luo and Kaifu Zhang and Han-Jia Ye},
+  year={2024},
+  journal={arXiv:2405.20797}
+}
+```
+
+## License
+This project is licensed under the [Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0.txt) (SPDX-License-Identifier: Apache-2.0).
+
+## Disclaimer
+We used compliance-checking algorithms during the training process, to ensure the compliance of the trained model to the best of our ability. Due to the complexity of the data and the diversity of language model usage scenarios, we cannot guarantee that the model is completely free of copyright issues or improper content. If you believe anything infringes on your rights or generates improper content, please contact us, and we will promptly address the matter.

added_tokens.json

ADDED

@@ -0,0 +1,32 @@
+{
+  "</img>": 151671,
+  "</tool_call>": 151658,
+  "<col>": 151669,
+  "<image>": 151665,
+  "<image_atom>": 151666,
+  "<image_pad>": 151672,
+  "<img>": 151667,
+  "<pre>": 151668,
+  "<row>": 151670,
+  "<tool_call>": 151657,
+  "<|box_end|>": 151649,
+  "<|box_start|>": 151648,
+  "<|endoftext|>": 151643,
+  "<|file_sep|>": 151664,
+  "<|fim_middle|>": 151660,
+  "<|fim_pad|>": 151662,
+  "<|fim_prefix|>": 151659,
+  "<|fim_suffix|>": 151661,
+  "<|im_end|>": 151645,
+  "<|im_start|>": 151644,
+  "<|image_pad|>": 151655,
+  "<|object_ref_end|>": 151647,
+  "<|object_ref_start|>": 151646,
+  "<|quad_end|>": 151651,
+  "<|quad_start|>": 151650,
+  "<|repo_name|>": 151663,
+  "<|video_pad|>": 151656,
+  "<|vision_end|>": 151653,
+  "<|vision_pad|>": 151654,
+  "<|vision_start|>": 151652
+}
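`added_tokens.json` maps token strings to IDs in alphabetical order, which hides how the IDs are laid out. The sketch below inverts the mapping, sorts by ID, and checks that the 30 entries form one contiguous block. Treating IDs from 151665 upward as the image-related additions on top of the base text vocabulary is an assumption drawn from the token names and from their grouping in `special_tokens_map.json`, not something this commit states.

```python
# Mapping copied from the added_tokens.json hunk above.
ADDED_TOKENS = {
    "</img>": 151671, "</tool_call>": 151658, "<col>": 151669,
    "<image>": 151665, "<image_atom>": 151666, "<image_pad>": 151672,
    "<img>": 151667, "<pre>": 151668, "<row>": 151670,
    "<tool_call>": 151657, "<|box_end|>": 151649, "<|box_start|>": 151648,
    "<|endoftext|>": 151643, "<|file_sep|>": 151664, "<|fim_middle|>": 151660,
    "<|fim_pad|>": 151662, "<|fim_prefix|>": 151659, "<|fim_suffix|>": 151661,
    "<|im_end|>": 151645, "<|im_start|>": 151644, "<|image_pad|>": 151655,
    "<|object_ref_end|>": 151647, "<|object_ref_start|>": 151646,
    "<|quad_end|>": 151651, "<|quad_start|>": 151650, "<|repo_name|>": 151663,
    "<|video_pad|>": 151656, "<|vision_end|>": 151653, "<|vision_pad|>": 151654,
    "<|vision_start|>": 151652,
}

# Invert to id -> token and order by ID.
id_to_token = {token_id: token for token, token_id in ADDED_TOKENS.items()}
sorted_ids = sorted(id_to_token)

# True when the IDs form one gap-free range.
is_contiguous = sorted_ids == list(range(sorted_ids[0], sorted_ids[-1] + 1))

# Assumption: IDs >= 151665 are the image-related additions.
image_tokens = [id_to_token[i] for i in sorted_ids if i >= 151665]

if __name__ == "__main__":
    print(f"{len(sorted_ids)} tokens, IDs {sorted_ids[0]}..{sorted_ids[-1]}, contiguous={is_contiguous}")
    print("image-related tokens:", image_tokens)
```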

merges.txt

ADDED

The diff for this file is too large to render.

special_tokens_map.json

ADDED

@@ -0,0 +1,39 @@
+{
+  "additional_special_tokens": [
+    "<|im_start|>",
+    "<|im_end|>",
+    "<|object_ref_start|>",
+    "<|object_ref_end|>",
+    "<|box_start|>",
+    "<|box_end|>",
+    "<|quad_start|>",
+    "<|quad_end|>",
+    "<|vision_start|>",
+    "<|vision_end|>",
+    "<|vision_pad|>",
+    "<|image_pad|>",
+    "<|video_pad|>",
+    "<col>",
+    "<image>",
+    "<image_atom>",
+    "<image_pad>",
+    "<img>",
+    "<pre>",
+    "<row>",
+    "</img>"
+  ],
+  "eos_token": {
+    "content": "<|im_end|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": {
+    "content": "<|endoftext|>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  }
+}
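`special_tokens_map.json` sets `<|im_end|>` as the end-of-sequence token and `<|endoftext|>` as the padding token; per `added_tokens.json` their IDs are 151645 and 151643. The sketch below shows, on plain lists, what those two roles mean in practice: padding positions are masked out of attention, and generated output is cut at the first end-of-sequence ID. The helper names are hypothetical, and the mask mirrors the `torch.ne(input_ids, pad_token_id)` call in the README without using tensors.

```python
from __future__ import annotations

PAD_TOKEN_ID = 151643  # "<|endoftext|>", the pad_token in special_tokens_map.json
EOS_TOKEN_ID = 151645  # "<|im_end|>", the eos_token in special_tokens_map.json


def attention_mask(input_ids: list[int], pad_token_id: int = PAD_TOKEN_ID) -> list[bool]:
    """True for real tokens, False for padding positions."""
    return [token_id != pad_token_id for token_id in input_ids]


def truncate_at_eos(output_ids: list[int], eos_token_id: int = EOS_TOKEN_ID) -> list[int]:
    """Keep the generated IDs that come before the first end-of-sequence token."""
    if eos_token_id in output_ids:
        return output_ids[: output_ids.index(eos_token_id)]
    return list(output_ids)


if __name__ == "__main__":
    print(attention_mask([PAD_TOKEN_ID, PAD_TOKEN_ID, 151644, 872]))
    print(truncate_at_eos([9906, 1879, EOS_TOKEN_ID, PAD_TOKEN_ID]))
```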

tokenizer.json

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:008a38870d99b0f4cb8219f88b3c215a23bf1a205d4039bf956a532a340a3aac
+size 11423368
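The three added lines are a Git LFS pointer, not the tokenizer itself: the repository stores only the spec version, the SHA-256 object ID, and the byte size of the real file. A minimal sketch of reading such a pointer follows; the function name `parse_lfs_pointer` and the output key names are hypothetical, and the parser assumes one space-separated key and value per line, as shown in the diff.

```python
# Pointer text copied from the tokenizer.json hunk above.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:008a38870d99b0f4cb8219f88b3c215a23bf1a205d4039bf956a532a340a3aac
size 11423368
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line and break the oid into algorithm and digest."""
    fields = dict(line.split(" ", 1) for line in text.splitlines() if line.strip())
    algorithm, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(POINTER_TEXT)

if __name__ == "__main__":
    size_mib = pointer["size_bytes"] / (1024 * 1024)
    print(f"{pointer['hash_algorithm']} digest {pointer['digest'][:12]}..., {size_mib:.1f} MiB")
```

After downloading the real `tokenizer.json`, comparing its SHA-256 digest and size against these fields is a simple integrity check.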

tokenizer_config.json

ADDED

@@ -0,0 +1,279 @@
+{
+  "add_bos_token": false,
+  "add_prefix_space": false,
+  "added_tokens_decoder": {
+    "151643": {
+      "content": "<|endoftext|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151644": {
+      "content": "<|im_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151645": {
+      "content": "<|im_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151646": {
+      "content": "<|object_ref_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151647": {
+      "content": "<|object_ref_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151648": {
+      "content": "<|box_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151649": {
+      "content": "<|box_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151650": {
+      "content": "<|quad_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151651": {
+      "content": "<|quad_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151652": {
+      "content": "<|vision_start|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151653": {
+      "content": "<|vision_end|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151654": {
+      "content": "<|vision_pad|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151655": {
+      "content": "<|image_pad|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151656": {
+      "content": "<|video_pad|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "151657": {
+      "content": "<tool_call>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151658": {
+      "content": "</tool_call>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151659": {
+      "content": "<|fim_prefix|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151660": {
+      "content": "<|fim_middle|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151661": {
+      "content": "<|fim_suffix|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151662": {
+      "content": "<|fim_pad|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151663": {
+      "content": "<|repo_name|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151664": {
+      "content": "<|file_sep|>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": false
+    },
+    "151665": {

|

| 182 |

+

"content": "<image>",

|

| 183 |

+

"lstrip": false,

|

| 184 |

+

"normalized": false,

|

| 185 |

+

"rstrip": false,

|

| 186 |

+

"single_word": false,

|

| 187 |

+

"special": true

|

| 188 |

+

},

|

| 189 |

+

"151666": {

|

| 190 |

+

"content": "<image_atom>",

|

| 191 |

+

"lstrip": false,

|

| 192 |

+

"normalized": false,

|

| 193 |

+

"rstrip": false,

|

| 194 |

+

"single_word": false,

|

| 195 |

+

"special": true

|

| 196 |

+

},

|

| 197 |

+

"151667": {

|

| 198 |

+

"content": "<img>",

|

| 199 |

+

"lstrip": false,

|

| 200 |

+

"normalized": false,

|

| 201 |

+

"rstrip": false,

|

| 202 |

+

"single_word": false,

|

| 203 |

+

"special": true

|

| 204 |

+

},

|

| 205 |

+

"151668": {

|

| 206 |

+

"content": "<pre>",

|

| 207 |

+

"lstrip": false,

|

| 208 |

+

"normalized": false,

|

| 209 |

+

"rstrip": false,

|

| 210 |

+

"single_word": false,

|

| 211 |

+

"special": true

|

| 212 |

+

},

|

| 213 |

+

"151669": {

|

| 214 |

+

"content": "<col>",

|

| 215 |

+

"lstrip": false,

|

| 216 |

+

"normalized": false,

|

| 217 |

+

"rstrip": false,

|

| 218 |

+

"single_word": false,

|

| 219 |

+

"special": true

|

| 220 |

+

},

|

| 221 |

+

"151670": {

|

| 222 |

+

"content": "<row>",

|

| 223 |

+

"lstrip": false,

|

| 224 |

+

"normalized": false,

|

| 225 |

+

"rstrip": false,

|

| 226 |

+

"single_word": false,

|

| 227 |

+

"special": true

|

| 228 |

+

},

|

| 229 |

+

"151671": {

|

| 230 |

+

"content": "</img>",

|

| 231 |

+

"lstrip": false,

|

| 232 |

+

"normalized": false,

|

| 233 |

+

"rstrip": false,

|

| 234 |

+

"single_word": false,

|

| 235 |

+

"special": true

|

| 236 |

+

},

|

| 237 |

+

"151672": {

|

| 238 |

+

"content": "<image_pad>",

|

| 239 |

+

"lstrip": false,

|

| 240 |

+

"normalized": false,

|

| 241 |

+

"rstrip": false,

|

| 242 |

+

"single_word": false,

|

| 243 |

+

"special": true

|

| 244 |

+

}

|

| 245 |

+

},

|

| 246 |

+

"additional_special_tokens": [

|

| 247 |

+

"<|im_start|>",

|

| 248 |

+

"<|im_end|>",

|

| 249 |

+

"<|object_ref_start|>",

|

| 250 |

+

"<|object_ref_end|>",

|

| 251 |

+

"<|box_start|>",

|

| 252 |

+

"<|box_end|>",

|

| 253 |

+

"<|quad_start|>",

|

| 254 |

+

"<|quad_end|>",

|

| 255 |

+

"<|vision_start|>",

|

| 256 |

+

"<|vision_end|>",

|

| 257 |

+

"<|vision_pad|>",

|

| 258 |

+

"<|image_pad|>",

|

| 259 |

+

"<|video_pad|>",

|

| 260 |

+

"<col>",

|

| 261 |

+

"<image>",

|

| 262 |

+

"<image_atom>",

|

| 263 |

+

"<image_pad>",

|

| 264 |

+

"<img>",

|

| 265 |

+

"<pre>",

|

| 266 |

+

"<row>",

|

| 267 |

+

"</img>"

|

| 268 |

+

],

|

| 269 |

+

"bos_token": null,

|

| 270 |

+

"chat_template": "{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0]['role'] == 'system' %}\n {{- messages[0]['content'] }}\n {%- else %}\n {{- 'You are Qwen, created by Alibaba Cloud. You are a helpful assistant.' }}\n {%- endif %}\n {{- \"\\n\\n# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0]['role'] == 'system' %}\n {{- '<|im_start|>system\\n' + messages[0]['content'] + '<|im_end|>\\n' }}\n {%- else %}\n {{- '<|im_start|>system\\nYou are Qwen, created by Alibaba Cloud. 
You are a helpful assistant.<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- for message in messages %}\n {%- if (message.role == \"user\") or (message.role == \"system\" and not loop.first) or (message.role == \"assistant\" and not message.tool_calls) %}\n {{- '<|im_start|>' + message.role + '\\n' + message.content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \"assistant\" %}\n {{- '<|im_start|>' + message.role }}\n {%- if message.content %}\n {{- '\\n' + message.content }}\n {%- endif %}\n {%- for tool_call in message.tool_calls %}\n {%- if tool_call.function is defined %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '\\n<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {{- tool_call.arguments | tojson }}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if (loop.index0 == 0) or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- message.content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n",

|

| 271 |

+

"clean_up_tokenization_spaces": false,

|

| 272 |

+

"eos_token": "<|im_end|>",

|

| 273 |

+

"errors": "replace",

|

| 274 |

+

"model_max_length": 131072,

|

| 275 |

+

"pad_token": "<|endoftext|>",

|

| 276 |

+

"split_special_tokens": false,

|

| 277 |

+

"tokenizer_class": "Qwen2Tokenizer",

|

| 278 |

+

"unk_token": null

|

| 279 |

+

}

|
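The `chat_template` value in `tokenizer_config.json` is a Jinja template that wraps each message in `<|im_start|>` and `<|im_end|>` markers and falls back to a default system prompt when the first message is not a system message. As a rough illustration of what its no-tools branch produces, here is a minimal hand-written Python sketch. The function name `render_chatml` and the constant `DEFAULT_SYSTEM` are hypothetical, the sketch uses no template engine, and it deliberately ignores the `tools`, `tool_calls`, and `tool` role paths, so it approximates the template rather than replacing it.

```python
# Hand-written approximation of the template's no-tools branch.
# Assumption: every message is a dict with string "role" and "content" keys,
# and no message carries tool calls or uses the "tool" role.

DEFAULT_SYSTEM = "You are Qwen, created by Alibaba Cloud. You are a helpful assistant."


def render_chatml(messages, add_generation_prompt=False):
    """Return the prompt string for plain system/user/assistant messages."""
    parts = []

    # The template emits exactly one leading system block: the caller's own
    # system message if it comes first, otherwise the default prompt.
    if messages and messages[0]["role"] == "system":
        parts.append("<|im_start|>system\n" + messages[0]["content"] + "<|im_end|>\n")
        rest = messages[1:]
    else:
        parts.append("<|im_start|>system\n" + DEFAULT_SYSTEM + "<|im_end|>\n")
        rest = messages

    # Remaining messages (including any later system messages) share one shape.
    for message in rest:
        parts.append(
            "<|im_start|>" + message["role"] + "\n" + message["content"] + "<|im_end|>\n"
        )

    # Optionally open an assistant turn for the model to complete.
    if add_generation_prompt:
        parts.append("<|im_start|>assistant\n")

    return "".join(parts)


if __name__ == "__main__":
    print(render_chatml([{"role": "user", "content": "Hi"}], add_generation_prompt=True))
```

In practice the template itself would normally be rendered through the tokenizer's own chat-template support; the sketch is only meant to make the resulting prompt layout easier to see.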

vocab.json

ADDED

|

The diff for this file is too large to render.

|
