---

license: apache-2.0

pipeline_tag: text-generation

library_name: transformers

---

<h2 align="center"> <a href="https://arxiv.org/abs/2405.14297">Dynamic Mixture of Experts: An Auto-Tuning Approach for Efficient Transformer Models</a></h2>

<h5 align="center"> If our project helps you, please give us a star ⭐ on <a href="https://github.com/LINs-lab/DynMoE">GitHub</a> and cite our paper!</h5>

<h5 align="center">

[Hugging Face Paper](https://huggingface.co/papers/2405.14297) · [arXiv](https://arxiv.org/abs/2405.14297)

</h5>

## News

- **[2025.01.23]** 🎉 Our paper has been accepted to ICLR 2025!

- **[2024.05.25]** Our [checkpoints](https://huggingface.co/collections/LINs-lab/dynmoe-family-665ed5a331a7e84463cab01a) are available now!

- **[2024.05.23]** Our [paper](https://arxiv.org/abs/2405.14297) is released!

## Why Do We Need DynMoE?

Sparse MoE (SMoE) has an unavoidable drawback: *its performance relies heavily on the choice of hyper-parameters, such as the number of experts activated per token (top-k) and the total number of experts.*

Moreover, *identifying the optimal hyper-parameters without extensive ablation studies is challenging.* As models continue to grow, this limitation can waste significant computational resources and, in turn, hinder the efficiency of training MoE-based models in practice.

**DynMoE** addresses these challenges through the two components introduced in [Dynamic Mixture of Experts (DynMoE)](#dynamic-mixture-of-experts-dynmoe).

## Dynamic Mixture of Experts (DynMoE)

### Top-Any Gating

We first introduce a novel gating method that enables each token to **automatically determine the number of experts to activate**.
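
A minimal, illustrative sketch of threshold-based ("top-any") gating in plain Python. It assumes that each expert has a gating key vector and its own threshold, and that a token's score for an expert is the sigmoid of the cosine similarity between the token and that key. These modeling choices, the function names, and the top-1 fallback for tokens that clear no threshold are assumptions made for illustration, not the DynMoE implementation in this repository.

```python
import math
from typing import List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two vectors (small epsilon avoids division by zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-12)


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def top_any_gate(
    token: Sequence[float],
    expert_keys: Sequence[Sequence[float]],
    thresholds: Sequence[float],
) -> Tuple[List[int], List[float], bool]:
    """Activate every expert whose gate score exceeds that expert's own threshold.

    Returns (activated expert indices, all gate scores, whether the fallback fired).
    """
    # Each expert is scored independently in (0, 1): no softmax and no fixed k.
    scores = [sigmoid(cosine(token, key)) for key in expert_keys]
    active = [i for i, (s, t) in enumerate(zip(scores, thresholds)) if s > t]
    fallback = not active
    if fallback:
        # Assumption: send a token that clears no threshold to its best-scoring expert.
        active = [max(range(len(scores)), key=lambda i: scores[i])]
    return active, scores, fallback


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical expert gating keys
    thresholds = [0.6, 0.6, 0.6]                  # hypothetical per-expert thresholds
    for tok in ([1.0, 0.0], [-1.0, -1.0]):
        active, _, fallback = top_any_gate(tok, keys, thresholds)
        print(tok, "->", active, "(fallback)" if fallback else "")
```

Because every expert is scored independently rather than through a softmax over a fixed top-k, the number of activated experts varies from token to token: here the first token activates two experts, while the second clears no threshold and is handled by the fallback.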

### Adaptive Training Process

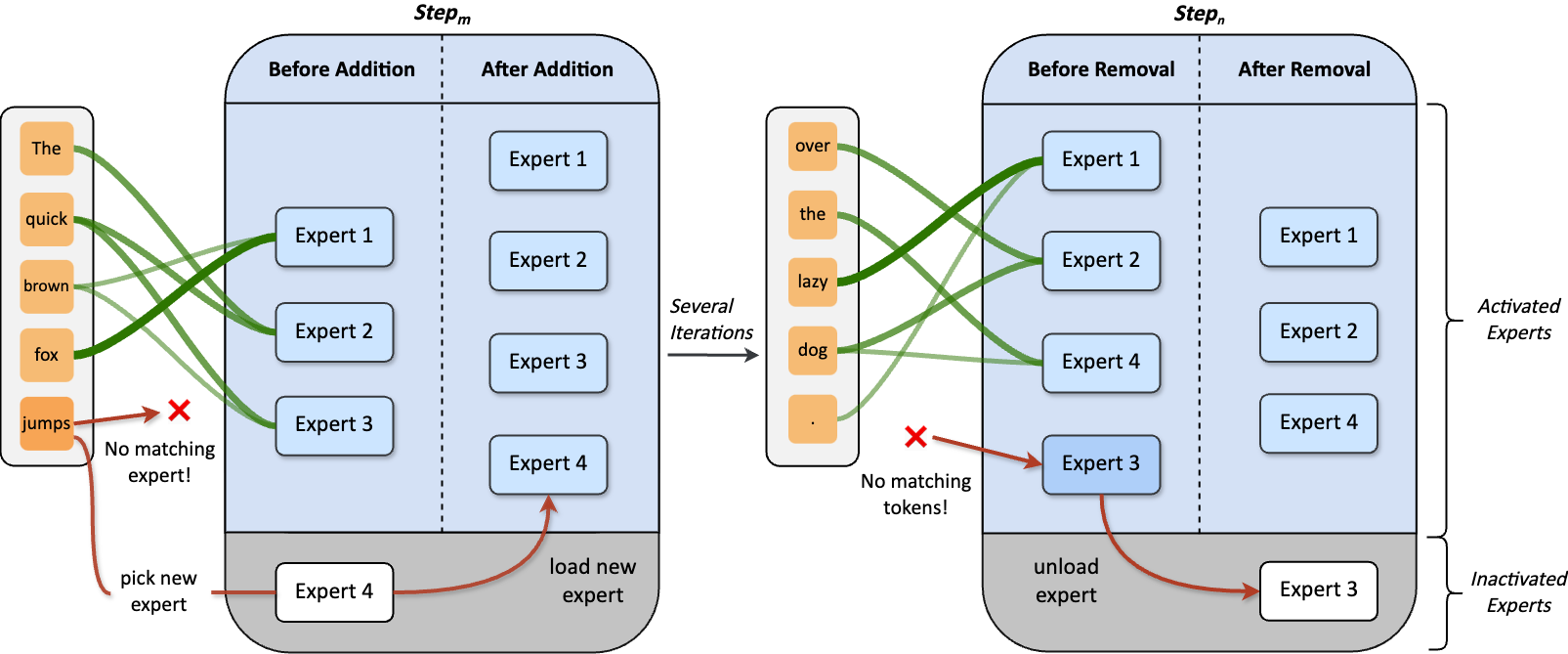

Our method also includes an adaptive process that **automatically adjusts the number of experts** during training.
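
The sketch below illustrates one plausible form of such an adaptive process. It assumes that experts which no token activated during a recording interval are removed, and that tokens which activated no expert seed one new expert whose gating key is the mean of those tokens. The `ExpertPool` class and its methods are hypothetical bookkeeping written for illustration; a real layer would also add or drop each expert's feed-forward weights and threshold, which are omitted here.

```python
from typing import List, Sequence


class ExpertPool:
    """Hypothetical routing bookkeeping for an adaptive expert pool (not the DynMoE API)."""

    def __init__(self, expert_keys: List[List[float]]):
        self.expert_keys = [list(k) for k in expert_keys]
        self._reset_records()

    def _reset_records(self) -> None:
        self.num_tokens = 0
        self.activation_counts = [0] * len(self.expert_keys)
        self.orphan_tokens: List[List[float]] = []  # tokens that activated no expert

    def record(self, token: Sequence[float], activated: Sequence[int]) -> None:
        """Log one routing decision: which experts a token activated (possibly none)."""
        self.num_tokens += 1
        if not activated:
            self.orphan_tokens.append(list(token))
        for i in activated:
            self.activation_counts[i] += 1

    def adapt(self) -> None:
        """Drop never-activated experts, then add one expert seeded from orphan tokens."""
        if self.num_tokens == 0:
            return  # nothing recorded in this interval, so keep the pool unchanged
        self.expert_keys = [
            key for key, count in zip(self.expert_keys, self.activation_counts) if count > 0
        ]
        if self.orphan_tokens:
            n, dim = len(self.orphan_tokens), len(self.orphan_tokens[0])
            mean = [sum(t[d] for t in self.orphan_tokens) / n for d in range(dim)]
            self.expert_keys.append(mean)
        self._reset_records()


if __name__ == "__main__":
    pool = ExpertPool([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
    pool.record([1.0, 0.0], [0, 2])
    pool.record([0.9, 0.1], [0])
    pool.record([-1.0, -1.0], [])  # no expert activated
    pool.record([-1.0, -0.5], [])  # no expert activated
    pool.adapt()
    print(pool.expert_keys)
```

In this example, expert 1 is never activated and is dropped, while the two unrouted tokens seed a new expert whose key is their mean, `[-1.0, -0.75]`.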

## Can We Trust DynMoE? Yes!

- On language tasks, **DynMoE surpasses the average performance of various MoE settings.**

- **The effectiveness of DynMoE remains consistent** across vision and vision-language tasks.

- Although sparsity is not enforced in DynMoE, it **maintains efficiency by activating even fewer parameters!**

## Model Zoo

| Model | Activated Params / Total Params | Transformers (HF) |
| ----- | ------------------------------- | ----------------- |
| DynMoE-StableLM-1.6B | 1.8B / 2.9B | [LINs-lab/DynMoE-StableLM-1.6B](https://huggingface.co/LINs-lab/DynMoE-StableLM-1.6B) |
| DynMoE-Qwen-1.8B | 2.2B / 3.1B | [LINs-lab/DynMoE-Qwen-1.8B](https://huggingface.co/LINs-lab/DynMoE-Qwen-1.8B) |
| DynMoE-Phi-2-2.7B | 3.4B / 5.3B | [LINs-lab/DynMoE-Phi-2-2.7B](https://huggingface.co/LINs-lab/DynMoE-Phi-2-2.7B) |
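
As a quick reading of the Model Zoo table, the share of parameters each model activates is simply activated / total. The snippet below computes it from the figures listed in the table; it is plain arithmetic on the reported numbers, not a new measurement, and the `MODEL_ZOO` dictionary and helper name are illustrative.

```python
# Activated / total parameter counts (in billions), copied from the Model Zoo table.
MODEL_ZOO = {
    "DynMoE-StableLM-1.6B": (1.8, 2.9),
    "DynMoE-Qwen-1.8B": (2.2, 3.1),
    "DynMoE-Phi-2-2.7B": (3.4, 5.3),
}


def activated_fraction(activated_b: float, total_b: float) -> float:
    """Fraction of all parameters that are activated, as reported in the table."""
    return activated_b / total_b


if __name__ == "__main__":
    for name, (act, total) in MODEL_ZOO.items():
        print(f"{name}: {activated_fraction(act, total):.1%} of parameters activated")
```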

## Directory Specification

### Experiment Code

- `EMoE/` contains experiments on language and vision tasks, which use the Tutel-based DynMoE.

- `MoE-LLaVA/` contains experiments on vision-language tasks, which use the DynMoE implementation based on DeepSpeed 0.9.5.

### DynMoE Implementations

- `Deepspeed/` provides the DeepSpeed-based DynMoE implementation. **(Recommended)**

- `EMoE/tutel/` provides the Tutel-based DynMoE implementation.

## Environment Setup

Please refer to instructions under `EMoE/` and `MoE-LLaVA/`.

## Usage

### Tutel Examples

Please refer to `EMoE/Language/README.md` and `EMoE/Language/Vision.md`.

### DeepSpeed Examples (Recommended)

We provide a minimal example of training DynMoE-ViT from scratch on ImageNet-1K in `Examples/DeepSpeed-MoE`.

- See `Examples/DeepSpeed-MoE/dynmoe_vit.py` for how to use DynMoE in a model implementation.

- See `Examples/DeepSpeed-MoE/train.py` for how to train a model with DynMoE.

## Acknowledgement

We are grateful for the following awesome projects:

- [tutel](https://github.com/microsoft/tutel)

- [DeepSpeed](https://github.com/microsoft/DeepSpeed)

- [GMoE](https://github.com/Luodian/Generalizable-Mixture-of-Experts)

- [EMoE](https://github.com/qiuzh20/EMoE)

- [MoE-LLaVA](https://github.com/PKU-YuanGroup/MoE-LLaVA)

- [GLUE-X](https://github.com/YangLinyi/GLUE-X)

## Citation

If you find this project helpful, please consider citing our work:

```bibtex
@article{guo2024dynamic,
  title={Dynamic Mixture of Experts: An Auto-Tuning Approach for Efficient Transformer Models},
  author={Guo, Yongxin and Cheng, Zhenglin and Tang, Xiaoying and Lin, Tao},
  journal={arXiv preprint arXiv:2405.14297},
  year={2024}
}
```

## Star History

[Star History Chart](https://star-history.com/#LINs-lab/DynMoE&Date)

Code: https://github.com/LINs-lab/DynMoE