Image-to-Video

Diffusers

text-to-video

video-to-video

image-text-to-video

audio-to-video

text-to-audio

video-to-audio

audio-to-audio

text-to-audio-video

image-to-audio-video

image-text-to-audio-video

ltx-2

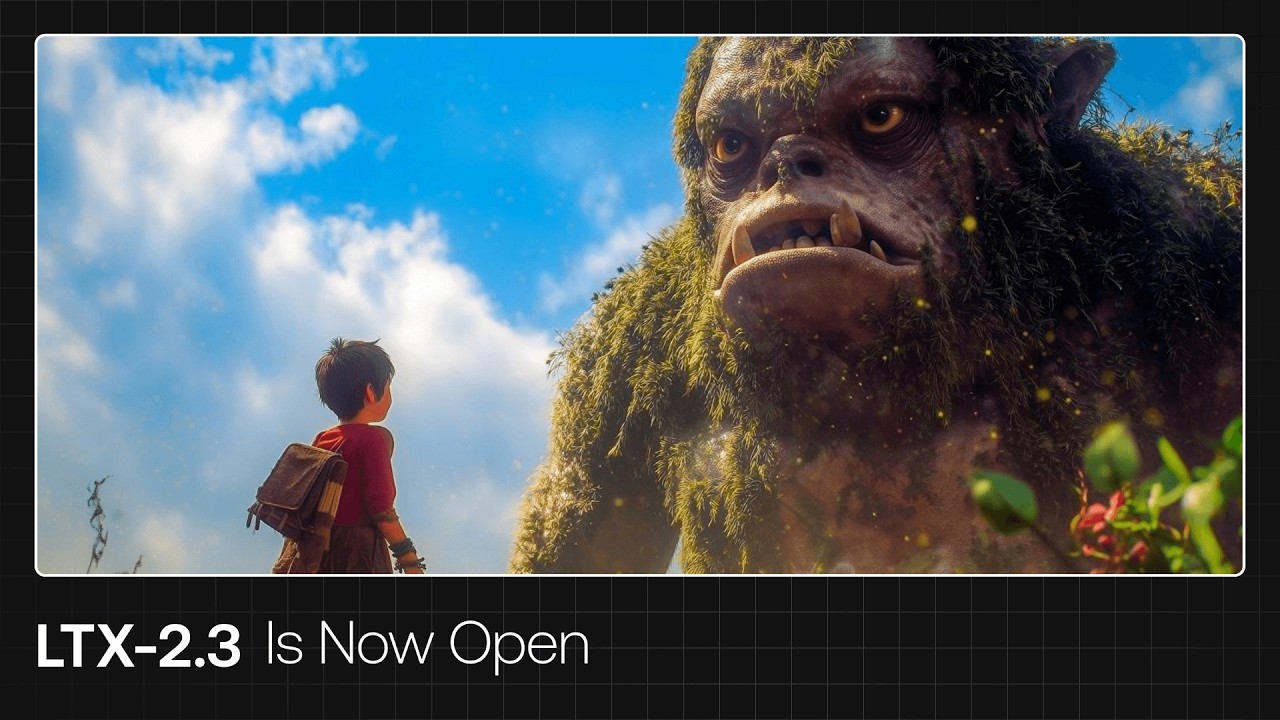

ltx-2-3

ltx-video

ltxv

lightricks

Instructions to use Lightricks/LTX-2.3-fp8 with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Diffusers

How to use Lightricks/LTX-2.3-fp8 with Diffusers:

pip install -U diffusers transformers accelerate

import torch from diffusers import DiffusionPipeline from diffusers.utils import load_image, export_to_video # switch to "mps" for apple devices pipe = DiffusionPipeline.from_pretrained("Lightricks/LTX-2.3-fp8", dtype=torch.bfloat16, device_map="cuda") pipe.to("cuda") prompt = "A man with short gray hair plays a red electric guitar." image = load_image( "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/guitar-man.png" ) output = pipe(image=image, prompt=prompt).frames[0] export_to_video(output, "output.mp4") - Notebooks

- Google Colab

- Kaggle

Docs: update readme.

Browse files

README.md

CHANGED

|

@@ -38,7 +38,7 @@ demo: https://app.ltx.studio/ltx-2-playground/i2v

|

|

| 38 |

|

| 39 |

# LTX-2.3 FP8 Model Card

|

| 40 |

|

| 41 |

-

This is the FP8 versions of the LTX-2.3 model. All information below is derived from the base model.

|

| 42 |

|

| 43 |

This model card focuses on the LTX-2.3 model, which is a significant update to the [LTX-2 model](https://huggingface.co/Lightricks/LTX-2) with improved audio and visual quality as well as enhanced prompt adherence.

|

| 44 |

LTX-2 was presented in the paper [LTX-2: Efficient Joint Audio-Visual Foundation Model](https://huggingface.co/papers/2601.03233).

|

|

@@ -47,7 +47,7 @@ LTX-2 was presented in the paper [LTX-2: Efficient Joint Audio-Visual Foundation

|

|

| 47 |

|

| 48 |

LTX-2.3 is a DiT-based audio-video foundation model designed to generate synchronized video and audio within a single model. It brings together the core building blocks of modern video generation, with open weights and a focus on practical, local execution.

|

| 49 |

|

| 50 |

-

[.

|

| 118 |

-

|

| 119 |

-

Training for motion, style or likeness (sound+appearance) can take less than an hour in many settings.

|

| 120 |

|

| 121 |

## Citation

|

| 122 |

|

|

|

|

| 38 |

|

| 39 |

# LTX-2.3 FP8 Model Card

|

| 40 |

|

| 41 |

+

**This is the FP8 versions of the LTX-2.3 model. All information below is derived from the base model.**

|

| 42 |

|

| 43 |

This model card focuses on the LTX-2.3 model, which is a significant update to the [LTX-2 model](https://huggingface.co/Lightricks/LTX-2) with improved audio and visual quality as well as enhanced prompt adherence.

|

| 44 |

LTX-2 was presented in the paper [LTX-2: Efficient Joint Audio-Visual Foundation Model](https://huggingface.co/papers/2601.03233).

|

|

|

|

| 47 |

|

| 48 |

LTX-2.3 is a DiT-based audio-video foundation model designed to generate synchronized video and audio within a single model. It brings together the core building blocks of modern video generation, with open weights and a focus on practical, local execution.

|

| 49 |

|

| 50 |

+

[](https://youtu.be/o-7us-BR_gQ)

|

| 51 |

|

| 52 |

# Model Checkpoints

|

| 53 |

|

|

|

|

| 112 |

|

| 113 |

# Train the model

|

| 114 |

|

| 115 |

+

Currently it is recommended to train the bf16 model. Recipes for training the fp8 model are welcome as community contributions.

|

|

|

|

|

|

|

|

|

|

|

|

|

| 116 |

|

| 117 |

## Citation

|

| 118 |

|