Text Ranking

Transformers

Safetensors

sentence-transformers

Arabic

bert

text-classification

reranking

text-embeddings-inference

Instructions to use NAMAA-Space/GATE-Reranker-V1 with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Transformers

How to use NAMAA-Space/GATE-Reranker-V1 with Transformers:

```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("NAMAA-Space/GATE-Reranker-V1")
model = AutoModelForSequenceClassification.from_pretrained("NAMAA-Space/GATE-Reranker-V1")
```

- sentence-transformers

How to use NAMAA-Space/GATE-Reranker-V1 with sentence-transformers:

```python
from sentence_transformers import CrossEncoder

model = CrossEncoder("NAMAA-Space/GATE-Reranker-V1")

query = "Which planet is known as the Red Planet?"
passages = [
    "Venus is often called Earth's twin because of its similar size and proximity.",
    "Mars, known for its reddish appearance, is often referred to as the Red Planet.",
    "Jupiter, the largest planet in our solar system, has a prominent red spot.",
    "Saturn, famous for its rings, is sometimes mistaken for the Red Planet."
]

scores = model.predict([(query, passage) for passage in passages])
print(scores)
```

- Notebooks

- Google Colab

- Kaggle
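The sentence-transformers snippet above prints one raw score per (query, passage) pair. A minimal sketch of turning such scores into a ranking follows. The helper name `rank_passages` and the numeric scores are hypothetical, made up for illustration and not produced by the model, and the sketch assumes that a higher score means a more relevant passage.

```python
from typing import List, Sequence, Tuple


def rank_passages(passages: Sequence[str], scores: Sequence[float]) -> List[Tuple[str, float]]:
    """Pair each passage with its score and sort from highest to lowest score.

    Assumption: a higher score means the passage is more relevant to the query.
    """
    if len(passages) != len(scores):
        raise ValueError("passages and scores must have the same length")
    pairs = zip(passages, (float(s) for s in scores))
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


# Hypothetical scores standing in for the output of model.predict(...);
# these numbers are illustrative only, not real model outputs.
example_passages = [
    "Venus is often called Earth's twin.",
    "Mars is often referred to as the Red Planet.",
    "Jupiter has a prominent red spot.",
]
example_scores = [0.10, 0.90, 0.30]

ranked = rank_passages(example_passages, example_scores)
for position, (passage, score) in enumerate(ranked, start=1):
    print(f"{position}. {score:.2f}  {passage}")
```

In practice the `scores` array returned by `model.predict` would be passed in place of `example_scores`; the explicit `float` conversion is there so NumPy scalars sort and print like plain numbers.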

Update README.md

README.md

CHANGED

|

@@ -49,11 +49,12 @@ scores = model.predict([(Query, Paragraph1), (Query, Paragraph2)])

|

|

| 49 |

|

| 50 |

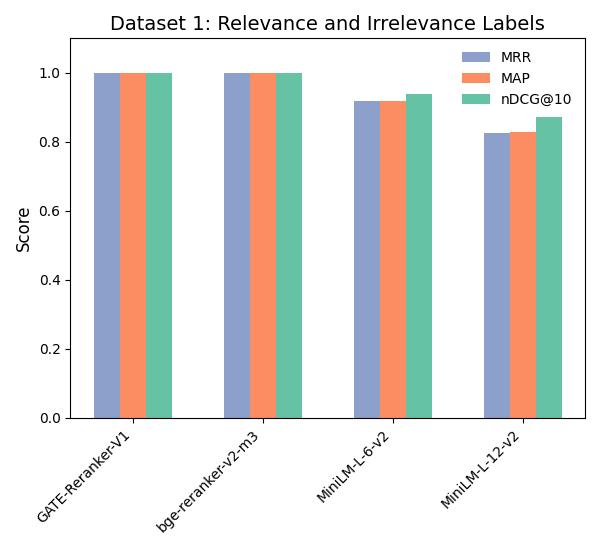

We evaluate our model on two different datasets using the metrics **MAP**, **MRR** and **NDCG@10**:

|

| 51 |

|

| 52 |

-

The purpose of this evaluation is to highlight the performance of our model with regards to: Relevant/Irrelevant labels and

|

| 53 |

|

| 54 |

-

|

| 55 |

-

2 - Dataset 2: [NAMAA-Space/Ar-Reranking-Eval](https://huggingface.co/datasets/NAMAA-Space/Ar-Reranking-Eval)

|

| 56 |

|

| 57 |

-

|

| 58 |

|

| 59 |

-

|

|

|

|

|

|

|

|

|

| 49 |

|

| 50 |

We evaluate our model on two different datasets using the metrics **MAP**, **MRR** and **NDCG@10**:

|

| 51 |

|

| 52 |

+

The purpose of this evaluation is to highlight the performance of our model with regards to: Relevant/Irrelevant labels and positive/multiple negatives documents:

|

| 53 |

|

| 54 |

+

Dataset 1: [NAMAA-Space/Ar-Reranking-Eval](https://huggingface.co/datasets/NAMAA-Space/Ar-Reranking-Eval)

|

|

|

|

| 55 |

|

| 56 |

+

|

| 57 |

|

| 58 |

+

Dataset 2: [NAMAA-Space/Arabic-Reranking-Triplet-5-Eval](https://huggingface.co/datasets/NAMAA-Space/Arabic-Reranking-Triplet-5-Eval)

|

| 59 |

+

|

| 60 |

+

and compare it to other famous models on the hub

|
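The README change above names **MAP**, **MRR** and **NDCG@10** as the evaluation metrics but does not show how they are computed. The sketch below gives one common formulation for binary relevant/irrelevant labels. It is not the authors' evaluation script: the function names are hypothetical, and it assumes each query comes with a list of 0/1 labels already ordered by the reranker's scores, with every relevant document present in that list.

```python
import math
from typing import Dict, List, Sequence


def average_precision(relevance: Sequence[int]) -> float:
    """Average precision for one query; `relevance` holds 0/1 labels in ranked order."""
    hits = 0
    precision_sum = 0.0
    for rank, rel in enumerate(relevance, start=1):
        if rel:
            hits += 1
            precision_sum += hits / rank  # precision at this relevant position
    return precision_sum / hits if hits else 0.0


def reciprocal_rank(relevance: Sequence[int]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none is relevant."""
    for rank, rel in enumerate(relevance, start=1):
        if rel:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(relevance: Sequence[int], k: int = 10) -> float:
    """NDCG@k with the log2(rank + 1) discount."""
    def dcg(labels: Sequence[int]) -> float:
        return sum(rel / math.log2(rank + 1)
                   for rank, rel in enumerate(labels[:k], start=1))

    ideal = dcg(sorted(relevance, reverse=True))  # best possible ordering
    return dcg(relevance) / ideal if ideal else 0.0


def evaluate(ranked_labels: List[Sequence[int]]) -> Dict[str, float]:
    """Average the per-query metrics over all queries."""
    n = len(ranked_labels)
    return {
        "MAP": sum(average_precision(r) for r in ranked_labels) / n,
        "MRR": sum(reciprocal_rank(r) for r in ranked_labels) / n,
        "NDCG@10": sum(ndcg_at_k(r, k=10) for r in ranked_labels) / n,
    }


# Two toy queries with made-up labels (1 = relevant), listed in ranked order.
toy_results = evaluate([[1, 0], [0, 1, 0, 1]])
print(toy_results)
```

For a dataset with one positive and several negatives per query, MAP and MRR coincide under this formulation, since the average precision of a single relevant document equals the reciprocal of its rank.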