---
language:
- zh
- en
- de
- es
- fr
- ja
- it
- he
- ko
- ru
- fa
- ar
- pl
- pt
- cs
- da
- sv
- hu
- el
- tr
license: apache-2.0
tags:
- text-to-speech
library_name: transformers
pipeline_tag: text-to-speech
arxiv: 2602.10934
---

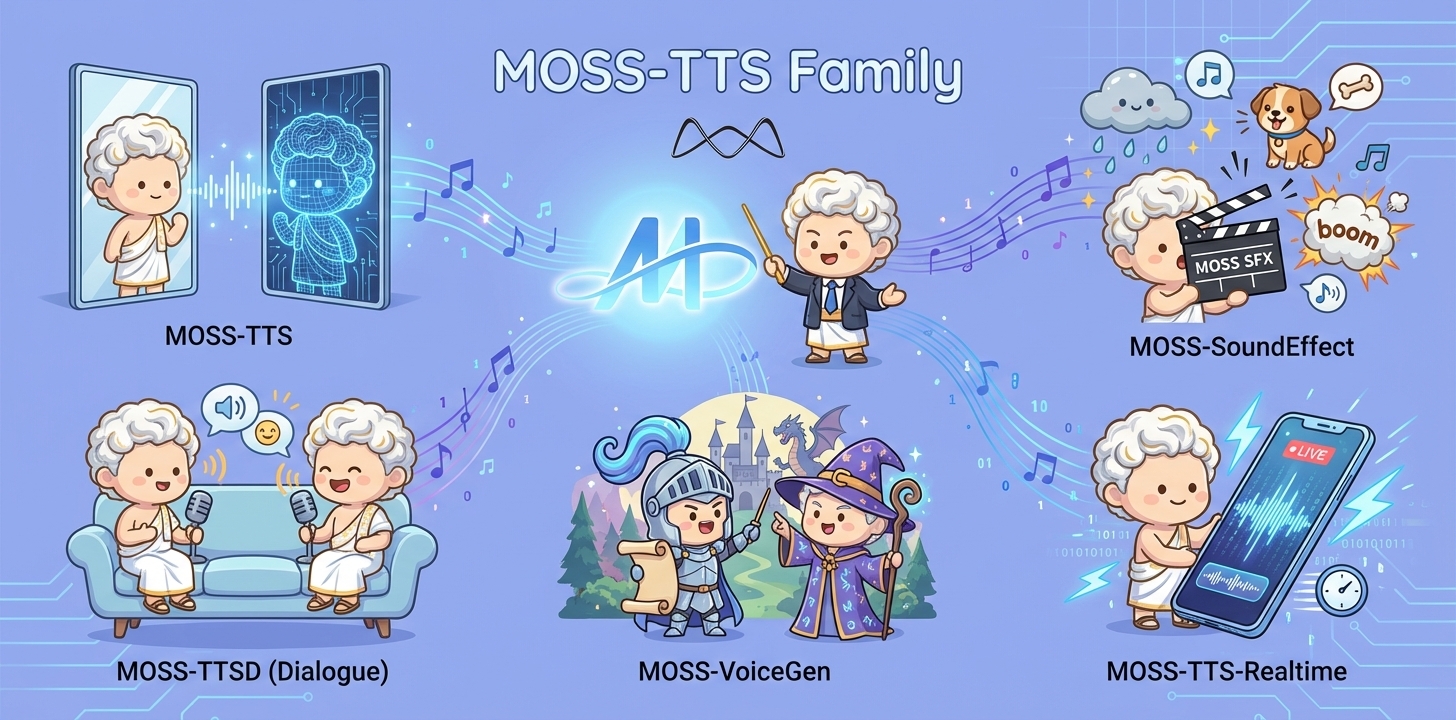

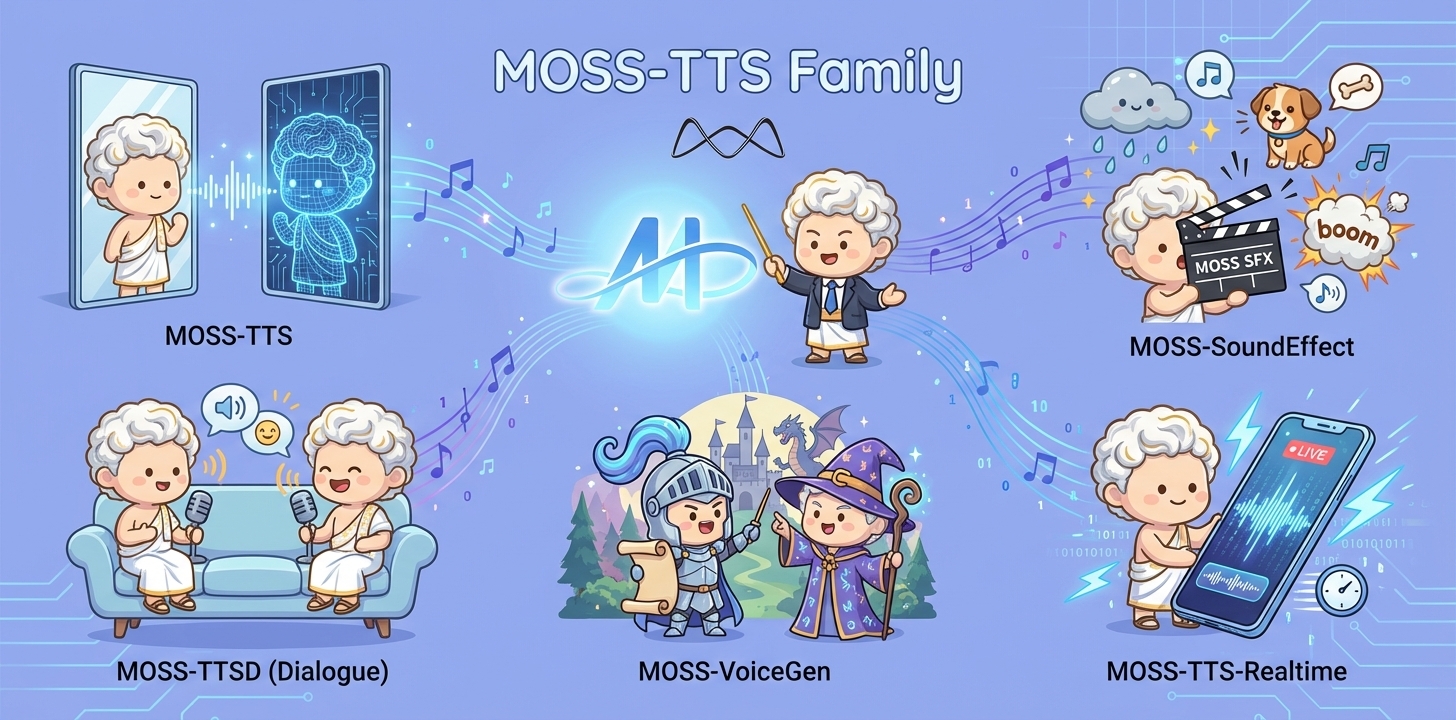

# MOSS-TTS Family

This repository contains the **MOSS-TTS Family**, a series of next-generation speech and sound generation models introduced in the paper [MOSS-Audio-Tokenizer: Scaling Audio Tokenizers for Future Audio Foundation Models](https://huggingface.co/papers/2602.10934).

## Overview

MOSS-TTS Family is an open-source **speech and sound generation model family** from [MOSI.AI](https://mosi.cn/#hero) and the [OpenMOSS team](https://www.open-moss.com/). It is built for **high fidelity**, **high expressiveness**, and **complex real-world scenarios**, covering stable long-form speech, multi-speaker dialogue, voice/character design, environmental sound effects, and real-time streaming TTS.

## Introduction

When a single piece of audio needs to **sound like a real person**, **pronounce every word accurately**, **switch speaking styles across content**, **remain stable over tens of minutes**, and **support dialogue, role‑play, and real‑time interaction**, a single TTS model is often not enough. The **MOSS‑TTS Family** breaks the workflow into five production‑ready models that can be used independently or composed into a complete pipeline.

- **MOSS-TTS**: the flagship production TTS foundation model, centered on high-fidelity zero-shot voice cloning with controllable long-form synthesis, pronunciation, and multilingual/code-switched speech. It serves as the core engine for scalable narration, dubbing, and voice-driven products.

- **MOSS-TTSD**: a production long-form dialogue model for expressive multi-speaker conversational audio at scale. It supports long-duration continuity, turn-taking control, and zero-shot voice cloning from short references for podcasts, audiobooks, commentary, dubbing, and entertainment dialogue.

- **MOSS-VoiceGenerator**: an open-source voice design model that creates speaker timbres directly from free-form text, without reference audio. It unifies timbre design, style control, and content synthesis, and can be used standalone or as a voice-design layer for downstream TTS.

- **MOSS-SoundEffect**: a high-fidelity text-to-sound model with broad category coverage and controllable duration for real content production. It generates stable audio from prompts spanning ambience, urban scenes, creatures, human actions, and music-like clips for film, games, interactive media, and data synthesis.

- **MOSS-TTS-Realtime**: a context-aware, multi-turn streaming TTS model for real-time voice agents. By conditioning on the dialogue history, covering both the text and the acoustics of the user's prior turns, it delivers low-latency synthesis with voice responses that stay coherent and consistent across turns.

## Released Models

| Model | Architecture | Size | Model Card | Hugging Face |
|---|---|---:|---|---|
| **MOSS-TTS** | MossTTSDelay | 8B | [moss_tts_model_card.md](https://github.com/OpenMOSS/MOSS-TTS/blob/main/docs/moss_tts_model_card.md) | 🤗 [Hugging Face](https://huggingface.co/OpenMOSS-Team/MOSS-TTS) |
| | MossTTSLocal | 1.7B | [moss_tts_model_card.md](https://github.com/OpenMOSS/MOSS-TTS/blob/main/docs/moss_tts_model_card.md) | 🤗 [Hugging Face](https://huggingface.co/OpenMOSS-Team/MOSS-TTS-Local-Transformer) |
| **MOSS-TTSD-v1.0** | MossTTSDelay | 8B | [moss_ttsd_model_card.md](https://github.com/OpenMOSS/MOSS-TTS/blob/main/docs/moss_ttsd_model_card.md) | 🤗 [Hugging Face](https://huggingface.co/OpenMOSS-Team/MOSS-TTSD-v1.0) |
| **MOSS-VoiceGenerator** | MossTTSDelay | 1.7B | [moss_voice_generator_model_card.md](https://github.com/OpenMOSS/MOSS-TTS/blob/main/docs/moss_voice_generator_model_card.md) | 🤗 [Hugging Face](https://huggingface.co/OpenMOSS-Team/MOSS-Voice-Generator) |
| **MOSS-SoundEffect** | MossTTSDelay | 8B | [moss_sound_effect_model_card.md](https://github.com/OpenMOSS/MOSS-TTS/blob/main/docs/moss_sound_effect_model_card.md) | 🤗 [Hugging Face](https://huggingface.co/OpenMOSS-Team/MOSS-SoundEffect) |
| **MOSS-TTS-Realtime** | MossTTSRealtime | 1.7B | [moss_tts_realtime_model_card.md](https://github.com/OpenMOSS/MOSS-TTS/blob/main/docs/moss_tts_realtime_model_card.md) | 🤗 [Hugging Face](https://huggingface.co/OpenMOSS-Team/MOSS-TTS-Realtime) |

## Supported Languages

MOSS-TTS, MOSS-TTSD, and MOSS-TTS-Realtime currently support **20 languages**: Chinese, English, German, Spanish, French, Japanese, Italian, Hebrew, Korean, Russian, Persian, Arabic, Polish, Portuguese, Czech, Danish, Swedish, Hungarian, Greek, and Turkish.
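For pipelines that route or validate text by language before calling a model, the list above can be kept as a small lookup table. This is a minimal sketch: the ISO 639-1 codes are taken from this card's metadata, and the `check_language` helper is hypothetical, not part of the MOSS-TTS API.

```python
# ISO 639-1 codes from this card's metadata, paired with the language names
# in "Supported Languages" (both lists use the same order).
SUPPORTED_LANGUAGES = {
    "zh": "Chinese", "en": "English", "de": "German", "es": "Spanish",
    "fr": "French", "ja": "Japanese", "it": "Italian", "he": "Hebrew",
    "ko": "Korean", "ru": "Russian", "fa": "Persian", "ar": "Arabic",
    "pl": "Polish", "pt": "Portuguese", "cs": "Czech", "da": "Danish",
    "sv": "Swedish", "hu": "Hungarian", "el": "Greek", "tr": "Turkish",
}


def check_language(code: str) -> str:
    """Hypothetical helper: return the language name for a code, or raise if unsupported."""
    try:
        return SUPPORTED_LANGUAGES[code.lower()]
    except KeyError:
        raise ValueError(f"unsupported language code: {code!r}") from None
```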

# MOSS-TTS

**MOSS-TTS** is a next-generation, production-grade TTS foundation model focused on **voice cloning**, **ultra-long stable speech generation**, **token-level duration control**, **multilingual & code-switched synthesis**, and **fine-grained Pinyin/phoneme-level pronunciation control**. It is built on a clean autoregressive discrete-token recipe that emphasizes high-quality audio tokenization, large-scale diverse pre-training data, and efficient discrete token modeling.

## 1. Overview

### 1.1 TTS Family Positioning

MOSS-TTS is the **flagship base model** in our open-source **TTS Family**. It is designed as a production-ready synthesis backbone that can serve as the primary high-quality engine for scalable voice applications, and as a strong research baseline for controllable TTS and discrete audio token modeling.

**Design goals**

- **Production readiness**: robust voice cloning with stable, on-brand speaker identity at scale

- **Controllability**: duration and pronunciation controls that integrate into real workflows

- **Long-form stability**: consistent identity and delivery for extended narration

- **Multilingual coverage**: multilingual and code-switched synthesis as first-class capabilities

### 1.2 Key Capabilities

MOSS-TTS delivers state-of-the-art quality while providing the fine-grained controllability and long-form stability required for production-grade voice applications, from zero-shot cloning and hour-long narration to token- and phoneme-level control across multilingual and code-switched speech.

* **State-of-the-art evaluation performance**: top-tier objective and subjective results across standard TTS benchmarks and in-house human preference testing, validating both fidelity and naturalness.

* **Zero-shot Voice Cloning**: clones a target speaker’s timbre (and, in part, their speaking style) from short reference audio, without speaker-specific fine-tuning.

* **Ultra-long Speech Generation (up to 1 hour)**: supports continuous long-form speech generation of up to one hour in a single run, designed for extended narration and long-session content creation.

* **Token-level Duration Control**: controls pacing, rhythm, pauses, and speaking rate at token resolution for precise alignment and expressive delivery.

* **Phoneme-level Pronunciation Control**: supports
  * pure **Pinyin** input
  * pure **IPA** phoneme input
  * mixed **Chinese / English / Pinyin / IPA** input in any combination

* **Multilingual support**: high-quality multilingual synthesis with robust generalization across languages and accents.

* **Code-switching**: natural mixed-language generation within a single utterance (e.g., Chinese–English), with smooth transitions, consistent speaker identity, and pronunciation-aware rendering on both sides of the switch.

### 1.3 Model Architecture

MOSS-TTS includes **two complementary architectures**, both trained and released to explore different performance/latency tradeoffs and to support downstream research.

**Architecture A: Delay Pattern (MossTTSDelay)**

- Single Transformer backbone with **(n_vq + 1) heads**, where `n_vq` is the number of audio codebooks.

- Uses **delay scheduling** for multi-codebook audio tokens.

- Strong long-context stability, efficient inference, and production-friendly behavior.
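A delay pattern (the scheme popularized by MusicGen) offsets codebook `k` by `k` frames, so at each decoding step a single backbone pass predicts codebook 0 for the newest frame, codebook 1 for the frame before it, and so on. The sketch below shows that general idea on plain Python lists. The padding id, the exact per-codebook offsets, and the role of the extra head in MossTTSDelay are assumptions, not the released implementation.

```python
from typing import List

PAD = -1  # hypothetical placeholder id for positions with no real token


def apply_delay(codes: List[List[int]], pad: int = PAD) -> List[List[int]]:
    """Shift codebook k right by k steps.

    `codes` is indexed [t][k] with T frames and n_vq codebooks. The result has
    T + n_vq - 1 rows so that the last codebook's final token still fits.
    """
    T, n_vq = len(codes), len(codes[0])
    out = [[pad] * n_vq for _ in range(T + n_vq - 1)]
    for t in range(T):
        for k in range(n_vq):
            out[t + k][k] = codes[t][k]
    return out


def revert_delay(delayed: List[List[int]], n_vq: int) -> List[List[int]]:
    """Undo apply_delay: codebook k of frame t is read back from row t + k."""
    T = len(delayed) - n_vq + 1
    return [[delayed[t + k][k] for k in range(n_vq)] for t in range(T)]


frames = [[10, 20, 30], [11, 21, 31]]  # T=2 frames, n_vq=3 codebooks
delayed = apply_delay(frames)
# delayed == [[10, -1, -1], [11, 20, -1], [-1, 21, 30], [-1, -1, 31]]
assert revert_delay(delayed, n_vq=3) == frames
```

The cost of this layout is `n_vq - 1` extra decoding steps and a de-interleaving pass before the audio can be decoded, which is the "delay scheduling" that Architecture B avoids.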

**Architecture B: Global Latent + Local Transformer (MossTTSLocal)**

- Backbone produces a **global latent** per time step.

- A lightweight **Local Transformer** emits a token block per step.

- **Streaming-friendly** with simpler alignment (no delay scheduling).
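The two-level decoding loop described above can be sketched as follows: the backbone runs once per time step to produce a global latent, and a local model then emits that step's codebook tokens one at a time, so every frame comes out already aligned. `backbone` and `local_step` are hypothetical stand-ins rather than MossTTSLocal's actual modules, and real decoding would sample each token from logits instead of returning an integer directly.

```python
from typing import Callable, List, Sequence

# Hypothetical callables standing in for real networks:
#   backbone(history) -> global latent for the next time step
#   local_step(latent, prefix) -> next codebook token, given the tokens
#                                 already emitted for this step
Backbone = Callable[[Sequence[Sequence[int]]], object]
LocalStep = Callable[[object, Sequence[int]], int]


def decode_local(backbone: Backbone, local_step: LocalStep,
                 n_steps: int, n_vq: int) -> List[List[int]]:
    """One backbone call per frame, then n_vq local calls to fill the frame."""
    frames: List[List[int]] = []
    for _ in range(n_steps):
        latent = backbone(frames)          # global latent for this time step
        block: List[int] = []
        for _ in range(n_vq):              # local transformer fills the block
            block.append(local_step(latent, block))
        frames.append(block)               # frame is complete: nothing to de-delay
    return frames


# Toy stand-ins: the latent is the frame index; tokens encode (frame, codebook).
toy_backbone = lambda history: len(history)
toy_local = lambda latent, prefix: 100 * latent + len(prefix)
print(decode_local(toy_backbone, toy_local, n_steps=2, n_vq=3))
# [[0, 1, 2], [100, 101, 102]]
```

Because each frame is finished before the next backbone step, a streaming consumer can hand frames to the audio decoder as soon as they are produced.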

For full details, see:

- **[moss_tts_delay/README.md](https://github.com/OpenMOSS/MOSS-TTS/blob/main/moss_tts_delay/README.md)**

- **[moss_tts_local/README.md](https://github.com/OpenMOSS/MOSS-TTS/tree/main/moss_tts_local)**

### 1.4 Released Models

| Model | Description |
|---|---|
| **MossTTSDelay-8B** | **Recommended for production**. Faster inference, stronger long-context stability, and robust voice cloning quality. Best for large-scale deployment and long-form narration. |
| **MossTTSLocal-1.7B** | **Recommended for evaluation and research**. Smaller model size with SOTA objective metrics. Great for quick experiments, ablations, and academic studies. |

**Recommended decoding hyperparameters (per model)**

| Model | audio_temperature | audio_top_p | audio_top_k | audio_repetition_penalty |
|---|---:|---:|---:|---:|
| **MossTTSDelay-8B** | 1.7 | 0.8 | 25 | 1.0 |
| **MossTTSLocal-1.7B** | 1.0 | 0.95 | 50 | 1.1 |
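The four columns correspond to standard sampling controls. As a rough guide to what each one does, here is a pure-Python sketch of a single sampling step that applies a CTRL-style repetition penalty, then temperature, top-k, and top-p (nucleus) filtering. The filter order and penalty formula are assumptions borrowed from common LLM samplers, not a description of MOSS-TTS's own `generate` code; note that a penalty of 1.0 leaves the logits unchanged.

```python
import math
import random
from typing import Dict, Optional, Sequence

# Values copied from the "Recommended decoding hyperparameters" table above.
RECOMMENDED: Dict[str, Dict[str, float]] = {
    "MossTTSDelay-8B": dict(audio_temperature=1.7, audio_top_p=0.8,
                            audio_top_k=25, audio_repetition_penalty=1.0),
    "MossTTSLocal-1.7B": dict(audio_temperature=1.0, audio_top_p=0.95,
                              audio_top_k=50, audio_repetition_penalty=1.1),
}


def sample_token(logits: Sequence[float], previous: Sequence[int],
                 temperature: float, top_p: float, top_k: int,
                 repetition_penalty: float,
                 rng: Optional[random.Random] = None) -> int:
    rng = rng or random.Random()
    scores = list(logits)
    # Repetition penalty (CTRL-style): push already-emitted tokens toward lower scores.
    for tok in set(previous):
        s = scores[tok]
        scores[tok] = s / repetition_penalty if s > 0 else s * repetition_penalty
    # Temperature: > 1 flattens the distribution, < 1 sharpens it.
    scores = [s / temperature for s in scores]
    # Top-k: keep only the k highest-scoring candidates, best first.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Softmax over the survivors (subtracting the max for numerical stability).
    m = scores[ranked[0]]
    weights = [math.exp(scores[i] - m) for i in ranked]
    total = sum(weights)
    # Top-p: keep the smallest best-first prefix whose probability mass reaches top_p.
    kept, mass = [], 0.0
    for i, w in zip(ranked, weights):
        kept.append((i, w / total))
        mass += w / total
        if mass >= top_p:
            break
    ids, probs = zip(*kept)
    return rng.choices(ids, weights=probs, k=1)[0]


# Example: one step with the MossTTSDelay-8B settings over a toy 4-token vocabulary.
cfg = RECOMMENDED["MossTTSDelay-8B"]
token = sample_token([2.0, 1.0, 0.5, -1.0], previous=[0],
                     temperature=cfg["audio_temperature"], top_p=cfg["audio_top_p"],
                     top_k=int(cfg["audio_top_k"]),
                     repetition_penalty=cfg["audio_repetition_penalty"],
                     rng=random.Random(0))
```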

## 2. Quick Start

### Environment Setup

We recommend a clean, isolated Python environment with **Transformers** to avoid dependency conflicts.

```bash
conda create -n moss-tts python=3.12 -y
conda activate moss-tts
```

Install all required dependencies:

```bash
git clone https://github.com/OpenMOSS/MOSS-TTS.git
cd MOSS-TTS
pip install --extra-index-url https://download.pytorch.org/whl/cu128 -e .
```
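After installation, a quick check that the core packages resolve in the active environment can save a confusing failure later. This is a generic sketch using only the standard library, plus PyTorch (when it is installed) to report CUDA availability; the default package list is an assumption based on the imports used in Basic Usage.

```python
import importlib.util
import sys


def check_environment(packages=("torch", "torchaudio", "transformers")) -> dict:
    """Report the Python version and whether each package is importable."""
    report = {"python": sys.version.split()[0]}
    for name in packages:
        report[name] = importlib.util.find_spec(name) is not None
    if report.get("torch"):
        import torch  # only imported when it is actually installed
        report["cuda_available"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```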

### Basic Usage

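MOSS-TTS provides a convenient `generate` interface for rapid usage. The example below demonstrates direct text-to-speech generation: it batches conversations through the processor, generates audio, and writes one WAV file per sample. Note that you must set `trust_remote_code=True` when loading both the model and the processor.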

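```python
from pathlib import Path

import torch
import torchaudio
from transformers import AutoModel, AutoProcessor

pretrained_model_name_or_path = "OpenMOSS-Team/MOSS-TTS"
device = "cuda" if torch.cuda.is_available() else "cpu"
# bfloat16 on GPU to save memory; fall back to float32 on CPU.
dtype = torch.bfloat16 if device == "cuda" else torch.float32

# trust_remote_code=True is required: the model and processor code ships with the checkpoint.
processor = AutoProcessor.from_pretrained(
    pretrained_model_name_or_path,
    trust_remote_code=True,
)
# Keep the processor's audio tokenizer on the same device as the model.
processor.audio_tokenizer = processor.audio_tokenizer.to(device)

model = AutoModel.from_pretrained(
    pretrained_model_name_or_path,
    trust_remote_code=True,
    torch_dtype=dtype,
).to(device)
model.eval()

# Chinese sample text. English meaning: "Dear you, hello there. Today I want to tell you
# some important things in my most earnest, gentlest voice. These words are like a tiny
# star; I hope they slowly shine in your heart."
text_1 = """亲爱的你,
你好呀。
今天,我想用最认真、最温柔的声音,对你说一些重要的话。
这些话,像一颗小小的星星,希望能在你的心里慢慢发光。"""

text_2 = """We stand on the threshold of the AI era.
Artificial intelligence is no longer just a concept in laboratories, but is entering every industry, every creative endeavor, and every decision."""

# One conversation per sample, each holding a single user message with the text to speak.
conversations = [
    [processor.build_user_message(text=text_1)],
    [processor.build_user_message(text=text_2)],
]

batch_size = 1
save_dir = Path("outputs")
save_dir.mkdir(parents=True, exist_ok=True)
sample_idx = 0

with torch.no_grad():
    for start in range(0, len(conversations), batch_size):
        batch_conversations = conversations[start : start + batch_size]
        batch = processor(batch_conversations, mode="generation")
        input_ids = batch["input_ids"].to(device)
        attention_mask = batch["attention_mask"].to(device)

        outputs = model.generate(
            input_ids=input_ids,
            attention_mask=attention_mask,
            max_new_tokens=4096,
        )

        # Write each decoded sample to its own file; a single fixed filename
        # would overwrite earlier samples.
        for message in processor.decode(outputs):
            audio = message.audio_codes_list[0]
            out_path = save_dir / f"sample_{sample_idx}.wav"
            sample_idx += 1
            # Move to CPU float32 before saving (a no-op if it already is).
            torchaudio.save(
                str(out_path),
                audio.unsqueeze(0).float().cpu(),
                processor.model_config.sampling_rate,
            )
```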

## 3. Evaluation

MOSS-TTS achieved state-of-the-art results on the open-source zero-shot TTS benchmark Seed-TTS-eval.

| Model | EN WER (%) ↓ | EN SIM (%) ↑ | ZH CER (%) ↓ | ZH SIM (%) ↑ |
|---|---:|---:|---:|---:|
| MossTTSDelay-8B | 1.79 | 71.46 | 1.32 | 77.05 |
| MossTTSLocal-1.7B | 1.85 | **73.42** | 1.2 | **78.82** |
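For readers unfamiliar with the metrics: WER and CER are edit distances between the reference text and an ASR transcript of the generated audio, normalized by the reference length, and SIM is the cosine similarity between speaker embeddings of the generated audio and the reference (prompt) audio. The sketch below shows only that arithmetic. The official Seed-TTS-eval pipeline also fixes the ASR models, the speaker-verification model, and text normalization, none of which are reproduced here.

```python
import math
from typing import Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance: minimum substitutions + deletions + insertions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(prev[j] + 1,              # deletion
                         cur[j - 1] + 1,           # insertion
                         prev[j - 1] + (r != h))   # substitution (free if equal)
        prev = cur
    return prev[-1]


def wer(ref: str, hyp: str) -> float:
    """Word error rate in percent: word-level edits / reference word count."""
    words = ref.split()
    return 100.0 * edit_distance(words, hyp.split()) / len(words)


def cer(ref: str, hyp: str) -> float:
    """Character error rate in percent, ignoring whitespace (suits Chinese text)."""
    ref_chars = [c for c in ref if not c.isspace()]
    hyp_chars = [c for c in hyp if not c.isspace()]
    return 100.0 * edit_distance(ref_chars, hyp_chars) / len(ref_chars)


def cosine_sim(a: Sequence[float], b: Sequence[float]) -> float:
    """Speaker similarity in percent: cosine of two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 100.0 * dot / norm
```

For example, `wer("the cat sat on mat", "the cat sit on mat")` is one substitution over five reference words, or 20.0.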

## Citation

If you use this model in your research, please cite:

```bibtex
@misc{gong2026mossaudiotokenizerscalingaudiotokenizers,
  title={MOSS-Audio-Tokenizer: Scaling Audio Tokenizers for Future Audio Foundation Models},
  author={Yitian Gong and Kuangwei Chen and Zhaoye Fei and Xiaogui Yang and Ke Chen and Yang Wang and Kexin Huang and Mingshu Chen and Ruixiao Li and Qingyuan Cheng and Shimin Li and Xipeng Qiu},
  year={2026},
  eprint={2602.10934},
  archivePrefix={arXiv},
  primaryClass={cs.SD},
  url={https://arxiv.org/abs/2602.10934},
}
```