---

license: bsd-3-clause

pipeline_tag: video-text-to-text

---

# UniPixel-3B

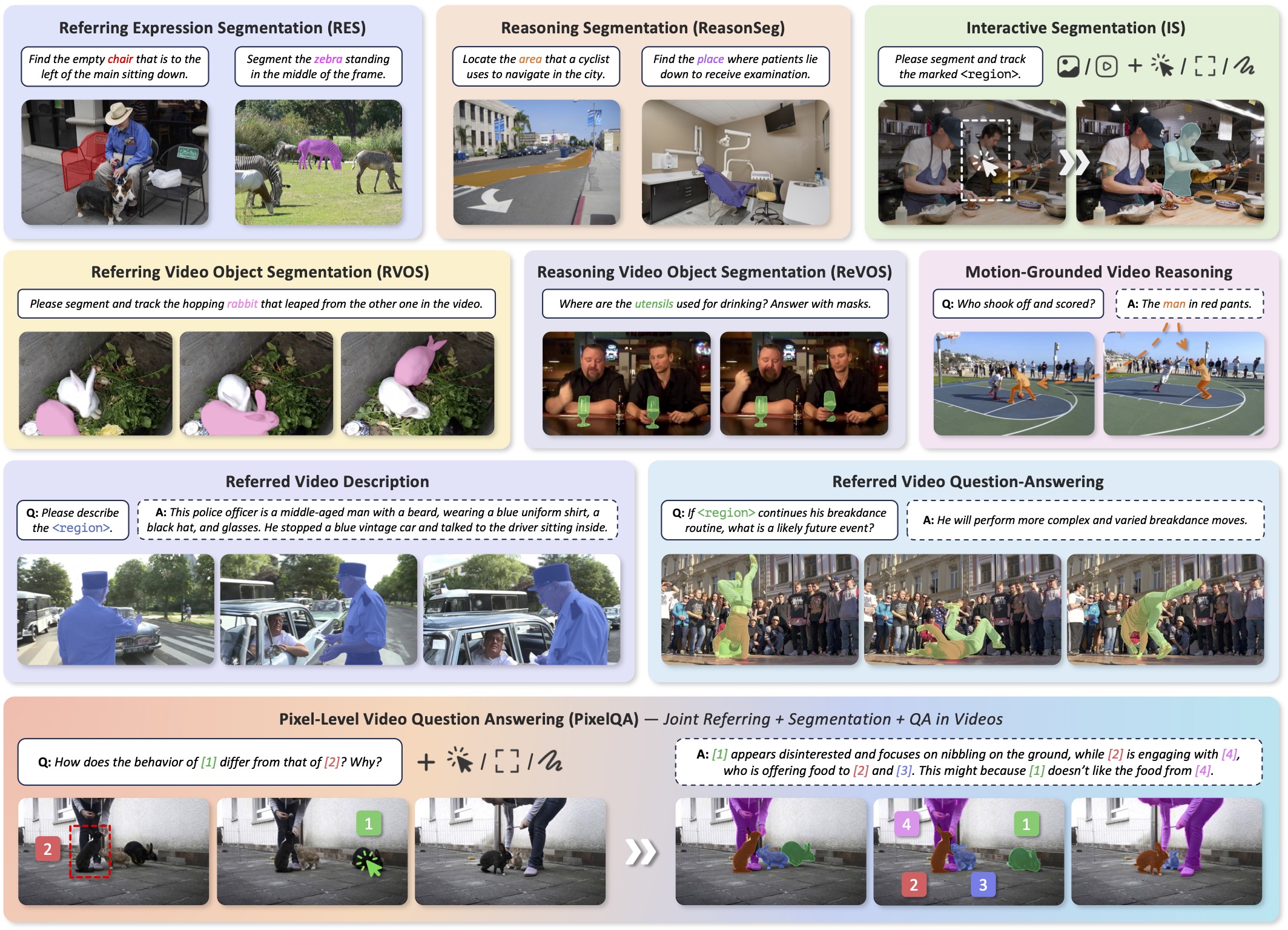

UniPixel is a unified MLLM for pixel-level vision-language understanding. It flexibly supports a variety of fine-grained tasks, including image/video segmentation, regional understanding, and a novel PixelQA task that jointly requires object-centric referring, segmentation, and question-answering in videos.

## 🔖 Model Details

- **Model type:** Multi-modal Large Language Model

- **Language(s):** English

- **License:** BSD-3-Clause

## 🚀 Quick Start

### Install the environment

1. Clone the repository from GitHub.

```shell

git clone https://github.com/PolyU-ChenLab/UniPixel.git

cd UniPixel

```

2. Setup the virtual environment.

```shell

conda create -n unipixel python=3.12 -y

conda activate unipixel

# you may modify 'cu128' to your own CUDA version

pip install torch==2.7.1 torchvision==0.22.1 --index-url https://download.pytorch.org/whl/cu128

# other versions have no been verified

pip install flash_attn==2.8.2 --no-build-isolation

```

3. Install dependencies.

```shell

pip install -r requirements.txt

```

For NPU users, please install the CPU version of PyTorch and [`torch_npu`](https://github.com/Ascend/pytorch) instead.

### Quick Inference Demo

Try our [online demo](https://huggingface.co/spaces/PolyU-ChenLab/UniPixel) or the [inference script](https://github.com/PolyU-ChenLab/UniPixel/blob/main/tools/inference.py) below. Please refer to our [GitHub Repository](https://github.com/PolyU-ChenLab/UniPixel) for more details.

```python

import imageio.v3 as iio

import nncore

from unipixel.dataset.utils import process_vision_info

from unipixel.model.builder import build_model

from unipixel.utils.io import load_image, load_video

from unipixel.utils.transforms import get_sam2_transform

from unipixel.utils.visualizer import draw_mask

media_path = ''

prompt = 'Please segment the...'

output_dir = 'outputs'

model, processor = build_model('PolyU-ChenLab/UniPixel-7B')

device = next(model.parameters()).device

sam2_transform = get_sam2_transform(model.config.sam2_image_size)

if any(media_path.endswith(k) for k in ('jpg', 'png')):

frames, images = load_image(media_path), [media_path]

else:

frames, images = load_video(media_path, sample_frames=16)

messages = [{

'role':

'user',

'content': [{

'type': 'video',

'video': images,

'min_pixels': 128 * 28 * 28,

'max_pixels': 256 * 28 * 28 * int(16 / len(images))

}, {

'type': 'text',

'text': prompt

}]

}]

text = processor.apply_chat_template(messages, add_generation_prompt=True)

images, videos, kwargs = process_vision_info(messages, return_video_kwargs=True)

data = processor(text=[text], images=images, videos=videos, return_tensors='pt', **kwargs)

data['frames'] = [sam2_transform(frames).to(model.sam2.dtype)]

data['frame_size'] = [frames.shape[1:3]]

output_ids = model.generate(

**data.to(device),

do_sample=False,

temperature=None,

top_k=None,

top_p=None,

repetition_penalty=None,

max_new_tokens=512)

assert data.input_ids.size(0) == output_ids.size(0) == 1

output_ids = output_ids[0, data.input_ids.size(1):]

if output_ids[-1] == processor.tokenizer.eos_token_id:

output_ids = output_ids[:-1]

response = processor.decode(output_ids, clean_up_tokenization_spaces=False)

print(f'Response: {response}')

if len(model.seg) >= 1:

imgs = draw_mask(frames, model.seg)

nncore.mkdir(output_dir)

path = nncore.join(output_dir, f"{nncore.pure_name(media_path)}.{'gif' if len(imgs) > 1 else 'png'}")

print(f'Output Path: {path}')

iio.imwrite(path, imgs, duration=100, loop=0)

```

## 📖 Citation

Please kindly cite our paper if you find this project helpful.

```

@inproceedings{liu2025unipixel,

title={UniPixel: Unified Object Referring and Segmentation for Pixel-Level Visual Reasoning},

author={Liu, Ye and Ma, Zongyang and Pu, Junfu and Qi, Zhongang and Wu, Yang and Ying, Shan and Chen, Chang Wen},

booktitle={Advances in Neural Information Processing Systems (NeurIPS)},

year={2025}

}

```

![]()