Commit ·

652cbfa

0

Parent(s):

Duplicate from vslinx/ComfyUIDetailerWorkflow-vslinx

Co-authored-by: David <vslinx@users.noreply.huggingface.co>

This view is limited to 50 files because it contains too many changes. See raw diff

- .gitattributes +37 -0

- README.md +250 -0

- TODO-script.md +3 -0

- models/IPAdapter/noobIPAMARK1_mark1.safetensors +3 -0

- models/README.md +1 -0

- windows-nvidia.bat +326 -0

- workflows/IMG2IMG/v4.0/IMG2IMG-ADetailer-v4.0-vslinx.json +0 -0

- workflows/IMG2IMG/v4.0/changelog.md +5 -0

- workflows/IMG2IMG/v4.0/img2img_zoomin.png +3 -0

- workflows/IMG2IMG/v4.0/img2img_zoomout.png +3 -0

- workflows/IMG2IMG/v4.0/sample_workflow_img2img.png +3 -0

- workflows/IMG2IMG/v4.1/Compare-Table1.png +3 -0

- workflows/IMG2IMG/v4.1/Compare-Table2.png +3 -0

- workflows/IMG2IMG/v4.1/Compare-Table3.png +3 -0

- workflows/IMG2IMG/v4.1/Compare-Table4.png +3 -0

- workflows/IMG2IMG/v4.1/Compare-Table5.png +3 -0

- workflows/IMG2IMG/v4.1/Compare-Table6.png +3 -0

- workflows/IMG2IMG/v4.1/IMG2IMG-ADetailer-v4.1-vslinx.json +0 -0

- workflows/IMG2IMG/v4.1/changelog.md +3 -0

- workflows/IMG2IMG/v4.1/workflow.png +3 -0

- workflows/IMG2IMG/v4.2/IMG2IMG-ADetailer-v4.2-vslinx.json +0 -0

- workflows/IMG2IMG/v4.2/IMG2IMG_ADetailer_2025-07-27-030843.png +3 -0

- workflows/IMG2IMG/v4.2/changelog.md +5 -0

- workflows/IMG2IMG/v4.2/img2img-1.png +3 -0

- workflows/IMG2IMG/v4.2/img2img-2.png +3 -0

- workflows/IMG2IMG/v4.2/img2img-fullpreview.png +3 -0

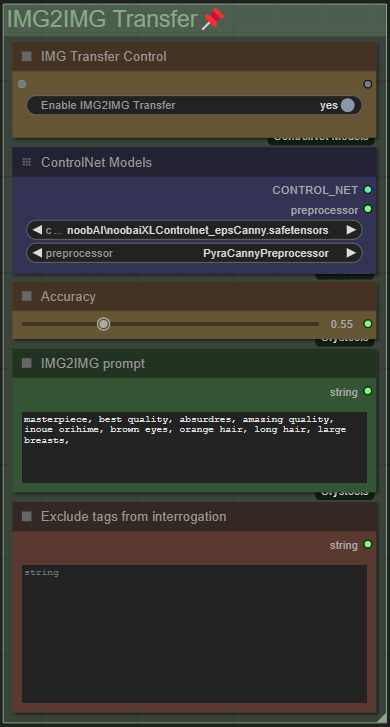

- workflows/IMG2IMG/v4.2/workflow-img2img.png +3 -0

- workflows/IMG2IMG/v4.3/IMG2IMG-ADetailer-v4.3-vslinx.json +0 -0

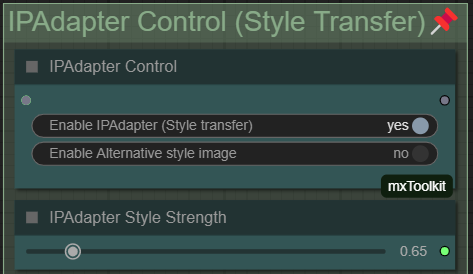

- workflows/IMG2IMG/v4.3/IMG2IMG_ADetailer_2025-08-11-023923.png +3 -0

- workflows/IMG2IMG/v4.3/changelog.md +3 -0

- workflows/IMG2IMG/v4.3/guide backup/Full IMG2IMG Guide 23.08.2025 - PAGE1.png +3 -0

- workflows/IMG2IMG/v4.3/guide backup/Full IMG2IMG Guide 23.08.2025 - PAGE2.png +3 -0

- workflows/IMG2IMG/v4.3/guide backup/guide.md +672 -0

- workflows/IMG2IMG/v4.3/img2img-1.png +3 -0

- workflows/IMG2IMG/v4.3/img2img-2.png +3 -0

- workflows/IMG2IMG/v4.3/img2img-fullpreview.png +3 -0

- workflows/IMG2IMG/v4.3/workflow-img2img.png +3 -0

- workflows/IMG2IMG/v4.4/IMG2IMG-ADetailer-v4.4-vslinx.json +0 -0

- workflows/IMG2IMG/v4.4/IMG2IMG_ADetailer_2025-08-27-012249.png +3 -0

- workflows/IMG2IMG/v4.4/IMG2IMG_ADetailer_2025-08-27-012250.png +3 -0

- workflows/IMG2IMG/v4.4/IMG2IMG_ADetailer_2025-08-27-012251.png +3 -0

- workflows/IMG2IMG/v4.4/IMG2IMG_ADetailer_2025-08-27-012251_01.png +3 -0

- workflows/IMG2IMG/v4.4/changelog.md +13 -0

- workflows/IMG2IMG/v4.4/workflow-txt2img.png +3 -0

- workflows/IMG2IMG/v4.4/workflow.png +3 -0

- workflows/IMG2IMG/v4.5/IMG2IMG-ADetailer-v4.5-vslinx.json +0 -0

- workflows/IMG2IMG/v4.5/IMG2IMG_ADetailer_2025-09-06-142407.png +3 -0

- workflows/IMG2IMG/v4.5/IMG2IMG_ADetailer_2025-09-06-142407_01.png +3 -0

- workflows/IMG2IMG/v4.5/IMG2IMG_ADetailer_2025-09-06-142408.png +3 -0

- workflows/IMG2IMG/v4.5/IMG2IMG_ADetailer_2025-09-06-142409.png +3 -0

.gitattributes

ADDED

|

@@ -0,0 +1,37 @@

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

*.png filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

*.json -text

|
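The rules above route matching paths through Git LFS, which is likely why the binary files in this commit appear as three-line pointer files. As a rough illustration, the sketch below approximates that matching with Python's `fnmatch` for a few of the simple extension patterns. Real gitattributes matching has more rules (for example `**` handling), so the helper name `is_lfs_tracked` and the shortened pattern list are assumptions made for this example only.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# A shortened, illustrative subset of the patterns listed in .gitattributes.
LFS_PATTERNS = ["*.safetensors", "*.png", "*.zip", "*.pth"]


def is_lfs_tracked(path: str) -> bool:
    """Approximate check: does the file name match one of the LFS patterns?

    Patterns without a slash are compared against the base name only,
    which mirrors how Git applies such patterns at any directory depth.
    """
    name = PurePosixPath(path).name
    return any(fnmatch(name, pattern) for pattern in LFS_PATTERNS)


if __name__ == "__main__":
    for candidate in [
        "models/IPAdapter/noobIPAMARK1_mark1.safetensors",
        "workflows/IMG2IMG/v4.1/workflow.png",
        "workflows/IMG2IMG/v4.1/changelog.md",
    ]:
        print(candidate, is_lfs_tracked(candidate))
```

Note that the final rule, `*.json -text`, only unsets the `text` attribute for JSON files; it does not send them through LFS.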

README.md

ADDED

|

@@ -0,0 +1,250 @@

|

| 1 |

+

ComfyUI Detailer/ADetailer Workflow

|

| 2 |

+

===================================

|

| 3 |

+

|

| 4 |

+

Requirements for each version are listed below or can be found inside a **Note** in the Workflow itself.

|

| 5 |

+

|

| 6 |

+

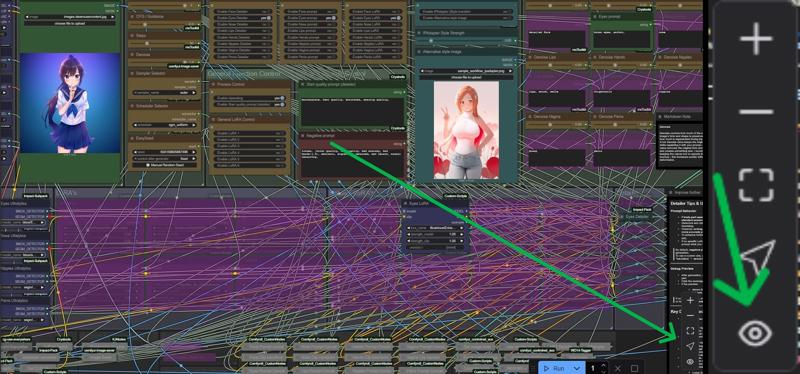

Because of the many connections among the nodes, I **highly** recommend turning off the link visibility by clicking the **"Toggle Link visibility"** (Eye icon) in the bottom right of ComfyUI.

|

| 7 |

+

|

| 8 |

+

[](https://civitai.com/articles/15480)

|

| 9 |

+

|

| 10 |

+

Description

|

| 11 |

+

-----------

|

| 12 |

+

|

| 13 |

+

I originally came from A1111 WebUI to ComfyUI and, honestly, a lot of things felt way more complicated than they needed to be. I couldn’t find workflows that were both visually pleasing _and_ showed me all the important options without requiring a deep understanding of every little thing in ComfyUI.

|

| 14 |

+

|

| 15 |

+

Over a few months I learned the ins and outs of Comfy and built my own very barebones, simple workflow (v1) with one main goal: streamline the process and make it visually understandable. I released it here thinking it might help others who also want to make the jump from A1111 to Comfy.

|

| 16 |

+

|

| 17 |

+

Over the last year I’ve kept adding more and more features as people started using the workflow and requesting things. The workflow has grown a lot, but I’m still trying to keep the same ease-of-use that this whole journey started with. The main goal is still the same: give you a lot of powerful options in a layout that’s as clean, readable, and as user-friendly as ComfyUI will allow.

|

| 18 |

+

|

| 19 |

+

At this point I feel like I have a pretty solid understanding of how Comfy works, and I’ve even created my own custom nodes to add missing functionality so this workflow could match the ideas I had for it. I try to hide as much of the technical complexity as possible — but if you’re ever curious or confused about anything, please feel free to ask!

|

| 20 |

+

|

| 21 |

+

Thanks to all of your suggestions, the workflow now includes features like:

|

| 22 |

+

|

| 23 |

+

* Single-image and batch generation

|

| 24 |

+

|

| 25 |

+

* Automatic detailers for specific body parts

|

| 26 |

+

|

| 27 |

+

* Upscaling

|

| 28 |

+

|

| 29 |

+

* v-pred models

|

| 30 |

+

|

| 31 |

+

* LoRAs

|

| 32 |

+

|

| 33 |

+

* ControlNet

|

| 34 |

+

|

| 35 |

+

* IPAdapter

|

| 36 |

+

|

| 37 |

+

* Hires fix / refiner

|

| 38 |

+

|

| 39 |

+

* Manual inpainting

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

Thank you to every single person who uses this workflow, donates Buzz, or shares images on this page.

|

| 43 |

+

I really appreciate you taking those extra steps to support and promote the project. ♥️

|

| 44 |

+

|

| 45 |

+

Requirements

|

| 46 |

+

------------

|

| 47 |

+

|

| 48 |

+

**v4.4 and above require ComfyUI version 0.3.51 or later, and the** [**frontend**](https://github.com/Comfy-Org/ComfyUI_frontend?tab=readme-ov-file#nightly-releases) **must be version 1.24.3 or later.**

|
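The minimum versions above can be checked with a plain tuple comparison. The sketch below is only an illustration: `parse_version` and `meets_minimum` are hypothetical helpers, and it assumes purely numeric dotted versions such as `0.3.51` and `1.24.3`.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn a dotted numeric version such as '0.3.51' into (0, 3, 51)."""
    return tuple(int(part) for part in text.strip().lstrip("v").split("."))


def meets_minimum(installed: str, required: str) -> bool:
    """Compare versions numerically, so '0.3.9' counts as older than '0.3.51'."""
    return parse_version(installed) >= parse_version(required)


# Minimums taken from the requirement stated above.
MIN_COMFYUI = "0.3.51"
MIN_FRONTEND = "1.24.3"

if __name__ == "__main__":
    print(meets_minimum("0.3.60", MIN_COMFYUI))
    print(meets_minimum("1.23.4", MIN_FRONTEND))
```

Comparing integer tuples rather than raw strings matters here: as strings, `"0.3.9"` would sort after `"0.3.51"`.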

| 49 |

+

|

| 50 |

+

### **v5.0** \- Full List

|

| 51 |

+

|

| 52 |

+

* [ComfyUI Impact Pack](https://github.com/ltdrdata/ComfyUI-Impact-Pack)

|

| 53 |

+

|

| 54 |

+

* [ComfyUI Impact Subpack](https://github.com/ltdrdata/ComfyUI-Impact-Subpack)

|

| 55 |

+

|

| 56 |

+

* [ComfyUI-mxToolkit](https://github.com/Smirnov75/ComfyUI-mxToolkit)

|

| 57 |

+

|

| 58 |

+

* [ComfyUI-Easy-Use](https://github.com/yolain/ComfyUI-Easy-Use)

|

| 59 |

+

|

| 60 |

+

* [ComfyUI-Custom-Scripts](https://github.com/pythongosssss/ComfyUI-Custom-Scripts)

|

| 61 |

+

|

| 62 |

+

* [ComfyUI-Crystools](https://github.com/crystian/ComfyUI-Crystools)

|

| 63 |

+

|

| 64 |

+

* [ComfyUI-Image-Saver](https://github.com/alexopus/ComfyUI-Image-Saver)

|

| 65 |

+

|

| 66 |

+

* [ComfyUI\_Comfyroll\_CustomNodes](https://github.com/Suzie1/ComfyUI_Comfyroll_CustomNodes)

|

| 67 |

+

|

| 68 |

+

* [ComfyUI-Advanced-ControlNet](https://github.com/Kosinkadink/ComfyUI-Advanced-ControlNet)

|

| 69 |

+

|

| 70 |

+

* [ComfyUI-KJNodes](https://github.com/kijai/ComfyUI-KJNodes)

|

| 71 |

+

|

| 72 |

+

* [ComfyUI\_IPAdapter\_plus](https://github.com/cubiq/ComfyUI_IPAdapter_plus)

|

| 73 |

+

|

| 74 |

+

* [ComfyUI-vslinx-nodes](https://github.com/vslinx/ComfyUI-vslinx-nodes)

|

| 75 |

+

|

| 76 |

+

* [ComfyUI-Inpaint-CropAndStitch](https://github.com/lquesada/ComfyUI-Inpaint-CropAndStitch)

|

| 77 |

+

|

| 78 |

+

* [comfyui\_controlnet\_aux](https://github.com/Fannovel16/comfyui_controlnet_aux)

|

| 79 |

+

|

| 80 |

+

* [cg-image-filter](https://github.com/chrisgoringe/cg-image-filter)

|

| 81 |

+

|

| 82 |

+

* [rgthree-comfy](https://github.com/rgthree/rgthree-comfy)

|

| 83 |

+

|

| 84 |

+

* [wlsh\_nodes](https://github.com/wallish77/wlsh_nodes)

|

| 85 |

+

|

| 86 |

+

|

| 87 |

+

### **v4.4** \- Additions to v4.3 **(IMG2IMG Only)**

|

| 88 |

+

|

| 89 |

+

* [ComfyUI-vslinx-nodes](https://github.com/vslinx/ComfyUI-vslinx-nodes)

|

| 90 |

+

|

| 91 |

+

* Otherwise same as **v4-4.3** below

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

### **v4.2-4.3** \- Additions to v4.1

|

| 95 |

+

|

| 96 |

+

* [WLSH Nodes](https://github.com/wallish77/wlsh_nodes)

|

| 97 |

+

|

| 98 |

+

* Otherwise same as **v4.1** (incl. **v4**) below

|

| 99 |

+

|

| 100 |

+

|

| 101 |

+

### v4.1 - Additions to v4 (IMG2IMG Only)

|

| 102 |

+

|

| 103 |

+

* [ComfyUI-WD14-Tagger](https://github.com/pythongosssss/ComfyUI-WD14-Tagger)

|

| 104 |

+

|

| 105 |

+

* Otherwise same as **v4** below

|

| 106 |

+

|

| 107 |

+

|

| 108 |

+

### v4 - Full List

|

| 109 |

+

|

| 110 |

+

* [ComfyUI Impact Pack](https://github.com/ltdrdata/ComfyUI-Impact-Pack)

|

| 111 |

+

|

| 112 |

+

* [ComfyUI Impact Subpack](https://github.com/ltdrdata/ComfyUI-Impact-Subpack)

|

| 113 |

+

|

| 114 |

+

* [ComfyUI-mxToolkit](https://github.com/Smirnov75/ComfyUI-mxToolkit)

|

| 115 |

+

|

| 116 |

+

* [ComfyUI-Easy-Use](https://github.com/yolain/ComfyUI-Easy-Use)

|

| 117 |

+

|

| 118 |

+

* [ComfyUI-Custom-Scripts](https://github.com/pythongosssss/ComfyUI-Custom-Scripts)

|

| 119 |

+

|

| 120 |

+

* [ComfyUI-Crystools](https://github.com/crystian/ComfyUI-Crystools)

|

| 121 |

+

|

| 122 |

+

* [ComfyUI-Image-Saver](https://github.com/alexopus/ComfyUI-Image-Saver)

|

| 123 |

+

|

| 124 |

+

* [ComfyUI\_Comfyroll\_CustomNodes](https://github.com/Suzie1/ComfyUI_Comfyroll_CustomNodes)

|

| 125 |

+

|

| 126 |

+

* [ComfyUI-Advanced-ControlNet](https://github.com/Kosinkadink/ComfyUI-Advanced-ControlNet)

|

| 127 |

+

|

| 128 |

+

* [ComfyUI-KJNodes](https://github.com/kijai/ComfyUI-KJNodes)

|

| 129 |

+

|

| 130 |

+

* [ComfyUI\_IPAdapter\_plus](https://github.com/cubiq/ComfyUI_IPAdapter_plus)

|

| 131 |

+

|

| 132 |

+

* [comfyui\_controlnet\_aux](https://github.com/Fannovel16/comfyui_controlnet_aux)

|

| 133 |

+

|

| 134 |

+

* [cg-use-everywhere](https://github.com/chrisgoringe/cg-use-everywhere)

|

| 135 |

+

|

| 136 |

+

* [cg-image-filter](https://github.com/chrisgoringe/cg-image-filter)

|

| 137 |

+

|

| 138 |

+

* [rgthree-comfy](https://github.com/rgthree/rgthree-comfy)

|

| 139 |

+

|

| 140 |

+

|

| 141 |

+

### v3 - v3.2 - Full List

|

| 142 |

+

|

| 143 |

+

* [ComfyUI Impact Pack](https://github.com/ltdrdata/ComfyUI-Impact-Pack)

|

| 144 |

+

|

| 145 |

+

* [ComfyUI Impact Subpack](https://github.com/ltdrdata/ComfyUI-Impact-Subpack)

|

| 146 |

+

|

| 147 |

+

* [ComfyUI-mxToolkit](https://github.com/Smirnov75/ComfyUI-mxToolkit)

|

| 148 |

+

|

| 149 |

+

* [ComfyUI-Easy-Use](https://github.com/yolain/ComfyUI-Easy-Use)

|

| 150 |

+

|

| 151 |

+

* [ComfyUI-Custom-Scripts](https://github.com/pythongosssss/ComfyUI-Custom-Scripts)

|

| 152 |

+

|

| 153 |

+

* [ComfyUI-Crystools](https://github.com/crystian/ComfyUI-Crystools)

|

| 154 |

+

|

| 155 |

+

* [ComfyUI-Image-Saver](https://github.com/alexopus/ComfyUI-Image-Saver)

|

| 156 |

+

|

| 157 |

+

* [ComfyUI\_Comfyroll\_CustomNodes](https://github.com/Suzie1/ComfyUI_Comfyroll_CustomNodes)

|

| 158 |

+

|

| 159 |

+

* [ComfyUI-Advanced-ControlNet](https://github.com/Kosinkadink/ComfyUI-Advanced-ControlNet)

|

| 160 |

+

|

| 161 |

+

* [ComfyUI-KJNodes](https://github.com/kijai/ComfyUI-KJNodes)

|

| 162 |

+

|

| 163 |

+

* [comfyui\_controlnet\_aux](https://github.com/Fannovel16/comfyui_controlnet_aux)

|

| 164 |

+

|

| 165 |

+

* [cg-use-everywhere](https://github.com/chrisgoringe/cg-use-everywhere)

|

| 166 |

+

|

| 167 |

+

* [cg-image-filter](https://github.com/chrisgoringe/cg-image-filter)

|

| 168 |

+

|

| 169 |

+

* [rgthree-comfy](https://github.com/rgthree/rgthree-comfy)

|

| 170 |

+

|

| 171 |

+

|

| 172 |

+

### v2.2 - Additions to v2

|

| 173 |

+

|

| 174 |

+

* [ComfyUI\_Comfyroll\_Nodes](https://github.com/Suzie1/ComfyUI_Comfyroll_CustomNodes)

|

| 175 |

+

|

| 176 |

+

* Otherwise same Custom-Nodes as v2 but you can remove [Comfyui-ergouzi-Nodes](https://github.com/11dogzi/Comfyui-ergouzi-Nodes)

|

| 177 |

+

|

| 178 |

+

|

| 179 |

+

v2 - Full List

|

| 180 |

+

--------------

|

| 181 |

+

|

| 182 |

+

* [ComfyUI Impact Pack](https://github.com/ltdrdata/ComfyUI-Impact-Pack)

|

| 183 |

+

|

| 184 |

+

* [ComfyUI Impact Subpack](https://github.com/ltdrdata/ComfyUI-Impact-Subpack)

|

| 185 |

+

|

| 186 |

+

* [ComfyUI-mxToolkit](https://github.com/Smirnov75/ComfyUI-mxToolkit)

|

| 187 |

+

|

| 188 |

+

* [ComfyUI-Easy-Use](https://github.com/yolain/ComfyUI-Easy-Use)

|

| 189 |

+

|

| 190 |

+

* [ComfyUI-Custom-Scripts](https://github.com/pythongosssss/ComfyUI-Custom-Scripts)

|

| 191 |

+

|

| 192 |

+

* [ComfyUI-Crystools](https://github.com/crystian/ComfyUI-Crystools)

|

| 193 |

+

|

| 194 |

+

* [Comfyui-ergouzi-Nodes](https://github.com/11dogzi/Comfyui-ergouzi-Nodes)

|

| 195 |

+

|

| 196 |

+

* [ComfyUI-Image-Saver](https://github.com/alexopus/ComfyUI-Image-Saver)

|

| 197 |

+

|

| 198 |

+

* [cg-use-everywhere](https://github.com/chrisgoringe/cg-use-everywhere)

|

| 199 |

+

|

| 200 |

+

* [cg-image-filter](https://github.com/chrisgoringe/cg-image-filter)

|

| 201 |

+

|

| 202 |

+

* [rgthree-comfy](https://github.com/rgthree/rgthree-comfy)

|

| 203 |

+

|

| 204 |

+

|

| 205 |

+

v1 - Full List

|

| 206 |

+

--------------

|

| 207 |

+

|

| 208 |

+

* [ComfyUI Impact Pack](https://github.com/ltdrdata/ComfyUI-Impact-Pack)

|

| 209 |

+

|

| 210 |

+

* [ComfyUI-Custom-Scripts](https://github.com/pythongosssss/ComfyUI-Custom-Scripts)

|

| 211 |

+

|

| 212 |

+

* [cg-use-everywhere](https://github.com/chrisgoringe/cg-use-everywhere)

|

| 213 |

+

|

| 214 |

+

* [cg-image-picker](https://github.com/chrisgoringe/cg-image-picker)

|

| 215 |

+

|

| 216 |

+

* [ComfyUI Impact Subpack](https://github.com/ltdrdata/ComfyUI-Impact-Subpack)

|

| 217 |

+

|

| 218 |

+

|

| 219 |

+

How to use

|

| 220 |

+

----------

|

| 221 |

+

|

| 222 |

+

Since all of the different versions work differently, you should check the **"How to use"** Node inside of the Workflow itself.

|

| 223 |

+

|

| 224 |

+

I promise that once you read the explanation in the workflow itself, it'll click and become a simple plug-and-play experience.

|

| 225 |

+

|

| 226 |

+

It's the simplest I could make it, coming from someone who only started using ComfyUI 4-5 months ago and had exclusively used the A1111 WebUI before.

|

| 227 |

+

|

| 228 |

+

When was each feature added?

|

| 229 |

+

------------------------------------

|

| 230 |

+

|

| 231 |

+

Starting from **v3**, ControlNet is included.

|

| 232 |

+

Starting from **v4**, IPAdapter is included.

|

| 233 |

+

Starting from **v4.3**, HiRes Fix and dynamic prompts (wildcards) are included.

|

| 234 |

+

Starting from **v4.4**, Refiner is included.

|

| 235 |

+

Starting from **v5.0**, Manual Inpainting is included.

|

| 236 |

+

|

| 237 |

+

Any errors during execution?

|

| 238 |

+

----------------------------

|

| 239 |

+

|

| 240 |

+

If you're running into any errors during the execution of the workflow, please check the [FAQ of my Guide](https://civitai.com/articles/15480#faq:) first. The guide is written for the IMG2IMG workflow, but when people frequently run into an issue, I'll add the solution and an explanation of what's happening to that FAQ section.

|

| 241 |

+

If you can't find your problem there, feel free to leave a comment on the model page **and include the logs from your ComfyUI console that show the error** so that I can help you and other people can benefit from it as well.

|

| 242 |

+

|

| 243 |

+

Feedback

|

| 244 |

+

--------

|

| 245 |

+

|

| 246 |

+

I'd love to see your feedback or opinion on the workflow.

|

| 247 |

+

This is the first workflow I have ever created myself from scratch and I'd love to hear what you think of it.

|

| 248 |

+

|

| 249 |

+

If you want to do me a huge favor, you can post your results on this Model page.

|

| 250 |

+

I'll make sure to send some buzz your way!

|

TODO-script.md

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

- add support for downloading only models and nodes instead of everything (use an existing ComfyUI folder)

|

| 2 |

+

- but also update all nodes that are already there to the newest version

|

| 3 |

+

- fix check for controlnet models (currently no check if they already exist)

|

models/IPAdapter/noobIPAMARK1_mark1.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5cdb6a00be1b12579745b5bed0c7b83f0869073d8a864fa8cd50a9356601919a

|

| 3 |

+

size 1405172056

|
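The three lines above are a Git LFS pointer, not the model weights themselves: they record the spec version, the content hash, and the size in bytes. A minimal sketch of reading such a pointer follows; `parse_lfs_pointer` and the returned dictionary keys are hypothetical names chosen for this example.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'key value' lines of a Git LFS pointer file."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid is stored as '<hash-algorithm>:<hex digest>'.
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer content copied from the diff above.
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:5cdb6a00be1b12579745b5bed0c7b83f0869073d8a864fa8cd50a9356601919a
size 1405172056
"""

if __name__ == "__main__":
    info = parse_lfs_pointer(POINTER)
    print(info["algorithm"], info["size_bytes"] / 1024**3)
```

For this pointer, the recorded size of 1405172056 bytes works out to roughly 1.3 GiB for the actual IPAdapter file.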

models/README.md

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

All the models stored here are only stored because I couldn't find an official source and didn't want to rely on third parties to keep the models uploaded. If your model is among them, you can always contact me [here](https://civitai.com/user/vslinx) and I'll remove it at your request.

|

windows-nvidia.bat

ADDED

|

@@ -0,0 +1,326 @@

|

| 1 |

+

@echo off

|

| 2 |

+

setlocal enabledelayedexpansion

|

| 3 |

+

|

| 4 |

+

echo.

|

| 5 |

+

echo ============================

|

| 6 |

+

echo ComfyUI Auto Installer

|

| 7 |

+

echo ============================

|

| 8 |

+

echo.

|

| 9 |

+

|

| 10 |

+

REM Define paths

|

| 11 |

+

set "comfyPath=%CD%\ComfyUI"

|

| 12 |

+

set "customNodesPath=%comfyPath%\custom_nodes"

|

| 13 |

+

set "venvPath=%comfyPath%\venv"

|

| 14 |

+

set "pythonPath=%venvPath%\Scripts\python.exe"

|

| 15 |

+

set "activateScript=%venvPath%\Scripts\activate.bat"

|

| 16 |

+

|

| 17 |

+

REM -------------------------------

|

| 18 |

+

REM Check if Python is available

|

| 19 |

+

REM -------------------------------

|

| 20 |

+

where python >nul 2>&1

|

| 21 |

+

if %errorlevel% NEQ 0 (

|

| 22 |

+

echo Python is not found in PATH.

|

| 23 |

+

echo Would you like to install Python 3.12 now? [Y/N]

|

| 24 |

+

set /p "PYINSTALL=Your choice [Y/N]: "

|

| 25 |

+

if /i "!PYINSTALL!"=="Y" (

|

| 26 |

+

echo Downloading Python 3.12...

|

| 27 |

+

%SystemRoot%\System32\curl.exe -L -o python-installer.exe https://www.python.org/ftp/python/3.12.3/python-3.12.3-amd64.exe

|

| 28 |

+

if not exist python-installer.exe (

|

| 29 |

+

echo Failed to download Python installer.

|

| 30 |

+

pause

|

| 31 |

+

exit /b 1

|

| 32 |

+

)

|

| 33 |

+

echo Installing Python 3.12...

|

| 34 |

+

start /wait python-installer.exe /quiet InstallAllUsers=1 PrependPath=1 Include_test=0

|

| 35 |

+

del /f python-installer.exe

|

| 36 |

+

) else (

|

| 37 |

+

echo Cannot continue without Python. Exiting...

|

| 38 |

+

pause

|

| 39 |

+

exit /b 1

|

| 40 |

+

)

|

| 41 |

+

)

|

| 42 |

+

|

| 43 |

+

REM Get Python version from stderr

|

| 44 |

+

for /f "tokens=2 delims= " %%A in ('python -V 2^>^&1') do set PYVERSION=%%A

|

| 45 |

+

|

| 46 |

+

REM Parse major and minor

|

| 47 |

+

for /f "tokens=1,2 delims=." %%B in ("%PYVERSION%") do (

|

| 48 |

+

set "PYMAJOR=%%B"

|

| 49 |

+

set "PYMINOR=%%C"

|

| 50 |

+

)

|

| 51 |

+

|

| 52 |

+

echo Detected Python version: %PYVERSION%

|

| 53 |

+

if not "%PYMAJOR%.%PYMINOR%"=="3.12" (

|

| 54 |

+

echo Your current Python version is %PYVERSION%. It may not be supported.

|

| 55 |

+

echo Would you like to:

|

| 56 |

+

echo [Y] Install Python 3.12

|

| 57 |

+

echo [N] Continue using current version

|

| 58 |

+

set /p "PYCHOICE=Choose [Y/N]: "

|

| 59 |

+

if /i "!PYCHOICE!"=="Y" (

|

| 60 |

+

echo Downloading Python 3.12...

|

| 61 |

+

%SystemRoot%\System32\curl.exe -L -o python-installer.exe https://www.python.org/ftp/python/3.12.3/python-3.12.3-amd64.exe

|

| 62 |

+

if not exist python-installer.exe (

|

| 63 |

+

echo Failed to download Python installer.

|

| 64 |

+

pause

|

| 65 |

+

exit /b 1

|

| 66 |

+

)

|

| 67 |

+

echo Installing Python 3.12...

|

| 68 |

+

start /wait python-installer.exe /quiet InstallAllUsers=1 PrependPath=1 Include_test=0

|

| 69 |

+

del /f python-installer.exe

|

| 70 |

+

)

|

| 71 |

+

)

|

| 72 |

+

|

| 73 |

+

REM -------------------------------

|

| 74 |

+

REM Check Git

|

| 75 |

+

REM -------------------------------

|

| 76 |

+

git --version >nul 2>&1

|

| 77 |

+

if %errorlevel% NEQ 0 (

|

| 78 |

+

echo Git not found. Installing Git...

|

| 79 |

+

powershell -Command "& {Invoke-WebRequest -Uri 'https://github.com/git-for-windows/git/releases/download/v2.41.0.windows.3/Git-2.41.0.3-64-bit.exe' -OutFile 'git_installer.exe'}"

|

| 80 |

+

if !errorlevel! NEQ 0 (

|

| 81 |

+

echo Failed to download Git.

|

| 82 |

+

pause

|

| 83 |

+

exit /b 1

|

| 84 |

+

)

|

| 85 |

+

start /wait git_installer.exe /VERYSILENT

|

| 86 |

+

del /f git_installer.exe

|

| 87 |

+

echo Git installed successfully.

|

| 88 |

+

)

|

| 89 |

+

|

| 90 |

+

REM -------------------------------

|

| 91 |

+

REM Clone ComfyUI

|

| 92 |

+

REM -------------------------------

|

| 93 |

+

if not exist "%comfyPath%" (

|

| 94 |

+

echo Cloning ComfyUI...

|

| 95 |

+

git clone https://github.com/comfyanonymous/ComfyUI.git "%comfyPath%"

|

| 96 |

+

) else (

|

| 97 |

+

echo ComfyUI already exists. Skipping clone.

|

| 98 |

+

)

|

| 99 |

+

|

| 100 |

+

REM -------------------------------

|

| 101 |

+

REM Create venv

|

| 102 |

+

REM -------------------------------

|

| 103 |

+

if not exist "%venvPath%" (

|

| 104 |

+

echo Creating virtual environment...

|

| 105 |

+

python -m venv "%venvPath%"

|

| 106 |

+

)

|

| 107 |

+

|

| 108 |

+

REM -------------------------------

|

| 109 |

+

REM Activate venv and install deps

|

| 110 |

+

REM -------------------------------

|

| 111 |

+

call "%activateScript%"

|

| 112 |

+

echo Installing CUDA-enabled torch manually...

|

| 113 |

+

"%pythonPath%" -m pip install torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu128

|

| 114 |

+

|

| 115 |

+

echo Installing dependencies...

|

| 116 |

+

"%pythonPath%" -m pip install --upgrade pip

|

| 117 |

+

"%pythonPath%" -m pip install -r "%comfyPath%\requirements.txt"

|

| 118 |

+

|

| 119 |

+

REM -------------------------------

|

| 120 |

+

REM Clone custom nodes

|

| 121 |

+

REM -------------------------------

|

| 122 |

+

if not exist "%customNodesPath%" (

|

| 123 |

+

mkdir "%customNodesPath%"

|

| 124 |

+

)

|

| 125 |

+

|

| 126 |

+

echo Cloning custom nodes...

|

| 127 |

+

|

| 128 |

+

set repos[0]=https://github.com/ltdrdata/ComfyUI-Impact-Pack

|

| 129 |

+

set repos[1]=https://github.com/ltdrdata/ComfyUI-Impact-Subpack

|

| 130 |

+

set repos[2]=https://github.com/Smirnov75/ComfyUI-mxToolkit

|

| 131 |

+

set repos[3]=https://github.com/yolain/ComfyUI-Easy-Use

|

| 132 |

+

set repos[4]=https://github.com/pythongosssss/ComfyUI-Custom-Scripts

|

| 133 |

+

set repos[5]=https://github.com/crystian/ComfyUI-Crystools

|

| 134 |

+

set repos[6]=https://github.com/alexopus/ComfyUI-Image-Saver

|

| 135 |

+

set repos[7]=https://github.com/Suzie1/ComfyUI_Comfyroll_CustomNodes

|

| 136 |

+

set repos[8]=https://github.com/Kosinkadink/ComfyUI-Advanced-ControlNet

|

| 137 |

+

set repos[9]=https://github.com/kijai/ComfyUI-KJNodes

|

| 138 |

+

set repos[10]=https://github.com/Fannovel16/comfyui_controlnet_aux

|

| 139 |

+

set repos[11]=https://github.com/vslinx/ComfyUI-vslinx-nodes.git

|

| 140 |

+

set repos[12]=https://github.com/chrisgoringe/cg-image-filter

|

| 141 |

+

set repos[13]=https://github.com/rgthree/rgthree-comfy

|

| 142 |

+

set repos[14]=https://github.com/cubiq/ComfyUI_IPAdapter_plus

|

| 143 |

+

set repos[15]=https://github.com/pythongosssss/ComfyUI-WD14-Tagger.git

|

| 144 |

+

set repos[16]=https://github.com/Comfy-Org/ComfyUI-Manager

|

| 145 |

+

set repos[17]=https://github.com/wallish77/wlsh_nodes.git

|

| 146 |

+

set repos[18]=https://github.com/lquesada/ComfyUI-Inpaint-CropAndStitch

|

| 147 |

+

|

| 148 |

+

for /L %%i in (0,1,18) do (

|

| 149 |

+

set "repo=!repos[%%i]!"

|

| 150 |

+

for %%A in (!repo!) do (

|

| 151 |

+

set "folderName=%%~nxA"

|

| 152 |

+

set "targetPath=%customNodesPath%\!folderName!"

|

| 153 |

+

|

| 154 |

+

if not exist "!targetPath!" (

|

| 155 |

+

echo - Cloning !folderName!...

|

| 156 |

+

git clone !repo! "!targetPath!"

|

| 157 |

+

) else (

|

| 158 |

+

echo - !folderName! already exists. Skipping.

|

| 159 |

+

)

|

| 160 |

+

|

| 161 |

+

REM Install requirements if available

|

| 162 |

+

if exist "!targetPath!\requirements.txt" (

|

| 163 |

+

echo Installing requirements for !folderName!...

|

| 164 |

+

"%pythonPath%" -s -m pip install -r "!targetPath!\requirements.txt"

|

| 165 |

+

)

|

| 166 |

+

)

|

| 167 |

+

)

|

| 168 |

+

|

| 169 |

+

REM -------------------------------

|

| 170 |

+

REM Download JSON workflow files

|

| 171 |

+

REM -------------------------------

|

| 172 |

+

set "workflowFolder=%comfyPath%\user\default\workflows"

|

| 173 |

+

if not exist "%workflowFolder%" (

|

| 174 |

+

mkdir "%workflowFolder%"

|

| 175 |

+

)

|

| 176 |

+

|

| 177 |

+

REM -------------------------------

|

| 178 |

+

REM Download SAM model (Segment Anything)

|

| 179 |

+

REM -------------------------------

|

| 180 |

+

set "samFolder=%comfyPath%\models\sams"

|

| 181 |

+

if not exist "%samFolder%" (

|

| 182 |

+

mkdir "%samFolder%"

|

| 183 |

+

)

|

| 184 |

+

|

| 185 |

+

set "samFile=%samFolder%\sam_vit_b_01ec64.pth"

|

| 186 |

+

if exist "%samFile%" (

|

| 187 |

+

echo - sam_vit_b_01ec64.pth already exists. Skipping download.

|

| 188 |

+

) else (

|

| 189 |

+

echo Downloading SAM model...

|

| 190 |

+

%SystemRoot%\System32\curl.exe -L -o "%samFile%" ^

|

| 191 |

+

https://dl.fbaipublicfiles.com/segment_anything/sam_vit_b_01ec64.pth

|

| 192 |

+

)

|

| 193 |

+

|

| 194 |

+

echo Checking workflow files...

|

| 195 |

+

|

| 196 |

+

REM Define workflow filenames and URLs

|

| 197 |

+

set workflow[0]=TXT2IMG-ADetailer-v5.0-vslinx.json

|

| 198 |

+

set url[0]=https://huggingface.co/vslinx/ComfyUIDetailerWorkflow-vslinx/resolve/main/workflows/TXT2IMG/v5.0/TXT2IMG-ADetailer-v5.0-vslinx.json

|

| 199 |

+

|

| 200 |

+

set workflow[1]=IMG2IMG-ADetailer-v5.0-vslinx.json

|

| 201 |

+

set url[1]=https://huggingface.co/vslinx/ComfyUIDetailerWorkflow-vslinx/resolve/main/workflows/IMG2IMG/v5.0/IMG2IMG-ADetailer-v5.0-vslinx.json

|

| 202 |

+

|

| 203 |

+

REM Loop through and download if not already present

|

| 204 |

+

for /L %%i in (0,1,1) do (

|

| 205 |

+

call set "file=%%workflow[%%i]%%"

|

| 206 |

+

call set "link=%%url[%%i]%%"

|

| 207 |

+

set "workflowFile=%workflowFolder%\!file!"

|

| 208 |

+

|

| 209 |

+

if exist "!workflowFile!" (

|

| 210 |

+

echo - !file! already exists. Skipping download.

|

| 211 |

+

) else (

|

| 212 |

+

echo ↓ Downloading !file!...

|

| 213 |

+

%SystemRoot%\System32\curl.exe -L -o "!workflowFile!" "!link!"

|

| 214 |

+

)

|

| 215 |

+

)

|

| 216 |

+

|

| 217 |

+

echo.

|

| 218 |

+

echo Would you like to download required models (ControlNet, IPAdapter, Upscale Model, etc.)?

|

| 219 |

+

echo [Y] Yes

|

| 220 |

+

echo [N] No

|

| 221 |

+

set /p "download_models=Choose [Y/N]: "

|

| 222 |

+

|

| 223 |

+

if /i "%download_models%"=="Y" (

|

| 224 |

+

echo Downloading required models...

|

| 225 |

+

|

| 226 |

+

REM Create folders if they don't exist

|

| 227 |

+

if not exist "%comfyPath%\models\vae" mkdir "%comfyPath%\models\vae"

|

| 228 |

+

if not exist "%comfyPath%\models\upscale_models" mkdir "%comfyPath%\models\upscale_models"

|

| 229 |

+

if not exist "%comfyPath%\models\ipadapter" mkdir "%comfyPath%\models\ipadapter"

|

| 230 |

+

if not exist "%comfyPath%\models\clip_vision" mkdir "%comfyPath%\models\clip_vision"

|

| 231 |

+

if not exist "%comfyPath%\models\controlnet" mkdir "%comfyPath%\models\controlnet"

|

| 232 |

+

|

| 233 |

+

REM Download files

|

| 234 |

+

echo Downloading VAE...

|

| 235 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\vae\sdxl_vae.safetensors" ^

|

| 236 |

+

https://huggingface.co/stabilityai/sdxl-vae/resolve/main/sdxl_vae.safetensors

|

| 237 |

+

|

| 238 |

+

echo Downloading Upscale Model...

|

| 239 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\upscale_models\4x_foolhardy_Remacri.pth" ^

|

| 240 |

+

https://huggingface.co/FacehugmanIII/4x_foolhardy_Remacri/resolve/main/4x_foolhardy_Remacri.pth

|

| 241 |

+

|

| 242 |

+

echo Downloading IPAdapter model...

|

| 243 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\ipadapter\noobIPAMARK1_mark1.safetensors" ^

|

| 244 |

+

https://huggingface.co/vslinx/ComfyUIDetailerWorkflow-vslinx/resolve/main/models/IPAdapter/noobIPAMARK1_mark1.safetensors

|

| 245 |

+

|

| 246 |

+

echo Downloading CLIP Vision Model...

|

| 247 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\clip_vision\CLIP-ViT-bigG-14-laion2B-39B-b160k.safetensors" ^

|

| 248 |

+

https://huggingface.co/XuminYu/example_safetensors/resolve/4b89d7ebd99a9913f0abbec4bf0f54932b11d243/CLIP-ViT-bigG-14-laion2B-39B-b160k.safetensors

|

| 249 |

+

|

| 250 |

+

echo Downloading ControlNet Canny Model...

|

| 251 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsCanny.safetensors" ^

|

| 252 |

+

https://huggingface.co/Eugeoter/noob-sdxl-controlnet-canny/resolve/main/noob_sdxl_controlnet_canny.safetensors

|

| 253 |

+

|

| 254 |

+

echo Downloading ControlNet DepthMidas Model...

|

| 255 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsDepthMidasv1-1.safetensors" ^

|

| 256 |

+

https://huggingface.co/Eugeoter/noob-sdxl-controlnet-depth_midas-v1-1/resolve/main/noob-sdxl-controlnet-depth-midas-v1-1.safetensors

|

| 257 |

+

|

| 258 |

+

echo Downloading ControlNet Lineart-Anime Model...

|

| 259 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsLineartAnime.safetensors" ^

|

| 260 |

+

https://huggingface.co/Eugeoter/noob-sdxl-controlnet-lineart_anime/resolve/main/noob-sdxl-controlnet-lineart_anime.safetensors

|

| 261 |

+

|

| 262 |

+

echo Downloading ControlNet Lineart-Realistic Model...

|

| 263 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsLineartRealistic.safetensors" ^

|

| 264 |

+

https://huggingface.co/Eugeoter/noob-sdxl-controlnet-lineart_realistic/resolve/main/noob-sdxl-controlnet-lineart_realistic.safetensors

|

| 265 |

+

|

| 266 |

+

echo Downloading ControlNet Manga line Model...

|

| 267 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsMangaLine.safetensors" ^

|

| 268 |

+

https://huggingface.co/Eugeoter/noob-sdxl-controlnet-manga_line/resolve/main/noob-sdxl-controlnet-manga-line.safetensors

|

| 269 |

+

|

| 270 |

+

echo Downloading ControlNet OpenPose Model...

|

| 271 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsOpenPose.safetensors" ^

|

| 272 |

+

https://huggingface.co/Laxhar/noob_openpose/resolve/main/openpose_pre.safetensors

|

| 273 |

+

|

| 274 |

+

echo Downloading ControlNet Softedge Hed Model...

|

| 275 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsSoftedgeHed.safetensors" ^

|

| 276 |

+

https://huggingface.co/Eugeoter/noob-sdxl-controlnet-softedge_hed/resolve/main/noob-sdxl-controlnet-softedge_hed.safetensors

|

| 277 |

+

|

| 278 |

+

echo Downloading ControlNet Depth Model...

|

| 279 |

+

%SystemRoot%\System32\curl.exe -L -o "%comfyPath%\models\controlnet\noobaiXLControlnet_epsDepth.safetensors" ^

|

| 280 |

+

https://huggingface.co/Eugeoter/noob-sdxl-controlnet-depth/resolve/main/noob_sdxl_controlnet_depth.safetensors

|

| 281 |

+

|

| 282 |

+

echo All required models have been downloaded.

|

| 283 |

+

) else (

|

| 284 |

+

echo Skipping model downloads.

|

| 285 |

+

)

|

| 286 |

+

|

| 287 |

+

REM -------------------------------

|

| 288 |

+

REM Setup Complete

|

| 289 |

+

REM -------------------------------

|

| 290 |

+

echo.

|

| 291 |

+

echo ============================

|

| 292 |

+

echo Setup Complete!

|

| 293 |

+

echo ============================

|

| 294 |

+

|

| 295 |

+

REM -------------------------------

|

| 296 |

+

REM Offer to create Desktop shortcut

|

| 297 |

+

REM -------------------------------

|

| 298 |

+

echo Would you like to create a desktop shortcut to start ComfyUI?

|

| 299 |

+

echo [Y] Yes

|

| 300 |

+

echo [N] No

|

| 301 |

+

set /p "MAKE_SHORTCUT=Choose [Y/N]: "

|

| 302 |

+

|

| 303 |

+

if /i "!MAKE_SHORTCUT!"=="Y" (

|

| 304 |

+

set "shortcutBat=%USERPROFILE%\Desktop\Start_ComfyUI.bat"

|

| 305 |

+

echo @echo off > "!shortcutBat!"

|

| 306 |

+

echo cd /d "%comfyPath%" >> "!shortcutBat!"

|

| 307 |

+

echo call "%venvPath%\Scripts\activate.bat" >> "!shortcutBat!"

|

| 308 |

+

echo python main.py >> "!shortcutBat!"

|

| 309 |

+

echo pause >> "!shortcutBat!"

|

| 310 |

+

echo Shortcut created on Desktop as Start_ComfyUI.bat

|

| 311 |

+

)

|

| 312 |

+

|

| 313 |

+

REM -------------------------------

|

| 314 |

+

REM Ask to start ComfyUI

|

| 315 |

+

REM -------------------------------

|

| 316 |

+

echo Would you like to start ComfyUI now?

|

| 317 |

+

echo [Y] Yes

|

| 318 |

+

echo [N] No

|

| 319 |

+

set /p "STARTNOW=Choose [Y/N]: "

|

| 320 |

+

if /i "!STARTNOW!"=="Y" (

|

| 321 |

+

echo Starting ComfyUI in a new shell...

|

| 322 |

+

start "" cmd /k ^

|

| 323 |

+

"cd /d "%comfyPath%" && call "%venvPath%\Scripts\activate.bat" && python main.py"

|

| 324 |

+

)

|

| 325 |

+

|

| 326 |

+

pause

|
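The clone loop in `windows-nvidia.bat` takes the last segment of each repository URL (via `%%~nxA`) as the folder name and skips repositories whose folder already exists. A minimal Python sketch of that logic follows. The names `folder_name` and `plan_clones` are hypothetical and do not appear in the script. The sketch assumes that `~nx` keeps the extension, in which case URLs ending in `.git` would yield folder names that also end in `.git`.

```python
from pathlib import Path, PurePosixPath
from urllib.parse import urlparse


def folder_name(repo_url: str) -> str:
    """Last path segment of the URL, extension included (like %%~nxA)."""
    return PurePosixPath(urlparse(repo_url).path).name


def plan_clones(repo_urls, custom_nodes_dir):
    """Return (url, target) pairs for repositories not yet present on disk."""
    root = Path(custom_nodes_dir)
    pending = []
    for url in repo_urls:
        target = root / folder_name(url)
        if not target.exists():  # mirrors: if not exist "!targetPath!"
            pending.append((url, target))
    return pending
```

Under this assumption, `https://github.com/vslinx/ComfyUI-vslinx-nodes.git` maps to a folder named `ComfyUI-vslinx-nodes.git`, while `https://github.com/rgthree/rgthree-comfy` maps to `rgthree-comfy`.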

workflows/IMG2IMG/v4.0/IMG2IMG-ADetailer-v4.0-vslinx.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

workflows/IMG2IMG/v4.0/changelog.md

ADDED

|

@@ -0,0 +1,5 @@

|

| 1 |

+

- initial release of img2img workflow

|

| 2 |

+

- includes detailer and ipadapter

|

| 3 |

+

- scheduler, sampler, steps & cfg now apply to detailer

|

| 4 |

+

- single selectors for denoise of each individual detailer

|

| 5 |

+

- adapted Manual + Requirements

|

workflows/IMG2IMG/v4.0/img2img_zoomin.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.0/img2img_zoomout.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.0/sample_workflow_img2img.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.1/Compare-Table1.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.1/Compare-Table2.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.1/Compare-Table3.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.1/Compare-Table4.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.1/Compare-Table5.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.1/Compare-Table6.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.1/IMG2IMG-ADetailer-v4.1-vslinx.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

workflows/IMG2IMG/v4.1/changelog.md

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

- IMG2IMG Transfer for copying image composition

|

| 2 |

+

- re-arrangement of nodes

|

| 3 |

+

- compatibility updates

|

workflows/IMG2IMG/v4.1/workflow.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.2/IMG2IMG-ADetailer-v4.2-vslinx.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

workflows/IMG2IMG/v4.2/IMG2IMG_ADetailer_2025-07-27-030843.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.2/changelog.md

ADDED

|

@@ -0,0 +1,5 @@

|

| 1 |

+

- Added Low VRAM Options for IPAdapter Style Transfer and IMG2IMG Transfer

|

| 2 |

+

- Fixed Scheduler Selector after an image-saver node update broke it (requires updating the custom node)

|

| 3 |

+

- Added upscaling factor to control the upscaling instead of leaving it to the upscale model

|

| 4 |

+

- Optional use of Sage Attention Patch for global speed increase if you have triton installed

|

| 5 |

+

- Updated Manual inside the workflow

|
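The v4.2 changelog does not say how the upscaling factor is applied. One common approach, assumed here purely for illustration, is to run the upscale model at its native scale and then resize the result so that the overall factor matches the requested one. The function names below are hypothetical, and the 4x model scale is an assumption based on the `4x_foolhardy_Remacri` file name.

```python
def final_size(width: int, height: int, factor: float) -> tuple[int, int]:
    """Output resolution after applying the requested overall upscaling factor."""
    return round(width * factor), round(height * factor)


def post_model_resize_ratio(model_scale: float, factor: float) -> float:
    """Ratio to resize by after the model has upscaled by its native scale.

    Assumption: the model always upscales by `model_scale`, and a plain
    resize brings the image to the requested overall `factor`.
    """
    if model_scale <= 0:
        raise ValueError("model_scale must be positive")
    return factor / model_scale
```

For example, a 1024x1536 input with a factor of 2 ends up at 2048x3072, and with a 4x model the intermediate result would be resized by a ratio of 0.5.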

workflows/IMG2IMG/v4.2/img2img-1.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.2/img2img-2.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.2/img2img-fullpreview.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.2/workflow-img2img.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.3/IMG2IMG-ADetailer-v4.3-vslinx.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

workflows/IMG2IMG/v4.3/IMG2IMG_ADetailer_2025-08-11-023923.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.3/changelog.md

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

- added high-res fix & color fix (not recommended on upscaled images above 2x/larger than 2048x3072)

|

| 2 |

+

- fixed IMG2IMG Transfer Sampler not using settings from "Sampler Settings"-Group

|

| 3 |

+

- adapted guide notes in workflow

|

workflows/IMG2IMG/v4.3/guide backup/Full IMG2IMG Guide 23.08.2025 - PAGE1.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.3/guide backup/Full IMG2IMG Guide 23.08.2025 - PAGE2.png

ADDED

|

Git LFS Details

|

workflows/IMG2IMG/v4.3/guide backup/guide.md

ADDED

|

@@ -0,0 +1,672 @@

|