Update README.md

README.md

CHANGED

|

@@ -1,3 +1,221 @@

|

|

| 1 |

-

---

|

| 2 |

-

|

| 3 |

-

-

| 1 |

+

---

|

| 2 |

+

library_name: transformers

|

| 3 |

+

license: apache-2.0

|

| 4 |

+

license_link: https://huggingface.co/Qwen/Qwen3-Coder-Next/blob/main/LICENSE

|

| 5 |

+

pipeline_tag: text-generation

|

| 6 |

+

---

|

| 7 |

+

|

| 8 |

+

# Qwen3-Coder-Next-FP8

|

| 9 |

+

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

|

| 10 |

+

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 11 |

+

</a>

|

| 12 |

+

|

| 13 |

+

## Highlights

|

| 14 |

+

|

| 15 |

+

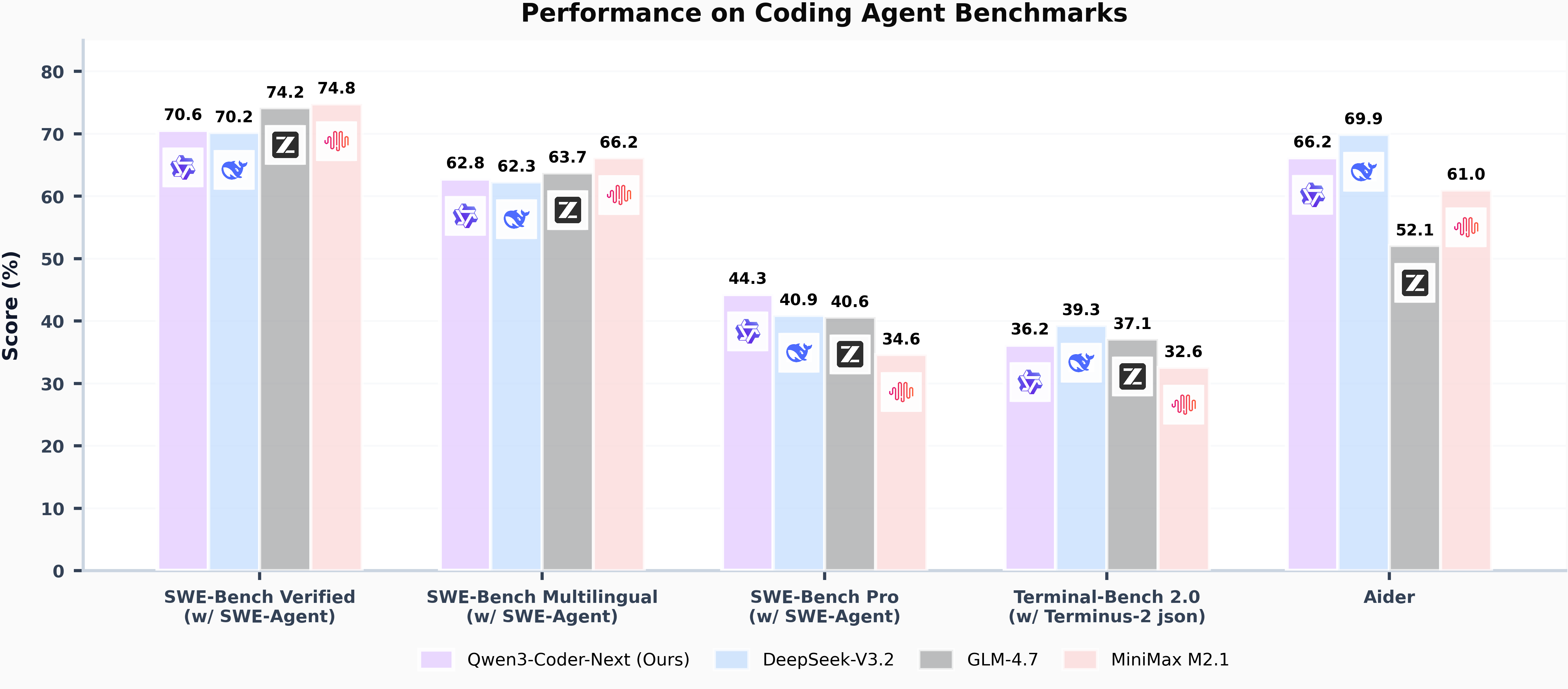

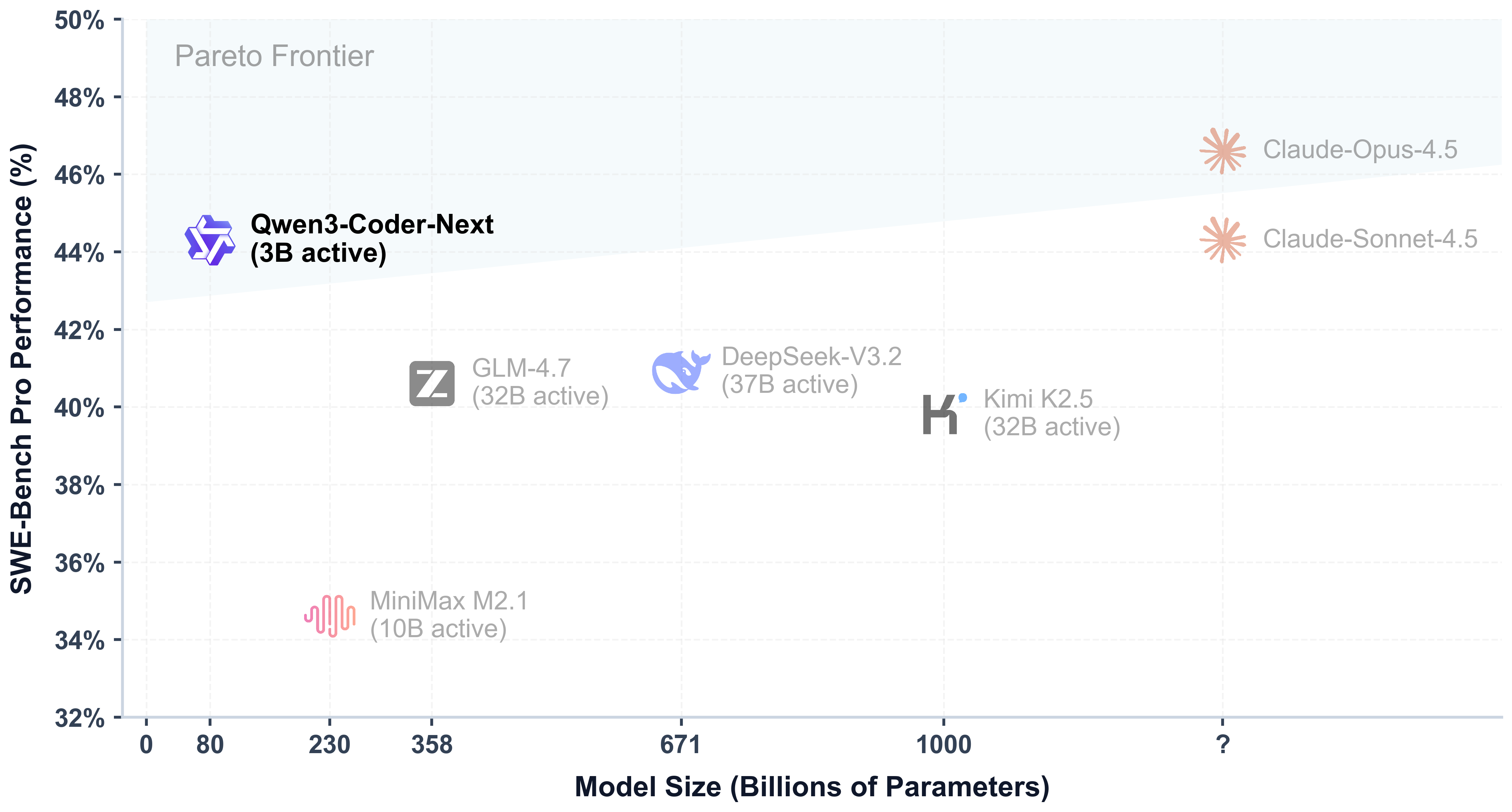

Today, we're announcing **Qwen3-Coder-Next-FP8**, an open-weight language model designed specifically for coding agents and local development. It features the following key enhancements:

|

| 16 |

+

|

| 17 |

+

- **Super Efficient with Significant Performance**: With only 3B activated parameters (80B total parameters), it achieves performance comparable to models with 10–20x more active parameters, making it highly cost-effective for agent deployment.

|

| 18 |

+

- **Advanced Agentic Capabilities**: Through an elaborate training recipe, it excels at long-horizon reasoning, complex tool usage, and recovery from execution failures, ensuring robust performance in dynamic coding tasks.

|

| 19 |

+

- **Versatile Integration with Real-World IDEs**: Its 256k context length, combined with adaptability to various scaffold templates, enables seamless integration with different CLI/IDE platforms (e.g., Claude Code, Qwen Code, Qoder, Kilo, Trae, Cline), supporting diverse development environments.

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

> [!Note]

|

| 26 |

+

> This repository contains the **FP8-quantized Qwen3-Coder-Next** model checkpoint for convenience and performance.

|

| 27 |

+

> The quantization method is "fine-grained fp8" quantization with a block size of 128.

|

| 28 |

+

> You can find more details in the `quantization_config` field in `config.json`.

|

| 29 |

+

>

|

| 30 |

+

> In addition, the experimental results presented in this model card are obtained from the original bfloat16 model prior to FP8 quantization.
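
To inspect the quantization settings mentioned in the note, the `quantization_config` field can be read directly from a local copy of `config.json`. The following is a minimal sketch: the helper names are hypothetical, and it deliberately makes no assumption about which keys the field contains.

```python
import json
from pathlib import Path


def load_quantization_config(config_path):
    """Return the `quantization_config` dict from a config.json, or None if absent."""
    config = json.loads(Path(config_path).read_text(encoding="utf-8"))
    return config.get("quantization_config")


def describe_quantization(quant_config):
    """Build a one-line summary without assuming any particular key is present."""
    if not quant_config:
        return "no quantization_config found"
    return ", ".join(f"{key}={value!r}" for key, value in sorted(quant_config.items()))
```

For example, `describe_quantization(load_quantization_config("config.json"))` summarizes the field for a `config.json` downloaded from this repository into the current directory.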

|

| 31 |

+

|

| 32 |

+

## Model Overview

|

| 33 |

+

|

| 34 |

+

**Qwen3-Coder-Next-FP8** has the following features:

|

| 35 |

+

- Type: Causal Language Models

|

| 36 |

+

- Training Stage: Pretraining & Post-training

|

| 37 |

+

- Number of Parameters: 80B in total and 3B activated

|

| 38 |

+

- Number of Parameters (Non-Embedding): 79B

|

| 39 |

+

- Hidden Dimension: 2048

|

| 40 |

+

- Number of Layers: 48

|

| 41 |

+

- Hybrid Layout: 12 \* (3 \* (Gated DeltaNet -> MoE) -> 1 \* (Gated Attention -> MoE))

|

| 42 |

+

- Gated Attention:

|

| 43 |

+

  - Number of Attention Heads: 16 for Q and 2 for KV

|

| 44 |

+

  - Head Dimension: 256

|

| 45 |

+

  - Rotary Position Embedding Dimension: 64

|

| 46 |

+

- Gated DeltaNet:

|

| 47 |

+

  - Number of Linear Attention Heads: 32 for V and 16 for QK

|

| 48 |

+

  - Head Dimension: 128

|

| 49 |

+

- Mixture of Experts:

|

| 50 |

+

  - Number of Experts: 512

|

| 51 |

+

  - Number of Activated Experts: 10

|

| 52 |

+

  - Number of Shared Experts: 1

|

| 53 |

+

  - Expert Intermediate Dimension: 512

|

| 54 |

+

- Context Length: 262,144 natively
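
The hybrid layout and attention figures above can be turned into a quick sanity check. The sketch below expands the layout into a per-layer list and estimates the KV-cache size of the gated-attention layers for one full-length sequence. It rests on assumptions that are not stated in this card: only the gated-attention layers keep a per-token KV cache, the cache is stored in bfloat16 (2 bytes per element), and the fixed-size Gated DeltaNet state is ignored.

```python
# Expand: 12 * (3 * (Gated DeltaNet -> MoE) -> 1 * (Gated Attention -> MoE))
NUM_BLOCKS = 12
DELTANET_PER_BLOCK = 3
ATTENTION_PER_BLOCK = 1

layers = []
for _ in range(NUM_BLOCKS):
    layers += ["gated_deltanet"] * DELTANET_PER_BLOCK
    layers += ["gated_attention"] * ATTENTION_PER_BLOCK

num_attention_layers = layers.count("gated_attention")

# Rough KV-cache estimate for the gated-attention layers only.
KV_HEADS = 2              # from "2 for KV"
HEAD_DIM = 256            # gated-attention head dimension
BYTES_PER_ELEMENT = 2     # assumption: bfloat16 KV cache
CONTEXT_LENGTH = 262_144  # native context length

# Factor 2 accounts for storing both keys and values.
kv_bytes_per_token = num_attention_layers * 2 * KV_HEADS * HEAD_DIM * BYTES_PER_ELEMENT
kv_gib_full_context = kv_bytes_per_token * CONTEXT_LENGTH / 2**30

print(len(layers), layers.count("gated_deltanet"), num_attention_layers)  # 48 36 12
print(kv_bytes_per_token, kv_gib_full_context)  # 24576 6.0
```

Under these assumptions, a single sequence at the full 262,144-token context needs about 6 GiB of KV cache, which is one reason a shorter context length helps when memory is tight.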

|

| 55 |

+

|

| 56 |

+

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. Meanwhile, specifying `enable_thinking=False` is no longer required.**

|

| 57 |

+

|

| 58 |

+

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwenlm.github.io/blog/qwen3-coder-next/), [GitHub](https://github.com/QwenLM/Qwen3-Coder), and [Documentation](https://qwen.readthedocs.io/en/latest/).

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

## Quickstart

|

| 62 |

+

|

| 63 |

+

We advise you to use the latest version of `transformers`.

|

| 64 |

+

|

| 65 |

+

The following code snippet illustrates how to use the model to generate content based on given inputs.

|

| 66 |

+

```python

|

| 67 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 68 |

+

|

| 69 |

+

model_name = "Qwen/Qwen3-Coder-Next-FP8"

|

| 70 |

+

|

| 71 |

+

# load the tokenizer and the model

|

| 72 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name)

|

| 73 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 74 |

+

    model_name,

|

| 75 |

+

    torch_dtype="auto",

|

| 76 |

+

    device_map="auto"

|

| 77 |

+

)

|

| 78 |

+

|

| 79 |

+

# prepare the model input

|

| 80 |

+

prompt = "Write a quick sort algorithm."

|

| 81 |

+

messages = [

|

| 82 |

+

    {"role": "user", "content": prompt}

|

| 83 |

+

]

|

| 84 |

+

text = tokenizer.apply_chat_template(

|

| 85 |

+

    messages,

|

| 86 |

+

    tokenize=False,

|

| 87 |

+

    add_generation_prompt=True,

|

| 88 |

+

)

|

| 89 |

+

model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

|

| 90 |

+

|

| 91 |

+

# conduct text completion

|

| 92 |

+

generated_ids = model.generate(

|

| 93 |

+

    **model_inputs,

|

| 94 |

+

    max_new_tokens=65536

|

| 95 |

+

)

|

| 96 |

+

output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

|

| 97 |

+

|

| 98 |

+

content = tokenizer.decode(output_ids, skip_special_tokens=True)

|

| 99 |

+

|

| 100 |

+

print("content:", content)

|

| 101 |

+

```

|

| 102 |

+

|

| 103 |

+

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**

|

| 104 |

+

|

| 105 |

+

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

|

| 106 |

+

|

| 107 |

+

## Deployment

|

| 108 |

+

|

| 109 |

+

For deployment, you can use the latest `sglang` or `vllm` to create an OpenAI-compatible API endpoint.

|

| 110 |

+

|

| 111 |

+

### SGLang

|

| 112 |

+

|

| 113 |

+

[SGLang](https://github.com/sgl-project/sglang) is a fast serving framework for large language models and vision language models.

|

| 114 |

+

SGLang can be used to launch a server with an OpenAI-compatible API service.

|

| 115 |

+

|

| 116 |

+

`sglang>=v0.5.8` is required for Qwen3-Coder-Next-FP8, which can be installed using:

|

| 117 |

+

```shell

|

| 118 |

+

pip install 'sglang[all]>=v0.5.8'

|

| 119 |

+

```

|

| 120 |

+

See [its documentation](https://docs.sglang.ai/get_started/install.html) for more details.

|

| 121 |

+

|

| 122 |

+

The following command can be used to create an API endpoint at `http://localhost:30000/v1` with a maximum context length of 256K tokens using tensor parallelism on 2 GPUs.

|

| 123 |

+

```shell

|

| 124 |

+

python -m sglang.launch_server --model Qwen/Qwen3-Coder-Next-FP8 --port 30000 --tp-size 2 --tool-call-parser qwen3_coder

|

| 125 |

+

```

|

| 126 |

+

|

| 127 |

+

> [!Note]

|

| 128 |

+

> The default context length is 256K. Consider reducing the context length to a smaller value, e.g., `32768`, if the server fails to start.

|

| 129 |

+

|

| 130 |

+

|

| 131 |

+

### vLLM

|

| 132 |

+

|

| 133 |

+

[vLLM](https://github.com/vllm-project/vllm) is a high-throughput and memory-efficient inference and serving engine for LLMs.

|

| 134 |

+

vLLM can be used to launch a server with an OpenAI-compatible API service.

|

| 135 |

+

|

| 136 |

+

`vllm>=0.15.0` is required for Qwen3-Coder-Next-FP8, which can be installed using:

|

| 137 |

+

```shell

|

| 138 |

+

pip install 'vllm>=0.15.0'

|

| 139 |

+

```

|

| 140 |

+

See [its documentation](https://docs.vllm.ai/en/stable/getting_started/installation/index.html) for more details.

|

| 141 |

+

|

| 142 |

+

The following command can be used to create an API endpoint at `http://localhost:8000/v1` with a maximum context length of 256K tokens using tensor parallelism on 2 GPUs.

|

| 143 |

+

```shell

|

| 144 |

+

vllm serve Qwen/Qwen3-Coder-Next-FP8 --port 8000 --tensor-parallel-size 2 --enable-auto-tool-choice --tool-call-parser qwen3_coder

|

| 145 |

+

```

|

| 146 |

+

|

| 147 |

+

> [!Note]

|

| 148 |

+

> The default context length is 256K. Consider reducing the context length to a smaller value, e.g., `32768`, if the server fails to start.
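
Both notes above suggest lowering the context length when the server fails to start. As a minimal sketch, the helpers below (hypothetical names) assemble the two launch commands from this section with an explicit limit, using SGLang's `--context-length` flag and vLLM's `--max-model-len` flag. The value `32768` comes from the notes; pick whatever fits your hardware.

```python
import shlex

MODEL = "Qwen/Qwen3-Coder-Next-FP8"


def sglang_command(context_length=32768, tp_size=2, port=30000):
    """Build the SGLang launch command with a reduced context length."""
    return [
        "python", "-m", "sglang.launch_server",
        "--model", MODEL,
        "--port", str(port),
        "--tp-size", str(tp_size),
        "--tool-call-parser", "qwen3_coder",
        "--context-length", str(context_length),
    ]


def vllm_command(max_model_len=32768, tensor_parallel_size=2, port=8000):
    """Build the vLLM launch command with a reduced context length."""
    return [
        "vllm", "serve", MODEL,
        "--port", str(port),
        "--tensor-parallel-size", str(tensor_parallel_size),
        "--enable-auto-tool-choice",
        "--tool-call-parser", "qwen3_coder",
        "--max-model-len", str(max_model_len),
    ]


# Print shell-ready command lines; pass the lists to subprocess.run to launch.
print(shlex.join(sglang_command()))
print(shlex.join(vllm_command()))
```

Building the command as a list keeps each flag and value separate, so the same helper can feed `subprocess.run` directly or be printed for copy-pasting into a shell.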

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

## Agentic Coding

|

| 152 |

+

|

| 153 |

+

Qwen3-Coder-Next-FP8 excels at tool calling.

|

| 154 |

+

|

| 155 |

+

You can define or use any tools, as in the following example.

|

| 156 |

+

```python

|

| 157 |

+

# Your tool implementation

|

| 158 |

+

def square_the_number(input_num: float) -> float:

|

| 159 |

+

    return input_num ** 2

|

| 160 |

+

|

| 161 |

+

# Define Tools

|

| 162 |

+

tools = [

|

| 163 |

+

    {

|

| 164 |

+

        "type": "function",

|

| 165 |

+

        "function": {

|

| 166 |

+

            "name": "square_the_number",

|

| 167 |

+

            "description": "output the square of the number.",

|

| 168 |

+

            "parameters": {

|

| 169 |

+

                "type": "object",

|

| 170 |

+

                "required": ["input_num"],

|

| 171 |

+

                "properties": {

|

| 172 |

+

                    "input_num": {

|

| 173 |

+

                        "type": "number",

|

| 174 |

+

                        "description": "input_num is a number that will be squared"

|

| 175 |

+

                    }

|

| 176 |

+

                },

|

| 177 |

+

            }

|

| 178 |

+

        }

|

| 179 |

+

    }

|

| 180 |

+

]

|

| 181 |

+

|

| 182 |

+

from openai import OpenAI

|

| 183 |

+

# Define LLM

|

| 184 |

+

client = OpenAI(

|

| 185 |

+

    # Use a custom endpoint compatible with OpenAI API

|

| 186 |

+

    base_url='http://localhost:8000/v1',  # api_base

|

| 187 |

+

    api_key="EMPTY"

|

| 188 |

+

)

|

| 189 |

+

|

| 190 |

+

messages = [{'role': 'user', 'content': 'square the number 1024'}]

|

| 191 |

+

|

| 192 |

+

completion = client.chat.completions.create(

|

| 193 |

+

    messages=messages,

|

| 194 |

+

    model="Qwen/Qwen3-Coder-Next-FP8",

|

| 195 |

+

    max_tokens=65536,

|

| 196 |

+

    tools=tools,

|

| 197 |

+

)

|

| 198 |

+

|

| 199 |

+

print(completion.choices[0])

|

| 200 |

+

```
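
The example above stops at printing the model's response. When the response contains tool calls, the caller still has to run the tool and send the result back. The sketch below shows one way to do that step; `TOOL_REGISTRY`, `run_tool_call`, and `tool_message` are hypothetical helpers, and the tool function is redefined here with a parameter named `input_num` so that it matches the property name in the tool schema.

```python
import json


def square_the_number(input_num: float) -> float:
    return input_num ** 2


# Maps tool names from the schema to local Python callables.
TOOL_REGISTRY = {"square_the_number": square_the_number}


def run_tool_call(name, arguments_json, registry=TOOL_REGISTRY):
    """Execute one tool call and return its result as a JSON string."""
    if name not in registry:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        arguments = json.loads(arguments_json)
        result = registry[name](**arguments)
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed JSON or arguments that do not fit the function signature.
        return json.dumps({"error": str(exc)})
    return json.dumps({"result": result})


def tool_message(tool_call_id, name, arguments_json):
    """Wrap a tool result as a `tool` role message for the next request."""
    return {
        "role": "tool",
        "tool_call_id": tool_call_id,
        "content": run_tool_call(name, arguments_json),
    }


print(tool_message("call_0", "square_the_number", '{"input_num": 1024}'))
```

With the OpenAI client, each entry in `completion.choices[0].message.tool_calls` provides `id`, `function.name`, and `function.arguments` (a JSON string). Append the assistant message and one tool message per call to `messages`, then call `client.chat.completions.create` again to get the final answer.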

|

| 201 |

+

|

| 202 |

+

## Best Practices

|

| 203 |

+

|

| 204 |

+

To achieve optimal performance, we recommend the following sampling parameters: `temperature=1.0`, `top_p=0.95`, `top_k=40`.
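
When calling an OpenAI-compatible endpoint, `temperature` and `top_p` are standard request arguments, but the OpenAI Python client has no `top_k` argument. A common workaround is to send it through the client's `extra_body` parameter. The sketch below uses a hypothetical helper and assumes the serving framework accepts a `top_k` field in the request body.

```python
# Recommended sampling parameters from this section.
RECOMMENDED_SAMPLING = {"temperature": 1.0, "top_p": 0.95, "top_k": 40}


def openai_sampling_kwargs(sampling=RECOMMENDED_SAMPLING):
    """Split sampling settings into standard arguments and `extra_body` extras."""
    standard = {k: v for k, v in sampling.items() if k in ("temperature", "top_p")}
    extras = {k: v for k, v in sampling.items() if k not in standard}
    if extras:
        # Non-standard fields are forwarded verbatim in the request body.
        standard["extra_body"] = extras
    return standard


print(openai_sampling_kwargs())
# {'temperature': 1.0, 'top_p': 0.95, 'extra_body': {'top_k': 40}}
```

The result can be unpacked into a request, for example `client.chat.completions.create(model=..., messages=messages, **openai_sampling_kwargs())`. With `transformers`, the same three values can be passed to `model.generate` together with `do_sample=True`.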

|

| 205 |

+

|

| 206 |

+

|

| 207 |

+

## Citation

|

| 208 |

+

|

| 209 |

+

If you find our work helpful, feel free to cite us.

|

| 210 |

+

|

| 211 |

+

```

|

| 212 |

+

@misc{qwen3codernexttechnicalreport,

|

| 213 |

+

title={Qwen3-Coder-Next Technical Report},

|

| 214 |

+

author={Qwen Team},

|

| 215 |

+

year={2026},

|

| 216 |

+

eprint={},

|

| 217 |

+

archivePrefix={arXiv},

|

| 218 |

+

primaryClass={cs.CL},

|

| 219 |

+

url={https://arxiv.org/abs/},

|

| 220 |

+

}

|

| 221 |

+

```

|