How to use Sampson2022/test2 with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("image-classification", model="Sampson2022/test2")
pipe("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/hub/parrots.png")

# Load model directly
from transformers import AutoImageProcessor, AutoModelForImageClassification

processor = AutoImageProcessor.from_pretrained("Sampson2022/test2")
model = AutoModelForImageClassification.from_pretrained("Sampson2022/test2")
```

Commit c74cc6e (parent fe2229c): Create README.md

README.md (new file, 66 lines)


| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

tags:

|

| 4 |

+

- vision

|

| 5 |

+

- image-classification

|

| 6 |

+

datasets:

|

| 7 |

+

- imagenet-1k

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

# ResNet-50 v1.5

|

| 11 |

+

|

| 12 |

+

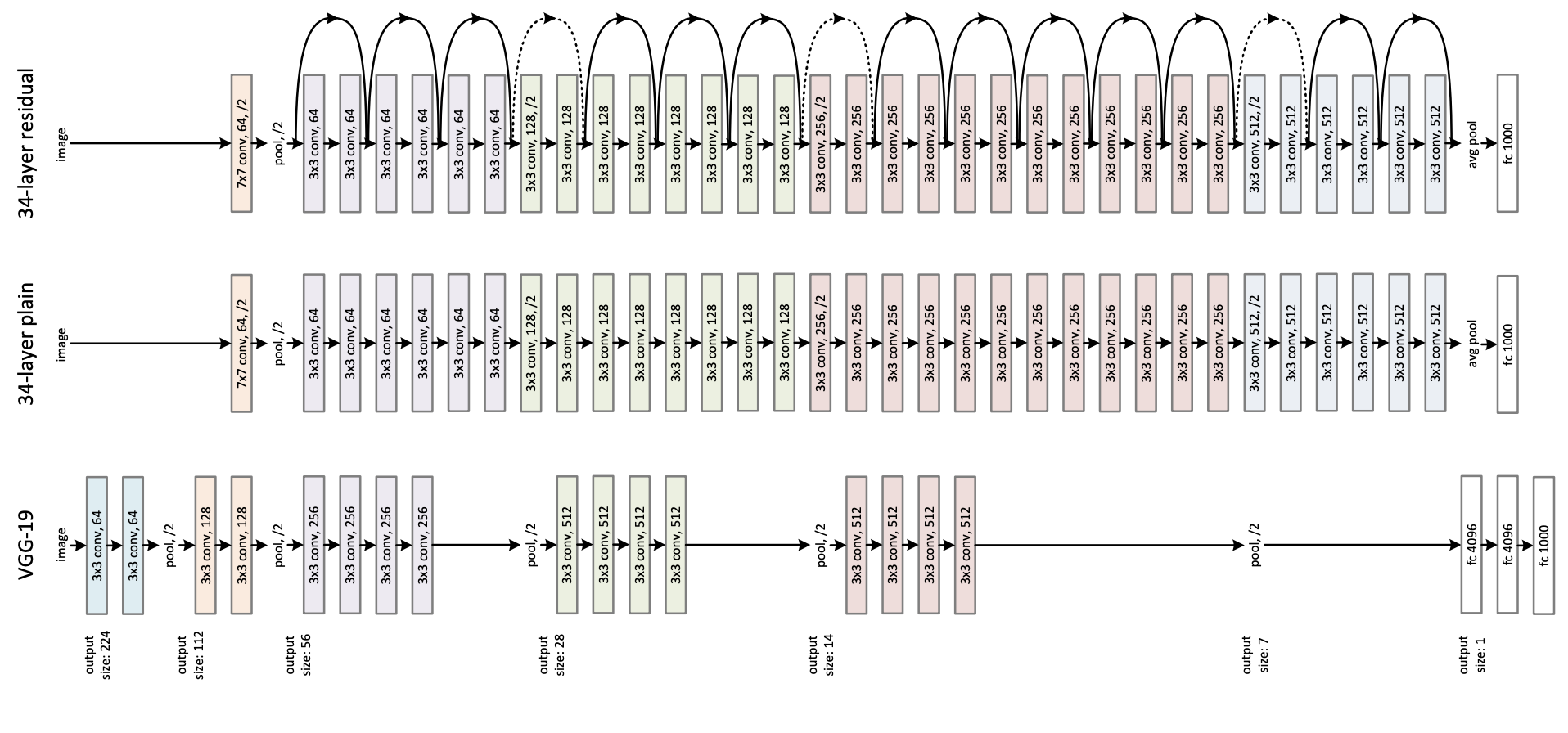

ResNet model pre-trained on ImageNet-1k at resolution 224x224. It was introduced in the paper [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385) by He et al.
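
As a rough illustration of how an arbitrary image is brought to the 224x224 input resolution, the sketch below assumes the common ImageNet evaluation recipe: resize the shorter side to 224 / 0.875 = 256 pixels, center-crop a 224x224 window, then normalize each channel with the usual ImageNet mean and standard deviation. The crop ratio and statistics are assumptions, not values read from this repository (the checkpoint's `preprocessor_config.json` is authoritative), and the helper names are hypothetical.

```python
CROP_SIZE = 224                         # input resolution stated in this card
CROP_PCT = 0.875                        # assumption: common ImageNet eval ratio
IMAGENET_MEAN = (0.485, 0.456, 0.406)   # assumption: standard ImageNet statistics
IMAGENET_STD = (0.229, 0.224, 0.225)


def resize_shorter_side(width, height, target):
    """New (width, height) with the shorter side equal to `target`, aspect ratio kept."""
    if width <= height:
        return target, int(target * height / width)
    return int(target * width / height), target


def center_crop_box(width, height, crop):
    """(left, top, right, bottom) of a centered crop x crop window."""
    left = (width - crop) // 2
    top = (height - crop) // 2
    return left, top, left + crop, top + crop


def normalize_pixel(rgb):
    """Map 8-bit RGB values to per-channel normalized floats."""
    return tuple((v / 255 - m) / s for v, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD))


resize_target = int(CROP_SIZE / CROP_PCT)            # shorter side before cropping
w, h = resize_shorter_side(640, 480, resize_target)  # hypothetical 640x480 input
print((w, h), center_crop_box(w, h, CROP_SIZE))
print(normalize_pixel((255, 255, 255)))
```

Cropping after a slightly larger resize keeps the aspect ratio intact and discards only a thin border, rather than squashing the whole image into a square.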

Disclaimer: The team releasing ResNet did not write a model card for this model, so this model card has been written by the Hugging Face team.

## Model description

ResNet (Residual Network) is a convolutional neural network that popularized residual learning and skip connections, which make it possible to train much deeper models.
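
In the notation of He et al., a residual block with input $\mathbf{x}$ learns a residual function $\mathcal{F}$ (for ResNet-50, a bottleneck stack of 1x1, 3x3 and 1x1 convolutions with weights $\{W_i\}$) and adds the input back through an identity skip connection. When the shortcut has to change dimensions, the paper instead uses a linear projection $W_s$, implemented with 1x1 convolutions:

$$
\mathbf{y} = \mathcal{F}(\mathbf{x}, \{W_i\}) + \mathbf{x}
\qquad\text{or}\qquad
\mathbf{y} = \mathcal{F}(\mathbf{x}, \{W_i\}) + W_s\,\mathbf{x}
$$

Because the identity path adds no parameters, the block only has to learn the residual $\mathcal{F}(\mathbf{x}) = \mathcal{H}(\mathbf{x}) - \mathbf{x}$ of the desired mapping $\mathcal{H}$, which the paper argues is easier to optimize than learning $\mathcal{H}$ directly.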

This is ResNet v1.5, which differs from the original model (v1) in the bottleneck blocks that require downsampling: v1 has stride = 2 in the first 1x1 convolution, whereas v1.5 has stride = 2 in the 3x3 convolution. According to [Nvidia](https://catalog.ngc.nvidia.com/orgs/nvidia/resources/resnet_50_v1_5_for_pytorch), this difference makes ResNet-50 v1.5 slightly more accurate than v1 (about 0.5% top-1) at a small performance cost (about 5% fewer images/sec).
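
The stride placement can be illustrated with a small pure-Python sketch that traces, along one spatial dimension, which input positions of a downsampling bottleneck block actually reach its output. It models only the main (residual) path and ignores the projection shortcut; the names `BOTTLENECK` and `trace` are hypothetical. The example size 56 is the spatial size entering the first downsampling stage for a 224x224 input. Both variants halve the spatial size, but with stride 2 in the 1x1 convolution, v1 never reads three quarters of the input pixels on this path. The sketch shows the structural difference only; it does not by itself explain the accuracy numbers.

```python
def conv_out_size(n, kernel, stride, padding):
    """Output length of a convolution along one spatial dimension."""
    return (n + 2 * padding - kernel) // stride + 1


# (kernel, stride, padding) for the main path of a downsampling bottleneck:
# 1x1 reduce -> 3x3 -> 1x1 expand.
BOTTLENECK = {
    "v1":   [(1, 2, 0), (3, 1, 1), (1, 1, 0)],  # stride 2 in the first 1x1 conv
    "v1.5": [(1, 1, 0), (3, 2, 1), (1, 1, 0)],  # stride 2 in the 3x3 conv
}


def trace(n, layers):
    """Return (output length, set of input positions that reach the output)."""
    sizes = [n]
    for kernel, stride, padding in layers:
        sizes.append(conv_out_size(sizes[-1], kernel, stride, padding))
    # Walk backwards: output o of a layer reads inputs
    # o*stride - padding ... o*stride - padding + kernel - 1 (clipped to the input).
    reached = set(range(sizes[-1]))
    for (kernel, stride, padding), n_in in zip(reversed(layers), reversed(sizes[:-1])):
        reached = {
            i
            for o in reached
            for i in range(o * stride - padding, o * stride - padding + kernel)
            if 0 <= i < n_in
        }
    return sizes[-1], reached


n = 56
for version, layers in BOTTLENECK.items():
    out, reached = trace(n, layers)
    # Square kernels and strides: the 2-D fraction is the 1-D fraction squared.
    frac_2d = (len(reached) / n) ** 2
    print(f"{version}: {n} -> {out}, fraction of input pixels used: {frac_2d:.2f}")
```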

## Intended uses & limitations

You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=resnet) to look for fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes:

```python
from transformers import AutoImageProcessor, ResNetForImageClassification
import torch
from datasets import load_dataset

dataset = load_dataset("huggingface/cats-image")
image = dataset["test"]["image"][0]

# The image processor resizes, crops and normalizes the image as the model expects
image_processor = AutoImageProcessor.from_pretrained("microsoft/resnet-50")
model = ResNetForImageClassification.from_pretrained("microsoft/resnet-50")

inputs = image_processor(image, return_tensors="pt")

with torch.no_grad():
    logits = model(**inputs).logits

# model predicts one of the 1000 ImageNet classes
predicted_label = logits.argmax(-1).item()
print(model.config.id2label[predicted_label])
```
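
To see more than the single best class, the logits can be turned into probabilities with a softmax and ranked; in PyTorch this is `logits.softmax(-1).topk(5)`. The framework-free sketch below spells out the same computation on a plain list so it runs without downloading the model. `softmax`, `top_k`, and the toy logits and labels are hypothetical illustrations; with the snippet above you would pass `logits[0].tolist()` and `model.config.id2label` instead.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits, id2label, k=5):
    """Return the k most probable (label, probability) pairs, highest first."""
    probs = softmax(logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(id2label[i], probs[i]) for i in ranked[:k]]


# Hypothetical toy values standing in for real model outputs.
toy_logits = [2.0, 0.5, 3.0, -1.0]
toy_labels = {i: f"label_{i}" for i in range(len(toy_logits))}
for label, p in top_k(toy_logits, toy_labels, k=2):
    print(f"{label}: {p:.3f}")
```

Subtracting the maximum logit before exponentiating leaves the probabilities unchanged but avoids overflow for large logits.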

For more code examples, refer to the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/resnet).

### BibTeX entry and citation info

```bibtex
@inproceedings{he2016deep,
  title={Deep residual learning for image recognition},
  author={He, Kaiming and Zhang, Xiangyu and Ren, Shaoqing and Sun, Jian},
  booktitle={Proceedings of the IEEE conference on computer vision and pattern recognition},
  pages={770--778},
  year={2016}
}
```