Video-to-Video

Diffusers

Safetensors

ti2v

motion-transfer

comfyui

video-generation

image-to-video

video-edit

Instructions to use SandwichZ/Wan2.2-Fun-5B-FLEXAM with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Diffusers

How to use SandwichZ/Wan2.2-Fun-5B-FLEXAM with Diffusers:

pip install -U diffusers transformers accelerate

    import torch
    from diffusers import DiffusionPipeline

    # switch to "mps" for apple devices
    pipe = DiffusionPipeline.from_pretrained("SandwichZ/Wan2.2-Fun-5B-FLEXAM", dtype=torch.bfloat16, device_map="cuda")

    prompt = "Astronaut in a jungle, cold color palette, muted colors, detailed, 8k"
    image = pipe(prompt).images[0]

- Notebooks

- Google Colab

- Kaggle

Delete README_en.md

README_en.md (DELETED, +0 -352)

@@ -1,352 +0,0 @@

---
license: apache-2.0
language:
- en
- zh
pipeline_tag: text-to-video
library_name: diffusers
tags:
- video
- video-generation
---

# Wan-Fun

😊 Welcome!

[Hugging Face Space](https://huggingface.co/spaces/alibaba-pai/Wan2.1-Fun-1.3B-InP)

[GitHub](https://github.com/aigc-apps/VideoX-Fun)

[English](./README_en.md) | [简体中文](./README.md)

# Table of Contents
- [Table of Contents](#table-of-contents)
- [Model zoo](#model-zoo)
- [Video Result](#video-result)
- [Quick Start](#quick-start)
- [How to use](#how-to-use)
- [Reference](#reference)
- [License](#license)

# Model zoo

| Name | Storage Size | Hugging Face | Model Scope | Description |
|--|--|--|--|--|
| Wan2.2-Fun-A14B-InP | 64.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.2-Fun-A14B-InP) | [😄Link](https://modelscope.cn/models/PAI/Wan2.2-Fun-A14B-InP) | Wan2.2-Fun-14B text-to-video generation weights, trained at multiple resolutions, supports start-end image prediction. |
| Wan2.2-Fun-A14B-Control | 64.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.2-Fun-A14B-Control) | [😄Link](https://modelscope.cn/models/PAI/Wan2.2-Fun-A14B-Control) | Wan2.2-Fun-14B video control weights, supporting various control conditions such as Canny, Depth, Pose, MLSD, etc., and trajectory control. Supports multi-resolution (512, 768, 1024) video prediction at 81 frames, trained at 16 frames per second, with multilingual prediction support. |
| Wan2.2-Fun-A14B-Control-Camera | 64.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.2-Fun-A14B-Control-Camera) | [😄Link](https://modelscope.cn/models/PAI/Wan2.2-Fun-A14B-Control-Camera) | Wan2.2-Fun-14B camera lens control weights. Supports multi-resolution (512, 768, 1024) video prediction, trained with 81 frames at 16 FPS, supports multilingual prediction. |
| Wan2.2-Fun-5B-InP | 23.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.2-Fun-5B-InP) | [😄Link](https://modelscope.cn/models/PAI/Wan2.2-Fun-5B-InP) | Wan2.2-Fun-5B text-to-video weights trained at 121 frames, 24 FPS, supporting first/last frame prediction. |
| Wan2.2-Fun-5B-Control | 23.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.2-Fun-5B-Control) | [😄Link](https://modelscope.cn/models/PAI/Wan2.2-Fun-5B-Control) | Wan2.2-Fun-5B video control weights, supporting control conditions like Canny, Depth, Pose, MLSD, and trajectory control. Trained at 121 frames, 24 FPS, with multilingual prediction support. |
| Wan2.2-Fun-5B-Control-Camera | 23.0 GB | [🤗Link](https://huggingface.co/alibaba-pai/Wan2.2-Fun-5B-Control-Camera) | [😄Link](https://modelscope.cn/models/PAI/Wan2.2-Fun-5B-Control-Camera) | Wan2.2-Fun-5B camera lens control weights. Trained at 121 frames, 24 FPS, with multilingual prediction support. |
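
The frame counts and frame rates quoted in the table translate into clip lengths by simple division. The following is a minimal sketch of that arithmetic; the function name `clip_seconds` is illustrative and not part of VideoX-Fun.

```python
def clip_seconds(num_frames: int, fps: float) -> float:
    """Return the playback length in seconds of a clip with num_frames frames at fps."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return num_frames / fps


# Frame counts and frame rates are the ones quoted in the model table.
a14b_seconds = clip_seconds(81, 16)     # A14B control models: 81 frames at 16 FPS
five_b_seconds = clip_seconds(121, 24)  # 5B models: 121 frames at 24 FPS

print(f"A14B: {a14b_seconds:.2f} s, 5B: {five_b_seconds:.2f} s")
```

Both configurations come out at roughly five seconds of video, so the 5B models trade a higher frame rate for more frames rather than for a longer clip.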

|

| 43 |

-

|

| 44 |

-

# Video Result

|

| 45 |

-

|

| 46 |

-

### Wan2.2-Fun-A14B-InP

|

| 47 |

-

|

| 48 |

-

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 49 |

-

<tr>

|

| 50 |

-

<td>

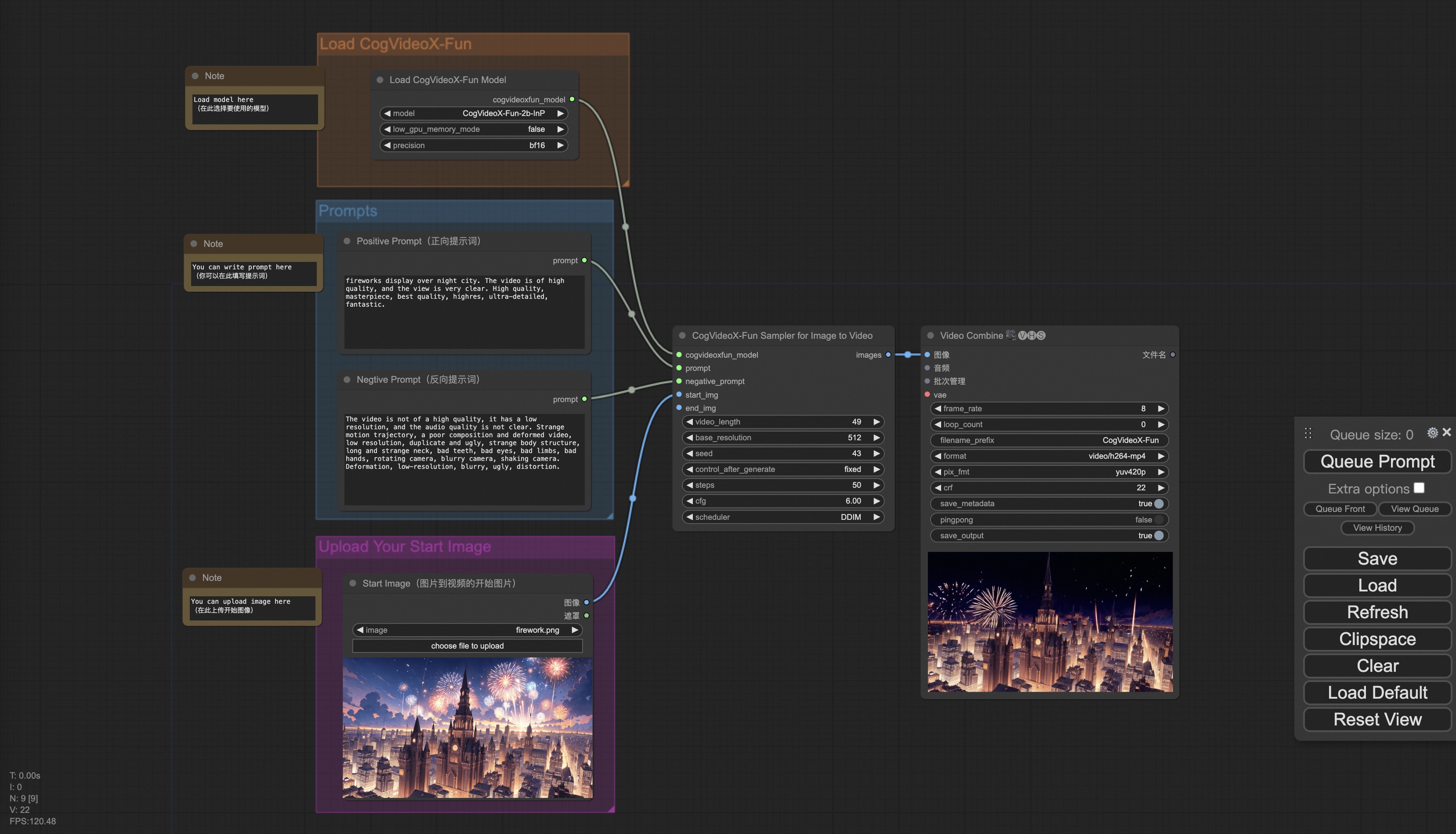

|

| 51 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_1.mp4" width="100%" controls autoplay loop></video>

|

| 52 |

-

</td>

|

| 53 |

-

<td>

|

| 54 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_2.mp4" width="100%" controls autoplay loop></video>

|

| 55 |

-

</td>

|

| 56 |

-

<td>

|

| 57 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_3.mp4" width="100%" controls autoplay loop></video>

|

| 58 |

-

</td>

|

| 59 |

-

<td>

|

| 60 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_4.mp4" width="100%" controls autoplay loop></video>

|

| 61 |

-

</td>

|

| 62 |

-

</tr>

|

| 63 |

-

</table>

|

| 64 |

-

|

| 65 |

-

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">

|

| 66 |

-

<tr>

|

| 67 |

-

<td>

|

| 68 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_5.mp4" width="100%" controls autoplay loop></video>

|

| 69 |

-

</td>

|

| 70 |

-

<td>

|

| 71 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_6.mp4" width="100%" controls autoplay loop></video>

|

| 72 |

-

</td>

|

| 73 |

-

<td>

|

| 74 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_7.mp4" width="100%" controls autoplay loop></video>

|

| 75 |

-

</td>

|

| 76 |

-

<td>

|

| 77 |

-

<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/inp_8.mp4" width="100%" controls autoplay loop></video>

|

| 78 |

-

</td>

|

| 79 |

-

</tr>

|

| 80 |

-

</table>

### Wan2.2-Fun-A14B-Control

Generic Control Video + Reference Image:
<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
Reference Image
</td>
<td>
Control Video
</td>
<td>
Wan2.2-Fun-14B-Control
</td>
</tr>
<tr>
<td>
<img src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/8.png" width="100%">
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/pose.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/14b_ref.mp4" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

Generic Control Video (Canny, Pose, Depth, etc.) and Trajectory Control:
<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/guiji.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/guiji_out.mp4" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/pose.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/canny.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/depth.mp4" width="100%" controls autoplay loop></video>
</td>
</tr>
<tr>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/pose_out.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/canny_out.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/depth_out.mp4" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

### Wan2.2-Fun-A14B-Control-Camera

<table border="0" style="width: 100%; text-align: left; margin-top: 20px;">
<tr>
<td>
Pan Up
</td>
<td>
Pan Left
</td>
<td>
Pan Right
</td>
<td>
Zoom In
</td>
</tr>
<tr>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Pan_Up.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Pan_Left.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Pan_Right.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Zoom_In.mp4" width="100%" controls autoplay loop></video>
</td>
</tr>
<tr>
<td>
Pan Down
</td>
<td>
Pan Up + Pan Left
</td>
<td>
Pan Up + Pan Right
</td>
<td>
Zoom Out
</td>
</tr>
<tr>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Pan_Down.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Pan_UL.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Pan_UR.mp4" width="100%" controls autoplay loop></video>
</td>
<td>
<video src="https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/wan_fun/asset_Wan2_2/v1.0/Zoom_Out.mp4" width="100%" controls autoplay loop></video>
</td>
</tr>
</table>

# Quick Start
### 1. Cloud usage: AliyunDSW/Docker
#### a. From AliyunDSW
DSW offers free GPU time. Each user can apply for it once, and it is valid for 3 months after applying.

Aliyun provides free GPU time through [Freetier](https://free.aliyun.com/?product=9602825&crowd=enterprise&spm=5176.28055625.J_5831864660.1.e939154aRgha4e&scm=20140722.M_9974135.P_110.MO_1806-ID_9974135-MID_9974135-CID_30683-ST_8512-V_1). Claim it and use it in Aliyun PAI-DSW to start CogVideoX-Fun within 5 minutes!

[DSW Notebook](https://gallery.pai-ml.com/#/preview/deepLearning/cv/cogvideox_fun)

#### b. From ComfyUI
For our ComfyUI workflow, see the [ComfyUI README](comfyui/README.md) for details.

#### c. From docker
If you are using Docker, make sure that the GPU driver and CUDA environment are installed correctly on your machine.

Then run the following commands:
```
# pull the image
docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

# start a container from the image
docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:cogvideox_fun

# clone the code
git clone https://github.com/aigc-apps/VideoX-Fun.git

# enter the VideoX-Fun directory
cd VideoX-Fun

# create the weight folders (-p also creates models/ if it is missing)
mkdir -p models/Diffusion_Transformer
mkdir -p models/Personalized_Model

# Use the Hugging Face link or the ModelScope link to download the model.
# CogVideoX-Fun
# https://huggingface.co/alibaba-pai/CogVideoX-Fun-V1.1-5b-InP
# https://modelscope.cn/models/PAI/CogVideoX-Fun-V1.1-5b-InP

# Wan
# https://huggingface.co/alibaba-pai/Wan2.1-Fun-V1.1-14B-InP
# https://modelscope.cn/models/PAI/Wan2.1-Fun-V1.1-14B-InP
# https://huggingface.co/alibaba-pai/Wan2.2-Fun-A14B-InP
# https://modelscope.cn/models/PAI/Wan2.2-Fun-A14B-InP
```

### 2. Local install: Environment Check/Downloading/Installation
#### a. Environment Check
We have verified that this repo runs in the following environments:

Details for Windows:
- OS: Windows 10
- python: python3.10 & python3.11
- pytorch: torch2.2.0
- CUDA: 11.8 & 12.1
- CUDNN: 8+
- GPU: Nvidia-3060 12G & Nvidia-3090 24G

Details for Linux:
- OS: Ubuntu 20.04, CentOS
- python: python3.10 & python3.11
- pytorch: torch2.2.0
- CUDA: 11.8 & 12.1
- CUDNN: 8+
- GPU: Nvidia-V100 16G & Nvidia-A10 24G & Nvidia-A100 40G & Nvidia-A100 80G

About 60GB of free disk space is needed to store the weights. Please check before downloading!
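
The checks listed above can be scripted before downloading anything. The sketch below is illustrative only: the helper names are made up for this example, the 60 GB threshold and the tested Python versions are taken from the lists above, and the CUDA check assumes PyTorch is the framework in use.

```python
import shutil
import sys

REQUIRED_FREE_GB = 60                # disk space figure quoted above
TESTED_PYTHONS = {(3, 10), (3, 11)}  # versions listed as verified


def free_gb(path: str = ".") -> float:
    """Free space on the filesystem holding `path`, in GiB."""
    return shutil.disk_usage(path).free / 1024**3


def python_is_tested(version=None) -> bool:
    """True if the (major, minor) version is one of the verified ones."""
    v = sys.version_info if version is None else version
    return (v[0], v[1]) in TESTED_PYTHONS


def check_environment(path: str = ".") -> list:
    """Return human-readable warnings; an empty list means no problem was found."""
    problems = []
    if not python_is_tested():
        problems.append("Python %d.%d is not one of the verified versions" % sys.version_info[:2])
    if free_gb(path) < REQUIRED_FREE_GB:
        problems.append("less than %d GB free under %r" % (REQUIRED_FREE_GB, path))
    try:
        import torch  # optional: only needed for the CUDA check
        if not torch.cuda.is_available():
            problems.append("PyTorch cannot see a CUDA device")
    except ImportError:
        problems.append("PyTorch is not installed")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("warning:", problem)
```

A warning here does not mean the repo cannot run, only that the setup differs from the environments listed as verified.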

#### b. Weights
Place the [weights](#model-zoo) in the paths shown below:

**Via ComfyUI**:
Put the models into the ComfyUI weights folder `ComfyUI/models/Fun_Models/`:
```
📦 ComfyUI/
├── 📂 models/
│   └── 📂 Fun_Models/
│       ├── 📂 CogVideoX-Fun-V1.1-2b-InP/
│       ├── 📂 CogVideoX-Fun-V1.1-5b-InP/
│       ├── 📂 Wan2.1-Fun-14B-InP/
│       └── 📂 Wan2.1-Fun-1.3B-InP/
```

**Running the repo's own Python files or web UI**:
```
📦 models/
├── 📂 Diffusion_Transformer/
│   ├── 📂 CogVideoX-Fun-V1.1-2b-InP/
│   ├── 📂 CogVideoX-Fun-V1.1-5b-InP/
│   ├── 📂 Wan2.1-Fun-14B-InP/
│   └── 📂 Wan2.1-Fun-1.3B-InP/
├── 📂 Personalized_Model/
│   └── your trained transformer model / your trained LoRA model (for UI load)
```
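
One way to fill the `models/Diffusion_Transformer/` layout shown above is to download a Hub repository straight into it. This is a hedged sketch, not the project's own download script: `weights_dir` and `download_weights` are names invented for this example, and it assumes the `huggingface_hub` package is installed and that its `snapshot_download` function is used for the transfer.

```python
from pathlib import Path

MODELS_ROOT = Path("models")  # same root folder as in the tree above


def weights_dir(repo_id: str, root: Path = MODELS_ROOT) -> Path:
    """Map a Hub repo id such as 'alibaba-pai/Wan2.2-Fun-5B-InP' to its
    folder under Diffusion_Transformer/."""
    model_name = repo_id.split("/")[-1]
    return root / "Diffusion_Transformer" / model_name


def download_weights(repo_id: str, root: Path = MODELS_ROOT) -> Path:
    """Download every file of `repo_id` into the expected folder and return it."""
    # Imported lazily so the path helper works without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    target = weights_dir(repo_id, root)
    target.mkdir(parents=True, exist_ok=True)
    # Folder for user-trained transformer or LoRA weights, as in the tree above.
    (root / "Personalized_Model").mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=repo_id, local_dir=target)
    return target


if __name__ == "__main__":
    # Prints the target folder only; call download_weights(...) to fetch the files.
    print(weights_dir("alibaba-pai/Wan2.2-Fun-5B-InP"))
```

The repo id in the usage line comes from the model zoo table; any other entry from that table can be substituted.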

# How to Use

<h3 id="video-gen">1. Generation</h3>

#### a. GPU Memory Optimization
Because Wan2.1 has a very large number of parameters, memory optimization is needed to fit consumer-grade GPUs. Each prediction file provides a `GPU_memory_mode` setting with three options: `model_cpu_offload`, `model_cpu_offload_and_qfloat8`, and `sequential_cpu_offload`. The same options apply to CogVideoX-Fun generation.

- `model_cpu_offload`: The entire model is moved to the CPU after use, saving some GPU memory.
- `model_cpu_offload_and_qfloat8`: The entire model is moved to the CPU after use, and the transformer model is quantized to float8, saving more GPU memory.
- `sequential_cpu_offload`: Each layer of the model is moved to the CPU after use. It is slower but saves a significant amount of GPU memory.

`qfloat8` may slightly reduce model performance but saves more GPU memory. If you have sufficient GPU memory, it is recommended to use `model_cpu_offload`.
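
To make the three modes concrete, the sketch below shows how a `GPU_memory_mode` string could be dispatched onto a pipeline. It is an illustration under assumptions, not the code in the prediction files: it assumes the pipeline exposes the standard Diffusers methods `enable_model_cpu_offload()` and `enable_sequential_cpu_offload()`, and `quantize_transformer` is a hypothetical callback standing in for whatever float8 conversion the repo performs.

```python
VALID_MODES = (
    "model_cpu_offload",
    "model_cpu_offload_and_qfloat8",
    "sequential_cpu_offload",
)


def apply_memory_mode(pipe, mode: str, quantize_transformer=None):
    """Apply one of the three memory modes to a Diffusers-style pipeline.

    `quantize_transformer` is a hypothetical callable that converts the
    transformer weights to float8; the real conversion is not reproduced here.
    """
    if mode not in VALID_MODES:
        raise ValueError(f"unknown GPU_memory_mode: {mode!r}")

    if mode == "sequential_cpu_offload":
        # Layer-by-layer offload: slowest, lowest GPU memory use.
        pipe.enable_sequential_cpu_offload()
        return pipe

    if mode == "model_cpu_offload_and_qfloat8":
        if quantize_transformer is None:
            raise ValueError("the float8 mode needs a quantization callback")
        # Quantize first, then offload whole sub-models as usual.
        quantize_transformer(pipe.transformer)

    # Whole-model offload: each sub-model returns to the CPU after use.
    pipe.enable_model_cpu_offload()
    return pipe
```

Keeping the quantization step behind a callback leaves the dispatch logic independent of any particular float8 implementation.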

#### b. Using ComfyUI
For details, refer to [ComfyUI README](https://github.com/aigc-apps/VideoX-Fun/tree/main/comfyui).

#### c. Running Python Files
- **Step 1**: Download the corresponding [weights](#model-zoo) and place them in the `models` folder.
- **Step 2**: Use different files for prediction based on the weights and prediction goals. This library currently supports CogVideoX-Fun, Wan2.1, Wan2.1-Fun and Wan2.2. Different models are distinguished by folder names under the `examples` folder, and their supported features vary. Use them accordingly. Below is an example using CogVideoX-Fun:
  - **Text-to-Video**:
    - Modify `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_t2v.py`.
    - Run the file `examples/cogvideox_fun/predict_t2v.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos`.
  - **Image-to-Video**:
    - Modify `validation_image_start`, `validation_image_end`, `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_i2v.py`.
    - `validation_image_start` is the starting image of the video, and `validation_image_end` is the ending image of the video.
    - Run the file `examples/cogvideox_fun/predict_i2v.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos_i2v`.
  - **Video-to-Video**:
    - Modify `validation_video`, `validation_image_end`, `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_v2v.py`.
    - `validation_video` is the reference video for video-to-video generation. You can use the following demo video: [Demo Video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1/play_guitar.mp4).
    - Run the file `examples/cogvideox_fun/predict_v2v.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos_v2v`.
  - **Controlled Video Generation (Canny, Pose, Depth, etc.)**:
    - Modify `control_video`, `validation_image_end`, `prompt`, `neg_prompt`, `guidance_scale`, and `seed` in the file `examples/cogvideox_fun/predict_v2v_control.py`.
    - `control_video` is the control video extracted using operators such as Canny, Pose, or Depth. You can use the following demo video: [Demo Video](https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/cogvideox_fun/asset/v1.1/pose.mp4).
    - Run the file `examples/cogvideox_fun/predict_v2v_control.py` and wait for the results. The generated videos will be saved in the folder `samples/cogvideox-fun-videos_v2v_control`.
- **Step 3**: If you want to integrate other backbones or LoRAs trained by yourself, modify `lora_path` and relevant paths in `examples/{model_name}/predict_t2v.py` or `examples/{model_name}/predict_i2v.py` as needed.
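
The script and output folder for each CogVideoX-Fun task in Step 2 can be collected in one table and driven from Python. This is a small sketch under assumptions: `run_task` and the short task keys are invented for this example, the script is assumed to be launched from the repository root, and `prompt` and the other settings still have to be edited inside the script first, as described above.

```python
import subprocess
import sys
from pathlib import Path

# Script and output folder for each task, as listed in Step 2.
COGVIDEOX_FUN_TASKS = {
    "t2v": ("examples/cogvideox_fun/predict_t2v.py", "samples/cogvideox-fun-videos"),
    "i2v": ("examples/cogvideox_fun/predict_i2v.py", "samples/cogvideox-fun-videos_i2v"),
    "v2v": ("examples/cogvideox_fun/predict_v2v.py", "samples/cogvideox-fun-videos_v2v"),
    "control": (
        "examples/cogvideox_fun/predict_v2v_control.py",
        "samples/cogvideox-fun-videos_v2v_control",
    ),
}


def run_task(task: str, repo_root: str = ".") -> list:
    """Run the prediction script for `task` and return the files in its output folder."""
    script, out_dir = COGVIDEOX_FUN_TASKS[task]
    root = Path(repo_root)
    # check=True raises CalledProcessError if the script exits with an error.
    subprocess.run([sys.executable, script], cwd=root, check=True)
    return sorted((root / out_dir).glob("*"))
```

For example, `run_task("t2v", "VideoX-Fun")` would run the text-to-video script inside a checkout named `VideoX-Fun` and list whatever it wrote to `samples/cogvideox-fun-videos`.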

#### d. Using the Web UI
The web UI supports text-to-video, image-to-video, video-to-video, and controlled video generation (Canny, Pose, Depth, etc.). This library currently supports CogVideoX-Fun, Wan2.1, and Wan2.1-Fun. Different models are distinguished by folder names under the `examples` folder, and their supported features vary. Use them accordingly. Below is an example using CogVideoX-Fun:

- **Step 1**: Download the corresponding [weights](#model-zoo) and place them in the `models` folder.
- **Step 2**: Run the file `examples/cogvideox_fun/app.py` to access the Gradio interface.
- **Step 3**: Select the generation model on the page, fill in `prompt`, `neg_prompt`, `guidance_scale`, and `seed`, click "Generate," and wait for the results. The generated videos will be saved in the `sample` folder.

# Reference
- CogVideo: https://github.com/THUDM/CogVideo/
- EasyAnimate: https://github.com/aigc-apps/EasyAnimate
- Wan2.1: https://github.com/Wan-Video/Wan2.1/
- Wan2.2: https://github.com/Wan-Video/Wan2.2/
- ComfyUI-KJNodes: https://github.com/kijai/ComfyUI-KJNodes
- ComfyUI-EasyAnimateWrapper: https://github.com/kijai/ComfyUI-EasyAnimateWrapper
- ComfyUI-CameraCtrl-Wrapper: https://github.com/chaojie/ComfyUI-CameraCtrl-Wrapper
- CameraCtrl: https://github.com/hehao13/CameraCtrl

# License
This project is licensed under the [Apache License (Version 2.0)](https://github.com/modelscope/modelscope/blob/master/LICENSE).
