Instructions to use StableDiffusionVN/Flux with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- llama-cpp-python

How to use StableDiffusionVN/Flux with llama-cpp-python:

```python
# !pip install llama-cpp-python
from llama_cpp import Llama

# Download the GGUF file from the Hub (cached locally) and load it
llm = Llama.from_pretrained(
    repo_id="StableDiffusionVN/Flux",
    filename="Clip/t5-v1_1-xxl-encoder-Q4_K_S.gguf",
)

output = llm(
    "Once upon a time,",
    max_tokens=512,
    echo=True,
)
print(output)
```
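Every file these tools load is a GGUF file, such as the quantized T5 v1.1 XXL encoder in the `Clip/` folder. A quick sanity check before loading a download is to read the file's fixed-size header. The sketch below assumes the GGUF v2/v3 header layout:

- the 4-byte magic `GGUF`;
- a little-endian `uint32` version;
- `uint64` tensor and metadata key/value counts.

`parse_gguf_header` and `read_gguf_header` are hypothetical helper names, not part of llama-cpp-python.

```python
import struct
from typing import NamedTuple


class GGUFHeader(NamedTuple):
    version: int
    tensor_count: int
    metadata_kv_count: int


# Magic (4 bytes), version (uint32), tensor count (uint64), metadata KV count
# (uint64), all little-endian: the GGUF v2/v3 header layout (assumed here).
_HEADER = struct.Struct("<4sIQQ")


def parse_gguf_header(data: bytes) -> GGUFHeader:
    """Validate and decode the fixed-size header at the start of a GGUF file."""
    if len(data) < _HEADER.size:
        raise ValueError("data too short to be a GGUF header")
    magic, version, n_tensors, n_kv = _HEADER.unpack_from(data)
    if magic != b"GGUF":
        raise ValueError(f"bad magic {magic!r}: not a GGUF file")
    if version < 2:
        # GGUF v1 stored the counts as uint32, so this layout would misread it.
        raise ValueError(f"unsupported GGUF version {version}")
    return GGUFHeader(version, n_tensors, n_kv)


def read_gguf_header(path: str) -> GGUFHeader:
    """Read only the first bytes of `path`; the tensor data is never loaded."""
    with open(path, "rb") as f:
        return parse_gguf_header(f.read(_HEADER.size))
```

On a local copy of `t5-v1_1-xxl-encoder-Q4_K_S.gguf`, `read_gguf_header` should return a header with version 2 or higher. A `ValueError` usually points to a truncated download or a file that is not GGUF.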

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- llama.cpp

How to use StableDiffusionVN/Flux with llama.cpp:

Install from brew

```sh
brew install llama.cpp
# Start a local OpenAI-compatible server with a web UI:
llama-server -hf StableDiffusionVN/Flux:Q4_K_S
# Run inference directly in the terminal:
llama-cli -hf StableDiffusionVN/Flux:Q4_K_S
```

Install from WinGet (Windows)

```sh
winget install llama.cpp
# Start a local OpenAI-compatible server with a web UI:
llama-server -hf StableDiffusionVN/Flux:Q4_K_S
# Run inference directly in the terminal:
llama-cli -hf StableDiffusionVN/Flux:Q4_K_S
```

Use pre-built binary

```sh
# Download pre-built binary from:
# https://github.com/ggerganov/llama.cpp/releases
# Start a local OpenAI-compatible server with a web UI:
./llama-server -hf StableDiffusionVN/Flux:Q4_K_S
# Run inference directly in the terminal:
./llama-cli -hf StableDiffusionVN/Flux:Q4_K_S
```

Build from source code

```sh
git clone https://github.com/ggerganov/llama.cpp.git
cd llama.cpp
cmake -B build
cmake --build build -j --target llama-server llama-cli
# Start a local OpenAI-compatible server with a web UI:
./build/bin/llama-server -hf StableDiffusionVN/Flux:Q4_K_S
# Run inference directly in the terminal:
./build/bin/llama-cli -hf StableDiffusionVN/Flux:Q4_K_S
```

Use Docker

```sh
docker model run hf.co/StableDiffusionVN/Flux:Q4_K_S
```
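The `llama-server` commands above start an OpenAI-compatible HTTP server. Below is a minimal client sketch using only the Python standard library. It assumes the server listens on its default address, `http://localhost:8080`, and serves the `/v1/chat/completions` route. `build_chat_request` and `chat` are hypothetical helper names. Whether the GGUF file selected by `Q4_K_S` can give meaningful chat answers depends on the model it contains.

```python
import json
import urllib.request

# Assumption: llama-server's default host and port; adjust if you passed --host/--port.
BASE_URL = "http://localhost:8080"


def build_chat_request(prompt: str, max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the request to a running server and return the first reply's text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Building the request separately from sending it keeps the payload easy to inspect or log before a server is running.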

- LM Studio

- Jan

- Ollama

How to use StableDiffusionVN/Flux with Ollama:

```sh
ollama run hf.co/StableDiffusionVN/Flux:Q4_K_S
```
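Once pulled with `ollama run`, the model can also be reached through Ollama's local REST API. Below is a standard-library sketch. It assumes Ollama's default address, `http://localhost:11434`, and its `/api/generate` endpoint with streaming turned off, so the reply arrives as a single JSON object. `build_generate_request` and `generate` are hypothetical helper names.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # assumed default local endpoint
MODEL = "hf.co/StableDiffusionVN/Flux:Q4_K_S"       # same reference as `ollama run`


def build_generate_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming /api/generate request."""
    payload = {"model": MODEL, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str) -> str:
    """Send the request; with stream=False the text is in the "response" field."""
    with urllib.request.urlopen(build_generate_request(prompt), timeout=300) as resp:
        return json.load(resp)["response"]
```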

- Unsloth Studio

How to use StableDiffusionVN/Flux with Unsloth Studio:

Install Unsloth Studio (macOS, Linux, WSL)

```sh
curl -fsSL https://unsloth.ai/install.sh | sh
# Run unsloth studio
unsloth studio -H 0.0.0.0 -p 8888
# Then open http://localhost:8888 in your browser
# Search for StableDiffusionVN/Flux to start chatting
```

Install Unsloth Studio (Windows)

```powershell
irm https://unsloth.ai/install.ps1 | iex
# Run unsloth studio
unsloth studio -H 0.0.0.0 -p 8888
# Then open http://localhost:8888 in your browser
# Search for StableDiffusionVN/Flux to start chatting
```

Using Hugging Face Spaces for Unsloth

No setup is required: open https://huggingface.co/spaces/unsloth/studio in your browser and search for StableDiffusionVN/Flux to start chatting.

- Docker Model Runner

How to use StableDiffusionVN/Flux with Docker Model Runner:

```sh
docker model run hf.co/StableDiffusionVN/Flux:Q4_K_S
```
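The local-app commands on this page pick a single quantized file with the same two-part reference: the repository id plus a quantization tag (`Q4_K_S` here). The form differs by tool:

- llama.cpp takes it as `-hf <repo>:<quant>`.
- Ollama and Docker Model Runner prefix the same string with `hf.co/`.

Below is a small helper that builds both forms, modeled only on the commands shown here. `hf_model_ref` is hypothetical and not part of any of these tools.

```python
def hf_model_ref(repo_id: str, quant: str, *, host_prefix: bool = False) -> str:
    """Return '<owner>/<name>:<quant>', optionally prefixed with 'hf.co/'.

    The unprefixed form matches `llama-server -hf ...`; the prefixed form
    matches `ollama run ...` and `docker model run ...` on this page.
    """
    if repo_id.count("/") != 1:
        raise ValueError(f"expected '<owner>/<name>', got {repo_id!r}")
    ref = f"{repo_id}:{quant}"
    return f"hf.co/{ref}" if host_prefix else ref


# Prints the llama-server, Ollama and Docker Model Runner commands shown above.
for cmd in (
    f"llama-server -hf {hf_model_ref('StableDiffusionVN/Flux', 'Q4_K_S')}",
    f"ollama run {hf_model_ref('StableDiffusionVN/Flux', 'Q4_K_S', host_prefix=True)}",
    f"docker model run {hf_model_ref('StableDiffusionVN/Flux', 'Q4_K_S', host_prefix=True)}",
):
    print(cmd)
```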

- Lemonade

How to use StableDiffusionVN/Flux with Lemonade:

Pull the model

```sh
# Download Lemonade from https://lemonade-server.ai/
lemonade pull StableDiffusionVN/Flux:Q4_K_S
```

Run and chat with the model

```sh
lemonade run user.Flux-Q4_K_S
```

List all available models

```sh
lemonade list
```

Update README.md

Browse files

README.md

CHANGED

|

@@ -7,21 +7,37 @@ language:

|

|

| 7 |

pipeline_tag: text-to-image

|

| 8 |

---

|

| 9 |

*Youtube:*

|

|

|

|

| 10 |

[](https://www.youtube.com/watch?v=PbCiROfJABs&list=PLG1XdX8X1YTouiCg7eIl62AcbGiEP0ktk)

|

|

|

|

| 11 |

*Colab:*

|

| 12 |

[](https://bit.ly/sdvnwebuiv2)

|

|

|

|

| 13 |

[](https://comfy.vn)

|

|

|

|

| 14 |

*Colab Training Lora:*

|

| 15 |

[](https://bit.ly/3MeF8Tg)

|

| 16 |

-

*Workflow:*

|

| 17 |

|

| 18 |

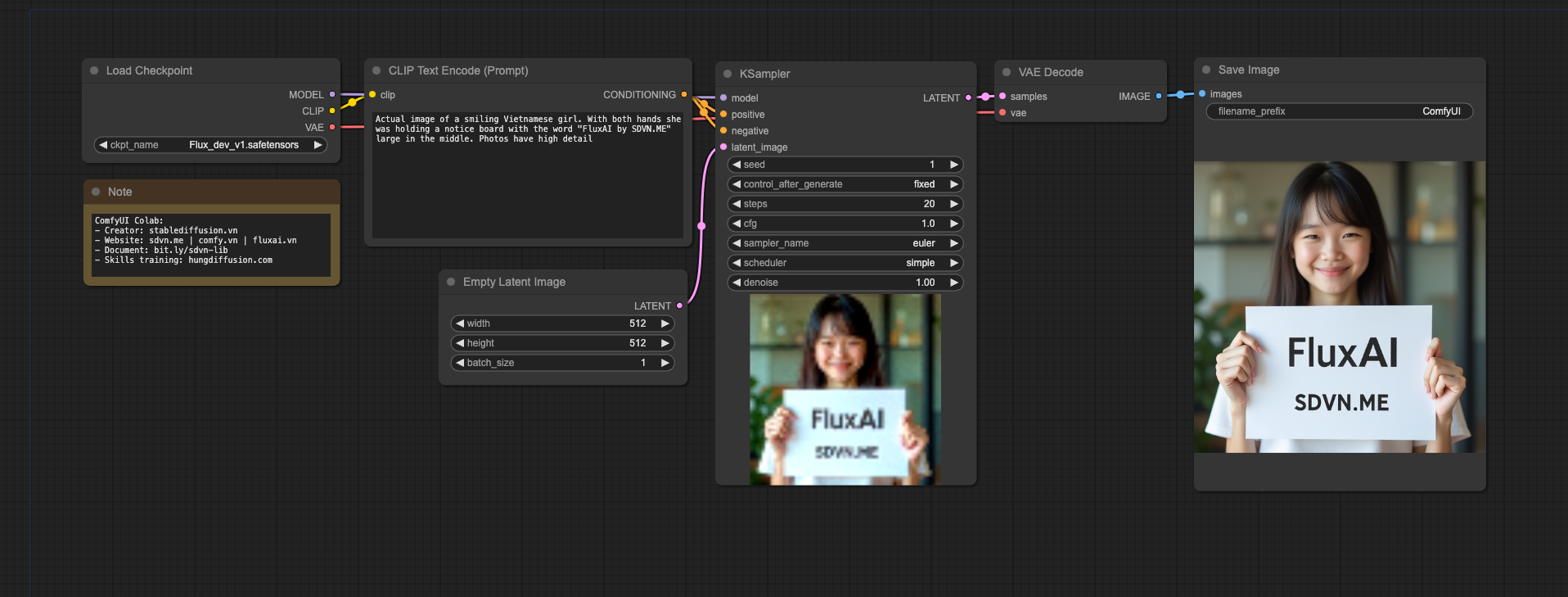

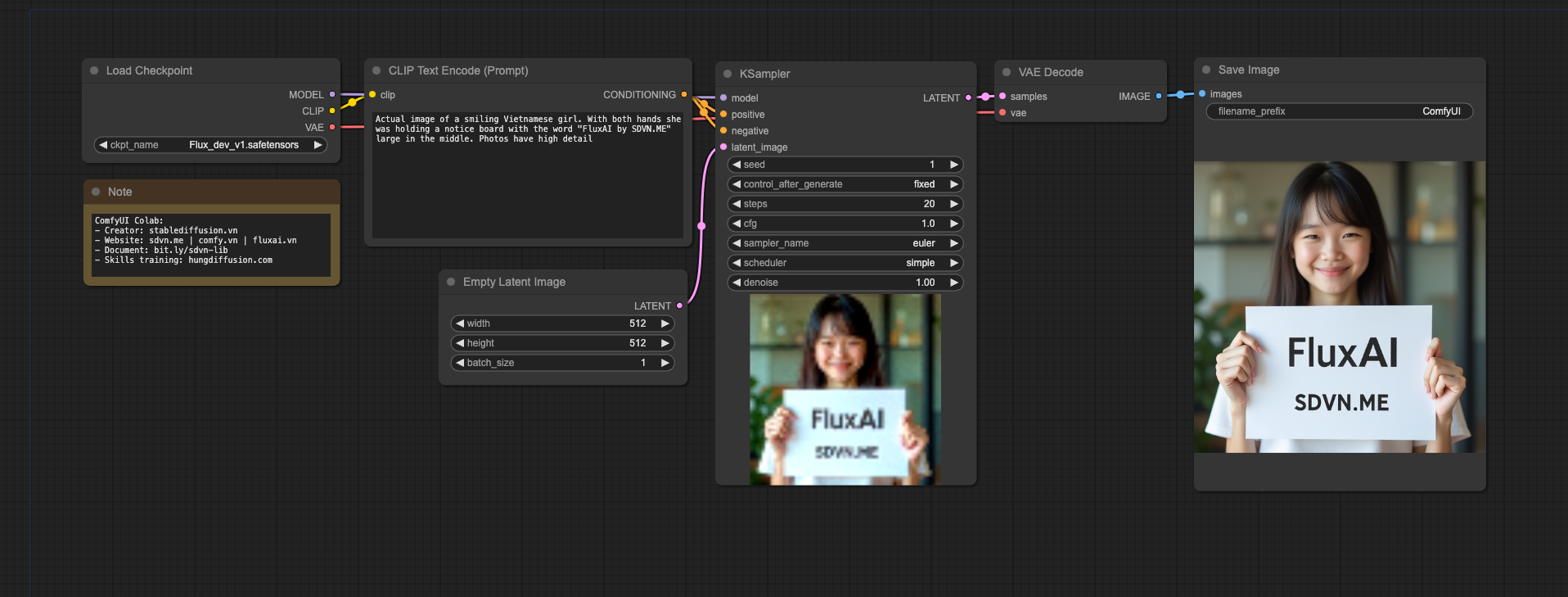

`=> Text-img`

|

| 19 |

|

|

|

|

| 20 |

`=> Img-img`

|

| 21 |

|

|

|

|

| 22 |

`=> Inpaint`

|

| 23 |

|

|

|

|

| 24 |

`=> Schnell Text-img`

|

| 25 |

|

|

|

|

| 26 |

`=> Schnell Text-img-upscale`

|

| 27 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 7 |

pipeline_tag: text-to-image

|

| 8 |

---

|

| 9 |

*Youtube:*

|

| 10 |

+

|

| 11 |

[](https://www.youtube.com/watch?v=PbCiROfJABs&list=PLG1XdX8X1YTouiCg7eIl62AcbGiEP0ktk)

|

| 12 |

+

|

| 13 |

*Colab:*

|

| 14 |

[](https://bit.ly/sdvnwebuiv2)

|

| 15 |

+

|

| 16 |

[](https://comfy.vn)

|

| 17 |

+

|

| 18 |

*Colab Training Lora:*

|

| 19 |

[](https://bit.ly/3MeF8Tg)

|

| 20 |

+

*Comfy Workflow:*

|

| 21 |

|

| 22 |

`=> Text-img`

|

| 23 |

|

| 24 |

+

|

| 25 |

`=> Img-img`

|

| 26 |

|

| 27 |

+

|

| 28 |

`=> Inpaint`

|

| 29 |

|

| 30 |

+

|

| 31 |

`=> Schnell Text-img`

|

| 32 |

|

| 33 |

+

|

| 34 |

`=> Schnell Text-img-upscale`

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

*Comfy Node:*

|

| 38 |

+

|

| 39 |

+

- Comfy NF4 Support: https://github.com/comfyanonymous/ComfyUI_bitsandbytes_NF4

|

| 40 |

+

|

| 41 |

+

- Comfy GGUF Support: https://github.com/city96/ComfyUI-GGUF

|

| 42 |

+

|

| 43 |

+

- Comfy Instant Union Controlnet Support: https://github.com/EeroHeikkinen/ComfyUI-eesahesNodes

|
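The Comfy Node list points to ComfyUI-GGUF for loading GGUF files, such as the quantized T5 encoder in this repository's `Clip/` folder. Below is a hedged sketch of the loader part of a ComfyUI API-format workflow, the node-id-keyed JSON ComfyUI uses for queued prompts. Several details are assumptions taken from our reading of the ComfyUI-GGUF repository:

- the node class names `UnetLoaderGGUF` and `DualCLIPLoaderGGUF`;
- their input names (`unet_name`, `clip_name1`, `clip_name2`, `type`).

Only the T5 file name comes from this page. The UNet and CLIP-L file names are hypothetical placeholders. Check all of these against your installed node version and your model folders.

```python
import json

# Hypothetical placeholders except T5_FILE, which is the encoder used earlier on this page.
UNET_FILE = "flux1-dev-Q4_K_S.gguf"          # placeholder Flux UNet GGUF
T5_FILE = "t5-v1_1-xxl-encoder-Q4_K_S.gguf"  # from the llama-cpp-python example
CLIP_L_FILE = "clip_l.safetensors"           # placeholder CLIP-L encoder


def gguf_loader_nodes() -> dict:
    """Return the loader nodes of a ComfyUI API-format workflow.

    Keys are node ids; "class_type" names the node and "inputs" holds its values.
    Node and input names are assumed from ComfyUI-GGUF and may differ by version.
    """
    return {
        "1": {
            "class_type": "UnetLoaderGGUF",
            "inputs": {"unet_name": UNET_FILE},
        },
        "2": {
            "class_type": "DualCLIPLoaderGGUF",
            "inputs": {
                "clip_name1": CLIP_L_FILE,
                "clip_name2": T5_FILE,
                "type": "flux",
            },
        },
    }


# Downstream nodes (text encoding, sampler) would reference these outputs as
# [node_id, output_index], e.g. ["2", 0] for the combined CLIP output.
print(json.dumps(gguf_loader_nodes(), indent=2))
```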