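+        html_code += f"      <th>{i}</th>\n"
+    html_code += "    </tr>\n  </thead>\n  <tbody>\n"
+    for line in items[1:]:
+        html_code += "    <tr>\n"
+        for elt in line:
+            elt = f"{elt:.6f}" if isinstance(elt, float) else str(elt)
+            html_code += f"      <td>{elt}</td>\n"
+        html_code += "    </tr>\n"
+    html_code += "  </tbody>\n</table><p>"
+    return html_code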

+

+

+class NotebookProgressBar:
+    """
+    A progress bar for display in a notebook.
+
+    Class attributes (overridden by derived classes)
+
+        - **warmup** (`int`) -- The number of iterations to do at the beginning while ignoring `update_every`.
+        - **update_every** (`float`) -- Because checking the time and redrawing have a cost, the bar only updates
+          about every `update_every` seconds. It uses the average time per iteration so far to predict the next
+          value at which it should update.
+
+    Args:
+        total (`int`):
+            The total number of iterations to reach.
+        prefix (`str`, *optional*):
+            A prefix to add before the progress bar.
+        leave (`bool`, *optional*, defaults to `True`):
+            Whether or not to leave the progress bar once it's completed. You can always call the
+            [`~utils.notebook.NotebookProgressBar.close`] method to make the bar disappear.
+        parent ([`~utils.notebook.NotebookTrainingTracker`], *optional*):
+            A parent object (like [`~utils.notebook.NotebookTrainingTracker`]) that spawns progress bars and handles
+            their display. If set, the object passed must have a `display()` method.
+        width (`int`, *optional*, defaults to 300):
+            The width (in pixels) that the bar will take.
+
+    Example:
+
+    ```python
+    import time
+
+    pbar = NotebookProgressBar(100)
+    for val in range(100):
+        pbar.update(val)
+        time.sleep(0.07)
+    pbar.update(100)
+    ```"""
+
+    warmup = 5
+    update_every = 0.2
+
+    def __init__(
+        self,
+        total: int,
+        prefix: Optional[str] = None,
+        leave: bool = True,
+        parent: Optional["NotebookTrainingTracker"] = None,
+        width: int = 300,
+    ):
+        self.total = total
+        self.prefix = "" if prefix is None else prefix
+        self.leave = leave
+        self.parent = parent
+        self.width = width
+        self.last_value = None
+        self.comment = None
+        self.output = None
+        self.value = None
+        self.label = None
+        if "VSCODE_PID" in os.environ:
+            self.update_every = 0.5  # Adjusted for smooth updates, as HTML rendering is slow in VS Code
+            # This is the only adjustment required to optimize HTML rendering during training

+

+    def update(self, value: int, force_update: bool = False, comment: Optional[str] = None):
+        """
+        The main method to update the progress bar to `value`.
+
+        Args:
+            value (`int`):
+                The value to use. Must be between 0 and `total`.
+            force_update (`bool`, *optional*, defaults to `False`):
+                Whether or not to force an update of the internal state and display (by default, the bar waits for
+                `value` to reach the value it predicts corresponds to more than `update_every` seconds since the
+                last update, to avoid unnecessary redraws).
+            comment (`str`, *optional*):
+                A comment to add on the left of the progress bar.
+        """
+        self.value = value
+        if comment is not None:
+            self.comment = comment
+        if self.last_value is None:
+            self.start_time = self.last_time = time.time()
+            self.start_value = self.last_value = value
+            self.elapsed_time = self.predicted_remaining = None
+            self.first_calls = self.warmup
+            self.wait_for = 1
+            self.update_bar(value)
+        elif value <= self.last_value and not force_update:
+            return
+        elif force_update or self.first_calls > 0 or value >= min(self.last_value + self.wait_for, self.total):
+            if self.first_calls > 0:
+                self.first_calls -= 1
+            current_time = time.time()
+            self.elapsed_time = current_time - self.start_time
+            # We could have value = self.start_value if the update is called twice with the same start value.
+            if value > self.start_value:
+                self.average_time_per_item = self.elapsed_time / (value - self.start_value)
+            else:
+                self.average_time_per_item = None
+            if value >= self.total:
+                value = self.total
+                self.predicted_remaining = None
+                if not self.leave:
+                    self.close()
+            elif self.average_time_per_item is not None:
+                self.predicted_remaining = self.average_time_per_item * (self.total - value)
+            self.update_bar(value)
+            self.last_value = value
+            self.last_time = current_time
+            if (self.average_time_per_item is None) or (self.average_time_per_item == 0):
+                self.wait_for = 1
+            else:
+                self.wait_for = max(int(self.update_every / self.average_time_per_item), 1)

+

+    def update_bar(self, value, comment=None):
+        spaced_value = " " * (len(str(self.total)) - len(str(value))) + str(value)
+        if self.elapsed_time is None:
+            self.label = f"[{spaced_value}/{self.total} : < :"
+        elif self.predicted_remaining is None:
+            self.label = f"[{spaced_value}/{self.total} {format_time(self.elapsed_time)}"
+        else:
+            self.label = (
+                f"[{spaced_value}/{self.total} {format_time(self.elapsed_time)} <"
+                f" {format_time(self.predicted_remaining)}"
+            )
+            if self.average_time_per_item == 0:
+                self.label += ", +inf it/s"
+            else:
+                self.label += f", {1 / self.average_time_per_item:.2f} it/s"
+
+        self.label += "]" if self.comment is None or len(self.comment) == 0 else f", {self.comment}]"
+        self.display()

+

+    def display(self):
+        self.html_code = html_progress_bar(self.value, self.total, self.prefix, self.label, self.width)
+        if self.parent is not None:
+            # If this is a child bar, the parent will take care of the display.
+            self.parent.display()
+            return
+        if self.output is None:
+            self.output = disp.display(disp.HTML(self.html_code), display_id=True)
+        else:
+            self.output.update(disp.HTML(self.html_code))
+
+    def close(self):
+        "Closes the progress bar."
+        if self.parent is None and self.output is not None:
+            self.output.update(disp.HTML(""))

+

+

+class NotebookTrainingTracker(NotebookProgressBar):
+    """
+    An object tracking the updates of an ongoing training with progress bars and a nice table reporting metrics.
+
+    Args:
+        num_steps (`int`): The number of steps during training.
+        column_names (`List[str]`, *optional*):
+            The list of column names for the metrics table (will be inferred from the first call to
+            [`~utils.notebook.NotebookTrainingTracker.write_line`] if not set).
+    """
+
+    def __init__(self, num_steps, column_names=None):
+        super().__init__(num_steps)
+        self.inner_table = None if column_names is None else [column_names]
+        self.child_bar = None

+

+    def display(self):
+        self.html_code = html_progress_bar(self.value, self.total, self.prefix, self.label, self.width)
+        if self.inner_table is not None:
+            self.html_code += text_to_html_table(self.inner_table)
+        if self.child_bar is not None:
+            self.html_code += self.child_bar.html_code
+        if self.output is None:
+            self.output = disp.display(disp.HTML(self.html_code), display_id=True)
+        else:
+            self.output.update(disp.HTML(self.html_code))

+

+    def write_line(self, values):
+        """
+        Write the values in the inner table.
+
+        Args:
+            values (`Dict[str, float]`): The values to display.
+        """
+        if self.inner_table is None:
+            self.inner_table = [list(values.keys()), list(values.values())]
+        else:
+            columns = self.inner_table[0]
+            for key in values.keys():
+                if key not in columns:
+                    columns.append(key)
+            self.inner_table[0] = columns
+            if len(self.inner_table) > 1:
+                last_values = self.inner_table[-1]
+                first_column = self.inner_table[0][0]
+                if last_values[0] != values[first_column]:
+                    # write new line
+                    self.inner_table.append([values[c] if c in values else "No Log" for c in columns])
+                else:
+                    # update last line
+                    new_values = values
+                    for c in columns:
+                        if c not in new_values.keys():
+                            new_values[c] = last_values[columns.index(c)]
+                    self.inner_table[-1] = [new_values[c] for c in columns]
+            else:
+                self.inner_table.append([values[c] for c in columns])

+

+    def add_child(self, total, prefix=None, width=300):
+        """
+        Add a child progress bar displayed under the table of metrics. The child progress bar is returned (so it can be
+        easily updated).
+
+        Args:
+            total (`int`): The number of iterations for the child progress bar.
+            prefix (`str`, *optional*): A prefix to write on the left of the progress bar.
+            width (`int`, *optional*, defaults to 300): The width (in pixels) of the progress bar.
+        """
+        self.child_bar = NotebookProgressBar(total, prefix=prefix, parent=self, width=width)
+        return self.child_bar
+
+    def remove_child(self):
+        """
+        Closes the child progress bar.
+        """
+        self.child_bar = None
+        self.display()

+

+

+class NotebookProgressCallback(TrainerCallback):
+    """
+    A [`TrainerCallback`] that displays the progress of training or evaluation, optimized for Jupyter Notebooks or
+    Google Colab.
+    """
+
+    def __init__(self):
+        self.training_tracker = None
+        self.prediction_bar = None
+        self._force_next_update = False
+
+    def on_train_begin(self, args, state, control, **kwargs):
+        self.first_column = "Epoch" if args.eval_strategy == IntervalStrategy.EPOCH else "Step"
+        self.training_loss = 0
+        self.last_log = 0
+        column_names = [self.first_column] + ["Training Loss"]
+        if args.eval_strategy != IntervalStrategy.NO:
+            column_names.append("Validation Loss")
+        self.training_tracker = NotebookTrainingTracker(state.max_steps, column_names)
+
+    def on_step_end(self, args, state, control, **kwargs):
+        epoch = int(state.epoch) if int(state.epoch) == state.epoch else f"{state.epoch:.2f}"
+        self.training_tracker.update(
+            state.global_step + 1,
+            comment=f"Epoch {epoch}/{state.num_train_epochs}",
+            force_update=self._force_next_update,
+        )
+        self._force_next_update = False

+

+    def on_prediction_step(self, args, state, control, eval_dataloader=None, **kwargs):
+        if not has_length(eval_dataloader):
+            return
+        if self.prediction_bar is None:
+            if self.training_tracker is not None:
+                self.prediction_bar = self.training_tracker.add_child(len(eval_dataloader))
+            else:
+                self.prediction_bar = NotebookProgressBar(len(eval_dataloader))
+            self.prediction_bar.update(1)
+        else:
+            self.prediction_bar.update(self.prediction_bar.value + 1)
+
+    def on_predict(self, args, state, control, **kwargs):
+        if self.prediction_bar is not None:
+            self.prediction_bar.close()
+        self.prediction_bar = None
+
+    def on_log(self, args, state, control, logs=None, **kwargs):
+        # Only for when there is no evaluation
+        if args.eval_strategy == IntervalStrategy.NO and "loss" in logs:
+            values = {"Training Loss": logs["loss"]}
+            # First column is necessarily Step since we're not in epoch eval strategy
+            values["Step"] = state.global_step
+            self.training_tracker.write_line(values)

+

+    def on_evaluate(self, args, state, control, metrics=None, **kwargs):
+        if self.training_tracker is not None:
+            values = {"Training Loss": "No log", "Validation Loss": "No log"}
+            for log in reversed(state.log_history):
+                if "loss" in log:
+                    values["Training Loss"] = log["loss"]
+                    break
+
+            if self.first_column == "Epoch":
+                values["Epoch"] = int(state.epoch)
+            else:
+                values["Step"] = state.global_step
+            metric_key_prefix = "eval"
+            for k in metrics:
+                if k.endswith("_loss"):
+                    metric_key_prefix = re.sub(r"\_loss$", "", k)
+            _ = metrics.pop("total_flos", None)
+            _ = metrics.pop("epoch", None)
+            _ = metrics.pop(f"{metric_key_prefix}_runtime", None)
+            _ = metrics.pop(f"{metric_key_prefix}_samples_per_second", None)
+            _ = metrics.pop(f"{metric_key_prefix}_steps_per_second", None)
+            _ = metrics.pop(f"{metric_key_prefix}_jit_compilation_time", None)
+            for k, v in metrics.items():
+                splits = k.split("_")
+                name = " ".join([part.capitalize() for part in splits[1:]])
+                if name == "Loss":
+                    # Single dataset
+                    name = "Validation Loss"
+                values[name] = v
+            self.training_tracker.write_line(values)
+            self.training_tracker.remove_child()
+            self.prediction_bar = None
+            # Evaluation takes a long time so we should force the next update.
+            self._force_next_update = True
+
+    def on_train_end(self, args, state, control, **kwargs):
+        self.training_tracker.update(
+            state.global_step,
+            comment=f"Epoch {int(state.epoch)}/{state.num_train_epochs}",
+            force_update=True,
+        )
+        self.training_tracker = None
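The `warmup` / `update_every` heuristic described in the `NotebookProgressBar` docstring can be isolated from the display code. The sketch below is a hypothetical standalone class (`ThrottledCounter` is not part of the library) that keeps only the decision of *when* to redraw; it assumes you do not need `force_update`, the ETA label, or any IPython display calls, and it accepts an injected clock value so its behavior is deterministic.

```python
import time
from typing import List, Optional


class ThrottledCounter:
    """Hypothetical sketch of the redraw-throttling rule used by NotebookProgressBar.

    After the initial call, the next `warmup` updates always redraw. Afterwards, the
    average time per item predicts how many items fit into `update_every` seconds,
    and intermediate values are skipped until that many items have passed. Reaching
    `total` always redraws.
    """

    def __init__(self, total: int, warmup: int = 5, update_every: float = 0.2):
        self.total = total
        self.warmup = warmup
        self.update_every = update_every
        self.start_time = 0.0
        self.start_value = 0
        self.last_value: Optional[int] = None
        self.first_calls = warmup
        self.wait_for = 1
        self.history: List[int] = []  # values at which a redraw would have happened

    def update(self, value: int, now: Optional[float] = None) -> bool:
        """Return True if this call would trigger a redraw."""
        now = time.time() if now is None else now
        if self.last_value is None:
            # First call: record the starting point and always draw.
            self.start_time = now
            self.start_value = self.last_value = value
            self.history.append(value)
            return True
        if value <= self.last_value:
            return False
        if self.first_calls > 0 or value >= min(self.last_value + self.wait_for, self.total):
            if self.first_calls > 0:
                self.first_calls -= 1
            elapsed = now - self.start_time
            avg = elapsed / (value - self.start_value) if value > self.start_value else None
            self.last_value = value
            self.history.append(value)
            # Number of items expected to take `update_every` seconds (at least 1).
            self.wait_for = 1 if not avg else max(int(self.update_every / avg), 1)
            return True
        return False


if __name__ == "__main__":
    # Simulate 40 items that each take 1/16 s, redrawing at most about every 1/4 s.
    bar = ThrottledCounter(total=40, update_every=0.25)
    for i in range(41):
        bar.update(i, now=i * 0.0625)
    print(bar.history)  # → [0, 1, 2, 3, 4, 5, 9, 13, 17, 21, 25, 29, 33, 37, 40]
```

With these timings every warmup call redraws, after which the bar settles into one redraw every four items (0.25 / 0.0625), and the final value still forces a redraw. The library itself reads the clock with `time.time()`; injecting `now` here only serves to make the example reproducible.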
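The row-merging rule in `NotebookTrainingTracker.write_line` is easy to check in isolation: a line whose first-column value (the step or epoch) matches the previous line updates that line instead of adding a new one. The following is a hypothetical standalone function, `merge_metrics_row`, written as a sketch of that rule rather than the library's own code. It differs in two assumed ways: it copies `values` instead of mutating the caller's dict, and it falls back to `"No Log"` for any missing cell.

```python
from typing import Any, Dict, List, Optional

Table = List[List[Any]]


def merge_metrics_row(table: Optional[Table], values: Dict[str, Any]) -> Table:
    """Hypothetical sketch of the write_line merge rule.

    table[0] is the header row; `values` is keyed by column name. A row whose
    first-column value equals the last row's is merged into that row; otherwise
    a new row is appended, with "No Log" for any column it does not provide.
    """
    if table is None:
        return [list(values.keys()), list(values.values())]
    columns = table[0]
    for key in values:  # new metric names become new columns
        if key not in columns:
            columns.append(key)
    if len(table) == 1:  # header only: first data row
        table.append([values.get(c, "No Log") for c in columns])
    elif table[-1][0] != values[columns[0]]:  # new step/epoch: append a row
        table.append([values.get(c, "No Log") for c in columns])
    else:  # same step/epoch: fill the gaps from the previous row
        last = table[-1]
        merged = dict(values)
        for i, c in enumerate(columns):
            if c not in merged:
                merged[c] = last[i] if i < len(last) else "No Log"
        table[-1] = [merged[c] for c in columns]
    return table


table = merge_metrics_row(None, {"Step": 10, "Training Loss": 0.5})
table = merge_metrics_row(table, {"Step": 10, "Validation Loss": 0.4})
table = merge_metrics_row(table, {"Step": 20, "Training Loss": 0.3})
for row in table:
    print(row)
```

The second call shares step 10 with the first, so the validation loss is folded into the existing row. The third call starts a new row and marks the missing validation loss as `"No Log"`.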

diff --git a/docs/transformers/build/lib/transformers/utils/peft_utils.py b/docs/transformers/build/lib/transformers/utils/peft_utils.py

new file mode 100644

index 0000000000000000000000000000000000000000..3eb62a099059d4c1acd9eef75f94ee5ac22fd60f

--- /dev/null

+++ b/docs/transformers/build/lib/transformers/utils/peft_utils.py

@@ -0,0 +1,125 @@

+# Copyright 2023 The HuggingFace Team. All rights reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import importlib

+import os

+from typing import Optional, Union

+

+from packaging import version

+

+from .hub import cached_file

+from .import_utils import is_peft_available

+

+

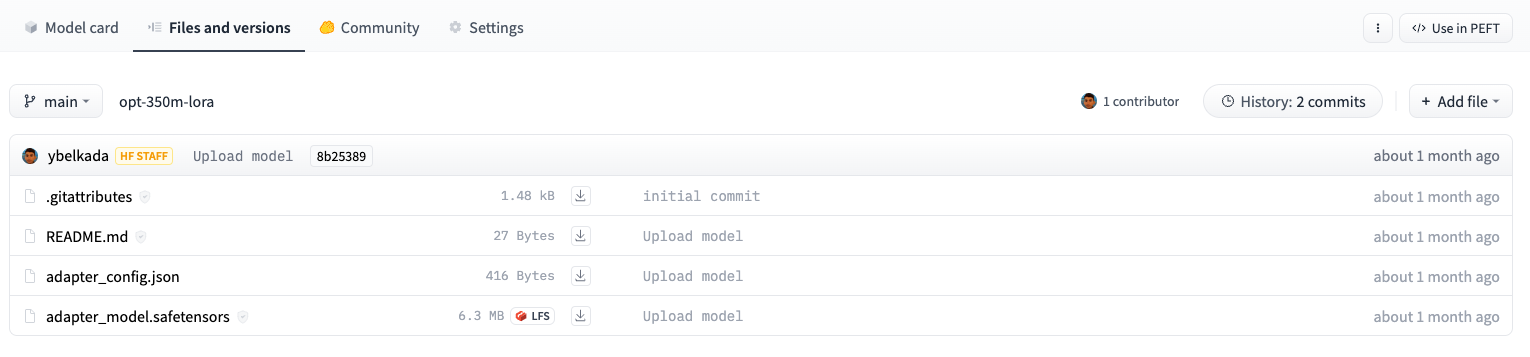

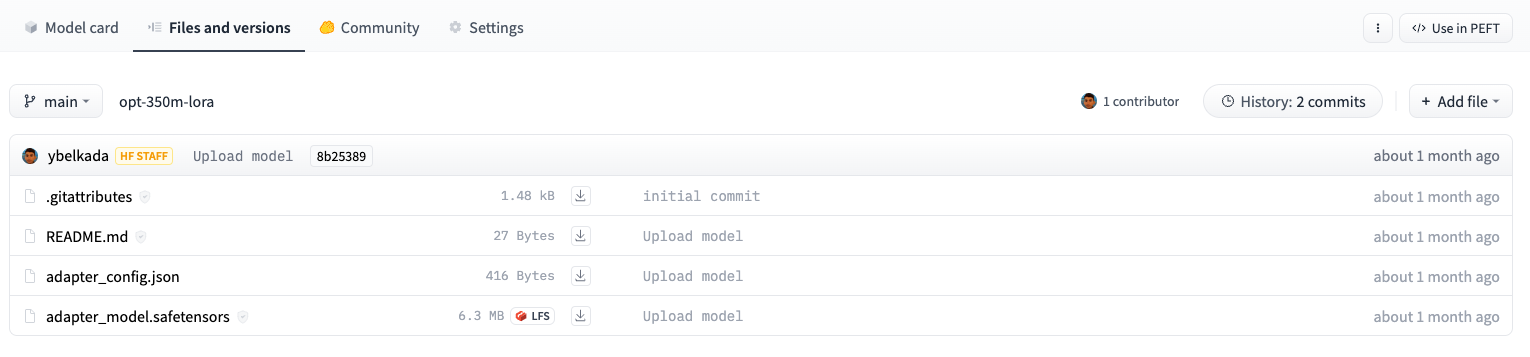

+ADAPTER_CONFIG_NAME = "adapter_config.json"
+ADAPTER_WEIGHTS_NAME = "adapter_model.bin"
+ADAPTER_SAFE_WEIGHTS_NAME = "adapter_model.safetensors"
+
+
+def find_adapter_config_file(
+    model_id: str,
+    cache_dir: Optional[Union[str, os.PathLike]] = None,
+    force_download: bool = False,
+    resume_download: Optional[bool] = None,
+    proxies: Optional[dict[str, str]] = None,
+    token: Optional[Union[bool, str]] = None,
+    revision: Optional[str] = None,
+    local_files_only: bool = False,
+    subfolder: str = "",
+    _commit_hash: Optional[str] = None,
+) -> Optional[str]:

+    r"""
+    Checks whether the model stored on the Hub or locally is an adapter model, and returns the path to the adapter
+    config file if it is, `None` otherwise.
+
+    Args:
+        model_id (`str`):
+            The identifier of the model to look for: either a local path or the ID of a repository on the Hub.
+        cache_dir (`str` or `os.PathLike`, *optional*):
+            Path to a directory in which a downloaded pretrained model configuration should be cached if the standard
+            cache should not be used.
+        force_download (`bool`, *optional*, defaults to `False`):
+            Whether or not to force the (re-)download of the configuration files, overriding the cached versions if
+            they exist.
+        resume_download:
+            Deprecated and ignored. All downloads are now resumed by default when possible.
+            Will be removed in v5 of Transformers.
+        proxies (`Dict[str, str]`, *optional*):
+            A dictionary of proxy servers to use by protocol or endpoint, e.g., `{'http': 'foo.bar:3128',
+            'http://hostname': 'foo.bar:4012'}`. The proxies are used on each request.
+        token (`str` or `bool`, *optional*):
+            The token to use as HTTP bearer authorization for remote files. If `True`, will use the token generated
+            when running `huggingface-cli login` (stored in `~/.huggingface`).
+        revision (`str`, *optional*, defaults to `"main"`):
+            The specific model version to use. It can be a branch name, a tag name, or a commit id; since
+            huggingface.co uses a git-based system for storing models and other artifacts, `revision` can be any
+            identifier allowed by git.

+

+ \x12\x17\n\teos_piece\x18/ \x01(\t:\x04\x12\x18\n\tpad_piece\x18\x30"

+ b" \x01(\t:\x05".decode("utf-8"),

+ message_type=None,

+ enum_type=None,

+ containing_type=None,

+ is_extension=False,

+ extension_scope=None,

+ serialized_options=None,

+ file=DESCRIPTOR,

+ create_key=_descriptor._internal_create_key,

+ ),

+ _descriptor.FieldDescriptor(

+ name="eos_piece",

+ full_name="sentencepiece.TrainerSpec.eos_piece",

+ index=36,

+ number=47,

+ type=9,

+ cpp_type=9,

+ label=1,

+ has_default_value=True,

+ default_value=b"</s>".decode("utf-8"),

+ message_type=None,

+ enum_type=None,

+ containing_type=None,

+ is_extension=False,

+ extension_scope=None,

+ serialized_options=None,

+ file=DESCRIPTOR,

+ create_key=_descriptor._internal_create_key,

+ ),

+ _descriptor.FieldDescriptor(

+ name="pad_piece",

+ full_name="sentencepiece.TrainerSpec.pad_piece",

+ index=37,

+ number=48,

+ type=9,

+ cpp_type=9,

+ label=1,

+ has_default_value=True,

+ default_value=b"<pad>".decode("utf-8"),


+

+
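The `find_adapter_config_file` function earlier in this diff checks whether a local path or Hub repository contains an adapter config. Its local-directory half can be sketched with the standard library alone. `find_local_adapter_config` is a hypothetical name, and the Hub branch (which the real function presumably handles through the imported `cached_file` helper) is deliberately left out.

```python
import os
from typing import Optional

# Same file name as the ADAPTER_CONFIG_NAME constant defined in peft_utils.py.
ADAPTER_CONFIG_NAME = "adapter_config.json"


def find_local_adapter_config(model_dir: str, subfolder: str = "") -> Optional[str]:
    """Hypothetical local-only sketch: return the adapter config path, or None.

    Only a local directory is inspected; no network access is attempted.
    """
    if not os.path.isdir(model_dir):
        return None
    # os.path.join drops an empty `subfolder`, yielding model_dir/adapter_config.json.
    candidate = os.path.join(model_dir, subfolder, ADAPTER_CONFIG_NAME)
    return candidate if os.path.isfile(candidate) else None
```

Passing `subfolder` makes the lookup target `model_dir/subfolder/adapter_config.json`, mirroring the `subfolder` parameter in the signature of `find_adapter_config_file`.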

+🖼️ **Computer Vision**: image classification, object detection, and segmentation.
+🗣️ **Audio**: automatic speech recognition and audio classification.
+🐙 **Multimodal**: table question answering, optical character recognition, information extraction from scanned documents, video classification, and visual question answering.
+
+🤗 Transformers supports framework interoperability between PyTorch, TensorFlow, and JAX. This provides the flexibility to use a different framework at each stage of a model's life: train a model in three lines of code in one framework, and load it for inference in another. Models can also be exported to formats like ONNX and TorchScript for deployment in production environments.
+
+Join the growing community on the [Hub](https://huggingface.co/models), [forum](https://discuss.huggingface.co/), or [Discord](https://discord.com/invite/JfAtkvEtRb) today!
+
+## If you are looking for custom support from the Hugging Face team

+

+

+## Contents
+
+The documentation is organized into five sections:
+
+- **Get Started** provides a quick tour of the library and installation instructions to get up and running.
+- **Tutorials** are a great place to start if you're a beginner. This section will help you gain the basic skills you need to start using the library.
+- **How-to guides** show you how to achieve a specific goal, like fine-tuning a pretrained model for language modeling or writing and sharing a custom model.
+- **Conceptual guides** offer more discussion and explanation of the underlying concepts and ideas behind models, tasks, and the design philosophy of 🤗 Transformers.
+- **API** describes all classes and functions:
+
+  - **Main classes** details the most important classes like configuration, model, tokenizer, and pipeline.
+  - **Models** details the classes and functions related to each model implemented in the library.
+  - **Internal helpers** details utility classes and functions used internally.

+

+

+## Supported models and frameworks
+
+The table below represents the current support in the library for each of those models: whether they have a Python tokenizer (called "slow"), a "fast" tokenizer backed by the 🤗 Tokenizers library, and whether they have support in Jax (via Flax), PyTorch, and/or TensorFlow.

+

+

+

+

+| Model | PyTorch support | TensorFlow support | Flax Support |

+|:------------------------------------------------------------------------:|:---------------:|:------------------:|:------------:|

+| [ALBERT](model_doc/albert) | ✅ | ✅ | ✅ |

+| [ALIGN](model_doc/align) | ✅ | ❌ | ❌ |

+| [AltCLIP](model_doc/altclip) | ✅ | ❌ | ❌ |

+| [Audio Spectrogram Transformer](model_doc/audio-spectrogram-transformer) | ✅ | ❌ | ❌ |

+| [Autoformer](model_doc/autoformer) | ✅ | ❌ | ❌ |

+| [Bark](model_doc/bark) | ✅ | ❌ | ❌ |

+| [BART](model_doc/bart) | ✅ | ✅ | ✅ |

+| [BARThez](model_doc/barthez) | ✅ | ✅ | ✅ |

+| [BARTpho](model_doc/bartpho) | ✅ | ✅ | ✅ |

+| [BEiT](model_doc/beit) | ✅ | ❌ | ✅ |

+| [BERT](model_doc/bert) | ✅ | ✅ | ✅ |

+| [Bert Generation](model_doc/bert-generation) | ✅ | ❌ | ❌ |

+| [BertJapanese](model_doc/bert-japanese) | ✅ | ✅ | ✅ |

+| [BERTweet](model_doc/bertweet) | ✅ | ✅ | ✅ |

+| [BigBird](model_doc/big_bird) | ✅ | ❌ | ✅ |

+| [BigBird-Pegasus](model_doc/bigbird_pegasus) | ✅ | ❌ | ❌ |

+| [BioGpt](model_doc/biogpt) | ✅ | ❌ | ❌ |

+| [BiT](model_doc/bit) | ✅ | ❌ | ❌ |

+| [Blenderbot](model_doc/blenderbot) | ✅ | ✅ | ✅ |

+| [BlenderbotSmall](model_doc/blenderbot-small) | ✅ | ✅ | ✅ |

+| [BLIP](model_doc/blip) | ✅ | ✅ | ❌ |

+| [BLIP-2](model_doc/blip-2) | ✅ | ❌ | ❌ |

+| [BLOOM](model_doc/bloom) | ✅ | ❌ | ✅ |

+| [BORT](model_doc/bort) | ✅ | ✅ | ✅ |

+| [BridgeTower](model_doc/bridgetower) | ✅ | ❌ | ❌ |

+| [BROS](model_doc/bros) | ✅ | ❌ | ❌ |

+| [ByT5](model_doc/byt5) | ✅ | ✅ | ✅ |

+| [CamemBERT](model_doc/camembert) | ✅ | ✅ | ❌ |

+| [CANINE](model_doc/canine) | ✅ | ❌ | ❌ |

+| [Chameleon](model_doc/chameleon) | ✅ | ❌ | ❌ |

+| [Chinese-CLIP](model_doc/chinese_clip) | ✅ | ❌ | ❌ |

+| [CLAP](model_doc/clap) | ✅ | ❌ | ❌ |

+| [CLIP](model_doc/clip) | ✅ | ✅ | ✅ |

+| [CLIPSeg](model_doc/clipseg) | ✅ | ❌ | ❌ |

+| [CLVP](model_doc/clvp) | ✅ | ❌ | ❌ |

+| [CodeGen](model_doc/codegen) | ✅ | ❌ | ❌ |

+| [CodeLlama](model_doc/code_llama) | ✅ | ❌ | ✅ |

+| [Cohere](model_doc/cohere) | ✅ | ❌ | ❌ |

+| [Conditional DETR](model_doc/conditional_detr) | ✅ | ❌ | ❌ |

+| [ConvBERT](model_doc/convbert) | ✅ | ✅ | ❌ |

+| [ConvNeXT](model_doc/convnext) | ✅ | ✅ | ❌ |

+| [ConvNeXTV2](model_doc/convnextv2) | ✅ | ✅ | ❌ |

+| [CPM](model_doc/cpm) | ✅ | ✅ | ✅ |

+| [CPM-Ant](model_doc/cpmant) | ✅ | ❌ | ❌ |

+| [CTRL](model_doc/ctrl) | ✅ | ✅ | ❌ |

+| [CvT](model_doc/cvt) | ✅ | ✅ | ❌ |

+| [DAC](model_doc/dac) | ✅ | ❌ | ❌ |

+| [Data2VecAudio](model_doc/data2vec) | ✅ | ❌ | ❌ |

+| [Data2VecText](model_doc/data2vec) | ✅ | ❌ | ❌ |

+| [Data2VecVision](model_doc/data2vec) | ✅ | ✅ | ❌ |

+| [DBRX](model_doc/dbrx) | ✅ | ❌ | ❌ |

+| [DeBERTa](model_doc/deberta) | ✅ | ✅ | ❌ |

+| [DeBERTa-v2](model_doc/deberta-v2) | ✅ | ✅ | ❌ |

+| [Decision Transformer](model_doc/decision_transformer) | ✅ | ❌ | ❌ |

+| [Deformable DETR](model_doc/deformable_detr) | ✅ | ❌ | ❌ |

+| [DeiT](model_doc/deit) | ✅ | ✅ | ❌ |

+| [DePlot](model_doc/deplot) | ✅ | ❌ | ❌ |

+| [Depth Anything](model_doc/depth_anything) | ✅ | ❌ | ❌ |

+| [DETA](model_doc/deta) | ✅ | ❌ | ❌ |

+| [DETR](model_doc/detr) | ✅ | ❌ | ❌ |

+| [DialoGPT](model_doc/dialogpt) | ✅ | ✅ | ✅ |

+| [DiNAT](model_doc/dinat) | ✅ | ❌ | ❌ |

+| [DINOv2](model_doc/dinov2) | ✅ | ❌ | ✅ |

+| [DistilBERT](model_doc/distilbert) | ✅ | ✅ | ✅ |

+| [DiT](model_doc/dit) | ✅ | ❌ | ✅ |

+| [DonutSwin](model_doc/donut) | ✅ | ❌ | ❌ |

+| [DPR](model_doc/dpr) | ✅ | ✅ | ❌ |

+| [DPT](model_doc/dpt) | ✅ | ❌ | ❌ |

+| [EfficientFormer](model_doc/efficientformer) | ✅ | ✅ | ❌ |

+| [EfficientNet](model_doc/efficientnet) | ✅ | ❌ | ❌ |

+| [ELECTRA](model_doc/electra) | ✅ | ✅ | ✅ |

+| [EnCodec](model_doc/encodec) | ✅ | ❌ | ❌ |

+| [Encoder decoder](model_doc/encoder-decoder) | ✅ | ✅ | ✅ |

+| [ERNIE](model_doc/ernie) | ✅ | ❌ | ❌ |

+| [ErnieM](model_doc/ernie_m) | ✅ | ❌ | ❌ |

+| [ESM](model_doc/esm) | ✅ | ✅ | ❌ |

+| [FairSeq Machine-Translation](model_doc/fsmt) | ✅ | ❌ | ❌ |

+| [Falcon](model_doc/falcon) | ✅ | ❌ | ❌ |

+| [FalconMamba](model_doc/falcon_mamba) | ✅ | ❌ | ❌ |

+| [FastSpeech2Conformer](model_doc/fastspeech2_conformer) | ✅ | ❌ | ❌ |

+| [FLAN-T5](model_doc/flan-t5) | ✅ | ✅ | ✅ |

+| [FLAN-UL2](model_doc/flan-ul2) | ✅ | ✅ | ✅ |

+| [FlauBERT](model_doc/flaubert) | ✅ | ✅ | ❌ |

+| [FLAVA](model_doc/flava) | ✅ | ❌ | ❌ |

+| [FNet](model_doc/fnet) | ✅ | ❌ | ❌ |

+| [FocalNet](model_doc/focalnet) | ✅ | ❌ | ❌ |

+| [Funnel Transformer](model_doc/funnel) | ✅ | ✅ | ❌ |

+| [Fuyu](model_doc/fuyu) | ✅ | ❌ | ❌ |

+| [Gemma](model_doc/gemma) | ✅ | ❌ | ✅ |

+| [Gemma2](model_doc/gemma2) | ✅ | ❌ | ❌ |

+| [GIT](model_doc/git) | ✅ | ❌ | ❌ |

+| [GLPN](model_doc/glpn) | ✅ | ❌ | ❌ |

+| [GPT Neo](model_doc/gpt_neo) | ✅ | ❌ | ✅ |

+| [GPT NeoX](model_doc/gpt_neox) | ✅ | ❌ | ❌ |

+| [GPT NeoX Japanese](model_doc/gpt_neox_japanese) | ✅ | ❌ | ❌ |

+| [GPT-J](model_doc/gptj) | ✅ | ✅ | ✅ |

+| [GPT-Sw3](model_doc/gpt-sw3) | ✅ | ✅ | ✅ |

+| [GPTBigCode](model_doc/gpt_bigcode) | ✅ | ❌ | ❌ |

+| [GPTSAN-japanese](model_doc/gptsan-japanese) | ✅ | ❌ | ❌ |

+| [Granite](model_doc/granite) | ✅ | ❌ | ❌ |

+| [Graphormer](model_doc/graphormer) | ✅ | ❌ | ❌ |

+| [Grounding DINO](model_doc/grounding-dino) | ✅ | ❌ | ❌ |

+| [GroupViT](model_doc/groupvit) | ✅ | ✅ | ❌ |

+| [HerBERT](model_doc/herbert) | ✅ | ✅ | ✅ |

+| [Hiera](model_doc/hiera) | ✅ | ❌ | ❌ |

+| [Hubert](model_doc/hubert) | ✅ | ✅ | ❌ |

+| [I-BERT](model_doc/ibert) | ✅ | ❌ | ❌ |

+| [IDEFICS](model_doc/idefics) | ✅ | ✅ | ❌ |

+| [Idefics2](model_doc/idefics2) | ✅ | ❌ | ❌ |

+| [ImageGPT](model_doc/imagegpt) | ✅ | ❌ | ❌ |

+| [Informer](model_doc/informer) | ✅ | ❌ | ❌ |

+| [InstructBLIP](model_doc/instructblip) | ✅ | ❌ | ❌ |

+| [InstructBlipVideo](model_doc/instructblipvideo) | ✅ | ❌ | ❌ |

+| [Jamba](model_doc/jamba) | ✅ | ❌ | ❌ |

+| [JetMoe](model_doc/jetmoe) | ✅ | ❌ | ❌ |

+| [Jukebox](model_doc/jukebox) | ✅ | ❌ | ❌ |

+| [KOSMOS-2](model_doc/kosmos-2) | ✅ | ❌ | ❌ |

+| [LayoutLM](model_doc/layoutlm) | ✅ | ✅ | ❌ |

+| [LayoutLMv2](model_doc/layoutlmv2) | ✅ | ❌ | ❌ |

+| [LayoutLMv3](model_doc/layoutlmv3) | ✅ | ✅ | ❌ |

+| [LayoutXLM](model_doc/layoutxlm) | ✅ | ❌ | ❌ |

+| [LED](model_doc/led) | ✅ | ✅ | ❌ |

+| [LeViT](model_doc/levit) | ✅ | ❌ | ❌ |

+| [LiLT](model_doc/lilt) | ✅ | ❌ | ❌ |

+| [LLaMA](model_doc/llama) | ✅ | ❌ | ✅ |

+| [Llama2](model_doc/llama2) | ✅ | ❌ | ✅ |

+| [Llama3](model_doc/llama3) | ✅ | ❌ | ✅ |

+| [LLaVa](model_doc/llava) | ✅ | ❌ | ❌ |

+| [LLaVA-NeXT](model_doc/llava_next) | ✅ | ❌ | ❌ |

+| [LLaVa-NeXT-Video](model_doc/llava_next_video) | ✅ | ❌ | ❌ |

+| [Longformer](model_doc/longformer) | ✅ | ✅ | ❌ |

+| [LongT5](model_doc/longt5) | ✅ | ❌ | ✅ |

+| [LUKE](model_doc/luke) | ✅ | ❌ | ❌ |

+| [LXMERT](model_doc/lxmert) | ✅ | ✅ | ❌ |

+| [M-CTC-T](model_doc/mctct) | ✅ | ❌ | ❌ |

+| [M2M100](model_doc/m2m_100) | ✅ | ❌ | ❌ |

+| [MADLAD-400](model_doc/madlad-400) | ✅ | ✅ | ✅ |

+| [Mamba](model_doc/mamba) | ✅ | ❌ | ❌ |

+| [mamba2](model_doc/mamba2) | ✅ | ❌ | ❌ |

+| [Marian](model_doc/marian) | ✅ | ✅ | ✅ |

+| [MarkupLM](model_doc/markuplm) | ✅ | ❌ | ❌ |

+| [Mask2Former](model_doc/mask2former) | ✅ | ❌ | ❌ |

+| [MaskFormer](model_doc/maskformer) | ✅ | ❌ | ❌ |

+| [MatCha](model_doc/matcha) | ✅ | ❌ | ❌ |

+| [mBART](model_doc/mbart) | ✅ | ✅ | ✅ |

+| [mBART-50](model_doc/mbart50) | ✅ | ✅ | ✅ |

+| [MEGA](model_doc/mega) | ✅ | ❌ | ❌ |

+| [Megatron-BERT](model_doc/megatron-bert) | ✅ | ❌ | ❌ |

+| [Megatron-GPT2](model_doc/megatron_gpt2) | ✅ | ✅ | ✅ |

+| [MGP-STR](model_doc/mgp-str) | ✅ | ❌ | ❌ |

+| [Mistral](model_doc/mistral) | ✅ | ✅ | ✅ |

+| [Mixtral](model_doc/mixtral) | ✅ | ❌ | ❌ |

+| [mLUKE](model_doc/mluke) | ✅ | ❌ | ❌ |

+| [MMS](model_doc/mms) | ✅ | ✅ | ✅ |

+| [MobileBERT](model_doc/mobilebert) | ✅ | ✅ | ❌ |

+| [MobileNetV1](model_doc/mobilenet_v1) | ✅ | ❌ | ❌ |

+| [MobileNetV2](model_doc/mobilenet_v2) | ✅ | ❌ | ❌ |

+| [MobileViT](model_doc/mobilevit) | ✅ | ✅ | ❌ |

+| [MobileViTV2](model_doc/mobilevitv2) | ✅ | ❌ | ❌ |

+| [MPNet](model_doc/mpnet) | ✅ | ✅ | ❌ |

+| [MPT](model_doc/mpt) | ✅ | ❌ | ❌ |

+| [MRA](model_doc/mra) | ✅ | ❌ | ❌ |

+| [MT5](model_doc/mt5) | ✅ | ✅ | ✅ |

+| [MusicGen](model_doc/musicgen) | ✅ | ❌ | ❌ |

+| [MusicGen Melody](model_doc/musicgen_melody) | ✅ | ❌ | ❌ |

+| [MVP](model_doc/mvp) | ✅ | ❌ | ❌ |

+| [NAT](model_doc/nat) | ✅ | ❌ | ❌ |

+| [Nemotron](model_doc/nemotron) | ✅ | ❌ | ❌ |

+| [Nezha](model_doc/nezha) | ✅ | ❌ | ❌ |

+| [NLLB](model_doc/nllb) | ✅ | ❌ | ❌ |

+| [NLLB-MOE](model_doc/nllb-moe) | ✅ | ❌ | ❌ |

+| [Nougat](model_doc/nougat) | ✅ | ✅ | ✅ |

+| [Nyströmformer](model_doc/nystromformer) | ✅ | ❌ | ❌ |

+| [OLMo](model_doc/olmo) | ✅ | ❌ | ❌ |

+| [OneFormer](model_doc/oneformer) | ✅ | ❌ | ❌ |

+| [OpenAI GPT](model_doc/openai-gpt) | ✅ | ✅ | ❌ |

+| [OpenAI GPT-2](model_doc/gpt2) | ✅ | ✅ | ✅ |

+| [OpenLlama](model_doc/open-llama) | ✅ | ❌ | ❌ |

+| [OPT](model_doc/opt) | ✅ | ✅ | ✅ |

+| [OWL-ViT](model_doc/owlvit) | ✅ | ❌ | ❌ |

+| [OWLv2](model_doc/owlv2) | ✅ | ❌ | ❌ |

+| [PaliGemma](model_doc/paligemma) | ✅ | ❌ | ❌ |

+| [PatchTSMixer](model_doc/patchtsmixer) | ✅ | ❌ | ❌ |

+| [PatchTST](model_doc/patchtst) | ✅ | ❌ | ❌ |

+| [Pegasus](model_doc/pegasus) | ✅ | ✅ | ✅ |

+| [PEGASUS-X](model_doc/pegasus_x) | ✅ | ❌ | ❌ |

+| [Perceiver](model_doc/perceiver) | ✅ | ❌ | ❌ |

+| [Persimmon](model_doc/persimmon) | ✅ | ❌ | ❌ |

+| [Phi](model_doc/phi) | ✅ | ❌ | ❌ |

+| [Phi3](model_doc/phi3) | ✅ | ❌ | ❌ |

+| [PhoBERT](model_doc/phobert) | ✅ | ✅ | ✅ |

+| [Pix2Struct](model_doc/pix2struct) | ✅ | ❌ | ❌ |

+| [PLBart](model_doc/plbart) | ✅ | ❌ | ❌ |

+| [PoolFormer](model_doc/poolformer) | ✅ | ❌ | ❌ |

+| [Pop2Piano](model_doc/pop2piano) | ✅ | ❌ | ❌ |

+| [ProphetNet](model_doc/prophetnet) | ✅ | ❌ | ❌ |

+| [PVT](model_doc/pvt) | ✅ | ❌ | ❌ |

+| [PVTv2](model_doc/pvt_v2) | ✅ | ❌ | ❌ |

+| [QDQBert](model_doc/qdqbert) | ✅ | ❌ | ❌ |

+| [Qwen2](model_doc/qwen2) | ✅ | ❌ | ❌ |

+| [Qwen2Audio](model_doc/qwen2_audio) | ✅ | ❌ | ❌ |

+| [Qwen2MoE](model_doc/qwen2_moe) | ✅ | ❌ | ❌ |

+| [Qwen2VL](model_doc/qwen2_vl) | ✅ | ❌ | ❌ |

+| [RAG](model_doc/rag) | ✅ | ✅ | ❌ |

+| [REALM](model_doc/realm) | ✅ | ❌ | ❌ |

+| [RecurrentGemma](model_doc/recurrent_gemma) | ✅ | ❌ | ❌ |

+| [Reformer](model_doc/reformer) | ✅ | ❌ | ❌ |

+| [RegNet](model_doc/regnet) | ✅ | ✅ | ✅ |

+| [RemBERT](model_doc/rembert) | ✅ | ✅ | ❌ |

+| [ResNet](model_doc/resnet) | ✅ | ✅ | ✅ |

+| [RetriBERT](model_doc/retribert) | ✅ | ❌ | ❌ |

+| [RoBERTa](model_doc/roberta) | ✅ | ✅ | ✅ |

+| [RoBERTa-PreLayerNorm](model_doc/roberta-prelayernorm) | ✅ | ✅ | ✅ |

+| [RoCBert](model_doc/roc_bert) | ✅ | ❌ | ❌ |

+| [RoFormer](model_doc/roformer) | ✅ | ✅ | ✅ |

+| [RT-DETR](model_doc/rt_detr) | ✅ | ❌ | ❌ |

+| [RT-DETR-ResNet](model_doc/rt_detr_resnet) | ✅ | ❌ | ❌ |

+| [RWKV](model_doc/rwkv) | ✅ | ❌ | ❌ |

+| [SAM](model_doc/sam) | ✅ | ✅ | ❌ |

+| [SeamlessM4T](model_doc/seamless_m4t) | ✅ | ❌ | ❌ |

+| [SeamlessM4Tv2](model_doc/seamless_m4t_v2) | ✅ | ❌ | ❌ |

+| [SegFormer](model_doc/segformer) | ✅ | ✅ | ❌ |

+| [SegGPT](model_doc/seggpt) | ✅ | ❌ | ❌ |

+| [SEW](model_doc/sew) | ✅ | ❌ | ❌ |

+| [SEW-D](model_doc/sew-d) | ✅ | ❌ | ❌ |

+| [SigLIP](model_doc/siglip) | ✅ | ❌ | ❌ |

+| [Speech Encoder decoder](model_doc/speech-encoder-decoder) | ✅ | ❌ | ✅ |

+| [Speech2Text](model_doc/speech_to_text) | ✅ | ✅ | ❌ |

+| [SpeechT5](model_doc/speecht5) | ✅ | ❌ | ❌ |

+| [Splinter](model_doc/splinter) | ✅ | ❌ | ❌ |

+| [SqueezeBERT](model_doc/squeezebert) | ✅ | ❌ | ❌ |

+| [StableLm](model_doc/stablelm) | ✅ | ❌ | ❌ |

+| [Starcoder2](model_doc/starcoder2) | ✅ | ❌ | ❌ |

+| [SuperPoint](model_doc/superpoint) | ✅ | ❌ | ❌ |

+| [SwiftFormer](model_doc/swiftformer) | ✅ | ✅ | ❌ |

+| [Swin Transformer](model_doc/swin) | ✅ | ✅ | ❌ |

+| [Swin Transformer V2](model_doc/swinv2) | ✅ | ❌ | ❌ |

+| [Swin2SR](model_doc/swin2sr) | ✅ | ❌ | ❌ |

+| [SwitchTransformers](model_doc/switch_transformers) | ✅ | ❌ | ❌ |

+| [T5](model_doc/t5) | ✅ | ✅ | ✅ |

+| [T5v1.1](model_doc/t5v1.1) | ✅ | ✅ | ✅ |

+| [Table Transformer](model_doc/table-transformer) | ✅ | ❌ | ❌ |

+| [TAPAS](model_doc/tapas) | ✅ | ✅ | ❌ |

+| [TAPEX](model_doc/tapex) | ✅ | ✅ | ✅ |

+| [Time Series Transformer](model_doc/time_series_transformer) | ✅ | ❌ | ❌ |

+| [TimeSformer](model_doc/timesformer) | ✅ | ❌ | ❌ |

+| [Trajectory Transformer](model_doc/trajectory_transformer) | ✅ | ❌ | ❌ |

+| [Transformer-XL](model_doc/transfo-xl) | ✅ | ✅ | ❌ |

+| [TrOCR](model_doc/trocr) | ✅ | ❌ | ❌ |

+| [TVLT](model_doc/tvlt) | ✅ | ❌ | ❌ |

+| [TVP](model_doc/tvp) | ✅ | ❌ | ❌ |

+| [UDOP](model_doc/udop) | ✅ | ❌ | ❌ |

+| [UL2](model_doc/ul2) | ✅ | ✅ | ✅ |

+| [UMT5](model_doc/umt5) | ✅ | ❌ | ❌ |

+| [UniSpeech](model_doc/unispeech) | ✅ | ❌ | ❌ |

+| [UniSpeechSat](model_doc/unispeech-sat) | ✅ | ❌ | ❌ |

+| [UnivNet](model_doc/univnet) | ✅ | ❌ | ❌ |

+| [UPerNet](model_doc/upernet) | ✅ | ❌ | ❌ |

+| [VAN](model_doc/van) | ✅ | ❌ | ❌ |

+| [VideoLlava](model_doc/video_llava) | ✅ | ❌ | ❌ |

+| [VideoMAE](model_doc/videomae) | ✅ | ❌ | ❌ |

+| [ViLT](model_doc/vilt) | ✅ | ❌ | ❌ |

+| [VipLlava](model_doc/vipllava) | ✅ | ❌ | ❌ |

+| [Vision Encoder decoder](model_doc/vision-encoder-decoder) | ✅ | ✅ | ✅ |

+| [VisionTextDualEncoder](model_doc/vision-text-dual-encoder) | ✅ | ✅ | ✅ |

+| [VisualBERT](model_doc/visual_bert) | ✅ | ❌ | ❌ |

+| [ViT](model_doc/vit) | ✅ | ✅ | ✅ |

+| [ViT Hybrid](model_doc/vit_hybrid) | ✅ | ❌ | ❌ |

+| [VitDet](model_doc/vitdet) | ✅ | ❌ | ❌ |

+| [ViTMAE](model_doc/vit_mae) | ✅ | ✅ | ❌ |

+| [ViTMatte](model_doc/vitmatte) | ✅ | ❌ | ❌ |

+| [ViTMSN](model_doc/vit_msn) | ✅ | ❌ | ❌ |

+| [VITS](model_doc/vits) | ✅ | ❌ | ❌ |

+| [ViViT](model_doc/vivit) | ✅ | ❌ | ❌ |

+| [Wav2Vec2](model_doc/wav2vec2) | ✅ | ✅ | ✅ |

+| [Wav2Vec2-BERT](model_doc/wav2vec2-bert) | ✅ | ❌ | ❌ |

+| [Wav2Vec2-Conformer](model_doc/wav2vec2-conformer) | ✅ | ❌ | ❌ |

+| [Wav2Vec2Phoneme](model_doc/wav2vec2_phoneme) | ✅ | ✅ | ✅ |

+| [WavLM](model_doc/wavlm) | ✅ | ❌ | ❌ |

+| [Whisper](model_doc/whisper) | ✅ | ✅ | ✅ |

+| [X-CLIP](model_doc/xclip) | ✅ | ❌ | ❌ |

+| [X-MOD](model_doc/xmod) | ✅ | ❌ | ❌ |

+| [XGLM](model_doc/xglm) | ✅ | ✅ | ✅ |

+| [XLM](model_doc/xlm) | ✅ | ✅ | ❌ |

+| [XLM-ProphetNet](model_doc/xlm-prophetnet) | ✅ | ❌ | ❌ |

+| [XLM-RoBERTa](model_doc/xlm-roberta) | ✅ | ✅ | ✅ |

+| [XLM-RoBERTa-XL](model_doc/xlm-roberta-xl) | ✅ | ❌ | ❌ |

+| [XLM-V](model_doc/xlm-v) | ✅ | ✅ | ✅ |

+| [XLNet](model_doc/xlnet) | ✅ | ✅ | ❌ |

+| [XLS-R](model_doc/xls_r) | ✅ | ✅ | ✅ |

+| [XLSR-Wav2Vec2](model_doc/xlsr_wav2vec2) | ✅ | ✅ | ✅ |

+| [YOLOS](model_doc/yolos) | ✅ | ❌ | ❌ |

+| [YOSO](model_doc/yoso) | ✅ | ❌ | ❌ |

+| [ZoeDepth](model_doc/zoedepth) | ✅ | ❌ | ❌ |

+

+

diff --git a/docs/transformers/docs/source/ar/installation.md b/docs/transformers/docs/source/ar/installation.md

new file mode 100644

index 0000000000000000000000000000000000000000..d3bd4c655b6038238ec7bacf3a2723685a7c3a21

--- /dev/null

+++ b/docs/transformers/docs/source/ar/installation.md

@@ -0,0 +1,246 @@

+# Installation

+

+Install 🤗 Transformers for whichever deep learning library you're working with, set up your cache, and optionally configure 🤗 Transformers to run offline.

+

+🤗 Transformers is tested on Python 3.6+, PyTorch 1.1.0+, TensorFlow 2.0+, and Flax. Follow the installation instructions below for the deep learning library you are using:

+

+* [PyTorch](https://pytorch.org/get-started/locally/) installation instructions.

+* [TensorFlow 2.0](https://www.tensorflow.org/install/pip) installation instructions.

+* [Flax](https://flax.readthedocs.io/en/latest/) installation instructions.

+

+## Install with pip

+

+You should install 🤗 Transformers in a [virtual environment](https://docs.python.org/3/library/venv.html). If you're unfamiliar with Python virtual environments, take a look at this [guide](https://packaging.python.org/guides/installing-using-pip-and-virtual-environments/). A virtual environment makes it easier to manage different projects and avoids compatibility issues between dependencies.

+

+Start by creating a virtual environment in your project directory:

+

+```bash

+python -m venv .env

+```

+

+Activate the virtual environment. On Linux and macOS:

+

+```bash

+source .env/bin/activate

+```

+

+Activate the virtual environment on Windows:

+

+```bash

+.env/Scripts/activate

+```
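To confirm that the environment is actually active before installing anything, you can compare `sys.prefix` with `sys.base_prefix`: in an environment created with `python -m venv`, the two differ. This is a minimal sketch assuming a `venv`-style environment; `in_virtualenv` is a hypothetical helper name, not part of 🤗 Transformers.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the running interpreter belongs to a virtual environment.

    `python -m venv` environments point `sys.prefix` at the environment
    directory, while `sys.base_prefix` still points at the base installation.
    """
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
```

Run it with the same `python` you will use for `pip install`; if it prints `False`, the activation step above did not take effect in that shell.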

+

+Now you're ready to install 🤗 Transformers with the following command:

+

+```bash

+pip install transformers

+```

+

+For CPU support only, you can conveniently install 🤗 Transformers and a deep learning library in one line. For example, install 🤗 Transformers and PyTorch with:

+

+```bash

+pip install 'transformers[torch]'

+```

+

+🤗 Transformers and TensorFlow 2.0:

+

+```bash

+pip install 'transformers[tf-cpu]'

+```
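After installing, a quick way to see which of the supported frameworks are importable in the active environment is to ask `importlib` whether each package can be found, without actually importing it. This is a minimal sketch; `installed_backends` is a hypothetical helper, and it assumes the usual import names `torch`, `tensorflow` (also used by the `tensorflow-cpu` wheel), `flax`, and `transformers`.

```python
import importlib.util


def installed_backends() -> dict:
    """Map each framework name to whether its top-level package can be found.

    `find_spec` only locates the package; it does not import it, so the
    check stays fast even when large frameworks are installed.
    """
    packages = {
        "PyTorch": "torch",
        "TensorFlow": "tensorflow",
        "Flax": "flax",
        "Transformers": "transformers",
    }
    return {name: importlib.util.find_spec(pkg) is not None for name, pkg in packages.items()}


if __name__ == "__main__":
    for name, available in installed_backends().items():
        print(f"{name}: {'installed' if available else 'not found'}")
```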

+

+

+

+

+## Contents

+

+The documentation is organized into five sections:

+

+- **Get started** provides a quick tour of the library and installation instructions to get up and running.

+- **Tutorials** are a great place to start if you're a beginner. This section will help you gain the basic skills you need to start using the library.

+- **How-to guides** show you how to achieve a specific goal, like fine-tuning a pretrained model for language modeling or writing and sharing a custom model.

+- **Conceptual guides** offer more discussion and explanation of the underlying concepts and ideas behind models, tasks, and the design philosophy of 🤗 Transformers.

+- **API** describes all classes and functions:

+

+ - **Main classes** details the most important classes like configuration, model, tokenizer, and pipeline.

+ - **Models** details the classes and functions related to each model implemented in the library.

+ - **Internal helpers** details utility classes and functions used internally.

+

+

+## Supported models and frameworks

+

+The table below shows the current support in the library for each of these models: whether it can be used with PyTorch, TensorFlow, and/or Jax (via Flax).

+

+

+

+

+| Model | PyTorch support | TensorFlow support | Flax Support |

+|:------------------------------------------------------------------------:|:---------------:|:------------------:|:------------:|

+| [ALBERT](model_doc/albert) | ✅ | ✅ | ✅ |

+| [ALIGN](model_doc/align) | ✅ | ❌ | ❌ |

+| [AltCLIP](model_doc/altclip) | ✅ | ❌ | ❌ |

+| [Audio Spectrogram Transformer](model_doc/audio-spectrogram-transformer) | ✅ | ❌ | ❌ |

+| [Autoformer](model_doc/autoformer) | ✅ | ❌ | ❌ |

+| [Bark](model_doc/bark) | ✅ | ❌ | ❌ |

+| [BART](model_doc/bart) | ✅ | ✅ | ✅ |

+| [BARThez](model_doc/barthez) | ✅ | ✅ | ✅ |

+| [BARTpho](model_doc/bartpho) | ✅ | ✅ | ✅ |

+| [BEiT](model_doc/beit) | ✅ | ❌ | ✅ |

+| [BERT](model_doc/bert) | ✅ | ✅ | ✅ |

+| [Bert Generation](model_doc/bert-generation) | ✅ | ❌ | ❌ |

+| [BertJapanese](model_doc/bert-japanese) | ✅ | ✅ | ✅ |

+| [BERTweet](model_doc/bertweet) | ✅ | ✅ | ✅ |

+| [BigBird](model_doc/big_bird) | ✅ | ❌ | ✅ |

+| [BigBird-Pegasus](model_doc/bigbird_pegasus) | ✅ | ❌ | ❌ |

+| [BioGpt](model_doc/biogpt) | ✅ | ❌ | ❌ |

+| [BiT](model_doc/bit) | ✅ | ❌ | ❌ |

+| [Blenderbot](model_doc/blenderbot) | ✅ | ✅ | ✅ |

+| [BlenderbotSmall](model_doc/blenderbot-small) | ✅ | ✅ | ✅ |

+| [BLIP](model_doc/blip) | ✅ | ✅ | ❌ |

+| [BLIP-2](model_doc/blip-2) | ✅ | ❌ | ❌ |

+| [BLOOM](model_doc/bloom) | ✅ | ❌ | ✅ |

+| [BORT](model_doc/bort) | ✅ | ✅ | ✅ |

+| [BridgeTower](model_doc/bridgetower) | ✅ | ❌ | ❌ |

+| [BROS](model_doc/bros) | ✅ | ❌ | ❌ |

+| [ByT5](model_doc/byt5) | ✅ | ✅ | ✅ |

+| [CamemBERT](model_doc/camembert) | ✅ | ✅ | ❌ |

+| [CANINE](model_doc/canine) | ✅ | ❌ | ❌ |

+| [Chameleon](model_doc/chameleon) | ✅ | ❌ | ❌ |

+| [Chinese-CLIP](model_doc/chinese_clip) | ✅ | ❌ | ❌ |

+| [CLAP](model_doc/clap) | ✅ | ❌ | ❌ |

+| [CLIP](model_doc/clip) | ✅ | ✅ | ✅ |

+| [CLIPSeg](model_doc/clipseg) | ✅ | ❌ | ❌ |

+| [CLVP](model_doc/clvp) | ✅ | ❌ | ❌ |

+| [CodeGen](model_doc/codegen) | ✅ | ❌ | ❌ |

+| [CodeLlama](model_doc/code_llama) | ✅ | ❌ | ✅ |

+| [Cohere](model_doc/cohere) | ✅ | ❌ | ❌ |

+| [Conditional DETR](model_doc/conditional_detr) | ✅ | ❌ | ❌ |

+| [ConvBERT](model_doc/convbert) | ✅ | ✅ | ❌ |

+| [ConvNeXT](model_doc/convnext) | ✅ | ✅ | ❌ |

+| [ConvNeXTV2](model_doc/convnextv2) | ✅ | ✅ | ❌ |

+| [CPM](model_doc/cpm) | ✅ | ✅ | ✅ |

+| [CPM-Ant](model_doc/cpmant) | ✅ | ❌ | ❌ |

+| [CTRL](model_doc/ctrl) | ✅ | ✅ | ❌ |

+| [CvT](model_doc/cvt) | ✅ | ✅ | ❌ |

+| [DAC](model_doc/dac) | ✅ | ❌ | ❌ |

+| [Data2VecAudio](model_doc/data2vec) | ✅ | ❌ | ❌ |

+| [Data2VecText](model_doc/data2vec) | ✅ | ❌ | ❌ |

+| [Data2VecVision](model_doc/data2vec) | ✅ | ✅ | ❌ |

+| [DBRX](model_doc/dbrx) | ✅ | ❌ | ❌ |

+| [DeBERTa](model_doc/deberta) | ✅ | ✅ | ❌ |

+| [DeBERTa-v2](model_doc/deberta-v2) | ✅ | ✅ | ❌ |

+| [Decision Transformer](model_doc/decision_transformer) | ✅ | ❌ | ❌ |

+| [Deformable DETR](model_doc/deformable_detr) | ✅ | ❌ | ❌ |

+| [DeiT](model_doc/deit) | ✅ | ✅ | ❌ |

+| [DePlot](model_doc/deplot) | ✅ | ❌ | ❌ |

+| [Depth Anything](model_doc/depth_anything) | ✅ | ❌ | ❌ |

+| [DETA](model_doc/deta) | ✅ | ❌ | ❌ |

+| [DETR](model_doc/detr) | ✅ | ❌ | ❌ |

+| [DialoGPT](model_doc/dialogpt) | ✅ | ✅ | ✅ |

+| [DiNAT](model_doc/dinat) | ✅ | ❌ | ❌ |

+| [DINOv2](model_doc/dinov2) | ✅ | ❌ | ✅ |

+| [DistilBERT](model_doc/distilbert) | ✅ | ✅ | ✅ |

+| [DiT](model_doc/dit) | ✅ | ❌ | ✅ |

+| [DonutSwin](model_doc/donut) | ✅ | ❌ | ❌ |

+| [DPR](model_doc/dpr) | ✅ | ✅ | ❌ |

+| [DPT](model_doc/dpt) | ✅ | ❌ | ❌ |

+| [EfficientFormer](model_doc/efficientformer) | ✅ | ✅ | ❌ |

+| [EfficientNet](model_doc/efficientnet) | ✅ | ❌ | ❌ |

+| [ELECTRA](model_doc/electra) | ✅ | ✅ | ✅ |

+| [EnCodec](model_doc/encodec) | ✅ | ❌ | ❌ |

+| [Encoder decoder](model_doc/encoder-decoder) | ✅ | ✅ | ✅ |

+| [ERNIE](model_doc/ernie) | ✅ | ❌ | ❌ |

+| [ErnieM](model_doc/ernie_m) | ✅ | ❌ | ❌ |

+| [ESM](model_doc/esm) | ✅ | ✅ | ❌ |

+| [FairSeq Machine-Translation](model_doc/fsmt) | ✅ | ❌ | ❌ |

+| [Falcon](model_doc/falcon) | ✅ | ❌ | ❌ |

+| [FalconMamba](model_doc/falcon_mamba) | ✅ | ❌ | ❌ |

+| [FastSpeech2Conformer](model_doc/fastspeech2_conformer) | ✅ | ❌ | ❌ |

+| [FLAN-T5](model_doc/flan-t5) | ✅ | ✅ | ✅ |

+| [FLAN-UL2](model_doc/flan-ul2) | ✅ | ✅ | ✅ |

+| [FlauBERT](model_doc/flaubert) | ✅ | ✅ | ❌ |

+| [FLAVA](model_doc/flava) | ✅ | ❌ | ❌ |

+| [FNet](model_doc/fnet) | ✅ | ❌ | ❌ |

+| [FocalNet](model_doc/focalnet) | ✅ | ❌ | ❌ |

+| [Funnel Transformer](model_doc/funnel) | ✅ | ✅ | ❌ |

+| [Fuyu](model_doc/fuyu) | ✅ | ❌ | ❌ |

+| [Gemma](model_doc/gemma) | ✅ | ❌ | ✅ |

+| [Gemma2](model_doc/gemma2) | ✅ | ❌ | ❌ |

+| [GIT](model_doc/git) | ✅ | ❌ | ❌ |

+| [GLPN](model_doc/glpn) | ✅ | ❌ | ❌ |

+| [GPT Neo](model_doc/gpt_neo) | ✅ | ❌ | ✅ |

+| [GPT NeoX](model_doc/gpt_neox) | ✅ | ❌ | ❌ |

+| [GPT NeoX Japanese](model_doc/gpt_neox_japanese) | ✅ | ❌ | ❌ |

+| [GPT-J](model_doc/gptj) | ✅ | ✅ | ✅ |

+| [GPT-Sw3](model_doc/gpt-sw3) | ✅ | ✅ | ✅ |

+| [GPTBigCode](model_doc/gpt_bigcode) | ✅ | ❌ | ❌ |

+| [GPTSAN-japanese](model_doc/gptsan-japanese) | ✅ | ❌ | ❌ |

+| [Granite](model_doc/granite) | ✅ | ❌ | ❌ |

+| [Graphormer](model_doc/graphormer) | ✅ | ❌ | ❌ |

+| [Grounding DINO](model_doc/grounding-dino) | ✅ | ❌ | ❌ |

+| [GroupViT](model_doc/groupvit) | ✅ | ✅ | ❌ |

+| [HerBERT](model_doc/herbert) | ✅ | ✅ | ✅ |

+| [Hiera](model_doc/hiera) | ✅ | ❌ | ❌ |

+| [Hubert](model_doc/hubert) | ✅ | ✅ | ❌ |

+| [I-BERT](model_doc/ibert) | ✅ | ❌ | ❌ |

+| [IDEFICS](model_doc/idefics) | ✅ | ✅ | ❌ |

+| [Idefics2](model_doc/idefics2) | ✅ | ❌ | ❌ |

+| [ImageGPT](model_doc/imagegpt) | ✅ | ❌ | ❌ |

+| [Informer](model_doc/informer) | ✅ | ❌ | ❌ |

+| [InstructBLIP](model_doc/instructblip) | ✅ | ❌ | ❌ |

+| [InstructBlipVideo](model_doc/instructblipvideo) | ✅ | ❌ | ❌ |

+| [Jamba](model_doc/jamba) | ✅ | ❌ | ❌ |

+| [JetMoe](model_doc/jetmoe) | ✅ | ❌ | ❌ |

+| [Jukebox](model_doc/jukebox) | ✅ | ❌ | ❌ |

+| [KOSMOS-2](model_doc/kosmos-2) | ✅ | ❌ | ❌ |

+| [LayoutLM](model_doc/layoutlm) | ✅ | ✅ | ❌ |

+| [LayoutLMv2](model_doc/layoutlmv2) | ✅ | ❌ | ❌ |

+| [LayoutLMv3](model_doc/layoutlmv3) | ✅ | ✅ | ❌ |

+| [LayoutXLM](model_doc/layoutxlm) | ✅ | ❌ | ❌ |

+| [LED](model_doc/led) | ✅ | ✅ | ❌ |

+| [LeViT](model_doc/levit) | ✅ | ❌ | ❌ |

+| [LiLT](model_doc/lilt) | ✅ | ❌ | ❌ |

+| [LLaMA](model_doc/llama) | ✅ | ❌ | ✅ |

+| [Llama2](model_doc/llama2) | ✅ | ❌ | ✅ |

+| [Llama3](model_doc/llama3) | ✅ | ❌ | ✅ |

+| [LLaVa](model_doc/llava) | ✅ | ❌ | ❌ |

+| [LLaVA-NeXT](model_doc/llava_next) | ✅ | ❌ | ❌ |

+| [LLaVa-NeXT-Video](model_doc/llava_next_video) | ✅ | ❌ | ❌ |

+| [Longformer](model_doc/longformer) | ✅ | ✅ | ❌ |

+| [LongT5](model_doc/longt5) | ✅ | ❌ | ✅ |

+| [LUKE](model_doc/luke) | ✅ | ❌ | ❌ |

+| [LXMERT](model_doc/lxmert) | ✅ | ✅ | ❌ |

+| [M-CTC-T](model_doc/mctct) | ✅ | ❌ | ❌ |

+| [M2M100](model_doc/m2m_100) | ✅ | ❌ | ❌ |

+| [MADLAD-400](model_doc/madlad-400) | ✅ | ✅ | ✅ |

+| [Mamba](model_doc/mamba) | ✅ | ❌ | ❌ |

+| [mamba2](model_doc/mamba2) | ✅ | ❌ | ❌ |

+| [Marian](model_doc/marian) | ✅ | ✅ | ✅ |

+| [MarkupLM](model_doc/markuplm) | ✅ | ❌ | ❌ |

+| [Mask2Former](model_doc/mask2former) | ✅ | ❌ | ❌ |

+| [MaskFormer](model_doc/maskformer) | ✅ | ❌ | ❌ |

+| [MatCha](model_doc/matcha) | ✅ | ❌ | ❌ |

+| [mBART](model_doc/mbart) | ✅ | ✅ | ✅ |

+| [mBART-50](model_doc/mbart50) | ✅ | ✅ | ✅ |

+| [MEGA](model_doc/mega) | ✅ | ❌ | ❌ |

+| [Megatron-BERT](model_doc/megatron-bert) | ✅ | ❌ | ❌ |

+| [Megatron-GPT2](model_doc/megatron_gpt2) | ✅ | ✅ | ✅ |

+| [MGP-STR](model_doc/mgp-str) | ✅ | ❌ | ❌ |

+| [Mistral](model_doc/mistral) | ✅ | ✅ | ✅ |

+| [Mixtral](model_doc/mixtral) | ✅ | ❌ | ❌ |

+| [mLUKE](model_doc/mluke) | ✅ | ❌ | ❌ |

+| [MMS](model_doc/mms) | ✅ | ✅ | ✅ |

+| [MobileBERT](model_doc/mobilebert) | ✅ | ✅ | ❌ |

+| [MobileNetV1](model_doc/mobilenet_v1) | ✅ | ❌ | ❌ |

+| [MobileNetV2](model_doc/mobilenet_v2) | ✅ | ❌ | ❌ |

+| [MobileViT](model_doc/mobilevit) | ✅ | ✅ | ❌ |

+| [MobileViTV2](model_doc/mobilevitv2) | ✅ | ❌ | ❌ |

+| [MPNet](model_doc/mpnet) | ✅ | ✅ | ❌ |

+| [MPT](model_doc/mpt) | ✅ | ❌ | ❌ |

+| [MRA](model_doc/mra) | ✅ | ❌ | ❌ |

+| [MT5](model_doc/mt5) | ✅ | ✅ | ✅ |

+| [MusicGen](model_doc/musicgen) | ✅ | ❌ | ❌ |

+| [MusicGen Melody](model_doc/musicgen_melody) | ✅ | ❌ | ❌ |

+| [MVP](model_doc/mvp) | ✅ | ❌ | ❌ |

+| [NAT](model_doc/nat) | ✅ | ❌ | ❌ |

+| [Nemotron](model_doc/nemotron) | ✅ | ❌ | ❌ |

+| [Nezha](model_doc/nezha) | ✅ | ❌ | ❌ |

+| [NLLB](model_doc/nllb) | ✅ | ❌ | ❌ |

+| [NLLB-MOE](model_doc/nllb-moe) | ✅ | ❌ | ❌ |

+| [Nougat](model_doc/nougat) | ✅ | ✅ | ✅ |

+| [Nyströmformer](model_doc/nystromformer) | ✅ | ❌ | ❌ |

+| [OLMo](model_doc/olmo) | ✅ | ❌ | ❌ |

+| [OneFormer](model_doc/oneformer) | ✅ | ❌ | ❌ |

+| [OpenAI GPT](model_doc/openai-gpt) | ✅ | ✅ | ❌ |

+| [OpenAI GPT-2](model_doc/gpt2) | ✅ | ✅ | ✅ |

+| [OpenLlama](model_doc/open-llama) | ✅ | ❌ | ❌ |

+| [OPT](model_doc/opt) | ✅ | ✅ | ✅ |

+| [OWL-ViT](model_doc/owlvit) | ✅ | ❌ | ❌ |

+| [OWLv2](model_doc/owlv2) | ✅ | ❌ | ❌ |

+| [PaliGemma](model_doc/paligemma) | ✅ | ❌ | ❌ |

+| [PatchTSMixer](model_doc/patchtsmixer) | ✅ | ❌ | ❌ |

+| [PatchTST](model_doc/patchtst) | ✅ | ❌ | ❌ |

+| [Pegasus](model_doc/pegasus) | ✅ | ✅ | ✅ |

+| [PEGASUS-X](model_doc/pegasus_x) | ✅ | ❌ | ❌ |

+| [Perceiver](model_doc/perceiver) | ✅ | ❌ | ❌ |

+| [Persimmon](model_doc/persimmon) | ✅ | ❌ | ❌ |

+| [Phi](model_doc/phi) | ✅ | ❌ | ❌ |

+| [Phi3](model_doc/phi3) | ✅ | ❌ | ❌ |

+| [PhoBERT](model_doc/phobert) | ✅ | ✅ | ✅ |

+| [Pix2Struct](model_doc/pix2struct) | ✅ | ❌ | ❌ |

+| [PLBart](model_doc/plbart) | ✅ | ❌ | ❌ |

+| [PoolFormer](model_doc/poolformer) | ✅ | ❌ | ❌ |

+| [Pop2Piano](model_doc/pop2piano) | ✅ | ❌ | ❌ |

+| [ProphetNet](model_doc/prophetnet) | ✅ | ❌ | ❌ |

+| [PVT](model_doc/pvt) | ✅ | ❌ | ❌ |

+| [PVTv2](model_doc/pvt_v2) | ✅ | ❌ | ❌ |

+| [QDQBert](model_doc/qdqbert) | ✅ | ❌ | ❌ |

+| [Qwen2](model_doc/qwen2) | ✅ | ❌ | ❌ |

+| [Qwen2Audio](model_doc/qwen2_audio) | ✅ | ❌ | ❌ |

+| [Qwen2MoE](model_doc/qwen2_moe) | ✅ | ❌ | ❌ |

+| [Qwen2VL](model_doc/qwen2_vl) | ✅ | ❌ | ❌ |

+| [RAG](model_doc/rag) | ✅ | ✅ | ❌ |

+| [REALM](model_doc/realm) | ✅ | ❌ | ❌ |

+| [RecurrentGemma](model_doc/recurrent_gemma) | ✅ | ❌ | ❌ |

+| [Reformer](model_doc/reformer) | ✅ | ❌ | ❌ |

+| [RegNet](model_doc/regnet) | ✅ | ✅ | ✅ |

+| [RemBERT](model_doc/rembert) | ✅ | ✅ | ❌ |

+| [ResNet](model_doc/resnet) | ✅ | ✅ | ✅ |

+| [RetriBERT](model_doc/retribert) | ✅ | ❌ | ❌ |

+| [RoBERTa](model_doc/roberta) | ✅ | ✅ | ✅ |

+| [RoBERTa-PreLayerNorm](model_doc/roberta-prelayernorm) | ✅ | ✅ | ✅ |

+| [RoCBert](model_doc/roc_bert) | ✅ | ❌ | ❌ |

+| [RoFormer](model_doc/roformer) | ✅ | ✅ | ✅ |

+| [RT-DETR](model_doc/rt_detr) | ✅ | ❌ | ❌ |

+| [RT-DETR-ResNet](model_doc/rt_detr_resnet) | ✅ | ❌ | ❌ |

+| [RWKV](model_doc/rwkv) | ✅ | ❌ | ❌ |

+| [SAM](model_doc/sam) | ✅ | ✅ | ❌ |

+| [SeamlessM4T](model_doc/seamless_m4t) | ✅ | ❌ | ❌ |

+| [SeamlessM4Tv2](model_doc/seamless_m4t_v2) | ✅ | ❌ | ❌ |

+| [SegFormer](model_doc/segformer) | ✅ | ✅ | ❌ |

+| [SegGPT](model_doc/seggpt) | ✅ | ❌ | ❌ |

+| [SEW](model_doc/sew) | ✅ | ❌ | ❌ |

+| [SEW-D](model_doc/sew-d) | ✅ | ❌ | ❌ |

+| [SigLIP](model_doc/siglip) | ✅ | ❌ | ❌ |

+| [Speech Encoder decoder](model_doc/speech-encoder-decoder) | ✅ | ❌ | ✅ |

+| [Speech2Text](model_doc/speech_to_text) | ✅ | ✅ | ❌ |

+| [SpeechT5](model_doc/speecht5) | ✅ | ❌ | ❌ |

+| [Splinter](model_doc/splinter) | ✅ | ❌ | ❌ |

+| [SqueezeBERT](model_doc/squeezebert) | ✅ | ❌ | ❌ |

+| [StableLm](model_doc/stablelm) | ✅ | ❌ | ❌ |

+| [Starcoder2](model_doc/starcoder2) | ✅ | ❌ | ❌ |

+| [SuperPoint](model_doc/superpoint) | ✅ | ❌ | ❌ |

+| [SwiftFormer](model_doc/swiftformer) | ✅ | ✅ | ❌ |

+| [Swin Transformer](model_doc/swin) | ✅ | ✅ | ❌ |

+| [Swin Transformer V2](model_doc/swinv2) | ✅ | ❌ | ❌ |

+| [Swin2SR](model_doc/swin2sr) | ✅ | ❌ | ❌ |

+| [SwitchTransformers](model_doc/switch_transformers) | ✅ | ❌ | ❌ |

+| [T5](model_doc/t5) | ✅ | ✅ | ✅ |

+| [T5v1.1](model_doc/t5v1.1) | ✅ | ✅ | ✅ |

+| [Table Transformer](model_doc/table-transformer) | ✅ | ❌ | ❌ |

+| [TAPAS](model_doc/tapas) | ✅ | ✅ | ❌ |

+| [TAPEX](model_doc/tapex) | ✅ | ✅ | ✅ |

+| [Time Series Transformer](model_doc/time_series_transformer) | ✅ | ❌ | ❌ |

+| [TimeSformer](model_doc/timesformer) | ✅ | ❌ | ❌ |

+| [Trajectory Transformer](model_doc/trajectory_transformer) | ✅ | ❌ | ❌ |

+| [Transformer-XL](model_doc/transfo-xl) | ✅ | ✅ | ❌ |

+| [TrOCR](model_doc/trocr) | ✅ | ❌ | ❌ |

+| [TVLT](model_doc/tvlt) | ✅ | ❌ | ❌ |

+| [TVP](model_doc/tvp) | ✅ | ❌ | ❌ |

+| [UDOP](model_doc/udop) | ✅ | ❌ | ❌ |

+| [UL2](model_doc/ul2) | ✅ | ✅ | ✅ |

+| [UMT5](model_doc/umt5) | ✅ | ❌ | ❌ |

+| [UniSpeech](model_doc/unispeech) | ✅ | ❌ | ❌ |

+| [UniSpeechSat](model_doc/unispeech-sat) | ✅ | ❌ | ❌ |

+| [UnivNet](model_doc/univnet) | ✅ | ❌ | ❌ |

+| [UPerNet](model_doc/upernet) | ✅ | ❌ | ❌ |

+| [VAN](model_doc/van) | ✅ | ❌ | ❌ |

+| [VideoLlava](model_doc/video_llava) | ✅ | ❌ | ❌ |

+| [VideoMAE](model_doc/videomae) | ✅ | ❌ | ❌ |

+| [ViLT](model_doc/vilt) | ✅ | ❌ | ❌ |

+| [VipLlava](model_doc/vipllava) | ✅ | ❌ | ❌ |

+| [Vision Encoder decoder](model_doc/vision-encoder-decoder) | ✅ | ✅ | ✅ |

+| [VisionTextDualEncoder](model_doc/vision-text-dual-encoder) | ✅ | ✅ | ✅ |

+| [VisualBERT](model_doc/visual_bert) | ✅ | ❌ | ❌ |

+| [ViT](model_doc/vit) | ✅ | ✅ | ✅ |

+| [ViT Hybrid](model_doc/vit_hybrid) | ✅ | ❌ | ❌ |

+| [VitDet](model_doc/vitdet) | ✅ | ❌ | ❌ |

+| [ViTMAE](model_doc/vit_mae) | ✅ | ✅ | ❌ |

+| [ViTMatte](model_doc/vitmatte) | ✅ | ❌ | ❌ |

+| [ViTMSN](model_doc/vit_msn) | ✅ | ❌ | ❌ |

+| [VITS](model_doc/vits) | ✅ | ❌ | ❌ |

+| [ViViT](model_doc/vivit) | ✅ | ❌ | ❌ |

+| [Wav2Vec2](model_doc/wav2vec2) | ✅ | ✅ | ✅ |

+| [Wav2Vec2-BERT](model_doc/wav2vec2-bert) | ✅ | ❌ | ❌ |

+| [Wav2Vec2-Conformer](model_doc/wav2vec2-conformer) | ✅ | ❌ | ❌ |

+| [Wav2Vec2Phoneme](model_doc/wav2vec2_phoneme) | ✅ | ✅ | ✅ |

+| [WavLM](model_doc/wavlm) | ✅ | ❌ | ❌ |

+| [Whisper](model_doc/whisper) | ✅ | ✅ | ✅ |

+| [X-CLIP](model_doc/xclip) | ✅ | ❌ | ❌ |

+| [X-MOD](model_doc/xmod) | ✅ | ❌ | ❌ |

+| [XGLM](model_doc/xglm) | ✅ | ✅ | ✅ |

+| [XLM](model_doc/xlm) | ✅ | ✅ | ❌ |

+| [XLM-ProphetNet](model_doc/xlm-prophetnet) | ✅ | ❌ | ❌ |

+| [XLM-RoBERTa](model_doc/xlm-roberta) | ✅ | ✅ | ✅ |

+| [XLM-RoBERTa-XL](model_doc/xlm-roberta-xl) | ✅ | ❌ | ❌ |

+| [XLM-V](model_doc/xlm-v) | ✅ | ✅ | ✅ |

+| [XLNet](model_doc/xlnet) | ✅ | ✅ | ❌ |

+| [XLS-R](model_doc/xls_r) | ✅ | ✅ | ✅ |

+| [XLSR-Wav2Vec2](model_doc/xlsr_wav2vec2) | ✅ | ✅ | ✅ |

+| [YOLOS](model_doc/yolos) | ✅ | ❌ | ❌ |

+| [YOSO](model_doc/yoso) | ✅ | ❌ | ❌ |

+| [ZoeDepth](model_doc/zoedepth) | ✅ | ❌ | ❌ |

+

+


+This is a closer approximation to the true decomposition of the sequence probability and will typically yield a more favorable score. The downside is that it requires a separate forward pass for each token in the corpus. A good practical compromise is to use a strided sliding window, moving the context by larger strides rather than sliding by 1 token at a time. This allows computation to proceed much faster while still giving the model a large context to make predictions at each step.
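The strided-window bookkeeping can be sketched without a model: each window spans at most `max_length` tokens and starts `stride` tokens after the previous one, and only the tokens that no earlier window has scored are used as targets. This is a dependency-free sketch assuming `stride <= max_length`; `strided_windows` is a hypothetical helper whose variable names mirror the GPT-2 loop in this guide.

```python
def strided_windows(seq_len: int, max_length: int, stride: int):
    """Yield (begin, end, trg_len) for each window of a strided sliding-window evaluation.

    Assumes stride <= max_length. Each window covers tokens [begin, end), and
    only its last `trg_len` tokens (those not scored by an earlier window) are
    targets; the rest serve purely as context.
    """
    prev_end = 0
    for begin in range(0, seq_len, stride):
        end = min(begin + max_length, seq_len)
        trg_len = end - prev_end  # tokens not yet scored by an earlier window
        yield begin, end, trg_len
        prev_end = end
        if end == seq_len:
            break


if __name__ == "__main__":
    for window in strided_windows(seq_len=10, max_length=4, stride=2):
        print(window)  # (begin, end, trg_len)
```

With `stride == max_length` the windows do not overlap, which is the non-sliding strategy. A smaller stride produces more windows (so more forward passes), but after the first window every target token sees at least `max_length - stride` tokens of context, and the `trg_len` values always sum to `seq_len`, so each token is scored exactly once.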

+

+## Example: Calculating perplexity with GPT-2 in 🤗 Transformers

+

+Let's demonstrate this process with GPT-2.

+

+```python

+import torch

+from transformers import GPT2LMHeadModel, GPT2TokenizerFast

+

+device = "cuda" if torch.cuda.is_available() else "cpu"

+model_id = "openai-community/gpt2-large"

+model = GPT2LMHeadModel.from_pretrained(model_id).to(device)

+tokenizer = GPT2TokenizerFast.from_pretrained(model_id)

+```

+

+We'll load the WikiText-2 dataset and evaluate the perplexity using a few different sliding-window strategies. Since this dataset is small and we're only doing one pass over the set, we can simply load and encode the entire dataset in memory.

+

+```python

+from datasets import load_dataset

+

+test = load_dataset("wikitext", "wikitext-2-raw-v1", split="test")

+encodings = tokenizer("\n\n".join(test["text"]), return_tensors="pt")

+```

+

+With 🤗 Transformers, we can simply pass the `input_ids` as the `labels` to our model, and the average negative log-likelihood for each token is returned as the loss. With our sliding-window approach, however, there is overlap in the tokens we pass to the model at each iteration. We don't want the log-likelihood for the tokens we're only treating as context to be included in our loss, so we can set these targets to `-100` so that they are ignored. The following is an example of how we could do this with a stride of `512`. This means that the model will have at least 512 tokens of context when calculating the conditional likelihood of any one token (provided there are 512 preceding tokens available to condition on).
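The effect of setting context-only labels to `-100` can be shown on plain Python lists: masked positions simply drop out of the average negative log-likelihood, and perplexity is the exponential of that average. This is a simplified sketch assuming a single sequence and per-token log-probabilities already aligned with their targets (it ignores the one-position label shift the model applies internally); `masked_targets` and `mean_nll` are hypothetical helpers.

```python
import math

IGNORE_INDEX = -100  # label value skipped by the loss, as in the GPT-2 example


def masked_targets(input_ids: list, trg_len: int) -> list:
    """Copy `input_ids`, replacing every context-only position with IGNORE_INDEX.

    Mirrors `target_ids[:, :-trg_len] = -100` for a single sequence.
    """
    n_context = len(input_ids) - trg_len
    return [IGNORE_INDEX] * n_context + list(input_ids[n_context:])


def mean_nll(token_log_probs: list, targets: list) -> float:
    """Average negative log-likelihood over positions whose target is not IGNORE_INDEX."""
    kept = [-lp for lp, t in zip(token_log_probs, targets) if t != IGNORE_INDEX]
    return sum(kept) / len(kept)


if __name__ == "__main__":
    targets = masked_targets([5, 6, 7, 8], trg_len=2)
    print(targets)  # [-100, -100, 7, 8]
    nll = mean_nll([-1.0, -2.0, -0.5, -1.5], targets)
    print(nll, math.exp(nll))  # 1.0 and about 2.718
```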

+

+```python

+import torch

+from tqdm import tqdm

+

+max_length = model.config.n_positions

+stride = 512

+seq_len = encodings.input_ids.size(1)

+

+nlls = []

+prev_end_loc = 0

+for begin_loc in tqdm(range(0, seq_len, stride)):

+    end_loc = min(begin_loc + max_length, seq_len)

+    trg_len = end_loc - prev_end_loc  # may be different from stride on the last loop

+    input_ids = encodings.input_ids[:, begin_loc:end_loc].to(device)

+    target_ids = input_ids.clone()

+    target_ids[:, :-trg_len] = -100

+

+    with torch.no_grad():

+        outputs = model(input_ids, labels=target_ids)

+

+        # loss is calculated using CrossEntropyLoss, which averages over valid labels

+        # N.B. the model only calculates loss over trg_len - 1 labels, because it internally shifts the labels to the left by 1.

+        neg_log_likelihood = outputs.loss

+

+    nlls.append(neg_log_likelihood)

+

+    prev_end_loc = end_loc

+    if end_loc == seq_len:

+        break

+

+ppl = torch.exp(torch.stack(nlls).mean())

+```
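The loop above averages the per-window losses with equal weight, even though the final window usually scores fewer tokens than the others. A token-weighted average avoids that small bias. The sketch below is an alternative aggregation, not what the code above computes; `window_counts[i]` is assumed to be the number of labels that window's loss was averaged over, and both helper names are hypothetical.

```python
import math


def perplexity_from_windows(window_losses: list, window_counts: list) -> float:
    """Token-weighted perplexity from per-window mean negative log-likelihoods.

    window_losses[i] is a mean NLL such as `outputs.loss` for window i, and
    window_counts[i] is how many labels that mean was taken over. Weighting by
    the counts recovers the mean NLL over all scored tokens before exponentiating.
    """
    total_nll = sum(loss * n for loss, n in zip(window_losses, window_counts))
    total_tokens = sum(window_counts)
    return math.exp(total_nll / total_tokens)


def perplexity_unweighted(window_losses: list) -> float:
    """Perplexity as the loop above computes it: a plain mean over windows."""
    return math.exp(sum(window_losses) / len(window_losses))


if __name__ == "__main__":
    # Made-up losses for illustration only: the second window scored fewer tokens.
    print(perplexity_from_windows([1.0, 3.0], [3, 1]))  # exp(1.5)
    print(perplexity_unweighted([1.0, 3.0]))  # exp(2.0)
```

When every window scores the same number of tokens the two functions agree; they differ only when some windows (typically the last one) are shorter.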

+

+Running this with the stride length equal to the maximum input length is equivalent to the suboptimal, non-sliding-window strategy we discussed above. The smaller the stride, the more context the model will have in making each prediction, and the better the reported perplexity will typically be.

+

+When we run the above with `stride = 1024`, i.e. no overlap, the resulting perplexity is `19.44`, which is about the same as the `19.93` reported in the GPT-2 paper. By using `stride = 512` and thereby employing our sliding-window strategy, this drops to `16.45`. This is not only a more favorable score, but it is also calculated in a way that is closer to the true autoregressive decomposition of a sequence likelihood.

\ No newline at end of file

diff --git a/docs/transformers/docs/source/ar/philosophy.md b/docs/transformers/docs/source/ar/philosophy.md

new file mode 100644

index 0000000000000000000000000000000000000000..09de2991fba28d3e650aafc30bed104396c50894

--- /dev/null

+++ b/docs/transformers/docs/source/ar/philosophy.md

@@ -0,0 +1,49 @@

+# Philosophy

+

+🤗 Transformers is an opinionated library built for:

+

+- machine learning researchers and educators seeking to use, study, or extend large-scale Transformers models.

+- hands-on practitioners who want to fine-tune those models, serve them in production, or both.

+- engineers who just want to download a pretrained model and use it to solve a given machine learning task.

+

+The library was designed with two strong goals in mind:

+

+1. Be as easy and fast to use as possible:

+

+ - We strongly limited the number of user-facing abstractions to learn; in fact, there are almost no abstractions, just three standard classes required to use each model: [configuration](main_classes/configuration), [models](main_classes/model), and a preprocessing class ([tokenizer](main_classes/tokenizer) for NLP, [image processor](main_classes/image_processor) for vision, [feature extractor](main_classes/feature_extractor) for audio, and [processor](main_classes/processors) for multimodal inputs).

+ - All of these classes can be initialized in a simple and unified way from pretrained instances by using a common `from_pretrained()` method, which downloads (if needed), caches, and loads the related class instance and associated data (configurations' hyperparameters, tokenizers' vocabulary, and models' weights) from a pretrained checkpoint provided on the [Hugging Face Hub](https://huggingface.co/models) or from your own saved checkpoint.

+ - On top of those three base classes, the library provides two APIs: [`pipeline`] for quickly using a model for inference on a given task, and [`Trainer`] to quickly train or fine-tune a PyTorch model (all TensorFlow models are compatible with `Keras.fit`).

+ - As a consequence, this library is NOT a modular toolbox of building blocks for neural nets. If you want to extend or build upon the library, just use regular Python, PyTorch, TensorFlow, and Keras modules, and inherit from the base classes of the library to reuse functionality like model loading and saving. If you'd like to learn more about our coding philosophy for models, check out our [Repeat Yourself](https://huggingface.co/blog/transformers-design-philosophy) blog post.

+

+2. Provide state-of-the-art models with performance as close as possible to the original models:

+

+ - We provide at least one example for each architecture that reproduces a result provided by the official authors of that architecture.

+ - The code is usually as close to the original code base as possible, which means some PyTorch code may not be as *pytorchic* as it could be as a result of being converted TensorFlow code, and vice versa.

+

+A few other goals:

+

+- Expose the models' internals as consistently as possible:

+

+ - We give access, using a single API, to the full hidden states and attention weights.

+ - The APIs of the preprocessing classes and base models are standardized to make it easy to switch between models.

+

+- Incorporate a subjective selection of promising tools for fine-tuning and investigating these models:

+

+ - A simple and consistent way to add new tokens to the vocabulary and embeddings for fine-tuning.

+ - Simple ways to mask and prune Transformer heads.

+

+- Easily switch between PyTorch, TensorFlow 2.0, and Flax, allowing training with one framework and inference with another.

+

+## Main concepts

+

+The library is built around three types of classes for each model:

+

+- **Model classes** can be PyTorch models ([torch.nn.Module](https://pytorch.org/docs/stable/nn.html#torch.nn.Module)), Keras models ([tf.keras.Model](https://www.tensorflow.org/api_docs/python/tf/keras/Model)), or JAX/Flax models ([flax.linen.Module](https://flax.readthedocs.io/en/latest/api_reference/flax.linen/module.html)) that work with the pretrained weights provided in the library.

+- **Configuration classes** store the hyperparameters required to build a model (such as the number of layers and the hidden size). You don't always need to instantiate these yourself. In particular, if you are using a pretrained model without any modification, creating the model will automatically take care of instantiating the configuration (which is part of the model).

+- **Preprocessing classes** convert raw data into a format accepted by the model. A [tokenizer](main_classes/tokenizer) stores the vocabulary for each model and provides methods for encoding strings into, and decoding them from, lists of token embedding indices to be fed to the model. [Image processors](main_classes/image_processor) preprocess vision inputs, [feature extractors](main_classes/feature_extractor) preprocess audio inputs, and a [processor](main_classes/processors) handles multimodal inputs.

+

+All of these classes can be instantiated from pretrained instances, saved locally, and shared on the Hub with three methods:

+

+- `from_pretrained()` lets you instantiate a model, configuration, and preprocessing class from a pretrained version either provided by the library itself (the supported models can be found on the [Model Hub](https://huggingface.co/models)) or stored locally (or on a server) by the user.

+- `save_pretrained()` lets you save a model, configuration, and preprocessing class locally so that they can be reloaded using `from_pretrained()`.

+- `push_to_hub()` lets you share a model, configuration, and preprocessing class on the Hub so that they are easily accessible to everyone.
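The save-and-reload round trip that `save_pretrained()` and `from_pretrained()` provide can be illustrated with a toy class. `ToyConfig` is hypothetical and is not part of 🤗 Transformers: it only mimics the pattern by writing its hyperparameters to a `config.json` file (the same file name the library's configuration classes use) and rebuilding the object from that file. Downloading from the Hub, caching, and `push_to_hub()` are not modeled.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass


@dataclass
class ToyConfig:
    """Hypothetical stand-in for a configuration class (not part of 🤗 Transformers)."""

    num_hidden_layers: int = 12
    hidden_size: int = 768

    def save_pretrained(self, save_directory: str) -> None:
        # Write the hyperparameters to <save_directory>/config.json.
        os.makedirs(save_directory, exist_ok=True)
        with open(os.path.join(save_directory, "config.json"), "w") as f:
            json.dump(asdict(self), f, indent=2)

    @classmethod
    def from_pretrained(cls, directory: str) -> "ToyConfig":
        # Rebuild the object from the saved JSON file.
        with open(os.path.join(directory, "config.json")) as f:
            return cls(**json.load(f))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        ToyConfig(num_hidden_layers=4).save_pretrained(tmp)
        print(ToyConfig.from_pretrained(tmp))  # ToyConfig(num_hidden_layers=4, hidden_size=768)
```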

diff --git a/docs/transformers/docs/source/ar/pipeline_tutorial.md b/docs/transformers/docs/source/ar/pipeline_tutorial.md

new file mode 100644

index 0000000000000000000000000000000000000000..2dd713a6533f6e3831bdde6116f16bbdcf573f57

--- /dev/null

+++ b/docs/transformers/docs/source/ar/pipeline_tutorial.md

@@ -0,0 +1,315 @@

+# Pipelines for inference

+

+The [`pipeline`] makes it simple to use any model from the [Hub](https://huggingface.co/models) for inference on any language, computer vision, speech, or multimodal task. Even if you don't have experience with a specific modality or aren't familiar with the underlying code behind the models, you can still use them for inference with the [`pipeline`]! This tutorial will teach you to:

+

+* Use a [`pipeline`] for inference.

+* Use a specific tokenizer or model.

+* Use a [`pipeline`] for audio, vision, and multimodal tasks.

+

+

+

+هذا تقريب أقرب للتفكيك الحقيقي لاحتمالية التسلسل وسيؤدي عادةً إلى نتيجة أفضل.لكن الجانب السلبي هو أنه يتطلب تمريرًا للأمام لكل رمز في مجموعة البيانات. حل وسط عملي مناسب هو استخدام نافذة منزلقة بخطوة، بحيث يتم تحريك السياق بخطوات أكبر بدلاً من الانزلاق بمقدار 1 رمز في كل مرة. مما يسمح بإجراء الحساب بشكل أسرع مع إعطاء النموذج سياقًا كبيرًا للتنبؤات في كل خطوة.

+

+## مثال: حساب التعقيد اللغوي مع GPT-2 في 🤗 Transformers

+

+دعونا نوضح هذه العملية مع GPT-2.

+

+```python

+from transformers import GPT2LMHeadModel, GPT2TokenizerFast

+

+device = "cuda"

+model_id = "openai-community/gpt2-large"

+model = GPT2LMHeadModel.from_pretrained(model_id).to(device)

+tokenizer = GPT2TokenizerFast.from_pretrained(model_id)

+```

+

+سنقوم بتحميل مجموعة بيانات WikiText-2 وتقييم التعقيد اللغوي باستخدام بعض إستراتيجيات مختلفة النافذة المنزلقة. نظرًا لأن هذه المجموعة البيانات الصغيرة ونقوم فقط بمسح واحد فقط للمجموعة، فيمكننا ببساطة تحميل مجموعة البيانات وترميزها بالكامل في الذاكرة.

+

+```python

+from datasets import load_dataset

+

+test = load_dataset("wikitext", "wikitext-2-raw-v1", split="test")

+encodings = tokenizer("\n\n".join(test["text"]), return_tensors="pt")

+```

+

+مع 🤗 Transformers، يمكننا ببساطة تمرير `input_ids` كـ `labels` إلى نموذجنا، وسيتم إرجاع متوسط احتمالية السجل السالب لكل رمز كخسارة. ومع ذلك، مع نهج النافذة المنزلقة، هناك تداخل في الرموز التي نمررها إلى النموذج في كل تكرار. لا نريد تضمين احتمالية السجل للرموز التي نتعامل معها كسياق فقط في خسارتنا، لذا يمكننا تعيين هذه الأهداف إلى `-100` بحيث يتم تجاهلها. فيما يلي هو مثال على كيفية القيام بذلك بخطوة تبلغ `512`. وهذا يعني أن النموذج سيكون لديه 512 رمزًا على الأقل للسياق عند حساب الاحتمالية الشرطية لأي رمز واحد (بشرط توفر 512 رمزًا سابقًا متاحًا للاشتقاق).

+

+```python

+import torch

+from tqdm import tqdm

+

+max_length = model.config.n_positions

+stride = 512

+seq_len = encodings.input_ids.size(1)

+

+nlls = []

+prev_end_loc = 0

+for begin_loc in tqdm(range(0, seq_len, stride)):
+    end_loc = min(begin_loc + max_length, seq_len)
+    trg_len = end_loc - prev_end_loc  # may be different from stride on the last loop
+    input_ids = encodings.input_ids[:, begin_loc:end_loc].to(device)
+    target_ids = input_ids.clone()
+    target_ids[:, :-trg_len] = -100
+
+    with torch.no_grad():
+        outputs = model(input_ids, labels=target_ids)
+
+        # The loss is computed with CrossEntropyLoss, which averages over the valid (non -100) labels.
+        # N.B. the model shifts the labels left by 1 internally, so the first label in the window is never
+        # scored; when no position is masked (e.g. the first window), the loss covers trg_len - 1 labels.
+        neg_log_likelihood = outputs.loss
+
+    nlls.append(neg_log_likelihood)
+
+    prev_end_loc = end_loc
+    if end_loc == seq_len:
+        break

+

+ppl = torch.exp(torch.stack(nlls).mean())

+```
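The loop above gives each window's mean loss equal weight in `torch.stack(nlls).mean()`, even though the first window scores up to `max_length - 1` tokens and the last one may score fewer than `stride`. One possible refinement, which is not the method used to produce the numbers reported below, is to weight each window by its number of scored tokens before exponentiating. A minimal sketch with made-up loss values:

```python
import math


def perplexity_from_windows(window_losses, window_token_counts):
    """Token-weighted perplexity from per-window mean losses.

    Each window's mean loss is multiplied back by the number of tokens it was
    averaged over, so windows that score more tokens weigh more.
    """
    total_nll = sum(loss * n for loss, n in zip(window_losses, window_token_counts))
    total_tokens = sum(window_token_counts)
    return math.exp(total_nll / total_tokens)


def perplexity_mean_of_means(window_losses):
    """Unweighted variant: exp of the plain mean of the window losses."""
    return math.exp(sum(window_losses) / len(window_losses))


losses = [3.0, 2.0]  # hypothetical per-window mean losses
counts = [1023, 1]   # hypothetical number of scored tokens per window
print(perplexity_from_windows(losses, counts))
print(perplexity_mean_of_means(losses))
```

When every window scores the same number of tokens, the two functions agree. They diverge only when a short window, such as the final one, is given as much weight as a full one.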

+

+Running this with the stride length equal to the maximum input length is equivalent to the suboptimal, non-sliding-window strategy we discussed above. The smaller the stride, the more context the model has when making each prediction, and the better the reported perplexity will typically be.

+

+When we run the above with `stride = 1024`, i.e. no overlap, the resulting perplexity is `19.44`, which is about the same as the `19.93` reported in the GPT-2 paper. By using `stride = 512`, and thereby the sliding-window strategy, it drops to `16.45`. Not only is this a better score, it is also computed in a way that is closer to the true autoregressive decomposition of the sequence likelihood.

\ No newline at end of file

diff --git a/docs/transformers/docs/source/ar/philosophy.md b/docs/transformers/docs/source/ar/philosophy.md

new file mode 100644

index 0000000000000000000000000000000000000000..09de2991fba28d3e650aafc30bed104396c50894

--- /dev/null

+++ b/docs/transformers/docs/source/ar/philosophy.md

@@ -0,0 +1,49 @@

+# Philosophy

+

+🤗 Transformers is an opinionated library built for:

+

+- machine learning researchers and educators seeking to use, study or extend large-scale Transformers models.

+- hands-on practitioners who want to fine-tune those models, serve them in production, or both.

+- engineers who just want to download a pretrained model and use it to solve a given machine learning task.

+

+The library was designed with two strong goals in mind:

+

+1. Be as easy and fast to use as possible:

+

+   - We strongly limited the number of user-facing abstractions to learn. In fact, there are almost no abstractions, just three standard classes required to use each model: [configuration](main_classes/configuration), [models](main_classes/model), and a preprocessing class ([tokenizer](main_classes/tokenizer) for NLP, [image processor](main_classes/image_processor) for vision, [feature extractor](main_classes/feature_extractor) for audio, and [processor](main_classes/processors) for multimodal inputs).

+   - All of these classes can be initialized in a simple and unified way from pretrained instances using a common `from_pretrained()` method, which downloads (if needed), caches, and loads the related class instance and its associated data (configuration hyperparameters, tokenizer vocabulary, and model weights) from a pretrained checkpoint on the [Hugging Face Hub](https://huggingface.co/models) or from your own saved checkpoint.

+   - On top of those three base classes, the library provides two APIs: [`pipeline`] for quickly using a model for inference on a given task, and [`Trainer`] for quickly training or fine-tuning a PyTorch model (all TensorFlow models are compatible with `Keras.fit`).

+   - As a consequence, this library is NOT a modular toolbox of building blocks for neural nets. If you want to extend or build upon the library, just use regular Python, PyTorch, TensorFlow, or Keras modules and inherit from the library's base classes to reuse functionality such as model loading and saving. To learn more about our coding philosophy for models, check out our [Repeat Yourself](https://huggingface.co/blog/transformers-design-philosophy) blog post.

+

+2. Provide state-of-the-art models with performance as close as possible to the original models:

+

+   - We provide at least one example for each architecture that reproduces a result reported by the official authors of that architecture.

+   - The code is usually as close to the original code base as possible, which means some PyTorch code may not be as *pytorchic* as it could be because it was converted from TensorFlow code, and vice versa.

+

+A few other goals:

+

+- Expose the models' internals as consistently as possible:

+

+  - We give access, through a single API, to the full hidden states and attention weights.

+  - The preprocessing classes and base model APIs are standardized to make it easy to switch between models.

+

+- Incorporate a selection of promising tools for fine-tuning and investigating these models:

+

+  - A simple and consistent way to add new tokens to the vocabulary and embeddings for fine-tuning.

+  - Simple ways to mask and prune Transformer heads.

+

+- Easily switch between PyTorch, TensorFlow 2.0, and Flax, allowing training with one framework and inference with another.
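As an illustration of the first two goals, the sketch below builds a tiny, randomly initialized GPT-2. The sizes are arbitrary assumptions, chosen so that nothing needs to be downloaded. The sketch requests all hidden states through the standard `output_hidden_states=True` argument. It then enlarges the embedding matrix with `resize_token_embeddings()`, as one would after adding new tokens to a tokenizer. Attention weights can be requested in the same way with `output_attentions=True`, which is not shown here.

```python
import torch
from transformers import GPT2Config, GPT2LMHeadModel

# Tiny, randomly initialized model (illustrative sizes, no download needed).
config = GPT2Config(n_layer=2, n_head=2, n_embd=64, n_positions=128, vocab_size=1000)
model = GPT2LMHeadModel(config).eval()

input_ids = torch.randint(0, config.vocab_size, (1, 8))  # random token ids, batch of 1
with torch.no_grad():
    outputs = model(input_ids, output_hidden_states=True)

# One tensor for the embedding output plus one per layer, each (batch, seq_len, n_embd).
hidden_states = outputs.hidden_states
print(len(hidden_states), hidden_states[-1].shape)

# Grow the embedding matrix to make room for two new tokens.
model.resize_token_embeddings(config.vocab_size + 2)
print(model.get_input_embeddings().weight.shape)
```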

+

+## Main concepts

+

+The library is built around three types of classes for each model:

+

+- **Model classes** can be PyTorch models ([torch.nn.Module](https://pytorch.org/docs/stable/nn.html#torch.nn.Module)), Keras models ([tf.keras.Model](https://www.tensorflow.org/api_docs/python/tf/keras/Model)), or JAX/Flax models ([flax.linen.Module](https://flax.readthedocs.io/en/latest/api_reference/flax.linen/module.html)) that work with the pretrained weights provided in the library.

+- **Configuration classes** store the hyperparameters required to build a model (such as the number of layers and the hidden size). You don't always need to instantiate these yourself. In particular, if you are using a pretrained model without any modification, creating the model automatically takes care of instantiating the configuration (which is part of the model).

+- **Preprocessing classes** convert raw data into a format accepted by the model. A [tokenizer](main_classes/tokenizer) stores the vocabulary for each model and provides methods for encoding strings into, and decoding them from, lists of token embedding indices to be fed to the model. [Image processors](main_classes/image_processor) preprocess vision inputs, [feature extractors](main_classes/feature_extractor) preprocess audio inputs, and a [processor](main_classes/processors) handles multimodal inputs.
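A minimal sketch of how the configuration and model classes relate, assuming PyTorch and 🤗 Transformers are installed. The tiny GPT-2 sizes are arbitrary, and the weights are random because nothing is loaded from the Hub. A tokenizer is left out because constructing one requires vocabulary files.

```python
import torch
from transformers import GPT2Config, GPT2LMHeadModel

# Configuration class: only stores hyperparameters (tiny, illustrative sizes).
config = GPT2Config(n_layer=2, n_head=2, n_embd=64, n_positions=128, vocab_size=1000)

# Model class: a regular torch.nn.Module built from that configuration
# (randomly initialized, since no pretrained weights are loaded).
model = GPT2LMHeadModel(config)

print(isinstance(model, torch.nn.Module))  # True
print(model.config.n_layer)                # 2
```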

+

+All these classes can be instantiated from pretrained instances, saved locally, and shared on the Hub with three methods:

+

+- `from_pretrained()` lets you instantiate a model, configuration, and preprocessing class from a pretrained version that is either provided by the library itself (the supported models can be found on the [Model Hub](https://huggingface.co/models)) or stored locally (or on a server) by the user.

+- `save_pretrained()` lets you save a model, configuration, and preprocessing class locally so that it can be reloaded with `from_pretrained()`.

+- `push_to_hub()` lets you share a model, configuration, and preprocessing class on the Hub, so it is easily accessible to everyone.
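The round trip between `save_pretrained()` and `from_pretrained()` can be sketched with a local temporary directory. The sketch again uses a tiny, randomly initialized GPT-2 whose sizes are illustrative assumptions. `push_to_hub()` is omitted because it requires a Hugging Face account and authentication.

```python
import tempfile

import torch
from transformers import GPT2Config, GPT2LMHeadModel

config = GPT2Config(n_layer=2, n_head=2, n_embd=64, n_positions=128, vocab_size=1000)
model = GPT2LMHeadModel(config)

with tempfile.TemporaryDirectory() as save_dir:
    # Write the configuration and weights to a local directory.
    model.save_pretrained(save_dir)
    # Rebuild the model, its configuration, and its weights from that directory.
    reloaded = GPT2LMHeadModel.from_pretrained(save_dir)

# Compare every saved tensor by name.
reloaded_state = reloaded.state_dict()
same_weights = all(torch.equal(value, reloaded_state[name]) for name, value in model.state_dict().items())
print(same_weights, reloaded.config.n_layer)
```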

diff --git a/docs/transformers/docs/source/ar/pipeline_tutorial.md b/docs/transformers/docs/source/ar/pipeline_tutorial.md

new file mode 100644

index 0000000000000000000000000000000000000000..2dd713a6533f6e3831bdde6116f16bbdcf573f57

--- /dev/null

+++ b/docs/transformers/docs/source/ar/pipeline_tutorial.md

@@ -0,0 +1,315 @@

+# Pipelines for inference

+

+The [`pipeline`] makes it simple to use any model from the [Hub](https://huggingface.co/models) for inference on any language, computer vision, speech, or multimodal task. Even if you don't have experience with a specific modality or aren't familiar with the underlying code behind the models, you can still use them for inference with the [`pipeline`]! This tutorial will teach you to:

+

+* Use a [`pipeline`] for inference.

+* Use a specific tokenizer or model.

+* Use a [`pipeline`] for audio, vision, and multimodal tasks.

+