Update README.md

Browse files

README.md

CHANGED

|

@@ -99,88 +99,48 @@ pixel_values = pixel_values.to(torch.bfloat16).cuda()

|

|

| 99 |

outputs = model(pixel_values)

|

| 100 |

```

|

| 101 |

|

| 102 |

-

##

|

| 103 |

-

|

| 104 |

-

we employ the TokenOCR as the visual foundation model and further develop an MLLM, named TokenVL, tailored for document understanding.

|

| 105 |

-

Following the previous training paradigm, TokenVL also includes two stages:

|

| 106 |

-

**Stage 1: LLM-guided Token Alignment Training for text parsing tasks.**

|

| 107 |

-

**Stage 2: Supervised Instruction Tuning for VQA tasks.**

|

| 108 |

-

|

| 109 |

-

## Model Architecture

|

| 110 |

-

|

| 111 |

-

As shown in the following figure, InternVL 2.5 retains the same model architecture as its predecessors, InternVL 1.5 and 2.0, following the "ViT-MLP-LLM" paradigm. In this new version, we integrate a newly incrementally pre-trained InternViT with various pre-trained LLMs, including InternLM 2.5 and Qwen 2.5, using a randomly initialized MLP projector.

|

| 112 |

-

|

| 113 |

-

|

| 114 |

-

|

| 115 |

-

As in the previous version, we applied a pixel unshuffle operation, reducing the number of visual tokens to one-quarter of the original. Besides, we adopted a similar dynamic resolution strategy as InternVL 1.5, dividing images into tiles of 448×448 pixels. The key difference, starting from InternVL 2.0, is that we additionally introduced support for multi-image and video data.

|

| 116 |

-

|

| 117 |

-

## Training Strategy

|

| 118 |

-

|

| 119 |

-

### Dynamic High-Resolution for Multimodal Data

|

| 120 |

-

|

| 121 |

-

In InternVL 2.0 and 2.5, we extend the dynamic high-resolution training approach, enhancing its capabilities to handle multi-image and video datasets.

|

| 122 |

-

|

| 123 |

-

|

| 124 |

-

|

| 125 |

-

- For single-image datasets, the total number of tiles `n_max` are allocated to a single image for maximum resolution. Visual tokens are enclosed in `<img>` and `</img>` tags.

|

| 126 |

-

|

| 127 |

-

- For multi-image datasets, the total number of tiles `n_max` are distributed across all images in a sample. Each image is labeled with auxiliary tags like `Image-1` and enclosed in `<img>` and `</img>` tags.

|

| 128 |

-

|

| 129 |

-

- For videos, each frame is resized to 448×448. Frames are labeled with tags like `Frame-1` and enclosed in `<img>` and `</img>` tags, similar to images.

|

| 130 |

-

|

| 131 |

-

### Single Model Training Pipeline

|

| 132 |

-

|

| 133 |

-

The training pipeline for a single model in InternVL 2.5 is structured across three stages, designed to enhance the model's visual perception and multimodal capabilities.

|

| 134 |

-

|

| 135 |

-

|

| 136 |

-

|

| 137 |

-

- **Stage 1: MLP Warmup.** In this stage, only the MLP projector is trained while the vision encoder and language model are frozen. A dynamic high-resolution training strategy is applied for better performance, despite increased cost. This phase ensures robust cross-modal alignment and prepares the model for stable multimodal training.

|

| 138 |

-

|

| 139 |

-

- **Stage 1.5: ViT Incremental Learning (Optional).** This stage allows incremental training of the vision encoder and MLP projector using the same data as Stage 1. It enhances the encoder’s ability to handle rare domains like multilingual OCR and mathematical charts. Once trained, the encoder can be reused across LLMs without retraining, making this stage optional unless new domains are introduced.

|

| 140 |

-

|

| 141 |

-

- **Stage 2: Full Model Instruction Tuning.** The entire model is trained on high-quality multimodal instruction datasets. Strict data quality controls are enforced to prevent degradation of the LLM, as noisy data can cause issues like repetitive or incorrect outputs. After this stage, the training process is complete.

|

| 142 |

-

|

| 143 |

-

## Evaluation on Vision Capability

|

| 144 |

|

| 145 |

We present a comprehensive evaluation of the vision encoder’s performance across various domains and tasks. The evaluation is divided into two key categories: (1) image classification, representing global-view semantic quality, and (2) semantic segmentation, capturing local-view semantic quality. This approach allows us to assess the representation quality of InternViT across its successive version updates. Please refer to our technical report for more details.

|

| 146 |

|

| 147 |

-

## Image Classification

|

| 148 |

|

| 149 |

|

| 150 |

|

| 151 |

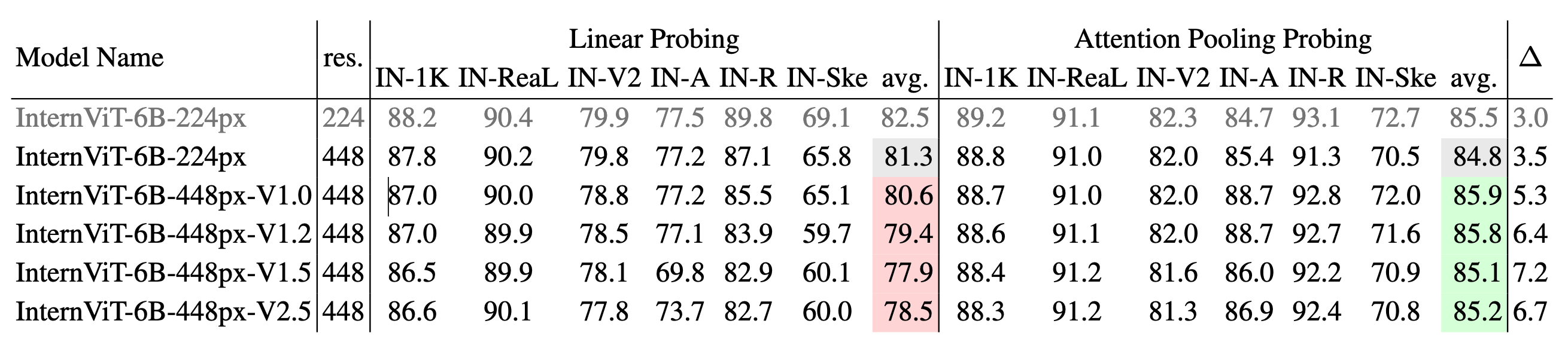

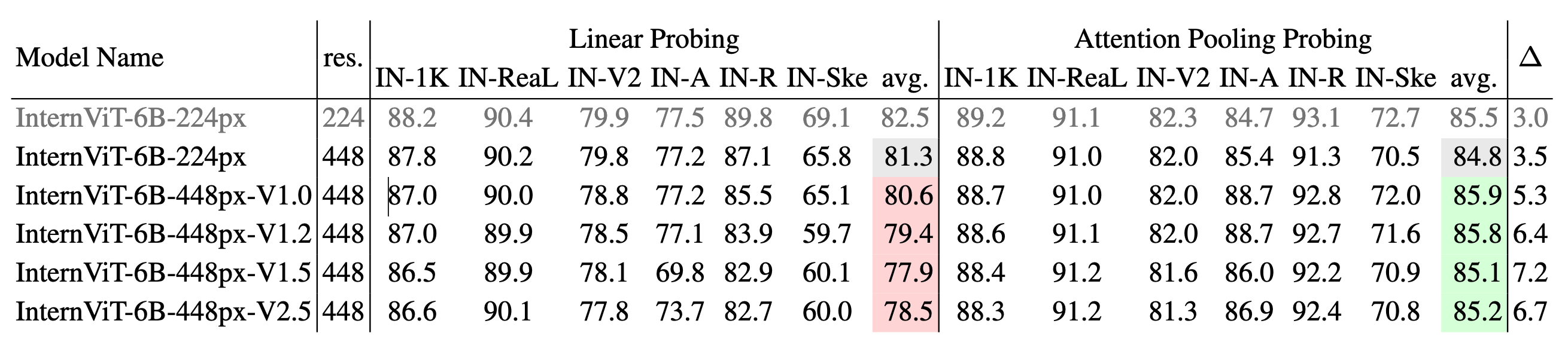

**Image classification performance across different versions of InternViT.** We use IN-1K for training and evaluate on the IN-1K validation set as well as multiple ImageNet variants, including IN-ReaL, IN-V2, IN-A, IN-R, and IN-Sketch. Results are reported for both linear probing and attention pooling probing methods, with average accuracy for each method. ∆ represents the performance gap between attention pooling probing and linear probing, where a larger ∆ suggests a shift from learning simple linear features to capturing more complex, nonlinear semantic representations.

|

| 152 |

|

| 153 |

-

## Semantic Segmentation Performance

|

| 154 |

|

| 155 |

|

| 156 |

|

| 157 |

**Semantic segmentation performance across different versions of InternViT.** The models are evaluated on ADE20K and COCO-Stuff-164K using three configurations: linear probing, head tuning, and full tuning. The table shows the mIoU scores for each configuration and their averages. ∆1 represents the gap between head tuning and linear probing, while ∆2 shows the gap between full tuning and linear probing. A larger ∆ value indicates a shift from simple linear features to more complex, nonlinear representations.

|

| 158 |

|

| 159 |

-

## Quick Start

|

| 160 |

|

| 161 |

-

|

| 162 |

-

> 🚨 Note: In our experience, the InternViT V2.5 series is better suited for building MLLMs than traditional computer vision tasks.

|

| 163 |

|

| 164 |

-

|

| 165 |

-

|

| 166 |

-

from PIL import Image

|

| 167 |

-

from transformers import AutoModel, CLIPImageProcessor

|

| 168 |

|

| 169 |

-

|

| 170 |

-

'OpenGVLab/InternViT-300M-448px-V2_5',

|

| 171 |

-

torch_dtype=torch.bfloat16,

|

| 172 |

-

low_cpu_mem_usage=True,

|

| 173 |

-

trust_remote_code=True).cuda().eval()

|

| 174 |

|

| 175 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 176 |

|

| 177 |

-

image_processor = CLIPImageProcessor.from_pretrained('OpenGVLab/InternViT-300M-448px-V2_5')

|

| 178 |

|

| 179 |

-

pixel_values = image_processor(images=image, return_tensors='pt').pixel_values

|

| 180 |

-

pixel_values = pixel_values.to(torch.bfloat16).cuda()

|

| 181 |

|

| 182 |

-

outputs = model(pixel_values)

|

| 183 |

-

```

|

| 184 |

|

| 185 |

## License

|

| 186 |

|

|

|

|

| 99 |

outputs = model(pixel_values)

|

| 100 |

```

|

| 101 |

|

| 102 |

+

### Evaluation on Vision Capability

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 103 |

|

| 104 |

We present a comprehensive evaluation of the vision encoder’s performance across various domains and tasks. The evaluation is divided into two key categories: (1) image classification, representing global-view semantic quality, and (2) semantic segmentation, capturing local-view semantic quality. This approach allows us to assess the representation quality of InternViT across its successive version updates. Please refer to our technical report for more details.

|

| 105 |

|

| 106 |

+

#### Image Classification

|

| 107 |

|

| 108 |

|

| 109 |

|

| 110 |

**Image classification performance across different versions of InternViT.** We use IN-1K for training and evaluate on the IN-1K validation set as well as multiple ImageNet variants, including IN-ReaL, IN-V2, IN-A, IN-R, and IN-Sketch. Results are reported for both linear probing and attention pooling probing methods, with average accuracy for each method. ∆ represents the performance gap between attention pooling probing and linear probing, where a larger ∆ suggests a shift from learning simple linear features to capturing more complex, nonlinear semantic representations.

|

| 111 |

|

| 112 |

+

#### Semantic Segmentation Performance

|

| 113 |

|

| 114 |

|

| 115 |

|

| 116 |

**Semantic segmentation performance across different versions of InternViT.** The models are evaluated on ADE20K and COCO-Stuff-164K using three configurations: linear probing, head tuning, and full tuning. The table shows the mIoU scores for each configuration and their averages. ∆1 represents the gap between head tuning and linear probing, while ∆2 shows the gap between full tuning and linear probing. A larger ∆ value indicates a shift from simple linear features to more complex, nonlinear representations.

|

| 117 |

|

|

|

|

| 118 |

|

| 119 |

+

## TokenVL

|

|

|

|

| 120 |

|

| 121 |

+

we employ the TokenOCR as the visual foundation model and further develop an MLLM, named TokenVL, tailored for document understanding.

|

| 122 |

+

Following the previous training paradigm, TokenVL also includes two stages:

|

|

|

|

|

|

|

| 123 |

|

| 124 |

+

**Stage 1: LLM-guided Token Alignment Training for text parsing tasks.**

|

|

|

|

|

|

|

|

|

|

|

|

|

| 125 |

|

| 126 |

+

<div align="center">

|

| 127 |

+

<img width="500" alt="image" src="https://cdn-uploads.huggingface.co/production/uploads/650d4a36cbd0c7d550d3b41b/gDr1fQg7I1nTIsiRWNHTr.png">

|

| 128 |

+

</div>

|

| 129 |

+

|

| 130 |

+

The framework of LLM-guided Token Alignment Training. Existing MLLMs primarily enhance spatial-wise text perception capabilities by integrating localization prompts to predict coordinates. However, this implicit

|

| 131 |

+

method makes it difficult for these models to have a precise understanding.

|

| 132 |

+

In contrast, the proposed token alignment uses BPE token masks to directly and explicitly align text with corresponding pixels in the input image, enhancing the MLLM’s localization awareness.

|

| 133 |

+

|

| 134 |

+

**Stage 2: Supervised Instruction Tuning for VQA tasks.**

|

| 135 |

+

|

| 136 |

+

During the Supervised Instruction Tuning stage, we cancel the token alignment branch as answers may not appear in the image for some reasoning tasks

|

| 137 |

+

(e.g., How much taller is the red bar compared to the green bar?). This also ensures no computational overhead during inference to improve the document understanding capability. Finally, we inherit the

|

| 138 |

+

remaining weights from the LLM-guided Token Alignment and unfreeze all parameters to facilitate comprehensive parameter updates.

|

| 139 |

+

|

| 140 |

+

### OCRBench Results

|

| 141 |

|

|

|

|

| 142 |

|

|

|

|

|

|

|

| 143 |

|

|

|

|

|

|

|

| 144 |

|

| 145 |

## License

|

| 146 |

|