---
license: mit
datasets:
- VisualSphinx/VisualSphinx-Seeds
language:
- en
base_model:
- Qwen/Qwen2.5-VL-7B-Instruct
---

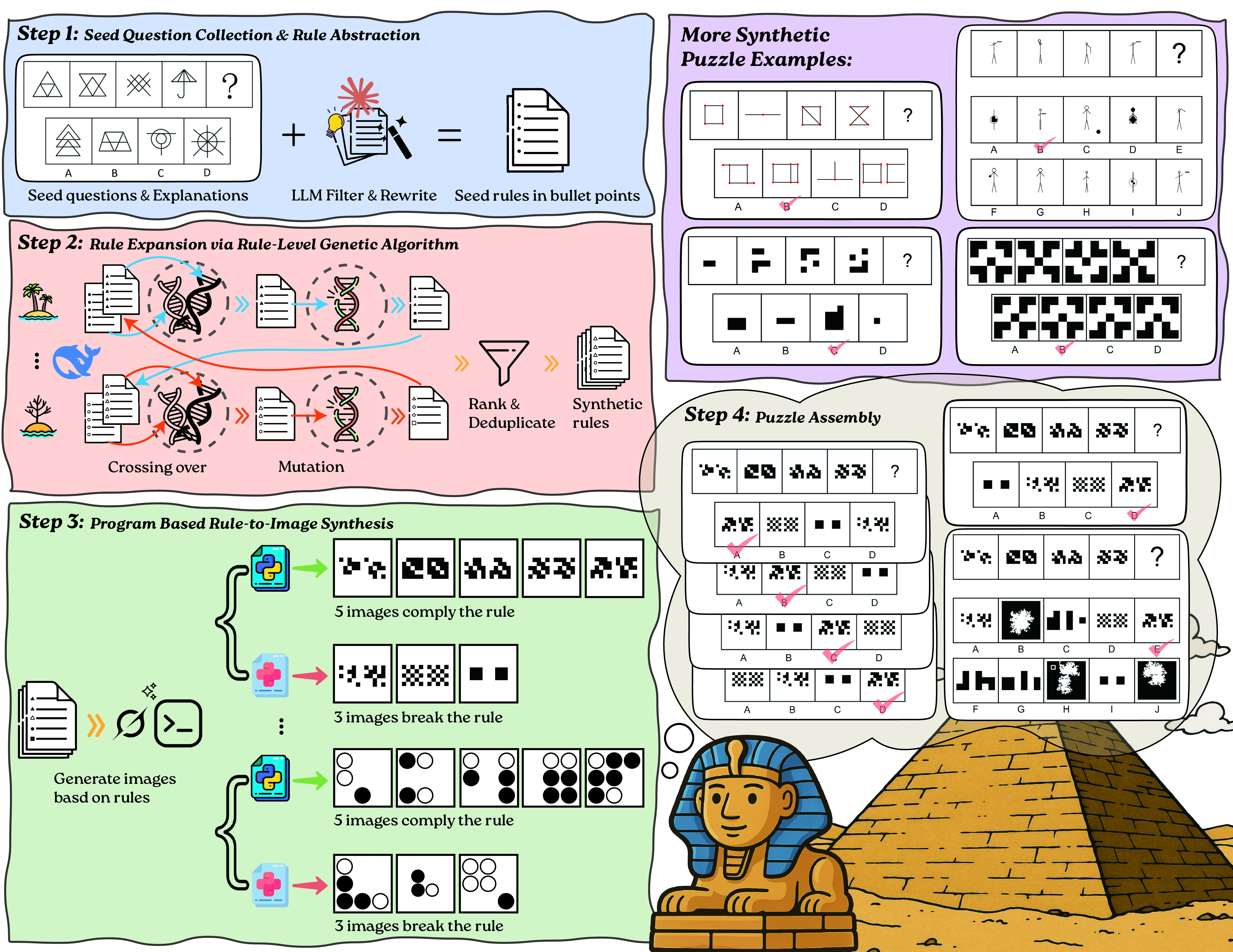

# 🦁 VisualSphinx: Large-Scale Synthetic Vision Logic Puzzles for RL

VisualSphinx is the largest fully synthetic, open-source dataset of vision logic puzzles. It consists of over **660K** automatically generated visual logic puzzles, each grounded in an interpretable rule and accompanied by both the correct answer and plausible distractors.
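
Because every puzzle comes with a single correct answer among distractors, a model's response can be checked mechanically, which is what makes puzzles like these usable as a verifiable reward in RL. The sketch below is illustrative only: `PuzzleRecord`, its `rule`/`options`/`answer` fields, and the assumption that the model answers with a bare option letter (`A`, `B`, ...) are hypothetical and are not the released dataset's schema or the authors' reward code.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class PuzzleRecord:
    """Hypothetical layout of one puzzle; field names are illustrative only."""
    rule: str           # interpretable rule the puzzle is grounded in
    options: list[str]  # the correct answer plus plausible distractors
    answer: int         # index of the correct option in `options`

    def letters(self) -> list[str]:
        # Label options A, B, C, ... in their listed order.
        return [chr(ord("A") + i) for i in range(len(self.options))]


def extract_choice(response: str, valid: list[str]) -> Optional[str]:
    """Return the last standalone option letter in the response, or None."""
    pattern = r"\b(" + "|".join(map(re.escape, valid)) + r")\b"
    matches = re.findall(pattern, response)
    return matches[-1] if matches else None


def reward(record: PuzzleRecord, response: str) -> float:
    """Binary verifiable reward: 1.0 if the chosen letter is correct, else 0.0."""
    letters = record.letters()
    choice = extract_choice(response, letters)
    return 1.0 if choice == letters[record.answer] else 0.0


record = PuzzleRecord(
    rule="Each panel adds one more black cell than the previous panel.",
    options=["option 1", "option 2", "option 3", "option 4"],
    answer=2,
)
print(reward(record, "The count grows by one each step, so the answer is C"))  # 1.0
print(reward(record, "I think it is A"))  # 0.0
```

A bare-letter parser like this is fragile (for example, the article "A" at the end of a sentence would be read as a choice), so a real pipeline would more likely require a structured answer format before scoring.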

- 🌐 [Project Website](https://visualsphinx.github.io/) - Learn more about VisualSphinx
- 📖 [Technical Report](https://arxiv.org/abs/2505.23977) - Discover the methodology and technical details behind VisualSphinx
- 🔧 [GitHub Repo](https://github.com/VisualSphinx/VisualSphinx) - Access the complete pipeline used to produce VisualSphinx-V1
- 🤗 HF Datasets:
  - [VisualSphinx-V1 (Raw)](https://huggingface.co/datasets/VisualSphinx/VisualSphinx-V1-Raw);
  - [VisualSphinx-V1 (For RL)](https://huggingface.co/datasets/VisualSphinx/VisualSphinx-V1-RL-20K);
  - [VisualSphinx-V1 (Benchmark)](https://huggingface.co/datasets/VisualSphinx/VisualSphinx-V1-Benchmark);
  - [VisualSphinx (Seeds)](https://huggingface.co/datasets/VisualSphinx/VisualSphinx-Seeds);
  - [VisualSphinx (Rules)](https://huggingface.co/datasets/VisualSphinx/VisualSphinx-V1-Rules).

## 📊 About This Model

This model is used to tag the difficulty of the puzzles in our [VisualSphinx-V1](https://huggingface.co/datasets/VisualSphinx/VisualSphinx-V1-Raw) synthetic dataset. To train it, we ran GRPO on Qwen/Qwen2.5-VL-7B-Instruct for 256 steps using our [seed dataset](https://huggingface.co/datasets/VisualSphinx/VisualSphinx-Seeds).
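
GRPO (Group Relative Policy Optimization) samples a group of responses for each prompt and scores each response relative to the rest of its group, rather than relying on a learned value function. The sketch below shows that group-normalized advantage and, as an assumption not described in this card, one way a GRPO-tuned model could tag difficulty: sample several rollouts per puzzle and bucket the pass rate. The function names, the 8-rollout group, and the 0.25/0.75 thresholds are hypothetical; this is not the training or tagging code used for VisualSphinx.

```python
from __future__ import annotations

from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantages: (r - group mean) / (group std + eps).

    Population std is used here; eps keeps the division finite when every
    rollout gets the same reward (all advantages are then 0).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def tag_difficulty(rewards: list[float], easy: float = 0.75, hard: float = 0.25) -> str:
    """Hypothetical tagging rule: bucket a puzzle by the model's pass rate."""
    pass_rate = mean(rewards)
    if pass_rate >= easy:
        return "easy"
    if pass_rate <= hard:
        return "hard"
    return "medium"


# Binary rewards from 8 hypothetical rollouts on one puzzle (2 of 8 correct).
rollouts = [1.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0]
print([round(a, 3) for a in group_relative_advantages(rollouts)])
print(tag_difficulty(rollouts))  # hard
```

Correct rollouts in a mostly failing group receive large positive advantages, while groups where every rollout succeeds (or fails) contribute no learning signal, which is one reason pass rate is a natural difficulty proxy.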
