---

license: apache-2.0
language:
- en
- zh
base_model:
- Qwen/Qwen-Image
pipeline_tag: image-to-image
library_name: diffusers

---

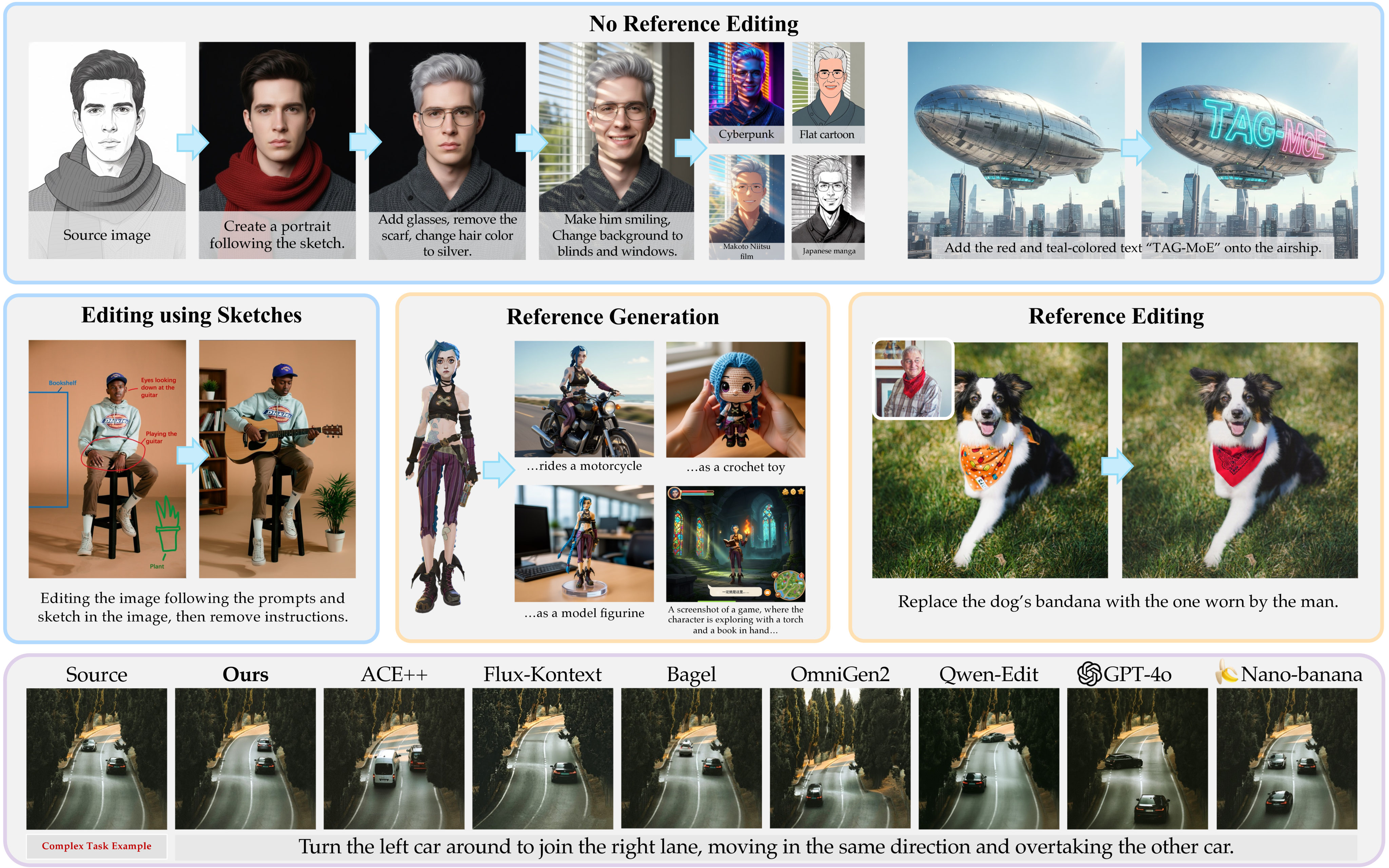

# TAG-MoE: Task-Aware Gating for Unified Generative Mixture-of-Experts

> **TAG-MoE: Task-Aware Gating for Unified Generative Mixture-of-Experts**<br>
> Yu Xu<sup>1,2†</sup>, Hongbin Yan<sup>1</sup>, Juan Cao<sup>1</sup>, Yiji Cheng<sup>2</sup>, Tiankai Hang<sup>2</sup>, Runze He<sup>2</sup>, Zijin Yin<sup>2</sup>, Shiyi Zhang<sup>2</sup>, Yuxin Zhang<sup>1</sup>, Jintao Li<sup>1</sup>, Chunyu Wang<sup>2‡</sup>, Qinglin Lu<sup>2</sup>, Tong-Yee Lee<sup>3</sup>, Fan Tang<sup>1§</sup><br>
> <sup>1</sup>University of Chinese Academy of Sciences, <sup>2</sup>Tencent Hunyuan, <sup>3</sup>National Cheng-Kung University

<a href='https://arxiv.org/abs/2601.08881'><img src='https://img.shields.io/badge/ArXiv-2601.08881-red'></a>
<a href='https://yuci-gpt.github.io/TAG-MoE/'><img src='https://img.shields.io/badge/Project%20Page-homepage-green'></a>
<a href='https://github.com/ICTMCG/TAG-MoE'><img src='https://img.shields.io/badge/github-repo-blue?logo=github'></a>
<a href='https://huggingface.co/spaces/YUXU915/TAG-MoE'><img src='https://img.shields.io/badge/HuggingFace-demo-4CAF50?logo=huggingface'></a>

> **Abstract**:<br>
> Unified image generation and editing models suffer from severe task interference in dense diffusion transformer architectures, where a shared parameter space must compromise between conflicting objectives (e.g., local editing vs. subject-driven generation). While the sparse Mixture-of-Experts (MoE) paradigm is a promising solution, its gating networks remain task-agnostic, operating based on local features, unaware of global task intent. This task-agnostic nature prevents meaningful specialization and fails to resolve the underlying task interference. In this paper, we propose a novel framework to inject semantic intent into MoE routing. We introduce a Hierarchical Task Semantic Annotation scheme to create structured task descriptors (e.g., scope, type, preservation). We then design Predictive Alignment Regularization to align internal routing decisions with the task's high-level semantics. This regularization evolves the gating network from a task-agnostic executor to a dispatch center. Our model effectively mitigates task interference, outperforming dense baselines in fidelity and quality, and our analysis shows that experts naturally develop clear and semantically correlated specializations.

---

## 🔧 Environment Setup

We recommend using [uv](https://docs.astral.sh/uv/) with the provided `pyproject.toml` / `uv.lock`.

### 1. Install uv

```bash
curl -LsSf https://astral.sh/uv/install.sh | sh
```

### 2. Create and activate virtual environment

```bash
git clone https://github.com/ICTMCG/TAG-MoE.git && cd TAG-MoE
uv venv --python 3.12 --python-preference only-managed
source .venv/bin/activate
```

### 3. Install dependencies

```bash
UV_EXTRA_INDEX_URL=https://download.pytorch.org/whl/cu126 uv sync
```

---

## 🚀 Inference

> **Note**:
>
> - TAG-MoE inference requires **60GB+ available VRAM**. We ran inference tests on 2× A100 40GB GPUs.
> - We use a VLM to refine the instruction based on the input for better results.
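The VRAM requirement in the note above can be checked before launching. The following is a minimal sketch, assuming `nvidia-smi` is on the `PATH`. The function names `sum_mib` and `has_enough_vram` are illustrative and not part of the repository, and reading "60GB" as 60 × 1024 MiB is an assumption. The script sums free memory across all visible GPUs, which may differ from the set passed to `--device`.

```shell
# Sum per-GPU memory figures (MiB, one per line) read from stdin.
sum_mib() {
  awk '{ total += $1 } END { print total + 0 }'
}

# Succeed if a MiB total reaches the threshold given in GiB (default 60).
# Interpreting "60GB" as 60 * 1024 MiB is an assumption of this sketch.
has_enough_vram() {
  local total_mib=$1 required_gib=${2:-60}
  [ "$total_mib" -ge $(( required_gib * 1024 )) ]
}

# Only query the driver when nvidia-smi is actually installed.
if command -v nvidia-smi >/dev/null 2>&1; then
  total=$(nvidia-smi --query-gpu=memory.free --format=csv,noheader,nounits | sum_mib)
  if has_enough_vram "$total"; then
    echo "OK: ${total} MiB free across visible GPUs"
  else
    echo "Warning: only ${total} MiB free; the note asks for 60GB+" >&2
  fi
fi
```

Free memory is queried rather than total memory because the note asks for *available* VRAM; other processes on the same GPUs reduce that figure.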

```bash
uv run python infer.py \
  --pretrained_model_path Qwen/Qwen-Image \
  --transformer_model_path YUXU915/TAG-MoE \
  --device 0,1 \
  --image input.png \
  --prompt "Change the weather to sunny, remove the umbrella, the girl's hands hung naturally at her sides" \
  --output result.png
```
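To apply one instruction to several images, the command above can be wrapped in a loop. This is a minimal sketch, assuming `infer.py` handles one image per call with exactly the flags shown above. The helper names `output_path_for` and `batch_edit`, the `_edited.png` suffix, and the `DRY_RUN` variable are illustrative and not part of the repository. Each call is a separate process, so the model is presumably reloaded for every image, which makes this practical only for small batches.

```shell
# Map an input image path to an output path inside a results directory,
# e.g. inputs/cat.png -> results/cat_edited.png (naming scheme is hypothetical).
output_path_for() {
  local input=$1 out_dir=$2
  local name
  name=$(basename "$input")
  echo "${out_dir}/${name%.*}_edited.png"
}

# Apply one prompt to every PNG in a directory.
# With DRY_RUN set to a non-empty value, commands are printed instead of run.
batch_edit() {
  local in_dir=$1 out_dir=$2 prompt=$3
  local run=${DRY_RUN:+echo}
  local img
  mkdir -p "$out_dir"
  for img in "$in_dir"/*.png; do
    [ -e "$img" ] || continue   # skip when the glob matches nothing
    $run uv run python infer.py \
      --pretrained_model_path Qwen/Qwen-Image \
      --transformer_model_path YUXU915/TAG-MoE \
      --device 0,1 \
      --image "$img" \
      --prompt "$prompt" \
      --output "$(output_path_for "$img" "$out_dir")"
  done
}
```

For example, `DRY_RUN=1 batch_edit inputs results "Change the weather to sunny"` prints the commands that would be run for each PNG in `inputs/` without launching inference.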

### WebUI

```bash
uv run python run_gradio.py \
  --pretrained_model_path Qwen/Qwen-Image \
  --transformer_model_path YUXU915/TAG-MoE \
  --device 0,1
```

---

## 📄 Citation

If you find this work useful, please consider citing:

```bibtex
@misc{xu2026tagmoetaskawaregatingunified,
      title={TAG-MoE: Task-Aware Gating for Unified Generative Mixture-of-Experts},
      author={Yu Xu and Hongbin Yan and Juan Cao and Yiji Cheng and Tiankai Hang and Runze He and Zijin Yin and Shiyi Zhang and Yuxin Zhang and Jintao Li and Chunyu Wang and Qinglin Lu and Tong-Yee Lee and Fan Tang},
      year={2026},
      eprint={2601.08881},
      archivePrefix={arXiv},
      primaryClass={cs.CV},
      url={https://arxiv.org/abs/2601.08881},
}
```

---

## 🙏 Acknowledgements

This project builds upon the following excellent open-source works:

- **Diffusers** — https://github.com/huggingface/diffusers
- **MegaBlocks** — https://github.com/databricks/megablocks