Instructions to use alaa-lab/InstructCV with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Diffusers

How to use alaa-lab/InstructCV with Diffusers:

pip install -U diffusers transformers accelerate

    import torch
    from diffusers import DiffusionPipeline
    from diffusers.utils import load_image

    # switch to "mps" for apple devices
    pipe = DiffusionPipeline.from_pretrained("alaa-lab/InstructCV", dtype=torch.bfloat16, device_map="cuda")

    prompt = "Turn this cat into a dog"
    input_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/cat.png")

    image = pipe(image=input_image, prompt=prompt).images[0]

- Notebooks

- Google Colab

- Kaggle

Update README.md

README.md

CHANGED

|

@@ -6,9 +6,9 @@ datasets:

|

|

| 6 |

- yulu2/InstructCV-Demo-Data

|

| 7 |

---

|

| 8 |

|

| 9 |

-

#

|

| 10 |

|

| 11 |

-

GitHub: https://github.com

|

| 12 |

|

| 13 |

[](https://imgse.com/i/pCVB5B8)

|

| 14 |

|

|

|

|

| 6 |

- yulu2/InstructCV-Demo-Data

|

| 7 |

---

|

| 8 |

|

| 9 |

+

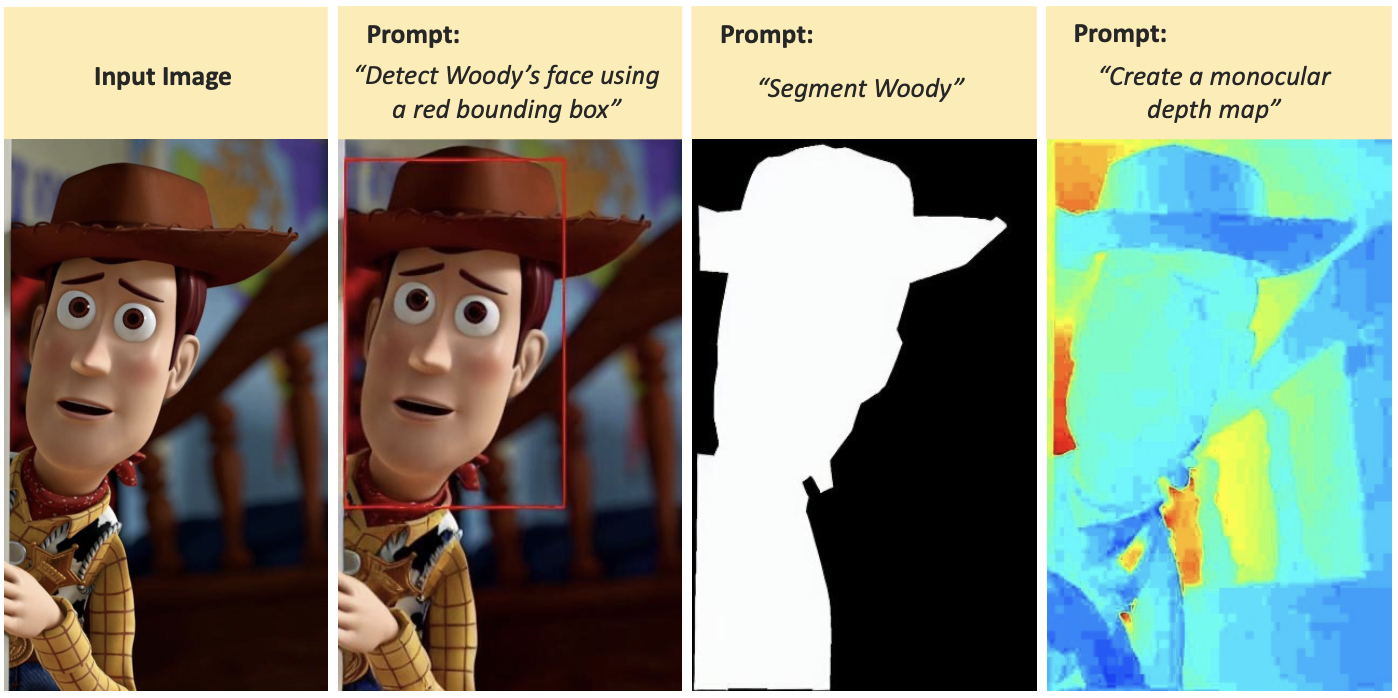

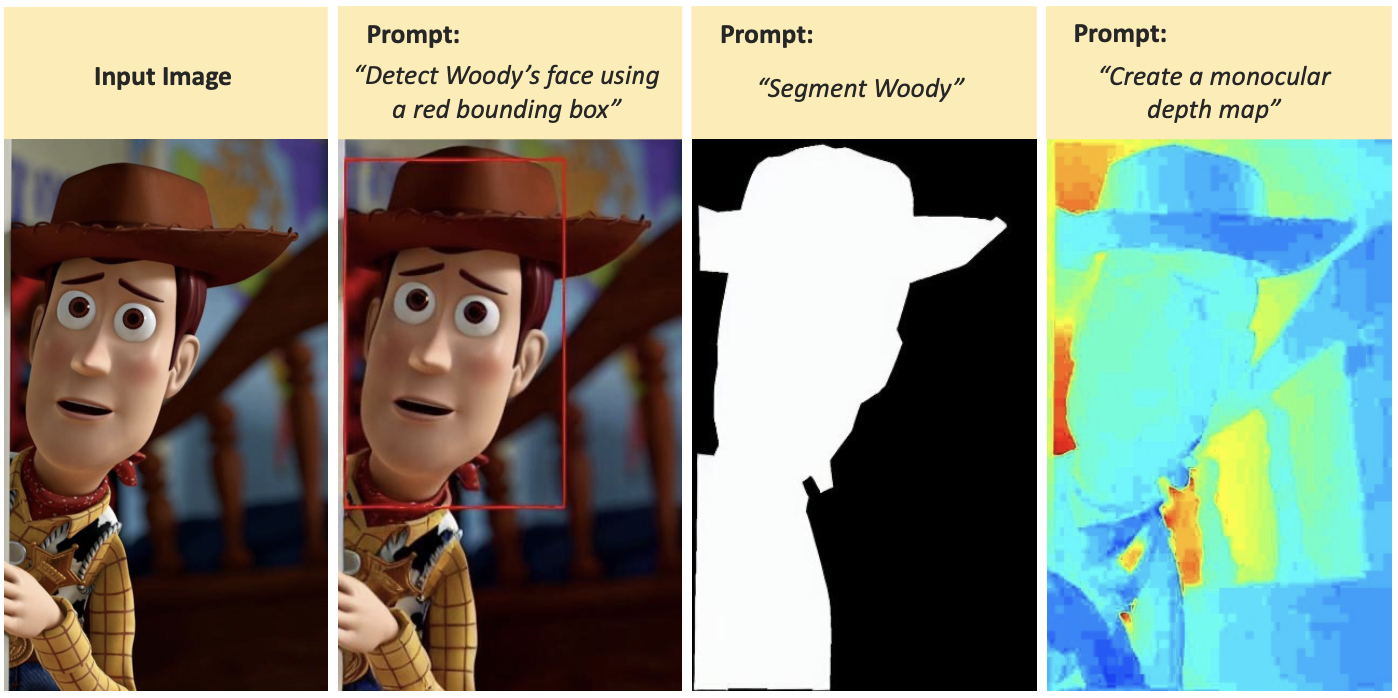

# InstructCV: Instruction-Tuned Text-to-Image Diffusion Models as Vision Generalists

|

| 10 |

|

| 11 |

+

GitHub: https://github.com/AlaaLab/InstructCV

|

| 12 |

|

| 13 |

[](https://imgse.com/i/pCVB5B8)

|

| 14 |

|