Update README.md

README.md CHANGED

@@ -1,3 +1,54 @@
 ---
-license:
+license: mit
+tags:
+- image-to-image
+datasets:
+- yulu2/InstructCV-Demo-Data
 ---
| 8 |

+

|

| 9 |

+

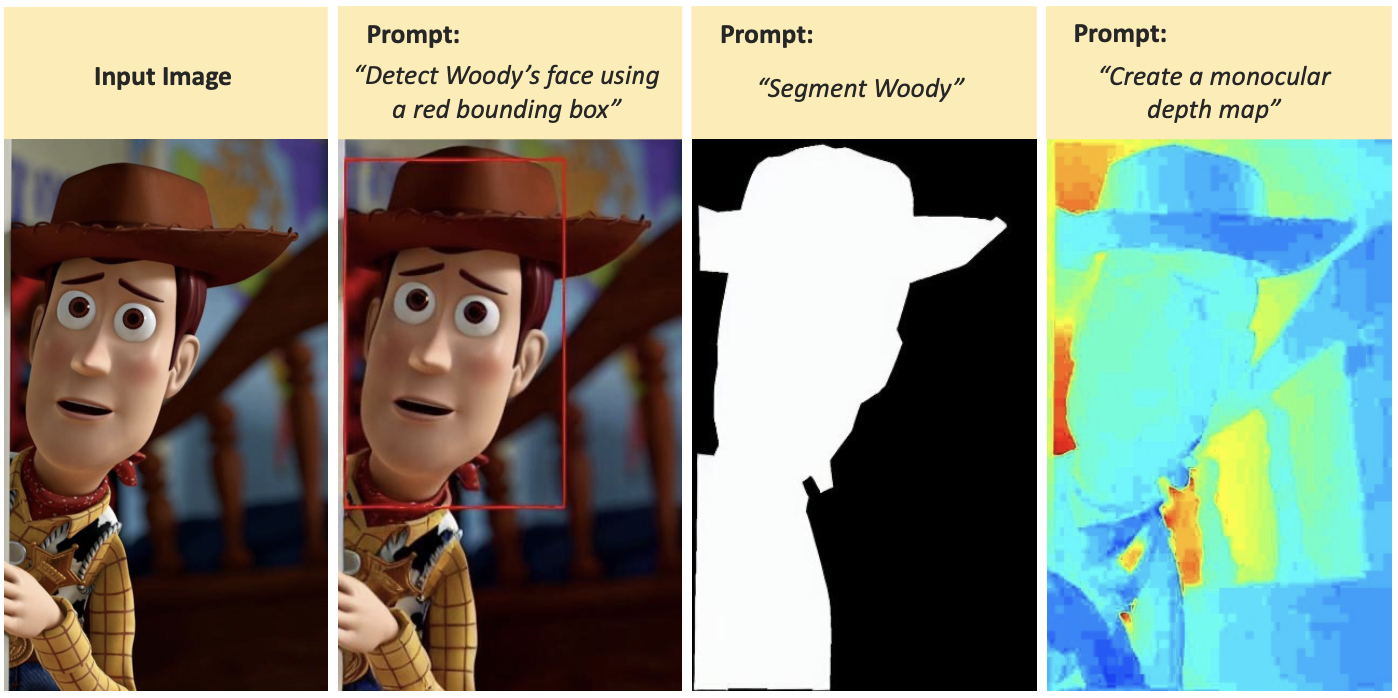

# INSTRUCTCV: YOUR TEXT-TO-IMAGE MODEL IS SECRETLY A VISION GENERALIST

|

| 10 |

+

|

| 11 |

+

GitHub: https://github.com

|

| 12 |

+

|

| 13 |

+

[](https://imgse.com/i/pCVB5B8)

|

| 14 |

+

|

| 15 |

+

|

+## Example
+
+To use `InstructCV`, install `diffusers` from `main` for now. The pipeline will be available in the next release.
+
+```bash
+pip install diffusers accelerate safetensors transformers
+```
+
+```python
+import math
+
+import PIL.Image
+import PIL.ImageOps
+import requests
+import torch
+from diffusers import StableDiffusionInstructPix2PixPipeline, EulerAncestralDiscreteScheduler
+
+model_id = "yulu2/InstructCV"
+pipe = StableDiffusionInstructPix2PixPipeline.from_pretrained(model_id, torch_dtype=torch.float16, safety_checker=None, variant="ema")
+pipe.to("cuda")
+pipe.scheduler = EulerAncestralDiscreteScheduler.from_config(pipe.scheduler.config)
+
+url = "https://raw.githubusercontent.com/timothybrooks/instruct-pix2pix/main/imgs/example.jpg"
+
+def download_image(url):
+    # Fetch the image, apply its EXIF orientation, and force RGB.
+    image = PIL.Image.open(requests.get(url, stream=True).raw)
+    image = PIL.ImageOps.exif_transpose(image)
+    image = image.convert("RGB")
+    return image
+
+image = download_image(url)
+# Rescale toward a 512 px longer side, snapping both sides to multiples of 64.
+width, height = image.size
+factor = 512 / max(width, height)
+factor = math.ceil(min(width, height) * factor / 64) * 64 / min(width, height)
+width = int((width * factor) // 64) * 64
+height = int((height * factor) // 64) * 64
+image = PIL.ImageOps.fit(image, (width, height), method=PIL.Image.Resampling.LANCZOS)
+
+prompt = "Detect the person."
+images = pipe(prompt, image=image, num_inference_steps=100).images
+images[0]
+```
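The resizing arithmetic in the example can be checked without downloading the model. The following is a minimal sketch and not part of the commit: `target_size` and its `long_side` and `multiple` parameters are hypothetical names introduced here, and the function simply mirrors the README's floating-point arithmetic as written.

```python
import math


def target_size(width, height, long_side=512, multiple=64):
    """Return (width, height) rescaled the way the README example does.

    Hypothetical helper for illustration; not part of the InstructCV repo.
    """
    # Initial scale that maps the longer side to `long_side`.
    factor = long_side / max(width, height)
    # Nudge the scale up so the shorter side lands on a multiple of `multiple`.
    short = min(width, height)
    factor = math.ceil(short * factor / multiple) * multiple / short
    # Snap both sides down to multiples of `multiple`.
    new_width = int((width * factor) // multiple) * multiple
    new_height = int((height * factor) // multiple) * multiple
    return new_width, new_height


print(target_size(1024, 512))
```

For a 1024×512 input this prints `(512, 256)`. For very elongated images the longer side can come out above 512, because the scale is rounded up to fit the shorter side first.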