Update README.md

Browse files

README.md

CHANGED

|

@@ -1,3 +1,73 @@

|

|

| 1 |

---

|

| 2 |

license: creativeml-openrail-m

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 3 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

license: creativeml-openrail-m

|

| 3 |

+

tags:

|

| 4 |

+

- text-to-image

|

| 5 |

+

- stable-diffusion

|

| 6 |

+

- anime

|

| 7 |

+

- aiart

|

| 8 |

---

|

| 9 |

+

|

| 10 |

+

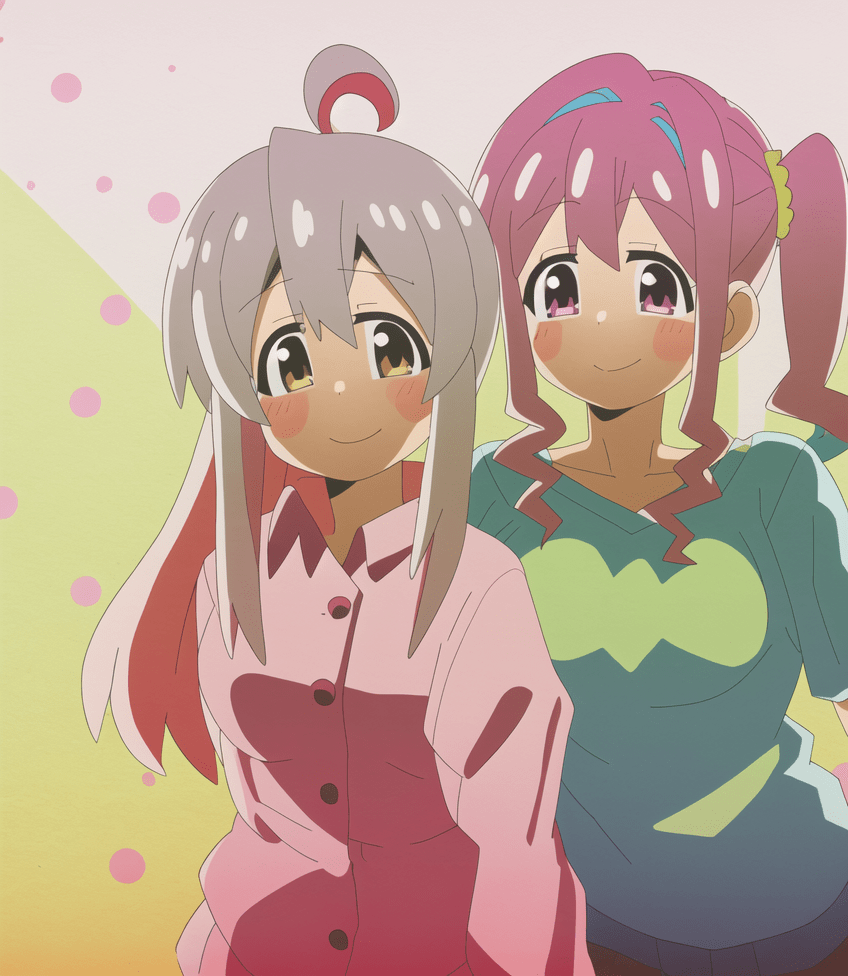

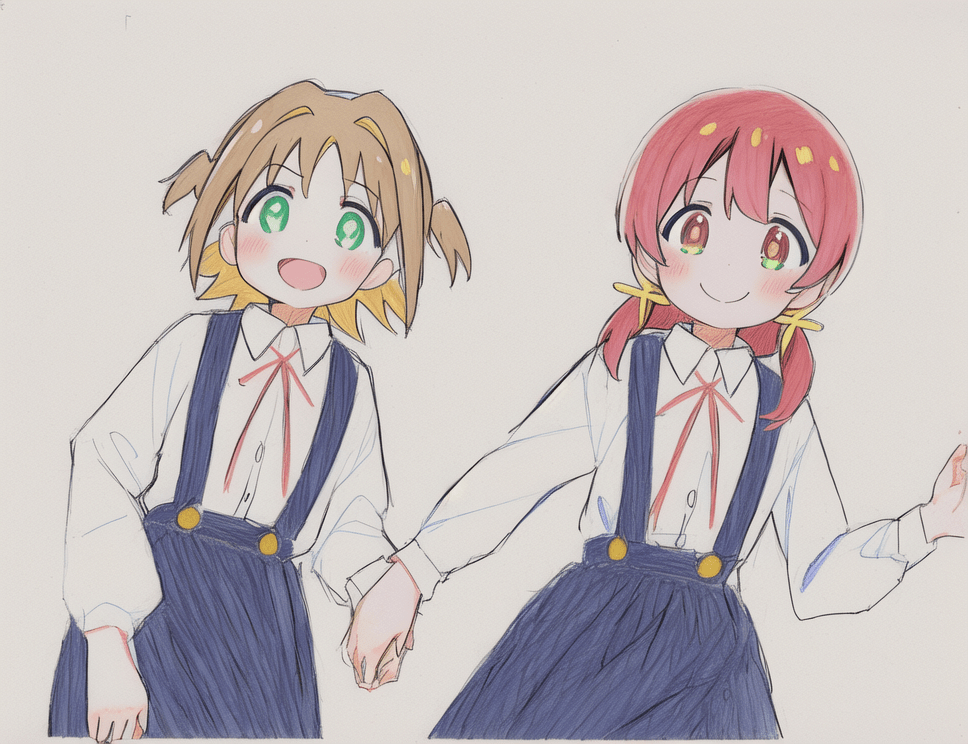

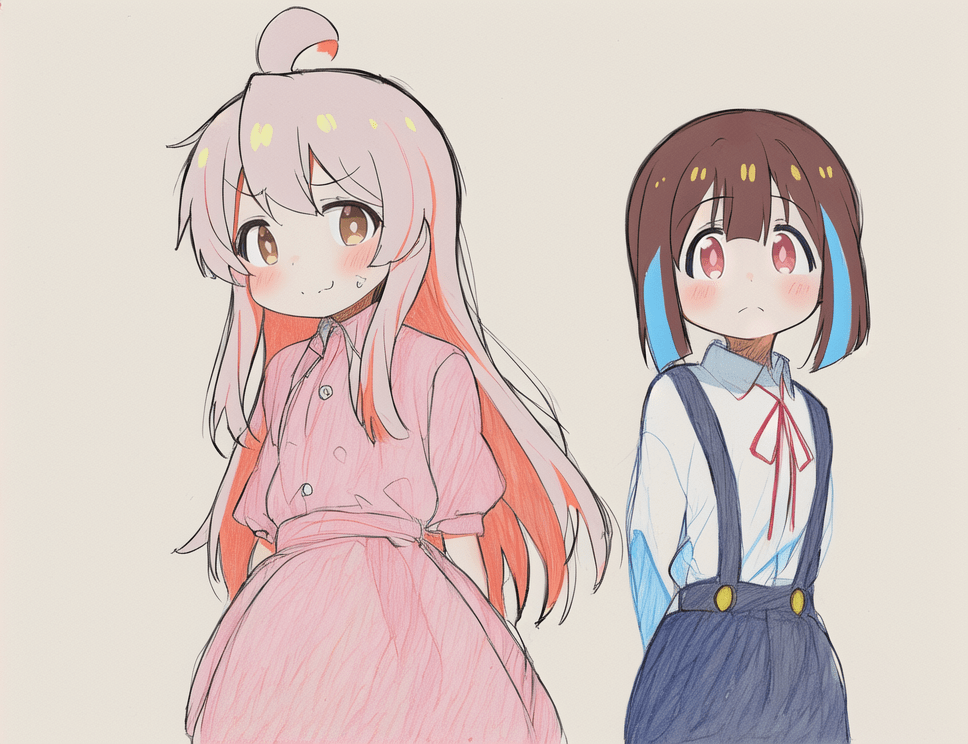

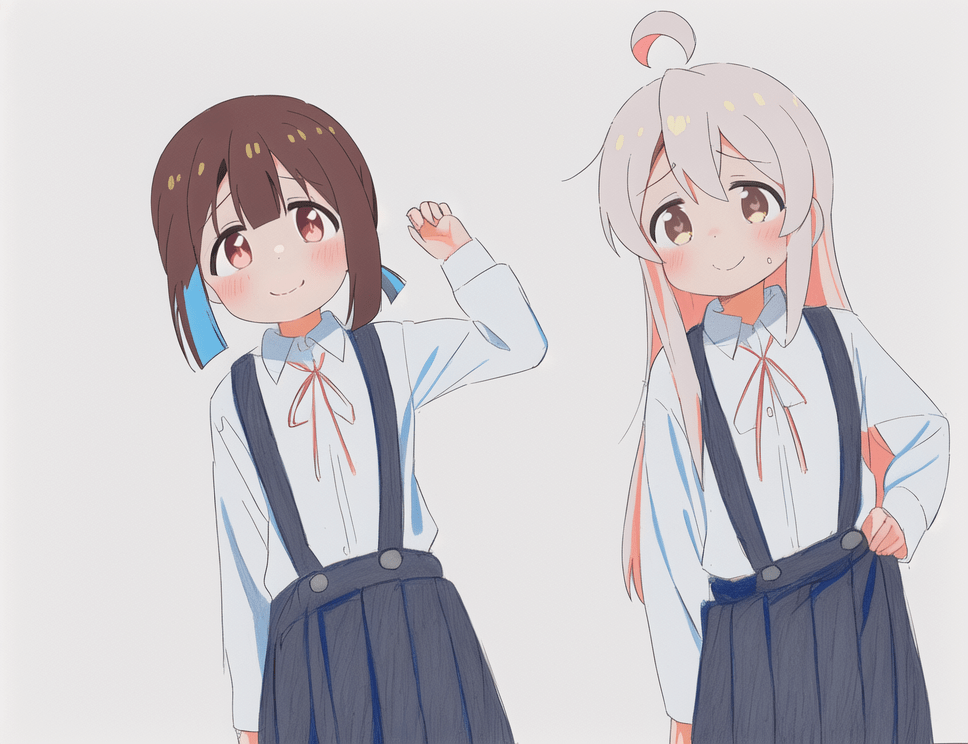

**This model is trained on 6(+1?) characters from ONIMAI: I'm Now Your Sister! (お兄ちゃんはおしまい!)**

|

| 11 |

+

|

| 12 |

+

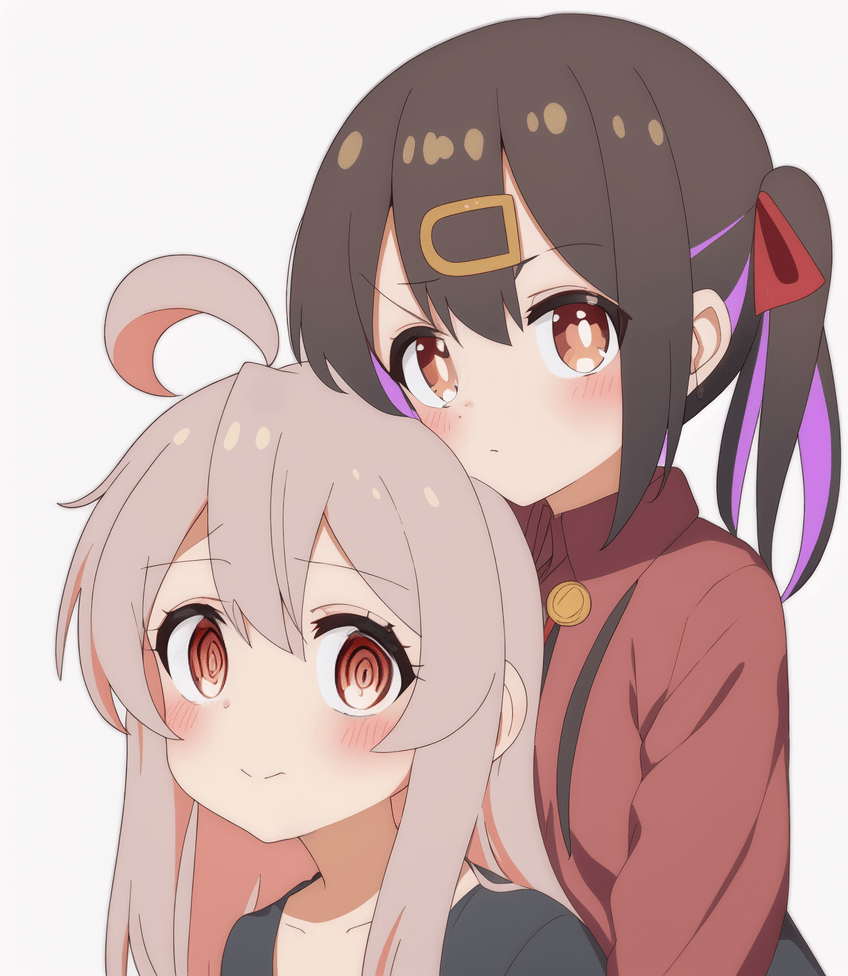

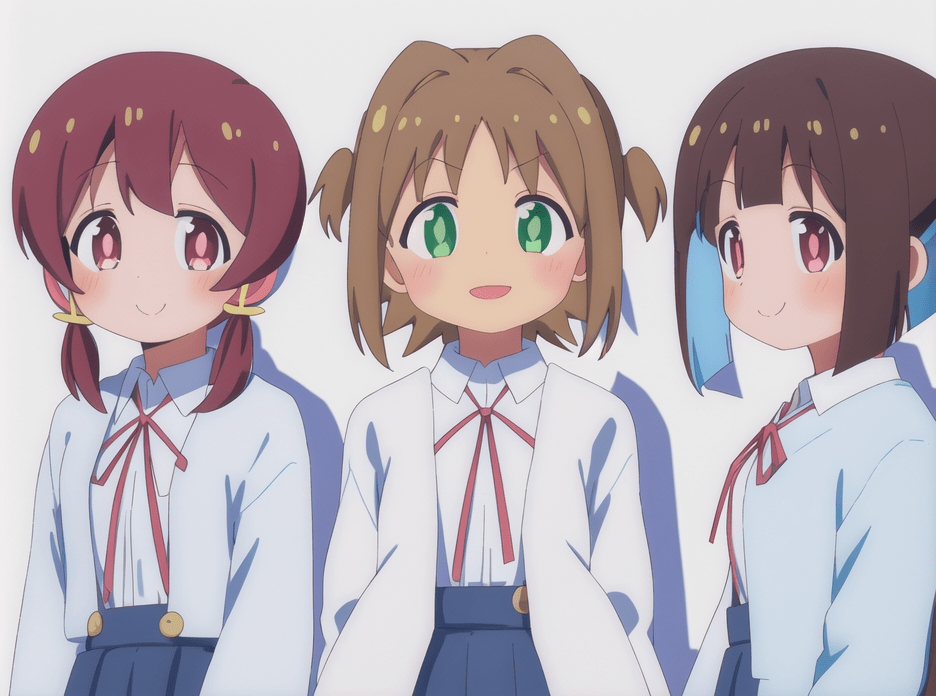

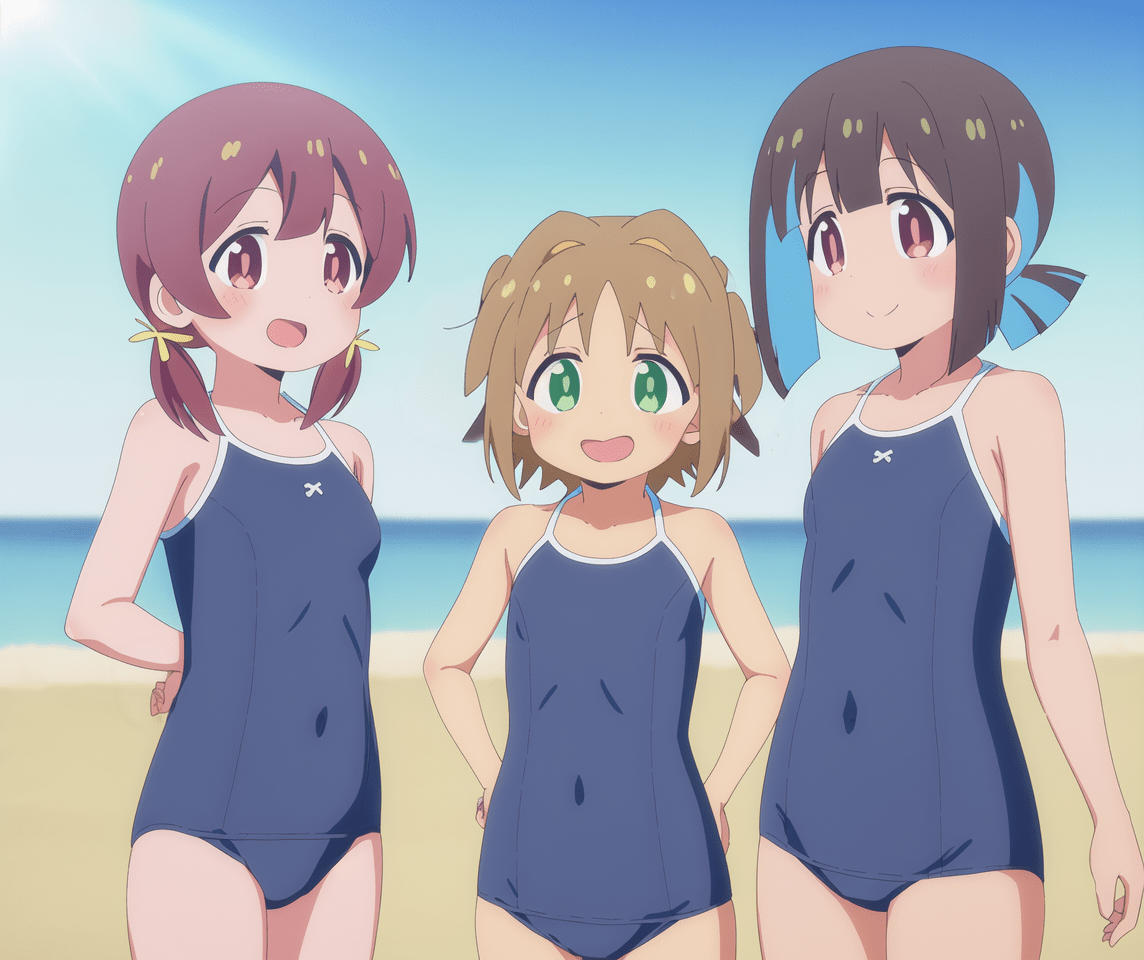

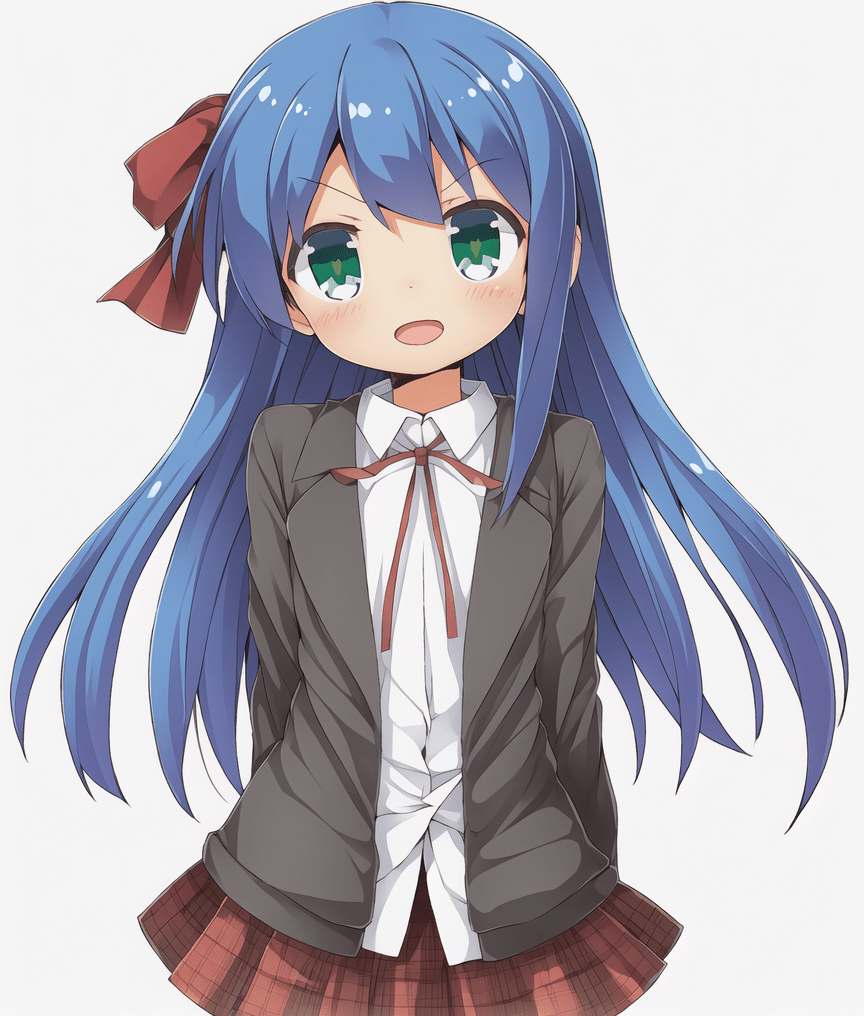

### Example Generations

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

### Usage

|

| 18 |

+

|

| 19 |

+

The model is shared in both diffuser and safetensors formats.

|

| 20 |

+

As for the trigger words, the six characters can be prompted with

|

| 21 |

+

`OyamaMahiro`, `OyamaMihari`, `HozukiKaede`, `HozukiMomiji`, `OkaAsahi`, and `MurosakiMiyo`.

|

| 22 |

+

`TenkawaNayuta` is tagged but she appears in fewer than 10 images so don't expect any good result.

|

| 23 |

+

There are also three different styles trained into the model: `aniscreen`, `edstyle`, and `megazine` (yes, typo).

|

| 24 |

+

As usual you can get multiple-character imagee but starting from 4 it is difficult.

|

| 25 |

+

|

| 26 |

+

In the following images are shown the generations of different checkpoints.

|

| 27 |

+

The default one is that of step 22828, but all the checkpoints starting from step 9969 can be found in the `checkpoints` directory.

|

| 28 |

+

They are all sufficiently good at the six characters but later ones are better at `megazine` and `edstyle` (at the risk of overfitting, I don't really know).

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

|

| 34 |

+

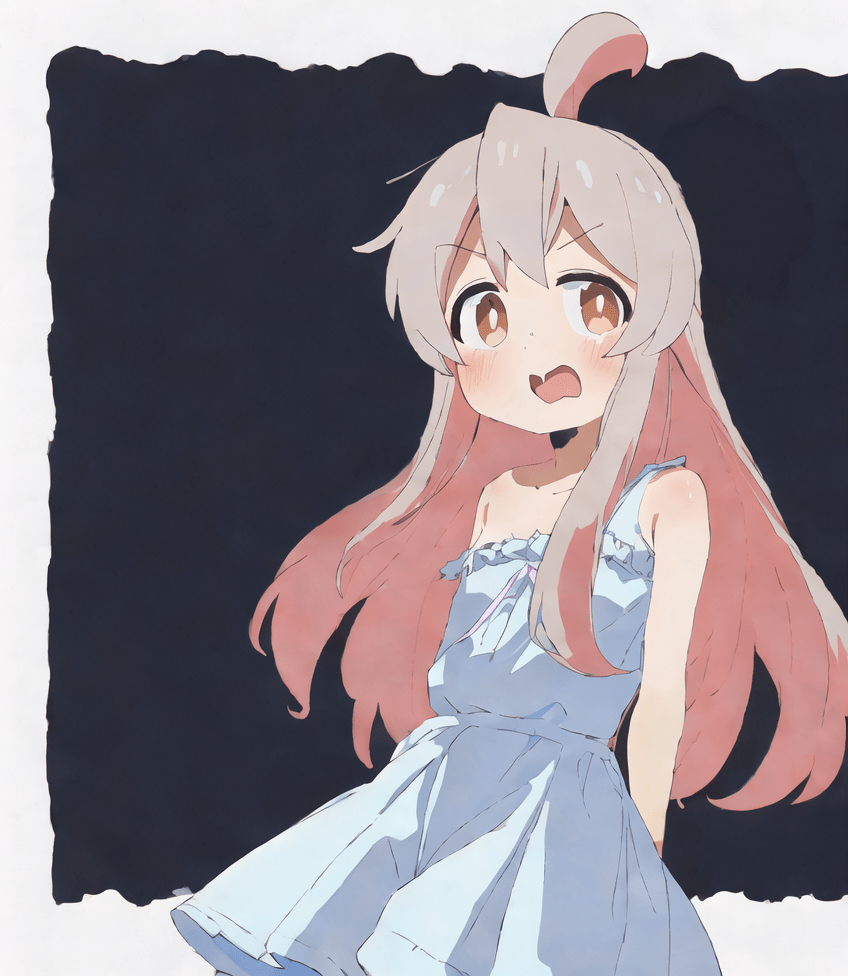

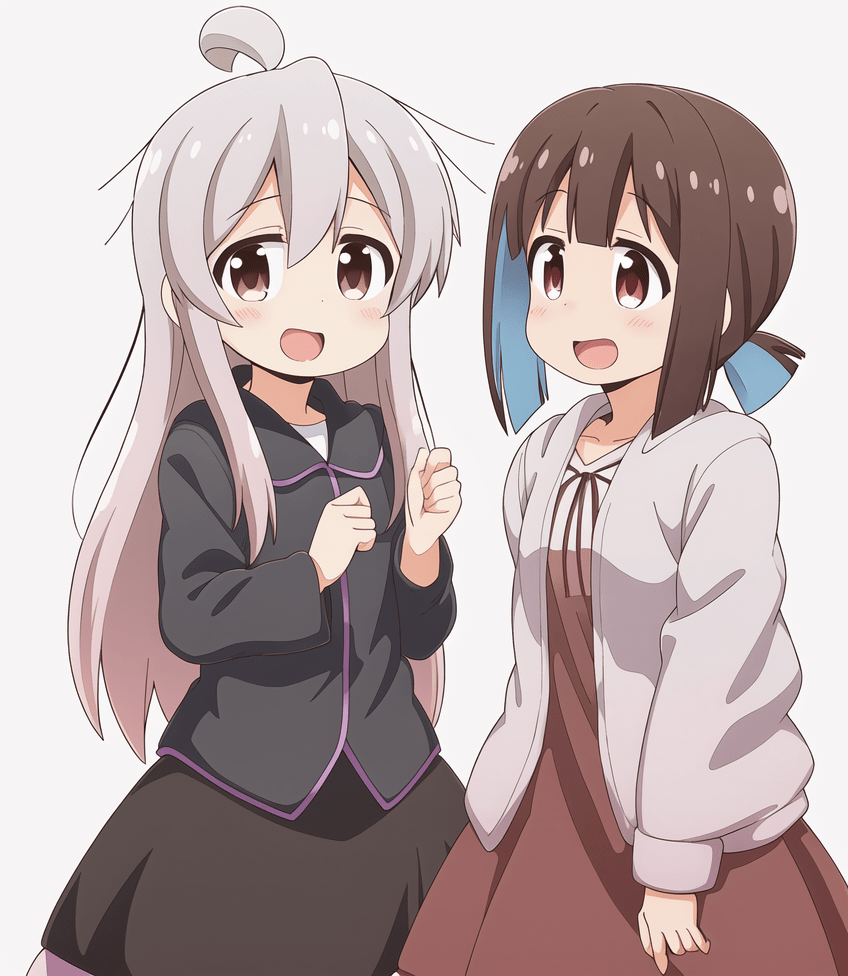

### More Generations

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

### Dataset Description

|

| 47 |

+

|

| 48 |

+

The dataset is prepared via the workflow detailed here: https://github.com/cyber-meow/anime_screenshot_pipeline

|

| 49 |

+

|

| 50 |

+

It contains 21412 images with the following composition

|

| 51 |

+

|

| 52 |

+

- 2133 onimai images separated in four types

|

| 53 |

+

- 1496 anime screenshots from the first six episodes (for style `aniscreen`)

|

| 54 |

+

- 70 screenshots of the ending of the anime (for style `edstyle`, not counted in the 1496 above)

|

| 55 |

+

- 528 fan arts (or probably some official arts)

|

| 56 |

+

- 39 scans of the covers of the mangas (for style `megazine`, don't ask me why I choose this name, it is bad but it turns out to work)

|

| 57 |

+

- 19279 regularization images which intend to be as various as possible while being in anime style (i.e. no photorealistic image is used)

|

| 58 |

+

|

| 59 |

+

Note that the model is trained with a specific weighting scheme to balance between different concepts so that every image does not weight equally.

|

| 60 |

+

After applying the per-image repeat we get around 145K images per epoch.

|

| 61 |

+

|

| 62 |

+

### Training

|

| 63 |

+

|

| 64 |

+

Training is done with [EveryDream2](https://github.com/victorchall/EveryDream2trainer) trainer with [ACertainty](https://huggingface.co/JosephusCheung/ACertainty) as base model.

|

| 65 |

+

The following configuration is used

|

| 66 |

+

|

| 67 |

+

- resolution 512

|

| 68 |

+

- cosine learning rate scheduler, lr 2.5e-6

|

| 69 |

+

- batch size 8

|

| 70 |

+

- conditional dropout 0.08

|

| 71 |

+

- change beta scheduler from `scaler_linear` to `linear` in `config.json` of the scheduler of the model

|

| 72 |

+

|

| 73 |

+

I trained for two epochs wheareas the default release model was trained for 22828 steps as mentioned above.

|