diff --git a/.gitattributes b/.gitattributes

index a6344aac8c09253b3b630fb776ae94478aa0275b..47b6957b885f951b1edf155fcc47476c9cbbc6cc 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -33,3 +33,27 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.zip filter=lfs diff=lfs merge=lfs -text

*.zst filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

+NotoSans-Regular.ttf filter=lfs diff=lfs merge=lfs -text

+examples/CNAuxBanner.jpg filter=lfs diff=lfs merge=lfs -text

+examples/ExecuteAll.png filter=lfs diff=lfs merge=lfs -text

+examples/ExecuteAll1.jpg filter=lfs diff=lfs merge=lfs -text

+examples/ExecuteAll2.jpg filter=lfs diff=lfs merge=lfs -text

+examples/comfyui-controlnet-aux-logo.png filter=lfs diff=lfs merge=lfs -text

+examples/example_animal_pose.png filter=lfs diff=lfs merge=lfs -text

+examples/example_anime_face_segmentor.png filter=lfs diff=lfs merge=lfs -text

+examples/example_anyline.png filter=lfs diff=lfs merge=lfs -text

+examples/example_densepose.png filter=lfs diff=lfs merge=lfs -text

+examples/example_depth_anything.png filter=lfs diff=lfs merge=lfs -text

+examples/example_depth_anything_v2.png filter=lfs diff=lfs merge=lfs -text

+examples/example_dsine.png filter=lfs diff=lfs merge=lfs -text

+examples/example_marigold.png filter=lfs diff=lfs merge=lfs -text

+examples/example_marigold_flat.jpg filter=lfs diff=lfs merge=lfs -text

+examples/example_mesh_graphormer.png filter=lfs diff=lfs merge=lfs -text

+examples/example_metric3d.png filter=lfs diff=lfs merge=lfs -text

+examples/example_recolor.png filter=lfs diff=lfs merge=lfs -text

+examples/example_save_kps.png filter=lfs diff=lfs merge=lfs -text

+examples/example_teed.png filter=lfs diff=lfs merge=lfs -text

+examples/example_torchscript.png filter=lfs diff=lfs merge=lfs -text

+examples/example_unimatch.png filter=lfs diff=lfs merge=lfs -text

+src/custom_controlnet_aux/mesh_graphormer/hand_landmarker.task filter=lfs diff=lfs merge=lfs -text

+tests/pose.png filter=lfs diff=lfs merge=lfs -text

diff --git a/.github/workflows/publish.yml b/.github/workflows/publish.yml

new file mode 100644

index 0000000000000000000000000000000000000000..eb5b7d5f5484abc954a89dde2b97d327de44b43b

--- /dev/null

+++ b/.github/workflows/publish.yml

@@ -0,0 +1,25 @@

+name: Publish to Comfy registry

+on:

+ workflow_dispatch:

+ push:

+ branches:

+ - main

+ paths:

+ - "pyproject.toml"

+

+permissions:

+ issues: write

+

+jobs:

+ publish-node:

+ name: Publish Custom Node to registry

+ runs-on: ubuntu-latest

+ if: ${{ github.repository_owner == 'Fannovel16' }}

+ steps:

+ - name: Check out code

+ uses: actions/checkout@v4

+ - name: Publish Custom Node

+ uses: Comfy-Org/publish-node-action@v1

+ with:

+ ## Add your own personal access token to your Github Repository secrets and reference it here.

+ personal_access_token: ${{ secrets.REGISTRY_ACCESS_TOKEN }}

diff --git a/.gitignore b/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..c280aed1f84c22714c9bb068e94dc1a0b88a0626

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,183 @@

+# Initially taken from Github's Python gitignore file

+

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# tests and logs

+tests/fixtures/cached_*_text.txt

+logs/

+lightning_logs/

+lang_code_data/

+tests/outputs

+

+# Distribution / packaging

+.Python

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# celery beat schedule file

+celerybeat-schedule

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

+

+# vscode

+.vs

+.vscode

+

+# Pycharm

+.idea

+

+# TF code

+tensorflow_code

+

+# Models

+proc_data

+

+# examples

+runs

+/runs_old

+/wandb

+/examples/runs

+/examples/**/*.args

+/examples/rag/sweep

+

+# data

+/data

+serialization_dir

+

+# emacs

+*.*~

+debug.env

+

+# vim

+.*.swp

+

+#ctags

+tags

+

+# pre-commit

+.pre-commit*

+

+# .lock

+*.lock

+

+# DS_Store (MacOS)

+.DS_Store

+# RL pipelines may produce mp4 outputs

+*.mp4

+

+# dependencies

+/transformers

+

+# ruff

+.ruff_cache

+

+wandb

+

+ckpts/

+

+test.ipynb

+config.yaml

+test.ipynb

\ No newline at end of file

diff --git a/LICENSE.txt b/LICENSE.txt

new file mode 100644

index 0000000000000000000000000000000000000000..261eeb9e9f8b2b4b0d119366dda99c6fd7d35c64

--- /dev/null

+++ b/LICENSE.txt

@@ -0,0 +1,201 @@

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/NotoSans-Regular.ttf b/NotoSans-Regular.ttf

new file mode 100644

index 0000000000000000000000000000000000000000..4df6c6c6d0a0aa7c81f7bacfe4240bdd968d2d65

--- /dev/null

+++ b/NotoSans-Regular.ttf

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:6b04c8dd65af6b73eb4279472ed1580b29102d6496a377340e80a40cdb3b22c9

+size 455188

diff --git a/README.md b/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..1c0e2dcc38eac48b290b4e323920e8b1477d4b8b

--- /dev/null

+++ b/README.md

@@ -0,0 +1,252 @@

+# ComfyUI's ControlNet Auxiliary Preprocessors

+Plug-and-play [ComfyUI](https://github.com/comfyanonymous/ComfyUI) node sets for making [ControlNet](https://github.com/lllyasviel/ControlNet/) hint images

+

+"anime style, a protest in the street, cyberpunk city, a woman with pink hair and golden eyes (looking at the viewer) is holding a sign with the text "ComfyUI ControlNet Aux" in bold, neon pink" on Flux.1 Dev

+

+

+

+The code is copy-pasted from the respective folders in https://github.com/lllyasviel/ControlNet/tree/main/annotator and connected to [the 🤗 Hub](https://huggingface.co/lllyasviel/Annotators).

+

+All credit & copyright goes to https://github.com/lllyasviel.

+

+# Updates

+Go to [Update page](./UPDATES.md) to follow updates

+

+# Installation:

+## Using ComfyUI Manager (recommended):

+Install [ComfyUI Manager](https://github.com/ltdrdata/ComfyUI-Manager) and do steps introduced there to install this repo.

+

+## Alternative:

+If you're running on Linux, or non-admin account on windows you'll want to ensure `/ComfyUI/custom_nodes` and `comfyui_controlnet_aux` has write permissions.

+

+There is now a **install.bat** you can run to install to portable if detected. Otherwise it will default to system and assume you followed ConfyUI's manual installation steps.

+

+If you can't run **install.bat** (e.g. you are a Linux user). Open the CMD/Shell and do the following:

+ - Navigate to your `/ComfyUI/custom_nodes/` folder

+ - Run `git clone https://github.com/Fannovel16/comfyui_controlnet_aux/`

+ - Navigate to your `comfyui_controlnet_aux` folder

+ - Portable/venv:

+ - Run `path/to/ComfUI/python_embeded/python.exe -s -m pip install -r requirements.txt`

+ - With system python

+ - Run `pip install -r requirements.txt`

+ - Start ComfyUI

+

+# Nodes

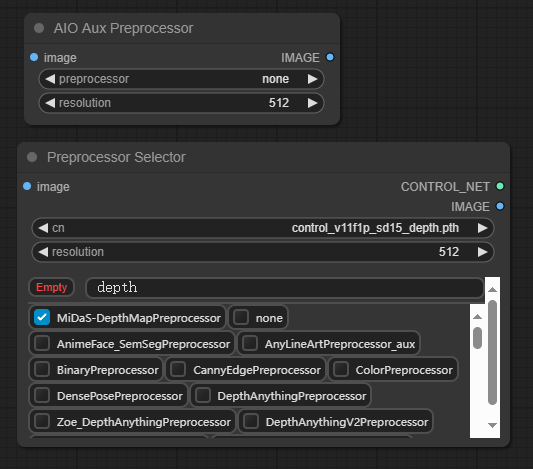

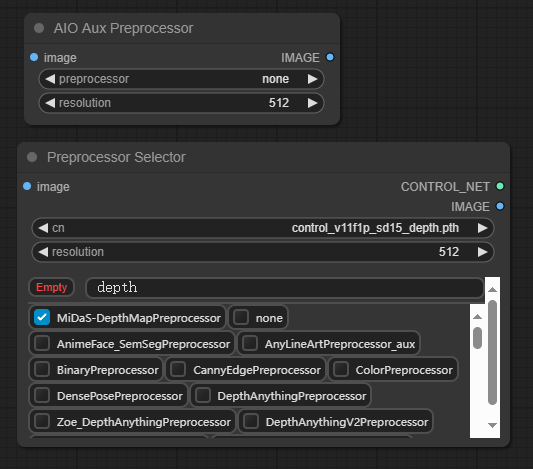

+Please note that this repo only supports preprocessors making hint images (e.g. stickman, canny edge, etc).

+All preprocessors except Inpaint are intergrated into `AIO Aux Preprocessor` node.

+This node allow you to quickly get the preprocessor but a preprocessor's own threshold parameters won't be able to set.

+You need to use its node directly to set thresholds.

+

+# Nodes (sections are categories in Comfy menu)

+## Line Extractors

+| Preprocessor Node | sd-webui-controlnet/other | ControlNet/T2I-Adapter |

+|-----------------------------|---------------------------|-------------------------------------------|

+| Binary Lines | binary | control_scribble |

+| Canny Edge | canny | control_v11p_sd15_canny

control_canny

t2iadapter_canny |

+| HED Soft-Edge Lines | hed | control_v11p_sd15_softedge

control_hed |

+| Standard Lineart | standard_lineart | control_v11p_sd15_lineart |

+| Realistic Lineart | lineart (or `lineart_coarse` if `coarse` is enabled) | control_v11p_sd15_lineart |

+| Anime Lineart | lineart_anime | control_v11p_sd15s2_lineart_anime |

+| Manga Lineart | lineart_anime_denoise | control_v11p_sd15s2_lineart_anime |

+| M-LSD Lines | mlsd | control_v11p_sd15_mlsd

control_mlsd |

+| PiDiNet Soft-Edge Lines | pidinet | control_v11p_sd15_softedge

control_scribble |

+| Scribble Lines | scribble | control_v11p_sd15_scribble

control_scribble |

+| Scribble XDoG Lines | scribble_xdog | control_v11p_sd15_scribble

control_scribble |

+| Fake Scribble Lines | scribble_hed | control_v11p_sd15_scribble

control_scribble |

+| TEED Soft-Edge Lines | teed | [controlnet-sd-xl-1.0-softedge-dexined](https://huggingface.co/SargeZT/controlnet-sd-xl-1.0-softedge-dexined/blob/main/controlnet-sd-xl-1.0-softedge-dexined.safetensors)

control_v11p_sd15_softedge (Theoretically)

+| Scribble PiDiNet Lines | scribble_pidinet | control_v11p_sd15_scribble

control_scribble |

+| AnyLine Lineart | | mistoLine_fp16.safetensors

mistoLine_rank256

control_v11p_sd15s2_lineart_anime

control_v11p_sd15_lineart |

+

+## Normal and Depth Estimators

+| Preprocessor Node | sd-webui-controlnet/other | ControlNet/T2I-Adapter |

+|-----------------------------|---------------------------|-------------------------------------------|

+| MiDaS Depth Map | (normal) depth | control_v11f1p_sd15_depth

control_depth

t2iadapter_depth |

+| LeReS Depth Map | depth_leres | control_v11f1p_sd15_depth

control_depth

t2iadapter_depth |

+| Zoe Depth Map | depth_zoe | control_v11f1p_sd15_depth

control_depth

t2iadapter_depth |

+| MiDaS Normal Map | normal_map | control_normal |

+| BAE Normal Map | normal_bae | control_v11p_sd15_normalbae |

+| MeshGraphormer Hand Refiner ([HandRefinder](https://github.com/wenquanlu/HandRefiner)) | depth_hand_refiner | [control_sd15_inpaint_depth_hand_fp16](https://huggingface.co/hr16/ControlNet-HandRefiner-pruned/blob/main/control_sd15_inpaint_depth_hand_fp16.safetensors) |

+| Depth Anything | depth_anything | [Depth-Anything](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints_controlnet/diffusion_pytorch_model.safetensors) |

+| Zoe Depth Anything

(Basically Zoe but the encoder is replaced with DepthAnything) | depth_anything | [Depth-Anything](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints_controlnet/diffusion_pytorch_model.safetensors) |

+| Normal DSINE | | control_normal/control_v11p_sd15_normalbae |

+| Metric3D Depth | | control_v11f1p_sd15_depth

control_depth

t2iadapter_depth |

+| Metric3D Normal | | control_v11p_sd15_normalbae |

+| Depth Anything V2 | | [Depth-Anything](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints_controlnet/diffusion_pytorch_model.safetensors) |

+

+## Faces and Poses Estimators

+| Preprocessor Node | sd-webui-controlnet/other | ControlNet/T2I-Adapter |

+|-----------------------------|---------------------------|-------------------------------------------|

+| DWPose Estimator | dw_openpose_full | control_v11p_sd15_openpose

control_openpose

t2iadapter_openpose |

+| OpenPose Estimator | openpose (detect_body)

openpose_hand (detect_body + detect_hand)

openpose_faceonly (detect_face)

openpose_full (detect_hand + detect_body + detect_face) | control_v11p_sd15_openpose

control_openpose

t2iadapter_openpose |

+| MediaPipe Face Mesh | mediapipe_face | controlnet_sd21_laion_face_v2 |

+| Animal Estimator | animal_openpose | [control_sd15_animal_openpose_fp16](https://huggingface.co/huchenlei/animal_openpose/blob/main/control_sd15_animal_openpose_fp16.pth) |

+

+## Optical Flow Estimators

+| Preprocessor Node | sd-webui-controlnet/other | ControlNet/T2I-Adapter |

+|-----------------------------|---------------------------|-------------------------------------------|

+| Unimatch Optical Flow | | [DragNUWA](https://github.com/ProjectNUWA/DragNUWA) |

+

+### How to get OpenPose-format JSON?

+#### User-side

+This workflow will save images to ComfyUI's output folder (the same location as output images). If you haven't found `Save Pose Keypoints` node, update this extension

+

+

+#### Dev-side

+An array of [OpenPose-format JSON](https://github.com/CMU-Perceptual-Computing-Lab/openpose/blob/master/doc/02_output.md#json-output-format) corresponsding to each frame in an IMAGE batch can be gotten from DWPose and OpenPose using `app.nodeOutputs` on the UI or `/history` API endpoint. JSON output from AnimalPose uses a kinda similar format to OpenPose JSON:

+```

+[

+ {

+ "version": "ap10k",

+ "animals": [

+ [[x1, y1, 1], [x2, y2, 1],..., [x17, y17, 1]],

+ [[x1, y1, 1], [x2, y2, 1],..., [x17, y17, 1]],

+ ...

+ ],

+ "canvas_height": 512,

+ "canvas_width": 768

+ },

+ ...

+]

+```

+

+For extension developers (e.g. Openpose editor):

+```js

+const poseNodes = app.graph._nodes.filter(node => ["OpenposePreprocessor", "DWPreprocessor", "AnimalPosePreprocessor"].includes(node.type))

+for (const poseNode of poseNodes) {

+ const openposeResults = JSON.parse(app.nodeOutputs[poseNode.id].openpose_json[0])

+ console.log(openposeResults) //An array containing Openpose JSON for each frame

+}

+```

+

+For API users:

+Javascript

+```js

+import fetch from "node-fetch" //Remember to add "type": "module" to "package.json"

+async function main() {

+ const promptId = '792c1905-ecfe-41f4-8114-83e6a4a09a9f' //Too lazy to POST /queue

+ let history = await fetch(`http://127.0.0.1:8188/history/${promptId}`).then(re => re.json())

+ history = history[promptId]

+ const nodeOutputs = Object.values(history.outputs).filter(output => output.openpose_json)

+ for (const nodeOutput of nodeOutputs) {

+ const openposeResults = JSON.parse(nodeOutput.openpose_json[0])

+ console.log(openposeResults) //An array containing Openpose JSON for each frame

+ }

+}

+main()

+```

+

+Python

+```py

+import json, urllib.request

+

+server_address = "127.0.0.1:8188"

+prompt_id = '' #Too lazy to POST /queue

+

+def get_history(prompt_id):

+ with urllib.request.urlopen("http://{}/history/{}".format(server_address, prompt_id)) as response:

+ return json.loads(response.read())

+

+history = get_history(prompt_id)[prompt_id]

+for o in history['outputs']:

+ for node_id in history['outputs']:

+ node_output = history['outputs'][node_id]

+ if 'openpose_json' in node_output:

+ print(json.loads(node_output['openpose_json'][0])) #An list containing Openpose JSON for each frame

+```

+## Semantic Segmentation

+| Preprocessor Node | sd-webui-controlnet/other | ControlNet/T2I-Adapter |

+|-----------------------------|---------------------------|-------------------------------------------|

+| OneFormer ADE20K Segmentor | oneformer_ade20k | control_v11p_sd15_seg |

+| OneFormer COCO Segmentor | oneformer_coco | control_v11p_sd15_seg |

+| UniFormer Segmentor | segmentation |control_sd15_seg

control_v11p_sd15_seg|

+

+## T2IAdapter-only

+| Preprocessor Node | sd-webui-controlnet/other | ControlNet/T2I-Adapter |

+|-----------------------------|---------------------------|-------------------------------------------|

+| Color Pallete | color | t2iadapter_color |

+| Content Shuffle | shuffle | t2iadapter_style |

+

+## Recolor

+| Preprocessor Node | sd-webui-controlnet/other | ControlNet/T2I-Adapter |

+|-----------------------------|---------------------------|-------------------------------------------|

+| Image Luminance | recolor_luminance | [ioclab_sd15_recolor](https://huggingface.co/lllyasviel/sd_control_collection/resolve/main/ioclab_sd15_recolor.safetensors)

[sai_xl_recolor_256lora](https://huggingface.co/lllyasviel/sd_control_collection/resolve/main/sai_xl_recolor_256lora.safetensors)

[bdsqlsz_controlllite_xl_recolor_luminance](https://huggingface.co/bdsqlsz/qinglong_controlnet-lllite/resolve/main/bdsqlsz_controlllite_xl_recolor_luminance.safetensors) |

+| Image Intensity | recolor_intensity | Idk. Maybe same as above? |

+

+# Examples

+> A picture is worth a thousand words

+

+

+

+

+# Testing workflow

+https://github.com/Fannovel16/comfyui_controlnet_aux/blob/main/examples/ExecuteAll.png

+Input image: https://github.com/Fannovel16/comfyui_controlnet_aux/blob/main/examples/comfyui-controlnet-aux-logo.png

+

+# Q&A:

+## Why some nodes doesn't appear after I installed this repo?

+

+This repo has a new mechanism which will skip any custom node can't be imported. If you meet this case, please create a issue on [Issues tab](https://github.com/Fannovel16/comfyui_controlnet_aux/issues) with the log from the command line.

+

+## DWPose/AnimalPose only uses CPU so it's so slow. How can I make it use GPU?

+There are two ways to speed-up DWPose: using TorchScript checkpoints (.torchscript.pt) checkpoints or ONNXRuntime (.onnx). TorchScript way is little bit slower than ONNXRuntime but doesn't require any additional library and still way way faster than CPU.

+

+A torchscript bbox detector is compatiable with an onnx pose estimator and vice versa.

+### TorchScript

+Set `bbox_detector` and `pose_estimator` according to this picture. You can try other bbox detector endings with `.torchscript.pt` to reduce bbox detection time if input images are ideal.

+

+### ONNXRuntime

+If onnxruntime is installed successfully and the checkpoint used endings with `.onnx`, it will replace default cv2 backend to take advantage of GPU. Note that if you are using NVidia card, this method currently can only works on CUDA 11.8 (ComfyUI_windows_portable_nvidia_cu118_or_cpu.7z) unless you compile onnxruntime yourself.

+

+1. Know your onnxruntime build:

+* * NVidia CUDA 11.x or bellow/AMD GPU: `onnxruntime-gpu`

+* * NVidia CUDA 12.x: `onnxruntime-gpu --extra-index-url https://aiinfra.pkgs.visualstudio.com/PublicPackages/_packaging/onnxruntime-cuda-12/pypi/simple/`

+* * DirectML: `onnxruntime-directml`

+* * OpenVINO: `onnxruntime-openvino`

+

+Note that if this is your first time using ComfyUI, please test if it can run on your device before doing next steps.

+

+2. Add it into `requirements.txt`

+

+3. Run `install.bat` or pip command mentioned in Installation

+

+

+

+# Assets files of preprocessors

+* anime_face_segment: [bdsqlsz/qinglong_controlnet-lllite/Annotators/UNet.pth](https://huggingface.co/bdsqlsz/qinglong_controlnet-lllite/blob/main/Annotators/UNet.pth), [anime-seg/isnetis.ckpt](https://huggingface.co/skytnt/anime-seg/blob/main/isnetis.ckpt)

+* densepose: [LayerNorm/DensePose-TorchScript-with-hint-image/densepose_r50_fpn_dl.torchscript](https://huggingface.co/LayerNorm/DensePose-TorchScript-with-hint-image/blob/main/densepose_r50_fpn_dl.torchscript)

+* dwpose:

+* * bbox_detector: Either [yzd-v/DWPose/yolox_l.onnx](https://huggingface.co/yzd-v/DWPose/blob/main/yolox_l.onnx), [hr16/yolox-onnx/yolox_l.torchscript.pt](https://huggingface.co/hr16/yolox-onnx/blob/main/yolox_l.torchscript.pt), [hr16/yolo-nas-fp16/yolo_nas_l_fp16.onnx](https://huggingface.co/hr16/yolo-nas-fp16/blob/main/yolo_nas_l_fp16.onnx), [hr16/yolo-nas-fp16/yolo_nas_m_fp16.onnx](https://huggingface.co/hr16/yolo-nas-fp16/blob/main/yolo_nas_m_fp16.onnx), [hr16/yolo-nas-fp16/yolo_nas_s_fp16.onnx](https://huggingface.co/hr16/yolo-nas-fp16/blob/main/yolo_nas_s_fp16.onnx)

+* * pose_estimator: Either [hr16/DWPose-TorchScript-BatchSize5/dw-ll_ucoco_384_bs5.torchscript.pt](https://huggingface.co/hr16/DWPose-TorchScript-BatchSize5/blob/main/dw-ll_ucoco_384_bs5.torchscript.pt), [yzd-v/DWPose/dw-ll_ucoco_384.onnx](https://huggingface.co/yzd-v/DWPose/blob/main/dw-ll_ucoco_384.onnx)

+* animal_pose (ap10k):

+* * bbox_detector: Either [yzd-v/DWPose/yolox_l.onnx](https://huggingface.co/yzd-v/DWPose/blob/main/yolox_l.onnx), [hr16/yolox-onnx/yolox_l.torchscript.pt](https://huggingface.co/hr16/yolox-onnx/blob/main/yolox_l.torchscript.pt), [hr16/yolo-nas-fp16/yolo_nas_l_fp16.onnx](https://huggingface.co/hr16/yolo-nas-fp16/blob/main/yolo_nas_l_fp16.onnx), [hr16/yolo-nas-fp16/yolo_nas_m_fp16.onnx](https://huggingface.co/hr16/yolo-nas-fp16/blob/main/yolo_nas_m_fp16.onnx), [hr16/yolo-nas-fp16/yolo_nas_s_fp16.onnx](https://huggingface.co/hr16/yolo-nas-fp16/blob/main/yolo_nas_s_fp16.onnx)

+* * pose_estimator: Either [hr16/DWPose-TorchScript-BatchSize5/rtmpose-m_ap10k_256_bs5.torchscript.pt](https://huggingface.co/hr16/DWPose-TorchScript-BatchSize5/blob/main/rtmpose-m_ap10k_256_bs5.torchscript.pt), [hr16/UnJIT-DWPose/rtmpose-m_ap10k_256.onnx](https://huggingface.co/hr16/UnJIT-DWPose/blob/main/rtmpose-m_ap10k_256.onnx)

+* hed: [lllyasviel/Annotators/ControlNetHED.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/ControlNetHED.pth)

+* leres: [lllyasviel/Annotators/res101.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/res101.pth), [lllyasviel/Annotators/latest_net_G.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/latest_net_G.pth)

+* lineart: [lllyasviel/Annotators/sk_model.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/sk_model.pth), [lllyasviel/Annotators/sk_model2.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/sk_model2.pth)

+* lineart_anime: [lllyasviel/Annotators/netG.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/netG.pth)

+* manga_line: [lllyasviel/Annotators/erika.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/erika.pth)

+* mesh_graphormer: [hr16/ControlNet-HandRefiner-pruned/graphormer_hand_state_dict.bin](https://huggingface.co/hr16/ControlNet-HandRefiner-pruned/blob/main/graphormer_hand_state_dict.bin), [hr16/ControlNet-HandRefiner-pruned/hrnetv2_w64_imagenet_pretrained.pth](https://huggingface.co/hr16/ControlNet-HandRefiner-pruned/blob/main/hrnetv2_w64_imagenet_pretrained.pth)

+* midas: [lllyasviel/Annotators/dpt_hybrid-midas-501f0c75.pt](https://huggingface.co/lllyasviel/Annotators/blob/main/dpt_hybrid-midas-501f0c75.pt)

+* mlsd: [lllyasviel/Annotators/mlsd_large_512_fp32.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/mlsd_large_512_fp32.pth)

+* normalbae: [lllyasviel/Annotators/scannet.pt](https://huggingface.co/lllyasviel/Annotators/blob/main/scannet.pt)

+* oneformer: [lllyasviel/Annotators/250_16_swin_l_oneformer_ade20k_160k.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/250_16_swin_l_oneformer_ade20k_160k.pth)

+* open_pose: [lllyasviel/Annotators/body_pose_model.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/body_pose_model.pth), [lllyasviel/Annotators/hand_pose_model.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/hand_pose_model.pth), [lllyasviel/Annotators/facenet.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/facenet.pth)

+* pidi: [lllyasviel/Annotators/table5_pidinet.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/table5_pidinet.pth)

+* sam: [dhkim2810/MobileSAM/mobile_sam.pt](https://huggingface.co/dhkim2810/MobileSAM/blob/main/mobile_sam.pt)

+* uniformer: [lllyasviel/Annotators/upernet_global_small.pth](https://huggingface.co/lllyasviel/Annotators/blob/main/upernet_global_small.pth)

+* zoe: [lllyasviel/Annotators/ZoeD_M12_N.pt](https://huggingface.co/lllyasviel/Annotators/blob/main/ZoeD_M12_N.pt)

+* teed: [bdsqlsz/qinglong_controlnet-lllite/7_model.pth](https://huggingface.co/bdsqlsz/qinglong_controlnet-lllite/blob/main/Annotators/7_model.pth)

+* depth_anything: Either [LiheYoung/Depth-Anything/checkpoints/depth_anything_vitl14.pth](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints/depth_anything_vitl14.pth), [LiheYoung/Depth-Anything/checkpoints/depth_anything_vitb14.pth](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints/depth_anything_vitb14.pth) or [LiheYoung/Depth-Anything/checkpoints/depth_anything_vits14.pth](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints/depth_anything_vits14.pth)

+* diffusion_edge: Either [hr16/Diffusion-Edge/diffusion_edge_indoor.pt](https://huggingface.co/hr16/Diffusion-Edge/blob/main/diffusion_edge_indoor.pt), [hr16/Diffusion-Edge/diffusion_edge_urban.pt](https://huggingface.co/hr16/Diffusion-Edge/blob/main/diffusion_edge_urban.pt) or [hr16/Diffusion-Edge/diffusion_edge_natrual.pt](https://huggingface.co/hr16/Diffusion-Edge/blob/main/diffusion_edge_natrual.pt)

+* unimatch: Either [hr16/Unimatch/gmflow-scale2-regrefine6-mixdata.pth](https://huggingface.co/hr16/Unimatch/blob/main/gmflow-scale2-regrefine6-mixdata.pth), [hr16/Unimatch/gmflow-scale2-mixdata.pth](https://huggingface.co/hr16/Unimatch/blob/main/gmflow-scale2-mixdata.pth) or [hr16/Unimatch/gmflow-scale1-mixdata.pth](https://huggingface.co/hr16/Unimatch/blob/main/gmflow-scale1-mixdata.pth)

+* zoe_depth_anything: Either [LiheYoung/Depth-Anything/checkpoints_metric_depth/depth_anything_metric_depth_indoor.pt](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints_metric_depth/depth_anything_metric_depth_indoor.pt) or [LiheYoung/Depth-Anything/checkpoints_metric_depth/depth_anything_metric_depth_outdoor.pt](https://huggingface.co/spaces/LiheYoung/Depth-Anything/blob/main/checkpoints_metric_depth/depth_anything_metric_depth_outdoor.pt)

+# 2000 Stars 😄

+

+

+

+

+  +

+

+

+Thanks for yalls supports. I never thought the graph for stars would be linear lol.

diff --git a/UPDATES.md b/UPDATES.md

new file mode 100644

index 0000000000000000000000000000000000000000..7d5a8f622a72e536d30d86838dc9770612787ebd

--- /dev/null

+++ b/UPDATES.md

@@ -0,0 +1,45 @@

+* `AIO Aux Preprocessor` intergrating all loadable aux preprocessors as dropdown options. Easy to copy, paste and get the preprocessor faster.

+* Added OpenPose-format JSON output from OpenPose Preprocessor and DWPose Preprocessor. Checks [here](#faces-and-poses).

+* Fixed wrong model path when downloading DWPose.

+* Make hint images less blurry.

+* Added `resolution` option, `PixelPerfectResolution` and `HintImageEnchance` nodes (TODO: Documentation).

+* Added `RAFT Optical Flow Embedder` for TemporalNet2 (TODO: Workflow example).

+* Fixed opencv's conflicts between this extension, [ReActor](https://github.com/Gourieff/comfyui-reactor-node) and Roop. Thanks `Gourieff` for [the solution](https://github.com/Fannovel16/comfyui_controlnet_aux/issues/7#issuecomment-1734319075)!

+* RAFT is removed as the code behind it doesn't match what what the original code does

+* Changed `lineart`'s display name from `Normal Lineart` to `Realistic Lineart`. This change won't affect old workflows

+* Added support for `onnxruntime` to speed-up DWPose (see the Q&A)

+* Fixed TypeError: expected size to be one of int or Tuple[int] or Tuple[int, int] or Tuple[int, int, int], but got size with types [, ]: [Issue](https://github.com/Fannovel16/comfyui_controlnet_aux/issues/2), [PR](https://github.com/Fannovel16/comfyui_controlnet_aux/pull/71))

+* Fixed ImageGenResolutionFromImage mishape (https://github.com/Fannovel16/comfyui_controlnet_aux/pull/74)

+* Fixed LeRes and MiDaS's incomatipility with MPS device

+* Fixed checking DWPose onnxruntime session multiple times: https://github.com/Fannovel16/comfyui_controlnet_aux/issues/89)

+* Added `Anime Face Segmentor` (in `ControlNet Preprocessors/Semantic Segmentation`) for [ControlNet AnimeFaceSegmentV2](https://huggingface.co/bdsqlsz/qinglong_controlnet-lllite#animefacesegmentv2). Checks [here](#anime-face-segmentor)

+* Change download functions and fix [download error](https://github.com/Fannovel16/comfyui_controlnet_aux/issues/39): [PR](https://github.com/Fannovel16/comfyui_controlnet_aux/pull/96)

+* Caching DWPose Onnxruntime during the first use of DWPose node instead of ComfyUI startup

+* Added alternative YOLOX models for faster speed when using DWPose

+* Added alternative DWPose models

+* Implemented the preprocessor for [AnimalPose ControlNet](https://github.com/abehonest/ControlNet_AnimalPose/tree/main). Check [Animal Pose AP-10K](#animal-pose-ap-10k)

+* Added YOLO-NAS models which are drop-in replacements of YOLOX

+* Fixed Openpose Face/Hands no longer detecting: https://github.com/Fannovel16/comfyui_controlnet_aux/issues/54

+* Added TorchScript implementation of DWPose and AnimalPose

+* Added TorchScript implementation of DensePose from [Colab notebook](https://colab.research.google.com/drive/16hcaaKs210ivpxjoyGNuvEXZD4eqOOSQ) which doesn't require detectron2. [Example](#densepose). Thanks [@LayerNome](https://github.com/Layer-norm) for fixing bugs related.

+* Added Standard Lineart Preprocessor

+* Fixed OpenPose misplacements in some cases

+* Added Mesh Graphormer - Hand Depth Map & Mask

+* Misaligned hands bug from MeshGraphormer was fixed

+* Added more mask options for MeshGraphormer

+* Added Save Pose Keypoint node for editing

+* Added Unimatch Optical Flow

+* Added Depth Anything & Zoe Depth Anything

+* Removed resolution field from Unimatch Optical Flow as that interpolating optical flow seems unstable

+* Added TEED Soft-Edge Preprocessor

+* Added DiffusionEdge

+* Added Image Luminance and Image Intensity

+* Added Normal DSINE

+* Added TTPlanet Tile (09/05/2024, DD/MM/YYYY)

+* Added AnyLine, Metric3D (18/05/2024)

+* Added Depth Anything V2 (16/06/2024)

+* Added Union model of ControlNet and preprocessors

+

+* Refactor INPUT_TYPES and add Execute All node during the process of learning [Execution Model Inversion](https://github.com/comfyanonymous/ComfyUI/pull/2666)

+* Added scale_stick_for_xinsr_cn (https://github.com/Fannovel16/comfyui_controlnet_aux/issues/447) (09/04/2024)

+* PyTorch 2.7 compatibility fixes - eliminated custom_timm, custom_detectron2, and custom_midas_repo dependencies causing hanging issues. Refactored 7 major preprocessors including OneFormer (now using HuggingFace transformers), ZOE, DSINE, MiDaS, BAE, Metric3D, and Uniformer. Resolved ~59 GitHub issues related to import failures, hanging, and extension conflicts. Full modernization to actively maintained packages.

\ No newline at end of file

diff --git a/__init__.py b/__init__.py

new file mode 100644

index 0000000000000000000000000000000000000000..0de222b1bcab8a10fc4da2af24b305381cea8002

--- /dev/null

+++ b/__init__.py

@@ -0,0 +1,224 @@

+import sys, os

+

+# Disable NPU device initialization and problematic MMCV ops to prevent RuntimeError

+# Must be set BEFORE any MMCV imports happen anywhere in ComfyUI

+os.environ['NPU_DEVICE_COUNT'] = '0'

+os.environ['MMCV_WITH_OPS'] = '0'

+from .utils import here, define_preprocessor_inputs, INPUT

+from pathlib import Path

+import traceback

+import importlib

+from .log import log, blue_text, cyan_text, get_summary, get_label

+from .hint_image_enchance import NODE_CLASS_MAPPINGS as HIE_NODE_CLASS_MAPPINGS

+from .hint_image_enchance import NODE_DISPLAY_NAME_MAPPINGS as HIE_NODE_DISPLAY_NAME_MAPPINGS

+#Ref: https://github.com/comfyanonymous/ComfyUI/blob/76d53c4622fc06372975ed2a43ad345935b8a551/nodes.py#L17

+sys.path.insert(0, str(Path(here, "src").resolve()))

+for pkg_name in ["custom_controlnet_aux", "custom_mmpkg"]:

+ sys.path.append(str(Path(here, "src", pkg_name).resolve()))

+

+#Enable CPU fallback for ops not being supported by MPS like upsample_bicubic2d.out

+#https://github.com/pytorch/pytorch/issues/77764

+#https://github.com/Fannovel16/comfyui_controlnet_aux/issues/2#issuecomment-1763579485

+os.environ["PYTORCH_ENABLE_MPS_FALLBACK"] = os.getenv("PYTORCH_ENABLE_MPS_FALLBACK", '1')

+

+

+def load_nodes():

+ shorted_errors = []

+ full_error_messages = []

+ node_class_mappings = {}

+ node_display_name_mappings = {}

+

+ for filename in (here / "node_wrappers").iterdir():

+ module_name = filename.stem

+ if module_name.startswith('.'): continue #Skip hidden files created by the OS (e.g. [.DS_Store](https://en.wikipedia.org/wiki/.DS_Store))

+ try:

+ module = importlib.import_module(

+ f".node_wrappers.{module_name}", package=__package__

+ )

+ node_class_mappings.update(getattr(module, "NODE_CLASS_MAPPINGS"))

+ if hasattr(module, "NODE_DISPLAY_NAME_MAPPINGS"):

+ node_display_name_mappings.update(getattr(module, "NODE_DISPLAY_NAME_MAPPINGS"))

+

+ log.debug(f"Imported {module_name} nodes")

+

+ except AttributeError:

+ pass # wip nodes

+ except Exception:

+ error_message = traceback.format_exc()

+ full_error_messages.append(error_message)

+ error_message = error_message.splitlines()[-1]

+ shorted_errors.append(

+ f"Failed to import module {module_name} because {error_message}"

+ )

+

+ if len(shorted_errors) > 0:

+ full_err_log = '\n\n'.join(full_error_messages)

+ print(f"\n\nFull error log from comfyui_controlnet_aux: \n{full_err_log}\n\n")

+ log.info(

+ f"Some nodes failed to load:\n\t"

+ + "\n\t".join(shorted_errors)

+ + "\n\n"

+ + "Check that you properly installed the dependencies.\n"

+ + "If you think this is a bug, please report it on the github page (https://github.com/Fannovel16/comfyui_controlnet_aux/issues)"

+ )

+ return node_class_mappings, node_display_name_mappings

+

+AUX_NODE_MAPPINGS, AUX_DISPLAY_NAME_MAPPINGS = load_nodes()

+

+#For nodes not mapping image to image or has special requirements

+AIO_NOT_SUPPORTED = ["InpaintPreprocessor", "MeshGraphormer+ImpactDetector-DepthMapPreprocessor", "DiffusionEdge_Preprocessor"]

+AIO_NOT_SUPPORTED += ["SavePoseKpsAsJsonFile", "FacialPartColoringFromPoseKps", "UpperBodyTrackingFromPoseKps", "RenderPeopleKps", "RenderAnimalKps"]

+AIO_NOT_SUPPORTED += ["Unimatch_OptFlowPreprocessor", "MaskOptFlow"]

+

+def preprocessor_options():

+ auxs = list(AUX_NODE_MAPPINGS.keys())

+ auxs.insert(0, "none")

+ for name in AIO_NOT_SUPPORTED:

+ if name in auxs:

+ auxs.remove(name)

+ return auxs

+

+

+PREPROCESSOR_OPTIONS = preprocessor_options()

+

+class AIO_Preprocessor:

+ @classmethod

+ def INPUT_TYPES(s):

+ return define_preprocessor_inputs(

+ preprocessor=INPUT.COMBO(PREPROCESSOR_OPTIONS, default="none"),

+ resolution=INPUT.RESOLUTION()

+ )

+

+ RETURN_TYPES = ("IMAGE",)

+ FUNCTION = "execute"

+

+ CATEGORY = "ControlNet Preprocessors"

+

+ def execute(self, preprocessor, image, resolution=512):

+ if preprocessor == "none":

+ return (image, )

+ else:

+ aux_class = AUX_NODE_MAPPINGS[preprocessor]

+ input_types = aux_class.INPUT_TYPES()

+ input_types = {

+ **input_types["required"],

+ **(input_types["optional"] if "optional" in input_types else {})

+ }

+ params = {}

+ for name, input_type in input_types.items():

+ if name == "image":

+ params[name] = image

+ continue

+

+ if name == "resolution":

+ params[name] = resolution

+ continue

+

+ if len(input_type) == 2 and ("default" in input_type[1]):

+ params[name] = input_type[1]["default"]

+ continue

+

+ default_values = { "INT": 0, "FLOAT": 0.0 }

+ if type(input_type[0]) is list:

+ for input_type_value in input_type[0]:

+ if input_type_value in default_values:

+ params[name] = default_values[input_type[0]]

+ else:

+ if input_type[0] in default_values:

+ params[name] = default_values[input_type[0]]

+

+ return getattr(aux_class(), aux_class.FUNCTION)(**params)

+

+class ControlNetAuxSimpleAddText:

+ @classmethod

+ def INPUT_TYPES(s):

+ return dict(

+ required=dict(image=INPUT.IMAGE(), text=INPUT.STRING())

+ )

+

+ RETURN_TYPES = ("IMAGE",)

+ FUNCTION = "execute"

+ CATEGORY = "ControlNet Preprocessors"

+ def execute(self, image, text):

+ from PIL import Image, ImageDraw, ImageFont

+ import numpy as np

+ import torch

+

+ font = ImageFont.truetype(str((here / "NotoSans-Regular.ttf").resolve()), 40)

+ img = Image.fromarray(image[0].cpu().numpy().__mul__(255.).astype(np.uint8))

+ ImageDraw.Draw(img).text((0,0), text, fill=(0,255,0), font=font)

+ return (torch.from_numpy(np.array(img)).unsqueeze(0) / 255.,)

+

+class ExecuteAllControlNetPreprocessors:

+ @classmethod

+ def INPUT_TYPES(s):

+ return define_preprocessor_inputs(resolution=INPUT.RESOLUTION())

+ RETURN_TYPES = ("IMAGE",)

+ FUNCTION = "execute"

+

+ CATEGORY = "ControlNet Preprocessors"

+

+ def execute(self, image, resolution=512):

+ try:

+ from comfy_execution.graph_utils import GraphBuilder

+ except:

+ raise RuntimeError("ExecuteAllControlNetPreprocessor requries [Execution Model Inversion](https://github.com/comfyanonymous/ComfyUI/commit/5cfe38). Update ComfyUI/SwarmUI to get this feature")

+

+ graph = GraphBuilder()

+ curr_outputs = []

+ for preprocc in PREPROCESSOR_OPTIONS:

+ preprocc_node = graph.node("AIO_Preprocessor", preprocessor=preprocc, image=image, resolution=resolution)

+ hint_img = preprocc_node.out(0)

+ add_text_node = graph.node("ControlNetAuxSimpleAddText", image=hint_img, text=preprocc)

+ curr_outputs.append(add_text_node.out(0))

+

+ while len(curr_outputs) > 1:

+ _outputs = []

+ for i in range(0, len(curr_outputs), 2):

+ if i+1 < len(curr_outputs):

+ image_batch = graph.node("ImageBatch", image1=curr_outputs[i], image2=curr_outputs[i+1])

+ _outputs.append(image_batch.out(0))

+ else:

+ _outputs.append(curr_outputs[i])

+ curr_outputs = _outputs

+

+ return {

+ "result": (curr_outputs[0],),

+ "expand": graph.finalize(),

+ }

+

+class ControlNetPreprocessorSelector:

+ @classmethod

+ def INPUT_TYPES(s):

+ return {

+ "required": {

+ "preprocessor": (PREPROCESSOR_OPTIONS,),

+ }

+ }

+

+ RETURN_TYPES = (PREPROCESSOR_OPTIONS,)

+ RETURN_NAMES = ("preprocessor",)

+ FUNCTION = "get_preprocessor"

+

+ CATEGORY = "ControlNet Preprocessors"

+

+ def get_preprocessor(self, preprocessor: str):

+ return (preprocessor,)

+

+

+NODE_CLASS_MAPPINGS = {

+ **AUX_NODE_MAPPINGS,

+ "AIO_Preprocessor": AIO_Preprocessor,

+ "ControlNetPreprocessorSelector": ControlNetPreprocessorSelector,

+ **HIE_NODE_CLASS_MAPPINGS,

+ "ExecuteAllControlNetPreprocessors": ExecuteAllControlNetPreprocessors,

+ "ControlNetAuxSimpleAddText": ControlNetAuxSimpleAddText

+}

+

+NODE_DISPLAY_NAME_MAPPINGS = {

+ **AUX_DISPLAY_NAME_MAPPINGS,

+ "AIO_Preprocessor": "AIO Aux Preprocessor",

+ "ControlNetPreprocessorSelector": "Preprocessor Selector",

+ **HIE_NODE_DISPLAY_NAME_MAPPINGS,

+ "ExecuteAllControlNetPreprocessors": "Execute All ControlNet Preprocessors"

+}

diff --git a/config.example.yaml b/config.example.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..6734ad02ba275e4f3dd820e448f841a4cd156dd3

--- /dev/null

+++ b/config.example.yaml

@@ -0,0 +1,20 @@

+# this is an example for config.yaml file, you can rename it to config.yaml if you want to use it

+# ###############################################################################################

+# This path is for custom pressesor models base folder. default is "./ckpts"

+# you can also use absolute paths like: "/root/ComfyUI/custom_nodes/comfyui_controlnet_aux/ckpts" or "D:\\ComfyUI\\custom_nodes\\comfyui_controlnet_aux\\ckpts"

+annotator_ckpts_path: "./ckpts"

+# ###############################################################################################

+# This path is for downloading temporary files.

+# You SHOULD use absolute path for this like"D:\\temp", DO NOT use relative paths. Empty for default.

+custom_temp_path:

+# ###############################################################################################

+# if you already have downloaded ckpts via huggingface hub into default cache path like: ~/.cache/huggingface/hub, you can set this True to use symlinks to save space

+USE_SYMLINKS: False

+# ###############################################################################################

+# EP_list is a list of execution providers for onnxruntime, if one of them is not available or not working well, you can delete that provider from here(config.yaml)

+# you can find all available providers here: https://onnxruntime.ai/docs/execution-providers

+# for example, if you have CUDA installed, you can set it to: ["CUDAExecutionProvider", "CPUExecutionProvider"]

+# empty list or only keep ["CPUExecutionProvider"] means you use cv2.dnn.readNetFromONNX to load onnx models

+# if your onnx models can only run on the CPU or have other issues, we recommend using pt model instead.

+# default value is ["CUDAExecutionProvider", "DirectMLExecutionProvider", "OpenVINOExecutionProvider", "ROCMExecutionProvider", "CPUExecutionProvider"]

+EP_list: ["CUDAExecutionProvider", "DirectMLExecutionProvider", "OpenVINOExecutionProvider", "ROCMExecutionProvider", "CPUExecutionProvider"]

diff --git a/dev_interface.py b/dev_interface.py

new file mode 100644

index 0000000000000000000000000000000000000000..3c08abdbbbdfa154f3b09c64d63ce7c517491337

--- /dev/null

+++ b/dev_interface.py

@@ -0,0 +1,6 @@

+from pathlib import Path

+from utils import here

+import sys

+sys.path.append(str(Path(here, "src")))

+

+from custom_controlnet_aux import *

\ No newline at end of file

diff --git a/examples/CNAuxBanner.jpg b/examples/CNAuxBanner.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..42621fb16301b731b9f8853b77cef9f3a209d370

--- /dev/null

+++ b/examples/CNAuxBanner.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:ee78fe95215ac7cedec155f7df25707858b11add1f15c8a06e978deeef8a1666

+size 589690

diff --git a/examples/ExecuteAll.png b/examples/ExecuteAll.png

new file mode 100644

index 0000000000000000000000000000000000000000..238e96e327b8a0a2c738591f91a0a77b96a7909c

--- /dev/null

+++ b/examples/ExecuteAll.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:f9e9d1f3cb4d13005818cf7c14e04be0635b44e180776dce7f02f715e246d18e

+size 10007102

diff --git a/examples/ExecuteAll1.jpg b/examples/ExecuteAll1.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..c01db1275c3e6d8a334d6d5b38f9c6cafbd4af1d

--- /dev/null

+++ b/examples/ExecuteAll1.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:6750043f6866ec52ae348ce108f8b7361c6e30a744cef162f289bbb2296cdad9

+size 1171712

diff --git a/examples/ExecuteAll2.jpg b/examples/ExecuteAll2.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..03796e2d40734f108d454bfaa073f7dfe82d239a

--- /dev/null

+++ b/examples/ExecuteAll2.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:281478f1d39ab9d2ba8b0aa5d3ab33a34c4dc993e078b3bcca9ffbf024f2505b

+size 1021442

diff --git a/examples/comfyui-controlnet-aux-logo.png b/examples/comfyui-controlnet-aux-logo.png

new file mode 100644

index 0000000000000000000000000000000000000000..28082eb86a7cf726c9d783fa99e8cd1a980ea232

--- /dev/null

+++ b/examples/comfyui-controlnet-aux-logo.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:5e564b8171c2cdd6a3056c079dd0e096cfcd877225c318c63962500f13c313c8

+size 710215

diff --git a/examples/example_animal_pose.png b/examples/example_animal_pose.png

new file mode 100644

index 0000000000000000000000000000000000000000..2c2e34821a9f173dd35b2203347bd4b5eb9cce0f

--- /dev/null

+++ b/examples/example_animal_pose.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:53d5485dfe3c1d512773e3fbcb063b8cb5ff2a9b4d9bd814d41120e4636d7711

+size 722786

diff --git a/examples/example_anime_face_segmentor.png b/examples/example_anime_face_segmentor.png

new file mode 100644

index 0000000000000000000000000000000000000000..2c8a589a8037a5b86769167fba475f460a5d90c8

--- /dev/null

+++ b/examples/example_anime_face_segmentor.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:561a225461ba56ffb33491dab0bd2b9d053cffb21c98a46a4281f53725a0f863

+size 483000

diff --git a/examples/example_anyline.png b/examples/example_anyline.png

new file mode 100644

index 0000000000000000000000000000000000000000..9369205d566123d59fe72b18995805f093f9962b

--- /dev/null

+++ b/examples/example_anyline.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:28e2ffe0c96d7c7d44c45ff10c6754ef9741c638caa06c619774f82a0d4e12c5

+size 379500

diff --git a/examples/example_densepose.png b/examples/example_densepose.png

new file mode 100644

index 0000000000000000000000000000000000000000..bbfc71c830280ab21041bec457b4d41675f461f2

--- /dev/null

+++ b/examples/example_densepose.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:89e5840fe9ba3be4cecd5842de7c16fd36d36b5ded597c316a4479f668e6e420

+size 277796

diff --git a/examples/example_depth_anything.png b/examples/example_depth_anything.png

new file mode 100644

index 0000000000000000000000000000000000000000..733e4a974c731fc7b2e5b2b33aebb3f7c531a032

--- /dev/null

+++ b/examples/example_depth_anything.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:9c6da6f0b1a4656a193a1797c2b235889c45cd8f08ae4405ebf95d1fe6d2aa70

+size 448662

diff --git a/examples/example_depth_anything_v2.png b/examples/example_depth_anything_v2.png

new file mode 100644

index 0000000000000000000000000000000000000000..b47e60a901e22302d6f20234714cd14cd3d484b9

--- /dev/null

+++ b/examples/example_depth_anything_v2.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:7b3f78b0ba41d13f8097722f936822f81acf1df0b318d2319c352dab7576c03b

+size 184526

diff --git a/examples/example_dsine.png b/examples/example_dsine.png

new file mode 100644

index 0000000000000000000000000000000000000000..98eeed056a32fae7635c5fc735a5101d34387ca7

--- /dev/null

+++ b/examples/example_dsine.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:f136a86046455848b35847cabf0f0801c37b117d52beebdbdb21f5dccfde3291

+size 651038

diff --git a/examples/example_marigold.png b/examples/example_marigold.png

new file mode 100644

index 0000000000000000000000000000000000000000..c0f916bbff15708ccb4c927a37289ddc9bbea0c3

--- /dev/null

+++ b/examples/example_marigold.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:d3da4c5a5e934e1912b67a737df9aec3b12b893403b650545bdc69b06a006038

+size 661365

diff --git a/examples/example_marigold_flat.jpg b/examples/example_marigold_flat.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..a2f304371400aed1bdbb1894aea76f7a5d2ba79e

--- /dev/null

+++ b/examples/example_marigold_flat.jpg

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:7182b223c53093d28cfd6c34e3d26083658eefac0bc22f0f88a801b589dcdee1

+size 300798

diff --git a/examples/example_mesh_graphormer.png b/examples/example_mesh_graphormer.png

new file mode 100644

index 0000000000000000000000000000000000000000..90b162d7c91beda88ccf81a4358ba44914596fd2

--- /dev/null

+++ b/examples/example_mesh_graphormer.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:d7692c5df1ee107b95c02455eea4e88f878b59313a98b5447736c9c417c0e182

+size 5481152

diff --git a/examples/example_metric3d.png b/examples/example_metric3d.png

new file mode 100644

index 0000000000000000000000000000000000000000..be3bbeb0ed4ab2a6848c227e3d95600601064a08

--- /dev/null

+++ b/examples/example_metric3d.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:080c52e5f27d1cba63368f6ad277b2a2f9b13433e6d8621436f5ad926157917d

+size 249587

diff --git a/examples/example_onnx.png b/examples/example_onnx.png

new file mode 100644

index 0000000000000000000000000000000000000000..f3f9ad5a45e2ce33b03883446b55fb487c059a00

Binary files /dev/null and b/examples/example_onnx.png differ

diff --git a/examples/example_recolor.png b/examples/example_recolor.png

new file mode 100644

index 0000000000000000000000000000000000000000..4750a3396835adb520620931ef3f73cd3d58ab62

--- /dev/null

+++ b/examples/example_recolor.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:cc0eecdecf9f698dbca0b38e08834cfd5f232c12a11b63945e75e6af7b3ce366

+size 730211

diff --git a/examples/example_save_kps.png b/examples/example_save_kps.png

new file mode 100644

index 0000000000000000000000000000000000000000..c6b97029d486eefa180b18bae209cf44678fd78c

--- /dev/null

+++ b/examples/example_save_kps.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:ec9f6d86d0d620458e6f8b85a4e7798525c5d50b314e81b7e8234182edc3a62b

+size 409884

diff --git a/examples/example_teed.png b/examples/example_teed.png

new file mode 100644

index 0000000000000000000000000000000000000000..520d2fe6e5cdf1ab0a9db4a1e31001ab4a96cb29

--- /dev/null

+++ b/examples/example_teed.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:aea1e8799a1d600a64a900dc8b71b07f30e86e30f12777db3e5dec7039afa7ce

+size 531265

diff --git a/examples/example_torchscript.png b/examples/example_torchscript.png

new file mode 100644

index 0000000000000000000000000000000000000000..6d50d424372d01015dd0a91fa4656823237f7de1

--- /dev/null

+++ b/examples/example_torchscript.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:056c3a6d78503b3d4aa4a6556e53498b34593b0220d7d447f654304ac0209fa6

+size 109440

diff --git a/examples/example_unimatch.png b/examples/example_unimatch.png

new file mode 100644

index 0000000000000000000000000000000000000000..60f77d14ccea695774a51b613938ba7ea778b04a

--- /dev/null

+++ b/examples/example_unimatch.png

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:09a4b0f71140532e0a0c679bdff9023f5e01012df1294eafffc08ced5848acb8

+size 249664

diff --git a/hint_image_enchance.py b/hint_image_enchance.py

new file mode 100644

index 0000000000000000000000000000000000000000..cb2a06974d2e23e53218706248d6340ed99de61a

--- /dev/null

+++ b/hint_image_enchance.py

@@ -0,0 +1,233 @@

+from .log import log

+from .utils import ResizeMode, safe_numpy

+import numpy as np

+import torch

+import cv2

+from .utils import get_unique_axis0

+from .lvminthin import nake_nms, lvmin_thin

+

+MAX_IMAGEGEN_RESOLUTION = 8192 #https://github.com/comfyanonymous/ComfyUI/blob/c910b4a01ca58b04e5d4ab4c747680b996ada02b/nodes.py#L42

+RESIZE_MODES = [ResizeMode.RESIZE.value, ResizeMode.INNER_FIT.value, ResizeMode.OUTER_FIT.value]

+

+#Port from https://github.com/Mikubill/sd-webui-controlnet/blob/e67e017731aad05796b9615dc6eadce911298ea1/internal_controlnet/external_code.py#L89

+class PixelPerfectResolution:

+ @classmethod

+ def INPUT_TYPES(s):

+ return {

+ "required": {

+ "original_image": ("IMAGE", ),

+ "image_gen_width": ("INT", {"default": 512, "min": 64, "max": MAX_IMAGEGEN_RESOLUTION, "step": 8}),

+ "image_gen_height": ("INT", {"default": 512, "min": 64, "max": MAX_IMAGEGEN_RESOLUTION, "step": 8}),

+ #https://github.com/comfyanonymous/ComfyUI/blob/c910b4a01ca58b04e5d4ab4c747680b996ada02b/nodes.py#L854

+ "resize_mode": (RESIZE_MODES, {"default": ResizeMode.RESIZE.value})

+ }

+ }

+

+ RETURN_TYPES = ("INT",)

+ RETURN_NAMES = ("RESOLUTION (INT)", )

+ FUNCTION = "execute"

+

+ CATEGORY = "ControlNet Preprocessors"

+

+ def execute(self, original_image, image_gen_width, image_gen_height, resize_mode):

+ _, raw_H, raw_W, _ = original_image.shape

+

+ k0 = float(image_gen_height) / float(raw_H)

+ k1 = float(image_gen_width) / float(raw_W)

+

+ if resize_mode == ResizeMode.OUTER_FIT.value:

+ estimation = min(k0, k1) * float(min(raw_H, raw_W))

+ else:

+ estimation = max(k0, k1) * float(min(raw_H, raw_W))

+

+ log.debug(f"Pixel Perfect Computation:")

+ log.debug(f"resize_mode = {resize_mode}")

+ log.debug(f"raw_H = {raw_H}")

+ log.debug(f"raw_W = {raw_W}")

+ log.debug(f"target_H = {image_gen_height}")

+ log.debug(f"target_W = {image_gen_width}")

+ log.debug(f"estimation = {estimation}")

+

+ return (int(np.round(estimation)), )

+

+class HintImageEnchance:

+ @classmethod

+ def INPUT_TYPES(s):

+ return {

+ "required": {

+ "hint_image": ("IMAGE", ),

+ "image_gen_width": ("INT", {"default": 512, "min": 64, "max": MAX_IMAGEGEN_RESOLUTION, "step": 8}),

+ "image_gen_height": ("INT", {"default": 512, "min": 64, "max": MAX_IMAGEGEN_RESOLUTION, "step": 8}),

+ #https://github.com/comfyanonymous/ComfyUI/blob/c910b4a01ca58b04e5d4ab4c747680b996ada02b/nodes.py#L854

+ "resize_mode": (RESIZE_MODES, {"default": ResizeMode.RESIZE.value})

+ }

+ }

+

+ RETURN_TYPES = ("IMAGE",)

+ FUNCTION = "execute"

+

+ CATEGORY = "ControlNet Preprocessors"

+ def execute(self, hint_image, image_gen_width, image_gen_height, resize_mode):

+ outs = []

+ for single_hint_image in hint_image:

+ np_hint_image = np.asarray(single_hint_image * 255., dtype=np.uint8)

+

+ if resize_mode == ResizeMode.RESIZE.value:

+ np_hint_image = self.execute_resize(np_hint_image, image_gen_width, image_gen_height)

+ elif resize_mode == ResizeMode.OUTER_FIT.value:

+ np_hint_image = self.execute_outer_fit(np_hint_image, image_gen_width, image_gen_height)

+ else:

+ np_hint_image = self.execute_inner_fit(np_hint_image, image_gen_width, image_gen_height)

+

+ outs.append(torch.from_numpy(np_hint_image.astype(np.float32) / 255.0))

+

+ return (torch.stack(outs, dim=0),)

+

+ def execute_resize(self, detected_map, w, h):

+ detected_map = self.high_quality_resize(detected_map, (w, h))

+ detected_map = safe_numpy(detected_map)

+ return detected_map

+

+ def execute_outer_fit(self, detected_map, w, h):

+ old_h, old_w, _ = detected_map.shape

+ old_w = float(old_w)

+ old_h = float(old_h)

+ k0 = float(h) / old_h

+ k1 = float(w) / old_w

+ safeint = lambda x: int(np.round(x))

+ k = min(k0, k1)

+

+ borders = np.concatenate([detected_map[0, :, :], detected_map[-1, :, :], detected_map[:, 0, :], detected_map[:, -1, :]], axis=0)

+ high_quality_border_color = np.median(borders, axis=0).astype(detected_map.dtype)

+ if len(high_quality_border_color) == 4:

+ # Inpaint hijack

+ high_quality_border_color[3] = 255

+ high_quality_background = np.tile(high_quality_border_color[None, None], [h, w, 1])

+ detected_map = self.high_quality_resize(detected_map, (safeint(old_w * k), safeint(old_h * k)))

+ new_h, new_w, _ = detected_map.shape

+ pad_h = max(0, (h - new_h) // 2)

+ pad_w = max(0, (w - new_w) // 2)

+ high_quality_background[pad_h:pad_h + new_h, pad_w:pad_w + new_w] = detected_map

+ detected_map = high_quality_background

+ detected_map = safe_numpy(detected_map)

+ return detected_map

+

+ def execute_inner_fit(self, detected_map, w, h):

+ old_h, old_w, _ = detected_map.shape

+ old_w = float(old_w)

+ old_h = float(old_h)

+ k0 = float(h) / old_h

+ k1 = float(w) / old_w

+ safeint = lambda x: int(np.round(x))

+ k = max(k0, k1)

+

+ detected_map = self.high_quality_resize(detected_map, (safeint(old_w * k), safeint(old_h * k)))

+ new_h, new_w, _ = detected_map.shape

+ pad_h = max(0, (new_h - h) // 2)

+ pad_w = max(0, (new_w - w) // 2)

+ detected_map = detected_map[pad_h:pad_h+h, pad_w:pad_w+w]

+ detected_map = safe_numpy(detected_map)

+ return detected_map

+

+ def high_quality_resize(self, x, size):

+ # Written by lvmin

+ # Super high-quality control map up-scaling, considering binary, seg, and one-pixel edges

+

+ inpaint_mask = None

+ if x.ndim == 3 and x.shape[2] == 4:

+ inpaint_mask = x[:, :, 3]

+ x = x[:, :, 0:3]

+

+ if x.shape[0] != size[1] or x.shape[1] != size[0]:

+ new_size_is_smaller = (size[0] * size[1]) < (x.shape[0] * x.shape[1])

+ new_size_is_bigger = (size[0] * size[1]) > (x.shape[0] * x.shape[1])

+ unique_color_count = len(get_unique_axis0(x.reshape(-1, x.shape[2])))

+ is_one_pixel_edge = False

+ is_binary = False

+ if unique_color_count == 2:

+ is_binary = np.min(x) < 16 and np.max(x) > 240

+ if is_binary:

+ xc = x

+ xc = cv2.erode(xc, np.ones(shape=(3, 3), dtype=np.uint8), iterations=1)

+ xc = cv2.dilate(xc, np.ones(shape=(3, 3), dtype=np.uint8), iterations=1)

+ one_pixel_edge_count = np.where(xc < x)[0].shape[0]

+ all_edge_count = np.where(x > 127)[0].shape[0]

+ is_one_pixel_edge = one_pixel_edge_count * 2 > all_edge_count

+

+ if 2 < unique_color_count < 200:

+ interpolation = cv2.INTER_NEAREST

+ elif new_size_is_smaller:

+ interpolation = cv2.INTER_AREA

+ else:

+ interpolation = cv2.INTER_CUBIC # Must be CUBIC because we now use nms. NEVER CHANGE THIS

+

+ y = cv2.resize(x, size, interpolation=interpolation)

+ if inpaint_mask is not None:

+ inpaint_mask = cv2.resize(inpaint_mask, size, interpolation=interpolation)

+

+ if is_binary:

+ y = np.mean(y.astype(np.float32), axis=2).clip(0, 255).astype(np.uint8)

+ if is_one_pixel_edge:

+ y = nake_nms(y)

+ _, y = cv2.threshold(y, 0, 255, cv2.THRESH_BINARY + cv2.THRESH_OTSU)

+ y = lvmin_thin(y, prunings=new_size_is_bigger)

+ else:

+ _, y = cv2.threshold(y, 0, 255, cv2.THRESH_BINARY + cv2.THRESH_OTSU)

+ y = np.stack([y] * 3, axis=2)

+ else:

+ y = x

+

+ if inpaint_mask is not None:

+ inpaint_mask = (inpaint_mask > 127).astype(np.float32) * 255.0

+ inpaint_mask = inpaint_mask[:, :, None].clip(0, 255).astype(np.uint8)

+ y = np.concatenate([y, inpaint_mask], axis=2)

+

+ return y

+

+

+class ImageGenResolutionFromLatent:

+ @classmethod

+ def INPUT_TYPES(s):

+ return {

+ "required": { "latent": ("LATENT", ) }

+ }

+

+ RETURN_TYPES = ("INT", "INT")

+ RETURN_NAMES = ("IMAGE_GEN_WIDTH (INT)", "IMAGE_GEN_HEIGHT (INT)")

+ FUNCTION = "execute"

+

+ CATEGORY = "ControlNet Preprocessors"

+

+ def execute(self, latent):

+ _, _, H, W = latent["samples"].shape

+ return (W * 8, H * 8)

+

+class ImageGenResolutionFromImage:

+ @classmethod

+ def INPUT_TYPES(s):

+ return {

+ "required": { "image": ("IMAGE", ) }

+ }

+

+ RETURN_TYPES = ("INT", "INT")

+ RETURN_NAMES = ("IMAGE_GEN_WIDTH (INT)", "IMAGE_GEN_HEIGHT (INT)")

+ FUNCTION = "execute"

+

+ CATEGORY = "ControlNet Preprocessors"

+

+ def execute(self, image):

+ _, H, W, _ = image.shape

+ return (W, H)

+

+NODE_CLASS_MAPPINGS = {

+ "PixelPerfectResolution": PixelPerfectResolution,

+ "ImageGenResolutionFromImage": ImageGenResolutionFromImage,

+ "ImageGenResolutionFromLatent": ImageGenResolutionFromLatent,

+ "HintImageEnchance": HintImageEnchance

+}

+NODE_DISPLAY_NAME_MAPPINGS = {

+ "PixelPerfectResolution": "Pixel Perfect Resolution",

+ "ImageGenResolutionFromImage": "Generation Resolution From Image",

+ "ImageGenResolutionFromLatent": "Generation Resolution From Latent",

+ "HintImageEnchance": "Enchance And Resize Hint Images"

+}

\ No newline at end of file

diff --git a/install.bat b/install.bat

new file mode 100644

index 0000000000000000000000000000000000000000..c36a67448534a5febc2a83d4ebef4cfa49fa6deb

--- /dev/null

+++ b/install.bat

@@ -0,0 +1,20 @@

+@echo off

+

+set "requirements_txt=%~dp0\requirements.txt"

+set "python_exec=..\..\..\python_embeded\python.exe"

+

+echo Installing ComfyUI's ControlNet Auxiliary Preprocessors..

+

+if exist "%python_exec%" (

+ echo Installing with ComfyUI Portable

+ for /f "delims=" %%i in (%requirements_txt%) do (

+ %python_exec% -s -m pip install "%%i"

+ )

+) else (

+ echo Installing with system Python

+ for /f "delims=" %%i in (%requirements_txt%) do (

+ pip install "%%i"

+ )

+)

+

+pause

\ No newline at end of file

diff --git a/log.py b/log.py

new file mode 100644

index 0000000000000000000000000000000000000000..2978c6dc770c78feddc2d0dece7c0b6a91ed23f0

--- /dev/null

+++ b/log.py

@@ -0,0 +1,80 @@

+#Cre: https://github.com/melMass/comfy_mtb/blob/main/log.py

+import logging

+import re

+import os

+

+base_log_level = logging.INFO

+

+

+# Custom object that discards the output

+class NullWriter:

+ def write(self, text):

+ pass

+

+

+class Formatter(logging.Formatter):

+ grey = "\x1b[38;20m"

+ cyan = "\x1b[36;20m"

+ purple = "\x1b[35;20m"

+ yellow = "\x1b[33;20m"

+ red = "\x1b[31;20m"

+ bold_red = "\x1b[31;1m"

+ reset = "\x1b[0m"

+ # format = "%(asctime)s - [%(name)s] - %(levelname)s - %(message)s (%(filename)s:%(lineno)d)"

+ format = "[%(name)s] | %(levelname)s -> %(message)s"

+

+ FORMATS = {

+ logging.DEBUG: purple + format + reset,

+ logging.INFO: cyan + format + reset,

+ logging.WARNING: yellow + format + reset,