41

new

- .gitattributes +3 -0

- requirements.txt +0 -0

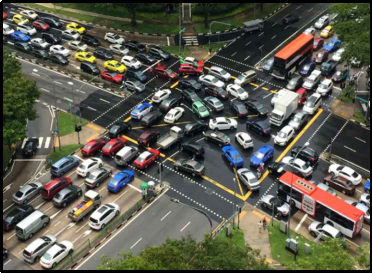

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/README.md +52 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/__pycache__/tracker.cpython-312.pyc +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/__pycache__/tracker.cpython-37.pyc +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/coco.names +80 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/data.csv +3 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/car1.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/car2.jpg +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/bike.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/bus.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/car.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/rickshaw.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/truck.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/intersection.jpg +3 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/bike.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/bus.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/car.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/rickshaw.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/truck.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/mod_int.png +3 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/bike.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/bus.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/car.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/rickshaw.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/truck.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/signals/green.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/signals/red.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/signals/yellow.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/bike.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/bus.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/car.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/rickshaw.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/truck.png +0 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/requirements.txt +1 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/simulation.py +511 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/tracker.py +56 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/vehicle_count.py +209 -0

- traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/yolov3-320.cfg +788 -0

- yolov3-320.weights +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,6 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/intersection.jpg filter=lfs diff=lfs merge=lfs -text
+traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/mod_int.png filter=lfs diff=lfs merge=lfs -text
+yolov3-320.weights filter=lfs diff=lfs merge=lfs -text
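The three added `.gitattributes` lines route two large images and the YOLOv3 weights file through Git LFS. As a rough illustration of what those patterns select, the sketch below checks a path against a few of the `filter=lfs` entries. The helper name `is_lfs_tracked` is hypothetical, and the matching rule (slash-free patterns match the file name at any depth) is a simplification of Git's real attribute matching, not a reimplementation of it.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# A few of the entries from the .gitattributes hunk above.
GITATTRIBUTES = """\
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
yolov3-320.weights filter=lfs diff=lfs merge=lfs -text
"""


def is_lfs_tracked(path, attributes=GITATTRIBUTES):
    """Approximate check: does any filter=lfs pattern match this path?"""
    for line in attributes.splitlines():
        parts = line.split()
        if len(parts) < 2 or "filter=lfs" not in parts[1:]:
            continue
        pattern = parts[0]
        # Assumption: slash-free patterns match the file name at any depth;
        # patterns containing a slash match the repository-relative path.
        target = path if "/" in pattern else PurePosixPath(path).name
        if fnmatch(target, pattern):
            return True
    return False


print(is_lfs_tracked("yolov3-320.weights"))
print(is_lfs_tracked("simulation.py"))
```

For authoritative answers on a real checkout, `git check-attr filter -- <path>` reports what Git itself resolves.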

requirements.txt

ADDED

Binary file (780 Bytes)

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/README.md

ADDED

@@ -0,0 +1,52 @@
+# Traffic-light-management-using-AI
+Although computer vision is now a highly developed field, its recognition and classification capabilities are not frequently used in day-to-day activities. In this project we therefore make use of the current traffic infrastructure, with some additional requirements, to create and demonstrate an advanced traffic light management system.
+# Abstract
+Increasing traffic congestion at signalized junctions is a matter of great concern for creating and maintaining sustainable cities. In addition to adding to drivers' delays and stress levels, traffic bottlenecks significantly increase fuel usage and pollution. The main reason behind this is the way traffic is controlled at such junctions. Semi-automated and conventional traffic management has reached its saturation point and can no longer handle high-density traffic flow with complex patterns. In this paper, we discuss and compare previous research and work done to solve this problem. Our focus is on techniques that use Machine Learning, such as Computer Vision and Deep Neural Networks.
+# Introduction
+Many road networks are experiencing problems because the rise in the number of automobiles in metropolitan areas has reduced road capacity and the accompanying Level of Service. The bottleneck for this high vehicular flow is the conventional traffic management system used in these cities. Two ways of managing traffic flow are currently in use: semi-automatic traffic lights with fixed timings, or traffic police stationed at junctions to control the flow manually. The problem with traffic lights is that they use a fixed time for each side of the crossroad, irrespective of the density of vehicles on those roads.
+
+
+
+
+Ideally, the road with a higher density of vehicles should be given extra time to pass the junction, as that leads to a lower average waiting time for all vehicles. Relying on manual control of traffic at junctions is inefficient, as a human cannot process all of the information in real time with great accuracy for long periods. Although computer vision is now a highly developed field, its recognition and classification capabilities are not frequently used in day-to-day activities. In this project we therefore use Machine Vision to improve the current state of traffic. We also explore some new methodology and techniques for analysis and decision making for traffic lights using live cameras installed at junctions.
+# Problem Statement
+To build a self-adaptive traffic light control system based on YOLO. Disproportionate and diverse traffic in different lanes leads to inefficient use of an identical time slot for each of them, characterized by slower speeds, longer trip times, and increased vehicular queuing. The goal is to create a system that enables the traffic management system to make time allocation decisions for a particular lane according to the traffic density on the other lanes, with the help of cameras and image processing modules.
+# Methodology
+The solution can be explained in four simple steps:
+1. Get a real-time image of each lane.
+2. Scan and determine traffic density.
+3. Input this data to the Time Allocation module.
+4. The output will be the time slots for each lane, accordingly.

+
+<img src="https://user-images.githubusercontent.com/75363378/207391460-a103d439-ec6b-460f-a7b7-acddeb8c42a2.png" height="500"> <img src="https://user-images.githubusercontent.com/75363378/207391504-bf55def6-0644-4673-99ba-233f63f3c63b.png" width="500" height="500">
+
+# Results and Discussions
+The results of the project can be divided into two parts:
+
+1. Evaluation of Vehicle Detection Model
+The vehicle detection module was tested with a variety of test images containing varying numbers of vehicles, and the detection accuracy was found to be in the range of 75-80%. Some test results are shown above in Fig. 3. This is satisfactory, but not optimal. The primary reason for the low accuracy is the lack of a proper dataset. To improve on this, real-life footage from traffic cameras can be used to train the model.
+We used images from live-feed cameras installed at traffic junctions or at ordinary traffic spots around the city or on its outskirts. These videos or live feeds were fed to the model based on YOLO and OpenCV, which detected each vehicle and its movement, downwards or upwards, i.e., towards the traffic signal or away from it. We were also able to identify the type of each vehicle.
+
+<img src="https://user-images.githubusercontent.com/75363378/207393722-8678178d-4ca3-41d3-a8ab-ffc61a123f9a.png" width="800" height="550">
+<img src="https://user-images.githubusercontent.com/75363378/207393802-b3c7df30-5a11-48f0-baf0-838395017fe3.png" width="800" height="550">
+
+We were also successful in feeding the traffic density derived from the detection module into the model that finds the optimum timings for each traffic lane to pass its vehicles. This follows the principle of giving more priority, i.e. more time, to the lane with higher traffic density and less to relatively uncrowded lanes, while not creating bottlenecks or congestion in any one lane. For this purpose, we created a simple simulation using PyGame.
+
+<img src="https://user-images.githubusercontent.com/75363378/207395269-acc46692-6cd3-4391-b262-17749ada0868.png" width="800" height="550">
+
+
+2. Evaluation of the Proposed Adaptive System
+To evaluate the system, the traffic movement simulation for both systems was run for 5 minutes a total of 15 times, and the total number of vehicles that were able to pass through the junction was counted.
+
+<img src="https://user-images.githubusercontent.com/75363378/207395478-a0ceec2b-21e0-4954-aa3d-732d7bd51bd4.png" width="500">
+
+# References
+[1] <a href="https://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=9358334&isnumber=9358272">M. M. Gandhi, D. S. Solanki, R. S. Daptardar and N. S. Baloorkar, "Smart Control of Traffic Light Using Artificial Intelligence," 2020 5th IEEE International Conference on Recent Advances and Innovations in Engineering (ICRAIE), 2020, pp. 1-6, doi: 10.1109/ICRAIE51050.2020.9358334.</a>
+
+[2] <a href="https://www.researchgate.net/publication/229029935_Intelligent_Traffic_Lights_Control_By_Fuzzy_Logic">Khiang, Kok & Khalid, Marzuki & Yusof, Rubiyah. (1997). Intelligent Traffic Lights Control By Fuzzy Logic. Malaysian Journal of Computer Science. 9. 29-35.</a>
+
+[3] <a href="https://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=6137134&isnumber=6137119">M. H. Malhi, M. H. Aslam, F. Saeed, O. Javed and M. Fraz, "Vision Based Intelligent Traffic Management System," 2011 Frontiers of Information Technology, 2011, pp. 137-141, doi: 10.1109/FIT.2011.33.</a>
+
+[4] <a href="https://www.researchgate.net/publication/328987822_Improving_Traffic_Light_Control_by_Means_of_Fuzzy_Logic">A. Vogel, I. Oremović, R. Šimić and E. Ivanjko, "Improving Traffic Light Control by Means of Fuzzy Logic," 2018 International Symposium ELMAR, 2018, pp. 51-56, doi: 10.23919/ELMAR.2018.8534692.</a>
+
+[5] <a href="https://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=7868350&isnumber=7868337">T. Osman, S. S. Psyche, J. M. Shafi Ferdous and H. U. Zaman, "Intelligent traffic management system for cross section of roads using computer vision," 2017 IEEE 7th Annual Computing and Communication Workshop and Conference (CCWC), 2017, pp. 1-7, doi: 10.1109/CCWC.2017.7868350.</a>
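Steps 3 and 4 of the README's methodology (passing the measured density to the Time Allocation module and getting time slots back) might look roughly like the sketch below. The per-vehicle crossing times and the green-time bounds are the values that appear in `simulation.py` (`carTime`, `bikeTime`, `busTime`, `truckTime`, `defaultMinimum`, `defaultMaximum`). The function name `allocate_green_time` and the rule of dividing the total crossing time by the number of lanes are assumptions made for illustration and may differ from the repository's actual formula.

```python
import math

# Average crossing times in seconds, taken from simulation.py.
CROSSING_TIME = {"car": 2, "bike": 1, "bus": 2.5, "truck": 2.5}
MIN_GREEN = 10   # defaultMinimum in simulation.py
MAX_GREEN = 60   # defaultMaximum in simulation.py


def allocate_green_time(counts, lanes=2):
    """Hypothetical helper: estimate a green time from vehicle counts.

    Assumption: vehicles in parallel lanes cross at the same time, so the
    total crossing time is divided by the number of lanes.
    """
    total = sum(CROSSING_TIME[kind] * n for kind, n in counts.items())
    green = math.ceil(total / lanes)
    # Clamp so that no approach is starved or held green for too long.
    return max(MIN_GREEN, min(MAX_GREEN, green))


busy = allocate_green_time({"car": 20, "bike": 0, "bus": 2, "truck": 0})
quiet = allocate_green_time({"car": 5, "bike": 3, "bus": 2, "truck": 1})
print(busy, quiet)
```

Under these assumptions the busier approach receives roughly twice the green time of the quieter one, which is the behaviour the README describes: more time for denser lanes, bounded so that no lane is blocked indefinitely.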

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/__pycache__/tracker.cpython-312.pyc

ADDED

Binary file (2.08 kB)

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/__pycache__/tracker.cpython-37.pyc

ADDED

Binary file (1.29 kB)

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/coco.names

ADDED

@@ -0,0 +1,80 @@
+person
+bicycle
+car
+motorbike
+aeroplane
+bus
+train
+truck
+boat
+traffic light
+fire hydrant
+stop sign
+parking meter
+bench
+bird
+cat
+dog
+horse
+sheep
+cow
+elephant
+bear
+zebra
+giraffe
+backpack
+umbrella
+handbag
+tie
+suitcase
+frisbee
+skis
+snowboard
+sports ball
+kite
+baseball bat
+baseball glove
+skateboard
+surfboard
+tennis racket
+bottle
+wine glass
+cup
+fork
+knife
+spoon
+bowl
+banana
+apple
+sandwich
+orange
+broccoli
+carrot
+hot dog
+pizza
+donut
+cake
+chair
+sofa
+pottedplant
+bed
+diningtable
+toilet
+tvmonitor
+laptop
+mouse
+remote
+keyboard
+cell phone
+microwave
+oven
+toaster
+sink
+refrigerator
+book
+clock
+vase
+scissors
+teddy bear
+hair drier
+toothbrush
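`data.csv` in this commit has columns for `car`, `motorbike`, `bus` and `truck`, so a counting script presumably only needs those entries of `coco.names`. A minimal sketch of looking up their zero-based class indices follows. The abbreviated `COCO_NAMES` list and the helper name `vehicle_class_indices` are illustrative assumptions; the real script may select classes differently.

```python
# First ten entries of coco.names, in file order (index = line number - 1).
COCO_NAMES = [
    "person", "bicycle", "car", "motorbike", "aeroplane",
    "bus", "train", "truck", "boat", "traffic light",
]
VEHICLE_CLASSES = ["car", "motorbike", "bus", "truck"]


def vehicle_class_indices(names, wanted):
    """Hypothetical helper: map each wanted class name to its index."""
    return {name: names.index(name) for name in wanted}


indices = vehicle_class_indices(COCO_NAMES, VEHICLE_CLASSES)
print(indices)
```

A detector that reports class IDs can then be filtered by checking whether the ID is among `indices.values()`.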

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/data.csv

ADDED

@@ -0,0 +1,3 @@
+Direction,car,motorbike,bus,truck
+Up,20,0,2,0
+Down,5,3,2,1
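As a quick illustration of how the per-direction counts in `data.csv` could be consumed, the sketch below parses the same three lines with the standard `csv` module and totals the vehicles per direction. The helper name `totals_by_direction` is hypothetical, and the CSV text is embedded as a string only to keep the example self-contained.

```python
import csv
import io

# Contents of data.csv as added in this commit.
DATA_CSV = """Direction,car,motorbike,bus,truck
Up,20,0,2,0
Down,5,3,2,1
"""


def totals_by_direction(text):
    """Hypothetical helper: total vehicle count per direction."""
    totals = {}
    for row in csv.DictReader(io.StringIO(text)):
        direction = row.pop("Direction")
        totals[direction] = sum(int(value) for value in row.values())
    return totals


totals = totals_by_direction(DATA_CSV)
print(totals)
```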

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/car1.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/car2.jpg

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/bike.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/bus.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/car.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/rickshaw.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/down/truck.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/intersection.jpg

ADDED

|

Git LFS Details

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/bike.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/bus.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/car.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/rickshaw.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/left/truck.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/mod_int.png

ADDED

|

Git LFS Details

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/bike.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/bus.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/car.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/rickshaw.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/right/truck.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/signals/green.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/signals/red.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/signals/yellow.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/bike.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/bus.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/car.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/rickshaw.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/images/up/truck.png

ADDED

|

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/requirements.txt

ADDED

@@ -0,0 +1 @@
+Download the YoloV3 weights file from here: https://drive.google.com/file/d/1RuNsdS9MgumwTFwVqI9rwSQJwk8qSAQS/view?usp=sharing

traffic-light-management-using-AI-main/traffic-light-management-using-AI-main/simulation.py

ADDED

@@ -0,0 +1,511 @@
+# LAG
+# NO. OF VEHICLES IN SIGNAL CLASS
+# stops not used
+# DISTRIBUTION
+# BUS TOUCHING ON TURNS
+# Distribution using python class
+
+# *** IMAGE XY COOD IS TOP LEFT
+import random
+import math
+import time
+import threading
+# from vehicle_detection import detection
+import pygame
+import sys
+import os
+
+# options={
+# 'model':'./cfg/yolo.cfg', #specifying the path of model
+# 'load':'./bin/yolov2.weights', #weights
+# 'threshold':0.3 #minimum confidence factor to create a box, greater than 0.3 good
+# }
+
+# tfnet=TFNet(options) #READ ABOUT TFNET
+
+# Default values of signal times
+defaultRed = 150
+defaultYellow = 5
+defaultGreen = 20
+defaultMinimum = 10
+defaultMaximum = 60
+
+signals = []
+noOfSignals = 4
+simTime = 300 # change this to change time of simulation
+timeElapsed = 0
+
+currentGreen = 0 # Indicates which signal is green
+nextGreen = (currentGreen+1)%noOfSignals
+currentYellow = 0 # Indicates whether yellow signal is on or off
+
+# Average times for vehicles to pass the intersection
+carTime = 2
+bikeTime = 1
+busTime = 2.5
+truckTime = 2.5
+
+# Count of cars at a traffic signal
+noOfCars = 0
+noOfBikes = 0
+noOfBuses = 0
+noOfTrucks = 0
+noOfLanes = 2
+
+# Red signal time at which cars will be detected at a signal
+detectionTime = 5
+
+speeds = {'car': 2.25, 'bus': 1.8, 'truck': 1.8, 'bike': 2.5} # average speeds of vehicles

+
+# Coordinates of start
+x = {'right':[0,0,0], 'down':[755,727,697], 'left':[1400,1400,1400], 'up':[602,627,657]}
+y = {'right':[348,370,398], 'down':[0,0,0], 'left':[498,466,436], 'up':[800,800,800]}
+
+vehicles = {'right': {0:[], 1:[], 2:[], 'crossed':0}, 'down': {0:[], 1:[], 2:[], 'crossed':0}, 'left': {0:[], 1:[], 2:[], 'crossed':0}, 'up': {0:[], 1:[], 2:[], 'crossed':0}}
+vehicleTypes = {0: 'car', 1: 'bus', 2: 'truck', 3: 'bike'}
+directionNumbers = {0:'right', 1:'down', 2:'left', 3:'up'}
+
+# Coordinates of signal image, timer, and vehicle count
+signalCoods = [(530,230),(810,230),(810,570),(530,570)]
+signalTimerCoods = [(530,210),(810,210),(810,550),(530,550)]
+vehicleCountCoods = [(480,210),(880,210),(880,550),(480,550)]
+vehicleCountTexts = ["0", "0", "0", "0"]
+
+# Coordinates of stop lines
+stopLines = {'right': 590, 'down': 330, 'left': 800, 'up': 535}
+defaultStop = {'right': 580, 'down': 320, 'left': 810, 'up': 545}
+stops = {'right': [580,580,580], 'down': [320,320,320], 'left': [810,810,810], 'up': [545,545,545]}
+
+mid = {'right': {'x':705, 'y':445}, 'down': {'x':695, 'y':450}, 'left': {'x':695, 'y':425}, 'up': {'x':695, 'y':400}}
+rotationAngle = 3
+
+# Gap between vehicles
+gap = 15 # stopping gap
+gap2 = 15 # moving gap
+
+pygame.init()
+simulation = pygame.sprite.Group()
+
+class TrafficSignal:
+    def __init__(self, red, yellow, green, minimum, maximum):
+        self.red = red
+        self.yellow = yellow
+        self.green = green
+        self.minimum = minimum
+        self.maximum = maximum
+        self.signalText = "30"
+        self.totalGreenTime = 0
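To show how the `TrafficSignal` fields and the `currentGreen`/`nextGreen` indices might fit together, here is a small stand-alone sketch of a round-robin signal cycle. It re-declares a simplified `TrafficSignal` as a dataclass and introduces a hypothetical `advance()` helper. How the real script initialises red times and switches signals is not shown in this part of the diff, so the staggered red times below are an assumption.

```python
from dataclasses import dataclass

NO_OF_SIGNALS = 4      # noOfSignals in simulation.py
DEFAULT_YELLOW = 5     # defaultYellow in simulation.py
DEFAULT_GREEN = 20     # defaultGreen in simulation.py


@dataclass
class TrafficSignal:
    red: int
    yellow: int
    green: int


def advance(current_green):
    """Hypothetical helper: index of the signal that turns green next."""
    return (current_green + 1) % NO_OF_SIGNALS


# Assumption: each signal stays red while the signals ahead of it in the
# cycle run through their green and yellow phases.
signals = []
red = 0
for _ in range(NO_OF_SIGNALS):
    signals.append(TrafficSignal(red, DEFAULT_YELLOW, DEFAULT_GREEN))
    red += DEFAULT_GREEN + DEFAULT_YELLOW

order = [0]
for _ in range(NO_OF_SIGNALS):
    order.append(advance(order[-1]))

print(order)
print([signal.red for signal in signals])
```

The modulo in `advance()` is the same expression the script uses to initialise `nextGreen`, so the cycle wraps from the last signal back to the first.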

+
+class Vehicle(pygame.sprite.Sprite):
+    def __init__(self, lane, vehicleClass, direction_number, direction, will_turn):
+        pygame.sprite.Sprite.__init__(self)
+        self.lane = lane
+        self.vehicleClass = vehicleClass
+        self.speed = speeds[vehicleClass]
+        self.direction_number = direction_number
+        self.direction = direction
+        self.x = x[direction][lane]
+        self.y = y[direction][lane]
+        self.crossed = 0
+        self.willTurn = will_turn
+        self.turned = 0
+        self.rotateAngle = 0
+        vehicles[direction][lane].append(self)
+        # self.stop = stops[direction][lane]
+        self.index = len(vehicles[direction][lane]) - 1
+        path = "images/" + direction + "/" + vehicleClass + ".png"
+        self.originalImage = pygame.image.load(path)
+        self.currentImage = pygame.image.load(path)
+
+
+        if(direction=='right'):
+            if(len(vehicles[direction][lane])>1 and vehicles[direction][lane][self.index-1].crossed==0): # if more than 1 vehicle in the lane of vehicle before it has crossed stop line
+                self.stop = vehicles[direction][lane][self.index-1].stop - vehicles[direction][lane][self.index-1].currentImage.get_rect().width - gap # setting stop coordinate as: stop coordinate of next vehicle - width of next vehicle - gap
+            else:
+                self.stop = defaultStop[direction]
+            # Set new starting and stopping coordinate
+            temp = self.currentImage.get_rect().width + gap
+            x[direction][lane] -= temp
+            stops[direction][lane] -= temp
+        elif(direction=='left'):
+            if(len(vehicles[direction][lane])>1 and vehicles[direction][lane][self.index-1].crossed==0):
+                self.stop = vehicles[direction][lane][self.index-1].stop + vehicles[direction][lane][self.index-1].currentImage.get_rect().width + gap
+            else:
+                self.stop = defaultStop[direction]
+            temp = self.currentImage.get_rect().width + gap
+            x[direction][lane] += temp
+            stops[direction][lane] += temp
+        elif(direction=='down'):
+            if(len(vehicles[direction][lane])>1 and vehicles[direction][lane][self.index-1].crossed==0):
+                self.stop = vehicles[direction][lane][self.index-1].stop - vehicles[direction][lane][self.index-1].currentImage.get_rect().height - gap
+            else:
+                self.stop = defaultStop[direction]
+            temp = self.currentImage.get_rect().height + gap
+            y[direction][lane] -= temp
+            stops[direction][lane] -= temp
+        elif(direction=='up'):
+            if(len(vehicles[direction][lane])>1 and vehicles[direction][lane][self.index-1].crossed==0):
+                self.stop = vehicles[direction][lane][self.index-1].stop + vehicles[direction][lane][self.index-1].currentImage.get_rect().height + gap
+            else:
+                self.stop = defaultStop[direction]
+            temp = self.currentImage.get_rect().height + gap
+            y[direction][lane] += temp
+            stops[direction][lane] += temp
+        simulation.add(self)

|

| 155 |

+

|
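The four direction branches in the constructor above apply the same rule with different signs: a queued vehicle stops behind the vehicle ahead, offset by that vehicle's length plus a gap. The sketch below restates the rule without pygame. The function name `queued_stop` and the sample numbers are hypothetical, and `lead_length` stands in for the image width (for `right`/`left`) or height (for `down`/`up`).

```python
def queued_stop(direction, lead_stop, lead_length, gap):
    """Stop coordinate for a vehicle queued behind another one.

    Vehicles heading 'right' or 'down' move toward larger coordinates,
    so the follower stops at a smaller coordinate than its leader.
    Vehicles heading 'left' or 'up' are mirrored.
    """
    if direction not in ("right", "down", "left", "up"):
        raise ValueError(f"unknown direction: {direction!r}")
    sign = -1 if direction in ("right", "down") else 1
    return lead_stop + sign * (lead_length + gap)


# Hypothetical values: leader stops at 590, is 40 px long, gap is 15 px.
print(queued_stop("right", 590, 40, 15))
print(queued_stop("left", 800, 40, 15))
```

Collapsing the branches this way is only one option; the diff keeps them separate, which makes the per-direction update of `x`, `y`, and `stops` explicit.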

| 156 |

+

def render(self, screen):

|

| 157 |

+

screen.blit(self.currentImage, (self.x, self.y))

|

| 158 |

+

|

| 159 |

+

def move(self):

|

| 160 |

+

if(self.direction=='right'):

|

| 161 |

+

if(self.crossed==0 and self.x+self.currentImage.get_rect().width>stopLines[self.direction]): # if the image has crossed stop line now

|

| 162 |

+

self.crossed = 1

|

| 163 |

+

vehicles[self.direction]['crossed'] += 1

|

| 164 |

+

if(self.willTurn==1):

|

| 165 |

+

if(self.crossed==0 or self.x+self.currentImage.get_rect().width<mid[self.direction]['x']):

|

| 166 |

+

if((self.x+self.currentImage.get_rect().width<=self.stop or (currentGreen==0 and currentYellow==0) or self.crossed==1) and (self.index==0 or self.x+self.currentImage.get_rect().width<(vehicles[self.direction][self.lane][self.index-1].x - gap2) or vehicles[self.direction][self.lane][self.index-1].turned==1)):

|

| 167 |

+

self.x += self.speed

|

| 168 |

+

else:

|

| 169 |

+

if(self.turned==0):

|

| 170 |

+

self.rotateAngle += rotationAngle

|

| 171 |

+

self.currentImage = pygame.transform.rotate(self.originalImage, -self.rotateAngle)

|

| 172 |

+

self.x += 2

|

| 173 |

+

self.y += 1.8

|

| 174 |

+

if(self.rotateAngle==90):

|

| 175 |

+

self.turned = 1

|

| 176 |

+

# path = "images/" + directionNumbers[((self.direction_number+1)%noOfSignals)] + "/" + self.vehicleClass + ".png"

|

| 177 |

+

# self.x = mid[self.direction]['x']

|

| 178 |

+

# self.y = mid[self.direction]['y']

|

| 179 |

+

# self.image = pygame.image.load(path)

|

| 180 |

+

else:

|

| 181 |

+

if(self.index==0 or self.y+self.currentImage.get_rect().height<(vehicles[self.direction][self.lane][self.index-1].y - gap2) or self.x+self.currentImage.get_rect().width<(vehicles[self.direction][self.lane][self.index-1].x - gap2)):

|

| 182 |

+

self.y += self.speed

|

| 183 |

+

else:

|

| 184 |

+

if((self.x+self.currentImage.get_rect().width<=self.stop or self.crossed == 1 or (currentGreen==0 and currentYellow==0)) and (self.index==0 or self.x+self.currentImage.get_rect().width<(vehicles[self.direction][self.lane][self.index-1].x - gap2) or (vehicles[self.direction][self.lane][self.index-1].turned==1))):

|

| 185 |

+

# (the vehicle has not reached its stop coordinate, or has crossed the stop line, or has a green signal) and (it is the first vehicle in its lane, or has enough gap to the vehicle ahead, or the vehicle ahead has turned)

|

| 186 |

+

self.x += self.speed # move the vehicle

|

| 187 |

+

|

| 188 |

+

|

| 189 |

+

|

| 190 |

+

elif(self.direction=='down'):

|

| 191 |

+

if(self.crossed==0 and self.y+self.currentImage.get_rect().height>stopLines[self.direction]):

|

| 192 |

+

self.crossed = 1

|

| 193 |

+

vehicles[self.direction]['crossed'] += 1

|

| 194 |

+

if(self.willTurn==1):

|

| 195 |

+

if(self.crossed==0 or self.y+self.currentImage.get_rect().height<mid[self.direction]['y']):

|

| 196 |

+

if((self.y+self.currentImage.get_rect().height<=self.stop or (currentGreen==1 and currentYellow==0) or self.crossed==1) and (self.index==0 or self.y+self.currentImage.get_rect().height<(vehicles[self.direction][self.lane][self.index-1].y - gap2) or vehicles[self.direction][self.lane][self.index-1].turned==1)):

|

| 197 |

+

self.y += self.speed

|

| 198 |

+

else:

|

| 199 |

+

if(self.turned==0):

|

| 200 |

+

self.rotateAngle += rotationAngle

|

| 201 |

+

self.currentImage = pygame.transform.rotate(self.originalImage, -self.rotateAngle)

|

| 202 |

+

self.x -= 2.5

|

| 203 |

+

self.y += 2

|

| 204 |

+

if(self.rotateAngle==90):

|

| 205 |

+

self.turned = 1

|

| 206 |

+

else:

|

| 207 |

+

if(self.index==0 or self.x>(vehicles[self.direction][self.lane][self.index-1].x + vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().width + gap2) or self.y<(vehicles[self.direction][self.lane][self.index-1].y - gap2)):

|

| 208 |

+

self.x -= self.speed

|

| 209 |

+

else:

|

| 210 |

+

if((self.y+self.currentImage.get_rect().height<=self.stop or self.crossed == 1 or (currentGreen==1 and currentYellow==0)) and (self.index==0 or self.y+self.currentImage.get_rect().height<(vehicles[self.direction][self.lane][self.index-1].y - gap2) or (vehicles[self.direction][self.lane][self.index-1].turned==1))):

|

| 211 |

+

self.y += self.speed

|

| 212 |

+

|

| 213 |

+

elif(self.direction=='left'):

|

| 214 |

+

if(self.crossed==0 and self.x<stopLines[self.direction]):

|

| 215 |

+

self.crossed = 1

|

| 216 |

+

vehicles[self.direction]['crossed'] += 1

|

| 217 |

+

if(self.willTurn==1):

|

| 218 |

+

if(self.crossed==0 or self.x>mid[self.direction]['x']):

|

| 219 |

+

if((self.x>=self.stop or (currentGreen==2 and currentYellow==0) or self.crossed==1) and (self.index==0 or self.x>(vehicles[self.direction][self.lane][self.index-1].x + vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().width + gap2) or vehicles[self.direction][self.lane][self.index-1].turned==1)):

|

| 220 |

+

self.x -= self.speed

|

| 221 |

+

else:

|

| 222 |

+

if(self.turned==0):

|

| 223 |

+

self.rotateAngle += rotationAngle

|

| 224 |

+

self.currentImage = pygame.transform.rotate(self.originalImage, -self.rotateAngle)

|

| 225 |

+

self.x -= 1.8

|

| 226 |

+

self.y -= 2.5

|

| 227 |

+

if(self.rotateAngle==90):

|

| 228 |

+

self.turned = 1

|

| 229 |

+

# path = "images/" + directionNumbers[((self.direction_number+1)%noOfSignals)] + "/" + self.vehicleClass + ".png"

|

| 230 |

+

# self.x = mid[self.direction]['x']

|

| 231 |

+

# self.y = mid[self.direction]['y']

|

| 232 |

+

# self.currentImage = pygame.image.load(path)

|

| 233 |

+

else:

|

| 234 |

+

if(self.index==0 or self.y>(vehicles[self.direction][self.lane][self.index-1].y + vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().height + gap2) or self.x>(vehicles[self.direction][self.lane][self.index-1].x + gap2)):

|

| 235 |

+

self.y -= self.speed

|

| 236 |

+

else:

|

| 237 |

+

if((self.x>=self.stop or self.crossed == 1 or (currentGreen==2 and currentYellow==0)) and (self.index==0 or self.x>(vehicles[self.direction][self.lane][self.index-1].x + vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().width + gap2) or (vehicles[self.direction][self.lane][self.index-1].turned==1))):

|

| 238 |

+

# (the vehicle has not reached its stop coordinate, or has crossed the stop line, or has a green signal) and (it is the first vehicle in its lane, or has enough gap to the vehicle ahead, or the vehicle ahead has turned)

|

| 239 |

+

self.x -= self.speed # move the vehicle

|

| 240 |

+

# if((self.x>=self.stop or self.crossed == 1 or (currentGreen==2 and currentYellow==0)) and (self.index==0 or self.x>(vehicles[self.direction][self.lane][self.index-1].x + vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().width + gap2))):

|

| 241 |

+

# self.x -= self.speed

|

| 242 |

+

elif(self.direction=='up'):

|

| 243 |

+

if(self.crossed==0 and self.y<stopLines[self.direction]):

|

| 244 |

+

self.crossed = 1

|

| 245 |

+

vehicles[self.direction]['crossed'] += 1

|

| 246 |

+

if(self.willTurn==1):

|

| 247 |

+

if(self.crossed==0 or self.y>mid[self.direction]['y']):

|

| 248 |

+

if((self.y>=self.stop or (currentGreen==3 and currentYellow==0) or self.crossed == 1) and (self.index==0 or self.y>(vehicles[self.direction][self.lane][self.index-1].y + vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().height + gap2) or vehicles[self.direction][self.lane][self.index-1].turned==1)):

|

| 249 |

+

self.y -= self.speed

|

| 250 |

+

else:

|

| 251 |

+

if(self.turned==0):

|

| 252 |

+

self.rotateAngle += rotationAngle

|

| 253 |

+

self.currentImage = pygame.transform.rotate(self.originalImage, -self.rotateAngle)

|

| 254 |

+

self.x += 1

|

| 255 |

+

self.y -= 1

|

| 256 |

+

if(self.rotateAngle==90):

|

| 257 |

+

self.turned = 1

|

| 258 |

+

else:

|

| 259 |

+

if(self.index==0 or self.x<(vehicles[self.direction][self.lane][self.index-1].x - vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().width - gap2) or self.y>(vehicles[self.direction][self.lane][self.index-1].y + gap2)):

|

| 260 |

+

self.x += self.speed

|

| 261 |

+

else:

|

| 262 |

+

if((self.y>=self.stop or self.crossed == 1 or (currentGreen==3 and currentYellow==0)) and (self.index==0 or self.y>(vehicles[self.direction][self.lane][self.index-1].y + vehicles[self.direction][self.lane][self.index-1].currentImage.get_rect().height + gap2) or (vehicles[self.direction][self.lane][self.index-1].turned==1))):

|

| 263 |

+

self.y -= self.speed

|

| 264 |

+

|

| 265 |

+

# Initialization of signals with default values

|

| 266 |

+

def initialize():

|

| 267 |

+

ts1 = TrafficSignal(0, defaultYellow, defaultGreen, defaultMinimum, defaultMaximum)

|

| 268 |

+

signals.append(ts1)

|

| 269 |

+

ts2 = TrafficSignal(ts1.red+ts1.yellow+ts1.green, defaultYellow, defaultGreen, defaultMinimum, defaultMaximum)

|

| 270 |

+

signals.append(ts2)

|

| 271 |

+

ts3 = TrafficSignal(defaultRed, defaultYellow, defaultGreen, defaultMinimum, defaultMaximum)

|

| 272 |

+

signals.append(ts3)

|

| 273 |

+

ts4 = TrafficSignal(defaultRed, defaultYellow, defaultGreen, defaultMinimum, defaultMaximum)

|

| 274 |

+

signals.append(ts4)

|

| 275 |

+

repeat()

|

| 276 |

+

|

| 277 |

+

# Set time according to formula

|

| 278 |

+

def setTime():

|

| 279 |

+

global noOfCars, noOfBikes, noOfBuses, noOfTrucks, noOfLanes

|

| 280 |

+

global carTime, busTime, truckTime, bikeTime

|

| 281 |

+

os.system("say detecting vehicles, "+directionNumbers[(currentGreen+1)%noOfSignals])

|

| 282 |

+

# detection_result=detection(currentGreen,tfnet)

|

| 283 |

+

# greenTime = math.ceil(((noOfCars*carTime) + (noOfBuses*busTime) + (noOfBikes*bikeTime))/(noOfLanes+1))

|

| 284 |

+

# if(greenTime<defaultMinimum):

|

| 285 |

+

# greenTime = defaultMinimum

|

| 286 |

+

# elif(greenTime>defaultMaximum):

|

| 287 |

+

# greenTime = defaultMaximum

|

| 288 |

+

# greenTime = len(vehicles[currentGreen][0])+len(vehicles[currentGreen][1])+len(vehicles[currentGreen][2])

|

| 289 |

+

# noOfVehicles = len(vehicles[directionNumbers[nextGreen]][1])+len(vehicles[directionNumbers[nextGreen]][2])-vehicles[directionNumbers[nextGreen]]['crossed']

|

| 290 |

+

# print("no. of vehicles = ",noOfVehicles)

|

| 291 |

+

noOfCars, noOfBuses, noOfTrucks, noOfBikes = 0,0,0,0

|

| 292 |

+

for j in range(len(vehicles[directionNumbers[nextGreen]][0])):

|

| 293 |

+

vehicle = vehicles[directionNumbers[nextGreen]][0][j]

|

| 294 |

+

if(vehicle.crossed==0):

|

| 295 |

+

vclass = vehicle.vehicleClass

|

| 296 |

+

# print(vclass)

|

| 297 |

+

noOfBikes += 1

|

| 298 |

+

for i in range(1,3):

|

| 299 |

+

for j in range(len(vehicles[directionNumbers[nextGreen]][i])):

|

| 300 |

+

vehicle = vehicles[directionNumbers[nextGreen]][i][j]

|

| 301 |

+

if(vehicle.crossed==0):

|

| 302 |

+

vclass = vehicle.vehicleClass

|

| 303 |

+

# print(vclass)

|

| 304 |

+

if(vclass=='car'):

|

| 305 |

+

noOfCars += 1

|

| 306 |

+

elif(vclass=='bus'):

|

| 307 |

+

noOfBuses += 1

|

| 308 |

+

elif(vclass=='truck'):

|

| 309 |

+

noOfTrucks += 1

|

| 310 |

+

# print(noOfCars)

|

| 311 |

+

greenTime = math.ceil(((noOfCars*carTime) + (noOfBuses*busTime) + (noOfTrucks*truckTime)+ (noOfBikes*bikeTime))/(noOfLanes+1))

|

| 312 |

+

# greenTime = math.ceil((noOfVehicles)/noOfLanes)

|

| 313 |

+

print('Green Time: ',greenTime)

|

| 314 |

+

if(greenTime<defaultMinimum):

|

| 315 |

+

greenTime = defaultMinimum

|

| 316 |

+

elif(greenTime>defaultMaximum):

|

| 317 |

+

greenTime = defaultMaximum

|

| 318 |

+

# greenTime = random.randint(15,50)

|

| 319 |

+

signals[(currentGreen+1)%(noOfSignals)].green = greenTime

|

| 320 |

+

|
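The green-time rule in `setTime()` above is a weighted vehicle count divided by `noOfLanes + 1`, rounded up, then clamped to the minimum and maximum. A minimal standalone sketch of that calculation follows. The function name `green_time` and every numeric value in the example are assumptions chosen for illustration; the real per-class times and limits are defined outside this section.

```python
import math


def green_time(counts, seconds_per_vehicle, lanes, minimum, maximum):
    """Clamp ceil(sum(count * time) / (lanes + 1)) to [minimum, maximum]."""
    weighted = sum(counts[kind] * seconds_per_vehicle[kind] for kind in counts)
    raw = math.ceil(weighted / (lanes + 1))
    return max(minimum, min(maximum, raw))


# Illustrative inputs only (not taken from the diff).
times = {"car": 2, "bus": 2.5, "truck": 2.5, "bike": 1}
waiting = {"car": 10, "bus": 2, "truck": 1, "bike": 5}
print(green_time(waiting, times, lanes=2, minimum=10, maximum=60))
```

With these sample inputs the weighted sum is 32.5, which divided by 3 and rounded up gives 11 seconds, inside the assumed 10 to 60 second limits.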

| 321 |

+

def repeat():

|

| 322 |

+

global currentGreen, currentYellow, nextGreen

|

| 323 |

+

while(signals[currentGreen].green>0): # while the timer of current green signal is not zero

|

| 324 |

+

printStatus()

|

| 325 |

+

updateValues()

|

| 326 |

+

if(signals[(currentGreen+1)%(noOfSignals)].red==detectionTime): # set time of next green signal

|

| 327 |

+

thread = threading.Thread(name="detection",target=setTime, args=())

|

| 328 |

+

thread.daemon = True

|

| 329 |

+

thread.start()

|

| 330 |

+

# setTime()

|

| 331 |

+

time.sleep(1)

|

| 332 |

+

currentYellow = 1 # set yellow signal on

|

| 333 |

+

vehicleCountTexts[currentGreen] = "0"

|

| 334 |

+

# reset stop coordinates of lanes and vehicles

|

| 335 |

+

for i in range(0,3):

|

| 336 |

+

stops[directionNumbers[currentGreen]][i] = defaultStop[directionNumbers[currentGreen]]

|

| 337 |

+

for vehicle in vehicles[directionNumbers[currentGreen]][i]:

|

| 338 |

+

vehicle.stop = defaultStop[directionNumbers[currentGreen]]

|

| 339 |

+

while(signals[currentGreen].yellow>0): # while the timer of current yellow signal is not zero

|

| 340 |

+

printStatus()

|

| 341 |

+

updateValues()

|

| 342 |

+

time.sleep(1)

|

| 343 |

+

currentYellow = 0 # set yellow signal off

|

| 344 |

+

|

| 345 |

+

# reset all signal times of current signal to default times

|

| 346 |

+

signals[currentGreen].green = defaultGreen

|

| 347 |

+

signals[currentGreen].yellow = defaultYellow

|

| 348 |

+

signals[currentGreen].red = defaultRed

|

| 349 |

+

|

| 350 |

+

currentGreen = nextGreen # set next signal as green signal

|

| 351 |

+

nextGreen = (currentGreen+1)%noOfSignals # set next green signal

|

| 352 |

+

signals[nextGreen].red = signals[currentGreen].yellow+signals[currentGreen].green # red time of the upcoming signal = (yellow time + green time) of the signal that has just turned green

|

| 353 |

+

repeat()

|

| 354 |

+

|
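`repeat()` above ends by calling itself, so each signal cycle adds a frame to the Python call stack. Because a cycle lasts many seconds this is unlikely to matter in short runs, but the same round-robin can be written as a loop. The sketch below is a hypothetical, time-free model of that loop. It is not the author's implementation: `run_cycles` and its arguments are invented for illustration, and it only records the countdown ticks instead of sleeping or updating `signals`.

```python
def run_cycles(green_times, yellow_time, cycles):
    """Return (signal_index, phase, seconds_left) ticks for `cycles` turns.

    Each turn counts one signal's green timer down to zero, then its
    yellow timer, then hands over to the next signal in round-robin order.
    """
    ticks = []
    current = 0
    for _ in range(cycles):
        phases = (("green", green_times[current]), ("yellow", yellow_time))
        for phase, duration in phases:
            for remaining in range(duration, 0, -1):
                ticks.append((current, phase, remaining))
        current = (current + 1) % len(green_times)
    return ticks


for tick in run_cycles([2, 1], yellow_time=1, cycles=2):
    print(tick)
```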

| 355 |

+

# Print the signal timers on cmd

|

| 356 |

+

def printStatus():

|

| 357 |

+

for i in range(0, noOfSignals):

|

| 358 |

+

if(i==currentGreen):

|

| 359 |

+

if(currentYellow==0):

|

| 360 |

+

print(" GREEN TS",i+1,"-> r:",signals[i].red," y:",signals[i].yellow," g:",signals[i].green)

|

| 361 |

+

else:

|

| 362 |

+

print("YELLOW TS",i+1,"-> r:",signals[i].red," y:",signals[i].yellow," g:",signals[i].green)

|

| 363 |

+

else:

|

| 364 |

+

print(" RED TS",i+1,"-> r:",signals[i].red," y:",signals[i].yellow," g:",signals[i].green)

|

| 365 |

+

print()

|

| 366 |

+

|

| 367 |

+

# Update values of the signal timers after every second

|

| 368 |

+

def updateValues():

|

| 369 |

+

for i in range(0, noOfSignals):

|

| 370 |

+

if(i==currentGreen):

|

| 371 |

+

if(currentYellow==0):

|

| 372 |

+

signals[i].green-=1

|

| 373 |

+

signals[i].totalGreenTime+=1

|

| 374 |

+

else:

|

| 375 |

+

signals[i].yellow-=1

|

| 376 |

+

else:

|

| 377 |

+

signals[i].red-=1

|

| 378 |

+

|

| 379 |

+

# Generating vehicles in the simulation

|

| 380 |

+

def generateVehicles():

|

| 381 |

+

while True:

|

| 382 |

+

vehicle_type = random.randint(0, 3)

|

| 383 |

+

if vehicle_type == 3:

|

| 384 |

+

lane_number = 0

|

| 385 |

+

else:

|

| 386 |

+

lane_number = random.randint(0, 1) + 1

|

| 387 |

+

will_turn = 0

|

| 388 |

+

if lane_number == 2:

|

| 389 |

+

temp = random.randint(0, 4)

|

| 390 |

+

if temp <= 2:

|

| 391 |

+

will_turn = 1

|

| 392 |

+

elif temp > 2:

|

| 393 |

+

will_turn = 0

|

| 394 |

+

temp = random.randint(0, 999)

|

| 395 |

+

direction_number = 0

|

| 396 |

+

a = [400, 800, 900, 1000]

|

| 397 |

+

if temp < a[0]:

|

| 398 |

+

direction_number = 0

|

| 399 |

+

elif temp < a[1]:

|

| 400 |

+

direction_number = 1

|

| 401 |

+

elif temp < a[2]:

|

| 402 |

+

direction_number = 2

|

| 403 |

+

elif temp < a[3]:

|

| 404 |

+

direction_number = 3

|

| 405 |

+

Vehicle(lane_number, vehicleTypes[vehicle_type], direction_number, directionNumbers[direction_number], will_turn)

|

| 406 |

+

time.sleep(0.75)

|

| 407 |

+

|
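The `if`/`elif` chain in `generateVehicles()` above maps a random draw in 0..999 to a direction using the cumulative thresholds `[400, 800, 900, 1000]`, which means a 40/40/10/10 percent split. A compact way to express the same lookup is `bisect.bisect_right`. The sketch below assumes the same thresholds; the name `pick_direction` is hypothetical.

```python
import bisect
import random

# Cumulative thresholds copied from the diff: a draw below 400 maps to
# direction 0, below 800 to 1, below 900 to 2, and below 1000 to 3.
THRESHOLDS = [400, 800, 900, 1000]


def pick_direction(draw, thresholds=THRESHOLDS):
    """Index of the first threshold strictly greater than `draw`."""
    return bisect.bisect_right(thresholds, draw)


draw = random.randint(0, 999)
print(draw, pick_direction(draw))
```

`bisect_right` is used rather than `bisect_left` so that a draw equal to a threshold (for example 400) falls into the next bucket, matching the strict `<` comparisons in the original chain.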

| 408 |

+

def simulationTime():

|

| 409 |

+

global timeElapsed, simTime

|

| 410 |

+

while(True):

|

| 411 |

+

timeElapsed += 1

|

| 412 |

+

time.sleep(1)

|

| 413 |

+

if(timeElapsed==simTime):

|

| 414 |

+

totalVehicles = 0

|

| 415 |

+

print('Lane-wise Vehicle Counts')

|

| 416 |

+

for i in range(noOfSignals):

|

| 417 |

+

print('Lane',i+1,':',vehicles[directionNumbers[i]]['crossed'])

|

| 418 |

+

totalVehicles += vehicles[directionNumbers[i]]['crossed']

|

| 419 |

+

print('Total vehicles passed: ',totalVehicles)

|

| 420 |

+

print('Total time passed: ',timeElapsed)

|

| 421 |

+

print('No. of vehicles passed per unit time: ',(float(totalVehicles)/float(timeElapsed)))

|

| 422 |

+

os._exit(1)

|

| 423 |

+

|

| 424 |

+

|

| 425 |

+

class Main:

|

| 426 |

+

thread4 = threading.Thread(name="simulationTime",target=simulationTime, args=())

|

| 427 |

+

thread4.daemon = True

|

| 428 |

+

thread4.start()

|

| 429 |

+

|

| 430 |

+

thread2 = threading.Thread(name="initialization",target=initialize, args=()) # initialization

|

| 431 |

+

thread2.daemon = True

|

| 432 |

+

thread2.start()

|

| 433 |

+

|

| 434 |

+

# Colours

|

| 435 |

+

black = (0, 0, 0)

|

| 436 |

+

white = (255, 255, 255)

|

| 437 |

+

|

| 438 |

+

# Screensize

|

| 439 |

+

screenWidth = 1400

|

| 440 |

+

screenHeight = 800

|

| 441 |

+

screenSize = (screenWidth, screenHeight)

|

| 442 |

+

|

| 443 |

+

# Setting background image i.e. image of intersection

|

| 444 |

+

background = pygame.image.load('images/mod_int.png')

|

| 445 |

+

|

| 446 |

+

screen = pygame.display.set_mode(screenSize)

|

| 447 |

+

pygame.display.set_caption("SIMULATION")

|

| 448 |

+

|

| 449 |

+

# Loading signal images and font

|

| 450 |

+

redSignal = pygame.image.load('images/signals/red.png')

|

| 451 |

+

yellowSignal = pygame.image.load('images/signals/yellow.png')

|

| 452 |

+

greenSignal = pygame.image.load('images/signals/green.png')

|

| 453 |

+

font = pygame.font.Font(None, 30)

|

| 454 |

+

|

| 455 |

+

thread3 = threading.Thread(name="generateVehicles",target=generateVehicles, args=()) # Generating vehicles

|

| 456 |

+

thread3.daemon = True

|

| 457 |

+

thread3.start()

|

| 458 |

+

|

| 459 |

+

while True:

|

| 460 |

+

for event in pygame.event.get():

|

| 461 |

+

if event.type == pygame.QUIT:

|

| 462 |

+

sys.exit()

|

| 463 |

+

|

| 464 |

+

screen.blit(background,(0,0)) # display background in simulation

|

| 465 |

+

for i in range(0,noOfSignals): # display signal and set timer according to current status: green, yellow, or red

|

| 466 |

+

if(i==currentGreen):

|

| 467 |

+

if(currentYellow==1):

|

| 468 |

+

if(signals[i].yellow==0):

|

| 469 |

+

signals[i].signalText = "STOP"

|

| 470 |

+

else:

|

| 471 |

+

signals[i].signalText = signals[i].yellow

|

| 472 |

+

screen.blit(yellowSignal, signalCoods[i])

|

| 473 |

+

else:

|

| 474 |

+

if(signals[i].green==0):

|

| 475 |

+

signals[i].signalText = "SLOW"

|

| 476 |

+

else:

|

| 477 |

+

signals[i].signalText = signals[i].green

|

| 478 |

+

screen.blit(greenSignal, signalCoods[i])

|

| 479 |

+

else:

|

| 480 |

+

if(signals[i].red<=10):

|

| 481 |

+

if(signals[i].red==0):

|

| 482 |

+

signals[i].signalText = "GO"

|

| 483 |

+

else:

|

| 484 |

+

signals[i].signalText = signals[i].red

|

| 485 |

+

else:

|

| 486 |

+

signals[i].signalText = "---"

|

| 487 |

+

screen.blit(redSignal, signalCoods[i])

|

| 488 |

+

signalTexts = ["","","",""]

|

| 489 |

+

|

| 490 |

+

# display signal timer and vehicle count

|

| 491 |

+

for i in range(0,noOfSignals):

|

| 492 |

+

signalTexts[i] = font.render(str(signals[i].signalText), True, white, black)

|

| 493 |

+

screen.blit(signalTexts[i],signalTimerCoods[i])

|

| 494 |

+

displayText = vehicles[directionNumbers[i]]['crossed']

|

| 495 |

+

vehicleCountTexts[i] = font.render(str(displayText), True, black, white)

|

| 496 |

+

screen.blit(vehicleCountTexts[i],vehicleCountCoods[i])