Update README.md

Browse files

README.md

CHANGED

|

@@ -1,46 +1,49 @@

|

|

| 1 |

-

Hugging Face's logo

|

| 2 |

-

---

|

| 3 |

tags:

|

| 4 |

- object-detection

|

| 5 |

- vision

|

| 6 |

-

library_name:

|

| 7 |

datasets:

|

| 8 |

- coco

|

| 9 |

|

| 10 |

---

|

| 11 |

|

| 12 |

-

#

|

| 13 |

|

| 14 |

## Model desription

|

| 15 |

|

| 16 |

-

|

| 17 |

|

| 18 |

*This Model is based on the Pretrained model from [OpenMMlab](https://github.com/open-mmlab/mmdetection)*

|

| 19 |

|

| 20 |

-

|

| 31 |

|

| 32 |

-

##

|

| 33 |

Please [read the paper](https://arxiv.org/pdf/1703.06870.pdf) for more information on training, or check OpenMMLab [repository](https://github.com/open-mmlab/mmdetection/tree/master/configs/mask_rcnn)

|

| 34 |

|

| 35 |

-

|

|

|

|

|

|

|

| 36 |

|

| 37 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

| 38 |

|

| 39 |

#### Results Summary

|

| 40 |

-

-

|

| 41 |

-

-

|

| 42 |

-

- The selective search model takes more time(ms) than the RPN model.

|

| 43 |

-

|

| 44 |

|

| 45 |

## Intended uses & limitations

|

| 46 |

-

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

tags:

|

| 2 |

- object-detection

|

| 3 |

- vision

|

| 4 |

+

library_name: mask_rcnn

|

| 5 |

datasets:

|

| 6 |

- coco

|

| 7 |

|

| 8 |

---

|

| 9 |

|

| 10 |

+

# Mask R-CNN

|

| 11 |

|

| 12 |

## Model desription

|

| 13 |

|

| 14 |

+

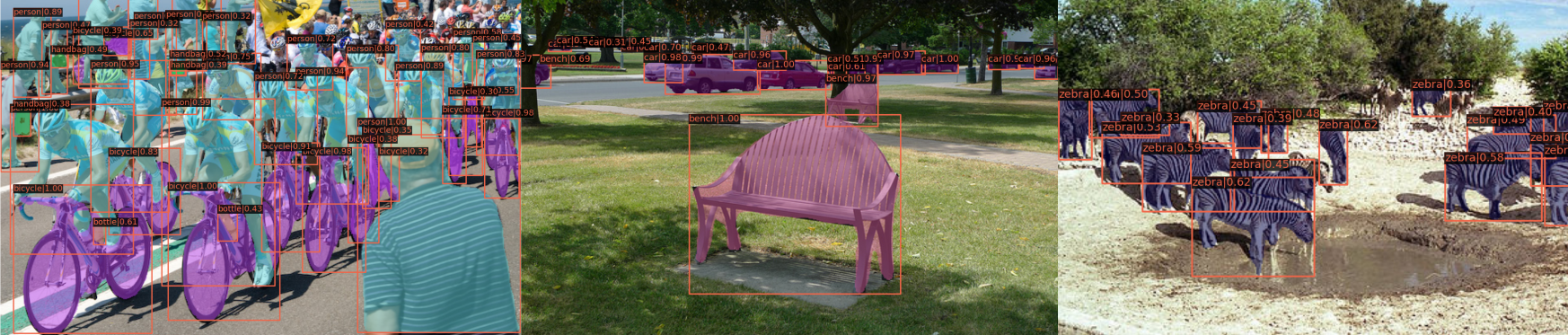

Mask R-CNN is a model that extends Faster R-CNN by adding a branch for predicting an object mask in parallel with the existing branch for bounding box recognition. The model locates pixels of images instead of just bounding boxes as Faster R-CNN was not designed for pixel-to-pixel alignment between network inputs and outputs.

|

| 15 |

|

| 16 |

*This Model is based on the Pretrained model from [OpenMMlab](https://github.com/open-mmlab/mmdetection)*

|

| 17 |

|

| 18 |

+

|

| 19 |

|

| 20 |

+

### More information on the model and dataset:

|

| 21 |

|

| 22 |

#### The model

|

| 23 |

+

Mask R-CNN works towards the approach of instance segmentation, which involves object detection, and semantic segmentation. For object detection, Mask R-CNN uses an architecture that is similar to Faster R-CNN, while it uses a Fully Convolutional Network(FCN) for semantic segmentation.

|

| 24 |

+

The FCN is added to the top of features of a Faster R-CNN to generate a mask segmentation output. This segmentation output is in parallel with the classification and bounding box regressor network of the Faster R-CNN model. From the advancement of Fast R-CNN Region of Interest Pooling(ROI), Mask R-CNN adds refinement called ROI aligning by addressing the loss and misalignment of ROI Pooling; the new ROI aligned leads to improved results.

|

| 25 |

|

|

|

|

| 26 |

|

| 27 |

#### Datasets

|

| 28 |

[COCO Datasets](https://cocodataset.org/#home)

|

| 29 |

|

| 30 |

+

## Training Procedure

|

| 31 |

Please [read the paper](https://arxiv.org/pdf/1703.06870.pdf) for more information on training, or check OpenMMLab [repository](https://github.com/open-mmlab/mmdetection/tree/master/configs/mask_rcnn)

|

| 32 |

|

| 33 |

+

The model architecture is divided into two parts:

|

| 34 |

+

- Region proposal network (RPN) to propose candidate object bounding boxes.

|

| 35 |

+

- Binary mask classifier to generate a mask for every class

|

| 36 |

|

| 37 |

+

#### Technical Summary.

|

| 38 |

+

- Mask R-CNN is quite similar to the structure of faster R-CNN.

|

| 39 |

+

- Outputs a binary mask for each Region of Interest.

|

| 40 |

+

- Applies bounding-box classification and regression in parallel, simplifying the original R-CNN's multi-stage pipeline.

|

| 41 |

+

- The network architectures utilized are called ResNet and ResNeXt. The depth can be either 50 or 101

|

| 42 |

|

| 43 |

#### Results Summary

|

| 44 |

+

- Instance Segmentation: Based on the COCO dataset, Mask R-CNN outperforms all categories compared to MNC and FCIS, which are state-of-the-art models.

|

| 45 |

+

- Bounding Box Detection: Mask R-CNN outperforms the base variants of all previous state-of-the-art models, including the COCO 2016 Detection Challenge winner.

|

|

|

|

|

|

|

| 46 |

|

| 47 |

## Intended uses & limitations

|

| 48 |

+

The identification of object relationships and the context of objects in a picture are both aided by image segmentation. Some of the applications include face recognition, number plate recognition, and satellite image analysis. With great model generality, Mask RCNN can be extended to human pose estimation; it can be used to estimate on-site approaching live traffic to aid autonomous driving.

|

| 49 |

+

|