Audio-to-Audio

Transformers

Safetensors

English

Chinese

qwen2

text-generation

text-generation-inference

How to use cmots/UniSS with Transformers:

```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("cmots/UniSS")
model = AutoModelForCausalLM.from_pretrained("cmots/UniSS")
```

Upload folder using huggingface_hub

- .gitattributes +1 -0

- bicodec/.gitattributes +39 -0

- bicodec/BiCodec/config.yaml +60 -0

- bicodec/BiCodec/model.safetensors +3 -0

- bicodec/README.md +130 -0

- bicodec/config.yaml +7 -0

- bicodec/wav2vec2-large-xlsr-53/README.md +29 -0

- bicodec/wav2vec2-large-xlsr-53/config.json +83 -0

- bicodec/wav2vec2-large-xlsr-53/preprocessor_config.json +9 -0

- bicodec/wav2vec2-large-xlsr-53/pytorch_model.bin +3 -0

- config.json +29 -0

- generation_config.json +14 -0

- glm4_tokenizer/.gitattributes +35 -0

- glm4_tokenizer/LICENSE +70 -0

- glm4_tokenizer/README.md +13 -0

- glm4_tokenizer/config.json +65 -0

- glm4_tokenizer/model.safetensors +3 -0

- glm4_tokenizer/preprocessor_config.json +14 -0

- merges.txt +0 -0

- model.safetensors +3 -0

- tokenizer.json +3 -0

- tokenizer_config.json +0 -0

- vocab.json +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text

bicodec/.gitattributes

ADDED

@@ -0,0 +1,39 @@
+*.7z filter=lfs diff=lfs merge=lfs -text
+*.arrow filter=lfs diff=lfs merge=lfs -text
+*.bin filter=lfs diff=lfs merge=lfs -text
+*.bz2 filter=lfs diff=lfs merge=lfs -text
+*.ckpt filter=lfs diff=lfs merge=lfs -text
+*.ftz filter=lfs diff=lfs merge=lfs -text
+*.gz filter=lfs diff=lfs merge=lfs -text
+*.h5 filter=lfs diff=lfs merge=lfs -text
+*.joblib filter=lfs diff=lfs merge=lfs -text
+*.lfs.* filter=lfs diff=lfs merge=lfs -text
+*.mlmodel filter=lfs diff=lfs merge=lfs -text
+*.model filter=lfs diff=lfs merge=lfs -text
+*.msgpack filter=lfs diff=lfs merge=lfs -text
+*.npy filter=lfs diff=lfs merge=lfs -text
+*.npz filter=lfs diff=lfs merge=lfs -text
+*.onnx filter=lfs diff=lfs merge=lfs -text
+*.ot filter=lfs diff=lfs merge=lfs -text
+*.parquet filter=lfs diff=lfs merge=lfs -text
+*.pb filter=lfs diff=lfs merge=lfs -text
+*.pickle filter=lfs diff=lfs merge=lfs -text
+*.pkl filter=lfs diff=lfs merge=lfs -text
+*.pt filter=lfs diff=lfs merge=lfs -text
+*.pth filter=lfs diff=lfs merge=lfs -text
+*.rar filter=lfs diff=lfs merge=lfs -text
+*.safetensors filter=lfs diff=lfs merge=lfs -text
+saved_model/**/* filter=lfs diff=lfs merge=lfs -text
+*.tar.* filter=lfs diff=lfs merge=lfs -text
+*.tar filter=lfs diff=lfs merge=lfs -text
+*.tflite filter=lfs diff=lfs merge=lfs -text
+*.tgz filter=lfs diff=lfs merge=lfs -text
+*.wasm filter=lfs diff=lfs merge=lfs -text
+*.xz filter=lfs diff=lfs merge=lfs -text
+*.zip filter=lfs diff=lfs merge=lfs -text
+*.zst filter=lfs diff=lfs merge=lfs -text
+*tfevents* filter=lfs diff=lfs merge=lfs -text
+LLM/tokenizer.json filter=lfs diff=lfs merge=lfs -text
+wav2vec2-large-xlsr-53/pytorch_model.bin filter=lfs diff=lfs merge=lfs -text
+LLM/model.safetensors filter=lfs diff=lfs merge=lfs -text
+BiCodec/model.safetensors filter=lfs diff=lfs merge=lfs -text

bicodec/BiCodec/config.yaml

ADDED

@@ -0,0 +1,60 @@
+audio_tokenizer:
+  mel_params:
+    sample_rate: 16000
+    n_fft: 1024
+    win_length: 640
+    hop_length: 320
+    mel_fmin: 10
+    mel_fmax: null
+    num_mels: 128
+
+  encoder:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    sample_ratios: [1,1]
+
+  decoder:
+    input_channel: 1024
+    channels: 1536
+    rates: [8, 5, 4, 2]
+    kernel_sizes: [16,11,8,4]
+
+  quantizer:
+    input_dim: 1024
+    codebook_size: 8192
+    codebook_dim: 8
+    commitment: 0.25
+    codebook_loss_weight: 2.0
+    use_l2_normlize: True
+    threshold_ema_dead_code: 0.2
+
+  speaker_encoder:
+    input_dim: 128
+    out_dim: 1024
+    latent_dim: 128
+    token_num: 32
+    fsq_levels: [4, 4, 4, 4, 4, 4]
+    fsq_num_quantizers: 1
+
+  prenet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 12
+    out_channels: 1024
+    condition_dim: 1024
+    sample_ratios: [1,1]
+    use_tanh_at_final: False
+
+  postnet:
+    input_channels: 1024
+    vocos_dim: 384
+    vocos_intermediate_dim: 2048
+    vocos_num_layers: 6
+    out_channels: 1024
+    use_tanh_at_final: False
+
+
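The `mel_params`, `decoder`, and `speaker_encoder` values in this config imply a few derived quantities that are easy to check by hand. The sketch below is illustrative only: the function and variable names are not from the repository, and it assumes that `rates` lists per-stage decoder upsampling factors and that `fsq_levels` follows the usual finite scalar quantization convention, where the number of distinct codes is the product of the levels.

```python
from math import prod

# Values copied from bicodec/BiCodec/config.yaml
sample_rate = 16000
hop_length = 320
decoder_rates = [8, 5, 4, 2]
fsq_levels = [4, 4, 4, 4, 4, 4]


def frames_per_second(sample_rate: int, hop_length: int) -> float:
    """Number of analysis frames produced per second of audio."""
    return sample_rate / hop_length


frame_rate = frames_per_second(sample_rate, hop_length)

# Assumption: each entry of `rates` is an upsampling factor, so their
# product is the total number of samples generated per latent frame.
total_upsampling = prod(decoder_rates)

# Assumption: standard FSQ, one code per combination of per-dimension levels.
fsq_codebook_size = prod(fsq_levels)

print(frame_rate)         # 50.0
print(total_upsampling)   # 320
print(fsq_codebook_size)  # 4096
```

Under these assumptions the decoder's total upsampling factor equals `hop_length`, which would be consistent with the decoder mapping one latent frame back to 320 waveform samples.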

bicodec/BiCodec/model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
+size 625518756
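The three lines added for `model.safetensors` are a Git LFS pointer, not the weights themselves: a spec version, the SHA-256 object ID of the real file, and its size in bytes. As a minimal sketch (the helper name `parse_lfs_pointer` is hypothetical, not part of any library), such a pointer can be parsed like this:

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the space-separated key/value lines of a Git LFS pointer file."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid line has the form "<hash algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer contents copied from the diff above.
pointer = """\
version https://git-lfs.github.com/spec/v1
oid sha256:e9940cd48d4446e4340ced82d234bf5618350dd9f5db900ebe47a4fdb03867ec
size 625518756
"""

info = parse_lfs_pointer(pointer)
print(info["hash_algo"], len(info["digest"]), info["size_bytes"])
```

After downloading the real file, its SHA-256 digest and byte length can be compared against `digest` and `size_bytes` to confirm the download is complete.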

bicodec/README.md

ADDED

@@ -0,0 +1,130 @@
+---
+license: apache-2.0
+language:
+- en
+- zh
+tags:
+- text-to-speech
+library_tag: spark-tts
+---
+
+
+<div align="center">
+<h1>
+Spark-TTS
+</h1>
+<p>
+Official model for <br>
+<b><em>Spark-TTS: An Efficient LLM-Based Text-to-Speech Model with Single-Stream Decoupled Speech Tokens</em></b>
+</p>
+<p>
+<img src="src/logo.webp" alt="Spark-TTS Logo" style="width: 200px; height: 200px;">
+</p>
+<p>
+
+</div>
+
+
+## Spark-TTS 🔥
+
+### 👉🏻 [Spark-TTS Demos](https://sparkaudio.github.io/spark-tts/) 👈🏻
+
+### 👉🏻 [Github Repo](https://github.com/SparkAudio/Spark-TTS) 👈🏻
+
+### Overview
+
+Spark-TTS is an advanced text-to-speech system that uses the power of large language models (LLM) for highly accurate and natural-sounding voice synthesis. It is designed to be efficient, flexible, and powerful for both research and production use.
+
+### Key Features
+
+- **Simplicity and Efficiency**: Built entirely on Qwen2.5, Spark-TTS eliminates the need for additional generation models like flow matching. Instead of relying on separate models to generate acoustic features, it directly reconstructs audio from the code predicted by the LLM. This approach streamlines the process, improving efficiency and reducing complexity.
+- **High-Quality Voice Cloning**: Supports zero-shot voice cloning, which means it can replicate a speaker's voice even without specific training data for that voice. This is ideal for cross-lingual and code-switching scenarios, allowing for seamless transitions between languages and voices without requiring separate training for each one.
+- **Bilingual Support**: Supports both Chinese and English, and is capable of zero-shot voice cloning for cross-lingual and code-switching scenarios, enabling the model to synthesize speech in multiple languages with high naturalness and accuracy.
+- **Controllable Speech Generation**: Supports creating virtual speakers by adjusting parameters such as gender, pitch, and speaking rate.
+
+---
+
+<table align="center">
+<tr>
+<td align="center"><b>Inference Overview of Voice Cloning</b><br><img src="src/figures/infer_voice_cloning.png" width="80%" /></td>
+</tr>
+<tr>
+<td align="center"><b>Inference Overview of Controlled Generation</b><br><img src="src/figures/infer_control.png" width="80%" /></td>
+</tr>
+</table>
+
+
+## Install
+**Clone and Install**
+
+- Clone the repo
+``` sh
+git clone https://github.com/SparkAudio/Spark-TTS.git
+cd Spark-TTS
+```
+
+- Install Conda: please see https://docs.conda.io/en/latest/miniconda.html
+- Create Conda env:
+
+``` sh
+conda create -n sparktts -y python=3.12
+conda activate sparktts
+pip install -r requirements.txt
+# If you are in mainland China, you can set the mirror as follows:
+pip install -r requirements.txt -i https://mirrors.aliyun.com/pypi/simple/ --trusted-host=mirrors.aliyun.com
+```
+
+**Model Download**
+
+Download via python:
+```python
+from huggingface_hub import snapshot_download
+
+snapshot_download("SparkAudio/Spark-TTS-0.5B", local_dir="pretrained_models/Spark-TTS-0.5B")
+```
+
+Download via git clone:
+```sh
+mkdir -p pretrained_models
+
+# Make sure you have git-lfs installed (https://git-lfs.com)
+git lfs install
+
+git clone https://huggingface.co/SparkAudio/Spark-TTS-0.5B pretrained_models/Spark-TTS-0.5B
+```
+
+**Basic Usage**
+
+You can simply run the demo with the following commands:
+``` sh
+cd example
+bash infer.sh
+```
+
+Alternatively, you can directly execute the following command in the command line to perform inference:
+
+``` sh
+python -m cli.inference \
+    --text "text to synthesis." \
+    --device 0 \
+    --save_dir "path/to/save/audio" \
+    --model_dir pretrained_models/Spark-TTS-0.5B \
+    --prompt_text "transcript of the prompt audio" \
+    --prompt_speech_path "path/to/prompt_audio"
+```
+
+**UI Usage**
+
+You can start the UI interface by running `python webui.py`, which allows you to perform Voice Cloning and Voice Creation. Voice Cloning supports uploading reference audio or directly recording the audio.
+
+
+| **Voice Cloning** | **Voice Creation** |
+|:-------------------:|:-------------------:|
+|  |  |
+
+
+## To-Do List
+
+- [ ] Release the Spark-TTS paper.
+- [ ] Release the training code.
+- [ ] Release the training dataset, VoxBox.
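The README's "Basic Usage" section drives inference through `python -m cli.inference` with six flags. When calling it from a script, it can help to build the argument list programmatically rather than by string concatenation. The following is only a sketch: `build_inference_command` is a hypothetical helper, and the flag names are taken from the README text above, not verified against the Spark-TTS source.

```python
import shlex


def build_inference_command(text, save_dir, model_dir, prompt_text,
                            prompt_speech_path, device=0):
    """Assemble an argv list for `python -m cli.inference` using the README's flags."""
    return [
        "python", "-m", "cli.inference",
        "--text", text,
        "--device", str(device),
        "--save_dir", save_dir,
        "--model_dir", model_dir,
        "--prompt_text", prompt_text,
        "--prompt_speech_path", prompt_speech_path,
    ]


cmd = build_inference_command(
    text="text to synthesis.",
    save_dir="path/to/save/audio",
    model_dir="pretrained_models/Spark-TTS-0.5B",
    prompt_text="transcript of the prompt audio",
    prompt_speech_path="path/to/prompt_audio",
)

# shlex.join quotes arguments containing spaces so the line can be pasted into a shell.
print(shlex.join(cmd))
```

Passing `cmd` to `subprocess.run(cmd, check=True)` from the root of a Spark-TTS checkout would then run the same command as the README example, assuming the environment from the install steps is active.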

bicodec/config.yaml

ADDED

@@ -0,0 +1,7 @@
+highpass_cutoff_freq: 40
+sample_rate: 16000
+segment_duration: 2.4 # (s)
+max_val_duration: 12 # (s)
+latent_hop_length: 320
+ref_segment_duration: 6
+volume_normalize: true
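The durations in this config are given in seconds, while the codec operates on samples and latent frames. A small sketch of the conversion follows; the helper names are made up for illustration, and treating `ref_segment_duration` as seconds is an assumption, since that line carries no unit comment in the file.

```python
# Values copied from bicodec/config.yaml
sample_rate = 16000
latent_hop_length = 320
segment_duration = 2.4     # seconds
ref_segment_duration = 6   # assumed to be seconds
max_val_duration = 12      # seconds


def seconds_to_samples(seconds: float, sample_rate: int) -> int:
    """Convert a duration to a whole number of audio samples."""
    return round(seconds * sample_rate)


def seconds_to_latent_frames(seconds: float, sample_rate: int, hop: int) -> int:
    """Number of complete latent frames covered by the duration."""
    return seconds_to_samples(seconds, sample_rate) // hop


segment_samples = seconds_to_samples(segment_duration, sample_rate)
segment_frames = seconds_to_latent_frames(segment_duration, sample_rate, latent_hop_length)
ref_frames = seconds_to_latent_frames(ref_segment_duration, sample_rate, latent_hop_length)
max_val_frames = seconds_to_latent_frames(max_val_duration, sample_rate, latent_hop_length)

print(segment_samples, segment_frames, ref_frames, max_val_frames)
# 38400 120 300 600
```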

bicodec/wav2vec2-large-xlsr-53/README.md

ADDED

|

@@ -0,0 +1,29 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

language: multilingual

|

| 3 |

+

datasets:

|

| 4 |

+

- common_voice

|

| 5 |

+

tags:

|

| 6 |

+

- speech

|

| 7 |

+

license: apache-2.0

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

# Wav2Vec2-XLSR-53

|

| 11 |

+

|

| 12 |

+

[Facebook's XLSR-Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/)

|

| 13 |

+

|

| 14 |

+

The base model pretrained on 16kHz sampled speech audio. When using the model make sure that your speech input is also sampled at 16Khz. Note that this model should be fine-tuned on a downstream task, like Automatic Speech Recognition. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for more information.

|

| 15 |

+

|

| 16 |

+

[Paper](https://arxiv.org/abs/2006.13979)

|

| 17 |

+

|

| 18 |

+

Authors: Alexis Conneau, Alexei Baevski, Ronan Collobert, Abdelrahman Mohamed, Michael Auli

|

| 19 |

+

|

| 20 |

+

**Abstract**

|

| 21 |

+

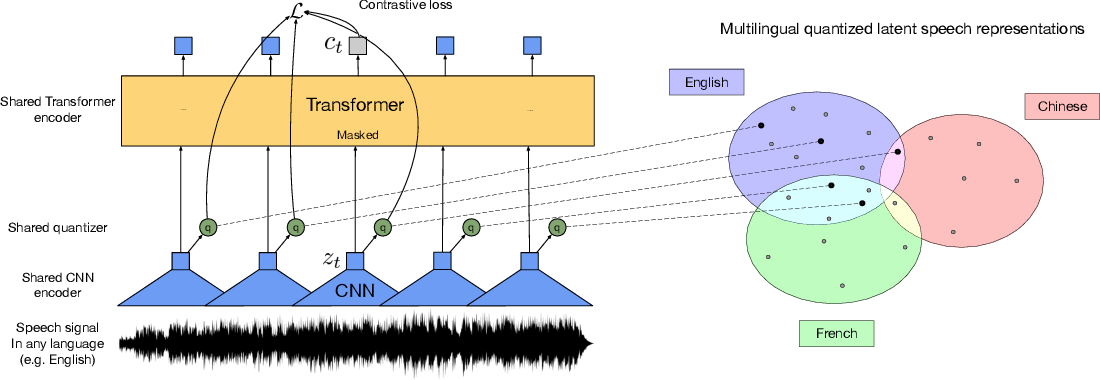

This paper presents XLSR which learns cross-lingual speech representations by pretraining a single model from the raw waveform of speech in multiple languages. We build on wav2vec 2.0 which is trained by solving a contrastive task over masked latent speech representations and jointly learns a quantization of the latents shared across languages. The resulting model is fine-tuned on labeled data and experiments show that cross-lingual pretraining significantly outperforms monolingual pretraining. On the CommonVoice benchmark, XLSR shows a relative phoneme error rate reduction of 72% compared to the best known results. On BABEL, our approach improves word error rate by 16% relative compared to a comparable system. Our approach enables a single multilingual speech recognition model which is competitive to strong individual models. Analysis shows that the latent discrete speech representations are shared across languages with increased sharing for related languages. We hope to catalyze research in low-resource speech understanding by releasing XLSR-53, a large model pretrained in 53 languages.

|

| 22 |

+

|

| 23 |

+

The original model can be found under https://github.com/pytorch/fairseq/tree/master/examples/wav2vec#wav2vec-20.

|

| 24 |

+

|

| 25 |

+

# Usage

|

| 26 |

+

|

| 27 |

+

See [this notebook](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb) for more information on how to fine-tune the model.

|

| 28 |

+

|

| 29 |

+

|

bicodec/wav2vec2-large-xlsr-53/config.json

ADDED

@@ -0,0 +1,83 @@
+{
+  "activation_dropout": 0.0,
+  "apply_spec_augment": true,
+  "architectures": [
+    "Wav2Vec2ForPreTraining"
+  ],
+  "attention_dropout": 0.1,
+  "bos_token_id": 1,
+  "codevector_dim": 768,
+  "contrastive_logits_temperature": 0.1,
+  "conv_bias": true,
+  "conv_dim": [
+    512,
+    512,
+    512,
+    512,
+    512,
+    512,
+    512
+  ],
+  "conv_kernel": [
+    10,
+    3,
+    3,
+    3,
+    3,
+    2,
+    2
+  ],
+  "conv_stride": [
+    5,
+    2,
+    2,
+    2,
+    2,
+    2,
+    2
+  ],
+  "ctc_loss_reduction": "sum",
+  "ctc_zero_infinity": false,
+  "diversity_loss_weight": 0.1,
+  "do_stable_layer_norm": true,
+  "eos_token_id": 2,
+  "feat_extract_activation": "gelu",
+  "feat_extract_dropout": 0.0,
+  "feat_extract_norm": "layer",
+  "feat_proj_dropout": 0.1,
+  "feat_quantizer_dropout": 0.0,
+  "final_dropout": 0.0,
+  "gradient_checkpointing": false,
+  "hidden_act": "gelu",
+  "hidden_dropout": 0.1,
+  "hidden_size": 1024,
+  "initializer_range": 0.02,
+  "intermediate_size": 4096,
+  "layer_norm_eps": 1e-05,
+  "layerdrop": 0.1,
+  "mask_channel_length": 10,
+  "mask_channel_min_space": 1,
+  "mask_channel_other": 0.0,
+  "mask_channel_prob": 0.0,
+  "mask_channel_selection": "static",
+  "mask_feature_length": 10,
+  "mask_feature_prob": 0.0,
+  "mask_time_length": 10,
+  "mask_time_min_space": 1,
+  "mask_time_other": 0.0,
+  "mask_time_prob": 0.075,
+  "mask_time_selection": "static",
+  "model_type": "wav2vec2",
+  "num_attention_heads": 16,
+  "num_codevector_groups": 2,
+  "num_codevectors_per_group": 320,
+  "num_conv_pos_embedding_groups": 16,
+  "num_conv_pos_embeddings": 128,
+  "num_feat_extract_layers": 7,
+  "num_hidden_layers": 24,
+  "num_negatives": 100,
+  "pad_token_id": 0,
+  "proj_codevector_dim": 768,
+  "transformers_version": "4.7.0.dev0",
+  "vocab_size": 32
+}
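The `conv_kernel` and `conv_stride` lists describe the seven-layer convolutional feature extractor, and together they determine how many feature frames the model emits for a given waveform length. The sketch below works this out from the config values alone. The function names are illustrative, and the length formula assumes unpadded 1-D convolutions.

```python
from math import prod

# Values copied from bicodec/wav2vec2-large-xlsr-53/config.json
conv_kernel = [10, 3, 3, 3, 3, 2, 2]
conv_stride = [5, 2, 2, 2, 2, 2, 2]


def total_stride(strides):
    """Input samples advanced per output frame."""
    return prod(strides)


def receptive_field(kernels, strides):
    """Input samples seen by a single output frame."""
    field, jump = 1, 1
    for k, s in zip(kernels, strides):
        field += (k - 1) * jump
        jump *= s
    return field


def num_output_frames(num_samples, kernels, strides):
    """Frames produced for a waveform, assuming no padding in any layer."""
    length = num_samples
    for k, s in zip(kernels, strides):
        length = (length - k) // s + 1
    return length


print(total_stride(conv_stride))                         # 320
print(receptive_field(conv_kernel, conv_stride))         # 400
print(num_output_frames(16000, conv_kernel, conv_stride))  # 49
```

At the 16 kHz sampling rate from `preprocessor_config.json`, a stride of 320 samples corresponds to one frame every 20 ms, the same hop as `hop_length` and `latent_hop_length` in the BiCodec configs.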

bicodec/wav2vec2-large-xlsr-53/preprocessor_config.json

ADDED

@@ -0,0 +1,9 @@
+{
+  "do_normalize": true,
+  "feature_extractor_type": "Wav2Vec2FeatureExtractor",
+  "feature_size": 1,
+  "padding_side": "right",
+  "padding_value": 0,
+  "return_attention_mask": true,
+  "sampling_rate": 16000
+}

bicodec/wav2vec2-large-xlsr-53/pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:314340227371a608f71adcd5f0de5933824fe77e55822aa4b24dba9c1c364dcb
+size 1269737156

config.json

ADDED

@@ -0,0 +1,29 @@
+{
+  "_name_or_path": "/aifs4su/sitong/model/Qwen2.5-1.5B-Instruct",
+  "architectures": [
+    "Qwen2ForCausalLM"
+  ],
+  "attention_dropout": 0.0,
+  "bos_token_id": 151643,
+  "eos_token_id": 151645,
+  "hidden_act": "silu",
+  "hidden_size": 1536,
+  "initializer_range": 0.02,
+  "intermediate_size": 8960,
+  "max_position_embeddings": 32768,
+  "max_window_layers": 21,
+  "model_type": "qwen2",
+  "num_attention_heads": 12,
+  "num_hidden_layers": 28,
+  "num_key_value_heads": 2,
+  "rms_norm_eps": 1e-06,
+  "rope_scaling": null,
+  "rope_theta": 1000000.0,
+  "sliding_window": null,
+  "tie_word_embeddings": true,
+  "torch_dtype": "bfloat16",
+  "transformers_version": "4.48.2",
+  "use_cache": true,
+  "use_sliding_window": false,
+  "vocab_size": 180407
+}
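Several attention and embedding dimensions follow directly from this config. The sketch below derives them. The variable names are illustrative, and the head dimension is assumed to be `hidden_size // num_attention_heads`, the usual convention when a config gives no explicit head size.

```python
# Values copied from config.json
config = {
    "hidden_size": 1536,
    "num_attention_heads": 12,
    "num_key_value_heads": 2,
    "vocab_size": 180407,
}

# Per-head width, assuming the hidden size is split evenly across query heads.
head_dim = config["hidden_size"] // config["num_attention_heads"]

# Grouped-query attention: how many query heads share each key/value head.
queries_per_kv_head = config["num_attention_heads"] // config["num_key_value_heads"]

# Width of the key (or value) projection output.
kv_proj_width = config["num_key_value_heads"] * head_dim

# Embedding table size; with tie_word_embeddings=true the output layer reuses it.
embedding_params = config["vocab_size"] * config["hidden_size"]

print(head_dim, queries_per_kv_head, kv_proj_width, embedding_params)
# 128 6 256 277105152
```

With only 2 key/value heads, the KV cache per token is one sixth of what 12 full key/value heads would need, which matters for the long token sequences that speech generation tends to produce.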

generation_config.json

ADDED

@@ -0,0 +1,14 @@
+{
+  "bos_token_id": 151643,
+  "do_sample": true,
+  "eos_token_id": [
+    151645,
+    151643
+  ],
+  "pad_token_id": 151643,
+  "repetition_penalty": 1.1,
+  "temperature": 0.7,
+  "top_k": 20,
+  "top_p": 0.8,
+  "transformers_version": "4.48.2"
+}
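With `do_sample` enabled, `temperature`, `top_k`, and `top_p` jointly restrict which tokens can be drawn at each step. The following is a simplified, pure-Python sketch of that filtering, not the Transformers implementation: `filter_logits` is a hypothetical helper, `repetition_penalty` is omitted, and the order (temperature, then top-k, then top-p) is an assumption about how the steps compose.

```python
import math


def filter_logits(logits, temperature=0.7, top_k=20, top_p=0.8):
    """Return {token_id: probability} after temperature, top-k, and top-p filtering."""
    # Temperature below 1 sharpens the distribution.
    scaled = [x / temperature for x in logits]

    # Top-k: keep the k highest-scoring token ids, best first.
    order = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)[:top_k]

    # Softmax over the survivors (shifted by the max for numerical stability).
    peak = scaled[order[0]]
    exps = {i: math.exp(scaled[i] - peak) for i in order}
    total = sum(exps.values())
    probs = {i: e / total for i, e in exps.items()}

    # Top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break

    # Renormalise so the kept probabilities sum to 1.
    kept_total = sum(probs[i] for i in kept)
    return {i: probs[i] / kept_total for i in kept}


example = filter_logits([2.0, 1.0, 0.0, -1.0], temperature=1.0, top_k=3, top_p=0.8)
print(sorted(example))  # [0, 1]
```

In the example, top-k drops the lowest-scoring token, and top-p then drops the third, because the first two already cover more than 80% of the probability mass.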

glm4_tokenizer/.gitattributes

ADDED

@@ -0,0 +1,35 @@
+*.7z filter=lfs diff=lfs merge=lfs -text
+*.arrow filter=lfs diff=lfs merge=lfs -text
+*.bin filter=lfs diff=lfs merge=lfs -text
+*.bz2 filter=lfs diff=lfs merge=lfs -text
+*.ckpt filter=lfs diff=lfs merge=lfs -text
+*.ftz filter=lfs diff=lfs merge=lfs -text
+*.gz filter=lfs diff=lfs merge=lfs -text
+*.h5 filter=lfs diff=lfs merge=lfs -text
+*.joblib filter=lfs diff=lfs merge=lfs -text
+*.lfs.* filter=lfs diff=lfs merge=lfs -text
+*.mlmodel filter=lfs diff=lfs merge=lfs -text
+*.model filter=lfs diff=lfs merge=lfs -text
+*.msgpack filter=lfs diff=lfs merge=lfs -text
+*.npy filter=lfs diff=lfs merge=lfs -text
+*.npz filter=lfs diff=lfs merge=lfs -text
+*.onnx filter=lfs diff=lfs merge=lfs -text
+*.ot filter=lfs diff=lfs merge=lfs -text
+*.parquet filter=lfs diff=lfs merge=lfs -text
+*.pb filter=lfs diff=lfs merge=lfs -text
+*.pickle filter=lfs diff=lfs merge=lfs -text
+*.pkl filter=lfs diff=lfs merge=lfs -text
+*.pt filter=lfs diff=lfs merge=lfs -text
+*.pth filter=lfs diff=lfs merge=lfs -text
+*.rar filter=lfs diff=lfs merge=lfs -text
+*.safetensors filter=lfs diff=lfs merge=lfs -text
+saved_model/**/* filter=lfs diff=lfs merge=lfs -text
+*.tar.* filter=lfs diff=lfs merge=lfs -text
+*.tar filter=lfs diff=lfs merge=lfs -text
+*.tflite filter=lfs diff=lfs merge=lfs -text
+*.tgz filter=lfs diff=lfs merge=lfs -text
+*.wasm filter=lfs diff=lfs merge=lfs -text
+*.xz filter=lfs diff=lfs merge=lfs -text
+*.zip filter=lfs diff=lfs merge=lfs -text
+*.zst filter=lfs diff=lfs merge=lfs -text
+*tfevents* filter=lfs diff=lfs merge=lfs -text

glm4_tokenizer/LICENSE

ADDED

@@ -0,0 +1,70 @@
+The glm-4-voice License
+
+1. 定义
+
+“许可方”是指分发其软件的 glm-4-voice 模型团队。
+“软件”是指根据本许可提供的 glm-4-voice 模型参数。
+
+2. 许可授予
+
+根据本许可的条款和条件,许可方特此授予您非排他性、全球性、不可转让、不可再许可、可撤销、免版税的版权许可。
+本许可允许您免费使用本仓库中的所有开源模型进行学术研究,对于希望将模型用于商业目的的用户,需在[这里](https://open.bigmodel.cn/mla/form)完成登记。经过登记的用户可以免费使用本模型进行商业活动,但必须遵守本许可的所有条款和条件。
+上述版权声明和本许可声明应包含在本软件的所有副本或重要部分中。
+如果您分发或提供 THUDM / 智谱AI 关于 glm-4 开源模型的材料(或其任何衍生作品),或使用其中任何材料(包括 glm-4 系列的所有开源模型)的产品或服务,您应:
+
+(A) 随任何此类 THUDM / 智谱AI 材料提供本协议的副本;
+(B) 在相关网站、用户界面、博客文章、关于页面或产品文档上突出显示 “Built with glm-4”。
+如果您使用 THUDM / 智谱AI的 glm-4 开源模型的材料来创建、训练、微调或以其他方式改进已分发或可用的 AI 模型,您还应在任何此类 AI 模型名称的开头添加 “glm-4”。
+
+3. 限制
+
+您不得出于任何军事或非法目的使用、复制、修改、合并、发布、分发、复制或创建本软件的全部或部分衍生作品。
+您不得利用本软件从事任何危害国家安全和国家统一,危害社会公共利益及公序良俗,侵犯他人商业秘密、知识产权、名誉权、肖像权、财产权等权益的行为。
+您在使用中应遵循使用地所适用的法律法规政策、道德规范等要求。
+
+4. 免责声明
+
+本软件“按原样”提供,不提供任何明示或暗示的保证,包括但不限于对适销性、特定用途的适用性和非侵权性的保证。
+在任何情况下,作者或版权持有人均不对任何索赔、损害或其他责任负责,无论是在合同诉讼、侵权行为还是其他方面,由软件或软件的使用或其他交易引起、由软件引起或与之相关
+软件。
+
+5. 责任限制
+
+除适用法律禁止的范围外,在任何情况下且根据任何法律理论,无论是基于侵权行为、疏忽、合同、责任或其他原因,任何许可方均不对您承担任何直接、间接、特殊、偶然、示范性、
+或间接损害,或任何其他商业损失,即使许可人已被告知此类损害的可能性。
+
+6. 争议解决
+
+本许可受中华人民共和国法律管辖并按其解释。 因本许可引起的或与本许可有关的任何争议应提交北京市海淀区人民法院。
+请注意,许可证可能会更新到更全面的版本。 有关许可和版权的任何问题,请通过 license@zhipuai.cn 与我们联系。
+1. Definitions
+“Licensor” means the glm-4-voice Model Team that distributes its Software.
+“Software” means the glm-4-voice model parameters made available under this license.
+2. License
+Under the terms and conditions of this license, the Licensor hereby grants you a non-exclusive, worldwide, non-transferable, non-sublicensable, revocable, royalty-free copyright license.
+This license allows you to use all open source models in this repository for free for academic research. For users who wish to use the models for commercial purposes, please do so [here](https://open.bigmodel.cn/mla/form)
+Complete registration. Registered users are free to use this model for commercial activities, but must comply with all terms and conditions of this license.
+The copyright notice and this license notice shall be included in all copies or substantial portions of the Software.
+If you distribute or provide THUDM / Zhipu AI materials on the glm-4 open source model (or any derivative works thereof), or products or services that use any materials therein (including all open source models of the glm-4 series), you should:
+(A) Provide a copy of this Agreement with any such THUDM/Zhipu AI Materials;
+(B) Prominently display "Built with glm-4" on the relevant website, user interface, blog post, related page or product documentation.
+If you use materials from THUDM/Zhipu AI's glm-4 model to create, train, operate, or otherwise improve assigned or available AI models, you should also add "glm-4" to the beginning of any such AI model name.
+3. Restrictions
+You are not allowed to use, copy, modify, merge, publish, distribute, copy or create all or part of the derivative works of this software for any military or illegal purposes.
+You are not allowed to use this software to engage in any behavior that endangers national security and unity, endangers social public interests and public order, infringes on the rights and interests of others such as trade secrets, intellectual property rights, reputation rights, portrait rights, and property rights.
+You should comply with the applicable laws, regulations, policies, ethical standards, and other requirements in the place of use during use.
+4. Disclaimer
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE
+WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR
+COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR
+OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.
+5. Limitation of Liability
+EXCEPT TO THE EXTENT PROHIBITED BY APPLICABLE LAW, IN NO EVENT AND UNDER NO LEGAL THEORY, WHETHER BASED IN TORT,
+NEGLIGENCE, CONTRACT, LIABILITY, OR OTHERWISE WILL ANY LICENSOR BE LIABLE TO YOU FOR ANY DIRECT, INDIRECT, SPECIAL,
+INCIDENTAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES, OR ANY OTHER COMMERCIAL LOSSES, EVEN IF THE LICENSOR HAS BEEN ADVISED
+OF THE POSSIBILITY OF SUCH DAMAGES.
+6. Dispute Resolution
+This license shall be governed and construed in accordance with the laws of People’s Republic of China. Any dispute
+arising from or in connection with this License shall be submitted to Haidian District People's Court in Beijing.
+Note that the license is subject to update to a more comprehensive version. For any questions related to the license and
+copyright, please contact us at license@zhipuai.cn.

glm4_tokenizer/README.md

ADDED

|

@@ -0,0 +1,13 @@

| 1 |

+

# GLM-4-Voice-Tokenizer

|

| 2 |

+

|

| 3 |

+

GLM-4-Voice 是智谱 AI 推出的端到端语音模型。GLM-4-Voice 能够直接理解和生成中英文语音,进行实时语音对话,并且能够根据用户的指令改变语音的情感、语调、语速、方言等属性。

|

| 4 |

+

|

| 5 |

+

GLM-4-Voice is an end-to-end voice model launched by Zhipu AI. GLM-4-Voice can directly understand and generate Chinese and English speech, engage in real-time voice conversations, and change attributes such as emotion, intonation, speech rate, and dialect based on user instructions.

|

| 6 |

+

|

| 7 |

+

本仓库是 GLM-4-Voice 的 speech tokenizer 部分。通过在 [Whisper](https://github.com/openai/whisper) 的 encoder 部分增加 vector quantization 进行训练,将连续的语音输入转化为离散的 token。每秒音频转化为 12.5 个离散 token。

|

| 8 |

+

|

| 9 |

+

This repo provides the speech tokenizer of GLM-4-Voice, which is trained by adding vector quantization to the encoder part of [Whisper](https://github.com/openai/whisper) and converts continuous speech input into discrete tokens. Each second of audio is converted into 12.5 discrete tokens.

|

| 10 |

+

|

| 11 |

+

更多信息请参考我们的仓库 [GLM-4-Voice](https://github.com/THUDM/GLM-4-Voice).

|

| 12 |

+

|

| 13 |

+

For more information please refer to our repo [GLM-4-Voice](https://github.com/THUDM/GLM-4-Voice).
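The token rate quoted in the README can be turned into a quick length estimate. The sketch below is illustrative arithmetic only: the helper name `estimated_token_count` is hypothetical, and the result is left unrounded because the README does not say how a partial frame at the end of a clip is handled.

```python
# Rate stated in the README: 12.5 discrete tokens per second of audio.
TOKENS_PER_SECOND = 12.5


def estimated_token_count(duration_seconds: float) -> float:
    """Approximate number of discrete speech tokens for a clip.

    Hypothetical helper for illustration; the real tokenizer may round
    or pad the final frame differently.
    """
    if duration_seconds < 0:
        raise ValueError("duration_seconds must be non-negative")
    return duration_seconds * TOKENS_PER_SECOND


for seconds in (1.0, 4.0, 30.0):
    print(f"{seconds:>5.1f} s -> about {estimated_token_count(seconds)} tokens")
```

At this rate a 30-second clip maps to roughly 375 tokens, which is short enough to fit comfortably in a language-model context.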

|

glm4_tokenizer/config.json

ADDED

|

@@ -0,0 +1,65 @@

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "THUDM/glm-4-voice-tokenizer",

|

| 3 |

+

"activation_dropout": 0.0,

|

| 4 |

+

"activation_function": "gelu",

|

| 5 |

+

"apply_spec_augment": false,

|

| 6 |

+

"architectures": [

|

| 7 |

+

"WhisperVQEncoder"

|

| 8 |

+

],

|

| 9 |

+

"attention_dropout": 0.0,

|

| 10 |

+

"begin_suppress_tokens": [

|

| 11 |

+

220,

|

| 12 |

+

50257

|

| 13 |

+

],

|

| 14 |

+

"bos_token_id": 50257,

|

| 15 |

+

"classifier_proj_size": 256,

|

| 16 |

+

"d_model": 1280,

|

| 17 |

+

"decoder_attention_heads": 20,

|

| 18 |

+

"decoder_ffn_dim": 5120,

|

| 19 |

+

"decoder_layerdrop": 0.0,

|

| 20 |

+

"decoder_layers": 32,

|

| 21 |

+

"decoder_start_token_id": 50258,

|

| 22 |

+

"dropout": 0.0,

|

| 23 |

+

"encoder_attention_heads": 20,

|

| 24 |

+

"encoder_causal_attention": false,

|

| 25 |

+

"encoder_causal_convolution": true,

|

| 26 |

+

"encoder_ffn_dim": 5120,

|

| 27 |

+

"encoder_layerdrop": 0.0,

|

| 28 |

+

"encoder_layers": 32,

|

| 29 |

+

"eos_token_id": 50257,

|

| 30 |

+

"init_std": 0.02,

|

| 31 |

+

"is_encoder_decoder": true,

|

| 32 |

+

"mask_feature_length": 10,

|

| 33 |

+

"mask_feature_min_masks": 0,

|

| 34 |

+

"mask_feature_prob": 0.0,

|

| 35 |

+

"mask_time_length": 10,

|

| 36 |

+

"mask_time_min_masks": 2,

|

| 37 |

+

"mask_time_prob": 0.05,

|

| 38 |

+

"max_length": 448,

|

| 39 |

+

"max_source_positions": 1500,

|

| 40 |

+

"max_target_positions": 448,

|

| 41 |

+

"median_filter_width": 7,

|

| 42 |

+

"model_type": "whisper",

|

| 43 |

+

"num_hidden_layers": 32,

|

| 44 |

+

"num_mel_bins": 128,

|

| 45 |

+

"pad_token_id": 50256,

|

| 46 |

+

"pooling_kernel_size": 4,

|

| 47 |

+

"pooling_position": 16,

|

| 48 |

+

"pooling_type": "avg",

|

| 49 |

+

"quantize_causal_block_size": 200,

|

| 50 |

+

"quantize_causal_encoder": false,

|

| 51 |

+

"quantize_commit_coefficient": 0.25,

|

| 52 |

+

"quantize_ema_decay": 0.99,

|

| 53 |

+

"quantize_encoder_only": true,

|

| 54 |

+

"quantize_loss_scale": 10.0,

|

| 55 |

+

"quantize_position": 16,

|

| 56 |

+

"quantize_restart_interval": 100,

|

| 57 |

+

"quantize_vocab_size": 16384,

|

| 58 |

+

"scale_embedding": false,

|

| 59 |

+

"skip_language_detection": true,

|

| 60 |

+

"torch_dtype": "float32",

|

| 61 |

+

"transformers_version": "4.44.1",

|

| 62 |

+

"use_cache": true,

|

| 63 |

+

"use_weighted_layer_sum": false,

|

| 64 |

+

"vocab_size": 51866

|

| 65 |

+

}
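A few quantities can be derived from the values in this config. The sketch below copies the numbers from the diff above. It assumes that `pooling_kernel_size` acts as a temporal downsampling factor and that `max_source_positions` spans the 30-second window given by `chunk_length` in `preprocessor_config.json`. Both are interpretations, not something the config itself states.

```python
import math

# Values copied from glm4_tokenizer/config.json.
config = {
    "d_model": 1280,
    "encoder_attention_heads": 20,
    "encoder_ffn_dim": 5120,
    "max_source_positions": 1500,
    "pooling_kernel_size": 4,
    "quantize_vocab_size": 16384,
}

# Assumption: "chunk_length": 30 from preprocessor_config.json applies here.
CHUNK_SECONDS = 30

# Per-head width and feed-forward expansion of the encoder.
head_dim = config["d_model"] // config["encoder_attention_heads"]
ffn_ratio = config["encoder_ffn_dim"] / config["d_model"]

# Encoder positions per second, then tokens per second after pooling
# (assuming pooling divides the frame rate by the kernel size).
encoder_frames_per_second = config["max_source_positions"] / CHUNK_SECONDS
tokens_per_second = encoder_frames_per_second / config["pooling_kernel_size"]

# Information carried by one token drawn from the quantizer codebook.
bits_per_token = math.log2(config["quantize_vocab_size"])

print("head_dim:", head_dim)
print("ffn_ratio:", ffn_ratio)
print("tokens_per_second:", tokens_per_second)
print("bits_per_token:", bits_per_token)
print("upper-bound bitrate (bits/s):", tokens_per_second * bits_per_token)
```

Under these assumptions the derived rate comes out to 12.5 tokens per second, which agrees with the figure quoted in the tokenizer README.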

|

glm4_tokenizer/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2800bd503f52b51e45f0c53cfd5c31dcfe8ef7f13d22b396aa3d53e0280dd1e4

|

| 3 |

+

size 1458374480
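The three lines above are a Git LFS pointer, not the weights themselves: the repository stores only the spec version, the content hash, and the byte size. As a minimal sketch, the hypothetical helper `parse_lfs_pointer` below splits such a pointer into fields, assuming the simple `key value` line layout shown in this diff.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS pointer file into its fields.

    Hypothetical helper for illustration; it assumes one "key value"
    pair per line, as in the pointer shown above.
    """
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer content copied from glm4_tokenizer/model.safetensors.
pointer_text = """version https://git-lfs.github.com/spec/v1
oid sha256:2800bd503f52b51e45f0c53cfd5c31dcfe8ef7f13d22b396aa3d53e0280dd1e4
size 1458374480
"""

pointer = parse_lfs_pointer(pointer_text)
print(pointer["hash_algorithm"], pointer["digest"][:12])
print(f"{pointer['size_bytes'] / 2**30:.2f} GiB")
```

After downloading the real file, its SHA-256 digest and byte size can be compared against these fields to confirm the download is intact.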

|

glm4_tokenizer/preprocessor_config.json

ADDED

|

@@ -0,0 +1,14 @@

| 1 |

+

{

|

| 2 |

+

"chunk_length": 30,

|

| 3 |

+

"feature_extractor_type": "WhisperFeatureExtractor",

|

| 4 |

+

"feature_size": 128,

|

| 5 |

+

"hop_length": 160,

|

| 6 |

+

"n_fft": 400,

|

| 7 |

+

"n_samples": 480000,

|

| 8 |

+

"nb_max_frames": 3000,

|

| 9 |

+

"padding_side": "right",

|

| 10 |

+

"padding_value": 0.0,

|

| 11 |

+

"processor_class": "WhisperProcessor",

|

| 12 |

+

"return_attention_mask": false,

|

| 13 |

+

"sampling_rate": 16000

|

| 14 |

+

}
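Several fields in this preprocessor config are redundant with one another, which makes a quick consistency check possible. The sketch below copies the values from the diff above and recomputes the derived ones. The relationships it checks (samples = seconds × rate, frames = samples ÷ hop) are standard for short-time feature extraction, but treating them as what the feature extractor enforces is an assumption.

```python
# Values copied from glm4_tokenizer/preprocessor_config.json.
preprocessor = {
    "chunk_length": 30,       # seconds per window
    "feature_size": 128,      # mel bins; matches "num_mel_bins" in config.json
    "hop_length": 160,        # samples between successive frames
    "n_fft": 400,             # samples per analysis window
    "n_samples": 480000,
    "nb_max_frames": 3000,
    "sampling_rate": 16000,   # Hz
}

# Samples in one window: seconds times samples per second.
expected_samples = preprocessor["chunk_length"] * preprocessor["sampling_rate"]

# Frames in one window: one frame every hop_length samples.
expected_frames = preprocessor["n_samples"] // preprocessor["hop_length"]

# Window and hop durations in milliseconds.
window_ms = 1000 * preprocessor["n_fft"] / preprocessor["sampling_rate"]
hop_ms = 1000 * preprocessor["hop_length"] / preprocessor["sampling_rate"]

print("samples per chunk:", expected_samples)
print("frames per chunk:", expected_frames)
print("window (ms):", window_ms, "hop (ms):", hop_ms)
```

The recomputed values agree with the stored `n_samples` and `nb_max_frames`, so the config describes 25 ms analysis windows taken every 10 ms over a 30-second chunk of 16 kHz audio.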

|

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:847957fa5db2a201b319207d01e7a6c2cea4bed4682abb15974fbbf24864c4d7

|

| 3 |

+

size 3729140584

|

tokenizer.json

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5e7459aa6bb881763af654582e228016490528087256916c3629751ded708489

|

| 3 |

+

size 17156419

|

tokenizer_config.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|