root committed on

Commit fd0683b · Parent(s): ff3fa65

Add facefusion directory as regular files

This view is limited to 50 files because it contains too many changes.

- facefusion +0 -1

- facefusion/.editorconfig +8 -0

- facefusion/.flake8 +3 -0

- facefusion/.github/FUNDING.yml +2 -0

- facefusion/.github/workflows/ci.yml +35 -0

- facefusion/.gitignore +3 -0

- facefusion/.install/LICENSE.md +3 -0

- facefusion/.install/facefusion.ico +0 -0

- facefusion/.install/facefusion.nsi +183 -0

- facefusion/LICENSE.md +3 -0

- facefusion/README.md +113 -0

- facefusion/core.py +443 -0

- facefusion/facefusion.ini +74 -0

- facefusion/facefusion/__init__.py +0 -0

- facefusion/facefusion/__pycache__/__init__.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/audio.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/choices.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/common_helper.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/config.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/content_analyser.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/core.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/download.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/execution.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/face_analyser.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/face_helper.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/face_masker.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/face_store.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/ffmpeg.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/filesystem.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/globals.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/logger.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/memory.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/metadata.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/my_typing.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/normalizer.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/process_manager.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/statistics.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/thread_helper.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/typing.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/vision.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/voice_extractor.cpython-310.pyc +0 -0

- facefusion/facefusion/__pycache__/wording.cpython-310.pyc +0 -0

- facefusion/facefusion/audio.py +137 -0

- facefusion/facefusion/choices.py +37 -0

- facefusion/facefusion/common_helper.py +46 -0

- facefusion/facefusion/config.py +91 -0

- facefusion/facefusion/content_analyser.py +112 -0

- facefusion/facefusion/download.py +48 -0

- facefusion/facefusion/execution.py +112 -0

- facefusion/facefusion/face_analyser.py +586 -0

facefusion

DELETED

@@ -1 +0,0 @@
-Subproject commit 126845c24c5c60699311d1f822de1a056d83b3bd

facefusion/.editorconfig

ADDED

@@ -0,0 +1,8 @@
+root = true
+
+[*]
+end_of_line = lf
+insert_final_newline = true
+indent_size = 4
+indent_style = tab
+trim_trailing_whitespace = true

facefusion/.flake8

ADDED

@@ -0,0 +1,3 @@
+[flake8]
+select = E3, E4, F
+per-file-ignores = facefusion/core.py:E402

facefusion/.github/FUNDING.yml

ADDED

@@ -0,0 +1,2 @@
+github: henryruhs
+custom: [ buymeacoffee.com/henryruhs, paypal.me/henryruhs ]

facefusion/.github/workflows/ci.yml

ADDED

@@ -0,0 +1,35 @@
+name: ci
+
+on: [ push, pull_request ]
+
+jobs:
+  lint:
+    runs-on: ubuntu-latest
+    steps:
+    - name: Checkout
+      uses: actions/checkout@v4
+    - name: Set up Python 3.10
+      uses: actions/setup-python@v5
+      with:
+        python-version: '3.10'
+    - run: pip install flake8
+    - run: pip install mypy
+    - run: flake8 run.py facefusion tests
+    - run: mypy run.py facefusion tests
+  test:
+    strategy:
+      matrix:
+        os: [ macos-13, ubuntu-latest, windows-latest ]
+    runs-on: ${{ matrix.os }}
+    steps:
+    - name: Checkout
+      uses: actions/checkout@v4
+    - name: Set up FFMpeg
+      uses: FedericoCarboni/setup-ffmpeg@v3
+    - name: Set up Python 3.10
+      uses: actions/setup-python@v5
+      with:
+        python-version: '3.10'
+    - run: python install.py --onnxruntime default --skip-conda
+    - run: pip install pytest
+    - run: pytest

facefusion/.gitignore

ADDED

@@ -0,0 +1,3 @@
+.assets
+.idea
+.vscode

facefusion/.install/LICENSE.md

ADDED

@@ -0,0 +1,3 @@
+CC-BY-4.0 license
+
+Copyright (c) 2024 Henry Ruhs

facefusion/.install/facefusion.ico

ADDED


facefusion/.install/facefusion.nsi

ADDED

@@ -0,0 +1,183 @@
+!include MUI2.nsh
+!include nsDialogs.nsh
+!include LogicLib.nsh
+
+RequestExecutionLevel admin
+ManifestDPIAware true
+
+Name 'FaceFusion 2.6.0'
+OutFile 'FaceFusion_2.6.0.exe'
+
+!define MUI_ICON 'facefusion.ico'
+
+!insertmacro MUI_PAGE_DIRECTORY
+Page custom InstallPage PostInstallPage
+!insertmacro MUI_PAGE_INSTFILES
+!insertmacro MUI_LANGUAGE English
+
+Var UseDefault
+Var UseCuda
+Var UseDirectMl
+Var UseOpenVino
+
+Function .onInit
+	StrCpy $INSTDIR 'C:\FaceFusion'
+FunctionEnd
+
+Function InstallPage
+	nsDialogs::Create 1018
+	!insertmacro MUI_HEADER_TEXT 'Choose Your Accelerator' 'Choose your accelerator based on the graphics card.'
+
+	${NSD_CreateRadioButton} 0 40u 100% 10u 'Default'
+	Pop $UseDefault
+
+	${NSD_CreateRadioButton} 0 55u 100% 10u 'CUDA (NVIDIA)'
+	Pop $UseCuda
+
+	${NSD_CreateRadioButton} 0 70u 100% 10u 'DirectML (AMD, Intel, NVIDIA)'
+	Pop $UseDirectMl
+
+	${NSD_CreateRadioButton} 0 85u 100% 10u 'OpenVINO (Intel)'
+	Pop $UseOpenVino
+
+	${NSD_Check} $UseDefault
+
+	nsDialogs::Show
+FunctionEnd
+
+Function PostInstallPage
+	${NSD_GetState} $UseDefault $UseDefault
+	${NSD_GetState} $UseCuda $UseCuda
+	${NSD_GetState} $UseDirectMl $UseDirectMl
+	${NSD_GetState} $UseOpenVino $UseOpenVino
+FunctionEnd
+
+Function Destroy
+	${If} ${Silent}
+		Quit
+	${Else}
+		Abort
+	${EndIf}
+FunctionEnd
+
+Section 'Prepare Your Platform'
+	DetailPrint 'Install GIT'
+	inetc::get 'https://github.com/git-for-windows/git/releases/download/v2.45.1.windows.1/Git-2.45.1-64-bit.exe' '$TEMP\Git.exe'
+	ExecWait '$TEMP\Git.exe /CURRENTUSER /VERYSILENT /DIR=$LOCALAPPDATA\Programs\Git' $0
+	Delete '$TEMP\Git.exe'
+
+	${If} $0 > 0
+		DetailPrint 'Git installation aborted with error code $0'
+		Call Destroy
+	${EndIf}
+
+	DetailPrint 'Uninstall Conda'
+	ExecWait '$LOCALAPPDATA\Programs\Miniconda3\Uninstall-Miniconda3.exe /S _?=$LOCALAPPDATA\Programs\Miniconda3'
+	RMDir /r '$LOCALAPPDATA\Programs\Miniconda3'
+
+	DetailPrint 'Install Conda'
+	inetc::get 'https://repo.anaconda.com/miniconda/Miniconda3-py310_24.3.0-0-Windows-x86_64.exe' '$TEMP\Miniconda3.exe'
+	ExecWait '$TEMP\Miniconda3.exe /InstallationType=JustMe /AddToPath=1 /S /D=$LOCALAPPDATA\Programs\Miniconda3' $1
+	Delete '$TEMP\Miniconda3.exe'
+
+	${If} $1 > 0
+		DetailPrint 'Conda installation aborted with error code $1'
+		Call Destroy
+	${EndIf}
+SectionEnd
+
+Section 'Download Your Copy'
+	SetOutPath $INSTDIR
+
+	DetailPrint 'Download Your Copy'
+	RMDir /r $INSTDIR
+	nsExec::Exec '$LOCALAPPDATA\Programs\Git\cmd\git.exe clone https://github.com/facefusion/facefusion --branch 2.6.0 .'
+SectionEnd
+
+Section 'Setup Your Environment'
+	DetailPrint 'Setup Your Environment'
+	nsExec::Exec '$LOCALAPPDATA\Programs\Miniconda3\Scripts\conda.exe init --all'
+	nsExec::Exec '$LOCALAPPDATA\Programs\Miniconda3\Scripts\conda.exe create --name facefusion python=3.10 --yes'
+SectionEnd
+
+Section 'Create Install Batch'
+	SetOutPath $INSTDIR
+
+	FileOpen $0 install-ffmpeg.bat w
+	FileOpen $1 install-accelerator.bat w
+	FileOpen $2 install-application.bat w
+
+	FileWrite $0 '@echo off && conda activate facefusion && conda install conda-forge::ffmpeg=7.0.0 --yes'
+	${If} $UseCuda == 1
+		FileWrite $1 '@echo off && conda activate facefusion && conda install cudatoolkit=11.8 cudnn=8.9.2.26 conda-forge::gputil=1.4.0 conda-forge::zlib-wapi --yes'
+		FileWrite $2 '@echo off && conda activate facefusion && python install.py --onnxruntime cuda-11.8'
+	${ElseIf} $UseDirectMl == 1
+		FileWrite $2 '@echo off && conda activate facefusion && python install.py --onnxruntime directml'
+	${ElseIf} $UseOpenVino == 1
+		FileWrite $1 '@echo off && conda activate facefusion && conda install conda-forge::openvino=2023.1.0 --yes'
+		FileWrite $2 '@echo off && conda activate facefusion && python install.py --onnxruntime openvino'
+	${Else}
+		FileWrite $2 '@echo off && conda activate facefusion && python install.py --onnxruntime default'
+	${EndIf}
+
+	FileClose $0
+	FileClose $1
+	FileClose $2
+SectionEnd
+
+Section 'Install Your FFmpeg'
+	SetOutPath $INSTDIR
+
+	DetailPrint 'Install Your FFmpeg'
+	nsExec::ExecToLog 'install-ffmpeg.bat'
+SectionEnd
+
+Section 'Install Your Accelerator'
+	SetOutPath $INSTDIR
+
+	DetailPrint 'Install Your Accelerator'
+	nsExec::ExecToLog 'install-accelerator.bat'
+SectionEnd
+
+Section 'Install The Application'
+	SetOutPath $INSTDIR
+
+	DetailPrint 'Install The Application'
+	nsExec::ExecToLog 'install-application.bat'
+SectionEnd
+
+Section 'Create Run Batch'
+	SetOutPath $INSTDIR
+	FileOpen $0 run.bat w
+	FileWrite $0 '@echo off && conda activate facefusion && python run.py %*'
+	FileClose $0
+SectionEnd
+
+Section 'Register The Application'
+	DetailPrint 'Register The Application'
+
+	CreateDirectory $SMPROGRAMS\FaceFusion
+	CreateShortcut '$SMPROGRAMS\FaceFusion\FaceFusion.lnk' $INSTDIR\run.bat '--open-browser' $INSTDIR\.install\facefusion.ico
+	CreateShortcut '$SMPROGRAMS\FaceFusion\FaceFusion Benchmark.lnk' $INSTDIR\run.bat '--ui-layouts benchmark --open-browser' $INSTDIR\.install\facefusion.ico
+	CreateShortcut '$SMPROGRAMS\FaceFusion\FaceFusion Webcam.lnk' $INSTDIR\run.bat '--ui-layouts webcam --open-browser' $INSTDIR\.install\facefusion.ico
+
+	CreateShortcut $DESKTOP\FaceFusion.lnk $INSTDIR\run.bat '--open-browser' $INSTDIR\.install\facefusion.ico
+
+	WriteUninstaller $INSTDIR\Uninstall.exe
+
+	WriteRegStr HKLM SOFTWARE\Microsoft\Windows\CurrentVersion\Uninstall\FaceFusion DisplayName 'FaceFusion'
+	WriteRegStr HKLM SOFTWARE\Microsoft\Windows\CurrentVersion\Uninstall\FaceFusion DisplayVersion '2.6.0'
+	WriteRegStr HKLM SOFTWARE\Microsoft\Windows\CurrentVersion\Uninstall\FaceFusion Publisher 'Henry Ruhs'
+	WriteRegStr HKLM SOFTWARE\Microsoft\Windows\CurrentVersion\Uninstall\FaceFusion InstallLocation $INSTDIR
+	WriteRegStr HKLM SOFTWARE\Microsoft\Windows\CurrentVersion\Uninstall\FaceFusion UninstallString $INSTDIR\uninstall.exe
+SectionEnd
+
+Section 'Uninstall'
+	nsExec::Exec '$LOCALAPPDATA\Programs\Miniconda3\Scripts\conda.exe env remove --name facefusion --yes'
+
+	Delete $DESKTOP\FaceFusion.lnk
+	RMDir /r $SMPROGRAMS\FaceFusion
+	RMDir /r $INSTDIR
+
+	DeleteRegKey HKLM SOFTWARE\Microsoft\Windows\CurrentVersion\Uninstall\FaceFusion
+SectionEnd

facefusion/LICENSE.md

ADDED

@@ -0,0 +1,3 @@
+MIT license
+
+Copyright (c) 2024 Henry Ruhs

facefusion/README.md

ADDED

|

@@ -0,0 +1,113 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

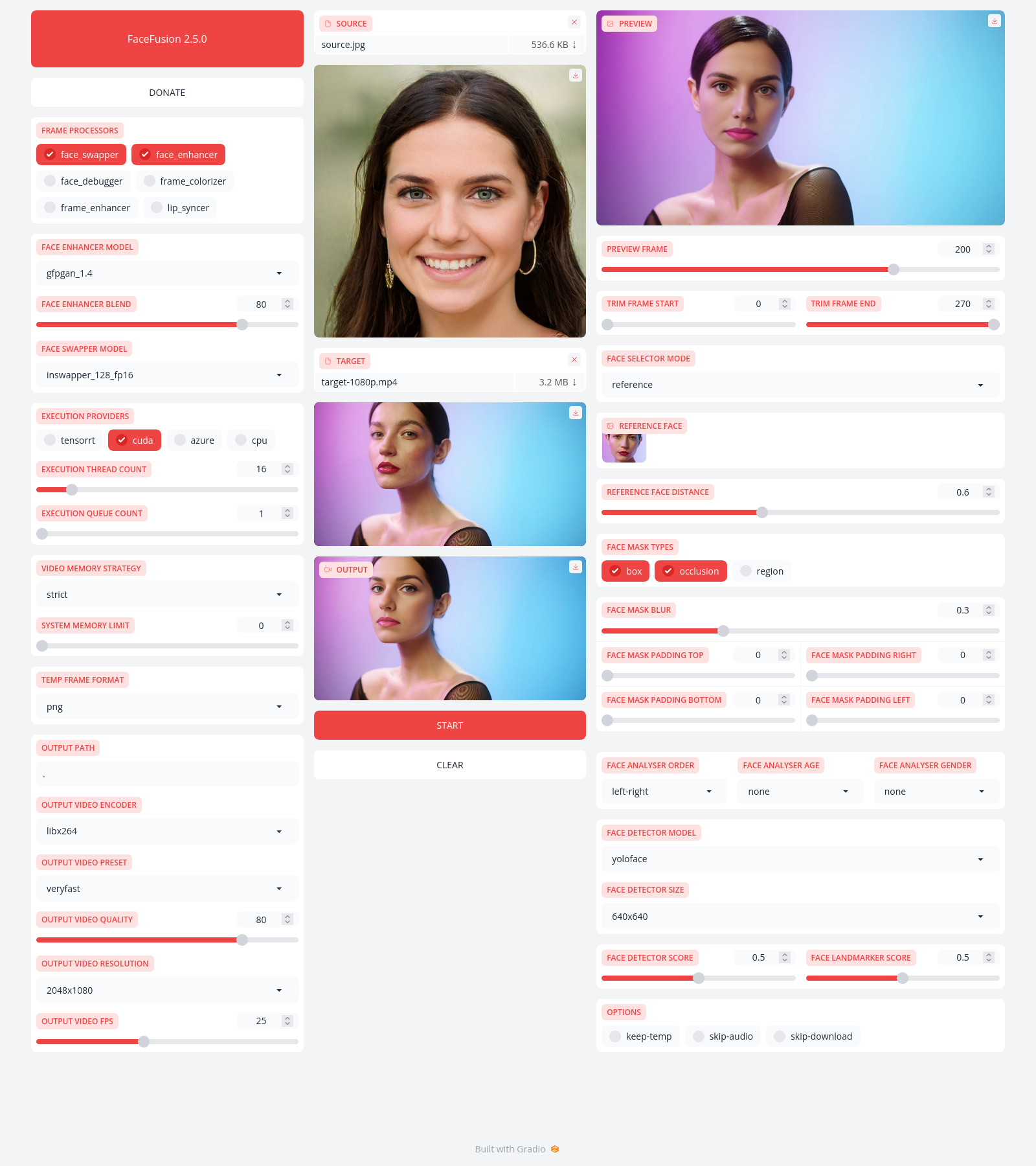

+

FaceFusion

|

| 2 |

+

==========

|

| 3 |

+

|

| 4 |

+

> Next generation face swapper and enhancer.

|

| 5 |

+

|

| 6 |

+

[](https://github.com/facefusion/facefusion/actions?query=workflow:ci)

|

| 7 |

+

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

Preview

|

| 11 |

+

-------

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

Installation

|

| 17 |

+

------------

|

| 18 |

+

|

| 19 |

+

Be aware, the [installation](https://docs.facefusion.io/installation) needs technical skills and is not recommended for beginners. In case you are not comfortable using a terminal, our [Windows Installer](https://buymeacoffee.com/henryruhs/e/251939) can have you up and running in minutes.

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

Usage

|

| 23 |

+

-----

|

| 24 |

+

|

| 25 |

+

Run the command:

|

| 26 |

+

|

| 27 |

+

```

|

| 28 |

+

python run.py [options]

|

| 29 |

+

|

| 30 |

+

options:

|

| 31 |

+

-h, --help show this help message and exit

|

| 32 |

+

-c CONFIG_PATH, --config CONFIG_PATH choose the config file to override defaults

|

| 33 |

+

-s SOURCE_PATHS, --source SOURCE_PATHS choose single or multiple source images or audios

|

| 34 |

+

-t TARGET_PATH, --target TARGET_PATH choose single target image or video

|

| 35 |

+

-o OUTPUT_PATH, --output OUTPUT_PATH specify the output file or directory

|

| 36 |

+

-v, --version show program's version number and exit

|

| 37 |

+

|

| 38 |

+

misc:

|

| 39 |

+

--force-download force automate downloads and exit

|

| 40 |

+

--skip-download omit automate downloads and remote lookups

|

| 41 |

+

--headless run the program without a user interface

|

| 42 |

+

--log-level {error,warn,info,debug} adjust the message severity displayed in the terminal

|

| 43 |

+

|

| 44 |

+

execution:

|

| 45 |

+

--execution-device-id EXECUTION_DEVICE_ID specify the device used for processing

|

| 46 |

+

--execution-providers EXECUTION_PROVIDERS [EXECUTION_PROVIDERS ...] accelerate the model inference using different providers (choices: cpu, ...)

|

| 47 |

+

--execution-thread-count [1-128] specify the amount of parallel threads while processing

|

| 48 |

+

--execution-queue-count [1-32] specify the amount of frames each thread is processing

|

| 49 |

+

|

| 50 |

+

memory:

|

| 51 |

+

--video-memory-strategy {strict,moderate,tolerant} balance fast frame processing and low VRAM usage

|

| 52 |

+

--system-memory-limit [0-128] limit the available RAM that can be used while processing

|

| 53 |

+

|

| 54 |

+

face analyser:

|

| 55 |

+

--face-analyser-order {left-right,right-left,top-bottom,bottom-top,small-large,large-small,best-worst,worst-best} specify the order in which the face analyser detects faces

|

| 56 |

+

--face-analyser-age {child,teen,adult,senior} filter the detected faces based on their age

|

| 57 |

+

--face-analyser-gender {female,male} filter the detected faces based on their gender

|

| 58 |

+

--face-detector-model {many,retinaface,scrfd,yoloface,yunet} choose the model responsible for detecting the face

|

| 59 |

+

--face-detector-size FACE_DETECTOR_SIZE specify the size of the frame provided to the face detector

|

| 60 |

+

--face-detector-score [0.0-0.95] filter the detected faces base on the confidence score

|

| 61 |

+

--face-landmarker-score [0.0-0.95] filter the detected landmarks base on the confidence score

|

| 62 |

+

|

| 63 |

+

face selector:

|

| 64 |

+

--face-selector-mode {many,one,reference} use reference based tracking or simple matching

|

| 65 |

+

--reference-face-position REFERENCE_FACE_POSITION specify the position used to create the reference face

|

| 66 |

+

--reference-face-distance [0.0-1.45] specify the desired similarity between the reference face and target face

|

| 67 |

+

--reference-frame-number REFERENCE_FRAME_NUMBER specify the frame used to create the reference face

|

| 68 |

+

|

| 69 |

+

face mask:

|

| 70 |

+

--face-mask-types FACE_MASK_TYPES [FACE_MASK_TYPES ...] mix and match different face mask types (choices: box, occlusion, region)

|

| 71 |

+

--face-mask-blur [0.0-0.95] specify the degree of blur applied the box mask

|

| 72 |

+

--face-mask-padding FACE_MASK_PADDING [FACE_MASK_PADDING ...] apply top, right, bottom and left padding to the box mask

|

| 73 |

+

--face-mask-regions FACE_MASK_REGIONS [FACE_MASK_REGIONS ...] choose the facial features used for the region mask (choices: skin, left-eyebrow, right-eyebrow, left-eye, right-eye, glasses, nose, mouth, upper-lip, lower-lip)

|

| 74 |

+

|

| 75 |

+

frame extraction:

|

| 76 |

+

--trim-frame-start TRIM_FRAME_START specify the the start frame of the target video

|

| 77 |

+

--trim-frame-end TRIM_FRAME_END specify the the end frame of the target video

|

| 78 |

+

--temp-frame-format {bmp,jpg,png} specify the temporary resources format

|

| 79 |

+

--keep-temp keep the temporary resources after processing

|

| 80 |

+

|

| 81 |

+

output creation:

|

| 82 |

+

--output-image-quality [0-100] specify the image quality which translates to the compression factor

|

| 83 |

+

--output-image-resolution OUTPUT_IMAGE_RESOLUTION specify the image output resolution based on the target image

|

| 84 |

+

--output-video-encoder {libx264,libx265,libvpx-vp9,h264_nvenc,hevc_nvenc,h264_amf,hevc_amf} specify the encoder use for the video compression

|

| 85 |

+

--output-video-preset {ultrafast,superfast,veryfast,faster,fast,medium,slow,slower,veryslow} balance fast video processing and video file size

|

| 86 |

+

--output-video-quality [0-100] specify the video quality which translates to the compression factor

|

| 87 |

+

--output-video-resolution OUTPUT_VIDEO_RESOLUTION specify the video output resolution based on the target video

|

| 88 |

+

--output-video-fps OUTPUT_VIDEO_FPS specify the video output fps based on the target video

|

| 89 |

+

--skip-audio omit the audio from the target video

|

| 90 |

+

|

| 91 |

+

frame processors:

|

| 92 |

+

--frame-processors FRAME_PROCESSORS [FRAME_PROCESSORS ...] load a single or multiple frame processors. (choices: face_debugger, face_enhancer, face_swapper, frame_colorizer, frame_enhancer, lip_syncer, ...)

|

| 93 |

+

--face-debugger-items FACE_DEBUGGER_ITEMS [FACE_DEBUGGER_ITEMS ...] load a single or multiple frame processors (choices: bounding-box, face-landmark-5, face-landmark-5/68, face-landmark-68, face-landmark-68/5, face-mask, face-detector-score, face-landmarker-score, age, gender)

|

| 94 |

+

--face-enhancer-model {codeformer,gfpgan_1.2,gfpgan_1.3,gfpgan_1.4,gpen_bfr_256,gpen_bfr_512,gpen_bfr_1024,gpen_bfr_2048,restoreformer_plus_plus} choose the model responsible for enhancing the face

|

| 95 |

+

--face-enhancer-blend [0-100] blend the enhanced into the previous face

|

| 96 |

+

--face-swapper-model {blendswap_256,inswapper_128,inswapper_128_fp16,simswap_256,simswap_512_unofficial,uniface_256} choose the model responsible for swapping the face

|

| 97 |

+

--frame-colorizer-model {ddcolor,ddcolor_artistic,deoldify,deoldify_artistic,deoldify_stable} choose the model responsible for colorizing the frame

|

| 98 |

+

--frame-colorizer-blend [0-100] blend the colorized into the previous frame

|

| 99 |

+

--frame-colorizer-size {192x192,256x256,384x384,512x512} specify the size of the frame provided to the frame colorizer

|

| 100 |

+

--frame-enhancer-model {clear_reality_x4,lsdir_x4,nomos8k_sc_x4,real_esrgan_x2,real_esrgan_x2_fp16,real_esrgan_x4,real_esrgan_x4_fp16,real_hatgan_x4,span_kendata_x4,ultra_sharp_x4} choose the model responsible for enhancing the frame

|

| 101 |

+

--frame-enhancer-blend [0-100] blend the enhanced into the previous frame

|

| 102 |

+

--lip-syncer-model {wav2lip_gan} choose the model responsible for syncing the lips

|

| 103 |

+

|

| 104 |

+

uis:

|

| 105 |

+

--open-browser open the browser once the program is ready

|

| 106 |

+

--ui-layouts UI_LAYOUTS [UI_LAYOUTS ...] launch a single or multiple UI layouts (choices: benchmark, default, webcam, ...)

|

| 107 |

+

```

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

Documentation

|

| 111 |

+

-------------

|

| 112 |

+

|

| 113 |

+

Read the [documentation](https://docs.facefusion.io) for a deep dive.

|
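Reviewer note: the usage text added in README.md lists the command-line flags, and the following is a minimal sketch of how a headless call to `run.py` might be assembled from Python using only flags that appear in that text (`--headless`, `-s`, `-t`, `-o`, `--frame-processors`). The helper name `build_facefusion_command` and the file names are hypothetical and are not part of this commit. Repeating `-s` once per source is an assumption, not something the usage text states.

```python
import sys
from typing import List, Optional


def build_facefusion_command(
    source_paths: List[str],
    target_path: str,
    output_path: str,
    frame_processors: Optional[List[str]] = None,
) -> List[str]:
    """Assemble an argument list for `python run.py` (hypothetical helper)."""
    # --headless runs the program without a user interface
    command = [sys.executable, 'run.py', '--headless']
    for source_path in source_paths:
        # assumption: -s is repeated once per source image or audio
        command.extend(['-s', source_path])
    command.extend(['-t', target_path, '-o', output_path])
    if frame_processors:
        # --frame-processors accepts one or more processor names
        command.append('--frame-processors')
        command.extend(frame_processors)
    return command


if __name__ == '__main__':
    # Placeholder file names; pass the list to subprocess.run(..., check=True)
    # from the repository root to actually launch the program.
    print(build_facefusion_command(['source.jpg'], 'target.mp4', 'output.mp4', ['face_swapper']))
```

Building the command as a list rather than a single string avoids shell quoting problems with paths that contain spaces.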

facefusion/core.py

ADDED

@@ -0,0 +1,443 @@
+import os
+
+os.environ['OMP_NUM_THREADS'] = '1'
+
+import signal
+import sys
+import warnings
+import shutil
+import numpy
+import onnxruntime
+from time import sleep, time
+from argparse import ArgumentParser, HelpFormatter
+
+import facefusion.choices
+import facefusion.globals
+from facefusion.face_analyser import get_one_face, get_average_face
+from facefusion.face_store import get_reference_faces, append_reference_face
+from facefusion import face_analyser, face_masker, content_analyser, config, process_manager, metadata, logger, wording, voice_extractor
+from facefusion.content_analyser import analyse_image, analyse_video
+from facefusion.processors.frame.core import get_frame_processors_modules, load_frame_processor_module
+from facefusion.common_helper import create_metavar, get_first
+from facefusion.execution import encode_execution_providers, decode_execution_providers
+from facefusion.normalizer import normalize_output_path, normalize_padding, normalize_fps
+from facefusion.memory import limit_system_memory
+from facefusion.statistics import conditional_log_statistics
+from facefusion.download import conditional_download
+from facefusion.filesystem import get_temp_frame_paths, get_temp_file_path, create_temp, move_temp, clear_temp, is_image, is_video, filter_audio_paths, resolve_relative_path, list_directory
+from facefusion.ffmpeg import extract_frames, merge_video, copy_image, finalize_image, restore_audio, replace_audio
+from facefusion.vision import read_image, read_static_images, detect_image_resolution, restrict_video_fps, create_image_resolutions, get_video_frame, detect_video_resolution, detect_video_fps, restrict_video_resolution, restrict_image_resolution, create_video_resolutions, pack_resolution, unpack_resolution
+
+onnxruntime.set_default_logger_severity(3)
+warnings.filterwarnings('ignore', category = UserWarning, module = 'gradio')
+
+
+def cli() -> None:
+	signal.signal(signal.SIGINT, lambda signal_number, frame: destroy())
+	program = ArgumentParser(formatter_class=lambda prog: HelpFormatter(prog, max_help_position=200), add_help=False)
+	# general
+	program.add_argument('-c', '--config', help=wording.get('help.config'), dest='config_path', default='facefusion.ini')
+	apply_config(program)
+	program.add_argument('-s', '--source', help=wording.get('help.source'), action='append', dest='source_paths', default=config.get_str_list('general.source_paths'))
+	program.add_argument('-t', '--target', help=wording.get('help.target'), dest='target_path', default=config.get_str_value('general.target_path'))
+	program.add_argument('-o', '--output', help=wording.get('help.output'), dest='output_path', default=config.get_str_value('general.output_path'))
+	program.add_argument('-v', '--version', version=metadata.get('name') + ' ' + metadata.get('version'), action='version')
+	# misc
+	group_misc = program.add_argument_group('misc')
+	group_misc.add_argument('--force-download', help=wording.get('help.force_download'), action='store_true', default=config.get_bool_value('misc.force_download'))
+	group_misc.add_argument('--skip-download', help=wording.get('help.skip_download'), action='store_true', default=config.get_bool_value('misc.skip_download'))
+	group_misc.add_argument('--headless', help=wording.get('help.headless'), action='store_true', default=config.get_bool_value('misc.headless'))
+	group_misc.add_argument('--log-level', help=wording.get('help.log_level'), default=config.get_str_value('misc.log_level', 'info'), choices=logger.get_log_levels())

|

| 51 |

+

# execution

|

| 52 |

+

execution_providers = encode_execution_providers(onnxruntime.get_available_providers())

|

| 53 |

+

group_execution = program.add_argument_group('execution')

|

| 54 |

+

group_execution.add_argument('--execution-device-id', help=wording.get('help.execution_device_id'), default=config.get_str_value('execution.execution_device_id', '0'))

|

| 55 |

+

group_execution.add_argument('--execution-providers', help=wording.get('help.execution_providers').format(choices=', '.join(execution_providers)), default=config.get_str_list('execution.execution_providers', 'cpu'), choices=execution_providers, nargs='+', metavar='EXECUTION_PROVIDERS')

|

| 56 |

+

group_execution.add_argument('--execution-thread-count', help=wording.get('help.execution_thread_count'), type=int, default=config.get_int_value('execution.execution_thread_count', '4'), choices=facefusion.choices.execution_thread_count_range, metavar=create_metavar(facefusion.choices.execution_thread_count_range))

|

| 57 |

+

group_execution.add_argument('--execution-queue-count', help=wording.get('help.execution_queue_count'), type=int, default=config.get_int_value('execution.execution_queue_count', '1'), choices=facefusion.choices.execution_queue_count_range, metavar=create_metavar(facefusion.choices.execution_queue_count_range))

|

| 58 |

+

# memory

|

| 59 |

+

group_memory = program.add_argument_group('memory')

|

| 60 |

+

group_memory.add_argument('--video-memory-strategy', help=wording.get('help.video_memory_strategy'), default=config.get_str_value('memory.video_memory_strategy', 'strict'), choices=facefusion.choices.video_memory_strategies)

|

| 61 |

+

group_memory.add_argument('--system-memory-limit', help=wording.get('help.system_memory_limit'), type=int, default=config.get_int_value('memory.system_memory_limit', '0'), choices=facefusion.choices.system_memory_limit_range, metavar=create_metavar(facefusion.choices.system_memory_limit_range))

|

| 62 |

+

# face analyser

|

| 63 |

+

group_face_analyser = program.add_argument_group('face analyser')

|

| 64 |

+

group_face_analyser.add_argument('--face-analyser-order', help=wording.get('help.face_analyser_order'), default=config.get_str_value('face_analyser.face_analyser_order', 'left-right'), choices=facefusion.choices.face_analyser_orders)

|

| 65 |

+

group_face_analyser.add_argument('--face-analyser-age', help=wording.get('help.face_analyser_age'), default=config.get_str_value('face_analyser.face_analyser_age'), choices=facefusion.choices.face_analyser_ages)

|

| 66 |

+

group_face_analyser.add_argument('--face-analyser-gender', help=wording.get('help.face_analyser_gender'), default=config.get_str_value('face_analyser.face_analyser_gender'), choices=facefusion.choices.face_analyser_genders)

|

| 67 |

+

group_face_analyser.add_argument('--face-detector-model', help=wording.get('help.face_detector_model'), default=config.get_str_value('face_analyser.face_detector_model', 'yoloface'), choices=facefusion.choices.face_detector_set.keys())

|

| 68 |

+

group_face_analyser.add_argument('--face-detector-size', help=wording.get('help.face_detector_size'), default=config.get_str_value('face_analyser.face_detector_size', '640x640'))

|

| 69 |

+

group_face_analyser.add_argument('--face-detector-score', help=wording.get('help.face_detector_score'), type=float, default=config.get_float_value('face_analyser.face_detector_score', '0.5'), choices=facefusion.choices.face_detector_score_range, metavar=create_metavar(facefusion.choices.face_detector_score_range))

|

| 70 |

+

group_face_analyser.add_argument('--face-landmarker-score', help=wording.get('help.face_landmarker_score'), type=float, default=config.get_float_value('face_analyser.face_landmarker_score', '0.5'), choices=facefusion.choices.face_landmarker_score_range, metavar=create_metavar(facefusion.choices.face_landmarker_score_range))

|

| 71 |

+

# face selector

|

| 72 |

+

group_face_selector = program.add_argument_group('face selector')

|

| 73 |

+

group_face_selector.add_argument('--face-selector-mode', help=wording.get('help.face_selector_mode'), default=config.get_str_value('face_selector.face_selector_mode', 'reference'), choices=facefusion.choices.face_selector_modes)

|

| 74 |

+

group_face_selector.add_argument('--reference-face-position', help=wording.get('help.reference_face_position'), type=int, default=config.get_int_value('face_selector.reference_face_position', '0'))

|

| 75 |

+

group_face_selector.add_argument('--reference-face-distance', help=wording.get('help.reference_face_distance'), type=float, default=config.get_float_value('face_selector.reference_face_distance', '0.6'), choices=facefusion.choices.reference_face_distance_range, metavar=create_metavar(facefusion.choices.reference_face_distance_range))

|

| 76 |

+

group_face_selector.add_argument('--reference-frame-number', help=wording.get('help.reference_frame_number'), type=int, default=config.get_int_value('face_selector.reference_frame_number', '0'))

|

| 77 |

+

# face mask

|

| 78 |

+

group_face_mask = program.add_argument_group('face mask')

|

| 79 |

+

group_face_mask.add_argument('--face-mask-types', help=wording.get('help.face_mask_types').format(choices=', '.join(facefusion.choices.face_mask_types)), default=config.get_str_list('face_mask.face_mask_types', 'box'), choices=facefusion.choices.face_mask_types, nargs='+', metavar='FACE_MASK_TYPES')

|

| 80 |

+

group_face_mask.add_argument('--face-mask-blur', help=wording.get('help.face_mask_blur'), type=float, default=config.get_float_value('face_mask.face_mask_blur', '0.3'), choices=facefusion.choices.face_mask_blur_range, metavar=create_metavar(facefusion.choices.face_mask_blur_range))

|

| 81 |

+

group_face_mask.add_argument('--face-mask-padding', help=wording.get('help.face_mask_padding'), type=int, default=config.get_int_list('face_mask.face_mask_padding', '0 0 0 0'), nargs='+')

|

| 82 |

+

group_face_mask.add_argument('--face-mask-regions', help=wording.get('help.face_mask_regions').format(choices=', '.join(facefusion.choices.face_mask_regions)), default=config.get_str_list('face_mask.face_mask_regions', ' '.join(facefusion.choices.face_mask_regions)), choices=facefusion.choices.face_mask_regions, nargs='+', metavar='FACE_MASK_REGIONS')

|

| 83 |

+

# frame extraction

|

| 84 |

+

group_frame_extraction = program.add_argument_group('frame extraction')

|

| 85 |

+

group_frame_extraction.add_argument('--trim-frame-start', help=wording.get('help.trim_frame_start'), type=int, default=config.get_int_value('frame_extraction.trim_frame_start'))

|

| 86 |

+

group_frame_extraction.add_argument('--trim-frame-end', help=wording.get('help.trim_frame_end'), type=int, default=config.get_int_value('frame_extraction.trim_frame_end'))

|

| 87 |

+

group_frame_extraction.add_argument('--temp-frame-format', help=wording.get('help.temp_frame_format'), default=config.get_str_value('frame_extraction.temp_frame_format', 'png'), choices=facefusion.choices.temp_frame_formats)

|

| 88 |

+

group_frame_extraction.add_argument('--keep-temp', help=wording.get('help.keep_temp'), action='store_true', default=config.get_bool_value('frame_extraction.keep_temp'))

|

| 89 |

+

# output creation

|

| 90 |

+

group_output_creation = program.add_argument_group('output creation')

|

| 91 |

+

group_output_creation.add_argument('--output-image-quality', help=wording.get('help.output_image_quality'), type=int, default=config.get_int_value('output_creation.output_image_quality', '80'), choices=facefusion.choices.output_image_quality_range, metavar=create_metavar(facefusion.choices.output_image_quality_range))

|

| 92 |

+

group_output_creation.add_argument('--output-image-resolution', help=wording.get('help.output_image_resolution'), default=config.get_str_value('output_creation.output_image_resolution'))

|

| 93 |

+

group_output_creation.add_argument('--output-video-encoder', help=wording.get('help.output_video_encoder'), default=config.get_str_value('output_creation.output_video_encoder', 'libx264'), choices=facefusion.choices.output_video_encoders)

|

| 94 |

+

group_output_creation.add_argument('--output-video-preset', help=wording.get('help.output_video_preset'), default=config.get_str_value('output_creation.output_video_preset', 'veryfast'), choices=facefusion.choices.output_video_presets)

|

| 95 |

+

group_output_creation.add_argument('--output-video-quality', help=wording.get('help.output_video_quality'), type=int, default=config.get_int_value('output_creation.output_video_quality', '80'), choices=facefusion.choices.output_video_quality_range, metavar=create_metavar(facefusion.choices.output_video_quality_range))

|

| 96 |

+

group_output_creation.add_argument('--output-video-resolution', help=wording.get('help.output_video_resolution'), default=config.get_str_value('output_creation.output_video_resolution'))

|

| 97 |

+

group_output_creation.add_argument('--output-video-fps', help=wording.get('help.output_video_fps'), type=float, default=config.get_float_value('output_creation.output_video_fps'))

|

| 98 |

+

group_output_creation.add_argument('--skip-audio', help=wording.get('help.skip_audio'), action='store_true', default=config.get_bool_value('output_creation.skip_audio'))

|

| 99 |

+

# frame processors

|

| 100 |

+

base_dir = os.path.dirname(os.path.abspath(__file__))

|

| 101 |

+

modules_path = os.path.join(base_dir, "facefusion", "processors", "frame", "modules")

|

| 102 |

+

available_frame_processors = list_directory(modules_path)

|

| 103 |

+

|

| 104 |

+

# Debug statement to print available frame processors

|

| 105 |

+

print(f"Available frame processors: {available_frame_processors}")

|

| 106 |

+

|

| 107 |

+

if not isinstance(available_frame_processors, list):

|

| 108 |

+

available_frame_processors = list(available_frame_processors)

|

| 109 |

+

|

| 110 |

+

program = ArgumentParser(parents=[program], formatter_class=program.formatter_class, add_help=True)

|

| 111 |

+

group_frame_processors = program.add_argument_group('frame processors')

|

| 112 |

+

group_frame_processors.add_argument('--frame-processors', help=wording.get('help.frame_processors').format(choices=', '.join(available_frame_processors)), default=config.get_str_list('frame_processors.frame_processors', 'face_swapper'), nargs='+')

|

| 113 |

+

for frame_processor in available_frame_processors:

|

| 114 |

+

frame_processor_module = load_frame_processor_module(frame_processor)

|

| 115 |

+

frame_processor_module.register_args(group_frame_processors)

|

| 116 |

+

run(program)

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

def apply_config(program: ArgumentParser) -> None:

|

| 120 |

+

known_args = program.parse_known_args()

|

| 121 |

+

facefusion.globals.config_path = get_first(known_args).config_path

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

def validate_args(program: ArgumentParser) -> None:

|

| 125 |

+

try:

|

| 126 |

+

for action in program._actions:

|

| 127 |

+

if action.default:

|

| 128 |

+

if isinstance(action.default, list):

|

| 129 |

+

for default in action.default:

|

| 130 |

+

program._check_value(action, default)

|

| 131 |

+

else:

|

| 132 |

+

program._check_value(action, action.default)

|

| 133 |

+

except Exception as exception:

|

| 134 |

+

program.error(str(exception))

|

| 135 |

+

|

| 136 |

+

|

| 137 |

+

def apply_args(program: ArgumentParser) -> None:

|

| 138 |

+

args = program.parse_args()

|

| 139 |

+

# general

|

| 140 |

+

facefusion.globals.source_paths = args.source_paths

|

| 141 |

+

facefusion.globals.target_path = args.target_path

|

| 142 |

+

facefusion.globals.output_path = args.output_path

|

| 143 |

+

# misc

|

| 144 |

+

facefusion.globals.force_download = args.force_download

|

| 145 |

+

facefusion.globals.skip_download = args.skip_download

|

| 146 |

+

facefusion.globals.headless = args.headless

|

| 147 |

+

facefusion.globals.log_level = args.log_level

|

| 148 |

+

# execution

|

| 149 |

+

facefusion.globals.execution_device_id = args.execution_device_id

|

| 150 |

+

facefusion.globals.execution_providers = decode_execution_providers(args.execution_providers)

|

| 151 |

+

facefusion.globals.execution_thread_count = args.execution_thread_count

|

| 152 |

+

facefusion.globals.execution_queue_count = args.execution_queue_count

|

| 153 |

+

# memory

|

| 154 |

+

facefusion.globals.video_memory_strategy = args.video_memory_strategy

|

| 155 |

+

facefusion.globals.system_memory_limit = args.system_memory_limit

|

| 156 |

+

# face analyser

|

| 157 |

+

facefusion.globals.face_analyser_order = args.face_analyser_order

|

| 158 |

+

facefusion.globals.face_analyser_age = args.face_analyser_age

|

| 159 |

+

facefusion.globals.face_analyser_gender = args.face_analyser_gender

|

| 160 |

+

facefusion.globals.face_detector_model = args.face_detector_model

|

| 161 |

+

if args.face_detector_size in facefusion.choices.face_detector_set[args.face_detector_model]:

|

| 162 |

+

facefusion.globals.face_detector_size = args.face_detector_size

|

| 163 |

+

else:

|

| 164 |

+

facefusion.globals.face_detector_size = '640x640'

|

| 165 |

+

facefusion.globals.face_detector_score = args.face_detector_score

|

| 166 |

+

facefusion.globals.face_landmarker_score = args.face_landmarker_score

|

| 167 |

+

# face selector

|

| 168 |

+

facefusion.globals.face_selector_mode = args.face_selector_mode

|

| 169 |

+

facefusion.globals.reference_face_position = args.reference_face_position

|

| 170 |

+

facefusion.globals.reference_face_distance = args.reference_face_distance

|

| 171 |

+

facefusion.globals.reference_frame_number = args.reference_frame_number

|

| 172 |

+

# face mask

|

| 173 |

+

facefusion.globals.face_mask_types = args.face_mask_types

|

| 174 |

+

facefusion.globals.face_mask_blur = args.face_mask_blur

|

| 175 |

+

facefusion.globals.face_mask_padding = normalize_padding(args.face_mask_padding)

|

| 176 |

+

facefusion.globals.face_mask_regions = args.face_mask_regions

|

| 177 |

+

# frame extraction

|

| 178 |

+

facefusion.globals.trim_frame_start = args.trim_frame_start

|

| 179 |

+

facefusion.globals.trim_frame_end = args.trim_frame_end

|

| 180 |

+

facefusion.globals.temp_frame_format = args.temp_frame_format

|

| 181 |

+

facefusion.globals.keep_temp = args.keep_temp

|

| 182 |

+

# output creation

|

| 183 |

+

facefusion.globals.output_image_quality = args.output_image_quality

|

| 184 |

+

if is_image(args.target_path):

|

| 185 |

+

output_image_resolution = detect_image_resolution(args.target_path)

|

| 186 |

+

output_image_resolutions = create_image_resolutions(output_image_resolution)

|

| 187 |

+

if args.output_image_resolution in output_image_resolutions:

|

| 188 |

+

facefusion.globals.output_image_resolution = args.output_image_resolution

|

| 189 |

+

else:

|

| 190 |

+

facefusion.globals.output_image_resolution = pack_resolution(output_image_resolution)

|

| 191 |

+

facefusion.globals.output_video_encoder = args.output_video_encoder

|

| 192 |

+

facefusion.globals.output_video_preset = args.output_video_preset

|

| 193 |

+

facefusion.globals.output_video_quality = args.output_video_quality

|

| 194 |

+

if is_video(args.target_path):

|

| 195 |

+

output_video_resolution = detect_video_resolution(args.target_path)

|

| 196 |

+

output_video_resolutions = create_video_resolutions(output_video_resolution)

|

| 197 |

+

if args.output_video_resolution in output_video_resolutions:

|

| 198 |

+

facefusion.globals.output_video_resolution = args.output_video_resolution

|

| 199 |

+

else:

|

| 200 |

+

facefusion.globals.output_video_resolution = pack_resolution(output_video_resolution)

|

| 201 |

+

if args.output_video_fps or is_video(args.target_path):

|

| 202 |

+

facefusion.globals.output_video_fps = normalize_fps(args.output_video_fps) or detect_video_fps(args.target_path)

|

| 203 |

+

facefusion.globals.skip_audio = args.skip_audio

|

| 204 |

+

# frame processors

|

| 205 |

+

base_dir = os.path.dirname(os.path.abspath(__file__))

|

| 206 |

+

modules_path = os.path.join(base_dir, "facefusion", "processors", "frame", "modules")

|

| 207 |

+

available_frame_processors = list_directory(modules_path)

|

| 208 |

+

facefusion.globals.frame_processors = args.frame_processors

|

| 209 |

+

for frame_processor in available_frame_processors:

|

| 210 |

+

frame_processor_module = load_frame_processor_module(frame_processor)

|

| 211 |

+

frame_processor_module.apply_args(program)

|

| 212 |

+

# uis

|

| 213 |

+

# facefusion.globals.open_browser = args.open_browser

|

| 214 |

+

# facefusion.globals.ui_layouts = args.ui_layouts

|

| 215 |

+

|

| 216 |

+

|

| 217 |

+

def run(program: ArgumentParser) -> None:

|

| 218 |

+

validate_args(program)

|

| 219 |

+

apply_args(program)

|

| 220 |

+

logger.init(facefusion.globals.log_level)

|

| 221 |

+

|

| 222 |

+

if facefusion.globals.system_memory_limit > 0:

|

| 223 |

+

limit_system_memory(facefusion.globals.system_memory_limit)

|

| 224 |

+

if facefusion.globals.force_download:

|

| 225 |

+

force_download()

|

| 226 |

+

return

|

| 227 |

+

if not pre_check() or not content_analyser.pre_check() or not face_analyser.pre_check() or not face_masker.pre_check() or not voice_extractor.pre_check():

|

| 228 |

+

return

|

| 229 |

+

for frame_processor_module in get_frame_processors_modules(facefusion.globals.frame_processors):

|

| 230 |

+

if not frame_processor_module.pre_check():

|

| 231 |

+

return

|

| 232 |

+

if facefusion.globals.headless:

|

| 233 |

+

conditional_process()

|

| 234 |

+

else:

|

| 235 |

+

conditional_process() # Run process directly without UI

|

| 236 |

+

|

| 237 |

+

|

| 238 |