---

library_name: transformers

license: cc-by-nc-4.0

pipeline_tag: text-generation

tags:

- text-to-sql

- reinforcement-learning

---

# SLM-SQL: An Exploration of Small Language Models for Text-to-SQL

### Important Links

📖 [arXiv Paper](https://arxiv.org/abs/2507.22478) | 🤗 [Hugging Face Paper](https://huggingface.co/papers/2507.22478) | 🐙 [GitHub Repository](https://github.com/CycloneBoy/slm_sql) | 🤗 [Hugging Face Collection](https://huggingface.co/collections/cycloneboy/slm-sql-688b02f99f958d7a417658dc) | 🤖 [ModelScope Collection](https://modelscope.cn/collections/SLM-SQL-624bb6a60e9643)

## News

+ `July 31, 2025`: Uploaded the models to ModelScope and Hugging Face.

+ `July 30, 2025`: Published the paper on arXiv.

## Abstract

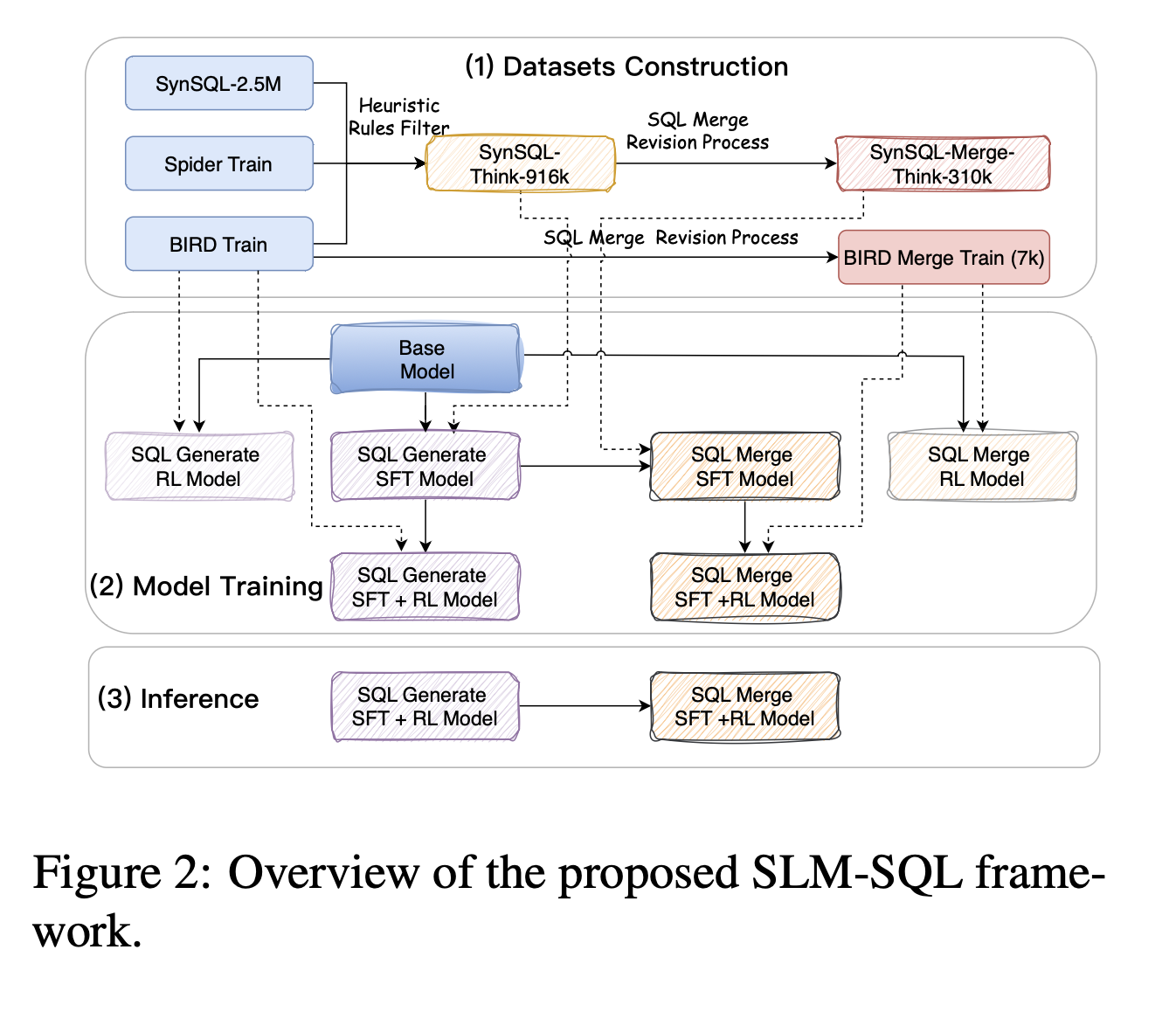

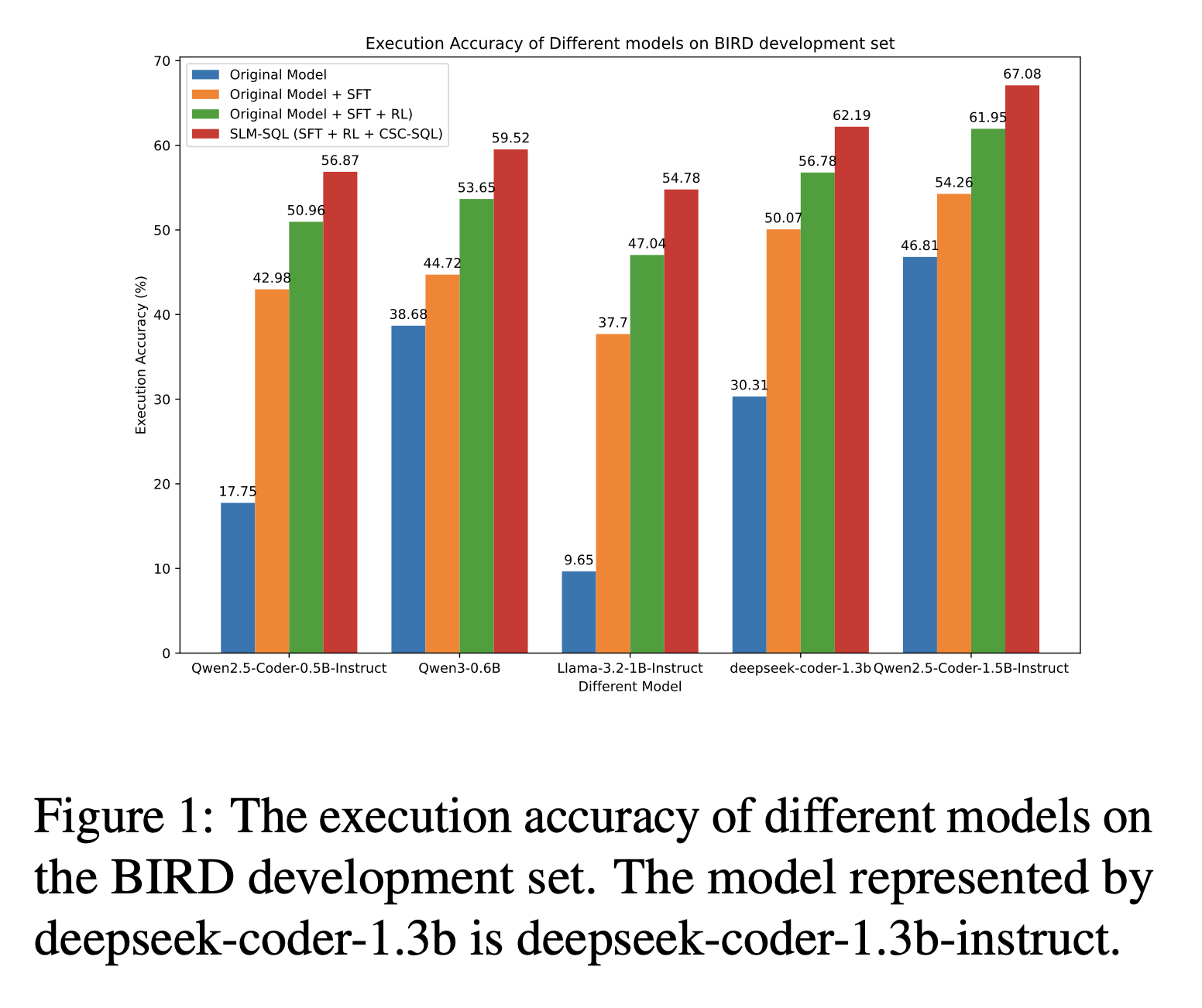

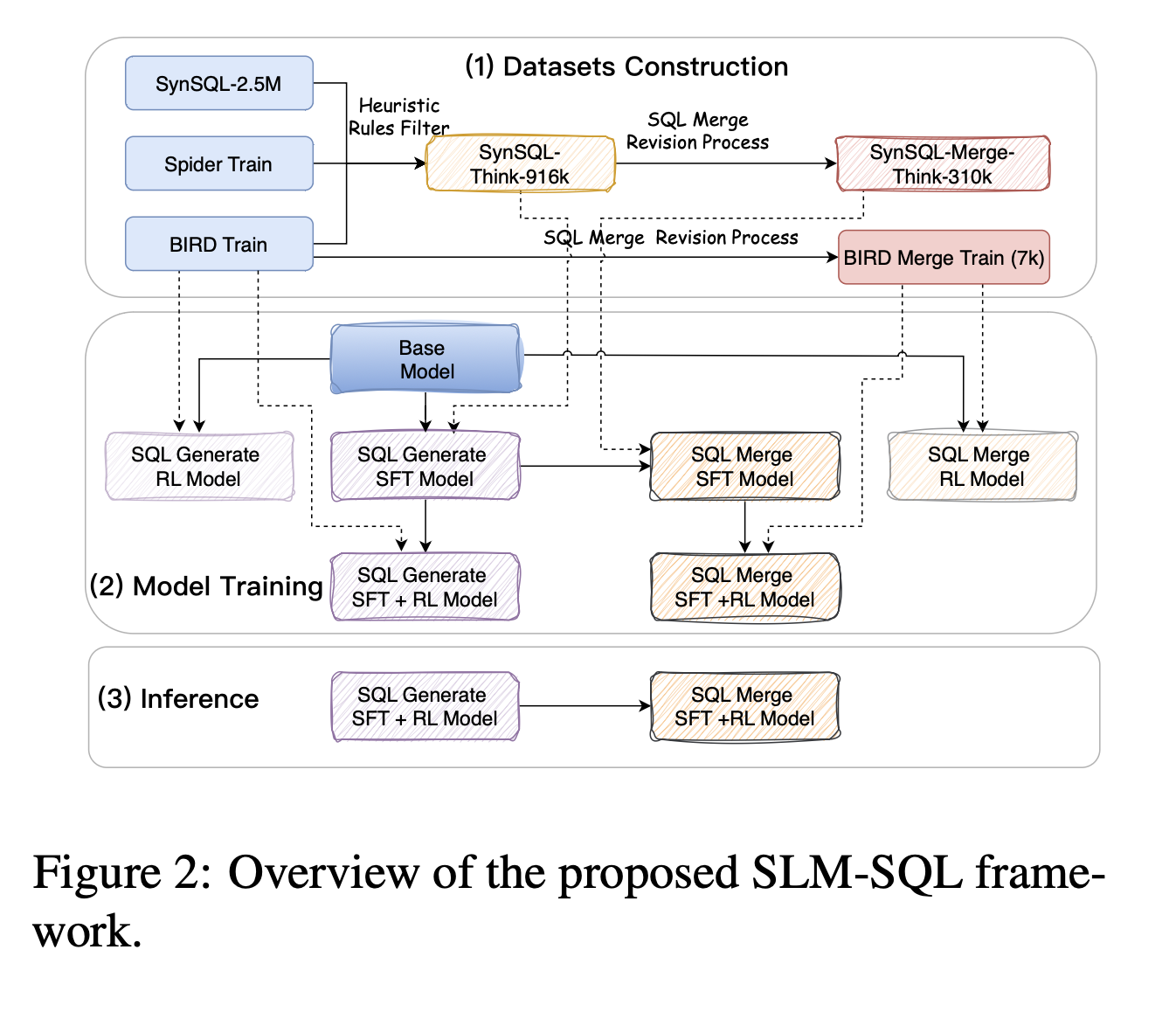

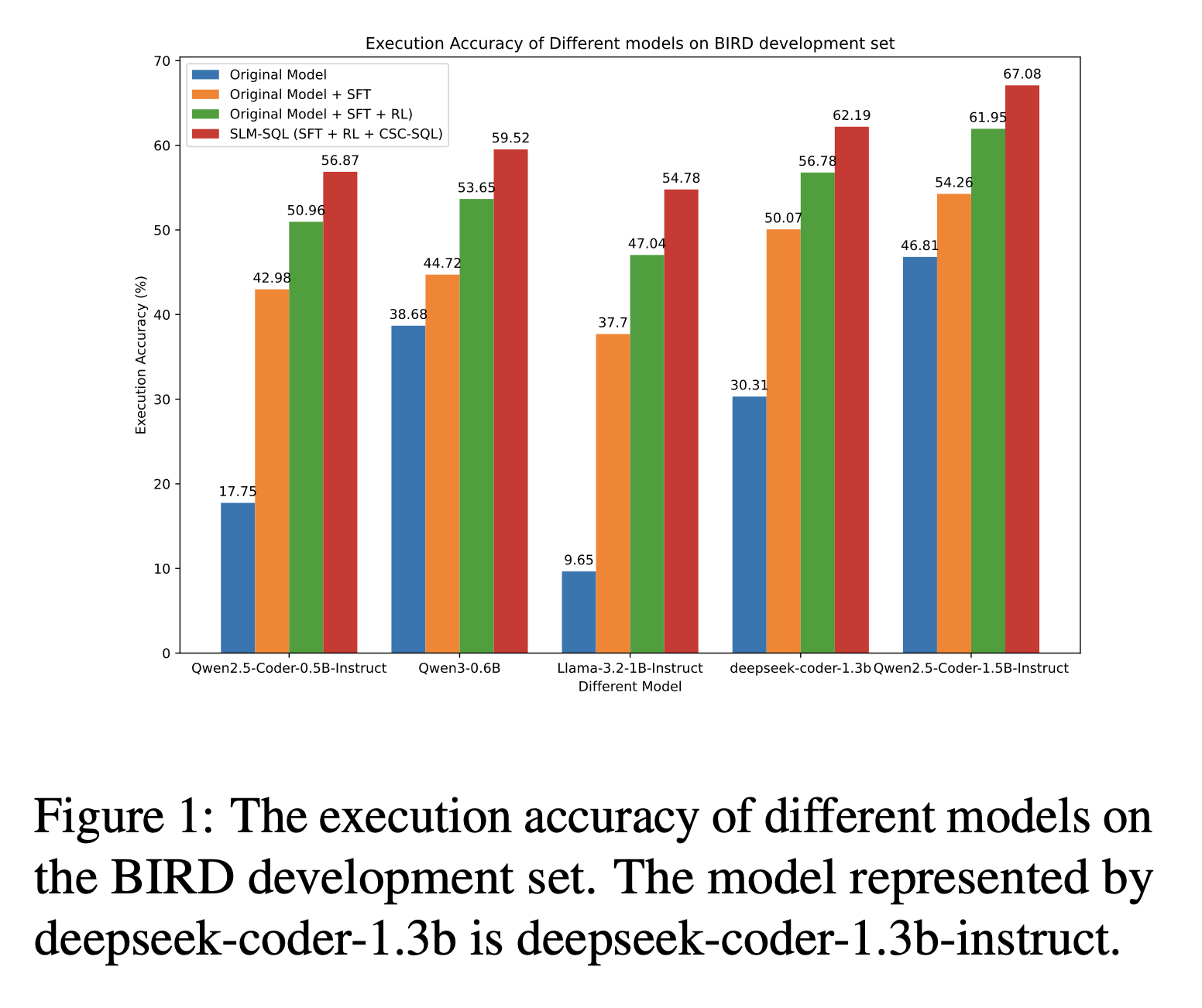

Large language models (LLMs) have demonstrated strong performance in translating natural language questions into SQL queries (Text-to-SQL). In contrast, small language models (SLMs) ranging from 0.5B to 1.5B parameters currently underperform on Text-to-SQL tasks due to their limited logical reasoning capabilities. However, SLMs offer inherent advantages in inference speed and suitability for edge deployment. To explore their potential in Text-to-SQL applications, we leverage recent advancements in post-training techniques. Specifically, we used the open-source SynSQL-2.5M dataset to construct two derived datasets: SynSQL-Think-916K for SQL generation and SynSQL-Merge-Think-310K for SQL merge revision. We then applied supervised fine-tuning and reinforcement learning-based post-training to the SLM, followed by inference using a corrective self-consistency approach. Experimental results validate the effectiveness and generalizability of our method, SLM-SQL. On the BIRD development set, the five evaluated models achieved an average improvement of 31.4 points. Notably, the 0.5B model reached 56.87% execution accuracy (EX), while the 1.5B model achieved 67.08% EX.

### Framework

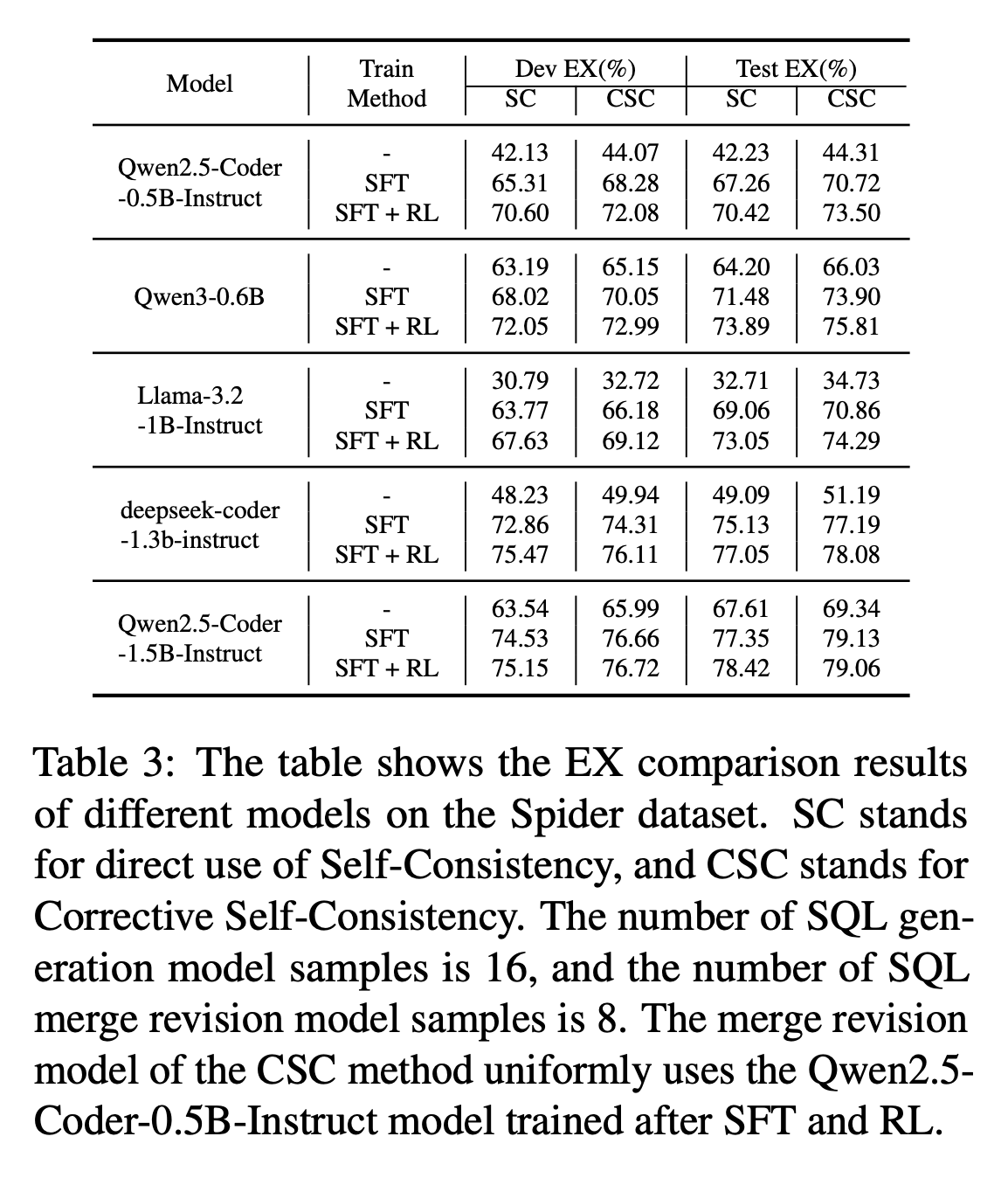

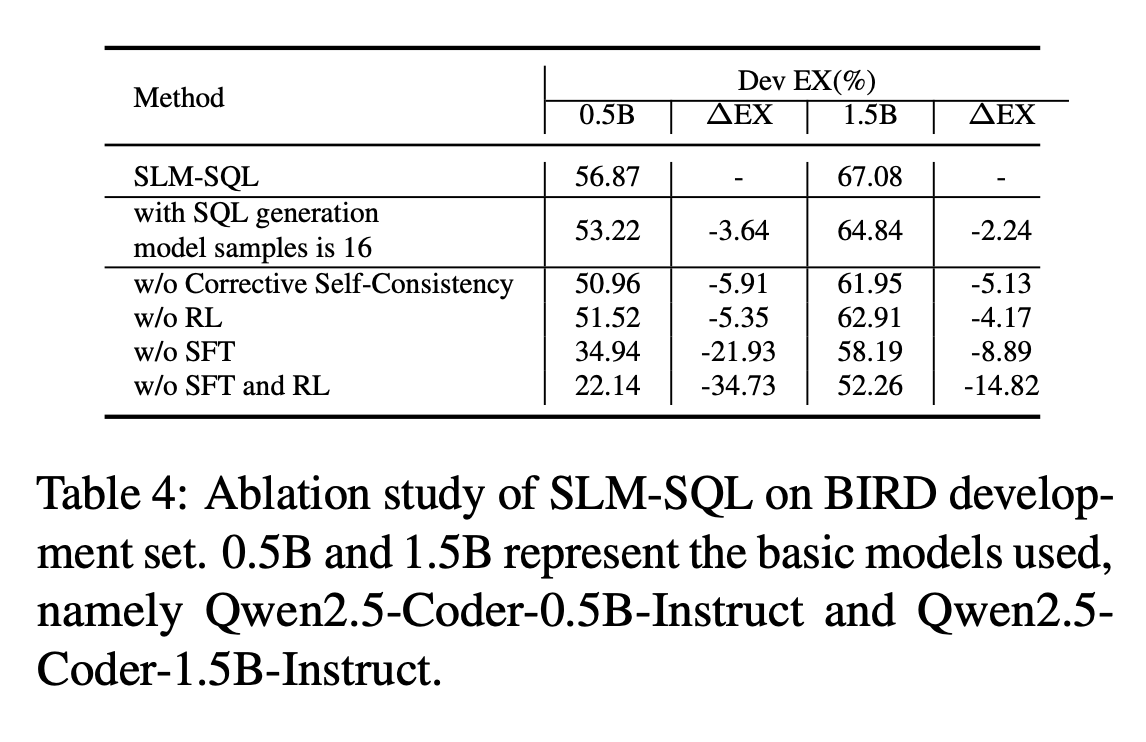

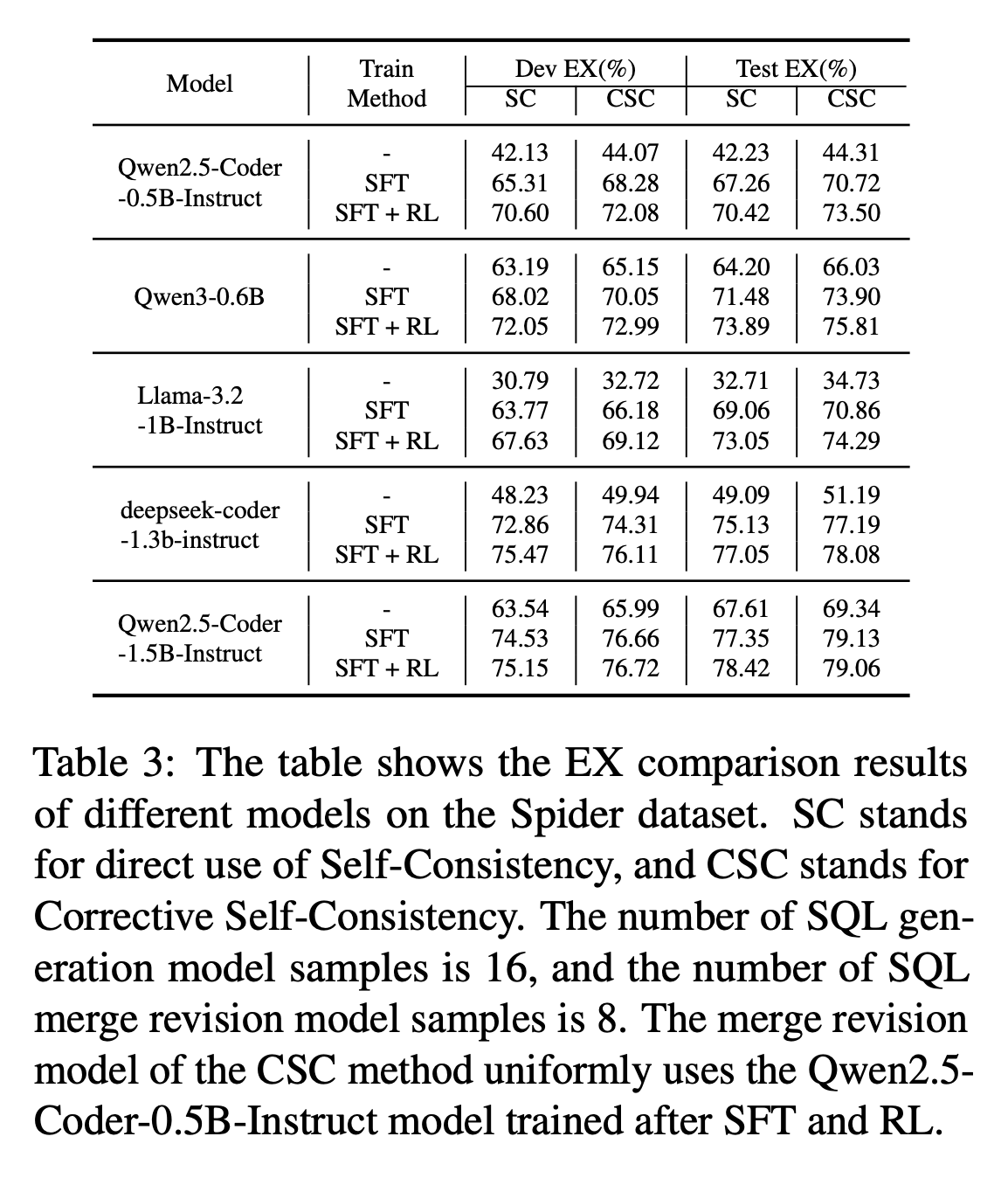

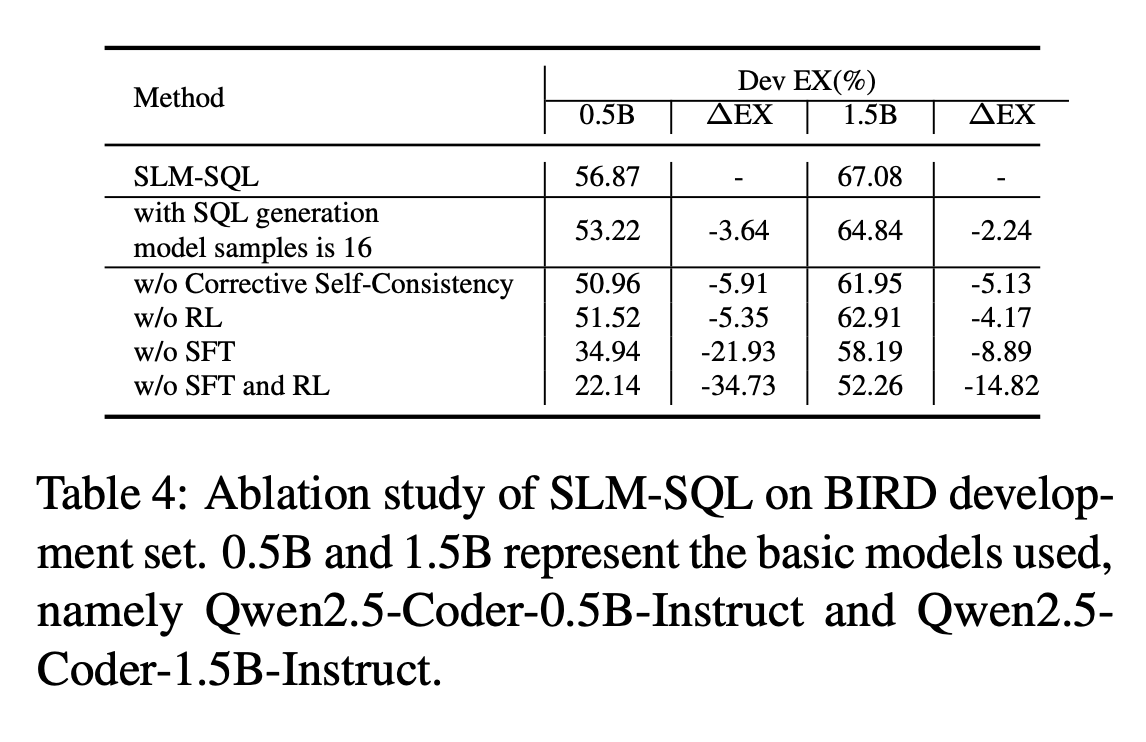
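
The corrective self-consistency inference named in the abstract starts from several sampled SQL candidates. As a rough illustration only (this is not the released inference code), the sketch below groups candidates by their SQLite execution result and ranks the groups by vote count. The merge revision model that would then reconcile the leading candidates is left out. The helper names `execute_key` and `rank_by_votes`, the toy schema, and the candidate queries are all assumptions made for this example.

```python
import sqlite3


def execute_key(conn, sql):
    """Run a query and return a hashable key for its result, or None on error.

    The key ignores row order and duplicate rows, which is a simplification.
    """
    try:
        rows = conn.execute(sql).fetchall()
    except sqlite3.Error:
        return None
    return frozenset(rows)


def rank_by_votes(conn, candidates):
    """Group candidates by execution result; return groups, largest first."""
    groups = {}
    for sql in candidates:
        key = execute_key(conn, sql)
        if key is not None:  # drop candidates that fail to execute
            groups.setdefault(key, []).append(sql)
    return sorted(groups.values(), key=len, reverse=True)


# Toy in-memory database (hypothetical data, for illustration only)
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE Student (StuID INT, Name TEXT, Age INT);
    INSERT INTO Student VALUES (1, 'Ann', 20), (2, 'Bob', 22), (3, 'Cy', 24);
    """
)

candidates = [
    "SELECT AVG(Age) FROM Student",
    "SELECT SUM(Age) * 1.0 / COUNT(*) FROM Student",
    "SELECT MAX(Age) FROM Student",
    "SELECT AVG(Agee) FROM Student",  # invalid column, so it gets no vote
]

ranked = rank_by_votes(conn, candidates)
# One representative query from each of the two largest groups
top_two = [group[0] for group in ranked[:2]]
print(top_two)
```

Plain self-consistency would simply return the first query of the largest group. Keeping the runner-up as well leaves room for a later correction step when the majority answer is wrong.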

### Main Results


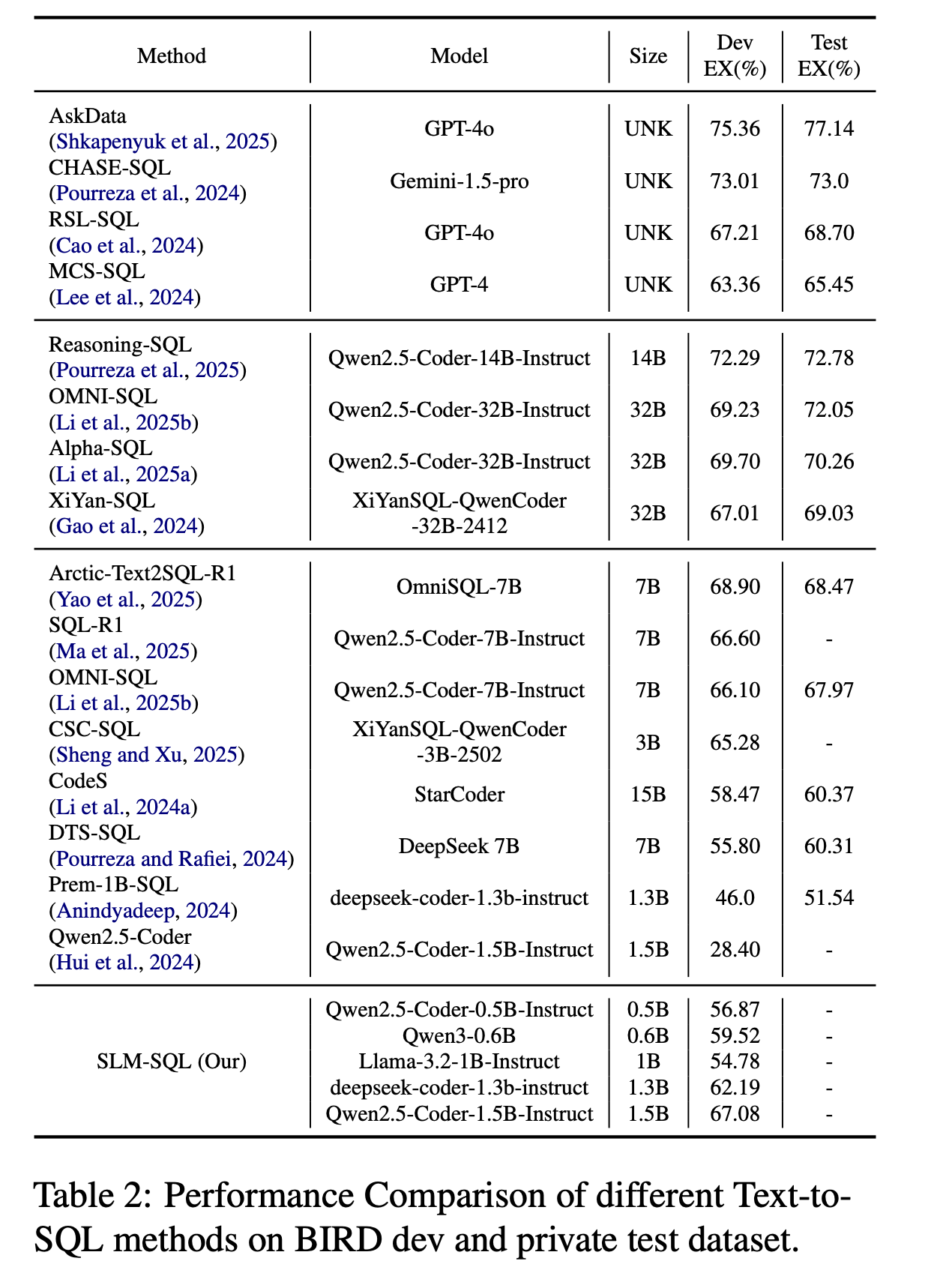

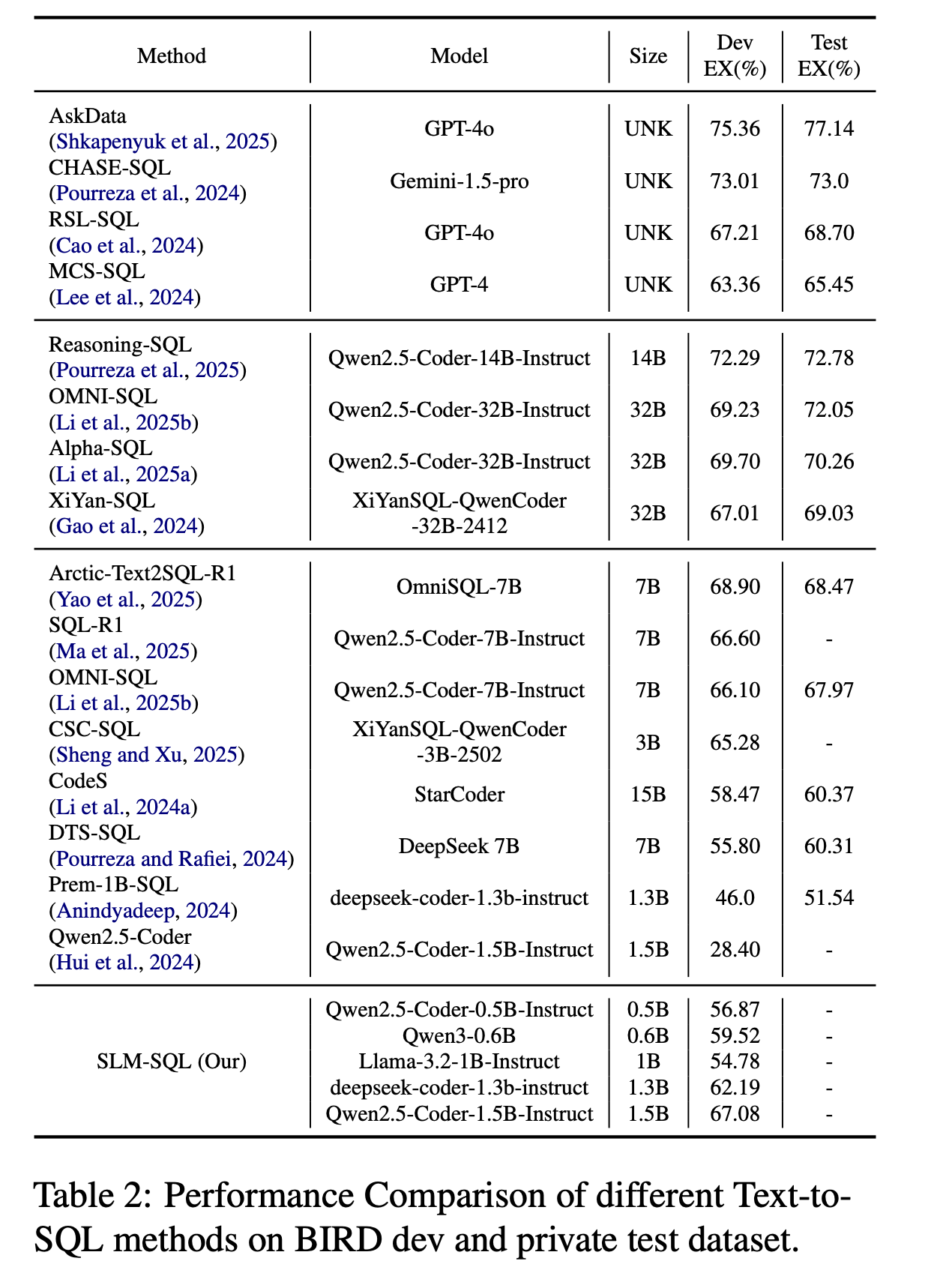

Performance comparison of different Text-to-SQL methods on the BIRD dev and test datasets.
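
Execution accuracy (EX), the metric quoted in the abstract, counts a prediction as correct when running it returns the same result as the reference SQL. The following is a minimal sketch of that idea, assuming SQLite and an order-insensitive comparison of result rows. The function names `execution_match` and `execution_accuracy` and the toy data are hypothetical, and the official BIRD evaluation script should be used for reportable numbers.

```python
import sqlite3


def execution_match(conn, predicted_sql, gold_sql):
    """Return True if both queries yield the same set of rows."""
    try:
        predicted = conn.execute(predicted_sql).fetchall()
    except sqlite3.Error:
        return False  # a query that fails to run counts as wrong
    gold = conn.execute(gold_sql).fetchall()
    return set(predicted) == set(gold)


def execution_accuracy(conn, pairs):
    """Percentage of (predicted, gold) pairs whose results match."""
    hits = sum(execution_match(conn, pred, gold) for pred, gold in pairs)
    return 100.0 * hits / len(pairs)


# Toy in-memory database (hypothetical data, for illustration only)
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE Student (StuID INT, Name TEXT, Age INT);
    INSERT INTO Student VALUES (1, 'Ann', 20), (2, 'Bob', 22), (3, 'Cy', 24);
    """
)

pairs = [
    # Same rows in a different order: counted as a match
    ("SELECT Name FROM Student WHERE Age > 21 ORDER BY Name DESC",
     "SELECT Name FROM Student WHERE Age > 21"),
    # Different filter, different count: counted as a miss
    ("SELECT COUNT(*) FROM Student WHERE Age > 22",
     "SELECT COUNT(*) FROM Student WHERE Age >= 22"),
]

score = execution_accuracy(conn, pairs)
print(f"EX = {score:.2f}%")
```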


## How to Use

You can easily use this model with the Hugging Face `transformers` library. Below is a general example for inference:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer
import torch

# Load the model and tokenizer
model_name = "cycloneboy/SLM-SQL-1.5B"  # Example: you can choose other models from the table below
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype=torch.bfloat16,  # or torch.float16, adjust based on your GPU
    device_map="auto",  # Automatically map model to available devices
)
model.eval()

# Example prompt for Text-to-SQL
# Replace the schema and question with your own
prompt = """
[Instruction]: Given the following database schema, generate a SQL query that answers the question.
[Schema]:
CREATE TABLE Student (StuID INT, Name TEXT, Age INT, Sex TEXT, Major TEXT, Advisor INT, Graduated BOOL);
CREATE TABLE Course (CrsID INT, Title TEXT, Dept TEXT, Credits INT);
CREATE TABLE Enrollment (StuID INT, CrsID INT, Grade REAL);
CREATE TABLE Advisor (AdvID INT, Name TEXT, Dept TEXT);
[Question]: What is the average age of students who are taking 'Database' course?
"""

inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

# Generate SQL query with greedy decoding
outputs = model.generate(
    **inputs,
    max_new_tokens=256,
    num_beams=1,  # Adjust for different decoding strategies
    do_sample=False,  # Greedy decoding; temperature and top_p only apply when sampling
    eos_token_id=tokenizer.eos_token_id,
)

generated_text = tokenizer.decode(outputs[0], skip_special_tokens=True)
print(generated_text)

# The output will contain the prompt and the generated SQL.
# You might need to parse the generated_text to extract only the SQL query.
```
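
The closing comments in the example note that the decoded text contains the prompt as well as the generated SQL. One possible way to isolate the query is sketched below. It assumes the model wraps its final answer in a Markdown `sql` fence and otherwise falls back to the first line that starts with `SELECT` or `WITH`. That output format is an assumption rather than a documented guarantee, and `extract_sql` is a hypothetical helper, not part of the released code.

```python
import re

FENCE = "`" * 3  # Markdown code fence, built here to keep this example readable

# Matches a fenced sql block and captures its body
SQL_BLOCK = re.compile(FENCE + r"sql\s*(.*?)" + FENCE, re.DOTALL | re.IGNORECASE)
# Fallback: everything from the first line that starts with SELECT or WITH
SQL_START = re.compile(
    r"^[ \t]*((?:WITH|SELECT)\b.*)", re.MULTILINE | re.DOTALL | re.IGNORECASE
)


def extract_sql(generated_text, prompt):
    """Heuristically pull the SQL query out of decoded model output."""
    # Drop the echoed prompt when the decoded text begins with it
    if generated_text.startswith(prompt):
        completion = generated_text[len(prompt):]
    else:
        completion = generated_text

    # Prefer the last fenced block, since reasoning text may precede it
    blocks = SQL_BLOCK.findall(completion)
    if blocks:
        return blocks[-1].strip()

    match = SQL_START.search(completion)
    return match.group(1).strip() if match else completion.strip()


# Hypothetical model output, for illustration only
demo_prompt = "[Question]: How many students are there?\n"
demo_output = (
    demo_prompt
    + "The Student table holds one row per student.\n"
    + FENCE + "sql\nSELECT COUNT(*) FROM Student;\n" + FENCE
)
print(extract_sql(demo_output, demo_prompt))
```

Decoding may not reproduce the prompt character for character, so a more robust way to drop it is to decode only the newly generated tokens, for example `outputs[0][inputs["input_ids"].shape[1]:]`, before applying a helper like this.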

## Model

| **Model** | Base Model | Train Method | Modelscope | HuggingFace |
|------------------------------------------|------------------------------|--------------|---------------------------------------------------------------------------------------------------|----------------------------------------------------------------------------------------------|
| SLM-SQL-Base-0.5B | Qwen2.5-Coder-0.5B-Instruct | SFT | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-Base-0.5B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-Base-0.5B) |
| SLM-SQL-0.5B | Qwen2.5-Coder-0.5B-Instruct | SFT + GRPO | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-0.5B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-0.5B) |
| CscSQL-Merge-Qwen2.5-Coder-0.5B-Instruct | Qwen2.5-Coder-0.5B-Instruct | SFT + GRPO | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/CscSQL-Merge-Qwen2.5-Coder-0.5B-Instruct) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Merge-Qwen2.5-Coder-0.5B-Instruct) |
| SLM-SQL-Base-1.5B | Qwen2.5-Coder-1.5B-Instruct | SFT | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-Base-1.5B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-Base-1.5B) |
| SLM-SQL-1.5B | Qwen2.5-Coder-1.5B-Instruct | SFT + GRPO | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-1.5B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-1.5B) |
| CscSQL-Merge-Qwen2.5-Coder-1.5B-Instruct | Qwen2.5-Coder-1.5B-Instruct | SFT + GRPO | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/CscSQL-Merge-Qwen2.5-Coder-1.5B-Instruct) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/CscSQL-Merge-Qwen2.5-Coder-1.5B-Instruct) |
| SLM-SQL-Base-0.6B | Qwen3-0.6B | SFT | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-Base-0.6B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-Base-0.6B) |
| SLM-SQL-0.6B | Qwen3-0.6B | SFT + GRPO | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-0.6B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-0.6B) |
| SLM-SQL-Base-1.3B | deepseek-coder-1.3b-instruct | SFT | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-Base-1.3B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-Base-1.3B) |
| SLM-SQL-1.3B | deepseek-coder-1.3b-instruct | SFT + GRPO | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-1.3B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-1.3B) |
| SLM-SQL-Base-1B | Llama-3.2-1B-Instruct | SFT | [🤖 Modelscope](https://modelscope.cn/models/cycloneboy/SLM-SQL-Base-1B) | [🤗 HuggingFace](https://huggingface.co/cycloneboy/SLM-SQL-Base-1B) |

## Dataset

| **Dataset** | Modelscope | HuggingFace |
|----------------------------|------------------------------------------------------------------------------------|--------------------------------------------------------------------------------------|
| SynsQL-Think-916k | [🤖 Modelscope](https://modelscope.cn/datasets/cycloneboy/SynsQL-Think-916k) | [🤗 HuggingFace](https://huggingface.co/datasets/cycloneboy/SynsQL-Think-916k) |
| SynsQL-Merge-Think-310k | [🤖 Modelscope](https://modelscope.cn/datasets/cycloneboy/SynsQL-Merge-Think-310k) | [🤗 HuggingFace](https://huggingface.co/datasets/cycloneboy/SynsQL-Merge-Think-310k) |
| bird train and dev dataset | [🤖 Modelscope](https://modelscope.cn/datasets/cycloneboy/bird_train) | [🤗 HuggingFace](https://huggingface.co/datasets/cycloneboy/bird_train) |

## TODO

- [ ] Release inference code

- [ ] Upload Model

- [ ] Release training code

- [ ] Fix bug

- [ ] Update doc

## Thanks to the following projects

- [csc_sql](https://github.com/CycloneBoy/csc_sql)

- [open-r1](https://github.com/huggingface/open-r1)

- [OmniSQL](https://github.com/RUCKBReasoning/OmniSQL)

## Citation

```bibtex
@misc{sheng2025slmsqlexplorationsmalllanguage,
  title={SLM-SQL: An Exploration of Small Language Models for Text-to-SQL},
  author={Lei Sheng and Shuai-Shuai Xu},
  year={2025},
  eprint={2507.22478},
  archivePrefix={arXiv},
  primaryClass={cs.CL},
  url={https://arxiv.org/abs/2507.22478},
}

@misc{sheng2025cscsqlcorrectiveselfconsistencytexttosql,
  title={CSC-SQL: Corrective Self-Consistency in Text-to-SQL via Reinforcement Learning},
  author={Lei Sheng and Shuai-Shuai Xu},
  year={2025},
  eprint={2505.13271},
  archivePrefix={arXiv},
  primaryClass={cs.CL},
  url={https://arxiv.org/abs/2505.13271},
}
```
