
# Pixel-aligned RGB-NIR Stereo Imaging and Dataset for Robot Vision

> **CVPR 2025**

> **Jinnyeong Kim**, **Seung-Hwan Baek**

> POSTECH

> [[arXiv]](https://arxiv.org/abs/2411.18025) • [[Code]](https://github.com/divisonofficer/Pixel_aligned_RGB_NIR_Stereo) • [[Video]](https://your-video-link.com) • [[Dataset on HuggingFace]](https://huggingface.co/datasets/your-dataset-url)

---

## Overview

This repository provides the code and dataset accompanying our CVPR 2025 paper:

**"Pixel-aligned RGB-NIR Stereo Imaging and Dataset for Robot Vision"**

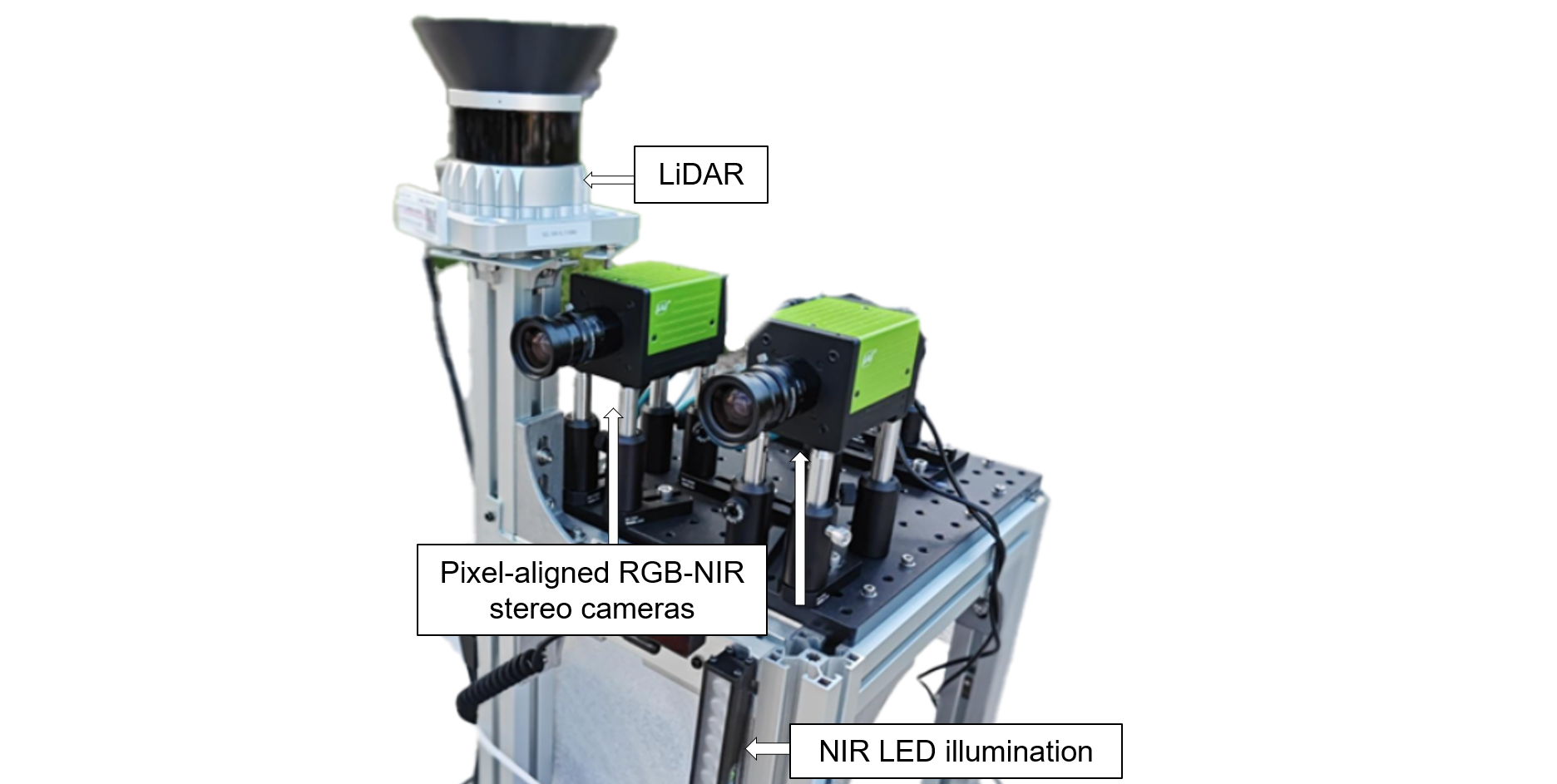

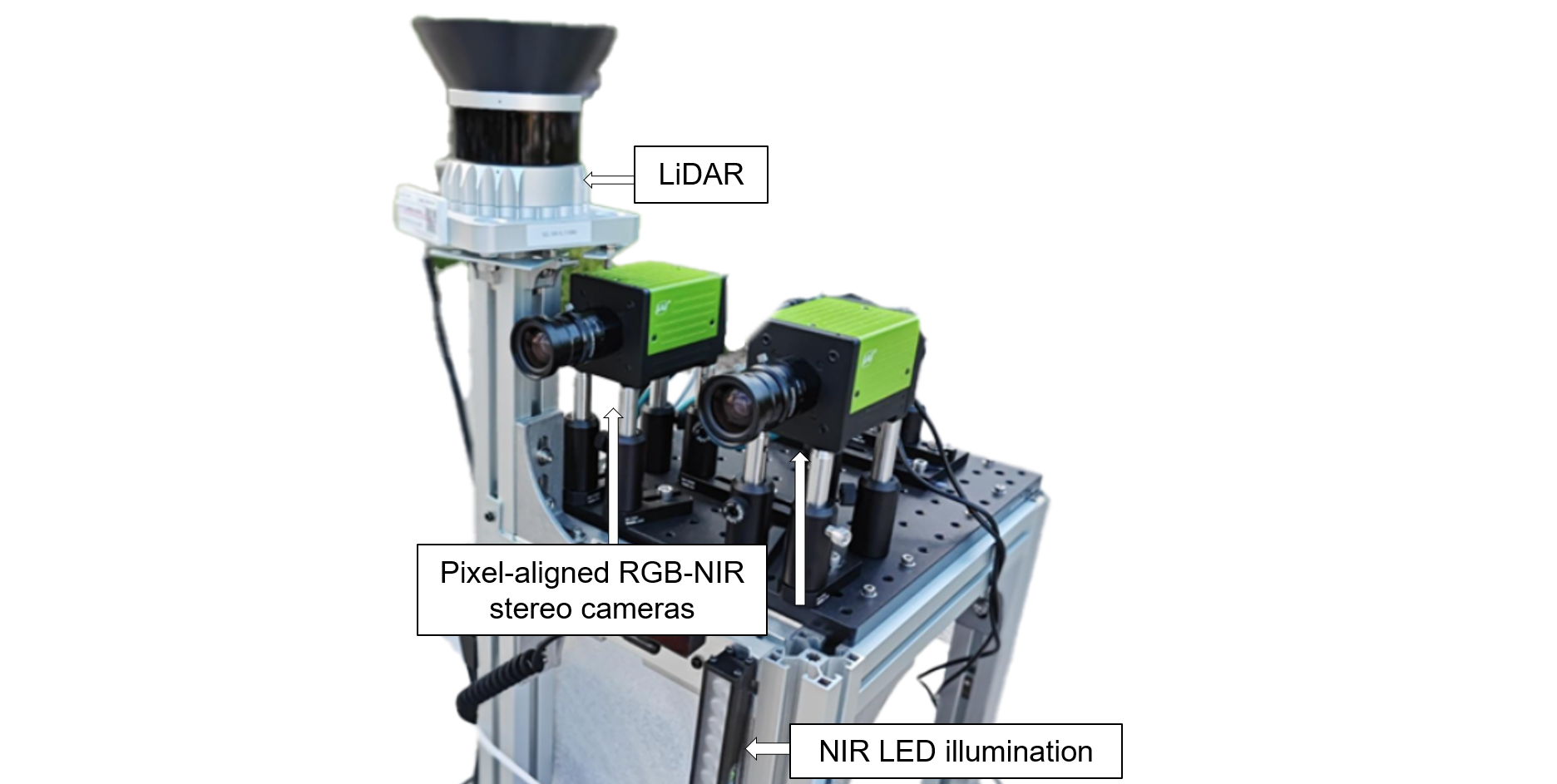

We propose a novel robotic vision system equipped with **two pixel-aligned RGB-NIR stereo cameras** and a **LiDAR sensor** mounted on a mobile robot. Our system captures **RGB-NIR stereo video sequences** and **temporally synchronized LiDAR point clouds**, offering a high-quality, aligned multi-spectral dataset under diverse lighting conditions.

---

## ✨ Highlights

- **Pixel-aligned RGB-NIR stereo imaging** for robust vision under challenging lighting.

- **Continuous video sequences** recorded using a mobile robot.

- **Sparse LiDAR point clouds** temporally synchronized with stereo imagery.

- Two proposed methods to utilize RGB-NIR pairs:
  - RGB-NIR **Image Fusion** (pretrained model-compatible)
  - RGB-NIR **Feature Fusion** (for fine-tuned stereo depth estimation)

---

## 📦 Dataset

We release a large-scale dataset for training and evaluating robot vision models in realistic environments.

### 📹 Data Statistics

| Split | # Videos | # Frames |
|---|--------|---------|
| Training | 80 | 90,000 |
| Testing | 40 | 7,000 |

### 📁 Per Frame Data Includes:

- Pixel-aligned **RGB-NIR stereo images**

- **Sparse LiDAR** point cloud (in camera coordinates)

- **Sensor timestamps** (synchronized)
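Because the LiDAR points are stored in camera coordinates, they can be projected onto the image plane to obtain sparse depth supervision. The following is a minimal sketch of that projection, not code from this repository. It assumes a pinhole model with the camera looking along +z (x right, y down); the intrinsics `fx`, `fy`, `cx`, `cy` and the stereo `baseline_m` are hypothetical parameters that must come from your own calibration data.

```python
from typing import Dict, Iterable, Tuple

Point = Tuple[float, float, float]


def project_points(
    points: Iterable[Point],
    fx: float, fy: float, cx: float, cy: float,
    width: int, height: int,
) -> Dict[Tuple[int, int], float]:
    """Project camera-frame 3D points to a sparse {(u, v): depth} map."""
    depth: Dict[Tuple[int, int], float] = {}
    for x, y, z in points:
        if z <= 0.0:
            # Point lies behind the camera (assumed +z forward); skip it.
            continue
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            # If several points hit the same pixel, keep the nearest one.
            if (u, v) not in depth or z < depth[(u, v)]:
                depth[(u, v)] = z
    return depth


def depth_to_disparity(depth_m: float, fx: float, baseline_m: float) -> float:
    """Convert metric depth to disparity in pixels for a rectified stereo pair."""
    return fx * baseline_m / depth_m
```

The resulting sparse map can be compared against predicted disparity only at pixels where a LiDAR return exists.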

### 🌗 Lighting Scenarios

<img width="920" alt="image" src="https://github.com/user-attachments/assets/a07bea4e-5674-4277-a585-f556ce9d4825" />

➡️ **[Code is available on GitHub](https://github.com/divisonofficer/Pixel_aligned_RGB_NIR_Stereo)**

Each `.tar.gz` archive has the following structure:

```
frame1
--rgb
-----left_distorted.png (or left.png)
-----right_distorted.png (or right.png)
--nir
-----left_distorted.png (or left.png)
-----right_distorted.png (or right.png)
storage.hdf5
```

Frame IDs are named after their creation date.

Images named **_distorted.png** still need to be undistorted. **left.png** and **right.png** are the undistorted versions.

**storage.hdf5** is an HDF5 database. It contains a **frame** group whose children are keyed by frame ID.
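The layout above can be indexed with the standard library alone. The sketch below is an illustration rather than the repository's loader: `index_frames` is a hypothetical helper that groups archive member names by frame ID, modality (`rgb` or `nir`), and side, and prefers the undistorted file when both variants are present.

```python
from collections import defaultdict
from pathlib import PurePosixPath
from typing import Dict, Iterable, Tuple

FrameIndex = Dict[str, Dict[Tuple[str, str], str]]


def index_frames(member_names: Iterable[str]) -> FrameIndex:
    """Map frame_id -> {(modality, side): member path} for PNG members."""
    frames: Dict[str, Dict[Tuple[str, str], str]] = defaultdict(dict)
    for name in member_names:
        parts = PurePosixPath(name).parts
        if len(parts) < 3 or not name.endswith(".png"):
            continue  # e.g. storage.hdf5 or directory entries
        frame_id, modality, filename = parts[-3], parts[-2], parts[-1]
        if modality not in ("rgb", "nir"):
            continue
        stem = filename[: -len(".png")]
        is_distorted = stem.endswith("_distorted")
        side = stem.replace("_distorted", "")
        if side not in ("left", "right"):
            continue
        key = (modality, side)
        # Keep the first file seen, but let an undistorted file replace a
        # distorted one so that undistorted images win when both exist.
        if key not in frames[frame_id] or not is_distorted:
            frames[frame_id][key] = name
    return dict(frames)
```

For a real archive, the member names can be obtained with `tarfile.open(path).getnames()`, where `path` points to one of the downloaded `.tar.gz` files.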

---

## 📷 Imaging System

Our robotic platform integrates:

- **Two RGB-NIR stereo cameras** (pixel-aligned RGB and NIR sensors)

- **LiDAR sensor**

- **Omnidirectional mobile base** (360° movement)

- **High-capacity battery** (up to 6 hours)

- **NIR LED bar light source** for consistent active illumination

---

## 🔧 Methods

### RGB-NIR synthetic data augmentation

See **visualize/synth_aug_render.ipynb** for the synthetic data augmentation method used to build the RGB-NIR training dataset.

### RGB-NIR Image Fusion

We introduce an RGB-NIR **image-level fusion technique** for 3-channel vision tasks. This approach allows existing **RGB-pretrained models** to benefit from NIR information **without additional fine-tuning**.

Applicable to:

- Stereo Depth Estimation

- Semantic Segmentation

- Object Detection

See **net/image_fusion.py** for the PyTorch implementation.
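To make the idea of a 3-channel fused input concrete, here is a deliberately naive baseline: blend the NIR intensity into the RGB luminance and rescale the color channels accordingly. This is not the method implemented in `net/image_fusion.py`; it is a simple stand-in, and the function names, the blend weight `alpha`, and the assumption that all values are floats in [0, 1] are illustrative choices.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[float, float, float]


def fuse_pixel(rgb: Pixel, nir: float, alpha: float = 0.5, eps: float = 1e-6) -> Pixel:
    """Blend NIR into the luminance of one RGB pixel (values in [0, 1])."""
    r, g, b = rgb
    # Rec. 601 luma weights.
    y = 0.299 * r + 0.587 * g + 0.114 * b
    y_fused = (1.0 - alpha) * y + alpha * nir
    if y < eps:
        # No usable color information: fall back to a gray pixel.
        fused = (y_fused, y_fused, y_fused)
    else:
        scale = y_fused / y
        fused = (r * scale, g * scale, b * scale)
    # Clip to the valid range; return type is a 3-tuple of floats.
    clipped = [min(1.0, max(0.0, c)) for c in fused]
    return (clipped[0], clipped[1], clipped[2])


def fuse_image(
    rgb_image: Sequence[Sequence[Pixel]],
    nir_image: Sequence[Sequence[float]],
    alpha: float = 0.5,
) -> List[List[Pixel]]:
    """Apply fuse_pixel to every pixel of a pixel-aligned RGB/NIR pair."""
    return [
        [fuse_pixel(p, n, alpha) for p, n in zip(rgb_row, nir_row)]
        for rgb_row, nir_row in zip(rgb_image, nir_image)
    ]
```

Because the output keeps three channels, it can be passed to an RGB-pretrained network without changing the input layer, which is the property the image-level fusion relies on.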

### RGB-NIR Feature Fusion (Stereo Depth)

We extend RAFT-Stereo with a novel **feature-level fusion strategy**, alternating between fused and NIR **correlation volumes** during iterative disparity estimation using GRUs.

See **net/feature_fusion.py** for the implementation, which uses RAFT-Stereo as the baseline.
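The alternation between correlation volumes can be pictured as a simple control loop. The sketch below shows only that scheduling idea under stated assumptions: even iterations use the fused volume and odd iterations use the NIR volume, and `update` is a hypothetical stand-in for the correlation lookup plus GRU update. The actual order and update rule are defined in `net/feature_fusion.py`.

```python
from typing import Callable, TypeVar

State = TypeVar("State")
Volume = TypeVar("Volume")


def alternating_refinement(
    fused_volume: Volume,
    nir_volume: Volume,
    update: Callable[[State, Volume], State],
    init: State,
    iters: int,
) -> State:
    """Run `iters` refinement steps, switching volumes every iteration."""
    state = init
    for i in range(iters):
        # Assumed schedule: fused on even steps, NIR on odd steps.
        volume = fused_volume if i % 2 == 0 else nir_volume
        state = update(state, volume)
    return state
```

For example, passing `update=lambda s, v: s + [v]` with `init=[]` records which volume was consulted at each step, which is a convenient way to check the schedule before plugging in a real update function.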

Our setup reflects the **RGB with active illumination** scenario:

- NIR provides robust depth cues

- RGB complements NIR with texture under normal lighting

---

## 📊 Experimental Results

Our experiments demonstrate that pixel-aligned RGB-NIR inputs:

- Improve stereo depth accuracy under low-light and high-contrast conditions

- Enable pretrained RGB models to generalize better

- Enhance robustness across lighting domains

---

## 📄 Citation

If you use this dataset or code, please cite our work:

```bibtex
@inproceedings{kim2025pixelnir,
  author    = {Jinnyeong Kim and Seung-Hwan Baek},
  title     = {Pixel-aligned RGB-NIR Stereo Imaging and Dataset for Robot Vision},
  booktitle = {The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  year      = {2025},
  doi       = {10.48550/arXiv.2411.18025},
  url       = {https://arxiv.org/abs/2411.18025},
}
```