id stringlengths 11 95 | author stringlengths 3 36 | task_category stringclasses 16 values | tags listlengths 1 4.05k | created_time int64 1.65k 1.74k | last_modified int64 1.62k 1.74k | downloads int64 0 15.6M | likes int64 0 4.86k | README stringlengths 246 1.01M | matched_task listlengths 1 8 | matched_bigbio_names listlengths 1 8 | is_bionlp stringclasses 3 values |
|---|---|---|---|---|---|---|---|---|---|---|---|

Goodmotion/spam-mail-classifier | Goodmotion | text-classification | [

"transformers",

"safetensors",

"text-classification",

"spam-detection",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | 1,733 | 1,733 | 87 | 2 | ---

license: apache-2.0

tags:

- transformers

- text-classification

- spam-detection

---

# SPAM Mail Classifier

This model is fine-tuned from `microsoft/Multilingual-MiniLM-L12-H384` to classify email subjects as SPAM or NOSPAM.

## Model Details

- **Base model**: `microsoft/Multilingual-MiniLM-L12-H384`

... | [

"TEXT_CLASSIFICATION"

] | [

"ESSAI"

] | Non_BioNLP |

knowledgator/gliner-poly-small-v1.0 | knowledgator | token-classification | [

"gliner",

"pytorch",

"token-classification",

"multilingual",

"dataset:urchade/pile-mistral-v0.1",

"dataset:numind/NuNER",

"dataset:knowledgator/GLINER-multi-task-synthetic-data",

"license:apache-2.0",

"region:us"

] | 1,724 | 1,724 | 32 | 14 | ---

datasets:

- urchade/pile-mistral-v0.1

- numind/NuNER

- knowledgator/GLINER-multi-task-synthetic-data

language:

- multilingual

library_name: gliner

license: apache-2.0

pipeline_tag: token-classification

---

# About

GLiNER is a Named Entity Recognition (NER) model capable of identifying any entity type using a bidi... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"ANATEM",

"BC5CDR"

] | Non_BioNLP |

QuantFactory/meditron-7b-GGUF | QuantFactory | null | [

"gguf",

"en",

"dataset:epfl-llm/guidelines",

"arxiv:2311.16079",

"base_model:meta-llama/Llama-2-7b",

"base_model:quantized:meta-llama/Llama-2-7b",

"license:llama2",

"endpoints_compatible",

"region:us"

] | 1,727 | 1,727 | 206 | 1 | ---

base_model: meta-llama/Llama-2-7b

datasets:

- epfl-llm/guidelines

language:

- en

license: llama2

metrics:

- accuracy

- perplexity

---

[ instruct and preference... | [

"QUESTION_ANSWERING",

"SUMMARIZATION"

] | [

"MEDQA"

] | BioNLP |

seongil-dn/bge-m3-756 | seongil-dn | sentence-similarity | [

"sentence-transformers",

"safetensors",

"xlm-roberta",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:1138596",

"loss:CachedGISTEmbedLoss",

"arxiv:1908.10084",

"base_model:seongil-dn/unsupervised_20m_3800",

"base_model:finetune:seongil-dn/unsupervised_20m_38... | 1,741 | 1,741 | 12 | 0 | ---

base_model: seongil-dn/unsupervised_20m_3800

library_name: sentence-transformers

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- generated_from_trainer

- dataset_size:1138596

- loss:CachedGISTEmbedLoss

widget:

- source_sentence: How many people were repor... | [

"TEXT_CLASSIFICATION",

"TRANSLATION"

] | [

"CRAFT"

] | Non_BioNLP |

LoneStriker/OpenBioLLM-Llama3-8B-GGUF | LoneStriker | null | [

"gguf",

"llama-3",

"llama",

"Mixtral",

"instruct",

"finetune",

"chatml",

"DPO",

"RLHF",

"gpt4",

"distillation",

"en",

"arxiv:2305.18290",

"arxiv:2303.13375",

"arxiv:2212.13138",

"arxiv:2305.09617",

"arxiv:2402.07023",

"base_model:meta-llama/Meta-Llama-3-8B",

"base_model:quantized... | 1,714 | 1,714 | 30 | 1 | ---

base_model: meta-llama/Meta-Llama-3-8B

language:

- en

license: llama3

tags:

- llama-3

- llama

- Mixtral

- instruct

- finetune

- chatml

- DPO

- RLHF

- gpt4

- distillation

widget:

- example_title: OpenBioLLM-8B

messages:

- role: system

content: You are an expert and experienced from the healthcare and biomedi... | [

"QUESTION_ANSWERING"

] | [

"MEDQA",

"PUBMEDQA"

] | BioNLP |

medspaner/mdeberta-v3-base-es-trials-misc-ents | medspaner | token-classification | [

"transformers",

"pytorch",

"deberta-v2",

"token-classification",

"generated_from_trainer",

"arxiv:2111.09543",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,705 | 1,727 | 12 | 0 | ---

license: cc-by-nc-4.0

metrics:

- precision

- recall

- f1

- accuracy

tags:

- generated_from_trainer

widget:

- text: 'Motivo de consulta: migraña leve. Exploración: Tensión arterial: 120/70 mmHg.'

model-index:

- name: mdeberta-v3-base-es-trials-misc-ents

results: []

---

<!-- This model card has been generated auto... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"SCIELO"

] | BioNLP |

carsondial/slinger20241231-3 | carsondial | sentence-similarity | [

"sentence-transformers",

"safetensors",

"bert",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:45000",

"loss:MatryoshkaLoss",

"loss:MultipleNegativesRankingLoss",

"en",

"arxiv:1908.10084",

"arxiv:2205.13147",

"arxiv:1705.00652",

"base_model:BAAI/bge-ba... | 1,735 | 1,735 | 6 | 0 | ---

base_model: BAAI/bge-base-en-v1.5

language:

- en

library_name: sentence-transformers

license: apache-2.0

metrics:

- cosine_accuracy@1

- cosine_accuracy@3

- cosine_accuracy@5

- cosine_accuracy@10

- cosine_precision@1

- cosine_precision@3

- cosine_precision@5

- cosine_precision@10

- cosine_recall@1

- cosine_recall@3

... | [

"TEXT_CLASSIFICATION"

] | [

"CRAFT"

] | Non_BioNLP |

StivenLancheros/Roberta-base-biomedical-clinical-es-finetuned-ner-CRAFT_en_es | StivenLancheros | token-classification | [

"transformers",

"pytorch",

"tensorboard",

"roberta",

"token-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,647 | 1,647 | 115 | 0 | ---

license: apache-2.0

metrics:

- precision

- recall

- f1

- accuracy

tags:

- generated_from_trainer

model-index:

- name: Roberta-base-biomedical-clinical-es-finetuned-ner-CRAFT_en_es

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

sho... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"CRAFT"

] | BioNLP |

bobox/DeBERTa-small-ST-v1-test-step2 | bobox | sentence-similarity | [

"sentence-transformers",

"pytorch",

"deberta-v2",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:305010",

"loss:CachedGISTEmbedLoss",

"en",

"dataset:jinaai/negation-dataset-v2",

"dataset:tals/vitaminc",

"dataset:allenai/scitail",

"dataset:allenai/sciq",

... | 1,724 | 1,724 | 7 | 0 | ---

base_model: bobox/DeBERTa-small-ST-v1-test

datasets:

- jinaai/negation-dataset-v2

- tals/vitaminc

- allenai/scitail

- allenai/sciq

- allenai/qasc

- sentence-transformers/msmarco-msmarco-distilbert-base-v3

- sentence-transformers/natural-questions

- sentence-transformers/trivia-qa

- sentence-transformers/gooaq

- goo... | [

"TEXT_CLASSIFICATION",

"SEMANTIC_SIMILARITY"

] | [

"MEDAL",

"SCIQ",

"SCITAIL"

] | Non_BioNLP |

RichardErkhov/aaditya_-_Llama3-OpenBioLLM-8B-8bits | RichardErkhov | text-generation | [

"transformers",

"safetensors",

"llama",

"text-generation",

"arxiv:2305.18290",

"arxiv:2303.13375",

"arxiv:2212.13138",

"arxiv:2305.09617",

"arxiv:2402.07023",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"8-bit",

"bitsandbytes",

"region:us"

] | 1,714 | 1,714 | 16 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

Llama3-OpenBioLLM-8B - bnb 8bits

- Model creator: https://huggingface.co/aaditya/

- Original model: https://huggi... | [

"QUESTION_ANSWERING"

] | [

"MEDQA",

"PUBMEDQA"

] | BioNLP |

KeyurRamoliya/e5-large-v2-GGUF | KeyurRamoliya | sentence-similarity | [

"sentence-transformers",

"gguf",

"mteb",

"Sentence Transformers",

"sentence-similarity",

"llama-cpp",

"gguf-my-repo",

"en",

"base_model:intfloat/e5-large-v2",

"base_model:quantized:intfloat/e5-large-v2",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"regio... | 1,724 | 1,724 | 12 | 0 | ---

base_model: intfloat/e5-large-v2

language:

- en

license: mit

tags:

- mteb

- Sentence Transformers

- sentence-similarity

- sentence-transformers

- llama-cpp

- gguf-my-repo

model-index:

- name: e5-large-v2

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactualClassification... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

michaelfeil/ct2fast-jina-embedding-s-en-v1 | michaelfeil | sentence-similarity | [

"transformers",

"t5",

"feature-extraction",

"ctranslate2",

"int8",

"float16 - finetuner - mteb - sentence-transformers - feature-extraction - sentence-similarity",

"sentence-similarity",

"custom_code",

"en",

"dataset:jinaai/negation-dataset",

"arxiv:2307.11224",

"license:apache-2.0",

"model-... | 1,697 | 1,697 | 4 | 0 | ---

datasets:

- jinaai/negation-dataset

language: en

license: apache-2.0

pipeline_tag: sentence-similarity

tags:

- ctranslate2

- int8

- float16 - finetuner - mteb - sentence-transformers - feature-extraction - sentence-similarity

model-index:

- name: jina-embedding-s-en-v1

results:

- task:

type: Classificatio... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"LINNAEUS",

"SCIFACT"

] | Non_BioNLP |

pruas/BENT-PubMedBERT-NER-Organism | pruas | token-classification | [

"transformers",

"pytorch",

"bert",

"token-classification",

"en",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,673 | 1,709 | 32 | 3 | ---

language:

- en

license: apache-2.0

pipeline_tag: token-classification

---

Named Entity Recognition (NER) model to recognize organism entities.

Please cite our work:

```

@article{NILNKER2022,

title = {NILINKER: Attention-based approach to NIL Entity Linking},

journal = {Journal of Biomedical Informatics},

v... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"CRAFT",

"CELLFINDER",

"LINNAEUS",

"MLEE",

"MIRNA"

] | BioNLP |

RichardErkhov/ricepaper_-_vi-gemma-2b-RAG-awq | RichardErkhov | null | [

"safetensors",

"gemma",

"4-bit",

"awq",

"region:us"

] | 1,733 | 1,733 | 4 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

vi-gemma-2b-RAG - AWQ

- Model creator: https://huggingface.co/ricepaper/

- Original model: https://huggingface.co... | [

"QUESTION_ANSWERING",

"TRANSLATION",

"SUMMARIZATION"

] | [

"CHIA"

] | Non_BioNLP |

RichardErkhov/EleutherAI_-_pythia-2.8b-deduped-v0-4bits | RichardErkhov | text-generation | [

"transformers",

"safetensors",

"gpt_neox",

"text-generation",

"arxiv:2101.00027",

"arxiv:2201.07311",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"4-bit",

"bitsandbytes",

"region:us"

] | 1,713 | 1,713 | 4 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

pythia-2.8b-deduped-v0 - bnb 4bits

- Model creator: https://huggingface.co/EleutherAI/

- Original model: https://... | [

"QUESTION_ANSWERING",

"TRANSLATION"

] | [

"SCIQ"

] | Non_BioNLP |

walsons/jina-embeddings-v2-base-en-Q4_K_M-GGUF | walsons | feature-extraction | [

"sentence-transformers",

"gguf",

"feature-extraction",

"sentence-similarity",

"mteb",

"llama-cpp",

"gguf-my-repo",

"en",

"dataset:allenai/c4",

"base_model:jinaai/jina-embeddings-v2-base-en",

"base_model:quantized:jinaai/jina-embeddings-v2-base-en",

"license:apache-2.0",

"model-index",

"aut... | 1,722 | 1,722 | 15 | 0 | ---

base_model: jinaai/jina-embeddings-v2-base-en

datasets:

- allenai/c4

language: en

license: apache-2.0

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- mteb

- llama-cpp

- gguf-my-repo

inference: false

model-index:

- name: jina-embedding-b-en-v2

results:

- task:

type: Classificatio... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

KarBik/legal-french-matroshka | KarBik | sentence-similarity | [

"sentence-transformers",

"safetensors",

"xlm-roberta",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:9000",

"loss:MatryoshkaLoss",

"loss:MultipleNegativesRankingLoss",

"arxiv:1908.10084",

"arxiv:2205.13147",

"arxiv:1705.00652",

"base_model:intfloat/mult... | 1,728 | 1,728 | 6 | 0 | ---

base_model: intfloat/multilingual-e5-base

library_name: sentence-transformers

metrics:

- cosine_accuracy@1

- cosine_accuracy@3

- cosine_accuracy@5

- cosine_accuracy@10

- cosine_precision@1

- cosine_precision@3

- cosine_precision@5

- cosine_precision@10

- cosine_recall@1

- cosine_recall@3

- cosine_recall@5

- cosine_... | [

"TEXT_CLASSIFICATION"

] | [

"CAS"

] | Non_BioNLP |

medspaner/mbert-base-clinical-trials-attributes | medspaner | null | [

"pytorch",

"bert",

"generated_from_trainer",

"license:cc-by-nc-4.0",

"region:us"

] | 1,726 | 1,727 | 7 | 0 | ---

license: cc-by-nc-4.0

metrics:

- precision

- recall

- f1

- accuracy

tags:

- generated_from_trainer

widget:

- text: Paciente normotenso (PA = 120/70 mmHg)

model-index:

- name: mbert-base-clinical-trials-attributes

results: []

---

<!-- This model card has been generated automatically according to the information t... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"CT-EBM-SP",

"SCIELO"

] | BioNLP |

am-azadi/bilingual-embedding-large_Fine_Tuned_2e | am-azadi | sentence-similarity | [

"sentence-transformers",

"safetensors",

"bilingual",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:21769",

"loss:MultipleNegativesRankingLoss",

"custom_code",

"arxiv:1908.10084",

"arxiv:1705.00652",

"base_model:am-azadi/bilingual-embedding-large_Fine_Tune... | 1,740 | 1,740 | 7 | 0 | ---

base_model: am-azadi/bilingual-embedding-large_Fine_Tuned_1e

library_name: sentence-transformers

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- generated_from_trainer

- dataset_size:21769

- loss:MultipleNegativesRankingLoss

widget:

- source_sentence: 'GO... | [

"TEXT_CLASSIFICATION"

] | [

"PCR"

] | Non_BioNLP |

huizhang0110/hui-embedding | huizhang0110 | null | [

"mteb",

"model-index",

"region:us"

] | 1,705 | 1,732 | 0 | 0 | ---

tags:

- mteb

model-index:

- name: no_model_name_available

results:

- task:

type: STS

dataset:

name: MTEB STS22 (en)

type: mteb/sts22-crosslingual-sts

config: en

split: test

revision: de9d86b3b84231dc21f76c7b7af1f28e2f57f6e3

metrics:

- type: cosine_pearson

va... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

espnet/iwslt24_indic_en_bn_bpe_tc4000 | espnet | null | [

"espnet",

"audio",

"speech-translation",

"en",

"bn",

"dataset:iwslt24_indic",

"arxiv:1804.00015",

"license:cc-by-4.0",

"region:us"

] | 1,713 | 1,713 | 0 | 0 | ---

datasets:

- iwslt24_indic

language:

- en

- bn

license: cc-by-4.0

tags:

- espnet

- audio

- speech-translation

---

## ESPnet2 ST model

### `espnet/iwslt24_indic_en_bn_bpe_tc4000`

This model was trained by cromz22 using iwslt24_indic recipe in [espnet](https://github.com/espnet/espnet/).

### Demo: How to use in ES... | [

"TRANSLATION"

] | [

"CRAFT"

] | Non_BioNLP |

thenlper/gte-large | thenlper | sentence-similarity | [

"sentence-transformers",

"pytorch",

"onnx",

"safetensors",

"openvino",

"bert",

"mteb",

"sentence-similarity",

"Sentence Transformers",

"en",

"arxiv:2308.03281",

"license:mit",

"model-index",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] | 1,690 | 1,731 | 460,453 | 272 | ---

language:

- en

license: mit

tags:

- mteb

- sentence-similarity

- sentence-transformers

- Sentence Transformers

model-index:

- name: gte-large

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactualClassification (en)

type: mteb/amazon_counterfactual

config: en

... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

sschet/bert-base-uncased_clinical-ner | sschet | token-classification | [

"transformers",

"pytorch",

"tf",

"jax",

"bert",

"token-classification",

"dataset:tner/bc5cdr",

"dataset:commanderstrife/jnlpba",

"dataset:bc2gm_corpus",

"dataset:drAbreu/bc4chemd_ner",

"dataset:linnaeus",

"dataset:chintagunta85/ncbi_disease",

"autotrain_compatible",

"endpoints_compatible",... | 1,674 | 1,675 | 124 | 5 | ---

datasets:

- tner/bc5cdr

- commanderstrife/jnlpba

- bc2gm_corpus

- drAbreu/bc4chemd_ner

- linnaeus

- chintagunta85/ncbi_disease

---

A Named Entity Recognition model for clinical entities (`problem`, `treatment`, `test`)

The model has been trained on the [i2b2 (now n2c2) dataset](https://n2c2.dbmi.hms.harvard.edu) f... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"BC5CDR",

"JNLPBA",

"LINNAEUS",

"NCBI DISEASE"

] | BioNLP |

adriansanz/stsitgesreranking | adriansanz | text-classification | [

"setfit",

"safetensors",

"bert",

"sentence-transformers",

"text-classification",

"generated_from_setfit_trainer",

"arxiv:2209.11055",

"base_model:cross-encoder/ms-marco-MiniLM-L-4-v2",

"base_model:finetune:cross-encoder/ms-marco-MiniLM-L-4-v2",

"model-index",

"region:us"

] | 1,724 | 1,724 | 4 | 0 | ---

base_model: cross-encoder/ms-marco-MiniLM-L-4-v2

library_name: setfit

metrics:

- accuracy

pipeline_tag: text-classification

tags:

- setfit

- sentence-transformers

- text-classification

- generated_from_setfit_trainer

widget:

- text: He de prendre la decisió de renunciar a una subvenció que no es pot ajustar

als... | [

"TEXT_CLASSIFICATION"

] | [

"CAS"

] | Non_BioNLP |

Muennighoff/SGPT-125M-weightedmean-nli-bitfit | Muennighoff | sentence-similarity | [

"sentence-transformers",

"pytorch",

"gpt_neo",

"feature-extraction",

"sentence-similarity",

"mteb",

"arxiv:2202.08904",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,646 | 1,685 | 327 | 3 | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- mteb

model-index:

- name: SGPT-125M-weightedmean-nli-bitfit

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactualClassification (en)

type: mteb/amazon_count... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

fresha/e5-large-v2-endpoint | fresha | feature-extraction | [

"transformers",

"pytorch",

"safetensors",

"bert",

"feature-extraction",

"mteb",

"en",

"arxiv:2212.03533",

"arxiv:2104.08663",

"arxiv:2210.07316",

"license:mit",

"model-index",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] | 1,687 | 1,687 | 29 | 0 | ---

language:

- en

license: mit

tags:

- mteb

model-index:

- name: e5-large-v2

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactualClassification (en)

type: mteb/amazon_counterfactual

config: en

split: test

revision: e8379541af4e31359cca9fbcf4b00f2671... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

pszemraj/long-t5-tglobal-base-16384-booksci-summary-v1 | pszemraj | summarization | [

"transformers",

"pytorch",

"onnx",

"safetensors",

"longt5",

"text2text-generation",

"generated_from_trainer",

"lay summary",

"narrative",

"biomedical",

"long document summary",

"summarization",

"en",

"dataset:pszemraj/scientific_lay_summarisation-elife-norm",

"base_model:pszemraj/long-t5... | 1,680 | 1,696 | 36 | 2 | ---

base_model: pszemraj/long-t5-tglobal-base-16384-book-summary

datasets:

- pszemraj/scientific_lay_summarisation-elife-norm

language:

- en

library_name: transformers

license:

- bsd-3-clause

- apache-2.0

metrics:

- rouge

pipeline_tag: summarization

tags:

- generated_from_trainer

- lay summary

- narrative

- biomedical

... | [

"QUESTION_ANSWERING",

"SUMMARIZATION"

] | [

"BEAR"

] | Non_BioNLP |

ymelka/camembert-cosmetic-similarity-cp1200 | ymelka | sentence-similarity | [

"sentence-transformers",

"safetensors",

"camembert",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:5000",

"loss:CoSENTLoss",

"arxiv:1908.10084",

"base_model:ymelka/camembert-cosmetic-finetuned",

"base_model:finetune:ymelka/camembert-cosmetic-finetuned",

"... | 1,718 | 1,718 | 9 | 1 | ---

base_model: ymelka/camembert-cosmetic-finetuned

datasets: []

language: []

library_name: sentence-transformers

metrics:

- pearson_cosine

- spearman_cosine

- pearson_manhattan

- spearman_manhattan

- pearson_euclidean

- spearman_euclidean

- pearson_dot

- spearman_dot

- pearson_max

- spearman_max

pipeline_tag: sentence... | [

"TEXT_CLASSIFICATION",

"SEMANTIC_SIMILARITY"

] | [

"CAS"

] | Non_BioNLP |

adriansanz/ST-tramits-SB-003-5ep | adriansanz | sentence-similarity | [

"sentence-transformers",

"safetensors",

"xlm-roberta",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:2884",

"loss:MatryoshkaLoss",

"loss:MultipleNegativesRankingLoss",

"arxiv:1908.10084",

"arxiv:2205.13147",

"arxiv:1705.00652",

"base_model:BAAI/bge-m3",... | 1,729 | 1,729 | 6 | 0 | ---

base_model: BAAI/bge-m3

library_name: sentence-transformers

metrics:

- cosine_accuracy@1

- cosine_accuracy@3

- cosine_accuracy@5

- cosine_accuracy@10

- cosine_precision@1

- cosine_precision@3

- cosine_precision@5

- cosine_precision@10

- cosine_recall@1

- cosine_recall@3

- cosine_recall@5

- cosine_recall@10

- cosine... | [

"TEXT_CLASSIFICATION"

] | [

"CAS"

] | Non_BioNLP |

LeroyDyer/LCARS_AI_StarTrek_Computer | LeroyDyer | text2text-generation | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"LCARS",

"Star-Trek",

"128k-Context",

"chemistry",

"biology",

"finance",

"legal",

"art",

"code",

"medical",

"text-generation-inference",

"text2text-generation",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_... | 1,715 | 1,729 | 83 | 4 | ---

language:

- en

library_name: transformers

license: mit

pipeline_tag: text2text-generation

tags:

- LCARS

- Star-Trek

- 128k-Context

- mistral

- chemistry

- biology

- finance

- legal

- art

- code

- medical

- text-generation-inference

---

If anybody has star trek data please send as this starship computer database arc... | [

"TRANSLATION"

] | [

"MEDICAL DATA"

] | Non_BioNLP |

zbrunner/hallucination_noisetag | zbrunner | automatic-speech-recognition | [

"espnet",

"audio",

"automatic-speech-recognition",

"en",

"dataset:tedlium3",

"arxiv:1804.00015",

"license:cc-by-4.0",

"region:us"

] | 1,726 | 1,726 | 2 | 0 | ---

datasets:

- tedlium3

language: en

license: cc-by-4.0

tags:

- espnet

- audio

- automatic-speech-recognition

---

## ESPnet2 ASR model

### `zbrunner/hallucination_noisetag`

This model was trained by zbrunner using tedlium3 recipe in [espnet](https://github.com/espnet/espnet/).

### Demo: How to use in ESPnet2

Foll... | [

"TRANSLATION"

] | [

"BEAR",

"CRAFT",

"MEDAL"

] | Non_BioNLP |

intfloat/multilingual-e5-base | intfloat | sentence-similarity | [

"sentence-transformers",

"pytorch",

"onnx",

"safetensors",

"openvino",

"xlm-roberta",

"mteb",

"Sentence Transformers",

"sentence-similarity",

"multilingual",

"af",

"am",

"ar",

"as",

"az",

"be",

"bg",

"bn",

"br",

"bs",

"ca",

"cs",

"cy",

"da",

"de",

"el",

"en",

"e... | 1,684 | 1,739 | 578,159 | 263 | ---

language:

- multilingual

- af

- am

- ar

- as

- az

- be

- bg

- bn

- br

- bs

- ca

- cs

- cy

- da

- de

- el

- en

- eo

- es

- et

- eu

- fa

- fi

- fr

- fy

- ga

- gd

- gl

- gu

- ha

- he

- hi

- hr

- hu

- hy

- id

- is

- it

- ja

- jv

- ka

- kk

- km

- kn

- ko

- ku

- ky

- la

- lo

- lt

- lv

- mg

- mk

- ml

- mn

- mr

- ms

- my

-... | [

"SEMANTIC_SIMILARITY",

"TRANSLATION",

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

Amir13/bert-base-parsbert-uncased-ncbi_disease | Amir13 | token-classification | [

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"arxiv:2302.09611",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,676 | 1,676 | 26 | 0 | ---

metrics:

- precision

- recall

- f1

- accuracy

tags:

- generated_from_trainer

model-index:

- name: bert-base-parsbert-uncased-ncbi_disease

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, th... | [

"TRANSLATION"

] | [

"NCBI DISEASE"

] | BioNLP |

croissantllm/base_125k | croissantllm | text2text-generation | [

"transformers",

"pytorch",

"llama",

"text-generation",

"legal",

"code",

"text-generation-inference",

"art",

"text2text-generation",

"fr",

"en",

"dataset:cerebras/SlimPajama-627B",

"dataset:uonlp/CulturaX",

"dataset:pg19",

"dataset:bigcode/starcoderdata",

"license:mit",

"autotrain_com... | 1,705 | 1,706 | 6 | 0 | ---

datasets:

- cerebras/SlimPajama-627B

- uonlp/CulturaX

- pg19

- bigcode/starcoderdata

language:

- fr

- en

license: mit

pipeline_tag: text2text-generation

tags:

- legal

- code

- text-generation-inference

- art

---

# CroissantLLM - Base (125k steps)

This model is part of the CroissantLLM initiative, and corresponds ... | [

"TRANSLATION"

] | [

"CRAFT"

] | Non_BioNLP |

Teradata/multilingual-e5-small | Teradata | sentence-similarity | [

"onnx",

"mteb",

"Sentence Transformers",

"sentence-similarity",

"teradata",

"multilingual",

"af",

"am",

"ar",

"as",

"az",

"be",

"bg",

"bn",

"br",

"bs",

"ca",

"cs",

"cy",

"da",

"de",

"el",

"en",

"eo",

"es",

"et",

"eu",

"fa",

"fi",

"fr",

"fy",

"ga",

"gd"... | 1,739 | 1,741 | 11 | 0 | ---

language:

- multilingual

- af

- am

- ar

- as

- az

- be

- bg

- bn

- br

- bs

- ca

- cs

- cy

- da

- de

- el

- en

- eo

- es

- et

- eu

- fa

- fi

- fr

- fy

- ga

- gd

- gl

- gu

- ha

- he

- hi

- hr

- hu

- hy

- id

- is

- it

- ja

- jv

- ka

- kk

- km

- kn

- ko

- ku

- ky

- la

- lo

- lt

- lv

- mg

- mk

- ml

- mn

- mr

- ms

- my

-... | [

"SEMANTIC_SIMILARITY",

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

nadeem1362/mxbai-embed-large-v1-Q4_K_M-GGUF | nadeem1362 | feature-extraction | [

"sentence-transformers",

"gguf",

"mteb",

"transformers.js",

"transformers",

"llama-cpp",

"gguf-my-repo",

"feature-extraction",

"en",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,716 | 1,716 | 17 | 0 | ---

language:

- en

library_name: sentence-transformers

license: apache-2.0

pipeline_tag: feature-extraction

tags:

- mteb

- transformers.js

- transformers

- llama-cpp

- gguf-my-repo

model-index:

- name: mxbai-angle-large-v1

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactua... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

Dampish/StellarX-4B-V0.2 | Dampish | text-generation | [

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"arxiv:2204.06745",

"license:cc-by-nc-sa-4.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | 1,685 | 1,695 | 2,264 | 2 | ---

license: cc-by-nc-sa-4.0

---

# StellarX: A Base Model by Dampish and Arkane

StellarX is a powerful autoregressive language model designed for various natural language processing tasks. It has been trained on a massive dataset containing 810 billion tokens(trained on 300B tokens), trained on "redpajama," and is bui... | [

"TRANSLATION"

] | [

"SCIQ"

] | Non_BioNLP |

RichardErkhov/himmeow_-_vi-gemma-2b-RAG-gguf | RichardErkhov | null | [

"gguf",

"endpoints_compatible",

"region:us",

"conversational"

] | 1,721 | 1,721 | 32 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

vi-gemma-2b-RAG - GGUF

- Model creator: https://huggingface.co/himmeow/

- Original model: https://huggingface.co/... | [

"QUESTION_ANSWERING",

"TRANSLATION",

"SUMMARIZATION"

] | [

"CHIA"

] | Non_BioNLP |

mradermacher/HiTZ-GoLLIE-13B-AsSafeTensors-i1-GGUF | mradermacher | null | [

"transformers",

"gguf",

"code",

"text-generation-inference",

"Information Extraction",

"IE",

"Named Entity Recogniton",

"Event Extraction",

"Relation Extraction",

"LLaMA",

"en",

"dataset:ACE05",

"dataset:bc5cdr",

"dataset:conll2003",

"dataset:ncbi_disease",

"dataset:conll2012_ontonotes... | 1,740 | 1,740 | 949 | 1 | ---

base_model: KaraKaraWitch/HiTZ-GoLLIE-13B-AsSafeTensors

datasets:

- ACE05

- bc5cdr

- conll2003

- ncbi_disease

- conll2012_ontonotesv5

- rams

- tacred

- wnut_17

language:

- en

library_name: transformers

license: llama2

tags:

- code

- text-generation-inference

- Information Extraction

- IE

- Named Entity Recogniton

-... | [

"RELATION_EXTRACTION",

"EVENT_EXTRACTION"

] | [

"BC5CDR",

"NCBI DISEASE"

] | Non_BioNLP |

fine-tuned/SciFact-512-192-gpt-4o-2024-05-13-74504128 | fine-tuned | feature-extraction | [

"sentence-transformers",

"safetensors",

"xlm-roberta",

"feature-extraction",

"sentence-similarity",

"mteb",

"en",

"dataset:fine-tuned/SciFact-512-192-gpt-4o-2024-05-13-74504128",

"dataset:allenai/c4",

"license:apache-2.0",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compa... | 1,716 | 1,716 | 6 | 0 | ---

datasets:

- fine-tuned/SciFact-512-192-gpt-4o-2024-05-13-74504128

- allenai/c4

language:

- en

- en

license: apache-2.0

pipeline_tag: feature-extraction

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- mteb

---

This model is a fine-tuned version of [**BAAI/bge-m3**](https://huggingface.co/B... | [

"TEXT_CLASSIFICATION"

] | [

"SCIFACT"

] | Non_BioNLP |

microsoft/prophetnet-large-uncased-cnndm | microsoft | text2text-generation | [

"transformers",

"pytorch",

"rust",

"prophetnet",

"text2text-generation",

"en",

"dataset:cnn_dailymail",

"arxiv:2001.04063",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,646 | 1,674 | 965 | 2 | ---

datasets:

- cnn_dailymail

language: en

---

## prophetnet-large-uncased-cnndm

Fine-tuned weights(converted from [original fairseq version repo](https://github.com/microsoft/ProphetNet)) for [ProphetNet](https://arxiv.org/abs/2001.04063) on summarization task CNN/DailyMail.

ProphetNet is a new pre-trained language... | [

"SUMMARIZATION"

] | [

"CAS"

] | Non_BioNLP |

EleutherAI/pythia-1b | EleutherAI | text-generation | [

"transformers",

"pytorch",

"safetensors",

"gpt_neox",

"text-generation",

"causal-lm",

"pythia",

"en",

"dataset:the_pile",

"arxiv:2304.01373",

"arxiv:2101.00027",

"arxiv:2201.07311",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"re... | 1,678 | 1,688 | 64,849 | 37 | ---

datasets:

- the_pile

language:

- en

license: apache-2.0

tags:

- pytorch

- causal-lm

- pythia

---

The *Pythia Scaling Suite* is a collection of models developed to facilitate

interpretability research [(see paper)](https://arxiv.org/pdf/2304.01373.pdf).

It contains two sets of eight models of sizes

70M, 160M, 41... | [

"QUESTION_ANSWERING",

"TRANSLATION"

] | [

"SCIQ"

] | Non_BioNLP |

blockblockblock/Dark-Miqu-70B-bpw6-exl2 | blockblockblock | text-generation | [

"transformers",

"safetensors",

"llama",

"text-generation",

"arxiv:2403.19522",

"license:other",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"6-bit",

"exl2",

"region:us"

] | 1,715 | 1,715 | 10 | 0 | ---

license: other

---

***NOTE***: *For a full range of GGUF quants kindly provided by @mradermacher: [Static](https://huggingface.co/mradermacher/Dark-Miqu-70B-GGUF) and [IMatrix](https://huggingface.co/mradermacher/Dark-Miqu-70B-i1-GGUF).*

A "dark" creative writing model with 32k co... | [

"TRANSLATION"

] | [

"BEAR"

] | Non_BioNLP |

Backedman/TriviaAnsweringMachine | Backedman | question-answering | [

"transformers",

"TFIDF-QA",

"question-answering",

"custom_code",

"en",

"license:mit",

"region:us"

] | 1,715 | 1,715 | 5 | 0 | ---

language:

- en

license: mit

pipeline_tag: question-answering

---

The evaluation of this project is to answer trivia questions. You do

not need to do well at this task, but you should submit a system that

completes the task or create adversarial questions in that setting. This will help the whole class share data ... | [

"TRANSLATION"

] | [

"MEDAL"

] | Non_BioNLP |

TheBloke/med42-70B-AWQ | TheBloke | text-generation | [

"transformers",

"safetensors",

"llama",

"text-generation",

"m42",

"health",

"healthcare",

"clinical-llm",

"en",

"base_model:m42-health/med42-70b",

"base_model:quantized:m42-health/med42-70b",

"license:other",

"autotrain_compatible",

"text-generation-inference",

"4-bit",

"awq",

"regio... | 1,698 | 1,699 | 449 | 2 | ---

base_model: m42-health/med42-70b

language:

- en

license: other

license_name: med42

model_name: Med42 70B

pipeline_tag: text-generation

tags:

- m42

- health

- healthcare

- clinical-llm

inference: false

model_creator: M42 Health

model_type: llama

prompt_template: '<|system|>: You are a helpful medical assistant creat... | [

"QUESTION_ANSWERING",

"SUMMARIZATION"

] | [

"MEDQA",

"PUBMEDQA"

] | BioNLP |

AdaptLLM/finance-LLM | AdaptLLM | text-generation | [

"transformers",

"pytorch",

"safetensors",

"llama",

"text-generation",

"finance",

"en",

"dataset:Open-Orca/OpenOrca",

"dataset:GAIR/lima",

"dataset:WizardLM/WizardLM_evol_instruct_V2_196k",

"arxiv:2309.09530",

"arxiv:2411.19930",

"arxiv:2406.14491",

"autotrain_compatible",

"text-generatio... | 1,695 | 1,733 | 665 | 118 | ---

datasets:

- Open-Orca/OpenOrca

- GAIR/lima

- WizardLM/WizardLM_evol_instruct_V2_196k

language:

- en

metrics:

- accuracy

pipeline_tag: text-generation

tags:

- finance

---

# Adapting LLMs to Domains via Continual Pre-Training (ICLR 2024)

This repo contains the domain-specific base model developed from **LLaMA-1-7B**... | [

"QUESTION_ANSWERING"

] | [

"CHEMPROT"

] | Non_BioNLP |

tsirif/BinGSE-Meta-Llama-3-8B-Instruct | tsirif | sentence-similarity | [

"peft",

"safetensors",

"text-embedding",

"embeddings",

"information-retrieval",

"beir",

"text-classification",

"language-model",

"text-clustering",

"text-semantic-similarity",

"text-evaluation",

"text-reranking",

"feature-extraction",

"sentence-similarity",

"Sentence Similarity",

"natu... | 1,729 | 1,729 | 14 | 0 | ---

language:

- en

library_name: peft

license: mit

pipeline_tag: sentence-similarity

tags:

- text-embedding

- embeddings

- information-retrieval

- beir

- text-classification

- language-model

- text-clustering

- text-semantic-similarity

- text-evaluation

- text-reranking

- feature-extraction

- sentence-similarity

- Sent... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

yishan-wang/snowflake-arctic-embed-m-v1.5-Q8_0-GGUF | yishan-wang | sentence-similarity | [

"sentence-transformers",

"gguf",

"feature-extraction",

"sentence-similarity",

"mteb",

"arctic",

"snowflake-arctic-embed",

"transformers.js",

"llama-cpp",

"gguf-my-repo",

"base_model:Snowflake/snowflake-arctic-embed-m-v1.5",

"base_model:quantized:Snowflake/snowflake-arctic-embed-m-v1.5",

"lic... | 1,723 | 1,723 | 19 | 0 | ---

base_model: Snowflake/snowflake-arctic-embed-m-v1.5

license: apache-2.0

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- mteb

- arctic

- snowflake-arctic-embed

- transformers.js

- llama-cpp

- gguf-my-repo

model-index:

- name: snowflake-arctic-embed-m-v1.5

... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

sschet/scibert_scivocab_uncased-finetuned-ner | sschet | token-classification | [

"transformers",

"pytorch",

"bert",

"token-classification",

"Named Entity Recognition",

"SciBERT",

"Adverse Effect",

"Drug",

"Medical",

"en",

"dataset:ade_corpus_v2",

"dataset:tner/bc5cdr",

"dataset:commanderstrife/jnlpba",

"dataset:bc2gm_corpus",

"dataset:drAbreu/bc4chemd_ner",

"datase... | 1,675 | 1,675 | 152 | 0 | ---

datasets:

- ade_corpus_v2

- tner/bc5cdr

- commanderstrife/jnlpba

- bc2gm_corpus

- drAbreu/bc4chemd_ner

- linnaeus

- chintagunta85/ncbi_disease

language:

- en

tags:

- Named Entity Recognition

- SciBERT

- Adverse Effect

- Drug

- Medical

widget:

- text: Abortion, miscarriage or uterine hemorrhage associated with misop... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"BC5CDR",

"JNLPBA",

"LINNAEUS",

"NCBI DISEASE"

] | BioNLP |

LXC1999/gte-Qwen2-7B-instruct-Q4_K_M-GGUF | LXC1999 | sentence-similarity | [

"sentence-transformers",

"gguf",

"mteb",

"transformers",

"Qwen2",

"sentence-similarity",

"llama-cpp",

"gguf-my-repo",

"base_model:Alibaba-NLP/gte-Qwen2-7B-instruct",

"base_model:quantized:Alibaba-NLP/gte-Qwen2-7B-instruct",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endp... | 1,739 | 1,739 | 9 | 0 | ---

base_model: Alibaba-NLP/gte-Qwen2-7B-instruct

license: apache-2.0

tags:

- mteb

- sentence-transformers

- transformers

- Qwen2

- sentence-similarity

- llama-cpp

- gguf-my-repo

model-index:

- name: gte-qwen2-7B-instruct

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactual... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

RichardErkhov/EleutherAI_-_pythia-160m-deduped-v0-8bits | RichardErkhov | text-generation | [

"transformers",

"safetensors",

"gpt_neox",

"text-generation",

"arxiv:2101.00027",

"arxiv:2201.07311",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"8-bit",

"bitsandbytes",

"region:us"

] | 1,713 | 1,713 | 10 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

pythia-160m-deduped-v0 - bnb 8bits

- Model creator: https://huggingface.co/EleutherAI/

- Original model: https://... | [

"QUESTION_ANSWERING",

"TRANSLATION"

] | [

"SCIQ"

] | Non_BioNLP |

pankajrajdeo/UMLS-ED-Bioformer-8L-V-1.25 | pankajrajdeo | sentence-similarity | [

"sentence-transformers",

"safetensors",

"bert",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:187491593",

"loss:CustomTripletLoss",

"arxiv:1908.10084",

"arxiv:1703.07737",

"base_model:pankajrajdeo/UMLS-ED-Bioformer-8L-V-1",

"base_model:finetune:pankajrajd... | 1,733 | 1,736 | 24 | 0 | ---

base_model:

- pankajrajdeo/UMLS-ED-Bioformer-8L-V-1

library_name: sentence-transformers

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- generated_from_trainer

- dataset_size:187491593

- loss:CustomTripletLoss

widget:

- source_sentence: Hylocharis xantusii... | [

"TEXT_CLASSIFICATION"

] | [

"PCR"

] | BioNLP |

RomainDarous/large_directOneEpoch_additivePooling_randomInit_mistranslationModel | RomainDarous | sentence-similarity | [

"sentence-transformers",

"safetensors",

"xlm-roberta",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:4460010",

"loss:CoSENTLoss",

"dataset:RomainDarous/corrupted_os_by_language",

"arxiv:1908.10084",

"base_model:sentence-transformers/paraphrase-multilingual-... | 1,739 | 1,739 | 25 | 0 | ---

base_model: sentence-transformers/paraphrase-multilingual-mpnet-base-v2

datasets:

- RomainDarous/corrupted_os_by_language

library_name: sentence-transformers

metrics:

- pearson_cosine

- spearman_cosine

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- gener... | [

"TEXT_CLASSIFICATION",

"SEMANTIC_SIMILARITY",

"TRANSLATION"

] | [

"CAS"

] | Non_BioNLP |

RichardErkhov/Alibaba-NLP_-_gte-Qwen2-7B-instruct-4bits | RichardErkhov | null | [

"safetensors",

"qwen2",

"custom_code",

"arxiv:2308.03281",

"4-bit",

"bitsandbytes",

"region:us"

] | 1,731 | 1,731 | 4 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

gte-Qwen2-7B-instruct - bnb 4bits

- Model creator: https://huggingface.co/Alibaba-NLP/

- Original model: https://... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

croissantllm/base_185k | croissantllm | text2text-generation | [

"transformers",

"pytorch",

"llama",

"text-generation",

"legal",

"code",

"text-generation-inference",

"art",

"text2text-generation",

"fr",

"en",

"dataset:cerebras/SlimPajama-627B",

"dataset:uonlp/CulturaX",

"dataset:pg19",

"dataset:bigcode/starcoderdata",

"license:mit",

"autotrain_com... | 1,704 | 1,706 | 5 | 0 | ---

datasets:

- cerebras/SlimPajama-627B

- uonlp/CulturaX

- pg19

- bigcode/starcoderdata

language:

- fr

- en

license: mit

pipeline_tag: text2text-generation

tags:

- legal

- code

- text-generation-inference

- art

---

# CroissantLLM - Base (185k steps)

This model is part of the CroissantLLM initiative, and corresponds ... | [

"TRANSLATION"

] | [

"CRAFT"

] | Non_BioNLP |

espnet/pengcheng_aishell_asr_train_asr_whisper_medium_finetune_raw_zh_whisper_multilingual_sp | espnet | automatic-speech-recognition | [

"espnet",

"audio",

"automatic-speech-recognition",

"zh",

"dataset:aishell",

"arxiv:1804.00015",

"license:cc-by-4.0",

"region:us"

] | 1,690 | 1,690 | 1 | 1 | ---

datasets:

- aishell

language: zh

license: cc-by-4.0

tags:

- espnet

- audio

- automatic-speech-recognition

---

## ESPnet2 ASR model

### `espnet/pengcheng_aishell_asr_train_asr_whisper_medium_finetune_raw_zh_whisper_multilingual_sp`

This model was trained by Pengcheng Guo using the aishell recipe in [espnet](https://g... | [

"TRANSLATION"

] | [

"BEAR",

"CAS",

"CHIA",

"CRAFT",

"GAD",

"MEDAL",

"PCR"

] | TBD |

twadada/fasttext | twadada | null | [

"mteb",

"model-index",

"region:us"

] | 1,725 | 1,725 | 0 | 0 | ---

tags:

- mteb

model-index:

- name: fasttext_main

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactualClassification (en)

type: None

config: en

split: test

revision: e8379541af4e31359cca9fbcf4b00f2671dba205

metrics:

- type: accuracy

v... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

Sci-fi-vy/Meditron-7b-finetuned | Sci-fi-vy | image-text-to-text | [

"transformers",

"pytorch",

"safetensors",

"llama",

"text-generation",

"image-text-to-text",

"en",

"dataset:epfl-llm/guidelines",

"arxiv:2311.16079",

"base_model:meta-llama/Llama-2-7b",

"base_model:finetune:meta-llama/Llama-2-7b",

"license:llama2",

"autotrain_compatible",

"text-generation-i... | 1,737 | 1,737 | 78 | 1 | ---

base_model: meta-llama/Llama-2-7b

datasets:

- epfl-llm/guidelines

language:

- en

library_name: transformers

license: llama2

metrics:

- accuracy

- perplexity

pipeline_tag: image-text-to-text

---

# Model Card for Meditron-7B-finetuned

Meditron is a suite of open-source medical Large Language Models (LLMs).

Meditron-... | [

"QUESTION_ANSWERING"

] | [

"MEDQA",

"PUBMEDQA"

] | BioNLP |

adriansanz/ST-tramits-SITGES-007-5ep | adriansanz | sentence-similarity | [

"sentence-transformers",

"safetensors",

"xlm-roberta",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:6692",

"loss:MatryoshkaLoss",

"loss:MultipleNegativesRankingLoss",

"arxiv:1908.10084",

"arxiv:2205.13147",

"arxiv:1705.00652",

"base_model:BAAI/bge-m3",... | 1,728 | 1,728 | 4 | 0 | ---

base_model: BAAI/bge-m3

library_name: sentence-transformers

metrics:

- cosine_accuracy@1

- cosine_accuracy@3

- cosine_accuracy@5

- cosine_accuracy@10

- cosine_precision@1

- cosine_precision@3

- cosine_precision@5

- cosine_precision@10

- cosine_recall@1

- cosine_recall@3

- cosine_recall@5

- cosine_recall@10

- cosine... | [

"TEXT_CLASSIFICATION"

] | [

"CAS"

] | Non_BioNLP |

BAAI/bge-small-en | BAAI | feature-extraction | [

"transformers",

"pytorch",

"safetensors",

"bert",

"feature-extraction",

"mteb",

"sentence transformers",

"en",

"arxiv:2311.13534",

"arxiv:2310.07554",

"arxiv:2309.07597",

"license:mit",

"model-index",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] | 1,691 | 1,702 | 419,855 | 74 | ---

language:

- en

license: mit

tags:

- mteb

- sentence transformers

model-index:

- name: bge-small-en

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactualClassification (en)

type: mteb/amazon_counterfactual

config: en

split: test

revision: e8379541a... | [

"SEMANTIC_SIMILARITY",

"SUMMARIZATION"

] | [

"BEAR",

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

adriansanz/fs_setfit_hybrid2 | adriansanz | text-classification | [

"setfit",

"safetensors",

"xlm-roberta",

"sentence-transformers",

"text-classification",

"generated_from_setfit_trainer",

"arxiv:2209.11055",

"base_model:projecte-aina/ST-NLI-ca_paraphrase-multilingual-mpnet-base",

"base_model:finetune:projecte-aina/ST-NLI-ca_paraphrase-multilingual-mpnet-base",

"m... | 1,717 | 1,717 | 7 | 0 | ---

base_model: projecte-aina/ST-NLI-ca_paraphrase-multilingual-mpnet-base

library_name: setfit

metrics:

- accuracy

pipeline_tag: text-classification

tags:

- setfit

- sentence-transformers

- text-classification

- generated_from_setfit_trainer

widget:

- text: Estic preocupat per la falta de legislació i regulació adequa... | [

"TEXT_CLASSIFICATION"

] | [

"CAS"

] | Non_BioNLP |

svorwerk/setfit-fine-tuned-demo-class | svorwerk | text-classification | [

"setfit",

"safetensors",

"mpnet",

"sentence-transformers",

"text-classification",

"generated_from_setfit_trainer",

"arxiv:2209.11055",

"base_model:sentence-transformers/all-mpnet-base-v2",

"base_model:finetune:sentence-transformers/all-mpnet-base-v2",

"region:us"

] | 1,706 | 1,706 | 6 | 0 | ---

base_model: sentence-transformers/all-mpnet-base-v2

library_name: setfit

metrics:

- accuracy

pipeline_tag: text-classification

tags:

- setfit

- sentence-transformers

- text-classification

- generated_from_setfit_trainer

widget:

- text: 'Acquisition Id: ALOG; Ancotel; Asia Tone; Bit-Isle; IXEurope; Infomart; Itconic... | [

"TEXT_CLASSIFICATION",

"TRANSLATION"

] | [

"CHIA",

"MIRNA"

] | Non_BioNLP |

FINGU-AI/FingUEm_V3 | FINGU-AI | sentence-similarity | [

"sentence-transformers",

"safetensors",

"qwen2",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:245133",

"loss:MultipleNegativesRankingLoss",

"loss:MultipleNegativesSymmetricRankingLoss",

"loss:CoSENTLoss",

"custom_code",

"arxiv:1908.10084",

"arxiv:1705.... | 1,720 | 1,721 | 6 | 3 | ---

base_model: Alibaba-NLP/gte-Qwen2-1.5B-instruct

datasets: []

language: []

library_name: sentence-transformers

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- generated_from_trainer

- dataset_size:245133

- loss:MultipleNegativesRankingLoss

- loss:MultipleN... | [

"TEXT_CLASSIFICATION"

] | [

"CAS"

] | Non_BioNLP |

minishlab/potion-retrieval-32M | minishlab | null | [

"model2vec",

"onnx",

"safetensors",

"embeddings",

"static-embeddings",

"sentence-transformers",

"license:mit",

"region:us"

] | 1,737 | 1,738 | 3,271 | 17 | ---

library_name: model2vec

license: mit

model_name: potion-retrieval-32M

tags:

- embeddings

- static-embeddings

- sentence-transformers

---

# potion-retrieval-32M Model Card

<div align="center">

<img width="35%" alt="Model2Vec logo" src="https://raw.githubusercontent.com/MinishLab/model2vec/main/assets/images/lo... | [

"SUMMARIZATION"

] | [

"PUBMEDQA"

] | Non_BioNLP |

DeusImperator/Dark-Miqu-70B_exl2_2.4bpw | DeusImperator | text-generation | [

"transformers",

"safetensors",

"llama",

"text-generation",

"mergekit",

"merge",

"arxiv:2403.19522",

"base_model:152334H/miqu-1-70b-sf",

"base_model:merge:152334H/miqu-1-70b-sf",

"base_model:Sao10K/Euryale-1.3-L2-70B",

"base_model:merge:Sao10K/Euryale-1.3-L2-70B",

"base_model:Sao10K/WinterGodde... | 1,716 | 1,716 | 9 | 0 | ---

base_model:

- 152334H/miqu-1-70b-sf

- sophosympatheia/Midnight-Rose-70B-v2.0.3

- Sao10K/Euryale-1.3-L2-70B

- Sao10K/WinterGoddess-1.4x-70B-L2

library_name: transformers

license: other

tags:

- mergekit

- merge

---

# Dark-Miqu-70B - EXL2 2.4bpw

This is a 2.4bpw EXL2 quant of [jukofy... | [

"TRANSLATION"

] | [

"BEAR"

] | Non_BioNLP |

beethogedeon/gte-Qwen2-7B-instruct-Q4_K_M-GGUF | beethogedeon | sentence-similarity | [

"sentence-transformers",

"gguf",

"qwen2",

"text-generation",

"mteb",

"transformers",

"Qwen2",

"sentence-similarity",

"llama-cpp",

"gguf-my-repo",

"custom_code",

"base_model:Alibaba-NLP/gte-Qwen2-7B-instruct",

"base_model:quantized:Alibaba-NLP/gte-Qwen2-7B-instruct",

"license:apache-2.0",

... | 1,733 | 1,733 | 354 | 2 | ---

base_model: Alibaba-NLP/gte-Qwen2-7B-instruct

license: apache-2.0

tags:

- mteb

- sentence-transformers

- transformers

- Qwen2

- sentence-similarity

- llama-cpp

- gguf-my-repo

model-index:

- name: gte-qwen2-7B-instruct

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterfactual... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

RichardErkhov/ricepaper_-_vi-gemma-2b-RAG-gguf | RichardErkhov | null | [

"gguf",

"endpoints_compatible",

"region:us",

"conversational"

] | 1,724 | 1,724 | 98 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

vi-gemma-2b-RAG - GGUF

- Model creator: https://huggingface.co/ricepaper/

- Original model: https://huggingface.c... | [

"QUESTION_ANSWERING",

"TRANSLATION",

"SUMMARIZATION"

] | [

"CHIA"

] | BioNLP |

RichardErkhov/BSC-LT_-_salamandra-7b-gguf | RichardErkhov | null | [

"gguf",

"arxiv:2403.14009",

"arxiv:2403.20266",

"arxiv:2101.00027",

"arxiv:2207.00220",

"arxiv:1810.06694",

"arxiv:1911.05507",

"arxiv:1906.03741",

"arxiv:2406.17557",

"arxiv:2402.06619",

"arxiv:1803.09010",

"endpoints_compatible",

"region:us"

] | 1,728 | 1,728 | 80 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

salamandra-7b - GGUF

- Model creator: https://huggingface.co/BSC-LT/

- Original model: https://huggingface.co/BSC... | [

"QUESTION_ANSWERING",

"TRANSLATION",

"SUMMARIZATION",

"PARAPHRASING"

] | [

"BEAR",

"SCIELO"

] | Non_BioNLP |

CCwz/gme-Qwen2-VL-7B-Instruct-Q5_K_S-GGUF | CCwz | sentence-similarity | [

"sentence-transformers",

"gguf",

"mteb",

"transformers",

"Qwen2-VL",

"sentence-similarity",

"vidore",

"llama-cpp",

"gguf-my-repo",

"en",

"zh",

"base_model:Alibaba-NLP/gme-Qwen2-VL-7B-Instruct",

"base_model:quantized:Alibaba-NLP/gme-Qwen2-VL-7B-Instruct",

"license:apache-2.0",

"model-inde... | 1,735 | 1,735 | 73 | 0 | ---

base_model: Alibaba-NLP/gme-Qwen2-VL-7B-Instruct

language:

- en

- zh

license: apache-2.0

tags:

- mteb

- sentence-transformers

- transformers

- Qwen2-VL

- sentence-similarity

- vidore

- llama-cpp

- gguf-my-repo

model-index:

- name: gme-Qwen2-VL-7B-Instruct

results:

- task:

type: STS

dataset:

name... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

AdaptLLM/law-LLM-13B | AdaptLLM | text-generation | [

"transformers",

"pytorch",

"safetensors",

"llama",

"text-generation",

"legal",

"en",

"dataset:Open-Orca/OpenOrca",

"dataset:GAIR/lima",

"dataset:WizardLM/WizardLM_evol_instruct_V2_196k",

"dataset:EleutherAI/pile",

"arxiv:2309.09530",

"arxiv:2411.19930",

"arxiv:2406.14491",

"autotrain_com... | 1,703 | 1,733 | 257 | 34 | ---

datasets:

- Open-Orca/OpenOrca

- GAIR/lima

- WizardLM/WizardLM_evol_instruct_V2_196k

- EleutherAI/pile

language:

- en

metrics:

- accuracy

pipeline_tag: text-generation

tags:

- legal

---

# Adapting LLMs to Domains via Continual Pre-Training (ICLR 2024)

This repo contains the domain-specific base model developed fro... | [

"QUESTION_ANSWERING"

] | [

"CHEMPROT"

] | Non_BioNLP |

RichardErkhov/bennegeek_-_stella_en_1.5B_v5-4bits | RichardErkhov | null | [

"safetensors",

"qwen2",

"custom_code",

"arxiv:2205.13147",

"4-bit",

"bitsandbytes",

"region:us"

] | 1,741 | 1,741 | 12 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

stella_en_1.5B_v5 - bnb 4bits

- Model creator: https://huggingface.co/bennegeek/

- Original model: https://huggin... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

huoxu/bge-large-en-v1.5-Q8_0-GGUF | huoxu | feature-extraction | [

"sentence-transformers",

"gguf",

"feature-extraction",

"sentence-similarity",

"transformers",

"mteb",

"llama-cpp",

"gguf-my-repo",

"en",

"base_model:BAAI/bge-large-en-v1.5",

"base_model:quantized:BAAI/bge-large-en-v1.5",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_co... | 1,721 | 1,721 | 285 | 0 | ---

base_model: BAAI/bge-large-en-v1.5

language:

- en

license: mit

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

- mteb

- llama-cpp

- gguf-my-repo

model-index:

- name: bge-large-en-v1.5

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCounterf... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

minhtuan7akp/snowflake-m-v2.0-vietnamese-finetune | minhtuan7akp | sentence-similarity | [

"sentence-transformers",

"safetensors",

"gte",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:21892",

"loss:MultipleNegativesRankingLoss",

"custom_code",

"arxiv:1908.10084",

"arxiv:1705.00652",

"base_model:Snowflake/snowflake-arctic-embed-m-v2.0",

"base_... | 1,740 | 1,740 | 21 | 0 | ---

base_model: Snowflake/snowflake-arctic-embed-m-v2.0

library_name: sentence-transformers

metrics:

- cosine_accuracy@1

- cosine_accuracy@3

- cosine_accuracy@5

- cosine_accuracy@10

- cosine_precision@1

- cosine_precision@3

- cosine_precision@5

- cosine_precision@10

- cosine_recall@1

- cosine_recall@3

- cosine_recall@5... | [

"TEXT_CLASSIFICATION"

] | [

"CHIA"

] | Non_BioNLP |

pruas/BENT-PubMedBERT-NER-Cell-Type | pruas | token-classification | [

"transformers",

"pytorch",

"bert",

"token-classification",

"en",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,673 | 1,709 | 24 | 0 | ---

language:

- en

license: apache-2.0

pipeline_tag: token-classification

---

Named Entity Recognition (NER) model to recognize cell type entities.

Please cite our work:

```

@article{NILNKER2022,

title = {NILINKER: Attention-based approach to NIL Entity Linking},

journal = {Journal of Biomedical Informatics},

... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"CRAFT",

"CELLFINDER",

"JNLPBA"

] | BioNLP |

RichardErkhov/GritLM_-_GritLM-7B-8bits | RichardErkhov | text-generation | [

"transformers",

"safetensors",

"mistral",

"text-generation",

"conversational",

"custom_code",

"arxiv:2402.09906",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"8-bit",

"bitsandbytes",

"region:us"

] | 1,714 | 1,714 | 4 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

GritLM-7B - bnb 8bits

- Model creator: https://huggingface.co/GritLM/

- Original model: https://huggingface.co/Gr... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

nomic-ai/nomic-embed-text-v1.5 | nomic-ai | sentence-similarity | [

"sentence-transformers",

"onnx",

"safetensors",

"nomic_bert",

"feature-extraction",

"sentence-similarity",

"mteb",

"transformers",

"transformers.js",

"custom_code",

"en",

"arxiv:2205.13147",

"arxiv:2402.01613",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-embedd... | 1,707 | 1,737 | 986,761 | 577 | ---

language:

- en

library_name: sentence-transformers

license: apache-2.0

pipeline_tag: sentence-similarity

tags:

- feature-extraction

- sentence-similarity

- mteb

- transformers

- transformers.js

model-index:

- name: epoch_0_model

results:

- task:

type: Classification

dataset:

name: MTEB AmazonCou... | [

"SUMMARIZATION"

] | [

"BIOSSES",

"SCIFACT"

] | Non_BioNLP |

RichardErkhov/phamhai_-_Llama-3.2-1B-Instruct-Frog-awq | RichardErkhov | null | [

"safetensors",

"llama",

"4-bit",

"awq",

"region:us"

] | 1,732 | 1,732 | 11 | 0 | ---

{}

---

Quantization made by Richard Erkhov.

[Github](https://github.com/RichardErkhov)

[Discord](https://discord.gg/pvy7H8DZMG)

[Request more models](https://github.com/RichardErkhov/quant_request)

Llama-3.2-1B-Instruct-Frog - AWQ

- Model creator: https://huggingface.co/phamhai/

- Original model: https://huggi... | [

"SUMMARIZATION"

] | [

"CHIA"

] | Non_BioNLP |

simonosgoode/nomic_embed_fine_tune_law_v3 | simonosgoode | sentence-similarity | [

"sentence-transformers",

"safetensors",

"nomic_bert",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:12750",

"loss:MultipleNegativesRankingLoss",

"custom_code",

"arxiv:1908.10084",

"arxiv:1705.00652",

"base_model:nomic-ai/nomic-embed-text-v1.5",

"base_mo... | 1,731 | 1,731 | 9 | 0 | ---

base_model: nomic-ai/nomic-embed-text-v1.5

library_name: sentence-transformers

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- generated_from_trainer

- dataset_size:12750

- loss:MultipleNegativesRankingLoss

widget:

- source_sentence: 'cluster: SUMMARY: E... | [

"TEXT_CLASSIFICATION"

] | [

"BEAR",

"CAS",

"MQP"

] | Non_BioNLP |

tanbinh2210/mlm_finetuned_2_phobert | tanbinh2210 | sentence-similarity | [

"sentence-transformers",

"safetensors",

"roberta",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:357018",

"loss:MultipleNegativesRankingLoss",

"arxiv:1908.10084",

"arxiv:1705.00652",

"base_model:tanbinh2210/mlm_finetuned_phobert",

"base_model:finetune:tan... | 1,732 | 1,732 | 7 | 0 | ---

base_model: tanbinh2210/mlm_finetuned_phobert

library_name: sentence-transformers

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- generated_from_trainer

- dataset_size:357018

- loss:MultipleNegativesRankingLoss

widget:

- source_sentence: đánh_giá phẩm_chấ... | [

"TEXT_CLASSIFICATION"

] | [

"CHIA"

] | Non_BioNLP |

IEETA/BioNExt | IEETA | null | [

"en",

"dataset:bigbio/biored",

"license:mit",

"region:us"

] | 1,715 | 1,715 | 0 | 1 | ---

datasets:

- bigbio/biored

language:

- en

license: mit

metrics:

- f1

---

# Model Card for BioNExt

BioNExt is an end-to-end Biomedical Relation Extraction and Classification system. The work utilized three modules: a Tagger (Named Entity Recognition), a Linker (Entity Linking) and an Extractor (Relation Extraction a... | [

"NAMED_ENTITY_RECOGNITION",

"RELATION_EXTRACTION"

] | [

"BIORED"

] | BioNLP |

vocabtrimmer/mt5-small-trimmed-fr-10000-frquad-qa | vocabtrimmer | text2text-generation | [

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"question answering",

"fr",

"dataset:lmqg/qg_frquad",

"arxiv:2210.03992",

"license:cc-by-4.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,679 | 1,679 | 12 | 0 | ---

datasets:

- lmqg/qg_frquad

language: fr

license: cc-by-4.0

metrics:

- bleu4

- meteor

- rouge-l

- bertscore

- moverscore

pipeline_tag: text2text-generation

tags:

- question answering

widget:

- text: 'question: En quelle année a-t-on trouvé trace d''un haut fourneau similaire?,

context: Cette technologie ne dispa... | [

"QUESTION_ANSWERING"

] | [

"CAS"

] | Non_BioNLP |

Savoxism/Finetuned-Paraphrase-Multilingual-MiniLM-L12-v2 | Savoxism | sentence-similarity | [

"sentence-transformers",

"safetensors",

"bert",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:89592",

"loss:CachedMultipleNegativesRankingLoss",

"arxiv:1908.10084",

"arxiv:2101.06983",

"base_model:sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2"... | 1,741 | 1,741 | 3 | 0 | ---

base_model: sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2

library_name: sentence-transformers

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- generated_from_trainer

- dataset_size:89592

- loss:CachedMultipleNegativesRankingLoss

widget:

- sou... | [

"TEXT_CLASSIFICATION"

] | [

"CHIA"

] | Non_BioNLP |

Dizex/FoodBaseBERT-NER | Dizex | token-classification | [

"transformers",

"pytorch",

"safetensors",

"bert",

"token-classification",

"FoodBase",

"NER",

"en",

"dataset:Dizex/FoodBase",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | 1,667 | 1,684 | 1,241 | 19 | ---

datasets:

- Dizex/FoodBase

language: en

license: mit

tags:

- FoodBase

- NER

widget:

- text: 'Today''s meal: Fresh olive poké bowl topped with chia seeds. Very delicious!'

example_title: Food example 1

- text: Tartufo Pasta with garlic flavoured butter and olive oil, egg yolk, parmigiano

and pasta water.

exa... | [

"NAMED_ENTITY_RECOGNITION"

] | [

"CHIA"

] | Non_BioNLP |

bobox/DeBERTa-small-ST-v1-test-step3 | bobox | sentence-similarity | [

"sentence-transformers",

"pytorch",

"deberta-v2",

"sentence-similarity",

"feature-extraction",

"generated_from_trainer",

"dataset_size:279409",

"loss:CachedGISTEmbedLoss",

"en",

"dataset:tals/vitaminc",

"dataset:allenai/scitail",

"dataset:allenai/sciq",

"dataset:allenai/qasc",

"dataset:sen... | 1,724 | 1,724 | 6 | 0 | ---

base_model: bobox/DeBERTa-small-ST-v1-test-step2

datasets:

- tals/vitaminc

- allenai/scitail

- allenai/sciq

- allenai/qasc

- sentence-transformers/msmarco-msmarco-distilbert-base-v3

- sentence-transformers/natural-questions

- sentence-transformers/trivia-qa

- sentence-transformers/gooaq

- google-research-datasets/p... | [

"TEXT_CLASSIFICATION",

"SEMANTIC_SIMILARITY",

"TRANSLATION"

] | [

"CAS",

"SCIQ",

"SCITAIL"

] | Non_BioNLP |

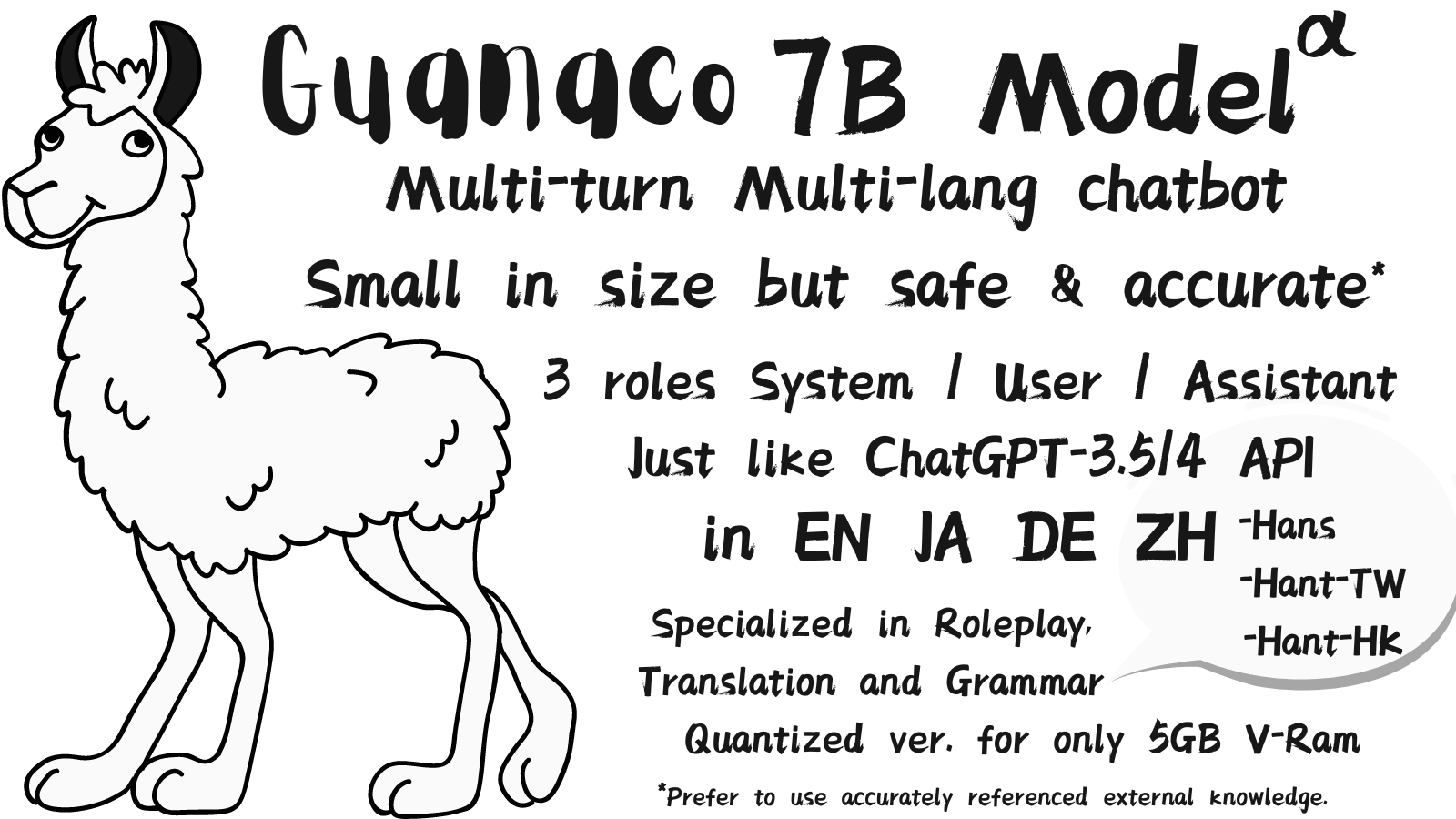

JosephusCheung/Guanaco | JosephusCheung | text-generation | [

"transformers",

"pytorch",

"llama",

"text-generation",

"guannaco",

"alpaca",

"conversational",

"en",

"zh",

"ja",

"de",

"dataset:JosephusCheung/GuanacoDataset",

"doi:10.57967/hf/0607",

"license:gpl-3.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] | 1,680 | 1,685 | 2,164 | 230 | ---

datasets:

- JosephusCheung/GuanacoDataset

language:

- en

- zh

- ja

- de

license: gpl-3.0

pipeline_tag: conversational

tags:

- llama

- guannaco

- alpaca

inference: false

---