Datasets:

File size: 6,058 Bytes

16c2d73 3cb89cb 1e04115 3cb89cb 8976940 1e04115 16c2d73 1e04115 3cb89cb 1e04115 23a00cd 3cb89cb 1ba5bc0 3cb89cb c8e5722 3cb89cb c8e5722 3cb89cb c8e5722 3cb89cb bade111 3cb89cb e6d41f1 3cb89cb | 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 | ---

language:

- en

- ar

- fr

- jp

- ru

license: apache-2.0

size_categories:

- n<1K

task_categories:

- feature-extraction

pretty_name: MOLE

tags:

- metadata

- extraction

- validation

---

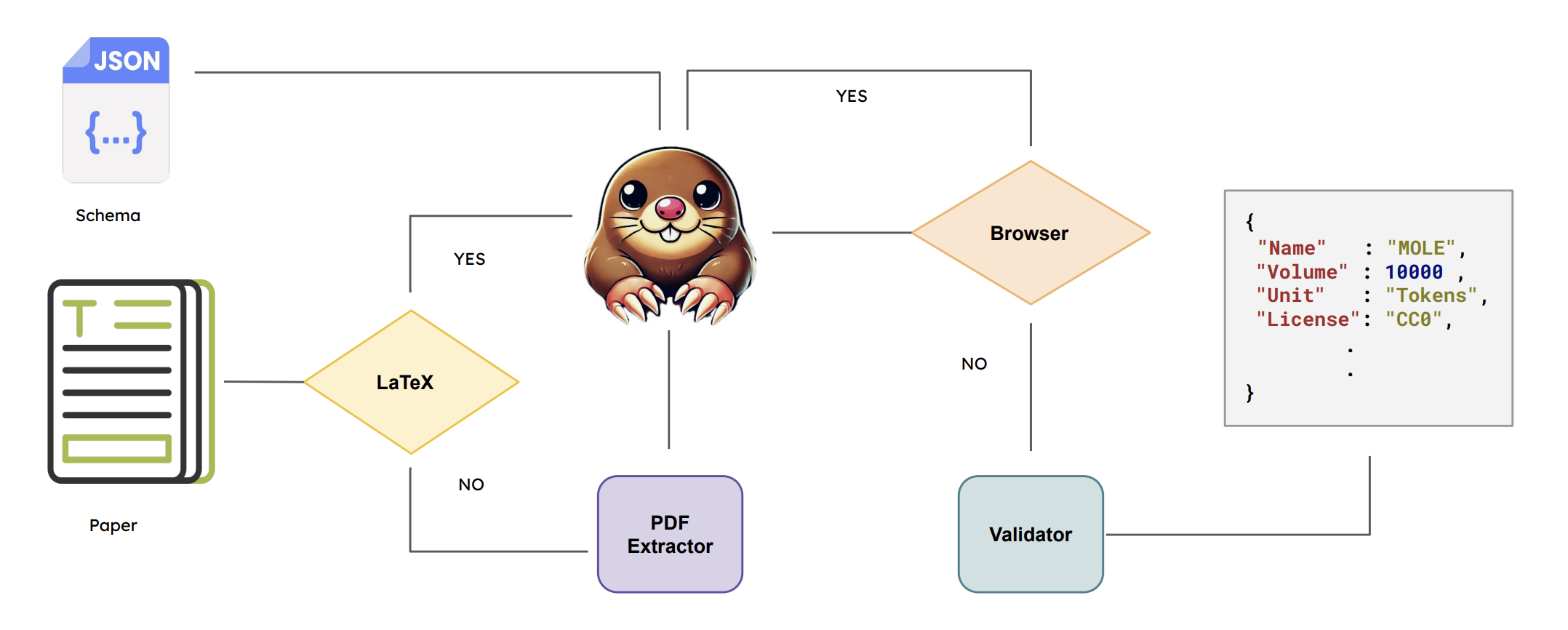

# MOLE: *Metadata Extraction and Validation in Scientific Papers*

MOLE is a dataset for evaluating and validating metadata extracted from scientific papers. The paper can be found [here](https://huggingface.co/papers/2505.19800).

## 📋 Dataset Structure

The main datasets attributes are shown below. Also for earch feature there is binary value `attribute_exist`. The value is 1 if the attribute is retrievable form the paper, otherwise it is 0.

- `Name (str)`: What is the name of the dataset?

- `Subsets (List[Dict[Name, Volume, Unit, Dialect]])`: What are the dialect subsets of this dataset?

- `Link (url)`: What is the link to access the dataset?

- `HF Link (url)`: What is the Huggingface link of the dataset?

- `License (str)`: What is the license of the dataset?

- `Year (date[year])`: What year was the dataset published?

- `Language (str)`: What languages are in the dataset?

- `Dialect (str)`: What is the dialect of the dataset?

- `Domain (List[str])`: What is the source of the dataset?

- `Form (str)`: What is the form of the data?

- `Collection Style (List[str])`: How was this dataset collected?

- `Description (str)`: Write a brief description about the dataset.

- `Volume (float)`: What is the size of the dataset?

- `Unit (str)`: What kind of examples does the dataset include?

- `Ethical Risks (str)`: What is the level of the ethical risks of the dataset?

- `Provider (List[str])`: What entity is the provider of the dataset?

- `Derived From (List[str])`: What datasets were used to create the dataset?

- `Paper Title (str)`: What is the title of the paper?

- `Paper Link (url)`: What is the link to the paper?

- `Script (str)`: What is the script of this dataset?

- `Tokenized (bool)`: Is the dataset tokenized?

- `Host (str)`: What is name of the repository that hosts the dataset?

- `Access (str)`: What is the accessibility of the dataset?

- `Cost (str)`: If the dataset is not free, what is the cost?

- `Test Split (bool)`: Does the dataset contain a train/valid and test split?

- `Tasks (List[str])`: What NLP tasks is this dataset intended for?

- `Venue Title (str)`: What is the venue title of the published paper?

- `Venue Type (str)`: What is the venue type?

- `Venue Name (str)`: What is the full name of the venue that published the paper?

- `Authors (List[str])`: Who are the authors of the paper?

- `Affiliations (List[str])`: What are the affiliations of the authors?

- `Abstract (str)`: What is the abstract of the paper?

## 📁 Loading The Dataset

How to load the dataset

```python

from datasets import load_dataset

dataset = load_dataset('IVUL-KAUST/mole')

```

## 📄 Sample From The Dataset:

A sample for an annotated paper

```python

{

"metadata": {

"Name": "TUNIZI",

"Subsets": [],

"Link": "https://github.com/chaymafourati/TUNIZI-Sentiment-Analysis-Tunisian-Arabizi-Dataset",

"HF Link": "",

"License": "unknown",

"Year": 2020,

"Language": "ar",

"Dialect": "Tunisia",

"Domain": [

"social media"

],

"Form": "text",

"Collection Style": [

"crawling",

"manual curation",

"human annotation"

],

"Description": "TUNIZI is a sentiment analysis dataset of over 9,000 Tunisian Arabizi sentences collected from YouTube comments, preprocessed, and manually annotated by native Tunisian speakers.",

"Volume": 9210.0,

"Unit": "sentences",

"Ethical Risks": "Medium",

"Provider": [

"iCompass"

],

"Derived From": [],

"Paper Title": "TUNIZI: A TUNISIAN ARABIZI SENTIMENT ANALYSIS DATASET",

"Paper Link": "https://arxiv.org/abs/2004.14303",

"Script": "Latin",

"Tokenized": false,

"Host": "GitHub",

"Access": "Free",

"Cost": "",

"Test Split": false,

"Tasks": [

"sentiment analysis"

],

"Venue Title": "International Conference on Learning Representations",

"Venue Type": "conference",

"Venue Name": "International Conference on Learning Representations 2020",

"Authors": [

"Chayma Fourati",

"Abir Messaoudi",

"Hatem Haddad"

],

"Affiliations": [

"iCompass"

],

"Abstract": "On social media, Arabic people tend to express themselves in their own local dialects. More particularly, Tunisians use the informal way called 'Tunisian Arabizi'. Analytical studies seek to explore and recognize online opinions aiming to exploit them for planning and prediction purposes such as measuring the customer satisfaction and establishing sales and marketing strategies. However, analytical studies based on Deep Learning are data hungry. On the other hand, African languages and dialects are considered low resource languages. For instance, to the best of our knowledge, no annotated Tunisian Arabizi dataset exists. In this paper, we introduce TUNIZI as a sentiment analysis Tunisian Arabizi Dataset, collected from social networks, preprocessed for analytical studies and annotated manually by Tunisian native speakers."

},

}

```

## ⛔️ Limitations

The dataset contains 52 annotated papers, it might be limited to truely evaluate LLMs. We are working on increasing the size of the dataset.

## 🔑 License

[Apache 2.0](https://www.apache.org/licenses/LICENSE-2.0).

## Citation

```

@misc{mole,

title={MOLE: Metadata Extraction and Validation in Scientific Papers Using LLMs},

author={Zaid Alyafeai and Maged S. Al-Shaibani and Bernard Ghanem},

year={2025},

eprint={2505.19800},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2505.19800},

}

``` |