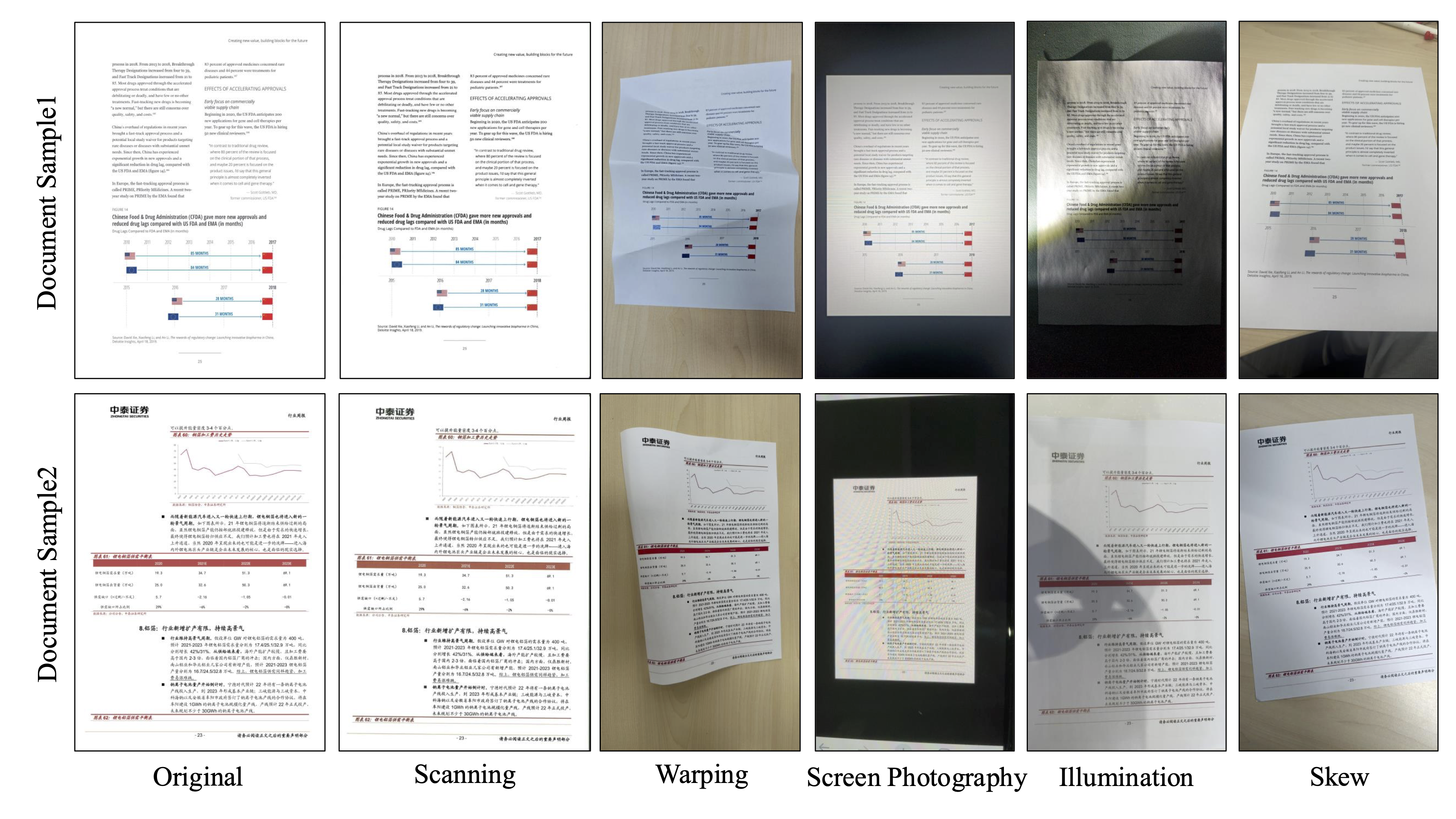

| Model Type | Methods | Parameters | Overall↑ | Text Edit↓ | Formula CDM↑ | Table TEDS↑ | Reading Order Edit↓ |
|---|---|---|---|---|---|---|---|
| Pipeline Tools | Maker-1.8.2 | - | 70.27 | 0.223 | 77.03 | 56.05 | 0.238 |
| | PP-StructureV3 | - | 84.68 | 0.094 | 84.34 | 79.06 | 0.092 |
| General VLMs | GPT-5.2 | - | 84.43 | 0.142 | 85.68 | 81.78 | 0.109 |
| | Qwen2.5-VL-72B | 72B | 86.19 | 0.110 | 86.14 | 83.41 | 0.114 |
| | Gemini-2.5 Pro | - | 89.25 | 0.073 | 87.44 | 87.62 | 0.098 |
| | Qwen3-VL-235B-A22B-Instruct | 235B | 89.43 | 0.059 | 89.01 | 85.19 | 0.066 |
| | Gemini-3 Pro | - | 89.47 | 0.071 | 88.16 | 87.37 | 0.078 |
| Specialized VLMs | Dolphin | 322M | 72.16 | 0.154 | 64.58 | 67.27 | 0.130 |
| | Dolphin-1.5 | 0.3B | 83.39 | 0.097 | 76.25 | 83.65 | 0.090 |
| | MinerU2-VLM | 0.9B | 83.60 | 0.094 | 79.76 | 80.44 | 0.091 |
| | MonkeyOCR-pro-1.2B | 1.9B | 84.64 | 0.123 | 84.17 | 82.13 | 0.145 |
| | MonkeyOCR-3B | 3.7B | 84.65 | 0.100 | 84.16 | 79.81 | 0.143 |
| | Nanonets-OCR-s | 3B | 85.52 | 0.106 | 88.09 | 79.11 | 0.106 |
| | Deepseek-OCR | 3B | 86.17 | 0.078 | 83.59 | 82.69 | 0.085 |
| | dots.ocr | 3B | 86.87 | 0.083 | 83.27 | 85.68 | 0.081 |
| | MonkeyOCR-pro-3B | 3.7B | 86.94 | 0.103 | 86.29 | 84.86 | 0.141 |
| | MinerU2.5 | 1.2B | 90.06 | 0.052 | 88.22 | 87.16 | 0.050 |
| | PaddleOCR-VL | 0.9B | 92.11 | 0.039 | 90.35 | 89.90 | 0.048 |
| | PaddleOCR-VL-1.5 | 0.9B | 93.43 | 0.037 | 93.04 | 90.97 | 0.045 |

| Model Type | Methods | Parameters | Overall↑ | Text Edit↓ | Formula CDM↑ | Table TEDS↑ | Reading Order Edit↓ |
|---|---|---|---|---|---|---|---|
| Pipeline Tools | Maker-1.8.2 | - | 58.98 | 0.349 | 72.71 | 39.08 | 0.390 |
| | PP-StructureV3 | - | 59.34 | 0.376 | 68.22 | 47.40 | 0.261 |
| General VLMs | GPT-5.2 | - | 76.26 | 0.239 | 80.90 | 71.80 | 0.165 |
| | Gemini-2.5 Pro | - | 87.63 | 0.092 | 86.50 | 85.59 | 0.109 |
| | Qwen2.5-VL-72B | 72B | 87.77 | 0.086 | 88.85 | 83.06 | 0.102 |
| | Gemini-3 Pro | - | 88.90 | 0.086 | 88.10 | 87.20 | 0.087 |
| | Qwen3-VL-235B-A22B-Instruct | 235B | 89.99 | 0.051 | 89.06 | 85.95 | 0.064 |
| Specialized VLMs | Dolphin-1.5 | 0.3B | 50.50 | 0.383 | 47.24 | 42.52 | 0.309 |
| | Dolphin | 322M | 60.35 | 0.316 | 61.06 | 51.58 | 0.247 |
| | Deepseek-OCR | 3B | 67.20 | 0.328 | 73.59 | 60.80 | 0.226 |
| | MinerU2-VLM | 0.9B | 73.73 | 0.202 | 77.72 | 63.65 | 0.173 |
| | MonkeyOCR-pro-1.2B | 1.9B | 76.59 | 0.196 | 78.85 | 70.52 | 0.221 |
| | MonkeyOCR-3B | 3.7B | 77.27 | 0.164 | 79.08 | 69.18 | 0.211 |
| | MonkeyOCR-pro-3B | 3.7B | 78.90 | 0.168 | 79.55 | 73.94 | 0.212 |
| | Nanonets-OCR-s | 3B | 83.56 | 0.121 | 86.24 | 76.57 | 0.124 |
| | MinerU2.5 | 1.2B | 83.76 | 0.154 | 85.92 | 80.71 | 0.104 |
| | PaddleOCR-VL | 0.9B | 85.97 | 0.093 | 85.45 | 81.77 | 0.092 |
| | dots.ocr | 3B | 86.01 | 0.087 | 85.03 | 81.74 | 0.093 |
| | PaddleOCR-VL-1.5 | 0.9B | 91.25 | 0.053 | 90.94 | 88.10 | 0.063 |

| Model Type | Methods | Parameters | Overall↑ | Text Edit↓ | Formula CDM↑ | Table TEDS↑ | Reading Order Edit↓ |
|---|---|---|---|---|---|---|---|
| Pipeline Tools | Maker-1.8.2 | - | 63.65 | 0.290 | 72.73 | 47.21 | 0.325 |
| | PP-StructureV3 | - | 66.89 | 0.204 | 73.26 | 47.82 | 0.165 |
| General VLMs | GPT-5.2 | - | 76.75 | 0.208 | 79.27 | 71.73 | 0.148 |
| | Qwen2.5-VL-72B | 72B | 86.48 | 0.100 | 87.46 | 82.00 | 0.102 |
| | Gemini-2.5 Pro | - | 87.11 | 0.103 | 85.30 | 86.31 | 0.117 |
| | Gemini-3 Pro | - | 88.86 | 0.084 | 87.33 | 87.65 | 0.087 |
| | Qwen3-VL-235B-A22B-Instruct | 235B | 89.27 | 0.068 | 88.72 | 85.85 | 0.071 |
| Specialized VLMs | Dolphin | 322M | 64.29 | 0.232 | 58.66 | 57.38 | 0.195 |
| | Dolphin-1.5 | 0.3B | 69.76 | 0.205 | 61.80 | 68.00 | 0.177 |
| | Deepseek-OCR | 3B | 75.31 | 0.220 | 77.68 | 70.26 | 0.169 |
| | MinerU2-VLM | 0.9B | 78.77 | 0.139 | 79.02 | 71.17 | 0.123 |
| | MonkeyOCR-pro-1.2B | 1.9B | 80.24 | 0.148 | 80.78 | 74.74 | 0.179 |
| | MonkeyOCR-3B | 3.7B | 80.71 | 0.122 | 81.33 | 73.04 | 0.177 |
| | MonkeyOCR-pro-3B | 3.7B | 82.44 | 0.124 | 81.55 | 78.13 | 0.177 |
| | PaddleOCR-VL | 0.9B | 82.54 | 0.103 | 83.58 | 74.36 | 0.107 |
| | Nanonets-OCR-s | 3B | 84.86 | 0.112 | 86.65 | 79.09 | 0.117 |
| | dots.ocr | 3B | 87.18 | 0.081 | 85.34 | 84.26 | 0.079 |
| | MinerU2.5 | 1.2B | 89.41 | 0.062 | 87.55 | 86.83 | 0.053 |
| | PaddleOCR-VL-1.5 | 0.9B | 91.76 | 0.050 | 90.88 | 89.38 | 0.059 |

| Model Type | Methods | Parameters | Overall↑ | Text Edit↓ | Formula CDM↑ | Table TEDS↑ | Reading Order Edit↓ |
|---|---|---|---|---|---|---|---|
| Pipeline Tools | Maker-1.8.2 | - | 66.31 | 0.259 | 74.80 | 50.03 | 0.337 |
| | PP-StructureV3 | - | 73.38 | 0.158 | 77.75 | 58.19 | 0.126 |
| General VLMs | GPT-5.2 | - | 80.88 | 0.191 | 84.41 | 77.37 | 0.134 |
| | Qwen2.5-VL-72B | 72B | 87.25 | 0.087 | 86.44 | 84.03 | 0.097 |
| | Gemini-2.5 Pro | - | 87.97 | 0.083 | 86.13 | 86.11 | 0.103 |
| | Qwen3-VL-235B-A22B-Instruct | 235B | 89.27 | 0.060 | 87.81 | 86.05 | 0.070 |
| | Gemini-3 Pro | - | 89.53 | 0.073 | 87.78 | 88.14 | 0.080 |
| Specialized VLMs | Dolphin | 322M | 67.29 | 0.197 | 61.42 | 60.10 | 0.173 |
| | Dolphin-1.5 | 0.3B | 75.61 | 0.159 | 70.04 | 72.69 | 0.133 |
| | Deepseek-OCR | 3B | 78.10 | 0.192 | 81.71 | 71.81 | 0.156 |
| | MinerU2-VLM | 0.9B | 80.51 | 0.135 | 80.72 | 74.29 | 0.123 |
| | MonkeyOCR-pro-1.2B | 1.9B | 82.11 | 0.144 | 82.07 | 78.67 | 0.172 |
| | MonkeyOCR-3B | 3.7B | 83.16 | 0.118 | 83.63 | 77.62 | 0.168 |
| | MonkeyOCR-pro-3B | 3.7B | 84.71 | 0.120 | 84.13 | 82.02 | 0.171 |
| | Nanonets-OCR-s | 3B | 85.01 | 0.099 | 87.94 | 76.96 | 0.112 |
| | dots.ocr | 3B | 87.57 | 0.068 | 85.07 | 84.44 | 0.076 |
| | MinerU2.5 | 1.2B | 89.57 | 0.065 | 88.36 | 86.87 | 0.062 |
| | PaddleOCR-VL | 0.9B | 89.61 | 0.049 | 86.66 | 87.02 | 0.055 |
| | PaddleOCR-VL-1.5 | 0.9B | 92.16 | 0.046 | 91.80 | 89.33 | 0.051 |

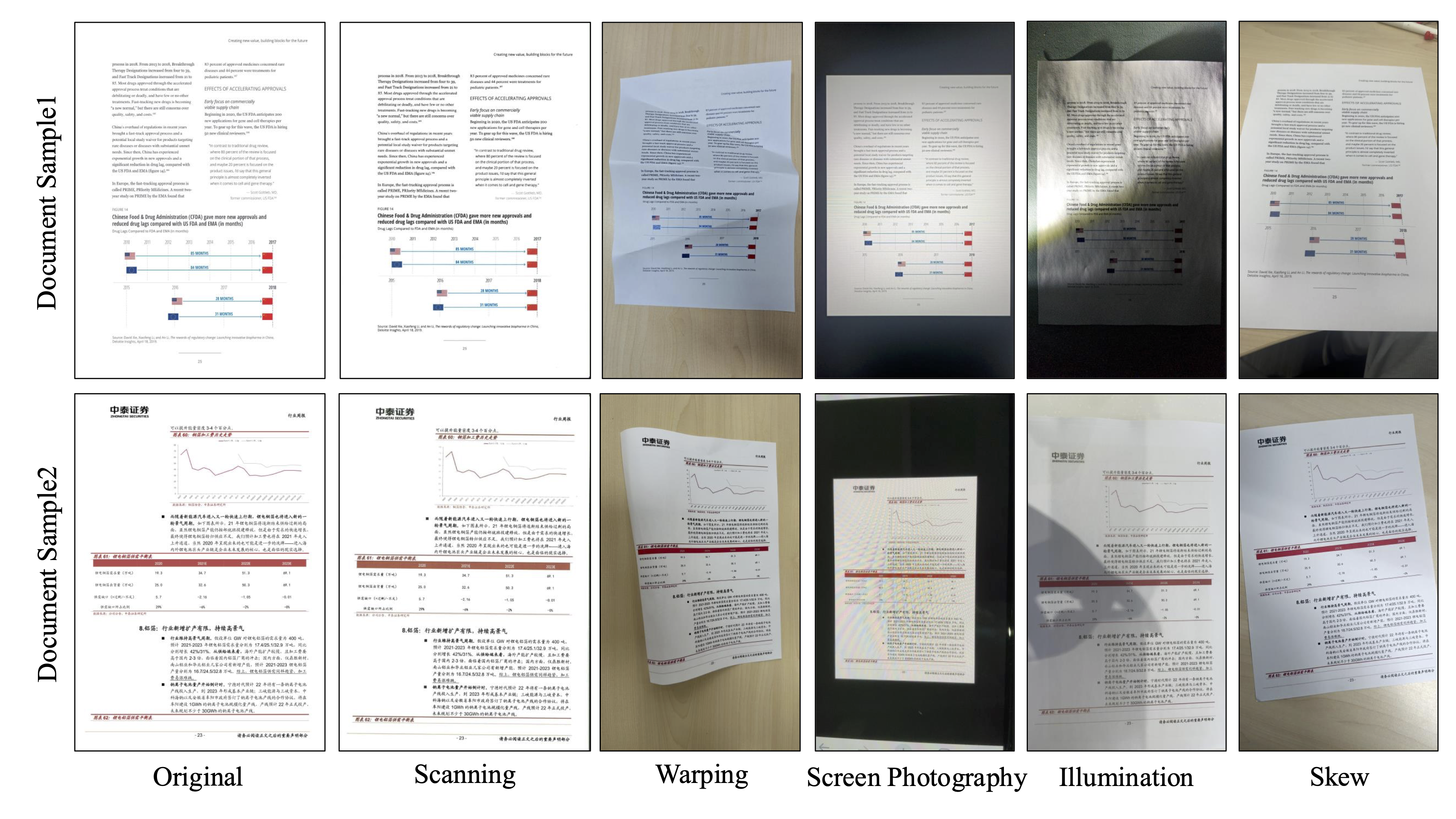

| Model Type | Methods | Parameters | Overall↑ | Text Edit↓ | Formula CDM↑ | Table TEDS↑ | Reading Order Edit↓ |
|---|---|---|---|---|---|---|---|
| Pipeline Tools | PP-StructureV3 | - | 37.98 | 0.557 | 44.37 | 25.27 | 0.417 |
| | Maker-1.8.2 | - | 41.27 | 0.536 | 60.16 | 17.23 | 0.543 |
| General VLMs | GPT-5.2 | - | 75.00 | 0.257 | 80.27 | 70.47 | 0.167 |
| | Qwen3-VL-235B-A22B-Instruct | 235B | 86.56 | 0.077 | 83.96 | 83.41 | 0.091 |
| | Qwen2.5-VL-72B | 72B | 86.90 | 0.077 | 87.26 | 81.14 | 0.091 |
| | Gemini-2.5 Pro | - | 89.07 | 0.077 | 87.89 | 86.99 | 0.104 |
| | Gemini-3 Pro | - | 89.45 | 0.080 | 88.33 | 88.06 | 0.092 |
| Specialized VLMs | Dolphin-1.5 | 0.3B | 28.16 | 0.553 | 25.60 | 14.18 | 0.419 |
| | Dolphin | 322M | 44.83 | 0.500 | 51.34 | 33.22 | 0.321 |
| | MonkeyOCR-pro-1.2B | 1.9B | 62.18 | 0.292 | 66.25 | 49.46 | 0.317 |
| | Deepseek-OCR | 3B | 63.01 | 0.327 | 73.27 | 48.48 | 0.231 |
| | MonkeyOCR-pro-3B | 3.7B | 64.47 | 0.251 | 69.06 | 49.42 | 0.301 |
| | MonkeyOCR-3B | 3.7B | 65.67 | 0.248 | 69.23 | 52.59 | 0.300 |
| | MinerU2-VLM | 0.9B | 68.16 | 0.230 | 74.45 | 53.07 | 0.191 |
| | MinerU2.5 | 1.2B | 75.24 | 0.305 | 81.78 | 74.39 | 0.151 |
| | PaddleOCR-VL | 0.9B | 77.47 | 0.192 | 78.81 | 72.83 | 0.193 |
| | Nanonets-OCR-s | 3B | 81.98 | 0.121 | 85.78 | 72.22 | 0.133 |
| | dots.ocr | 3B | 84.27 | 0.087 | 85.73 | 75.74 | 0.094 |
| | PaddleOCR-VL-1.5 | 0.9B | 91.66 | 0.047 | 91.00 | 88.69 | 0.061 |