instance_id | text | repo | base_commit | problem_statement | hints_text | created_at | patch | test_patch | version | FAIL_TO_PASS | PASS_TO_PASS | environment_setup_commit

astropy__astropy-12318

|

You will be provided with a partial code base and an issue statement explaining a problem to resolve.

<issue>

BlackBody bolometric flux is wrong if scale has units of dimensionless_unscaled

The `astropy.modeling.models.BlackBody` class returns the wrong bolometric flux if the `scale` argument is passed as a Quantity with `dimensionless_unscaled` units, but the correct bolometric flux if `scale` is simply a float.

### Description


### Expected behavior

Expected output from sample code:

```
4.823870774433646e-16 erg / (cm2 s)
4.823870774433646e-16 erg / (cm2 s)
```

### Actual behavior

Actual output from sample code:

```
4.5930032795393893e+33 erg / (cm2 s)
4.823870774433646e-16 erg / (cm2 s)
```

### Steps to Reproduce

Sample code:

```python
from astropy.modeling.models import BlackBody
from astropy import units as u
import numpy as np

T = 3000 * u.K
r = 1e14 * u.cm
DL = 100 * u.Mpc
scale = np.pi * (r / DL)**2

print(BlackBody(temperature=T, scale=scale).bolometric_flux)
print(BlackBody(temperature=T, scale=scale.to_value(u.dimensionless_unscaled)).bolometric_flux)
```

### System Details

```pycon
>>> import numpy; print("Numpy", numpy.__version__)
Numpy 1.20.2
>>> import astropy; print("astropy", astropy.__version__)
astropy 4.3.dev758+g1ed1d945a
>>> import scipy; print("Scipy", scipy.__version__)
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
ModuleNotFoundError: No module named 'scipy'
>>> import matplotlib; print("Matplotlib", matplotlib.__version__)
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
ModuleNotFoundError: No module named 'matplotlib'
```

</issue>

<code>

[start of README.rst]

1 =======

2 Astropy

3 =======

4

5 |Actions Status| |CircleCI Status| |Azure Status| |Coverage Status| |PyPI Status| |Documentation Status| |Zenodo|

6

7 The Astropy Project (http://astropy.org/) is a community effort to develop a

8 single core package for Astronomy in Python and foster interoperability between

9 Python astronomy packages. This repository contains the core package which is

10 intended to contain much of the core functionality and some common tools needed

11 for performing astronomy and astrophysics with Python.

12

13 Releases are `registered on PyPI <https://pypi.org/project/astropy>`_,

14 and development is occurring at the

15 `project's GitHub page <http://github.com/astropy/astropy>`_.

16

17 For installation instructions, see the `online documentation <https://docs.astropy.org/>`_

18 or `docs/install.rst <docs/install.rst>`_ in this source distribution.

19

20 Contributing Code, Documentation, or Feedback

21 ---------------------------------------------

22

23 The Astropy Project is made both by and for its users, so we welcome and

24 encourage contributions of many kinds. Our goal is to keep this a positive,

25 inclusive, successful, and growing community by abiding with the

26 `Astropy Community Code of Conduct <http://www.astropy.org/about.html#codeofconduct>`_.

27

28 More detailed information on contributing to the project or submitting feedback

29 can be found on the `contributions <http://www.astropy.org/contribute.html>`_

30 page. A `summary of contribution guidelines <CONTRIBUTING.md>`_ can also be

31 used as a quick reference when you are ready to start writing or validating

32 code for submission.

33

34 Supporting the Project

35 ----------------------

36

37 |NumFOCUS| |Donate|

38

39 The Astropy Project is sponsored by NumFOCUS, a 501(c)(3) nonprofit in the

40 United States. You can donate to the project by using the link above, and this

41 donation will support our mission to promote sustainable, high-level code base

42 for the astronomy community, open code development, educational materials, and

43 reproducible scientific research.

44

45 License

46 -------

47

48 Astropy is licensed under a 3-clause BSD style license - see the

49 `LICENSE.rst <LICENSE.rst>`_ file.

50

51 .. |Actions Status| image:: https://github.com/astropy/astropy/workflows/CI/badge.svg

52 :target: https://github.com/astropy/astropy/actions

53 :alt: Astropy's GitHub Actions CI Status

54

55 .. |CircleCI Status| image:: https://img.shields.io/circleci/build/github/astropy/astropy/main?logo=circleci&label=CircleCI

56 :target: https://circleci.com/gh/astropy/astropy

57 :alt: Astropy's CircleCI Status

58

59 .. |Azure Status| image:: https://dev.azure.com/astropy-project/astropy/_apis/build/status/astropy.astropy?repoName=astropy%2Fastropy&branchName=main

60 :target: https://dev.azure.com/astropy-project/astropy

61 :alt: Astropy's Azure Pipelines Status

62

63 .. |Coverage Status| image:: https://codecov.io/gh/astropy/astropy/branch/main/graph/badge.svg

64 :target: https://codecov.io/gh/astropy/astropy

65 :alt: Astropy's Coverage Status

66

67 .. |PyPI Status| image:: https://img.shields.io/pypi/v/astropy.svg

68 :target: https://pypi.org/project/astropy

69 :alt: Astropy's PyPI Status

70

71 .. |Zenodo| image:: https://zenodo.org/badge/DOI/10.5281/zenodo.4670728.svg

72 :target: https://doi.org/10.5281/zenodo.4670728

73 :alt: Zenodo DOI

74

75 .. |Documentation Status| image:: https://img.shields.io/readthedocs/astropy/latest.svg?logo=read%20the%20docs&logoColor=white&label=Docs&version=stable

76 :target: https://docs.astropy.org/en/stable/?badge=stable

77 :alt: Documentation Status

78

79 .. |NumFOCUS| image:: https://img.shields.io/badge/powered%20by-NumFOCUS-orange.svg?style=flat&colorA=E1523D&colorB=007D8A

80 :target: http://numfocus.org

81 :alt: Powered by NumFOCUS

82

83 .. |Donate| image:: https://img.shields.io/badge/Donate-to%20Astropy-brightgreen.svg

84 :target: https://numfocus.salsalabs.org/donate-to-astropy/index.html

85

86

87 If you locally cloned this repo before 7 Apr 2021

88 -------------------------------------------------

89

90 The primary branch for this repo has been transitioned from ``master`` to

91 ``main``. If you have a local clone of this repository and want to keep your

92 local branch in sync with this repo, you'll need to do the following in your

93 local clone from your terminal::

94

95 git fetch --all --prune

96 # you can stop here if you don't use your local "master"/"main" branch

97 git branch -m master main

98 git branch -u origin/main main

99

100 If you are using a GUI to manage your repos you'll have to find the equivalent

101 commands as it's different for different programs. Alternatively, you can just

102 delete your local clone and re-clone!

103

[end of README.rst]

[start of astropy/modeling/physical_models.py]

1 # Licensed under a 3-clause BSD style license - see LICENSE.rst

2 """

3 Models that have physical origins.

4 """

5 # pylint: disable=invalid-name, no-member

6

7 import warnings

8

9 import numpy as np

10

11 from astropy import constants as const

12 from astropy import units as u

13 from astropy.utils.exceptions import AstropyUserWarning

14 from .core import Fittable1DModel

15 from .parameters import Parameter, InputParameterError

16

17 __all__ = ["BlackBody", "Drude1D", "Plummer1D", "NFW"]

18

19

20 class BlackBody(Fittable1DModel):

21 """

22 Blackbody model using the Planck function.

23

24 Parameters

25 ----------

26 temperature : `~astropy.units.Quantity` ['temperature']

27 Blackbody temperature.

28

29 scale : float or `~astropy.units.Quantity` ['dimensionless']

30 Scale factor

31

32 Notes

33 -----

34

35 Model formula:

36

37 .. math:: B_{\\nu}(T) = A \\frac{2 h \\nu^{3} / c^{2}}{exp(h \\nu / k T) - 1}

38

39 Examples

40 --------

41 >>> from astropy.modeling import models

42 >>> from astropy import units as u

43 >>> bb = models.BlackBody(temperature=5000*u.K)

44 >>> bb(6000 * u.AA) # doctest: +FLOAT_CMP

45 <Quantity 1.53254685e-05 erg / (cm2 Hz s sr)>

46

47 .. plot::

48 :include-source:

49

50 import numpy as np

51 import matplotlib.pyplot as plt

52

53 from astropy.modeling.models import BlackBody

54 from astropy import units as u

55 from astropy.visualization import quantity_support

56

57 bb = BlackBody(temperature=5778*u.K)

58 wav = np.arange(1000, 110000) * u.AA

59 flux = bb(wav)

60

61 with quantity_support():

62 plt.figure()

63 plt.semilogx(wav, flux)

64 plt.axvline(bb.nu_max.to(u.AA, equivalencies=u.spectral()).value, ls='--')

65 plt.show()

66 """

67

68 # We parametrize this model with a temperature and a scale.

69 temperature = Parameter(default=5000.0, min=0, unit=u.K, description="Blackbody temperature")

70 scale = Parameter(default=1.0, min=0, description="Scale factor")

71

72 # We allow values without units to be passed when evaluating the model, and

73 # in this case the input x values are assumed to be frequencies in Hz.

74 _input_units_allow_dimensionless = True

75

76 # We enable the spectral equivalency by default for the spectral axis

77 input_units_equivalencies = {'x': u.spectral()}

78

79 def evaluate(self, x, temperature, scale):

80 """Evaluate the model.

81

82 Parameters

83 ----------

84 x : float, `~numpy.ndarray`, or `~astropy.units.Quantity` ['frequency']

85 Frequency at which to compute the blackbody. If no units are given,

86 this defaults to Hz.

87

88 temperature : float, `~numpy.ndarray`, or `~astropy.units.Quantity`

89 Temperature of the blackbody. If no units are given, this defaults

90 to Kelvin.

91

92 scale : float, `~numpy.ndarray`, or `~astropy.units.Quantity` ['dimensionless']

93 Desired scale for the blackbody.

94

95 Returns

96 -------

97 y : number or ndarray

98 Blackbody spectrum. The units are determined from the units of

99 ``scale``.

100

101 .. note::

102

103 Use `numpy.errstate` to suppress Numpy warnings, if desired.

104

105 .. warning::

106

107 Output values might contain ``nan`` and ``inf``.

108

109 Raises

110 ------

111 ValueError

112 Invalid temperature.

113

114 ZeroDivisionError

115 Wavelength is zero (when converting to frequency).

116 """

117 if not isinstance(temperature, u.Quantity):

118 in_temp = u.Quantity(temperature, u.K)

119 else:

120 in_temp = temperature

121

122 # Convert to units for calculations, also force double precision

123 with u.add_enabled_equivalencies(u.spectral() + u.temperature()):

124 freq = u.Quantity(x, u.Hz, dtype=np.float64)

125 temp = u.Quantity(in_temp, u.K)

126

127 # check the units of scale and setup the output units

128 bb_unit = u.erg / (u.cm ** 2 * u.s * u.Hz * u.sr) # default unit

129 # use the scale that was used at initialization for determining the units to return

130 # to support returning the right units when fitting where units are stripped

131 if hasattr(self.scale, "unit") and self.scale.unit is not None:

132 # check that the units on scale are covertable to surface brightness units

133 if not self.scale.unit.is_equivalent(bb_unit, u.spectral_density(x)):

134 raise ValueError(

135 f"scale units not surface brightness: {self.scale.unit}"

136 )

137 # use the scale passed to get the value for scaling

138 if hasattr(scale, "unit"):

139 mult_scale = scale.value

140 else:

141 mult_scale = scale

142 bb_unit = self.scale.unit

143 else:

144 mult_scale = scale

145

146 # Check if input values are physically possible

147 if np.any(temp < 0):

148 raise ValueError(f"Temperature should be positive: {temp}")

149 if not np.all(np.isfinite(freq)) or np.any(freq <= 0):

150 warnings.warn(

151 "Input contains invalid wavelength/frequency value(s)",

152 AstropyUserWarning,

153 )

154

155 log_boltz = const.h * freq / (const.k_B * temp)

156 boltzm1 = np.expm1(log_boltz)

157

158 # Calculate blackbody flux

159 bb_nu = 2.0 * const.h * freq ** 3 / (const.c ** 2 * boltzm1) / u.sr

160

161 y = mult_scale * bb_nu.to(bb_unit, u.spectral_density(freq))

162

163 # If the temperature parameter has no unit, we should return a unitless

164 # value. This occurs for instance during fitting, since we drop the

165 # units temporarily.

166 if hasattr(temperature, "unit"):

167 return y

168 return y.value

169

170 @property

171 def input_units(self):

172 # The input units are those of the 'x' value, which should always be

173 # Hz. Because we do this, and because input_units_allow_dimensionless

174 # is set to True, dimensionless values are assumed to be in Hz.

175 return {self.inputs[0]: u.Hz}

176

177 def _parameter_units_for_data_units(self, inputs_unit, outputs_unit):

178 return {"temperature": u.K}

179

180 @property

181 def bolometric_flux(self):

182 """Bolometric flux."""

183 # bolometric flux in the native units of the planck function

184 native_bolflux = (

185 self.scale.value * const.sigma_sb * self.temperature ** 4 / np.pi

186 )

187 # return in more "astro" units

188 return native_bolflux.to(u.erg / (u.cm ** 2 * u.s))

189

190 @property

191 def lambda_max(self):

192 """Peak wavelength when the curve is expressed as power density."""

193 return const.b_wien / self.temperature

194

195 @property

196 def nu_max(self):

197 """Peak frequency when the curve is expressed as power density."""

198 return 2.8214391 * const.k_B * self.temperature / const.h

199

200

201 class Drude1D(Fittable1DModel):

202 """

203 Drude model based one the behavior of electons in materials (esp. metals).

204

205 Parameters

206 ----------

207 amplitude : float

208 Peak value

209 x_0 : float

210 Position of the peak

211 fwhm : float

212 Full width at half maximum

213

214 Model formula:

215

216 .. math:: f(x) = A \\frac{(fwhm/x_0)^2}{((x/x_0 - x_0/x)^2 + (fwhm/x_0)^2}

217

218 Examples

219 --------

220

221 .. plot::

222 :include-source:

223

224 import numpy as np

225 import matplotlib.pyplot as plt

226

227 from astropy.modeling.models import Drude1D

228

229 fig, ax = plt.subplots()

230

231 # generate the curves and plot them

232 x = np.arange(7.5 , 12.5 , 0.1)

233

234 dmodel = Drude1D(amplitude=1.0, fwhm=1.0, x_0=10.0)

235 ax.plot(x, dmodel(x))

236

237 ax.set_xlabel('x')

238 ax.set_ylabel('F(x)')

239

240 plt.show()

241 """

242

243 amplitude = Parameter(default=1.0, description="Peak Value")

244 x_0 = Parameter(default=1.0, description="Position of the peak")

245 fwhm = Parameter(default=1.0, description="Full width at half maximum")

246

247 @staticmethod

248 def evaluate(x, amplitude, x_0, fwhm):

249 """

250 One dimensional Drude model function

251 """

252 return (

253 amplitude

254 * ((fwhm / x_0) ** 2)

255 / ((x / x_0 - x_0 / x) ** 2 + (fwhm / x_0) ** 2)

256 )

257

258 @staticmethod

259 def fit_deriv(x, amplitude, x_0, fwhm):

260 """

261 Drude1D model function derivatives.

262 """

263 d_amplitude = (fwhm / x_0) ** 2 / ((x / x_0 - x_0 / x) ** 2 + (fwhm / x_0) ** 2)

264 d_x_0 = (

265 -2

266 * amplitude

267 * d_amplitude

268 * (

269 (1 / x_0)

270 + d_amplitude

271 * (x_0 ** 2 / fwhm ** 2)

272 * (

273 (-x / x_0 - 1 / x) * (x / x_0 - x_0 / x)

274 - (2 * fwhm ** 2 / x_0 ** 3)

275 )

276 )

277 )

278 d_fwhm = (2 * amplitude * d_amplitude / fwhm) * (1 - d_amplitude)

279 return [d_amplitude, d_x_0, d_fwhm]

280

281 @property

282 def input_units(self):

283 if self.x_0.unit is None:

284 return None

285 return {self.inputs[0]: self.x_0.unit}

286

287 def _parameter_units_for_data_units(self, inputs_unit, outputs_unit):

288 return {

289 "x_0": inputs_unit[self.inputs[0]],

290 "fwhm": inputs_unit[self.inputs[0]],

291 "amplitude": outputs_unit[self.outputs[0]],

292 }

293

294 @property

295 def return_units(self):

296 if self.amplitude.unit is None:

297 return None

298 return {self.outputs[0]: self.amplitude.unit}

299

300 @x_0.validator

301 def x_0(self, val):

302 """ Ensure `x_0` is not 0."""

303 if np.any(val == 0):

304 raise InputParameterError("0 is not an allowed value for x_0")

305

306 def bounding_box(self, factor=50):

307 """Tuple defining the default ``bounding_box`` limits,

308 ``(x_low, x_high)``.

309

310 Parameters

311 ----------

312 factor : float

313 The multiple of FWHM used to define the limits.

314 """

315 x0 = self.x_0

316 dx = factor * self.fwhm

317

318 return (x0 - dx, x0 + dx)

319

320

321 class Plummer1D(Fittable1DModel):

322 r"""One dimensional Plummer density profile model.

323

324 Parameters

325 ----------

326 mass : float

327 Total mass of cluster.

328 r_plum : float

329 Scale parameter which sets the size of the cluster core.

330

331 Notes

332 -----

333 Model formula:

334

335 .. math::

336

337 \rho(r)=\frac{3M}{4\pi a^3}(1+\frac{r^2}{a^2})^{-5/2}

338

339 References

340 ----------

341 .. [1] https://ui.adsabs.harvard.edu/abs/1911MNRAS..71..460P

342 """

343

344 mass = Parameter(default=1.0, description="Total mass of cluster")

345 r_plum = Parameter(default=1.0, description="Scale parameter which sets the size of the cluster core")

346

347 @staticmethod

348 def evaluate(x, mass, r_plum):

349 """

350 Evaluate plummer density profile model.

351 """

352 return (3*mass)/(4 * np.pi * r_plum**3) * (1+(x/r_plum)**2)**(-5/2)

353

354 @staticmethod

355 def fit_deriv(x, mass, r_plum):

356 """

357 Plummer1D model derivatives.

358 """

359 d_mass = 3 / ((4*np.pi*r_plum**3) * (((x/r_plum)**2 + 1)**(5/2)))

360 d_r_plum = (6*mass*x**2-9*mass*r_plum**2) / ((4*np.pi * r_plum**6) *

361 (1+(x/r_plum)**2)**(7/2))

362 return [d_mass, d_r_plum]

363

364 @property

365 def input_units(self):

366 if self.mass.unit is None and self.r_plum.unit is None:

367 return None

368 else:

369 return {self.inputs[0]: self.r_plum.unit}

370

371 def _parameter_units_for_data_units(self, inputs_unit, outputs_unit):

372 return {'mass': outputs_unit[self.outputs[0]] * inputs_unit[self.inputs[0]] ** 3,

373 'r_plum': inputs_unit[self.inputs[0]]}

374

375

376 class NFW(Fittable1DModel):

377 r"""

378 Navarro–Frenk–White (NFW) profile - model for radial distribution of dark matter.

379

380 Parameters

381 ----------

382 mass : float or `~astropy.units.Quantity` ['mass']

383 Mass of NFW peak within specified overdensity radius.

384 concentration : float

385 Concentration of the NFW profile.

386 redshift : float

387 Redshift of the NFW profile.

388 massfactor : tuple or str

389 Mass overdensity factor and type for provided profiles:

390 Tuple version:

391 ("virial",) : virial radius

392

393 ("critical", N) : radius where density is N times that of the critical density

394

395 ("mean", N) : radius where density is N times that of the mean density

396

397 String version:

398 "virial" : virial radius

399

400 "Nc" : radius where density is N times that of the critical density (e.g. "200c")

401

402 "Nm" : radius where density is N times that of the mean density (e.g. "500m")

403 cosmo : :class:`~astropy.cosmology.Cosmology`

404 Background cosmology for density calculation. If None, the default cosmology will be used.

405

406 Notes

407 -----

408

409 Model formula:

410

411 .. math:: \rho(r)=\frac{\delta_c\rho_{c}}{r/r_s(1+r/r_s)^2}

412

413 References

414 ----------

415 .. [1] https://arxiv.org/pdf/astro-ph/9508025

416 .. [2] https://en.wikipedia.org/wiki/Navarro%E2%80%93Frenk%E2%80%93White_profile

417 .. [3] https://en.wikipedia.org/wiki/Virial_mass

418 """

419

420 # Model Parameters

421

422 # NFW Profile mass

423 mass = Parameter(default=1.0, min=1.0, unit=u.M_sun,

424 description="Peak mass within specified overdensity radius")

425

426 # NFW profile concentration

427 concentration = Parameter(default=1.0, min=1.0, description="Concentration")

428

429 # NFW Profile redshift

430 redshift = Parameter(default=0.0, min=0.0, description="Redshift")

431

432 # We allow values without units to be passed when evaluating the model, and

433 # in this case the input r values are assumed to be lengths / positions in kpc.

434 _input_units_allow_dimensionless = True

435

436 def __init__(self, mass=u.Quantity(mass.default, mass.unit),

437 concentration=concentration.default, redshift=redshift.default,

438 massfactor=("critical", 200), cosmo=None, **kwargs):

439 # Set default cosmology

440 if cosmo is None:

441 # LOCAL

442 from astropy.cosmology import default_cosmology

443

444 cosmo = default_cosmology.get()

445

446 # Set mass overdensity type and factor

447 self._density_delta(massfactor, cosmo, redshift)

448

449 # Establish mass units for density calculation (default solar masses)

450 if not isinstance(mass, u.Quantity):

451 in_mass = u.Quantity(mass, u.M_sun)

452 else:

453 in_mass = mass

454

455 # Obtain scale radius

456 self._radius_s(mass, concentration)

457

458 # Obtain scale density

459 self._density_s(mass, concentration)

460

461 super().__init__(mass=in_mass, concentration=concentration, redshift=redshift, **kwargs)

462

463 def evaluate(self, r, mass, concentration, redshift):

464 """

465 One dimensional NFW profile function

466

467 Parameters

468 ----------

469 r : float or `~astropy.units.Quantity` ['length']

470 Radial position of density to be calculated for the NFW profile.

471 mass : float or `~astropy.units.Quantity` ['mass']

472 Mass of NFW peak within specified overdensity radius.

473 concentration : float

474 Concentration of the NFW profile.

475 redshift : float

476 Redshift of the NFW profile.

477

478 Returns

479 -------

480 density : float or `~astropy.units.Quantity` ['density']

481 NFW profile mass density at location ``r``. The density units are:

482 [``mass`` / ``r`` ^3]

483

484 Notes

485 -----

486 .. warning::

487

488 Output values might contain ``nan`` and ``inf``.

489 """

490 # Create radial version of input with dimension

491 if hasattr(r, "unit"):

492 in_r = r

493 else:

494 in_r = u.Quantity(r, u.kpc)

495

496 # Define reduced radius (r / r_{\\rm s})

497 # also update scale radius

498 radius_reduced = in_r / self._radius_s(mass, concentration).to(in_r.unit)

499

500 # Density distribution

501 # \rho (r)=\frac{\rho_0}{\frac{r}{R_s}\left(1~+~\frac{r}{R_s}\right)^2}

502 # also update scale density

503 density = self._density_s(mass, concentration) / (radius_reduced *

504 (u.Quantity(1.0) + radius_reduced) ** 2)

505

506 if hasattr(mass, "unit"):

507 return density

508 else:

509 return density.value

510

511 def _density_delta(self, massfactor, cosmo, redshift):

512 """

513 Calculate density delta.

514 """

515 # Set mass overdensity type and factor

516 if isinstance(massfactor, tuple):

517 # Tuple options

518 # ("virial") : virial radius

519 # ("critical", N) : radius where density is N that of the critical density

520 # ("mean", N) : radius where density is N that of the mean density

521 if massfactor[0].lower() == "virial":

522 # Virial Mass

523 delta = None

524 masstype = massfactor[0].lower()

525 elif massfactor[0].lower() == "critical":

526 # Critical or Mean Overdensity Mass

527 delta = float(massfactor[1])

528 masstype = 'c'

529 elif massfactor[0].lower() == "mean":

530 # Critical or Mean Overdensity Mass

531 delta = float(massfactor[1])

532 masstype = 'm'

533 else:

534 raise ValueError("Massfactor '" + str(massfactor[0]) + "' not one of 'critical', "

535 "'mean', or 'virial'")

536 else:

537 try:

538 # String options

539 # virial : virial radius

540 # Nc : radius where density is N that of the critical density

541 # Nm : radius where density is N that of the mean density

542 if massfactor.lower() == "virial":

543 # Virial Mass

544 delta = None

545 masstype = massfactor.lower()

546 elif massfactor[-1].lower() == 'c' or massfactor[-1].lower() == 'm':

547 # Critical or Mean Overdensity Mass

548 delta = float(massfactor[0:-1])

549 masstype = massfactor[-1].lower()

550 else:

551 raise ValueError("Massfactor " + str(massfactor) + " string not of the form "

552 "'#m', '#c', or 'virial'")

553 except (AttributeError, TypeError):

554 raise TypeError("Massfactor " + str(

555 massfactor) + " not a tuple or string")

556

557 # Set density from masstype specification

558 if masstype == "virial":

559 Om_c = cosmo.Om(redshift) - 1.0

560 d_c = 18.0 * np.pi ** 2 + 82.0 * Om_c - 39.0 * Om_c ** 2

561 self.density_delta = d_c * cosmo.critical_density(redshift)

562 elif masstype == 'c':

563 self.density_delta = delta * cosmo.critical_density(redshift)

564 elif masstype == 'm':

565 self.density_delta = delta * cosmo.critical_density(redshift) * cosmo.Om(redshift)

566

567 return self.density_delta

568

569 @staticmethod

570 def A_NFW(y):

571 r"""

572 Dimensionless volume integral of the NFW profile, used as an intermediate step in some

573 calculations for this model.

574

575 Notes

576 -----

577

578 Model formula:

579

580 .. math:: A_{NFW} = [\ln(1+y) - \frac{y}{1+y}]

581 """

582 return np.log(1.0 + y) - (y / (1.0 + y))

583

584 def _density_s(self, mass, concentration):

585 """

586 Calculate scale density of the NFW profile.

587 """

588 # Enforce default units

589 if not isinstance(mass, u.Quantity):

590 in_mass = u.Quantity(mass, u.M_sun)

591 else:

592 in_mass = mass

593

594 # Calculate scale density

595 # M_{200} = 4\pi \rho_{s} R_{s}^3 \left[\ln(1+c) - \frac{c}{1+c}\right].

596 self.density_s = in_mass / (4.0 * np.pi * self._radius_s(in_mass, concentration) ** 3 *

597 self.A_NFW(concentration))

598

599 return self.density_s

600

601 @property

602 def rho_scale(self):

603 r"""

604 Scale density of the NFW profile. Often written in the literature as :math:`\rho_s`

605 """

606 return self.density_s

607

608 def _radius_s(self, mass, concentration):

609 """

610 Calculate scale radius of the NFW profile.

611 """

612 # Enforce default units

613 if not isinstance(mass, u.Quantity):

614 in_mass = u.Quantity(mass, u.M_sun)

615 else:

616 in_mass = mass

617

618 # Delta Mass is related to delta radius by

619 # M_{200}=\frac{4}{3}\pi r_{200}^3 200 \rho_{c}

620 # And delta radius is related to the NFW scale radius by

621 # c = R / r_{\\rm s}

622 self.radius_s = (((3.0 * in_mass) / (4.0 * np.pi * self.density_delta)) ** (

623 1.0 / 3.0)) / concentration

624

625 # Set radial units to kiloparsec by default (unit will be rescaled by units of radius

626 # in evaluate)

627 return self.radius_s.to(u.kpc)

628

629 @property

630 def r_s(self):

631 """

632 Scale radius of the NFW profile.

633 """

634 return self.radius_s

635

636 @property

637 def r_virial(self):

638 """

639 Mass factor defined virial radius of the NFW profile (R200c for M200c, Rvir for Mvir, etc.).

640 """

641 return self.r_s * self.concentration

642

643 @property

644 def r_max(self):

645 """

646 Radius of maximum circular velocity.

647 """

648 return self.r_s * 2.16258

649

650 @property

651 def v_max(self):

652 """

653 Maximum circular velocity.

654 """

655 return self.circular_velocity(self.r_max)

656

657 def circular_velocity(self, r):

658 r"""

659 Circular velocities of the NFW profile.

660

661 Parameters

662 ----------

663 r : float or `~astropy.units.Quantity` ['length']

664 Radial position of velocity to be calculated for the NFW profile.

665

666 Returns

667 -------

668 velocity : float or `~astropy.units.Quantity` ['speed']

669 NFW profile circular velocity at location ``r``. The velocity units are:

670 [km / s]

671

672 Notes

673 -----

674

675 Model formula:

676

677 .. math:: v_{circ}(r)^2 = \frac{1}{x}\frac{\ln(1+cx)-(cx)/(1+cx)}{\ln(1+c)-c/(1+c)}

678

679 .. math:: x = r/r_s

680

681 .. warning::

682

683 Output values might contain ``nan`` and ``inf``.

684 """

685 # Enforce default units (if parameters are without units)

686 if hasattr(r, "unit"):

687 in_r = r

688 else:

689 in_r = u.Quantity(r, u.kpc)

690

691 # Mass factor defined velocity (i.e. V200c for M200c, Rvir for Mvir)

692 v_profile = np.sqrt(self.mass * const.G.to(in_r.unit**3 / (self.mass.unit * u.s**2)) /

693 self.r_virial)

694

695 # Define reduced radius (r / r_{\\rm s})

696 reduced_radius = in_r / self.r_virial.to(in_r.unit)

697

698 # Circular velocity given by:

699 # v^2=\frac{1}{x}\frac{\ln(1+cx)-(cx)/(1+cx)}{\ln(1+c)-c/(1+c)}

700 # where x=r/r_{200}

701 velocity = np.sqrt((v_profile**2 * self.A_NFW(self.concentration * reduced_radius)) /

702 (reduced_radius * self.A_NFW(self.concentration)))

703

704 return velocity.to(u.km / u.s)

705

706 @property

707 def input_units(self):

708 # The units for the 'r' variable should be a length (default kpc)

709 return {self.inputs[0]: u.kpc}

710

711 @property

712 def return_units(self):

713 # The units for the 'density' variable should be a matter density (default M_sun / kpc^3)

714

715 if (self.mass.unit is None):

716 return {self.outputs[0]: u.M_sun / self.input_units[self.inputs[0]] ** 3}

717 else:

718 return {self.outputs[0]: self.mass.unit / self.input_units[self.inputs[0]] ** 3}

719

720 def _parameter_units_for_data_units(self, inputs_unit, outputs_unit):

721 return {'mass': u.M_sun,

722 "concentration": None,

723 "redshift": None}

724

[end of astropy/modeling/physical_models.py]

</code>

I need you to solve this issue by generating a single patch file that I can apply directly to this repository using git apply. Please respond with a single patch file in the following format.

<patch>

--- a/file.py
+++ b/file.py
@@ -1,27 +1,35 @@
 def euclidean(a, b):
-    while b:
-        a, b = b, a % b
-    return a
+    if b == 0:
+        return a
+    return euclidean(b, a % b)
 
 
 def bresenham(x0, y0, x1, y1):
     points = []
     dx = abs(x1 - x0)
     dy = abs(y1 - y0)
-    sx = 1 if x0 < x1 else -1
-    sy = 1 if y0 < y1 else -1
-    err = dx - dy
+    x, y = x0, y0
+    sx = -1 if x0 > x1 else 1
+    sy = -1 if y0 > y1 else 1
 
-    while True:
-        points.append((x0, y0))
-        if x0 == x1 and y0 == y1:
-            break
-        e2 = 2 * err
-        if e2 > -dy:
+    if dx > dy:
+        err = dx / 2.0
+        while x != x1:
+            points.append((x, y))
             err -= dy
-            x0 += sx
-        if e2 < dx:
-            err += dx
-            y0 += sy
+            if err < 0:
+                y += sy
+                err += dx
+            x += sx
+    else:
+        err = dy / 2.0
+        while y != y1:
+            points.append((x, y))
+            err -= dx
+            if err < 0:
+                x += sx
+                err += dy
+            y += sy
 
+    points.append((x, y))
     return points

</patch>

|

astropy/astropy

|

43ce7895bb5b61d4fab2f9cc7d07016cf105f18e

|

BlackBody bolometric flux is wrong if scale has units of dimensionless_unscaled

The `astropy.modeling.models.BlackBody` class returns the wrong bolometric flux if the `scale` argument is passed as a Quantity with `dimensionless_unscaled` units, but the correct bolometric flux if `scale` is simply a float.

### Description


### Expected behavior

Expected output from sample code:

```
4.823870774433646e-16 erg / (cm2 s)
4.823870774433646e-16 erg / (cm2 s)
```

### Actual behavior

Actual output from sample code:

```
4.5930032795393893e+33 erg / (cm2 s)
4.823870774433646e-16 erg / (cm2 s)
```

### Steps to Reproduce

Sample code:

```python
from astropy.modeling.models import BlackBody
from astropy import units as u
import numpy as np

T = 3000 * u.K
r = 1e14 * u.cm
DL = 100 * u.Mpc
scale = np.pi * (r / DL)**2

print(BlackBody(temperature=T, scale=scale).bolometric_flux)
print(BlackBody(temperature=T, scale=scale.to_value(u.dimensionless_unscaled)).bolometric_flux)
```

### System Details

```pycon
>>> import numpy; print("Numpy", numpy.__version__)
Numpy 1.20.2
>>> import astropy; print("astropy", astropy.__version__)
astropy 4.3.dev758+g1ed1d945a
>>> import scipy; print("Scipy", scipy.__version__)
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
ModuleNotFoundError: No module named 'scipy'
>>> import matplotlib; print("Matplotlib", matplotlib.__version__)
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
ModuleNotFoundError: No module named 'matplotlib'
```

|

I forgot who added that part of `BlackBody`. It was either @karllark or @astrofrog .

There are several problems here:

1. In `BlackBody.evaluate()`, there is an `if` statement that handles two special cases: either scale is dimensionless, and multiplies the original blackbody surface brightness, or `scale` has units that are compatible with surface brightness, and replaces the original surface brightness. This check is broken, because it does not correctly handle the case that `scale` has a unit, but that unit is compatible with `dimensionless_unscaled`. This is easy to fix.

2. The `BlackBody.bolometric_flux` method does not handle this special case. Again, this is easy to fix.

3. In the case that `scale` has units that are compatible with surface brightness, it is impossible to unambiguously determine the correct multiplier in `BlackBody.bolometric_flux`, because the conversion may depend on the frequency or wavelength at which the scale was given. This might be a design flaw.

Unless I'm missing something, there is no way for this class to give an unambiguous and correct value of the bolometric flux, unless `scale` is dimensionless. Is that correct?
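Point 3 can be made concrete. Converting a specific intensity per unit frequency into one per unit wavelength uses I_lambda = I_nu * c / lambda**2, so the multiplier depends on lambda. The pure-Python sketch below (CGS constants hard-coded; the function name is hypothetical) shows that one scale quoted in per-Hz units corresponds to different per-Angstrom values at different wavelengths, so no single constant can stand in for it in `bolometric_flux`.

```python
C_CGS = 2.99792458e10  # speed of light in cm / s (exact)
CM_PER_ANGSTROM = 1e-8


def per_hz_to_per_angstrom(wavelength_aa):
    """Multiplier taking erg/(cm2 s Hz sr) to erg/(cm2 s AA sr) at one wavelength.

    I_lambda = I_nu * c / lambda**2 gives a density per cm of wavelength;
    multiplying by CM_PER_ANGSTROM turns it into a density per Angstrom.
    """
    lam_cm = wavelength_aa * CM_PER_ANGSTROM
    return C_CGS / lam_cm**2 * CM_PER_ANGSTROM


for lam in (3000.0, 5000.0, 10000.0):
    factor = per_hz_to_per_angstrom(lam)
    print(f"{lam:7.0f} AA: 1 per-Hz unit = {factor:.4e} per-AA units")
```

Halving the wavelength quadruples the factor, which is why a flux-density-valued `scale` is ambiguous without a reference wavelength.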

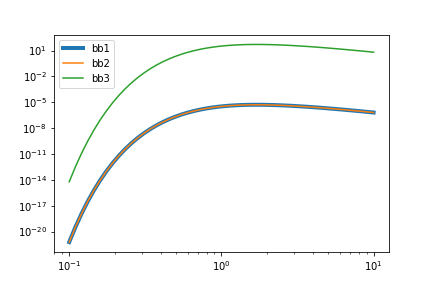

Here's another weird output from BlackBody. I _think_ it's a manifestation of the same bug, or at least it's related. I create three black bodies:

* `bb1` with a scale=1 erg / (cm2 Hz s sr)

* `bb2` with a scale=1 J / (cm2 Hz s sr)

* `bb3` with a scale=1e7 erg / (cm2 Hz s sr)

The spectra from `bb1` and `bb2` look the same, even though `bb2` should be (1 J / 1 erg) = 1e7 times as bright! And the spectrum from `bb3` looks different from `bb2`, even though 1e7 erg = 1 J.

```python
from astropy.modeling.models import BlackBody
from astropy import units as u
from matplotlib import pyplot as plt
import numpy as np

nu = np.geomspace(0.1, 10) * u.micron

bb1 = BlackBody(temperature=3000*u.K, scale=1*u.erg/(u.cm ** 2 * u.s * u.Hz * u.sr))
bb2 = BlackBody(temperature=3000*u.K, scale=1*u.J/(u.cm ** 2 * u.s * u.Hz * u.sr))
bb3 = BlackBody(temperature=3000*u.K, scale=1e7*u.erg/(u.cm ** 2 * u.s * u.Hz * u.sr))

fig, ax = plt.subplots()
ax.set_xscale('log')
ax.set_yscale('log')
ax.plot(nu.value, bb1(nu).to_value(u.erg/(u.cm ** 2 * u.s * u.Hz * u.sr)), lw=4, label='bb1')
ax.plot(nu.value, bb2(nu).to_value(u.erg/(u.cm ** 2 * u.s * u.Hz * u.sr)), label='bb2')
ax.plot(nu.value, bb3(nu).to_value(u.erg/(u.cm ** 2 * u.s * u.Hz * u.sr)), label='bb3')
ax.legend()
fig.savefig('test.png')
```

This is great testing of the code. Thanks!

I think I was the one that added this capability. I don't have time at this point to investigate this issue in detail. I can look at it in the near(ish) future. If someone else is motivated and has time to investigate and solve, I'm happy to cheer from the sidelines.

In pseudocode, here's what the code does with `scale`:

* If `scale` has no units, it simply multiplies a standard blackbody.

* If `scale` has units that are compatible with flux density, it splits off the value and unit. The value multiplies the standard blackbody, and the output is converted to the given unit.

So in both cases, the actual _units_ of the `scale` parameter are ignored. Only the _value_ of the `scale` parameter matters.
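A toy, pure-Python version of that pseudocode reproduces the bb1/bb2/bb3 plot. It is not astropy's implementation: units are reduced to a lookup of each energy unit's size in erg (an assumption for illustration), and `old_scale_handling` and `to_erg` are hypothetical helpers. The J-scaled model comes out identical to the erg-scaled one, and only the numeric value 1e7 changes the output.

```python
# Toy unit system (assumption): the size of each energy unit in erg.
ENERGY_UNIT_IN_ERG = {"erg": 1.0, "J": 1e7}


def old_scale_handling(b_nu_erg, scale_value, scale_unit):
    """Mimic the described logic: y = scale.value * B_nu.to(scale.unit).

    b_nu_erg is the unscaled Planck value in erg / (cm2 s Hz sr). The
    return value is a (number, unit) pair in the scale's own energy unit.
    """
    b_nu_in_scale_unit = b_nu_erg / ENERGY_UNIT_IN_ERG[scale_unit]
    return scale_value * b_nu_in_scale_unit, scale_unit


def to_erg(number, unit):
    """Convert a (number, unit) pair back to erg-based units for plotting."""
    return number * ENERGY_UNIT_IN_ERG[unit]


b_nu = 1.0  # arbitrary unscaled value in erg / (cm2 s Hz sr)
bb1 = to_erg(*old_scale_handling(b_nu, 1.0, "erg"))  # scale = 1 erg / ...
bb2 = to_erg(*old_scale_handling(b_nu, 1.0, "J"))    # scale = 1 J / ...
bb3 = to_erg(*old_scale_handling(b_nu, 1e7, "erg"))  # scale = 1e7 erg / ...

# bb1 and bb2 agree; only bb3 is 1e7 times brighter, as in the plot.
print(bb1, bb2, bb3)
```

Physically, bb2 and bb3 should be equal; the sketch shows why they are not when only `scale.value` multiplies the result.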

As nice as the spectral equivalencies are, I think it was a mistake to support a dimensionful `scale` parameter. Clearly that case is completely broken. Can we simply remove that functionality?

Beginning to think that the scale keyword should go away (in time, deprecated first of course) and docs updated to clearly show how to convert between units (flam to fnu for example) and remove steradians. Astropy does have great units support and the scale functionality can all be accomplished with such. Not 100% sure yet, looking forward to seeing what others think.

The blackbody function would return in default units and scale (fnu seems like the best choice, but kinda arbitrary in the end).

If my memory is correct, the scale keyword was partially introduced to be able to reproduce the previous behavior of two blackbody functions that were deprecated and have now been removed from astropy.

No, I think @astrofrog introduced scale for fitting. The functional, uh, functions that we have removed did not have scaling.

FWIW, I still have the old stuff over at https://github.com/spacetelescope/synphot_refactor/blob/master/synphot/blackbody.py . I never got around to using the new models over there. 😬

In trying to handle support for flux units outside of the `BlackBody` model, I ran into a few issues that I'll try to summarize with an example below.

```python
from astropy.modeling import models
import astropy.units as u
import numpy as np

FLAM = u.erg / (u.cm ** 2 * u.s * u.AA)
SLAM = u.erg / (u.cm ** 2 * u.s * u.AA * u.sr)
wavelengths = np.linspace(2000, 50000, 10001)*u.AA
```

Using `Scale` to handle the unit conversion fails in the forward model because the `Scale` model will not accept wavelength units as input (it seems `factor` **must** be provided in the same units as the input x-array, but we need output of `sr` for the units to cooperate).

```python
m = models.BlackBody(temperature=5678*u.K, scale=1.0*SLAM) * models.Scale(factor=1.0*u.sr)
fluxes = m(wavelengths)
```

which gives the error: `Scale: Units of input 'x', Angstrom (length), could not be converted to required input units of sr (solid angle)`.

Using `Linear1D` with a slope of 0 and an intercept as the scaling factor (with appropriate units to convert from wavelength to `sr`) does work for the forward model, and yields correct units from the `Compound` model, but fails within fitting when calling `without_units_for_data`:

```python
m = models.BlackBody(temperature=5678*u.K, scale=1.0*SLAM) * models.Linear1D(slope=0.0*u.sr/u.AA, intercept=1.0*u.sr)
fluxes = m(wavelengths)
m.without_units_for_data(x=wavelengths, y=fluxes)
```

with the error: `'sr' (solid angle) and 'erg / (Angstrom cm2 s)' (power density/spectral flux density wav) are not convertible`. It seems to me that this error _might_ be a bug (?), and if it could be fixed, then this approach would technically work for handling the scale and unit conversions externally, but it's not exactly obvious or clean from the user's perspective.

Is there another approach for handling the conversion externally to the model that works with fitting and `Compound` models? If not, then either the `without_units_for_data` needs to work for a case like this, or I think `scale` in `BlackBody` might need to be kept and extended to support `FLAM` and `FNU` units as input to allow fluxes as output.

While I broadly like the cleanness of @karllark's approach of just saying "rescale to your heart's desire", I'm concerned that the ship has essentially sailed. In particular, I think the following are true:

1. Plenty of other models have scale parameters, so users probably took that up conceptually already

2. In situations like `specutils` where the blackbody model is used as a tool on already-existing data, it's often useful to carry around the model *with its units*.

So to me that argues pretty clearly for "allow `scale` to have whatever units the user wants". But I see a way to "have our cake and eat it too":

1. Take the existing blackbody model, remove the `scale`, and call it `UnscaledBlackbodyModel` or something

2. Make a new `BlackbodyModel` which is a compound model using `Scale` (with `scale` as the keyword), assuming @kecnry's report that it failed can be fixed (since it sure seems like a bug).

That way we can let people move in the direction @karllark suggested if it seems like people actually like it by telling them to use `UnscaledBlackbodyModel`, but fixing the problem with `Scale` at the same time.

(Plan B, at least if we want something fixed for Astropy 5.0, is to just fix `scale` and have the above be a longer-term plan for maybe 5.1)

If someone else wants to do Plan B for ver5.0 as described by @eteq, that's fine with me. I won't have time before Friday to do such.

I think that all of these proposed solutions fail to address the problem that scale units of FLAM or FNU cannot be handled unambiguously, because the reference frequency or wavelength is unspecified.

I feel the way forward on this topic is to generate a list of use cases for the use of the scale keyword and then we can figure out how to modify the current code. These use cases can be coded up into tests. I have to admit I'm getting a little lost in knowing what all the uses of scale are.

And if all the use cases are compatible with each other.

@lpsinger - agreed. The `bolometric_flux` method and adding support for flux units to `evaluate` are definitely related, but have slightly different considerations that make this quite difficult. Sorry if the latter goal somewhat hijacked this issue - but I do think the solution needs to account for both (as well as the unitless "bug" in your original post).

@karllark - also agreed. After looking into this in more detail, I think `scale` really has 2 (and perhaps eventually 3) different purposes: a _unitless_ scale to the blackbody equation, determining the output units of `evaluate` and whether it should be wrt wavelength or frequency, and possibly would also be responsible for providing `steradians` to convert to flux units. Separating this functionality into three separate arguments might be the simplest to implement and perhaps the clearest and might resolve the `bolometric_flux` concern, but also is clunky for the user and might be a little difficult for backwards compatibility. Keeping it as one argument is definitely convenient, but confusing and raises issues with ambiguity in `bolometric_flux` mentioned above.

@kecnry, I'm concerned that overloading the scale to handle either a unitless value or a value with units of steradians is a footgun, because depending on the units you pass, it may or may not add a factor of pi. This is a footgun because people often think of steradians as being dimensionless.

@lpsinger (and others) - how would you feel about splitting the parameters then?

* `scale`: **must** be unitless (or convertible to true unitless), perhaps with backwards compatibility support for SLAM and SNU units that get stripped and interpreted as `output_units`. I think this can then be used in both `evaluate` and `bolometric_flux`.

* `solid_angle` (or similar name): which is only required when wanting the `evaluate` method to output in flux units. If provided, you must also set a compatible unit for `output_units`.

* `output_units` (or similar name): choose whether `evaluate` will output SNU (default as it is now), SLAM, FNU, or FLAM units (with compatibility checks for the other arguments: you can't set this to SLAM or SNU and pass `solid_angle`, for example).

The downside here is that in the flux case, fitting both `scale` and `solid_angle` will be entirely degenerate, so one of the two will likely need to be held fixed. In some use-cases where you don't care about how much of the scale belongs to which units, it might be convenient to just leave one fixed at unity and let the other absorb the full scale factor. But the upside is that I _think_ this approach might get around the ambiguity cases you brought up?

A delta on @kecnry's suggestion to make it a bit less confusing to the user (maybe?) would be to have *3* classes, one that's just `BaseBlackbodyModel` with only the temperature (and no units), a `BlackbodyModel` that's what @kecnry suggested just above, and a `FluxButNotDensityReallyIMeanItBlackbodyModel` (ok, maybe a different name is needed there) which has the originally posed `scale` but not `solid_angle`.

My motivation here is that I rarely actually want to think about solid angle at all if I can avoid it, but sometimes I have to.

@eteq - I would be for that, but then `FluxButNotDensityReallyIMeanItBlackbodyModel` would likely have to raise an error if calling `bolometric_flux` or possibly could estimate through integration (over wavelength or frequency) instead.

Yeah, I'm cool with that, as long as the exception message says something like "not sure why you're seeing this? Try using BlackbodyModel instead"

If you end up with a few new classes, the user documentation needs some serious explaining, as I feel like this is going against "There should be one-- and preferably only one --obvious way to do it" ([PEP 20](https://www.python.org/dev/peps/pep-0020/)) a little...

@eteq @pllim - it might be possible to achieve this same use-case (not having to worry about thinking about solid angle if you don't intend to make calls to `bolometric_flux`) in a single class by allowing `solid_angle = None` for the flux case and absorbing the steradians into the scale factor. That case would then need to raise an informative error for calls to `bolometric_flux` to avoid the ambiguity issue. The tradeoff I see is more complex argument validation logic and extended documentation in a single class rather than multiple classes for different use-cases.

If no one thinks of any major drawbacks/concerns, I will take a stab at that implementation and come up with examples for each of the use-cases discussed so far and we can then reconsider if splitting into separate classes is warranted.

Thanks for all the good ideas!

Here are some proposed pseudo-code calls that I think could cover all the cases above with a single class including new optional `solid_angle` and `output_units` arguments. Please let me know if I've missed any cases or if any of these wouldn't act as you'd expect.

As you can see, there are quite a few different scenarios, so this is likely to be a documentation and testing challenge - but I'm guessing any approach will have that same problem. Ultimately though it boils down to attempting to pull the units out of `scale` to avoid the ambiguous issues brought up here, while still allowing support for output and fitting in flux units (by supporting both separating the dimensionless scale from the solid angle to allow calling `bolometric_flux` and also by absorbing them together for the case of fitting a single scale factor and sacrificing the ability to call `bolometric_flux`).

**SNU/SLAM units**

`BlackBody(temperature, [scale (float or unitless)], output_units=(None, SNU, or SLAM))`

* if `output_units` is not provided or `None`, defaults to `SNU` to match current behavior

* unitless `scale` converted to `dimensionless_unscaled` (should address this *original* bug report)

* returns in SNU/SLAM units

* `bolometric_flux` uses unitless `scale` directly (matches current behavior)

`BlackBody(temperature, scale (SNU or SLAM units))`

* for **backwards compatibility** only

* `output_units = scale.unit`, `scale = scale.value`

* returns in SNU/SLAM units

* `bolometric_flux`: we have two options here: (1) interpret this as a unitless `scale` with units being interpreted only for the sake of output units which matches current behavior (2) raise an error that `bolometric_flux` requires unitless `scale` to be passed (see [point 3 in the comment above](https://github.com/astropy/astropy/issues/11547#issuecomment-822667522)).

`BlackBody(temperature, scale (with other units), output_units=(None, SNU, or SLAM))`

* **ERROR**: `scale` cannot have units if `output_units` are SNU or SLAM (or non-SNU/SLAM units if `output_units` not provided or None)

**FNU/FLAM units**

`BlackBody(temperature, scale (float or unitless), solid_angle (u.sr), output_units=(FNU or FLAM))`

* unitless `scale` converted to `dimensionless_unscaled`

* returns in FNU/FLAM

* `bolometric_flux` uses unitless `scale` directly (since separated from `solid_angle`)

* fitting: either raise an error if both `scale` and `solid_angle` are left unfixed or just let it be degenerate?

`BlackBody(temperature, scale (sr units), output_units=(FNU or FLAM))`

* `scale = scale.value`, `solid_angle = 1.0*u.sr` and **automatically set to be kept fixed** during fitting

* returns in FNU/FLAM

* `bolometric_flux` => ERROR: must provide separate `scale` and `solid_angle` to call `bolometric_flux` (i.e. the previous case)

`BlackBody(temperature, scale (FNU or FLAM units))`

* to match **backwards compatibility** case for SNU/SLAM

* `output_units = scale.unit`, `scale = scale.value`, `solid_angle = 1.0*u.sr` and **automatically set to be kept fixed** during fitting

* returns in FNU/FLAM units

* `bolometric_flux` => ERROR: same as above, must provide separate `scale` and `solid_angle`.

`BlackBody(temperature, scale (float, unitless, or non sr units), output_units=(FNU or FLAM))`

* **ERROR**: FNU/FLAM requires scale to have FNU/FLAM/sr units OR unitless with solid_angle provided (any of the cases above)
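The case list above amounts to a decision table. Below is a minimal pure-Python sketch of that validation logic. It assumes units are represented by the strings "SNU", "SLAM", "FNU", "FLAM" and "sr", and the function `resolve_blackbody_args` is hypothetical, not an astropy API. For the open question on an SNU/SLAM-valued `scale`, it picks option (1).

```python
SURFACE_BRIGHTNESS = ("SNU", "SLAM")
FLUX_DENSITY = ("FNU", "FLAM")


def resolve_blackbody_args(scale=1.0, scale_unit=None, solid_angle_sr=None,
                           output_units=None):
    """Hypothetical resolution of the proposed BlackBody arguments.

    scale_unit is None (float or dimensionless), "sr", or a string from
    SURFACE_BRIGHTNESS / FLUX_DENSITY. Returns the unitless scale, solid
    angle, output units, whether the solid angle is held fixed during
    fitting, and whether bolometric_flux is allowed.
    """
    explicit_output = output_units is not None
    if not explicit_output:
        if scale_unit in SURFACE_BRIGHTNESS + FLUX_DENSITY:
            output_units = scale_unit  # backwards-compatible form
        elif scale_unit is None:
            output_units = "SNU"  # default matches the current behaviour
        else:
            raise ValueError(f"scale units {scale_unit!r} need output_units")

    if output_units in SURFACE_BRIGHTNESS:
        if solid_angle_sr is not None:
            raise ValueError("solid_angle cannot be used with SNU/SLAM output")
        if explicit_output and scale_unit is not None:
            raise ValueError("scale cannot have units when output_units is SNU/SLAM")
        # Option (1): any unit on scale only selected the output units.
        return dict(scale=scale, solid_angle_sr=None, output_units=output_units,
                    solid_angle_fixed=False, bolometric_ok=True)

    if output_units in FLUX_DENSITY:
        if scale_unit is None and solid_angle_sr is not None:
            # Scale and solid angle kept separate, so bolometric flux is defined.
            return dict(scale=scale, solid_angle_sr=solid_angle_sr,
                        output_units=output_units, solid_angle_fixed=False,
                        bolometric_ok=True)
        if scale_unit in ("sr",) + FLUX_DENSITY and solid_angle_sr is None:
            # Solid angle absorbed into scale: fixed at 1 sr, no bolometric flux.
            return dict(scale=scale, solid_angle_sr=1.0, output_units=output_units,
                        solid_angle_fixed=True, bolometric_ok=False)
        raise ValueError("FNU/FLAM output needs a unitless scale plus solid_angle, "
                         "or a scale in sr/FNU/FLAM units")

    raise ValueError(f"unknown output_units: {output_units!r}")
```

Encoding the table as one function makes the number of branches (and therefore the documentation and testing burden mentioned above) easy to see.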

Upon further reflection, I think that we are twisting ourselves into a knot by treating the black body as a special case when it comes to this pesky factor of pi. It's not. The factor of pi comes up any time that you need to convert from specific intensity (S_nu a.k.a. B_nu [erg cm^-2 s^-1 Hz^-1 sr^-1]) to flux density (F_nu [erg cm^-2 s^-1 Hz^-1]) assuming that your emitting surface element radiates isotropically. It's just the integral of cos(theta) from theta=0 to pi/2.
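Spelled out, the "integral of cos(theta)" is taken over solid angle. For isotropic specific intensity B_nu, the flux density through a surface element is the integral of B_nu cos(theta) dOmega over the outward hemisphere, with dOmega = sin(theta) dtheta dphi. The phi integral contributes 2*pi and the integral of cos(theta) sin(theta) from 0 to pi/2 is 1/2, so F_nu = pi * B_nu. A quick numerical check (midpoint rule, pure Python; the function name is illustrative):

```python
import math


def hemisphere_cos_integral(n_theta=2000):
    """Midpoint-rule estimate of the integral of cos(theta) dOmega over a hemisphere.

    dOmega = sin(theta) dtheta dphi; the phi integral contributes 2*pi.
    """
    d_theta = (math.pi / 2) / n_theta
    total = 0.0
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta
        total += math.cos(theta) * math.sin(theta) * d_theta
    return 2 * math.pi * total


print(hemisphere_cos_integral(), math.pi)  # the two agree to ~1e-7
```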

BlackBody only looks like a special case among the astropy models because there are no other physical radiation models. If we declared a constant specific intensity source model class, then we would be having the same argument about whether we need to have a dual flux density class with an added factor of pi.

What we commonly call Planck's law is B_nu. In order to avoid confusing users who are expecting the class to use the textbook definition, the Astropy model should _not_ insert the factor of pi.

Instead, I propose that for `astropy.modeling.models.BlackBody` we either:

1. allow `scale` to have units of dimensionless_unscaled or solid angle, and in either case simply multiply the output, or

2. remove the scale parameter.

In both cases, support for scale in FNU/FLAM/SNU/SLAM is deprecated because it cannot be implemented correctly and unambiguously.

And in both cases, synphot keeps its own BlackBody1D class (perhaps renamed to BlackBodyFlux1D to mirror ConstFlux1D) and it _does_ have the factor of pi added.

BTW, I found this to be a nice refresher: https://www.cv.nrao.edu/~sransom/web/Ch2.html

> synphot keeps its own BlackBody1D class (perhaps renamed to BlackBodyFlux1D to mirror ConstFlux1D)

`synphot` never used the new blackbody stuff here, so I think it can be safely left out of the changes here. If you feel strongly about its model names, feel free to open issue at https://github.com/spacetelescope/synphot_refactor/issues but I don't think it will affect anything at `astropy` or vice versa. 😅

@lpsinger - good points. I agree that this situation isn't fundamentally unique to BlackBody, and on further thought along those lines, can't think of any practical reason not to abstract away the `solid_angle` entirely from my use-cases above (as it should probably always either be N/A or pi - allowing it to possibly be fitted or set incorrectly just asks for problems). I have gone back and forth with myself about your point for *not* adding support for including the pi automatically, but as long as the default behavior remains the "pure" B_nu form, I think there are significant practical advantages for supporting more flexibility. The more this conversation continues, the more convinced I am that `scale` is indeed useful, but that we should move towards forcing it to be unitless to avoid a lot of these confusing scenarios. I'm worried that allowing `scale` to have steradians as units will cause more confusion (although I appreciate the simplicity of just multiplying the result).

So... my (current) vote would be to still implement a separate `output_units` argument to make sure any change in units (and/or inclusion of pi) is explicitly clear and to take over the role of differentiating between specific intensity and flux density (by eventually requiring `scale` to be unitless and always handling the pi internally if requesting in flux units).

Assuming we can't remove support for units in `scale` this release without warning, that leaves us with the following:

* `BlackBody(temperature, [scale (float or unitless)], output_units=(None, SNU, or SLAM))`

* temporary support for `BlackBody(temperature, scale (SNU or SLAM units))`: this is the current supported syntax that we want to deprecate. In the meantime, we would split the `scale` quantity into `scale` (unitless) and `output_units`. I think this still might be a bit confusing for the `bolometric_flux` case, so we may want to raise an error/warning there?

* `BlackBody(temperature, [scale (float or unitless)], output_units=(FNU or FLAM))`: since scale is unitless, it is assumed *not* to include the pi, the returned value is multiplied by `scale*pi` internally and with requested units.

* temporary support for `BlackBody(temperature, scale (FNU, FLAM))`: here `scale` includes units of solid angle, so internally we would set `scale = scale.value/pi` and then use the above treatment to multiply by `scale*pi`. Note that this does mean these last two cases behave a little differently for passing the same "number" to `scale`: without units it is assumed not to include the pi, but as a quantity it is assumed to include the pi. Definitely not ideal - I suppose we don't need to add support for this case since it wasn't supported in the past. But if we do, we may again want to raise an error/warning when calling `bolometric_flux`?

If we don't like the `output_units` argument, this could be done instead with `BlackBody` vs `BlackBodyFlux` model (similar to @eteq's suggestion earlier), still deprecate passing units to scale as described above for both classes, and leave any unit conversion between *NU and *LAM to the user. Separate classes may be slightly cleaner looking and help separate the documentation, while a single class with the `output_units` argument provides a little more convenience functionality.

I think we should not include the factor of pi at all in the astropy model because it assumes not only that one is integrating over a solid angle, but that the temperature is uniform over the body. In general, that does not have to be the case, does it?

Would we ruffle too many feathers if we deprecated `scale` altogether?

> Would we ruffle too many feathers

Can't be worse than the episode when we deprecated `clobber` in `io.fits`... 😅

No, not in general. But so long as we only support a single temperature, I think it's reasonable that that would assume uniform temperature.

I think getting rid of `scale` entirely was @karllark's original suggestion, but then all of this logic is left to be done externally (likely by the user). My attempts to do so with the existing `Scale` or `Linear1D` models, [showed complications](https://github.com/astropy/astropy/issues/11547#issuecomment-949734738). Perhaps I was missing something there and there's a better way... or maybe we need to work on fixing underlying bugs or lack of flexibility in `Compound` models instead. I also agree with @eteq's [arguments that users would expect a scale](https://github.com/astropy/astropy/issues/11547#issuecomment-951154117) and that it might indeed ruffle some feathers.

> No, not in general. But so long as we only support a single temperature, I think it's reasonable that that would assume uniform temperature.

It may be fair to assume a uniform temperature, but the factor of pi is also kind of assuming that the emitting surface is a sphere, isn't it?

> I think getting rid of `scale` entirely was @karllark's original suggestion, but then all of this logic is left to be done externally (likely by the user). My attempts to do so with the existing `Scale` or `Linear1D` models, [showed complications](https://github.com/astropy/astropy/issues/11547#issuecomment-949734738). Perhaps I was missing something there and there's a better way... or maybe we need to work on fixing underlying bugs or lack of flexibility in `Compound` models instead. I also agree with @eteq's [arguments that users would expect a scale](https://github.com/astropy/astropy/issues/11547#issuecomment-951154117) and that it might indeed ruffle some feathers.

I see. In that case, it seems that we are converging toward retaining the `scale` attribute but deprecating any but dimensionless units for it. Is that an accurate statement? If so, then I can whip up a PR.

Yes, most likely a sphere, or at least anything where the solid angle is pi. But I agree that adding the generality for any solid angle will probably never be used and just adds unnecessary complication.

I think that's the best approach for now (deprecating unit support in `scale` but supporting flux units) and then if in the future we want to completely remove `scale`, that is an option as long as external scaling can pick up the slack. I already started on testing some implementations, so am happy to put together the PR (and will tag you so you can look at it and comment before any decision is made).

> I think that's the best approach for now (deprecating unit support in `scale` but supporting flux units) and then if in the future we want to completely remove `scale`, that is an option as long as external scaling can pick up the slack. I already started on testing some implementations, so am happy to put together the PR (and will tag you so you can look at it and comment before any decision is made).

Go for it.

|

2021-10-28T15:32:17Z

|

<patch>

diff --git a/astropy/modeling/physical_models.py b/astropy/modeling/physical_models.py

--- a/astropy/modeling/physical_models.py

+++ b/astropy/modeling/physical_models.py

@@ -27,7 +27,12 @@ class BlackBody(Fittable1DModel):

Blackbody temperature.

scale : float or `~astropy.units.Quantity` ['dimensionless']

- Scale factor

+ Scale factor. If dimensionless, input units will assumed

+ to be in Hz and output units in (erg / (cm ** 2 * s * Hz * sr).

+ If not dimensionless, must be equivalent to either

+ (erg / (cm ** 2 * s * Hz * sr) or erg / (cm ** 2 * s * AA * sr),

+ in which case the result will be returned in the requested units and

+ the scale will be stripped of units (with the float value applied).

Notes

-----

@@ -70,12 +75,40 @@ class BlackBody(Fittable1DModel):

scale = Parameter(default=1.0, min=0, description="Scale factor")

# We allow values without units to be passed when evaluating the model, and

- # in this case the input x values are assumed to be frequencies in Hz.

+ # in this case the input x values are assumed to be frequencies in Hz or wavelengths

+ # in AA (depending on the choice of output units controlled by units on scale

+ # and stored in self._output_units during init).

_input_units_allow_dimensionless = True

# We enable the spectral equivalency by default for the spectral axis

input_units_equivalencies = {'x': u.spectral()}

+ # Store the native units returned by B_nu equation

+ _native_units = u.erg / (u.cm ** 2 * u.s * u.Hz * u.sr)

+

+ # Store the base native output units. If scale is not dimensionless, it

+ # must be equivalent to one of these. If equivalent to SLAM, then

+ # input_units will expect AA for 'x', otherwise Hz.

+ _native_output_units = {'SNU': u.erg / (u.cm ** 2 * u.s * u.Hz * u.sr),

+ 'SLAM': u.erg / (u.cm ** 2 * u.s * u.AA * u.sr)}

+

+ def __init__(self, *args, **kwargs):

+ scale = kwargs.get('scale', None)

+

+ # Support scale with non-dimensionless unit by stripping the unit and

+ # storing as self._output_units.

+ if hasattr(scale, 'unit') and not scale.unit.is_equivalent(u.dimensionless_unscaled):

+ output_units = scale.unit

+ if not output_units.is_equivalent(self._native_units, u.spectral_density(1*u.AA)):

+ raise ValueError(f"scale units not dimensionless or in surface brightness: {output_units}")

+

+ kwargs['scale'] = scale.value

+ self._output_units = output_units

+ else:

+ self._output_units = self._native_units

+

+ return super().__init__(*args, **kwargs)

+

def evaluate(self, x, temperature, scale):

"""Evaluate the model.

@@ -83,7 +116,8 @@ def evaluate(self, x, temperature, scale):

----------

x : float, `~numpy.ndarray`, or `~astropy.units.Quantity` ['frequency']

Frequency at which to compute the blackbody. If no units are given,

- this defaults to Hz.

+ this defaults to Hz (or AA if `scale` was initialized with units

+ equivalent to erg / (cm ** 2 * s * AA * sr)).

temperature : float, `~numpy.ndarray`, or `~astropy.units.Quantity`

Temperature of the blackbody. If no units are given, this defaults

@@ -119,30 +153,18 @@ def evaluate(self, x, temperature, scale):

else:

in_temp = temperature

+ if not isinstance(x, u.Quantity):

+ # then we assume it has input_units which depends on the

+ # requested output units (either Hz or AA)

+ in_x = u.Quantity(x, self.input_units['x'])

+ else:

+ in_x = x

+

# Convert to units for calculations, also force double precision

with u.add_enabled_equivalencies(u.spectral() + u.temperature()):

- freq = u.Quantity(x, u.Hz, dtype=np.float64)

+ freq = u.Quantity(in_x, u.Hz, dtype=np.float64)

temp = u.Quantity(in_temp, u.K)

- # check the units of scale and setup the output units

- bb_unit = u.erg / (u.cm ** 2 * u.s * u.Hz * u.sr) # default unit

- # use the scale that was used at initialization for determining the units to return

- # to support returning the right units when fitting where units are stripped

- if hasattr(self.scale, "unit") and self.scale.unit is not None:

- # check that the units on scale are covertable to surface brightness units

- if not self.scale.unit.is_equivalent(bb_unit, u.spectral_density(x)):

- raise ValueError(

- f"scale units not surface brightness: {self.scale.unit}"

- )

- # use the scale passed to get the value for scaling

- if hasattr(scale, "unit"):

- mult_scale = scale.value

- else:

- mult_scale = scale

- bb_unit = self.scale.unit

- else:

- mult_scale = scale

-

# Check if input values are physically possible

if np.any(temp < 0):

raise ValueError(f"Temperature should be positive: {temp}")

@@ -158,7 +180,17 @@ def evaluate(self, x, temperature, scale):

# Calculate blackbody flux

bb_nu = 2.0 * const.h * freq ** 3 / (const.c ** 2 * boltzm1) / u.sr

- y = mult_scale * bb_nu.to(bb_unit, u.spectral_density(freq))

+ if self.scale.unit is not None:

+ # Will be dimensionless at this point, but may not be dimensionless_unscaled

+ if not hasattr(scale, 'unit'):

+ # during fitting, scale will be passed without units

+ # but we still need to convert from the input dimensionless

+ # to dimensionless unscaled

+ scale = scale * self.scale.unit

+ scale = scale.to(u.dimensionless_unscaled).value

+

+ # NOTE: scale is already stripped of any input units

+ y = scale * bb_nu.to(self._output_units, u.spectral_density(freq))

# If the temperature parameter has no unit, we should return a unitless

# value. This occurs for instance during fitting, since we drop the

@@ -169,10 +201,13 @@ def evaluate(self, x, temperature, scale):

@property

def input_units(self):

- # The input units are those of the 'x' value, which should always be

- # Hz. Because we do this, and because input_units_allow_dimensionless

- # is set to True, dimensionless values are assumed to be in Hz.

- return {self.inputs[0]: u.Hz}

+ # The input units are those of the 'x' value, which will depend on the

+ # units compatible with the expected output units.

+ if self._output_units.is_equivalent(self._native_output_units['SNU']):

+ return {self.inputs[0]: u.Hz}

+ else:

+ # only other option is equivalent with SLAM

+ return {self.inputs[0]: u.AA}

def _parameter_units_for_data_units(self, inputs_unit, outputs_unit):

return {"temperature": u.K}

@@ -180,9 +215,15 @@ def _parameter_units_for_data_units(self, inputs_unit, outputs_unit):

@property

def bolometric_flux(self):

"""Bolometric flux."""

+ if self.scale.unit is not None:

+ # Will be dimensionless at this point, but may not be dimensionless_unscaled

+ scale = self.scale.quantity.to(u.dimensionless_unscaled)

+ else:

+ scale = self.scale.value

+

# bolometric flux in the native units of the planck function

native_bolflux = (

- self.scale.value * const.sigma_sb * self.temperature ** 4 / np.pi

+ scale * const.sigma_sb * self.temperature ** 4 / np.pi

)

# return in more "astro" units

return native_bolflux.to(u.erg / (u.cm ** 2 * u.s))

</patch>
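The wrong value in the issue comes from a unit conversion. `np.pi * (r / DL)**2` is dimensionless but stored in the composite unit cm2 / Mpc2. Taking `.value` without first converting to `dimensionless_unscaled` keeps a spurious factor of (cm per Mpc)^2, about 9.5e48. The plain-Python check below reproduces both numbers from the issue. It is a sketch that assumes CODATA 2018 sigma_SB and the IAU parsec, taken here to match astropy's defaults. The names `SIGMA_SB`, `CM_PER_MPC`, and `bolometric_flux` are illustrative only.

```python
import math

# Assumed constant values in CGS units (CODATA 2018 sigma_SB, IAU parsec)
SIGMA_SB = 5.670374419e-5           # Stefan-Boltzmann constant, erg cm^-2 s^-1 K^-4
CM_PER_MPC = 3.0856775814913673e24  # 1 Mpc expressed in cm

T = 3000.0      # temperature, K
r_cm = 1e14     # radius, cm
dl_mpc = 100.0  # luminosity distance, Mpc

def bolometric_flux(scale):
    """Illustrative helper: F = scale * sigma_SB * T**4 / pi, in erg / (cm2 s)."""
    return scale * SIGMA_SB * T**4 / math.pi

# Buggy path: raw number of pi * (r / DL)**2 while still in cm2 / Mpc2
raw_value = math.pi * r_cm**2 / dl_mpc**2

# Fixed path: convert to dimensionless_unscaled first (DL expressed in cm)
unscaled = math.pi * (r_cm / (dl_mpc * CM_PER_MPC))**2

print(bolometric_flux(raw_value))  # about 4.593e+33, the wrong value
print(bolometric_flux(unscaled))   # about 4.824e-16, the expected value
print(raw_value / unscaled)        # about 9.52e+48, i.e. CM_PER_MPC**2
```

The ratio of the two results is exactly the squared cm-to-Mpc conversion factor. This is why the patch converts `self.scale.quantity` to `u.dimensionless_unscaled` before multiplying.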

|

diff --git a/astropy/modeling/tests/test_physical_models.py b/astropy/modeling/tests/test_physical_models.py

--- a/astropy/modeling/tests/test_physical_models.py

+++ b/astropy/modeling/tests/test_physical_models.py

@@ -40,6 +40,17 @@ def test_blackbody_sefanboltzman_law():

assert_quantity_allclose(b.bolometric_flux, 133.02471751812573 * u.W / (u.m * u.m))

+def test_blackbody_input_units():

+ SLAM = u.erg / (u.cm ** 2 * u.s * u.AA * u.sr)

+ SNU = u.erg / (u.cm ** 2 * u.s * u.Hz * u.sr)

+

+ b_lam = BlackBody(3000*u.K, scale=1*SLAM)

+ assert(b_lam.input_units['x'] == u.AA)

+

+ b_nu = BlackBody(3000*u.K, scale=1*SNU)

+ assert(b_nu.input_units['x'] == u.Hz)

+

+

def test_blackbody_return_units():

# return of evaluate has no units when temperature has no units

b = BlackBody(1000.0 * u.K, scale=1.0)

@@ -72,7 +83,7 @@ def test_blackbody_fit():

b_fit = fitter(b, wav, fnu, maxiter=1000)

assert_quantity_allclose(b_fit.temperature, 2840.7438355865065 * u.K)

- assert_quantity_allclose(b_fit.scale, 5.803783292762381e-17 * u.Jy / u.sr)

+ assert_quantity_allclose(b_fit.scale, 5.803783292762381e-17)

def test_blackbody_overflow():

@@ -104,10 +115,11 @@ def test_blackbody_exceptions_and_warnings():

"""Test exceptions."""

# Negative temperature

- with pytest.raises(ValueError) as exc:

+ with pytest.raises(

+ ValueError,

+ match="Temperature should be positive: \\[-100.\\] K"):

bb = BlackBody(-100 * u.K)

bb(1.0 * u.micron)

- assert exc.value.args[0] == "Temperature should be positive: [-100.] K"

bb = BlackBody(5000 * u.K)

@@ -121,11 +133,11 @@ def test_blackbody_exceptions_and_warnings():

bb(-1.0 * u.AA)

assert len(w) == 1

- # Test that a non surface brightness converatable scale unit

- with pytest.raises(ValueError) as exc:

+ # Test that a non surface brightness convertible scale unit raises an error

+ with pytest.raises(

+ ValueError,

+ match="scale units not dimensionless or in surface brightness: Jy"):

bb = BlackBody(5000 * u.K, scale=1.0 * u.Jy)

- bb(1.0 * u.micron)

- assert exc.value.args[0] == "scale units not surface brightness: Jy"

def test_blackbody_array_temperature():

@@ -146,6 +158,45 @@ def test_blackbody_array_temperature():

assert flux.shape == (3, 4)

+def test_blackbody_dimensionless():

+ """Test support for dimensionless (but not unscaled) units for scale"""

+ T = 3000 * u.K

+ r = 1e14 * u.cm

+ DL = 100 * u.Mpc

+ scale = np.pi * (r / DL)**2

+

+ bb1 = BlackBody(temperature=T, scale=scale)

+ # even though we passed scale with units, we should be able to evaluate with unitless

+ bb1.evaluate(0.5, T.value, scale.to_value(u.dimensionless_unscaled))

+

+ bb2 = BlackBody(temperature=T, scale=scale.to_value(u.dimensionless_unscaled))

+ bb2.evaluate(0.5, T.value, scale.to_value(u.dimensionless_unscaled))

+

+ # bolometric flux for both cases should be equivalent

+ assert(bb1.bolometric_flux == bb2.bolometric_flux)

+

+

+@pytest.mark.skipif("not HAS_SCIPY")

+def test_blackbody_dimensionless_fit():

+ T = 3000 * u.K

+ r = 1e14 * u.cm

+ DL = 100 * u.Mpc

+ scale = np.pi * (r / DL)**2

+

+ bb1 = BlackBody(temperature=T, scale=scale)

+ bb2 = BlackBody(temperature=T, scale=scale.to_value(u.dimensionless_unscaled))

+

+ fitter = LevMarLSQFitter()

+

+ wav = np.array([0.5, 5, 10]) * u.micron

+ fnu = np.array([1, 10, 5]) * u.Jy / u.sr

+

+ bb1_fit = fitter(bb1, wav, fnu, maxiter=1000)

+ bb2_fit = fitter(bb2, wav, fnu, maxiter=1000)

+

+ assert(bb1_fit.temperature == bb2_fit.temperature)

+

+

@pytest.mark.parametrize("mass", (2.0000000000000E15 * u.M_sun, 3.976819741e+45 * u.kg))

def test_NFW_evaluate(mass):

"""Evaluation, density, and radii validation of NFW model."""

|

4.3

|

["astropy/modeling/tests/test_physical_models.py::test_blackbody_input_units", "astropy/modeling/tests/test_physical_models.py::test_blackbody_exceptions_and_warnings", "astropy/modeling/tests/test_physical_models.py::test_blackbody_dimensionless"]

|

["astropy/modeling/tests/test_physical_models.py::test_blackbody_evaluate[temperature0]", "astropy/modeling/tests/test_physical_models.py::test_blackbody_evaluate[temperature1]", "astropy/modeling/tests/test_physical_models.py::test_blackbody_weins_law", "astropy/modeling/tests/test_physical_models.py::test_blackbody_sefanboltzman_law", "astropy/modeling/tests/test_physical_models.py::test_blackbody_return_units", "astropy/modeling/tests/test_physical_models.py::test_blackbody_overflow", "astropy/modeling/tests/test_physical_models.py::test_blackbody_array_temperature", "astropy/modeling/tests/test_physical_models.py::test_NFW_evaluate[mass0]", "astropy/modeling/tests/test_physical_models.py::test_NFW_evaluate[mass1]", "astropy/modeling/tests/test_physical_models.py::test_NFW_circular_velocity", "astropy/modeling/tests/test_physical_models.py::test_NFW_exceptions_and_warnings_and_misc"]

|

298ccb478e6bf092953bca67a3d29dc6c35f6752

|

astropy__astropy-12825

|

You will be provided with a partial code base and an issue statement explaining a problem to resolve.

<issue>

SkyCoord in Table breaks aggregate on group_by

### Description, actual behaviour, reproduction

When putting a column of `SkyCoord`s in a `Table`, `aggregate` does not work on `group_by().groups`:

```python

from astropy.table import Table

import astropy.units as u

from astropy.coordinates import SkyCoord

import numpy as np

ras = [10, 20] * u.deg

decs = [32, -2] * u.deg

str_col = ['foo', 'bar']

coords = SkyCoord(ra=ras, dec=decs)

table = Table([str_col, coords], names=['col1', 'col2'])

table.group_by('col1').groups.aggregate(np.mean)

```

fails with

```

Traceback (most recent call last):

File "repro.py", line 13, in <module>

table.group_by('col1').groups.aggregate(np.mean)

File "astropy/table/groups.py", line 357, in aggregate

new_col = col.groups.aggregate(func)

File "astropy/coordinates/sky_coordinate.py", line 835, in __getattr__

raise AttributeError("'{}' object has no attribute '{}'"

AttributeError: 'SkyCoord' object has no attribute 'groups'

```

This happens regardless of the aggregation function.

### Expected behavior

Aggregation should work, failing only for columns where the operation does not make sense.

### System Details

```

Linux-5.14.11-arch1-1-x86_64-with-glibc2.33

Python 3.9.7 (default, Aug 31 2021, 13:28:12)

[GCC 11.1.0]

Numpy 1.21.2

astropy 5.0.dev945+g7dfa1edb2

(no scipy or matplotlib)

```

and

```

Linux-5.14.11-arch1-1-x86_64-with-glibc2.33

Python 3.9.7 (default, Aug 31 2021, 13:28:12)

[GCC 11.1.0]

Numpy 1.21.2

astropy 4.3.1

Scipy 1.7.1

Matplotlib 3.4.3

```

</issue>
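The traceback shows that the table-level `aggregate` calls `col.groups.aggregate(func)` on every column, and a `SkyCoord` column has no `groups` attribute. Conceptually, this step reduces each column over the contiguous row ranges that `group_by` produces. The minimal NumPy sketch below illustrates that per-group reduction. It does not reproduce astropy's implementation, and the helper name `aggregate_by_key` is hypothetical.

```python
import numpy as np

def aggregate_by_key(keys, values, func):
    """Hypothetical helper: reduce `values` over each group of equal `keys`."""
    keys = np.asarray(keys)
    values = np.asarray(values)
    # Stable sort so rows with equal keys become contiguous, as after group_by()
    order = np.argsort(keys, kind="stable")
    sorted_keys = keys[order]
    sorted_vals = values[order]
    # On sorted keys, np.unique's return_index gives each group's first row
    unique_keys, starts = np.unique(sorted_keys, return_index=True)
    bounds = np.append(starts, len(sorted_keys))
    # Apply func to each contiguous slice, one slice per group
    reduced = np.array([func(sorted_vals[i0:i1])
                        for i0, i1 in zip(bounds[:-1], bounds[1:])])
    return unique_keys, reduced

keys, means = aggregate_by_key(['foo', 'bar', 'foo'], [10.0, 20.0, 30.0], np.mean)
print(keys, means)
```

Any column type that cannot be sliced into groups and reduced this way runs into the failure reported above.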

<code>

[start of README.rst]

1 =======

2 Astropy

3 =======

4

5 |Actions Status| |CircleCI Status| |Azure Status| |Coverage Status| |PyPI Status| |Documentation Status| |Zenodo|

6

7 The Astropy Project (http://astropy.org/) is a community effort to develop a

8 single core package for Astronomy in Python and foster interoperability between

9 Python astronomy packages. This repository contains the core package which is

10 intended to contain much of the core functionality and some common tools needed

11 for performing astronomy and astrophysics with Python.

12

13 Releases are `registered on PyPI <https://pypi.org/project/astropy>`_,