Add files using upload-large-folder tool

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- evalkit_tf437/lib/python3.10/site-packages/fastapi-0.103.2.dist-info/METADATA +531 -0

- evalkit_tf437/lib/python3.10/site-packages/fastapi-0.103.2.dist-info/WHEEL +4 -0

- evalkit_tf437/lib/python3.10/site-packages/google_crc32c/_checksum.py +87 -0

- evalkit_tf437/lib/python3.10/site-packages/google_crc32c/_crc32c.cpython-310-x86_64-linux-gnu.so +0 -0

- evalkit_tf437/lib/python3.10/site-packages/google_crc32c/cext.py +45 -0

- evalkit_tf437/lib/python3.10/site-packages/google_crc32c/py.typed +2 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/Openmp/omp-tools.h +1083 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/__pycache__/__init__.cpython-310.pyc +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/cupti_callbacks.h +762 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/cupti_checkpoint.h +127 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/cupti_pcsampling_util.h +419 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/cupti_runtime_cbid.h +458 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/generated_cuda_vdpau_interop_meta.h +38 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_nvrtc/__init__.py +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_nvrtc/include/__init__.py +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_nvrtc/include/nvrtc.h +845 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_nvrtc/lib/__init__.py +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_nvrtc/lib/__pycache__/__init__.cpython-310.pyc +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/channel_descriptor.h +588 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cooperative_groups/details/coalesced_reduce.h +108 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cooperative_groups/details/scan.h +320 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cudaEGLTypedefs.h +96 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cudaGLTypedefs.h +123 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cuda_fp8.h +367 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cuda_fp8.hpp +1546 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cuda_occupancy.h +1958 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cuda_pipeline_primitives.h +148 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/cuda_vdpau_interop.h +201 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/device_atomic_functions.h +217 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/device_double_functions.h +65 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/device_functions.h +65 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/driver_types.h +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/library_types.h +103 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/math_functions.h +65 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/sm_20_atomic_functions.hpp +85 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/sm_20_intrinsics.hpp +221 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/sm_30_intrinsics.hpp +604 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/sm_32_intrinsics.hpp +588 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/sm_35_atomic_functions.h +58 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/sm_35_intrinsics.h +116 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/sm_60_atomic_functions.hpp +527 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/texture_fetch_functions.h +223 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/texture_types.h +177 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/include/vector_types.h +443 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_runtime/lib/__init__.py +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cudnn/include/__pycache__/__init__.cpython-310.pyc +0 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cudnn/include/cudnn_adv_infer.h +658 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cudnn/include/cudnn_backend.h +608 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cudnn/include/cudnn_cnn_infer_v8.h +571 -0

- evalkit_tf437/lib/python3.10/site-packages/nvidia/cudnn/include/cudnn_cnn_train_v8.h +219 -0

evalkit_tf437/lib/python3.10/site-packages/fastapi-0.103.2.dist-info/METADATA

ADDED

|

@@ -0,0 +1,531 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

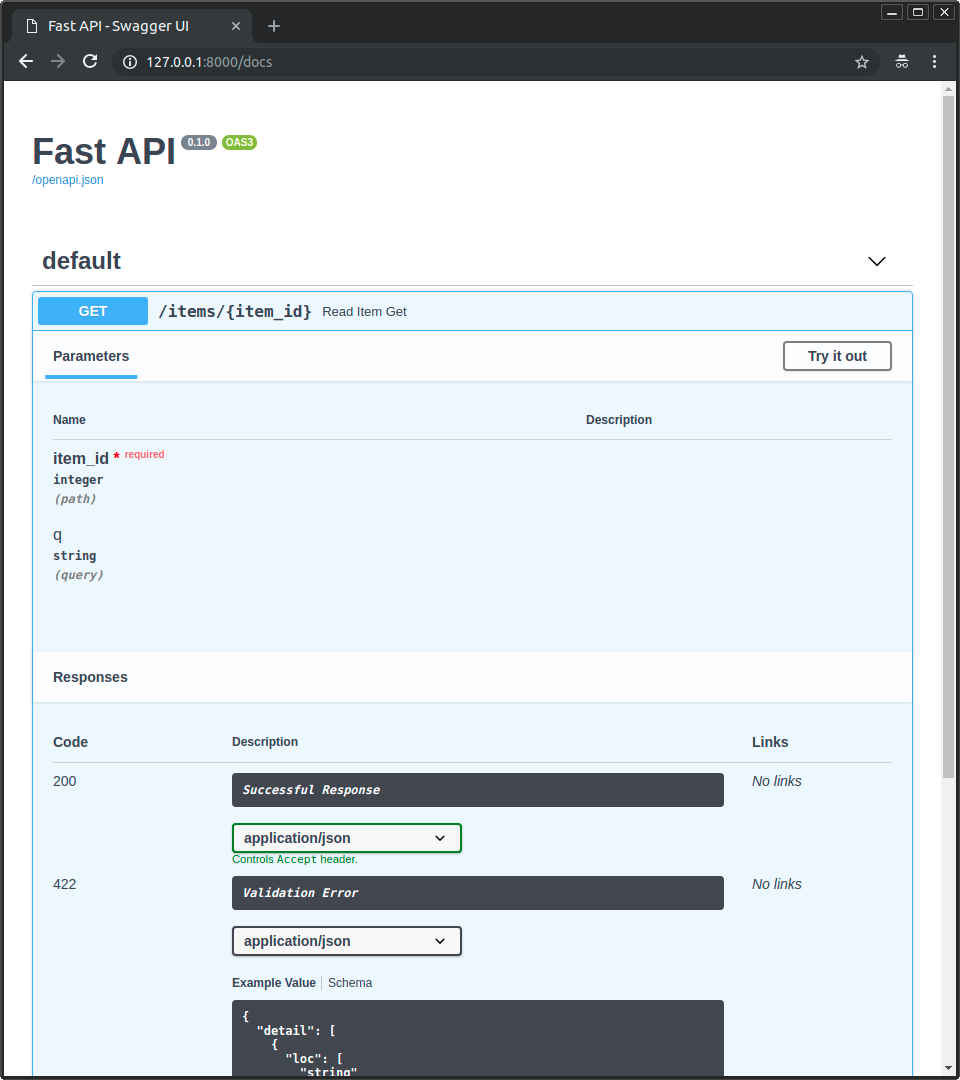

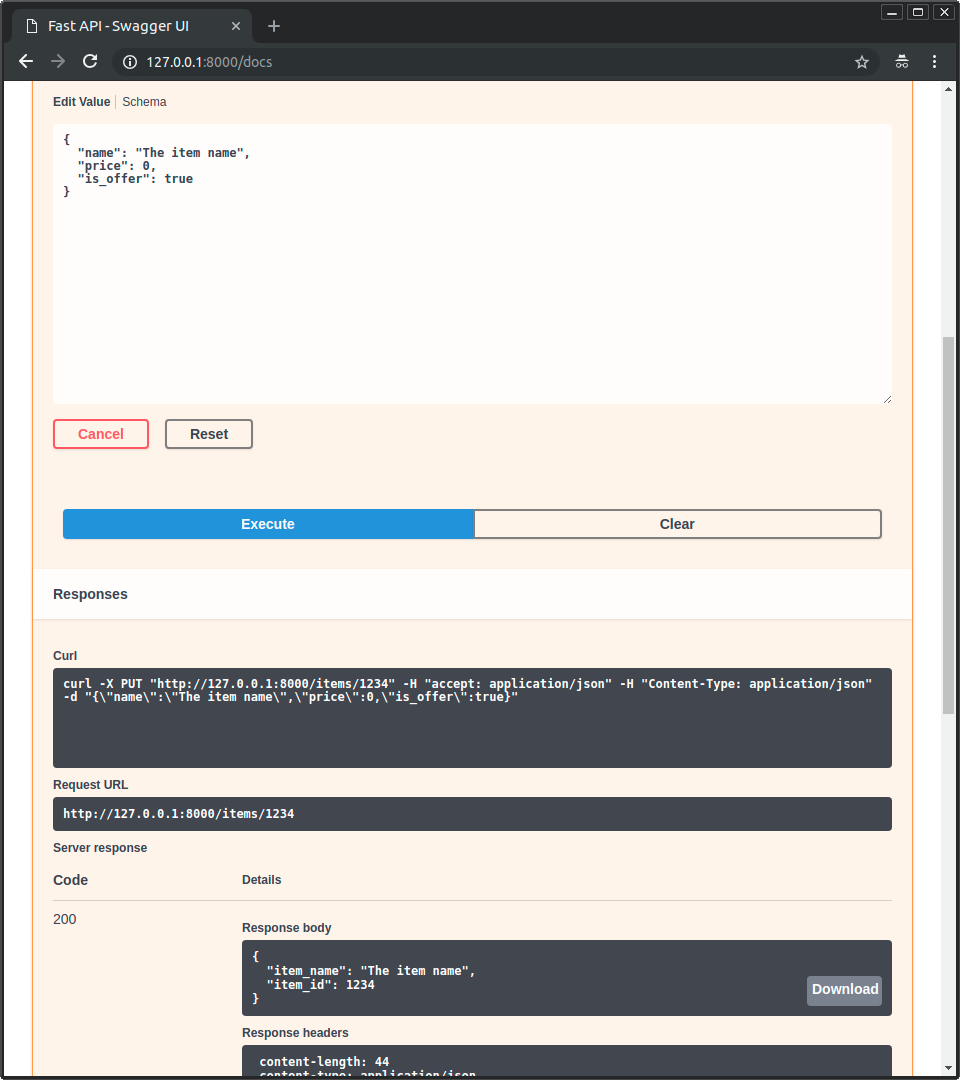

|

|

|

|

|

|

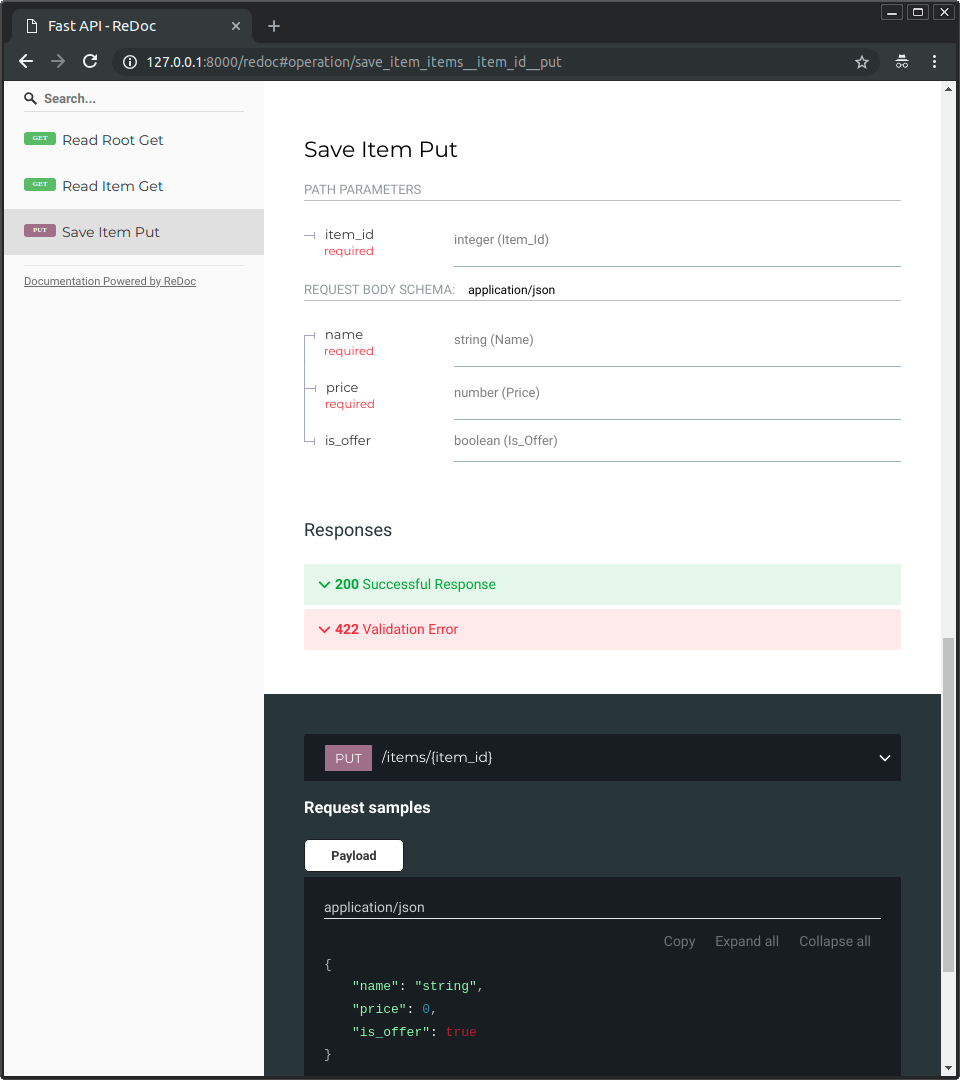

|

|

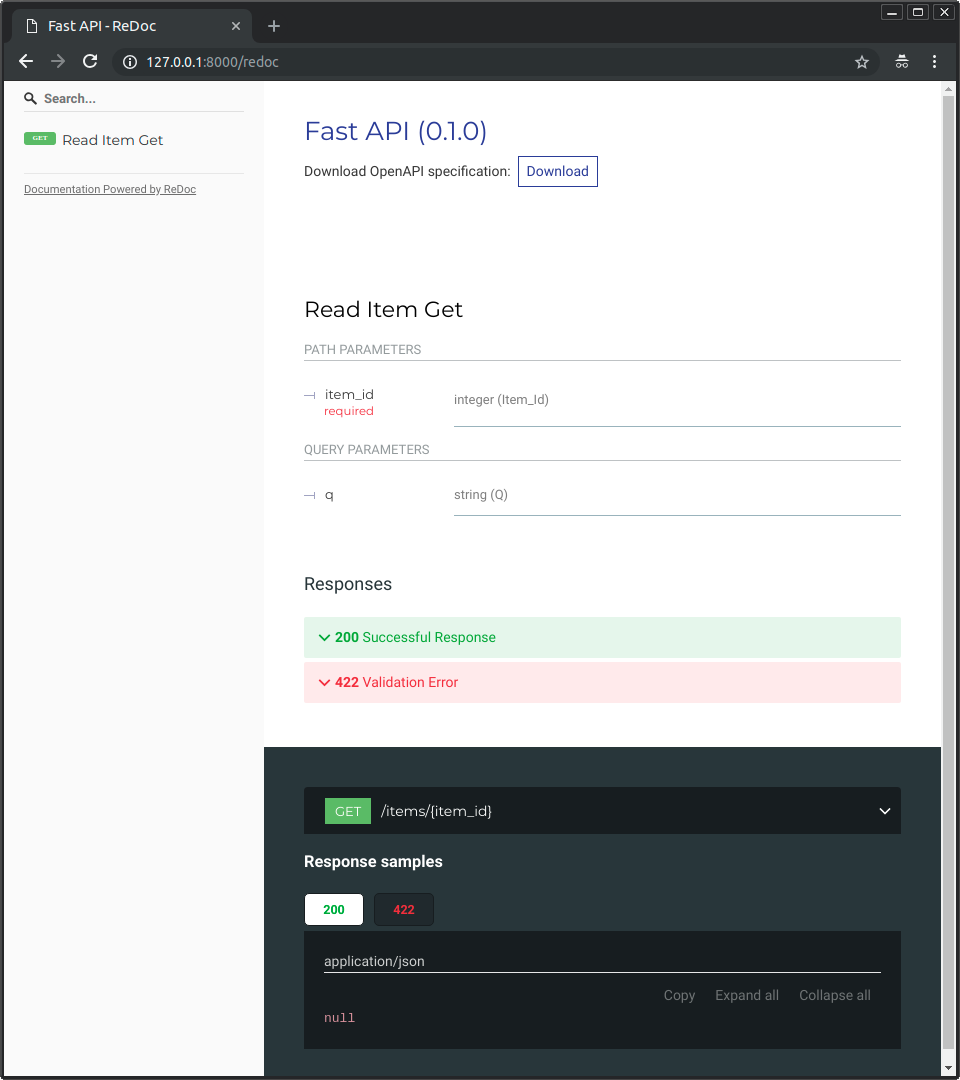

|

|

|

|

|

|

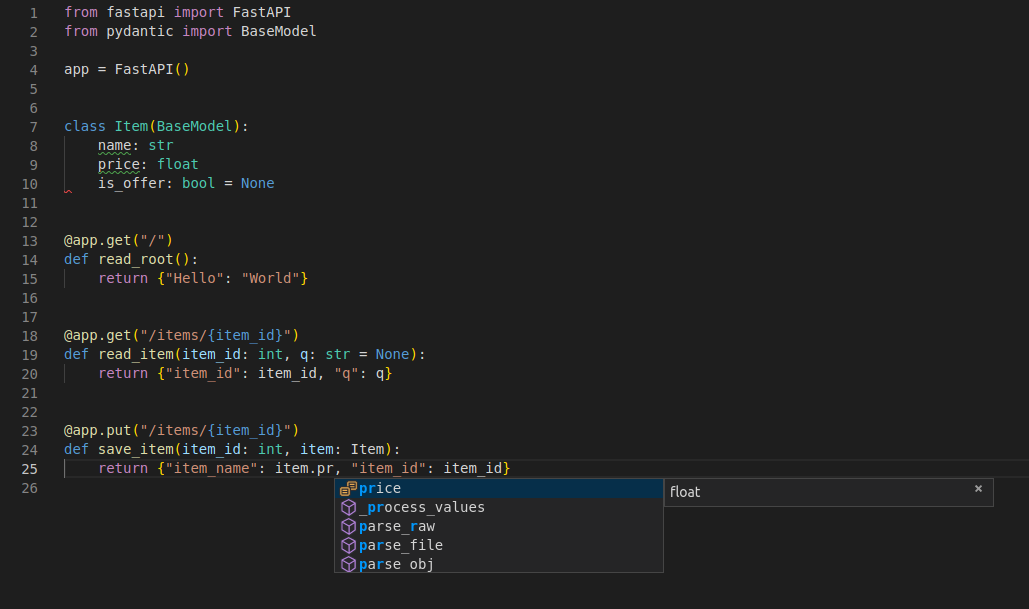

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

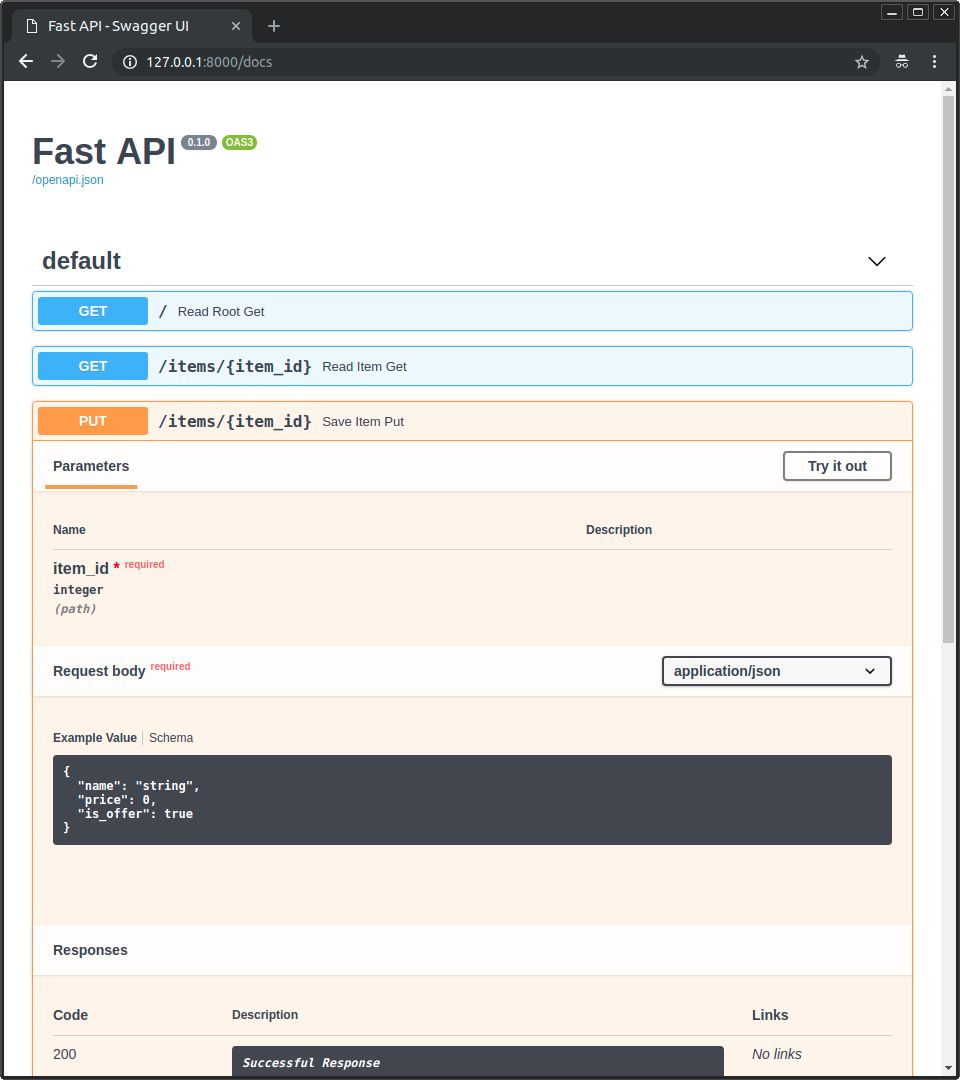

|

|

|

|

|

|

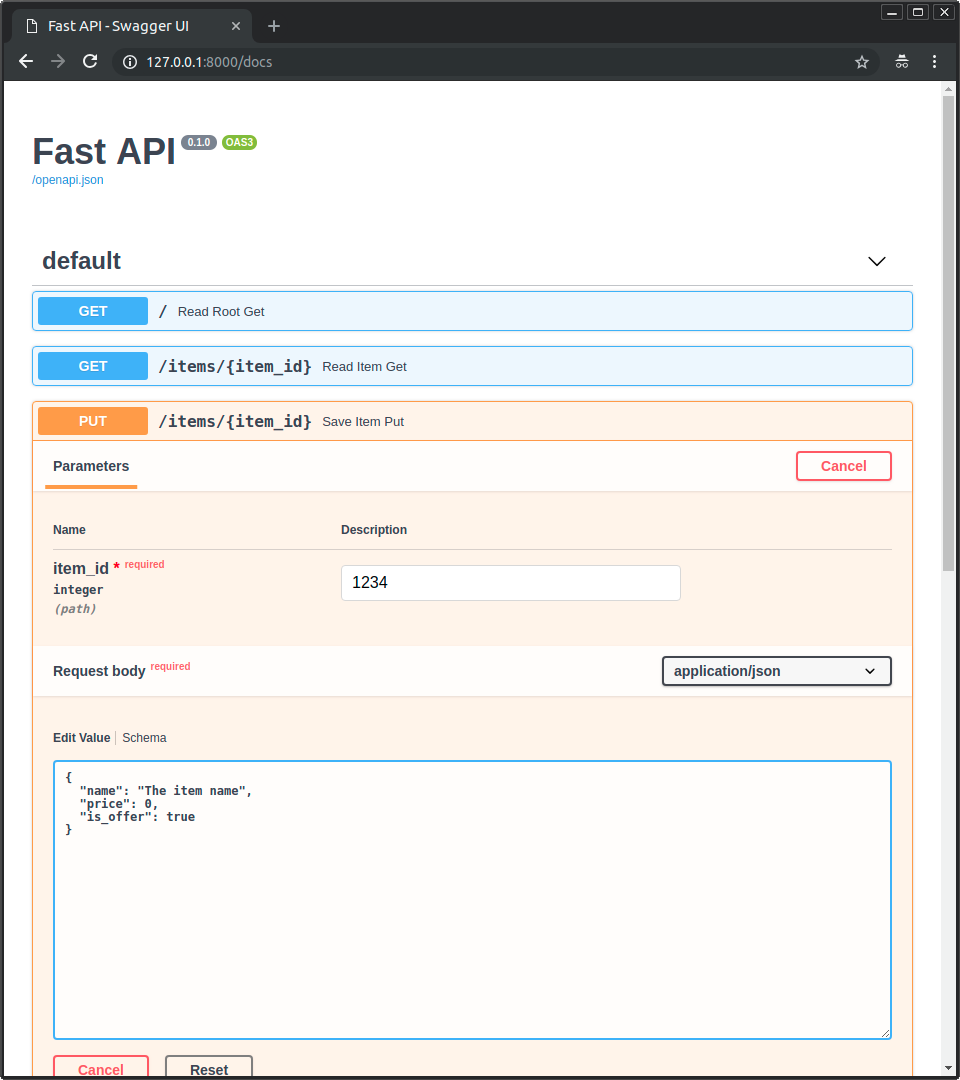

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Metadata-Version: 2.1

|

| 2 |

+

Name: fastapi

|

| 3 |

+

Version: 0.103.2

|

| 4 |

+

Summary: FastAPI framework, high performance, easy to learn, fast to code, ready for production

|

| 5 |

+

Project-URL: Homepage, https://github.com/tiangolo/fastapi

|

| 6 |

+

Project-URL: Documentation, https://fastapi.tiangolo.com/

|

| 7 |

+

Project-URL: Repository, https://github.com/tiangolo/fastapi

|

| 8 |

+

Author-email: Sebastián Ramírez <tiangolo@gmail.com>

|

| 9 |

+

License-Expression: MIT

|

| 10 |

+

License-File: LICENSE

|

| 11 |

+

Classifier: Development Status :: 4 - Beta

|

| 12 |

+

Classifier: Environment :: Web Environment

|

| 13 |

+

Classifier: Framework :: AsyncIO

|

| 14 |

+

Classifier: Framework :: FastAPI

|

| 15 |

+

Classifier: Framework :: Pydantic

|

| 16 |

+

Classifier: Framework :: Pydantic :: 1

|

| 17 |

+

Classifier: Intended Audience :: Developers

|

| 18 |

+

Classifier: Intended Audience :: Information Technology

|

| 19 |

+

Classifier: Intended Audience :: System Administrators

|

| 20 |

+

Classifier: License :: OSI Approved :: MIT License

|

| 21 |

+

Classifier: Operating System :: OS Independent

|

| 22 |

+

Classifier: Programming Language :: Python

|

| 23 |

+

Classifier: Programming Language :: Python :: 3

|

| 24 |

+

Classifier: Programming Language :: Python :: 3 :: Only

|

| 25 |

+

Classifier: Programming Language :: Python :: 3.7

|

| 26 |

+

Classifier: Programming Language :: Python :: 3.8

|

| 27 |

+

Classifier: Programming Language :: Python :: 3.9

|

| 28 |

+

Classifier: Programming Language :: Python :: 3.10

|

| 29 |

+

Classifier: Programming Language :: Python :: 3.11

|

| 30 |

+

Classifier: Topic :: Internet

|

| 31 |

+

Classifier: Topic :: Internet :: WWW/HTTP

|

| 32 |

+

Classifier: Topic :: Internet :: WWW/HTTP :: HTTP Servers

|

| 33 |

+

Classifier: Topic :: Software Development

|

| 34 |

+

Classifier: Topic :: Software Development :: Libraries

|

| 35 |

+

Classifier: Topic :: Software Development :: Libraries :: Application Frameworks

|

| 36 |

+

Classifier: Topic :: Software Development :: Libraries :: Python Modules

|

| 37 |

+

Classifier: Typing :: Typed

|

| 38 |

+

Requires-Python: >=3.7

|

| 39 |

+

Requires-Dist: anyio<4.0.0,>=3.7.1

|

| 40 |

+

Requires-Dist: pydantic!=1.8,!=1.8.1,!=2.0.0,!=2.0.1,!=2.1.0,<3.0.0,>=1.7.4

|

| 41 |

+

Requires-Dist: starlette<0.28.0,>=0.27.0

|

| 42 |

+

Requires-Dist: typing-extensions>=4.5.0

|

| 43 |

+

Provides-Extra: all

|

| 44 |

+

Requires-Dist: email-validator>=2.0.0; extra == 'all'

|

| 45 |

+

Requires-Dist: httpx>=0.23.0; extra == 'all'

|

| 46 |

+

Requires-Dist: itsdangerous>=1.1.0; extra == 'all'

|

| 47 |

+

Requires-Dist: jinja2>=2.11.2; extra == 'all'

|

| 48 |

+

Requires-Dist: orjson>=3.2.1; extra == 'all'

|

| 49 |

+

Requires-Dist: pydantic-extra-types>=2.0.0; extra == 'all'

|

| 50 |

+

Requires-Dist: pydantic-settings>=2.0.0; extra == 'all'

|

| 51 |

+

Requires-Dist: python-multipart>=0.0.5; extra == 'all'

|

| 52 |

+

Requires-Dist: pyyaml>=5.3.1; extra == 'all'

|

| 53 |

+

Requires-Dist: ujson!=4.0.2,!=4.1.0,!=4.2.0,!=4.3.0,!=5.0.0,!=5.1.0,>=4.0.1; extra == 'all'

|

| 54 |

+

Requires-Dist: uvicorn[standard]>=0.12.0; extra == 'all'

|

| 55 |

+

Description-Content-Type: text/markdown

|

| 56 |

+

|

| 57 |

+

<p align="center">

|

| 58 |

+

<a href="https://fastapi.tiangolo.com"><img src="https://fastapi.tiangolo.com/img/logo-margin/logo-teal.png" alt="FastAPI"></a>

|

| 59 |

+

</p>

|

| 60 |

+

<p align="center">

|

| 61 |

+

<em>FastAPI framework, high performance, easy to learn, fast to code, ready for production</em>

|

| 62 |

+

</p>

|

| 63 |

+

<p align="center">

|

| 64 |

+

<a href="https://github.com/tiangolo/fastapi/actions?query=workflow%3ATest+event%3Apush+branch%3Amaster" target="_blank">

|

| 65 |

+

<img src="https://github.com/tiangolo/fastapi/workflows/Test/badge.svg?event=push&branch=master" alt="Test">

|

| 66 |

+

</a>

|

| 67 |

+

<a href="https://coverage-badge.samuelcolvin.workers.dev/redirect/tiangolo/fastapi" target="_blank">

|

| 68 |

+

<img src="https://coverage-badge.samuelcolvin.workers.dev/tiangolo/fastapi.svg" alt="Coverage">

|

| 69 |

+

</a>

|

| 70 |

+

<a href="https://pypi.org/project/fastapi" target="_blank">

|

| 71 |

+

<img src="https://img.shields.io/pypi/v/fastapi?color=%2334D058&label=pypi%20package" alt="Package version">

|

| 72 |

+

</a>

|

| 73 |

+

<a href="https://pypi.org/project/fastapi" target="_blank">

|

| 74 |

+

<img src="https://img.shields.io/pypi/pyversions/fastapi.svg?color=%2334D058" alt="Supported Python versions">

|

| 75 |

+

</a>

|

| 76 |

+

</p>

|

| 77 |

+

|

| 78 |

+

---

|

| 79 |

+

|

| 80 |

+

**Documentation**: <a href="https://fastapi.tiangolo.com" target="_blank">https://fastapi.tiangolo.com</a>

|

| 81 |

+

|

| 82 |

+

**Source Code**: <a href="https://github.com/tiangolo/fastapi" target="_blank">https://github.com/tiangolo/fastapi</a>

|

| 83 |

+

|

| 84 |

+

---

|

| 85 |

+

|

| 86 |

+

FastAPI is a modern, fast (high-performance), web framework for building APIs with Python 3.7+ based on standard Python type hints.

|

| 87 |

+

|

| 88 |

+

The key features are:

|

| 89 |

+

|

| 90 |

+

* **Fast**: Very high performance, on par with **NodeJS** and **Go** (thanks to Starlette and Pydantic). [One of the fastest Python frameworks available](#performance).

|

| 91 |

+

* **Fast to code**: Increase the speed to develop features by about 200% to 300%. *

|

| 92 |

+

* **Fewer bugs**: Reduce about 40% of human (developer) induced errors. *

|

| 93 |

+

* **Intuitive**: Great editor support. <abbr title="also known as auto-complete, autocompletion, IntelliSense">Completion</abbr> everywhere. Less time debugging.

|

| 94 |

+

* **Easy**: Designed to be easy to use and learn. Less time reading docs.

|

| 95 |

+

* **Short**: Minimize code duplication. Multiple features from each parameter declaration. Fewer bugs.

|

| 96 |

+

* **Robust**: Get production-ready code. With automatic interactive documentation.

|

| 97 |

+

* **Standards-based**: Based on (and fully compatible with) the open standards for APIs: <a href="https://github.com/OAI/OpenAPI-Specification" class="external-link" target="_blank">OpenAPI</a> (previously known as Swagger) and <a href="https://json-schema.org/" class="external-link" target="_blank">JSON Schema</a>.

|

| 98 |

+

|

| 99 |

+

<small>* estimation based on tests on an internal development team, building production applications.</small>

|

| 100 |

+

|

| 101 |

+

## Sponsors

|

| 102 |

+

|

| 103 |

+

<!-- sponsors -->

|

| 104 |

+

|

| 105 |

+

<a href="https://cryptapi.io/" target="_blank" title="CryptAPI: Your easy to use, secure and privacy oriented payment gateway."><img src="https://fastapi.tiangolo.com/img/sponsors/cryptapi.svg"></a>

|

| 106 |

+

<a href="https://platform.sh/try-it-now/?utm_source=fastapi-signup&utm_medium=banner&utm_campaign=FastAPI-signup-June-2023" target="_blank" title="Build, run and scale your apps on a modern, reliable, and secure PaaS."><img src="https://fastapi.tiangolo.com/img/sponsors/platform-sh.png"></a>

|

| 107 |

+

<a href="https://www.buildwithfern.com/?utm_source=tiangolo&utm_medium=website&utm_campaign=main-badge" target="_blank" title="Fern | SDKs and API docs"><img src="https://fastapi.tiangolo.com/img/sponsors/fern.svg"></a>

|

| 108 |

+

<a href="https://www.porter.run" target="_blank" title="Deploy FastAPI on AWS with a few clicks"><img src="https://fastapi.tiangolo.com/img/sponsors/porter.png"></a>

|

| 109 |

+

<a href="https://bump.sh/fastapi?utm_source=fastapi&utm_medium=referral&utm_campaign=sponsor" target="_blank" title="Automate FastAPI documentation generation with Bump.sh"><img src="https://fastapi.tiangolo.com/img/sponsors/bump-sh.png"></a>

|

| 110 |

+

<a href="https://www.deta.sh/?ref=fastapi" target="_blank" title="The launchpad for all your (team's) ideas"><img src="https://fastapi.tiangolo.com/img/sponsors/deta.svg"></a>

|

| 111 |

+

<a href="https://training.talkpython.fm/fastapi-courses" target="_blank" title="FastAPI video courses on demand from people you trust"><img src="https://fastapi.tiangolo.com/img/sponsors/talkpython.png"></a>

|

| 112 |

+

<a href="https://testdriven.io/courses/tdd-fastapi/" target="_blank" title="Learn to build high-quality web apps with best practices"><img src="https://fastapi.tiangolo.com/img/sponsors/testdriven.svg"></a>

|

| 113 |

+

<a href="https://github.com/deepset-ai/haystack/" target="_blank" title="Build powerful search from composable, open source building blocks"><img src="https://fastapi.tiangolo.com/img/sponsors/haystack-fastapi.svg"></a>

|

| 114 |

+

<a href="https://careers.powens.com/" target="_blank" title="Powens is hiring!"><img src="https://fastapi.tiangolo.com/img/sponsors/powens.png"></a>

|

| 115 |

+

<a href="https://databento.com/" target="_blank" title="Pay as you go for market data"><img src="https://fastapi.tiangolo.com/img/sponsors/databento.svg"></a>

|

| 116 |

+

<a href="https://speakeasyapi.dev?utm_source=fastapi+repo&utm_medium=github+sponsorship" target="_blank" title="SDKs for your API | Speakeasy"><img src="https://fastapi.tiangolo.com/img/sponsors/speakeasy.png"></a>

|

| 117 |

+

<a href="https://www.svix.com/" target="_blank" title="Svix - Webhooks as a service"><img src="https://fastapi.tiangolo.com/img/sponsors/svix.svg"></a>

|

| 118 |

+

|

| 119 |

+

<!-- /sponsors -->

|

| 120 |

+

|

| 121 |

+

<a href="https://fastapi.tiangolo.com/fastapi-people/#sponsors" class="external-link" target="_blank">Other sponsors</a>

|

| 122 |

+

|

| 123 |

+

## Opinions

|

| 124 |

+

|

| 125 |

+

"_[...] I'm using **FastAPI** a ton these days. [...] I'm actually planning to use it for all of my team's **ML services at Microsoft**. Some of them are getting integrated into the core **Windows** product and some **Office** products._"

|

| 126 |

+

|

| 127 |

+

<div style="text-align: right; margin-right: 10%;">Kabir Khan - <strong>Microsoft</strong> <a href="https://github.com/tiangolo/fastapi/pull/26" target="_blank"><small>(ref)</small></a></div>

|

| 128 |

+

|

| 129 |

+

---

|

| 130 |

+

|

| 131 |

+

"_We adopted the **FastAPI** library to spawn a **REST** server that can be queried to obtain **predictions**. [for Ludwig]_"

|

| 132 |

+

|

| 133 |

+

<div style="text-align: right; margin-right: 10%;">Piero Molino, Yaroslav Dudin, and Sai Sumanth Miryala - <strong>Uber</strong> <a href="https://eng.uber.com/ludwig-v0-2/" target="_blank"><small>(ref)</small></a></div>

|

| 134 |

+

|

| 135 |

+

---

|

| 136 |

+

|

| 137 |

+

"_**Netflix** is pleased to announce the open-source release of our **crisis management** orchestration framework: **Dispatch**! [built with **FastAPI**]_"

|

| 138 |

+

|

| 139 |

+

<div style="text-align: right; margin-right: 10%;">Kevin Glisson, Marc Vilanova, Forest Monsen - <strong>Netflix</strong> <a href="https://netflixtechblog.com/introducing-dispatch-da4b8a2a8072" target="_blank"><small>(ref)</small></a></div>

|

| 140 |

+

|

| 141 |

+

---

|

| 142 |

+

|

| 143 |

+

"_I’m over the moon excited about **FastAPI**. It’s so fun!_"

|

| 144 |

+

|

| 145 |

+

<div style="text-align: right; margin-right: 10%;">Brian Okken - <strong><a href="https://pythonbytes.fm/episodes/show/123/time-to-right-the-py-wrongs?time_in_sec=855" target="_blank">Python Bytes</a> podcast host</strong> <a href="https://twitter.com/brianokken/status/1112220079972728832" target="_blank"><small>(ref)</small></a></div>

|

| 146 |

+

|

| 147 |

+

---

|

| 148 |

+

|

| 149 |

+

"_Honestly, what you've built looks super solid and polished. In many ways, it's what I wanted **Hug** to be - it's really inspiring to see someone build that._"

|

| 150 |

+

|

| 151 |

+

<div style="text-align: right; margin-right: 10%;">Timothy Crosley - <strong><a href="https://www.hug.rest/" target="_blank">Hug</a> creator</strong> <a href="https://news.ycombinator.com/item?id=19455465" target="_blank"><small>(ref)</small></a></div>

|

| 152 |

+

|

| 153 |

+

---

|

| 154 |

+

|

| 155 |

+

"_If you're looking to learn one **modern framework** for building REST APIs, check out **FastAPI** [...] It's fast, easy to use and easy to learn [...]_"

|

| 156 |

+

|

| 157 |

+

"_We've switched over to **FastAPI** for our **APIs** [...] I think you'll like it [...]_"

|

| 158 |

+

|

| 159 |

+

<div style="text-align: right; margin-right: 10%;">Ines Montani - Matthew Honnibal - <strong><a href="https://explosion.ai" target="_blank">Explosion AI</a> founders - <a href="https://spacy.io" target="_blank">spaCy</a> creators</strong> <a href="https://twitter.com/_inesmontani/status/1144173225322143744" target="_blank"><small>(ref)</small></a> - <a href="https://twitter.com/honnibal/status/1144031421859655680" target="_blank"><small>(ref)</small></a></div>

|

| 160 |

+

|

| 161 |

+

---

|

| 162 |

+

|

| 163 |

+

"_If anyone is looking to build a production Python API, I would highly recommend **FastAPI**. It is **beautifully designed**, **simple to use** and **highly scalable**, it has become a **key component** in our API first development strategy and is driving many automations and services such as our Virtual TAC Engineer._"

|

| 164 |

+

|

| 165 |

+

<div style="text-align: right; margin-right: 10%;">Deon Pillsbury - <strong>Cisco</strong> <a href="https://www.linkedin.com/posts/deonpillsbury_cisco-cx-python-activity-6963242628536487936-trAp/" target="_blank"><small>(ref)</small></a></div>

|

| 166 |

+

|

| 167 |

+

---

|

| 168 |

+

|

| 169 |

+

## **Typer**, the FastAPI of CLIs

|

| 170 |

+

|

| 171 |

+

<a href="https://typer.tiangolo.com" target="_blank"><img src="https://typer.tiangolo.com/img/logo-margin/logo-margin-vector.svg" style="width: 20%;"></a>

|

| 172 |

+

|

| 173 |

+

If you are building a <abbr title="Command Line Interface">CLI</abbr> app to be used in the terminal instead of a web API, check out <a href="https://typer.tiangolo.com/" class="external-link" target="_blank">**Typer**</a>.

|

| 174 |

+

|

| 175 |

+

**Typer** is FastAPI's little sibling. And it's intended to be the **FastAPI of CLIs**. ⌨️ 🚀

|

| 176 |

+

|

| 177 |

+

## Requirements

|

| 178 |

+

|

| 179 |

+

Python 3.7+

|

| 180 |

+

|

| 181 |

+

FastAPI stands on the shoulders of giants:

|

| 182 |

+

|

| 183 |

+

* <a href="https://www.starlette.io/" class="external-link" target="_blank">Starlette</a> for the web parts.

|

| 184 |

+

* <a href="https://pydantic-docs.helpmanual.io/" class="external-link" target="_blank">Pydantic</a> for the data parts.

|

| 185 |

+

|

| 186 |

+

## Installation

|

| 187 |

+

|

| 188 |

+

<div class="termy">

|

| 189 |

+

|

| 190 |

+

```console

|

| 191 |

+

$ pip install fastapi

|

| 192 |

+

|

| 193 |

+

---> 100%

|

| 194 |

+

```

|

| 195 |

+

|

| 196 |

+

</div>

|

| 197 |

+

|

| 198 |

+

You will also need an ASGI server, for production such as <a href="https://www.uvicorn.org" class="external-link" target="_blank">Uvicorn</a> or <a href="https://github.com/pgjones/hypercorn" class="external-link" target="_blank">Hypercorn</a>.

|

| 199 |

+

|

| 200 |

+

<div class="termy">

|

| 201 |

+

|

| 202 |

+

```console

|

| 203 |

+

$ pip install "uvicorn[standard]"

|

| 204 |

+

|

| 205 |

+

---> 100%

|

| 206 |

+

```

|

| 207 |

+

|

| 208 |

+

</div>

|

| 209 |

+

|

| 210 |

+

## Example

|

| 211 |

+

|

| 212 |

+

### Create it

|

| 213 |

+

|

| 214 |

+

* Create a file `main.py` with:

|

| 215 |

+

|

| 216 |

+

```Python

|

| 217 |

+

from typing import Union

|

| 218 |

+

|

| 219 |

+

from fastapi import FastAPI

|

| 220 |

+

|

| 221 |

+

app = FastAPI()

|

| 222 |

+

|

| 223 |

+

|

| 224 |

+

@app.get("/")

|

| 225 |

+

def read_root():

|

| 226 |

+

return {"Hello": "World"}

|

| 227 |

+

|

| 228 |

+

|

| 229 |

+

@app.get("/items/{item_id}")

|

| 230 |

+

def read_item(item_id: int, q: Union[str, None] = None):

|

| 231 |

+

return {"item_id": item_id, "q": q}

|

| 232 |

+

```

|

| 233 |

+

|

| 234 |

+

<details markdown="1">

|

| 235 |

+

<summary>Or use <code>async def</code>...</summary>

|

| 236 |

+

|

| 237 |

+

If your code uses `async` / `await`, use `async def`:

|

| 238 |

+

|

| 239 |

+

```Python hl_lines="9 14"

|

| 240 |

+

from typing import Union

|

| 241 |

+

|

| 242 |

+

from fastapi import FastAPI

|

| 243 |

+

|

| 244 |

+

app = FastAPI()

|

| 245 |

+

|

| 246 |

+

|

| 247 |

+

@app.get("/")

|

| 248 |

+

async def read_root():

|

| 249 |

+

return {"Hello": "World"}

|

| 250 |

+

|

| 251 |

+

|

| 252 |

+

@app.get("/items/{item_id}")

|

| 253 |

+

async def read_item(item_id: int, q: Union[str, None] = None):

|

| 254 |

+

return {"item_id": item_id, "q": q}

|

| 255 |

+

```

|

| 256 |

+

|

| 257 |

+

**Note**:

|

| 258 |

+

|

| 259 |

+

If you don't know, check the _"In a hurry?"_ section about <a href="https://fastapi.tiangolo.com/async/#in-a-hurry" target="_blank">`async` and `await` in the docs</a>.

|

| 260 |

+

|

| 261 |

+

</details>

|

| 262 |

+

|

| 263 |

+

### Run it

|

| 264 |

+

|

| 265 |

+

Run the server with:

|

| 266 |

+

|

| 267 |

+

<div class="termy">

|

| 268 |

+

|

| 269 |

+

```console

|

| 270 |

+

$ uvicorn main:app --reload

|

| 271 |

+

|

| 272 |

+

INFO: Uvicorn running on http://127.0.0.1:8000 (Press CTRL+C to quit)

|

| 273 |

+

INFO: Started reloader process [28720]

|

| 274 |

+

INFO: Started server process [28722]

|

| 275 |

+

INFO: Waiting for application startup.

|

| 276 |

+

INFO: Application startup complete.

|

| 277 |

+

```

|

| 278 |

+

|

| 279 |

+

</div>

|

| 280 |

+

|

| 281 |

+

<details markdown="1">

|

| 282 |

+

<summary>About the command <code>uvicorn main:app --reload</code>...</summary>

|

| 283 |

+

|

| 284 |

+

The command `uvicorn main:app` refers to:

|

| 285 |

+

|

| 286 |

+

* `main`: the file `main.py` (the Python "module").

|

| 287 |

+

* `app`: the object created inside of `main.py` with the line `app = FastAPI()`.

|

| 288 |

+

* `--reload`: make the server restart after code changes. Only do this for development.

|

| 289 |

+

|

| 290 |

+

</details>

|

| 291 |

+

|

| 292 |

+

### Check it

|

| 293 |

+

|

| 294 |

+

Open your browser at <a href="http://127.0.0.1:8000/items/5?q=somequery" class="external-link" target="_blank">http://127.0.0.1:8000/items/5?q=somequery</a>.

|

| 295 |

+

|

| 296 |

+

You will see the JSON response as:

|

| 297 |

+

|

| 298 |

+

```JSON

|

| 299 |

+

{"item_id": 5, "q": "somequery"}

|

| 300 |

+

```

|

| 301 |

+

|

| 302 |

+

You already created an API that:

|

| 303 |

+

|

| 304 |

+

* Receives HTTP requests in the _paths_ `/` and `/items/{item_id}`.

|

| 305 |

+

* Both _paths_ take `GET` <em>operations</em> (also known as HTTP _methods_).

|

| 306 |

+

* The _path_ `/items/{item_id}` has a _path parameter_ `item_id` that should be an `int`.

|

| 307 |

+

* The _path_ `/items/{item_id}` has an optional `str` _query parameter_ `q`.

|

| 308 |

+

|

| 309 |

+

### Interactive API docs

|

| 310 |

+

|

| 311 |

+

Now go to <a href="http://127.0.0.1:8000/docs" class="external-link" target="_blank">http://127.0.0.1:8000/docs</a>.

|

| 312 |

+

|

| 313 |

+

You will see the automatic interactive API documentation (provided by <a href="https://github.com/swagger-api/swagger-ui" class="external-link" target="_blank">Swagger UI</a>):

|

| 314 |

+

|

| 315 |

+

|

| 316 |

+

|

| 317 |

+

### Alternative API docs

|

| 318 |

+

|

| 319 |

+

And now, go to <a href="http://127.0.0.1:8000/redoc" class="external-link" target="_blank">http://127.0.0.1:8000/redoc</a>.

|

| 320 |

+

|

| 321 |

+

You will see the alternative automatic documentation (provided by <a href="https://github.com/Rebilly/ReDoc" class="external-link" target="_blank">ReDoc</a>):

|

| 322 |

+

|

| 323 |

+

|

| 324 |

+

|

| 325 |

+

## Example upgrade

|

| 326 |

+

|

| 327 |

+

Now modify the file `main.py` to receive a body from a `PUT` request.

|

| 328 |

+

|

| 329 |

+

Declare the body using standard Python types, thanks to Pydantic.

|

| 330 |

+

|

| 331 |

+

```Python hl_lines="4 9-12 25-27"

|

| 332 |

+

from typing import Union

|

| 333 |

+

|

| 334 |

+

from fastapi import FastAPI

|

| 335 |

+

from pydantic import BaseModel

|

| 336 |

+

|

| 337 |

+

app = FastAPI()

|

| 338 |

+

|

| 339 |

+

|

| 340 |

+

class Item(BaseModel):

|

| 341 |

+

name: str

|

| 342 |

+

price: float

|

| 343 |

+

is_offer: Union[bool, None] = None

|

| 344 |

+

|

| 345 |

+

|

| 346 |

+

@app.get("/")

|

| 347 |

+

def read_root():

|

| 348 |

+

return {"Hello": "World"}

|

| 349 |

+

|

| 350 |

+

|

| 351 |

+

@app.get("/items/{item_id}")

|

| 352 |

+

def read_item(item_id: int, q: Union[str, None] = None):

|

| 353 |

+

return {"item_id": item_id, "q": q}

|

| 354 |

+

|

| 355 |

+

|

| 356 |

+

@app.put("/items/{item_id}")

|

| 357 |

+

def update_item(item_id: int, item: Item):

|

| 358 |

+

return {"item_name": item.name, "item_id": item_id}

|

| 359 |

+

```

|

| 360 |

+

|

| 361 |

+

The server should reload automatically (because you added `--reload` to the `uvicorn` command above).

|

| 362 |

+

|

| 363 |

+

### Interactive API docs upgrade

|

| 364 |

+

|

| 365 |

+

Now go to <a href="http://127.0.0.1:8000/docs" class="external-link" target="_blank">http://127.0.0.1:8000/docs</a>.

|

| 366 |

+

|

| 367 |

+

* The interactive API documentation will be automatically updated, including the new body:

|

| 368 |

+

|

| 369 |

+

|

| 370 |

+

|

| 371 |

+

* Click on the button "Try it out", it allows you to fill the parameters and directly interact with the API:

|

| 372 |

+

|

| 373 |

+

|

| 374 |

+

|

| 375 |

+

* Then click on the "Execute" button, the user interface will communicate with your API, send the parameters, get the results and show them on the screen:

|

| 376 |

+

|

| 377 |

+

|

| 378 |

+

|

| 379 |

+

### Alternative API docs upgrade

|

| 380 |

+

|

| 381 |

+

And now, go to <a href="http://127.0.0.1:8000/redoc" class="external-link" target="_blank">http://127.0.0.1:8000/redoc</a>.

|

| 382 |

+

|

| 383 |

+

* The alternative documentation will also reflect the new query parameter and body:

|

| 384 |

+

|

| 385 |

+

|

| 386 |

+

|

| 387 |

+

### Recap

|

| 388 |

+

|

| 389 |

+

In summary, you declare **once** the types of parameters, body, etc. as function parameters.

|

| 390 |

+

|

| 391 |

+

You do that with standard modern Python types.

|

| 392 |

+

|

| 393 |

+

You don't have to learn a new syntax, the methods or classes of a specific library, etc.

|

| 394 |

+

|

| 395 |

+

Just standard **Python 3.7+**.

|

| 396 |

+

|

| 397 |

+

For example, for an `int`:

|

| 398 |

+

|

| 399 |

+

```Python

|

| 400 |

+

item_id: int

|

| 401 |

+

```

|

| 402 |

+

|

| 403 |

+

or for a more complex `Item` model:

|

| 404 |

+

|

| 405 |

+

```Python

|

| 406 |

+

item: Item

|

| 407 |

+

```

|

| 408 |

+

|

| 409 |

+

...and with that single declaration you get:

|

| 410 |

+

|

| 411 |

+

* Editor support, including:

|

| 412 |

+

* Completion.

|

| 413 |

+

* Type checks.

|

| 414 |

+

* Validation of data:

|

| 415 |

+

* Automatic and clear errors when the data is invalid.

|

| 416 |

+

* Validation even for deeply nested JSON objects.

|

| 417 |

+

* <abbr title="also known as: serialization, parsing, marshalling">Conversion</abbr> of input data: coming from the network to Python data and types. Reading from:

|

| 418 |

+

* JSON.

|

| 419 |

+

* Path parameters.

|

| 420 |

+

* Query parameters.

|

| 421 |

+

* Cookies.

|

| 422 |

+

* Headers.

|

| 423 |

+

* Forms.

|

| 424 |

+

* Files.

|

| 425 |

+

* <abbr title="also known as: serialization, parsing, marshalling">Conversion</abbr> of output data: converting from Python data and types to network data (as JSON):

|

| 426 |

+

* Convert Python types (`str`, `int`, `float`, `bool`, `list`, etc).

|

| 427 |

+

* `datetime` objects.

|

| 428 |

+

* `UUID` objects.

|

| 429 |

+

* Database models.

|

| 430 |

+

* ...and many more.

|

| 431 |

+

* Automatic interactive API documentation, including 2 alternative user interfaces:

|

| 432 |

+

* Swagger UI.

|

| 433 |

+

* ReDoc.

|

| 434 |

+

|

| 435 |

+

---

|

| 436 |

+

|

| 437 |

+

Coming back to the previous code example, **FastAPI** will:

|

| 438 |

+

|

| 439 |

+

* Validate that there is an `item_id` in the path for `GET` and `PUT` requests.

|

| 440 |

+

* Validate that the `item_id` is of type `int` for `GET` and `PUT` requests.

|

| 441 |

+

* If it is not, the client will see a useful, clear error.

|

| 442 |

+

* Check if there is an optional query parameter named `q` (as in `http://127.0.0.1:8000/items/foo?q=somequery`) for `GET` requests.

|

| 443 |

+

* As the `q` parameter is declared with `= None`, it is optional.

|

| 444 |

+

* Without the `None` it would be required (as is the body in the case with `PUT`).

|

| 445 |

+

* For `PUT` requests to `/items/{item_id}`, Read the body as JSON:

|

| 446 |

+

* Check that it has a required attribute `name` that should be a `str`.

|

| 447 |

+

* Check that it has a required attribute `price` that has to be a `float`.

|

| 448 |

+

* Check that it has an optional attribute `is_offer`, that should be a `bool`, if present.

|

| 449 |

+

* All this would also work for deeply nested JSON objects.

|

| 450 |

+

* Convert from and to JSON automatically.

|

| 451 |

+

* Document everything with OpenAPI, that can be used by:

|

| 452 |

+

* Interactive documentation systems.

|

| 453 |

+

* Automatic client code generation systems, for many languages.

|

| 454 |

+

* Provide 2 interactive documentation web interfaces directly.

|

| 455 |

+

|

| 456 |

+

---

|

| 457 |

+

|

| 458 |

+

We just scratched the surface, but you already get the idea of how it all works.

|

| 459 |

+

|

| 460 |

+

Try changing the line with:

|

| 461 |

+

|

| 462 |

+

```Python

|

| 463 |

+

return {"item_name": item.name, "item_id": item_id}

|

| 464 |

+

```

|

| 465 |

+

|

| 466 |

+

...from:

|

| 467 |

+

|

| 468 |

+

```Python

|

| 469 |

+

... "item_name": item.name ...

|

| 470 |

+

```

|

| 471 |

+

|

| 472 |

+

...to:

|

| 473 |

+

|

| 474 |

+

```Python

|

| 475 |

+

... "item_price": item.price ...

|

| 476 |

+

```

|

| 477 |

+

|

| 478 |

+

...and see how your editor will auto-complete the attributes and know their types:

|

| 479 |

+

|

| 480 |

+

|

| 481 |

+

|

| 482 |

+

For a more complete example including more features, see the <a href="https://fastapi.tiangolo.com/tutorial/">Tutorial - User Guide</a>.

|

| 483 |

+

|

| 484 |

+

**Spoiler alert**: the tutorial - user guide includes:

|

| 485 |

+

|

| 486 |

+

* Declaration of **parameters** from other different places as: **headers**, **cookies**, **form fields** and **files**.

|

| 487 |

+

* How to set **validation constraints** as `maximum_length` or `regex`.

|

| 488 |

+

* A very powerful and easy to use **<abbr title="also known as components, resources, providers, services, injectables">Dependency Injection</abbr>** system.

|

| 489 |

+

* Security and authentication, including support for **OAuth2** with **JWT tokens** and **HTTP Basic** auth.

|

| 490 |

+

* More advanced (but equally easy) techniques for declaring **deeply nested JSON models** (thanks to Pydantic).

|

| 491 |

+

* **GraphQL** integration with <a href="https://strawberry.rocks" class="external-link" target="_blank">Strawberry</a> and other libraries.

|

| 492 |

+

* Many extra features (thanks to Starlette) as:

|

| 493 |

+

* **WebSockets**

|

| 494 |

+

* extremely easy tests based on HTTPX and `pytest`

|

| 495 |

+

* **CORS**

|

| 496 |

+

* **Cookie Sessions**

|

| 497 |

+

* ...and more.

|

| 498 |

+

|

| 499 |

+

## Performance

|

| 500 |

+

|

| 501 |

+

Independent TechEmpower benchmarks show **FastAPI** applications running under Uvicorn as <a href="https://www.techempower.com/benchmarks/#section=test&runid=7464e520-0dc2-473d-bd34-dbdfd7e85911&hw=ph&test=query&l=zijzen-7" class="external-link" target="_blank">one of the fastest Python frameworks available</a>, only below Starlette and Uvicorn themselves (used internally by FastAPI). (*)

|

| 502 |

+

|

| 503 |

+

To understand more about it, see the section <a href="https://fastapi.tiangolo.com/benchmarks/" class="internal-link" target="_blank">Benchmarks</a>.

|

| 504 |

+

|

| 505 |

+

## Optional Dependencies

|

| 506 |

+

|

| 507 |

+

Used by Pydantic:

|

| 508 |

+

|

| 509 |

+

* <a href="https://github.com/JoshData/python-email-validator" target="_blank"><code>email_validator</code></a> - for email validation.

|

| 510 |

+

* <a href="https://docs.pydantic.dev/latest/usage/pydantic_settings/" target="_blank"><code>pydantic-settings</code></a> - for settings management.

|

| 511 |

+

* <a href="https://docs.pydantic.dev/latest/usage/types/extra_types/extra_types/" target="_blank"><code>pydantic-extra-types</code></a> - for extra types to be used with Pydantic.

|

| 512 |

+

|

| 513 |

+

Used by Starlette:

|

| 514 |

+

|

| 515 |

+

* <a href="https://www.python-httpx.org" target="_blank"><code>httpx</code></a> - Required if you want to use the `TestClient`.

|

| 516 |

+

* <a href="https://jinja.palletsprojects.com" target="_blank"><code>jinja2</code></a> - Required if you want to use the default template configuration.

|

| 517 |

+

* <a href="https://andrew-d.github.io/python-multipart/" target="_blank"><code>python-multipart</code></a> - Required if you want to support form <abbr title="converting the string that comes from an HTTP request into Python data">"parsing"</abbr>, with `request.form()`.

|

| 518 |

+

* <a href="https://pythonhosted.org/itsdangerous/" target="_blank"><code>itsdangerous</code></a> - Required for `SessionMiddleware` support.

|

| 519 |

+

* <a href="https://pyyaml.org/wiki/PyYAMLDocumentation" target="_blank"><code>pyyaml</code></a> - Required for Starlette's `SchemaGenerator` support (you probably don't need it with FastAPI).

|

| 520 |

+

* <a href="https://github.com/esnme/ultrajson" target="_blank"><code>ujson</code></a> - Required if you want to use `UJSONResponse`.

|

| 521 |

+

|

| 522 |

+

Used by FastAPI / Starlette:

|

| 523 |

+

|

| 524 |

+

* <a href="https://www.uvicorn.org" target="_blank"><code>uvicorn</code></a> - for the server that loads and serves your application.

|

| 525 |

+

* <a href="https://github.com/ijl/orjson" target="_blank"><code>orjson</code></a> - Required if you want to use `ORJSONResponse`.

|

| 526 |

+

|

| 527 |

+

You can install all of these with `pip install "fastapi[all]"`.

|

| 528 |

+

|

| 529 |

+

## License

|

| 530 |

+

|

| 531 |

+

This project is licensed under the terms of the MIT license.

|

evalkit_tf437/lib/python3.10/site-packages/fastapi-0.103.2.dist-info/WHEEL

ADDED

|

@@ -0,0 +1,4 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Wheel-Version: 1.0

|

| 2 |

+

Generator: hatchling 1.17.1

|

| 3 |

+

Root-Is-Purelib: true

|

| 4 |

+

Tag: py3-none-any

|

evalkit_tf437/lib/python3.10/site-packages/google_crc32c/_checksum.py

ADDED

|

@@ -0,0 +1,87 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Copyright 2020 Google LLC

|

| 2 |

+

#

|

| 3 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 4 |

+

# you may not use this file except in compliance with the License.

|

| 5 |

+

# You may obtain a copy of the License at

|

| 6 |

+

#

|

| 7 |

+

# https://www.apache.org/licenses/LICENSE-2.0

|

| 8 |

+

#

|

| 9 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 10 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 11 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 12 |

+

# See the License for the specific language governing permissions and

|

| 13 |

+

# limitations under the License.

|

| 14 |

+

|

| 15 |

+

import struct

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

class CommonChecksum(object):

|

| 19 |

+

"""Hashlib-alike helper for CRC32C operations.

|

| 20 |

+

|

| 21 |

+

This class should not be used directly and requires an update implementation.

|

| 22 |

+

|

| 23 |

+

Args:

|

| 24 |

+

initial_value (Optional[bytes]): the initial chunk of data from

|

| 25 |

+

which the CRC32C checksum is computed. Defaults to b''.

|

| 26 |

+

"""

|

| 27 |

+

__slots__ = ()

|

| 28 |

+

|

| 29 |

+

def __init__(self, initial_value=b""):

|

| 30 |

+

self._crc = 0

|

| 31 |

+

if initial_value != b"":

|

| 32 |

+

self.update(initial_value)

|

| 33 |

+

|

| 34 |

+

def update(self, data):

|

| 35 |

+

"""Update the checksum with a new chunk of data.

|

| 36 |

+

|

| 37 |

+

Args:

|

| 38 |

+

chunk (Optional[bytes]): a chunk of data used to extend

|

| 39 |

+

the CRC32C checksum.

|

| 40 |

+

"""

|

| 41 |

+

raise NotImplemented()

|

| 42 |

+

|

| 43 |

+

def digest(self):

|

| 44 |

+

"""Big-endian order, per RFC 4960.

|

| 45 |

+

|

| 46 |

+

See: https://cloud.google.com/storage/docs/json_api/v1/objects#crc32c

|

| 47 |

+

|

| 48 |

+

Returns:

|

| 49 |

+

bytes: An eight-byte digest string.

|

| 50 |

+

"""

|

| 51 |

+

return struct.pack(">L", self._crc)

|

| 52 |

+

|

| 53 |

+

def hexdigest(self):

|

| 54 |

+

"""Like :meth:`digest` except returns as a bytestring of double length.

|

| 55 |

+

|

| 56 |

+

Returns

|

| 57 |

+

bytes: A sixteen byte digest string, contaiing only hex digits.

|

| 58 |

+

"""

|

| 59 |

+

return "{:08x}".format(self._crc).encode("ascii")

|

| 60 |

+

|

| 61 |

+

def copy(self):

|

| 62 |

+

"""Create another checksum with the same CRC32C value.

|

| 63 |

+

|

| 64 |

+

Returns:

|

| 65 |

+

Checksum: the new instance.

|

| 66 |

+

"""

|

| 67 |

+

clone = self.__class__()

|

| 68 |

+

clone._crc = self._crc

|

| 69 |

+

return clone

|

| 70 |

+

|

| 71 |

+

def consume(self, stream, chunksize):

|

| 72 |

+

"""Consume chunks from a stream, extending our CRC32 checksum.

|

| 73 |

+

|

| 74 |

+

Args:

|

| 75 |

+

stream (BinaryIO): the stream to consume.

|

| 76 |

+

chunksize (int): the size of the read to perform

|

| 77 |

+

|

| 78 |

+

Returns:

|

| 79 |

+

Generator[bytes, None, None]: Iterable of the chunks read from the

|

| 80 |

+

stream.

|

| 81 |

+

"""

|

| 82 |

+

while True:

|

| 83 |

+

chunk = stream.read(chunksize)

|

| 84 |

+

if not chunk:

|

| 85 |

+

break

|

| 86 |

+

self.update(chunk)

|

| 87 |

+

yield chunk

|

evalkit_tf437/lib/python3.10/site-packages/google_crc32c/_crc32c.cpython-310-x86_64-linux-gnu.so

ADDED

|

Binary file (37.7 kB). View file

|

|

|

evalkit_tf437/lib/python3.10/site-packages/google_crc32c/cext.py

ADDED

|

@@ -0,0 +1,45 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Copyright 2020 Google LLC

|

| 2 |

+

#

|

| 3 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 4 |

+

# you may not use this file except in compliance with the License.

|

| 5 |

+

# You may obtain a copy of the License at

|

| 6 |

+

#

|

| 7 |

+

# https://www.apache.org/licenses/LICENSE-2.0

|

| 8 |

+

#

|

| 9 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 10 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 11 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 12 |

+

# See the License for the specific language governing permissions and

|

| 13 |

+

# limitations under the License.

|

| 14 |

+

|

| 15 |

+

import struct

|

| 16 |

+

|

| 17 |

+

# NOTE: ``__config__`` **must** be the first import because it (may)

|

| 18 |

+

# modify the search path used to locate shared libraries.

|

| 19 |

+

import google_crc32c.__config__ # type: ignore

|

| 20 |

+

from google_crc32c._crc32c import extend # type: ignore

|

| 21 |

+

from google_crc32c._crc32c import value # type: ignore

|

| 22 |

+

from google_crc32c._checksum import CommonChecksum

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

class Checksum(CommonChecksum):

|

| 26 |

+

"""Hashlib-alike helper for CRC32C operations.

|

| 27 |

+

|

| 28 |

+

Args:

|

| 29 |

+

initial_value (Optional[bytes]): the initial chunk of data from

|

| 30 |

+

which the CRC32C checksum is computed. Defaults to b''.

|

| 31 |

+

"""

|

| 32 |

+

|

| 33 |

+

__slots__ = ("_crc",)

|

| 34 |

+

|

| 35 |

+

def __init__(self, initial_value=b""):

|

| 36 |

+

self._crc = value(initial_value)

|

| 37 |

+

|

| 38 |

+

def update(self, chunk):

|

| 39 |

+

"""Update the checksum with a new chunk of data.

|

| 40 |

+

|

| 41 |

+

Args:

|

| 42 |

+

chunk (Optional[bytes]): a chunk of data used to extend

|

| 43 |

+

the CRC32C checksum.

|

| 44 |

+

"""

|

| 45 |

+

self._crc = extend(self._crc, chunk)

|

evalkit_tf437/lib/python3.10/site-packages/google_crc32c/py.typed

ADDED

|

@@ -0,0 +1,2 @@

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Marker file for PEP 561.

|

| 2 |

+

# The google_crc32c package uses inline types.

|

evalkit_tf437/lib/python3.10/site-packages/nvidia/cuda_cupti/include/Openmp/omp-tools.h

ADDED

|

@@ -0,0 +1,1083 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|