People with typical recognition capabilities are worse than chance: more often than not, they think AI-generated faces are real.

That's according to research published Nov. 12 in the journal Royal Society Open Science. However, the study also found that receiving just five minutes of training on common AI rendering errors greatly improves individuals' ability to spot the fakes.

"I think it was encouraging that our kind of quite short training procedure increased performance in both groups quite a lot," lead study author Katie Gray, an associate professor in psychology at the University of Reading in the U.K., told Live Science.

Surprisingly, the training increased accuracy by similar amounts in super recognizers and typical recognizers, Gray said. Because super recognizers are better at spotting fake faces at baseline, this suggests that they are relying on another set of clues, not simply rendering errors, to identify fake faces.

Gray hopes that scientists will be able to harness super recognizers' enhanced detection skills to better spot AI-generated images in the future.

"To best detect synthetic faces, it may be possible to use AI detection algorithms with a human-in-the-loop approach — where that human is a trained SR [super recognizer]," the authors wrote in the study.

Detecting deepfakes

In recent years, there has been an onslaught of AI-generated images online. Deepfake faces are created using a two-stage AI algorithm called a generative adversarial network. First, a generator produces a fake image based on real-world images, and a discriminator then scrutinizes the result to determine whether it is real or fake. With iteration, the fake images become realistic enough to get past the discriminator.
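The back-and-forth between the part that makes fakes (the generator) and the discriminator can be illustrated with a toy example. The Python sketch below is not how face generators are actually built. It assumes plain numbers in place of images, a generator that only shifts noise by an offset `b`, and a discriminator that is a single logistic unit with parameters `w` and `c`. All names, sample values and the learning rate are hypothetical, chosen only for illustration.

```python
import math

def sigmoid(t: float) -> float:
    return 1.0 / (1.0 + math.exp(-t))

def train_round(real, noise, w, c, b, lr=0.1):
    """One alternating round: update the discriminator, then the generator.

    Discriminator: D(x) = sigmoid(w * x + c), its estimate that x is real.
    Generator:     G(z) = z + b, which shifts noise by an offset b.
    (Toy stand-ins for illustration, not a real image model.)
    """
    fake = [z + b for z in noise]

    # Discriminator step: lower the loss -log D(real) - log(1 - D(fake)).
    grad_w = (sum(-(1 - sigmoid(w * x + c)) * x for x in real) / len(real)
              + sum(sigmoid(w * x + c) * x for x in fake) / len(fake))
    grad_c = (sum(-(1 - sigmoid(w * x + c)) for x in real) / len(real)
              + sum(sigmoid(w * x + c) for x in fake) / len(fake))
    w -= lr * grad_w
    c -= lr * grad_c

    # Generator step: lower -log D(fake), i.e. try to fool the updated discriminator.
    grad_b = sum(-(1 - sigmoid(w * x + c)) * w for x in fake) / len(fake)
    b -= lr * grad_b
    return w, c, b

# Hypothetical data: "real" samples cluster around 4, noise is centred on 0.
REAL = [3.5, 4.0, 4.5]
NOISE = [-0.5, 0.0, 0.5]

w, c, b = train_round(REAL, NOISE, w=0.0, c=0.0, b=0.0)
print(f"after one round: w={w:.3f}, c={c:.3f}, b={b:.3f}")
```

In this toy setup, one round first nudges the discriminator to score the real samples higher, then nudges the generator's offset toward the real data. Repeating the round many times corresponds to the iteration described above, although simple setups like this one are not guaranteed to settle.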

These algorithms have now improved to such an extent that individuals are often duped into thinking fake faces are more "real" than real faces — a phenomenon known as "hyperrealism."

As a result, researchers are now trying to design training regimens that can improve individuals' abilities to detect AI faces. These trainings point out common rendering errors in AI-generated faces, such as the face having a middle tooth, an odd-looking hairline or unnatural-looking skin texture. They also highlight that fake faces tend to be more proportional than real ones.

In theory, so-called super recognizers should be better at spotting fakes than the average person. These super recognizers are individuals who excel in facial perception and recognition tasks, in which they might be shown two photographs of unfamiliar individuals and asked to identify if they are the same person or not. But to date, few studies have examined super recognizers' abilities to detect fake faces, and whether training can improve their performance.

To fill this gap, Gray and her team ran a series of online experiments comparing the performance of a group of super recognizers to typical recognizers. The super recognizers were recruited from the Greenwich Face and Voice Recognition Laboratory volunteer database; they had performed in the top 2% of individuals in tasks where they were shown unfamiliar faces and had to remember them.

In the first experiment, an image of a face appeared onscreen and was either real or computer-generated. Participants had 10 seconds to decide if the face was real or not. Super recognizers performed no better than if they had randomly guessed, spotting only 41% of AI faces. Typical recognizers correctly identified only about 30% of fakes.

Each cohort also differed in how often they thought real faces were fake. This occurred in 39% of cases for super recognizers and in around 46% for typical recognizers.

The next experiment was identical, but included a new set of participants who received a five-minute training session in which they were shown examples of errors in AI-generated faces. They were then tested on 10 faces and provided with real-time feedback on their accuracy at detecting fakes. The final stage of the training involved a recap of rendering errors to look out for. The participants then repeated the original task from the first experiment.

Training greatly improved detection accuracy, with super recognizers spotting 64% of fake faces and typical recognizers noticing 51%. The rate at which each group inaccurately called real faces fake was about the same as in the first experiment, with super recognizers and typical recognizers rating real faces as "not real" in 37% and 49% of cases, respectively.
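One way to see how these figures relate to chance is to combine each group's success rate on fakes with its error rate on real faces. The Python sketch below does that arithmetic, assuming the test contained equal numbers of real and AI-generated faces. That assumption, the function name `balanced_accuracy` and the table layout are illustrative and not taken from the study, whose own accuracy measure may differ. On this measure, pure guessing scores 50%.

```python
# Percentages reported in the article: (fakes spotted, real faces wrongly called fake).
# The typical-recognizer figures in the first experiment are approximate.
RESULTS = {
    ("super recognizers", "untrained"): (41, 39),
    ("typical recognizers", "untrained"): (30, 46),
    ("super recognizers", "trained"): (64, 37),
    ("typical recognizers", "trained"): (51, 49),
}

def balanced_accuracy(fakes_spotted_pct, real_called_fake_pct):
    """Average of the two correct-response rates, in percent.

    Assumes equal numbers of real and fake faces (an assumption, not a study detail).
    """
    real_correct_pct = 100 - real_called_fake_pct
    return (fakes_spotted_pct + real_correct_pct) / 2

for (group, condition), (spotted, false_alarms) in RESULTS.items():
    score = balanced_accuracy(spotted, false_alarms)
    print(f"{condition:9s} {group:19s} {score:.1f}%")
```

Under that assumption, untrained super recognizers land at about 51% and untrained typical recognizers at about 42%, while the trained groups reach about 63.5% and 51%.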

Trained participants tended to take longer to scrutinize the images than the untrained participants had: typical recognizers slowed by about 1.9 seconds and super recognizers by 1.2 seconds. Gray said this is a key message to anyone who is trying to determine if a face they see is real or fake: slow down and really inspect the features.

It is worth noting, however, that the test was conducted immediately after participants completed the training, so it is unclear how long the effect lasts.

"The training cannot be considered a lasting, effective intervention, since it was not re-tested," Meike Ramon, a professor of applied data science and expert in face processing at the Bern University of Applied Sciences in Switzerland, wrote in a review of the study conducted before it went to print.

And since separate participants were used in the two experiments, we cannot be sure how much training improves an individual's detection skills, Ramon added. That would require testing the same set of people twice, before and after training.

Three decades later, another new technology has unleashed another wave of exuberance. Investors are pouring billions into any company with "AI" in its name. But there is a crucial difference between these two bubbles, which isn't always recognised. The World Wide Web existed. It was real. General Artificial Intelligence does not exist, and no one knows if or when it ever will.

In February, the CEO of OpenAI, Sam Altman, wrote on his blog that the very latest systems have only just started to "point towards" AI in its "general" sense. OpenAI may market its products as "AIs," but they are merely statistical data-crunchers, rather than "intelligences" in the sense that human beings are intelligent.

So why are investors so keen to give money to the people selling AI systems? One reason might be that AI is a mythical technology. I don't mean it is a lie. I mean it evokes a powerful, foundational story of Western culture about human powers of creation.

Perhaps investors are willing to believe AI is just around the corner because it taps into myths that are deeply ingrained in their imaginations?

The myth of Prometheus

The most relevant myth for AI is the Ancient Greek myth of Prometheus.

There are many versions of this myth, but the most famous are found in Hesiod's poems Theogony and Works and Days, and in the play Prometheus Bound, traditionally attributed to Aeschylus.

Prometheus was a Titan, a god in the Ancient Greek pantheon. He was also a criminal who stole fire from Hephaestus, the blacksmith god. Hiding the fire in a stalk of fennel, Prometheus came to earth and gave it to humankind. As punishment, he was chained to a mountain, where an eagle visited every day to eat his liver.

Prometheus' gift was not simply the gift of fire; it was the gift of intelligence. In Prometheus Bound, he declares that before his gift humans saw without seeing and heard without hearing. After his gift, humans could write, build houses, read the stars, perform mathematics, domesticate animals, construct ships, invent medicines, interpret dreams and give proper offerings to the gods.

The myth of Prometheus is a creation story with a difference. In the Hebrew Bible, God does not give Adam the power to create life. But Prometheus gives (some of) the gods' creative power to humankind.

Hesiod indicates this aspect of the myth in Theogony. In that poem, Zeus not only punishes Prometheus for the theft of fire; he punishes humankind as well. He orders Hephaestus to fire up his forge and construct the first woman, Pandora, who unleashes evil on the world.

The fire that Hephaestus uses to make Pandora is the same fire that Prometheus has given humankind.

The Greeks proposed the idea that humans are a form of artificial intelligence. Prometheus and Hephaestus use technology to manufacture men and women. As historian Adrienne Mayor reveals in her book Gods and Robots, the ancients often depicted Prometheus as a craftsman, using ordinary tools to create human beings in an ordinary workshop.

If Prometheus gave us the fire of the gods, it would seem to follow that we can use this fire to make our own intelligent beings. Such stories abound in Ancient Greek literature, from the inventor Daedalus, who created statues that came to life, to the witch Medea, who could restore youth and potency with her cunning drugs. Greek inventors also constructed mechanical computers for astronomy and remarkable moving figures powered by gravity, water and air.

The Pope and the chatbot

2,700 years have passed since Hesiod first wrote down the story of Prometheus. In the ensuing centuries, the myth has been endlessly retold, especially since the publication of Mary Shelley's Frankenstein; or the Modern Prometheus in 1818.

But the myth is not always told as fiction. Here are two historical examples where the myth of Prometheus seemed to come true.

Gerbert of Aurillac was the Prometheus of the 10th century. He was born in the early 940s CE, went to school at Aurillac Abbey, and became a monk himself. He proceeded to master every known branch of learning. In the year 999, he was elected Pope. He died in 1003 under his pontifical name, Sylvester II.

Rumours about Gerbert spread wildly across Europe. Within a century of his death, his life had already become legend. One of the most famous legends, and the most pertinent in our age of AI hype, is that of Gerbert's "brazen head." The legend was told in the 1120s by the English historian William of Malmesbury, in his well-researched and highly regarded book, Deeds of the English Kings.

Gerbert was deeply learned in astronomy, a science of prediction. Astronomers could use the astrolabe to predict the position of the stars and foresee cosmological events such as eclipses. According to William, Gerbert used his knowledge of astronomy to construct a talking head. After inspecting the movements of the stars and planets, he cast a head in bronze that could answer yes-or-no questions.

First Gerbert asked the head: "Will I become Pope?"

"Yes," answered the head.

Then Gerbert asked: "Will I die before I sing mass in Jerusalem?"

"No," the head replied.

In both cases, the head was correct, though not as Gerbert anticipated. He did become Pope, and he sensibly avoided going on pilgrimage to Jerusalem. One day, however, he sang mass at Santa Croce in Gerusalemme in Rome. Unfortunately for Gerbert, Santa Croce in Gerusalemme was known in those days simply as "Jerusalem."

Gerbert sickened and died. On his deathbed, he asked his attendants to cut up his body and cast away the pieces, so he could go to his true master, Satan. In this way, he was, like Prometheus, punished for his theft of fire.

It is a thrilling story. It is not clear whether William of Malmesbury actually believed it. But he does try to persuade his readers that it is plausible. Why did this great historian with a devotion to the truth insert some fanciful legends about a French pope into his history of England? Good question!

Is it so fanciful to believe that an advanced astronomer might build a general-purpose prediction machine? In those days, astronomy was the most powerful science of prediction. The sober and scholarly William was at least willing to entertain the idea that brilliant advances in astronomy might make it possible for a Pope to build an intelligent chatbot.

Today, that same possibility is credited to machine-learning algorithms, which can predict which ad you will click, which movie you will watch, which word you will type next. We can be forgiven for falling under the same spell.

The anatomist and the automaton

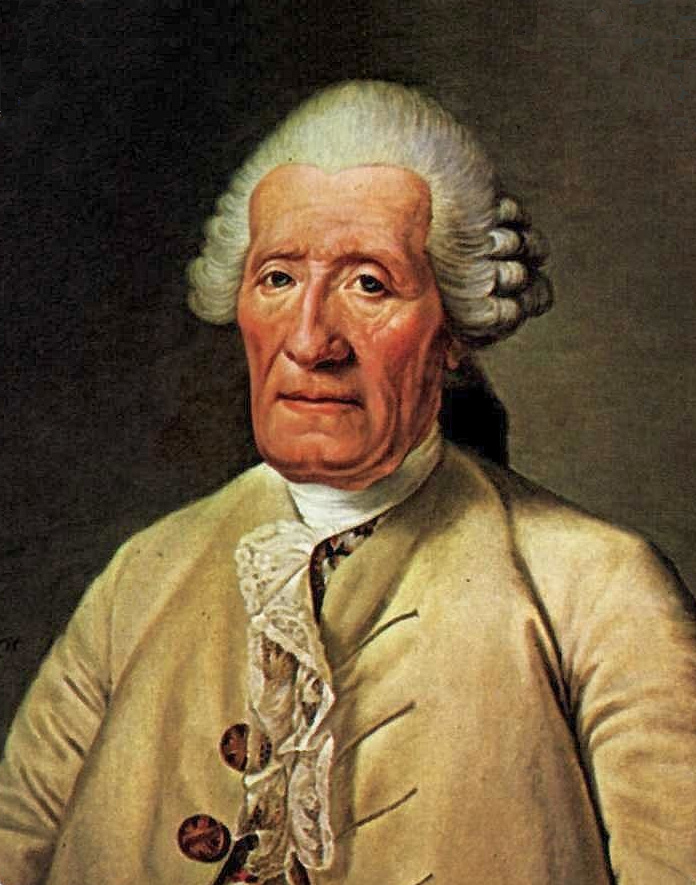

The Prometheus of the 18th century was Jacques de Vaucanson, at least according to Voltaire:

Bold Vaucanson, rival of Prometheus,
Seems, imitating the springs of nature,
To steal the fire of heaven to animate the body.

Vaucanson was a great machinist, famous for his automata. These were clockwork devices that realistically simulated human or animal anatomy. Philosophers of the time believed that the body was a machine — so why couldn't a machinist build one?

Sometimes Vaucanson's automata were scientifically significant. He constructed a piper, for example, that had lips and lungs and fingers, and blew the pipe in much the same way a human would. Historian Jessica Riskin explains in her book The Restless Clock that Vaucanson had to make significant discoveries in acoustics in order to make his piper play in tune.

Sometimes his automata were less scientific. His digesting duck was hugely famous, but turned out to be fraudulent. It appeared to eat and digest food, but its poos were in fact prefabricated pellets hidden inside the mechanism.

Vaucanson spent decades working on what he called a "moving anatomy." In 1741, he presented a plan to the Lyons Academy to build an "imitation of all animal operations." Twenty years later, he was at it again. He secured support from King Louis XV to build a simulation of the circulatory system. He claimed he could build a complete, living artificial body.

There is no evidence that Vaucanson ever completed a whole body. In the end, he couldn't live up to the hype. But many of his contemporaries believed he could do it. They wanted to believe in his magical mechanisms. They wished he would seize the fire of life.

If Vaucanson could manufacture a new human body, couldn't he also repair an existing one? This is the promise of some AI companies today. According to Dario Amodei, CEO of Anthropic, AI will soon allow people "to live as long as they want." Immortality seems like an attractive investment.

Sylvester II and Vaucanson were great technologists, but neither was a Prometheus. They stole no fire from the gods. Will the aspiring Prometheans of Silicon Valley succeed where their predecessors have failed? If only we had Sylvester II's brazen head, we could ask it.

This edited article is republished from The Conversation under a Creative Commons license. Read the original article.
