Sensor: 24.2MP effective (25.5MP total) APS-C CMOS

Monitor: 3-inch vari-angle, 1.04 million dots

Image stabilization: Digital only in-camera, or optical via an attached IS-equipped lens

Autofocus detection range: Down to -5EV, if using an f/1.2 lens

ISO Range: ISO 100-32,000 (expandable to ISO 51,200)

Slowest shutter speed: 30 seconds

Burst rate: Up to 15FPS with electronic shutter or 12FPS with mechanical shutter

Video: UHD 4K video up to 60p (with a crop) and uncropped 4K 30p

Battery life: Approx. 480 shots

Storage: Single SD card slot

Weight: 13.1 oz (370 g)

Close competitors include Sony’s vlogger-oriented ‘ZV’ series of compact digital cameras, with their flip-out, selfie-enabling rear screens. Canon’s EOS R50 V also gets an angle-adjustable LCD, which doubles as a touchscreen. Unfortunately, perhaps because of this, Canon hasn’t bothered to include our personal favorite feature: an eye-level viewfinder as an alternative means of composition and review.

An angle-adjustable screen has uses beyond the purely narcissistic, of course. It’s great for achieving otherwise awkward high- or low-angle shots, and making features accessible via a tap of the screen negates the need for a body crammed with buttons, wheels and dials, even if we do enjoy the tactile nature of such controls.

So, how does the R50 V acquit itself in practice, especially when it comes to satisfying the needs of beginner photographers, not just videographers?

Canon EOS R50 V review

Design & comfort

- Vari-angle touch panel LCD allows for easy shot composition from awkward angles

- Handgrip is more slender and slippery than we’d like for handheld shooting, especially when a lens is attached

- Boxy dimensions resemble something from Canon’s EOS Cinema range rather than the EOS R series

Resembling a child’s drawing of a camera (essentially a box with a sensor and a lens mount), the EOS R50 V is far from the flashiest mirrorless model we’ve seen, especially in the age of retro-styled gems like the OM System OM-3, Fujifilm X100V / VI and the Nikon Zf and Zfc. In fact, as mentioned above, its styling is closer to the functional appearance of Canon’s pro-targeted Cinema EOS range than to the rest of its R series lineup.

That said, it’s not quite so self-consciously wacky as Canon’s PowerShot V10, a video-first attempt with a vertically styled body and wide-angle lens. While that model had its cigarette-packet-sized point-and-shoot charm, the fact that we can use a broad range of EOS R system lenses and accessories with the R50 V extends the creative possibilities much further. Although it is priced to entice consumers, it feels like there is serious intent here, too.

In terms of ergonomic design, the paucity of buttons here means everything falls readily to hand. The EOS R50 V’s raised shutter release is encircled by a zoom lever, something we’d more commonly expect to find on a point-and-shoot digicam than on an interchangeable lens model. This works in tandem with Canon’s motorized RF-S 14-30mm f/4-6.3 IS STM PZ (‘Power Zoom’) lens that arrived with our review sample. Alternatively, the zoom can be controlled more conventionally by manually twisting the lens barrel. The foreshortened lens design allows for a slimmer, more compact profile when the optic and camera are combined.

Also encircled, this time by a command/control dial, is the camera’s on/off power lever. The familiar top-plate shooting mode dial has just one dedicated stills setting rather than individual P, A, S and M positions; selecting it brings up the shooting mode options on-screen. The remaining positions are given over to a fistful of video options, including no fewer than three custom settings and a ‘Slow and Fast’ video mode. As the name suggests, this gives videographers control over the speed of capture and playback, either speeding up or slowing down footage.

At just 370 g with battery and card, the camera is certainly light. If we have one grumble, it’s that the magnesium and aluminum alloy body felt a bit insubstantial in the palm, although adding the compact 14-30mm lens lends it marginally more heft. At least it’s comfortably portable, which is helpful given that we’ll be taking a tripod out with us for astrophotography and potentially also for wildlife photography. That said, at 119.3 x 73.7 x 45.2 mm, the body alone is still too wide to fit in most pockets.

Electronic viewfinder & LCD screen

- No EVF, but there is a 3-inch, 1.04-million-dot vari-angle capacitive touchscreen LCD instead

- LCD offers 100% frame coverage with a 150-degree vertical and horizontal viewing angle

- AF point is selectable with a screen tap while in still and video modes, with touch shutter accessible in still photo shooting mode only

Anyone viewing the Canon EOS R50 V as a step up from their smartphone for creating videos or shooting stills may not immediately register that an eye-level viewfinder, found on most dedicated digital cameras in this price bracket, is omitted here. For those of us who love distraction-free composition via an optical finder or EVF, it’s a real shame. That said, those mainly composing video can probably live without one, as framing on the 3-inch LCD is closer to how footage is watched back on a desktop PC monitor or TV set.

Still, it’s just as well that the monitor here is endlessly flexible: it flips out from the body through the full 180 degrees and can be turned to face the subject in front of the lens. We can hold the camera above our heads and tilt the screen down to see what the lens is seeing, or hold it low to the ground and tilt the screen up rather than getting down on our knees. At times like those, we found ourselves missing an eye-level viewfinder just that little bit less. Being able to tap the screen to take the picture once we’ve framed the subject just as we want it is a further boon that adds to the camera’s overall intuitiveness.

Outwardly, the camera resembles a baby version of its maker’s Cinema EOS cameras, with no eye-level viewfinder to add interest to its boxy design. That makes the angle-adjustable LCD essential, and we found it so for low-angle wildlife shots and night sky images alike. Helpfully, because the panel is touch-sensitive, the manual functions required for astrophotography are placed at our fingertips and are easier to find in the dark than the camera’s tiny black buttons.

Image quality & dynamic range

- Choice of JPEG, HEIF and RAW formats

- ISO 1600 or ISO 3200 proved the sweet spot for long exposures during night sky shoots

- 4K video at up to 60FPS (cropped), selectable for videographers and content creators of every description

The APS-C sensor offers 24-megapixel stills for photographers and up to 4K 60FPS video clips for filmmakers. What this camera does lack for still image capture, although obviously irrelevant for video, is a built-in flash. There is a hot shoe atop this model for accessory attachment, however.

As we might assume, given that the camera’s LCD screen has a 3:2 aspect ratio, the default image capture setting is also 3:2, which delivers maximum-resolution shots. Lower-resolution alternatives include the more standard 4:3, a widescreen 16:9 and a square 1:1.
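Why 3:2 gives the most pixels comes down to simple cropping arithmetic: each alternative ratio is the largest rectangle of that shape that fits inside the full 3:2 frame. The sketch below assumes, purely for illustration, a native output of 6000 x 4000 pixels (a round 24MP frame, consistent with the 24-megapixel figure above); the camera’s actual cropped dimensions may be rounded differently.

```python
# Hypothetical native frame size: 6000 x 4000 pixels (3:2, 24MP).
# This is an illustrative assumption, not a quoted Canon specification.
NATIVE_WIDTH, NATIVE_HEIGHT = 6000, 4000


def crop_to_ratio(width: int, height: int, ratio_w: int, ratio_h: int) -> tuple[int, int]:
    """Return the largest (width, height) of the given ratio that fits in the frame."""
    if width * ratio_h > height * ratio_w:
        # Frame is wider than the target ratio: keep full height, trim the sides.
        return height * ratio_w // ratio_h, height
    # Frame is as tall as or taller than the target ratio: keep full width, trim top and bottom.
    return width, width * ratio_h // ratio_w


for ratio in [(3, 2), (4, 3), (16, 9), (1, 1)]:
    w, h = crop_to_ratio(NATIVE_WIDTH, NATIVE_HEIGHT, *ratio)
    print(f"{ratio[0]}:{ratio[1]} -> {w} x {h} ({w * h / 1e6:.1f}MP)")
```

Under that assumption, 4:3 keeps about 89% of the pixels, 16:9 about 84% and 1:1 two-thirds.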

The tone of images can be adjusted in camera via digital filter effects, selected from Canon’s familiar Picture Style options. On top of settings optimized for portraiture and landscapes, various color filter effects can add subtle blue, green and magenta washes to images, should we feel that enhances them in any way. As we felt the warm yet naturalistic images the camera produces by default were punchy enough, we tended to steer clear of these and chose the ‘Standard’ Picture Style as our default. Clear, sharp and, above all, consistent results are what this camera delivers, as we’d fully expect from Canon. Anyone getting started with this model will appreciate such consistency as they take both their photography and videography a step further.

Autofocus & subject detection

- Autofocus works down to -5EV

- Dual Pixel CMOS AF II system offers eye and face detection

- AF tracking covers the most common subjects of people, animals and vehicles and delivers a consistent performance

The Canon EOS R50 V features its manufacturer’s Dual Pixel CMOS AF II system, which can automatically acquire focus down to -5EV. The camera’s AF system also offers eye and face detection along with tracking AF for people, animals and vehicles. The animals option proves useful for wildlife and results in a higher proportion of sharp images than we’d achieve without it. We also found that if a duck turned its head so that we saw only the tuft at the back, the camera would still stay locked on. We wouldn’t necessarily count it among the best cameras for wildlife photography, but it does a pretty good job.

While aimed at keen amateurs rather than pros, this compact mirrorless model is as swift in its responses as we’d expect a consumer-level DSLR to be. Pressing its shutter release halfway prompts the camera to acquire focus in the blink of an eye. As the rear screen is also a touchscreen, we can tap an area of the panel to redirect the autofocus if it hasn’t alighted on the part of the frame or the subject we originally intended. For night sky photography, we found it helpful to press left on the rear control dial to switch from auto to manual focus, allowing us to fine-tune focus and make sure the stars were rendered as sharply as possible.

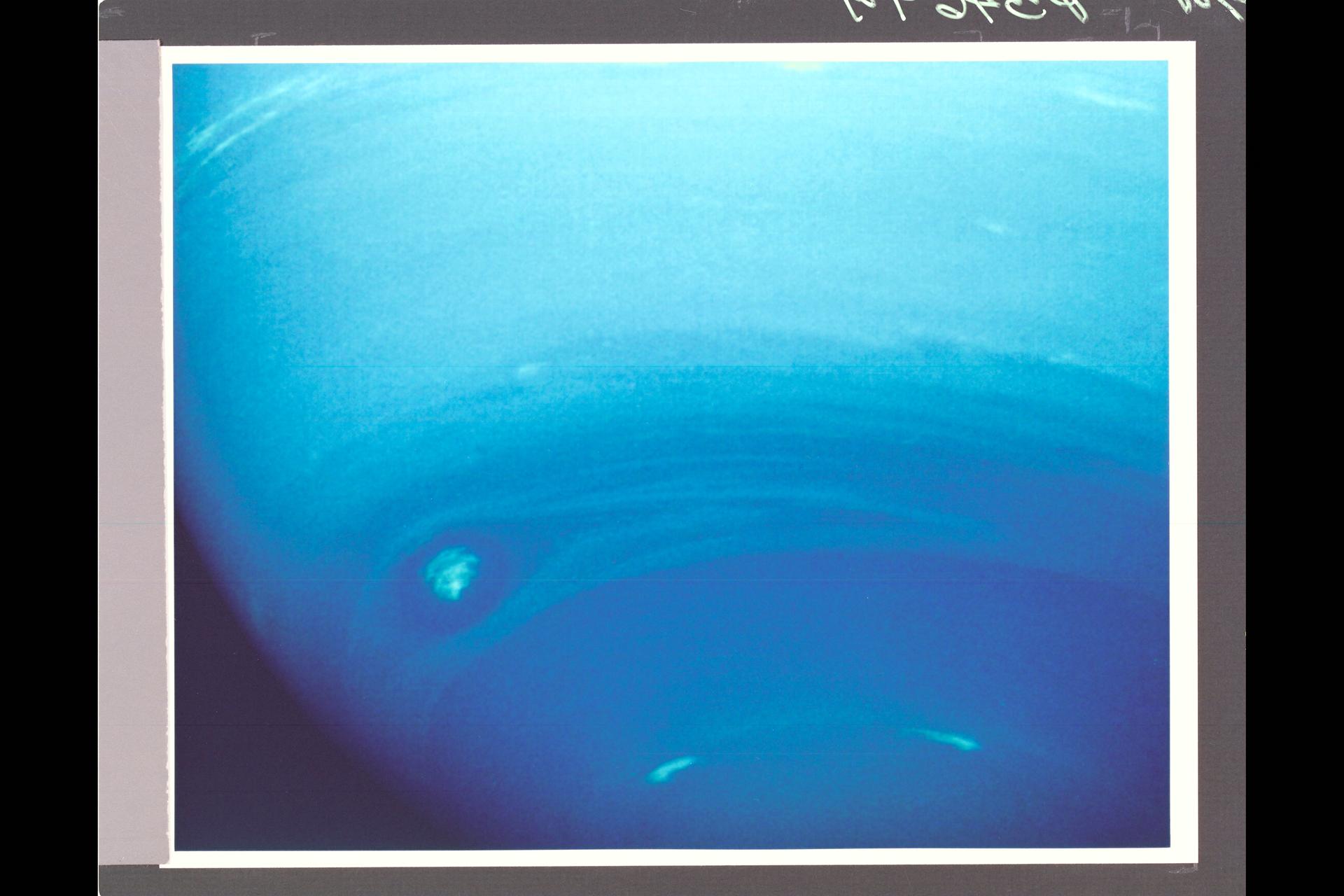

Astro & low light performance

- Native sensitivity range of ISO 100-32,000, expandable to ISO 51,200 if required

- Screw thread for tripod mounting is provided at the base

- Digital image stabilization in lieu of the sensor-shift variety, though optical image stabilization is available with an IS-equipped lens

We got our best astrophotography results by switching to manual focus rather than relying purely on the camera’s AF to focus on the constellations. If there is a tree line or an illuminated road in the frame, the camera will naturally try to focus on that rather than on more ethereal heavenly objects. It certainly helps that we can use the camera’s touchscreen to change settings in real time rather than relying on its tiny backplate buttons. Being black buttons against a black background, those proved almost impossible to locate in pitch darkness.

Burst rate, buffer & battery life

- Battery delivers approximately 480 shots

- Up to 15FPS with electronic shutter or 12FPS with mechanical shutter

- Maximum burst sizes of up to 95 images are possible

The R50 V’s rechargeable lithium-ion battery is claimed to be good for up to 480 shots from a full charge, which is respectable for a beginner camera and better than average for its consumer class. Image bursts can run to a maximum of 95 shots. For sports, action or wildlife photography, we can shoot 12FPS bursts with the mechanical shutter, with that speed maintained for approximately 42 JPEGs or 7 RAW images. If that’s not quite enough, we can step up to 15FPS with the electronic shutter, good for 28 JPEGs or, again, 7 RAW files.
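Those buffer figures are easier to judge as time: how long can we hold the shutter down at full speed? A quick sketch, using the approximate frame counts quoted in this review and simply dividing frames by frame rate. It ignores any slower shooting that continues once the buffer fills, and real-world results will also depend on the memory card used.

```python
# Approximate buffer depths quoted in this review: (shutter, fps, format) -> frames.
BUFFER_DEPTHS = {
    ("mechanical", 12, "JPEG"): 42,
    ("mechanical", 12, "RAW"): 7,
    ("electronic", 15, "JPEG"): 28,
    ("electronic", 15, "RAW"): 7,
}


def burst_duration(frames: int, fps: int) -> float:
    """Seconds of full-speed shooting before the buffer fills (frames / fps)."""
    return frames / fps


for (shutter, fps, fmt), frames in BUFFER_DEPTHS.items():
    seconds = burst_duration(frames, fps)
    print(f"{shutter} shutter, {fps}FPS, {fmt}: {frames} frames = about {seconds:.1f} s")
```

On these numbers, a 12FPS JPEG burst lasts about 3.5 seconds and a 15FPS JPEG burst just under 2 seconds, while RAW bursts at either speed are over in well under a second.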

Verdict

✅ You want a pared-back, functional camera whose intuitive touchscreen operation takes the place of a body crammed with physical controls.

✅ You want an all-rounder that just happens to have a few specialist tricks up its sleeve for occasional astrophotography.

❌ You are looking for a simple point-and-shoot camera, or at the other end of the scale, would prefer a full-frame sensor.

❌ You want a compact camera with a comfortably rounded and robust handgrip for handheld captures.

The EOS R50 V may look boxy and unassuming from the outside, but the focus here is on functionality rather than form. While the build felt more insubstantial than we’d like, at least this aids portability. It does, however, lack a well-rounded grip that would make handheld shooting easier, especially for vloggers who like to make ‘walk and talk’ recordings to camera. That matters less for astrophotography, where the camera needs to be tripod-mounted anyway, with the self-timer firing the shutter so that pressing the button ourselves doesn’t jog the camera.

As operation is built around the touch panel LCD, there is inevitably more to this camera than its pared-back physical controls suggest. That leaves room for those just getting started to grow their skill set: with greater familiarity, arriving quickly at the settings we want becomes second nature. However, if we’re more into shooting stills than video, it would make sense to look at the multitude of alternatives, not least the OM System OM-3, which not only looks much more attractive but also features a dedicated Starry Sky AF setting for astrophotographers.

If the Canon EOS R50 V isn't for you

If you’re seeking a hybrid camera that’s as focused on video as on stills, if not more so, also check out the Panasonic S1R II, which has a more conventionally classic camera build, as well as its more recent siblings, the S1 II and S1 IIE. These share some of the earlier S1R II’s technological DNA, plus near-identical outer construction. Closer alternatives to the Canon EOS R50 V come via Sony’s growing ZV range of content creator-targeted compacts.

How we tested the Canon EOS R50 V

We recorded video clips at both 4K and Full HD settings and saw little difference between them when watching back on our desktop PC. When recording outdoors with the built-in microphones, we experienced wind noise that an accessory microphone with a windshield would much reduce or avoid. Naturally, we tested the EOS R50 V during the daytime as well as at night, with the resulting images well saturated, color-rich and detailed yet naturalistic, despite being slightly on the warm side, which is what we’d expect of a Canon.

For night sky photography, we shot wide open at the lens’ maximum f/4 aperture, trying manually selected exposure durations of 15 and 10 seconds, down to five or four seconds, combined with ISO 1600 and ISO 3200. At all times, we had the camera mounted on a tripod, focused manually and then used the self-timer to trigger the shutter without touching the camera.
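Comparing those time and ISO combinations is easier in stops. The sketch below assumes the aperture stays fixed at f/4, so image brightness scales with exposure time multiplied by ISO, and each doubling counts as one stop. It is a simple reciprocity calculation, not a measure of noise or star trailing, and the 4 s at ISO 1600 baseline is an arbitrary reference chosen for illustration.

```python
import math


def stops_difference(time_a: float, iso_a: int, time_b: float, iso_b: int) -> float:
    """Stops by which setting A is brighter than setting B at the same aperture.

    Brightness is taken as proportional to exposure time x ISO, so the
    difference in stops is log2 of the ratio between the two products.
    """
    return math.log2((time_a * iso_a) / (time_b * iso_b))


# Exposure times and ISO settings tried in this review (f/4 throughout).
for seconds in (15, 10, 5, 4):
    for iso in (1600, 3200):
        diff = stops_difference(seconds, iso, 4, 1600)
        print(f"{seconds:>2} s at ISO {iso}: {diff:+.2f} stops vs 4 s at ISO 1600")
```

For example, 10 seconds at ISO 1600 and 5 seconds at ISO 3200 come out identical: halving the exposure time while doubling the ISO cancels out.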
