modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Salesforce/blip2-opt-2.7b | 2023-09-11T13:01:16.000Z | [

"transformers",

"pytorch",

"blip-2",

"visual-question-answering",

"vision",

"image-to-text",

"image-captioning",

"en",

"arxiv:2301.12597",

"license:mit",

"endpoints_compatible",

"has_space",

"region:us"

] | image-to-text | Salesforce | null | null | Salesforce/blip2-opt-2.7b | 162 | 162,652 | transformers | 2023-02-06T16:21:49 | ---

language: en

license: mit

tags:

- vision

- image-to-text

- image-captioning

- visual-question-answering

pipeline_tag: image-to-text

---

# BLIP-2, OPT-2.7b, pre-trained only

BLIP-2 model, leveraging [OPT-2.7b](https://huggingface.co/facebook/opt-2.7b) (a large language model with 2.7 billion parameters).

It was introduced in the paper [BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models](https://arxiv.org/abs/2301.12597) by Li et al. and first released in [this repository](https://github.com/salesforce/LAVIS/tree/main/projects/blip2).

Disclaimer: The team releasing BLIP-2 did not write a model card for this model, so this model card was written by the Hugging Face team.

## Model description

BLIP-2 consists of 3 models: a CLIP-like image encoder, a Querying Transformer (Q-Former) and a large language model.

The authors initialize the weights of the image encoder and large language model from pre-trained checkpoints and keep them frozen

while training the Querying Transformer, which is a BERT-like Transformer encoder that maps a set of "query tokens" to query embeddings,

which bridge the gap between the embedding space of the image encoder and the large language model.

The goal for the model is simply to predict the next text token, given the query embeddings and the previous text.

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/blip2_architecture.jpg"

alt="drawing" width="600"/>

This allows the model to be used for tasks like:

- image captioning

- visual question answering (VQA)

- chat-like conversations by feeding the image and the previous conversation as prompt to the model
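As a rough illustration of the chat-like use case, the sketch below assembles a text prompt from earlier question/answer turns plus a new question. The `build_prompt` helper and the `Question: ... Answer:` template are assumptions made for illustration; this card does not prescribe a prompt format. The resulting string would be passed to the processor together with the image.

```python
from typing import List, Tuple


def build_prompt(history: List[Tuple[str, str]], question: str) -> str:
    """Concatenate previous (question, answer) turns and a new question.

    Hypothetical helper: the "Question: ... Answer:" template is an
    assumption, not something this model card specifies.
    """
    # Each finished turn becomes "Question: <q> Answer: <a>."
    turns = [f"Question: {q} Answer: {a}." for q, a in history]
    # The new question ends with an open "Answer:" for the model to complete.
    turns.append(f"Question: {question} Answer:")
    return " ".join(turns)


if __name__ == "__main__":
    history = [("how many dogs are in the picture?", "one")]
    print(build_prompt(history, "what is the dog doing?"))
```

With an empty history the helper produces a single-turn VQA prompt; for plain captioning, the text argument can be omitted entirely.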

## Direct Use and Downstream Use

You can use the raw model for conditional text generation given an image and optional text. See the [model hub](https://huggingface.co/models?search=Salesforce/blip) to look for

fine-tuned versions on a task that interests you.

## Bias, Risks, Limitations, and Ethical Considerations

BLIP2-OPT uses off-the-shelf OPT as the language model. It inherits the same risks and limitations as mentioned in Meta's model card.

> Like other large language models for which the diversity (or lack thereof) of training

> data induces downstream impact on the quality of our model, OPT-175B has limitations in terms

> of bias and safety. OPT-175B can also have quality issues in terms of generation diversity and

> hallucination. In general, OPT-175B is not immune from the plethora of issues that plague modern

> large language models.

>

BLIP2 is fine-tuned on image-text datasets (e.g. [LAION](https://laion.ai/blog/laion-400-open-dataset/)) collected from the internet. As a result, the model itself is potentially vulnerable to generating equivalently inappropriate content or replicating inherent biases in the underlying data.

BLIP2 has not been tested in real-world applications and should not be directly deployed in any application. Researchers should first carefully assess the safety and fairness of the model in relation to the specific context in which it is being deployed.

### How to use

For code examples, we refer to the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/blip-2#transformers.Blip2ForConditionalGeneration.forward.example).

#### Running the model on CPU

<details>

<summary> Click to expand </summary>

```python

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

processor = Blip2Processor.from_pretrained("Salesforce/blip2-opt-2.7b")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-opt-2.7b")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt")

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True).strip())

```

</details>

#### Running the model on GPU

##### In full precision

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

processor = Blip2Processor.from_pretrained("Salesforce/blip2-opt-2.7b")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-opt-2.7b", device_map="auto")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt").to("cuda")

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True).strip())

```

</details>

##### In half precision (`float16`)

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate

import torch

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

processor = Blip2Processor.from_pretrained("Salesforce/blip2-opt-2.7b")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-opt-2.7b", torch_dtype=torch.float16, device_map="auto")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt").to("cuda", torch.float16)

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True).strip())

```

</details>
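To get a feel for why the reduced-precision variants matter, the following back-of-the-envelope sketch estimates the memory needed just to hold weights at different precisions. It counts only the 2.7 billion language-model parameters mentioned above; the image encoder, Q-Former, activations, and framework overhead are not included, so real usage will be higher. The `weight_memory_gib` helper is hypothetical.

```python
def weight_memory_gib(n_params: float, bytes_per_param: int) -> float:
    """Approximate weight storage in GiB (parameters times bytes each)."""
    return n_params * bytes_per_param / 2**30


if __name__ == "__main__":
    # Assumption: only the 2.7B language-model parameters are counted.
    n_params = 2.7e9
    for name, nbytes in [("float32", 4), ("float16", 2), ("int8", 1)]:
        print(f"{name}: ~{weight_memory_gib(n_params, nbytes):.1f} GiB")
```

Each halving of the bytes per parameter halves this estimate, which is the main reason to try `float16` or 8-bit loading on memory-constrained GPUs.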

##### In 8-bit precision (`int8`)

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate bitsandbytes

import torch

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

processor = Blip2Processor.from_pretrained("Salesforce/blip2-opt-2.7b")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-opt-2.7b", load_in_8bit=True, device_map="auto")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt").to("cuda", torch.float16)

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True).strip())

```

</details> | 6,637 | [

[

-0.03179931640625,

-0.056396484375,

-0.003635406494140625,

0.030181884765625,

-0.021026611328125,

-0.01078033447265625,

-0.024200439453125,

-0.0595703125,

-0.009979248046875,

0.0263214111328125,

-0.03607177734375,

-0.006702423095703125,

-0.043304443359375,

-0.004337310791015625,

-0.004505157470703125,

0.051849365234375,

0.0088958740234375,

0.0014314651489257812,

-0.006885528564453125,

0.003658294677734375,

-0.020843505859375,

-0.0066070556640625,

-0.0513916015625,

-0.013519287109375,

-0.0009670257568359375,

0.0250701904296875,

0.059478759765625,

0.0308837890625,

0.056304931640625,

0.0270233154296875,

-0.016143798828125,

0.0089569091796875,

-0.038665771484375,

-0.0210723876953125,

-0.007305145263671875,

-0.054840087890625,

-0.01351165771484375,

-0.0003714561462402344,

0.042694091796875,

0.036651611328125,

0.01129913330078125,

0.0260467529296875,

-0.003711700439453125,

0.040374755859375,

-0.035919189453125,

0.0193023681640625,

-0.05523681640625,

0.0012531280517578125,

-0.0029315948486328125,

0.001979827880859375,

-0.033233642578125,

-0.00988006591796875,

0.004009246826171875,

-0.052703857421875,

0.03363037109375,

0.006587982177734375,

0.115966796875,

0.026824951171875,

-0.00098419189453125,

-0.020751953125,

-0.03271484375,

0.0677490234375,

-0.047882080078125,

0.036407470703125,

0.0176849365234375,

0.0195159912109375,

-0.002803802490234375,

-0.06365966796875,

-0.0513916015625,

-0.00887298583984375,

-0.01007080078125,

0.02484130859375,

-0.0211334228515625,

-0.00632476806640625,

0.036407470703125,

0.019561767578125,

-0.047943115234375,

0.004169464111328125,

-0.064697265625,

-0.0174102783203125,

0.052703857421875,

-0.005096435546875,

0.0205841064453125,

-0.026580810546875,

-0.039764404296875,

-0.028472900390625,

-0.040985107421875,

0.024688720703125,

0.0120391845703125,

0.005954742431640625,

-0.0306549072265625,

0.06256103515625,

-0.0031795501708984375,

0.048553466796875,

0.026611328125,

-0.0177764892578125,

0.043304443359375,

-0.0236663818359375,

-0.0198211669921875,

-0.01462554931640625,

0.072998046875,

0.04583740234375,

0.01482391357421875,

0.0006546974182128906,

-0.009979248046875,

0.002262115478515625,

0.0037441253662109375,

-0.0814208984375,

-0.0163421630859375,

0.0289306640625,

-0.038909912109375,

-0.0108642578125,

-0.00737762451171875,

-0.06854248046875,

-0.00489044189453125,

0.006694793701171875,

0.035491943359375,

-0.04498291015625,

-0.0233154296875,

0.007965087890625,

-0.032958984375,

0.03411865234375,

0.015838623046875,

-0.0716552734375,

-0.0023632049560546875,

0.0372314453125,

0.06549072265625,

0.011260986328125,

-0.034759521484375,

-0.01082611083984375,

0.00649261474609375,

-0.024200439453125,

0.041534423828125,

-0.01145172119140625,

-0.016387939453125,

-0.0030765533447265625,

0.01611328125,

0.0004818439483642578,

-0.04541015625,

0.0100250244140625,

-0.031951904296875,

0.018646240234375,

-0.0112762451171875,

-0.033416748046875,

-0.0240936279296875,

0.0116119384765625,

-0.032470703125,

0.08697509765625,

0.021514892578125,

-0.061248779296875,

0.034454345703125,

-0.035858154296875,

-0.0230865478515625,

0.0185089111328125,

-0.00940704345703125,

-0.05230712890625,

-0.002838134765625,

0.0155181884765625,

0.0245513916015625,

-0.0241851806640625,

0.003948211669921875,

-0.025848388671875,

-0.027557373046875,

0.006130218505859375,

-0.01253509521484375,

0.083740234375,

0.002307891845703125,

-0.047576904296875,

-0.005519866943359375,

-0.037200927734375,

-0.0059356689453125,

0.02960205078125,

-0.0190887451171875,

0.006267547607421875,

-0.01439666748046875,

0.01348114013671875,

0.02252197265625,

0.04388427734375,

-0.051300048828125,

-0.0007991790771484375,

-0.04205322265625,

0.03607177734375,

0.038330078125,

-0.01751708984375,

0.0281982421875,

-0.010284423828125,

0.0235748291015625,

0.0145111083984375,

0.026824951171875,

-0.0196533203125,

-0.06146240234375,

-0.06890869140625,

-0.018280029296875,

-0.0004730224609375,

0.05224609375,

-0.061676025390625,

0.035797119140625,

-0.014312744140625,

-0.052825927734375,

-0.0443115234375,

0.00949859619140625,

0.0411376953125,

0.048614501953125,

0.03802490234375,

-0.017822265625,

-0.037353515625,

-0.0733642578125,

0.0192108154296875,

-0.0207672119140625,

0.0026836395263671875,

0.035858154296875,

0.053924560546875,

-0.024169921875,

0.060882568359375,

-0.040740966796875,

-0.0157623291015625,

-0.022125244140625,

0.0015106201171875,

0.0240631103515625,

0.05194091796875,

0.05938720703125,

-0.060089111328125,

-0.02587890625,

0.00018548965454101562,

-0.065185546875,

0.0140533447265625,

-0.0132293701171875,

-0.0182952880859375,

0.038909912109375,

0.03399658203125,

-0.06671142578125,

0.039642333984375,

0.038238525390625,

-0.020965576171875,

0.044219970703125,

-0.00936126708984375,

-0.0095062255859375,

-0.07415771484375,

0.0269012451171875,

0.0108642578125,

-0.007381439208984375,

-0.0277862548828125,

0.006259918212890625,

0.01947021484375,

-0.0163421630859375,

-0.05108642578125,

0.0604248046875,

-0.03240966796875,

-0.019317626953125,

0.006587982177734375,

-0.01422119140625,

0.0081634521484375,

0.04443359375,

0.020294189453125,

0.0595703125,

0.07000732421875,

-0.042938232421875,

0.033477783203125,

0.04327392578125,

-0.024688720703125,

0.0228424072265625,

-0.0645751953125,

0.004974365234375,

-0.00951385498046875,

0.00083160400390625,

-0.08282470703125,

-0.0111846923828125,

0.0202178955078125,

-0.054656982421875,

0.0302886962890625,

-0.016937255859375,

-0.034393310546875,

-0.057037353515625,

-0.02197265625,

0.0256500244140625,

0.051727294921875,

-0.048004150390625,

0.0310516357421875,

0.025177001953125,

0.00460052490234375,

-0.053741455078125,

-0.0826416015625,

0.0007762908935546875,

0.007289886474609375,

-0.0662841796875,

0.03814697265625,

-0.0008115768432617188,

0.01032257080078125,

0.01296234130859375,

0.0215301513671875,

0.00006949901580810547,

-0.011627197265625,

0.0204620361328125,

0.030792236328125,

-0.021270751953125,

-0.0139007568359375,

-0.01031494140625,

-0.0008139610290527344,

-0.00013375282287597656,

-0.00946807861328125,

0.061248779296875,

-0.0194549560546875,

-0.0019273757934570312,

-0.05010986328125,

0.00894927978515625,

0.035491943359375,

-0.023193359375,

0.04638671875,

0.057525634765625,

-0.0316162109375,

-0.0068511962890625,

-0.03973388671875,

-0.0131378173828125,

-0.043365478515625,

0.048797607421875,

-0.0197296142578125,

-0.032928466796875,

0.04638671875,

0.0179443359375,

0.009307861328125,

0.030303955078125,

0.06097412109375,

-0.00629425048828125,

0.0692138671875,

0.0430908203125,

0.0161285400390625,

0.048187255859375,

-0.066162109375,

0.003570556640625,

-0.060760498046875,

-0.03839111328125,

-0.00789642333984375,

-0.016387939453125,

-0.034027099609375,

-0.03607177734375,

0.0175933837890625,

0.0233001708984375,

-0.028717041015625,

0.024993896484375,

-0.04351806640625,

0.01629638671875,

0.055419921875,

0.021881103515625,

-0.0258941650390625,

0.00994873046875,

-0.006633758544921875,

0.0027408599853515625,

-0.047210693359375,

-0.018890380859375,

0.07440185546875,

0.03717041015625,

0.051605224609375,

-0.01274871826171875,

0.0310516357421875,

-0.0241241455078125,

0.01568603515625,

-0.059234619140625,

0.047210693359375,

-0.0240631103515625,

-0.062164306640625,

-0.0161285400390625,

-0.02313232421875,

-0.0672607421875,

0.00936126708984375,

-0.0167694091796875,

-0.05230712890625,

0.01274871826171875,

0.024688720703125,

-0.01372528076171875,

0.02398681640625,

-0.068115234375,

0.0733642578125,

-0.035064697265625,

-0.046417236328125,

0.00426483154296875,

-0.047882080078125,

0.0272369384765625,

0.0121307373046875,

-0.01377105712890625,

0.0086669921875,

0.004810333251953125,

0.057952880859375,

-0.035186767578125,

0.05950927734375,

-0.02587890625,

0.025299072265625,

0.036468505859375,

-0.01324462890625,

0.01490020751953125,

-0.0020904541015625,

-0.004962921142578125,

0.0277252197265625,

-0.00833892822265625,

-0.04156494140625,

-0.032073974609375,

0.0161895751953125,

-0.06719970703125,

-0.0328369140625,

-0.0201873779296875,

-0.034332275390625,

0.0008263587951660156,

0.032623291015625,

0.05328369140625,

0.023681640625,

0.017669677734375,

0.01229095458984375,

0.0243072509765625,

-0.043914794921875,

0.050567626953125,

0.0059661865234375,

-0.0205841064453125,

-0.0367431640625,

0.06341552734375,

-0.0007562637329101562,

0.0220489501953125,

0.012847900390625,

0.01120758056640625,

-0.04498291015625,

-0.029754638671875,

-0.056854248046875,

0.04345703125,

-0.0467529296875,

-0.03582763671875,

-0.0307769775390625,

-0.0216217041015625,

-0.045501708984375,

-0.016845703125,

-0.043365478515625,

-0.01401519775390625,

-0.03955078125,

0.01552581787109375,

0.03717041015625,

0.02947998046875,

-0.00702667236328125,

0.031219482421875,

-0.040374755859375,

0.037017822265625,

0.0260467529296875,

0.03131103515625,

-0.004199981689453125,

-0.04547119140625,

-0.005489349365234375,

0.0218353271484375,

-0.029296875,

-0.053070068359375,

0.036468505859375,

0.01605224609375,

0.03192138671875,

0.03033447265625,

-0.0295867919921875,

0.067138671875,

-0.0293121337890625,

0.0633544921875,

0.0394287109375,

-0.0701904296875,

0.0531005859375,

-0.00562286376953125,

0.0074005126953125,

0.0279083251953125,

0.02606201171875,

-0.0229339599609375,

-0.0229339599609375,

-0.04974365234375,

-0.0643310546875,

0.06671142578125,

0.01294708251953125,

0.01255035400390625,

0.0098419189453125,

0.029388427734375,

-0.013519287109375,

0.0072479248046875,

-0.05230712890625,

-0.0225677490234375,

-0.04052734375,

-0.010528564453125,

-0.006313323974609375,

-0.01230621337890625,

0.013824462890625,

-0.03662109375,

0.040679931640625,

-0.002471923828125,

0.04473876953125,

0.024658203125,

-0.0236053466796875,

-0.0151214599609375,

-0.03582763671875,

0.038360595703125,

0.038970947265625,

-0.0242919921875,

-0.00411224365234375,

0.0005717277526855469,

-0.053314208984375,

-0.0178680419921875,

0.00910186767578125,

-0.02996826171875,

-0.0006213188171386719,

0.025848388671875,

0.08233642578125,

-0.006580352783203125,

-0.04473876953125,

0.049774169921875,

0.0041046142578125,

-0.0179595947265625,

-0.0245513916015625,

0.0007395744323730469,

0.007625579833984375,

0.019989013671875,

0.033111572265625,

0.01071929931640625,

-0.012359619140625,

-0.037261962890625,

0.0256805419921875,

0.042144775390625,

-0.01096343994140625,

-0.0245513916015625,

0.0633544921875,

0.004669189453125,

-0.018035888671875,

0.059356689453125,

-0.035614013671875,

-0.05230712890625,

0.07305908203125,

0.059173583984375,

0.034637451171875,

-0.00274658203125,

0.01837158203125,

0.053924560546875,

0.034027099609375,

-0.00669097900390625,

0.034149169921875,

0.022064208984375,

-0.06597900390625,

-0.021881103515625,

-0.04974365234375,

-0.0259246826171875,

0.0172119140625,

-0.03924560546875,

0.043243408203125,

-0.04669189453125,

-0.01702880859375,

0.01690673828125,

0.0146484375,

-0.0634765625,

0.026123046875,

0.026031494140625,

0.06597900390625,

-0.062469482421875,

0.034515380859375,

0.061737060546875,

-0.05548095703125,

-0.06756591796875,

-0.0225372314453125,

-0.02587890625,

-0.0860595703125,

0.06048583984375,

0.033905029296875,

0.00984954833984375,

0.0039215087890625,

-0.05902099609375,

-0.061248779296875,

0.07672119140625,

0.034454345703125,

-0.0271453857421875,

0.003849029541015625,

0.01485443115234375,

0.04058837890625,

-0.007022857666015625,

0.034912109375,

0.0165252685546875,

0.035980224609375,

0.027587890625,

-0.0675048828125,

0.0100555419921875,

-0.02587890625,

-0.0004718303680419922,

-0.0022373199462890625,

-0.071533203125,

0.0760498046875,

-0.036102294921875,

-0.01218414306640625,

0.0016574859619140625,

0.052520751953125,

0.031707763671875,

0.00955963134765625,

0.03167724609375,

0.053863525390625,

0.046173095703125,

-0.0036487579345703125,

0.07550048828125,

-0.026153564453125,

0.051605224609375,

0.04248046875,

0.0041046142578125,

0.059112548828125,

0.029296875,

-0.01227569580078125,

0.0149078369140625,

0.052093505859375,

-0.048248291015625,

0.0306243896484375,

-0.0012331008911132812,

0.020538330078125,

-0.0011157989501953125,

0.007045745849609375,

-0.0306854248046875,

0.050201416015625,

0.035186767578125,

-0.02606201171875,

-0.002223968505859375,

-0.0010805130004882812,

0.00287628173828125,

-0.033447265625,

-0.0221405029296875,

0.02740478515625,

-0.0118408203125,

-0.059539794921875,

0.07183837890625,

-0.0024662017822265625,

0.0765380859375,

-0.01873779296875,

0.003662109375,

-0.017303466796875,

0.01751708984375,

-0.024871826171875,

-0.08184814453125,

0.0241241455078125,

-0.006984710693359375,

-0.0003006458282470703,

0.0015048980712890625,

0.03826904296875,

-0.032470703125,

-0.07147216796875,

0.02337646484375,

0.00890350341796875,

0.02398681640625,

0.010009765625,

-0.0811767578125,

0.01058197021484375,

0.006191253662109375,

-0.027496337890625,

-0.00762176513671875,

0.0213623046875,

0.0093536376953125,

0.0521240234375,

0.05078125,

0.0161285400390625,

0.036376953125,

-0.011383056640625,

0.062744140625,

-0.04364013671875,

-0.0267181396484375,

-0.048065185546875,

0.045074462890625,

-0.01189422607421875,

-0.047149658203125,

0.039337158203125,

0.0687255859375,

0.071044921875,

-0.011871337890625,

0.045684814453125,

-0.0265350341796875,

0.01068115234375,

-0.035369873046875,

0.053924560546875,

-0.060089111328125,

-0.0140533447265625,

-0.0163421630859375,

-0.05230712890625,

-0.028594970703125,

0.06854248046875,

-0.0177459716796875,

0.00972747802734375,

0.040008544921875,

0.09039306640625,

-0.0175323486328125,

-0.024688720703125,

0.005336761474609375,

0.021514892578125,

0.0257720947265625,

0.053131103515625,

0.049163818359375,

-0.051544189453125,

0.046417236328125,

-0.052581787109375,

-0.0167694091796875,

-0.0163421630859375,

-0.042205810546875,

-0.06866455078125,

-0.04449462890625,

-0.03240966796875,

-0.038543701171875,

-0.0115966796875,

0.0350341796875,

0.07025146484375,

-0.048614501953125,

-0.01318359375,

-0.01229095458984375,

-0.00029397010803222656,

-0.0016908645629882812,

-0.0169525146484375,

0.03570556640625,

-0.027099609375,

-0.06744384765625,

-0.01035308837890625,

0.0149078369140625,

0.0178375244140625,

-0.0116119384765625,

-0.0015716552734375,

-0.013427734375,

-0.018829345703125,

0.034454345703125,

0.0311737060546875,

-0.044677734375,

-0.014556884765625,

0.0078887939453125,

-0.0119476318359375,

0.019775390625,

0.0188751220703125,

-0.04071044921875,

0.0282440185546875,

0.0391845703125,

0.028778076171875,

0.0618896484375,

-0.003482818603515625,

0.004352569580078125,

-0.045074462890625,

0.0570068359375,

0.01534271240234375,

0.035308837890625,

0.0369873046875,

-0.0295867919921875,

0.028594970703125,

0.02227783203125,

-0.02508544921875,

-0.06866455078125,

0.00782012939453125,

-0.0948486328125,

-0.021087646484375,

0.10614013671875,

-0.0124053955078125,

-0.04388427734375,

0.01242828369140625,

-0.0171661376953125,

0.0278167724609375,

-0.017669677734375,

0.04400634765625,

0.0136566162109375,

-0.0074920654296875,

-0.0306854248046875,

-0.0246124267578125,

0.03167724609375,

0.0279541015625,

-0.0499267578125,

-0.0174407958984375,

0.021942138671875,

0.03369140625,

0.03472900390625,

0.033935546875,

-0.01065826416015625,

0.030364990234375,

0.0179443359375,

0.0279083251953125,

-0.01116943359375,

-0.005489349365234375,

-0.012420654296875,

-0.00531768798828125,

-0.01284027099609375,

-0.032440185546875

]

] |

asahi417/tner-xlm-roberta-base-all-english | 2021-02-12T23:31:37.000Z | [

"transformers",

"pytorch",

"xlm-roberta",

"token-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | asahi417 | null | null | asahi417/tner-xlm-roberta-base-all-english | 0 | 162,337 | transformers | 2022-03-02T23:29:05 | # XLM-RoBERTa for NER

XLM-RoBERTa fine-tuned for NER. See the [TNER repository](https://github.com/asahi417/tner) for more details.

## Usage

```python

from transformers import AutoTokenizer, AutoModelForTokenClassification

tokenizer = AutoTokenizer.from_pretrained("asahi417/tner-xlm-roberta-base-all-english")

model = AutoModelForTokenClassification.from_pretrained("asahi417/tner-xlm-roberta-base-all-english")

``` | 419 | [

[

-0.0138702392578125,

-0.0254669189453125,

0.0160064697265625,

0.01435089111328125,

-0.032073974609375,

-0.0021381378173828125,

-0.012908935546875,

0.006801605224609375,

0.01367950439453125,

0.042022705078125,

-0.037322998046875,

-0.0298309326171875,

-0.068359375,

0.031341552734375,

-0.0223846435546875,

0.07080078125,

-0.01541900634765625,

0.040283203125,

0.0157470703125,

-0.022064208984375,

-0.02667236328125,

-0.049835205078125,

-0.08258056640625,

-0.030029296875,

0.024810791015625,

0.0340576171875,

0.016510009765625,

0.022491455078125,

0.0259246826171875,

0.033905029296875,

-0.0075225830078125,

0.0072174072265625,

-0.01346588134765625,

0.0018186569213867188,

0.00601959228515625,

-0.04840087890625,

-0.058807373046875,

-0.0018968582153320312,

0.05108642578125,

0.0177001953125,

0.004619598388671875,

0.0225372314453125,

-0.0157470703125,

0.0279998779296875,

-0.025299072265625,

0.0179595947265625,

-0.033111572265625,

0.01067352294921875,

-0.004985809326171875,

-0.0020427703857421875,

-0.04095458984375,

-0.0171051025390625,

-0.01800537109375,

-0.057373046875,

-0.0027713775634765625,

0.0104522705078125,

0.09918212890625,

0.027740478515625,

-0.048553466796875,

0.002532958984375,

-0.070556640625,

0.07696533203125,

-0.052703857421875,

0.0276336669921875,

0.0240020751953125,

0.04071044921875,

0.00788116455078125,

-0.0772705078125,

-0.042694091796875,

-0.0163421630859375,

-0.01242828369140625,

0.0262908935546875,

-0.028717041015625,

-0.01375579833984375,

0.0286712646484375,

0.0196685791015625,

-0.040740966796875,

0.000050127506256103516,

-0.0458984375,

-0.0177764892578125,

0.024078369140625,

0.0207061767578125,

0.023956298828125,

-0.022216796875,

-0.01050567626953125,

-0.021392822265625,

-0.0232086181640625,

-0.03045654296875,

0.01451873779296875,

0.0218658447265625,

-0.034515380859375,

0.03973388671875,

-0.00005269050598144531,

0.0499267578125,

0.035400390625,

0.01467132568359375,

0.04461669921875,

-0.00928497314453125,

-0.01580810546875,

-0.01544189453125,

0.06805419921875,

0.00791168212890625,

0.005062103271484375,

-0.0035877227783203125,

-0.025421142578125,

-0.0150146484375,

0.002140045166015625,

-0.05523681640625,

-0.0160980224609375,

-0.0029430389404296875,

-0.0306854248046875,

-0.0014486312866210938,

0.030914306640625,

-0.038970947265625,

0.03546142578125,

-0.049591064453125,

0.0604248046875,

-0.035797119140625,

-0.0171051025390625,

-0.017791748046875,

-0.01342010498046875,

0.02447509765625,

0.004085540771484375,

-0.059417724609375,

0.03009033203125,

0.039947509765625,

0.046173095703125,

0.01438140869140625,

-0.023345947265625,

-0.04339599609375,

-0.00670623779296875,

-0.0245208740234375,

0.047943115234375,

-0.0019893646240234375,

-0.0196685791015625,

-0.0200958251953125,

0.0300445556640625,

-0.0270538330078125,

-0.033905029296875,

0.045013427734375,

-0.0303497314453125,

0.051666259765625,

0.0232696533203125,

-0.048919677734375,

-0.027557373046875,

0.01296234130859375,

-0.0389404296875,

0.06988525390625,

0.0377197265625,

-0.0294342041015625,

0.02978515625,

-0.06610107421875,

-0.028106689453125,

0.0026493072509765625,

0.007602691650390625,

-0.054168701171875,

0.00588226318359375,

-0.00579071044921875,

0.006137847900390625,

0.003589630126953125,

0.0243988037109375,

0.007965087890625,

-0.01529693603515625,

0.01031494140625,

-0.0247650146484375,

0.06256103515625,

0.0362548828125,

-0.03692626953125,

0.0304107666015625,

-0.069580078125,

0.00667572021484375,

-0.0021533966064453125,

-0.00830078125,

-0.01898193359375,

-0.0280609130859375,

0.04351806640625,

0.01517486572265625,

0.0225982666015625,

-0.031341552734375,

0.002193450927734375,

-0.04241943359375,

0.03326416015625,

0.031768798828125,

-0.007659912109375,

0.046661376953125,

-0.0249786376953125,

0.045135498046875,

0.01076507568359375,

0.030059814453125,

0.004352569580078125,

-0.01727294921875,

-0.08819580078125,

-0.01329803466796875,

0.04510498046875,

0.044677734375,

-0.03265380859375,

0.054229736328125,

-0.00856781005859375,

-0.05450439453125,

-0.023468017578125,

0.0010023117065429688,

0.03045654296875,

0.021453857421875,

0.04986572265625,

-0.01453399658203125,

-0.06890869140625,

-0.05322265625,

-0.003185272216796875,

0.013519287109375,

0.00968170166015625,

0.01409912109375,

0.049102783203125,

-0.039276123046875,

0.044403076171875,

-0.0281524658203125,

-0.034210205078125,

-0.032501220703125,

0.02593994140625,

0.039215087890625,

0.06585693359375,

0.05047607421875,

-0.048370361328125,

-0.07373046875,

-0.0149688720703125,

-0.0209503173828125,

0.004314422607421875,

-0.00428009033203125,

-0.0282135009765625,

0.0433349609375,

0.037322998046875,

-0.0372314453125,

0.033843994140625,

0.039398193359375,

-0.026123046875,

0.0297393798828125,

-0.029052734375,

0.0007586479187011719,

-0.125244140625,

0.0144195556640625,

-0.0231475830078125,

-0.030029296875,

-0.04327392578125,

0.0007538795471191406,

0.01509857177734375,

0.00696563720703125,

-0.04119873046875,

0.05767822265625,

-0.034210205078125,

0.0027713775634765625,

-0.027008056640625,

-0.0121612548828125,

0.00688934326171875,

0.0015783309936523438,

0.0014867782592773438,

0.041259765625,

0.05810546875,

-0.049774169921875,

0.03631591796875,

0.0552978515625,

-0.003955841064453125,

0.019989013671875,

-0.051239013671875,

0.0163421630859375,

0.0055389404296875,

0.0220947265625,

-0.0335693359375,

-0.00679779052734375,

0.03753662109375,

-0.04290771484375,

0.045562744140625,

-0.03033447265625,

-0.03033447265625,

-0.0169525146484375,

0.023101806640625,

0.0408935546875,

0.050628662109375,

-0.04083251953125,

0.0849609375,

0.01250457763671875,

0.0004642009735107422,

-0.032135009765625,

-0.054168701171875,

0.020355224609375,

-0.02630615234375,

-0.01416778564453125,

0.023406982421875,

-0.0213775634765625,

-0.0093536376953125,

-0.0038166046142578125,

0.00041675567626953125,

-0.0299072265625,

-0.00804901123046875,

0.0192718505859375,

0.042724609375,

-0.0265655517578125,

-0.005126953125,

-0.0110321044921875,

-0.06475830078125,

-0.00818634033203125,

-0.0302276611328125,

0.057403564453125,

-0.01036834716796875,

-0.0062713623046875,

-0.0312347412109375,

0.01007080078125,

0.02703857421875,

-0.0192413330078125,

0.05389404296875,

0.0704345703125,

-0.017364501953125,

-0.00037026405334472656,

-0.04376220703125,

-0.025665283203125,

-0.033233642578125,

0.0287628173828125,

-0.010711669921875,

-0.05133056640625,

0.040740966796875,

0.0101776123046875,

-0.0099334716796875,

0.052459716796875,

0.023040771484375,

0.02020263671875,

0.0718994140625,

0.046661376953125,

-0.0025959014892578125,

0.027862548828125,

-0.04248046875,

0.0135498046875,

-0.07293701171875,

-0.009033203125,

-0.057037353515625,

0.00879669189453125,

-0.042022705078125,

-0.00795745849609375,

0.0066070556640625,

-0.012542724609375,

-0.041595458984375,

0.05438232421875,

-0.04095458984375,

0.031707763671875,

0.046142578125,

-0.007778167724609375,

0.001087188720703125,

-0.018890380859375,

-0.006191253662109375,

-0.01418304443359375,

-0.04254150390625,

-0.035491943359375,

0.0771484375,

-0.006633758544921875,

0.040283203125,

0.006011962890625,

0.0550537109375,

-0.032684326171875,

0.038482666015625,

-0.05572509765625,

0.0227813720703125,

-0.04998779296875,

-0.07147216796875,

-0.00201416015625,

-0.034454345703125,

-0.07647705078125,

-0.00951385498046875,

-0.0228118896484375,

-0.0278167724609375,

0.005863189697265625,

0.0091552734375,

-0.0117950439453125,

0.013214111328125,

-0.01751708984375,

0.07586669921875,

-0.030181884765625,

0.0166168212890625,

-0.011322021484375,

-0.03948974609375,

0.009002685546875,

-0.0223236083984375,

0.017547607421875,

0.00024127960205078125,

0.006683349609375,

0.0447998046875,

-0.0350341796875,

0.04193115234375,

-0.00920867919921875,

0.032012939453125,

-0.003520965576171875,

-0.006900787353515625,

0.01263427734375,

0.004520416259765625,

-0.005542755126953125,

0.01477813720703125,

-0.017547607421875,

-0.0156707763671875,

-0.0237579345703125,

0.043731689453125,

-0.07745361328125,

-0.0264434814453125,

-0.04449462890625,

-0.019195556640625,

0.0158538818359375,

0.046478271484375,

0.05859375,

0.0604248046875,

-0.00102996826171875,

0.0089569091796875,

0.0171966552734375,

-0.00797271728515625,

0.031005859375,

0.05194091796875,

-0.0244903564453125,

-0.055084228515625,

0.049957275390625,

0.00940704345703125,

0.01824951171875,

0.03802490234375,

0.017822265625,

-0.0333251953125,

-0.0372314453125,

-0.0198211669921875,

0.0177764892578125,

-0.0577392578125,

-0.0175933837890625,

-0.038360595703125,

-0.04034423828125,

-0.0292510986328125,

0.006160736083984375,

-0.0235443115234375,

-0.04632568359375,

-0.041473388671875,

-0.00991058349609375,

0.039703369140625,

0.041961669921875,

-0.0290985107421875,

0.036163330078125,

-0.07745361328125,

0.0270843505859375,

0.01151275634765625,

0.0149993896484375,

-0.0226593017578125,

-0.0784912109375,

-0.027252197265625,

-0.006885528564453125,

-0.0247650146484375,

-0.039154052734375,

0.05877685546875,

0.027130126953125,

0.054595947265625,

0.01580810546875,

0.0012073516845703125,

0.050445556640625,

-0.038116455078125,

0.050872802734375,

0.0072174072265625,

-0.0810546875,

0.03936767578125,

-0.006744384765625,

0.0248870849609375,

0.044189453125,

0.0276336669921875,

-0.03338623046875,

-0.035888671875,

-0.058746337890625,

-0.09210205078125,

0.062286376953125,

0.01273345947265625,

0.0455322265625,

-0.004451751708984375,

0.02288818359375,

0.011322021484375,

-0.009124755859375,

-0.08538818359375,

-0.05426025390625,

-0.05230712890625,

-0.0247039794921875,

-0.03778076171875,

-0.006134033203125,

-0.0010471343994140625,

-0.03131103515625,

0.0875244140625,

0.0013523101806640625,

0.00861358642578125,

0.023101806640625,

-0.021514892578125,

-0.025665283203125,

0.0168914794921875,

0.04962158203125,

0.0391845703125,

-0.01441192626953125,

-0.01213836669921875,

0.026275634765625,

-0.028564453125,

0.0134124755859375,

0.0190887451171875,

-0.03045654296875,

0.028289794921875,

0.00925445556640625,

0.07928466796875,

0.0303497314453125,

-0.0087127685546875,

0.0229034423828125,

-0.0305023193359375,

-0.0253448486328125,

-0.08154296875,

0.00904083251953125,

0.00485992431640625,

-0.006130218505859375,

0.0238494873046875,

0.0245208740234375,

-0.005413055419921875,

-0.0156402587890625,

0.01194000244140625,

0.0166015625,

-0.05078125,

-0.0168914794921875,

0.0654296875,

-0.002147674560546875,

-0.039642333984375,

0.06390380859375,

-0.01220703125,

-0.04248046875,

0.058258056640625,

0.05255126953125,

0.0875244140625,

-0.012908935546875,

-0.005565643310546875,

0.06414794921875,

0.0110321044921875,

0.00579833984375,

0.0278167724609375,

0.0217132568359375,

-0.0615234375,

-0.0391845703125,

-0.045806884765625,

-0.033935546875,

0.0123291015625,

-0.06719970703125,

0.03839111328125,

-0.02545166015625,

-0.034942626953125,

0.00946807861328125,

-0.01561737060546875,

-0.041351318359375,

0.01432037353515625,

-0.00555419921875,

0.06719970703125,

-0.038970947265625,

0.061920166015625,

0.07501220703125,

-0.04296875,

-0.09576416015625,

-0.0126953125,

-0.0081329345703125,

-0.040374755859375,

0.07916259765625,

0.01337432861328125,

0.022369384765625,

0.024261474609375,

-0.02001953125,

-0.069091796875,

0.07867431640625,

-0.005649566650390625,

-0.03460693359375,

0.03045654296875,

0.006862640380859375,

0.0236968994140625,

-0.028564453125,

0.046051025390625,

0.01111602783203125,

0.0667724609375,

0.000492095947265625,

-0.06768798828125,

-0.0153961181640625,

-0.030609130859375,

-0.00954437255859375,

0.019805908203125,

-0.057769775390625,

0.07501220703125,

0.004512786865234375,

0.003841400146484375,

0.033966064453125,

0.040069580078125,

0.0111846923828125,

0.016082763671875,

0.03857421875,

0.04302978515625,

0.010223388671875,

-0.0236358642578125,

0.042144775390625,

-0.047210693359375,

0.0438232421875,

0.0814208984375,

-0.0049591064453125,

0.05194091796875,

0.0213623046875,

-0.007457733154296875,

0.0572509765625,

0.033416748046875,

-0.042266845703125,

0.0256805419921875,

-0.00641632080078125,

-0.0028057098388671875,

-0.02044677734375,

0.03460693359375,

-0.0231170654296875,

0.037811279296875,

0.00859832763671875,

-0.0210113525390625,

-0.003047943115234375,

-0.0006246566772460938,

0.0198822021484375,

0.0006899833679199219,

-0.02642822265625,

0.06396484375,

0.0252227783203125,

-0.057037353515625,

0.03594970703125,

0.0085296630859375,

0.0499267578125,

-0.057525634765625,

0.01145172119140625,

-0.012664794921875,

0.033111572265625,

-0.00594329833984375,

-0.0156097412109375,

0.043121337890625,

-0.003513336181640625,

-0.00921630859375,

-0.0030059814453125,

0.038299560546875,

-0.0311126708984375,

-0.0533447265625,

0.0234375,

0.0255126953125,

0.010101318359375,

0.020538330078125,

-0.05859375,

-0.0234527587890625,

-0.0033054351806640625,

-0.0157470703125,

0.0157012939453125,

0.0215911865234375,

0.0191802978515625,

0.039581298828125,

0.044281005859375,

-0.00096893310546875,

0.00286865234375,

0.028900146484375,

0.035614013671875,

-0.04644775390625,

-0.037689208984375,

-0.0562744140625,

0.040618896484375,

0.002513885498046875,

-0.03692626953125,

0.07763671875,

0.0621337890625,

0.04791259765625,

-0.035919189453125,

0.040252685546875,

-0.005168914794921875,

0.035858154296875,

-0.037628173828125,

0.060455322265625,

-0.036834716796875,

-0.01184844970703125,

-0.006923675537109375,

-0.0799560546875,

-0.0312347412109375,

0.0650634765625,

-0.00812530517578125,

0.01461029052734375,

0.056671142578125,

0.057098388671875,

-0.0175933837890625,

-0.01690673828125,

0.0201416015625,

0.0416259765625,

0.00733184814453125,

0.013641357421875,

0.05816650390625,

-0.053863525390625,

0.04132080078125,

-0.03240966796875,

-0.0034942626953125,

-0.0102996826171875,

-0.07525634765625,

-0.06561279296875,

-0.0377197265625,

-0.033599853515625,

-0.0288543701171875,

-0.0179443359375,

0.08746337890625,

0.07110595703125,

-0.0819091796875,

-0.023406982421875,

-0.0176239013671875,

-0.0050506591796875,

-0.004535675048828125,

-0.0182952880859375,

0.0294952392578125,

-0.03045654296875,

-0.07452392578125,

0.0215911865234375,

-0.004405975341796875,

0.036407470703125,

-0.002613067626953125,

-0.0316162109375,

0.0111541748046875,

0.0015869140625,

0.003589630126953125,

0.015472412109375,

-0.038726806640625,

-0.00506591796875,

0.0033893585205078125,

-0.01137542724609375,

0.019439697265625,

0.01861572265625,

-0.030029296875,

0.005031585693359375,

0.02587890625,

-0.00974273681640625,

0.05255126953125,

-0.013763427734375,

0.0494384765625,

-0.035919189453125,

0.0276641845703125,

0.014862060546875,

0.05743408203125,

0.0307159423828125,

-0.0118865966796875,

0.0224761962890625,

0.0014438629150390625,

-0.053131103515625,

-0.043243408203125,

-0.003131866455078125,

-0.07501220703125,

-0.00018489360809326172,

0.0692138671875,

-0.0125274658203125,

-0.023101806640625,

-0.0177154541015625,

-0.0275421142578125,

0.043304443359375,

-0.0168914794921875,

0.051239013671875,

0.031341552734375,

0.0184783935546875,

-0.0186309814453125,

-0.027984619140625,

0.025604248046875,

0.03302001953125,

-0.048553466796875,

-0.02777099609375,

0.00914764404296875,

0.04736328125,

0.0247955322265625,

0.0300445556640625,

-0.0266265869140625,

0.025390625,

-0.01177215576171875,

0.0252685546875,

-0.0238037109375,

-0.005710601806640625,

-0.02685546875,

-0.037322998046875,

0.00614166259765625,

-0.000640869140625

]

] |

darkstorm2150/Protogen_x5.8_Official_Release | 2023-03-21T18:20:14.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"art",

"artistic",

"en",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | darkstorm2150 | null | null | darkstorm2150/Protogen_x5.8_Official_Release | 193 | 161,893 | diffusers | 2023-01-06T01:18:34 | ---

language:

- en

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- art

- artistic

- diffusers

inference: true

license: creativeml-openrail-m

---

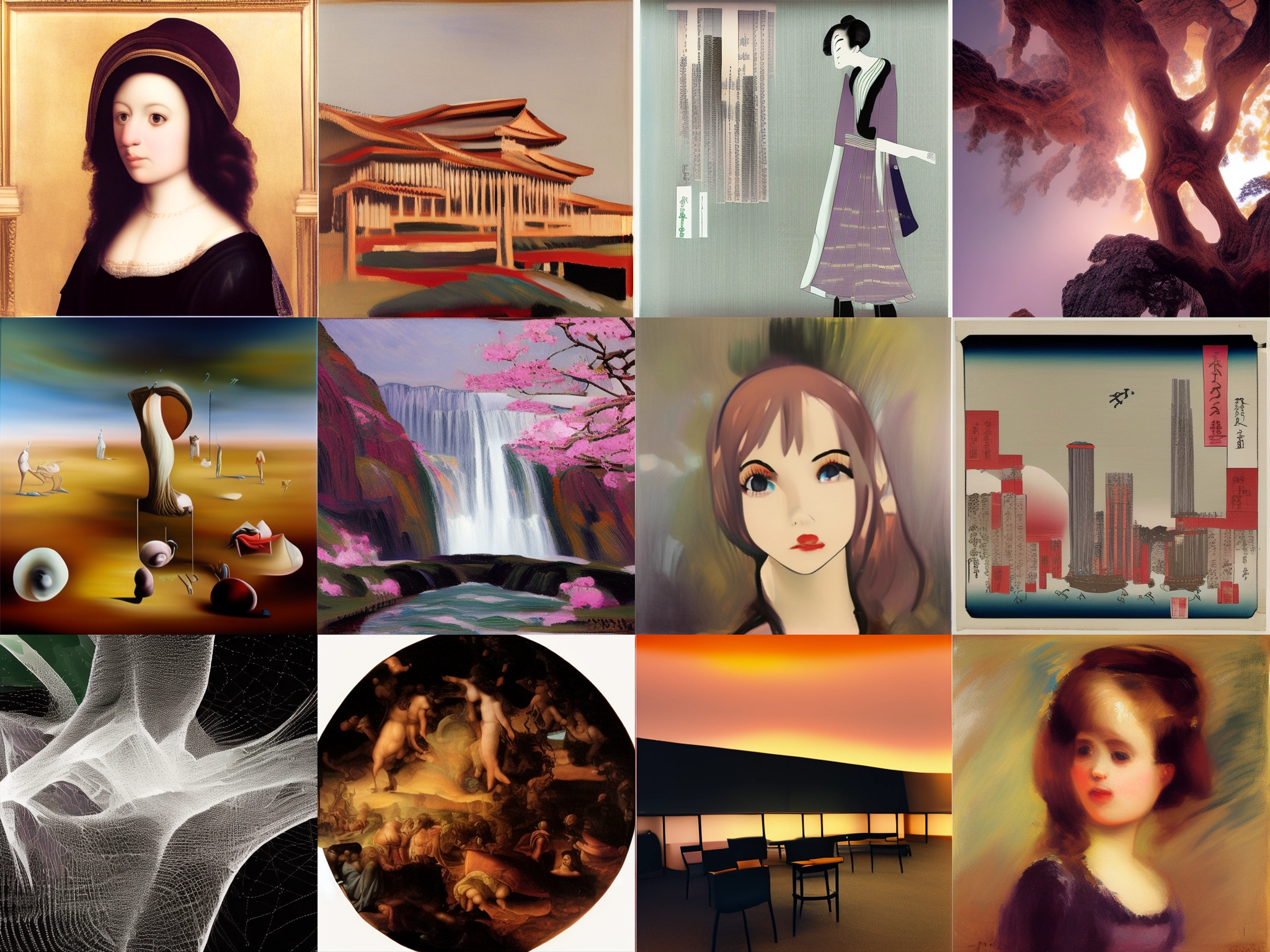

<center><img src="https://huggingface.co/darkstorm2150/Protogen_x5.8_Official_Release/resolve/main/Protogen%20x5.8-512.png" style="height:690px; border-radius: 8%; border: 10px solid #663380; padding-top:0px;" span title="Protogen x5.8 Raw Output"></center>

<center><h1>Protogen x5.8 (Scifi-Anime) Official Release</h1></center>

<center><p><em>Research Model by <a href="https://instagram.com/officialvictorespinoza">darkstorm2150</a></em></p></center>

</div>

## Table of contents

* [General info](#general-info)

* [Granular Adaptive Learning](#granular-adaptive-learning)

* [Trigger Words](#trigger-words)

* [Setup](#setup)

* [Space](#space)

* [CompVis](#compvis)

* [Diffusers](#🧨-diffusers)

* [Checkpoint Merging Data Reference](#checkpoint-merging-data-reference)

* [License](#license)

## General info

Protogen x5.8 was warm-started with [Stable Diffusion v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5) and

rebuilt using dreamlikePhotoRealV2.ckpt as its core, with small amounts of other checkpoints added during merging.

## Granular Adaptive Learning

Granular adaptive learning is a machine learning technique that focuses on adjusting the learning process at a fine-grained level, rather than making global adjustments to the model. This approach allows the model to adapt to specific patterns or features in the data, rather than making assumptions based on general trends.

Granular adaptive learning can be achieved through techniques such as active learning, which allows the model to select the data it wants to learn from, or through reinforcement learning, where the model receives feedback on its performance and adapts based on that feedback. It can also be achieved through online learning, where the model adjusts itself as it receives more data.

Granular adaptive learning is often used in situations where the data is highly diverse or non-stationary and where the model needs to adapt quickly to changing patterns. This is often the case in dynamic environments such as robotics, financial markets, and natural language processing.
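
As a rough illustration of the online-learning idea described above (and not a description of how Protogen itself was trained or merged), the sketch below updates a single weight after every incoming sample. The function name `online_fit`, the learning rate, and the toy data stream are all assumptions made for this example.

```python
def online_fit(stream, lr=0.1):
    """Fit y ~ w * x one sample at a time (plain stochastic gradient descent)."""
    w = 0.0
    for x, y in stream:
        error = y - w * x      # prediction error on the newest sample only
        w += lr * error * x    # adjust immediately, without revisiting old data
    return w


# Toy stream generated by y = 2x; the estimate moves toward 2 as samples arrive.
samples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)] * 5
w = online_fit(samples)
print(round(w, 3))
```

Because each update uses only the most recent sample, the same loop keeps working if the underlying relationship drifts over time, which is the non-stationary setting the section refers to.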

## Trigger Words

modelshoot style, analog style, mdjrny-v4 style, nousr robot

Trigger words are also available for hassan1.4 and f222; you may have to search for them online :)
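
The Diffusers example in this card starts its prompt with `modelshoot style`. A minimal sketch of doing the same programmatically follows; `with_trigger` is a hypothetical helper written for this example, and the card does not state whether the position of the trigger word in the prompt matters.

```python
# Trigger words copied from the list above.
TRIGGER_WORDS = ("modelshoot style", "analog style", "mdjrny-v4 style", "nousr robot")


def with_trigger(prompt, trigger):
    """Prefix `prompt` with one trigger word from TRIGGER_WORDS (hypothetical helper)."""
    if trigger not in TRIGGER_WORDS:
        raise ValueError(f"unknown trigger word: {trigger!r}")
    return f"{trigger}, {prompt}"


prompt = with_trigger("portrait of a medieval witch, sharp focus", "modelshoot style")
print(prompt)
```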

## Setup

To run this model, download the model's .ckpt or .safetensors file and place it in your "stable-diffusion-webui\models\Stable-diffusion" directory.
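
If you prefer to script that step, the sketch below copies an already downloaded checkpoint into the Web UI model folder using only the Python standard library. The helper name `install_checkpoint` and the example paths are assumptions for illustration; adjust them to wherever your download and your Web UI checkout actually live.

```python
import shutil
from pathlib import Path


def install_checkpoint(checkpoint, webui_root):
    """Copy a downloaded checkpoint into <webui_root>/models/Stable-diffusion."""
    checkpoint = Path(checkpoint)
    target_dir = Path(webui_root) / "models" / "Stable-diffusion"
    target_dir.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    target = target_dir / checkpoint.name
    shutil.copy2(checkpoint, target)
    return target


# Example call (both paths are placeholders):
# install_checkpoint("Downloads/ProtoGen_X5.8.safetensors", "stable-diffusion-webui")
```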

## Space

We support a [Gradio](https://github.com/gradio-app/gradio) Web UI:

[darkstorm2150/Stable-Diffusion-Protogen-webui](https://huggingface.co/spaces/darkstorm2150/Stable-Diffusion-Protogen-webui)

## CompVis

## CKPT

[Download ProtoGen x5.8.ckpt (7.7GB)](https://huggingface.co/darkstorm2150/Protogen_x5.8_Official_Release/resolve/main/ProtoGen_X5.8.ckpt)

[Download ProtoGen X5.8-pruned-fp16.ckpt (1.72GB)](https://huggingface.co/darkstorm2150/Protogen_x5.8_Official_Release/resolve/main/ProtoGen_X5.8-pruned-fp16.ckpt)

## Safetensors

[Download ProtoGen x5.8.safetensors (7.7GB)](https://huggingface.co/darkstorm2150/Protogen_x5.8_Official_Release/resolve/main/ProtoGen_X5.8.safetensors)

[Download ProtoGen x5.8-pruned-fp16.safetensors (1.72GB)](https://huggingface.co/darkstorm2150/Protogen_x5.8_Official_Release/resolve/main/ProtoGen_X5.8-pruned-fp16.safetensors)

### 🧨 Diffusers

This model can be used just like any other Stable Diffusion model. For more information,

please have a look at the [Stable Diffusion Pipeline](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

```python

from diffusers import StableDiffusionPipeline, DPMSolverMultistepScheduler
import torch

prompt = (
    "modelshoot style, (extremely detailed CG unity 8k wallpaper), full shot body photo of the most beautiful artwork in the world, "
    "english medieval witch, black silk vale, pale skin, black silk robe, black cat, necromancy magic, medieval era, "
    "photorealistic painting by Ed Blinkey, Atey Ghailan, Studio Ghibli, by Jeremy Mann, Greg Manchess, Antonio Moro, trending on ArtStation, "
    "trending on CGSociety, Intricate, High Detail, Sharp focus, dramatic, photorealistic painting art by midjourney and greg rutkowski"
)

# Repository id of this model on the Hugging Face Hub.
model_id = "darkstorm2150/Protogen_x5.8_Official_Release"

# Load the pipeline in half precision and swap in the DPM-Solver multistep scheduler.
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
pipe = pipe.to("cuda")  # move the pipeline to the GPU

# Generate one image with 25 denoising steps and save it.
image = pipe(prompt, num_inference_steps=25).images[0]
image.save("./result.jpg")

```

## - PENDING DATA FOR MERGE, RPGv2 not accounted for.

## Checkpoint Merging Data Reference

<style>

.myTable {

border-collapse:collapse;

}

.myTable th {

background-color:#663380;

color:white;

}

.myTable td, .myTable th {

padding:5px;

border:1px solid #663380;

}

</style>

<table class="myTable">

<tr>

<th>Models</th>

<th>Protogen v2.2 (Anime)</th>

<th>Protogen x3.4 (Photo)</th>

<th>Protogen x5.3 (Photo)</th>

<th>Protogen x5.8 (Sci-fi/Anime)</th>

<th>Protogen x5.9 (Dragon)</th>

<th>Protogen x7.4 (Eclipse)</th>

<th>Protogen x8.0 (Nova)</th>

<th>Protogen x8.6 (Infinity)</th>

</tr>

<tr>

<td>seek_art_mega v1</td>

<td>52.50%</td>

<td>42.76%</td>

<td>42.63%</td>

<td></td>

<td></td>

<td></td>

<td>25.21%</td>

<td>14.83%</td>

</tr>

<tr>

<td>modelshoot v1</td>

<td>30.00%</td>

<td>24.44%</td>

<td>24.37%</td>

<td>2.56%</td>

<td>2.05%</td>

<td>3.48%</td>

<td>22.91%</td>

<td>13.48%</td>

</tr>

<tr>

<td>elldreth v1</td>

<td>12.64%</td>

<td>10.30%</td>

<td>10.23%</td>

<td></td>

<td></td>

<td></td>

<td>6.06%</td>

<td>3.57%</td>

</tr>

<tr>

<td>photoreal v2</td>

<td></td>

<td></td>

<td>10.00%</td>

<td>48.64%</td>

<td>38.91%</td>

<td>66.33%</td>

<td>20.49%</td>

<td>12.06%</td>

</tr>

<tr>

<td>analogdiffusion v1</td>

<td></td>

<td>4.75%</td>

<td>4.50%</td>

<td></td>

<td></td>

<td></td>

<td>1.75%</td>

<td>1.03%</td>

</tr>

<tr>

<td>openjourney v2</td>

<td></td>

<td>4.51%</td>

<td>4.28%</td>

<td></td>

<td></td>

<td>4.75%</td>

<td>2.26%</td>

<td>1.33%</td>

</tr>

<tr>

<td>hassan1.4</td>

<td>2.63%</td>

<td>2.14%</td>

<td>2.13%</td>

<td></td>

<td></td>

<td></td>

<td>1.26%</td>

<td>0.74%</td>

</tr>

<tr>

<td>f222</td>

<td>2.23%</td>

<td>1.82%</td>

<td>1.81%</td>

<td></td>

<td></td>

<td></td>

<td>1.07%</td>

<td>0.63%</td>

</tr>

<tr>

<td>hasdx</td>

<td></td>

<td></td>

<td></td>

<td>20.00%</td>

<td>16.00%</td>

<td>4.07%</td>

<td>5.01%</td>

<td>2.95%</td>

</tr>

<tr>

<td>moistmix</td>

<td></td>

<td></td>

<td></td>

<td>16.00%</td>

<td>12.80%</td>

<td>3.86%</td>

<td>4.08%</td>

<td>2.40%</td>

</tr>

<tr>

<td>roboDiffusion v1</td>

<td></td>

<td>4.29%</td>

<td></td>

<td>12.80%</td>

<td>10.24%</td>

<td>3.67%</td>

<td>4.41%</td>

<td>2.60%</td>

</tr>

<tr>

<td>RPG v3</td>

<td></td>

<td>5.00%</td>

<td></td>

<td></td>

<td>20.00%</td>

<td>4.29%</td>

<td>4.29%</td>

<td>2.52%</td>

</tr>

<tr>

<td>anything&everything</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>4.51%</td>

<td>0.56%</td>

<td>0.33%</td>

</tr>

<tr>

<td>dreamlikediff v1</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>5.00%</td>

<td>0.63%</td>

<td>0.37%</td>

</tr>

<tr>

<td>sci-fidiff v1</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>3.10%</td>

</tr>

<tr>

<td>synthwavepunk v2</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>3.26%</td>

</tr>

<tr>

<td>mashupv2</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>11.51%</td>

</tr>

<tr>

<td>dreamshaper 252</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>4.04%</td>

</tr>

<tr>

<td>comicdiff v2</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>4.25%</td>

</tr>

<tr>

<td>artEros</td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td></td>

<td>15.00%</td>

</tr>

</table>
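
The percentages in each column can be read as a merge recipe. The sketch below is only arithmetic on the numbers in the table: it checks that the Protogen x5.8 column sums to 100% and shows that blending that recipe at 80% with RPG v3 at 20% gives the same figures as the Protogen x5.9 column. That x5.9 was actually produced this way is an assumption, since the card does not document the merge procedure, and `blend` is a hypothetical helper written for this example.

```python
# Percentages copied from the "Protogen x5.8" column of the table above.
X58 = {
    "modelshoot v1": 2.56,
    "photoreal v2": 48.64,
    "hasdx": 20.00,
    "moistmix": 16.00,
    "roboDiffusion v1": 12.80,
}


def blend(recipe_a, recipe_b, share_a):
    """Combine two recipes (name -> percent) as share_a * A + (1 - share_a) * B."""
    names = set(recipe_a) | set(recipe_b)
    return {
        name: round(
            share_a * recipe_a.get(name, 0.0)
            + (1 - share_a) * recipe_b.get(name, 0.0),
            2,
        )
        for name in names
    }


# Assumption: an 80/20 blend of the x5.8 recipe with RPG v3.
x59 = blend(X58, {"RPG v3": 100.0}, share_a=0.8)
print(round(sum(X58.values()), 2))
print(sorted(x59.items()))
```

Read this way, every ingredient of an earlier release is diluted by the blend share each time a new checkpoint is merged in, which is consistent with the percentages shrinking from left to right in several rows.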

## License


This model is licensed under a modified CreativeML OpenRAIL-M license.

You are not allowed to host, finetune, or do inference with the model or its derivatives on websites/apps/etc. If you want to, please email us at contact@dreamlike.art

You are free to host the model card and files (without any actual inference or finetuning) on both commercial and non-commercial websites/apps/etc. Please state the full model name (Dreamlike Photoreal 2.0) and include the license as well as a link to the model card (https://huggingface.co/dreamlike-art/dreamlike-photoreal-2.0).

You are free to use the outputs (images) of the model for commercial purposes in teams of 10 or fewer.

You can't use the model to deliberately produce or share illegal or harmful outputs or content.

The authors claim no rights on the outputs you generate; you are free to use them and are accountable for their use, which must not go against the provisions set in the license.

You may re-distribute the weights. If you do, please be aware that you have to include the same use restrictions as the ones in the license and share a copy of the modified CreativeML OpenRAIL-M with all your users (please read the license entirely and carefully). Please read the full license here: https://huggingface.co/dreamlike-art/dreamlike-photoreal-2.0/blob/main/LICENSE.md | 9,504 | [

[

-0.05096435546875,

-0.047637939453125,

0.01291656494140625,

0.034759521484375,

-0.01207733154296875,

0.005352020263671875,

0.010955810546875,

-0.03173828125,

0.027740478515625,

0.00682830810546875,

-0.048370361328125,

-0.02740478515625,

-0.04388427734375,

0.0008158683776855469,

-0.00885009765625,

0.061798095703125,

-0.0016069412231445312,

-0.0162811279296875,

0.00467681884765625,

0.00818634033203125,

-0.0177001953125,

-0.002239227294921875,

-0.028564453125,

-0.026214599609375,

0.0225830078125,

0.034088134765625,

0.061309814453125,

0.041656494140625,

0.026641845703125,

0.0262451171875,

-0.0264129638671875,

0.0029010772705078125,

-0.0355224609375,

-0.0027446746826171875,

0.0032958984375,

-0.019561767578125,

-0.034881591796875,

-0.0023212432861328125,

0.025177001953125,

0.032684326171875,

-0.0118560791015625,

0.0247039794921875,

0.0232391357421875,

0.058349609375,

-0.039398193359375,

0.01226043701171875,

-0.00449371337890625,

0.0220794677734375,

-0.0028018951416015625,

-0.003383636474609375,

-0.0034427642822265625,

-0.04278564453125,

-0.004283905029296875,

-0.0634765625,

0.01474761962890625,

-0.001682281494140625,

0.0833740234375,

-0.0048980712890625,

-0.0184783935546875,

-0.0022754669189453125,

-0.053497314453125,

0.057708740234375,

-0.054290771484375,

0.027679443359375,

0.0135345458984375,

0.01020050048828125,

-0.015472412109375,

-0.058135986328125,

-0.0672607421875,

0.0175018310546875,

0.000823974609375,

0.046966552734375,

-0.0300445556640625,

-0.02783203125,

0.01204681396484375,

0.02191162109375,

-0.0538330078125,

-0.0099945068359375,

-0.034576416015625,

-0.0120391845703125,

0.043212890625,

0.014068603515625,

0.0268707275390625,

-0.01145172119140625,

-0.044403076171875,

-0.0191497802734375,

-0.01654052734375,

0.032012939453125,

0.0219879150390625,

-0.0026416778564453125,

-0.039337158203125,

0.03363037109375,

-0.0076904296875,

0.048370361328125,

0.0186920166015625,

-0.0297088623046875,

0.03900146484375,

-0.0357666015625,

-0.025177001953125,

-0.0134124755859375,

0.055908203125,

0.046478271484375,

-0.006237030029296875,

0.00223541259765625,

0.0091094970703125,

0.01078033447265625,

0.0017032623291015625,

-0.07415771484375,

-0.022735595703125,

0.03814697265625,

-0.027557373046875,

-0.035980224609375,

0.00034427642822265625,

-0.07916259765625,

-0.00478363037109375,

0.00936126708984375,

0.0235443115234375,

-0.03662109375,

-0.031341552734375,

0.01556396484375,

-0.0248565673828125,

0.01312255859375,

0.0248565673828125,

-0.050048828125,

0.02587890625,

0.025054931640625,

0.074951171875,

-0.0019121170043945312,

-0.00983428955078125,

0.0258026123046875,

0.0233917236328125,

-0.036895751953125,

0.05718994140625,

-0.01220703125,

-0.04791259765625,

-0.0399169921875,

0.0258331298828125,

-0.014556884765625,

-0.005199432373046875,

0.046234130859375,

-0.0253448486328125,

0.028594970703125,

-0.0217132568359375,

-0.043609619140625,

-0.0167388916015625,

0.01416015625,

-0.0443115234375,

0.06427001953125,

0.01300811767578125,

-0.0716552734375,

0.0279541015625,

-0.05767822265625,

-0.00140380859375,

-0.0172271728515625,

0.0021076202392578125,

-0.060333251953125,

-0.0255126953125,

0.01275634765625,

0.020233154296875,

-0.0161590576171875,

-0.0230712890625,

-0.0355224609375,

-0.0250091552734375,

0.00681304931640625,

-0.020355224609375,

0.081298828125,

0.036163330078125,

-0.049163818359375,

-0.0030975341796875,

-0.057525634765625,

0.00351715087890625,

0.046478271484375,

-0.02069091796875,

0.01050567626953125,

-0.031646728515625,

0.0013456344604492188,

0.0284423828125,

0.01107025146484375,

-0.04327392578125,

0.01708984375,

-0.0267486572265625,

0.0305328369140625,

0.07098388671875,

0.0251617431640625,

0.03582763671875,

-0.0616455078125,

0.04046630859375,

0.0251312255859375,

0.008331298828125,

0.0268096923828125,

-0.0406494140625,

-0.05126953125,

-0.027679443359375,

0.0191650390625,

0.03741455078125,

-0.032012939453125,

0.03302001953125,

-0.01873779296875,

-0.07012939453125,

-0.0187835693359375,

-0.00507354736328125,

0.0252227783203125,

0.049530029296875,

0.022064208984375,

-0.00421905517578125,

-0.041046142578125,

-0.053497314453125,

0.0182037353515625,

-0.01233673095703125,

0.0217132568359375,

0.044525146484375,

0.05438232421875,

-0.0226287841796875,

0.0604248046875,

-0.068115234375,

-0.021636962890625,

-0.0237274169921875,

-0.006710052490234375,

0.035736083984375,

0.05548095703125,

0.06292724609375,

-0.07196044921875,

-0.044281005859375,

0.005199432373046875,

-0.049713134765625,

-0.0011339187622070312,

0.0079498291015625,

-0.02825927734375,

-0.005634307861328125,

0.01004791259765625,

-0.048797607421875,

0.036163330078125,

0.04241943359375,

-0.053741455078125,

0.059173583984375,

-0.032684326171875,

0.0293426513671875,

-0.0855712890625,

0.0236968994140625,

0.0029430389404296875,

-0.0056915283203125,

-0.05816650390625,

0.01096343994140625,

-0.006046295166015625,

-0.0008935928344726562,

-0.05218505859375,

0.051727294921875,

-0.04400634765625,

0.021392822265625,

0.0014657974243164062,

0.00452423095703125,

0.006313323974609375,

0.04095458984375,

-0.01299285888671875,

0.072998046875,

0.044708251953125,

-0.0513916015625,

0.019378662109375,

0.0221405029296875,

-0.03533935546875,

0.015167236328125,

-0.04669189453125,

-0.005733489990234375,

-0.0188140869140625,

0.01349639892578125,

-0.08197021484375,

-0.014251708984375,

0.02117919921875,

-0.044769287109375,

0.00226593017578125,

0.0037860870361328125,

-0.024871826171875,

-0.0562744140625,

-0.027252197265625,

0.00792694091796875,

0.06500244140625,

-0.01190948486328125,

0.033233642578125,

0.014007568359375,

0.0164642333984375,

-0.0364990234375,

-0.04327392578125,

-0.016448974609375,

-0.028472900390625,

-0.0751953125,

0.05413818359375,

-0.0164794921875,

-0.01287841796875,

-0.00243377685546875,

-0.0189666748046875,

-0.00997161865234375,

0.00778961181640625,

0.026123046875,

0.0082855224609375,

0.0048828125,

-0.0231475830078125,

-0.0248870849609375,

-0.008758544921875,

-0.01434326171875,

-0.00991058349609375,

0.050201416015625,

0.0036945343017578125,

-0.017333984375,

-0.0421142578125,

-0.00351715087890625,

0.059478759765625,

0.002239227294921875,

0.05450439453125,

0.052337646484375,

-0.01702880859375,

-0.01397705078125,

-0.01763916015625,

-0.0134124755859375,

-0.035400390625,

0.003139495849609375,

-0.0250091552734375,

-0.043975830078125,

0.056182861328125,

0.005527496337890625,

-0.00445556640625,

0.04498291015625,

0.0360107421875,

-0.0231475830078125,

0.067626953125,

0.0377197265625,

0.0004603862762451172,

0.0308837890625,

-0.06915283203125,

-0.0081939697265625,

-0.0604248046875,

-0.038330078125,

-0.0281982421875,

-0.047576904296875,

-0.035247802734375,

-0.052734375,

0.042449951171875,

0.0169219970703125,

-0.046295166015625,

0.02777099609375,

-0.05023193359375,

0.007762908935546875,

0.036712646484375,

0.050445556640625,

0.0004680156707763672,

-0.01302337646484375,

-0.036163330078125,

-0.01148223876953125,

-0.038787841796875,

-0.0252532958984375,

0.0618896484375,

0.00738525390625,

0.040435791015625,

0.036773681640625,

0.05596923828125,

0.01207733154296875,

-0.0009245872497558594,

-0.01959228515625,

0.03411865234375,

0.00946044921875,

-0.07427978515625,

-0.0232086181640625,

-0.0229949951171875,

-0.08197021484375,

0.0245819091796875,

-0.0352783203125,

-0.064453125,

0.032989501953125,

0.01322174072265625,

-0.04205322265625,

0.0350341796875,

-0.04510498046875,

0.073486328125,

-0.00811004638671875,

-0.05792236328125,

0.019744873046875,

-0.0538330078125,

0.01355743408203125,

0.007556915283203125,

0.0465087890625,

-0.01556396484375,

-0.0196685791015625,

0.061492919921875,

-0.049041748046875,

0.053375244140625,

-0.016754150390625,

0.0207672119140625,

0.04241943359375,

0.00724029541015625,

0.048370361328125,

0.0046539306640625,

-0.0026187896728515625,

-0.01248931884765625,

-0.01241302490234375,

-0.047088623046875,

-0.029083251953125,

0.05908203125,

-0.07965087890625,

-0.041412353515625,

-0.060333251953125,

-0.0199127197265625,

0.01186370849609375,

0.02777099609375,

0.033172607421875,

0.01268768310546875,

-0.004413604736328125,

0.005931854248046875,

0.0555419921875,

-0.01287078857421875,

0.051727294921875,

0.0210723876953125,

-0.0282745361328125,

-0.04058837890625,

0.0748291015625,

0.0238494873046875,

0.0239410400390625,

0.0186920166015625,

0.050445556640625,

-0.019500732421875,

-0.052032470703125,

-0.0196380615234375,

0.007175445556640625,

-0.0287628173828125,

-0.018096923828125,

-0.06304931640625,

-0.007633209228515625,

-0.039825439453125,

-0.034393310546875,

-0.00963592529296875,

-0.0367431640625,

-0.030120849609375,

-0.0224151611328125,

0.043914794921875,

0.02838134765625,

-0.0263671875,

0.00844573974609375,

-0.036285400390625,

0.028717041015625,

0.03265380859375,

0.01654052734375,

0.00493621826171875,

-0.035247802734375,

0.01055145263671875,

0.0175018310546875,

-0.0440673828125,

-0.0828857421875,

0.049102783203125,

-0.001312255859375,

0.0345458984375,

0.0198516845703125,

-0.005626678466796875,

0.08416748046875,

-0.020782470703125,

0.07049560546875,

0.03179931640625,

-0.058929443359375,

0.042938232421875,

-0.0465087890625,

0.0300445556640625,

0.032012939453125,

0.038177490234375,

-0.024810791015625,

-0.0248870849609375,

-0.0665283203125,

-0.06866455078125,

0.0426025390625,

0.041351318359375,

-0.0198974609375,

0.016265869140625,

-0.0018930435180664062,

-0.01343536376953125,

0.007389068603515625,

-0.06695556640625,

-0.047882080078125,

-0.019561767578125,

0.002056121826171875,

-0.005985260009765625,

0.01296234130859375,

-0.0001609325408935547,

-0.042205810546875,

0.062255859375,

0.0160064697265625,

0.04644775390625,

0.02752685546875,

0.01163482666015625,

-0.01383209228515625,

0.0131683349609375,

0.040924072265625,

0.0498046875,

-0.0301055908203125,

0.0007252693176269531,

0.0101470947265625,

-0.052276611328125,

0.02825927734375,

-0.01184844970703125,

-0.04022216796875,

-0.005207061767578125,

0.0238494873046875,

0.035400390625,

0.0162200927734375,

-0.00977325439453125,

0.03314208984375,

0.0029449462890625,

-0.029632568359375,

-0.039886474609375,

0.0265350341796875,

0.0294036865234375,

0.0236053466796875,

0.016845703125,

0.031341552734375,

0.006046295166015625,

-0.0419921875,

0.019989013671875,

0.02392578125,

-0.03955078125,

-0.005573272705078125,

0.08160400390625,

0.004558563232421875,

0.0008001327514648438,

0.01172637939453125,

-0.0096435546875,

-0.041656494140625,

0.0767822265625,

0.05499267578125,

0.037322998046875,

-0.01221466064453125,

0.0218048095703125,

0.05242919921875,

0.0012388229370117188,

-0.0117340087890625,

0.0413818359375,

0.017547607421875,

-0.0399169921875,

0.002994537353515625,

-0.060791015625,

-0.01467132568359375,

0.005054473876953125,

-0.0247650146484375,

0.0352783203125,

-0.042572021484375,

-0.0232086181640625,

0.005218505859375,

0.0132598876953125,

-0.038177490234375,

0.042449951171875,

-0.00701904296875,

0.07232666015625,

-0.0606689453125,

0.051483154296875,

0.044281005859375,

-0.052581787109375,

-0.0789794921875,

-0.0081939697265625,

0.01297760009765625,

-0.055572509765625,

0.036773681640625,

-0.0091705322265625,

0.005809783935546875,

0.0150604248046875,

-0.043914794921875,

-0.083251953125,

0.10772705078125,

0.0072021484375,

-0.02392578125,

0.004730224609375,

-0.0007143020629882812,

0.049224853515625,

-0.00818634033203125,

0.0379638671875,

0.028564453125,

0.043426513671875,

0.032958984375,

-0.04962158203125,

0.00997161865234375,

-0.0411376953125,

0.0118560791015625,

0.01003265380859375,

-0.07635498046875,

0.07208251953125,

-0.02532958984375,

-0.0156402587890625,

-0.0029754638671875,

0.053924560546875,

0.036865234375,

0.01434326171875,

0.03436279296875,

0.07232666015625,

0.027557373046875,

-0.030517578125,

0.0709228515625,

-0.0279541015625,

0.0477294921875,

0.0506591796875,

0.01055908203125,

0.043609619140625,

0.022430419921875,

-0.0457763671875,

0.03192138671875,

0.05316162109375,

-0.0017642974853515625,

0.052001953125,

0.02142333984375,

-0.020751953125,

0.0010395050048828125,

0.01197052001953125,

-0.048583984375,

0.01543426513671875,

0.010101318359375,

-0.0210418701171875,

0.0075225830078125,

0.0018453598022460938,

0.02288818359375,

0.008270263671875,

-0.021514892578125,

0.05450439453125,

-0.01026153564453125,

-0.034942626953125,

0.040191650390625,

-0.0087890625,

0.04986572265625,

-0.040283203125,

0.0070037841796875,

-0.0184478759765625,

0.0302886962890625,

-0.036865234375,

-0.07647705078125,

0.006145477294921875,

-0.009185791015625,

-0.005901336669921875,

-0.016387939453125,

0.0307159423828125,

-0.0096435546875,

-0.06024169921875,

0.0229644775390625,

0.01103973388671875,

0.0038013458251953125,

0.032257080078125,

-0.0731201171875,

0.0164794921875,

0.018829345703125,

-0.033538818359375,

0.016143798828125,

0.03021240234375,

0.0225067138671875,

0.0621337890625,

0.058837890625,

0.0198822021484375,

0.01959228515625,

-0.035491943359375,

0.07354736328125,

-0.059906005859375,

-0.046173095703125,

-0.0572509765625,

0.060821533203125,

-0.0195159912109375,

-0.02587890625,

0.0806884765625,

0.05242919921875,

0.05047607421875,

-0.01114654541015625,

0.066650390625,

-0.0426025390625,

0.034698486328125,

-0.01004791259765625,

0.0501708984375,

-0.049072265625,

-0.009796142578125,

-0.0501708984375,

-0.0657958984375,

-0.01580810546875,

0.056396484375,

-0.006946563720703125,

0.0265960693359375,

0.036285400390625,

0.0582275390625,

-0.0089874267578125,

-0.003971099853515625,

0.0050811767578125,

0.031158447265625,

0.0264892578125,

0.04962158203125,

0.0244140625,

-0.04522705078125,

0.030975341796875,

-0.0570068359375,

-0.02349853515625,

-0.0160980224609375,

-0.05242919921875,

-0.032470703125,

-0.03814697265625,

-0.036285400390625,

-0.049407958984375,

0.008514404296875,

0.06268310546875,

0.041839599609375,

-0.053436279296875,

-0.02923583984375,

-0.0208892822265625,

0.01061248779296875,

-0.0343017578125,

-0.01947021484375,

0.0297393798828125,

0.01244354248046875,

-0.05694580078125,

-0.004634857177734375,

0.0144195556640625,

0.04205322265625,

0.004550933837890625,

-0.01537322998046875,

-0.03082275390625,

0.0069732666015625,

0.02069091796875,