diff --git a/.gitattributes b/.gitattributes

index 1ef325f1b111266a6b26e0196871bd78baa8c2f3..1cf1df9110199449371df3536cb7ae773a75661a 100644

--- a/.gitattributes

+++ b/.gitattributes

@@ -1,4 +1,6 @@

*.7z filter=lfs diff=lfs merge=lfs -text

+*.ipynb filter=lfs diff=lfs merge=lfs -text

+*.pdf filter=lfs diff=lfs merge=lfs -text

*.arrow filter=lfs diff=lfs merge=lfs -text

*.bin filter=lfs diff=lfs merge=lfs -text

*.bz2 filter=lfs diff=lfs merge=lfs -text
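The two new rules send Jupyter notebooks and PDFs through Git LFS. That is why `docs/examples/test-doc.pdf` appears later in this diff as a small pointer file. To preview which paths simple `*.ext` rules like these catch, a rough sketch that runs Python's `fnmatchcase` on the basename is enough. It approximates gitattributes matching only for patterns without a slash. `git check-attr filter -- <path>` remains the authoritative check. The paths in the loop are illustrative, and `notebooks/demo.ipynb` is hypothetical:

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath
from typing import Iterable

# The LFS patterns from the .gitattributes hunk above (only the lines shown there).
LFS_PATTERNS = ["*.7z", "*.ipynb", "*.pdf", "*.arrow", "*.bin", "*.bz2"]


def tracked_by_lfs(path: str, patterns: Iterable[str] = LFS_PATTERNS) -> bool:
    """Approximate gitattributes matching for slash-free patterns.

    A pattern without a slash is compared against the file's basename only,
    so it matches at any directory level. Patterns containing a slash and
    macro attributes are not handled by this sketch.
    """
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in patterns)


for p in ["docs/examples/test-doc.pdf", "notebooks/demo.ipynb", "README.md"]:
    print(f"{p}: {tracked_by_lfs(p)}")
```

`fnmatchcase` is used instead of `fnmatch` so that the result does not depend on the operating system's case rules.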

diff --git a/.gitignore b/.gitignore

new file mode 100644

index 0000000000000000000000000000000000000000..bd8cd18eb507aaaea59caa98a03af1bb0584a482

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,143 @@

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+.DS_Store

+data/

+models/

+output-*/

+outputs-*/

+outputs/

+*.jpg

+*.jpeg

+*.png

+docs/feedbacks/

+*.tar

+*.pth

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+.idea/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+pip-wheel-metadata/

+share/python-wheels/

+*.egg-info/

+.installed.cfg

+*.egg

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# IPython

+profile_default/

+ipython_config.py

+

+# pyenv

+.python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

+

diff --git a/.readthedocs.yaml b/.readthedocs.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..a11adbafa61de469117ae782cc5168bde7b89667

--- /dev/null

+++ b/.readthedocs.yaml

@@ -0,0 +1,19 @@

+# Read the Docs configuration file for MkDocs projects

+# See https://docs.readthedocs.io/en/stable/config-file/v2.html for details

+

+# Required

+version: 2

+

+# Set the version of Python and other tools you might need

+build:

+ os: ubuntu-22.04

+ tools:

+ python: "3.9"

+

+mkdocs:

+ configuration: mkdocs.yml

+

+# Optionally declare the Python requirements required to build your docs

+python:

+ install:

+ - requirements: docs/requirements.txt

diff --git a/LICENSE b/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..334b993f68bd352ca77efe9bf7e65e14cce19711

--- /dev/null

+++ b/LICENSE

@@ -0,0 +1,21 @@

+MIT License

+

+Copyright (c) 2022 BreezeDeus

+

+Permission is hereby granted, free of charge, to any person obtaining a copy

+of this software and associated documentation files (the "Software"), to deal

+in the Software without restriction, including without limitation the rights

+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

+copies of the Software, and to permit persons to whom the Software is

+furnished to do so, subject to the following conditions:

+

+The above copyright notice and this permission notice shall be included in all

+copies or substantial portions of the Software.

+

+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

+SOFTWARE.

diff --git a/Makefile b/Makefile

new file mode 100644

index 0000000000000000000000000000000000000000..3091378b9f6f51b4bf61fbdc7152571a3e720e7a

--- /dev/null

+++ b/Makefile

@@ -0,0 +1,44 @@

+predict:

+ p2t predict -l en,ch_sim -a mfd -t yolov7_tiny -i docs/examples/mixed.jpg --save-analysis-res tmp-output.jpg

+# p2t predict -l en,ch_sim --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' \

+# --use-analyzer -a mfd -t yolov7 --resized-shape 768 \

+# --analyzer-model-fp ~/.cnstd/1.2/analysis/mfd-yolov7-epoch224-20230613.pt \

+# --latex-ocr-model-fp ~/.pix2text/formula/p2t-mfr-20230702.pth \

+# -i docs/examples/mixed.jpg --save-analysis-res tmp-output.jpg

+# p2t predict -l vi \

+# --use-analyzer -a mfd -t yolov7 --resized-shape 768 \

+# --analyzer-model-fp ~/.cnstd/1.2/analysis/mfd-yolov7-epoch224-20230613.pt \

+# --latex-ocr-model-fp ~/.pix2text/formula/p2t-mfr-20230702.pth \

+# -i docs/examples/vietnamese.jpg --save-analysis-res tmp-output.jpg

+# p2t predict -l en,ch_tra \

+# --use-analyzer -a mfd -t yolov7 --resized-shape 768 \

+# --analyzer-model-fp ~/.cnstd/1.2/analysis/mfd-yolov7-epoch224-20230613.pt \

+# --latex-ocr-model-fp ~/.pix2text/formula/p2t-mfr-20230702.pth --rec-kwargs '{"det_bbox_max_expand_ratio": 0}'\

+# -i docs/examples/ch_tra7.jpg --save-analysis-res tmp-output.jpg

+

+evaluate-mfr:

+ p2t evaluate -l en,ch_sim --mfd-config '{"model_name": "mfd"}' \

+ --formula-ocr-config '{"model_name":"mfr-1.5","model_backend":"onnx"}' \

+ --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' \

+ --resized-shape 768 --auto-line-break --file-type formula \

+ --max-samples 50 --prefix-img-dir data \

+ -i data/exported_call_events_with_images.json -o data/exported_cer_mfr1.0.json \

+ --output-excel data/exported_cer_mfr1.0.xls --output-html data/exported_cer_mfr1.0.html

+

+

+package:

+ rm -rf build

+ python setup.py sdist bdist_wheel

+

+VERSION := $(shell sed -n "s/^__version__ = '\(.*\)'/\1/p" pix2text/__version__.py)

+upload:

+ python -m twine upload dist/pix2text-$(VERSION)* --verbose

+

+# Start the OCR HTTP service

+serve:

+ p2t serve -l en,ch_sim -a mfd -t yolov7 --analyzer-model-fp ~/.cnstd/1.2/analysis/mfd-yolov7-epoch224-20230613.pt --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}'

+

+docker-build:

+ docker build -t breezedeus/pix2text:v$(VERSION) .

+

+.PHONY: predict evaluate-mfr package upload serve docker-build
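The `VERSION` variable uses `sed` to pull the version out of `pix2text/__version__.py`. This only works if that file contains a line of the exact form `__version__ = 'X.Y.Z'`, with single quotes. The Python sketch below applies the same regular expression and can be used to check what `make upload` and `make docker-build` will pick up. The sample file contents are hypothetical:

```python
import re
from typing import Optional

# Mirrors the Makefile's sed expression: s/^__version__ = '\(.*\)'/\1/p
VERSION_RE = re.compile(r"^__version__ = '(.*)'", re.MULTILINE)


def read_version(source: str) -> Optional[str]:
    """Return the single-quoted version string, or None when no line matches."""
    match = VERSION_RE.search(source)
    return match.group(1) if match else None


sample = "# hypothetical contents of pix2text/__version__.py\n__version__ = '1.1.4'\n"
print(read_version(sample))  # 1.1.4
```

If the file switched to double quotes, neither the `sed` expression nor this sketch would match. `VERSION` would then silently be empty, and `upload` would glob `dist/pix2text-*`.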

diff --git a/README.md b/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..20086e72ac0dc9641cc87f6063891d6c4d299b67

--- /dev/null

+++ b/README.md

@@ -0,0 +1,284 @@

+

+

+

+

+[](https://discord.gg/GgD87WM8Tf)

+[](https://pepy.tech/project/pix2text)

+[](https://visitorbadge.io/status?path=https%3A%2F%2Fgithub.com%2Fbreezedeus%2FPix2Text)

+[](./LICENSE)

+[](https://badge.fury.io/py/pix2text)

+[](https://github.com/breezedeus/pix2text)

+[](https://github.com/breezedeus/pix2text)

+

+

+[](https://twitter.com/breezedeus)

+

+[📖 Doc](https://pix2text.readthedocs.io) |

+[👩🏻💻 Online Service](https://p2t.breezedeus.com) |

+[👨🏻💻 Demo](https://huggingface.co/spaces/breezedeus/Pix2Text-Demo) |

+[💬 Contact](https://www.breezedeus.com/article/join-group)

+

+

+

+[中文](./README_cn.md) | English

+

+

+

+

+

+# Pix2Text

+

+## Update 2025.07.25: **V1.1.4** Released

+

+Major Changes:

+

+- Upgraded the Mathematical Formula Detection (MFD) and Mathematical Formula Recognition (MFR) models to version 1.5. All default configurations, documentation, and examples now use `mfd-1.5` and `mfr-1.5` as the standard models.

+

+## Update 2025.04.15: **V1.1.3** Released

+

+Major Changes:

+

+- Added support for the `VlmTableOCR` and `VlmTextFormulaOCR` models, which are built on a VLM interface (see the [LiteLLM documentation](https://docs.litellm.ai/docs/)) and allow closed-source VLMs to be used. Install with: `pip install pix2text[vlm]`.

+ - Usage examples can be found in [tests/test_vlm.py](tests/test_vlm.py) and [tests/test_pix2text.py](tests/test_pix2text.py).

+

+## Update 2024.11.17: **V1.1.2** Released

+

+Major Changes:

+

+* A new layout analysis model [DocLayout-YOLO](https://github.com/opendatalab/DocLayout-YOLO) has been integrated, improving the accuracy of layout analysis.

+

+## Update 2024.06.18: **V1.1.1** Released

+

+Major Changes:

+

+* Added support for the new Mathematical Formula Detection (MFD) models: [breezedeus/pix2text-mfd](https://huggingface.co/breezedeus/pix2text-mfd) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-mfd)), which significantly improve formula detection accuracy.

+

+See details: [Pix2Text V1.1.1 Released, Bringing Better Mathematical Formula Detection Models | Breezedeus.com](https://www.breezedeus.com/article/p2t-mfd-v1.1.1).

+

+## Update 2024.04.28: **V1.1** Released

+

+Major Changes:

+

+* Added layout analysis and table recognition models, supporting the conversion of images with complex layouts into Markdown format. See examples: [Pix2Text Online Documentation / Examples](https://pix2text.readthedocs.io/zh-cn/stable/examples_en/).

+* Added support for converting entire PDF files to Markdown format. See examples: [Pix2Text Online Documentation / Examples](https://pix2text.readthedocs.io/zh-cn/stable/examples_en/).

+* Added richer interfaces; the parameters of some existing interfaces have also changed.

+* Launched the [Pix2Text Online Documentation](https://pix2text.readthedocs.io).

+

+## Update 2024.02.26: **V1.0** Released

+

+Major Changes:

+

+* The Mathematical Formula Recognition (MFR) model employs a new architecture and has been trained on a new dataset, achieving state-of-the-art (SOTA) accuracy. For detailed information, please see: [Pix2Text V1.0 New Release: The Best Open-Source Formula Recognition Model | Breezedeus.com](https://www.breezedeus.com/article/p2t-v1.0).

+

+See more at: [RELEASE.md](docs/RELEASE.md).

+

+

+

+

+

+

+Supported languages and their codes:

+

+| Language | Code Name |

+| ------------------- | ----------- |

+| Abaza | abq |

+| Adyghe | ady |

+| Afrikaans | af |

+| Angika | ang |

+| Arabic | ar |

+| Assamese | as |

+| Avar | ava |

+| Azerbaijani | az |

+| Belarusian | be |

+| Bulgarian | bg |

+| Bihari | bh |

+| Bhojpuri | bho |

+| Bengali | bn |

+| Bosnian | bs |

+| Simplified Chinese | ch_sim |

+| Traditional Chinese | ch_tra |

+| Chechen | che |

+| Czech | cs |

+| Welsh | cy |

+| Danish | da |

+| Dargwa | dar |

+| German | de |

+| English | en |

+| Spanish | es |

+| Estonian | et |

+| Persian (Farsi) | fa |

+| French | fr |

+| Irish | ga |

+| Goan Konkani | gom |

+| Hindi | hi |

+| Croatian | hr |

+| Hungarian | hu |

+| Indonesian | id |

+| Ingush | inh |

+| Icelandic | is |

+| Italian | it |

+| Japanese | ja |

+| Kabardian | kbd |

+| Kannada | kn |

+| Korean | ko |

+| Kurdish | ku |

+| Latin | la |

+| Lak | lbe |

+| Lezghian | lez |

+| Lithuanian | lt |

+| Latvian | lv |

+| Magahi | mah |

+| Maithili | mai |

+| Maori | mi |

+| Mongolian | mn |

+| Marathi | mr |

+| Malay | ms |

+| Maltese | mt |

+| Nepali | ne |

+| Newari | new |

+| Dutch | nl |

+| Norwegian | no |

+| Occitan | oc |

+| Pali | pi |

+| Polish | pl |

+| Portuguese | pt |

+| Romanian | ro |

+| Russian | ru |

+| Serbian (Cyrillic) | rs_cyrillic |

+| Serbian (Latin) | rs_latin |

+| Nagpuri | sck |

+| Slovak | sk |

+| Slovenian | sl |

+| Albanian | sq |

+| Swedish | sv |

+| Swahili | sw |

+| Tamil | ta |

+| Tabassaran | tab |

+| Telugu | te |

+| Thai | th |

+| Tajik | tjk |

+| Tagalog | tl |

+| Turkish | tr |

+| Uyghur | ug |

+| Ukrainian | uk |

+| Urdu | ur |

+| Uzbek | uz |

+| Vietnamese | vi |

+

+

+> Ref: [Supported Languages (EasyOCR)](https://www.jaided.ai/easyocr/).

+

+

+

+

+

+## Online Service

+

+Everyone can use the **[P2T Online Service](https://p2t.breezedeus.com)** for free, with a daily limit of 10,000 characters per account, which should be sufficient for normal use. *Please refrain from bulk API calls, as machine resources are limited, and this could prevent others from accessing the service.*

+

+Due to hardware constraints, the Online Service currently supports only **Simplified Chinese** and **English**. To try the models on other languages, use the **Online Demo** below.

+

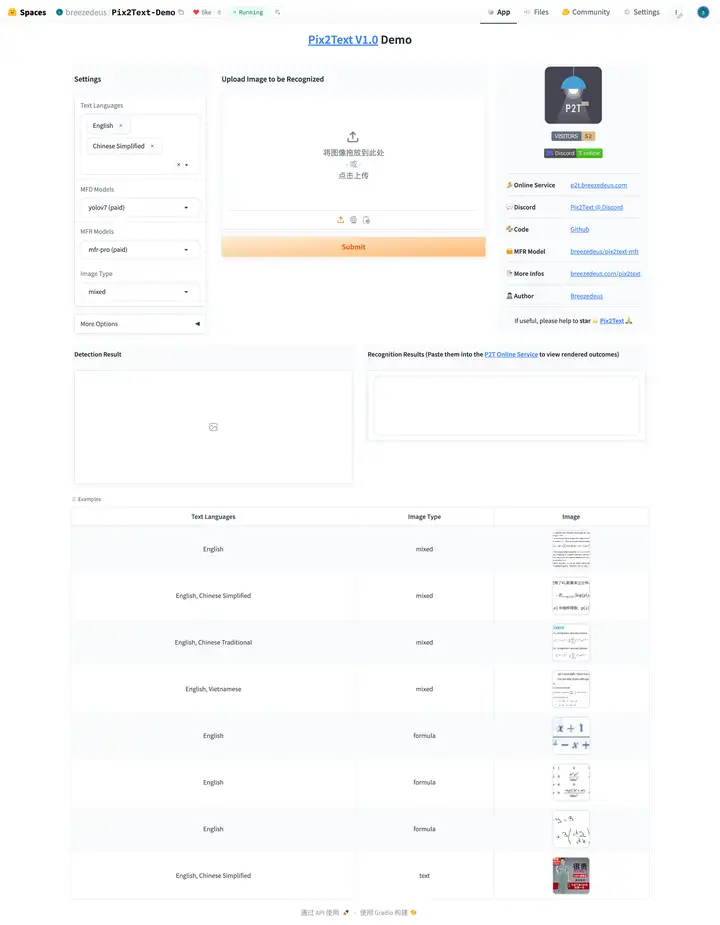

+## Online Demo 🤗

+

+You can also try the **[Online Demo](https://huggingface.co/spaces/breezedeus/Pix2Text-Demo)** to see how **P2T** performs on various languages. However, the Online Demo runs on lower-spec hardware and may be slower. For Simplified Chinese or English images, the **[P2T Online Service](https://p2t.breezedeus.com)** is recommended.

+

+## Examples

+

+See: [Pix2Text Online Documentation/Examples](https://pix2text.readthedocs.io/zh-cn/stable/examples_en/).

+

+## Usage

+

+See: [Pix2Text Online Documentation/Usage](https://pix2text.readthedocs.io/zh-cn/stable/usage/).

+

+## Models

+

+See: [Pix2Text Online Documentation/Models](https://pix2text.readthedocs.io/zh-cn/stable/models/).

+

+## Install

+

+If all goes well, a single command is enough:

+

+```bash

+pip install pix2text

+```

+

+If you need to recognize languages other than **English** and **Simplified Chinese**, please use the following command to install additional packages:

+

+```bash

+pip install pix2text[multilingual]

+```
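The language codes from the table above are passed to the command-line tool as a comma-separated list, for example `-l en,ch_sim` or `-l en,ch_tra` in the Makefile. The helper below is a hypothetical sketch and not part of pix2text. It applies the rule just stated to decide whether `pix2text[multilingual]` is required:

```python
from typing import List

# Per the README: the base install covers English and Simplified Chinese only.
BASE_LANGUAGES = {"en", "ch_sim"}


def parse_languages(arg: str) -> List[str]:
    """Split a comma-separated `-l` value such as 'en,ch_sim' into codes."""
    return [code.strip() for code in arg.split(",") if code.strip()]


def needs_multilingual(arg: str) -> bool:
    """True if any requested code is outside the base install."""
    return any(code not in BASE_LANGUAGES for code in parse_languages(arg))


print(needs_multilingual("en,ch_sim"))  # False
print(needs_multilingual("en,ch_tra"))  # True
print(needs_multilingual("vi"))         # True
```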

+

+If installation is slow, you can specify a package mirror, such as the Aliyun mirror:

+

+```bash

+pip install pix2text -i https://mirrors.aliyun.com/pypi/simple

+```

+

+For more information, please refer to: [Pix2Text Online Documentation/Install](https://pix2text.readthedocs.io/zh-cn/stable/install/).

+

+## Command Line Tool

+

+See: [Pix2Text Online Documentation/Command Tool](https://pix2text.readthedocs.io/zh-cn/stable/command/).

+

+## HTTP Service

+

+See: [Pix2Text Online Documentation/Command Tool/Start Service](https://pix2text.readthedocs.io/zh-cn/stable/command/).

+

+

+## macOS Desktop Application

+

+Please refer to [Pix2Text-Mac](https://github.com/breezedeus/Pix2Text-Mac) to install the Pix2Text desktop app for macOS.

+

+

+

+

+

+

+


+

+[📖 Online Docs](https://pix2text.readthedocs.io) |

+[👩🏻💻 Web Version](https://p2t.breezedeus.com) |

+[👨🏻💻 Online Demo](https://huggingface.co/spaces/breezedeus/Pix2Text-Demo) |

+[💬 Discussion Group](https://www.breezedeus.com/article/join-group)

+

+

+

+[English](./README.md) | 中文

+

+

+

+

+# Pix2Text (P2T)

+

+## Update 2025.07.25: **V1.1.4** Released

+

+Major Changes:

+

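+- Upgraded the Mathematical Formula Detection (MFD) and Mathematical Formula Recognition (MFR) models to version 1.5. All default configurations, documentation, and examples now use `mfd-1.5` and `mfr-1.5` as the standard models.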

+

+## Update 2025.04.15: **V1.1.3** Released

+

+Major Changes:

+

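+- Added support for the `VlmTableOCR` and `VlmTextFormulaOCR` models, which are built on a VLM interface (see the [LiteLLM documentation](https://docs.litellm.ai/docs/)) and allow closed-source VLMs to be used. Install with: `pip install pix2text[vlm]`.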

+ - For usage, see [tests/test_vlm.py](tests/test_vlm.py) and [tests/test_pix2text.py](tests/test_pix2text.py).

+

+## Update 2024.11.17: **V1.1.2** Released

+

+Major Changes:

+

+* Added [DocLayout-YOLO](https://github.com/opendatalab/DocLayout-YOLO) as a layout analysis model, improving the accuracy of layout analysis.

+

+## Update 2024.06.18: **V1.1.1** Released

+

+Major Changes:

+

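+* Added support for the new Mathematical Formula Detection (MFD) models: [breezedeus/pix2text-mfd](https://huggingface.co/breezedeus/pix2text-mfd) ([mirror in China](https://hf-mirror.com/breezedeus/pix2text-mfd)), which substantially improve formula detection accuracy.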

+

+For details, see: [Pix2Text V1.1.1 Released, Bringing Better Mathematical Formula Detection Models | Breezedeus.com](https://www.breezedeus.com/article/p2t-mfd-v1.1.1).

+

+## Update 2024.04.28: **V1.1** Released

+

+Major Changes:

+

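+* Added layout analysis and table recognition models, supporting the conversion of images with complex layouts into Markdown. See examples: [Pix2Text Online Docs/Examples](https://pix2text.readthedocs.io/zh-cn/stable/examples/).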

+* Added support for converting entire PDF files into Markdown. See examples: [Pix2Text Online Docs/Examples](https://pix2text.readthedocs.io/zh-cn/stable/examples/).

+* Added richer interfaces; the parameters of some existing interfaces have also changed.

+* Launched the [Pix2Text Online Docs](https://pix2text.readthedocs.io).

+

+## Update 2024.02.26: **V1.0** Released

+

+Major Changes:

+

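+* The Mathematical Formula Recognition (MFR) model uses a new architecture and was trained on a new dataset, achieving state-of-the-art (SOTA) accuracy. For details, see: [Pix2Text V1.0 New Release: The Best Open-Source Formula Recognition Model | Breezedeus.com](https://www.breezedeus.com/article/p2t-v1.0).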

+

+See more at: [RELEASE.md](docs/RELEASE.md).

+

+

+

+

+

+

+Supported languages and their codes:

+

+

+| Language | Code Name |

+| ------------------- | ----------- |

+| Abaza | abq |

+| Adyghe | ady |

+| Afrikaans | af |

+| Angika | ang |

+| Arabic | ar |

+| Assamese | as |

+| Avar | ava |

+| Azerbaijani | az |

+| Belarusian | be |

+| Bulgarian | bg |

+| Bihari | bh |

+| Bhojpuri | bho |

+| Bengali | bn |

+| Bosnian | bs |

+| Simplified Chinese | ch_sim |

+| Traditional Chinese | ch_tra |

+| Chechen | che |

+| Czech | cs |

+| Welsh | cy |

+| Danish | da |

+| Dargwa | dar |

+| German | de |

+| English | en |

+| Spanish | es |

+| Estonian | et |

+| Persian (Farsi) | fa |

+| French | fr |

+| Irish | ga |

+| Goan Konkani | gom |

+| Hindi | hi |

+| Croatian | hr |

+| Hungarian | hu |

+| Indonesian | id |

+| Ingush | inh |

+| Icelandic | is |

+| Italian | it |

+| Japanese | ja |

+| Kabardian | kbd |

+| Kannada | kn |

+| Korean | ko |

+| Kurdish | ku |

+| Latin | la |

+| Lak | lbe |

+| Lezghian | lez |

+| Lithuanian | lt |

+| Latvian | lv |

+| Magahi | mah |

+| Maithili | mai |

+| Maori | mi |

+| Mongolian | mn |

+| Marathi | mr |

+| Malay | ms |

+| Maltese | mt |

+| Nepali | ne |

+| Newari | new |

+| Dutch | nl |

+| Norwegian | no |

+| Occitan | oc |

+| Pali | pi |

+| Polish | pl |

+| Portuguese | pt |

+| Romanian | ro |

+| Russian | ru |

+| Serbian (Cyrillic) | rs_cyrillic |

+| Serbian (Latin) | rs_latin |

+| Nagpuri | sck |

+| Slovak | sk |

+| Slovenian | sl |

+| Albanian | sq |

+| Swedish | sv |

+| Swahili | sw |

+| Tamil | ta |

+| Tabassaran | tab |

+| Telugu | te |

+| Thai | th |

+| Tajik | tjk |

+| Tagalog | tl |

+| Turkish | tr |

+| Uyghur | ug |

+| Ukrainian | uk |

+| Urdu | ur |

+| Uzbek | uz |

+| Vietnamese | vi |

+

+

+> Ref: [Supported Languages (EasyOCR)](https://www.jaided.ai/easyocr/).

+

+

+

+

+

+## P2T Web Version

+

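+Everyone can use the **[P2T Web Version](https://p2t.breezedeus.com)** for free, with up to 10,000 characters recognized per person per day, which should be enough for normal use. *Please do not call the API in bulk: machine resources are limited, and bulk calls prevent others from using the service.*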

+

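+Due to limited machine resources, the web version currently supports only **Simplified Chinese and English**. To try other languages, use the **Online Demo** below.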

+

+

+

+## Online Demo 🤗

+

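+You can also use the **[Online Demo](https://huggingface.co/spaces/breezedeus/Pix2Text-Demo)** (if Hugging Face is not reachable from your network, use the [mirror in China](https://hf.qhduan.com/spaces/breezedeus/Pix2Text-Demo)) to try **P2T** on different languages. However, the Online Demo runs on lower-spec hardware and is slower. For Simplified Chinese or English images, the **[P2T Web Version](https://p2t.breezedeus.com)** is recommended.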

+

+## Examples

+

+See: [Pix2Text Online Docs/Examples](https://pix2text.readthedocs.io/zh-cn/stable/examples/).

+

+## Usage

+

+See: [Pix2Text Online Docs/Usage](https://pix2text.readthedocs.io/zh-cn/stable/usage/).

+

+## Model Download

+

+See: [Pix2Text Online Docs/Models](https://pix2text.readthedocs.io/zh-cn/stable/models/).

+

+

+

+## Install

+

+If all goes well, a single command is enough:

+

+```bash

+pip install pix2text

+```

+

+To recognize languages other than **English** and **Simplified Chinese**, install the additional packages with:

+

+```bash

+pip install pix2text[multilingual]

+```

+

+If installation is slow, you can specify a package mirror in China, such as the Aliyun mirror:

+

+```bash

+pip install pix2text -i https://mirrors.aliyun.com/pypi/simple

+```

+

+

+

+


+

+

+

+## 2. Alipay Reward

+

+Give the author a reward through **Alipay**.

+

+


+

+

+

+## 3. Buy me a Coffee

+If you are not in mainland China, you can also support the author through:

+

+

+

+


+

+

+

+## II. WeChat Group

+

+Scan the QR code to add the assistant as a friend with the note `p2t`; the assistant periodically invites everyone to join the group:

+

+


+

+

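+Normally the assistant sends invitations periodically, but the timing is not guaranteed. For a faster response, you can join the Knowledge Planet group mentioned above, [**P2T/CnOCR/CnSTD Private Group**](https://t.zsxq.com/FEYZRJQ).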

+

+

+## III. Discord

+

+Welcome to join the [**Pix2Text Discord Server**](https://discord.gg/GgD87WM8Tf).

+

+

+## IV. Email

+

+**Email**: breezedeus AT gmail.com. This inbox is checked infrequently, so please use it only if the other channels fail.

diff --git a/docs/demo.md b/docs/demo.md

new file mode 100644

index 0000000000000000000000000000000000000000..95b43e7f7981d0cc256eb9095efc1ed6819e48d0

--- /dev/null

+++ b/docs/demo.md

@@ -0,0 +1,20 @@

+## P2T Web Version

+

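+Everyone can use the **[P2T Web Version](https://p2t.breezedeus.com)** for free, with up to 10,000 characters recognized per person per day, which should be enough for normal use. If the site does not load, try accessing it through a VPN or proxy. *Please do not call the API in bulk: machine resources are limited, and bulk calls prevent others from using the service.*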

+

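+Due to limited machine resources, the web version currently supports only **Simplified Chinese and English**. To try other languages, use the **Online Demo** below.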

+

+

+

+

+

+

+## Online Demo 🤗

+

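+You can also use the **[Online Demo](https://huggingface.co/spaces/breezedeus/Pix2Text-Demo)** (if Hugging Face is not reachable from your network, use the [mirror in China](https://hf.qhduan.com/spaces/breezedeus/Pix2Text-Demo)) to try **P2T** on different languages. However, the Online Demo runs on lower-spec hardware and is slower. For Simplified Chinese or English images, the **[P2T Web Version](https://p2t.breezedeus.com)** is recommended.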

+

+

+

+

+

+For more information, see the [Pix2Text homepage](https://www.breezedeus.com/article/pix2text_cn).

\ No newline at end of file

diff --git a/docs/examples.md b/docs/examples.md

new file mode 100644

index 0000000000000000000000000000000000000000..1d37fb4f0a13c4dfa305a912fb1d55cdeac9feed

--- /dev/null

+++ b/docs/examples.md

@@ -0,0 +1,221 @@

+

+

+[English](examples_en.md) | 中文

+

+

+

+# Examples

+## Recognize PDF Files and Return Markdown

+

+For PDF files, use the `.recognize_pdf()` function to recognize the entire file or specified pages and export the result as Markdown. For example, for the following PDF file ([examples/test-doc.pdf](examples/test-doc.pdf)),

+call it as follows:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/test-doc.pdf'

+p2t = Pix2Text.from_config()

+doc = p2t.recognize_pdf(img_fp, page_numbers=[0, 1])

+doc.to_markdown('output-md') # The exported Markdown is saved in the output-md directory

+```

+

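+You can also do the same from the command line. For example, the following command uses the premium models (MFD + MFR + CnOCR, three paid models) for recognition: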

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --rec-kwargs '{"page_numbers": [0, 1]}' --resized-shape 768 --file-type pdf -i docs/examples/test-doc.pdf -o output-md --save-debug-res output-debug

+```

+

+The recognition result is in [output-md/output.md](output-md/output.md).

+

+

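+## Recognize Page Images with Complex Layouts

+

+For images with complex layouts, such as [examples/page2.png](examples/page2.png), use the `.recognize_page()` function.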

+

+

+Call it as follows:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/page2.png'

+p2t = Pix2Text.from_config()

+page = p2t.recognize_page(img_fp)

+page.to_markdown('output-page') # The exported Markdown is saved in the output-page directory

+```

+

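+You can also do the same from the command line. For example, the following command uses the premium models (MFD + MFR + CnOCR, three paid models) for recognition: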

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --file-type page -i docs/examples/page2.png -o output-page --save-debug-res output-debug-page

+```

+

+The recognition result is similar to [output-md/output.md](output-md/output.md).

+

+## Recognize Paragraph Images with Both Formulas and Text

+

+For paragraph images that contain both formulas and text, no layout analysis model is needed.

+Use the `.recognize_text_formula()` function to recognize the text and mathematical formulas in the image. For example, for the following image ([examples/en1.jpg](examples/en1.jpg)):

+

+


+

+

+Call it as follows:

+

+```python

+from pix2text import Pix2Text, merge_line_texts

+

+img_fp = './examples/en1.jpg'

+p2t = Pix2Text.from_config()

+outs = p2t.recognize_text_formula(img_fp, resized_shape=768, return_text=True)

+print(outs)

+```

+

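+With `return_text=True`, `outs` is the recognized text as a single string; with `return_text=False`, it is a list of `dict`s, in which the key `position` holds the box position, `type` the category, and `text` the recognition result. For details, see the [API documentation](https://pix2text.readthedocs.io/zh-cn/latest/pix2text/pix_to_text/).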

+

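+You can also do the same from the command line. For example, the following command uses the premium models (MFD + MFR + CnOCR, three paid models) for recognition: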

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --file-type text_formula -i docs/examples/en1.jpg --save-debug-res out-debug-en1.jpg

+```

+

+Or use the free open-source models for recognition:

+

+```bash

+p2t predict -l en,ch_sim --resized-shape 768 --file-type text_formula -i docs/examples/en1.jpg --save-debug-res out-debug-en1.jpg

+```

+

+## Recognize Pure Formula Images

+

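+For images that contain only mathematical formulas, the `.recognize_formula()` function converts the formulas into LaTeX expressions. For example, for the following image ([examples/math-formula-42.png](examples/math-formula-42.png)):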

+

+


+

+

+

+Call it as follows:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/math-formula-42.png'

+p2t = Pix2Text.from_config()

+outs = p2t.recognize_formula(img_fp)

+print(outs)

+```

+

+The result is a string containing the corresponding LaTeX expression. For details, see [Usage](usage.md).

+

+You can also do the same from the command line. For example, the following command uses the premium model (MFR, one paid model) for recognition:

+

+```bash

+p2t predict -l en,ch_sim --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --file-type formula -i docs/examples/math-formula-42.png

+```

+

+Or use the free open-source models for recognition:

+

+```bash

+p2t predict -l en,ch_sim --file-type formula -i docs/examples/math-formula-42.png

+```

+

+## Recognize Pure Text Images

+

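+For images that contain only text and no mathematical formulas, the `.recognize_text()` function recognizes the text in the image. In this case, Pix2Text acts as a general-purpose text OCR engine. For example, for the following image ([examples/general.jpg](examples/general.jpg)):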

+

+


+

+

+

+Call it as follows:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/general.jpg'

+p2t = Pix2Text.from_config()

+outs = p2t.recognize_text(img_fp)

+print(outs)

+```

+

+The result is a string containing the recognized text. For details, see the [API documentation](https://pix2text.readthedocs.io/zh-cn/latest/pix2text/pix_to_text/).

+

+You can also do the same from the command line. For example, the following command uses the premium model (CnOCR, one paid model) for recognition:

+

+```bash

+p2t predict -l en,ch_sim --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --file-type text --no-return-text -i docs/examples/general.jpg --save-debug-res out-debug-general.jpg

+```

+

+Or use the free open-source models for recognition:

+

+```bash

+p2t predict -l en,ch_sim --file-type text --no-return-text -i docs/examples/general.jpg --save-debug-res out-debug-general.jpg

+```

+

+

+## Recognition in Different Languages

+

+### English

+

+**Recognition result**:

+

+

+

+**Command**:

+

+```bash

+p2t predict -l en --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --file-type text_formula -i docs/examples/en1.jpg

+```

+

+### Simplified Chinese

+

+**Recognition result**:

+

+

+

+**Command**:

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --auto-line-break --file-type text_formula -i docs/examples/mixed.jpg --save-debug-res out-debug-mixed.jpg

+```

+

+### Traditional Chinese

+

+**Recognition result**:

+

+

+

+**Command**:

+

+```bash

+p2t predict -l en,ch_tra --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --resized-shape 768 --auto-line-break --file-type text_formula -i docs/examples/ch_tra.jpg --save-debug-res out-debug-tra.jpg

+```

+

+> Note ⚠️: Install the multilingual version of pix2text with the following command:

+> ```bash

+> pip install pix2text[multilingual]

+> ```

+

+

+### Vietnamese

+**Recognition result**:

+

+

+

+**Command**:

+

+```bash

+p2t predict -l en,vi --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --resized-shape 608 --no-auto-line-break --file-type text_formula -i docs/examples/vietnamese.jpg --save-debug-res out-debug-vi.jpg

+```

+

+> Note ⚠️: Install the multilingual version of pix2text with the following command:

+> ```bash

+> pip install pix2text[multilingual]

+> ```

diff --git a/docs/examples/test-doc.pdf b/docs/examples/test-doc.pdf

new file mode 100644

index 0000000000000000000000000000000000000000..9deeb4c32963c21df2d3b9349a4d1cf71ba8af87

--- /dev/null

+++ b/docs/examples/test-doc.pdf

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:746024d672224466f2fbcc46385afe71e186b3d6542ae4c7132f7fd9aac36ac7

+size 1631522
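Because the `.gitattributes` change routes `*.pdf` through Git LFS, the diff records only a pointer file for `test-doc.pdf`, not the PDF itself. The pointer consists of a few `key value` lines: `version`, `oid` (here `sha256:<hex digest>`), and `size` in bytes. The minimal sketch below parses those lines and checks a downloaded file against them. It assumes the `sha256` algorithm shown in this pointer:

```python
import hashlib
from pathlib import Path


def parse_lfs_pointer(text: str) -> dict:
    """Parse the `key value` lines of a Git LFS pointer file."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algorithm": algorithm,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: str, info: dict) -> bool:
    """Check a downloaded file against the pointer's size and SHA-256 digest."""
    data = Path(path).read_bytes()
    return len(data) == info["size"] and hashlib.sha256(data).hexdigest() == info["digest"]


pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:746024d672224466f2fbcc46385afe71e186b3d6542ae4c7132f7fd9aac36ac7
size 1631522
"""
info = parse_lfs_pointer(pointer)
print(info["algorithm"], info["size"])  # sha256 1631522
```

After `git lfs pull` has replaced the pointer with the real 1,631,522-byte PDF, `matches_pointer("docs/examples/test-doc.pdf", info)` should return `True`.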

diff --git a/docs/examples_en.md b/docs/examples_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..4ddd20a7330aac9d7f626347196e9a7e3bcf6826

--- /dev/null

+++ b/docs/examples_en.md

@@ -0,0 +1,219 @@

+

+

+[中文](examples.md) | English

+

+

+

+# Examples

+## Recognize PDF Files and Return Markdown Format

+

+For PDF files, you can use the `.recognize_pdf()` function to recognize the entire file or specific pages and output the results as a Markdown file. For example, for the following PDF file ([examples/test-doc.pdf](examples/test-doc.pdf)),

+you can call the function like this:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/test-doc.pdf'

+p2t = Pix2Text.from_config()

+doc = p2t.recognize_pdf(img_fp, page_numbers=[0, 1])

+doc.to_markdown('output-md') # The exported Markdown information is saved in the output-md directory

+```

+

+You can also achieve the same functionality using the command line. Below is a command that uses the premium models (MFD + MFR + CnOCR) for recognition:

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --rec-kwargs '{"page_numbers": [0, 1]}' --resized-shape 768 --file-type pdf -i docs/examples/test-doc.pdf -o output-md --save-debug-res output-debug

+```
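The `--mfd-config`, `--formula-ocr-config`, `--text-ocr-config`, and `--rec-kwargs` options each take a JSON object, so shell quoting gets long. The sketch below builds the same argument list from Python dicts with `json.dumps`. The flags and model names are copied from the command above, and `--save-debug-res` is omitted. `build_pdf_command` is a hypothetical helper, not part of pix2text. The `subprocess.run` call is left commented out because it requires pix2text and access to the premium models:

```python
import json
import shlex
from typing import Iterable, List


def build_pdf_command(pdf_path: str, out_dir: str, page_numbers: Iterable[int]) -> List[str]:
    """Assemble `p2t predict` arguments equivalent to the command above."""
    json_options = {
        "--mfd-config": {"model_name": "mfd-pro-1.5", "model_backend": "onnx"},
        "--formula-ocr-config": {"model_name": "mfr-pro-1.5", "model_backend": "onnx"},
        "--text-ocr-config": {"rec_model_name": "doc-densenet_lite_666-gru_large"},
        "--rec-kwargs": {"page_numbers": list(page_numbers)},
    }
    args = ["p2t", "predict", "-l", "en,ch_sim"]
    for flag, value in json_options.items():
        args += [flag, json.dumps(value)]  # each option receives one JSON string
    args += ["--resized-shape", "768", "--file-type", "pdf", "-i", pdf_path, "-o", out_dir]
    return args


cmd = build_pdf_command("docs/examples/test-doc.pdf", "output-md", [0, 1])
print(shlex.join(cmd))  # shell-quoted command line, ready to paste
# To actually run it (requires pix2text and the premium models):
# import subprocess; subprocess.run(cmd, check=True)
```

Passing the list directly to `subprocess.run` avoids shell quoting entirely. `shlex.join` is only needed to print a copy-pasteable command.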

+

+The recognition result can be found in [output-md/output.md](output-md/output.md).

+

+

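+## Recognize Page Images with Complex Layouts

+

+For images with complex layouts, such as [examples/page2.png](examples/page2.png), use the `.recognize_page()` function.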

+

+

+You can call the function like this:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/page2.png'

+p2t = Pix2Text.from_config()

+page = p2t.recognize_page(img_fp)

+page.to_markdown('output-page') # The exported Markdown information is saved in the output-page directory

+```

+

+You can also achieve the same functionality using the command line. Below is a command that uses the premium models (MFD + MFR + CnOCR) for recognition:

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --file-type page -i docs/examples/page2.png -o output-page --save-debug-res output-debug-page

+```

+

+The recognition result is similar to [output-md/output.md](output-md/output.md).

+

+

+## Recognize Paragraph Images with Both Formulas and Text

+

+For paragraph images containing both formulas and text, you don't need the layout analysis model. Use the `.recognize_text_formula()` function to recognize both the text and the mathematical formulas in the image. For example, for the following image ([examples/en1.jpg](examples/en1.jpg)):

+

+


+

+

+You can call the function like this:

+

+```python

+from pix2text import Pix2Text, merge_line_texts

+

+img_fp = './examples/en1.jpg'

+p2t = Pix2Text.from_config()

+outs = p2t.recognize_text_formula(img_fp, resized_shape=768, return_text=True)

+print(outs)

+```

+

+With `return_text=True`, as in the call above, `outs` is the recognized content merged into a single string. With `return_text=False`, `outs` is a list of dictionaries, one per detected box, where the key `position` holds the box position, `type` the category, and `text` the recognition result. For detailed explanations, see [API Documentation](#api-documentation).
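Below is a minimal sketch for turning `return_text=False` output into Markdown yourself. It rests on assumptions that are not confirmed here. First, that `type` takes the values `text`, `embedding` (inline formula), and `isolated` (display formula). Second, that formula `text` is bare LaTeX without math delimiters. `records_to_markdown` and `sample_records` are hypothetical and are not real Pix2Text output, so check the API documentation before relying on them.

```python
def records_to_markdown(records):
    """Hypothetical helper: join per-box records into Markdown.

    Assumes `type` is one of "text", "embedding", "isolated" and that
    formula `text` carries no $ delimiters. Other keys are ignored.
    """
    lines, current = [], []
    for rec in records:
        kind, text = rec["type"], rec["text"].strip()
        if kind == "isolated":
            # A display formula ends the current line and gets its own block.
            if current:
                lines.append(" ".join(current))
                current = []
            lines.append(f"$$\n{text}\n$$")
        elif kind == "embedding":
            current.append(f"${text}$")  # inline formula
        else:
            current.append(text)  # plain text
    if current:
        lines.append(" ".join(current))
    return "\n".join(lines)


# Hypothetical records shaped like the documented keys (not real model output).
sample_records = [
    {"type": "text", "text": "Let", "position": None},
    {"type": "embedding", "text": "x>0", "position": None},
    {"type": "text", "text": "then", "position": None},
    {"type": "isolated", "text": "x^2>0", "position": None},
]
print(records_to_markdown(sample_records))
```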

+

+You can also achieve the same functionality using the command line. Below is a command that uses the premium models (MFD + MFR + CnOCR) for recognition:

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --file-type text_formula -i docs/examples/en1.jpg --save-debug-res out-debug-en1.jpg

+```

+

+Or use the free open-source models for recognition:

+

+```bash

+p2t predict -l en,ch_sim --resized-shape 768 --file-type text_formula -i docs/examples/en1.jpg --save-debug-res out-debug-en1.jpg

+```

+

+## Recognize Pure Formula Images

+

+For images containing only mathematical formulas, you can use the `.recognize_formula()` function to recognize the formulas as LaTeX expressions. For example, for the following image ([examples/math-formula-42.png](examples/math-formula-42.png)):

+

+

+{: style="width:300px"}

+

+

+You can call the function like this:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/math-formula-42.png'

+p2t = Pix2Text.from_config()

+outs = p2t.recognize_formula(img_fp)

+print(outs)

+```

+

+The returned result is a string representing the corresponding LaTeX expression. For detailed explanations, see [Usage](usage.md).

+

+You can also achieve the same functionality using the command line. Below is a command that uses the premium model (MFR) for recognition:

+

+```bash

+p2t predict -l en,ch_sim --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --file-type formula -i docs/examples/math-formula-42.png

+```

+

+Or use the free open-source model for recognition:

+

+```bash

+p2t predict -l en,ch_sim --file-type formula -i docs/examples/math-formula-42.png

+```

+

+## Recognize Pure Text Images

+

+For images containing only text without mathematical formulas, you can use the `.recognize_text()` function to recognize the text in the image. In this case, Pix2Text acts as a general text OCR engine. For example, for the following image ([examples/general.jpg](examples/general.jpg)):

+

+

+{: style="width:400px"}

+

+

+You can call the function like this:

+

+```python

+from pix2text import Pix2Text

+

+img_fp = './examples/general.jpg'

+p2t = Pix2Text.from_config()

+outs = p2t.recognize_text(img_fp)

+print(outs)

+```

+

+The returned result is a string representing the corresponding text sequence. For detailed explanations, see [API Documentation](https://pix2text.readthedocs.io/zh-cn/latest/pix2text/pix_to_text/).

+

+You can also achieve the same functionality using the command line. Below is a command that uses the premium model (CnOCR) for recognition:

+

+```bash

+p2t predict -l en,ch_sim --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --file-type text --no-return-text -i docs/examples/general.jpg --save-debug-res out-debug-general.jpg

+```

+

+Or use the free open-source model for recognition:

+

+```bash

+p2t predict -l en,ch_sim --file-type text --no-return-text -i docs/examples/general.jpg --save-debug-res out-debug-general.jpg

+```

+

+## For Different Languages

+

+### English

+

+**Recognition Result**:

+

+

+

+**Recognition Command**:

+

+```bash

+p2t predict -l en --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --file-type text_formula -i docs/examples/en1.jpg

+```

+

+### Simplified Chinese

+

+**Recognition Result**:

+

+

+

+**Recognition Command**:

+

+```bash

+p2t predict -l en,ch_sim --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --text-ocr-config '{"rec_model_name": "doc-densenet_lite_666-gru_large"}' --resized-shape 768 --auto-line-break --file-type text_formula -i docs/examples/mixed.jpg --save-debug-res out-debug-mixed.jpg

+```

+

+### Traditional Chinese

+

+**Recognition Result**:

+

+

+

+**Recognition Command**:

+

+```bash

+p2t predict -l en,ch_tra --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --resized-shape 768 --auto-line-break --file-type text_formula -i docs/examples/ch_tra.jpg --save-debug-res out-debug-tra.jpg

+```

+

+> Note ⚠️: Please install the multilingual version of pix2text using the following command:

+> ```bash

+> pip install pix2text[multilingual]

+> ```

+

+### Vietnamese

+

+**Recognition Result**:

+

+

+

+**Recognition Command**:

+

+```bash

+p2t predict -l en,vi --mfd-config '{"model_name": "mfd-pro-1.5", "model_backend": "onnx"}' --formula-ocr-config '{"model_name":"mfr-pro-1.5","model_backend":"onnx"}' --resized-shape 608 --no-auto-line-break --file-type text_formula -i docs/examples/vietnamese.jpg --save-debug-res out-debug-vi.jpg

+```

+

+> Note ⚠️: Please install the multilingual version of pix2text using the following command:

+> ```bash

+> pip install pix2text[multilingual]

+> ```
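As stated in the installation notes, English and Simplified Chinese are handled by CnOCR. Every other language goes through EasyOCR and needs `pix2text[multilingual]`. The sketch below assumes exactly that rule. It builds the `-l` value and reports whether the multilingual extra is required. `lang_arg` and `needs_multilingual` are hypothetical helpers.

```python
# Codes recognized by CnOCR without the multilingual extra, per the docs.
CNOCR_CODES = {"en", "ch_sim"}


def lang_arg(codes):
    """Build the comma-separated value for `p2t predict -l`, dropping duplicates."""
    seen = []
    for code in codes:
        code = code.strip()
        if code and code not in seen:
            seen.append(code)
    return ",".join(seen)


def needs_multilingual(codes):
    """True if any language code falls outside English/Simplified Chinese."""
    return any(c.strip() not in CNOCR_CODES for c in codes if c.strip())


for langs in (["en", "ch_sim"], ["en", "ch_tra"], ["en", "vi", "en"]):
    print(lang_arg(langs), "-> multilingual extra needed:", needs_multilingual(langs))
```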

\ No newline at end of file

diff --git a/docs/faq.md b/docs/faq.md

new file mode 100644

index 0000000000000000000000000000000000000000..48ef9733506d912ab77976e2ff5caabab4854b56

--- /dev/null

+++ b/docs/faq.md

@@ -0,0 +1,8 @@

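+# Frequently Asked Questions (FAQ)
+
+## Is Pix2Text free?
+
+The Pix2Text code and base models are free and open source. You may adapt, redistribute, or use them commercially as needed.
+
+Note, however, that Pix2Text's paid models each come with their own license; please check the specific license terms when purchasing.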

+

diff --git a/docs/figs/breezedeus.ico b/docs/figs/breezedeus.ico

new file mode 100644

index 0000000000000000000000000000000000000000..b070848947e791d69303f4dafc18a36a2ec740c1

Binary files /dev/null and b/docs/figs/breezedeus.ico differ

diff --git a/docs/index.md b/docs/index.md

new file mode 100644

index 0000000000000000000000000000000000000000..f5cae58d90c3a079049c2d13aa121280273be508

--- /dev/null

+++ b/docs/index.md

@@ -0,0 +1,263 @@

+

+{: style="width:180px"}

+

+

+# Pix2Text (P2T)

+[](https://discord.gg/GgD87WM8Tf)

+[](https://pepy.tech/project/pix2text)

+[](https://visitorbadge.io/status?path=https%3A%2F%2Fpix2text.readthedocs.io%2Fzh-cn%2Fstable%2F)

+[](./LICENSE)

+[](https://badge.fury.io/py/pix2text)

+[](https://github.com/breezedeus/pix2text)

+[](https://github.com/breezedeus/pix2text)

+

+

+[](https://twitter.com/breezedeus)

+

+

+[📖 Usage](usage.md) |
+[🛠️ Install](install.md) |
+[🧳 Models](models.md) |
+[🛀🏻 Demo](demo.md) |
+[💬 Contact](contact.md)

+

+[English](index_en.md) | 中文

+

+

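+**Pix2Text (P2T)** aims to be a **free and open-source Python** alternative to **[Mathpix](https://mathpix.com/)**, and it can already handle **Mathpix**'s core functionality.
+**Pix2Text (P2T) can recognize layouts, tables, images, text, and mathematical formulas in images, and combine all of this content into Markdown output. P2T can also convert an entire PDF file (whose pages may be scanned images or any other format) into Markdown.**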

+

+**Pix2Text (P2T)** integrates the following models:

+

+- **Layout Analysis Model**: [breezedeus/pix2text-layout-docyolo](https://huggingface.co/breezedeus/pix2text-layout-docyolo) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-layout-docyolo)).
+- **Table Recognition Model**: [breezedeus/pix2text-table-rec](https://huggingface.co/breezedeus/pix2text-table-rec) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-table-rec)).
+- **Text Recognition Engine**: Supports **`80+` languages**, such as **English, Simplified Chinese, Traditional Chinese, Vietnamese**, etc. **English** and **Simplified Chinese** are recognized with the open-source OCR tool [CnOCR](https://github.com/breezedeus/cnocr), while other languages use the open-source OCR tool [EasyOCR](https://github.com/JaidedAI/EasyOCR).
+- **Mathematical Formula Detection Model (MFD)**: [breezedeus/pix2text-mfd-1.5](https://huggingface.co/breezedeus/pix2text-mfd-1.5) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-mfd-1.5)). Implemented based on [CnSTD](https://github.com/breezedeus/cnstd).
+- **Mathematical Formula Recognition Model (MFR)**: [breezedeus/pix2text-mfr-1.5](https://huggingface.co/breezedeus/pix2text-mfr-1.5) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-mfr-1.5)).

+

+Several of these models come from other open-source authors; many thanks for their contributions.

+

+

+

+

+

+


+

+| Language | Code Name |

+| ------------------- | ----------- |

+| Abaza | abq |

+| Adyghe | ady |

+| Afrikaans | af |

+| Angika | ang |

+| Arabic | ar |

+| Assamese | as |

+| Avar | ava |

+| Azerbaijani | az |

+| Belarusian | be |

+| Bulgarian | bg |

+| Bihari | bh |

+| Bhojpuri | bho |

+| Bengali | bn |

+| Bosnian | bs |

+| Simplified Chinese | ch_sim |

+| Traditional Chinese | ch_tra |

+| Chechen | che |

+| Czech | cs |

+| Welsh | cy |

+| Danish | da |

+| Dargwa | dar |

+| German | de |

+| English | en |

+| Spanish | es |

+| Estonian | et |

+| Persian (Farsi) | fa |

+| French | fr |

+| Irish | ga |

+| Goan Konkani | gom |

+| Hindi | hi |

+| Croatian | hr |

+| Hungarian | hu |

+| Indonesian | id |

+| Ingush | inh |

+| Icelandic | is |

+| Italian | it |

+| Japanese | ja |

+| Kabardian | kbd |

+| Kannada | kn |

+| Korean | ko |

+| Kurdish | ku |

+| Latin | la |

+| Lak | lbe |

+| Lezghian | lez |

+| Lithuanian | lt |

+| Latvian | lv |

+| Magahi | mah |

+| Maithili | mai |

+| Maori | mi |

+| Mongolian | mn |

+| Marathi | mr |

+| Malay | ms |

+| Maltese | mt |

+| Nepali | ne |

+| Newari | new |

+| Dutch | nl |

+| Norwegian | no |

+| Occitan | oc |

+| Pali | pi |

+| Polish | pl |

+| Portuguese | pt |

+| Romanian | ro |

+| Russian | ru |

+| Serbian (cyrillic) | rs_cyrillic |

+| Serbian (latin) | rs_latin |

+| Nagpuri | sck |

+| Slovak | sk |

+| Slovenian | sl |

+| Albanian | sq |

+| Swedish | sv |

+| Swahili | sw |

+| Tamil | ta |

+| Tabassaran | tab |

+| Telugu | te |

+| Thai | th |

+| Tajik | tjk |

+| Tagalog | tl |

+| Turkish | tr |

+| Uyghur | ug |

+| Ukrainian | uk |

+| Urdu | ur |

+| Uzbek | uz |

+| Vietnamese | vi |

+

+> Ref: [Supported Languages](https://www.jaided.ai/easyocr/) .

+

+

+

+

+

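+## P2T Web Version
+
+Everyone can use the **[P2T Web Version](https://p2t.breezedeus.com)** for free, with up to 10,000 characters recognized per person per day, which should be enough for normal use. *Please do not call the API in bulk: machine resources are limited, and bulk calls would prevent others from using the service.*
+
+Due to limited machine resources, the web version currently supports only **Simplified Chinese and English**. To try P2T on other languages, please use the **Online Demo** below.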

+

+

+

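+## Online Demo 🤗
+
+You can also try **P2T** on different languages with the **[Online Demo](https://huggingface.co/spaces/breezedeus/Pix2Text-Demo)** (if Hugging Face is not accessible from your network, use the [mirror in China](https://hf.qhduan.com/spaces/breezedeus/Pix2Text-Demo)). However, the Online Demo runs on low-end hardware and is slow. For Simplified Chinese or English images, the **[P2T Web Version](https://p2t.breezedeus.com)** is recommended.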

+

+

+## Install
+
+Well, if all goes well, a single command is enough.

+

+```bash

+pip install pix2text

+```

+

+To recognize languages other than **English** and **Simplified Chinese**, install the additional packages with the following command:

+

+```bash

+pip install pix2text[multilingual]

+```

+

+If installation is slow, you can use a mirror located in China, such as the Aliyun mirror:

+

+```bash

+pip install pix2text -i https://mirrors.aliyun.com/pypi/simple

+```

+

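+If this is your first time using **OpenCV**, the installation probably won't go smoothly. Bless.
+
+**Pix2Text** mainly depends on [**CnSTD>=1.2.4**](https://github.com/breezedeus/cnstd), [**CnOCR>=2.3**](https://github.com/breezedeus/cnocr), and [**transformers>=4.37.0**](https://github.com/huggingface/transformers). If you run into problems during installation, you can also consult their installation documentation.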
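Below is a small sketch that checks whether an existing environment already meets these minimum versions. It uses the standard-library `importlib.metadata`. The distribution names `cnstd`, `cnocr`, and `transformers` are their PyPI names. `parse_version` is deliberately simplified and is not a full PEP 440 implementation. It keeps only the leading numeric components, so pre-release tags such as `.dev0` are ignored. `at_least` and `check_dependencies` are hypothetical helpers.

```python
from importlib import metadata

# Minimum versions listed in this section.
MINIMUMS = {"cnstd": "1.2.4", "cnocr": "2.3", "transformers": "4.37.0"}


def parse_version(text):
    """Simplified parser: keep leading numeric parts, e.g. '2.2.0.post0' -> (2, 2, 0)."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
        if len(digits) != len(piece):  # e.g. '0rc1': keep 0, stop afterwards
            break
    return tuple(parts)


def at_least(installed, minimum):
    """Compare versions after zero-padding, so '2.3' counts as equal to '2.3.0'."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_dependencies(minimums=MINIMUMS):
    """Map each package to (installed version or None, meets minimum?)."""
    report = {}
    for name, minimum in minimums.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = (None, False)
            continue
        report[name] = (installed, at_least(installed, minimum))
    return report


for name, (installed, ok) in check_dependencies().items():
    print(f"{name}: installed={installed} ok={ok}")
```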

+

+> **Warning**

+>

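+> If the `PyTorch` and `OpenCV` Python packages have never been installed on your computer, you may run into quite a few problems the first time, but they are usually common issues that you can resolve by searching Baidu or Google.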

+

+For more details, see [Install](install.md).

+

+

+## Usage
+
+See: [Usage](usage.md).
+
+## Examples
+
+See: [Examples](examples.md).
+
+## Model Downloads
+
+See: [Models](models.md).
+
+## Command-Line Tool
+
+See: [Command-Line Tool](command.md).

+

+

+## HTTP Service
+
+Use the command **`p2t serve`** to start an HTTP service that receives images (PDF is not currently supported) and returns the recognition results.

+

+```bash

+p2t serve -l en,ch_sim -H 0.0.0.0 -p 8503

+```

+

+You can then call the service with curl:

+

+```bash

+curl -X POST \

+ -F "file_type=page" \

+ -F "resized_shape=768" \

+ -F 'embed_sep= $,$ ' \
+ -F 'isolated_sep=$$\n, \n$$' \
+ -F "image=@docs/examples/page2.png;type=image/png" \

+ http://0.0.0.0:8503/pix2text

+```

+

+For more details, see [Command/Starting the Service](command.md).

+

+## Mac Desktop Client
+
+Please refer to [Pix2Text-Mac](https://github.com/breezedeus/Pix2Text-Mac) to install the Pix2Text desktop client for macOS.

+

+

+

+

+{: style="width:180px"}

+

+

+# Pix2Text (P2T)

+[](https://discord.gg/GgD87WM8Tf)

+[](https://pepy.tech/project/pix2text)

+[](https://visitorbadge.io/status?path=https%3A%2F%2Fpix2text.readthedocs.io%2Fzh-cn%2Fstable%2F)

+[](./LICENSE)

+[](https://badge.fury.io/py/pix2text)

+[](https://github.com/breezedeus/pix2text)

+[](https://github.com/breezedeus/pix2text)

+

+

+[](https://twitter.com/breezedeus)

+

+

+[📖 Usage](usage.md) |

+[🛠️ Install](install.md) |

+[🧳 Models](models.md) |

+[🛀🏻 Demo](demo.md) |

+[💬 Contact](contact.md)

+

+[中文](index.md) | English

+

+

+**Pix2Text (P2T)** aims to be a **free and open-source Python** alternative to **[Mathpix](https://mathpix.com/)**, and it can already accomplish **Mathpix**'s core functionality. **Pix2Text (P2T) can recognize layouts, tables, images, text, and mathematical formulas, and integrate all of this content into Markdown output. P2T can also convert an entire PDF file (which can contain scanned images or any other format) into Markdown.**

+

+**Pix2Text (P2T)** integrates the following models:

+

+- **Layout Analysis Model**: [breezedeus/pix2text-layout-docyolo](https://huggingface.co/breezedeus/pix2text-layout-docyolo) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-layout-docyolo)).

+- **Table Recognition Model**: [breezedeus/pix2text-table-rec](https://huggingface.co/breezedeus/pix2text-table-rec) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-table-rec)).

+- **Text Recognition Engine**: Supports **80+ languages** such as **English, Simplified Chinese, Traditional Chinese, Vietnamese**, etc. For English and Simplified Chinese recognition, it uses the open-source OCR tool [CnOCR](https://github.com/breezedeus/cnocr), while for other languages, it uses the open-source OCR tool [EasyOCR](https://github.com/JaidedAI/EasyOCR).

+- **Mathematical Formula Detection Model (MFD)**: [breezedeus/pix2text-mfd-1.5](https://huggingface.co/breezedeus/pix2text-mfd-1.5) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-mfd-1.5)). Implemented based on [CnSTD](https://github.com/breezedeus/cnstd).

+- **Mathematical Formula Recognition Model (MFR)**: [breezedeus/pix2text-mfr-1.5](https://huggingface.co/breezedeus/pix2text-mfr-1.5) ([Mirror](https://hf-mirror.com/breezedeus/pix2text-mfr-1.5)).

+

+Several models are contributed by other open-source authors, and their contributions are highly appreciated.

+

+

+

+

+

+For details, please refer to [Models](models.md).

+

+As a Python3 toolkit, P2T may not be very user-friendly for those who are not familiar with Python. Therefore, we also provide a **[free-to-use P2T Online Web](https://p2t.breezedeus.com)**, where you can directly upload images and get P2T parsing results. The web version uses the latest models, resulting in better performance compared to the open-source models.

+

+If you have any questions or suggestions, you are welcome to join the [**Pix2Text Discord Server**](https://discord.gg/GgD87WM8Tf).

+

+If you're interested, feel free to add the WeChat assistant as a friend by scanning the QR code and mentioning `p2t`. The assistant will regularly invite everyone to join the group where the latest developments related to P2T tools will be announced:

+

+

+{: style="width:300px"}

+

+

+The author also maintains a **Knowledge Planet** [**P2T/CnOCR/CnSTD Private Group**](https://t.zsxq.com/FEYZRJQ), where questions are answered promptly. You're welcome to join. The **knowledge planet private group** will also gradually release some private materials related to P2T/CnOCR/CnSTD, including **some unreleased models**, **discounts on purchasing premium models**, **code snippets for different application scenarios**, and answers to difficult problems encountered during use. The planet will also publish the latest research materials related to P2T/OCR/STD.

+

+For more contact methods, please refer to [Contact](contact.md).

+

+

+## List of Supported Languages

+

+The text recognition engine of Pix2Text supports **`80+` languages**, including **English, Simplified Chinese, Traditional Chinese, Vietnamese**, etc. Among these, **English** and **Simplified Chinese** recognition utilize the open-source OCR tool **[CnOCR](https://github.com/breezedeus/cnocr)**, while recognition for other languages employs the open-source OCR tool **[EasyOCR](https://github.com/JaidedAI/EasyOCR)**. Special thanks to the respective authors.

+

+The **supported languages** and their **language codes** are listed below:

+

+


+

+| Language | Code Name |

+| ------------------- | ----------- |

+| Abaza | abq |

+| Adyghe | ady |

+| Afrikaans | af |

+| Angika | ang |

+| Arabic | ar |

+| Assamese | as |

+| Avar | ava |

+| Azerbaijani | az |

+| Belarusian | be |

+| Bulgarian | bg |

+| Bihari | bh |

+| Bhojpuri | bho |

+| Bengali | bn |

+| Bosnian | bs |

+| Simplified Chinese | ch_sim |

+| Traditional Chinese | ch_tra |

+| Chechen | che |

+| Czech | cs |

+| Welsh | cy |

+| Danish | da |

+| Dargwa | dar |

+| German | de |

+| English | en |

+| Spanish | es |

+| Estonian | et |

+| Persian (Farsi) | fa |

+| French | fr |

+| Irish | ga |

+| Goan Konkani | gom |

+| Hindi | hi |

+| Croatian | hr |

+| Hungarian | hu |

+| Indonesian | id |

+| Ingush | inh |

+| Icelandic | is |

+| Italian | it |

+| Japanese | ja |

+| Kabardian | kbd |

+| Kannada | kn |

+| Korean | ko |

+| Kurdish | ku |

+| Latin | la |

+| Lak | lbe |

+| Lezghian | lez |

+| Lithuanian | lt |

+| Latvian | lv |

+| Magahi | mah |

+| Maithili | mai |

+| Maori | mi |

+| Mongolian | mn |

+| Marathi | mr |

+| Malay | ms |

+| Maltese | mt |

+| Nepali | ne |

+| Newari | new |

+| Dutch | nl |

+| Norwegian | no |

+| Occitan | oc |

+| Pali | pi |

+| Polish | pl |

+| Portuguese | pt |

+| Romanian | ro |

+| Russian | ru |

+| Serbian (cyrillic) | rs_cyrillic |

+| Serbian (latin) | rs_latin |

+| Nagpuri | sck |

+| Slovak | sk |

+| Slovenian | sl |

+| Albanian | sq |

+| Swedish | sv |

+| Swahili | sw |

+| Tamil | ta |

+| Tabassaran | tab |

+| Telugu | te |

+| Thai | th |

+| Tajik | tjk |

+| Tagalog | tl |

+| Turkish | tr |

+| Uyghur | ug |

+| Ukrainian | uk |

+| Urdu | ur |

+| Uzbek | uz |

+| Vietnamese | vi |

+

+

+> Ref: [Supported Languages](https://www.jaided.ai/easyocr/) .

+

+

+

+

+## Online Service

+

+Everyone can use the **[P2T Online Service](https://p2t.breezedeus.com)** for free, with a daily limit of 10,000 characters per account, which should be sufficient for normal use. *Please refrain from bulk API calls, as machine resources are limited, and this could prevent others from accessing the service.*

+

+Due to hardware constraints, the Online Service currently only supports **Simplified Chinese** and **English** languages. To try the models in other languages, please use the following **Online Demo**.

+

+

+

+## Online Demo 🤗

+

+You can also try the **[Online Demo](https://huggingface.co/spaces/breezedeus/Pix2Text-Demo)** ([Mirror](https://hf-mirror.com/spaces/breezedeus/Pix2Text-Demo)) to see the performance of **P2T** in various languages. However, the online demo operates on lower hardware specifications and may be slower. For Simplified Chinese or English images, it is recommended to use the **[P2T Online Service](https://p2t.breezedeus.com)**.

+

+

+## Install

+

+Well, if all goes well, a single command is enough.

+

+```bash

+pip install pix2text

+```

+

+If you need to recognize languages other than **English** and **Simplified Chinese**, please use the following command to install additional packages:

+

+```bash

+pip install pix2text[multilingual]

+```

+

+

+

+If installation is slow, you can use a mirror located in China, such as the Aliyun mirror:

+

+```bash

+pip install pix2text -i https://mirrors.aliyun.com/pypi/simple

+```

+

+

+If this is your first time using **OpenCV**, the installation probably won't be easy. Bless.

+

+**Pix2Text** mainly depends on [**CnSTD>=1.2.4**](https://github.com/breezedeus/cnstd), [**CnOCR>=2.3**](https://github.com/breezedeus/cnocr), and [**transformers>=4.37.0**](https://github.com/huggingface/transformers). If you encounter problems during installation, you can also refer to their installation documentation.

+

+

+> **Warning**

+>

+> If you have never installed the `PyTorch` and `OpenCV` Python packages before, you may run into quite a few problems during the first installation, but they are usually common issues that can be resolved by searching Baidu or Google.

+

+For more instructions, please refer to [Install](install.md).

+

+## Usage

+

+Refer to: [Usage](usage.md).

+

+## Examples

+

+Refer to: [Examples](examples.md).

+

+## Model Downloads

+

+Refer to: [Models](models.md).

+

+## Command Line Tools

+

+Refer to: [Command Line Tools](command.md).

+

+## HTTP Service

+

+To start an HTTP service that receives images (PDF is not currently supported) and returns recognition results, use the command **`p2t serve`**.

+

+```bash

+p2t serve -l en,ch_sim -H 0.0.0.0 -p 8503

+```

+

+Afterwards, you can call the service using curl:

+

+```bash

+curl -X POST \

+ -F "file_type=page" \

+ -F "resized_shape=768" \

+ -F 'embed_sep= $,$ ' \
+ -F 'isolated_sep=$$\n, \n$$' \
+ -F "image=@docs/examples/page2.png;type=image/png" \

+ http://0.0.0.0:8503/pix2text

+```
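The same request can be sent from Python using only the standard library. This is a minimal sketch. It assumes the service accepts the form fields from the curl example and responds with JSON. `encode_multipart` and `call_p2t_service` are hypothetical helpers. The server binds to `0.0.0.0` (all interfaces), so a client on the same machine connects through `127.0.0.1`.

```python
import json
import mimetypes
import uuid
from urllib import request


def encode_multipart(fields, file_field, file_path, file_bytes):
    """Build a multipart/form-data body equivalent to curl's repeated -F options."""
    boundary = uuid.uuid4().hex
    content_type = mimetypes.guess_type(file_path)[0] or "application/octet-stream"
    chunks = []
    for name, value in fields.items():
        chunks.append(f"--{boundary}\r\n".encode())
        chunks.append(f'Content-Disposition: form-data; name="{name}"\r\n\r\n'.encode())
        chunks.append(f"{value}\r\n".encode())
    filename = file_path.replace("\\", "/").rsplit("/", 1)[-1]
    chunks.append(f"--{boundary}\r\n".encode())
    chunks.append(
        f'Content-Disposition: form-data; name="{file_field}"; filename="{filename}"\r\n'.encode()
    )
    chunks.append(f"Content-Type: {content_type}\r\n\r\n".encode())
    chunks.append(file_bytes + b"\r\n")
    chunks.append(f"--{boundary}--\r\n".encode())
    return b"".join(chunks), f"multipart/form-data; boundary={boundary}"


def call_p2t_service(image_path, url="http://127.0.0.1:8503/pix2text"):
    """POST an image to a running `p2t serve` instance and decode the (assumed) JSON reply."""
    fields = {
        "file_type": "page",
        "resized_shape": "768",
        "embed_sep": " $,$ ",
        "isolated_sep": "$$\\n, \\n$$",  # literal backslash-n, as in the curl example
    }
    with open(image_path, "rb") as f:
        body, ctype = encode_multipart(fields, "image", image_path, f.read())
    req = request.Request(url, data=body, headers={"Content-Type": ctype}, method="POST")
    with request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Once the server from `p2t serve` is running, `call_p2t_service('docs/examples/page2.png')` returns the decoded response. The `isolated_sep` value keeps the two-character `\n` sequences exactly as the curl example sends them. This page does not document whether the server expects those sequences or real newlines.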

+

+For more information, refer to [Command/Starting the Service](command.md).

+

+## MacOS Desktop Application

+

+Please refer to [Pix2Text-Mac](https://github.com/breezedeus/Pix2Text-Mac) to install the Pix2Text desktop app for macOS.

+

+

+

+

\AppData\Roaming\pix2text\1.1\layout-parser`) directory; create the directory yourself if it does not exist.

+

+> Note: `1.1` in the paths above is the pix2text version number, and every `1.1.*` release maps to `1.1`. For other versions, substitute accordingly.

+

+## Table Recognition Model
+Download the **table recognition model** from [breezedeus/pix2text-table-rec](https://huggingface.co/breezedeus/pix2text-table-rec) (if Hugging Face is not accessible, use the [mirror in China](https://hf-mirror.com/breezedeus/pix2text-table-rec)).
+Download all files from it into the `~/.pix2text/1.1/table-rec` directory (on Windows, `C:\Users\\AppData\Roaming\pix2text\1.1\table-rec`); create the directory yourself if it does not exist.

+

+> Note: `1.1` in the paths above is the pix2text version number, and every `1.1.*` release maps to `1.1`. For other versions, substitute accordingly.
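You can compute the per-version model directories instead of typing them by hand. This minimal sketch makes two assumptions. First, that the Windows location `C:\Users\...\AppData\Roaming` is what the `%APPDATA%` environment variable points to. Second, that the version directory is the `major.minor` prefix of the installed pix2text version, as the version note describes. `version_dir` and `model_dir` are hypothetical helpers, not Pix2Text APIs.

```python
import os
import sys
from pathlib import Path


def version_dir(pix2text_version):
    """Map a full version such as '1.1.3' to its directory name '1.1'."""
    major, minor = pix2text_version.split(".")[:2]
    return f"{major}.{minor}"


def model_dir(model_name, pix2text_version="1.1", create=False):
    """Return the local directory where a Pix2Text model's files are expected."""
    if sys.platform.startswith("win"):
        appdata = os.environ.get("APPDATA", str(Path.home() / "AppData" / "Roaming"))
        root = Path(appdata) / "pix2text"
    else:
        root = Path.home() / ".pix2text"
    path = root / version_dir(pix2text_version) / model_name
    if create:
        # The docs ask you to create the directory yourself if it does not exist.
        path.mkdir(parents=True, exist_ok=True)
    return path


print(model_dir("table-rec", "1.1.3"))
```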

+

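+## Mathematical Formula Detection Model (MFD)
+### `pix2text >= 1.1.1`
+Starting from Pix2Text **V1.1.1**, the **mathematical formula detection model** can be downloaded from [breezedeus/pix2text-mfd](https://huggingface.co/breezedeus/pix2text-mfd) (if Hugging Face is not accessible, use the [mirror in China](https://hf-mirror.com/breezedeus/pix2text-mfd)).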

+

+### `pix2text < 1.1.1`

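+The **mathematical formula detection model** (MFD) comes from [CnSTD](https://github.com/breezedeus/cnstd); please refer to that repository's documentation.
+
+If the model files cannot be downloaded automatically, download them manually from the [**cnstd-cnocr-models**](https://huggingface.co/breezedeus/cnstd-cnocr-models) ([mirror in China](https://hf-mirror.com/breezedeus/cnstd-cnocr-models)) project, or download the corresponding zip file from [Baidu Netdisk](https://pan.baidu.com/s/1zDMzArCDrrXHWL0AWxwYQQ?pwd=nstd) (extraction code `nstd`), and place it in the `~/.cnstd/1.2` directory (on Windows, `C:\Users\\AppData\Roaming\cnstd\1.2`).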

+

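+## Mathematical Formula Recognition Model (MFR)
+Download the **mathematical formula recognition model** from [breezedeus/pix2text-mfr](https://huggingface.co/breezedeus/pix2text-mfr) (if Hugging Face is not accessible, use the [mirror in China](https://hf-mirror.com/breezedeus/pix2text-mfr)).
+Download all files from it into the `~/.pix2text/1.1/mfr-1.5-onnx` directory (on Windows, `C:\Users\\AppData\Roaming\pix2text\1.1\mfr-1.5-onnx`); create the directory yourself if it does not exist.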

+

+> Note: `1.1` in the paths above is the pix2text version number, and every `1.1.*` release maps to `1.1`. For other versions, substitute accordingly.

+

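+## Text Recognition Engine
+The **text recognition engine** of Pix2Text supports **`80+` languages**, such as **English, Simplified Chinese, Traditional Chinese, Vietnamese**, etc. **English** and **Simplified Chinese** are recognized with the open-source OCR tool [CnOCR](https://github.com/breezedeus/cnocr), and other languages with the open-source OCR tool [EasyOCR](https://github.com/JaidedAI/EasyOCR).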

+

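+Normally, CnOCR models are downloaded automatically. If automatic download fails, follow the instructions below to download them manually.
+All open-source CnOCR models are hosted in the [**cnstd-cnocr-models**](https://huggingface.co/breezedeus/cnstd-cnocr-models) ([mirror in China](https://hf-mirror.com/breezedeus/cnstd-cnocr-models)) project and are free to download and use.
+If downloading is too slow, you can also download them from [Baidu Netdisk](https://pan.baidu.com/s/1RhLBf8DcLnLuGLPrp89hUg?pwd=nocr) with extraction code `nocr`. For details, see [CnOCR Online Docs/Usage](https://cnocr.readthedocs.io/zh-cn/latest/usage).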

+

+The text detection engine in CnOCR is [CnSTD](https://github.com/breezedeus/cnstd).
+If its model files cannot be downloaded automatically, download them manually from the [**cnstd-cnocr-models**](https://huggingface.co/breezedeus/cnstd-cnocr-models) ([mirror in China](https://hf-mirror.com/breezedeus/cnstd-cnocr-models)) project, or download the corresponding zip file from [Baidu Netdisk](https://pan.baidu.com/s/1zDMzArCDrrXHWL0AWxwYQQ?pwd=nstd) (extraction code `nstd`), and place it in the `~/.cnstd/1.2` directory (on Windows, `C:\Users\\AppData\Roaming\cnstd\1.2`).

+

+For more information about CnOCR models, see [CnOCR Online Docs/Available Models](https://cnocr.readthedocs.io/zh-cn/latest/models).

+

+CnOCR also offers **premium paid models**; see the notes at the end of this page for details.

+

+- CnOCR paid models: see [CnOCR Details | Breezedeus.com](https://www.breezedeus.com/article/cnocr).

+

+

+{: style="width:270px"}

+

+

+For more contact methods, see [Contact](contact.md).

\ No newline at end of file

diff --git a/docs/pix2text/latex_ocr.md b/docs/pix2text/latex_ocr.md

new file mode 100644

index 0000000000000000000000000000000000000000..da75cbe9b3f1f5b54598a11c29e6397b7a5ee4ac

--- /dev/null

+++ b/docs/pix2text/latex_ocr.md

@@ -0,0 +1 @@

+:::pix2text.latex_ocr

diff --git a/docs/pix2text/pix_to_text.md b/docs/pix2text/pix_to_text.md

new file mode 100644

index 0000000000000000000000000000000000000000..878b6ff24c4f0c827b6cf41430c3aef87633d0c5

--- /dev/null

+++ b/docs/pix2text/pix_to_text.md

@@ -0,0 +1 @@

+:::pix2text.pix_to_text

diff --git a/docs/pix2text/table_ocr.md b/docs/pix2text/table_ocr.md

new file mode 100644

index 0000000000000000000000000000000000000000..c78c6bd2d9d3ef1d7ee2cb87e735a1ac64b6700a

--- /dev/null

+++ b/docs/pix2text/table_ocr.md

@@ -0,0 +1 @@

+:::pix2text.table_ocr

diff --git a/docs/pix2text/text_formula_ocr.md b/docs/pix2text/text_formula_ocr.md

new file mode 100644

index 0000000000000000000000000000000000000000..5efdb41c60086202bd04ff4b53f25a5686b37736

--- /dev/null

+++ b/docs/pix2text/text_formula_ocr.md

@@ -0,0 +1 @@

+:::pix2text.text_formula_ocr

diff --git a/docs/requirements.txt b/docs/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..0b15e66ff06869841203bf64630f7e01c49e047f

--- /dev/null

+++ b/docs/requirements.txt

@@ -0,0 +1,387 @@

+#

+# This file is autogenerated by pip-compile with Python 3.9

+# by the following command:

+#

+# pip-compile --output-file=requirements.txt requirements.in

+#

+#--index-url https://mirrors.aliyun.com/pypi/simple

+#--extra-index-url https://pypi.tuna.tsinghua.edu.cn/simple

+#--extra-index-url https://pypi.org/simple

+

+aiohttp==3.9.3

+ # via

+ # datasets

+ # fsspec

+aiosignal==1.3.1

+ # via aiohttp

+appdirs==1.4.4

+ # via wandb

+async-timeout==4.0.3

+ # via aiohttp

+attrs==23.2.0

+ # via aiohttp

+certifi==2024.2.2

+ # via

+ # requests

+ # sentry-sdk

+charset-normalizer==3.3.2

+ # via requests

+click==8.1.7

+ # via

+ # -r requirements.in

+ # cnocr

+ # cnstd

+ # wandb

+cnocr[ort-cpu]==2.3.0.2

+ # via

+ # -r requirements.in

+ # cnocr

+cnstd==1.2.4.1

+ # via

+ # -r requirements.in

+ # cnocr

+coloredlogs==15.0.1

+ # via

+ # onnxruntime

+ # optimum

+contourpy==1.2.0

+ # via matplotlib

+cycler==0.12.1

+ # via matplotlib

+datasets==2.17.0

+ # via

+ # evaluate

+ # optimum

+dill==0.3.8

+ # via

+ # datasets

+ # evaluate

+ # multiprocess

+docker-pycreds==0.4.0

+ # via wandb

+easyocr==1.7.1

+ # via -r requirements.in

+evaluate==0.4.1

+ # via optimum

+filelock==3.13.1

+ # via

+ # datasets

+ # huggingface-hub

+ # torch

+ # transformers

+flatbuffers==23.5.26

+ # via onnxruntime

+fonttools==4.49.0

+ # via matplotlib

+frozenlist==1.4.1

+ # via

+ # aiohttp

+ # aiosignal

+fsspec[http]==2023.10.0

+ # via

+ # datasets

+ # evaluate

+ # huggingface-hub

+ # pytorch-lightning

+ # torch

+gitdb==4.0.11

+ # via gitpython

+gitpython==3.1.42

+ # via wandb

+huggingface-hub==0.20.3

+ # via

+ # cnstd

+ # datasets

+ # evaluate

+ # optimum

+ # tokenizers

+ # transformers

+humanfriendly==10.0

+ # via coloredlogs

+idna==3.6

+ # via

+ # requests

+ # yarl

+imageio==2.34.0

+ # via scikit-image

+importlib-resources==6.1.1

+ # via matplotlib

+jinja2==3.0.3

+ # via torch

+kiwisolver==1.4.5

+ # via matplotlib

+lazy-loader==0.3

+ # via scikit-image

+lightning-utilities==0.10.1

+ # via

+ # pytorch-lightning

+ # torchmetrics

+markupsafe==2.1.5

+ # via jinja2

+matplotlib==3.8.3

+ # via

+ # cnstd

+ # seaborn

+mpmath==1.3.0

+ # via sympy

+multidict==6.0.5

+ # via

+ # aiohttp

+ # yarl

+multiprocess==0.70.16

+ # via

+ # datasets

+ # evaluate

+networkx==3.2.1

+ # via

+ # scikit-image

+ # torch

+ninja==1.11.1.1

+ # via easyocr

+numpy==1.26.4

+ # via

+ # -r requirements.in

+ # cnocr

+ # cnstd

+ # contourpy

+ # datasets

+ # easyocr

+ # evaluate

+ # imageio

+ # matplotlib

+ # onnx

+ # onnxruntime

+ # opencv-python

+ # opencv-python-headless

+ # optimum

+ # pandas

+ # pyarrow

+ # pytorch-lightning

+ # scikit-image

+ # scipy

+ # seaborn

+ # shapely

+ # tifffile

+ # torchmetrics

+ # torchvision

+ # transformers

+onnx==1.15.0

+ # via

+ # cnocr

+ # cnstd

+ # optimum

+onnxruntime==1.17.0

+ # via

+ # cnocr

+ # optimum

+opencv-python==4.9.0.80

+ # via

+ # -r requirements.in

+ # cnstd

+opencv-python-headless==4.9.0.80

+ # via easyocr

+optimum[onnxruntime]==1.16.2

+ # via -r requirements.in

+packaging==23.2

+ # via

+ # datasets

+ # evaluate

+ # huggingface-hub

+ # lightning-utilities

+ # matplotlib

+ # onnxruntime

+ # optimum

+ # pytorch-lightning

+ # scikit-image

+ # torchmetrics

+ # transformers

+pandas==2.2.0

+ # via

+ # cnstd

+ # datasets

+ # evaluate

+ # seaborn

+pillow==10.2.0

+ # via

+ # -r requirements.in

+ # cnocr

+ # cnstd

+ # easyocr

+ # imageio

+ # matplotlib

+ # scikit-image

+ # torchvision

+polygon3==3.0.9.1

+ # via cnstd

+protobuf==4.25.3

+ # via

+ # onnx

+ # onnxruntime

+ # optimum

+ # transformers

+ # wandb

+psutil==5.9.8

+ # via wandb

+pyarrow==15.0.0

+ # via datasets

+pyarrow-hotfix==0.6

+ # via datasets

+pyclipper==1.3.0.post5

+ # via

+ # cnstd

+ # easyocr

+pymupdf==1.24.1

+ # via -r requirements.in

+pymupdfb==1.24.1

+ # via pymupdf

+pyparsing==3.1.1

+ # via matplotlib

+pyspellchecker==0.8.1

+ # via -r requirements.in

+python-bidi==0.4.2

+ # via easyocr

+python-dateutil==2.8.2

+ # via

+ # matplotlib

+ # pandas

+pytorch-lightning==2.2.0.post0

+ # via

+ # cnocr

+ # cnstd

+pytz==2024.1

+ # via pandas

+pyyaml==6.0.1

+ # via

+ # cnstd

+ # datasets

+ # easyocr

+ # huggingface-hub

+ # pytorch-lightning

+ # transformers

+ # wandb

+regex==2023.12.25

+ # via transformers

+requests==2.31.0

+ # via

+ # datasets

+ # evaluate

+ # fsspec

+ # huggingface-hub

+ # responses

+ # torchvision

+ # transformers

+ # wandb

+responses==0.18.0

+ # via evaluate

+safetensors==0.4.2

+ # via transformers

+scikit-image==0.22.0

+ # via easyocr

+scipy==1.12.0

+ # via

+ # cnstd

+ # easyocr

+ # scikit-image

+seaborn==0.13.2

+ # via cnstd

+sentencepiece==0.1.99

+ # via transformers

+sentry-sdk==1.40.4

+ # via wandb

+setproctitle==1.3.3

+ # via wandb

+shapely==2.0.2

+ # via

+ # cnstd

+ # easyocr

+six==1.16.0

+ # via

+ # docker-pycreds

+ # python-bidi

+ # python-dateutil

+smmap==5.0.1

+ # via gitdb

+sympy==1.12

+ # via

+ # onnxruntime

+ # optimum

+ # torch

+tifffile==2024.2.12

+ # via scikit-image

+tokenizers==0.15.2

+ # via transformers

+torch==2.2.0

+ # via

+ # -r requirements.in

+ # cnocr

+ # cnstd

+ # easyocr

+ # optimum

+ # pytorch-lightning

+ # torchmetrics

+ # torchvision

+torchmetrics==1.3.1

+ # via

+ # cnocr

+ # pytorch-lightning

+torchvision==0.17.0

+ # via

+ # -r requirements.in

+ # cnocr

+ # cnstd

+ # easyocr

+tqdm==4.66.2

+ # via

+ # -r requirements.in

+ # cnocr

+ # cnstd

+ # datasets

+ # evaluate

+ # huggingface-hub

+ # pytorch-lightning

+ # transformers

+transformers[sentencepiece]==4.37.2

+ # via

+ # -r requirements.in

+ # optimum

+typing-extensions==4.9.0

+ # via

+ # huggingface-hub

+ # lightning-utilities

+ # pytorch-lightning

+ # torch

+ # wandb

+tzdata==2024.1

+ # via pandas

+unidecode==1.3.8

+ # via cnstd

+urllib3==2.2.0

+ # via

+ # requests

+ # responses

+ # sentry-sdk

+wandb==0.16.3

+ # via cnocr

+xxhash==3.4.1

+ # via

+ # datasets

+ # evaluate

+yarl==1.9.4

+ # via aiohttp

+zipp==3.17.0

+ # via importlib-resources

+

+doclayout-yolo<0.1

+litellm<2.0

+

+# The following packages are considered to be unsafe in a requirements file:

+# setuptools

+

+# for mkdocs

+pygments==2.11

+jinja2<3.1.0

+mkdocs==1.2.2

+mkdocs-macros-plugin==0.6.0

+mkdocs-material==7.3.0

+mkdocs-material-extensions==1.0.3

+mkdocstrings==0.16.1

diff --git a/docs/train.md b/docs/train.md

new file mode 100644

index 0000000000000000000000000000000000000000..08f3e0a35e44a66b370ab6b70107400e61722426

--- /dev/null

+++ b/docs/train.md

@@ -0,0 +1,3 @@

+# Model Training

+

+TODO

diff --git a/docs/usage.md b/docs/usage.md

new file mode 100644

index 0000000000000000000000000000000000000000..c5d8c2a49dd9a625dc0206bc9aa3cf74208c748c

--- /dev/null

+++ b/docs/usage.md

@@ -0,0 +1,547 @@

+# Usage

+

+## Automatic Model Download

+

+The first time **Pix2Text** is used, it **automatically downloads** the required open-source models and stores them in `~/.pix2text` (on Windows the default path is `C:\Users\\AppData\Roaming\pix2text`).

+The CnOCR and CnSTD models are stored in `~/.cnocr` and `~/.cnstd`, respectively (on Windows the default paths are `C:\Users\\AppData\Roaming\cnocr` and `C:\Users\\AppData\Roaming\cnstd`).

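+Downloading can take a while. If the default download site cannot be reached, the system automatically tries other available sites, so the download may take considerably longer.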

+On a machine without network access, download the models on another machine first and then copy them into the corresponding directories.
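When staging models for an offline machine, it can help to compute the default locations programmatically. The sketch below is a hypothetical helper, not part of Pix2Text, CnOCR, or CnSTD. It assumes that the Windows defaults correspond to the `%APPDATA%` folder (normally `AppData\Roaming` under the user's profile) and that other platforms use hidden folders in the home directory, as described above.

```python
import os
import sys
from pathlib import PurePosixPath, PureWindowsPath
from typing import Dict, Optional

# Packages whose model caches are described in this section.
PACKAGES = ("pix2text", "cnocr", "cnstd")


def default_model_dirs(
    platform: str = sys.platform,
    home: Optional[str] = None,
    appdata: Optional[str] = None,
) -> Dict[str, str]:
    """Hypothetical helper: map each package to its assumed default model directory.

    Windows: %APPDATA%\\<package> (assumption based on the documented paths).
    Other platforms: ~/.<package>
    """
    if platform.startswith("win"):
        # %APPDATA% normally points to the Roaming folder in the user's AppData
        base = appdata if appdata is not None else os.environ.get("APPDATA", "")
        return {pkg: str(PureWindowsPath(base) / pkg) for pkg in PACKAGES}
    # Non-Windows: hidden directory per package in the home directory
    base = home if home is not None else os.path.expanduser("~")
    return {pkg: str(PurePosixPath(base) / f".{pkg}") for pkg in PACKAGES}


if __name__ == "__main__":
    # Print where the models are expected on this machine.
    for pkg, path in default_model_dirs().items():
        print(f"{pkg}: {path}")
```

To copy a populated directory onto the offline machine, `shutil.copytree(src, dst, dirs_exist_ok=True)` (Python 3.8+) works for each of the returned paths.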

+

+If the model files cannot be downloaded automatically, download them manually from [huggingface.co/breezedeus](https://huggingface.co/breezedeus) ([mirror in China](https://hf-mirror.com/breezedeus)).

+

+See [Model Download](models.md) for details.

+

+

+## Initialization

+### Method 1

+

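+The class [Pix2Text](pix2text/pix_to_text.md) is the main recognition class. It provides several recognition functions for extracting content from different kinds of **images** and **PDF files**. Its initializer is: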

+

+```python

+class Pix2Text(object):

+    def __init__(

+        self,

+        *,

+        layout_parser: Optional[LayoutParser] = None,

+        text_formula_ocr: Optional[TextFormulaOCR] = None,

+        table_ocr: Optional[TableOCR] = None,

+        **kwargs,

+    ):

+        """

+        Initialize the Pix2Text object.

+        Args:

+            layout_parser (LayoutParser): The layout parser object; default value is `None`, which means to create a default one

+            text_formula_ocr (TextFormulaOCR): The text and formula OCR object; default value is `None`, which means to create a default one

+            table_ocr (TableOCR): The table OCR object; default value is `None`, which means not to recognize tables

+            **kwargs (dict): Other arguments, currently not used

+        """

+```

+

+The parameters are:

+

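+* `layout_parser`: the layout analysis model object. The default `None` means the default layout analysis model is used;

+* `text_formula_ocr`: the text and formula recognition model object. The default `None` means the default text and formula recognition model is used;

+* `table_ocr`: the table recognition model object. The default `None` means tables are not recognized;

+* `**kwargs`: other arguments, currently unused.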

+

+

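+Every parameter has a default value, so you can initialize without passing any arguments: `p2t = Pix2Text()`. Note, however, that without arguments only the default layout analysis model and the default text and formula recognition model are loaded; **the table recognition model is not loaded**.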
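The defaulting rules can be summarized with a small stand-in class. This is a hypothetical sketch, not Pix2Text's implementation: it loads no models and uses placeholder strings where the library would construct real `LayoutParser` and `TextFormulaOCR` objects. It only illustrates that two of the three components are filled in when left as `None`, while `table_ocr` stays `None`, which disables table recognition.

```python
from typing import Any, Optional


class Pix2TextSketch:
    """Hypothetical stand-in mirroring only the documented defaults of Pix2Text.__init__."""

    def __init__(
        self,
        *,
        layout_parser: Optional[Any] = None,
        text_formula_ocr: Optional[Any] = None,
        table_ocr: Optional[Any] = None,
        **kwargs: Any,  # accepted but unused, as in the documented signature
    ) -> None:
        # None -> fall back to a default layout parser (placeholder string here)
        self.layout_parser = (
            layout_parser if layout_parser is not None else "default-layout-parser"
        )
        # None -> fall back to a default text and formula OCR (placeholder string here)
        self.text_formula_ocr = (
            text_formula_ocr if text_formula_ocr is not None else "default-text-formula-ocr"
        )
        # None is kept as-is: no table model is created, so tables are skipped
        self.table_ocr = table_ocr

    @property
    def recognizes_tables(self) -> bool:
        return self.table_ocr is not None


p2t = Pix2TextSketch()
print(p2t.recognizes_tables)  # False: no arguments means no table model
```

The keyword-only `*` in the signature means every component must be passed by name, as in the documented `Pix2Text.__init__`.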

+

+A better way to initialize a `Pix2Text` instance is to use the following class method.

+

+### Method 2

+You can also create a `Pix2Text` instance from configuration settings:

+

+```python

+@classmethod

+def from_config(

+    cls,

+    total_configs: Optional[dict] = None,

+    enable_formula: bool = True,

+    enable_table: bool = True,

+    device: str = None,

+    **kwargs,

+):

+ """