'

+$ git push --set-upstream origin your-branch-for-syncing

+```

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/LICENSE b/eval_agent/eval_tools/t2i_comp/diffusers/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..261eeb9e9f8b2b4b0d119366dda99c6fd7d35c64

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/LICENSE

@@ -0,0 +1,201 @@

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright [yyyy] [name of copyright owner]

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/MANIFEST.in b/eval_agent/eval_tools/t2i_comp/diffusers/MANIFEST.in

new file mode 100644

index 0000000000000000000000000000000000000000..b22fe1a28a1ef881fdb36af3c30b14c0a5d10aa5

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/MANIFEST.in

@@ -0,0 +1,2 @@

+include LICENSE

+include src/diffusers/utils/model_card_template.md

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/Makefile b/eval_agent/eval_tools/t2i_comp/diffusers/Makefile

new file mode 100644

index 0000000000000000000000000000000000000000..94af6d2f12724c9e22a09143be9277aaace3cd85

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/Makefile

@@ -0,0 +1,96 @@

+.PHONY: deps_table_update modified_only_fixup extra_style_checks quality style fixup fix-copies test test-examples

+

+# make sure to test the local checkout in scripts and not the pre-installed one (don't use quotes!)

+export PYTHONPATH = src

+

+check_dirs := examples scripts src tests utils

+

+modified_only_fixup:

+ $(eval modified_py_files := $(shell python utils/get_modified_files.py $(check_dirs)))

+ @if test -n "$(modified_py_files)"; then \

+ echo "Checking/fixing $(modified_py_files)"; \

+ black $(modified_py_files); \

+ ruff $(modified_py_files); \

+ else \

+ echo "No library .py files were modified"; \

+ fi

+

+# Update src/diffusers/dependency_versions_table.py

+

+deps_table_update:

+ @python setup.py deps_table_update

+

+deps_table_check_updated:

+ @md5sum src/diffusers/dependency_versions_table.py > md5sum.saved

+ @python setup.py deps_table_update

+ @md5sum -c --quiet md5sum.saved || (printf "\nError: the version dependency table is outdated.\nPlease run 'make fixup' or 'make style' and commit the changes.\n\n" && exit 1)

+ @rm md5sum.saved

+

+# autogenerating code

+

+autogenerate_code: deps_table_update

+

+# Check that the repo is in a good state

+

+repo-consistency:

+ python utils/check_dummies.py

+ python utils/check_repo.py

+ python utils/check_inits.py

+

+# this target runs checks on all files

+

+quality:

+ black --check $(check_dirs)

+ ruff $(check_dirs)

+ doc-builder style src/diffusers docs/source --max_len 119 --check_only --path_to_docs docs/source

+ python utils/check_doc_toc.py

+

+# Format source code automatically and check if there are any problems left that need manual fixing

+

+extra_style_checks:

+ python utils/custom_init_isort.py

+ doc-builder style src/diffusers docs/source --max_len 119 --path_to_docs docs/source

+ python utils/check_doc_toc.py --fix_and_overwrite

+

+# this target runs checks on all files and potentially modifies some of them

+

+style:

+ black $(check_dirs)

+ ruff $(check_dirs) --fix

+ ${MAKE} autogenerate_code

+ ${MAKE} extra_style_checks

+

+# Super fast fix and check target that only works on relevant modified files since the branch was made

+

+fixup: modified_only_fixup extra_style_checks autogenerate_code repo-consistency

+

+# Make marked copies of code snippets conform to the original

+

+fix-copies:

+ python utils/check_copies.py --fix_and_overwrite

+ python utils/check_dummies.py --fix_and_overwrite

+

+# Run tests for the library

+

+test:

+ python -m pytest -n auto --dist=loadfile -s -v ./tests/

+

+# Run tests for examples

+

+test-examples:

+ python -m pytest -n auto --dist=loadfile -s -v ./examples/pytorch/

+

+

+# Release stuff

+

+pre-release:

+ python utils/release.py

+

+pre-patch:

+ python utils/release.py --patch

+

+post-release:

+ python utils/release.py --post_release

+

+post-patch:

+ python utils/release.py --post_release --patch

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/README.md b/eval_agent/eval_tools/t2i_comp/diffusers/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..fc384c9f8fb29fa26f1c63c336b102aa1875c5e8

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/README.md

@@ -0,0 +1,563 @@


+🤗 Diffusers provides pretrained diffusion models across multiple modalities, such as vision and audio, and serves

+as a modular toolbox for inference and training of diffusion models.

+

+More precisely, 🤗 Diffusers offers:

+

+- State-of-the-art diffusion pipelines that can be run in inference with just a couple of lines of code (see [src/diffusers/pipelines](https://github.com/huggingface/diffusers/tree/main/src/diffusers/pipelines)). Check [this overview](https://github.com/huggingface/diffusers/tree/main/src/diffusers/pipelines/README.md#pipelines-summary) to see all supported pipelines and their corresponding official papers.

+- Various noise schedulers that can be used interchangeably for the preferred speed vs. quality trade-off in inference (see [src/diffusers/schedulers](https://github.com/huggingface/diffusers/tree/main/src/diffusers/schedulers)).

+- Multiple types of models, such as UNet, can be used as building blocks in an end-to-end diffusion system (see [src/diffusers/models](https://github.com/huggingface/diffusers/tree/main/src/diffusers/models)).

+- Training examples to show how to train the most popular diffusion model tasks (see [examples](https://github.com/huggingface/diffusers/tree/main/examples), *e.g.* [unconditional-image-generation](https://github.com/huggingface/diffusers/tree/main/examples/unconditional_image_generation)).

+

+## Installation

+

+### For PyTorch

+

+**With `pip`** (official package)

+

+```bash

+pip install --upgrade diffusers[torch]

+```

+

+**With `conda`** (maintained by the community)

+

+```sh

+conda install -c conda-forge diffusers

+```

+

+### For Flax

+

+**With `pip`**

+

+```bash

+pip install --upgrade diffusers[flax]

+```

+

+**Apple Silicon (M1/M2) support**

+

+Please refer to [the documentation](https://huggingface.co/docs/diffusers/optimization/mps).

+

+## Contributing

+

+We ❤️ contributions from the open-source community!

+If you want to contribute to this library, please check out our [Contribution guide](https://github.com/huggingface/diffusers/blob/main/CONTRIBUTING.md).

+You can look out for [issues](https://github.com/huggingface/diffusers/issues) you'd like to tackle to contribute to the library.

+- See [Good first issues](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22good+first+issue%22) for general opportunities to contribute

+- See [New model/pipeline](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+pipeline%2Fmodel%22) to contribute exciting new diffusion models / diffusion pipelines

+- See [New scheduler](https://github.com/huggingface/diffusers/issues?q=is%3Aopen+is%3Aissue+label%3A%22New+scheduler%22)

+

+Also, say 👋 in our public Discord channel. We discuss the hottest trends in diffusion models, help each other with contributions and personal projects, or

+just hang out ☕.

+

+## Quickstart

+

+In order to get started, we recommend taking a look at two notebooks:

+

+- The [Getting started with Diffusers](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb) notebook, which showcases an end-to-end example of usage for diffusion models, schedulers and pipelines.

+ Take a look at this notebook to learn how to use the pipeline abstraction, which takes care of everything (model, scheduler, noise handling) for you, and also to understand each independent building block in the library.

+- The [Training a diffusers model](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/training_example.ipynb) notebook, which summarizes training methods for diffusion models. This notebook takes a step-by-step approach to training your

+ diffusion models on an image dataset, with explanatory graphics.

+

+## Stable Diffusion is fully compatible with `diffusers`!

+

+Stable Diffusion is a text-to-image latent diffusion model created by the researchers and engineers from [CompVis](https://github.com/CompVis), [Stability AI](https://stability.ai/), [LAION](https://laion.ai/) and [RunwayML](https://runwayml.com/). It's trained on 512x512 images from a subset of the [LAION-5B](https://laion.ai/blog/laion-5b/) database. This model uses a frozen CLIP ViT-L/14 text encoder to condition the model on text prompts. With its 860M UNet and 123M text encoder, the model is relatively lightweight and runs on a GPU with at least 4GB VRAM.

+See the [model card](https://huggingface.co/CompVis/stable-diffusion) for more information.

+

+

+### Text-to-Image generation with Stable Diffusion

+

+First, let's install the required packages:

+

+```bash

+pip install --upgrade diffusers transformers accelerate

+```

+

+We recommend using the model in [half-precision (`fp16`)](https://pytorch.org/blog/accelerating-training-on-nvidia-gpus-with-pytorch-automatic-mixed-precision/) as it almost always gives the same results as full

+precision while being roughly twice as fast and requiring half the amount of GPU RAM.

+

+```python

+import torch

+from diffusers import StableDiffusionPipeline

+

+pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16)

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+```
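As a rough check of the memory claim above, the weight footprint can be estimated from the parameter counts quoted earlier (860M for the UNet, 123M for the text encoder). This is a back-of-the-envelope sketch, not a measurement: it ignores the VAE, activations, and framework overhead, and the helper name `weight_gib` is made up for illustration.

```python
# Parameter counts quoted in this README; the VAE is not included.
UNET_PARAMS = 860_000_000
TEXT_ENCODER_PARAMS = 123_000_000

# Storage cost of a single weight in each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weight_gib(num_params: int, dtype: str) -> float:
    """Return the size of `num_params` weights stored as `dtype`, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


total_params = UNET_PARAMS + TEXT_ENCODER_PARAMS
fp32_gib = weight_gib(total_params, "float32")
fp16_gib = weight_gib(total_params, "float16")

print(f"fp32 weights: {fp32_gib:.2f} GiB")
print(f"fp16 weights: {fp16_gib:.2f} GiB")
```

Under these assumptions the weights take about 3.66 GiB in `float32` and about 1.83 GiB in `float16`, which is consistent with half precision needing roughly half the memory.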

+

+#### Running the model locally

+

+You can also simply download the model folder and pass the path to the local folder to the `StableDiffusionPipeline`.

+

+```

+git lfs install

+git clone https://huggingface.co/runwayml/stable-diffusion-v1-5

+```

+

+Assuming the folder is stored locally under `./stable-diffusion-v1-5`, you can run Stable Diffusion

+as follows:

+

+```python

+from diffusers import StableDiffusionPipeline

+

+pipe = StableDiffusionPipeline.from_pretrained("./stable-diffusion-v1-5")

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+```

+

+If you are limited by GPU memory, you might want to consider chunking the attention computation in addition

+to using `fp16`.

+The following snippet should run with less than 4GB of VRAM.

+

+```python

+import torch

+from diffusers import StableDiffusionPipeline

+

+pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16)

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+pipe.enable_attention_slicing()

+image = pipe(prompt).images[0]

+```

+

+If you wish to use a different scheduler (e.g. DDIM, LMS, PNDM/PLMS), you can build it from the

+current scheduler's config and assign it to `pipe.scheduler`.

+

+```python

+from diffusers import LMSDiscreteScheduler

+

+pipe.scheduler = LMSDiscreteScheduler.from_config(pipe.scheduler.config)

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+

+image.save("astronaut_rides_horse.png")

+```

+

+If you want to run Stable Diffusion on CPU or you want to have maximum precision on GPU,

+please run the model in the default *full-precision* setting:

+

+```python

+from diffusers import StableDiffusionPipeline

+

+pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5")

+

+# comment out the following line if you run on CPU

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+

+image.save("astronaut_rides_horse.png")

+```

+

+### JAX/Flax

+

+Diffusers offers a JAX / Flax implementation of Stable Diffusion for very fast inference. JAX shines especially on TPU hardware because each TPU server has 8 accelerators working in parallel, but it runs great on GPUs too.

+

+Running the pipeline with the default PNDMScheduler:

+

+```python

+import jax

+import numpy as np

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+

+from diffusers import FlaxStableDiffusionPipeline

+

+pipeline, params = FlaxStableDiffusionPipeline.from_pretrained(

+ "runwayml/stable-diffusion-v1-5", revision="flax", dtype=jax.numpy.bfloat16

+)

+

+prompt = "a photo of an astronaut riding a horse on mars"

+

+prng_seed = jax.random.PRNGKey(0)

+num_inference_steps = 50

+

+num_samples = jax.device_count()

+prompt = num_samples * [prompt]

+prompt_ids = pipeline.prepare_inputs(prompt)

+

+# shard inputs and rng

+params = replicate(params)

+prng_seed = jax.random.split(prng_seed, jax.device_count())

+prompt_ids = shard(prompt_ids)

+

+images = pipeline(prompt_ids, params, prng_seed, num_inference_steps, jit=True).images

+images = pipeline.numpy_to_pil(np.asarray(images.reshape((num_samples,) + images.shape[-3:])))

+```
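The shape bookkeeping in the example above can be sketched without JAX. The helpers below only compute shapes; they mirror the reshape performed by `flax.training.common_utils.shard` and the final `images.reshape(...)` call. The device count of 8, the 77-token prompt length, and the 512x512 output size are assumptions for illustration, and `shard_shape` / `unshard_shape` are hypothetical names.

```python
from math import prod


def shard_shape(shape: tuple, num_devices: int) -> tuple:
    """Shape after splitting the leading (batch) axis across devices.

    Mirrors the reshape done by `flax.training.common_utils.shard`:
    (batch, ...) -> (num_devices, batch // num_devices, ...).
    """
    batch = shape[0]
    if batch % num_devices != 0:
        raise ValueError("batch size must be divisible by the device count")
    return (num_devices, batch // num_devices) + tuple(shape[1:])


def unshard_shape(shape: tuple, num_samples: int) -> tuple:
    """Shape after `x.reshape((num_samples,) + x.shape[-3:])`."""
    new_shape = (num_samples,) + tuple(shape[-3:])
    if prod(new_shape) != prod(shape):
        raise ValueError("reshape must preserve the number of elements")
    return new_shape


num_devices = 8                         # assumed: one TPU host with 8 accelerators
num_samples = num_devices               # one prompt per device, as in the example
prompt_ids_shape = (num_samples, 77)    # assumed: 77 token ids per prompt

sharded_ids = shard_shape(prompt_ids_shape, num_devices)
images_shape = (num_devices, 1, 512, 512, 3)   # assumed per-device output layout
flat_images = unshard_shape(images_shape, num_samples)

print(sharded_ids)   # (8, 1, 77)
print(flat_images)   # (8, 512, 512, 3)
```

Each device therefore receives one prompt, and the final reshape merges the device and per-device batch axes back into a single batch of images before conversion to PIL.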

+

+**Note**:

+If you are limited by TPU memory, please make sure to load the `FlaxStableDiffusionPipeline` in `bfloat16` precision rather than the default `float32` precision. You can do so by telling diffusers to load the weights from the `bf16` branch.

+

+```python

+import jax

+import numpy as np

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+

+from diffusers import FlaxStableDiffusionPipeline

+

+pipeline, params = FlaxStableDiffusionPipeline.from_pretrained(

+ "runwayml/stable-diffusion-v1-5", revision="bf16", dtype=jax.numpy.bfloat16

+)

+

+prompt = "a photo of an astronaut riding a horse on mars"

+

+prng_seed = jax.random.PRNGKey(0)

+num_inference_steps = 50

+

+num_samples = jax.device_count()

+prompt = num_samples * [prompt]

+prompt_ids = pipeline.prepare_inputs(prompt)

+

+# shard inputs and rng

+params = replicate(params)

+prng_seed = jax.random.split(prng_seed, jax.device_count())

+prompt_ids = shard(prompt_ids)

+

+images = pipeline(prompt_ids, params, prng_seed, num_inference_steps, jit=True).images

+images = pipeline.numpy_to_pil(np.asarray(images.reshape((num_samples,) + images.shape[-3:])))

+```

+

+Diffusers also has an image-to-image generation pipeline for Flax/JAX:

+

+```python

+import jax

+import numpy as np

+import jax.numpy as jnp

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+import requests

+from io import BytesIO

+from PIL import Image

+from diffusers import FlaxStableDiffusionImg2ImgPipeline

+

+def create_key(seed=0):

+ return jax.random.PRNGKey(seed)

+rng = create_key(0)

+

+url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

+response = requests.get(url)

+init_img = Image.open(BytesIO(response.content)).convert("RGB")

+init_img = init_img.resize((768, 512))

+

+prompts = "A fantasy landscape, trending on artstation"

+

+pipeline, params = FlaxStableDiffusionImg2ImgPipeline.from_pretrained(

+ "CompVis/stable-diffusion-v1-4", revision="flax",

+ dtype=jnp.bfloat16,

+)

+

+num_samples = jax.device_count()

+rng = jax.random.split(rng, jax.device_count())

+prompt_ids, processed_image = pipeline.prepare_inputs(prompt=[prompts]*num_samples, image = [init_img]*num_samples)

+p_params = replicate(params)

+prompt_ids = shard(prompt_ids)

+processed_image = shard(processed_image)

+

+output = pipeline(

+ prompt_ids=prompt_ids,

+ image=processed_image,

+ params=p_params,

+ prng_seed=rng,

+ strength=0.75,

+ num_inference_steps=50,

+ jit=True,

+ height=512,

+ width=768).images

+

+output_images = pipeline.numpy_to_pil(np.asarray(output.reshape((num_samples,) + output.shape[-3:])))

+```

+

+Diffusers also has a text-guided inpainting pipeline for Flax/JAX:

+

+```python

+import jax

+import numpy as np

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+import PIL.Image

+import requests

+from io import BytesIO

+

+

+from diffusers import FlaxStableDiffusionInpaintPipeline

+

+def download_image(url):

+ response = requests.get(url)

+ return PIL.Image.open(BytesIO(response.content)).convert("RGB")

+img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

+mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

+

+init_image = download_image(img_url).resize((512, 512))

+mask_image = download_image(mask_url).resize((512, 512))

+

+pipeline, params = FlaxStableDiffusionInpaintPipeline.from_pretrained("xvjiarui/stable-diffusion-2-inpainting")

+

+prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

+prng_seed = jax.random.PRNGKey(0)

+num_inference_steps = 50

+

+num_samples = jax.device_count()

+prompt = num_samples * [prompt]

+init_image = num_samples * [init_image]

+mask_image = num_samples * [mask_image]

+prompt_ids, processed_masked_images, processed_masks = pipeline.prepare_inputs(prompt, init_image, mask_image)

+

+

+# shard inputs and rng

+params = replicate(params)

+prng_seed = jax.random.split(prng_seed, jax.device_count())

+prompt_ids = shard(prompt_ids)

+processed_masked_images = shard(processed_masked_images)

+processed_masks = shard(processed_masks)

+

+images = pipeline(prompt_ids, processed_masks, processed_masked_images, params, prng_seed, num_inference_steps, jit=True).images

+images = pipeline.numpy_to_pil(np.asarray(images.reshape((num_samples,) + images.shape[-3:])))

+```

+

+### Image-to-Image text-guided generation with Stable Diffusion

+

+The `StableDiffusionImg2ImgPipeline` lets you pass a text prompt and an initial image to condition the generation of new images.

+

+```python

+import requests

+import torch

+from PIL import Image

+from io import BytesIO

+

+from diffusers import StableDiffusionImg2ImgPipeline

+

+# load the pipeline

+device = "cuda"

+model_id_or_path = "runwayml/stable-diffusion-v1-5"

+pipe = StableDiffusionImg2ImgPipeline.from_pretrained(model_id_or_path, torch_dtype=torch.float16)

+

+# or download via git clone https://huggingface.co/runwayml/stable-diffusion-v1-5

+# and pass `model_id_or_path="./stable-diffusion-v1-5"`.

+pipe = pipe.to(device)

+

+# let's download an initial image

+url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

+

+response = requests.get(url)

+init_image = Image.open(BytesIO(response.content)).convert("RGB")

+init_image = init_image.resize((768, 512))

+

+prompt = "A fantasy landscape, trending on artstation"

+

+images = pipe(prompt=prompt, image=init_image, strength=0.75, guidance_scale=7.5).images

+

+images[0].save("fantasy_landscape.png")

+```

+You can also run this example on [Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb).

+

+### In-painting using Stable Diffusion

+

+The `StableDiffusionInpaintPipeline` lets you edit specific parts of an image by providing a mask and a text prompt.

+

+```python

+import PIL.Image

+import requests

+import torch

+from io import BytesIO

+

+from diffusers import StableDiffusionInpaintPipeline

+

+def download_image(url):

+ response = requests.get(url)

+ return PIL.Image.open(BytesIO(response.content)).convert("RGB")

+

+img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

+mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

+

+init_image = download_image(img_url).resize((512, 512))

+mask_image = download_image(mask_url).resize((512, 512))

+

+pipe = StableDiffusionInpaintPipeline.from_pretrained("runwayml/stable-diffusion-inpainting", torch_dtype=torch.float16)

+pipe = pipe.to("cuda")

+

+prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

+image = pipe(prompt=prompt, image=init_image, mask_image=mask_image).images[0]

+```

+

+### Tweak prompts reusing seeds and latents

+

+You can generate your own latents to reproduce results, or tweak your prompt on a specific result you liked.

+Please have a look at [Reusing seeds for deterministic generation](https://huggingface.co/docs/diffusers/main/en/using-diffusers/reusing_seeds).
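The idea behind reusing seeds can be sketched with PyTorch alone: a generator seeded with the same value always produces the same initial noise. The latent shape `(1, 4, 64, 64)` is an assumption that corresponds to a single 512x512 image with the Stable Diffusion v1 models used above, and `make_latents` is a hypothetical helper, not part of `diffusers`.

```python
import torch

# Assumed latent shape: 4 channels at 1/8 of a 512x512 image resolution.
latents_shape = (1, 4, 64, 64)


def make_latents(seed: int) -> torch.Tensor:
    """Draw the initial noise from a freshly seeded CPU generator."""
    generator = torch.Generator(device="cpu").manual_seed(seed)
    return torch.randn(latents_shape, generator=generator)


latents_a = make_latents(seed=1024)
latents_b = make_latents(seed=1024)
latents_c = make_latents(seed=1025)

print(torch.equal(latents_a, latents_b))  # True: same seed, same noise
print(torch.equal(latents_a, latents_c))  # False: different seed, different noise

# With a loaded pipeline (not run here), the stored noise can be reused while
# the prompt changes, for example:
#   latents = latents_a.to("cuda", torch.float16)
#   image = pipe("a photo of an astronaut riding a zebra", latents=latents).images[0]
```

Keeping the latents fixed while editing the prompt is what makes it possible to tweak a specific result rather than starting from a new random image each time.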

+

+## Fine-Tuning Stable Diffusion

+

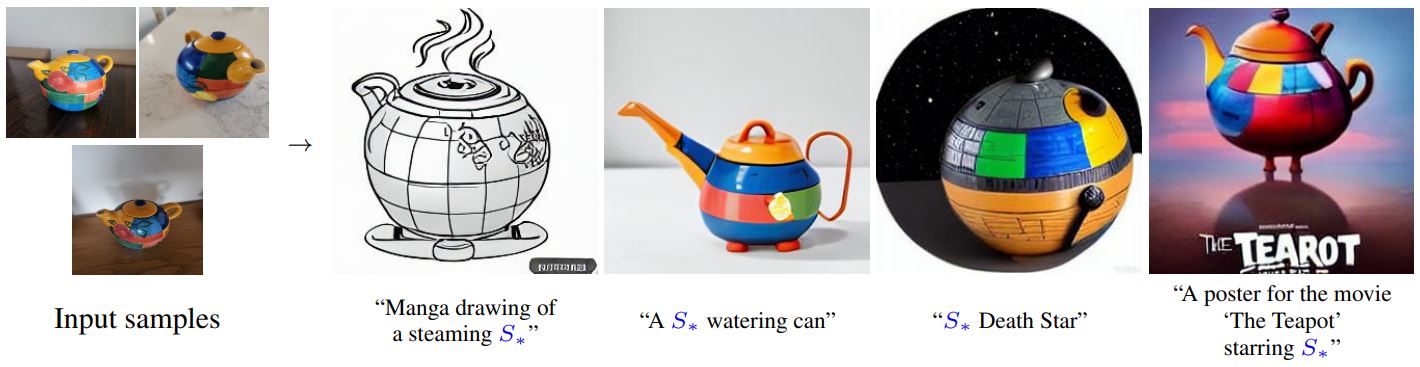

+Fine-tuning techniques make it possible to adapt Stable Diffusion to your own dataset, or add new subjects to it. These are some of the techniques supported in `diffusers`:

+

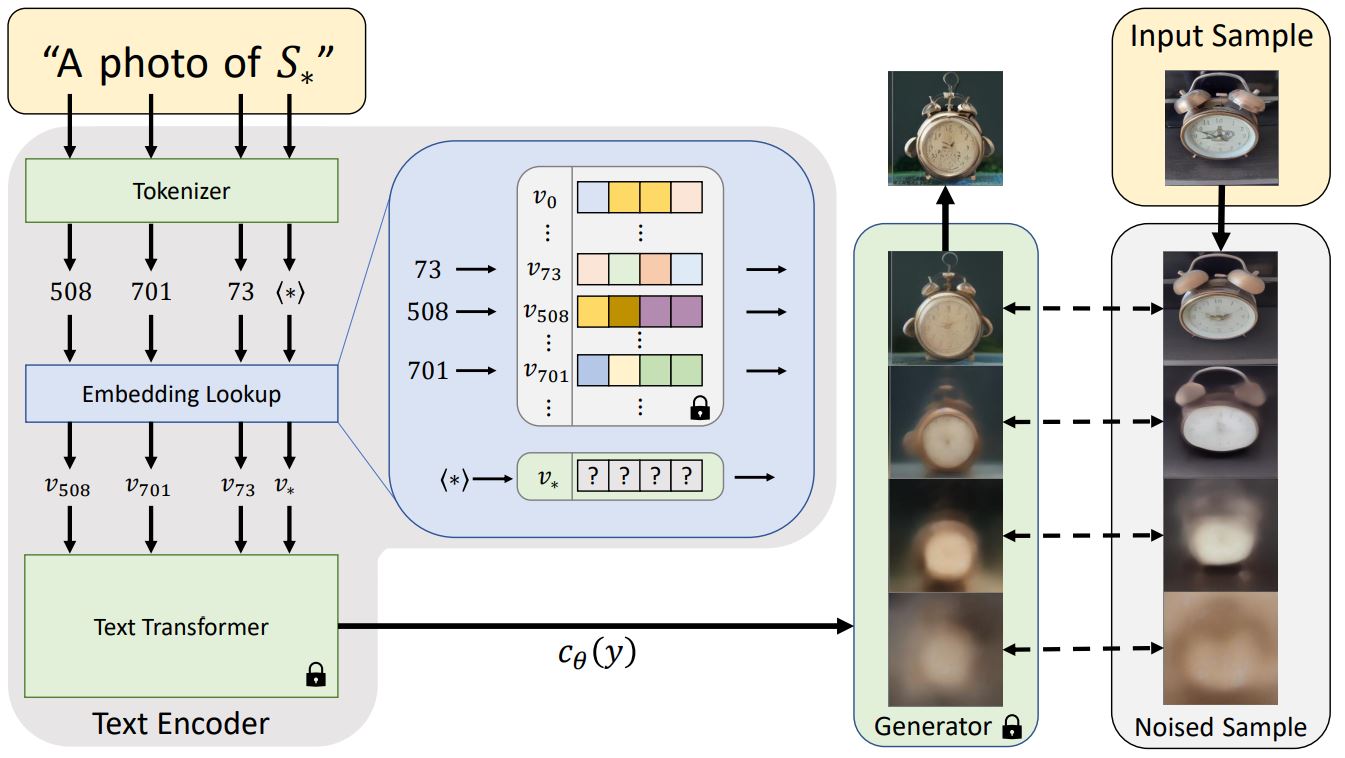

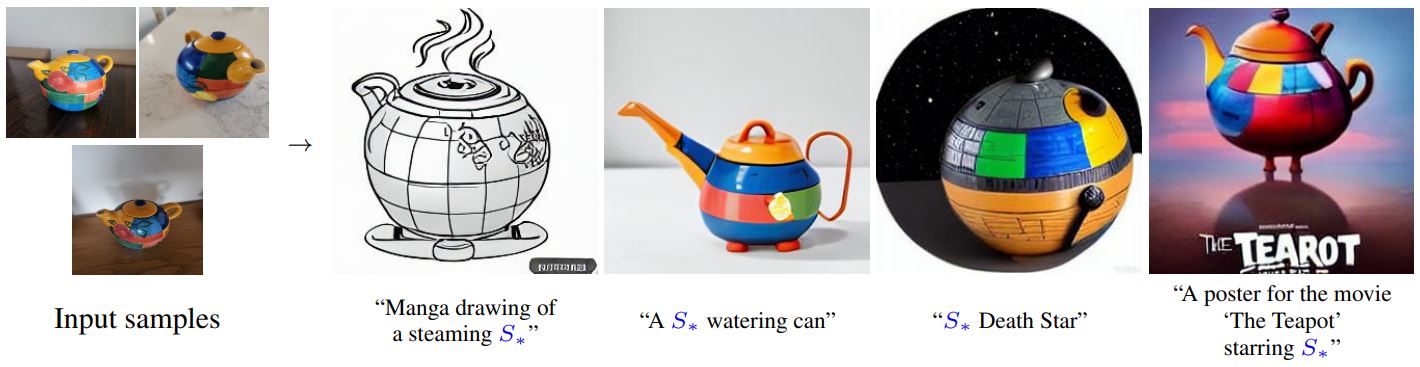

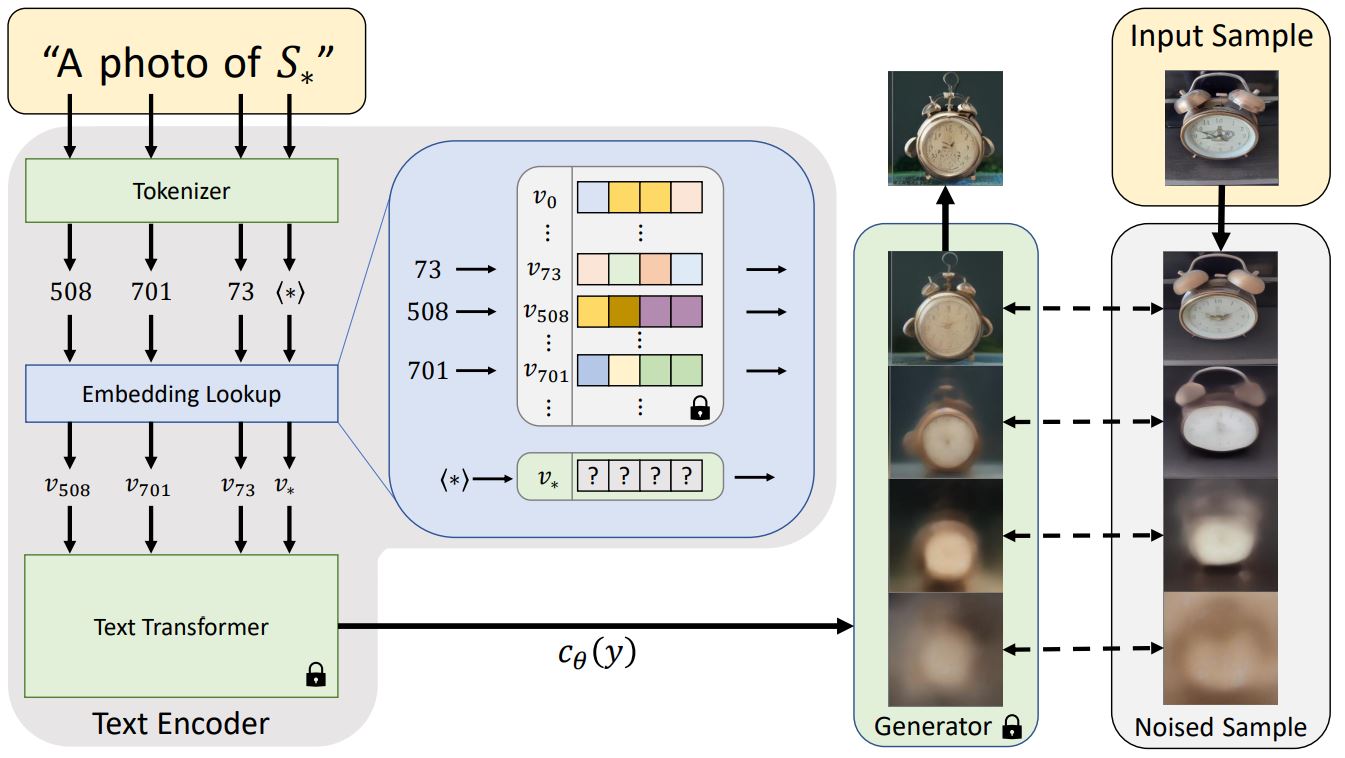

+Textual Inversion is a technique for capturing novel concepts from a small number of example images in a way that can later be used to control text-to-image pipelines. It does so by learning new 'words' in the embedding space of the pipeline's text encoder. These special words can then be used within text prompts to achieve very fine-grained control of the resulting images.

+

+- Textual Inversion. Capture novel concepts from a small set of sample images, and associate them with new "words" in the embedding space of the text encoder. Please refer to [our training examples](https://github.com/huggingface/diffusers/tree/main/examples/textual_inversion) or [documentation](https://huggingface.co/docs/diffusers/training/text_inversion) to try it for yourself.

+

+- Dreambooth. Another technique to capture new concepts in Stable Diffusion. This method fine-tunes the UNet (and, optionally, also the text encoder) of the pipeline to achieve impressive results. Please refer to [our training example](https://github.com/huggingface/diffusers/tree/main/examples/dreambooth) and [training report](https://huggingface.co/blog/dreambooth) for additional details and training recommendations.

+

+- Full Stable Diffusion fine-tuning. If you have a more sizable dataset with a specific look or style, you can fine-tune Stable Diffusion so that it outputs images following those examples. This was the approach taken to create [a Pokémon Stable Diffusion model](https://huggingface.co/justinpinkney/pokemon-stable-diffusion) (by Justin Pinkney / Lambda Labs), [a Japanese-specific version of Stable Diffusion](https://huggingface.co/spaces/rinna/japanese-stable-diffusion) (by [Rinna Co.](https://github.com/rinnakk/japanese-stable-diffusion/)), and others. You can start at [our text-to-image fine-tuning example](https://github.com/huggingface/diffusers/tree/main/examples/text_to_image) and go from there.

+

+

+## Stable Diffusion Community Pipelines

+

+The release of Stable Diffusion as an open source model has fostered a lot of interesting ideas and experimentation.

+Our [Community Examples folder](https://github.com/huggingface/diffusers/tree/main/examples/community) contains many ideas worth exploring, like interpolating to create animated videos, using CLIP Guidance for additional prompt fidelity, term weighting, and much more! [Take a look](https://huggingface.co/docs/diffusers/using-diffusers/custom_pipeline_overview) and [contribute your own](https://huggingface.co/docs/diffusers/using-diffusers/contribute_pipeline).

+

+## Other Examples

+

+There are many ways to try running Diffusers! Here we outline code-focused tools (primarily using `DiffusionPipeline`s and Google Colab) and interactive web-tools.

+

+### Running Code

+

+If you want to run the code yourself 💻, you can try out:

+- [Text-to-Image Latent Diffusion](https://huggingface.co/CompVis/ldm-text2im-large-256)

+```python

+# !pip install diffusers["torch"] transformers

+from diffusers import DiffusionPipeline

+

+device = "cuda"

+model_id = "CompVis/ldm-text2im-large-256"

+

+# load model and scheduler

+ldm = DiffusionPipeline.from_pretrained(model_id)

+ldm = ldm.to(device)

+

+# run pipeline in inference (sample random noise and denoise)

+prompt = "A painting of a squirrel eating a burger"

+image = ldm([prompt], num_inference_steps=50, eta=0.3, guidance_scale=6).images[0]

+

+# save image

+image.save("squirrel.png")

+```

+- [Unconditional Diffusion with discrete scheduler](https://huggingface.co/google/ddpm-celebahq-256)

+```python

+# !pip install diffusers["torch"]

+from diffusers import DDPMPipeline, DDIMPipeline, PNDMPipeline

+

+model_id = "google/ddpm-celebahq-256"

+device = "cuda"

+

+# load model and scheduler

+ddpm = DDPMPipeline.from_pretrained(model_id) # you can replace DDPMPipeline with DDIMPipeline or PNDMPipeline for faster inference

+ddpm.to(device)

+

+# run pipeline in inference (sample random noise and denoise)

+image = ddpm().images[0]

+

+# save image

+image.save("ddpm_generated_image.png")

+```

+- [Unconditional Latent Diffusion](https://huggingface.co/CompVis/ldm-celebahq-256)

+- [Unconditional Diffusion with continuous scheduler](https://huggingface.co/google/ncsnpp-ffhq-1024)

+

+**Other Image Notebooks**:

+* [image-to-image generation with Stable Diffusion](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb)

+* [tweak images via repeated Stable Diffusion seeds](https://colab.research.google.com/github/pcuenca/diffusers-examples/blob/main/notebooks/stable-diffusion-seeds.ipynb)

+

+**Diffusers for Other Modalities**:

+* [Molecule conformation generation](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/geodiff_molecule_conformation.ipynb)

+* [Model-based reinforcement learning](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/reinforcement_learning_with_diffusers.ipynb)

+

+### Web Demos

+If you just want to play around with some web demos, you can try out the following 🚀 Spaces:

+| Model | Hugging Face Spaces |

+|------------------------------------|---------------------|

+| Text-to-Image Latent Diffusion | [CompVis/text2img-latent-diffusion](https://huggingface.co/spaces/CompVis/text2img-latent-diffusion) |

+| Faces generator | [CompVis/celeba-latent-diffusion](https://huggingface.co/spaces/CompVis/celeba-latent-diffusion) |

+| DDPM with different schedulers | [fusing/celeba-diffusion](https://huggingface.co/spaces/fusing/celeba-diffusion) |

+| Conditional generation from sketch | [huggingface/diffuse-the-rest](https://huggingface.co/spaces/huggingface/diffuse-the-rest) |

+| Composable diffusion | [Shuang59/Composable-Diffusion](https://huggingface.co/spaces/Shuang59/Composable-Diffusion) |

+

+## Definitions

+

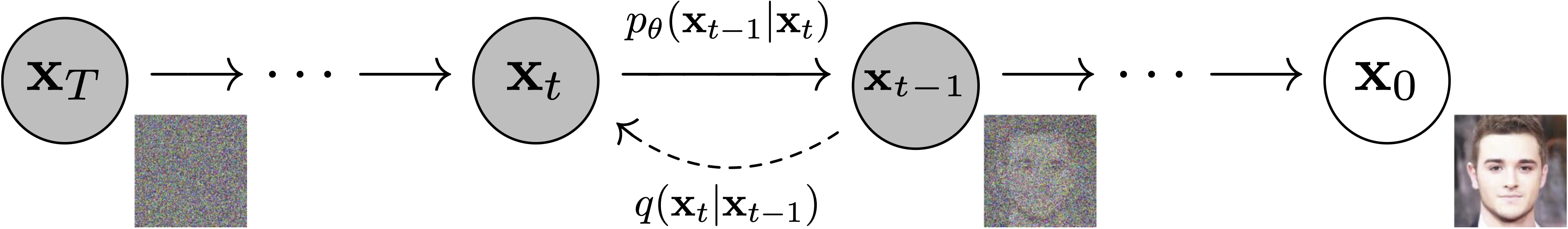

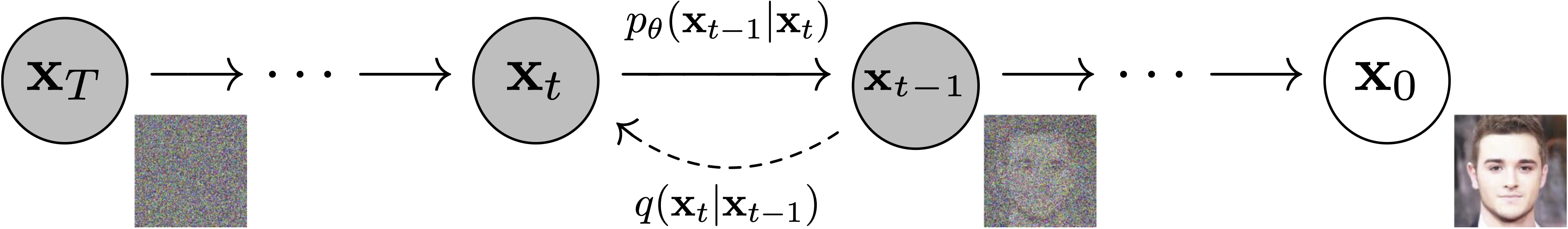

+**Models**: Neural network that models $p_\theta(\mathbf{x}_{t-1}|\mathbf{x}_t)$ (see image below) and is trained end-to-end to *denoise* a noisy input to an image.

+*Examples*: UNet, Conditioned UNet, 3D UNet, Transformer UNet

+

+

. We discuss the hottest trends about diffusion models, help each other with contributions, personal projects or

+just hang out ☕.

+

+## Quickstart

+

+In order to get started, we recommend taking a look at two notebooks:

+

+- The [Getting started with Diffusers](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb) notebook, which showcases an end-to-end example of usage for diffusion models, schedulers and pipelines.

+ Take a look at this notebook to learn how to use the pipeline abstraction, which takes care of everything (model, scheduler, noise handling) for you, and also to understand each independent building block in the library.

+- The [Training a diffusers model](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/training_example.ipynb) notebook, which summarizes diffusion model training methods. This notebook takes a step-by-step approach to training your

+ diffusion models on an image dataset, with explanatory graphics.

+

+## Stable Diffusion is fully compatible with `diffusers`!

+

+Stable Diffusion is a text-to-image latent diffusion model created by the researchers and engineers from [CompVis](https://github.com/CompVis), [Stability AI](https://stability.ai/), [LAION](https://laion.ai/) and [RunwayML](https://runwayml.com/). It's trained on 512x512 images from a subset of the [LAION-5B](https://laion.ai/blog/laion-5b/) database. This model uses a frozen CLIP ViT-L/14 text encoder to condition the model on text prompts. With its 860M UNet and 123M text encoder, the model is relatively lightweight and runs on a GPU with at least 4GB VRAM.

+See the [model card](https://huggingface.co/CompVis/stable-diffusion) for more information.

+

+

+### Text-to-Image generation with Stable Diffusion

+

+First, let's install the required packages:

+

+```bash

+pip install --upgrade diffusers transformers accelerate

+```

+

+We recommend using the model in [half-precision (`fp16`)](https://pytorch.org/blog/accelerating-training-on-nvidia-gpus-with-pytorch-automatic-mixed-precision/), as it almost always gives the same results as full

+precision while being roughly twice as fast and requiring half the amount of GPU RAM.

+

+```python

+import torch

+from diffusers import StableDiffusionPipeline

+

+pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16)

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+```
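As a rough back-of-the-envelope check of the memory claim, the sketch below estimates how much memory the weights alone need at 4 bytes (`float32`) and 2 bytes (`float16`) per parameter, using the 860M UNet and 123M text encoder sizes quoted above. The helper name `weight_memory_gib` is hypothetical, and the estimate deliberately ignores the VAE, activations and framework overhead, so real usage will be higher.

```python
# Parameter counts quoted in the model description above.
UNET_PARAMS = 860_000_000
TEXT_ENCODER_PARAMS = 123_000_000

# Storage cost of one parameter for each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Return the memory needed to store `num_params` weights, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


total_params = UNET_PARAMS + TEXT_ENCODER_PARAMS
for dtype in ("float32", "float16"):
    print(f"{dtype}: {weight_memory_gib(total_params, dtype):.2f} GiB")
```

Under these assumptions the weights take roughly 3.7 GiB in full precision and half of that in `fp16`, which is consistent with the "half the amount of GPU RAM" rule of thumb.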

+

+#### Running the model locally

+

+You can also simply download the model folder and pass the path to the local folder to the `StableDiffusionPipeline`.

+

+```bash

+git lfs install

+git clone https://huggingface.co/runwayml/stable-diffusion-v1-5

+```

+

+Assuming the folder is stored locally under `./stable-diffusion-v1-5`, you can run Stable Diffusion

+as follows:

+

+```python

+pipe = StableDiffusionPipeline.from_pretrained("./stable-diffusion-v1-5")

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+```

+

+If you are limited by GPU memory, you might want to consider chunking the attention computation in addition

+to using `fp16`.

+The following snippet should use less than 4GB of VRAM.

+

+```python

+pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16)

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+pipe.enable_attention_slicing()

+image = pipe(prompt).images[0]

+```
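To illustrate why chunking the attention computation saves memory, here is a minimal pure-Python sketch. It is not the `diffusers` implementation: the function names are hypothetical, and it slices over query rows for simplicity, whereas the library may slice along a different dimension. The point is only that each slice builds a smaller score matrix while the final output is unchanged.

```python
import math


def softmax(row):
    """Numerically stable softmax over one row of attention scores."""
    peak = max(row)
    exps = [math.exp(value - peak) for value in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention that builds the full score matrix at once."""
    scale = 1.0 / math.sqrt(len(queries[0]))
    # This matrix has len(queries) x len(keys) entries: the memory bottleneck.
    scores = [[scale * sum(a * b for a, b in zip(q, k)) for k in keys] for q in queries]
    weights = [softmax(row) for row in scores]
    dim = len(values[0])
    return [[sum(w * v[d] for w, v in zip(row, values)) for d in range(dim)] for row in weights]


def sliced_attention(queries, keys, values, slice_size):
    """Same computation, but only `slice_size` query rows are scored at a time."""
    output = []
    for start in range(0, len(queries), slice_size):
        output.extend(attention(queries[start:start + slice_size], keys, values))
    return output


queries = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

full = attention(queries, keys, values)
sliced = sliced_attention(queries, keys, values, slice_size=2)
assert full == sliced  # slicing changes peak memory, not the result
```

The trade-off is a few more sequential steps in exchange for a lower peak memory footprint.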

+

+If you wish to use a different scheduler (e.g., DDIM, LMS, PNDM/PLMS), you can create it from the

+current scheduler's configuration and assign it to `pipe.scheduler` on an already loaded pipeline.

+

+```python

+from diffusers import LMSDiscreteScheduler

+

+pipe.scheduler = LMSDiscreteScheduler.from_config(pipe.scheduler.config)

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+

+image.save("astronaut_rides_horse.png")

+```

+

+If you want to run Stable Diffusion on CPU or you want to have maximum precision on GPU,

+please run the model in the default *full-precision* setting:

+

+```python

+from diffusers import StableDiffusionPipeline

+

+pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5")

+

+# comment out the following line if you run on CPU

+pipe = pipe.to("cuda")

+

+prompt = "a photo of an astronaut riding a horse on mars"

+image = pipe(prompt).images[0]

+

+image.save("astronaut_rides_horse.png")

+```

+

+### JAX/Flax

+

+Diffusers offers a JAX / Flax implementation of Stable Diffusion for very fast inference. JAX shines especially on TPU hardware because each TPU server has 8 accelerators working in parallel, but it runs great on GPUs too.

+

+Running the pipeline with the default PNDMScheduler:

+

+```python

+import jax

+import numpy as np

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+

+from diffusers import FlaxStableDiffusionPipeline

+

+pipeline, params = FlaxStableDiffusionPipeline.from_pretrained(

+ "runwayml/stable-diffusion-v1-5", revision="flax", dtype=jax.numpy.bfloat16

+)

+

+prompt = "a photo of an astronaut riding a horse on mars"

+

+prng_seed = jax.random.PRNGKey(0)

+num_inference_steps = 50

+

+num_samples = jax.device_count()

+prompt = num_samples * [prompt]

+prompt_ids = pipeline.prepare_inputs(prompt)

+

+# shard inputs and rng

+params = replicate(params)

+prng_seed = jax.random.split(prng_seed, jax.device_count())

+prompt_ids = shard(prompt_ids)

+

+images = pipeline(prompt_ids, params, prng_seed, num_inference_steps, jit=True).images

+images = pipeline.numpy_to_pil(np.asarray(images.reshape((num_samples,) + images.shape[-3:])))

+```
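The `shard` call and the final `reshape` in the example above are two halves of the same idea: the batch is split into one group per device before the parallel call and flattened back afterwards. The pure-Python sketch below mimics that on plain lists. `shard_batch` and `unshard_batch` are hypothetical helpers written for illustration only; they are stand-ins for the array reshapes, not part of Flax or `diffusers`.

```python
def shard_batch(batch, num_devices):
    """Split a flat batch into `num_devices` equally sized per-device groups."""
    if len(batch) % num_devices != 0:
        raise ValueError("batch size must be divisible by the number of devices")
    per_device = len(batch) // num_devices
    return [batch[i * per_device:(i + 1) * per_device] for i in range(num_devices)]


def unshard_batch(sharded):
    """Flatten per-device groups back into a single batch, preserving order."""
    return [item for device_batch in sharded for item in device_batch]


# One prompt per device, as in the example above (8 devices assumed here).
prompts = ["a photo of an astronaut riding a horse on mars"] * 8
sharded = shard_batch(prompts, num_devices=8)
print(len(sharded), len(sharded[0]))

# After the parallel call, results are flattened back to one entry per sample.
assert unshard_batch(sharded) == prompts
```

With one prompt per device, every device group holds exactly one sample, which is why the example sets `num_samples = jax.device_count()`.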

+

+**Note**:

+If you are limited by TPU memory, please make sure to load the `FlaxStableDiffusionPipeline` in `bfloat16` precision instead of the default `float32` precision, as done above. You can do so by telling diffusers to load the weights from the `bf16` branch.

+

+```python

+import jax

+import numpy as np

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+

+from diffusers import FlaxStableDiffusionPipeline

+

+pipeline, params = FlaxStableDiffusionPipeline.from_pretrained(

+ "runwayml/stable-diffusion-v1-5", revision="bf16", dtype=jax.numpy.bfloat16

+)

+

+prompt = "a photo of an astronaut riding a horse on mars"

+

+prng_seed = jax.random.PRNGKey(0)

+num_inference_steps = 50

+

+num_samples = jax.device_count()

+prompt = num_samples * [prompt]

+prompt_ids = pipeline.prepare_inputs(prompt)

+

+# shard inputs and rng

+params = replicate(params)

+prng_seed = jax.random.split(prng_seed, jax.device_count())

+prompt_ids = shard(prompt_ids)

+

+images = pipeline(prompt_ids, params, prng_seed, num_inference_steps, jit=True).images

+images = pipeline.numpy_to_pil(np.asarray(images.reshape((num_samples,) + images.shape[-3:])))

+```

+

+Diffusers also has an Image-to-Image generation pipeline with Flax/JAX:

+```python

+import jax

+import numpy as np

+import jax.numpy as jnp

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+import requests

+from io import BytesIO

+from PIL import Image

+from diffusers import FlaxStableDiffusionImg2ImgPipeline

+

+def create_key(seed=0):

+    return jax.random.PRNGKey(seed)

+

+rng = create_key(0)

+

+url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

+response = requests.get(url)

+init_img = Image.open(BytesIO(response.content)).convert("RGB")

+init_img = init_img.resize((768, 512))

+

+prompts = "A fantasy landscape, trending on artstation"

+

+pipeline, params = FlaxStableDiffusionImg2ImgPipeline.from_pretrained(

+ "CompVis/stable-diffusion-v1-4", revision="flax",

+ dtype=jnp.bfloat16,

+)

+

+num_samples = jax.device_count()

+rng = jax.random.split(rng, jax.device_count())

+prompt_ids, processed_image = pipeline.prepare_inputs(prompt=[prompts]*num_samples, image = [init_img]*num_samples)

+p_params = replicate(params)

+prompt_ids = shard(prompt_ids)

+processed_image = shard(processed_image)

+

+output = pipeline(

+ prompt_ids=prompt_ids,

+ image=processed_image,

+ params=p_params,

+ prng_seed=rng,

+ strength=0.75,

+ num_inference_steps=50,

+ jit=True,

+ height=512,

+ width=768).images

+

+output_images = pipeline.numpy_to_pil(np.asarray(output.reshape((num_samples,) + output.shape[-3:])))

+```

+

+Diffusers also has a text-guided inpainting pipeline with Flax/JAX:

+

+```python

+import jax

+import numpy as np

+from flax.jax_utils import replicate

+from flax.training.common_utils import shard

+import PIL

+import requests

+from io import BytesIO

+

+

+from diffusers import FlaxStableDiffusionInpaintPipeline

+

+def download_image(url):

+    response = requests.get(url)

+    return PIL.Image.open(BytesIO(response.content)).convert("RGB")

+

+img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

+mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

+

+init_image = download_image(img_url).resize((512, 512))

+mask_image = download_image(mask_url).resize((512, 512))

+

+pipeline, params = FlaxStableDiffusionInpaintPipeline.from_pretrained("xvjiarui/stable-diffusion-2-inpainting")

+

+prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

+prng_seed = jax.random.PRNGKey(0)

+num_inference_steps = 50

+

+num_samples = jax.device_count()

+prompt = num_samples * [prompt]

+init_image = num_samples * [init_image]

+mask_image = num_samples * [mask_image]

+prompt_ids, processed_masked_images, processed_masks = pipeline.prepare_inputs(prompt, init_image, mask_image)

+

+

+# shard inputs and rng

+params = replicate(params)

+prng_seed = jax.random.split(prng_seed, jax.device_count())

+prompt_ids = shard(prompt_ids)

+processed_masked_images = shard(processed_masked_images)

+processed_masks = shard(processed_masks)

+

+images = pipeline(prompt_ids, processed_masks, processed_masked_images, params, prng_seed, num_inference_steps, jit=True).images

+images = pipeline.numpy_to_pil(np.asarray(images.reshape((num_samples,) + images.shape[-3:])))

+```

+

+### Image-to-Image text-guided generation with Stable Diffusion

+

+The `StableDiffusionImg2ImgPipeline` lets you pass a text prompt and an initial image to condition the generation of new images.

+

+```python

+import requests

+import torch

+from PIL import Image

+from io import BytesIO

+

+from diffusers import StableDiffusionImg2ImgPipeline

+

+# load the pipeline

+device = "cuda"

+model_id_or_path = "runwayml/stable-diffusion-v1-5"

+pipe = StableDiffusionImg2ImgPipeline.from_pretrained(model_id_or_path, torch_dtype=torch.float16)

+

+# or download via git clone https://huggingface.co/runwayml/stable-diffusion-v1-5

+# and pass `model_id_or_path="./stable-diffusion-v1-5"`.

+pipe = pipe.to(device)

+

+# let's download an initial image

+url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

+

+response = requests.get(url)

+init_image = Image.open(BytesIO(response.content)).convert("RGB")

+init_image = init_image.resize((768, 512))

+

+prompt = "A fantasy landscape, trending on artstation"

+

+images = pipe(prompt=prompt, image=init_image, strength=0.75, guidance_scale=7.5).images

+

+images[0].save("fantasy_landscape.png")

+```

+You can also run this example on [Colab](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb).

+
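The `strength` argument in the example above controls how far the initial image is noised before denoising starts. The sketch below shows one way to reason about it, assuming the pipeline runs roughly `int(num_inference_steps * strength)` denoising steps and skips the rest. That mapping is an assumption about the pipeline internals and may differ between versions; `img2img_steps` is a hypothetical helper, not a `diffusers` function.

```python
from typing import Tuple


def img2img_steps(num_inference_steps: int, strength: float) -> Tuple[int, int]:
    """Return (skipped_steps, denoising_steps) for an image-to-image run."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    # Assumed mapping: a higher strength means more noise and more denoising steps.
    denoising_steps = min(int(num_inference_steps * strength), num_inference_steps)
    skipped_steps = num_inference_steps - denoising_steps
    return skipped_steps, denoising_steps


for strength in (0.25, 0.75, 1.0):
    skipped, run = img2img_steps(50, strength)
    print(f"strength={strength}: skip {skipped} steps, run {run} steps")
```

Under this assumption, `strength=0.75` with 50 steps runs 37 denoising steps, so the output keeps the rough composition of the sketch while leaving plenty of room for the prompt to reshape it. A value of `1.0` ignores the initial image almost entirely.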

+### In-painting using Stable Diffusion

+

+The `StableDiffusionInpaintPipeline` lets you edit specific parts of an image by providing a mask and a text prompt.

+

+```python

+import PIL

+import requests

+import torch

+from io import BytesIO

+

+from diffusers import StableDiffusionInpaintPipeline

+

+def download_image(url):

+    response = requests.get(url)

+    return PIL.Image.open(BytesIO(response.content)).convert("RGB")

+

+img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

+mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

+

+init_image = download_image(img_url).resize((512, 512))

+mask_image = download_image(mask_url).resize((512, 512))

+

+pipe = StableDiffusionInpaintPipeline.from_pretrained("runwayml/stable-diffusion-inpainting", torch_dtype=torch.float16)

+pipe = pipe.to("cuda")

+

+prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

+image = pipe(prompt=prompt, image=init_image, mask_image=mask_image).images[0]

+```

+

+### Tweak prompts reusing seeds and latents

+

+You can generate your own latents to reproduce results, or tweak your prompt on a specific result you liked.

+Please have a look at [Reusing seeds for deterministic generation](https://huggingface.co/docs/diffusers/main/en/using-diffusers/reusing_seeds).

+

+## Fine-Tuning Stable Diffusion

+

+Fine-tuning techniques make it possible to adapt Stable Diffusion to your own dataset, or add new subjects to it. These are some of the techniques supported in `diffusers`:

+

+Textual Inversion is a technique for capturing novel concepts from a small number of example images in a way that can later be used to control text-to-image pipelines. It does so by learning new 'words' in the embedding space of the pipeline's text encoder. These special words can then be used within text prompts to achieve very fine-grained control of the resulting images.

+

+- Textual Inversion. Capture novel concepts from a small set of sample images, and associate them with new "words" in the embedding space of the text encoder. Please refer to [our training examples](https://github.com/huggingface/diffusers/tree/main/examples/textual_inversion) or [documentation](https://huggingface.co/docs/diffusers/training/text_inversion) to try it for yourself.

+

+- Dreambooth. Another technique to capture new concepts in Stable Diffusion. This method fine-tunes the UNet (and, optionally, also the text encoder) of the pipeline to achieve impressive results. Please refer to [our training example](https://github.com/huggingface/diffusers/tree/main/examples/dreambooth) and [training report](https://huggingface.co/blog/dreambooth) for additional details and training recommendations.

+

+- Full Stable Diffusion fine-tuning. If you have a more sizable dataset with a specific look or style, you can fine-tune Stable Diffusion so that it outputs images following those examples. This was the approach taken to create [a Pokémon Stable Diffusion model](https://huggingface.co/justinpinkney/pokemon-stable-diffusion) (by Justin Pinkney / Lambda Labs), [a Japanese-specific version of Stable Diffusion](https://huggingface.co/spaces/rinna/japanese-stable-diffusion) (by [Rinna Co.](https://github.com/rinnakk/japanese-stable-diffusion/)), and others. You can start at [our text-to-image fine-tuning example](https://github.com/huggingface/diffusers/tree/main/examples/text_to_image) and go from there.

+

+

+## Stable Diffusion Community Pipelines

+

+The release of Stable Diffusion as an open source model has fostered a lot of interesting ideas and experimentation.

+Our [Community Examples folder](https://github.com/huggingface/diffusers/tree/main/examples/community) contains many ideas worth exploring, like interpolating to create animated videos, using CLIP Guidance for additional prompt fidelity, term weighting, and much more! [Take a look](https://huggingface.co/docs/diffusers/using-diffusers/custom_pipeline_overview) and [contribute your own](https://huggingface.co/docs/diffusers/using-diffusers/contribute_pipeline).

+

+## Other Examples

+

+There are many ways to try running Diffusers! Here we outline code-focused tools (primarily using `DiffusionPipeline`s and Google Colab) and interactive web-tools.

+

+### Running Code

+

+If you want to run the code yourself 💻, you can try out:

+- [Text-to-Image Latent Diffusion](https://huggingface.co/CompVis/ldm-text2im-large-256)

+```python

+# !pip install diffusers["torch"] transformers

+from diffusers import DiffusionPipeline

+

+device = "cuda"

+model_id = "CompVis/ldm-text2im-large-256"

+

+# load model and scheduler

+ldm = DiffusionPipeline.from_pretrained(model_id)

+ldm = ldm.to(device)

+

+# run pipeline in inference (sample random noise and denoise)

+prompt = "A painting of a squirrel eating a burger"

+image = ldm([prompt], num_inference_steps=50, eta=0.3, guidance_scale=6).images[0]

+

+# save image

+image.save("squirrel.png")

+```

+- [Unconditional Diffusion with discrete scheduler](https://huggingface.co/google/ddpm-celebahq-256)

+```python

+# !pip install diffusers["torch"]

+from diffusers import DDPMPipeline, DDIMPipeline, PNDMPipeline

+

+model_id = "google/ddpm-celebahq-256"

+device = "cuda"

+

+# load model and scheduler

+ddpm = DDPMPipeline.from_pretrained(model_id) # you can replace DDPMPipeline with DDIMPipeline or PNDMPipeline for faster inference

+ddpm.to(device)

+

+# run pipeline in inference (sample random noise and denoise)

+image = ddpm().images[0]

+

+# save image

+image.save("ddpm_generated_image.png")

+```

+- [Unconditional Latent Diffusion](https://huggingface.co/CompVis/ldm-celebahq-256)

+- [Unconditional Diffusion with continuous scheduler](https://huggingface.co/google/ncsnpp-ffhq-1024)

+

+**Other Image Notebooks**:

+* [image-to-image generation with Stable Diffusion](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/image_2_image_using_diffusers.ipynb)

+* [tweak images via repeated Stable Diffusion seeds](https://colab.research.google.com/github/pcuenca/diffusers-examples/blob/main/notebooks/stable-diffusion-seeds.ipynb)

+

+**Diffusers for Other Modalities**:

+* [Molecule conformation generation](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/geodiff_molecule_conformation.ipynb)

+* [Model-based reinforcement learning](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/reinforcement_learning_with_diffusers.ipynb)

+

+### Web Demos

+If you just want to play around with some web demos, you can try out the following 🚀 Spaces:

+| Model | Hugging Face Spaces |

+|------------------------------------|---------------------|

+| Text-to-Image Latent Diffusion | [CompVis/text2img-latent-diffusion](https://huggingface.co/spaces/CompVis/text2img-latent-diffusion) |

+| Faces generator | [CompVis/celeba-latent-diffusion](https://huggingface.co/spaces/CompVis/celeba-latent-diffusion) |

+| DDPM with different schedulers | [fusing/celeba-diffusion](https://huggingface.co/spaces/fusing/celeba-diffusion) |

+| Conditional generation from sketch | [huggingface/diffuse-the-rest](https://huggingface.co/spaces/huggingface/diffuse-the-rest) |

+| Composable diffusion | [Shuang59/Composable-Diffusion](https://huggingface.co/spaces/Shuang59/Composable-Diffusion) |

+

+## Definitions

+

+**Models**: Neural network that models $p_\theta(\mathbf{x}_{t-1}|\mathbf{x}_t)$ (see image below) and is trained end-to-end to *denoise* a noisy input to an image.

+*Examples*: UNet, Conditioned UNet, 3D UNet, Transformer UNet

+

+

+

+ Figure from DDPM paper (https://arxiv.org/abs/2006.11239).
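The model definition above can be written out explicitly. Following the DDPM paper cited in this section, the learned reverse step is a Gaussian whose mean is computed from the network's noise prediction $\boldsymbol{\epsilon}_\theta$. The symbols $\beta_t$, $\alpha_t = 1 - \beta_t$, $\bar{\alpha}_t = \prod_{s=1}^{t} \alpha_s$ and $\sigma_t$ are that paper's notation and are introduced here only for illustration:

$$
p_\theta(\mathbf{x}_{t-1}|\mathbf{x}_t) = \mathcal{N}\left(\mathbf{x}_{t-1};\ \boldsymbol{\mu}_\theta(\mathbf{x}_t, t),\ \sigma_t^2 \mathbf{I}\right),
\qquad
\boldsymbol{\mu}_\theta(\mathbf{x}_t, t) = \frac{1}{\sqrt{\alpha_t}}\left(\mathbf{x}_t - \frac{\beta_t}{\sqrt{1-\bar{\alpha}_t}}\,\boldsymbol{\epsilon}_\theta(\mathbf{x}_t, t)\right)
$$

In this parameterization the neural network only has to predict the noise $\boldsymbol{\epsilon}_\theta(\mathbf{x}_t, t)$; everything else in the step is fixed by the noise schedule.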

+

+

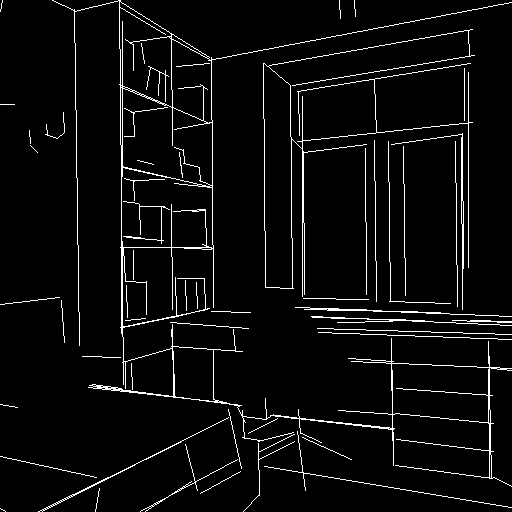

+**Schedulers**: Algorithm class for both **inference** and **training**.

+The class provides functionality to compute the previous image according to the alpha/beta schedule, as well as to predict noise for training. Also known as **Samplers**.

+*Examples*: [DDPM](https://arxiv.org/abs/2006.11239), [DDIM](https://arxiv.org/abs/2010.02502), [PNDM](https://arxiv.org/abs/2202.09778), [DEIS](https://arxiv.org/abs/2204.13902)

+

+

+

+ Sampling and training algorithms. Figure from DDPM paper (https://arxiv.org/abs/2006.11239).
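To make the alpha/beta schedule concrete, here is a minimal pure-Python sketch of the linear beta schedule and the closed-form forward noising step from the DDPM paper. The function names are hypothetical and this is not the `diffusers` scheduler API; the defaults (1000 steps, betas from 1e-4 to 0.02) are the values reported in that paper.

```python
import math
import random


def linear_beta_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances beta_1 ... beta_T."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """Running product of alpha_t = 1 - beta_t, usually written alpha_bar_t."""
    products, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        products.append(running)
    return products


def add_noise(x0, noise, alpha_bar_t):
    """Forward process: x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * noise."""
    signal_scale = math.sqrt(alpha_bar_t)
    noise_scale = math.sqrt(1.0 - alpha_bar_t)
    return [signal_scale * x + noise_scale * n for x, n in zip(x0, noise)]


betas = linear_beta_schedule()
alpha_bars = cumulative_alphas(betas)

rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]  # a toy three-pixel "image"
noise = [rng.gauss(0.0, 1.0) for _ in x0]

# Early timesteps keep most of the signal; late timesteps are almost pure noise.
for t in (0, 500, 999):
    print(t, round(alpha_bars[t], 5), add_noise(x0, noise, alpha_bars[t]))
```

During training, a model is shown `add_noise(x0, noise, alpha_bars[t])` for a random `t` and learns to recover `noise`; at inference time a scheduler steps through the same schedule in reverse.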

+

+

+

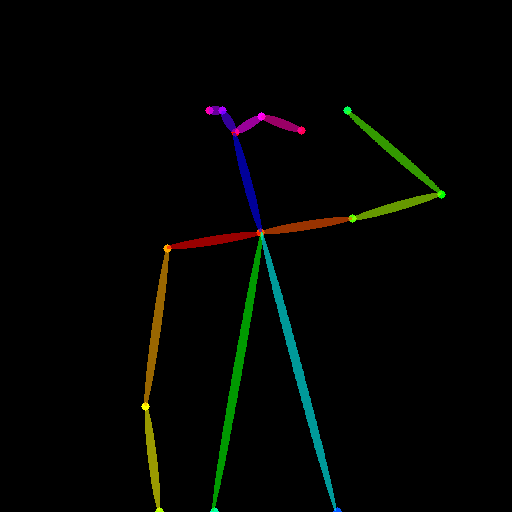

+**Diffusion Pipeline**: End-to-end pipeline that includes multiple diffusion models, possibly text encoders, and other components.

+*Examples*: Glide, Latent-Diffusion, Imagen, DALL-E 2

+

+

+

+ Figure from Imagen (https://imagen.research.google/).

+

+

+## Philosophy

+

+- Readability and clarity are preferred over highly optimized code. Strong emphasis is placed on providing readable, intuitive and elementary code design. *E.g.*, the provided [schedulers](https://github.com/huggingface/diffusers/tree/main/src/diffusers/schedulers) are separated from the provided [models](https://github.com/huggingface/diffusers/tree/main/src/diffusers/models) and provide well-commented code that can be read alongside the original paper.

+- Diffusers is **modality independent** and focuses on providing pretrained models and tools to build systems that generate **continuous outputs**, *e.g.* vision and audio.

+- Diffusion models and schedulers are provided as concise, elementary building blocks. In contrast, diffusion pipelines are a collection of end-to-end diffusion systems that can be used out-of-the-box, should stay as close as possible to their original implementation and can include components of another library, such as text-encoders. Examples for diffusion pipelines are [Glide](https://github.com/openai/glide-text2im) and [Latent Diffusion](https://github.com/CompVis/latent-diffusion).

+

+## In the works

+

+For the first release, 🤗 Diffusers focuses on text-to-image diffusion techniques. However, diffusers can be used for much more than that! Over the upcoming releases, we'll be focusing on:

+

+- Diffusers for audio

+- Diffusers for reinforcement learning (initial work happening in https://github.com/huggingface/diffusers/pull/105).

+- Diffusers for video generation

+- Diffusers for molecule generation (initial work happening in https://github.com/huggingface/diffusers/pull/54)

+

+A few pipeline components are already being worked on, namely:

+

+- BDDMPipeline for spectrogram-to-sound vocoding

+- GLIDEPipeline to support OpenAI's GLIDE model

+- Grad-TTS for text to audio generation / conditional audio generation

+

+We want diffusers to be a toolbox useful for diffusion models in general; if you find yourself limited in any way by the current API, or would like to see additional models, schedulers, or techniques, please open a [GitHub issue](https://github.com/huggingface/diffusers/issues) mentioning what you would like to see.

+

+## Credits

+

+This library concretizes previous work by many different authors and would not have been possible without their great research and implementations. We'd like to thank, in particular, the following implementations which have helped us in our development and without which the API could not have been as polished today:

+

+- @CompVis' latent diffusion models library, available [here](https://github.com/CompVis/latent-diffusion)

+- @hojonathanho's original DDPM implementation, available [here](https://github.com/hojonathanho/diffusion) as well as the extremely useful translation into PyTorch by @pesser, available [here](https://github.com/pesser/pytorch_diffusion)

+- @ermongroup's DDIM implementation, available [here](https://github.com/ermongroup/ddim).

+- @yang-song's Score-VE and Score-VP implementations, available [here](https://github.com/yang-song/score_sde_pytorch)

+

+We also want to thank @heejkoo for the very helpful overview of papers, code and resources on diffusion models, available [here](https://github.com/heejkoo/Awesome-Diffusion-Models) as well as @crowsonkb and @rromb for useful discussions and insights.

+

+## Citation

+

+```bibtex

+@misc{von-platen-etal-2022-diffusers,

+ author = {Patrick von Platen and Suraj Patil and Anton Lozhkov and Pedro Cuenca and Nathan Lambert and Kashif Rasul and Mishig Davaadorj and Thomas Wolf},

+ title = {Diffusers: State-of-the-art diffusion models},

+ year = {2022},

+ publisher = {GitHub},

+ journal = {GitHub repository},

+ howpublished = {\url{https://github.com/huggingface/diffusers}}

+}

+```

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/_typos.toml b/eval_agent/eval_tools/t2i_comp/diffusers/_typos.toml

new file mode 100644

index 0000000000000000000000000000000000000000..551099f981e7885fbda9ed28e297bace0e13407b

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/_typos.toml

@@ -0,0 +1,13 @@

+# Files for typos

+# Instruction: https://github.com/marketplace/actions/typos-action#getting-started

+

+[default.extend-identifiers]

+

+[default.extend-words]

+NIN="NIN" # NIN is used in scripts/convert_ncsnpp_original_checkpoint_to_diffusers.py

+nd="np" # nd may be np (numpy)

+parms="parms" # parms is used in scripts/convert_original_stable_diffusion_to_diffusers.py

+

+

+[files]

+extend-exclude = ["_typos.toml"]

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-flax-cpu/Dockerfile b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-flax-cpu/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..57a9c1ec742200b48f8c2f906d1152e85e60584a

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-flax-cpu/Dockerfile

@@ -0,0 +1,44 @@

+FROM ubuntu:20.04

+LABEL maintainer="Hugging Face"

+LABEL repository="diffusers"

+

+ENV DEBIAN_FRONTEND=noninteractive

+

+RUN apt update && \

+ apt install -y bash \

+ build-essential \

+ git \

+ git-lfs \

+ curl \

+ ca-certificates \

+ libsndfile1-dev \

+ python3.8 \

+ python3-pip \

+ python3.8-venv && \

+ rm -rf /var/lib/apt/lists

+

+# make sure to use venv

+RUN python3 -m venv /opt/venv

+ENV PATH="/opt/venv/bin:$PATH"

+

+# pre-install the heavy dependencies (these can later be overridden by the deps from setup.py)

+# follow the instructions here: https://cloud.google.com/tpu/docs/run-in-container#train_a_jax_model_in_a_docker_container

+RUN python3 -m pip install --no-cache-dir --upgrade pip && \

+ python3 -m pip install --upgrade --no-cache-dir \

+ clu \

+ "jax[cpu]>=0.2.16,!=0.3.2" \

+ "flax>=0.4.1" \

+ "jaxlib>=0.1.65" && \

+ python3 -m pip install --no-cache-dir \

+ accelerate \

+ datasets \

+ hf-doc-builder \

+ huggingface-hub \

+ Jinja2 \

+ librosa \

+ numpy \

+ scipy \

+ tensorboard \

+ transformers

+

+CMD ["/bin/bash"]

\ No newline at end of file

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-flax-tpu/Dockerfile b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-flax-tpu/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..2517da586d74b43c4c94a0eca4651f047345ec4d

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-flax-tpu/Dockerfile

@@ -0,0 +1,46 @@

+FROM ubuntu:20.04

+LABEL maintainer="Hugging Face"

+LABEL repository="diffusers"

+

+ENV DEBIAN_FRONTEND=noninteractive

+

+RUN apt update && \

+ apt install -y bash \

+ build-essential \

+ git \

+ git-lfs \

+ curl \

+ ca-certificates \

+ libsndfile1-dev \

+ python3.8 \

+ python3-pip \

+ python3.8-venv && \

+ rm -rf /var/lib/apt/lists

+

+# make sure to use venv

+RUN python3 -m venv /opt/venv

+ENV PATH="/opt/venv/bin:$PATH"

+

+# pre-install the heavy dependencies (these can later be overridden by the deps from setup.py)

+# follow the instructions here: https://cloud.google.com/tpu/docs/run-in-container#train_a_jax_model_in_a_docker_container

+RUN python3 -m pip install --no-cache-dir --upgrade pip && \

+ python3 -m pip install --no-cache-dir \

+ "jax[tpu]>=0.2.16,!=0.3.2" \

+ -f https://storage.googleapis.com/jax-releases/libtpu_releases.html && \

+ python3 -m pip install --upgrade --no-cache-dir \

+ clu \

+ "flax>=0.4.1" \

+ "jaxlib>=0.1.65" && \

+ python3 -m pip install --no-cache-dir \

+ accelerate \

+ datasets \

+ hf-doc-builder \

+ huggingface-hub \

+ Jinja2 \

+ librosa \

+ numpy \

+ scipy \

+ tensorboard \

+ transformers

+

+CMD ["/bin/bash"]

\ No newline at end of file

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-onnxruntime-cpu/Dockerfile b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-onnxruntime-cpu/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..75f45be87a033e9476c4038218c9c2fd2f1255a5

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-onnxruntime-cpu/Dockerfile

@@ -0,0 +1,44 @@

+FROM ubuntu:20.04

+LABEL maintainer="Hugging Face"

+LABEL repository="diffusers"

+

+ENV DEBIAN_FRONTEND=noninteractive

+

+RUN apt update && \

+ apt install -y bash \

+ build-essential \

+ git \

+ git-lfs \

+ curl \

+ ca-certificates \

+ libsndfile1-dev \

+ python3.8 \

+ python3-pip \

+ python3.8-venv && \

+ rm -rf /var/lib/apt/lists

+

+# make sure to use venv

+RUN python3 -m venv /opt/venv

+ENV PATH="/opt/venv/bin:$PATH"

+

+# pre-install the heavy dependencies (these can later be overridden by the deps from setup.py)

+RUN python3 -m pip install --no-cache-dir --upgrade pip && \

+ python3 -m pip install --no-cache-dir \

+ torch \

+ torchvision \

+ torchaudio \

+ onnxruntime \

+ --extra-index-url https://download.pytorch.org/whl/cpu && \

+ python3 -m pip install --no-cache-dir \

+ accelerate \

+ datasets \

+ hf-doc-builder \

+ huggingface-hub \

+ Jinja2 \

+ librosa \

+ numpy \

+ scipy \

+ tensorboard \

+ transformers

+

+CMD ["/bin/bash"]

\ No newline at end of file

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-onnxruntime-cuda/Dockerfile b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-onnxruntime-cuda/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..2129dbcaf68c57755485e1e54e867af05b937336

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-onnxruntime-cuda/Dockerfile

@@ -0,0 +1,44 @@

+FROM nvidia/cuda:11.6.2-cudnn8-devel-ubuntu20.04

+LABEL maintainer="Hugging Face"

+LABEL repository="diffusers"

+

+ENV DEBIAN_FRONTEND=noninteractive

+

+RUN apt update && \

+ apt install -y bash \

+ build-essential \

+ git \

+ git-lfs \

+ curl \

+ ca-certificates \

+ libsndfile1-dev \

+ python3.8 \

+ python3-pip \

+ python3.8-venv && \

+ rm -rf /var/lib/apt/lists

+

+# make sure to use venv

+RUN python3 -m venv /opt/venv

+ENV PATH="/opt/venv/bin:$PATH"

+

+# pre-install the heavy dependencies (these can later be overridden by the deps from setup.py)

+RUN python3 -m pip install --no-cache-dir --upgrade pip && \

+ python3 -m pip install --no-cache-dir \

+ torch \

+ torchvision \

+ torchaudio \

+ "onnxruntime-gpu>=1.13.1" \

+ --extra-index-url https://download.pytorch.org/whl/cu117 && \

+ python3 -m pip install --no-cache-dir \

+ accelerate \

+ datasets \

+ hf-doc-builder \

+ huggingface-hub \

+ Jinja2 \

+ librosa \

+ numpy \

+ scipy \

+ tensorboard \

+ transformers

+

+CMD ["/bin/bash"]

\ No newline at end of file

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-pytorch-cpu/Dockerfile b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-pytorch-cpu/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..a70eff4c852b21e51c576e1e43172dd8dc25e1a0

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-pytorch-cpu/Dockerfile

@@ -0,0 +1,43 @@

+FROM ubuntu:20.04

+LABEL maintainer="Hugging Face"

+LABEL repository="diffusers"

+

+ENV DEBIAN_FRONTEND=noninteractive

+

+RUN apt update && \

+ apt install -y bash \

+ build-essential \

+ git \

+ git-lfs \

+ curl \

+ ca-certificates \

+ libsndfile1-dev \

+ python3.8 \

+ python3-pip \

+ python3.8-venv && \

+ rm -rf /var/lib/apt/lists

+

+# make sure to use venv

+RUN python3 -m venv /opt/venv

+ENV PATH="/opt/venv/bin:$PATH"

+

+# pre-install the heavy dependencies (these can later be overridden by the deps from setup.py)

+RUN python3 -m pip install --no-cache-dir --upgrade pip && \

+ python3 -m pip install --no-cache-dir \

+ torch \

+ torchvision \

+ torchaudio \

+ --extra-index-url https://download.pytorch.org/whl/cpu && \

+ python3 -m pip install --no-cache-dir \

+ accelerate \

+ datasets \

+ hf-doc-builder \

+ huggingface-hub \

+ Jinja2 \

+ librosa \

+ numpy \

+ scipy \

+ tensorboard \

+ transformers

+

+CMD ["/bin/bash"]

\ No newline at end of file

diff --git a/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-pytorch-cuda/Dockerfile b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-pytorch-cuda/Dockerfile

new file mode 100644

index 0000000000000000000000000000000000000000..1c5ac3998faa4a04fa67f2a640dd5ab28a838963

--- /dev/null

+++ b/eval_agent/eval_tools/t2i_comp/diffusers/docker/diffusers-pytorch-cuda/Dockerfile

@@ -0,0 +1,43 @@

+FROM nvidia/cuda:11.7.1-cudnn8-runtime-ubuntu20.04

+LABEL maintainer="Hugging Face"

+LABEL repository="diffusers"

+

+ENV DEBIAN_FRONTEND=noninteractive

+