
+Then, clone your fork to your local machine:

+

+```shell

+git clone git@github.com:{username}/mmengine.git

+```

+

+After that, add the official repository as the upstream remote:

+

+```bash

+git remote add upstream git@github.com:open-mmlab/mmengine

+```

+

+Run `git remote -v` to check whether the remote has been added successfully. The output should look like this:

+

+```bash

+origin git@github.com:{username}/mmengine.git (fetch)

+origin git@github.com:{username}/mmengine.git (push)

+upstream git@github.com:open-mmlab/mmengine (fetch)

+upstream git@github.com:open-mmlab/mmengine (push)

+```

+

+> Here's a brief introduction to origin and upstream. When we run `git clone`, Git creates a remote named "origin" by default, which points to the repository we cloned from. "upstream" is a remote we add ourselves to point to the official repository. If you don't like the name "upstream", you can name it whatever you want. Usually, we push code to "origin". If the pushed code conflicts with the latest code in the official repository ("upstream"), we pull the latest code from "upstream", resolve the conflicts locally, and push to "origin" again. The open Pull Request will then be updated automatically.

+
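To see how `origin` and `upstream` relate without touching GitHub, here is a minimal sketch that builds everything locally. Assumptions:

- A bare repository `official.git` stands in for `open-mmlab/mmengine`.
- A bare copy `fork.git` stands in for your fork.
- `work` is your local clone.
- Git is 2.28 or newer, which provides `--initial-branch`.

All of these names are placeholders for this example only.

```shell
# Throwaway sandbox; every path below is a local stand-in for a GitHub URL.
demo=$(mktemp -d)
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

# Placeholder "official" repository with one commit, published as a bare repo.
git init -q --initial-branch=master "$demo/seed"
echo "v1" > "$demo/seed/README.md"
git -C "$demo/seed" add README.md
git -C "$demo/seed" commit -q -m "initial commit"
git clone -q --bare "$demo/seed" "$demo/official.git"

# Placeholder "fork": a separate bare copy of the official repository.
git clone -q --bare "$demo/official.git" "$demo/fork.git"

# Cloning the fork creates the "origin" remote automatically.
git clone -q "$demo/fork.git" "$demo/work"

# "upstream" is added by hand and points at the official repository.
git -C "$demo/work" remote add upstream "$demo/official.git"
git -C "$demo/work" remote -v
```

In the real workflow only the URLs differ. If you prefer another name, `git remote rename upstream open-mmlab` renames the remote.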

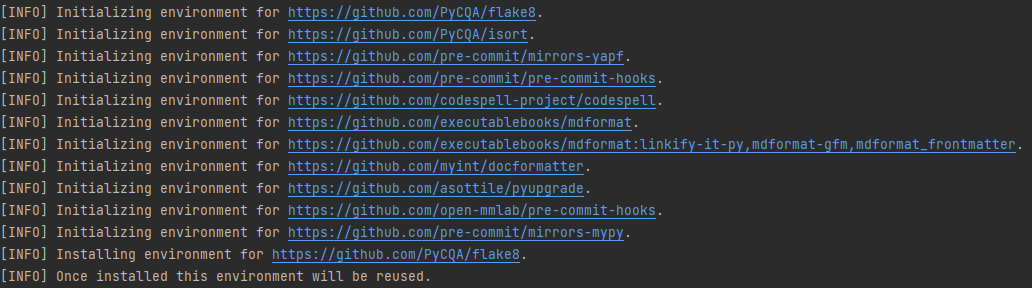

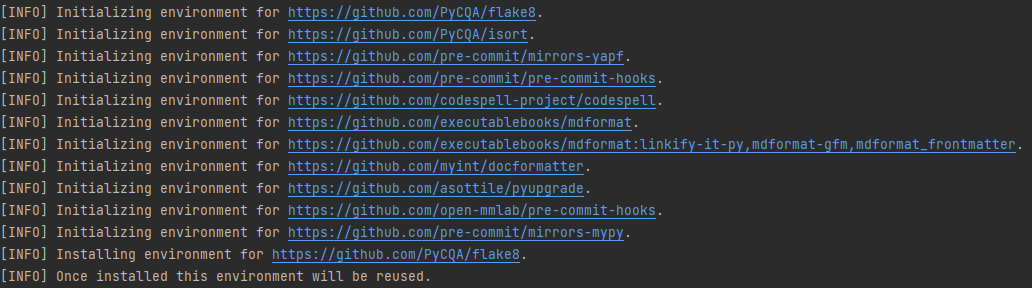

+#### 2. Configure pre-commit

+

+You should configure [pre-commit](https://pre-commit.com/#intro) in your local development environment to make sure your code style matches that of OpenMMLab. **Note**: Run the following commands in the mmengine directory.

+

+```shell

+pip install -U pre-commit

+pre-commit install

+```

+

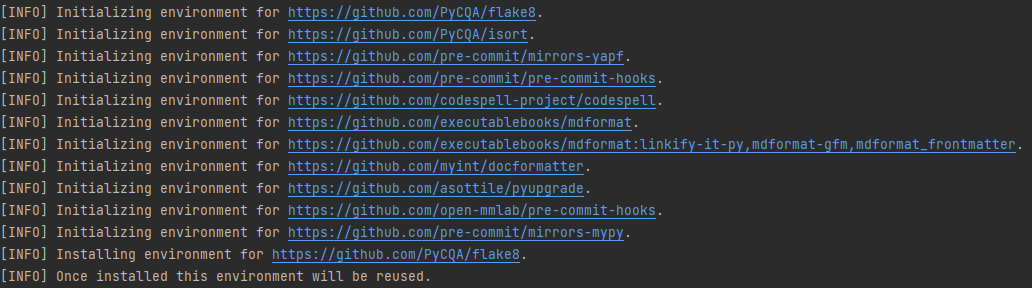

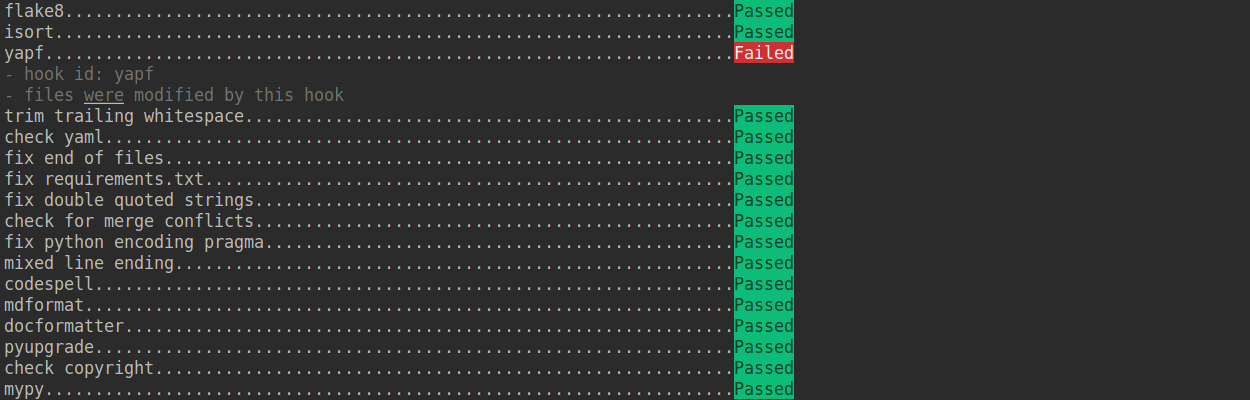

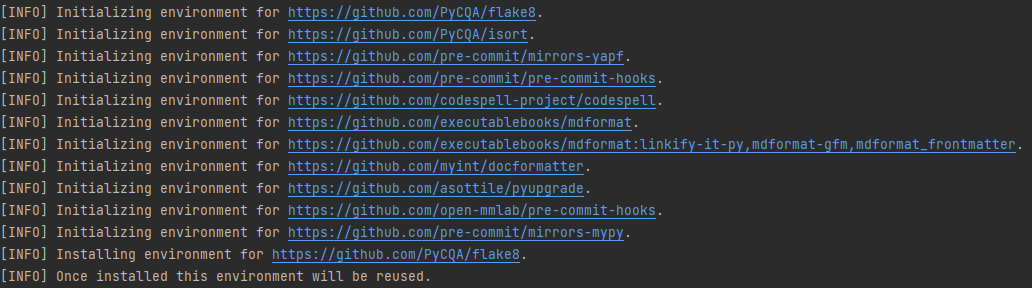

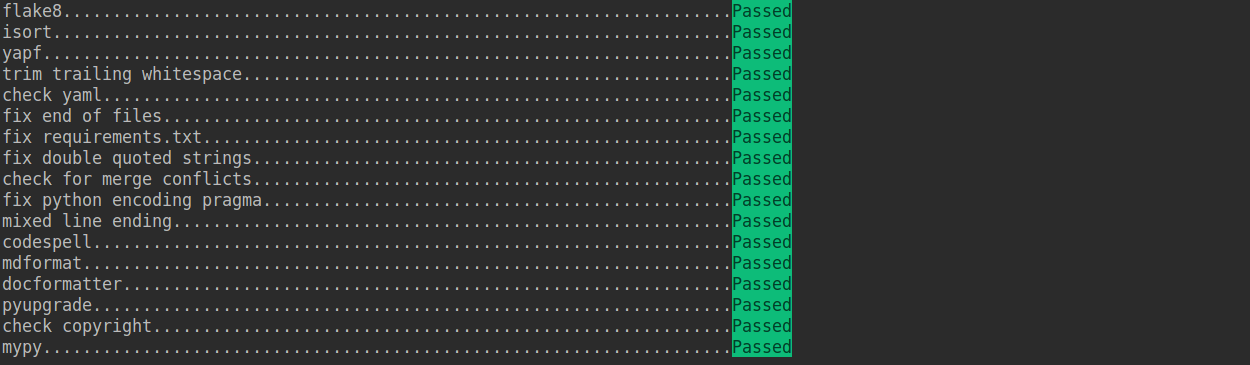

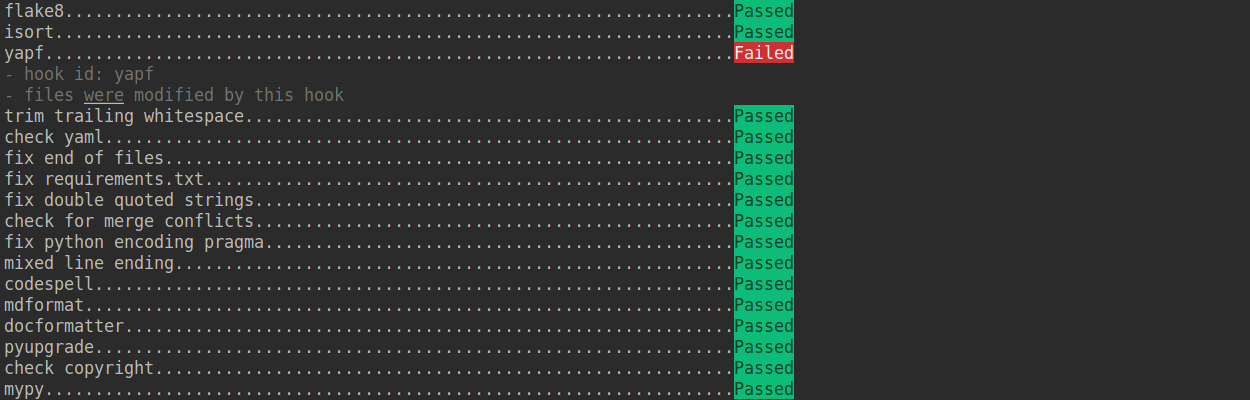

+Check that pre-commit is configured successfully, and install the hooks defined in `.pre-commit-config.yaml`.

+

+```shell

+pre-commit run --all-files

+```

+


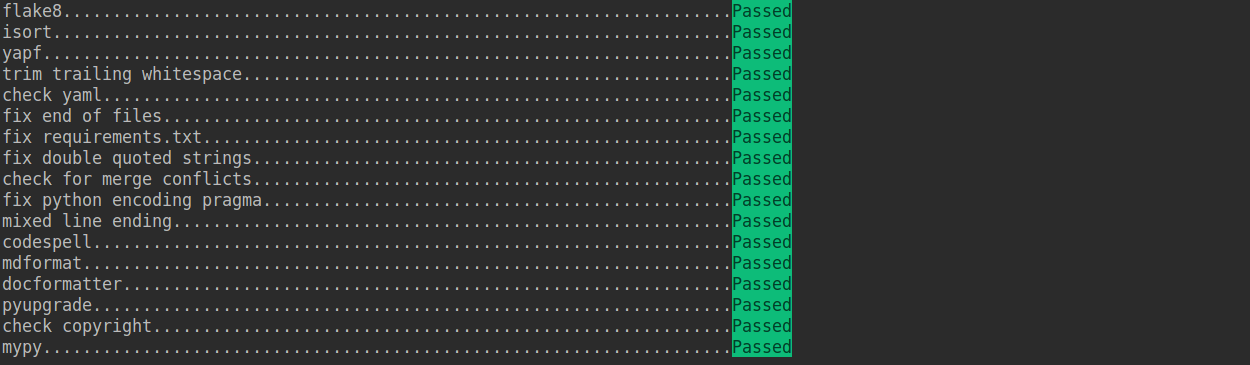

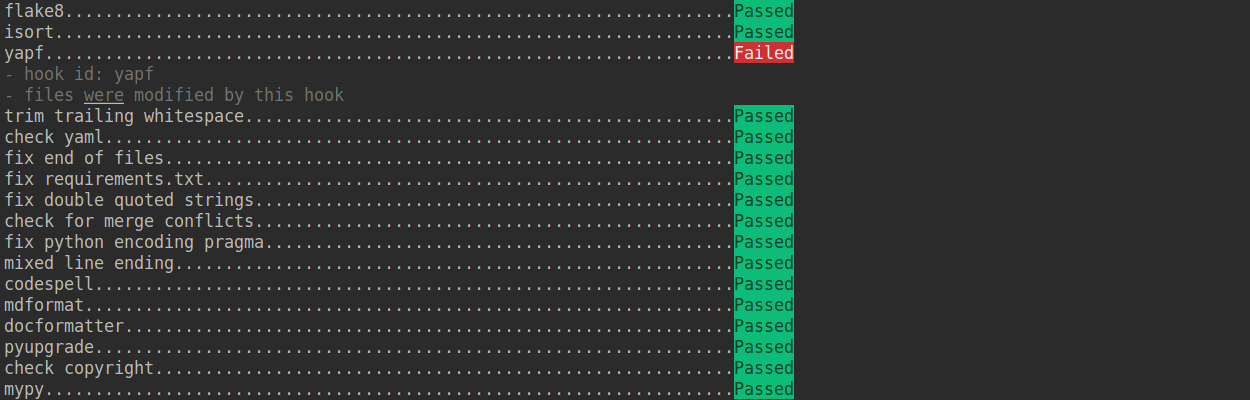

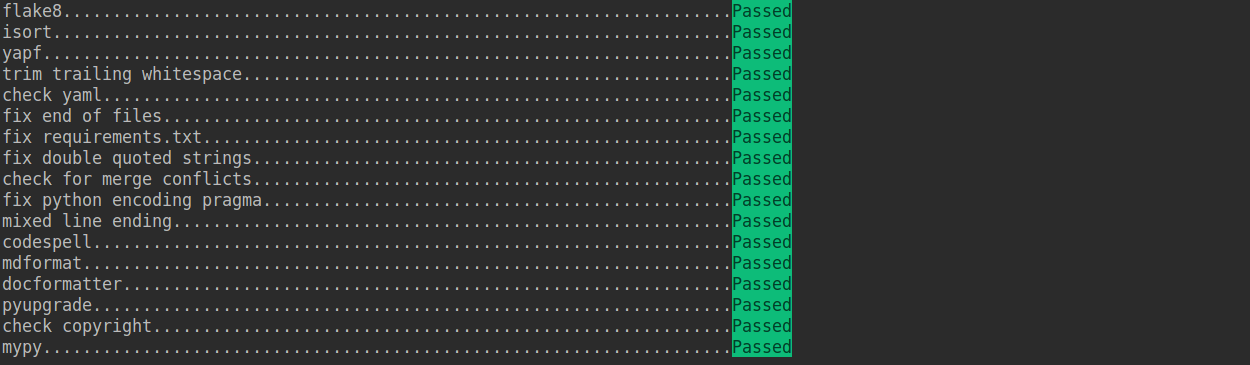

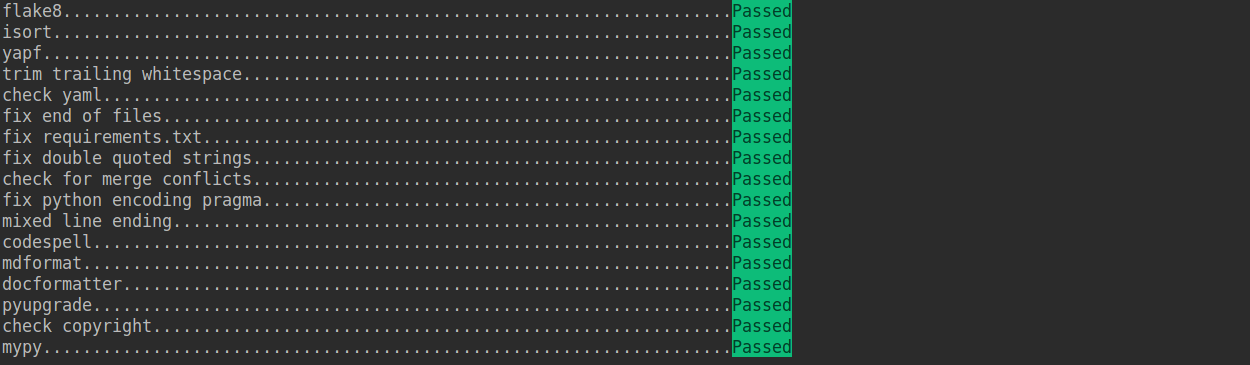

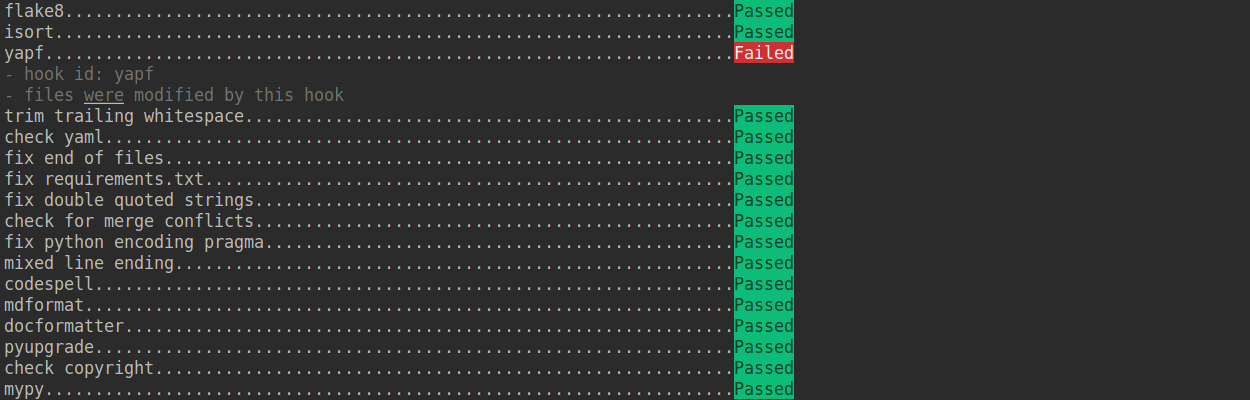

+If the installation process is interrupted, you can rerun `pre-commit run ...` to continue the installation.

+

+If the code does not conform to the code style specification, pre-commit will raise a warning and automatically fix some of the errors.

+


+To commit code while bypassing the pre-commit hook, add the `--no-verify` option (**only for temporary commits**; the code you eventually push must still pass the pre-commit checks).

+

+```shell

+git commit -m "xxx" --no-verify

+```
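Under the hood, `pre-commit install` registers a script as Git's `pre-commit` hook in `.git/hooks/pre-commit`. Git aborts a commit when that hook exits with a non-zero status.

The sketch below imitates this with a hand-written hook that always fails. It is a stand-in, not the script pre-commit generates. It shows that a normal commit is rejected while `--no-verify` skips the hook. It assumes no global `core.hooksPath` setting redirects hooks elsewhere.

```shell
demo=$(mktemp -d)
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com
git init -q --initial-branch=master "$demo/repo"
mkdir -p "$demo/repo/.git/hooks"

# Hypothetical hook that always fails, standing in for a failed style check.
cat > "$demo/repo/.git/hooks/pre-commit" <<'EOF'
#!/bin/sh
echo "style check failed" >&2
exit 1
EOF
chmod +x "$demo/repo/.git/hooks/pre-commit"

echo "x = 1" > "$demo/repo/demo.py"
git -C "$demo/repo" add demo.py

# A normal commit runs the hook, which aborts it.
if git -C "$demo/repo" commit -q -m "xxx"; then normal=committed; else normal=rejected; fi

# --no-verify skips the pre-commit hook, so this commit is recorded.
git -C "$demo/repo" commit -q -m "xxx" --no-verify
```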

+

+#### 3. Create a development branch

+

+After configuring pre-commit, create a branch based on the master branch to develop the new feature or fix the bug. The recommended branch name format is `username/pr_name`:

+

+```shell

+git checkout -b yhc/refactor_contributing_doc

+```

+

+During subsequent development, if the master branch of your local repository falls behind the master branch of "upstream", first pull from upstream to synchronize, and then run the command above:

+

+```shell

+git pull upstream master

+```
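Here is a sketch of this order of operations: sync `master` first, then branch off it. It again uses local bare repositories as placeholders for your fork (`fork.git`) and the official repository (`official.git`), and assumes Git 2.28 or newer.

- `--ff-only` makes the pull fail, rather than silently create a merge commit, if your local `master` has diverged.
- `git check-ref-format --branch` is used only to confirm that the example name is a valid branch name.

```shell
demo=$(mktemp -d)
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

# Placeholder official repository, your fork of it, and your local clone.
git init -q --initial-branch=master "$demo/seed"
echo v1 > "$demo/seed/README.md"
git -C "$demo/seed" add README.md
git -C "$demo/seed" commit -q -m "v1"
git clone -q --bare "$demo/seed" "$demo/official.git"
git clone -q --bare "$demo/official.git" "$demo/fork.git"
git clone -q "$demo/fork.git" "$demo/work"
git -C "$demo/work" remote add upstream "$demo/official.git"

# A new commit lands in the official repository after you cloned your fork.
echo v2 >> "$demo/seed/README.md"
git -C "$demo/seed" commit -q -am "v2"
git -C "$demo/seed" push -q "$demo/official.git" master

# Sync local master with upstream, then create the branch from the updated master.
git -C "$demo/work" checkout -q master
git -C "$demo/work" pull -q --ff-only upstream master
git -C "$demo/work" check-ref-format --branch yhc/refactor_contributing_doc
git -C "$demo/work" checkout -q -b yhc/refactor_contributing_doc
```

This updates only your local `master`. Your fork on GitHub stays behind until you push to it, for example with `git push origin master`.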

+

+#### 4. Commit the code and pass the unit test

+

+- MMEngine uses mypy for static type checking to make the code more robust. Therefore, add type hints to your code and make sure it passes the mypy check. If you are not familiar with type hints, refer to [this tutorial](https://docs.python.org/3/library/typing.html).

+

+- The committed code should pass the unit tests:

+

+ ```shell

+ # Pass all unit tests

+ pytest tests

+

+ # Pass the unit tests of the modified module, taking runner as an example

+ pytest tests/test_runner/test_runner.py

+ ```

+

+ If the unit tests fail because of missing dependencies, install the dependencies by following the [guidance](#unit-test).

+

+- If you modify or add documents, check the rendering result by following the [guidance](#document-rendering).

+

+#### 5. Push the code to remote

+

+After the code passes the unit tests and the pre-commit checks, push the local commits to the remote repository. On the first push, add the `-u` option to associate the local branch with the remote branch:

+

+```shell

+git push -u origin {branch_name}

+```

+

+This will allow you to use the `git push` command to push code directly next time without specifying a branch or the remote repository.
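You can inspect the effect of `-u` with `git rev-parse --abbrev-ref @{u}`. It prints the remote branch that an argument-less `git push` or `git pull` will use.

Below is a local sketch. A bare repository (`fork.git`, a placeholder) stands in for `origin`, and the branch name is borrowed from step 3. It assumes Git 2.28 or newer.

```shell
demo=$(mktemp -d)
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

# Placeholder "origin" (an empty bare repo) and a local repository with one commit.
git init -q --bare "$demo/fork.git"
git init -q --initial-branch=master "$demo/work"
echo v1 > "$demo/work/README.md"
git -C "$demo/work" add README.md
git -C "$demo/work" commit -q -m "v1"
git -C "$demo/work" remote add origin "$demo/fork.git"
git -C "$demo/work" checkout -q -b yhc/refactor_contributing_doc

# First push: -u records origin/yhc/refactor_contributing_doc as the upstream branch.
git -C "$demo/work" push -q -u origin yhc/refactor_contributing_doc
git -C "$demo/work" rev-parse --abbrev-ref '@{u}'

# Later pushes need no remote or branch arguments.
echo v2 >> "$demo/work/README.md"
git -C "$demo/work" commit -q -am "v2"
git -C "$demo/work" push -q
```

The argument-less push relies on Git's default `push.default=simple`. That setting pushes the current branch to the upstream branch of the same name.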

+

+#### 6. Create a Pull Request

+

+(1) Create a pull request in GitHub's Pull request interface

+


+(2) Modify the PR description according to the guidelines so that other developers can better understand your changes

+


+Find more details about Pull Request description in [pull request guidelines](#pr-specs).

+

+**Note**

+

+(a) The Pull Request description should explain the reason for the change, what was changed, and the impact of the change, and it should be linked to the relevant issue (see the [documentation](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue)).

+

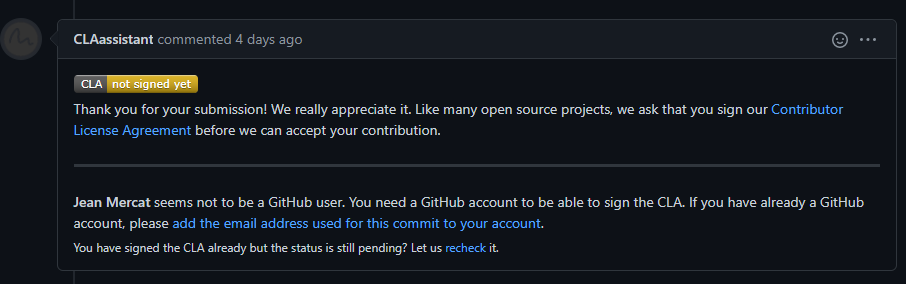

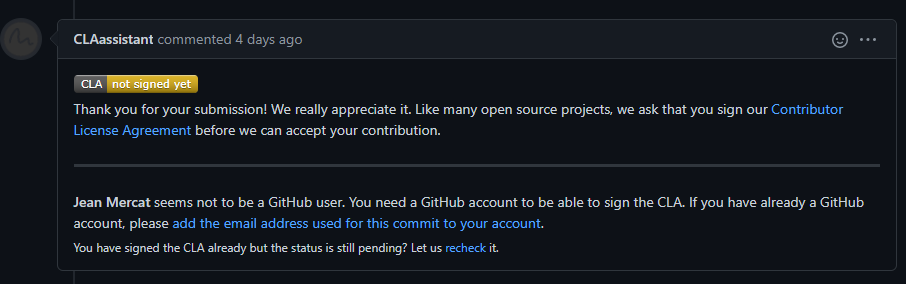

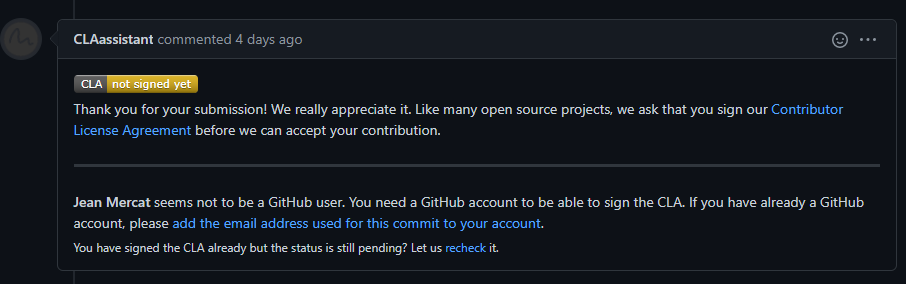

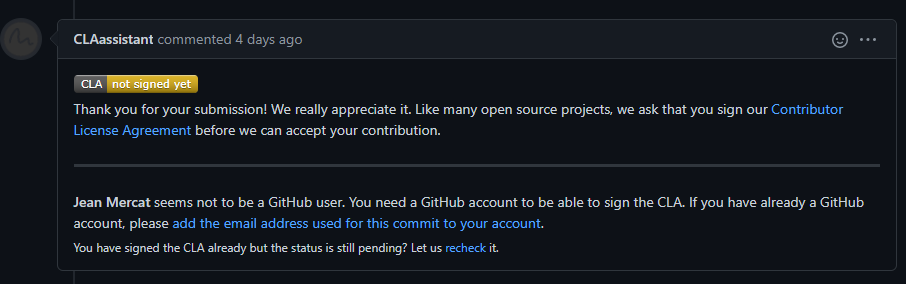

+(b) If this is your first contribution, please sign the CLA.

+

+

+

+Find more details about Pull Request description in [pull request guidelines](#pr-specs).

+

+**note**

+

+(a) The Pull Request description should contain the reason for the change, the content of the change, and the impact of the change, and be associated with the relevant Issue (see [documentation](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue)

+

+(b) If it is your first contribution, please sign the CLA

+

+ +

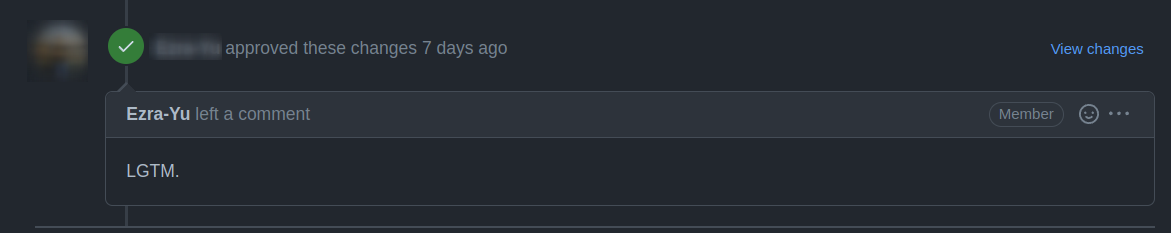

+(c) Check whether the Pull Request passes the CI.

+


+MMEngine runs the unit tests for the submitted Pull Request on different platforms (Linux, Windows, macOS) and with different versions of Python, PyTorch, and CUDA to make sure the code is correct. If a check fails, click its `Details` link to see the specific test information, and then modify the code accordingly.

+

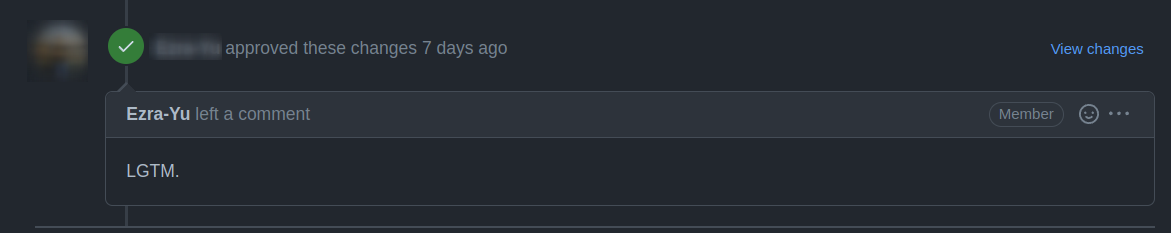

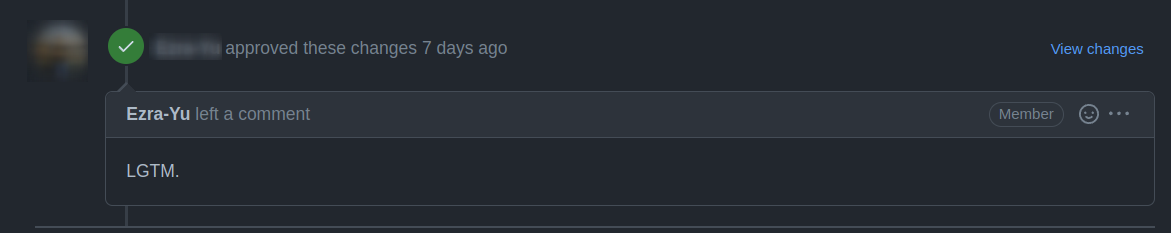

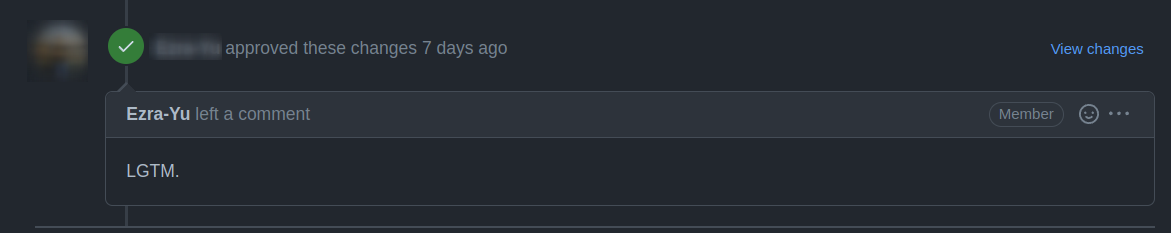

+(3) If the Pull Request passes the CI, wait for other developers to review it. Modify the code based on the reviewers' comments and repeat steps [4](#4-commit-the-code-and-pass-the-unit-test)-[5](#5-push-the-code-to-remote) until all reviewers approve it. We will then merge it as soon as possible.

+


+#### 7. Resolve conflicts

+

+If your local branch conflicts with the latest master branch of "upstream", you need to resolve the conflicts. There are two ways to do this:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are comfortable handling conflicts, use `rebase`, as it keeps your commit history tidy. If you are not familiar with `rebase`, use `merge` instead.

+
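Both commands stop when Git cannot combine the changes automatically. The sketch below reproduces such a conflict locally and walks through the `rebase` route:

1. Resolve the conflicting file.
2. Stage it with `git add`.
3. Run `git rebase --continue`.

Assumptions:

- `official.git` and `fork.git` are placeholder bare repositories for `upstream` and `origin`.
- `README.md` is a placeholder file.
- The "resolution" simply writes the combined line by hand.
- Git is 2.28 or newer.

With `merge`, the last step would be `git merge --continue` (or `git commit`) instead.

```shell
demo=$(mktemp -d)
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

# Placeholder upstream, fork, and local clone sharing one base commit.
git init -q --initial-branch=master "$demo/seed"
echo "original line" > "$demo/seed/README.md"
git -C "$demo/seed" add README.md
git -C "$demo/seed" commit -q -m "base"
git clone -q --bare "$demo/seed" "$demo/official.git"
git clone -q --bare "$demo/official.git" "$demo/fork.git"
git clone -q "$demo/fork.git" "$demo/work"
git -C "$demo/work" remote add upstream "$demo/official.git"

# Your development branch edits README.md ...
git -C "$demo/work" checkout -q -b yhc/refactor_contributing_doc
echo "feature line" > "$demo/work/README.md"
git -C "$demo/work" commit -q -am "edit README on the development branch"

# ... while upstream changes the same line.
echo "upstream line" > "$demo/seed/README.md"
git -C "$demo/seed" commit -q -am "edit README upstream"
git -C "$demo/seed" push -q "$demo/official.git" master

# The commands from above; the rebase stops because README.md conflicts.
git -C "$demo/work" fetch -q --all --prune
git -C "$demo/work" rebase upstream/master || echo "rebase stopped on a conflict"

# Resolve by writing the final content, stage it, and continue (keeping the commit message).
echo "upstream line + feature line" > "$demo/work/README.md"
git -C "$demo/work" add README.md
GIT_EDITOR=true git -C "$demo/work" rebase --continue
git -C "$demo/work" log --oneline --graph
```

Rebasing rewrites the commits of a branch you may already have pushed. Updating `origin` afterwards therefore typically needs `git push --force-with-lease`. After a merge, a plain `git push` is enough.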

+### Guidance

+

+#### Unit test

+

+Make sure the committed code does not decrease the unit test coverage. Run the following commands to check the coverage:

+

+```shell

+python -m coverage run -m pytest /path/to/test_file

+python -m coverage html

+# check file in htmlcov/index.html

+```

+

+#### Document rendering

+

+If you modify or add documents, check the rendering result. Install the dependencies and run the following commands to render the documents:

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# then open _build/html/index.html (relative to the docs directory) in a browser

+```

+

+### Python Code style

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around some linter tools.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [codespell](https://github.com/codespell-project/codespell): A Python utility to fix common misspellings in text files.

+- [mdformat](https://github.com/executablebooks/mdformat): Mdformat is an opinionated Markdown formatter that can be used to enforce a consistent style in Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A formatter for docstrings.

+

+Style configurations of yapf and isort can be found in [setup.cfg](./setup.cfg).

+

+We use a [pre-commit hook](https://pre-commit.com/) that, on every commit, automatically runs `flake8`, `yapf`, and `isort`, checks `trailing whitespaces` and `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, and `mixed-line-ending`, and sorts `requirements.txt`.

+The configuration of the pre-commit hook is stored in [.pre-commit-config](./.pre-commit-config.yaml).

+

+### PR Specs

+

+1. Use the [pre-commit](https://pre-commit.com) hook to avoid code style issues

+

+2. Each short-lived branch should correspond to only one PR

+

+3. Keep each PR to one fine-grained change. Avoid large PRs

+

+ - Bad: Support Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to box head to support custom conv-layer number

+

+4. Provide clear and meaningful commit messages

+

+5. Provide clear and meaningful PR description

+

+ - State the task name clearly in the title. The general format is: \[Prefix\] Short description of the PR (Suffix)

+ - Prefix: new feature \[Feature\], bug fix \[Fix\], documentation \[Docs\], work in progress \[WIP\] (which will not be reviewed for now)

+ - Describe the main changes, the results, and the impact on other modules in the description

+ - Associate related issues and pull requests with a milestone
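The title convention in item 5 can be checked mechanically. The two functions below are hypothetical helpers, not part of MMEngine or its CI.

- `check_pr_title` accepts only the four prefixes listed above and requires a non-empty description after them. The optional `(Suffix)` is left free-form.
- `links_issue` checks whether a PR description links an issue with one of GitHub's closing keywords (close/closes/closed, fix/fixes/fixed, resolve/resolves/resolved). GitHub's documentation on linking a pull request to an issue is the authoritative list.

The issue numbers used below are made up for illustration.

```shell
# Hypothetical helper: succeed if the title looks like "[Prefix] Short description".
check_pr_title() {
  printf '%s\n' "$1" | grep -Eq '^\[(Feature|Fix|Docs|WIP)\] [^ ].*'
}

# Hypothetical helper: succeed if the text links an issue via a GitHub closing keyword.
links_issue() {
  printf '%s\n' "$1" | grep -Eiq '(^|[^[:alnum:]])(close[sd]?|fix(e[sd])?|resolve[sd]?) #[0-9]+'
}

check_pr_title "[Feature] Add a parameter to box head to support custom conv-layer number" && echo "title ok"
check_pr_title "Add a box head to Faster R-CNN" || echo "title is missing a prefix"
links_issue "Fixes #123" && echo "issue linked"
```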

diff --git a/testbed/open-mmlab__mmengine/CONTRIBUTING_zh-CN.md b/testbed/open-mmlab__mmengine/CONTRIBUTING_zh-CN.md

new file mode 100644

index 0000000000000000000000000000000000000000..357c02d4c6da0a4552708d715e02c7946a0c7844

--- /dev/null

+++ b/testbed/open-mmlab__mmengine/CONTRIBUTING_zh-CN.md

@@ -0,0 +1,255 @@

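+## Contributing Code

+

+Welcome to the MMEngine community! We are committed to building a cutting-edge foundational library for training deep learning models, and we welcome contributions of any kind, including but not limited to:

+

+**Fixing bugs**

+

+The steps for fixing a bug in the code are as follows:

+

+1. If the change is large, we recommend opening an issue first that correctly describes the symptoms, cause, and reproduction steps of the bug, and then confirming the fix after discussion.

+2. Fix the bug, add the corresponding unit tests, and submit a pull request.

+

+**Adding new features or components**

+

+1. If the new feature or module involves large code changes, we recommend opening an issue first to confirm that the feature is needed.

+2. Implement the new feature, add unit tests, and submit a pull request.

+

+**Improving documentation**

+

+Documentation fixes can be submitted directly as a pull request.

+

+The steps for adding documentation or translating it into other languages are as follows:

+

+1. Open an issue to confirm that the documentation is needed.

+2. Add the documentation and submit a pull request.

+

+### Pull Request Workflow

+

+If you are not familiar with pull requests, don't worry. The following sections guide you step by step, from scratch, through creating a pull request. To learn more about the pull request development model, refer to the GitHub [official documentation](https://docs.github.com/en/github/collaborating-with-issues-and-pull-requests/about-pull-requests).

+

+#### 1. Fork the repository

+

+When you submit a pull request for the first time, first fork the original OpenMMLab repository by clicking the **Fork** button in the upper-right corner of the GitHub page. The forked repository will appear under your GitHub profile.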

+


+Clone the code to your local machine:

+

+```shell

+git clone git@github.com:{username}/mmengine.git

+```

+

+Add the original repository as the upstream repository:

+

+```bash

+git remote add upstream git@github.com:open-mmlab/mmengine

+```

+

+Check whether the remote has been added successfully by entering `git remote -v` in the terminal:

+

+```bash

+origin git@github.com:{username}/mmengine.git (fetch)

+origin git@github.com:{username}/mmengine.git (push)

+upstream git@github.com:open-mmlab/mmengine (fetch)

+upstream git@github.com:open-mmlab/mmengine (push)

+```

+

+> Here is a brief introduction to origin and upstream. When we clone code with `git clone`, a remote named origin is created by default; it points to the repository we cloned from. upstream, on the other hand, is added by ourselves and points to the original repository. If you don't like the name upstream, you can change it, for example to open-mmlab. We usually push code to origin (the forked remote repository) and then open a pull request to upstream. If the submitted code conflicts with the latest code, pull the latest code from upstream, resolve the conflicts with the local branch, and push to origin again.

+

+#### 2. Configure pre-commit

+

+In the local development environment, we use [pre-commit](https://pre-commit.com/#intro) to check the code style and keep it consistent. Before committing code, install pre-commit first (run the commands in the mmengine directory):

+

+```shell

+pip install -U pre-commit

+pre-commit install

+```

+

+Check that pre-commit is configured successfully, and install the hooks defined in `.pre-commit-config.yaml`:

+

+```shell

+pre-commit run --all-files

+```

+

+> If you are in mainland China and the installation fails because of network issues, you can use the domestic mirror configuration instead:

+

+> `pre-commit install -c .pre-commit-config-zh-cn.yaml`

+

+> `pre-commit run --all-files -c .pre-commit-config-zh-cn.yaml`

+

+If the installation process is interrupted, you can rerun `pre-commit run ...` to continue the installation.

+

+If the committed code does not conform to the code style specification, pre-commit will raise a warning and automatically fix some of the errors.

+

+To temporarily bypass the pre-commit checks for a single commit, add `--no-verify` to `git commit` (make sure the code you finally push to the remote repository passes the pre-commit checks).

+

+```shell

+git commit -m "xxx" --no-verify

+```

+

+#### 3. Create a development branch

+

+After installing pre-commit, create a development branch based on master. The recommended branch naming convention is `username/pr_name`.

+

+```shell

+git checkout -b yhc/refactor_contributing_doc

+```

+

+During subsequent development, if the master branch of the local repository falls behind the master branch of upstream, first pull the code from upstream to synchronize, and then run the command above:

+

+```shell

+git pull upstream master

+```

+

+#### 4. Commit the code and pass the unit tests locally

+

+- MMEngine uses mypy for static type checking to make the code more robust, so type hints need to be added when committing code. For the specific rules, refer to this [tutorial](https://zhuanlan.zhihu.com/p/519335398).

+

+- The committed code also needs to pass the unit tests:

+

+ ```shell

+ # Run all unit tests

+ pytest tests

+

+ # Make sure the code passes the unit tests of the modified module, taking runner as an example

+ pytest tests/test_runner/test_runner.py

+ ```

+

+ If you cannot run the unit tests of the modified module because of missing dependencies, refer to [Guidance - Unit tests](#unit-tests).

+

+- If you modified or added documents, follow the [guidance](#document-rendering) to make sure they render correctly.

+

+#### 5. Push the code to remote

+

+After the code passes the unit tests and the pre-commit checks, push it to the remote repository. For the first push, you can add the `-u` option to `git push` to associate the local branch with the remote branch:

+

+```shell

+git push -u origin {branch_name}

+```

+

+Next time, you can simply run `git push` without specifying the branch or the remote repository.

+

+#### 6. Create a Pull Request (PR)

+

+(1) Create a pull request on GitHub's Pull request page

+

+(2) Modify the PR description according to the guidelines so that other developers can better understand your changes

+

+See [Pull request specifications](#pull-request-specifications) for the description conventions.

+

+**Notes**

+

+(a) The PR description should contain the reason for the change, the content of the change, and its impact, and it should be linked to the relevant issue (see the [documentation](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue) for how)

+

+(b) If this is your first contribution to OpenMMLab, you need to sign the CLA

+

+(c) Check whether the submitted PR passes the CI (continuous integration) checks

+

+MMEngine runs the unit tests for the submitted code on different platforms (Linux, Windows, macOS) with different versions of Python, PyTorch, and CUDA to ensure the code is correct. If any of them fails, click its `Details` link to see the specific test information so that you can modify the code.

+

+(3) If the PR passes the CI, wait for other developers to review it, modify the code according to the reviewers' comments, and repeat steps [4](#4-commit-the-code-and-pass-the-unit-tests-locally)-[5](#5-push-the-code-to-remote) until the reviewers approve merging the PR.

+

+Once all reviewers approve the PR, we will merge it into the main branch as soon as possible.

+

+#### 7. Resolve conflicts

+

+As time goes by, the codebase keeps being updated. If your PR conflicts with the main branch, you need to resolve the conflicts. There are two ways to do this:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are very good at handling conflicts, you can use `rebase`, because it keeps your commit log tidy. If you are not familiar with `rebase`, you can use `merge` to resolve conflicts.

+

+### Guidance

+

+#### Unit tests

+

+When submitting a pull request that fixes a bug or adds a feature, the unit tests should cover as much of the submitted code as possible. Unit test coverage can be measured as follows:

+

+```shell

+python -m coverage run -m pytest /path/to/test_file

+python -m coverage html

+# check file in htmlcov/index.html

+```

+

+#### Document rendering

+

+When submitting a pull request that fixes a bug or adds a feature, you may need to modify or add docstrings of modules, and you need to make sure the rendered documents look correct.

+To render the documents locally:

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# then open _build/html/index.html (relative to the docs directory) in a browser

+```

+

+### Python code style

+

+[PEP8](https://www.python.org/dev/peps/pep-0008/) is the preferred code style for OpenMMLab libraries. We use the following tools to check and format code:

+

+- [flake8](https://github.com/PyCQA/flake8): a code style checker maintained by PyCQA that wraps several checking tools

+- [isort](https://github.com/timothycrosley/isort): a tool that automatically sorts module imports

+- [yapf](https://github.com/google/yapf): a Python code formatter released by Google

+- [codespell](https://github.com/codespell-project/codespell): checks for misspelled words

+- [mdformat](https://github.com/executablebooks/mdformat): a tool for formatting Markdown files

+- [docformatter](https://github.com/myint/docformatter): a tool for formatting docstrings

+

+The configurations of yapf and isort can be found in [setup.cfg](./setup.cfg).

+

+By configuring the [pre-commit hook](https://pre-commit.com/), we automatically run `flake8`, `yapf`, and `isort`, check `trailing whitespaces` and `markdown files`, fix `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, and `mixed-line-ending`, and sort the packages in `requirements.txt` on every commit.

+The pre-commit hook configuration can be found in [.pre-commit-config](./.pre-commit-config.yaml).

+

+See [Configure pre-commit](#2-configure-pre-commit) for how to install and use pre-commit.

+

+For more detailed conventions, refer to the [OpenMMLab code style](code_style.md).

+

+### Pull request specifications

+

+1. Use the [pre-commit hook](https://pre-commit.com) to minimize code style issues

+

+2. One pull request corresponds to one short-lived branch

+

+3. Keep changes fine-grained: a pull request should do only one thing. Avoid huge pull requests

+

+ - Bad: Implement Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to the box head to support a custom number of conv layers

+

+4. Provide a clear and meaningful message for every commit

+

+5. Provide a clear and meaningful pull request description

+

+ - State the task name in the title. The general format is: \[Prefix\] Short description of the pull request (Suffix)

+ - Prefix: new feature \[Feature\], bug fix \[Fix\], documentation \[Docs\], work in progress \[WIP\] (will not be reviewed for now)

+ - In the description, introduce the main changes, the results, and the impact on other parts, following the pull request template

+ - Link the related issues and other pull requests

+

+6. If you introduce a third-party library or borrow code from one, make sure its license is compatible with MMEngine, and add `This code is inspired from http://` to the borrowed code

diff --git a/testbed/open-mmlab__mmengine/LICENSE b/testbed/open-mmlab__mmengine/LICENSE

new file mode 100644

index 0000000000000000000000000000000000000000..1bfc23e48f92245b229cdd57c77e79bc10a1cc27

--- /dev/null

+++ b/testbed/open-mmlab__mmengine/LICENSE

@@ -0,0 +1,203 @@

+Copyright 2018-2023 OpenMMLab. All rights reserved.

+

+ Apache License

+ Version 2.0, January 2004

+ http://www.apache.org/licenses/

+

+ TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

+

+ 1. Definitions.

+

+ "License" shall mean the terms and conditions for use, reproduction,

+ and distribution as defined by Sections 1 through 9 of this document.

+

+ "Licensor" shall mean the copyright owner or entity authorized by

+ the copyright owner that is granting the License.

+

+ "Legal Entity" shall mean the union of the acting entity and all

+ other entities that control, are controlled by, or are under common

+ control with that entity. For the purposes of this definition,

+ "control" means (i) the power, direct or indirect, to cause the

+ direction or management of such entity, whether by contract or

+ otherwise, or (ii) ownership of fifty percent (50%) or more of the

+ outstanding shares, or (iii) beneficial ownership of such entity.

+

+ "You" (or "Your") shall mean an individual or Legal Entity

+ exercising permissions granted by this License.

+

+ "Source" form shall mean the preferred form for making modifications,

+ including but not limited to software source code, documentation

+ source, and configuration files.

+

+ "Object" form shall mean any form resulting from mechanical

+ transformation or translation of a Source form, including but

+ not limited to compiled object code, generated documentation,

+ and conversions to other media types.

+

+ "Work" shall mean the work of authorship, whether in Source or

+ Object form, made available under the License, as indicated by a

+ copyright notice that is included in or attached to the work

+ (an example is provided in the Appendix below).

+

+ "Derivative Works" shall mean any work, whether in Source or Object

+ form, that is based on (or derived from) the Work and for which the

+ editorial revisions, annotations, elaborations, or other modifications

+ represent, as a whole, an original work of authorship. For the purposes

+ of this License, Derivative Works shall not include works that remain

+ separable from, or merely link (or bind by name) to the interfaces of,

+ the Work and Derivative Works thereof.

+

+ "Contribution" shall mean any work of authorship, including

+ the original version of the Work and any modifications or additions

+ to that Work or Derivative Works thereof, that is intentionally

+ submitted to Licensor for inclusion in the Work by the copyright owner

+ or by an individual or Legal Entity authorized to submit on behalf of

+ the copyright owner. For the purposes of this definition, "submitted"

+ means any form of electronic, verbal, or written communication sent

+ to the Licensor or its representatives, including but not limited to

+ communication on electronic mailing lists, source code control systems,

+ and issue tracking systems that are managed by, or on behalf of, the

+ Licensor for the purpose of discussing and improving the Work, but

+ excluding communication that is conspicuously marked or otherwise

+ designated in writing by the copyright owner as "Not a Contribution."

+

+ "Contributor" shall mean Licensor and any individual or Legal Entity

+ on behalf of whom a Contribution has been received by Licensor and

+ subsequently incorporated within the Work.

+

+ 2. Grant of Copyright License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ copyright license to reproduce, prepare Derivative Works of,

+ publicly display, publicly perform, sublicense, and distribute the

+ Work and such Derivative Works in Source or Object form.

+

+ 3. Grant of Patent License. Subject to the terms and conditions of

+ this License, each Contributor hereby grants to You a perpetual,

+ worldwide, non-exclusive, no-charge, royalty-free, irrevocable

+ (except as stated in this section) patent license to make, have made,

+ use, offer to sell, sell, import, and otherwise transfer the Work,

+ where such license applies only to those patent claims licensable

+ by such Contributor that are necessarily infringed by their

+ Contribution(s) alone or by combination of their Contribution(s)

+ with the Work to which such Contribution(s) was submitted. If You

+ institute patent litigation against any entity (including a

+ cross-claim or counterclaim in a lawsuit) alleging that the Work

+ or a Contribution incorporated within the Work constitutes direct

+ or contributory patent infringement, then any patent licenses

+ granted to You under this License for that Work shall terminate

+ as of the date such litigation is filed.

+

+ 4. Redistribution. You may reproduce and distribute copies of the

+ Work or Derivative Works thereof in any medium, with or without

+ modifications, and in Source or Object form, provided that You

+ meet the following conditions:

+

+ (a) You must give any other recipients of the Work or

+ Derivative Works a copy of this License; and

+

+ (b) You must cause any modified files to carry prominent notices

+ stating that You changed the files; and

+

+ (c) You must retain, in the Source form of any Derivative Works

+ that You distribute, all copyright, patent, trademark, and

+ attribution notices from the Source form of the Work,

+ excluding those notices that do not pertain to any part of

+ the Derivative Works; and

+

+ (d) If the Work includes a "NOTICE" text file as part of its

+ distribution, then any Derivative Works that You distribute must

+ include a readable copy of the attribution notices contained

+ within such NOTICE file, excluding those notices that do not

+ pertain to any part of the Derivative Works, in at least one

+ of the following places: within a NOTICE text file distributed

+ as part of the Derivative Works; within the Source form or

+ documentation, if provided along with the Derivative Works; or,

+ within a display generated by the Derivative Works, if and

+ wherever such third-party notices normally appear. The contents

+ of the NOTICE file are for informational purposes only and

+ do not modify the License. You may add Your own attribution

+ notices within Derivative Works that You distribute, alongside

+ or as an addendum to the NOTICE text from the Work, provided

+ that such additional attribution notices cannot be construed

+ as modifying the License.

+

+ You may add Your own copyright statement to Your modifications and

+ may provide additional or different license terms and conditions

+ for use, reproduction, or distribution of Your modifications, or

+ for any such Derivative Works as a whole, provided Your use,

+ reproduction, and distribution of the Work otherwise complies with

+ the conditions stated in this License.

+

+ 5. Submission of Contributions. Unless You explicitly state otherwise,

+ any Contribution intentionally submitted for inclusion in the Work

+ by You to the Licensor shall be under the terms and conditions of

+ this License, without any additional terms or conditions.

+ Notwithstanding the above, nothing herein shall supersede or modify

+ the terms of any separate license agreement you may have executed

+ with Licensor regarding such Contributions.

+

+ 6. Trademarks. This License does not grant permission to use the trade

+ names, trademarks, service marks, or product names of the Licensor,

+ except as required for reasonable and customary use in describing the

+ origin of the Work and reproducing the content of the NOTICE file.

+

+ 7. Disclaimer of Warranty. Unless required by applicable law or

+ agreed to in writing, Licensor provides the Work (and each

+ Contributor provides its Contributions) on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

+ implied, including, without limitation, any warranties or conditions

+ of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

+ PARTICULAR PURPOSE. You are solely responsible for determining the

+ appropriateness of using or redistributing the Work and assume any

+ risks associated with Your exercise of permissions under this License.

+

+ 8. Limitation of Liability. In no event and under no legal theory,

+ whether in tort (including negligence), contract, or otherwise,

+ unless required by applicable law (such as deliberate and grossly

+ negligent acts) or agreed to in writing, shall any Contributor be

+ liable to You for damages, including any direct, indirect, special,

+ incidental, or consequential damages of any character arising as a

+ result of this License or out of the use or inability to use the

+ Work (including but not limited to damages for loss of goodwill,

+ work stoppage, computer failure or malfunction, or any and all

+ other commercial damages or losses), even if such Contributor

+ has been advised of the possibility of such damages.

+

+ 9. Accepting Warranty or Additional Liability. While redistributing

+ the Work or Derivative Works thereof, You may choose to offer,

+ and charge a fee for, acceptance of support, warranty, indemnity,

+ or other liability obligations and/or rights consistent with this

+ License. However, in accepting such obligations, You may act only

+ on Your own behalf and on Your sole responsibility, not on behalf

+ of any other Contributor, and only if You agree to indemnify,

+ defend, and hold each Contributor harmless for any liability

+ incurred by, or claims asserted against, such Contributor by reason

+ of your accepting any such warranty or additional liability.

+

+ END OF TERMS AND CONDITIONS

+

+ APPENDIX: How to apply the Apache License to your work.

+

+ To apply the Apache License to your work, attach the following

+ boilerplate notice, with the fields enclosed by brackets "[]"

+ replaced with your own identifying information. (Don't include

+ the brackets!) The text should be enclosed in the appropriate

+ comment syntax for the file format. We also recommend that a

+ file or class name and description of purpose be included on the

+ same "printed page" as the copyright notice for easier

+ identification within third-party archives.

+

+ Copyright 2018-2023 OpenMMLab.

+

+ Licensed under the Apache License, Version 2.0 (the "License");

+ you may not use this file except in compliance with the License.

+ You may obtain a copy of the License at

+

+ http://www.apache.org/licenses/LICENSE-2.0

+

+ Unless required by applicable law or agreed to in writing, software

+ distributed under the License is distributed on an "AS IS" BASIS,

+ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ See the License for the specific language governing permissions and

+ limitations under the License.

diff --git a/testbed/open-mmlab__mmengine/MANIFEST.in b/testbed/open-mmlab__mmengine/MANIFEST.in

new file mode 100644

index 0000000000000000000000000000000000000000..8bf078a7572fb583af011ac5d714895694f279cf

--- /dev/null

+++ b/testbed/open-mmlab__mmengine/MANIFEST.in

@@ -0,0 +1 @@

+include mmengine/hub/openmmlab.json mmengine/hub/deprecated.json mmengine/hub/mmcls.json mmengine/hub/torchvision_0.12.json

diff --git a/testbed/open-mmlab__mmengine/README.md b/testbed/open-mmlab__mmengine/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..c0bb49df1cb9d83af4ac2c53d8529eaceaba9561

--- /dev/null

+++ b/testbed/open-mmlab__mmengine/README.md

@@ -0,0 +1,306 @@

+

+

+After all reviewers approve the PR, we will merge it into the main branch as soon as possible.

+

+#### 7. Resolve conflicts

+

+Our codebase is updated continuously. If your PR conflicts with the main branch, you need to resolve the conflicts. There are two ways to do so:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are comfortable handling conflicts, use `rebase`, because it keeps your commit log clean. If you are not familiar with `rebase`, use `merge` instead.
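Both options start with the same fetch and differ only in the final command. The following is a minimal sketch of a hypothetical helper (not part of MMEngine) that builds either command sequence and runs it through `subprocess` only when `dry_run=False`. It assumes the remote is named `upstream` and the target branch is `master`, matching the commands above.

```python
import subprocess
from typing import List

# Hypothetical helper for illustration; not part of MMEngine.
# Assumes the remote is called "upstream" and the target branch is "master".


def sync_commands(strategy: str, remote: str = "upstream",
                  branch: str = "master") -> List[List[str]]:
    """Return the git commands that bring the current branch up to date."""
    if strategy not in ("rebase", "merge"):
        raise ValueError(f"unknown strategy: {strategy!r}")
    return [
        ["git", "fetch", "--all", "--prune"],
        ["git", strategy, f"{remote}/{branch}"],
    ]


def sync(strategy: str, dry_run: bool = True) -> List[List[str]]:
    """Print the commands and, unless ``dry_run`` is True, execute them."""
    commands = sync_commands(strategy)
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            # check=True raises CalledProcessError on a non-zero exit status,
            # e.g. when the rebase or merge stops because of a conflict.
            subprocess.run(cmd, check=True)
    return commands


if __name__ == "__main__":
    sync("rebase")  # dry run: only prints the two commands
```

With either strategy, git stops at the first conflict and leaves the conflicting files for you to fix by hand. The helper only saves typing.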

+

+### Guidance

+

+#### Unit test

+

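+When you submit a pull request that fixes a bug or adds a feature, the unit tests should cover as much of the submitted code as possible. You can compute unit test coverage as follows: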

+

+```shell

+python -m coverage run -m pytest /path/to/test_file

+python -m coverage html

+# open htmlcov/index.html in a browser to view the coverage report

+```
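Here is a hypothetical function added by a PR, shown with its tests. Each test exercises a different branch, so `coverage html` would report every line of `clip_ratio` as executed. The function and the test names are illustrative assumptions. The error-path test uses plain `try`/`except` so the sketch runs without pytest installed. Inside a pytest suite, `pytest.raises` is the more common idiom.

```python
# Hypothetical module code and its tests, kept in one file for brevity.
# In a real PR the function would live in the package and the tests under tests/.


def clip_ratio(value: float, low: float = 0.0, high: float = 1.0) -> float:
    """Clamp ``value`` into the closed interval [low, high]."""
    if low > high:
        raise ValueError("low must not be greater than high")
    return max(low, min(value, high))


def test_clip_ratio_inside_range():
    assert clip_ratio(0.5) == 0.5


def test_clip_ratio_clamps_both_ends():
    assert clip_ratio(-1.0) == 0.0
    assert clip_ratio(2.0) == 1.0


def test_clip_ratio_invalid_bounds():
    # Covers the error branch, which would otherwise show up as a missed line.
    try:
        clip_ratio(0.5, low=1.0, high=0.0)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError")
```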

+

+#### Document rendering

+

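+When you submit a pull request that fixes a bug or adds a feature, you may need to modify or add module docstrings, and you should make sure the rendered documentation looks correct.

+You can render the documentation locally as follows: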

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# then open docs/zh_cn/_build/html/index.html (or docs/en/_build/html/index.html) in a browser

+```
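Rendering problems usually come from docstring layout, such as missing blank lines or mis-indented continuation lines. The sketch below shows one docstring written in the Google style, with `Args:`, `Returns:`, and `Examples:` sections. We assume this is the style the Sphinx build expects. The function `moving_average` is hypothetical.

```python
from typing import List, Sequence


def moving_average(values: Sequence[float], window: int = 3) -> List[float]:
    """Compute the simple moving average of a sequence.

    Hypothetical example function, used only to show a docstring layout.

    Args:
        values (Sequence[float]): Input values.
        window (int): Number of consecutive values averaged at each
            position. Defaults to 3.

    Returns:
        List[float]: One average per full window, i.e.
        ``len(values) - window + 1`` elements (empty if the sequence is
        shorter than the window).

    Raises:
        ValueError: If ``window`` is not positive.

    Examples:
        >>> moving_average([1.0, 2.0, 3.0, 4.0], window=2)
        [1.5, 2.5, 3.5]
    """
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]
```

Continuation lines are indented one level deeper than the parameter they belong to, and a blank line separates the sections. Keeping to this layout generally helps the sections render as separate blocks.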

+

+### Python code style

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style for OpenMMLab libraries and use the following tools to check and format the code:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around several code style checkers, maintained by PyCQA.

+- [isort](https://github.com/timothycrosley/isort): A tool that sorts module imports automatically.

+- [yapf](https://github.com/google/yapf): A code formatter released by Google.

+- [codespell](https://github.com/codespell-project/codespell): A tool that checks for misspelled words.

+- [mdformat](https://github.com/executablebooks/mdformat): A tool that checks Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A tool that formats docstrings.

+

+The yapf and isort configurations can be found in [setup.cfg](./setup.cfg).

+

+With a [pre-commit hook](https://pre-commit.com/) configured, every commit is automatically checked and formatted for `flake8`, `yapf`, `isort`, `trailing whitespaces`, and `markdown files`. The hook also fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma`, and `mixed-line-ending`, and sorts the packages in `requirements.txt`.

+The pre-commit hook configuration is stored in [.pre-commit-config](./.pre-commit-config.yaml).

+

+For how to install and use pre-commit, see [Pull Request](#2-配置-pre-commit).

+

+For more detailed conventions, refer to the [OpenMMLab code style guide](code_style.md).

+

+### PR Specs

+

+1. Use the [pre-commit hook](https://pre-commit.com) to minimize code style issues

+

+2. Each PR should correspond to one short-lived branch

+

+3. Keep PRs fine-grained: each PR should do only one thing, and very large PRs should be avoided

+

+ - Bad: Implement Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to the box head to support a custom number of conv layers

+

+4. Provide a clear and meaningful message for every commit

+

+5. Provide a clear and meaningful PR description

+

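+ - The title should state the task clearly, usually in the format: \[Prefix\] Short description of the pull request (Suffix)

+ - Prefix: new feature \[Feature\], bug fix \[Fix\], documentation \[Docs\], work in progress \[WIP\] (not reviewed for now)

+ - The description should cover the main changes, the results, and the impact on other parts of the code, following the PR template

+ - Link the related issues and other PRs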

+

+6. If you introduce a third-party library or borrow code from one, make sure its license is compatible with MMEngine, and add `This code is inspired from http://` to the borrowed code
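The title convention in item 5 is simple enough to check automatically. The sketch below is a hypothetical helper, not part of MMEngine or its CI. It parses a title into prefix, description, and optional suffix. It assumes that the `(Suffix)` part is optional and that the description itself contains no parentheses.

```python
import re

# Hypothetical title checker; not part of MMEngine or its CI.
# Prefixes are the ones listed in the PR specs above. Parentheses are assumed
# to be reserved for the optional trailing "(Suffix)".
PREFIXES = ("Feature", "Fix", "Docs", "WIP")
TITLE_RE = re.compile(
    r"\[(?P<prefix>" + "|".join(PREFIXES) + r")\] "
    r"(?P<description>[^()]*[^()\s])"
    r"(?: \((?P<suffix>[^()]+)\))?"
)


def check_pr_title(title: str) -> dict:
    """Return the parsed title parts, or raise ValueError if it does not match."""
    match = TITLE_RE.fullmatch(title)
    if match is None:
        raise ValueError(f"PR title does not follow the convention: {title!r}")
    return match.groupdict()


if __name__ == "__main__":
    print(check_pr_title("[Docs] Translate the contributing guide (part 1)"))
```

A title such as `[Fix] Fix a typo in the runner docs` parses with `suffix` set to `None`. A title without a known prefix is rejected.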



+Then, clone the repository to your local machine:

+

+```shell

+git clone git@github.com:{username}/mmengine.git

+```

+

+After that, you should add the official repository as the upstream repository.

+

+```bash

+git remote add upstream git@github.com:open-mmlab/mmengine

+```

+

+Check whether the remote repository has been added successfully by `git remote -v`.

+

+```bash

+origin git@github.com:{username}/mmengine.git (fetch)

+origin git@github.com:{username}/mmengine.git (push)

+upstream git@github.com:open-mmlab/mmengine (fetch)

+upstream git@github.com:open-mmlab/mmengine (push)

+```

+

+```{note}

+Here is a brief introduction to "origin" and "upstream". `git clone` creates a remote named "origin" by default, which points to the repository you cloned from. "upstream" is a remote we add ourselves to point to the official repository; you can give it another name if you prefer. Usually, we push code to "origin". If the pushed code conflicts with the latest code in the official repository ("upstream"), pull the latest code from upstream, resolve the conflicts, and push to "origin" again. The open Pull Request will then be updated automatically.

+```
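Checking the `git remote -v` listing by eye is usually enough, but the same check can be scripted. The sketch below is a hypothetical helper that parses `git remote -v` output into a dictionary, using the sample listing above as input. It assumes each line has the `<name> <url> (fetch|push)` shape shown there.

```python
import subprocess
from typing import Dict

# Hypothetical helpers for illustration; not part of MMEngine.


def parse_remotes(output: str) -> Dict[str, Dict[str, str]]:
    """Parse ``git remote -v`` output into {remote: {"fetch": url, "push": url}}."""
    remotes: Dict[str, Dict[str, str]] = {}
    for line in output.splitlines():
        # Each line looks like "<name> <url> (fetch)" or "<name> <url> (push)";
        # str.split() handles both the tab and the space separators.
        parts = line.split()
        if len(parts) != 3:
            continue
        name, url, kind = parts
        remotes.setdefault(name, {})[kind.strip("()")] = url
    return remotes


def current_remotes() -> Dict[str, Dict[str, str]]:
    """Run ``git remote -v`` in the current directory and parse the result."""
    result = subprocess.run(["git", "remote", "-v"],
                            capture_output=True, text=True, check=True)
    return parse_remotes(result.stdout)


SAMPLE = """\
origin git@github.com:{username}/mmengine.git (fetch)
origin git@github.com:{username}/mmengine.git (push)
upstream git@github.com:open-mmlab/mmengine (fetch)
upstream git@github.com:open-mmlab/mmengine (push)
"""

if __name__ == "__main__":
    print("upstream" in parse_remotes(SAMPLE))
```

Inside your clone, `"upstream" in current_remotes()` answers the same question as reading the listing.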

+

+#### 2. Configure pre-commit

+

+Configure [pre-commit](https://pre-commit.com/#intro) in your local development environment to make sure the code style matches that of OpenMMLab. **Note**: Run the following commands in the mmengine directory.

+

+```shell

+pip install -U pre-commit

+pre-commit install

+```

+

+Check that pre-commit is configured successfully, and install the hooks defined in `.pre-commit-config.yaml`.

+

+```shell

+pre-commit run --all-files

+```

+


+If the installation process is interrupted, you can rerun `pre-commit run ...` to resume the installation.

+

+If the code does not conform to the code style specification, pre-commit will raise a warning and fix some of the errors automatically.

+


+To commit code without running the pre-commit hook, use the `--no-verify` option (**only for temporary commits**).

+

+```shell

+git commit -m "xxx" --no-verify

+```

+

+#### 3. Create a development branch

+

+After configuring pre-commit, create a branch based on the master branch to develop the new feature or fix the bug. The recommended branch name format is `username/pr_name`.

+

+```shell

+git checkout -b yhc/refactor_contributing_doc

+```

+

+If the master branch of your local repository falls behind the master branch of "upstream" during later development, synchronize it with the following command before running the branch-creation command above:

+

+```shell

+git pull upstream master

+```
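If you create many branches, a small check can keep their names consistent. The sketch below is a hypothetical helper, not part of MMEngine. It tests a name against the `username/pr_name` convention. The exact character rules in the regular expression are assumptions, because the guide only gives the overall shape. The helper checks the naming convention only, not every rule git applies to ref names.

```python
import re

# Hypothetical branch-name check; not part of MMEngine.
# Assumed rules: a GitHub-style username (letters, digits, inner hyphens),
# one slash, then a PR name of letters, digits, "_" or "-".
BRANCH_RE = re.compile(
    r"[A-Za-z0-9](?:[A-Za-z0-9-]*[A-Za-z0-9])?"  # username
    r"/"
    r"[A-Za-z0-9][A-Za-z0-9_-]*"                 # pr_name
)


def is_valid_branch_name(name: str) -> bool:
    """Return True if ``name`` follows the ``username/pr_name`` convention."""
    return BRANCH_RE.fullmatch(name) is not None


if __name__ == "__main__":
    for name in ("yhc/refactor_contributing_doc", "master"):
        print(name, is_valid_branch_name(name))
```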

+

+#### 4. Commit the code and pass the unit test

+

+- MMEngine uses mypy for static type checking to make the code more robust, so we need to add type hints to our code and pass the mypy check. If you are not familiar with type hints, refer to the [`typing` module documentation](https://docs.python.org/3/library/typing.html).

+

+- The committed code should pass the unit tests:

+

+ ```shell

+ # Pass all unit tests

+ pytest tests

+

+ # Pass the unit test of runner

+ pytest tests/test_runner/test_runner.py

+ ```

+

+ If a unit test fails because of missing dependencies, install them by following the [guidance](#unit-test).

+

+- If you modify or add documentation, check the rendered result by following the [guidance](#document-rendering).
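The hypothetical function below illustrates the type-hint requirement. It annotates its parameters and return value, including an `Optional` argument that mypy narrows after the `is not None` check. The function name and its metric keys are illustrative assumptions, not MMEngine APIs.

```python
from typing import Dict, List, Optional

# Hypothetical function used only to illustrate annotated code.


def collect_metrics(
    losses: List[float],
    prefix: Optional[str] = None,
) -> Dict[str, float]:
    """Summarize a list of loss values as mean and maximum."""
    if not losses:
        return {}
    # mypy narrows ``prefix`` from Optional[str] to str inside this branch.
    key = f"{prefix}/" if prefix is not None else ""
    return {
        f"{key}loss_mean": sum(losses) / len(losses),
        f"{key}loss_max": max(losses),
    }


if __name__ == "__main__":
    print(collect_metrics([1.0, 3.0], prefix="train"))
```

Running `mypy` on a file containing only this function should pass. A call such as `collect_metrics(["1.0"])` would be reported, because the list items are `str` rather than `float`.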

+

+#### 5. Push the code to remote

+

+After the code passes the unit tests and the pre-commit checks, push the local commits to the remote. Adding the `-u` option associates the local branch with the remote branch.

+

+```shell

+git push -u origin {branch_name}

+```

+

+This will allow you to use the `git push` command to push code directly next time, without having to specify a branch or the remote repository.

+

+#### 6. Create a Pull Request

+

+(1) Create a Pull Request on GitHub's Pull requests page.

+


+(2) Edit the PR description following the guidelines so that other developers can better understand your changes.

+


+Find more details about Pull Request description in [pull request guidelines](#pr-specs).

+

+**Note**

+

+(a) The Pull Request description should explain the reason for the change, what was changed, and its impact, and it should be linked to the relevant Issue (see the [documentation](https://docs.github.com/en/issues/tracking-your-work-with-issues/linking-a-pull-request-to-an-issue)).

+

+(b) If this is your first contribution, please sign the CLA.

+

+(c) Check whether the Pull Request passes CI.

+

+MMEngine runs the unit tests for every posted Pull Request on different platforms (Linux, Windows, macOS) and with different versions of Python, PyTorch and CUDA to make sure the code is correct. If a check fails, click its `Details` link to see the test log, and then fix the code accordingly.

+

+(3) Once the Pull Request passes CI, wait for review from other developers. Revise the code according to the reviewers' comments and repeat steps [4](#4-commit-the-code-and-pass-the-unit-test)-[5](#5-push-the-code-to-remote) until all reviewers approve it. We will then merge it as soon as possible.

+

+#### 7. Resolve conflicts

+

+If your local branch conflicts with the latest master branch of "upstream", you need to resolve the conflicts. There are two ways to do this:

+

+```shell

+git fetch --all --prune

+git rebase upstream/master

+```

+

+or

+

+```shell

+git fetch --all --prune

+git merge upstream/master

+```

+

+If you are comfortable handling conflicts, use `rebase`, which keeps the commit history tidy. If you are not familiar with `rebase`, use `merge` instead.
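The `rebase` route can be sketched end to end with throwaway repositories: bare repositories stand in for `upstream` and your fork, and a third working copy plays a maintainer who advances upstream master. File names, the branch name `yhc/demo_feature` and the identity are placeholders. One step the text above does not spell out: a rebase rewrites the commits on your branch, so a branch that was already pushed for a PR has to be force-pushed. The sketch uses `--force-with-lease`, which refuses to overwrite the remote branch if it changed since your last fetch. The `merge` route needs no force-push.

```shell
#!/bin/sh
set -eu

# Throwaway identity so the demo commits work without touching your git config.
export GIT_AUTHOR_NAME=demo GIT_AUTHOR_EMAIL=demo@example.com
export GIT_COMMITTER_NAME=demo GIT_COMMITTER_EMAIL=demo@example.com

WORK=$(mktemp -d)

# Bare repositories standing in for the official repo ("upstream") and your fork ("origin").
for repo in upstream fork; do
  git init -q --bare "$WORK/$repo.git"
  git --git-dir="$WORK/$repo.git" symbolic-ref HEAD refs/heads/master
done

# A maintainer's working copy that creates commits on upstream master.
git init -q "$WORK/maintainer"
git -C "$WORK/maintainer" symbolic-ref HEAD refs/heads/master
echo "v1" > "$WORK/maintainer/README.md"
git -C "$WORK/maintainer" add README.md
git -C "$WORK/maintainer" commit -q -m "initial commit"
git -C "$WORK/maintainer" push -q "$WORK/upstream.git" master
git -C "$WORK/maintainer" push -q "$WORK/fork.git" master

# Your clone of the fork, with a feature branch already pushed for a PR.
git clone -q "$WORK/fork.git" "$WORK/local"
git -C "$WORK/local" remote add upstream "$WORK/upstream.git"
git -C "$WORK/local" checkout -q -b yhc/demo_feature
echo "feature" > "$WORK/local/feature.txt"
git -C "$WORK/local" add feature.txt
git -C "$WORK/local" commit -q -m "[Feature] Add feature.txt"
git -C "$WORK/local" push -q -u origin yhc/demo_feature

# Upstream master moves on after the branch was created.
echo "v2" > "$WORK/maintainer/README.md"
git -C "$WORK/maintainer" commit -q -am "Update README"
git -C "$WORK/maintainer" push -q "$WORK/upstream.git" master

# Replay the branch's commits on top of the latest upstream master.
git -C "$WORK/local" fetch -q --all --prune
git -C "$WORK/local" rebase -q upstream/master
# On a real conflict git stops here; edit the conflicted files, then run
#   git add <resolved files> && git rebase --continue

# The rebase rewrote the branch, so the PR branch must be force-pushed.
git -C "$WORK/local" push -q --force-with-lease
```

Afterwards the feature commit sits directly on top of the latest upstream master, and the PR branch on the fork points at the rebased commit.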

+

+### Guidance

+

+#### Unit test

+

+Make sure the committed code does not decrease the unit-test coverage. Run the following commands to check the coverage:

+

+```shell

+python -m coverage run -m pytest /path/to/test_file

+python -m coverage html

+# open htmlcov/index.html in a browser to view the report

+```

+

+#### Document rendering

+

+If documents are modified or added, check the rendering result. Install the documentation dependencies and run the following commands to build the documents:

+

+```shell

+pip install -r requirements/docs.txt

+cd docs/zh_cn/

+# or docs/en

+make html

+# open _build/html/index.html (docs/zh_cn/_build/html/index.html from the repository root) in a browser

+```

+

+### Python Code style

+

+We adopt [PEP8](https://www.python.org/dev/peps/pep-0008/) as the preferred code style.

+

+We use the following tools for linting and formatting:

+

+- [flake8](https://github.com/PyCQA/flake8): A wrapper around some linter tools.

+- [isort](https://github.com/timothycrosley/isort): A Python utility to sort imports.

+- [yapf](https://github.com/google/yapf): A formatter for Python files.

+- [codespell](https://github.com/codespell-project/codespell): A Python utility to fix common misspellings in text files.

+- [mdformat](https://github.com/executablebooks/mdformat): Mdformat is an opinionated Markdown formatter that can be used to enforce a consistent style in Markdown files.

+- [docformatter](https://github.com/myint/docformatter): A formatter for docstrings.

+

+Style configurations of yapf and isort can be found in [setup.cfg](./setup.cfg).

+

+We use a [pre-commit hook](https://pre-commit.com/) that, on every commit, automatically checks and formats the code with `flake8`, `yapf` and `isort`, checks `trailing whitespaces` and `markdown files`,

+fixes `end-of-files`, `double-quoted-strings`, `python-encoding-pragma` and `mixed-line-ending`, and sorts `requirements.txt`.

+The config for the pre-commit hook is stored in [.pre-commit-config.yaml](./.pre-commit-config.yaml).

+

+### PR Specs

+

+1. Use the [pre-commit](https://pre-commit.com) hook to avoid code style issues

+

+2. Each short-lived branch should correspond to exactly one PR

+

+3. Keep each PR focused on one specific change. Avoid large PRs

+

+ - Bad: Support Faster R-CNN

+ - Acceptable: Add a box head to Faster R-CNN

+ - Good: Add a parameter to box head to support custom conv-layer number

+

+4. Write clear and meaningful commit messages

+

+5. Provide clear and meaningful PR description

+

+ - State the task in the title. The general format is: \[Prefix\] Short description of the PR (Suffix)

+ - Prefix: \[Feature\] for a new feature, \[Fix\] for a bug fix, \[Docs\] for documentation changes, \[WIP\] for work in progress (which will not be reviewed for the time being)

+ - Summarize the main changes, results and impact on other modules in the description

+ - Associate related issues and pull requests with a milestone
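The title convention above is regular enough to check mechanically. The function below is a hypothetical helper written for illustration, not part of MMEngine's tooling or CI; it accepts only the four prefixes listed above and requires a short description after the prefix.

```shell
#!/bin/sh
# check_pr_title is a hypothetical helper for illustration only; it is not
# part of MMEngine's tooling. It succeeds (exit status 0) when the title
# starts with one of the documented prefixes, a space, and a description.
check_pr_title() {
  printf '%s\n' "$1" | grep -Eq '^\[(Feature|Fix|Docs|WIP)] [^ ]'
}

for title in "[Docs] Fix typos in the contributing guide" "Support Faster R-CNN"; do
  if check_pr_title "$title"; then
    echo "ok:  $title"
  else
    echo "bad: $title"
  fi
done
```

The loop reports the first example title as `ok` and the second as `bad`, since it lacks a prefix. The optional `(Suffix)` is not checked here.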

diff --git a/testbed/open-mmlab__mmengine/docs/en/switch_language.md b/testbed/open-mmlab__mmengine/docs/en/switch_language.md

new file mode 100644

index 0000000000000000000000000000000000000000..e47b751e33928f59c4bf1aea94110493214754ea

--- /dev/null

+++ b/testbed/open-mmlab__mmengine/docs/en/switch_language.md

@@ -0,0 +1,3 @@

+## English

+

+## 简体中文

diff --git a/testbed/open-mmlab__mmengine/docs/en/tutorials/dataset.md b/testbed/open-mmlab__mmengine/docs/en/tutorials/dataset.md

new file mode 100644

index 0000000000000000000000000000000000000000..b1a1c8ff945b63e4212e23eb51b000c88ca69e5a

--- /dev/null

+++ b/testbed/open-mmlab__mmengine/docs/en/tutorials/dataset.md

@@ -0,0 +1,200 @@

+# Dataset and DataLoader

+

+```{hint}

+If you have never been exposed to PyTorch's Dataset and DataLoader classes, you are recommended to read through [PyTorch official tutorial](https://pytorch.org/tutorials/beginner/basics/data_tutorial.html) to get familiar with some basic concepts.

+```

+