Add 1 files

Browse files- 2407/2407.09781.md +391 -0

2407/2407.09781.md

ADDED

@@ -0,0 +1,391 @@

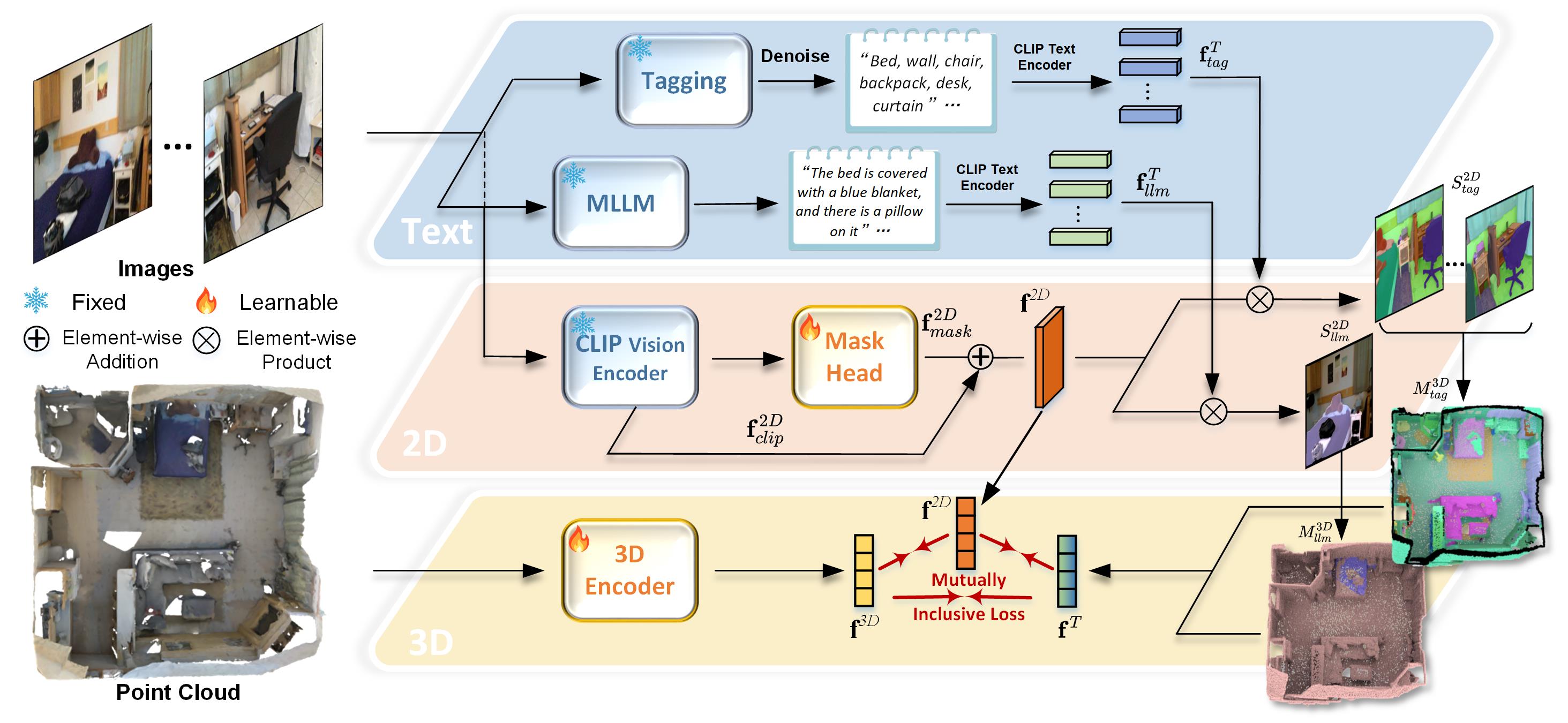

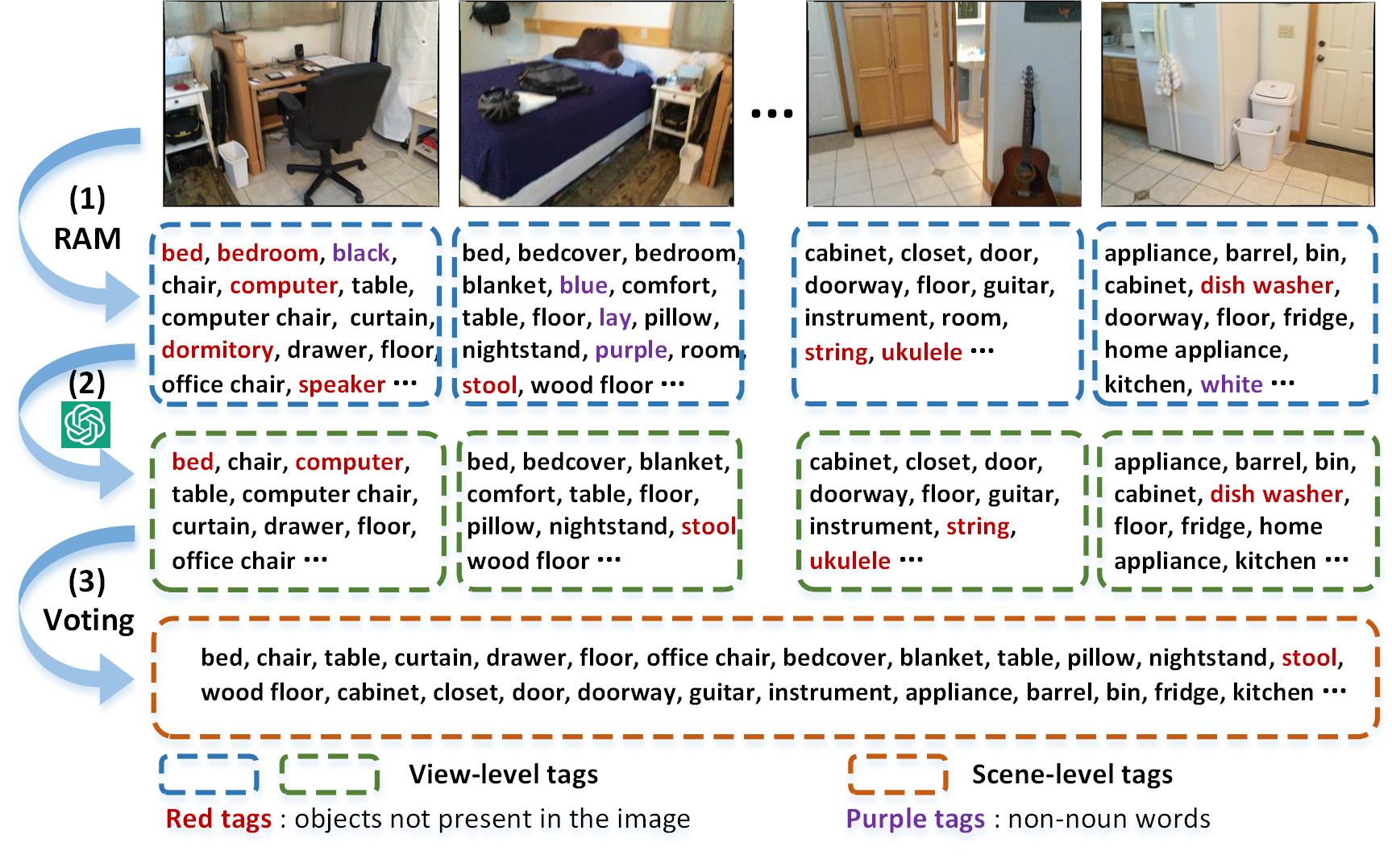

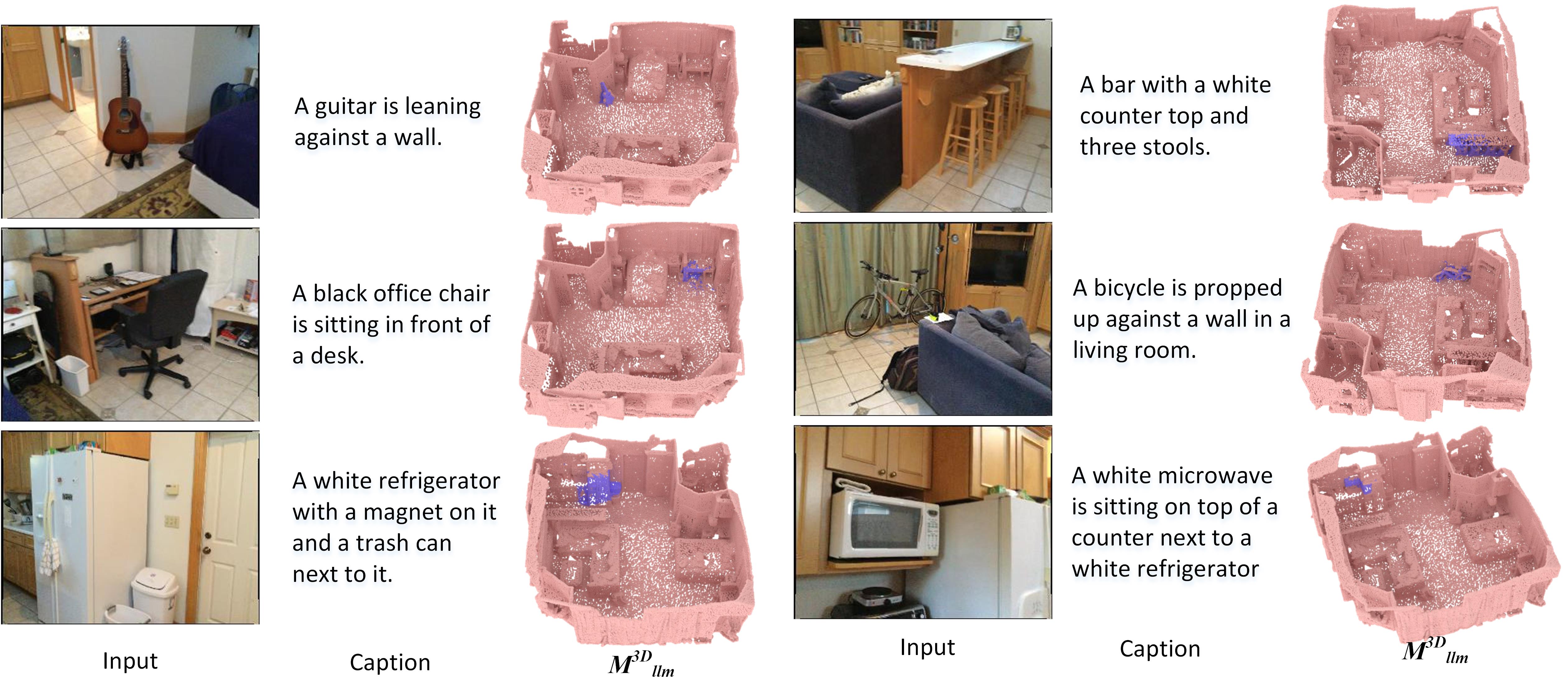

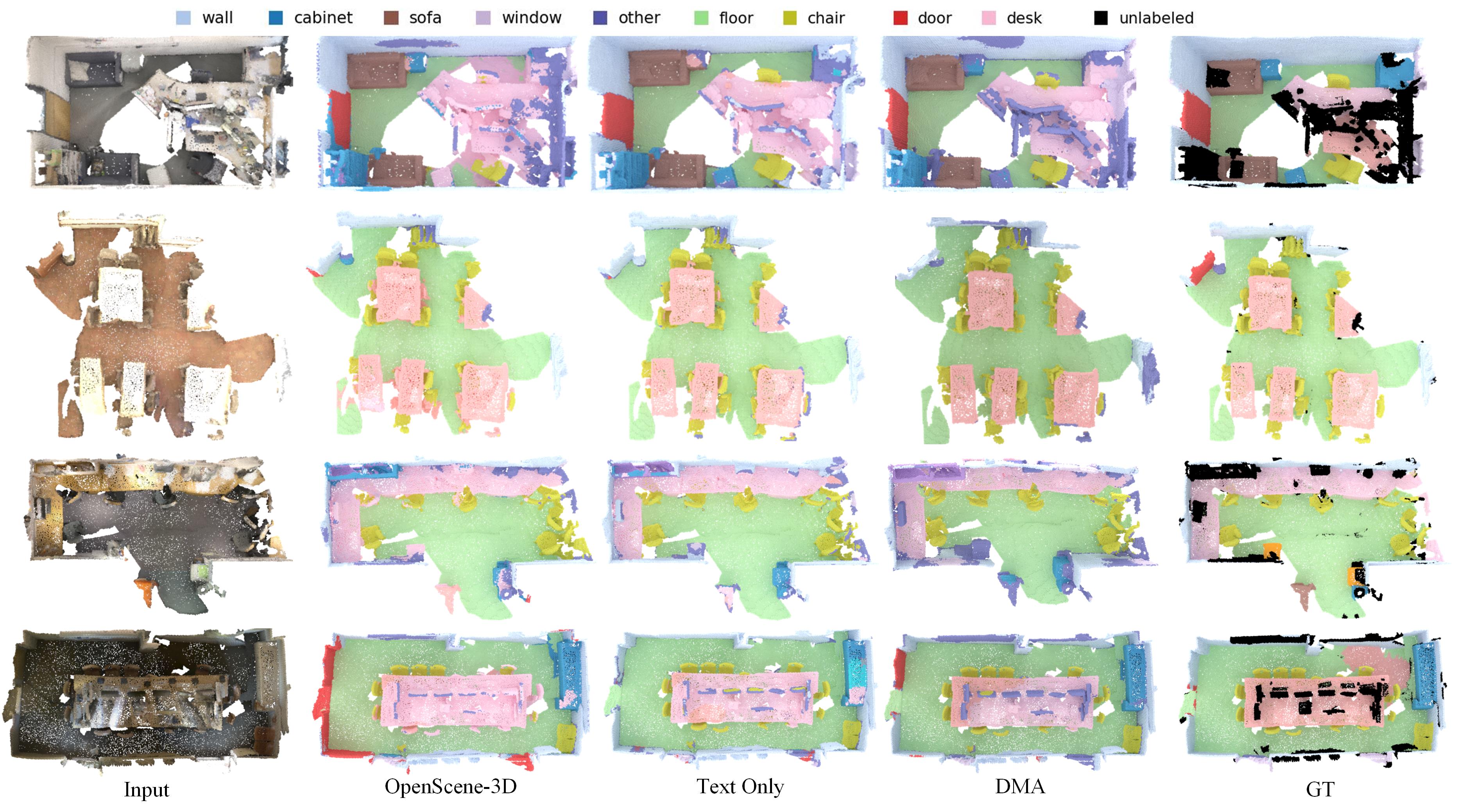

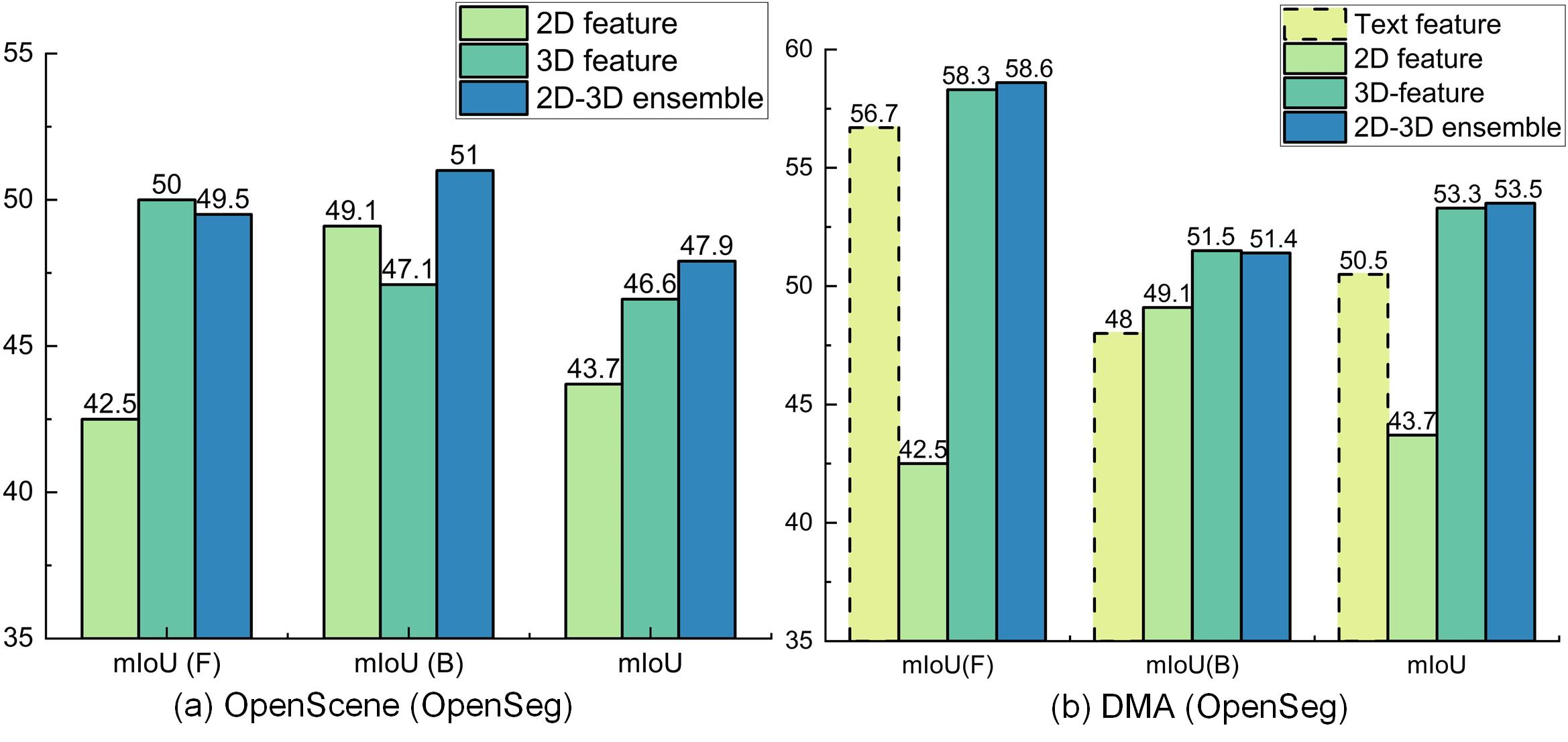

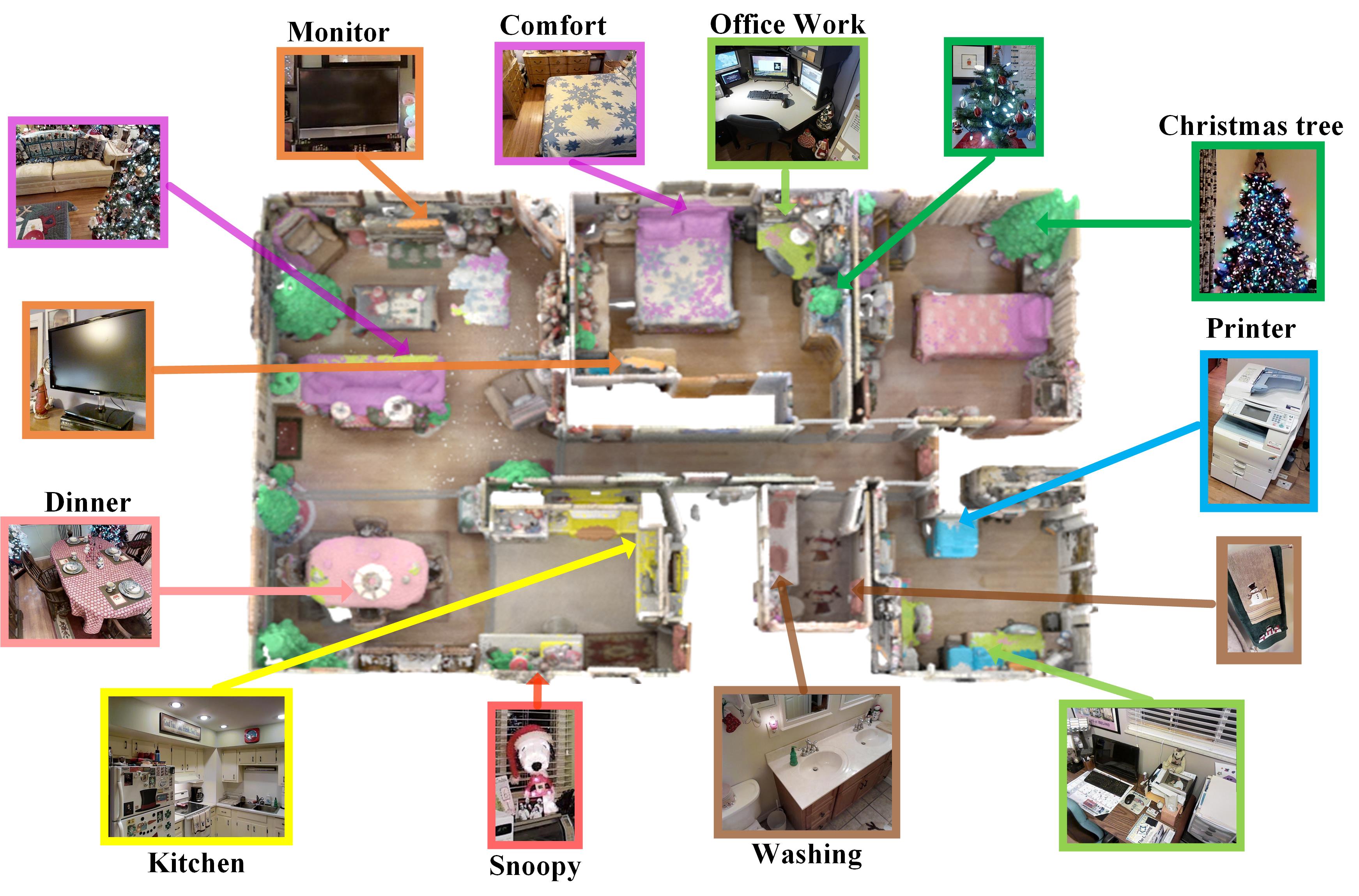

Title: Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding

URL Source: https://arxiv.org/html/2407.09781

Published Time: Tue, 16 Jul 2024 00:19:34 GMT

Markdown Content:

¹ Hong Kong Polytechnic University ² Johns Hopkins University

Email: {csrhli, cslzhang}@comp.polyu.edu.hk, vpatel36@jhu.edu

[https://github.com/lslrh/DMA](https://github.com/lslrh/DMA)

Zhengqiang Zhang¹, Chenhang He¹, Zhiyuan Ma¹,

Vishal M. Patel², Lei Zhang (🖄)¹

###### Abstract

Recent vision-language pre-training models have exhibited remarkable generalization ability in zero-shot recognition tasks. Previous open-vocabulary 3D scene understanding methods mostly focus on training 3D models using either image or text supervision while neglecting the collective strength of all modalities. In this work, we propose a Dense Multimodal Alignment (DMA) framework to densely co-embed different modalities into a common space for maximizing their synergistic benefits. Instead of extracting coarse view- or region-level text prompts, we leverage large vision-language models to extract complete category information and scalable scene descriptions to build the text modality, and take image modality as the bridge to build dense point-pixel-text associations. Besides, in order to enhance the generalization ability of the 2D model for downstream 3D tasks without compromising the open-vocabulary capability, we employ a dual-path integration approach to combine frozen CLIP visual features and learnable mask features. Extensive experiments show that our DMA method produces highly competitive open-vocabulary segmentation performance on various indoor and outdoor tasks.

###### Keywords:

3D scene understanding · Open-vocabulary · Multimodal alignment

1 Introduction
--------------

3D scene understanding, which aims to achieve accurate comprehension of objects as well as their attributes and relationships within a scene, has gained significant attention in recent years due to its popular applications in autonomous driving[[32](https://arxiv.org/html/2407.09781v1#bib.bib32)], virtual reality (VR)[[2](https://arxiv.org/html/2407.09781v1#bib.bib2), [40](https://arxiv.org/html/2407.09781v1#bib.bib40), [50](https://arxiv.org/html/2407.09781v1#bib.bib50)] and robot navigation[[3](https://arxiv.org/html/2407.09781v1#bib.bib3)], _etc_. However, the annotation of large-scale 3D data is very costly [[7](https://arxiv.org/html/2407.09781v1#bib.bib7), [11](https://arxiv.org/html/2407.09781v1#bib.bib11)], impeding the training of generalizable models for open-vocabulary scene understanding. Though many existing methods[[10](https://arxiv.org/html/2407.09781v1#bib.bib10), [20](https://arxiv.org/html/2407.09781v1#bib.bib20), [58](https://arxiv.org/html/2407.09781v1#bib.bib58), [46](https://arxiv.org/html/2407.09781v1#bib.bib46), [41](https://arxiv.org/html/2407.09781v1#bib.bib41), [31](https://arxiv.org/html/2407.09781v1#bib.bib31), [29](https://arxiv.org/html/2407.09781v1#bib.bib29), [30](https://arxiv.org/html/2407.09781v1#bib.bib30), [9](https://arxiv.org/html/2407.09781v1#bib.bib9)] have achieved significant advancements in recognizing closed-set categories for specific tasks, they fail to identify novel categories and other types of queries[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)] without 3D supervision, hindering the application of existing 3D scene understanding methods to real-world settings, where the number of possible classes is unlimited.

In contrast to the limited 3D data, modalities such as images and texts are more abundantly available. Existing pre-trained multimodal models, such as CLIP[[43](https://arxiv.org/html/2407.09781v1#bib.bib43)] and ALIGN[[24](https://arxiv.org/html/2407.09781v1#bib.bib24)], have shown impressive zero-shot recognition ability by training on large-scale noisy image-text pairs, and have been successfully adapted for open-vocabulary classification[[53](https://arxiv.org/html/2407.09781v1#bib.bib53), [54](https://arxiv.org/html/2407.09781v1#bib.bib54)], detection[[38](https://arxiv.org/html/2407.09781v1#bib.bib38), [5](https://arxiv.org/html/2407.09781v1#bib.bib5)] and segmentation tasks[[33](https://arxiv.org/html/2407.09781v1#bib.bib33), [52](https://arxiv.org/html/2407.09781v1#bib.bib52), [47](https://arxiv.org/html/2407.09781v1#bib.bib47)]. Based on these observations, researchers have attempted to use image or natural language modalities to provide supervisory signals for learning 3D representations[[55](https://arxiv.org/html/2407.09781v1#bib.bib55), [13](https://arxiv.org/html/2407.09781v1#bib.bib13), [42](https://arxiv.org/html/2407.09781v1#bib.bib42), [36](https://arxiv.org/html/2407.09781v1#bib.bib36)]. Some methods use fixed 2D features as supervision and distill the knowledge from either the pre-trained 2D encoder of CLIP[[36](https://arxiv.org/html/2407.09781v1#bib.bib36)] or 2D open-vocabulary segmentation (OVSeg) models[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)] into 3D representations (NeRF or point clouds). However, they overlook the fact that 3D models can in turn enhance 2D models by leveraging the strong 3D structural information. Besides, the 2D OVSeg models compromise their open-vocabulary ability since they are primarily fine-tuned on in-vocabulary datasets. 
There are also some methods that directly align 3D features to semantic captions[[55](https://arxiv.org/html/2407.09781v1#bib.bib55), [13](https://arxiv.org/html/2407.09781v1#bib.bib13), [45](https://arxiv.org/html/2407.09781v1#bib.bib45)]. However, they only capture coarse image- or region-level descriptions without establishing dense point-to-text correspondences or exploiting image features that involve rich semantic contexts and more variations. Though some methods[[53](https://arxiv.org/html/2407.09781v1#bib.bib53), [54](https://arxiv.org/html/2407.09781v1#bib.bib54)] simultaneously leverage visual and textual supervisions, they only conduct coarse multimodal alignment for object-level point cloud classification.

In order to leverage the synergistic benefits of multiple modalities for dense prediction tasks, we propose a dense multimodal alignment (DMA) strategy to co-embed 3D points, image pixels, and text strings into a shared latent space. To build dense associations across different modalities, the primary bottleneck is how to obtain rich and reliable text descriptions without relying on manual labeling. To this end, we generate two types of prompts using large Vision-Language Models (VLMs). Firstly, we employ a tagging model such as RAM[[57](https://arxiv.org/html/2407.09781v1#bib.bib57)] to detect as many categories as possible from an image, ensuring alignment with complete semantic patterns. Secondly, considering that category names might not provide sufficient details and contextual information, we incorporate Multimodal Large Language Models (MLLMs) such as LLaVA[[35](https://arxiv.org/html/2407.09781v1#bib.bib35)] to generate linguistically expressible scene descriptions, thereby enhancing the scalability of text queries. In addition, we use GPT to filter out noise in the generated texts to improve their reliability. As a result, we establish a highly scalable and informative text modality, enhancing the overall understanding of 3D scenes.

As for the image modality, we adopt a dual-path integration strategy to extract robust 2D features as supervision. Specifically, we employ FC-CLIP[[56](https://arxiv.org/html/2407.09781v1#bib.bib56)] as the feature extractor. On the one hand, we freeze its CLIP visual encoder to maintain the open-world recognition ability. On the other hand, by fine-tuning its mask head, we incorporate 3D structural priors into 2D features, better adapting the model to 3D dense tasks. Then we build triplets of points, pixels, and their corresponding texts by taking the image modality as the bridge. Given the generated triplets of different modalities and their dense correspondences, we finally adopt a mutually inclusive loss function to align multiple modalities. In this way, we can effectively unleash the potential of existing foundation VLMs and maximize the complementary effects of multiple modalities.

In summary, (1) we first present a dense multimodal alignment framework, which establishes dense correspondences among points, pixels and texts, to learn robust 3D representations for open-vocabulary 3D scene understanding. (2) To generate complete and scalable language modality without relying on manual annotations, we leverage a tagging model and an MLLM to extract category information and scene descriptions, respectively. (3) Finally, to improve the segmentation ability without compromising the open-vocabulary ability, we integrate 3D priors into 2D features by fine-tuning the 2D mask head with the backbone frozen. Extensive experiments demonstrate the outstanding open-world 3D segmentation ability of our DMA model on various indoor and outdoor tasks.

2 Related Work
--------------

Open-Vocabulary 3D Scene Understanding. 3D scene understanding is a popular research topic in computer vision. Most previous methods[[10](https://arxiv.org/html/2407.09781v1#bib.bib10), [15](https://arxiv.org/html/2407.09781v1#bib.bib15), [19](https://arxiv.org/html/2407.09781v1#bib.bib19), [20](https://arxiv.org/html/2407.09781v1#bib.bib20), [58](https://arxiv.org/html/2407.09781v1#bib.bib58)] focus on training models on manually labeled closed-set categories, and have yielded promising performance on popular 3D benchmarks[[11](https://arxiv.org/html/2407.09781v1#bib.bib11), [4](https://arxiv.org/html/2407.09781v1#bib.bib4)]. However, most of these methods are designed for a specific task, such as object classification[[51](https://arxiv.org/html/2407.09781v1#bib.bib51)], detection[[8](https://arxiv.org/html/2407.09781v1#bib.bib8)], or semantic/instance segmentation[[58](https://arxiv.org/html/2407.09781v1#bib.bib58), [10](https://arxiv.org/html/2407.09781v1#bib.bib10), [15](https://arxiv.org/html/2407.09781v1#bib.bib15)], and they cannot identify novel categories, restricting their application to real-world settings. To overcome this limitation, recent works have focused on the open-vocabulary scene understanding problem[[36](https://arxiv.org/html/2407.09781v1#bib.bib36)]. Rozenberszki _et al_.[[45](https://arxiv.org/html/2407.09781v1#bib.bib45)] proposed a language-driven pre-training method that enforces 3D features to be close to text embeddings, and then fine-tunes the 3D encoder with ground-truth annotations. PLA[[13](https://arxiv.org/html/2407.09781v1#bib.bib13)] and RegionPLC[[55](https://arxiv.org/html/2407.09781v1#bib.bib55)] explicitly associate 3D points with image- and region-level captions, respectively. However, existing image captioning models can only identify sparse and salient objects while missing other important categories. Besides, textual signals lack variations and contexts, making them insufficient for dense prediction tasks. 
Some methods[[42](https://arxiv.org/html/2407.09781v1#bib.bib42), [36](https://arxiv.org/html/2407.09781v1#bib.bib36)] distill knowledge from large-scale pre-trained 2D models, such as image-text contrastive learning models[[43](https://arxiv.org/html/2407.09781v1#bib.bib43)] and open-vocabulary segmentation models[[33](https://arxiv.org/html/2407.09781v1#bib.bib33), [52](https://arxiv.org/html/2407.09781v1#bib.bib52), [14](https://arxiv.org/html/2407.09781v1#bib.bib14), [56](https://arxiv.org/html/2407.09781v1#bib.bib56)]. However, the performance of pre-trained models drops a lot on the downstream datasets due to the large domain shift. These methods also overlook the fact that 3D models can in turn enhance 2D models by leveraging the strong structural information inherent in 3D data.

Figure 1: Framework of our proposed Dense Multimodal Alignment (DMA) method. We generate comprehensive language modality data by leveraging a tagging model and an MLLM. As for 2D modality, we fix the CLIP visual backbone ${\bf f}^{2D}_{clip}$ but finetune the mask head ${\bf f}^{2D}_{mask}$ for better adaptation to downstream 3D tasks without compromising the open-vocabulary ability. Then the dense correspondences between pixels ${\bf f}^{2D}$ and texts ${\bf f}^{T}_{tag}$/${\bf f}^{T}_{llm}$ can be built by computing their feature similarities, resulting in semantic score maps $S^{2D}_{tag}$/$S^{2D}_{llm}$. By taking image modality as the bridge, we back-project text labels to each point and obtain the 3D label maps $M^{3D}_{tag}$/$M^{3D}_{llm}$. Finally, we co-embed point ${\bf f}^{3D}$, pixel ${\bf f}^{2D}$, and text embeddings ${\bf f}^{T}$ into a common space to learn a robust 3D representation by optimizing the mutually inclusive loss function.

Vision-Language Foundation Models. Recent vision-language foundation models have exhibited remarkable generalization ability on zero-shot prediction tasks. Segment Anything Model (SAM)[[26](https://arxiv.org/html/2407.09781v1#bib.bib26)] leads a new trend of universal image segmentation and exhibits promising results on diverse downstream tasks. Recognize Anything Model (RAM)[[57](https://arxiv.org/html/2407.09781v1#bib.bib57)] presents a novel paradigm for image tagging (multi-label classification) by leveraging large-scale image-text pairs for training without manual annotations. The recent success of ChatGPT and GPT4 has stimulated tremendous interest in developing multimodal large language models (MLLMs). LLaVA[[35](https://arxiv.org/html/2407.09781v1#bib.bib35)] is an early exploration of applying LLMs to the multimodal field by connecting a vision encoder to an LLM for general-purpose visual and language understanding. Recent open-vocabulary methods[[52](https://arxiv.org/html/2407.09781v1#bib.bib52), [33](https://arxiv.org/html/2407.09781v1#bib.bib33), [56](https://arxiv.org/html/2407.09781v1#bib.bib56)] shed light on the direct use of pre-trained foundation models for handling different visual tasks. ODISE[[52](https://arxiv.org/html/2407.09781v1#bib.bib52)] explores the potential of pre-trained text-to-image diffusion models[[44](https://arxiv.org/html/2407.09781v1#bib.bib44)] for open-vocabulary panoptic segmentation. FC-CLIP[[56](https://arxiv.org/html/2407.09781v1#bib.bib56)] utilizes a shared frozen convolutional CLIP backbone to maintain the ability of open-vocabulary classification without compromising accuracy.

3 Method
--------

As illustrated in Fig.[1](https://arxiv.org/html/2407.09781v1#S2.F1 "Figure 1 ‣ 2 Related Work ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), we propose a dense multimodal alignment (DMA) framework for open-vocabulary 3D scene understanding, where we construct dense correspondences across 2D image pixels, 3D points and 1D texts, and embed them into a common latent space. In this section, we describe how the text and image modalities are constructed, and explain how we associate and align them in a dense manner.

### 3.1 Comprehensive Text Modality Generation

Learning a robust 3D model that is generalizable to open vocabularies is challenging since it is unclear how to acquire the dense text labels for point clouds. Although well-trained human annotators could potentially provide detailed language descriptions of 3D scenes, such a method is costly and lacks scalability. To overcome this limitation, we leverage a tagging model and an MLLM to extract complete category information and scalable scene descriptions, respectively.

Complete Category Information. The scene tagging process is illustrated in Fig.[2](https://arxiv.org/html/2407.09781v1#S3.F2 "Figure 2 ‣ 3.1 Comprehensive Text Modality Generation ‣ 3 Method ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"). Firstly, we use an image tagging foundation model such as RAM[[23](https://arxiv.org/html/2407.09781v1#bib.bib23)] to extract all possible categories from an image, and utilize category names and short descriptions derived from the metadata as the text query, referred to as $T_{tag}$, such as “There is a {category name} in the scene”, “A photo of a {category name}”, _etc_. Unlike image captioning models[[28](https://arxiv.org/html/2407.09781v1#bib.bib28), [1](https://arxiv.org/html/2407.09781v1#bib.bib1)] that can only identify sparse and salient objects in a scene, RAM can recognize as many tags as possible without missing important parts, ensuring a high recall rate and alignment with complete semantic patterns. The more complete and accurate the detected categories are, the more easily we can establish precise dense correspondences between text and 3D modalities, and hence the open-vocabulary capability of the 3D model can be enhanced.
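
As a rough illustration of how tags could be expanded into text queries, the sketch below fills the two prompt templates quoted above. The names `PROMPT_TEMPLATES` and `build_tag_queries` are hypothetical, and the actual template set used for $T_{tag}$ may well be larger.

```python
# Illustrative sketch only: the template list and function name are assumptions,
# built from the two example prompts quoted in the text.
PROMPT_TEMPLATES = [
    "There is a {category} in the scene",
    "A photo of a {category}",
]


def build_tag_queries(tags):
    """Expand each tag into one text query per template, preserving tag order."""
    queries = {}
    for tag in tags:
        queries[tag] = [t.format(category=tag) for t in PROMPT_TEMPLATES]
    return queries


queries = build_tag_queries(["chair", "bookshelf"])
print(queries["chair"][0])  # There is a chair in the scene
```

Each resulting string would then be passed to a text encoder; that step is omitted here because it depends on the specific CLIP implementation.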

Figure 2: Scene tagging generation. (1) We first employ RAM[[57](https://arxiv.org/html/2407.09781v1#bib.bib57)] to generate view-level tags, and then (2) reduce the tag noise with GPT. Finally, scene-level tags are generated by (3) multi-view voting.

Reliable GPT-based Denoising. While RAM recognizes many tags, some redundant or irrelevant tags are also included, such as non-noun words (“purple”, “blue”, “lay”, _etc_.) and objects that do not exist in the image (“bed”, “ukulele”, _etc_.), as shown in Fig.[2](https://arxiv.org/html/2407.09781v1#S3.F2 "Figure 2 ‣ 3.1 Comprehensive Text Modality Generation ‣ 3 Method ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"). We address this issue in two steps. Firstly, we utilize GPT to filter out potentially noisy vocabulary. Given the input list, we instruct GPT to examine the words one by one and perform reasoning according to the chain of thought, outputting a boolean list indicating whether each word is an outlier. Please refer to Fig. 1 of the supplemental material for the detailed instructions and examples used to reduce the noisy tags. Secondly, to reduce the number of non-existent categories in a scene, we conduct multi-view voting and discard categories that appear in fewer than five views. Please refer to Fig. 2 of the supplemental material for the denoised scene tagging results and the corresponding visualizations of 3D label maps.
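
The two denoising steps can be sketched as follows. This is a minimal illustration, not the released implementation: the function names are hypothetical, the boolean outlier flags stand in for the list returned by GPT, and only the five-view cutoff comes from the text.

```python
from collections import Counter

MIN_VIEWS = 5  # the text discards categories seen in fewer than five views


def drop_outliers(tags, is_outlier):
    """Keep tags whose flag is False; `is_outlier` mimics the boolean list returned by GPT."""
    if len(tags) != len(is_outlier):
        raise ValueError("tags and is_outlier must have the same length")
    return [t for t, bad in zip(tags, is_outlier) if not bad]


def vote_scene_tags(view_tags, min_views=MIN_VIEWS):
    """Return tags that occur in at least `min_views` distinct views, sorted by name."""
    counts = Counter()
    for tags in view_tags:
        counts.update(set(tags))  # count each tag at most once per view
    return sorted(t for t, n in counts.items() if n >= min_views)


# Toy data: six views of one scene; the outlier flags are made up for illustration.
views = [["chair", "table", "purple"]] * 5 + [["chair", "ukulele"]]
flags = [[False, False, True]] * 5 + [[False, False]]
clean = [drop_outliers(t, f) for t, f in zip(views, flags)]
scene_tags = vote_scene_tags(clean)
print(scene_tags)  # ['chair', 'table']
```

In this toy run, "purple" is removed by the outlier flags, while "ukulele" passes that step but is dropped by voting because it appears in only one view.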

Scalable Scene Description. Although scene-level tags already cover most of the categories, the limited scalability and variation of category names prevent them from providing rich contexts and details. To address this limitation and enable arbitrary queries for 3D networks, we additionally leverage MLLMs such as LLaVA[[35](https://arxiv.org/html/2407.09781v1#bib.bib35)] to generate diverse and linguistically expressible descriptions, denoted by $T_{llm}$. Owing to their exposure to a diverse range of linguistic patterns and contextual nuances, MLLMs can generate comprehensive and in-depth descriptions based on input images. Consequently, these MLLMs can enhance the richness and granularity of the generated textual representations, thereby facilitating a more comprehensive understanding of the 3D scenes. Please refer to Fig. 3 of the supplemental material for examples of scene-level captions and the corresponding visualizations.

Finally, we generate the text embeddings $\textbf{f}^{T}_{tag}$ and $\textbf{f}^{T}_{llm}$ using CLIP text encoder based on the generated tags $T_{tag}$ and scene descriptions $T_{llm}$, respectively, which are utilized to supervise the training of 3D networks subsequently.

Figure 3: Segmentation results using 2D and 3D models. The 2D model has advantages in segmenting background objects (in blue boxes), while the 3D model is more favorable for foreground objects with distinct structures (in red boxes).

### 3.2 Structure-aware Image Feature Extraction

Compared to language modality, the image modality offers a wealth of contextual information and exhibits significant variations among different pixels, which could provide more effective supervision. Inspired by this observation, OpenScene[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)] distills the knowledge from frozen open-vocabulary 2D segmentation models, such as LSeg[[27](https://arxiv.org/html/2407.09781v1#bib.bib27)] and OpenSeg[[14](https://arxiv.org/html/2407.09781v1#bib.bib14)]. However, these methods suffer from two major limitations. Firstly, they are fine-tuned on in-vocabulary datasets, which leads to a misalignment between image and text features and consequently results in poor performance on open-vocabulary categories. Secondly, all of these methods freeze 2D networks, failing to perceive the 3D structure of objects and leading to inaccurate supervision. As shown in Fig.[3](https://arxiv.org/html/2407.09781v1#S3.F3 "Figure 3 ‣ 3.1 Comprehensive Text Modality Generation ‣ 3 Method ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), we visualize the segmentation results using 2D and 3D features. One can observe that although 2D features are more advantageous in segmenting background objects with ambiguous geometry, such as “bookshelf”, “door” and “blackboard”, they are less effective in segmenting objects with distinct shapes, such as “table” and “chair”. Therefore, it is necessary to distill the structural priors of 3D networks into 2D ones as well in order to facilitate fine-grained scene understanding.

In this paper, we adopt FC-CLIP[[56](https://arxiv.org/html/2407.09781v1#bib.bib56)] as the backbone to extract image features. On one hand, we use the frozen CLIP visual encoder to ensure the intactness of image-text alignment, obtaining CLIP features $\textbf{f}^{2D}_{clip}$. On the other hand, to facilitate the synergistic benefits of both 2D and 3D modalities, we fine-tune the mask head and attain the mask features $\textbf{f}^{2D}_{mask}$. In contrast to previous methods that rely on potentially noisy fixed image features for supervision, the fine-tuned mask features enhance the adaptability to downstream 3D tasks. We explore different fine-tuning strategies, such as LoRA[[18](https://arxiv.org/html/2407.09781v1#bib.bib18)], Adapter[[17](https://arxiv.org/html/2407.09781v1#bib.bib17)], and full parameter fine-tuning and compare them in experiments.

### 3.3 Dense Associations across Modalities

Once the text and image modalities are constructed, the subsequent step is to associate each point to its corresponding pixel and text. We utilize the image modality as a bridge to establish separate associations between pixels and other modalities. Firstly, we construct the associations between image and language modalities by taking $C$ different text embeddings $\textbf{f}^{T}=\{\textbf{f}^{T}_{1},\cdots,\textbf{f}^{T}_{C}\}$ as classifier to assign text labels to each pixel, obtaining a 2D semantic score map, denoted by $S^{2D}\in\mathbb{R}^{H\times W\times C}$. This process can be formulated as follows:

$$S^{2D}_{c}(u,v)=\sigma(<\textbf{f}^{2D}(u,v),\textbf{f}^{T}_{c}>/\tau_{1}), \tag{1}$$

where $S^{2D}_{c}(u,v)$ denotes the probability that the pixel at location $(u,v)$ belongs to the $c$-th text label, and $<\cdot>$ represents the cosine similarity between two $\ell_{2}$-normalized feature vectors. $\tau_{1}$ is a temperature parameter. Then we establish the associations between images and point clouds by projecting 3D points $\textbf{p}=(x,y,z)$ onto 2D positions $(u,v)$ using a projection matrix $T\in\mathbb{R}^{3\times 4}$, _i.e_., $[u,v,w]=T[x,y,z,1]$, where $w$ is a scaling factor.
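
The two operations just described can be sketched in plain Python for a single pixel and a single point. Several choices here are assumptions rather than details given in the text: $\sigma$ is taken to be a softmax over the $C$ labels, the temperature value in the example is arbitrary, pixel coordinates are obtained by dividing by the scaling factor $w$ (the usual pinhole convention), and the function names and toy matrix are hypothetical.

```python
import math


def cosine_scores(pixel_feat, text_feats, tau):
    """Eq. (1) for one pixel, assuming sigma is a softmax over the C text labels."""
    def unit(v):
        # l2-normalize; assumes a nonzero vector
        n = math.sqrt(sum(x * x for x in v))
        return [x / n for x in v]

    p = unit(pixel_feat)
    logits = [sum(a * b for a, b in zip(p, unit(t))) / tau for t in text_feats]
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(l - m) for l in logits]
    z = sum(exps)
    return [e / z for e in exps]


def project_point(T, point):
    """Map a 3D point to pixel coordinates with a 3x4 matrix T (homogeneous coordinates)."""
    x, y, z = point
    h = [x, y, z, 1.0]
    u, v, w = (sum(T[r][c] * h[c] for c in range(4)) for r in range(3))
    if w <= 0:
        return None  # point lies behind the camera
    return (u / w, v / w)  # assumed convention: divide by the scaling factor w


# Toy example: two text labels, an arbitrary temperature, and a made-up projection matrix.
scores = cosine_scores([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]], tau=0.5)
T = [[500.0, 0.0, 320.0, 0.0],
     [0.0, 500.0, 240.0, 0.0],
     [0.0, 0.0, 1.0, 0.0]]
pixel = project_point(T, (0.5, -0.25, 2.0))
print(pixel)  # (445.0, 177.5)
```

A practical pipeline would additionally check that the projected position falls inside the image and that the point is not occluded in that view; those checks are left out of this sketch.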


Finally, we associate the text and 3D modalities by taking the image as the bridge. Given $K$ different projection views for one point $\textbf{p}$, we compute its average semantic score, denoted as $\bar{S}^{3D}$, across the $K$ views $[S^{2D}_{1}(u_{1},v_{1}),\cdots,S^{2D}_{K}(u_{K},v_{K})]$. Based on the aggregated 3D semantic scores, the final text-to-3D label map, denoted as $M^{3D}\in\mathbb{R}^{N\times C}$, can be derived by:


$$M^{3D}_{c}=\begin{cases}1, & \text{if}\ \ \bar{S}^{3D}_{c}>\text{threshold}\\ 0, & \text{else}\end{cases},\tag{2}$$


where $N$ denotes the number of points and $M^{3D}_{c}$ indicates whether the point belongs to the $c$-th text label or not. $M^{3D}$ can be regarded as the pseudo label map for the point cloud, serving as the supervision signal for training 3D models.


It is noteworthy that instead of generating a one-hot label through the argmax operation, we select all confident text labels whose scores exceed the threshold. This is because the generated text categories may be semantically similar (like ‘suitcase’ and ‘luggage’) or stand in an inclusion relationship (such as ‘kitchen’ and ‘stove’). As a result, a single point is quite likely to correspond to multiple text labels simultaneously.
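The association steps above can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions: the helper names (`pixel_scores`, `project`, `pseudo_labels`), the toy 2-D embeddings, and the threshold value of 0.5 are ours and are not given in the paper; dividing by $w$ to obtain pixel coordinates is the usual homogeneous-coordinate convention, which we assume here.

```python
import math

def cosine(a, b):
    """Cosine similarity, i.e. the inner product of the l2-normalized vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def pixel_scores(pixel_feat, text_feats, tau=0.1):
    """Eq. (1): one score per text label for a single pixel feature."""
    return [sigmoid(cosine(pixel_feat, t) / tau) for t in text_feats]

def project(T, point):
    """[u, v, w] = T [x, y, z, 1]; dividing by w is an assumed convention."""
    h = list(point) + [1.0]
    u, v, w = (sum(row[i] * h[i] for i in range(4)) for row in T)
    return u / w, v / w

def pseudo_labels(view_scores, threshold=0.5):
    """Average the per-view scores of one point, then apply Eq. (2)."""
    num_views = len(view_scores)
    num_labels = len(view_scores[0])
    mean = [sum(s[c] for s in view_scores) / num_views for c in range(num_labels)]
    return [1 if m > threshold else 0 for m in mean]

# Toy text embeddings for three labels: 'suitcase', 'luggage', 'stove'.
text_feats = [[1.0, 0.0], [0.9, 0.1], [-0.2, 1.0]]
# Features of the pixels that one 3D point projects to in K = 2 views.
views = [[1.0, 0.05], [0.95, 0.0]]
labels = pseudo_labels([pixel_scores(f, text_feats) for f in views])
print(labels)  # multi-hot: both near-synonyms are kept, 'stove' is rejected
```

Because the labels are thresholded independently, the two near-synonyms are both retained, which is the multi-label behaviour the paragraph above argues for.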


### 3.4 Dense Multimodal Alignment


After obtaining the triplets of different modalities and their dense correspondences, the subsequent objective is to align the 3D points with their corresponding text and pixel embeddings. This alignment process involves several steps. Firstly, we extract 3D features for the point cloud by utilizing a 3D network, denoted as $\varepsilon_{3D}$. These features are then projected to match the dimension of the CLIP features.


Next, we assign text labels to different 3D points by computing the cosine similarities between point and text embeddings $\textbf{f}^{T}$, yielding a 3D segmentation probability map $P^{3D}$:


$$P^{3D}_{i,c}=\sigma\left(\langle\textbf{f}^{3D}_{i},\textbf{f}^{T}_{c}\rangle/\tau_{2}\right),\tag{3}$$


where $\textbf{f}^{3D}_{i}$ denotes the feature of the $i$-th point, and $P^{3D}_{i,c}$ denotes the probability that the $i$-th point belongs to the $c$-th text label. Here we employ the sigmoid activation function $\sigma(\cdot)$ since it does not impose mutually exclusive relationships among different categories.
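To make the choice of activation concrete, the following sketch contrasts the sigmoid of Eq. (3) with a softmax over the same similarities. The similarity values and the label names are hypothetical and chosen only for illustration; the temperature of 0.1 matches the setting reported in the implementation details.

```python
import math

def sigmoid_scores(similarities, tau=0.1):
    """Eq. (3): independent per-label probabilities."""
    return [1.0 / (1.0 + math.exp(-s / tau)) for s in similarities]

def softmax_scores(similarities, tau=0.1):
    """Softmax alternative: labels compete and the scores sum to one."""
    exps = [math.exp(s / tau) for s in similarities]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical cosine similarities of one point to 'bed', 'bedroom', 'stove'.
sims = [0.30, 0.28, -0.20]
sig = sigmoid_scores(sims)
soft = softmax_scores(sims)
print([round(p, 3) for p in sig])   # 'bed' and 'bedroom' can both be confident
print([round(p, 3) for p in soft])  # the same two labels must share the mass
```

With the sigmoid, two overlapping labels can both receive a high score, whereas the softmax splits the probability mass between them.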


Text-to-3D Supervision. We use the text-to-3D label map $M^{3D}$ as the pseudo label to facilitate the alignment of point and text features. Different loss functions are employed for aligning the point embeddings with the tag embeddings $\textbf{f}_{tag}^{T}$ and the scene description embeddings $\textbf{f}_{llm}^{T}$. As can be seen in Fig.[1](https://arxiv.org/html/2407.09781v1#S2.F1 "Figure 1 ‣ 2 Related Work ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), we build dense associations between $\textbf{f}_{tag}^{T}$ and the entire point cloud, resulting in $M_{tag}^{3D}$. Consequently, the Binary Cross Entropy (BCE) loss is used to effectively penalize both positive and negative samples:


$$\mathcal{L}_{3d-text(tag)}=\mathcal{L}_{BCE}(P^{3D},M_{tag}^{3D}).\tag{4}$$


As for $\textbf{f}_{llm}^{T}$, since it corresponds only to salient objects, we can only obtain the mask for a subset of the points, denoted as $M_{llm}^{3D}$. (Visualizations of $M_{tag}^{3D}$ and $M_{llm}^{3D}$ are given in Fig.2 and Fig.3 of the supplementary material, respectively.) We utilize the cosine similarity loss to supervise only the positive samples:


$$\mathcal{L}_{3d-text(llm)}=1-\cos(\textbf{f}_{llm}^{T},\textbf{f}^{3D}).\tag{5}$$


Mutually Inclusive Loss. In this work, we do not employ the Cross-Entropy loss because it would result in a mutually exclusive relationship between different classes, meaning that each point is assigned to only one class of interest. However, in text-to-3D alignment, one point may simultaneously associate with multiple text prompts, such as ‘bed’ and ‘bedroom’, ‘chair’ and ‘office chair’, ‘curtain’ and ‘drape’, _etc_. To handle this issue, we employ mutually inclusive losses (MIL), such as BCE loss and cosine similarity loss, to ensure that each point is aligned with all its corresponding tags/descriptions simultaneously, avoiding the potential conflicts between categories with overlapping or similar semantics.
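The two mutually inclusive losses can be sketched as follows. This is a minimal illustration, not the authors' implementation: the mean reduction, the clamping constant `eps`, and the function names are assumptions, and the inputs are toy values.

```python
import math

def bce_loss(probs, targets, eps=1e-7):
    """Mean binary cross-entropy over all (point, label) pairs, as in Eq. (4)."""
    total, count = 0.0, 0
    for p_row, m_row in zip(probs, targets):
        for p, m in zip(p_row, m_row):
            p = min(max(p, eps), 1.0 - eps)  # clamp for numerical safety
            total -= m * math.log(p) + (1 - m) * math.log(1.0 - p)
            count += 1
    return total / count

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def positive_cosine_loss(point_feats, text_feat, mask):
    """Eq. (5), averaged over the points whose mask entry is 1 (positives only)."""
    losses = [1.0 - cosine(f, text_feat) for f, m in zip(point_feats, mask) if m == 1]
    return sum(losses) / len(losses) if losses else 0.0

# One point with a multi-hot target: it is both 'bed' and 'bedroom', not 'stove'.
tag_loss = bce_loss([[0.9, 0.8, 0.1]], [[1, 1, 0]])
# Two points, only the first is covered by the scene-description mask.
llm_loss = positive_cosine_loss([[1.0, 0.0], [0.0, 1.0]], [1.0, 0.0], [1, 0])
print(round(tag_loss, 4), llm_loss)
```

Note that the multi-hot target penalizes neither of the two overlapping labels, and that the second point contributes nothing to the cosine term because it lies outside the mask.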


2D-to-3D Supervision. For 3D-2D pairs, we follow the previous work[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)] to fuse pixel embeddings across $K$ different views, represented as $[\textbf{f}^{2D}_{1},\cdots,\textbf{f}^{2D}_{K}]$, into a single feature vector $\bar{\textbf{f}}^{2D}$, and align 2D and 3D features by minimizing the cosine similarity loss:


$$\mathcal{L}_{3d-2d}=1-\cos(\bar{\textbf{f}}^{2D},\textbf{f}^{3D}).\tag{6}$$


Since the 2D mask head is also trainable, we additionally add the text-to-2D supervision and compute the BCE loss between 2D predictions and 2D masks, obtaining $\mathcal{L}_{text-2d}$. Finally, the overall objective function to perform dense multimodal alignment is defined as:


$$\mathcal{L}_{3D}=\mathcal{L}_{3d-text(tag)}+\mathcal{L}_{3d-text(llm)}+\mathcal{L}_{3d-2d}+\mathcal{L}_{text-2d},\tag{7}$$


where the language modality provides comprehensive textual descriptions, and the image modality gives precise supervision on object edges and contextual information. Additionally, the 3D modality reveals crucial structural information of objects. By densely aligning these modalities in a shared space, our method can maximize the synergistic benefits among them and achieve outstanding segmentation performance without compromising the open-vocabulary classification ability of the model.
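As a rough sketch of how the 2D-to-3D term and the overall objective fit together, the snippet below fuses per-view pixel embeddings, evaluates Eq. (6), and sums the four terms as in Eq. (7). Fusing by simple averaging is our assumption (the text only says the fusion follows prior work), and the three scalar loss values passed to `total_loss` are placeholders rather than measured numbers.

```python
import math

def fuse_views(view_feats):
    """Fuse K per-view pixel embeddings into one vector (averaging is assumed)."""
    k = len(view_feats)
    dim = len(view_feats[0])
    return [sum(f[d] for f in view_feats) / k for d in range(dim)]

def cosine_loss(a, b):
    """1 - cos(a, b), the form shared by Eq. (5) and Eq. (6)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def total_loss(l_3d_text_tag, l_3d_text_llm, l_3d_2d, l_text_2d):
    """Eq. (7): unweighted sum of the four alignment terms."""
    return l_3d_text_tag + l_3d_text_llm + l_3d_2d + l_text_2d

fused = fuse_views([[1.0, 0.0], [0.0, 1.0]])   # K = 2 views of one point
l_3d_2d = cosine_loss(fused, [1.0, 1.0])       # 3D feature parallel to the fused one
loss = total_loss(0.14, 0.05, l_3d_2d, 0.20)   # placeholder values for the other terms
print(fused, round(l_3d_2d, 6), round(loss, 6))
```

Eq. (7) uses no weighting coefficients, so the sketch adds the terms directly.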


4 Experiments
-------------


### 4.1 Setups


Datasets. To demonstrate the effectiveness of our proposed method, we employ three popular datasets, _i.e_., ScanNet[[11](https://arxiv.org/html/2407.09781v1#bib.bib11)], Matterport3D[[6](https://arxiv.org/html/2407.09781v1#bib.bib6)], and nuScenes[[4](https://arxiv.org/html/2407.09781v1#bib.bib4)]. The first two datasets are indoor ones, comprising RGBD images and 3D meshes. The third one is an outdoor dataset, consisting of data collected from two sensors, _i.e_., LiDAR and camera. We conduct comparisons with state-of-the-art methods on each of these datasets. The mean Intersection-over-Union (mIoU), mean Accuracy (mACC), Precision, and Recall are employed as the evaluation metrics.
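For reference, mIoU and mACC can be computed from a confusion matrix as sketched below. This follows the standard definitions; skipping classes that never occur is an assumption on our part, and the toy labels are illustrative only.

```python
def confusion_matrix(gt, pred, num_classes):
    """cm[g][p] counts points with ground-truth class g predicted as class p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for g, p in zip(gt, pred):
        cm[g][p] += 1
    return cm

def miou_and_macc(cm):
    """Mean per-class IoU and mean per-class accuracy."""
    ious, accs = [], []
    for c in range(len(cm)):
        tp = cm[c][c]
        gt_total = sum(cm[c])
        pred_total = sum(row[c] for row in cm)
        union = gt_total + pred_total - tp
        if union > 0:
            ious.append(tp / union)
        if gt_total > 0:
            accs.append(tp / gt_total)
    return sum(ious) / len(ious), sum(accs) / len(accs)

gt = [0, 0, 1, 1, 2, 2]
pred = [0, 1, 1, 1, 2, 0]
miou, macc = miou_and_macc(confusion_matrix(gt, pred, 3))
print(round(miou, 4), round(macc, 4))
```

Here the per-class IoUs are 1/3, 2/3 and 1/2, and the per-class accuracies are 1/2, 1 and 1/2.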


Implementation Details. In this work, MinkowskiNet[[10](https://arxiv.org/html/2407.09781v1#bib.bib10)] is employed as the 3D backbone, with the voxel size set to 2cm for ScanNet and Matterport3D and 5cm for nuScenes. As for the 2D backbone, we use OpenSeg[[14](https://arxiv.org/html/2407.09781v1#bib.bib14)] and FC-CLIP[[56](https://arxiv.org/html/2407.09781v1#bib.bib56)], which perform mask-wise classification. The parameters $\tau_{1}$ in Eq.[1](https://arxiv.org/html/2407.09781v1#S3.E1 "Equation 1 ‣ 3.3 Dense Associations across Modalities ‣ 3 Method ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding") and $\tau_{2}$ in Eq.[3](https://arxiv.org/html/2407.09781v1#S3.E3 "Equation 3 ‣ 3.4 Dense Multimodal Alignment ‣ 3 Method ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding") are both set to 0.1. We use Adam[[25](https://arxiv.org/html/2407.09781v1#bib.bib25)] as the optimizer with an initial learning rate of $1e-4$. The model is trained for 100 epochs. For the indoor datasets, we set the batch size to 8 and train on a single NVIDIA RTX A6000. For the nuScenes dataset, we use 8 GPUs for training and set the batch size to 16.
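To illustrate what the voxel size controls, the sketch below groups points into grid cells by flooring their coordinates. It is a generic quantization written for illustration; the `voxelize` helper is hypothetical and is not the sparse-tensor API of the backbone library.

```python
import math

def voxelize(points, voxel_size):
    """Map each voxel index (i, j, k) to the indices of the points that fall in it."""
    voxels = {}
    for idx, (x, y, z) in enumerate(points):
        key = (math.floor(x / voxel_size),
               math.floor(y / voxel_size),
               math.floor(z / voxel_size))
        voxels.setdefault(key, []).append(idx)
    return voxels

# Three points spaced 1 cm apart along the x axis (coordinates in metres).
points = [(0.005, 0.0, 0.0), (0.015, 0.0, 0.0), (0.025, 0.0, 0.0)]
indoor = voxelize(points, 0.02)    # 2 cm grid, as used for ScanNet and Matterport3D
outdoor = voxelize(points, 0.05)   # 5 cm grid, as used for nuScenes
print(len(indoor), len(outdoor))
```

The finer indoor grid keeps the third point in its own voxel, while the coarser outdoor grid merges all three.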


| Setting | Methods | mIoU | mACC | mIoU(F) | mIoU(B) | Latency |
|---|---|---|---|---|---|---|
| fully-supervised | TangentConv[[49](https://arxiv.org/html/2407.09781v1#bib.bib49)] | 40.9 | - | - | - | - |
| fully-supervised | TextureNet[[21](https://arxiv.org/html/2407.09781v1#bib.bib21)] | 54.8 | - | - | - | - |
| fully-supervised | ScanComplete[[12](https://arxiv.org/html/2407.09781v1#bib.bib12)] | 56.6 | - | - | - | - |
| fully-supervised | Mix3D[[41](https://arxiv.org/html/2407.09781v1#bib.bib41)] | 73.6 | - | - | - | - |
| fully-supervised | VMNet[[20](https://arxiv.org/html/2407.09781v1#bib.bib20)] | 73.2 | - | - | - | - |
| fully-supervised | MinkowskiNet[[20](https://arxiv.org/html/2407.09781v1#bib.bib20)] | 69.0 | - | - | - | - |
| Zero-shot | PLA[[13](https://arxiv.org/html/2407.09781v1#bib.bib13)] | 17.7 | 33.5 | - | - | 0.07s |
| Zero-shot | RegionPLC[[55](https://arxiv.org/html/2407.09781v1#bib.bib55)] | 43.8 | 65.6 | - | - | 0.07s |
| Zero-shot | OpenScene[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)](LSeg)-3D | 52.9 | 63.2 | - | - | 0.07s |
| Zero-shot | OpenScene(LSeg)-2D3D | 54.2 | 66.6 | - | - | 102.6s |
| Zero-shot | OpenScene†(OpenSeg)-3D | 46.6 | 66.5 | 50.0 | 47.1 | 0.07s |
| Zero-shot | OpenScene†(OpenSeg)-2D3D | 47.9 | 71.7 | 49.5 | 51.0 | 89.4s |
| Zero-shot | DMA(OpenSeg)-text only | 50.5 | 63.7 | 56.7 | 48.0 | 0.07s |
| Zero-shot | DMA(OpenSeg)-3D | 53.3 | 70.3 | 58.3 | 51.5 | 0.07s |
| Zero-shot | DMA(LSeg)-3D | 54.8 | 66.9 | 59.9 | 51.9 | 0.07s |
| Zero-shot | DMA(FC-CLIP)-3D | 51.8 | 68.7 | 56.0 | 51.4 | 0.07s |


Table 1: Comparison on the ScanNet[[11](https://arxiv.org/html/2407.09781v1#bib.bib11)] validation set. “F” and “B” denote foreground and background classes, respectively. † denotes our reproduced results.


| Setting | Methods | Anno. | mIoU | mIoU (Base) | mIoU (Long-Tail) |
|---|---|---|---|---|---|
| fully-sup. | RangeNet++[[39](https://arxiv.org/html/2407.09781v1#bib.bib39)] | 100% | 65.5 | 76.4 | 56.4 |
| fully-sup. | Cylinder3D[[58](https://arxiv.org/html/2407.09781v1#bib.bib58)] | 100% | 75.4 | 84.1 | 69.4 |
| fully-sup. | SPVNAS[[48](https://arxiv.org/html/2407.09781v1#bib.bib48)] | 100% | 74.8 | 82.3 | 67.2 |
| fully-sup. | AMVNet[[34](https://arxiv.org/html/2407.09781v1#bib.bib34)] | 100% | 77.0 | 83.9 | 70.8 |
| weakly-sup. | ContrastiveSC[[16](https://arxiv.org/html/2407.09781v1#bib.bib16)] | 0.9% | 64.5 | 79.7 | 53.8 |
| weakly-sup. | LESS[[37](https://arxiv.org/html/2407.09781v1#bib.bib37)] | 0.9% | 74.8 | 81.6 | 68.7 |
| weakly-sup. | ContrastiveSC | 0.2% | 63.5 | 78.4 | 51.6 |
| weakly-sup. | LESS | 0.2% | 73.5 | 81.1 | 66.6 |
| Zero-shot | OpenScene[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)](LSeg)-2D3D | No | 36.7 | 55.0 | 22.3 |
| Zero-shot | OpenScene(OpenSeg)-2D3D | No | 42.1 | 52.6 | 33.8 |
| Zero-shot | DMA(OpenSeg)-3D | No | 45.1 | 59.3 | 33.9 |
| Zero-shot | DMA(FC-CLIP)-3D | No | 47.4 | 61.4 | 35.3 |


Table 2: Comparison on the nuScenes[[4](https://arxiv.org/html/2407.09781v1#bib.bib4)] validation set. We partition all categories into base and long-tail classes according to their frequencies.


### 4.2 Comparison with State-of-the-Arts


We compare the proposed DMA with fully-/weakly-supervised and zero-shot methods[[42](https://arxiv.org/html/2407.09781v1#bib.bib42), [13](https://arxiv.org/html/2407.09781v1#bib.bib13), [55](https://arxiv.org/html/2407.09781v1#bib.bib55)]. Tab. 1 presents the segmentation results on the ScanNet[[11](https://arxiv.org/html/2407.09781v1#bib.bib11)] dataset. To facilitate comparison, we measure the results of OpenScene by using 3D and 2D-3D integrated features as supervision. As can be seen in Tab. 1, although OpenScene(LSeg) attains better results (54.2% mIoU) by using both 2D and 3D encoders, it incurs significantly higher inference latency. This is because the 2D encoder has far more parameters than the 3D encoder and must run inference on multi-view images of the scene. Our DMA(OpenSeg), using only the 3D model for prediction, outperforms OpenScene(OpenSeg)-2D3D by 5.4% mIoU at a significantly lower latency, with mIoU(F) and mIoU(B) improved by 8.8% and 0.5%, respectively. This is because we perform additional alignment with the text modality, thereby compensating for the decreased open-vocabulary ability of the 2D model. When using text supervision only, our method outperforms the text-supervised approach RegionPLC[[55](https://arxiv.org/html/2407.09781v1#bib.bib55)] by 6.7%, and even surpasses OpenScene(OpenSeg)-2D3D by 2.6% in terms of mIoU. This indicates that, compared to previous methods that generate image- and region-level captions, our method establishes dense and precise correspondences between text and 3D points by taking the 2D modality as the bridge, achieving more precise supervision. The suboptimal performance of our method using FC-CLIP as the 2D encoder may be attributed to the low resolution of the images (320×240), which limits the capabilities of FC-CLIP.


Figure 4: Qualitative results of different methods on both indoor and outdoor datasets.


Outdoor Scenes. To validate the effectiveness of our method on outdoor point clouds, we evaluate the performance of DMA on the nuScenes dataset[[4](https://arxiv.org/html/2407.09781v1#bib.bib4)]. Due to the highly imbalanced class distribution of outdoor scenes, we additionally measure the performance on base and long-tail categories. As shown in Tab.[2](https://arxiv.org/html/2407.09781v1#S4.T2 "Table 2 ‣ 4.1 Setups ‣ 4 Experiments ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), by densely aligning with the tagging information and the detailed description extracted from each scene, our DMA(OpenSeg) using only the 3D encoder improves over OpenScene(OpenSeg)-2D3D by 3.0% mIoU. Additionally, employing FC-CLIP[[56](https://arxiv.org/html/2407.09781v1#bib.bib56)] to extract 2D features further improves the performance by 2.3%, attaining 47.4% mIoU. This is because FC-CLIP achieves more precise segmentation while maintaining the outstanding open-vocabulary recognition ability of CLIP. Besides, by fine-tuning the mask head, FC-CLIP can incorporate the 3D structural priors into mask features and produce better results.


| Methods | mIoU (Head) | mIoU (Common) | mIoU (Tail) | mIoU (All) | mACC (Head) | mACC (Common) | mACC (Tail) | mACC (All) | Precision (Head) | Precision (Common) | Precision (Tail) | Precision (All) | Recall (Head) | Recall (Common) | Recall (Tail) | Recall (All) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| OpenScene†[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)]-2D3D | 21.2 | 8.4 | 4.0 | 6.2 | 35.5 | 15.7 | 9.4 | 12.0 | 43.9 | 18.6 | 7.8 | 14.5 | 34.5 | 15.7 | 9.4 | 12.0 |
| PLA[[13](https://arxiv.org/html/2407.09781v1#bib.bib13)] | - | - | - | 1.8 | - | - | - | 3.1 | - | - | - | - | - | - | - | - |
| RegionPLC[[55](https://arxiv.org/html/2407.09781v1#bib.bib55)] | - | - | - | 6.5 | - | - | - | 15.9 | - | - | - | - | - | - | - | - |
| DMA-text only | 23.2 | 7.6 | 2.0 | 6.9 | 32.6 | 13.4 | 5.9 | 11.3 | 40.0 | 15.1 | 5.8 | 13.7 | 32.6 | 13.4 | 5.9 | 11.3 |
| DMA(OpenSeg)-3D | 25.3 | 10.8 | 5.5 | 7.6 | 36.7 | 18.2 | 10.7 | 14.6 | 44.5 | 23.6 | 10.5 | 14.9 | 36.7 | 18.2 | 10.7 | 13.2 |
| DMA(FC-CLIP)-3D | 27.2 | 11.5 | 5.8 | 7.9 | 37.4 | 19.2 | 11.2 | 15.2 | 46.2 | 24.9 | 11.3 | 15.7 | 38.2 | 20.4 | 11.1 | 14.0 |
| Fully-Sup | 45.5 | 13.6 | 3.4 | 20.8 | - | - | - | - | 66.8 | 55.7 | 23.3 | 34.4 | 57.6 | 19.1 | 5.8 | 27.8 |


Table 3: Comparison on the ScanNet200[[45](https://arxiv.org/html/2407.09781v1#bib.bib45)] validation set. † denotes our reproduced results.


Long-Tail Datasets. As shown in Tab.[3](https://arxiv.org/html/2407.09781v1#S4.T3 "Table 3 ‣ 4.2 Comparison with State-of-the-Arts ‣ 4 Experiments ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), we validate the open-vocabulary methods on a more challenging long-tail 3D scene understanding dataset, _i.e_., ScanNet200[[45](https://arxiv.org/html/2407.09781v1#bib.bib45)]. Following[[45](https://arxiv.org/html/2407.09781v1#bib.bib45)], we partition the 200 categories into three splits, _i.e_., head, common, and tail sets, facilitating a more comprehensive comparison across categories with different frequencies. On head classes (ceiling, curtain, window, _etc_.), the fully-supervised method performs much better than zero-shot methods because sufficient 3D labels are available for supervision. However, on the common and tail splits, our DMA method approaches or even surpasses the fully-supervised competitor. This is because only a few instances are available for these long-tail categories, which is not sufficient to train a robust model from scratch. Our method does not rely on ground truth 3D labels but instead distills knowledge from pretrained vision-language models, and is thus more robust to rare objects.


To further validate the robustness of our method on rare objects/classes, we evaluate on the most frequent $K$ classes of Matterport3D[[6](https://arxiv.org/html/2407.09781v1#bib.bib6)], where $K=21,40,80,160$. We train a 3D model by taking our generated textual descriptions and image features as supervision, and perform inference on different $K$ categories. As shown in Tab.[4](https://arxiv.org/html/2407.09781v1#S4.T4 "Table 4 ‣ 4.2 Comparison with State-of-the-Arts ‣ 4 Experiments ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), when employing the same 2D network, _i.e_., OpenSeg, our method demonstrates superior zero-shot segmentation capability on both common and rare categories. Specifically, our DMA(OpenSeg)-3D surpasses OpenScene(OpenSeg)-3D by 3.8%, 4.5%, 1.6%, and 1.2% in terms of mIoU at $K=21,40,80,160$, respectively. This can be attributed to the fact that OpenScene relies heavily on the 2D model for supervision without aligning with text prompts, which limits its open-vocabulary ability. Our method, however, directly aligns with the textual modality, overcoming the limitations of 2D models.
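The margins quoted above can be re-derived from the mIoU values reported in Tab. 4, as in the short check below. The relative gains are our own derived figures, added only for context; they are not reported in the paper.

```python
# mIoU on Matterport3D for K = 21, 40, 80, 160 (values copied from Tab. 4).
num_classes = [21, 40, 80, 160]
openscene_openseg_3d = [41.3, 33.4, 18.1, 8.2]
dma_openseg_3d = [45.1, 37.9, 19.7, 9.4]

# Absolute margins in mIoU points, rounded to one decimal as in the tables.
margins = [round(d - o, 1) for d, o in zip(dma_openseg_3d, openscene_openseg_3d)]

# Relative gains in percent of the baseline score (derived, not from the paper).
relative = [round(100.0 * (d - o) / o, 1)
            for d, o in zip(dma_openseg_3d, openscene_openseg_3d)]

for k, m, r in zip(num_classes, margins, relative):
    print(f"K={k}: +{m} mIoU ({r}% relative)")
```

The absolute margins shrink as $K$ grows because all scores drop on the rarer classes, so the relative gain is a useful complementary view.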


| Setting | Methods | mIoU (K=21) | mIoU (K=40) | mIoU (K=80) | mIoU (K=160) | mACC (K=21) | mACC (K=40) | mACC (K=80) | mACC (K=160) |
|---|---|---|---|---|---|---|---|---|---|
| fully-sup. | TangentConv[[49](https://arxiv.org/html/2407.09781v1#bib.bib49)] | - | - | - | - | 46.8 | - | - | - |
| fully-sup. | TextureNet[[21](https://arxiv.org/html/2407.09781v1#bib.bib21)] | - | - | - | - | 63.0 | - | - | - |
| fully-sup. | ScanComplete[[12](https://arxiv.org/html/2407.09781v1#bib.bib12)] | - | - | - | - | 44.9 | - | - | - |
| fully-sup. | DCM-Net | - | - | - | - | 66.2 | - | - | - |
| fully-sup. | VM-Net[[20](https://arxiv.org/html/2407.09781v1#bib.bib20)] | - | - | - | - | 67.2 | - | - | - |
| fully-sup. | MinkowskiNet[[20](https://arxiv.org/html/2407.09781v1#bib.bib20)] | 54.2 | - | - | - | 64.6 | - | - | - |
| Zero-shot | OpenScene[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)](LSeg)-3D | 41.9 | 25.4 | 12.0 | 5.9 | 51.2 | 30.7 | 15.2 | 7.5 |
| Zero-shot | OpenScene(LSeg)-2D3D | 43.4 | 26.8 | 13.1 | 6.4 | 53.5 | 33.0 | 17.4 | 8.6 |
| Zero-shot | OpenScene(OpenSeg)-3D | 41.3 | 33.4 | 18.1 | 8.2 | 55.1 | 46.7 | 26.2 | 13.9 |
| Zero-shot | OpenScene(OpenSeg)-2D3D | 42.6 | 34.2 | 18.8 | 8.4 | 59.2 | 47.5 | 27.1 | 14.5 |
| Zero-shot | DMA-text only | 39.8 | 25.4 | 11.7 | 6.2 | 49.5 | 31.6 | 16.1 | 8.0 |
| Zero-shot | DMA(OpenSeg)-3D | 45.1 | 37.9 | 19.7 | 9.4 | 57.6 | 47.7 | 26.7 | 14.1 |
| Zero-shot | DMA(FC-CLIP)-3D | 46.2 | 38.4 | 20.1 | 9.8 | 58.4 | 48.3 | 26.5 | 15.2 |


Table 4: Comparison on the Matterport[[6](https://arxiv.org/html/2407.09781v1#bib.bib6)] test set.


Qualitative Comparison. Fig.[4](https://arxiv.org/html/2407.09781v1#S4.F4 "Figure 4 ‣ 4.2 Comparison with State-of-the-Arts ‣ 4 Experiments ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding") visualizes the segmentation results of different methods. We can observe that OpenScene[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)] with only the 3D encoder performs poorly on objects that lack distinct spatial structures, such as “door”, “window”, “counter”, _etc_. In contrast, text supervision offers more refined guidance by establishing dense correspondences between the texts and points, thereby enabling more precise alignment. Our approach leverages the advantages of both language and 2D modalities, and achieves excellent segmentation results for both foreground and background classes using only the 3D model.


### 4.3 Ablation Study


2D Features _vs_. 3D Features. In Fig. 5, we compare the segmentation performance on ScanNet by using different features. ‘F’ and ‘B’ denote foreground and background classes, respectively. For OpenScene[[42](https://arxiv.org/html/2407.09781v1#bib.bib42)], we observe that its 2D features are more advantageous than 3D ones for segmenting background categories with ambiguous geometry, _i.e_., 49.1% _vs_. 47.1% mIoU(B), while 3D features excel at segmenting the foreground objects with distinct shapes, _i.e_., 50.0% _vs_. 42.5% mIoU(F). Although the 2D-3D hybrid feature can leverage the strengths of both features simultaneously, utilizing 2D models for inference introduces significant computational overhead (please refer to the latency in Tab. 1). By additionally aligning with our generated text modality, our method can achieve outstanding performance on both foreground (58.3%) and background (51.5%) categories using only 3D features. Besides, DMA achieves comparable performance to using both 2D and 3D encoders by solely utilizing the 3D encoder, _i.e_., 53.3% _vs_. 53.5% mIoU(F), and hence significantly reduces inference time.


Tagging Models _vs_. MLLMs. In Fig.[6](https://arxiv.org/html/2407.09781v1#S4.F6 "Figure 6 ‣ 4.3 Ablation Study ‣ 4 Experiments ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), we compare the results of using different tagging models and MLLMs on ScanNet. For the enhanced version, we replace RAM with RAM++[[22](https://arxiv.org/html/2407.09781v1#bib.bib22)], and LLaVA-7B with LLaVA-13B. We can observe that our method outperforms RegionPLC[[55](https://arxiv.org/html/2407.09781v1#bib.bib55)] by a large margin (about 6.7%) by building dense point-to-text correspondences. The tagging model plays a key role in the performance improvement since it encompasses extensive semantic patterns, while the MLLM further enhances the final performance by incorporating rich contextual information. By filtering out noisy tags with GPT, the performance can be improved by 2.6% and 1.3% for the basic and the enhanced versions, respectively. The final performance can be further improved when stronger tagging models/MLLMs are employed.


Figure 5: Comparisons of text, 2D, and 3D features. “F” and “B” denote foreground and background classes.


Figure 6: Comparisons of tagging models and MLLMs.


| Method | mIoU | mACC | mIoU (In) | mIoU (Out) |
|---|---|---|---|---|
| CLIP feature | 35.2 | 51.3 | 36.7 | 31.8 |
| Mask feature (w/o FT) | 40.1 | 55.4 | 48.3 | 21.3 |
| Mask feature (w/ FT) | 42.0 | 57.4 | 50.5 | 24.1 |
| CLIP+Mask | 44.8 | 59.7 | 51.7 | 28.5 |


Table 5: Comparisons of CLIP and Mask features of FC-CLIP on ScanNet. “FT” denotes fine-tuning.


| Method | 2D Mask | 3D Mask |
|---|---|---|
| w/o FT | 36.6 | 40.1 |
| Full Parameter | 40.4 | 42.0 |
| LoRA[[18](https://arxiv.org/html/2407.09781v1#bib.bib18)] | 39.0 | 41.3 |
| Adapter[[17](https://arxiv.org/html/2407.09781v1#bib.bib17)] | 37.9 | 40.9 |


Table 6: Comparisons of different fine-tuning methods.


CLIP Features _vs_. Mask Features. In addition to OpenSeg[[14](https://arxiv.org/html/2407.09781v1#bib.bib14)], we employ FC-CLIP[[56](https://arxiv.org/html/2407.09781v1#bib.bib56)] to extract 2D features due to its effectiveness. As shown in Tab. 5, we compare the performance obtained by using CLIP and Mask features as supervision. ‘In’ and ‘Out’ denote in-vocabulary and out-vocabulary classes, respectively. FC-CLIP contains an in-vocabulary classifier and an out-vocabulary classifier, which correspond to the seen and unseen categories in the training process, respectively. As can be seen, the fixed CLIP feature is more advantageous in segmenting unseen categories, outperforming the mask feature by 10.5% in terms of mIoU(Out). This demonstrates that the fixed CLIP visual encoder maintains strong generalization ability on novel classes. For in-vocabulary classes, in contrast, mask features outperform the CLIP feature by 11.6%. By combining these features, we can simultaneously achieve competitive results on in- and out-vocabulary categories, attaining 44.8% mIoU over all classes.

|

| 248 |

+

|

| 249 |

+

Comparisons of Different Fine-Tuning Methods. We fine-tune the mask head of FC-CLIP with different strategies to incorporate 3D structural priors into the mask features. As shown in Tab.[6](https://arxiv.org/html/2407.09781v1#S4.T6 "Table 6 ‣ 4.3 Ablation Study ‣ 4 Experiments ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), fully fine-tuning the mask head improves the 2D and 3D mask results by 3.8% and 1.9%, respectively (36.6 to 40.4 and 40.1 to 42.0). LoRA[[18](https://arxiv.org/html/2407.09781v1#bib.bib18)] and Adapter[[17](https://arxiv.org/html/2407.09781v1#bib.bib17)] also yield clear improvements while tuning only a small number of parameters.
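
For readers unfamiliar with LoRA, the following is a minimal sketch of its low-rank update on a single linear layer, written in plain Python. It is not the authors' fine-tuning code: the layer size, rank, scaling factor, and the helper names `matmul` and `lora_forward` are illustrative assumptions, and the paper does not state which layers of the mask head are adapted or which rank is used.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def lora_forward(x, w_frozen, down, up, alpha, rank):
    """Frozen linear layer plus a scaled low-rank trainable update."""
    base = matmul(x, w_frozen)             # frozen path, never updated
    update = matmul(matmul(x, down), up)   # trainable low-rank path
    scale = alpha / rank
    return [[b + scale * u for b, u in zip(b_row, u_row)]
            for b_row, u_row in zip(base, update)]


random.seed(0)
d_in, d_out, rank, alpha = 8, 8, 2, 4.0  # toy sizes, assumed for illustration
w_frozen = [[random.gauss(0, 1) for _ in range(d_out)] for _ in range(d_in)]
down = [[random.gauss(0, 0.01) for _ in range(rank)] for _ in range(d_in)]
up = [[0.0] * d_out for _ in range(rank)]  # zero init: output unchanged at start
x = [[random.gauss(0, 1) for _ in range(d_in)]]

y_lora = lora_forward(x, w_frozen, down, up, alpha, rank)
y_base = matmul(x, w_frozen)

full_params = d_in * d_out           # weights updated by full fine-tuning
lora_params = rank * (d_in + d_out)  # weights updated by LoRA
print(full_params, lora_params)
```

Because `up` starts at zero, the adapted layer initially reproduces the frozen layer exactly, and only the two small matrices are trained. This is the sense in which LoRA tunes a small number of parameters.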

|

| 250 |

+

|

| 251 |

+

|

| 252 |

+

|

| 253 |

+

Figure 7: Open-vocabulary segmentation results on rare categories and different forms of queries. The same color corresponds to the same query/category.

|

| 254 |

+

|

| 255 |

+

Open-Vocabulary Segmentation for Different Text Queries. Finally, we investigate the ability of our method to segment rare categories and to respond to different forms of queries. As shown in Fig.[7](https://arxiv.org/html/2407.09781v1#S4.F7 "Figure 7 ‣ 4.3 Ablation Study ‣ 4 Experiments ‣ Dense Multimodal Alignment for Open-Vocabulary 3D Scene Understanding"), our method accurately segments the regions corresponding to the given text queries in 3D scenes, even for unseen categories. For instance, the trained model can locate new categories such as “Snoopy” or functional areas such as “kitchen”. We attribute this to two factors. On the one hand, we align with 2D CLIP features that have been trained on a vast corpus of text. On the other hand, we construct a comprehensive and scalable textual modality using VLMs, which further enhances the understanding ability.
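
Text-driven querying of this kind is commonly implemented by comparing each point's feature with the embedding of every query and keeping the best match. The sketch below illustrates that idea under this assumption. The toy 3-dimensional vectors stand in for CLIP-space embeddings, and `cosine` and `assign_queries` are hypothetical helpers, not functions from the paper's code.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def assign_queries(point_feats, text_embeds):
    """Label each point with the query whose embedding is most similar."""
    labels = []
    for feat in point_feats:
        scores = {name: cosine(feat, emb) for name, emb in text_embeds.items()}
        labels.append(max(scores, key=scores.get))
    return labels


# Toy embeddings; a real system would use a text encoder and 3D features.
text_embeds = {"Snoopy": [1.0, 0.0, 0.0], "kitchen": [0.0, 1.0, 0.0]}
point_feats = [[0.9, 0.1, 0.0], [0.2, 0.8, 0.1], [0.7, 0.3, 0.2]]

labels = assign_queries(point_feats, text_embeds)
print(labels)
```

Since queries are just entries in `text_embeds`, adding a new category or a functional description requires no retraining, only a new text embedding.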

|

| 256 |

+

|

| 257 |

+

5 Conclusion

|

| 258 |

+

------------

|

| 259 |

+

|

| 260 |

+

We presented a dense multimodal alignment (DMA) framework for open-vocabulary 3D scene understanding by establishing dense correspondences between 3D points, 2D images and 1D texts, and leveraging their synergistic benefits to learn robust and generalizable 3D representations. To build a scalable language modality, we utilized powerful vision-language models to extract comprehensive scene descriptions and category information. Furthermore, we preserved the open-vocabulary recognition ability of the image modality by combining frozen CLIP features with trainable mask features. Extensive experiments demonstrate the promising performance of our method in open-vocabulary segmentation tasks across various indoor and outdoor scenarios.

|

| 261 |

+

|

| 262 |

+

Limitations. Our method relies on the quality of generated text descriptions and image features. In addition, collecting a larger 3D scene dataset is crucial for improving the generalization ability to unseen categories and variations.

|

| 263 |

+

|

| 264 |

+

References

|

| 265 |

+

----------

|

| 266 |

+

|

| 267 |

+

* [1] Alayrac, J.B., Donahue, J., Luc, P., Miech, A., Barr, I., Hasson, Y., Lenc, K., Mensch, A., Millican, K., Reynolds, M., et al.: Flamingo: a visual language model for few-shot learning. Advances in Neural Information Processing Systems 35, 23716–23736 (2022)

|