Add 1 files

Browse files- 2305/2305.14836.md +324 -0

2305/2305.14836.md

ADDED

|

@@ -0,0 +1,324 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

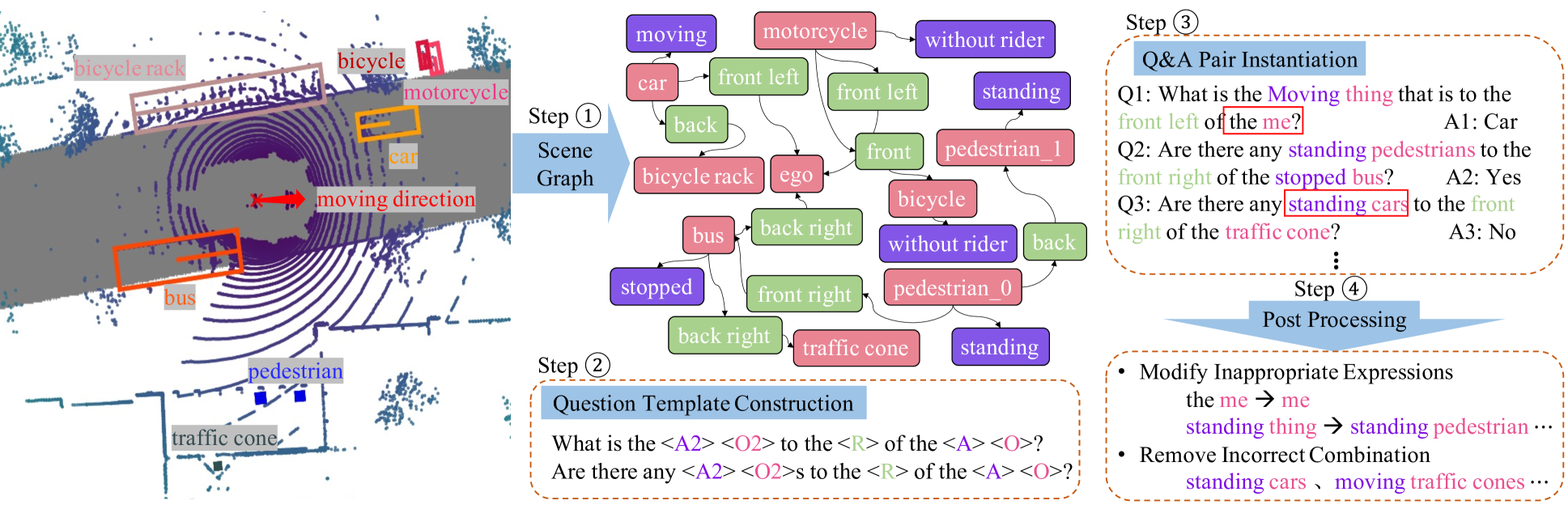

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

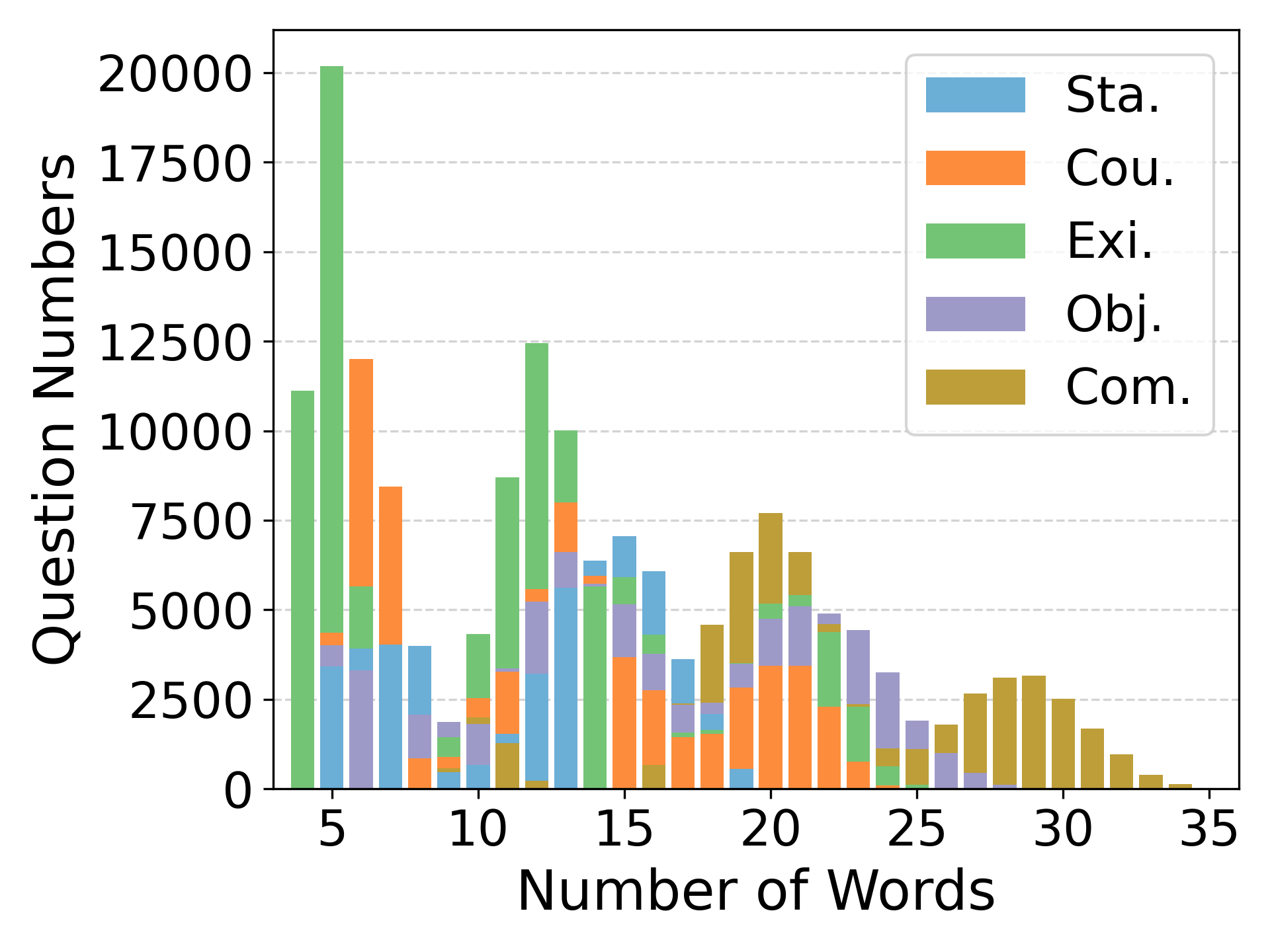

|

|

|

|

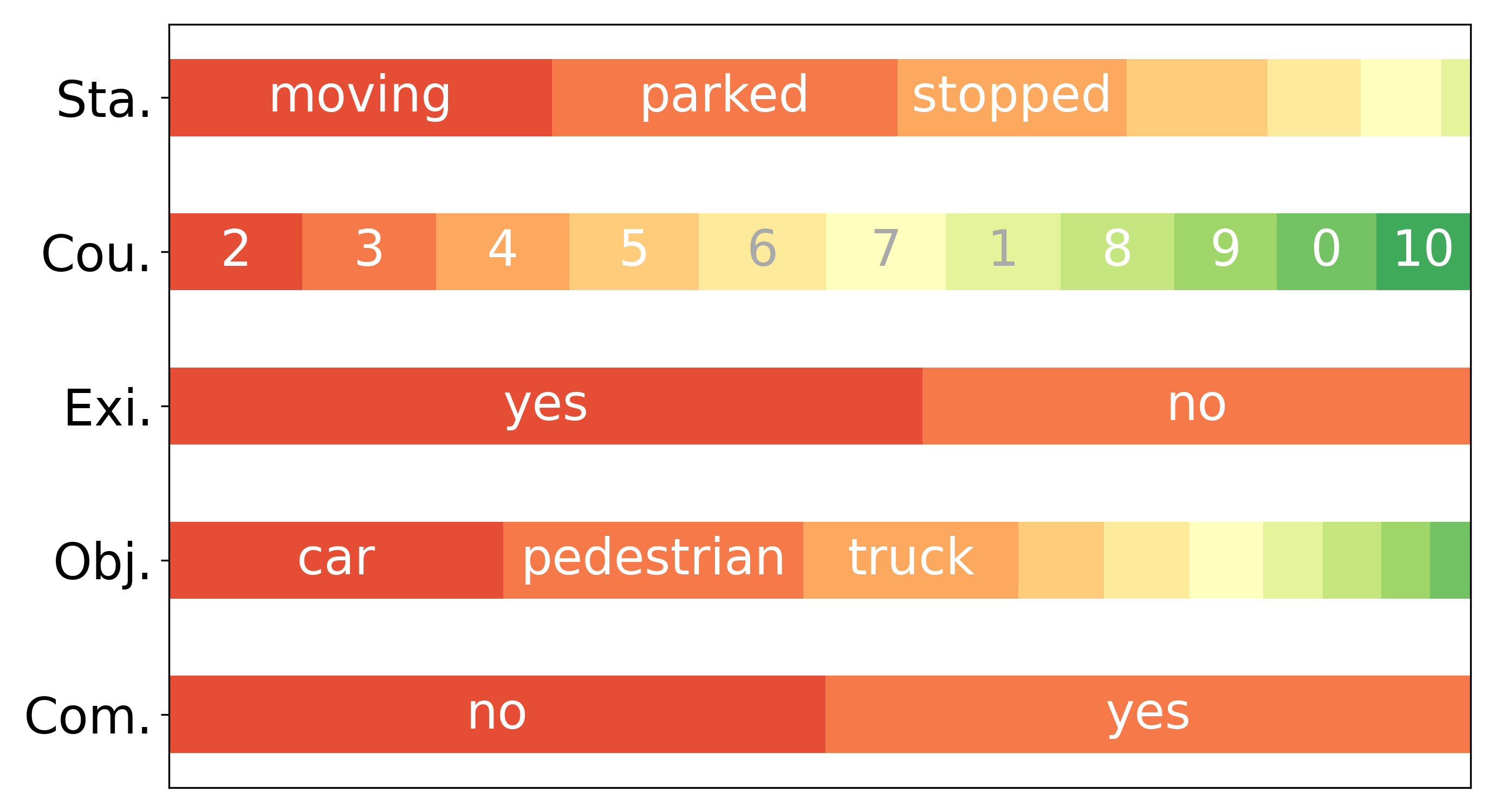

|

|

|

|

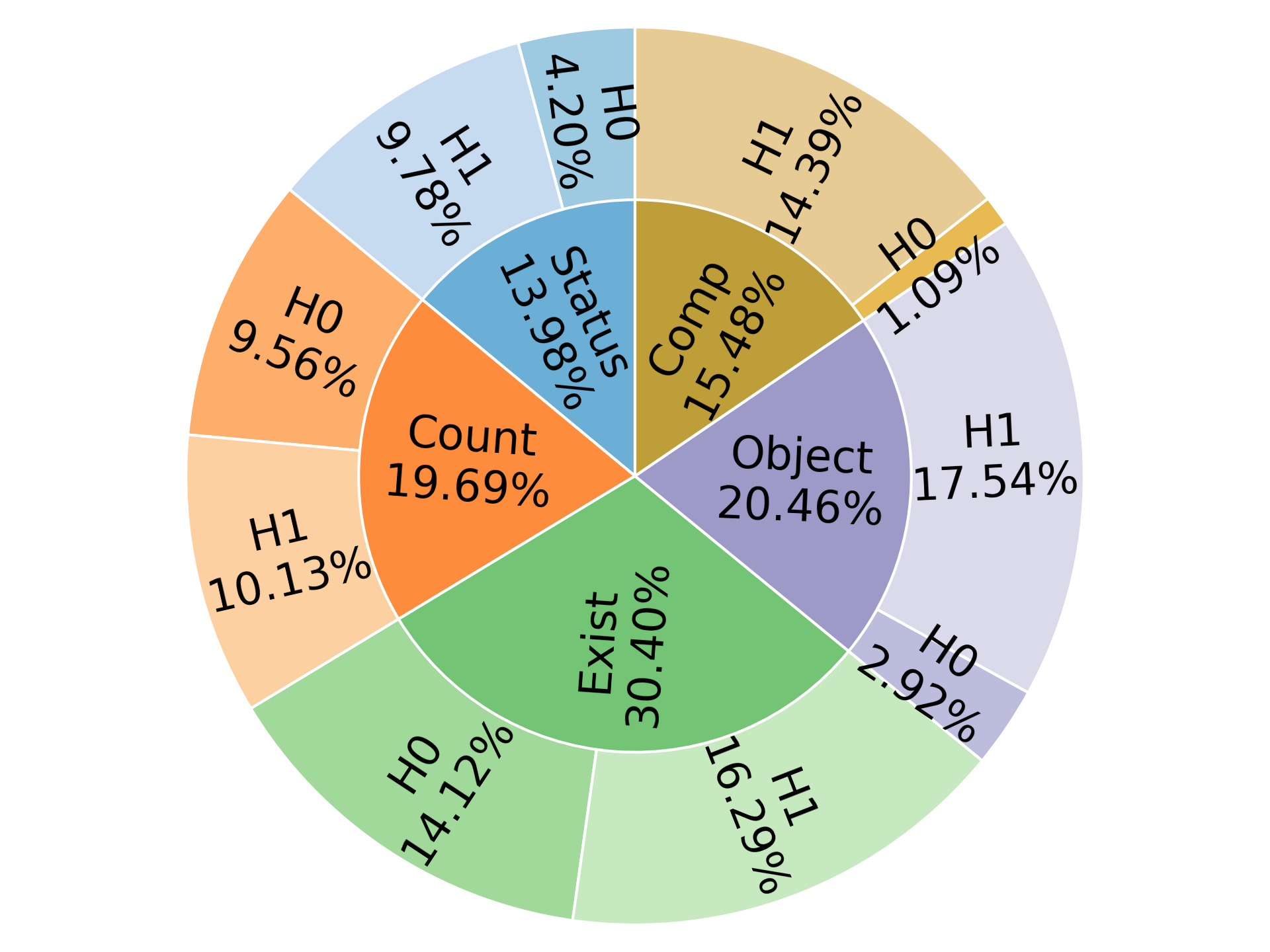

|

|

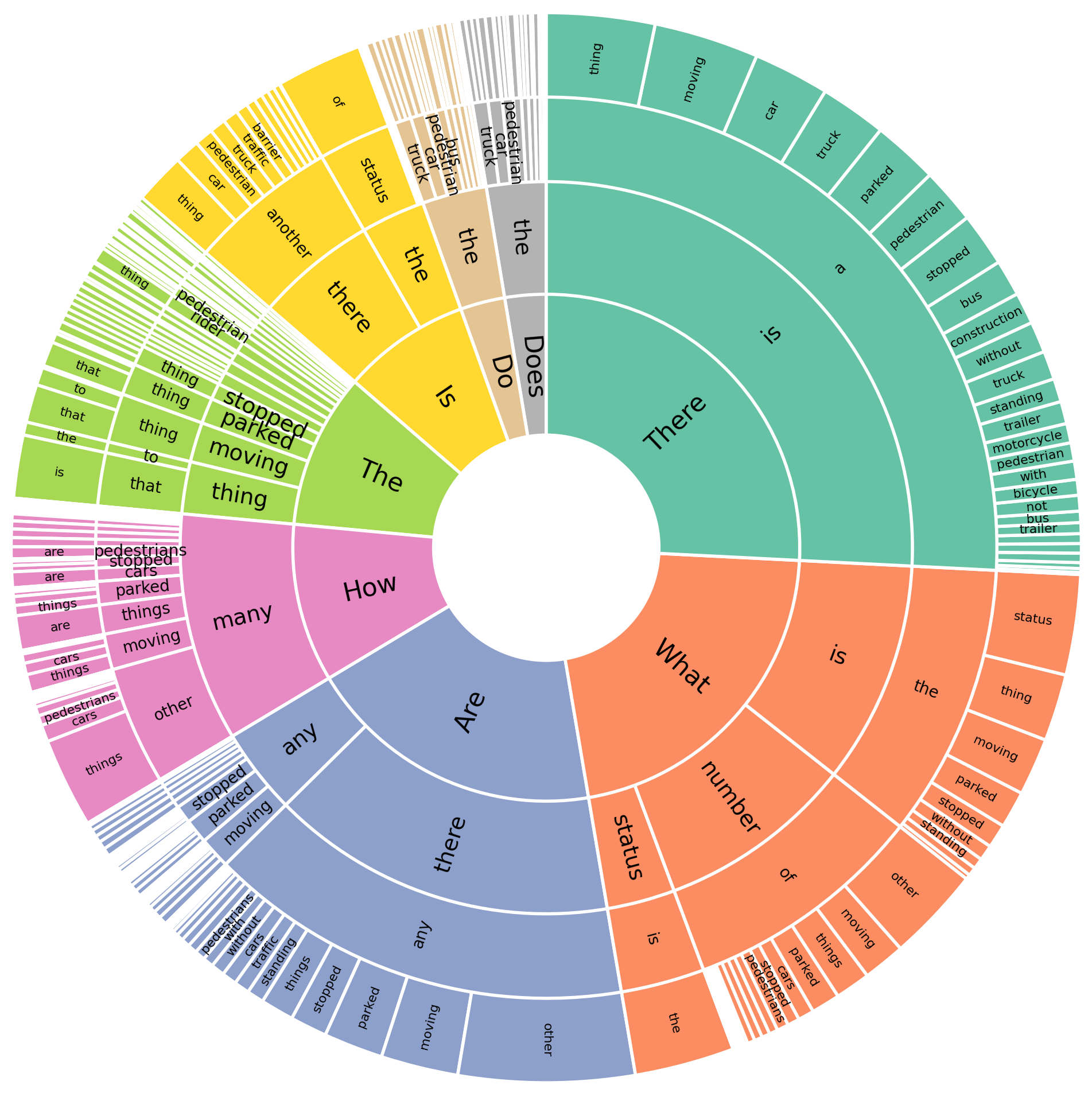

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Title: NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario

URL Source: https://arxiv.org/html/2305.14836

Published Time: Wed, 21 Feb 2024 01:26:26 GMT

###### Abstract

We introduce a novel visual question answering (VQA) task in the context of autonomous driving, aiming to answer natural language questions based on street-view clues. Compared to traditional VQA tasks, VQA in the autonomous driving scenario presents more challenges. First, the raw visual data are multi-modal, including images and point clouds captured by cameras and LiDAR, respectively. Second, the data are multi-frame due to continuous, real-time acquisition. Third, outdoor scenes exhibit both moving foreground and static background. Existing VQA benchmarks fail to adequately address these complexities. To bridge this gap, we propose NuScenes-QA, the first benchmark for VQA in the autonomous driving scenario, encompassing 34K visual scenes and 460K question-answer pairs. Specifically, we leverage existing 3D detection annotations to generate scene graphs and design question templates manually. The question-answer pairs are then generated programmatically based on these templates. Comprehensive statistics show that NuScenes-QA is a balanced, large-scale benchmark with diverse question formats. Built upon it, we develop a series of baselines that employ advanced 3D detection and VQA techniques. Our extensive experiments highlight the challenges posed by this new task. Code and dataset are available at [https://github.com/qiantianwen/NuScenes-QA](https://github.com/qiantianwen/NuScenes-QA).

### Introduction

Figure 1: NuScenes-QA is a multi-modal, multi-frame, outdoor dataset that differs significantly from other VQA benchmarks in terms of visual data.

Autonomous driving is a rapidly developing field with immense potential to improve transportation safety and efficiency, driven by advancements in sensor technologies and computer vision. With the increasing maturity of traditional perception techniques such as 3D object detection (Liu et al. [2023](https://arxiv.org/html/2305.14836v2#bib.bib26); Jiao et al. [2023b](https://arxiv.org/html/2305.14836v2#bib.bib20)) and tracking (Chen et al. [2023](https://arxiv.org/html/2305.14836v2#bib.bib5)), autonomous driving systems are progressing towards enhanced interpretability and flexible human-car interaction. In this context, visual question answering (VQA) (Antol et al. [2015](https://arxiv.org/html/2305.14836v2#bib.bib2)) can play a critical role. On the one hand, VQA offers interactivity and entertainment, enabling passengers to perceive their surroundings through language and enhancing the user experience of intelligent driving systems. On the other hand, users can verify the correctness of the perception system through question answering, strengthening their trust in its capabilities.

Despite the notable progress made by the VQA community, models trained on existing VQA datasets (Goyal et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib11); Hudson and Manning [2019](https://arxiv.org/html/2305.14836v2#bib.bib16)) have limitations in addressing the complexities of the autonomous driving scenario. This limitation is primarily caused by the difference in visual data between the self-driving scenario and existing VQA benchmarks. For instance, to answer a question like “Are there any moving pedestrians in front of the stopped bus?”, the model must accurately locate and identify the bus and the pedestrians and determine their status. This requires the model to effectively leverage the complementary information from images and point clouds to understand complex scenes, and to capture object dynamics from multiple frames of data streams. Therefore, it is essential to explore VQA in multi-modal, multi-frame, and outdoor scenarios. However, existing VQA benchmarks cannot satisfy all these conditions simultaneously, as illustrated in Fig. [1](https://arxiv.org/html/2305.14836v2#Sx1.F1 "Figure 1 ‣ Introduction ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"). For instance, although 3D-QA (Azuma et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib3)) and the self-driving scenario both focus on understanding the structure and spatial relationships of objects, 3D-QA is limited to single-modal (_i.e._, point cloud), single-frame, and static indoor scenes. The same holds for other benchmarks. To bridge this gap, we construct the first VQA benchmark specifically designed for the autonomous driving scenario, named NuScenes-QA. NuScenes-QA differs from all existing VQA benchmarks in its visual data characteristics, presenting new challenges for both the VQA and autonomous driving communities.

The proposed NuScenes-QA is built upon nuScenes (Caesar et al. [2020](https://arxiv.org/html/2305.14836v2#bib.bib4)), a popular 3D perception dataset for autonomous driving. Taking the CLEVR benchmark (Johnson et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib21)) as inspiration, we annotate the question-answer pairs automatically. Specifically, we consider each keyframe annotated in nuScenes as a “scene” and construct a corresponding scene graph. The objects and their attributes are regarded as the nodes of the graph, while the relative spatial relationships between objects, calculated from the 3D bounding boxes annotated in nuScenes, are regarded as the edges. Additionally, we manually design question templates of different types, including counting, comparison, and existence questions. Based on these templates and scene graphs, we sample different parameters to instantiate the templates and use the scene graph to infer the correct answers, thus automatically generating question-answer pairs. In total, we obtain 460K question-answer pairs on 34K scenes from the annotated nuScenes training and validation splits, with 377K pairs for training and 83K for testing.

In addition to the dataset, we develop baseline models using existing 3D perception (Huang et al. [2021](https://arxiv.org/html/2305.14836v2#bib.bib15); Yin, Zhou, and Krahenbuhl [2021](https://arxiv.org/html/2305.14836v2#bib.bib37); Jiao et al. [2023a](https://arxiv.org/html/2305.14836v2#bib.bib19)) and visual question answering (Anderson et al. [2018](https://arxiv.org/html/2305.14836v2#bib.bib1); Yu et al. [2019](https://arxiv.org/html/2305.14836v2#bib.bib38)) techniques. These models fall into three categories: image-based, point cloud-based, and multi-modal fusion-based. The 3D detection models are used to extract visual features and provide object proposals, which are then combined with question features and fed into the question answering model for answer decoding. Although our experiments show that these models outperform the question-only blind model, their performance still lags significantly behind models that use ground-truth object labels as inputs. This indicates that combining existing technologies is not sufficient for understanding intricate street views. Thus, NuScenes-QA poses a new challenge that calls for further research in this area.

Overall, our contributions can be summarized as follows:

* We introduce a novel visual question answering task in the autonomous driving scenario, which evaluates the ability of current deep-learning-based models to understand and reason over complex visual data in multi-modal, multi-frame, outdoor scenes. To facilitate this task, we contribute a large-scale dataset, NuScenes-QA, consisting of 34K complex autonomous driving scenes and 460K question-answer pairs.

* We establish several baseline models and extensively evaluate the performance of existing techniques on this task. Additionally, we conduct ablation experiments to analyze specific techniques relevant to this task, providing a foundation for future research.

Figure 2: Data construction flow of NuScenes-QA. First, the scene graphs are generated using the annotated object labels and 3D bounding boxes. Then, we design question templates manually, and instantiate the question-answer pairs with them. Finally, the generated data are filtered based on certain rules.

### Related Works

#### Visual Question Answering

There are various datasets available for VQA, including image-based datasets such as VQA2.0 (Goyal et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib11)), CLEVR (Johnson et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib21)), and GQA (Hudson and Manning [2019](https://arxiv.org/html/2305.14836v2#bib.bib16)), as well as video-based datasets such as TGIF-QA (Jang et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib17)) and TVQA (Lei et al. [2018](https://arxiv.org/html/2305.14836v2#bib.bib24)). For image-based VQA, earlier works (Lu et al. [2016](https://arxiv.org/html/2305.14836v2#bib.bib27); Anderson et al. [2018](https://arxiv.org/html/2305.14836v2#bib.bib1); Qian et al. [2022a](https://arxiv.org/html/2305.14836v2#bib.bib31)) typically use CNNs to extract image features and RNNs to process the question. Joint vision-language embeddings, obtained through concatenation or other fusion operations (Kim, Jun, and Zhang [2018](https://arxiv.org/html/2305.14836v2#bib.bib23)), are then fed to a decoder for answer prediction. Recently, many Transformer-based models (Tan and Bansal [2019](https://arxiv.org/html/2305.14836v2#bib.bib34); Zhang et al. [2021](https://arxiv.org/html/2305.14836v2#bib.bib39)) have achieved state-of-the-art performance through large-scale vision-language pre-training. Unlike image-based VQA, VideoQA (Jang et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib17); Zhu et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib43)) places greater emphasis on mining temporal context from videos. For example, Jiang et al. (Jiang et al. [2020](https://arxiv.org/html/2305.14836v2#bib.bib18)) proposed a question-guided spatial-temporal contextual attention network, and Qian et al. (Qian et al. [2022b](https://arxiv.org/html/2305.14836v2#bib.bib32)) suggested first localizing relevant segments in a long-term video before answering.

#### 3D Visual Question Answering

3D Visual Question Answering (3D-QA) is a novel task in the VQA field that focuses on answering questions about 3D scenes represented by point clouds. Unlike traditional VQA tasks, 3D-QA requires models to understand the geometric structure and spatial relations of objects in an indoor scene. Recently, many 3D-QA datasets have been constructed. For example, the 3DQA dataset (Ye et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib36)), which is based on ScanNet (Dai et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib6)), contains 6K manually annotated question-answer pairs. Similarly, ScanQA (Azuma et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib3)) uses a question generation model along with manual editing to annotate 41K pairs on the same visual data. Despite these advancements, current 3D-QA models are limited in handling the more complex autonomous driving scenario, which involves multiple modalities, multiple frames, and outdoor scenes.

#### Vision-Language Tasks in Autonomous Driving

Language systems are pivotal for communication between passengers and vehicles. Pioneering efforts have explored language-guided visual understanding in this context. For instance, Deruyttere et al. proposed Talk2Car (Deruyttere et al. [2019](https://arxiv.org/html/2305.14836v2#bib.bib8)), the first object referral dataset with natural language commands for self-driving cars. Wu et al. developed Refer-KITTI (Dongming et al. [2023](https://arxiv.org/html/2305.14836v2#bib.bib9)), a benchmark with scalable expressions built on the self-driving dataset KITTI (Geiger, Lenz, and Urtasun [2012](https://arxiv.org/html/2305.14836v2#bib.bib10)), which aims to track multiple targets based on language descriptions. In contrast, our proposed NuScenes-QA stands out in two ways. First, it tackles high-level question answering, which demands both understanding and reasoning. Second, NuScenes-QA provides richer visual information, including both images and point clouds.

### NuScenes-QA Dataset

Our primary contribution is the NuScenes-QA dataset, which we introduce in detail in this section. We provide a comprehensive overview of the dataset construction, including scene graph construction, question template design, question-answer pair generation, and post-processing. In addition, we analyze the statistical characteristics of NuScenes-QA, such as the distributions of question types, question lengths, and answers.

#### Data Construction

For question-answer pair generation, we adopt an automated method inspired by CLEVR (Johnson et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib21)). This method requires two types of structured data: scene graphs generated from 3D annotations, which contain object categories, positions, and relationships; and manually crafted question templates that specify the question type, the expected answer type, and the reasoning required to answer the question. By combining these structured data, we automatically generate question-answer pairs, which are then filtered and validated by post-processing programs to construct the complete dataset. Fig. [2](https://arxiv.org/html/2305.14836v2#Sx1.F2 "Figure 2 ‣ Introduction ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") illustrates the overall data construction pipeline.

Figure 3: Statistical distributions of questions and answers in the NuScenes-QA training split: (a) question length distribution, (b) answer distribution, (c) category distribution.

##### Scene Graph Construction

A scene graph (Johnson et al. [2015](https://arxiv.org/html/2305.14836v2#bib.bib22)) is defined as an abstract representation of a visual scene, where nodes in the graph represent objects in the scene, and edges represent relationships between objects.

In nuScenes, the collected data are annotated at a frequency of 2 Hz, and each annotated frame is referred to as a “keyframe”. We consider each keyframe as a “scene” in NuScenes-QA. The existing annotations include object categories and attributes, as well as the 3D bounding boxes of the objects. These annotated objects and their attributes are used directly as nodes in the graph. However, the original annotations do not provide relationships between objects, so we developed a rule for calculating them. Given that spatial position relationships are crucial in the autonomous driving scenario, we define six relationships between objects: front, back, front left, front right, back left, and back right. To determine object relationships, we first project the 3D bounding boxes onto the Bird’s-Eye-View (BEV). We then calculate the angle between the vector connecting the centers of two bounding boxes and the forward direction of the ego car. The formula is given by

$$\theta=\cos^{-1}\frac{(B_{1}[:2]-B_{2}[:2])\cdot V_{ego}[:2]}{\|B_{1}[:2]-B_{2}[:2]\|\,\|V_{ego}[:2]\|},\tag{1}$$

where $B_{i}=[x,y,z,x_{size},y_{size},z_{size},\varphi]$ is the 3D bounding box of object $i$, and $V_{ego}=[v_{x},v_{y},v_{z}]$ represents the velocity of the ego car. Based on the angle range, the relationship between two objects is defined as

$$
relation=\begin{cases}
\text{front} & \text{if } -30^{\circ}<\theta\le 30^{\circ}\\
\text{front left} & \text{if } 30^{\circ}<\theta\le 90^{\circ}\\
\text{front right} & \text{if } -90^{\circ}<\theta\le -30^{\circ}\\
\text{back left} & \text{if } 90^{\circ}<\theta\le 150^{\circ}\\
\text{back right} & \text{if } -150^{\circ}<\theta\le -90^{\circ}\\
\text{back} & \text{otherwise.}
\end{cases}\tag{2}
$$

We define the forward direction of the car as $0^{\circ}$ and counterclockwise angles as positive. With these definitions, the nuScenes annotations can be converted into the required scene graphs, as illustrated in step one of Fig. [2](https://arxiv.org/html/2305.14836v2#Sx1.F2 "Figure 2 ‣ Introduction ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario").
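
A minimal Python sketch of Eqs. (1) and (2) and of the scene-graph step, under stated assumptions. Because $\cos^{-1}$ alone only returns angles in $[0^{\circ}, 180^{\circ}]$, the sketch recovers the sign needed by Eq. (2) with `atan2` of the 2D cross and dot products, measuring the angle from the ego forward direction counterclockwise. We read $B_1 - B_2$ as the position of object 1 relative to object 2. The function names `relation` and `build_scene_graph` and the dictionary keys are hypothetical, not taken from the NuScenes-QA code.

```python
import math
from itertools import permutations


def relation(b1, b2, v_ego):
    """Relation of box b1 with respect to box b2 (sketch of Eqs. 1-2).

    Boxes are [x, y, z, x_size, y_size, z_size, yaw]; v_ego is [vx, vy, vz].
    Only the BEV (x, y) components are used.
    """
    dx, dy = b1[0] - b2[0], b1[1] - b2[1]
    vx, vy = v_ego[0], v_ego[1]
    # Signed angle from the ego forward direction to the center offset,
    # counterclockwise positive. Assumption: atan2 replaces arccos so that
    # left and right can be told apart. A zero ego velocity degenerates to 0.
    theta = math.degrees(math.atan2(vx * dy - vy * dx, vx * dx + vy * dy))
    if -30 < theta <= 30:
        return "front"
    if 30 < theta <= 90:
        return "front left"
    if -90 < theta <= -30:
        return "front right"
    if 90 < theta <= 150:
        return "back left"
    if -150 < theta <= -90:
        return "back right"
    return "back"


def build_scene_graph(objects, v_ego):
    """objects: list of dicts with hypothetical keys 'category', 'attribute', 'box'."""
    nodes = [(o["category"], o["attribute"]) for o in objects]
    # Directed edge (i, j) -> relation of object i with respect to object j.
    edges = {
        (i, j): relation(objects[i]["box"], objects[j]["box"], v_ego)
        for i, j in permutations(range(len(objects)), 2)
    }
    return nodes, edges
```

Every ordered pair gets an edge, so a scene with $n$ objects yields $n(n-1)$ relations; whether the original pipeline stores both directions is not stated in the text.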

##### Question Template Design

We designed the question templates manually. For instance, the question “What is the moving thing to the front left of the stopped bus?” can be abstracted into the template “What is the <A2> <O2> to the <R> of the <A1> <O1>?”, where <A>, <O>, and <R> are parameters for instantiation, representing attribute, object, and relationship, respectively. Additionally, the same semantics can be expressed in another form, such as “There is a <A2> <O2> to the <R> of the <A1> <O1>, what is it?”.

In total, NuScenes-QA contains 66 diverse question templates, divided into 5 question types: existence, counting, query-object, query-status, and comparison. In addition, to better evaluate the reasoning ability of models, we also divide the questions into zero-hop and one-hop questions. Specifically, zero-hop questions require no reasoning between objects, _e.g._, “What is the status of the <A> <O>?”. One-hop questions involve one step of spatial reasoning, _e.g._, “What is the status of the <A2> <O2> to the <R> of the <A1> <O1>?”. Comprehensive template details are available in the supplementary material.

##### Q&A Pair Generation and Filtering

Given the scene graphs and question templates, instantiating a question-answer pair is straightforward: we select a template, assign parameter values through depth-first search, and deduce the ground-truth answer on the scene graph. Moreover, we discard ill-posed or degenerate questions. For instance, when <O1>=pedestrian and <O2>=car are assigned to the template “What is the status of the <O2> to the <R> of the <A1> <O1>?”, the question is ill-posed if the scene does not contain any cars or pedestrians.
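
To make the generation step concrete, here is a hedged Python sketch for one hypothetical one-hop counting template. It assumes a scene graph in a `(nodes, edges)` form, where each node is a `(category, attribute)` pair and `edges[(i, j)]` is the relation of object `i` with respect to object `j`. Two simplifications are our own assumptions: exhaustive enumeration with `itertools.product` stands in for the paper's depth-first search, and a question is treated as ill-posed when the anchor <A1> <O1> matches zero or several objects (a CLEVR-style uniqueness rule). `TEMPLATE`, `answer_count`, and `generate_counting_qa` are illustrative names.

```python
from itertools import product

# Hypothetical one-hop counting template; placeholders mirror <A>, <O>, <R>.
TEMPLATE = "What number of {a2} {o2} are to the {r} of the {a1} {o1}?"


def answer_count(nodes, edges, a1, o1, r, a2, o2):
    """Deduce the answer on the scene graph; return None if ill-posed."""
    anchors = [i for i, node in enumerate(nodes) if node == (o1, a1)]
    if len(anchors) != 1:  # anchor missing or ambiguous
        return None
    j = anchors[0]
    return sum(
        1
        for i, node in enumerate(nodes)
        if i != j and node == (o2, a2) and edges.get((i, j)) == r
    )


def generate_counting_qa(nodes, edges, attributes, categories, relations):
    """Instantiate every parameter combination and keep well-posed ones."""
    pairs = []
    for a1, o1, r, a2, o2 in product(attributes, categories, relations,
                                     attributes, categories):
        answer = answer_count(nodes, edges, a1, o1, r, a2, o2)
        if answer is None:
            continue  # dismiss ill-posed questions
        question = TEMPLATE.format(a1=a1, o1=o1, r=r, a2=a2, o2=o2)
        pairs.append((question, answer))
    return pairs
```

Exhaustive enumeration is simple but grows as the product of all parameter vocabularies; a depth-first search, as described in the text, can prune a branch as soon as a partially assigned question becomes ill-posed.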

It is important to note that post-processing, depicted in step 4 of Fig. [2](https://arxiv.org/html/2305.14836v2#Sx1.F2 "Figure 2 ‣ Introduction ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"), corrects numerous unsuitable expressions. For example, we added the ego car as an object in the scene and referred to it as “me” in questions. This led to inappropriate phrases such as “the me” or “there is a me” when <O> was assigned “me”, which we revised. In addition, inappropriate <A>+<O> combinations such as “standing cars” and “parked pedestrians” were eliminated by rules during instantiation. Finally, we removed questions with counting answers greater than 10 to balance the answer distribution.
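
The filtering rules above can be sketched as two small Python helpers. This is a hedged illustration, not the authors' post-processing code: the paper states the rules (revise awkward “me” phrasing, drop incompatible <A>+<O> combinations, drop counting answers above 10) but not their implementation, so the `INVALID_COMBOS` table, the specific rewrite of “the me” into “me”, and the function names are hypothetical.

```python
import re

# Hypothetical incompatible (attribute, category) combinations.
INVALID_COMBOS = {("standing", "car"), ("parked", "pedestrian")}
MAX_COUNT = 10  # counting answers above this are dropped


def is_valid_combo(attribute, category):
    """Checked during instantiation to skip pairs such as 'parked pedestrian'."""
    return (attribute, category) not in INVALID_COMBOS


def postprocess(pairs):
    """Drop over-large counting answers and revise 'the me' phrasing."""
    cleaned = []
    for question, answer in pairs:
        if isinstance(answer, int) and answer > MAX_COUNT:
            continue  # balance the counting-answer distribution
        # Hypothetical rewrite: the ego car is "me", so "the me" becomes "me".
        question = re.sub(r"\bthe me\b", "me", question)
        cleaned.append((question, answer))
    return cleaned
```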

Figure 4: Framework of the baseline. The multi-view images and point clouds are first processed by the feature extraction backbone to obtain BEV features. Then, object embeddings are cropped based on the detected 3D bounding boxes. Finally, these object features are fed into the question-answering head along with the given question for answer decoding.

#### Data Statistics

In total, NuScenes-QA provides 459,941 question-answer pairs across 34,149 visual scenes, with 376,604 questions from 28,130 scenes for training and 83,337 questions from 6,019 scenes for testing. To the best of our knowledge, NuScenes-QA is currently the largest 3D-related question answering dataset. A detailed comparison of 3D-QA datasets can be found in the supplementary material.
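
The reported split sizes are internally consistent, which a few lines of Python confirm. The ratios printed here are simple derived quantities computed from the numbers above, not figures reported by the authors.

```python
# Split sizes as reported in the text.
train_qa, test_qa = 376_604, 83_337
train_scenes, test_scenes = 28_130, 6_019

total_qa = train_qa + test_qa              # 459,941
total_scenes = train_scenes + test_scenes  # 34,149

print(f"train share of QA pairs: {train_qa / total_qa:.1%}")
print(f"QA pairs per scene (all): {total_qa / total_scenes:.2f}")
print(f"QA pairs per scene (train/test): "
      f"{train_qa / train_scenes:.2f} / {test_qa / test_scenes:.2f}")
```

Roughly 82% of the pairs fall in the training split, and both splits average about 13 to 14 questions per scene.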

Fig. [3](https://arxiv.org/html/2305.14836v2#Sx3.F3 "Figure 3 ‣ Data Construction ‣ NuScenes-QA Dataset ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") depicts various statistical distributions of NuScenes-QA. Fig. [3](https://arxiv.org/html/2305.14836v2#Sx3.F3 "Figure 3 ‣ Data Construction ‣ NuScenes-QA Dataset ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario")(a) shows a broad range of question lengths (5 to 35 words). Fig. [3](https://arxiv.org/html/2305.14836v2#Sx3.F3 "Figure 3 ‣ Data Construction ‣ NuScenes-QA Dataset ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario")(b) and [3](https://arxiv.org/html/2305.14836v2#Sx3.F3 "Figure 3 ‣ Data Construction ‣ NuScenes-QA Dataset ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario")(c) present the answer and question category distributions, which show that NuScenes-QA is balanced. A balanced dataset helps prevent models from learning answer biases or language shortcuts, which are common in many other VQA benchmarks (Antol et al. [2015](https://arxiv.org/html/2305.14836v2#bib.bib2); Azuma et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib3)).

### Method

Along with the proposed dataset, we provide several baselines based on existing 3D detection and VQA techniques.

#### Task Definition

Given a visual scene $S$ and a question $Q$, the task of visual question answering is to select the answer $\hat{a}$ from the answer space $\mathcal{A}=\{a_{i}\}_{i=1}^{N}$ that best answers the question. The task can therefore be formulated as:

$$\hat{a}=\mathop{\arg\max}_{a\in\mathcal{A}}P(a\mid S,Q).\tag{3}$$
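
Eq. (3) amounts to classification over a fixed answer space. A minimal sketch, assuming the model emits one unnormalized score per candidate answer; the toy answer space and scores below are made up for illustration. A softmax turns the scores into $P(a\mid S,Q)$, and the prediction is the highest-probability answer.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(answer_space, scores):
    """Return argmax_a P(a | S, Q) together with the full distribution."""
    probs = softmax(scores)
    best = max(range(len(answer_space)), key=probs.__getitem__)
    return answer_space[best], probs


# Toy answer space and scores (illustrative only).
answers = ["yes", "no", "car", "pedestrian", "0", "1", "2"]
pred, probs = predict(answers, [0.1, 0.3, 2.5, 1.0, -1.0, 0.0, 0.2])
print(pred)  # car
```

Since softmax is monotonic, taking the argmax of the raw scores gives the same answer; the normalization matters only when probabilities are needed, for example for a cross-entropy training loss.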

For NuScenes-QA, the visual scene data encompass multi-view images $I$, point clouds $P$, and any frames $I_{i}$ and $P_{i}$ preceding the current frame in the data sequences. We can further decompose Eq. [3](https://arxiv.org/html/2305.14836v2#Sx4.E3 "3 ‣ Task Definition ‣ Method ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") into:

$$
\begin{aligned}
P(a\mid S,Q) &= P(a\mid I,P,Q)\\
I &= \{I_{i},\ T-t<i\leq T\}\\
P &= \{P_{i},\ T-t<i\leq T\},
\end{aligned}\tag{4}
$$

where $T$ is the index of the current frame and $t$ is the number of frames used by the model (the current frame plus the $t-1$ frames before it). It is also possible to use only single-modality or single-frame data for prediction.
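
The index set $T-t<i\le T$ in Eq. (4) selects the current frame and the $t-1$ frames immediately before it. A small sketch, assuming frames are stored in a Python list indexed from 0 and that the window is clipped at the start of the sequence (the clipping behavior and the name `frame_window` are our assumptions):

```python
def frame_window(frames, T, t):
    """Return the frames i with T - t < i <= T, clipped at index 0."""
    if t < 1 or not 0 <= T < len(frames):
        raise ValueError("need t >= 1 and a valid current index T")
    start = max(0, T - t + 1)
    return frames[start:T + 1]


frames = list(range(10))         # stand-ins for (image, point cloud) frames
print(frame_window(frames, 5, 3))  # [3, 4, 5]
```

Setting `t = 1` recovers the single-frame case mentioned above.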

#### Framework Overview

The overall framework of our proposed baseline is illustrated in Fig. [4](https://arxiv.org/html/2305.14836v2#Sx3.F4 "Figure 4 ‣ Q&A Pair Generation and Filtering ‣ Data Construction ‣ NuScenes-QA Dataset ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") and consists of three main components. The first is the feature extraction backbone, which includes both image and point cloud feature extractors. The second is the region proposal module for object embedding, and the last is the QA head for answer prediction.

First, the surround-view images and point clouds are fed into the feature extraction backbone, and the features are projected onto the Bird’s-Eye-View (BEV). Next, the 3D bounding boxes inferred by a pre-trained detection model are used to crop and pool object features. Finally, the QA model takes the question features and the object features as input for cross-modal interaction to predict the answer.
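
The crop-and-pool step can be illustrated on a toy BEV grid. The sketch below is a simplified stand-in, not the authors' operator: it assumes the BEV map is an H×W grid of C-dimensional feature vectors covering a known metric range, collects the cells whose centers fall inside each box's rotated BEV footprint (center $x, y$, sizes $x_{size}, y_{size}$, and yaw $\varphi$, following the box format given with Eq. (1)), and average-pools them. The grid origin `x_min`/`y_min`, the resolution `res`, and both function names are hypothetical.

```python
import math


def cells_in_box(box, x_min, y_min, res, height, width):
    """Yield (row, col) of BEV cells whose centers lie inside the box footprint.

    box = [x, y, z, x_size, y_size, z_size, yaw]; only the BEV part is used.
    """
    cx, cy, _, sx, sy, _, yaw = box
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    for row in range(height):
        for col in range(width):
            px = x_min + (col + 0.5) * res  # cell center in metric coords
            py = y_min + (row + 0.5) * res
            dx, dy = px - cx, py - cy
            # Rotate the offset into the box frame (undo the yaw).
            lx = dx * cos_y + dy * sin_y
            ly = -dx * sin_y + dy * cos_y
            if abs(lx) <= sx / 2 and abs(ly) <= sy / 2:
                yield row, col


def pool_object_feature(bev, box, x_min, y_min, res):
    """Average-pool BEV features inside a box; bev[row][col] is a list of C floats."""
    height, width, channels = len(bev), len(bev[0]), len(bev[0][0])
    cells = list(cells_in_box(box, x_min, y_min, res, height, width))
    if not cells:
        return [0.0] * channels  # box lies outside the BEV grid
    return [sum(bev[r][c][k] for r, c in cells) / len(cells)
            for k in range(channels)]
```

A real pipeline would operate on batched tensors and typically use a differentiable pooling operator; the nested loops here only make the geometry explicit.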

#### Input Embedding

##### Question Embedding

For a question $Q=\{w_i\}_{i=1}^{n_q}$ containing $n_q$ words, we first tokenize it and initialize the tokens with pre-trained GloVe (Pennington, Socher, and Manning [2014](https://arxiv.org/html/2305.14836v2#bib.bib29)) embeddings. The sequence is then fed into a single-layer biLSTM (Hochreiter and Schmidhuber [1997](https://arxiv.org/html/2305.14836v2#bib.bib14)) for word-level context encoding. Each word feature $\mathbf{w}_i$ is the concatenation of the forward and backward hidden states of the biLSTM:

$$\mathbf{w}_i = [\overrightarrow{\mathbf{h}_i};\ \overleftarrow{\mathbf{h}_i}] \in \mathbb{R}^{d}, \tag{5}$$

and the question embedding is $\mathbf{Q}\in\mathbb{R}^{n_q\times d}$.
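
To make the shapes in Eq. (5) concrete, here is a minimal pure-Python sketch of a single-layer biLSTM question encoder. It is not the authors' implementation: the weights are random and untrained, the word vectors are random stand-ins for GloVe embeddings, and `hid_dim` (the per-direction hidden size, so that $d = 2 \times$ `hid_dim`) is an illustrative choice.

```python
import math
import random


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]


class LSTM:
    """Single-layer LSTM with input (i), forget (f), output (o) gates and candidate (g)."""

    def __init__(self, in_dim, hid_dim, rng):
        def mat(rows, cols):
            return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]
        self.hid_dim = hid_dim
        self.Wx = {k: mat(hid_dim, in_dim) for k in "ifog"}   # input-to-gate weights
        self.Wh = {k: mat(hid_dim, hid_dim) for k in "ifog"}  # hidden-to-gate weights
        self.b = {k: [0.0] * hid_dim for k in "ifog"}

    def step(self, x, h, c):
        pre = {k: [a + u + bias for a, u, bias in
                   zip(matvec(self.Wx[k], x), matvec(self.Wh[k], h), self.b[k])]
               for k in "ifog"}
        i = [sigmoid(v) for v in pre["i"]]
        f = [sigmoid(v) for v in pre["f"]]
        o = [sigmoid(v) for v in pre["o"]]
        g = [math.tanh(v) for v in pre["g"]]
        c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
        h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
        return h, c

    def run(self, xs):
        h, c = [0.0] * self.hid_dim, [0.0] * self.hid_dim
        states = []
        for x in xs:
            h, c = self.step(x, h, c)
            states.append(h)
        return states


def bilstm_encode(word_vecs, hid_dim, seed=0):
    """Return Q as n_q vectors w_i = [forward h_i ; backward h_i], each of size 2 * hid_dim."""
    rng = random.Random(seed)
    in_dim = len(word_vecs[0])
    fwd, bwd = LSTM(in_dim, hid_dim, rng), LSTM(in_dim, hid_dim, rng)
    h_fwd = fwd.run(word_vecs)
    # The backward LSTM reads the question right-to-left; reverse its states back to word order.
    h_bwd = bwd.run(word_vecs[::-1])[::-1]
    return [hf + hb for hf, hb in zip(h_fwd, h_bwd)]


# Toy stand-ins for GloVe vectors: 4 words with 6 dimensions each.
rng = random.Random(1)
words = [[rng.uniform(-1, 1) for _ in range(6)] for _ in range(4)]
Q = bilstm_encode(words, hid_dim=8)
print(len(Q), len(Q[0]))  # 4 16, i.e. Q is n_q x d with d = 2 * hid_dim
```

Reversing the backward states restores word order, so the $i$-th row of $\mathbf{Q}$ combines left context (forward pass) and right context (backward pass) for the same word $w_i$.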

##### Visual Feature Extraction

We adopt state-of-the-art 3D detection techniques for visual feature extraction. As shown in Fig. [4](https://arxiv.org/html/2305.14836v2#Sx3.F4 "Figure 4 ‣ Q&A Pair Generation and Filtering ‣ Data Construction ‣ NuScenes-QA Dataset ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"), the extractor has two branches: an image stream and a point cloud stream. For multi-view images, ResNet (He et al. [2016](https://arxiv.org/html/2305.14836v2#bib.bib13)) with FPN (Lin et al. [2017](https://arxiv.org/html/2305.14836v2#bib.bib25)) serves as the backbone for multi-scale feature extraction. Then, to make the features spatially aware, we estimate the depth of the image pixels and lift them to 3D virtual points with a view transformer inspired by LSS (Philion and Fidler [2020](https://arxiv.org/html/2305.14836v2#bib.bib30)). Finally, pooling along the Z-axis compresses the voxel-space features into the BEV feature map $\mathbf{M}_I\in\mathbb{R}^{H\times W\times d_m}$.
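
The "lift" step of an LSS-style view transformer can be sketched for a single pixel as follows. This is a simplified illustration under stated assumptions, not the backbone used in the paper: it assumes a pinhole camera without distortion, a small hand-picked set of depth bins, and given depth logits and context features (in practice both are predicted by the image network). The transform from the camera frame to the ego/BEV frame and the subsequent voxel pooling are omitted.

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def lift_pixel(u, v, depth_logits, context, depth_bins, fx, fy, cx, cy):
    """Lift pixel (u, v) into one weighted virtual point per depth bin.

    The feature placed at depth d is alpha_d * context, where alpha = softmax(depth_logits)
    is the predicted depth distribution (the LSS outer product). Points are returned
    in the camera frame as ((x, y, z), feature).
    """
    alpha = softmax(depth_logits)
    points = []
    for a, d in zip(alpha, depth_bins):
        # Back-project the pixel at depth d with a pinhole camera model.
        xyz = ((u - cx) * d / fx, (v - cy) * d / fy, d)
        points.append((xyz, [a * f for f in context]))
    return points


pts = lift_pixel(900.0, 450.0, depth_logits=[0.0, 2.0, 0.0], context=[1.0, 0.5],
                 depth_bins=[10.0, 20.0, 30.0], fx=1000.0, fy=1000.0, cx=800.0, cy=450.0)
for xyz, feat in pts:
    print(xyz, [round(f, 3) for f in feat])
```

Because the depth weights sum to one, the lifted features of a pixel add back up to its context vector; the depth distribution only decides where along the camera ray that feature is placed.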

For point clouds, we first partition 3D space into voxels, transforming raw point clouds into binary voxel grids (Zhou and Tuzel [2018](https://arxiv.org/html/2305.14836v2#bib.bib42)). Subsequently, a 3D sparse convolutional neural network (Graham, Engelcke, and Van Der Maaten [2018](https://arxiv.org/html/2305.14836v2#bib.bib12)) is applied to the voxel grid for feature representation. As with the image features, Z-axis pooling then yields the point cloud BEV feature map $\mathbf{M}_P\in\mathbb{R}^{H\times W\times d_m}$. Finally, $\mathbf{M}_I$ and $\mathbf{M}_P$ are aggregated into the multi-modal feature map $\mathbf{M}\in\mathbb{R}^{H\times W\times d_m}$.
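
The Z-axis pooling and the aggregation of $\mathbf{M}_I$ and $\mathbf{M}_P$ can be sketched on nested Python lists. The text does not specify the pooling operator or the aggregation, so this sketch assumes mean or max pooling over Z and element-wise addition of the two BEV maps; MSMDFusion and other fusion backbones use more elaborate fusion, and real implementations operate on tensors rather than lists.

```python
def z_pool(voxels, mode="mean"):
    """Collapse a voxel feature grid indexed [z][y][x][c] into a BEV map indexed [y][x][c]."""
    reducers = {"mean": lambda vals: sum(vals) / len(vals), "max": max}
    if mode not in reducers:
        raise ValueError(f"unsupported mode: {mode!r}")
    reduce_z = reducers[mode]
    Z, H = len(voxels), len(voxels[0])
    W, C = len(voxels[0][0]), len(voxels[0][0][0])
    return [[[reduce_z([voxels[z][y][x][c] for z in range(Z)]) for c in range(C)]
             for x in range(W)]
            for y in range(H)]


def fuse_bev(m_img, m_pts):
    """Aggregate two H x W x d_m BEV maps by element-wise addition (an assumed fusion rule)."""
    return [[[a + b for a, b in zip(fi, fp)] for fi, fp in zip(row_i, row_p)]
            for row_i, row_p in zip(m_img, m_pts)]


# Toy grids with Z=2, H=1, W=2 and a single channel.
img_voxels = [[[[1.0], [2.0]]], [[[3.0], [6.0]]]]
pts_voxels = [[[[0.0], [1.0]]], [[[2.0], [1.0]]]]
m_img = z_pool(img_voxels, "mean")
m_pts = z_pool(pts_voxels, "max")
print(fuse_bev(m_img, m_pts))  # [[[4.0], [5.0]]]
```

Element-wise addition requires both maps to share the same $H \times W \times d_m$ grid, which is why both branches are pooled onto a common BEV layout before fusion.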

##### Object Embedding

Following 2D detection works (Ren et al. [2015](https://arxiv.org/html/2305.14836v2#bib.bib33)), we crop and pool the features inside the bounding boxes as the object embedding. However, unlike standard 2D bounding boxes, which are aligned with the image axes, 3D boxes projected to BEV are rotated and therefore unsuitable for standard RoI Pooling. We therefore make the following modifications. First, we project the 3D box $B=[x,y,z,x_{size},y_{size},z_{size},\varphi]$ into the BEV feature map:

$$x_m = \frac{x - R_{pc}}{F_v \times F_o}, \tag{6}$$

where $F_v$, $F_o$ and $R_{pc}$ represent the voxel factor, the output size factor of the backbone, and the point cloud range, respectively. All box parameters except the heading angle $\varphi$ are transformed into BEV space following Eq. [6](https://arxiv.org/html/2305.14836v2#Sx4.E6 "6 ‣ Object Embedding ‣ Input Embedding ‣ Method ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"). From the center and size of the box, we then compute its four vertices $V=\{(x_i,y_i)\}_{i=0}^{3}$. Second, we compute the rotated vertices $V'$ using the heading angle $\varphi$:

$$\begin{bmatrix}x_i'\\ y_i'\end{bmatrix} = \begin{bmatrix}\cos\varphi & -\sin\varphi\\ \sin\varphi & \cos\varphi\end{bmatrix}\begin{bmatrix}x_i\\ y_i\end{bmatrix} \tag{7}$$
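
Eqs. (6) and (7) can be combined into one helper that maps a 3D box to its four rotated BEV vertices. This is a sketch under assumptions: $R_{pc}$ is taken as the lower bound of the point cloud range, box sizes are only divided by $F_v \times F_o$ (they are lengths, so no offset is subtracted), and the vertices are rotated about the box centre, that is, the $(x_i, y_i)$ in Eq. (7) are read as offsets from the centre. The configuration values in the example are hypothetical.

```python
import math


def to_bev(value, range_min, voxel_size, out_size_factor):
    """Eq. (6): metric coordinate -> continuous BEV feature-map coordinate."""
    return (value - range_min) / (voxel_size * out_size_factor)


def box_vertices_bev(box, range_min, voxel_size, out_size_factor):
    """Map a box [x, y, z, x_size, y_size, z_size, phi] to four rotated BEV vertices.

    z and z_size are dropped in BEV. Vertices are returned counter-clockwise,
    rotated about the box centre with the matrix of Eq. (7).
    """
    x, y, _z, x_size, y_size, _z_size, phi = box
    scale = voxel_size * out_size_factor
    xm = to_bev(x, range_min[0], voxel_size, out_size_factor)
    ym = to_bev(y, range_min[1], voxel_size, out_size_factor)
    hx, hy = x_size / scale / 2, y_size / scale / 2  # half extents in BEV cells
    c, s = math.cos(phi), math.sin(phi)
    offsets = [(-hx, -hy), (hx, -hy), (hx, hy), (-hx, hy)]  # unrotated corners relative to centre
    return [(xm + c * dx - s * dy, ym + s * dx + c * dy) for dx, dy in offsets]


# Hypothetical config: range starting at -54 m, 0.075 m voxels, backbone stride 8.
verts = box_vertices_bev([0.0, 0.0, 0.5, 4.8, 2.4, 1.8, math.pi / 2],
                         range_min=(-54.0, -54.0), voxel_size=0.075, out_size_factor=8)
print([(round(vx, 3), round(vy, 3)) for vx, vy in verts])
```

Rotating the offsets rather than absolute coordinates keeps the box centred at $(x_m, y_m)$; rotating absolute coordinates would instead swing the whole box around the map origin.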

Finally, we use a cross-product test to determine which pixels lie inside the rotated rectangle, and apply mean pooling to the features of all pixels inside it to obtain the object embeddings $\mathbf{O}\in\mathbb{R}^{N\times d_m}$, where $N$ is the number of objects. Algorithm details can be found in the supplementary material.
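
The membership test and mean pooling can be sketched as below. Assumptions: the BEV map is indexed `bev[y][x]`, a cell counts as inside when its centre `(x + 0.5, y + 0.5)` (in the continuous coordinates of Eq. (6)) lies inside or on the box, and the vertices are given in counter-clockwise order. The exact algorithm in the supplementary material may differ.

```python
import math


def cross(o, a, b):
    """z-component of the 2D cross product (a - o) x (b - o)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def inside(p, verts):
    """True if p is inside or on a convex polygon whose vertices are counter-clockwise."""
    n = len(verts)
    return all(cross(verts[k], verts[(k + 1) % n], p) >= 0 for k in range(n))


def pool_rotated_box(bev, verts):
    """Mean-pool bev[y][x] feature vectors whose cell centres lie inside the rotated box.

    Returns (pooled_feature, number_of_cells), or (None, 0) if no cell centre is inside.
    """
    H, W = len(bev), len(bev[0])
    xs = [v[0] for v in verts]
    ys = [v[1] for v in verts]
    # Only scan the box's axis-aligned extent, clipped to the feature map.
    x0, x1 = max(0, math.floor(min(xs))), min(W - 1, math.ceil(max(xs)))
    y0, y1 = max(0, math.floor(min(ys))), min(H - 1, math.ceil(max(ys)))
    picked = [bev[y][x] for y in range(y0, y1 + 1) for x in range(x0, x1 + 1)
              if inside((x + 0.5, y + 0.5), verts)]
    if not picked:
        return None, 0
    dim = len(picked[0])
    return [sum(f[c] for f in picked) / len(picked) for c in range(dim)], len(picked)


# A 6 x 6 BEV map whose feature at cell (y, x) is simply [x, y].
bev = [[[float(x), float(y)] for x in range(6)] for y in range(6)]
diamond = [(3, 1), (5, 3), (3, 5), (1, 3)]  # a square rotated by 45 degrees, counter-clockwise
print(pool_rotated_box(bev, diamond))  # ([2.5, 2.5], 12)
```

Each cell costs one cross product per edge: a point is inside a convex polygon exactly when it lies on the same (left) side of every counter-clockwise edge.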

#### Answer Head and Training

We adopt the classical VQA model MCAN (Yu et al. [2019](https://arxiv.org/html/2305.14836v2#bib.bib38)) as our answer head. It leverages stacked self-attention layers to model the language and visual context independently, along with stacked cross-attention layers for cross-modal feature interaction. The fused features are then projected to the answer space for prediction via basic MLP layers.
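
The self-attention and cross-attention layers that MCAN stacks are both built on scaled dot-product attention. The sketch below is a deliberately reduced illustration, not MCAN itself: it has a single head and no learned query/key/value projections, residual connections, layer normalization, or feed-forward layers. In the guided (cross) variant, the object features act as queries and the question words supply the keys and values, in the spirit of MCAN letting the question guide attention over visual features.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Single-head scaled dot-product attention: softmax(q k^T / sqrt(d)) v."""
    d = len(keys[0])
    out = []
    for q in queries:
        weights = softmax([sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys])
        out.append([sum(w * v[c] for w, v in zip(weights, values))
                    for c in range(len(values[0]))])
    return out


def self_attention(x):
    """Intra-modal context: every element attends to all elements of the same modality."""
    return attention(x, x, x)


def guided_attention(objects, words):
    """Cross-modal interaction: object features query the question-word features."""
    return attention(objects, words, words)


words = [[1.0, 0.0], [0.0, 1.0]]    # two toy question-word features
objects = [[0.0, 0.0], [4.0, 0.0]]  # two toy object features
print(guided_attention(objects, words))
```

An object feature that is orthogonal to every word spreads its attention uniformly, while one aligned with a word concentrates its weight there; the learned projections in a real model decide which alignments matter.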

During training, the object embeddings are extracted offline with a pre-trained 3D detection model, and the answer head is trained with the standard cross-entropy loss.
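
Treating answer prediction as classification over a fixed answer vocabulary, the standard cross-entropy loss for one question is $-\log \operatorname{softmax}(\mathbf{z})_a$ for answer logits $\mathbf{z}$ and ground-truth answer index $a$. A minimal, numerically stable sketch follows; the 4-answer vocabulary and the logits are made up for illustration.

```python
import math


def cross_entropy(logits, target):
    """-log softmax(logits)[target], computed with the log-sum-exp trick for stability."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[target]


def batch_loss(batch_logits, targets):
    """Mean cross-entropy over a batch of questions."""
    return sum(cross_entropy(z, a) for z, a in zip(batch_logits, targets)) / len(targets)


# Two questions over a toy 4-answer vocabulary.
logits = [[0.0, 0.0, 0.0, 0.0], [5.0, 0.0, 0.0, 0.0]]
print(round(cross_entropy(logits[0], 2), 4))  # uniform prediction: log(4)
print(batch_loss(logits, [2, 0]))
```

Subtracting the maximum logit before exponentiating leaves the result unchanged but avoids overflow when logits are large.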

Table 1: Results of different models on the NuScenes-QA test set. We report top-1 accuracy on the overall test split and for each question type. H0 denotes zero-hop and H1 denotes one-hop; C denotes camera and L denotes LiDAR.

### Experiments

To assess how challenging NuScenes-QA is, we evaluate baseline performance in various configurations: camera-only or LiDAR-only single-modality models, camera-LiDAR fusion models, and different answer heads. We also conduct ablation studies on crucial steps of the baseline, including the BEV feature cropping and pooling strategies and the influence of detected 3D bounding boxes. Visualization samples are provided in the supplementary material.

#### Evaluation Metrics

Questions in NuScenes-QA fall into 5 categories based on their query format: 1) Exist, querying the existence of an object in the scene; 2) Count, counting objects under specified conditions; 3) Object, recognizing objects from a language description; 4) Status, querying the status of a specified object; 5) Comparison, comparing specified objects or their status. Questions are also divided into two groups based on the complexity of reasoning: zero-hop (denoted as H0) and one-hop (denoted as H1). Following the practice of many other VQA works (Antol et al. [2015](https://arxiv.org/html/2305.14836v2#bib.bib2); Azuma et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib3)), we adopt Top-1 accuracy as our evaluation metric and evaluate the performance on each question type separately.
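
A small sketch of how Top-1 accuracy can be computed overall, per question type, and per (type, hop) group. The record layout `(question_type, hop, predicted, ground_truth)` and the example answers are hypothetical; the predicted answer is assumed to be the arg-max of the answer head's scores.

```python
from collections import defaultdict


def top1_accuracy(records):
    """Top-1 accuracy in percent, overall, per question type and per (type, hop) group.

    Each record is (question_type, hop, predicted_answer, ground_truth_answer).
    """
    hits, totals = defaultdict(int), defaultdict(int)
    for qtype, hop, pred, gt in records:
        for key in ("overall", qtype, (qtype, hop)):
            totals[key] += 1
            hits[key] += int(pred == gt)
    return {key: 100.0 * hits[key] / totals[key] for key in totals}


records = [
    ("exist", "H0", "yes", "yes"),
    ("exist", "H1", "no", "yes"),
    ("count", "H0", "2", "3"),
    ("count", "H1", "3", "3"),
]
acc = top1_accuracy(records)
print(acc["overall"], acc["count"], acc[("exist", "H0")])  # 50.0 50.0 100.0
```

Reporting each group separately keeps a frequent, easy question type from masking a rare, hard one in the overall number.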

#### Implementation Details

For the feature extraction backbone, we use pre-trained detection models with their original settings (Huang et al. [2021](https://arxiv.org/html/2305.14836v2#bib.bib15); Yin, Zhou, and Krahenbuhl [2021](https://arxiv.org/html/2305.14836v2#bib.bib37); Jiao et al. [2023a](https://arxiv.org/html/2305.14836v2#bib.bib19)). The dimension $d_m$ of the QA model is set to 512, and MCAN uses its 6-layer encoder-decoder version. For training, we use the Adam optimizer with an initial learning rate of 1e-4, halved every 2 epochs. All experiments are conducted with a batch size of 256 on 2 NVIDIA GeForce RTX 3090 GPUs. More details can be found in the supplementary material.
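
The learning-rate schedule described above (initial value 1e-4, halved every 2 epochs) is a step decay. A minimal sketch, assuming epochs are counted from 0 and the decay is applied at epoch boundaries; in PyTorch the same schedule would typically be expressed with `torch.optim.lr_scheduler.StepLR(optimizer, step_size=2, gamma=0.5)` stepped once per epoch.

```python
def learning_rate(epoch, base_lr=1e-4, step=2, gamma=0.5):
    """Step decay: multiply base_lr by gamma once every `step` epochs (epoch counted from 0)."""
    return base_lr * gamma ** (epoch // step)


for epoch in range(6):
    print(epoch, learning_rate(epoch))
```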

#### Quantitative Results

##### Compared Methods

As mentioned earlier, our task can be divided into three settings: camera-only, LiDAR-only, and camera+LiDAR. To explore the impact of different modalities on question-answering performance, we select a representative backbone for each setting. We choose BEVDet (Huang et al. [2021](https://arxiv.org/html/2305.14836v2#bib.bib15)) for the camera-only setting; it proposed a novel paradigm of explicitly encoding perspective-view features into the BEV space. CenterPoint (Yin, Zhou, and Krahenbuhl [2021](https://arxiv.org/html/2305.14836v2#bib.bib37)) is selected for the LiDAR-only setting; it introduced a center-based object keypoint detector and shows excellent performance in both detection accuracy and speed. For the multi-modal setting, we opt for MSMDFusion (Jiao et al. [2023a](https://arxiv.org/html/2305.14836v2#bib.bib19)), which leverages depth and fine-grained LiDAR-camera interaction and achieves state-of-the-art results among single models on the nuScenes detection benchmark.

Regarding the QA head, we select two classic models, BUTD (Anderson et al. [2018](https://arxiv.org/html/2305.14836v2#bib.bib1)) and MCAN (Yu et al. [2019](https://arxiv.org/html/2305.14836v2#bib.bib38)). BUTD computes bottom-up and top-down attention over salient image regions. MCAN stacks self-attention and cross-attention modules for vision-language feature interaction. To establish an upper bound for the QA models, we use perfect perception results, _i.e._, ground-truth object labels. Specifically, we use GloVe embeddings of the objects and their status, denoted as GroundTruth in Table [1](https://arxiv.org/html/2305.14836v2#Sx4.T1 "Table 1 ‣ Answer Head and Training ‣ Method ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"). Additionally, we design a Q-Only baseline to investigate the impact of language bias; Q-Only can be considered a blind model that ignores the visual input.

##### Results and Discussions

According to the results shown in Table [1](https://arxiv.org/html/2305.14836v2#Sx4.T1 "Table 1 ‣ Answer Head and Training ‣ Method ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"), we have the following observations that are worth discussing.

1. Visual data clearly play a critical role in our task. The Q-Only baseline achieves an accuracy of only 53.4%, significantly lower than the other models; for instance, MSMDFusion+MCAN performs 7% better. This indicates that a model cannot perform well by relying solely on language shortcuts, but needs to leverage rich visual information.

2. The bottom part of Table [1](https://arxiv.org/html/2305.14836v2#Sx4.T1 "Table 1 ‣ Answer Head and Training ‣ Method ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") shows that the LiDAR-based CenterPoint outperforms the camera-based BEVDet, with accuracies of 59.5% and 57.9%, respectively. We attribute this gap to the characteristics of the task: images carry detailed texture information, whereas point clouds excel at representing structure and space, and NuScenes-QA places more emphasis on understanding the structure and spatial relationships of objects. Meanwhile, the fusion-based MSMDFusion attains the best performance with an accuracy of 60.4%, demonstrating that camera and LiDAR data are complementary. Future work can explore how to better exploit this complementary information. Nevertheless, our baselines still fall far short of GroundTruth, which achieves an accuracy of 84.3%.

3. Table [1](https://arxiv.org/html/2305.14836v2#Sx4.T1 "Table 1 ‣ Answer Head and Training ‣ Method ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") also shows that the QA head has a significant impact on performance. With the same detection backbone, the MCAN-based QA head clearly outperforms BUTD. For example, the overall accuracy of CenterPoint+MCAN is 59.5%, 1.4% higher than that of CenterPoint+BUTD. A dedicated QA head designed for NuScenes-QA may lead to further improvement, which we leave as future work.

4. Comparing across the question types in Table [1](https://arxiv.org/html/2305.14836v2#Sx4.T1 "Table 1 ‣ Answer Head and Training ‣ Method ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"), counting is clearly the most difficult: our best baseline achieves only 23.2% accuracy, much lower than on the other question types. Counting has historically been hard in visual question answering, and some explorations (Zhang, Hare, and Prügel-Bennett [2018](https://arxiv.org/html/2305.14836v2#bib.bib40)) have been made in traditional 2D VQA. Future efforts could incorporate counting modules to improve performance.

#### Ablation Studies

Table 2: Ablation comparison between models trained with and without bounding box features.

To validate the effectiveness of different operations in our baselines, we conduct extensive ablation experiments on the NuScenes-QA test split using the CenterPoint+MCAN baseline combination.

##### Effects of Bounding Boxes

Most 2D and 3D VQA models fuse the visual features with the object bounding boxes in the object embedding stage, making the embeddings position-aware. We follow this paradigm and evaluate the impact of 3D bounding boxes on NuScenes-QA. Specifically, we use an MLP to project the 7-dimensional box $B=[x,y,z,x_{size},y_{size},z_{size},\varphi]$ obtained from the detection model to the same dimension as the object embeddings, and concatenate the two features as the final input to the QA head. As shown in Table [2](https://arxiv.org/html/2305.14836v2#Sx5.T2 "Table 2 ‣ Ablation Studies ‣ Experiments ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"), the effect of box features differs sharply between ground-truth and detected inputs. Adding box features to the ground truth increases the model’s accuracy from 70.8% to 84.3%, a substantial improvement of 13.5%. In contrast, adding the detected boxes slightly decreases performance by 0.6%, which is counterproductive. We speculate that there are two reasons for this. On one hand, current 3D detection models are still immature, and the noise in the detected boxes hurts the QA model. On the other hand, the point cloud, represented by XYZ coordinates, already expresses position well, so the gain from adding box features is limited.
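
The box-feature variant evaluated in this ablation can be sketched as follows. The MLP depth, hidden width, ReLU activation and random initialization are assumptions (the text only says an MLP is used), and the weights are untrained; the point is the data flow: each 7-d box is projected to $d_m$ and concatenated with its pooled BEV embedding, giving a $2d_m$ token per object.

```python
import random


def linear(in_dim, out_dim, rng):
    """Dense layer y = W x + b with small random weights (illustrative, untrained)."""
    W = [[rng.gauss(0.0, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    b = [0.0] * out_dim

    def apply(x):
        return [sum(w * v for w, v in zip(row, x)) + bias for row, bias in zip(W, b)]
    return apply


def make_box_mlp(d_m, hidden=64, seed=0):
    """Two-layer ReLU MLP mapping a 7-d box [x, y, z, x_size, y_size, z_size, phi] to d_m dims."""
    rng = random.Random(seed)
    fc1, fc2 = linear(7, hidden, rng), linear(hidden, d_m, rng)

    def mlp(box):
        if len(box) != 7:
            raise ValueError("expected a 7-dimensional box")
        return fc2([max(0.0, h) for h in fc1(box)])
    return mlp


def object_tokens(obj_embs, boxes, box_mlp):
    """Concatenate each pooled BEV embedding (d_m) with its projected box feature (d_m)."""
    return [list(emb) + box_mlp(box) for emb, box in zip(obj_embs, boxes)]


d_m = 8  # the paper uses d_m = 512; 8 keeps the toy example small
embs = [[0.1] * d_m, [0.2] * d_m]               # stand-ins for mean-pooled object embeddings
boxes = [[1.0, 2.0, 0.0, 4.5, 1.9, 1.6, 0.3],   # hypothetical detected boxes
         [-8.0, 5.0, 0.2, 12.0, 2.5, 3.2, 1.2]]
tokens = object_tokens(embs, boxes, make_box_mlp(d_m))
print(len(tokens), len(tokens[0]))  # 2 16
```

Because the box feature is concatenated rather than added, noisy detected boxes enter the QA head as separate input dimensions, which is consistent with the observation that they can slightly hurt accuracy.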

##### BEV Feature Crop Strategy

As mentioned earlier, because the 3D boxes are not parallel to the BEV coordinate axes, we cannot perform standard RoI pooling as on traditional 2D images. Therefore, we use a cross-product test to determine the pixels inside the rotated box for feature cropping. A simpler alternative directly uses the axis-parallel circumscribed rectangle of the rotated box as the cropping region. Table [3](https://arxiv.org/html/2305.14836v2#Sx5.T3 "Table 3 ‣ BEV Feature Crop Strategy ‣ Ablation Studies ‣ Experiments ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") compares these two crop strategies: the circumscribed box is slightly inferior to the rotated box. This is because NuScenes-QA contains many elongated objects, such as buses and trucks. These objects occupy a small area in the BEV space, but their circumscribed rectangles cover a much larger region, which over-smooths the object features.
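
The over-smoothing argument can be quantified with a little geometry. For a box of length $L$ and width $W$ rotated by $45^\circ$, the axis-parallel circumscribed rectangle has area $(L+W)^2/2$, so it is $(L+W)^2/(2LW)$ times larger than the box itself, and this ratio grows as the box becomes more elongated. The sketch below computes the ratio numerically; the bus and car dimensions are illustrative, not taken from the dataset.

```python
import math


def box_corners(cx, cy, length, width, phi):
    """Corners of a length x width box centred at (cx, cy) and rotated by heading phi."""
    c, s = math.cos(phi), math.sin(phi)
    half = [(-length / 2, -width / 2), (length / 2, -width / 2),
            (length / 2, width / 2), (-length / 2, width / 2)]
    return [(cx + c * dx - s * dy, cy + s * dx + c * dy) for dx, dy in half]


def circumscribed_rect(corners):
    """Axis-parallel rectangle (x_min, y_min, x_max, y_max) enclosing the rotated box."""
    xs = [x for x, _ in corners]
    ys = [y for _, y in corners]
    return min(xs), min(ys), max(xs), max(ys)


def area_ratio(length, width, phi):
    """Area of the circumscribed rectangle divided by the area of the rotated box."""
    x0, y0, x1, y1 = circumscribed_rect(box_corners(0.0, 0.0, length, width, phi))
    return (x1 - x0) * (y1 - y0) / (length * width)


for name, (l, w) in {"bus": (12.0, 2.5), "car": (4.5, 1.9)}.items():
    print(name, round(area_ratio(l, w, math.pi / 4), 2))
```

With these illustrative sizes, the circumscribed rectangle of the diagonal bus is about 3.5 times its footprint, versus about 2.4 for the car, so mean pooling over it mixes in proportionally more background.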

Table 3: Ablation comparison of BEV feature crop strategies.

Table 4: Ablation comparison of BEV feature pooling strategies.

##### BEV Feature Pooling Strategy

For pooling the features of the cropped regions, we compare the classic Max Pooling and Mean Pooling operations. As shown in Table [4](https://arxiv.org/html/2305.14836v2#Sx5.T4 "Table 4 ‣ BEV Feature Crop Strategy ‣ Ablation Studies ‣ Experiments ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario"), Max Pooling achieves an accuracy of 58.9% under the same conditions, 0.6% lower than Mean Pooling. We speculate that this difference arises because Max Pooling focuses on the texture features within the region, while Mean Pooling preserves the overall features. NuScenes-QA mainly tests a model’s ability to understand the structure of objects and their spatial relationships in street views, with relatively little emphasis on object texture, so Mean Pooling has a slight advantage over Max Pooling.
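
The difference between the two pooling operators is easy to see on a toy region. In the sketch below (the feature values are made up), one cell has an unusually strong response in the second channel: max pooling copies that single peak, whereas mean pooling averages it with the rest of the region.

```python
def mean_pool(feats):
    """Channel-wise average over the feature vectors of all cells in a region."""
    n = len(feats)
    return [sum(f[c] for f in feats) / n for c in range(len(feats[0]))]


def max_pool(feats):
    """Channel-wise maximum over the feature vectors of all cells in a region."""
    return [max(f[c] for f in feats) for c in range(len(feats[0]))]


region = [[1.0, 0.0], [1.0, 0.0], [1.0, 9.0]]  # three cells, two channels
print(mean_pool(region))  # [1.0, 3.0]
print(max_pool(region))   # [1.0, 9.0]
```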

### Conclusion

In this paper, we apply VQA to the context of autonomous driving. We construct NuScenes-QA, the first large-scale multi-modal VQA benchmark for autonomous driving scenarios. NuScenes-QA is generated automatically from visual scene graphs and question templates and contains 34K scenes and 460K question-answer pairs. Together with a series of baseline models, our comprehensive experiments establish a solid foundation for future research. We hope that NuScenes-QA will invigorate the evolution of multi-modal VQA and propel advancements in autonomous driving.

### Acknowledgments

This work was supported in part by National Natural Science Foundation of China Project (No. 62072116) and Shanghai Science and Technology Program [Project No. 21JC1400600].

### References

|

| 230 |

+

|

| 231 |

+

* Anderson et al. (2018) Anderson, P.; He, X.; Buehler, C.; Teney, D.; Johnson, M.; Gould, S.; and Zhang, L. 2018. Bottom-up and top-down attention for image captioning and visual question answering. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 6077–6086.

|

| 232 |

+

* Antol et al. (2015) Antol, S.; Agrawal, A.; Lu, J.; Mitchell, M.; Batra, D.; Zitnick, C.L.; and Parikh, D. 2015. Vqa: Visual question answering. In _Proceedings of the IEEE international conference on computer vision_, 2425–2433.

|

| 233 |

+

* Azuma et al. (2022) Azuma, D.; Miyanishi, T.; Kurita, S.; and Kawanabe, M. 2022. ScanQA: 3D question answering for spatial scene understanding. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 19129–19139.

|

| 234 |

+

* Caesar et al. (2020) Caesar, H.; Bankiti, V.; Lang, A.H.; Vora, S.; Liong, V.E.; Xu, Q.; Krishnan, A.; Pan, Y.; Baldan, G.; and Beijbom, O. 2020. nuscenes: A multimodal dataset for autonomous driving. In _Proceedings of the IEEE/CVF conference on computer vision and pattern recognition_, 11621–11631.

|

| 235 |

+

* Chen et al. (2023) Chen, Y.; Liu, J.; Zhang, X.; Qi, X.; and Jia, J. 2023. VoxelNeXt: Fully Sparse VoxelNet for 3D Object Detection and Tracking. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_.

|

| 236 |

+

* Dai et al. (2017) Dai, A.; Chang, A.X.; Savva, M.; Halber, M.; Funkhouser, T.; and Nießner, M. 2017. Scannet: Richly-annotated 3d reconstructions of indoor scenes. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 5828–5839.

|

| 237 |

+

* Das et al. (2018) Das, A.; Datta, S.; Gkioxari, G.; Lee, S.; Parikh, D.; and Batra, D. 2018. Embodied question answering. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 1–10.

|

| 238 |

+

* Deruyttere et al. (2019) Deruyttere, T.; Vandenhende, S.; Grujicic, D.; Van Gool, L.; and Moens, M.-F. 2019. Talk2car: Taking control of your self-driving car. _arXiv preprint arXiv:1909.10838_.

|

| 239 |

+

* Dongming et al. (2023) Dongming, W.; Wencheng, H.; Tiancai, W.; Xingping, D.; Xiangyu, Z.; and Shen, J. 2023. Referring Multi-Object Tracking. In _CVPR_.

|

| 240 |

+

* Geiger, Lenz, and Urtasun (2012) Geiger, A.; Lenz, P.; and Urtasun, R. 2012. Are we ready for autonomous driving? the kitti vision benchmark suite. In _2012 IEEE conference on computer vision and pattern recognition_, 3354–3361. IEEE.

|

| 241 |

+

* Goyal et al. (2017) Goyal, Y.; Khot, T.; Summers-Stay, D.; Batra, D.; and Parikh, D. 2017. Making the V in VQA Matter: Elevating the Role of Image Understanding in Visual Question Answering. In _Conference on Computer Vision and Pattern Recognition (CVPR)_.

|

| 242 |

+

* Graham, Engelcke, and Van Der Maaten (2018) Graham, B.; Engelcke, M.; and Van Der Maaten, L. 2018. 3d semantic segmentation with submanifold sparse convolutional networks. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 9224–9232.

|

| 243 |

+

* He et al. (2016) He, K.; Zhang, X.; Ren, S.; and Sun, J. 2016. Deep residual learning for image recognition. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 770–778.

|

| 244 |

+

* Hochreiter and Schmidhuber (1997) Hochreiter, S.; and Schmidhuber, J. 1997. Long short-term memory. _Neural computation_, 9(8): 1735–1780.

|

| 245 |

+

* Huang et al. (2021) Huang, J.; Huang, G.; Zhu, Z.; Ye, Y.; and Du, D. 2021. Bevdet: High-performance multi-camera 3d object detection in bird-eye-view. _arXiv preprint arXiv:2112.11790_.

|

| 246 |

+

* Hudson and Manning (2019) Hudson, D.A.; and Manning, C.D. 2019. GQA: A New Dataset for Real-World Visual Reasoning and Compositional Question Answering. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)_.

|

| 247 |

+

* Jang et al. (2017) Jang, Y.; Song, Y.; Yu, Y.; Kim, Y.; and Kim, G. 2017. Tgif-qa: Toward spatio-temporal reasoning in visual question answering. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 2758–2766.

|

| 248 |

+

* Jiang et al. (2020) Jiang, J.; Chen, Z.; Lin, H.; Zhao, X.; and Gao, Y. 2020. Divide and conquer: Question-guided spatio-temporal contextual attention for video question answering. In _Proceedings of the AAAI Conference on Artificial Intelligence_, volume 34, 11101–11108.

|

| 249 |

+

* Jiao et al. (2023a) Jiao, Y.; Jie, Z.; Chen, S.; Chen, J.; Ma, L.; and Jiang, Y.-G. 2023a. MSMDFusion: Fusing LiDAR and Camera at Multiple Scales with Multi-Depth Seeds for 3D Object Detection. In _Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)_.

|

| 250 |

+

* Jiao et al. (2023b) Jiao, Y.; Jie, Z.; Chen, S.; Cheng, L.; Chen, J.; Ma, L.; and Jiang, Y.-G. 2023b. Instance-aware Multi-Camera 3D Object Detection with Structural Priors Mining and Self-Boosting Learning. _arXiv preprint arXiv:2312.08004_.

|

| 251 |

+

* Johnson et al. (2017) Johnson, J.; Hariharan, B.; Van Der Maaten, L.; Fei-Fei, L.; Lawrence Zitnick, C.; and Girshick, R. 2017. Clevr: A diagnostic dataset for compositional language and elementary visual reasoning. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 2901–2910.

|

| 252 |

+

* Johnson et al. (2015) Johnson, J.; Krishna, R.; Stark, M.; Li, L.-J.; Shamma, D.; Bernstein, M.; and Fei-Fei, L. 2015. Image retrieval using scene graphs. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 3668–3678.

|

| 253 |

+

* Kim, Jun, and Zhang (2018) Kim, J.-H.; Jun, J.; and Zhang, B.-T. 2018. Bilinear attention networks. _Advances in neural information processing systems_, 31.

|

| 254 |

+

* Lei et al. (2018) Lei, J.; Yu, L.; Bansal, M.; and Berg, T.L. 2018. Tvqa: Localized, compositional video question answering. _arXiv preprint arXiv:1809.01696_.

|

| 255 |

+

* Lin et al. (2017) Lin, T.-Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; and Belongie, S. 2017. Feature pyramid networks for object detection. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 2117–2125.

|

| 256 |

+

* Liu et al. (2023) Liu, Z.; Tang, H.; Amini, A.; Yang, X.; Mao, H.; Rus, D.; and Han, S. 2023. BEVFusion: Multi-Task Multi-Sensor Fusion with Unified Bird’s-Eye View Representation. In _IEEE International Conference on Robotics and Automation (ICRA)_.

|

| 257 |

+

* Lu et al. (2016) Lu, J.; Yang, J.; Batra, D.; and Parikh, D. 2016. Hierarchical question-image co-attention for visual question answering. _Advances in neural information processing systems_, 29.

|

| 258 |

+

* Ma et al. (2023) Ma, X.; Yong, S.; Zheng, Z.; Li, Q.; Liang, Y.; Zhu, S.-C.; and Huang, S. 2023. SQA3D: Situated Question Answering in 3D Scenes. In _International Conference on Learning Representations_.

|

| 259 |

+

* Pennington, Socher, and Manning (2014) Pennington, J.; Socher, R.; and Manning, C.D. 2014. Glove: Global vectors for word representation. In _Proceedings of the 2014 conference on empirical methods in natural language processing (EMNLP)_, 1532–1543.

|

| 260 |

+

* Philion and Fidler (2020) Philion, J.; and Fidler, S. 2020. Lift, splat, shoot: Encoding images from arbitrary camera rigs by implicitly unprojecting to 3d. In _Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part XIV 16_, 194–210. Springer.

|

| 261 |

+

* Qian et al. (2022a) Qian, T.; Chen, J.; Chen, S.; Wu, B.; and Jiang, Y.-G. 2022a. Scene graph refinement network for visual question answering. _IEEE Transactions on Multimedia_.

|

| 262 |

+

* Qian et al. (2022b) Qian, T.; Cui, R.; Chen, J.; Peng, P.; Guo, X.; and Jiang, Y.-G. 2022b. Locate before Answering: Answer Guided Question Localization for Video Question Answering. _arXiv preprint arXiv:2210.02081_.

|

| 263 |

+

* Ren et al. (2015) Ren, S.; He, K.; Girshick, R.; and Sun, J. 2015. Faster r-cnn: Towards real-time object detection with region proposal networks. _Advances in neural information processing systems_, 28.

|

| 264 |

+

* Tan and Bansal (2019) Tan, H.; and Bansal, M. 2019. Lxmert: Learning cross-modality encoder representations from transformers. _arXiv preprint arXiv:1908.07490_.

|

| 265 |

+

* Yan et al. (2021) Yan, X.; Yuan, Z.; Du, Y.; Liao, Y.; Guo, Y.; Li, Z.; and Cui, S. 2021. CLEVR3D: Compositional language and elementary visual reasoning for question answering in 3D real-world scenes. _arXiv preprint arXiv:2112.11691_.

* Ye et al. (2022) Ye, S.; Chen, D.; Han, S.; and Liao, J. 2022. 3D question answering. _IEEE Transactions on Visualization and Computer Graphics_.

* Yin, Zhou, and Krahenbuhl (2021) Yin, T.; Zhou, X.; and Krahenbuhl, P. 2021. Center-based 3D object detection and tracking. In _Proceedings of the IEEE/CVF conference on computer vision and pattern recognition_, 11784–11793.

* Yu et al. (2019) Yu, Z.; Yu, J.; Cui, Y.; Tao, D.; and Tian, Q. 2019. Deep modular co-attention networks for visual question answering. In _Proceedings of the IEEE/CVF conference on computer vision and pattern recognition_, 6281–6290.

* Zhang et al. (2021) Zhang, P.; Li, X.; Hu, X.; Yang, J.; Zhang, L.; Wang, L.; Choi, Y.; and Gao, J. 2021. VinVL: Revisiting visual representations in vision-language models. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 5579–5588.

* Zhang, Hare, and Prügel-Bennett (2018) Zhang, Y.; Hare, J.; and Prügel-Bennett, A. 2018. Learning to count objects in natural images for visual question answering. _arXiv preprint arXiv:1802.05766_.

* Zhao et al. (2022) Zhao, L.; Cai, D.; Zhang, J.; Sheng, L.; Xu, D.; Zheng, R.; Zhao, Y.; Wang, L.; and Fan, X. 2022. Towards Explainable 3D Grounded Visual Question Answering: A New Benchmark and Strong Baseline. _IEEE Transactions on Circuits and Systems for Video Technology_.

* Zhou and Tuzel (2018) Zhou, Y.; and Tuzel, O. 2018. VoxelNet: End-to-end learning for point cloud based 3D object detection. In _Proceedings of the IEEE conference on computer vision and pattern recognition_, 4490–4499.

* Zhu et al. (2017) Zhu, L.; Xu, Z.; Yang, Y.; and Hauptmann, A.G. 2017. Uncovering the temporal context for video question answering. _International Journal of Computer Vision_, 124: 409–421.

Appendix
--------

Table 5: Comparison between NuScenes-QA and other VQA datasets.

### Appendix A Dataset Comparison

Table [5](https://arxiv.org/html/2305.14836v2#A0.T5 "Table 5 ‣ Appendix ‣ NuScenes-QA: A Multi-Modal Visual Question Answering Benchmark for Autonomous Driving Scenario") summarizes the differences between our NuScenes-QA and other related 3D VQA datasets. First, to the best of our knowledge, NuScenes-QA is currently the largest 3D VQA dataset, with 34k diverse visual scenes and 460k question-answer pairs, averaging 13.5 pairs per scene. In contrast, other datasets (Das et al. [2018](https://arxiv.org/html/2305.14836v2#bib.bib7); Ye et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib36); Azuma et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib3); Yan et al. [2021](https://arxiv.org/html/2305.14836v2#bib.bib35); Zhao et al. [2022](https://arxiv.org/html/2305.14836v2#bib.bib41); Ma et al. [2023](https://arxiv.org/html/2305.14836v2#bib.bib28)) typically have fewer than 1,000 scenes because 3D data is difficult to acquire. Second, in terms of visual modality, NuScenes-QA is multimodal, comprising images and point clouds; this increases the dataset’s complexity and places higher demands on visual reasoning. In addition, our visual data is multi-frame, so models must mine temporal information. Third, our dataset focuses on outdoor scenes that combine dynamic objects with a static background, which makes questions requiring perception of and reasoning about dynamic objects more challenging.
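
As a quick check on the per-scene average quoted above, the ratio of the two rounded totals can be computed directly. This is a minimal sketch that uses only the rounded figures given in the text (34k scenes, 460k question-answer pairs), not exact dataset counts. The helper name `avg_pairs_per_scene` is hypothetical and used only for illustration.

```python
# Rounded totals as stated in the text (approximations, not exact dataset counts).
NUM_SCENES = 34_000
NUM_QA_PAIRS = 460_000


def avg_pairs_per_scene(num_pairs: int, num_scenes: int) -> float:
    """Hypothetical helper: average number of question-answer pairs per scene."""
    if num_scenes <= 0:
        raise ValueError("num_scenes must be positive")
    return num_pairs / num_scenes


avg = avg_pairs_per_scene(NUM_QA_PAIRS, NUM_SCENES)
print(f"{avg:.1f} QA pairs per scene")  # 460000 / 34000 is about 13.53
```

Rounding the ratio to one decimal place gives the 13.5 pairs per scene reported for NuScenes-QA.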

In summary, compared to other 3D VQA datasets, NuScenes-QA stands out in scale, data modality, and content, making it a valuable resource for advancing research on 3D visual question answering.

### Appendix B Question Templates
