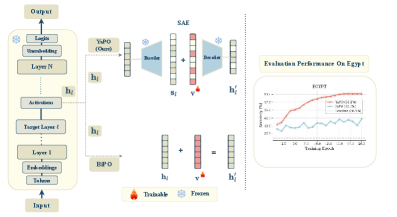

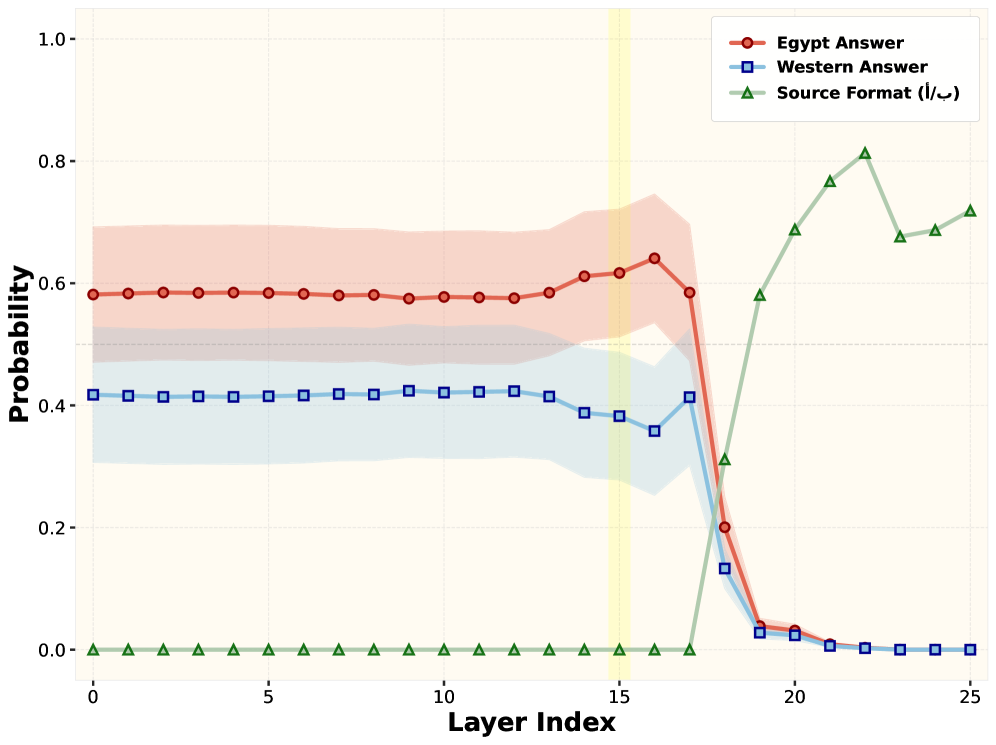

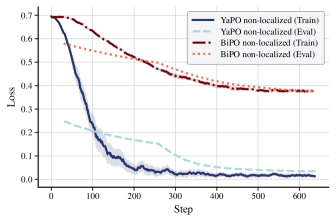

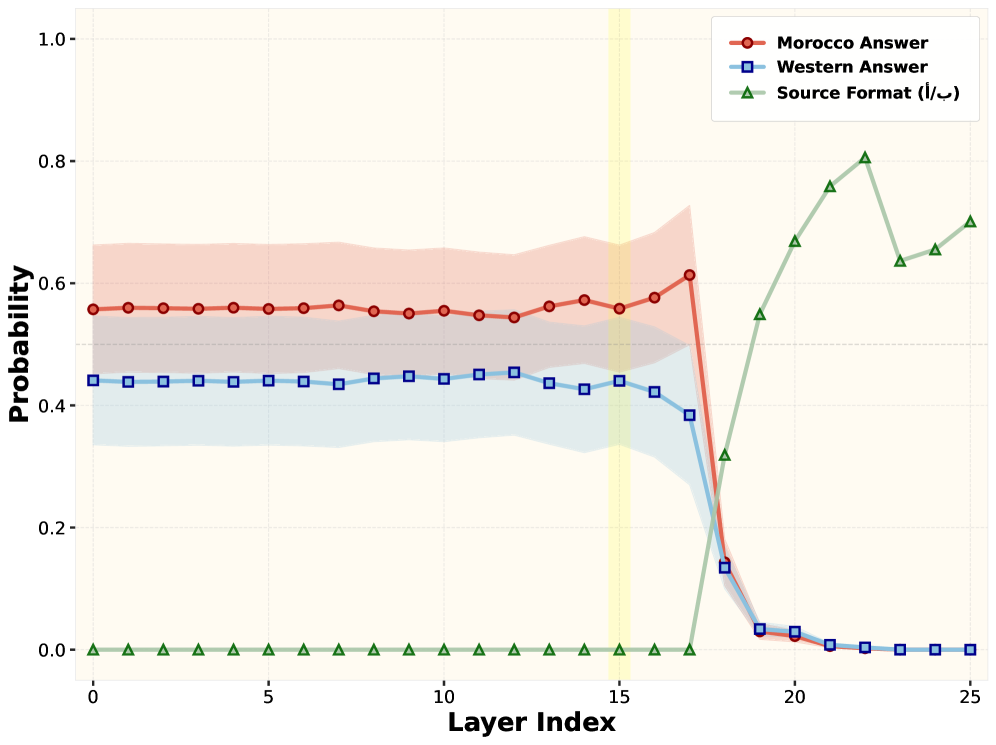

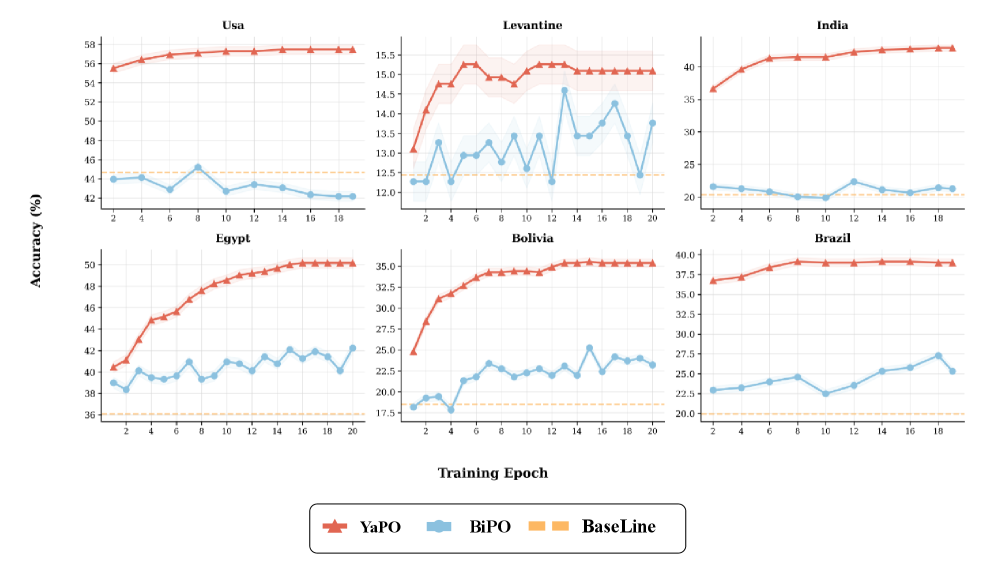

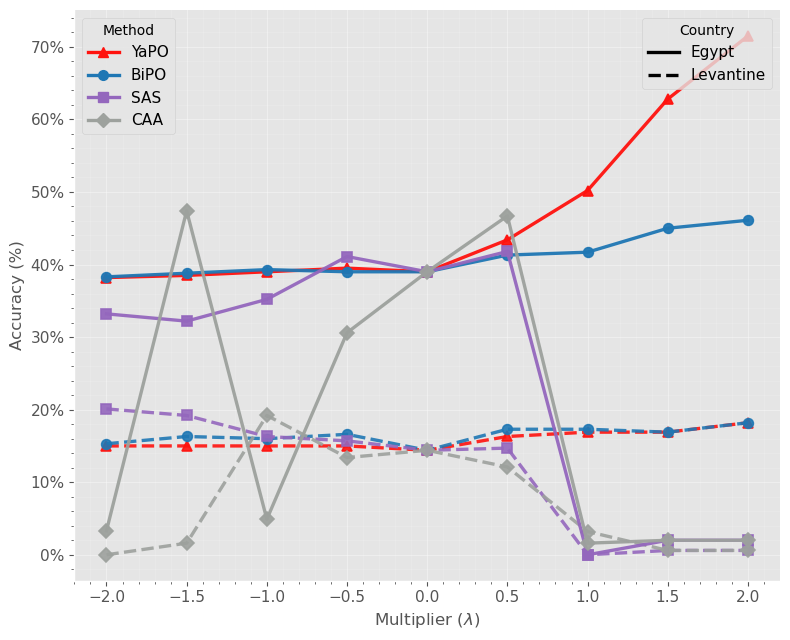

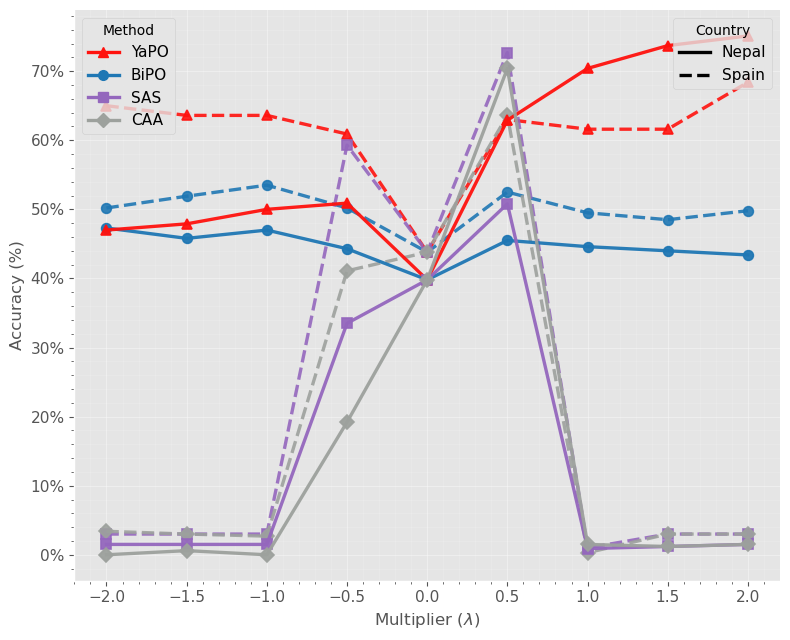

Title: YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation

URL Source: https://arxiv.org/html/2601.08441

Abdelaziz Bounhar<sup>1,∗</sup>, Rania Hossam Elmohamady Elbadry<sup>1</sup>, Hadi Abdine<sup>1</sup>, Preslav Nakov<sup>1</sup>, Michalis Vazirgiannis<sup>1,2</sup>, Guokan Shang<sup>1,∗</sup>

<sup>1</sup>MBZUAI, <sup>2</sup>Ecole Polytechnique

<sup>∗</sup>Correspondence: {abdelaziz.bounhar, guokan.shang}@mbzuai.ac.ae

###### Abstract

Steering Large Language Models (LLMs) through activation interventions has emerged as a lightweight alternative to fine-tuning for alignment and personalization. Recent work on Bi-directional Preference Optimization (BiPO) shows that dense steering vectors can be learned directly from preference data in a Direct Preference Optimization (DPO) fashion, enabling control over truthfulness, hallucinations, and safety behaviors. However, dense steering vectors often entangle multiple latent factors due to neuron multi-semanticity, limiting their effectiveness and stability in fine-grained settings such as cultural alignment, where closely related values and behaviors (e.g., among Middle Eastern cultures) must be distinguished. In this paper, we propose Yet Another Policy Optimization (YaPO), a reference-free method that learns sparse steering vectors in the latent space of a Sparse Autoencoder (SAE). By optimizing sparse codes, YaPO produces disentangled, interpretable, and efficient steering directions. Empirically, we show that YaPO converges faster, achieves stronger performance, and exhibits improved training stability compared to dense steering baselines. Beyond cultural alignment, YaPO generalizes to a range of alignment-related behaviors, including hallucination, wealth-seeking, jailbreak, and power-seeking. Importantly, YaPO preserves general knowledge, with no measurable degradation on MMLU. Overall, our results show that YaPO provides a general recipe for efficient, stable, and fine-grained alignment of LLMs, with broad applications to controllability and domain adaptation. The associated code and data are publicly available at [https://github.com/MBZUAI-Paris/YaPO](https://github.com/MBZUAI-Paris/YaPO).

1 Introduction
--------------

Figure 1: Overview of YaPO. Unlike dense BiPO, which learns entangled steering directions directly in activation space, YaPO leverages a pretrained Sparse Autoencoder (SAE) to project activations into an interpretable sparse space. By optimizing sparse codes, YaPO learns disentangled and robust steering vectors that improve convergence, stability, and cultural alignment, while preserving generalization across domains.

Large language models have achieved remarkable progress in generating coherent, contextually appropriate, and useful text across domains. However, controlling their behavior in a fine-grained and interpretable manner remains a central challenge for alignment and personalization. Traditional approaches such as Reinforcement Learning from Human Feedback (RLHF) (Ziegler et al., [2019](https://arxiv.org/html/2601.08441v1#bib.bib27 "Fine-tuning language models from human preferences")) are effective but costly and often inflexible, and they offer little transparency into how specific behaviors are modulated. Prompt engineering is a lightweight alternative, but it is brittle and usually less effective than fine-tuning. More importantly, RLHF scales poorly: modulating a single behavior may require updating millions of parameters or collecting large amounts of preference data, at the risk of degrading performance on unrelated tasks. These limitations have motivated growing interest in activation steering, a lightweight paradigm that guides model outputs by directly modifying hidden activations at inference time: a steering vector is injected at specific layers, without retraining or altering the original model weights (Turner et al., [2023](https://arxiv.org/html/2601.08441v1#bib.bib21 "Activation addition: steering language models without optimization")).

Early activation steering methods such as Contrastive Activation Addition (CAA) (Panickssery et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib13 "Steering llama 2 via contrastive activation addition")) compute steering vectors by averaging activation differences over contrastive prompts. While simple, this approach captures only coarse behavioral signals and often fails in complex settings. Bi-directional Preference Optimization (BiPO) (Cao et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib11 "Personalized steering of large language models: versatile steering vectors through bi-directional preference optimization")) introduced a DPO-style objective to directly learn dense steering vectors from preference data, enabling improved control over behaviors such as hallucination and refusal.

However, both CAA and BiPO rely on dense steering vectors, which are prone to entangling multiple latent factors due to neuron multi-semanticity and superposition (Elhage et al., [2022](https://arxiv.org/html/2601.08441v1#bib.bib14 "Toy models of superposition")). This limits their stability, interpretability, and effectiveness in fine-grained alignment settings. In parallel, Sparse Activation Steering (SAS) (Bayat et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib15 "Steering large language model activations in sparse spaces")) leverages Sparse Autoencoders (SAEs) to operate on approximately monosemantic features, enabling more interpretable interventions, but relies on static averaged activations rather than learnable sparse vectors.

In this work, we introduce Yet Another Policy Optimization (YaPO), a reference-free method that learns trainable sparse steering vectors directly in the latent space of a pretrained SAE using a BiPO-style objective. YaPO combines the preference optimization of BiPO with the interpretability of SAS, yielding sparse, stable, and effective steering directions with minimal training overhead.

We study cultural adaptation as a representative domain adaptation setting and introduce a new benchmark spanning five language families and fifteen cultural contexts. Consistent with Veselovsky et al. ([2025](https://arxiv.org/html/2601.08441v1#bib.bib16 "Localized cultural knowledge is conserved and controllable in large language models")), our results reveal a substantial implicit–explicit localization gap in baseline models, and they show that YaPO consistently closes this gap through improved fine-grained alignment. We further assess the generalization of YaPO on MMLU and on established alignment benchmarks from prior studies (Cao et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib11 "Personalized steering of large language models: versatile steering vectors through bi-directional preference optimization"); Panickssery et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib13 "Steering llama 2 via contrastive activation addition"); Bayat et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib15 "Steering large language model activations in sparse spaces")).

In summary, our contributions are threefold:

- We propose YaPO, the first reference-free method for learning _sparse steering vectors_ (in the latent space of an SAE) from preference data.
- We curate a new dataset and benchmark for cultural alignment that targets fine-grained cultural distinctions, including same-language cultures with subtle differences in values and norms, spanning five language families and fifteen cultural contexts.
- We empirically show that YaPO converges faster, exhibits improved training stability, and yields more interpretable steering directions than dense baselines, while also generalizing beyond cultural alignment to broader alignment tasks and benchmarks.

$$
\min_{v}\; -\,\mathbb{E}_{d\sim\mathcal{U}\{-1,1\},\;(x,y_w,y_l)\sim\mathcal{D}}\Bigl[\log\sigma\Bigl(d\,\beta\log\tfrac{\pi_{L+1}(y_w\mid A_L(x)+d v)}{\pi_{L+1}(y_w\mid A_L(x))}-d\,\beta\log\tfrac{\pi_{L+1}(y_l\mid A_L(x)+d v)}{\pi_{L+1}(y_l\mid A_L(x))}\Bigr)\Bigr] \tag{1}
$$

2 Related Work
--------------

Alignment and controllability. RLHF (Christiano et al., [2017](https://arxiv.org/html/2601.08441v1#bib.bib26 "Deep reinforcement learning from human preferences"); Ziegler et al., [2019](https://arxiv.org/html/2601.08441v1#bib.bib27 "Fine-tuning language models from human preferences"); Stiennon et al., [2020](https://arxiv.org/html/2601.08441v1#bib.bib28 "Learning to summarize with human feedback"); Ouyang et al., [2022](https://arxiv.org/html/2601.08441v1#bib.bib29 "Training language models to follow instructions with human feedback")) has become the standard approach to align LLMs, training a reward model on human preference data and fine-tuning with PPO (Schulman et al., [2017](https://arxiv.org/html/2601.08441v1#bib.bib30 "Proximal policy optimization algorithms")) under the Bradley–Terry framework (Bradley and Terry, [1952](https://arxiv.org/html/2601.08441v1#bib.bib19 "Rank analysis of incomplete block designs: i. the method of paired comparisons")). Recent methods simplify this pipeline by bypassing explicit reward modeling: DPO (Rafailov et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib31 "Direct preference optimization: your language model is secretly a reward model")) directly optimizes on preference pairs, while SLiC (Zhao et al., [2023](https://arxiv.org/html/2601.08441v1#bib.bib32 "Slic-hf: sequence likelihood calibration with human feedback")) introduces a contrastive calibration loss with regularization toward the SFT model. Statistical rejection sampling (Liu et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib33 "Statistical rejection sampling improves preference optimization")) unifies both objectives and provides a tighter policy estimate.

Activation engineering. Activation-based methods steer LLMs by freezing weights and intervening on hidden activations. Early approaches optimized sentence-specific latent vectors (Subramani et al., [2022](https://arxiv.org/html/2601.08441v1#bib.bib20 "Extracting latent steering vectors from pretrained language models")), while activation addition (Turner et al., [2023](https://arxiv.org/html/2601.08441v1#bib.bib21 "Activation addition: steering language models without optimization")) and CAA (Rimsky et al., [2023](https://arxiv.org/html/2601.08441v1#bib.bib22 "Steering llama 2 via contrastive activation addition")) compute averaged activation differences from contrastive prompts. Although simple, these methods are often noisy and unstable, particularly for long-form or alignment-critical generation (Wang and Shu, [2023](https://arxiv.org/html/2601.08441v1#bib.bib23 "Backdoor activation attack: attack large language models using activation steering for safety-alignment")). More recent work perturbs attention heads (Li et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib24 "Inference-time intervention: eliciting truthful answers from a language model"); Liu et al., [2023](https://arxiv.org/html/2601.08441v1#bib.bib25 "In-context vectors: making in context learning more effective and controllable through latent space steering")). BiPO (Cao et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib11 "Personalized steering of large language models: versatile steering vectors through bi-directional preference optimization")) improves over prior work by framing steering as preference optimization, learning dense steering vectors via a bi-directional DPO-style objective.

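To make the contrastive averaging concrete, here is a minimal NumPy sketch of a CAA-style steering vector: the mean, over contrastive pairs, of the difference between layer-$L$ activations for the positive and the negative completion. It assumes the activations have already been extracted into arrays with one row per pair; `caa_vector` is a hypothetical helper name, not an API from the cited works.

```python
import numpy as np


def caa_vector(pos_acts: np.ndarray, neg_acts: np.ndarray) -> np.ndarray:
    """Mean activation difference over contrastive pairs.

    pos_acts, neg_acts: arrays of shape (num_pairs, d_model); row i holds the
    layer-L activation (e.g. at the answer token) for the positive / negative
    completion of pair i.
    """
    if pos_acts.shape != neg_acts.shape:
        raise ValueError("positive and negative activations must be paired row by row")
    return (pos_acts - neg_acts).mean(axis=0)


# Toy example: two contrastive pairs, 2-dimensional hidden state.
pos = np.array([[1.0, 2.0], [3.0, 4.0]])
neg = np.array([[0.0, 1.0], [1.0, 1.0]])
v_caa = caa_vector(pos, neg)
print(v_caa)
```

No optimization is involved, which is what makes the approach simple; it is also why the vector can only capture the average direction separating the two prompt sets.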

Sparse activation steering. To mitigate superposition, Sparse Autoencoders (SAEs) (Lieberum et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib18 "Gemma scope: open sparse autoencoders everywhere all at once on gemma 2")) decompose activations into sparse, approximately monosemantic features. Sparse Activation Steering (SAS) (Bayat et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib15 "Steering large language model activations in sparse spaces")) exploits this structure by averaging sparse activations from contrastive data, yielding interpretable and fine-grained control. However, SAS does not optimize steering directions against preferences, limiting its effectiveness.

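A simplified sketch of the SAS idea follows. It assumes a ReLU SAE encoder given as plain weight matrices, encodes the contrastive activations, averages the sparse codes on each side, and takes the difference. The optional top-`k` filter is an illustrative assumption added here to keep the vector sparse. It is not taken from Bayat et al. (2025), and the helper names are hypothetical.

```python
import numpy as np


def relu_sae_encode(acts, W_enc, b_enc):
    """Sparse (non-negative) SAE codes for a batch of activations."""
    return np.maximum(acts @ W_enc + b_enc, 0.0)


def sparse_mean_difference(pos_acts, neg_acts, W_enc, b_enc, k=None):
    """Difference of mean SAE codes between positive and negative examples.

    If k is given, keep only the k entries with the largest magnitude
    (an illustrative sparsification assumed for this sketch).
    """
    delta = (relu_sae_encode(pos_acts, W_enc, b_enc).mean(axis=0)
             - relu_sae_encode(neg_acts, W_enc, b_enc).mean(axis=0))
    if k is not None:
        keep = np.argsort(-np.abs(delta))[:k]
        sparse = np.zeros_like(delta)
        sparse[keep] = delta[keep]
        delta = sparse
    return delta


# Toy example: an identity encoder, so the codes are just the ReLU of the activations.
W_enc, b_enc = np.eye(3), np.zeros(3)
pos = np.array([[1.0, 0.0, 2.0], [3.0, 0.0, 0.0]])
neg = np.array([[0.0, 1.0, 0.0], [0.0, -1.0, 0.0]])
full = sparse_mean_difference(pos, neg, W_enc, b_enc)
top2 = sparse_mean_difference(pos, neg, W_enc, b_enc, k=2)
```

The result is fixed once it is computed. Nothing in it is fitted against preference data, which is the limitation the paragraph above points out.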

SAE-based steering and editing. Recent work combines activation steering with sparse or structured representation bases (Wu et al., [2025a](https://arxiv.org/html/2601.08441v1#bib.bib43 "AxBench: steering llms? even simple baselines outperform sparse autoencoders"), [b](https://arxiv.org/html/2601.08441v1#bib.bib42 "Improved representation steering for language models"); Chalnev et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib39 "Improving steering vectors by targeting sparse autoencoder features"); He et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib40 "SAE-ssv: supervised steering in sparse representation spaces for reliable control of language models"); Sun et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib38 "HyperSteer: activation steering at scale with hypernetworks"); Xu et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib41 "EasyEdit2: an easy-to-use steering framework for editing large language models")). ReFT-r1 (Wu et al., [2025a](https://arxiv.org/html/2601.08441v1#bib.bib43 "AxBench: steering llms? even simple baselines outperform sparse autoencoders")) learns a single dense steering direction on frozen models using a language-modeling objective with sparsity constraints. RePS (Wu et al., [2025b](https://arxiv.org/html/2601.08441v1#bib.bib42 "Improved representation steering for language models")) introduces a reference-free, bi-directional preference objective to train intervention-based steering methods. 
Other approaches operate directly in SAE space: SAE-TS and SAE-SSV (Chalnev et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib39 "Improving steering vectors by targeting sparse autoencoder features"); He et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib40 "SAE-ssv: supervised steering in sparse representation spaces for reliable control of language models")) optimize or select sparse SAE features for controlled steering, while HyperSteer (Sun et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib38 "HyperSteer: activation steering at scale with hypernetworks")) generates steering vectors on demand via a hypernetwork.

Positioning of YaPO. BiPO provides strong optimization but suffers from dense entanglement; SAS offers interpretability but lacks optimization. YaPO unifies these lines by learning preference-optimized, sparse steering vectors in SAE space. This yields disentangled, interpretable, and stable steering, with improved convergence and generalization across cultural alignment, truthfulness, hallucination suppression, and jailbreak defense.

3 Method
--------

### 3.1 Motivation: From Dense to Sparse Steering

Existing approaches extract steering vectors by directly operating in the dense activation space of LLMs (Rimsky et al., [2023](https://arxiv.org/html/2601.08441v1#bib.bib22 "Steering llama 2 via contrastive activation addition"); Wang and Shu, [2023](https://arxiv.org/html/2601.08441v1#bib.bib23 "Backdoor activation attack: attack large language models using activation steering for safety-alignment")). While effective in some cases, these methods inherit the multi-semantic entanglement of neurons: individual dense features often conflate multiple latent factors (Elhage et al., [2022](https://arxiv.org/html/2601.08441v1#bib.bib14 "Toy models of superposition")), leading to noisy and unstable control signals. As a result, vectors obtained from contrastive prompt pairs can misalign with actual generation behaviors, especially in alignment-critical tasks.

To address this, we leverage SAEs, which have recently been shown to disentangle latent concepts in LLM activations into sparse, interpretable features (Bayat et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib15 "Steering large language model activations in sparse spaces"); Lieberum et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib18 "Gemma scope: open sparse autoencoders everywhere all at once on gemma 2")). By mapping activations into this sparse basis, steering vectors can be optimized along dimensions that correspond more cleanly to relevant semantic factors, improving both precision and interpretability.

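For readers new to SAEs, the following NumPy sketch shows the encode/decode round trip this section relies on. It assumes a JumpReLU encoder, the architecture used by the Gemma Scope SAEs, in which a feature fires only when its pre-activation exceeds a learned per-feature threshold. The tiny hand-written weights are hypothetical and were chosen so the numbers are easy to follow. Note that the reconstruction is not exact, so a residual `x - recon` remains.

```python
import numpy as np


class JumpReLUSAE:
    """Toy JumpReLU sparse autoencoder with d_sae > d_model (overcomplete basis)."""

    def __init__(self, W_enc, b_enc, W_dec, b_dec, threshold):
        self.W_enc, self.b_enc = np.asarray(W_enc, float), np.asarray(b_enc, float)
        self.W_dec, self.b_dec = np.asarray(W_dec, float), np.asarray(b_dec, float)
        self.threshold = np.asarray(threshold, float)

    def encode(self, x):
        pre = x @ self.W_enc + self.b_enc
        # A feature is kept only if it clears its threshold, so codes are non-negative.
        return (pre > self.threshold) * np.maximum(pre, 0.0)

    def decode(self, codes):
        return codes @ self.W_dec + self.b_dec


sae = JumpReLUSAE(
    W_enc=[[1.0, 0.0, 1.0], [0.0, 1.0, -1.0]],  # d_model = 2 -> d_sae = 3
    b_enc=[0.0, 0.0, 0.0],
    W_dec=[[1.0, 0.0], [0.0, 1.0], [0.5, -0.5]],
    b_dec=[0.0, 0.0],
    threshold=[0.5, 0.5, 0.5],
)
x = np.array([1.0, 0.2])
codes = sae.encode(x)      # the second feature (pre-activation 0.2) is below its threshold
recon = sae.decode(codes)
residual = x - recon       # SAE reconstruction error
```

Only two of the three features are active for this input, and adding `residual` back to `recon` recovers `x` exactly.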

### 3.2 Preference-Optimized Steering in Sparse Space

Let $A_L(x)$ denote the hidden activations of the transformer at layer $L$ for input $x$, and let $\pi_{L+1}$ denote the upper part of the transformer (from layer $L+1$ to the output). BiPO (Cao et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib11 "Personalized steering of large language models: versatile steering vectors through bi-directional preference optimization")) learns a steering vector $v\in\mathbb{R}^{k_d}$ in the dense activation space of dimension $k_d$ using the bi-directional preference optimization objective in equation [1](https://arxiv.org/html/2601.08441v1#S1.E1 "In 1 Introduction ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation"). Here, $y_w$ and $y_l$ are the preferred and dispreferred responses, drawn jointly with the prompt $x$ from the preference dataset $\mathcal{D}$; $\sigma$ is the logistic function; $\beta\geq 0$ is a deviation control parameter; and $d\in\{-1,1\}$ is a uniformly random coefficient that enforces bi-directionality. At inference time, the learned steering vector $v$ is added to the hidden state to perturb the model toward the desired behavior:

$$
A_L(x) \leftarrow A_L(x) + d\cdot\lambda\cdot v, \qquad d\in\{-1,1\} \tag{2}
$$

where $d$ is fixed to either $-1$ or $1$ (negative or positive steering) and $\lambda$ is a multiplicative factor that controls the steering strength.

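At the array level, equation (2) is a broadcast addition. The NumPy sketch below assumes layer-$L$ activations of shape `(seq_len, d_model)` and applies the same vector at every token position. In a real model this would typically run inside a forward hook on layer $L$, which is not shown here. `steer_dense` is a hypothetical name.

```python
import numpy as np


def steer_dense(h: np.ndarray, v: np.ndarray, lam: float, d: int) -> np.ndarray:
    """Equation (2): shift layer-L activations by d * lam * v.

    h: (seq_len, d_model) activations; v: (d_model,) steering vector,
    broadcast over all token positions (an assumption of this sketch).
    """
    if d not in (-1, 1):
        raise ValueError("d must be -1 (negative steering) or +1 (positive steering)")
    return h + d * lam * v


h = np.zeros((3, 4))                   # 3 token positions, k_d = 4
v = np.array([1.0, 0.0, -2.0, 0.5])
pos = steer_dense(h, v, lam=2.0, d=1)   # positive steering
neg = steer_dense(h, v, lam=2.0, d=-1)  # negative steering
```

The function returns a new array rather than updating `h` in place. This keeps the unsteered activations available, and those are what the denominators in equation (1) are computed from.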

In contrast, YaPO introduces a sparse transformation function $\Phi$ that steers activations through an SAE:

$$
\Phi(A_L(x),\lambda,d,v) = \underbrace{\text{Dec}\!\left(\mathrm{ReLU}\!\left(\text{Enc}(A_L(x)) + d\cdot\lambda\cdot v\right)\right)}_{\text{steered reconstruction}} + \underbrace{\Big(A_L(x) - \text{Dec}(\text{Enc}(A_L(x)))\Big)}_{\text{residual correction}} \tag{3}
$$

where Enc and Dec are the encoder and decoder of a pretrained SAE, and $v\in\mathbb{R}^{k_s}$ is the learnable steering vector in the sparse space of dimension $k_s\gg k_d$. To correct for SAE reconstruction error, we add a residual correction term that keeps the output consistent with the original hidden state (see equation [3](https://arxiv.org/html/2601.08441v1#S3.Ex1 "3.2 Preference-Optimized Steering in Sparse Space ‣ 3 Method ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation")). In particular, for an SAE whose codes are non-negative, $\Phi(A_L(x),\lambda,d,\mathbf{0}) = A_L(x)$, so an untrained vector leaves the model unchanged. The rationale for applying the ReLU is to enforce non-negativity of the sparse codes (Bayat et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib15 "Steering large language model activations in sparse spaces")). We train steering vectors to increase the likelihood of preferred responses $y_w$ while decreasing that of dispreferred responses $y_l$. The resulting optimization objective is given in equation [4](https://arxiv.org/html/2601.08441v1#S3.E4 "In 3.2 Preference-Optimized Steering in Sparse Space ‣ 3 Method ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation").

$$
\min_{v}\; -\,\mathbb{E}_{d\sim\mathcal{U}\{-1,1\},\;(x,y_w,y_l)\sim\mathcal{D}}\Bigl[\log\sigma\Bigl(d\,\beta\log\tfrac{\pi_{L+1}(y_w\mid\Phi(A_L(x),\lambda,d,v))}{\pi_{L+1}(y_w\mid A_L(x))}-d\,\beta\log\tfrac{\pi_{L+1}(y_l\mid\Phi(A_L(x),\lambda,d,v))}{\pi_{L+1}(y_l\mid A_L(x))}\Bigr)\Bigr] \tag{4}
$$

With $d=1$, the objective increases the relative probability of $y_w$ over $y_l$; with $d=-1$, it enforces the reverse. This symmetric training sharpens the vector’s alignment with the behavioral axis of interest, so a single vector supports both positive and negative steering.

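To see how $d$ flips the objective, here is a small, dependency-free sketch of the per-example quantity to minimize, $-\log\sigma(\cdot)$ of the bracketed term in equations (1) and (4). It assumes the four log-probabilities (steered and unsteered, for $y_w$ and $y_l$) have already been computed by the model. The function names are hypothetical.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def steering_pref_loss(d: int, beta: float,
                       lp_w_steered: float, lp_w_base: float,
                       lp_l_steered: float, lp_l_base: float) -> float:
    """-log sigma(d * beta * [log-ratio of y_w - log-ratio of y_l]); smaller is better."""
    margin = d * beta * ((lp_w_steered - lp_w_base) - (lp_l_steered - lp_l_base))
    return -log_sigmoid(margin)


# Toy numbers: steering raised log p(y_w) by 0.5 and lowered log p(y_l) by 0.5.
loss_pos = steering_pref_loss(+1, 1.0, -1.5, -2.0, -3.5, -3.0)
loss_neg = steering_pref_loss(-1, 1.0, -1.5, -2.0, -3.5, -3.0)
loss_zero = steering_pref_loss(+1, 1.0, -2.0, -2.0, -3.0, -3.0)  # no effect: log 2
```

The same shift in log-probabilities is rewarded under $d=+1$ and penalized under $d=-1$. During training, the $d=-1$ log-probabilities come from a forward pass with the negated vector, so in practice the two cases are not scored on identical numbers.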

During optimization, the SAE parameters are detached from the computation graph and, like the LLM parameters, remain frozen; only $v$ is updated, with gradients flowing to it through the frozen decoder. This lets $v$ live in a disentangled basis, while the decoder projects it back into the model’s hidden space. Algorithm [1](https://arxiv.org/html/2601.08441v1#alg1 "Algorithm 1 ‣ 4.1 Experimental Setup ‣ 4 Experiments ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") summarizes the overall optimization procedure.

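The NumPy toy below puts the pieces together. It implements $\Phi$ from equation (3) with a frozen SAE, scores responses with a frozen linear readout standing in for $\pi_{L+1}$, and updates only $v$, starting from zero as in Algorithm 1. Everything here is an illustrative assumption rather than the authors' implementation:

- The sizes and random weights are arbitrary.
- The SAE uses a plain ReLU encoder, whereas Gemma Scope SAEs use JumpReLU.
- Responses are single tokens.
- The expectation over $d$ is computed exactly instead of sampled.
- Gradients are derived by hand, which is easy because the readout is linear.
- Plain gradient descent stands in for AdamW.

```python
import numpy as np

rng = np.random.default_rng(0)
D_MODEL, D_SAE, VOCAB, N = 8, 32, 5, 16  # toy sizes (arbitrary)

# Frozen toy SAE (ReLU encoder) and frozen linear readout standing in for pi_{L+1}.
W_enc = rng.normal(size=(D_MODEL, D_SAE)) / np.sqrt(D_MODEL)
b_enc = np.zeros(D_SAE)
W_dec = rng.normal(size=(D_SAE, D_MODEL)) / np.sqrt(D_SAE)
b_dec = np.zeros(D_MODEL)
W_out = rng.normal(size=(D_MODEL, VOCAB))


def enc(h):
    return np.maximum(h @ W_enc + b_enc, 0.0)


def dec(s):
    return s @ W_dec + b_dec


def phi(h, lam, d, v):
    """Equation (3); also returns the pre-ReLU steered codes z for the gradient."""
    z = enc(h) + d * lam * v
    return dec(np.maximum(z, 0.0)) + (h - dec(enc(h))), z


def log_probs(h):
    logits = h @ W_out
    logits = logits - logits.max(axis=-1, keepdims=True)
    return logits - np.log(np.exp(logits).sum(axis=-1, keepdims=True))


def loss_and_grad(v, H, yw, yl, lam=1.0, beta=1.0):
    """Loss averaged over examples and over d in {-1, +1}; gradient w.r.t. v only."""
    idx = np.arange(len(H))
    base = log_probs(H)                       # unsteered pi_{L+1}(. | A_L(x))
    g = W_out[:, yw] - W_out[:, yl]           # d/dh [log p(y_w|h) - log p(y_l|h)]
    loss, grad = 0.0, np.zeros_like(v)
    for d in (-1.0, 1.0):
        h_new, z = phi(H, lam, d, v)
        lp = log_probs(h_new)
        margin = d * beta * ((lp[idx, yw] - base[idx, yw]) - (lp[idx, yl] - base[idx, yl]))
        loss += 0.5 * np.mean(np.logaddexp(0.0, -margin))     # -log sigmoid(margin)
        dloss_dmargin = -np.exp(-np.logaddexp(0.0, margin))  # = -sigmoid(-margin)
        # d margin / d v = (d*beta) * (d*lam) * 1[z > 0] * (W_dec @ g), and d*d = 1.
        dmargin_dv = beta * lam * (z > 0) * (W_dec @ g).T
        grad += 0.5 * np.mean(dloss_dmargin[:, None] * dmargin_dv, axis=0)
    return loss, grad


def train(H, yw, yl, lr=0.5, steps=200):
    v = np.zeros(D_SAE)                       # v_0 = 0, so Phi(h) = h at the start
    history = []
    for _ in range(steps):
        loss, grad = loss_and_grad(v, H, yw, yl)
        history.append(loss)
        v = v - lr * grad                     # plain gradient descent instead of AdamW
    return v, history


H = rng.normal(size=(N, D_MODEL))              # toy layer-L activations A_L(x)
yw = rng.integers(0, VOCAB, size=N)            # preferred single-token responses
yl = (yw + 1) % VOCAB                          # dispreferred responses (always different)
v_star, history = train(H, yw, yl)
print(f"loss: {history[0]:.4f} -> {history[-1]:.4f}")
```

When $v=0$, the steered and unsteered models coincide, so the loss starts at exactly $\log 2$. The first and last values printed therefore give a quick check that the updates to $v$ move in the right direction.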

4 Experiments
-------------

### 4.1 Experimental Setup

Target LLM. For clarity, the main paper presents all experiments on Gemma-2-2B (Team et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib17 "Gemma 2: improving open language models at a practical size")), a lightweight yet capable model; scalability to the larger Gemma-2-9B is deferred to Appendix [D](https://arxiv.org/html/2601.08441v1#A4 "Appendix D Scalability to other Models ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation"). This choice is further motivated by the availability of pretrained SAEs from Gemma Scope (Lieberum et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib18 "Gemma scope: open sparse autoencoders everywhere all at once on gemma 2")), which are trained directly on Gemma-2 hidden activations and enable sparse steering without the overhead of training SAEs from scratch.

Tasks. For readability, we focus on cultural adaptation, followed by a generalization study on other standard alignment tasks from previous work (Cao et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib11 "Personalized steering of large language models: versatile steering vectors through bi-directional preference optimization"); Panickssery et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib13 "Steering llama 2 via contrastive activation addition"); Bayat et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib15 "Steering large language model activations in sparse spaces")). For cultural adaptation, we select the steering layer via activation patching (see Appendix [A](https://arxiv.org/html/2601.08441v1#A1 "Appendix A Layer Discovery ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation")); empirically, layer 15 yields the best performance with Gemma-2-2B. Training details and hyperparameter settings are reported in Appendix [B](https://arxiv.org/html/2601.08441v1#A2 "Appendix B Training Details ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation").

Algorithm 1 YaPO: Yet another Policy Optimization

1. Input: LLM $\pi$, preference dataset $\mathcal{D}$, batch size $B$, layer $A_{L}$, SAE encoder Enc, decoder Dec, learning rate $\eta$, temperature $\beta$, epochs $N$
2. Output: optimized steering vector $v^{\ast}$
3. Initialize $v_{0}\leftarrow\mathbf{0}\in\mathbb{R}^{k_{s}}$
4. for $e=0$ to $N-1$ do
5. Sample minibatch $\mathcal{D}_{e}\sim\mathcal{D}$ of size $B$
6. Sample direction $d\sim\mathcal{U}\lbrace -1,1\rbrace$
7. for each $(x^{i},y_{w}^{i},y_{l}^{i})\in\mathcal{D}_{e}$ do
8. $h^{i}\leftarrow A_{L}(x^{i})$
9. $s^{i}\leftarrow\text{Enc}(h^{i})$
10. $\tilde{s}^{i}\leftarrow\mathrm{ReLU}(s^{i}+d\,v_{e})$
11. $\tilde{h}^{i}\leftarrow\text{Dec}(\tilde{s}^{i})$
12. $\hat{h}^{i}\leftarrow\text{Dec}(\text{Enc}(h^{i}))$
13. $h^{\prime\,i}\leftarrow\tilde{h}^{i}+(h^{i}-\hat{h}^{i})$
14. end for
15. Compute loss $\mathcal{L}$ as per Eq. [4](https://arxiv.org/html/2601.08441v1#S3.E4 "In 3.2 Preference-Optimized Steering in Sparse Space ‣ 3 Method ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation")
16. $v_{e+1}\leftarrow\text{AdamW}(v_{e},\nabla_{v_{e}}\mathcal{L},\eta)$
17. end for
18. return $v^{\ast}\leftarrow v_{N}$
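
The sketch below makes steps 8–13 concrete. It is a minimal, dependency-free version of the steered forward pass for a single activation vector, built on several assumptions. It uses a plain ReLU SAE with small hypothetical weights, whereas the Gemma-Scope SAEs use a JumpReLU encoder. It also takes $h$ as given rather than reading it from layer $L$ of the LLM. `ToySAE` and `steer` are illustrative names only. The loss of Eq. 4 and the AdamW update (steps 15–16) need automatic differentiation, so they are omitted.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, x: Vector) -> Vector:
    """Dense matrix-vector product on plain lists."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def relu(x: Vector) -> Vector:
    return [max(0.0, xi) for xi in x]


class ToySAE:
    """Plain ReLU SAE: Enc(h) = ReLU(W_enc h + b_enc), Dec(s) = W_dec s + b_dec.

    Hypothetical stand-in for a pretrained SAE (Gemma Scope itself uses JumpReLU).
    """

    def __init__(self, w_enc: Matrix, b_enc: Vector, w_dec: Matrix, b_dec: Vector):
        self.w_enc, self.b_enc = w_enc, b_enc
        self.w_dec, self.b_dec = w_dec, b_dec

    def enc(self, h: Vector) -> Vector:
        return relu([z + b for z, b in zip(matvec(self.w_enc, h), self.b_enc)])

    def dec(self, s: Vector) -> Vector:
        return [z + b for z, b in zip(matvec(self.w_dec, s), self.b_dec)]


def steer(h: Vector, v: Vector, d: int, sae: ToySAE) -> Vector:
    """Steps 9-13 of Algorithm 1 for one activation h = A_L(x)."""
    s = sae.enc(h)                                         # step 9
    s_tilde = relu([si + d * vi for si, vi in zip(s, v)])  # step 10: steer in SAE space
    h_tilde = sae.dec(s_tilde)                             # step 11
    h_hat = sae.dec(s)                                     # step 12: Dec(Enc(h))
    # Step 13: add back the SAE reconstruction error (h - h_hat), so only the
    # change in latents, not the SAE's approximation error, alters h.
    return [ht + (hi - hh) for ht, hi, hh in zip(h_tilde, h, h_hat)]


# Toy example: d_model = 2, k_s = 3 latents (all numbers are made up).
sae = ToySAE(
    w_enc=[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], b_enc=[0.0, 0.0, -0.5],
    w_dec=[[1.0, 0.0, 0.5], [0.0, 1.0, 0.5]], b_dec=[0.0, 0.0],
)
h = [0.3, -0.2]
v = [0.0, 0.0, 1.0]                 # sparse vector: only latent 2 is steered
print(steer(h, [0.0] * 3, 1, sae))  # v = 0 leaves h unchanged
print(steer(h, v, +1, sae))         # adds 1.0 x the decoder column of latent 2
print(steer(h, v, -1, sae))         # ReLU clamps the inactive latent at 0: h unchanged
```

The reconstruction-error term in step 13 keeps the intervention minimal: with $v=0$ the output equals $h$ up to floating point. The last call shows a second effect of the ReLU in step 10: a negative direction $d=-1$ cannot push an already-inactive latent below zero.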


Dataset. We introduce a new cultural alignment dataset that we curate from scratch, with dedicated _training_ and _evaluation_ splits, to probe fine-grained cultural localization _within the same language_. Existing cultural benchmarks often conflate culture with language, geography, or surface lexical cues, making it unclear whether models truly reason about cultural norms or merely exploit explicit signals. Our dataset addresses this limitation by holding language fixed and varying only country-level norms and practices, targeting subtle yet consequential differences in everyday behavior among countries that share a language (e.g., Moroccan vs. Egyptian Arabic, US vs. UK English).


Crucially, every question appears in two forms. The first is a _localized_ version that explicitly names the country (e.g., “I am from Morocco, …”). The second is a _non-localized_ version that omits the country, so the model must infer the cultural context from dialectal and situational cues in the prompt. Following Veselovsky et al. ([2025](https://arxiv.org/html/2601.08441v1#bib.bib16 "Localized cultural knowledge is conserved and controllable in large language models")), this paired construction enables principled measurement of the _implicit–explicit localization gap_: the performance drop when explicit country information is removed.
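
The paired construction can be pictured as one record that holds both prompt forms. This minimal illustration rests on our own assumptions. The `PairedItem` schema, the `make_pair` helper and the English template are hypothetical and do not describe the released dataset format. In the real data, the non-localized prompt carries dialectal cues rather than being a bare copy of the question.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairedItem:
    """One question in both forms (hypothetical schema)."""
    language: str       # shared language, e.g. "English"
    country: str        # target country, e.g. "UK"
    localized: str      # prompt that names the country explicitly
    non_localized: str  # same question without the country mention
    answer: str         # culturally correct answer y*


def make_pair(language: str, country: str, question: str, answer: str,
              template: str = "I am from {country}, {question}") -> PairedItem:
    """Build both prompt forms from a country-neutral question (illustrative only)."""
    localized = template.format(country=country, question=question)
    return PairedItem(language, country, localized, question, answer)


item = make_pair("English", "UK", "what is a typical breakfast?", "<gold answer>")
print(item.localized)      # explicit: the country is named
print(item.non_localized)  # implicit: the model must infer the country
```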


To ensure consistent multi-country coverage at scale, responses were generated with Gemini and then filtered and curated. Full details on the dataset curation process and statistics are deferred to Appendix [F](https://arxiv.org/html/2601.08441v1#A6 "Appendix F Dataset ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation").


###### Definition 1 (Performance–Normalized Localization Gap (PNLG)).


Let $x_{\mathrm{loc}}$ and $x_{\mathrm{nonloc}}$ be a localized prompt and its corresponding non-localized prompt, and let $y^{\ast}$ be the culturally correct answer. For a model $\pi$, define the per-instance correctness scores

$$p_{\mathrm{loc}}=S_{\pi}(x_{\mathrm{loc}},y^{\ast}),\qquad p_{\mathrm{non}}=S_{\pi}(x_{\mathrm{nonloc}},y^{\ast}),$$

where $S_{\pi}(x,y^{\ast})\geq 0$ indicates whether the model output matches the correct answer. In the multiple-choice setting, $S_{\pi}$ is the accuracy: it equals $1$ if the predicted option equals $y^{\ast}$, and $0$ otherwise. In the open-ended generation setting, $S_{\pi}$ is a score assigned by an external LLM judge.

Let $\bar{p}=\tfrac{1}{2}(p_{\mathrm{loc}}+p_{\mathrm{non}})$. The _performance-normalized localization gap_ is

$$\mathrm{PNLG}_{\alpha}(\pi)=\mathbb{E}_{(x_{\mathrm{loc}},x_{\mathrm{nonloc}},y^{\ast})\sim\mathcal{D}}\left[\frac{p_{\mathrm{loc}}-p_{\mathrm{non}}}{\bar{p}^{\,\alpha}+\varepsilon}\right],\tag{5}$$

with $\varepsilon>0$ arbitrarily small for numerical stability and $\alpha\in[0,1]$ controlling the strength of the normalization.
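
Eq. (5) can be computed directly from per-instance scores. This is a minimal sketch under a few assumptions. The scores arrive as two aligned lists, binary for MCQ or judge scores for open-ended generation. $\varepsilon = 10^{-8}$ is an illustrative choice, and the name `pnlg` is ours.

```python
from typing import Sequence


def pnlg(p_loc: Sequence[float], p_non: Sequence[float],
         alpha: float = 1.0, eps: float = 1e-8) -> float:
    """Empirical PNLG_alpha (Eq. 5): mean of (p_loc - p_non) / (p_bar**alpha + eps)."""
    if len(p_loc) != len(p_non) or len(p_loc) == 0:
        raise ValueError("expected two non-empty score lists of equal length")
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    total = 0.0
    for loc, non in zip(p_loc, p_non):
        p_bar = 0.5 * (loc + non)  # per-instance mean performance
        total += (loc - non) / (p_bar ** alpha + eps)
    return total / len(p_loc)


# Binary MCQ scores for four paired instances (illustrative values).
loc_scores = [1, 1, 0, 1]
non_scores = [1, 0, 0, 1]
print(pnlg(loc_scores, non_scores, alpha=1.0))
print(pnlg(loc_scores, non_scores, alpha=0.0))  # alpha = 0 gives the raw mean gap
```

With binary MCQ scores, an instance contributes $0$ when both prompt forms agree. When exactly one form is answered correctly, it contributes $\pm 1/(0.5^{\alpha}+\varepsilon)$. The first call above therefore returns approximately $0.5$, and the second returns approximately $0.25$.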


###### Definition 2 (Robust Cultural Accuracy (RCA)).


Using the same notation, the _robust cultural accuracy_ is the harmonic mean of localized and non-localized accuracies:

$$\mathrm{RCA}(\pi)=\mathbb{E}_{(x_{\mathrm{loc}},x_{\mathrm{nonloc}},y^{\ast})\sim\mathcal{D}}\left[\frac{2\,p_{\mathrm{loc}}\,p_{\mathrm{non}}}{p_{\mathrm{loc}}+p_{\mathrm{non}}+\varepsilon}\right],\tag{6}$$

with $\varepsilon>0$ arbitrarily small for numerical stability.
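
Eq. (6) can be computed from aligned per-instance scores. This minimal sketch assumes two aligned score lists and an illustrative $\varepsilon = 10^{-8}$. The name `rca` is ours.

```python
from typing import Sequence


def rca(p_loc: Sequence[float], p_non: Sequence[float], eps: float = 1e-8) -> float:
    """Empirical RCA (Eq. 6): mean per-instance harmonic mean of p_loc and p_non."""
    if len(p_loc) != len(p_non) or len(p_loc) == 0:
        raise ValueError("expected two non-empty score lists of equal length")
    return sum(2.0 * loc * non / (loc + non + eps)
               for loc, non in zip(p_loc, p_non)) / len(p_loc)


print(rca([1, 1, 0, 1], [1, 0, 0, 1]))  # only pairs correct in both forms contribute
```

With binary MCQ scores, the per-instance harmonic mean is $1$ only when both forms are answered correctly, and $0$ otherwise. RCA then reduces to the fraction of pairs solved in both forms, which is approximately $0.5$ in the example above.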


Design choice of metrics. A raw localization gap $p_{\mathrm{loc}}-p_{\mathrm{non}}$ can be misleading: a weak model may display a small gap simply because both accuracies are near zero. PNLG corrects for this by dividing the gap by the mean performance $\bar{p}$ (raised to the power $\alpha$). A small absolute gap obtained at trivially low accuracy is then no longer mistaken for robustness. RCA complements this by rewarding methods that are both accurate and balanced across localized and non-localized prompts. Together, PNLG and RCA give a more faithful evaluation of cultural alignment than the raw gap alone.
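
A worked example makes the point concrete. It uses hypothetical open-ended judge scores (0–10 scale) for a single paired instance. The numbers are made up, $\alpha = 1$, and $\varepsilon$ is ignored.

$$
\begin{array}{lcccc}
 & (p_{\mathrm{loc}},\,p_{\mathrm{non}}) & p_{\mathrm{loc}}-p_{\mathrm{non}} & \mathrm{PNLG}_{1}=\dfrac{p_{\mathrm{loc}}-p_{\mathrm{non}}}{\bar{p}} & \mathrm{RCA}=\dfrac{2\,p_{\mathrm{loc}}\,p_{\mathrm{non}}}{p_{\mathrm{loc}}+p_{\mathrm{non}}}\\[6pt]
\text{weak model} & (0.4,\ 0.2) & 0.2 & 0.2/0.3\approx 0.67 & 0.16/0.6\approx 0.27\\[4pt]
\text{strong model} & (8,\ 7) & 1.0 & 1.0/7.5\approx 0.13 & 112/15\approx 7.47
\end{array}
$$

The raw gap favors the weak model ($0.2 < 1.0$). PNLG instead ranks the strong model as better balanced ($0.13 < 0.67$), and RCA separates the two models clearly.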


Baselines. We benchmark YaPO against four baselines:

- No steering: the original Gemma-2-2B model without any intervention.
- CAA (Panickssery et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib13 "Steering llama 2 via contrastive activation addition")): derives dense steering vectors by averaging contrastive activation differences, without preference optimization.
- SAS (Bayat et al., [2025](https://arxiv.org/html/2601.08441v1#bib.bib15 "Steering large language model activations in sparse spaces")): derives sparse steering vectors by averaging SAE-encoded activations in the style of CAA, without preference optimization.
- BiPO (Cao et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib11 "Personalized steering of large language models: versatile steering vectors through bi-directional preference optimization")): optimizes dense steering vectors directly in the residual stream via bi-directional preference optimization.
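
The two averaging baselines differ mainly in the space where the average is taken. The sketch below is a simplified illustration under our own assumptions. It computes a mean-difference vector from positive and negative examples: dense residual activations give a CAA-style vector, and SAE codes give an SAS-style vector. It can also zero small entries with a threshold `tau`. The exact recipes of Panickssery et al. and Bayat et al. may differ, for example in which token positions are averaged and how sparsification is done. BiPO and YaPO instead learn their vectors by optimizing a preference loss.

```python
from typing import List, Sequence

Vector = List[float]


def mean_vector(rows: Sequence[Vector]) -> Vector:
    """Coordinate-wise mean of equally sized vectors."""
    if not rows:
        raise ValueError("need at least one vector")
    return [sum(col) / len(rows) for col in zip(*rows)]


def mean_difference(pos: Sequence[Vector], neg: Sequence[Vector],
                    tau: float = 0.0) -> Vector:
    """mean(pos) - mean(neg); entries with |value| <= tau are zeroed (assumed sparsification)."""
    diff = [p - q for p, q in zip(mean_vector(pos), mean_vector(neg))]
    return [x if abs(x) > tau else 0.0 for x in diff]


# Toy activations (or SAE codes) for behavior-matching vs. non-matching examples.
pos = [[1.0, 0.0, 2.0], [3.0, 0.0, 2.0]]
neg = [[1.0, 0.0, 1.0], [1.0, 0.0, 2.0]]
print(mean_difference(pos, neg))           # → [1.0, 0.0, 0.5]
print(mean_difference(pos, neg, tau=0.6))  # → [1.0, 0.0, 0.0]
```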


These baselines let us disentangle how much sparse representations and preference optimization each contribute to cultural alignment. They also let us test whether YaPO indeed provides the best of both worlds by combining the precision of BiPO with the interpretability of SAS.


### 4.2 Training Dynamics Analysis

Figure 2: Training and evaluation loss of BiPO and YaPO for (a) Egypt in the localized setting and (b) Nepal in the non-localized setting.


We begin by comparing the training dynamics of YaPO and BiPO. Empirically, we observe the same behavior for all countries and scenarios. For conciseness, we report training and evaluation loss for “Egypt” in the localized setting and “Nepal” in the non-localized setting. Figures [2(a)](https://arxiv.org/html/2601.08441v1#S4.F2.sf1 "In Figure 2 ‣ 4.2 Training Dynamics Analysis ‣ 4 Experiments ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation")–[2(b)](https://arxiv.org/html/2601.08441v1#S4.F2.sf2 "In Figure 2 ‣ 4.2 Training Dynamics Analysis ‣ 4 Experiments ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") show training and evaluation loss over optimization steps for both methods.


The contrast is striking: YaPO converges an order of magnitude faster. Its loss consistently drops below 0.1 in under 150 steps in both scenarios, whereas BiPO remains above 0.3 even after 600 steps. We attribute this rapid convergence to operating in the sparse SAE latent space, where disentangled features yield cleaner gradients and more stable optimization. Sparse codes isolate semantically meaningful directions, which reduces interference from irrelevant features that blur optimization in dense space. BiPO, in contrast, remains tied to the dense residual space. There, polysemanticity and superposition entangle behavioral factors, which hinders convergence and stability, particularly in tasks that require disentangling closely related features.


| Lang. | Country | Loc. Base | Loc. CAA | Loc. SAS | Loc. BiPO | Loc. YaPO | Non-loc. Base | Non-loc. CAA | Non-loc. SAS | Non-loc. BiPO | Non-loc. YaPO | Both Base | Both CAA | Both SAS | Both BiPO | Both YaPO |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Portuguese | Brazil | 23.4 | 44.0 | 21.1 | 27.9 | 41.6 | 17.7 | 32.0 | 17.1 | 22.2 | 34.8 | 19.9 | 42.0 | 19.9 | 27.3 | 39.1 |
| | Mozambique | 21.8 | 40.9 | 44.9 | 28.0 | 37.2 | 19.3 | 33.9 | 38.6 | 25.7 | 27.5 | 20.2 | 36.9 | 46.0 | 25.0 | 32.1 |
| | Portugal | 33.5 | 43.5 | 50.9 | 37.6 | 53.2 | 28.7 | 39.8 | 49.5 | 35.2 | 52.3 | 32.2 | 44.1 | 52.2 | 34.5 | 54.0 |
| | Average | 26.2 | 42.8 | 39.0 | 31.2 | 44.0 | 21.9 | 35.2 | 35.1 | 27.7 | 38.2 | 24.1 | 41.0 | 39.4 | 28.9 | 41.7 |
| Arabic | Egypt | 43.1 | 46.7 | 41.8 | 45.1 | 47.7 | 36.0 | 43.6 | 33.4 | 39.8 | 43.6 | 36.1 | 44.7 | 37.5 | 42.2 | 50.2 |
| | KSA | 16.1 | 16.8 | 19.2 | 19.9 | 20.2 | 16.7 | 13.5 | 19.6 | 18.9 | 19.2 | 17.1 | 14.1 | 20.2 | 19.5 | 20.9 |
| | Levantine | 15.0 | 12.1 | 14.7 | 16.9 | 16.9 | 10.3 | 7.9 | 11.4 | 11.4 | 13.1 | 12.4 | 10.4 | 13.4 | 14.6 | 15.3 |
| | Morocco | 12.6 | 11.2 | 8.7 | 13.6 | 14.0 | 12.6 | 10.4 | 11.0 | 13.6 | 14.0 | 11.6 | 10.8 | 19.5 | 13.8 | 13.6 |
| | Average | 21.7 | 21.7 | 21.1 | 23.9 | 24.7 | 21.0 | 18.9 | 21.3 | 23.4 | 22.5 | 19.3 | 20.0 | 22.7 | 22.5 | 25.0 |

Table 1: Multiple-choice question (MCQ) accuracy (%) by language and country using Gemma-2-2B-it. Loc. = localized prompts, Non-loc. = non-localized prompts, Both = mixed prompts.


| Lang. | Country | Loc. Base | Loc. CAA | Loc. SAS | Loc. BiPO | Loc. YaPO | Non-loc. Base | Non-loc. CAA | Non-loc. SAS | Non-loc. BiPO | Non-loc. YaPO | Both Base | Both CAA | Both SAS | Both BiPO | Both YaPO |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Portuguese | Brazil | 5.96 | 2.66 | 6.02 | 6.35 | 6.11 | 5.62 | 2.51 | 5.51 | 5.97 | 5.61 | 5.81 | 2.59 | 5.75 | 6.21 | 5.86 |
| | Mozambique | 5.56 | 2.66 | 5.56 | 6.01 | 5.65 | 4.76 | 2.47 | 4.73 | 5.10 | 4.79 | 5.15 | 2.62 | 5.14 | 5.54 | 5.31 |
| | Portugal | 5.85 | 2.59 | 5.89 | 6.10 | 6.01 | 5.28 | 2.54 | 5.35 | 5.56 | 5.30 | 5.52 | 2.57 | 5.57 | 5.86 | 5.70 |
| | Average | 5.79 | 2.64 | 5.82 | 6.15 | 5.92 | 5.22 | 2.51 | 5.20 | 5.54 | 5.23 | 5.49 | 2.60 | 5.45 | 5.87 | 5.62 |
| Arabic | Egypt | 2.93 | 2.38 | 2.77 | 3.10 | 3.02 | 2.97 | 2.68 | 2.91 | 3.15 | 3.60 | 3.00 | 2.22 | 2.81 | 3.08 | 3.31 |
| | KSA | 3.30 | 2.02 | 3.68 | 3.42 | 3.85 | 3.09 | 2.28 | 3.46 | 3.29 | 3.71 | 3.21 | 2.15 | 3.60 | 3.31 | 3.75 |
| | Levantine | 3.13 | 1.74 | 2.81 | 3.24 | 3.06 | 3.06 | 1.92 | 2.91 | 3.23 | 3.41 | 3.04 | 2.00 | 2.85 | 3.13 | 3.22 |
| | Morocco | 2.92 | 2.12 | 2.43 | 3.06 | 2.91 | 2.75 | 1.98 | 2.55 | 2.82 | 2.77 | 2.76 | 2.04 | 2.45 | 2.88 | 2.80 |
| | Average | 3.07 | 2.07 | 2.92 | 3.21 | 3.21 | 2.97 | 2.21 | 2.96 | 3.12 | 3.37 | 3.00 | 2.10 | 2.93 | 3.10 | 3.27 |

Table 2: Open-ended performance (LLM-judge score, 0–10) by language and country using Gemma-2-2B-it. Loc. = localized prompts, Non-loc. = non-localized prompts, Both = mixed prompts.


5 Evaluation
------------


We evaluate YaPO against CAA, BiPO, SAS, and the unsteered baseline model on our curated multilingual cultural adaptation benchmark. The evaluation covers both multiple-choice questions (MCQs) and open-ended generation (OG). To assess absolute alignment as well as robustness to the explicit–implicit localization gap, we consider three settings: localized, non-localized, and mixed prompts (both).

MCQ performance is measured by accuracy. The ground-truth answer is annotated using a \boxed{k} tag, where $k$ denotes the index of the correct choice; if the regex does not match, we call an external LLM to judge. OG responses are scored by an external LLM judge for consistency with the gold answer (see Appendix [E](https://arxiv.org/html/2601.08441v1#A5 "Appendix E Evaluation: LLM-as-Judge Prompts ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") for the evaluation prompts). For clarity, we only show results for Portuguese and Arabic here; results for all five languages are in Appendix [C](https://arxiv.org/html/2601.08441v1#A3 "Appendix C Evaluation Results ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation").
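
The MCQ scoring rule above combines a regex match on a `\boxed{k}` tag with an LLM-judge fallback. The sketch below assumes the model is prompted to place its chosen option index inside such a tag. The regular expression, the choice of the last match, and the `judge` callback (a hypothetical stand-in for the external LLM judge) are all our assumptions, not the exact evaluation code.

```python
import re
from typing import Callable, Optional

# Matches a literal "\boxed{<digits>}", allowing spaces inside the braces.
BOXED = re.compile(r"\\boxed\{\s*(\d+)\s*\}")


def extract_choice(output: str) -> Optional[int]:
    """Return the index from the last boxed tag in the output, or None if absent."""
    matches = BOXED.findall(output)
    return int(matches[-1]) if matches else None


def mcq_score(output: str, gold: int,
              judge: Optional[Callable[[str, int], bool]] = None) -> int:
    """1 if the predicted option equals the gold index, else 0.

    Falls back to the (hypothetical) judge callback when no tag is found.
    """
    pred = extract_choice(output)
    if pred is not None:
        return int(pred == gold)
    return int(judge(output, gold)) if judge is not None else 0


print(mcq_score(r"The answer is \boxed{2}.", gold=2))   # 1
print(mcq_score("I would pick the second option.", gold=2,
                judge=lambda out, g: "second" in out))  # judge fallback: 1
```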


| Method | Arabic RCA↑ MCQ (%) | Arabic RCA↑ OE (0–10) | Arabic PNLG↓ MCQ | Arabic PNLG↓ OE | Portuguese RCA↑ MCQ (%) | Portuguese RCA↑ OE (0–10) | Portuguese PNLG↓ MCQ | Portuguese PNLG↓ OE |
|---|---|---|---|---|---|---|---|---|
| Base | 20.1 | 1.08 | 0.129 | 1.470 | 23.8 | 1.40 | 0.184 | 1.569 |
| CAA | 19.2 | 0.76 | 0.167 | 1.583 | 37.5 | 0.72 | 0.192 | 1.798 |
| SAS | 21.3 | 1.08 | 0.098 | 1.482 | 36.5 | 1.39 | 0.113 | 1.584 |
| BiPO | 22.2 | 1.36 | 0.141 | 1.359 | 29.3 | 1.77 | 0.126 | 1.462 |
| YaPO | 23.5 | 1.60 | 0.098 | 1.346 | 40.8 | 1.62 | 0.165 | 1.511 |

Table 3: RCA and PNLG analysis by language for MCQ and open-ended (OE) tasks (all methods). RCA: higher is better; PNLG: lower is better.


### 5.1 Multiple-Choice Questions


Table [1](https://arxiv.org/html/2601.08441v1#S4.T1 "Table 1 ‣ 4.2 Training Dynamics Analysis ‣ 4 Experiments ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") reports MCQ accuracy by language, country, and prompt setting. Overall, all methods improve over the baseline in most settings, with YaPO being the most consistent across languages and prompt types. Gains are especially pronounced for non-localized prompts, where cultural cues are implicit. CAA and SAS already yield strong improvements under explicit localization (e.g., Spanish–Spain), but YaPO typically matches or exceeds these gains while remaining robust when localization is removed. In contrast, BiPO shows more variable behavior and can underperform in low-resource or highly entangled settings.


YaPO also shows smooth, monotonic accuracy scaling over a wide range of steering multipliers $\lambda$ (see Section 5.5). Performance degrades gracefully rather than catastrophically, and optimal accuracy is reached without precise tuning. This robustness holds across culturally distant settings (Egypt vs. Levantine, Nepal vs. Spanish), which suggests that sparse, preference-optimized steering reduces entanglement and limits destructive interference. Overall, YaPO not only improves peak performance but also substantially enlarges the safe and effective steering regime.


### 5.2 Open-Ended Generation


Table [2](https://arxiv.org/html/2601.08441v1#S4.T2 "Table 2 ‣ 4.2 Training Dynamics Analysis ‣ 4 Experiments ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") reports open-ended generation results for Portuguese and Arabic under localized, non-localized, and mixed prompt settings. In Portuguese, dense BiPO steering attains the highest scores in every setting, whereas CAA substantially degrades performance and SAS stays close to the baseline. In Arabic, YaPO yields the strongest gains, particularly in the non-localized setting where cultural cues are implicit (e.g., the average score rises from 2.97 to 3.37); BiPO provides smaller and less consistent improvements. Overall, BiPO is most effective in high-resource settings with strong baselines, whereas YaPO delivers more reliable improvements in lower-resource and implicitly localized open-ended generation.

The consistent degradation with CAA likely stems from the coarseness of simple activation averaging. A single dense steering direction applied uniformly at the chosen layer can over-regularize long-form generation, suppressing stylistic variation, discourse structure, and culturally specific details. BiPO, in contrast, benefits from learnable steering. YaPO further improves robustness by enforcing sparsity and disentanglement, thereby combining the strengths of BiPO and SAS.


Figure 3: Training accuracy over epochs for YaPO (red), BiPO (blue), and the unsteered baseline (orange) on the MCQ localization task across six cultural regions.


### 5.3 Explicit–Implicit Localization Gap


Table [3](https://arxiv.org/html/2601.08441v1#S5.T3 "Table 3 ‣ 5 Evaluation ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") reports RCA and PNLG for MCQ and open-ended tasks. Recall that RCA (Eq. [6](https://arxiv.org/html/2601.08441v1#S4.E6 "In Definition 2 (Robust Cultural Accuracy (RCA)). ‣ 4.1 Experimental Setup ‣ 4 Experiments ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation")) is the harmonic mean of localized and non-localized performance, rewarding methods that are both accurate and balanced across settings. Higher RCA therefore reflects robust cultural competence rather than reliance on explicit localization cues. PNLG (Eq. [5](https://arxiv.org/html/2601.08441v1#S4.E5 "In Definition 1 (Performance–Normalized Localization Gap (PNLG)). ‣ 4.1 Experimental Setup ‣ 4 Experiments ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation")) measures the relative gap between localized and non-localized performance; lower values indicate better transfer from explicit to implicit prompts.


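Across languages and tasks, YaPO achieves the best overall trade-off. It attains the highest RCA in three of the four language–task combinations; the exception is Portuguese open-ended generation, where BiPO leads (1.77 vs. 1.62). Its PNLG is lower than the unsteered baseline's in all four combinations, and it is the lowest of all methods on Arabic for both tasks (tied with SAS on MCQ). This indicates that YaPO improves cultural robustness without widening the explicit–implicit localization gap, for both MCQ and open-ended generation. BiPO also improves RCA over the baseline, but in some cases it shows a larger PNLG than YaPO (e.g., 0.141 vs. 0.098 on Arabic MCQ), which suggests less balanced gains between explicit and implicit settings.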


A particularly salient pattern is the task dependence of CAA. CAA attains competitive RCA on MCQs (notably for Portuguese), yet on open-ended generation it yields the lowest RCA and the highest PNLG of all methods. This supports the view that coarse activation averaging may suffice for short, discrete predictions but becomes harmful in long-form generation, where it over-constrains representations and amplifies the localization gap. Sparse and preference-optimized steering, especially YaPO, appears better suited to preserving balanced behavior across prompt regimes.


### 5.4 Performance Stability and Convergence Throughout Training


As shown in Figure [3](https://arxiv.org/html/2601.08441v1#S5.F3 "Figure 3 ‣ 5.2 Open-Ended Generation ‣ 5 Evaluation ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation"), YaPO converges faster and more smoothly than BiPO across all regions and reaches higher final accuracy. BiPO exhibits pronounced oscillations, particularly in lower-resource settings, which indicates less stable optimization. This instability can overwrite previously correct behaviors. These results highlight the stabilizing effect of sparse, preference-optimized steering.


### 5.5 Sensitivity to the Steering Multiplier

Figure 4: Effect of the steering multiplier $\lambda$ on MCQ accuracy across methods for different cultural settings: (a) Egypt and Levantine; (b) Nepal and Spanish. YaPO exhibits smoother and more stable accuracy scaling than the dense baselines.


Baseline (no steering): 57.58 (shared by all rows).

| Language | Country | Loc. CAA | Loc. SAS | Loc. BiPO | Loc. YaPO | Non-loc. CAA | Non-loc. SAS | Non-loc. BiPO | Non-loc. YaPO | Both CAA | Both SAS | Both BiPO | Both YaPO |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Spanish | Spain | 56.99 | 56.97 | 57.61 | 57.30 | 56.93 | 56.84 | 57.64 | 57.27 | 57.02 | 56.94 | 57.68 | 57.27 |
| | Mexico | 56.99 | 57.09 | 57.66 | 57.36 | 57.05 | 57.03 | 57.57 | 57.27 | 56.98 | 57.08 | 57.62 | 57.12 |
| | Bolivia | 56.96 | 56.92 | 57.47 | 57.17 | 56.85 | 57.05 | 57.45 | 57.09 | 56.95 | 57.08 | 57.39 | 57.02 |
| | Average | 56.98 | 56.99 | 57.58 | 57.28 | 56.94 | 56.97 | 57.55 | 57.21 | 56.98 | 57.03 | 57.56 | 57.14 |
| Arabic | Egypt | 57.13 | 57.11 | 57.51 | 57.06 | 57.02 | 57.18 | 57.50 | 57.14 | 57.21 | 57.13 | 57.42 | 56.97 |
| | KSA | 57.27 | 57.10 | 57.62 | 57.35 | 57.27 | 57.19 | 57.56 | 57.36 | 57.29 | 57.12 | 57.66 | 57.16 |
| | Levantine | 57.02 | 57.12 | 57.64 | 57.37 | 56.98 | 57.04 | 57.58 | 57.29 | 56.95 | 57.08 | 57.67 | 57.17 |
| | Morocco | 57.17 | 57.07 | 57.57 | 57.30 | 57.26 | 57.01 | 57.61 | 57.36 | 57.12 | 57.05 | 57.72 | 57.12 |
| | Average | 57.15 | 57.10 | 57.58 | 57.27 | 57.13 | 57.10 | 57.56 | 57.29 | 57.14 | 57.10 | 57.62 | 57.10 |

Table 4: Performance on MMLU (accuracy, %) using MCQ steering vectors (all methods). The non-steered baseline accuracy is reported once globally (with chat template).


Figure [4](https://arxiv.org/html/2601.08441v1#S5.F4 "Figure 4 ‣ 5.5 Sensitivity to the Steering Multiplier ‣ 5 Evaluation ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") analyzes the effect of the steering multiplier $\lambda$ on MCQ accuracy. CAA and SAS are highly sensitive to $\lambda$: performance is strongly non-monotonic and often collapses abruptly beyond a narrow operating range (e.g., $\lambda>0.5$). This indicates over-steering, where large activation shifts destabilize generation. YaPO and BiPO, in contrast, remain robust to larger steering strengths. YaPO notably reaches its highest accuracy at larger values (e.g., $\lambda=1.5$ or $2.0$) without degradation, which demonstrates the stability of sparse preference optimization.
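
One way to quantify the operating range described above is to sweep $\lambda$ and keep the multipliers whose accuracy stays within a tolerance of the best one. The sketch below assumes a hypothetical `evaluate(lam)` function that returns MCQ accuracy. The two toy curves are synthetic: they only mimic the qualitative shapes described in the text and are not the measured results of Figure 4.

```python
from typing import Callable, Dict, List, Sequence, Tuple


def sweep_multiplier(evaluate: Callable[[float], float],
                     lambdas: Sequence[float]) -> Dict[float, float]:
    """Evaluate accuracy at each steering multiplier."""
    return {lam: evaluate(lam) for lam in lambdas}


def operating_range(scores: Dict[float, float], tol: float) -> Tuple[float, List[float]]:
    """Best accuracy and the multipliers whose accuracy is within tol of it."""
    best = max(scores.values())
    return best, sorted(lam for lam, acc in scores.items() if acc >= best - tol)


lambdas = [0.0, 0.5, 1.0, 1.5, 2.0]
# Synthetic curves (not measured data): one collapses past 0.5, one saturates.
brittle = sweep_multiplier(lambda lam: 0.40 if lam <= 0.5 else 0.10, lambdas)
smooth = sweep_multiplier(lambda lam: 0.30 + 0.05 * min(lam, 1.5), lambdas)
print(operating_range(brittle, tol=0.02))  # best only at small lambda
print(operating_range(smooth, tol=0.02))   # best reached at larger lambda
```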


### 5.6 MMLU and Generalization to Other Domains


#### MMLU.


Table [4](https://arxiv.org/html/2601.08441v1#S5.T4 "Table 4 ‣ 5.5 Sensitivity to the Steering Multiplier ‣ 5 Evaluation ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation") reports MMLU results to assess whether cultural steering affects general knowledge. Across all languages and prompt settings, differences between methods remain small, and scores are tightly clustered around the unsteered baseline. None of the steering approaches, including YaPO, meaningfully degrades or inflates general-purpose performance on MMLU. These results suggest that the learned steering vectors mainly affect the targeted alignment behaviors and leave broad knowledge capabilities intact.
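
A quick check shows how tightly the scores cluster. The snippet takes the two Average rows of Table 4 (values copied from the table) and computes their largest absolute deviation from the 57.58% baseline.

```python
BASELINE = 57.58  # unsteered MMLU accuracy (%), Table 4

# Average rows of Table 4: CAA, SAS, BiPO, YaPO for Localized, Non-localized, Both.
averages = {
    "Spanish": [56.98, 56.99, 57.58, 57.28, 56.94, 56.97, 57.55, 57.21,
                56.98, 57.03, 57.56, 57.14],
    "Arabic": [57.15, 57.10, 57.58, 57.27, 57.13, 57.10, 57.56, 57.29,
               57.14, 57.10, 57.62, 57.10],
}

max_dev = max(abs(x - BASELINE) for row in averages.values() for x in row)
print(f"largest deviation from baseline: {max_dev:.2f} points")
```

For these averages, every method stays within 0.64 percentage points of the baseline.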


#### Generalization to other tasks.


To assess whether cultural steering vectors specialize too narrowly, we evaluate them on the benchmarks used by BiPO (Table [5](https://arxiv.org/html/2601.08441v1#S5.T5 "Table 5 ‣ Generalization to other tasks. ‣ 5.6 MMLU and Generalization to Other Domains ‣ 5 Evaluation ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation")): Hallucination, Wealth-Seeking, Jailbreak, and Power-Seeking.


Overall, CAA attains the highest average score on these scalar tasks. YaPO comes second on average, followed by BiPO and then SAS. In practice, however, we find CAA and SAS to be quite brittle: their performance is highly sensitive to the choice of steering weight and activation threshold $\tau$, as shown in Section [5.5](https://arxiv.org/html/2601.08441v1#S5.SS5 "5.5 Sensitivity to the Steering Multiplier ‣ 5 Evaluation ‣ YaPO: Learnable Sparse Activation Steering Vectors for Domain Adaptation"). In BiPO and YaPO, by contrast, the effective steering strength is absorbed into the learned vector itself, with a coefficient $\lambda_{i}$ per dimension $i$; an additional global multiplier can also be applied, as in BiPO. Combined with sparsity, these learned per-dimension coefficients give YaPO more degrees of freedom than a single global multiplier and make it less dependent on manual hyperparameter tuning. Learning in a sparse activation space therefore appears effective not only for cultural alignment: it also generalizes as a robust steering mechanism to broader alignment dimensions such as hallucination reduction.
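
The role of the per-dimension coefficients can be written out explicitly. In our own notation, write the learned sparse vector in the SAE latent basis $\lbrace e_i \rbrace$. An optional global multiplier $\lambda$ then only rescales the learned coefficients and leaves the support unchanged. Placing $\lambda$ inside the ReLU at inference is our assumption about where it enters:

$$
v=\sum_{i\in\operatorname{supp}(v)}\lambda_{i}\,e_{i},
\qquad
\lambda v=\sum_{i\in\operatorname{supp}(v)}(\lambda\lambda_{i})\,e_{i},
\qquad
\tilde{s}=\mathrm{ReLU}\!\left(s+d\,\lambda v\right).
$$

For fixed averaged vectors such as those of CAA and SAS, the global $\lambda$ (together with $\tau$ for SAS) is the only strength control. In YaPO, each $\lambda_{i}$ is instead fitted by the preference objective.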


| Model | Task | Base | CAA | SAS | BiPO | YaPO |
|---|---|---|---|---|---|---|
| Gemma-2-2B-it | Wealth-Seeking | 2.10 | 2.23 | 2.14 | 2.17 | 2.31 |
| | Jailbreak | 1.00 | 1.08 | 1.00 | 1.02 | 1.00 |
| | Power-Seeking | 1.89 | 2.09 | 1.81 | 1.93 | 2.03 |
| | Hallucination | 1.60 | 2.18 | 1.46 | 1.60 | 1.69 |
| | Average | 1.65 | 1.90 | 1.60 | 1.68 | 1.76 |

Table 5: Performance on general tasks.


6 Conclusion
------------


In this work, we introduced YaPO, a reference-free method that learns sparse, preference-optimized steering vectors in the latent space of sparse autoencoders. Our study shows that operating in sparse space yields faster convergence, greater stability, and improved interpretability compared to dense steering methods such as BiPO. On our newly curated multilingual cultural benchmark, spanning five languages and fifteen cultural contexts, YaPO generally outperforms both BiPO and the baseline model. The gains are largest under non-localized prompts, where implicit cultural cues must be inferred. Beyond culture, YaPO generalizes to other alignment dimensions such as hallucination mitigation, wealth-seeking, jailbreak, and power-seeking. This underscores its potential as a general recipe for efficient and fine-grained alignment.


Limitations
-----------


While our study broadens the evaluation landscape, several limitations remain. First, experiments were conducted on the Gemma-2 family (2B and 9B). Due to compute and time constraints, we could not include additional architectures such as Llama-3.1-8B with Llama-Scope SAEs (He et al., [2024](https://arxiv.org/html/2601.08441v1#bib.bib37 "Llama scope: extracting millions of features from llama-3.1-8b with sparse autoencoders")) or Qwen models. Second, when no SAE is available, one could learn small task-specific SAEs or low-rank sparse projections; we leave this for future work. Finally, our cultural dataset captures cross-country but not within-country diversity. Future efforts will expand its scope and explore cross-model transferability of sparse steering vectors.


References
----------
