Add 1 files

Browse files- 2509/2509.24159.md +682 -0

2509/2509.24159.md

ADDED

|

@@ -0,0 +1,682 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

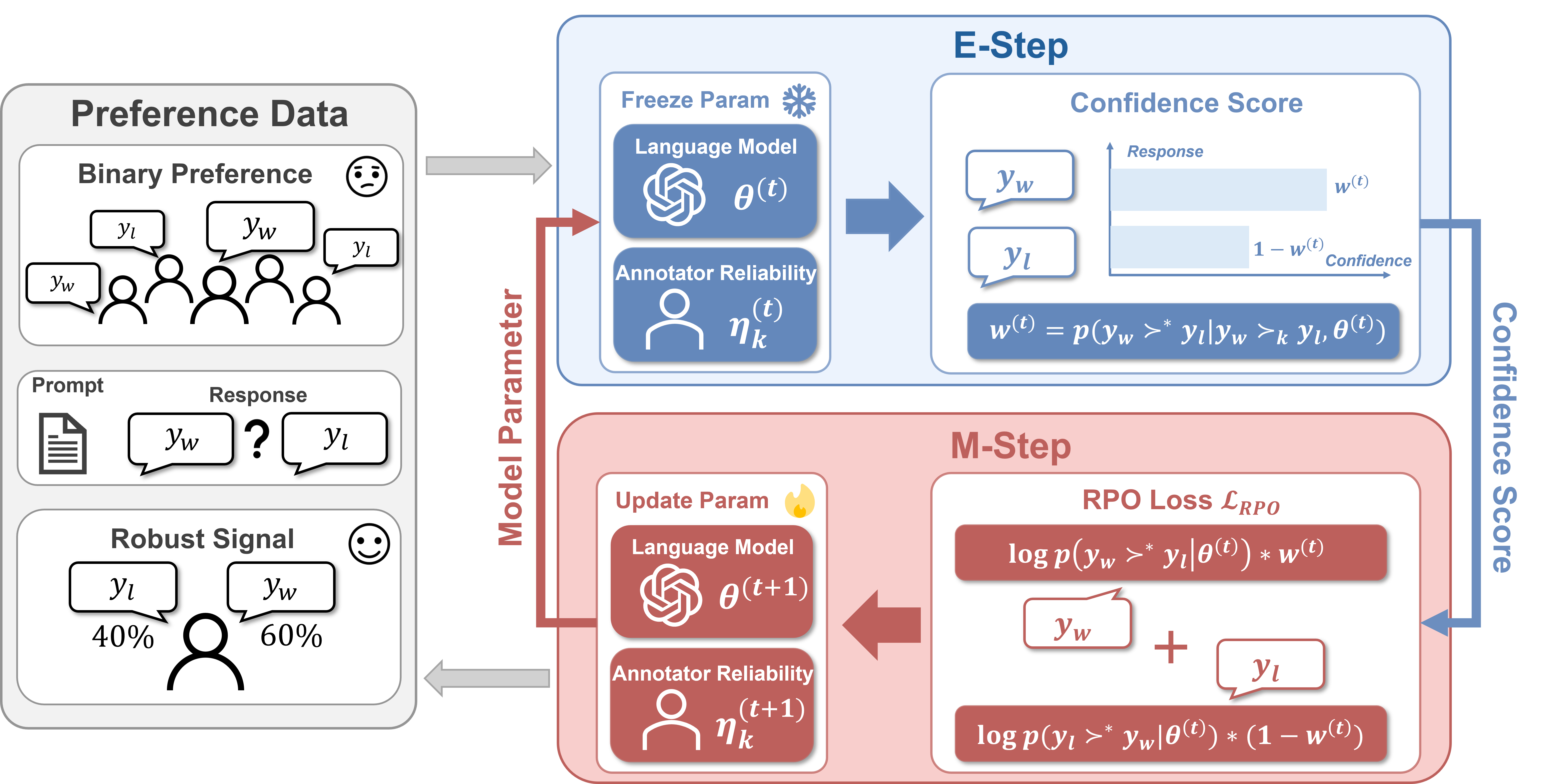

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

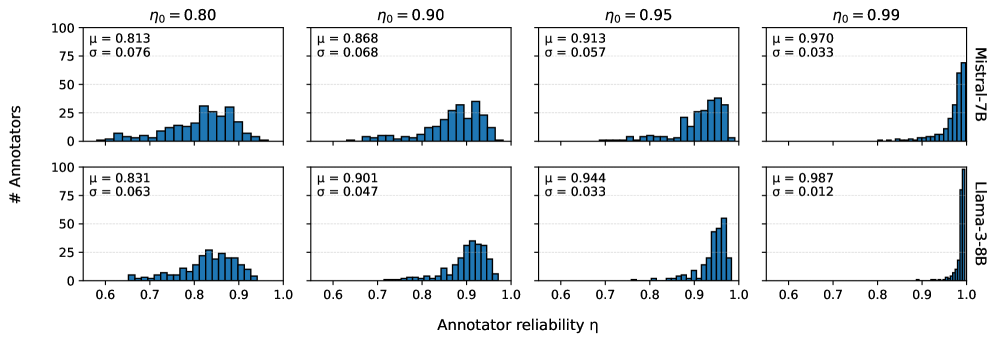

|

|

|

|

|

|

|

|

|

|

|

|

|

|

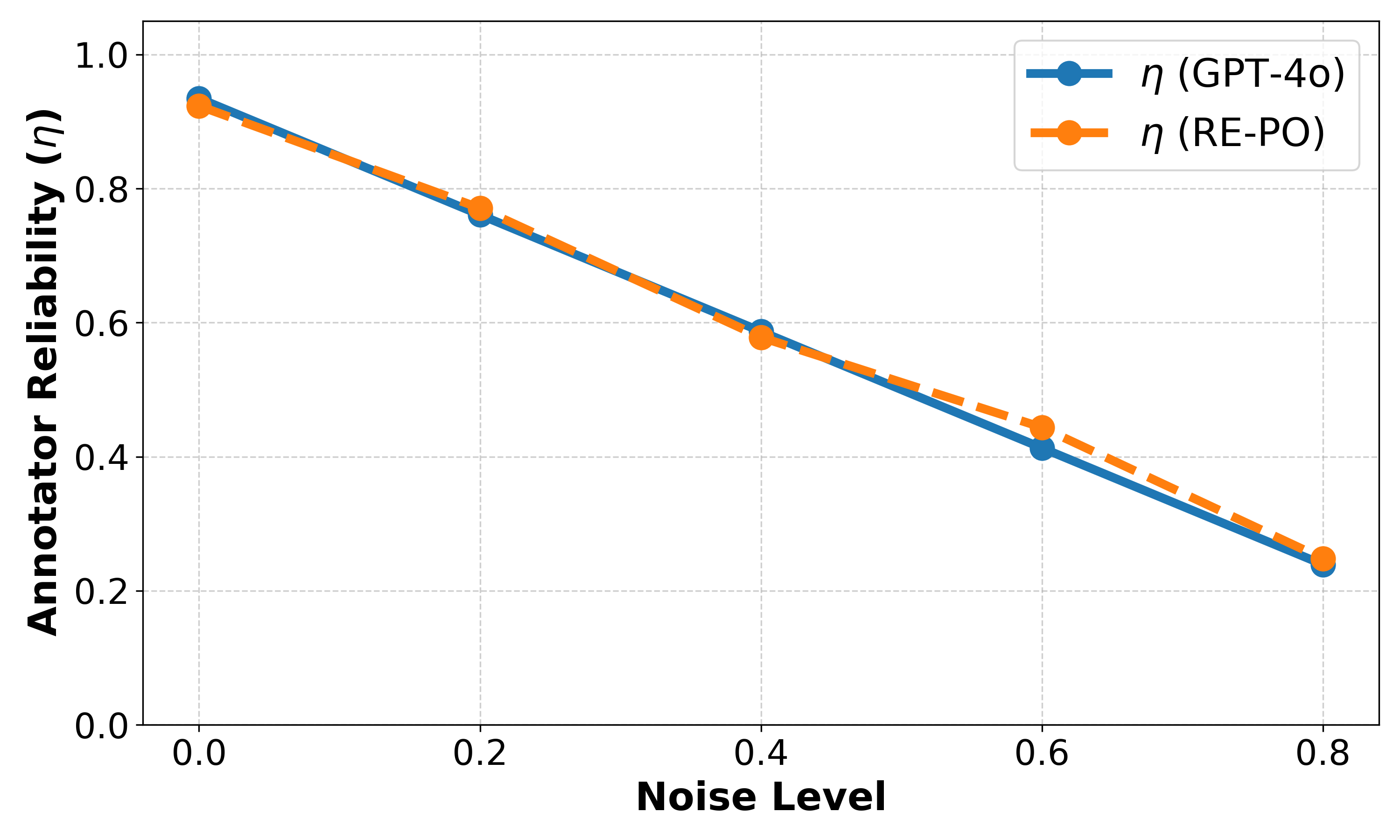

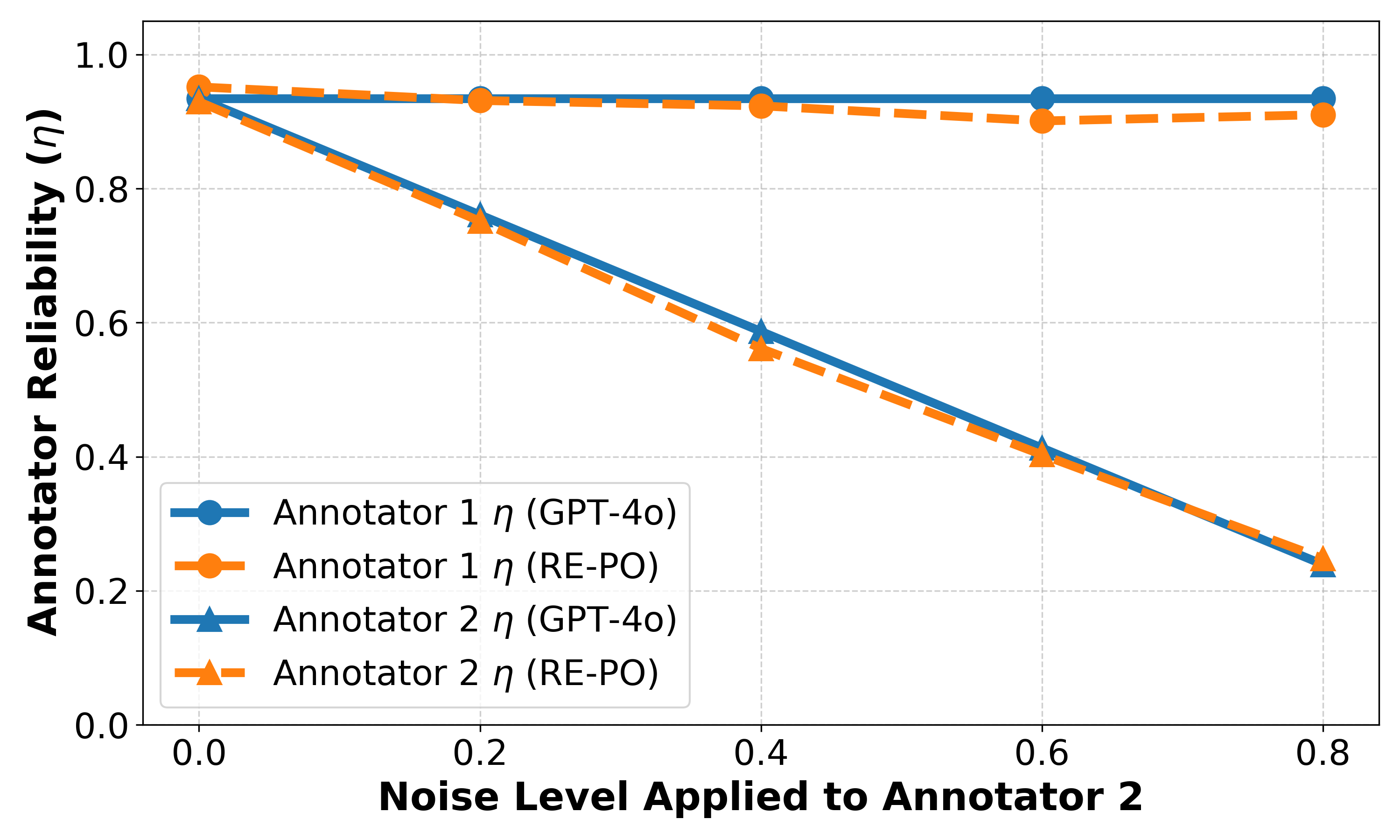

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Title: RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment

|

| 2 |

+

|

| 3 |

+

URL Source: https://arxiv.org/html/2509.24159

|

| 4 |

+

|

| 5 |

+

Published Time: Tue, 09 Dec 2025 01:05:41 GMT

|

| 6 |

+

|

| 7 |

+

Markdown Content:

|

| 8 |

+

Xiaoyang Cao 1 , Zelai Xu 2 1 1 footnotemark: 1 , Mo Guang 3, Kaiwen Long 3,

|

| 9 |

+

|

| 10 |

+

Michiel A. Bakker 1, Yu Wang 2 , Chao Yu 2,4 2 2 footnotemark: 2

|

| 11 |

+

|

| 12 |

+

1 Massachusetts Institute of Technology 2 Tsinghua University

|

| 13 |

+

|

| 14 |

+

3 Li Auto Inc. 4 Zhongguancun Academy Equal contribution. Email: xycao@mit.edu.Corresponding authors. Emails: yu-wang@tsinghua.edu.cn, zoeyuchao@gmail.com.

|

| 15 |

+

|

| 16 |

+

###### Abstract

|

| 17 |

+

|

| 18 |

+

Standard human preference-based alignment methods, such as Reinforcement Learning from Human Feedback (RLHF), are a cornerstone technology for aligning Large Language Models (LLMs) with human values. However, these methods are all underpinned by a \rebuttal strong assumption that the collected preference data is clean and that all observed labels are equally reliable. In reality, \rebuttal large-scale preference datasets contain substantial label noise due to annotator errors, inconsistent instructions, varying expertise, and even adversarial or low-effort feedback. This creates a discrepancy between the recorded data and the ground-truth preferences, which can misguide the model and degrade its performance. To address this challenge, we introduce R obust E nhanced P olicy O ptimization (RE-PO). RE-PO employs an Expectation-Maximization algorithm to infer the posterior probability of each label’s correctness, which is used to adaptively re-weigh each data point in the training loss to mitigate noise. We further generalize this approach by establishing a theoretical link between arbitrary preference losses and their corresponding probabilistic models. This generalization enables the systematic transformation of existing alignment algorithms into their robust counterparts, elevating RE-PO from a specific algorithm to a general framework for robust preference alignment. Theoretically, we prove that under the condition of a perfectly calibrated model, RE-PO is guaranteed to converge to the true noise level of the dataset. Our experiments demonstrate RE-PO’s effectiveness as a general framework, consistently enhancing four state-of-the-art alignment algorithms (DPO, IPO, SimPO, and CPO). When applied to Mistral and Llama 3 models, the RE-PO-enhanced methods improve AlpacaEval 2 win rates by up to 7.0% over their respective baselines.

|

| 19 |

+

|

| 20 |

+

1 Introduction

|

| 21 |

+

--------------

|

| 22 |

+

|

| 23 |

+

Aligning Large Language Models (LLMs) with human values is a critical prerequisite for developing safe and reliable AI systems. Reinforcement Learning from Human Feedback (RLHF) has emerged as the dominant paradigm for this task (christiano2017deep; ziegler2019fine; ouyang2022training). To mitigate the complexity and instability of the traditional RLHF pipeline, simpler and more direct methods such as Direct Preference Optimization (DPO) (rafailov2023direct) have been developed, which reframe alignment as a classification-like problem.

|

| 24 |

+

|

| 25 |

+

\rebuttal

|

| 26 |

+

|

| 27 |

+

However, these alignment methods implicitly assume that preference datasets provide a clean and reliable approximation of a single ground-truth preference signal. In practice, this assumption is often violated. Large-scale preference datasets are typically aggregated from multiple crowdworkers or teacher models, and are therefore subject to substantial label noise arising from inattention, misunderstanding, or systematic bias (frenay2013classification; gao2024impact). Empirical analyses suggest that a significant fraction (often between 20% and 40%) of preference pairs in modern alignment datasets may be corrupted or inconsistent (gao2024impact). Classic work on learning with noisy labels shows that standard loss functions can overfit such corrupted supervision and suffer severe degradation in generalization performance (natarajan2013learning; frenay2013classification). In the context of LLM alignment, gao2024impact further demonstrate that even a 10 percentage point increase in the label-noise rate can lead to drops of tens of percentage points in downstream win rates, highlighting the practical importance of robustness to noisy preference data.

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

Figure 1: Overview of the Robust Enhanced Policy Optimization (RE-PO) framework. Starting from noisy pairwise feedback, RE-PO uses an Expectation–Maximization (EM) procedure to jointly refine label confidences and the policy. In each iteration, the E-step estimates a confidence score for every observed preference by inferring the posterior probability that the label is correct under the current model and annotator reliabilities. The M-step then uses these scores as adaptive weights to update both the LLM policy and the annotator reliability parameters, progressively down-weighting likely corrupted labels and emphasizing reliable supervision.

|

| 32 |

+

|

| 33 |

+

To address this challenge, we propose Robust Enhanced Policy Optimization (RE-PO). \rebuttal Instead of assuming that every observed label is a fixed ground truth, our approach aims to learn a preference model that remains accurate and stable even when the training data contains substantial noise. The core innovation of RE-PO is its departure from the hard labels used in traditional RLHF. \rebuttal Rather than committing to binary supervision, we treat the correctness of each observed preference as a latent variable and compute soft confidence weights over labels, so that highly reliable feedback contributes more strongly while suspicious pairs are down-weighted. Building on Expectation-Maximization-style approaches to learning from unreliable annotators in crowdsourcing (dawid1979maximum; chen2013pairwise), RE-PO employs an Expectation-Maximization (EM) framework that simultaneously models annotator reliability while optimizing the LLM. In the E-step, it infers the posterior probability that each annotated label is correct, effectively estimating annotator reliability. In the M-step, it uses these probabilities as adaptive weights to update the LLM, thereby learning from a dynamically re-weighted preference signal.

|

| 34 |

+

|

| 35 |

+

Our experiments validate RE-PO as an effective general framework. We show that applying RE-PO consistently enhances four state-of-the-art alignment algorithms (DPO, IPO, SimPO, and CPO) across two different base models (Mistral-7B and Llama-3-8B) on the AlpacaEval 2 benchmark (Table[2](https://arxiv.org/html/2509.24159v3#S5.T2 "Table 2 ‣ Evaluation benchmarks. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")). In our main results, RE-PO-enhanced methods achieve substantial win-rate gains on AlpacaEval 2, with improvements of up to 7.0 percentage points in LC/WR over their standard counterparts.Furthermore, we theoretically prove that RE-PO can recover the true reliability of annotators (Theorem[4.1](https://arxiv.org/html/2509.24159v3#S4.Thmtheorem1 "Theorem 4.1 (Identification and convergence of RE-PO). ‣ 4 Theoretical analysis of RE-PO ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")) and empirically verify this guarantee in controlled experiments (Section[5.5](https://arxiv.org/html/2509.24159v3#S5.SS5 "5.5 Empirical verification of Theorem 4.1 ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")).

|

| 36 |

+

|

| 37 |

+

In summary, our contributions are as follows:

|

| 38 |

+

|

| 39 |

+

* •We propose Robust Enhanced Policy Optimization (RE-PO), a principled EM-based algorithm that treats the correctness of each preference label as a latent variable, jointly infers per-label (and per-annotator) reliabilities, and uses them as adaptive weights in the training loss, yielding LLM alignment that is substantially more robust to noisy and inconsistent feedback.

|

| 40 |

+

* •We theoretically establish a generalized RE-PO framework by using the Gibbs distribution to connect arbitrary preference loss functions to underlying probabilistic models. This lifts RE-PO from a single algorithm to a general framework, enabling standard methods such as DPO, IPO, SimPO, and CPO to be systematically transformed into their robust counterparts with minimal modification.

|

| 41 |

+

* •We conduct extensive experiments demonstrating the practical effectiveness and versatility of RE-PO. Across four alignment algorithms, two base models (Mistral-7B and Llama-3-8B), and AlpacaEval 2, RE-PO delivers consistent win-rate improvements of up to 7.0 percentage points, and further shows clear gains on a real multi-annotator dataset (MultiPref), along with qualitative and visual analyses of how it down-weights low-confidence, noisy labels.

|

| 42 |

+

|

| 43 |

+

2 Related work

|

| 44 |

+

--------------

|

| 45 |

+

|

| 46 |

+

#### LLM alignment with hard preference labels.

|

| 47 |

+

|

| 48 |

+

The standard paradigm for aligning Large Language models (LLMs) with human values is Reinforcement Learning from Human Feedback (RLHF), which involves training a reward model and then fine-tuning the policy against it (christiano2017deep; ouyang2022training). To mitigate the complexity and instability of this multi-stage process, a family of simpler, direct alignment algorithms has emerged (rafailov2023direct; azar2023general; meng2024simpo; hong2024orpo). These methods bypass the explicit reward modeling stage by optimizing a direct classification-style loss on the preference data. However, a critical limitation shared by these methods is their reliance on hard preference labels. This approach models human feedback as a definitive, binary choice, treating every label with equal and absolute confidence. Consequently, it is highly vulnerable to the significant label noise present in real-world datasets, as standard loss functions can lead models to overfit to corrupted labels (natarajan2013learning; zhang2018generalized; frenay2013classification). A simple annotation error, such as an accidental misclick, is given the same weight as a deliberate, high-quality judgment. This inability to distinguish between reliable feedback and noise means that the model’s performance degrades significantly as the error rate increases (frenay2013classification; gao2024impact). \rebuttal In contrast, soft-label approaches that represent preferences probabilistically can better accommodate uncertainty in feedback by assigning confidence scores or weights to individual labels (muller2019does; song2024preference). By allowing the learning algorithm to rely on high-quality signals while down-weighting noise, such approaches provide a natural path toward robust preference alignment. This is precisely the perspective adopted by our RE-PO framework, which replaces hard labels with EM-estimated soft confidences.

|

| 49 |

+

|

| 50 |

+

#### Learning from noisy feedback.

|

| 51 |

+

|

| 52 |

+

The vulnerability to label noise situates preference alignment within the classic machine learning problem of Learning with Noisy Labels (LNL) (natarajan2013learning; frenay2013classification). Foundational work in this area, such as the Dawid–Skene model (dawid1979maximum), uses an EM algorithm to simultaneously infer true latent labels while estimating annotator reliability. This principle was later extended to pairwise comparisons in the Crowd-BT model (chen2013pairwise), which jointly estimates item scores and annotator-specific reliability parameters in crowdsourced ranking tasks. In modern LLM alignment, several methods have been proposed to improve robustness to noisy preference data. These can be broadly divided into loss-centric approaches and data-centric filtering strategies. In the first category, ROPO (liang2024ropo) proposes an iterative robust preference optimization procedure that jointly applies a noise-tolerant loss and down-weights (or discards) highly uncertain samples, without relying on external teacher models. rDPO (chowdhury2024provably) constructs an unbiased estimator of the true loss but requires the global noise rate to be known a priori. Hölder-DPO (fujisawa2025scalable) introduces a loss with a “redescending” property, which inherently nullifies the influence of extreme outliers without needing a known noise rate. In the second category, Selective DPO (gao2025principled) proposes filtering examples based on their difficulty relative to the model’s capacity—a concept orthogonal to label correctness—using validation loss as a proxy.

|

| 53 |

+

|

| 54 |

+

\rebuttal

|

| 55 |

+

|

| 56 |

+

Our proposed RE-PO framework is complementary to these methods. Rather than only modifying the loss shape or discarding high-loss points, RE-PO explicitly models the data-generating process by treating annotator reliability and label correctness as latent variables to be inferred. This allows RE-PO to assign fine-grained, example-specific weights based on a posterior confidence, providing a principled way to separate signal from noise.

|

| 57 |

+

|

| 58 |

+

3 Methodology

|

| 59 |

+

-------------

|

| 60 |

+

|

| 61 |

+

This section details our proposed RE-PO algorithm. We first review the standard DPO framework in Section[3.1](https://arxiv.org/html/2509.24159v3#S3.SS1 "3.1 Preliminaries: Direct Preference Optimization ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"). In Section[3.2](https://arxiv.org/html/2509.24159v3#S3.SS2 "3.2 RE-PO Framework: Core Assumptions ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), we introduce a latent-variable model that explicitly distinguishes clean and corrupted preference labels. Section[3.3](https://arxiv.org/html/2509.24159v3#S3.SS3 "3.3 The RE-PO Algorithm via Expectation-Maximization ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") then derives the corresponding EM-based update rules for RE-PO. Section[3.4](https://arxiv.org/html/2509.24159v3#S3.SS4 "3.4 Practical implementation with mini-batch training ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") presents a practical mini-batch implementation for RE-PO.

|

| 62 |

+

|

| 63 |

+

### 3.1 Preliminaries: Direct Preference Optimization

|

| 64 |

+

|

| 65 |

+

The goal of preference alignment is to fine-tune a language model policy, π θ\pi_{\theta}, using a dataset of preferences 𝒟={(x,y w,y l)i}i=1 N\mathcal{D}=\{(x,y_{w},y_{l})_{i}\}_{i=1}^{N}, where response y w y_{w} is preferred over y l y_{l} for a given prompt x x. Direct Preference Optimization (DPO) (rafailov2023direct) offers a simple and effective method for this, bypassing the complex multi-stage pipeline of traditional RLHF (christiano2017deep; ouyang2022training). DPO directly optimizes the policy by minimizing a simple classification loss:

|

| 66 |

+

|

| 67 |

+

ℒ DPO(π θ,π ref)=−𝔼(x,y w,y l)∼𝒟[logσ(βlogπ θ(y w|x)π ref(y w|x)−βlogπ θ(y l|x)π ref(y l|x))],\mathcal{L}_{\text{DPO}}(\pi_{\theta},\pi_{\text{ref}})=-\mathbb{E}_{(x,y_{w},y_{l})\sim\mathcal{D}}\left[\log\sigma\left(\beta\log\frac{\pi_{\theta}(y_{w}|x)}{\pi_{\text{ref}}(y_{w}|x)}-\beta\log\frac{\pi_{\theta}(y_{l}|x)}{\pi_{\text{ref}}(y_{l}|x)}\right)\right],(1)

|

| 68 |

+

|

| 69 |

+

where σ(⋅)\sigma(\cdot) is the sigmoid function, π ref\pi_{\text{ref}} is a fixed reference policy and β\beta is a scaling hyperparameter.

|

| 70 |

+

|

| 71 |

+

### 3.2 RE-PO Framework: Core Assumptions

|

| 72 |

+

|

| 73 |

+

A critical limitation of DPO is its implicit assumption that all observed preferences in 𝒟\mathcal{D} are correct. In practice, this data is often noisy. To address this, we propose Robust Enhanced Policy Optimization (RE-PO), which is built upon two core assumptions that reframe the problem.

|

| 74 |

+

|

| 75 |

+

#### \rebuttal Assumption 1: A Latent noise-free preference.

|

| 76 |

+

|

| 77 |

+

\rebuttal

|

| 78 |

+

|

| 79 |

+

We assume that for each training example (x i,y w,i,y l,i)(x_{i},y_{w,i},y_{l,i}) there exists an underlying noise-free preference, denoted y w,i≻∗y l,i y_{w,i}\succ^{\ast}y_{l,i}, which represents the label we would obtain in the absence of annotation errors. The observed preference y w,i≻k i y l,i y_{w,i}\succ_{k_{i}}y_{l,i} (provided by annotator k i k_{i}) is treated as a potentially corrupted observation of this ground truth. To model this, we introduce a binary latent variable z i∈{0,1}z_{i}\in\{0,1\} for each data point, where z i=1 z_{i}=1 if the observed label matches the latent noise-free preference and z i=0 z_{i}=0 otherwise. The reliability of annotator k k is parameterized by η k≜p(z i=1∣k i=k)\eta_{k}\triangleq p(z_{i}=1\mid k_{i}=k). Here k i∈{1,…,K}k_{i}\in\{1,\dots,K\} denotes the index of the annotator who provided the i i-th label, and K K is the total number of annotators in the dataset.

|

| 80 |

+

|

| 81 |

+

#### Assumption 2: A general probabilistic model for preferences.

|

| 82 |

+

|

| 83 |

+

Building on this latent variable model, we must also define the probability of the \rebuttal noise-free preference itself, p(y w≻∗y l|x,θ)p(y_{w}\succ^{\ast}y_{l}|x,\theta). To accommodate various preference losses beyond DPO (e.g., IPO (azar2023general)), our framework is designed to work with any preference loss function, ℒ pref\mathcal{L}_{\text{pref}}. Table [1](https://arxiv.org/html/2509.24159v3#S3.T1 "Table 1 ‣ Assumption 2: A general probabilistic model for preferences. ‣ 3.2 RE-PO Framework: Core Assumptions ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") provides several examples of such loss functions used in prominent alignment algorithms.

|

| 84 |

+

|

| 85 |

+

Table 1: Formulations of the preference loss (ℒ pref\mathcal{L}_{\text{pref}}) for prominent alignment algorithms.

|

| 86 |

+

|

| 87 |

+

Method Preference Loss ℒ pref(x,y w≻y l)\mathcal{L}_{\text{pref}}(x,y_{w}\succ y_{l})

|

| 88 |

+

DPO (rafailov2023direct)−logσ(βlogπ θ(y w|x)π ref(y w|x)−βlogπ θ(y l|x)π ref(y l|x))-\log\sigma\left(\beta\log\frac{\pi_{\theta}(y_{w}|x)}{\pi_{\text{ref}}(y_{w}|x)}-\beta\log\frac{\pi_{\theta}(y_{l}|x)}{\pi_{\text{ref}}(y_{l}|x)}\right)

|

| 89 |

+

IPO (azar2023general)(logπ θ(y w|x)π ref(y w|x)−logπ θ(y l|x)π ref(y l|x)−1 2β)2\left(\log\frac{\pi_{\theta}(y_{w}|x)}{\pi_{\text{ref}}(y_{w}|x)}-\log\frac{\pi_{\theta}(y_{l}|x)}{\pi_{\text{ref}}(y_{l}|x)}-\frac{1}{2\beta}\right)^{2}

|

| 90 |

+

SimPO (meng2024simpo)−logσ(β|y w|logπ θ(y w|x)−β|y l|logπ θ(y l|x)−γ)-\log\sigma(\frac{\beta}{|y_{w}|}\log\pi_{\theta}(y_{w}|x)-\frac{\beta}{|y_{l}|}\log\pi_{\theta}(y_{l}|x)-\gamma)

|

| 91 |

+

CPO (xu2024contrastive)−logσ(βlogπ θ(y w|x)−βlogπ θ(y l|x))−logπ θ(y w|x)-\log\sigma(\beta\log\pi_{\theta}(y_{w}|x)-\beta\log\pi_{\theta}(y_{l}|x))-\log\pi_{\theta}(y_{w}|x)

|

| 92 |

+

|

| 93 |

+

To connect these diverse loss functions to a unified probabilistic interpretation, we draw inspiration from the Boltzmann distribution (luce1959individual). We assume that for any preference loss function ℒ pref\mathcal{L}_{\text{pref}}, the probability of a preference is proportional to the exponentiated negative loss exp(−ℒ pref(x,y w≻y l))\exp(-\mathcal{L}_{\text{pref}}(x,y_{w}\succ y_{l})). This yields a general definition for the \rebuttal noise-free preference probability:

|

| 94 |

+

|

| 95 |

+

p(y w≻∗y l|x,θ)=σ(ℒ pref(x,y l≻y w;θ)−ℒ pref(x,y w≻y l;θ)),p(y_{w}\succ^{\ast}y_{l}|x,\theta)=\sigma\left(\mathcal{L}_{\text{pref}}(x,y_{l}\succ y_{w};\theta)-\mathcal{L}_{\text{pref}}(x,y_{w}\succ y_{l};\theta)\right),(2)

|

| 96 |

+

|

| 97 |

+

where σ(⋅)\sigma(\cdot) is the sigmoid function. This formulation converts any preference loss into a well-defined probability distribution. For instance, with the standard DPO loss, this equation recovers the Bradley-Terry model (bradley1952rank) (see [Appendices A](https://arxiv.org/html/2509.24159v3#A1 "Appendix A Derivation of general probabilistic model ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") and[B](https://arxiv.org/html/2509.24159v3#A2 "Appendix B Consistency with Bradley-Terry model for DPO ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") for derivations).

|

| 98 |

+

|

| 99 |

+

### 3.3 The RE-PO Algorithm via Expectation-Maximization

|

| 100 |

+

|

| 101 |

+

Based on these core assumptions, we aim to find the parameters θ\theta and 𝜼\boldsymbol{\eta} that maximize the marginal log-likelihood of the observed data. The probability of a single observed preference is obtained by marginalizing over the latent variable z i z_{i}:

|

| 102 |

+

|

| 103 |

+

p(y w,i≻k i y l,i|x i,θ,𝜼)=p(y w,i≻∗y l,i|x i,θ)η k i+p(y l,i≻∗y w,i|x i,θ)(1−η k i).p(y_{w,i}\succ_{k_{i}}y_{l,i}|x_{i},\theta,\boldsymbol{\eta})=p(y_{w,i}\succ^{\ast}y_{l,i}|x_{i},\theta)\eta_{k_{i}}+p(y_{l,i}\succ^{\ast}y_{w,i}|x_{i},\theta)(1-\eta_{k_{i}}).(3)

|

| 104 |

+

|

| 105 |

+

Directly maximizing ∑i logp(y w,i≻k i y l,i)\sum_{i}\log p(y_{w,i}\succ_{k_{i}}y_{l,i}) is intractable due to the sum inside the logarithm. We therefore employ the EM algorithm (see details in [Appendix C](https://arxiv.org/html/2509.24159v3#A3 "Appendix C Derivation of the RE-PO EM algorithm ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")), which iterates between two steps. In this iterative process, the superscript (t)(t) will denote the values of parameters at iteration t t.

|

| 106 |

+

|

| 107 |

+

#### E-Step: Inferring label correctness.

|

| 108 |

+

|

| 109 |

+

In the E-step, given the current parameters θ(t)\theta^{(t)} and 𝜼(t)\boldsymbol{\eta}^{(t)}, we compute the posterior probability w i w_{i} that the i i-th observed label is correct. This value w i w_{i} acts as a ”soft label” or the model’s confidence in the data point.

|

| 110 |

+

|

| 111 |

+

w i(t)=p(y w,i≻∗y l,i|x i,θ(t))η k i(t)p(y w,i≻∗y l,i|x i,θ(t))η k i(t)+p(y l,i≻∗y w,i|x i,θ(t))(1−η k i(t)).w_{i}^{(t)}=\frac{p(y_{w,i}\succ^{\ast}y_{l,i}|x_{i},\theta^{(t)})\eta_{k_{i}}^{(t)}}{p(y_{w,i}\succ^{\ast}y_{l,i}|x_{i},\theta^{(t)})\eta_{k_{i}}^{(t)}+p(y_{l,i}\succ^{\ast}y_{w,i}|x_{i},\theta^{(t)})(1-\eta_{k_{i}}^{(t)})}.(4)

|

| 112 |

+

|

| 113 |

+

where p(y w,i≻∗y l,i|x i,θ(t))p(y_{w,i}\succ^{\ast}y_{l,i}|x_{i},\theta^{(t)}) and p(y l,i≻∗y w,i|x i,θ(t))p(y_{l,i}\succ^{\ast}y_{w,i}|x_{i},\theta^{(t)}) can be computed according to Eq.([2](https://arxiv.org/html/2509.24159v3#S3.E2 "Equation 2 ‣ Assumption 2: A general probabilistic model for preferences. ‣ 3.2 RE-PO Framework: Core Assumptions ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")).

|

| 114 |

+

|

| 115 |

+

#### M-Step: weighted parameter update.

|

| 116 |

+

|

| 117 |

+

In the M-step, we update the policy parameters θ\theta and reliabilities 𝜼\boldsymbol{\eta} using the confidences w i(t)w_{i}^{(t)} computed in the E-step. This step conveniently separates into two independent updates.

|

| 118 |

+

|

| 119 |

+

First, the policy is updated by minimizing a weighted loss function. As established in Assumption 2, our probabilistic model for p(y w≻∗y l)p(y_{w}\succ^{\ast}y_{l}) allows RE-PO to work with any preference loss ℒ pref\mathcal{L}_{\text{pref}}, making it a versatile meta-framework. The general RE-PO loss is:

|

| 120 |

+

|

| 121 |

+

ℒ RE-PO(θ)=−∑i=1 N[w i(t)logp(y w,i≻∗y l,i|x i,θ)+(1−w i(t))logp(y l,i≻∗y w,i|x i,θ)].\mathcal{L}_{\text{RE-PO}}(\theta)=-\sum_{i=1}^{N}\left[w_{i}^{(t)}\log p(y_{w,i}\succ^{\ast}y_{l,i}|x_{i},\theta)+(1-w_{i}^{(t)})\log p(y_{l,i}\succ^{\ast}y_{w,i}|x_{i},\theta)\right].(5)

|

| 122 |

+

|

| 123 |

+

Second, the reliability η k\eta_{k} for each annotator is updated to the average confidence of all labels they provided. This has a simple and efficient closed-form solution:

|

| 124 |

+

|

| 125 |

+

η k(t+1)=∑i∈ℐ k w i(t)N k.\eta_{k}^{(t+1)}=\frac{\sum_{i\in\mathcal{I}_{k}}w_{i}^{(t)}}{N_{k}}.(6)

|

| 126 |

+

|

| 127 |

+

Here we define the index set of labeled pairs as ℐ k={i:k i=k}\mathcal{I}_{k}=\{\,i:k_{i}=k\,\}, and the number of labels as N k N_{k}.

|

| 128 |

+

|

| 129 |

+

Input: Dataset

|

| 130 |

+

|

| 131 |

+

𝒟={(x i,y w,i,y l,i,k i)}i=1 N\mathcal{D}=\{(x_{i},y_{w,i},y_{l,i},k_{i})\}_{i=1}^{N}

|

| 132 |

+

; Base policy

|

| 133 |

+

|

| 134 |

+

π θ\pi_{\theta}

|

| 135 |

+

, reference policy

|

| 136 |

+

|

| 137 |

+

π ref\pi_{\text{ref}}

|

| 138 |

+

; Preference loss

|

| 139 |

+

|

| 140 |

+

ℒ pref\mathcal{L}_{\text{pref}}

|

| 141 |

+

; Hyperparameters: learning rate

|

| 142 |

+

|

| 143 |

+

λ\lambda

|

| 144 |

+

, epochs

|

| 145 |

+

|

| 146 |

+

E E

|

| 147 |

+

, EMA momentum

|

| 148 |

+

|

| 149 |

+

α\alpha

|

| 150 |

+

, initial annotator reliabilities

|

| 151 |

+

|

| 152 |

+

η k∈[0.5,1]\eta_{k}\in[0.5,1]

|

| 153 |

+

for all

|

| 154 |

+

|

| 155 |

+

k∈{1,…,K}k\in\{1,\dots,K\}

|

| 156 |

+

|

| 157 |

+

1

|

| 158 |

+

|

| 159 |

+

2 for _epoch = 1 1 to E E_ do

|

| 160 |

+

|

| 161 |

+

3 for _batch ℬ⊂𝒟\mathcal{B}\subset\mathcal{D}_ do

|

| 162 |

+

|

| 163 |

+

4 For each sample

|

| 164 |

+

|

| 165 |

+

i∈ℬ i\in\mathcal{B}

|

| 166 |

+

, compute

|

| 167 |

+

|

| 168 |

+

w i w_{i}

|

| 169 |

+

using current

|

| 170 |

+

|

| 171 |

+

θ\theta

|

| 172 |

+

and

|

| 173 |

+

|

| 174 |

+

η k i\eta_{k_{i}}

|

| 175 |

+

via Eq.([4](https://arxiv.org/html/2509.24159v3#S3.E4 "Equation 4 ‣ E-Step: Inferring label correctness. ‣ 3.3 The RE-PO Algorithm via Expectation-Maximization ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"));

|

| 176 |

+

|

| 177 |

+

5

|

| 178 |

+

|

| 179 |

+

6 Compute the weighted loss

|

| 180 |

+

|

| 181 |

+

ℒ RE-PO(θ)\mathcal{L}_{\text{RE-PO}}(\theta)

|

| 182 |

+

for the batch via Eq.([5](https://arxiv.org/html/2509.24159v3#S3.E5 "Equation 5 ‣ M-Step: weighted parameter update. ‣ 3.3 The RE-PO Algorithm via Expectation-Maximization ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"));

|

| 183 |

+

|

| 184 |

+

7 Update parameters

|

| 185 |

+

|

| 186 |

+

θ\theta

|

| 187 |

+

using an optimizer (e.g., AdamW (loshchilov2019decoupled));

|

| 188 |

+

|

| 189 |

+

8

|

| 190 |

+

|

| 191 |

+

9 for _each annotator k k present in the batch_ do

|

| 192 |

+

|

| 193 |

+

10 Update

|

| 194 |

+

|

| 195 |

+

η k\eta_{k}

|

| 196 |

+

via Eq.([7](https://arxiv.org/html/2509.24159v3#S3.E7 "Equation 7 ‣ 3.4 Practical implementation with mini-batch training ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"));

|

| 197 |

+

|

| 198 |

+

11

|

| 199 |

+

|

| 200 |

+

12 end for

|

| 201 |

+

|

| 202 |

+

13

|

| 203 |

+

|

| 204 |

+

14 end for

|

| 205 |

+

|

| 206 |

+

15

|

| 207 |

+

|

| 208 |

+

16 end for

|

| 209 |

+

|

| 210 |

+

Algorithm 1 Mini-batch Implementation of Robust Enhanced Policy Optimization (RE-PO)

|

| 211 |

+

|

| 212 |

+

### 3.4 Practical implementation with mini-batch training

|

| 213 |

+

|

| 214 |

+

While the exact M-step updates are clear, performing a full iteration over the entire dataset to re-calculate the annotator reliabilities 𝜼\boldsymbol{\eta} after each policy update step can be computationally expensive. To balance computational efficiency and performance, we introduce a more practical online update for η k\eta_{k} using an Exponential Moving Average (EMA). Instead of a hard assignment, we perform a soft update based on the statistics from the current mini-batch ℬ\mathcal{B}:

|

| 215 |

+

|

| 216 |

+

η k←(1−α)η k+α⋅∑i∈ℬ∩ℐ k w i N k,ℬ.\eta_{k}\leftarrow(1-\alpha)\eta_{k}+\alpha\cdot\frac{\sum_{i\in\mathcal{B}\cap\mathcal{I}_{k}}w_{i}}{N_{k,\mathcal{B}}}.(7)

|

| 217 |

+

|

| 218 |

+

Here, N k,ℬ N_{k,\mathcal{B}} is the number of examples from annotator k k in the current mini-batch, and α∈(0,1]\alpha\in(0,1] is a momentum hyperparameter. The complete training procedure for RE-PO is summarized in [Algorithm 1](https://arxiv.org/html/2509.24159v3#algorithm1 "In M-Step: weighted parameter update. ‣ 3.3 The RE-PO Algorithm via Expectation-Maximization ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"):

|

| 219 |

+

|

| 220 |

+

4 Theoretical analysis of RE-PO

|

| 221 |

+

-------------------------------

|

| 222 |

+

|

| 223 |

+

The robustness of RE-PO stems from its adaptive weighting mechanism. This section first provides an intuitive analysis of these training dynamics and then formalizes this intuition with theoretical guarantees, demonstrating that the RE-PO framework can recover the true reliability of annotators.

|

| 224 |

+

|

| 225 |

+

At the start of training, when the language model is not yet well-optimized, its predictions are uncertain, and the probabilities p(y w≻∗y l|x,θ)p(y_{w}\succ^{\ast}y_{l}|x,\theta) are close to 0.5. The confidence score w i w_{i} approximates the annotator’s reliability, η k i\eta_{k_{i}}. The loss then acts as a form of label smoothing, preventing the model from being severely misled by incorrect labels early on. As the policy improves, its behavior adapts. For a high-quality label, the model predicts a high probability for the winning response, and w i w_{i} approaches 1, causing the loss to function like a standard preference optimization objective. Conversely, w i w_{i} approaches 0 for a noisy label. The loss is then dominated by the (1−w i)(1-w_{i}) term, which flips the optimization direction toward the true preference.

|

| 226 |

+

|

| 227 |

+

We now formalize the intuition that RE-PO can recover the true reliability of annotators. We provide this analysis under an idealized setting: full-batch training where the M-step for the policy parameters θ\theta is assumed to have converged perfectly. While our practical implementation in [Algorithm 1](https://arxiv.org/html/2509.24159v3#algorithm1 "In M-Step: weighted parameter update. ‣ 3.3 The RE-PO Algorithm via Expectation-Maximization ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") uses mini-batch gradient updates (a form of Generalized EM), this idealized analysis provides a strong theoretical justification for our framework.

|

| 228 |

+

|

| 229 |

+

Consider the dataset level update rule in Eq.([6](https://arxiv.org/html/2509.24159v3#S3.E6 "Equation 6 ‣ M-Step: weighted parameter update. ‣ 3.3 The RE-PO Algorithm via Expectation-Maximization ‣ 3 Methodology ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")), defined as an operator T k(η)T_{k}(\eta). The following theorem establishes that iterating this operator guarantees convergence to the true annotator reliability.

|

| 230 |

+

|

| 231 |

+

###### Theorem 4.1(Identification and convergence of RE-PO).

|

| 232 |

+

|

| 233 |

+

Let θ⋆\theta^{\star} be a perfectly calibrated parameter such that the model distribution matches the ground-truth preference distribution. Assume that not all p i⋆=p(y w,i≻∗y l,i|x i)p_{i}^{\star}=p(y_{w,i}\succ^{*}y_{l,i}|x_{i}) equal 1 2\tfrac{1}{2} for i∈ℐ k i\in\mathcal{I}_{k}. Consider the sequence of reliability estimates {η k(t)}t≥0\{\eta_{k}^{(t)}\}_{t\geq 0} generated by the update rule η k(t+1)=T k(η k(t))\eta_{k}^{(t+1)}=T_{k}(\eta_{k}^{(t)}). Then, for any initialization η k(0)∈(0,1)\eta_{k}^{(0)}\in(0,1), the iterates converge to the true reliability η k∗≜𝔼[z i∣k i=k]\eta_{k}^{*}\triangleq\mathbb{E}[z_{i}\mid k_{i}=k]:

|

| 234 |

+

|

| 235 |

+

lim t→∞η k(t)=η k⋆.\lim_{t\to\infty}\eta_{k}^{(t)}=\eta_{k}^{\star}.

|

| 236 |

+

|

| 237 |

+

The proof is provided in Appendix[D](https://arxiv.org/html/2509.24159v3#A4 "Appendix D Proof of Theorem 4.1 ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"). In section[5.5](https://arxiv.org/html/2509.24159v3#S5.SS5 "5.5 Empirical verification of Theorem 4.1 ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), we empirically corroborate that the mini-batch procedure closely tracks this theoretical behavior.

|

| 238 |

+

|

| 239 |

+

#### Practical implications and limitations.

|

| 240 |

+

|

| 241 |

+

\rebuttal

|

| 242 |

+

|

| 243 |

+

The assumption of a perfectly calibrated model in Theorem[4.1](https://arxiv.org/html/2509.24159v3#S4.Thmtheorem1 "Theorem 4.1 (Identification and convergence of RE-PO). ‣ 4 Theoretical analysis of RE-PO ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") is intentionally idealized: in practice, we apply RE-PO to base models that are not exactly calibrated to the ground-truth preference distribution. In our experiments, we always start from strong instruction-tuned LLMs (Mistral-7B-Instruct-v0.2 and Meta-Llama-3-8B-Instruct), which already display good zero-shot preference behavior. Empirically, we do not observe the failure mode suggested by an extremely misaligned initialization: across the broad range of hyperparameters explored in Section[5.4](https://arxiv.org/html/2509.24159v3#S5.SS4 "5.4 Ablation study ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), the learned η k\eta_{k}’s remain stable and the downstream performance consistently improves over the corresponding base methods. Furthermore, the controlled experiments in Section[5.5](https://arxiv.org/html/2509.24159v3#S5.SS5 "5.5 Empirical verification of Theorem 4.1 ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), where we inject substantial synthetic noise into the data, show that RE-PO’s estimated reliabilities closely track the ground-truth values, suggesting robustness to imperfect calibration in practice. If the base LLM were initialized in a highly misaligned regime, the E-step could assign misleadingly high confidence to incorrect labels and RE-PO might fail to effectively denoise the supervision.

|

| 244 |

+

|

| 245 |

+

5 Experiments

|

| 246 |

+

-------------

|

| 247 |

+

|

| 248 |

+

In this section, we conduct a comprehensive set of experiments to evaluate the performance of RE-PO. We begin in section[5.1](https://arxiv.org/html/2509.24159v3#S5.SS1 "5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") by detailing our experimental setup, including the models, datasets, evaluation benchmarks, and baseline algorithms. In Section[5.2](https://arxiv.org/html/2509.24159v3#S5.SS2 "5.2 Main results ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), we present our main results.\rebuttal Section[5.3](https://arxiv.org/html/2509.24159v3#S5.SS3 "5.3 Multi-annotator experiments on MultiPref ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") reports additional experiments to evaluate RE-PO’s performance on realistic multi-annotator datasets. We then conduct an ablation study in Section[5.4](https://arxiv.org/html/2509.24159v3#S5.SS4 "5.4 Ablation study ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") to analyze the framework’s sensitivity to its key hyperparameters. In section[5.5](https://arxiv.org/html/2509.24159v3#S5.SS5 "5.5 Empirical verification of Theorem 4.1 ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), we provide an empirical verification of our theoretical claims of Theorem[4.1](https://arxiv.org/html/2509.24159v3#S4.Thmtheorem1 "Theorem 4.1 (Identification and convergence of RE-PO). ‣ 4 Theoretical analysis of RE-PO ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment").

|

| 249 |

+

|

| 250 |

+

### 5.1 Experimental setup

|

| 251 |

+

|

| 252 |

+

#### Models and training settings.

|

| 253 |

+

|

| 254 |

+

We use two state-of-the-art open-source large language models as our base models: Mistral-7B-Instruct-v0.2 and Meta-Llama-3-8B-Instruct. For fine-tuning, we utilize two datasets from the SimPO paper (meng2024simpo), which were generated via on-policy sampling using prompts from the UltraFeedback dataset (cui2024ultrafeedbackboostinglanguagemodels). The specific datasets are mistral-instruct-ultrafeedback for the Mistral model and llama3-ultrafeedback-armorm for the Llama-3 model.1 1 1 See Appendix[I](https://arxiv.org/html/2509.24159v3#A9 "Appendix I Resources ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") for links to models and datasets. As these datasets do not provide annotator-specific information, we model the preferences as if they originate from a single, virtual annotator (K=1 K=1).2 2 2 This is a reasonable simplification. For instance, a pool of two annotators with reliabilities η A\eta_{A} and η B\eta_{B}, appearing with frequencies p A p_{A} and p B p_{B} respectively, can be modeled as a single annotator with an effective reliability η unified=p Aη A+p Bη B\eta_{\text{unified}}=p_{A}\eta_{A}+p_{B}\eta_{B}.\rebuttal In addition to these UltraFeedback-based datasets, we further evaluate RE-PO on the real-world MultiPref multi-annotator preference dataset (miranda2024hybrid), where per-annotator reliabilities can be explicitly modeled (Section[5.3](https://arxiv.org/html/2509.24159v3#S5.SS3 "5.3 Multi-annotator experiments on MultiPref ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")).

|

| 255 |

+

|

| 256 |

+

#### Evaluation benchmarks.

|

| 257 |

+

|

| 258 |

+

We assess model performance on two widely recognized evaluation benchmarks. The first is AlpacaEval 2 (dubois2024length), an automatic, LLM-based evaluator that measures model performance by computing the win rate against reference outputs. It provides both a raw Win Rate (WR) and a Length-Controlled (LC) Win Rate to account for verbosity bias. The second is Arena-Hard (li2024crowdsourced), a challenging benchmark composed of difficult prompts crowdsourced from the LMSYS Chatbot Arena. It is designed to differentiate high-performing models by testing them on complex, real-world user queries. Performance is reported as the win rate against a suite of other models.

|

| 259 |

+

|

| 260 |

+

Table 2: \rebuttal Performance comparison on AlpacaEval 2 for Mistral-7B-Instruct-v0.2 and Meta-Llama-3-8B-Instruct fine-tuned on UltraFeedback-based preference datasets. Metrics reported are LC (Length-Controlled Win Rate) and WR (Raw Win Rate), both in percentage points. The table presents reference Baselines (bottom) alongside four algorithm families (DPO, IPO, SimPO, CPO). For each family, we compare the Standard implementation, the variant with Label Smoothing (w/ LS), and RE-PO (w/ RE-PO). Bold denotes the best result within each family for a given backbone.

|

| 261 |

+

|

| 262 |

+

Mistral-7B-Instruct Llama-3-8B-Instruct

|

| 263 |

+

Method Standard w/ LS w/ RE-PO Standard w/ LS w/ RE-PO

|

| 264 |

+

DPO 28.5 / 28.6 29.7 / 27.5 35.5 / 33.0 40.8 / 42.9 41.3 / 42.6 44.1 / 46.2

|

| 265 |

+

IPO 30.8 / 28.0 29.7 / 28.7 32.9 / 30.5 43.6 / 41.6 40.3 / 38.2 48.3 / 48.6

|

| 266 |

+

SimPO 28.3 / 29.7 26.5 / 27.1 30.4 / 32.9 44.5 / 37.1 48.1 / 38.7 46.9 / 39.4

|

| 267 |

+

CPO 26.3 / 26.4 28.5 / 28.8 27.6 / 27.8 35.9 / 40.3 35.3 / 34.8 40.1 / 43.8

|

| 268 |

+

Base Model 21.1 / 16.5 29.7 / 29.9

|

| 269 |

+

rDPO 28.1 / 29.1 37.3 / 35.4

|

| 270 |

+

Hölder-DPO 30.1 / 28.6 39.3 / 38.2

|

| 271 |

+

|

| 272 |

+

Table 3: \rebuttal Performance of DPO and RE-DPO on AlpacaEval 2 when trained on the MultiPref dataset (miranda2024hybrid). Results are reported as LC / WR (%) for Mistral-7B-Instruct-v0.2 and Meta-Llama-3-8B-Instruct.

|

| 273 |

+

|

| 274 |

+

Method Mistral-7B-Instruct Llama-3-8B-Instruct

|

| 275 |

+

DPO 28.8 / 26.4 36.7 / 39.3

|

| 276 |

+

RE-DPO (Ours)31.8 / 28.8 41.1 / 44.4

|

| 277 |

+

|

| 278 |

+

#### Baseline algorithms.

|

| 279 |

+

|

| 280 |

+

To demonstrate that RE-PO operates as a versatile meta-framework, we benchmark it against four popular direct preference alignment methods: DPO (rafailov2023direct); IPO (azar2023general), which uses a squared hinge loss to optimize preferences; SimPO (meng2024simpo), which proposes a simplified, reference-free reward formulation normalized by sequence length; and CPO (xu2024contrastive), which adds a term to directly maximize the likelihood of the preferred response. For each of these baselines, we compare the original algorithm to its RE-PO-enhanced counterpart (e.g., DPO vs. RE-DPO).\rebuttal In addition, we include robustness-oriented baselines rDPO (chowdhury2024provably) and Hölder-DPO (fujisawa2025scalable), as well as simple label-smoothing variants for each method. The results are shown in Table[2](https://arxiv.org/html/2509.24159v3#S5.T2 "Table 2 ‣ Evaluation benchmarks. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment").

|

| 281 |

+

|

| 282 |

+

Table 4: Ablation study on the initial annotator reliability (η 0\eta_{0}) and the EMA momentum (α\alpha).\rebuttal Results are reported for RE-DPO on Mistral-7B-Instruct-v0.2 trained on UltraFeedback-based data, evaluated on AlpacaEval 2 (LC / WR) and Arena-Hard (WR), all in percentage points. The best-performing settings used in our main experiments are highlighted.

|

| 283 |

+

|

| 284 |

+

Metric Initial 𝜼 𝟎\boldsymbol{\eta_{0}}EMA 𝜶\boldsymbol{\alpha}

|

| 285 |

+

0.99 0.9 (Ours)0.75 0.55 0.001 0.01 0.1 (Ours)0.5 1.0

|

| 286 |

+

AlpacaEval2 LC (%)30.9 35.5 31.1 31.4 30.9 30.1 35.5 33.4 31.1

|

| 287 |

+

AlpacaEval2 WR (%)31.7 33.0 33.3 32.0 27.8 27.2 33.0 34.8 28.9

|

| 288 |

+

Arena-Hard WR (%)12.3 14.7 12.4 11.8 12.9 13.6 14.7 14.0 12.8

|

| 289 |

+

|

| 290 |

+

### 5.2 Main results

|

| 291 |

+

|

| 292 |

+

As shown in Table[2](https://arxiv.org/html/2509.24159v3#S5.T2 "Table 2 ‣ Evaluation benchmarks. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), our experimental results provide strong evidence that RE-PO consistently improves preference-based alignment across objectives, model scales, and datasets. Below we highlight the main empirical findings.

|

| 293 |

+

|

| 294 |

+

#### RE-PO as a general framework.

|

| 295 |

+

|

| 296 |

+

A first observation is that RE-PO behaves as a generally effective “plug-in” robustness layer for a wide range of alignment losses. Across all four objective families (DPO, IPO, SimPO, CPO) and both backbones (Mistral-7B and Llama-3-8B), the RE-PO-enhanced variant either matches or strictly outperforms the corresponding standard implementation on AlpacaEval 2. For example, on Mistral-7B, RE-DPO improves LC / WR from 28.5/28.6 28.5/28.6 to 35.5/33.0 35.5/33.0 (a gain of +7.0+7.0 and +4.4+4.4 points, respectively), and on Llama-3-8B, RE-IPO improves LC / WR from 43.6/41.6 43.6/41.6 to 48.3/48.6 48.3/48.6 (a gain of +4.7+4.7 and +7.0+7.0 points, respectively). These trends hold across all four families, indicating that RE-PO reliably strengthens existing preference objectives rather than competing with them.

|

| 297 |

+

|

| 298 |

+

#### Comparison with label smoothing and robust baselines.

|

| 299 |

+

|

| 300 |

+

\rebuttal

|

| 301 |

+

|

| 302 |

+

Table[2](https://arxiv.org/html/2509.24159v3#S5.T2 "Table 2 ‣ Evaluation benchmarks. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") also compares RE-PO to two natural robustness baselines: label smoothing applied to each preference loss and the recently proposed robust objectives rDPO(chowdhury2024provably) and HöldeRE-DPO(fujisawa2025scalable). Label smoothing sometimes yields modest gains over the standard objective (e.g., SimPO w/ LS on Llama-3-8B improves LC from 44.5 44.5 to 48.1 48.1), but RE-PO typically achieves the best performance within each family and backbone. For instance, in the DPO family, RE-DPO outperforms both label smoothing and the specialized robust baselines: on Llama-3-8B, RE-DPO reaches 44.1/46.2 44.1/46.2 on AlpacaEval 2, compared to 41.3/42.6 41.3/42.6 for DPO w/ LS, 37.3/35.4 37.3/35.4 for rDPO, and 39.3/38.2 39.3/38.2 for Hölder-DPO. These results suggest that explicitly modeling noisy supervision via RE-PO is more effective than purely loss-level modifications or global noise-correction schemes.

|

| 303 |

+

|

| 304 |

+

#### Qualitative analysis of noisy labels.

|

| 305 |

+

|

| 306 |

+

\rebuttal

|

| 307 |

+

|

| 308 |

+

Beyond aggregate metrics, we also perform a qualitative analysis of the learned confidence scores. In Appendix[F](https://arxiv.org/html/2509.24159v3#A6 "Appendix F \rebuttalQualitative Analysis of Noisy Preference Label ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), we present case studies of preference pairs with very low posterior confidence w i w_{i}. RE-PO assigns low confidence to annotations that are off-task, inconsistent with the prompt, or at odds with a more plausible alternative response. Together with the quantitative gains in Tables[2](https://arxiv.org/html/2509.24159v3#S5.T2 "Table 2 ‣ Evaluation benchmarks. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), these examples illustrate that RE-PO not only improves benchmark performance but also identifies and down-weights noisy supervision at the example level.

|

| 309 |

+

|

| 310 |

+

### 5.3 Multi-annotator experiments on MultiPref

|

| 311 |

+

|

| 312 |

+

\rebuttal

|

| 313 |

+

|

| 314 |

+

To further evaluate RE-PO under realistic multi-annotator disagreement, we conduct additional experiments on the MultiPref dataset (miranda2024hybrid), a large-scale human preference dataset with genuine rater disagreement. The official training split contains 227 unique human annotators. Unlike the UltraFeedback-based datasets used in our main experiments, MultiPref provides annotator identifiers, allowing us to instantiate an individual reliability parameter η k\eta_{k} for each annotator and to update these parameters via our EM-style scheme.

|

| 315 |

+

|

| 316 |

+

We train vanilla DPO and our RE-DPO on MultiPref for both Mistral-7B-Instruct-v0.2 and Meta-Llama-3-8B-Instruct, and evaluate the resulting models on AlpacaEval 2. As summarized in Table[3](https://arxiv.org/html/2509.24159v3#S5.T3 "Table 3 ‣ Evaluation benchmarks. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), RE-DPO consistently outperforms vanilla DPO under this multi-annotator setup: for Llama-3-8B, the AlpacaEval LC improves from 36.7 to 41.1 and WR from 39.3 to 44.4; for Mistral-7B, LC improves from 28.8 to 31.8 and WR from 26.4 to 28.8. These gains mirror the trends observed in our UltraFeedback experiments and show that RE-PO remains beneficial when trained on data with heterogeneous, potentially noisy annotators, rather than a single virtual annotator.

|

| 317 |

+

|

| 318 |

+

In Appendix[E](https://arxiv.org/html/2509.24159v3#A5 "Appendix E \rebuttalVisualization of annotator reliability ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), we visualize the learned annotator reliabilities distributions on MultiPref. Experiment results indicate that RE-PO identifies a high-reliability majority and a nontrivial tail of downweighted annotators, and that this pattern is robust across different prior settings and backbones. Moreover, to probe the impact of the choice of automatic judge, we repeat the MultiPref evaluation using a different LLM evaluator; Appendix[G](https://arxiv.org/html/2509.24159v3#A7 "Appendix G \rebuttalAdditional results on MultiPref ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment") reports these results and shows that the performance gains from RE-DPO are stable across judge models.

|

| 319 |

+

|

| 320 |

+

### 5.4 Ablation study

|

| 321 |

+

|

| 322 |

+

We conduct ablation studies to analyze the sensitivity of RE-PO to two key hyperparameters: the initial annotator reliability η 0\eta_{0}, and the EMA momentum parameter α\alpha. All experiments are performed using RE-DPO on the Mistral-7B-Instruct-v0.2 model. The results are summarized in Table[4](https://arxiv.org/html/2509.24159v3#S5.T4 "Table 4 ‣ Baseline algorithms. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment").

|

| 323 |

+

|

| 324 |

+

|

| 325 |

+

|

| 326 |

+

(a)Single-annotator setting.

|

| 327 |

+

|

| 328 |

+

|

| 329 |

+

|

| 330 |

+

(b)Two-annotator setting.

|

| 331 |

+

|

| 332 |

+

Figure 2: Empirical verification of annotator reliability estimation under controlled synthetic noise. Ground-truth reliability (η\eta GPT-4o) is established using GPT-4o’s labels on UltraFeedback-derived preference pairs, and different reliability levels are simulated by injecting synthetic noise into copies of the dataset. In the single-annotator setting (a), a single annotator’s dataset is perturbed with varying noise rates. In the two-annotator setting (b), Annotator 1 uses the original data with no added noise, while noise is progressively added to Annotator 2’s data. The plots compare ground-truth reliabilities (solid lines) with RE-PO-estimated reliabilities (dashed lines), showing that RE-PO closely tracks the true reliability in both scenarios.

|

| 333 |

+

|

| 334 |

+

#### Effect of initial η 0\eta_{0}.

|

| 335 |

+

|

| 336 |

+

The initial reliability η 0\eta_{0} sets the model’s prior belief about the correctness of the labels in the dataset. As shown in Table[4](https://arxiv.org/html/2509.24159v3#S5.T4 "Table 4 ‣ Baseline algorithms. ‣ 5.1 Experimental setup ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"), the model’s performance is best when η 0\eta_{0} is set to 0.9, which was the value used in our main experiments. An overly optimistic initialization (e.g., η 0=0.99\eta_{0}=0.99) can cause the model to trust noisy labels too strongly at the beginning of training, hindering the denoising process. Conversely, a pessimistic initialization (e.g., η 0=0.55\eta_{0}=0.55) treats the data as highly unreliable from the outset, which can slow down the model’s ability to learn the underlying noise-free preference. An initial value of 0.9 appears to strike the right balance, starting with a reasonable assumption of data quality.

|

| 337 |

+

|

| 338 |

+

#### Effect of EMA parameter α\alpha.

|

| 339 |

+

|

| 340 |

+

The EMA parameter α\alpha governs the update rate of the annotator reliability scores, balancing the influence of historical estimates against new information from the current mini-batch. Our experiments confirm that the optimal performance is achieved with α=0.1\alpha=0.1. The model shows considerable sensitivity to this parameter. A very small α\alpha (e.g., 0.001) makes the reliability updates exceedingly slow, preventing the estimates from adapting to the model’s evolving understanding of the data. On the other hand, a very large α\alpha (e.g., 1.0) makes the updates highly volatile, as the reliability score becomes dependent solely on the samples in the current mini-batch.

|

| 341 |

+

|

| 342 |

+

### 5.5 Empirical verification of Theorem[4.1](https://arxiv.org/html/2509.24159v3#S4.Thmtheorem1 "Theorem 4.1 (Identification and convergence of RE-PO). ‣ 4 Theoretical analysis of RE-PO ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment")

|

| 343 |

+

|

| 344 |

+

We conduct controlled experiments to verify Theorem[4.1](https://arxiv.org/html/2509.24159v3#S4.Thmtheorem1 "Theorem 4.1 (Identification and convergence of RE-PO). ‣ 4 Theoretical analysis of RE-PO ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"). Our setup is designed to align with the theorem’s assumption of a perfectly calibrated model, for which we use a small-scale base model, Qwen2.5-0.5B-Instruct, to ensure fast convergence. To simulate annotators with varying levels of reliability, we create distinct copies of the UltraFeedback dataset (cui2024ultrafeedbackboostinglanguagemodels) for each annotator and inject a controlled degree of synthetic noise into their respective dataset.

|

| 345 |

+

|

| 346 |

+

We test two scenarios, with results presented in Figure[2](https://arxiv.org/html/2509.24159v3#S5.F2 "Figure 2 ‣ 5.4 Ablation study ‣ 5 Experiments ‣ RE-PO: Robust Enhanced Policy Optimization as a General Framework for LLM Alignment"): (a) Single Annotator: A single annotator whose dataset is modified with a synthetically controlled noise rate. (b) Two Annotators: A scenario with two annotators, where Annotator 1 serves as a baseline using the original data without added noise, while the dataset for Annotator 2 is injected with progressively increasing noise levels.

|

| 347 |

+

|

| 348 |

+