Add 1 files

Browse files- 2412/2412.07207.md +488 -0

2412/2412.07207.md

ADDED

|

@@ -0,0 +1,488 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

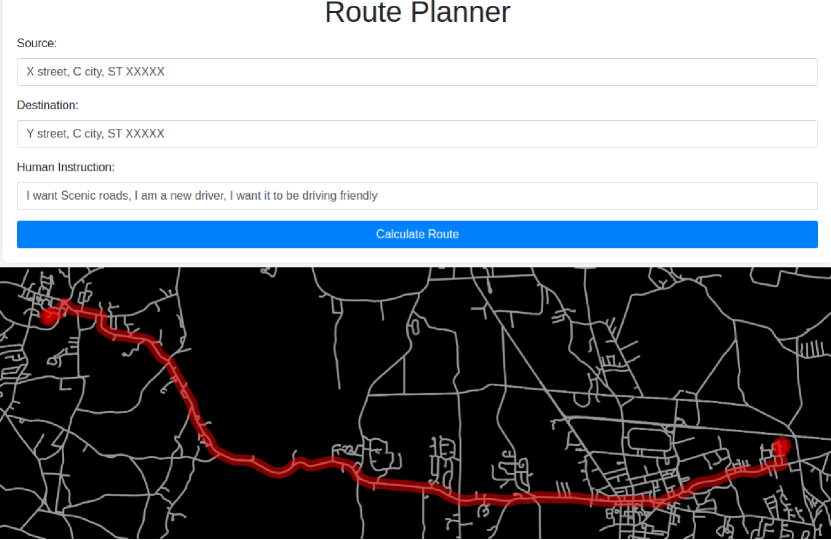

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Title: A Framework for Active Preference Learning Guided by Large Language Models

|

| 2 |

+

|

| 3 |

+

URL Source: https://arxiv.org/html/2412.07207

|

| 4 |

+

|

| 5 |

+

Published Time: Mon, 23 Dec 2024 01:15:03 GMT

|

| 6 |

+

|

| 7 |

+

Markdown Content:

|

| 8 |

+

###### Abstract

|

| 9 |

+

|

| 10 |

+

The advent of large language models (LLMs) has sparked significant interest in using natural language for preference learning. However, existing methods often suffer from high computational burdens, taxing human supervision, and lack of interpretability. To address these issues, we introduce MAPLE, a framework for large language model-guided Bayesian active preference learning. MAPLE leverages LLMs to model the distribution over preference functions, conditioning it on both natural language feedback and conventional preference learning feedback, such as pairwise trajectory rankings. MAPLE also employs active learning to systematically reduce uncertainty in this distribution and incorporates a language-conditioned active query selection mechanism to identify informative and easy-to-answer queries, thus reducing human burden. We evaluate MAPLE’s sample efficiency and preference inference quality across two benchmarks, including a real-world vehicle route planning benchmark using OpenStreetMap data. Our results demonstrate that MAPLE accelerates the learning process and effectively improves humans’ ability to answer queries.

|

| 11 |

+

|

| 12 |

+

Introduction

|

| 13 |

+

------------

|

| 14 |

+

|

| 15 |

+

Following significant advancements in artificial intelligence, autonomous agents are increasingly being deployed in real-world applications to tackle complex tasks(Zilberstein [2015](https://arxiv.org/html/2412.07207v2#bib.bib51); Dietterich [2017](https://arxiv.org/html/2412.07207v2#bib.bib16)). A prominent method for efficiently aligning these agents with human preferences is Active Learning from Demonstration (Active LfD)(Biyik [2022](https://arxiv.org/html/2412.07207v2#bib.bib4)). Preference-based Active LfD, a variant of LfD, aims to infer a preference function from human-generated rankings over a set of observed behaviors using a Bayesian active learning approach.

|

| 16 |

+

|

| 17 |

+

Recent advancements in natural language processing have inspired many researchers to leverage language-based abstraction for learning human preferences(Soni et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib40); Guan, Sreedharan, and Kambhampati [2022](https://arxiv.org/html/2412.07207v2#bib.bib18)). This approach offers a more flexible and interpretable way to learn preferences compared to conventional methods(Sadigh et al. [2017](https://arxiv.org/html/2412.07207v2#bib.bib38); Brown, Goo, and Niekum [2019](https://arxiv.org/html/2412.07207v2#bib.bib8); Brown et al. [2019](https://arxiv.org/html/2412.07207v2#bib.bib9)). More recent work(Yu et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib47); Ma et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib30)) has focused on utilizing large language models (LLMs), such as ChatGPT(Achiam et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib2)), with prompting-based approaches to learn preferences from natural language instructions. However, these methods often require significant computational resources and taxing human supervision, as they lack a systematic querying approach.

|

| 18 |

+

|

| 19 |

+

To tackle these challenges, we introduce a novel framework—MAPLE (M odel-guided A ctive P reference Le arning). MAPLE begins by interpreting natural language instructions from humans and utilizes large language models (LLMs) to estimate a distribution over preference functions. It then applies an active learning approach to systematically reduce uncertainty about the correct preference function. This is achieved through standard Bayesian posterior updates, conditioned on both conventional preference learning feedback, such as pairwise trajectory rankings, and linguistic feedback such as clarification or explanations of the cause behind the preference. To further ease human effort, MAPLE incorporates a language-conditioned active query selection mechanism that leverages feedback on the difficulty of previous queries to choose future queries that are both informative and easy to answer. MAPLE represents preference functions as a linear combination of abstract language concepts, providing a modular structure that enables the framework to acquire new concepts over time and enhance sample efficiency for future instructions. Moreover, this interpretable structure allows for human auditing of the learning process, facilitating human-guided validation before applying the preference function to optimize behavior.

|

| 20 |

+

|

| 21 |

+

In our experiments, we evaluate the efficacy of MAPLE in terms of sample efficiency during learning, as well as the quality of the final preference function. We use an environment based on the popular Minigrid(Chevalier-Boisvert et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib14)) and introduce a new realistic vehicle routing benchmark based on OpenStreetMap (OpenStreetMap Contributors [2017](https://arxiv.org/html/2412.07207v2#bib.bib33)) data, which includes text descriptions of the road network of different cities in the USA. Our evaluation shows the effectiveness of MAPLE in preference inference and improving human’s ability to answer queries. Our contributions are threefold:

|

| 22 |

+

|

| 23 |

+

* •We propose a Bayesian preference learning framework that leverages LLMs and natural language explanations to reduce uncertainty over preference functions.

|

| 24 |

+

* •We provide a language-conditioned active query selection approach to reduce human burden.

|

| 25 |

+

* •We conduct extensive evaluations, including the design of a realistic new benchmark that can be used for future research in this area.

|

| 26 |

+

|

| 27 |

+

Related Work

|

| 28 |

+

------------

|

| 29 |

+

|

| 30 |

+

#### Learning from demonstration

|

| 31 |

+

|

| 32 |

+

Most Learning from Demonstration (LfD) algorithms learn a reward function using expert trajectories(Ng and Russell [2000](https://arxiv.org/html/2412.07207v2#bib.bib32); Abbeel and Ng [2004](https://arxiv.org/html/2412.07207v2#bib.bib1); Ziebart et al. [2008](https://arxiv.org/html/2412.07207v2#bib.bib50)). Some of these approaches utilize a Bayesian framework to learn the reward or preference function(Ramachandran and Amir [2007](https://arxiv.org/html/2412.07207v2#bib.bib37); Brown et al. [2020](https://arxiv.org/html/2412.07207v2#bib.bib10); Mahmud, Saisubramanian, and Zilberstein [2023](https://arxiv.org/html/2412.07207v2#bib.bib31)), and some pair it with active learning to reduce the number of human queries(Sadigh et al. [2017](https://arxiv.org/html/2412.07207v2#bib.bib38); Basu, Singhal, and Dragan [2018](https://arxiv.org/html/2412.07207v2#bib.bib3); Biyik [2022](https://arxiv.org/html/2412.07207v2#bib.bib4)). However, these methods are unable to utilize natural language abstraction, whereas our method can use both. In addition, we employ language-conditioned active learning to reduce user burden, an approach not previously explored in this context.

|

| 33 |

+

|

| 34 |

+

#### Natural language in intention communication

|

| 35 |

+

|

| 36 |

+

With the advent of natural language processing, several works have focused on directly communicating abstract concepts to agents(Tevet et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib42); Guo et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib21); Wang et al. [2024](https://arxiv.org/html/2412.07207v2#bib.bib45); Sontakke et al. [2024](https://arxiv.org/html/2412.07207v2#bib.bib41); Lin et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib26); Tien et al. [2024](https://arxiv.org/html/2412.07207v2#bib.bib44); Lou et al. [2024](https://arxiv.org/html/2412.07207v2#bib.bib27)). The key difference is that these works directly condition behavior on natural language, whereas we learn a language-abstracted preference function. This approach offers several advantages, including increased transparency, a more fine-grained trade-off between concepts, and enhanced transferability. The work most closely related to ours is (Lin et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib26)), which infers rewards from language but restricts them to step-wise decision-making.

|

| 37 |

+

|

| 38 |

+

Other lines of work(Yu et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib47); Ma et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib30)) aim to learn reward functions directly by prompting LLMs. However, these methods are limited by the variables available in the coding space and often struggle with identifying temporally extended abstract behaviors. Further, these approaches can not utilize conventional preference feedback, whereas MAPLE can utilize both. Additionally, they either lack a systematic way of acquiring human feedback or rely on data-hungry evolutionary algorithms. In contrast, our approach employs more efficient Bayesian active learning.

|

| 39 |

+

|

| 40 |

+

#### Abstraction in reward learning

|

| 41 |

+

|

| 42 |

+

Several works leverage abstract concepts to learn reward functions(Lyu et al. [2019](https://arxiv.org/html/2412.07207v2#bib.bib29); Illanes et al. [2020](https://arxiv.org/html/2412.07207v2#bib.bib23); Icarte et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib22); Guan, Valmeekam, and Kambhampati [2022](https://arxiv.org/html/2412.07207v2#bib.bib19); Soni et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib40); Bobu et al. [2021](https://arxiv.org/html/2412.07207v2#bib.bib6); Guan et al. [2021](https://arxiv.org/html/2412.07207v2#bib.bib20); Guan, Sreedharan, and Kambhampati [2022](https://arxiv.org/html/2412.07207v2#bib.bib18); Silver et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib39); Zhang et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib48); Bucker et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib12); Cui et al. [2023](https://arxiv.org/html/2412.07207v2#bib.bib15)). Two methods closely related to our work are PRESCA(Soni et al. [2022](https://arxiv.org/html/2412.07207v2#bib.bib40)) and RBA(Guan, Valmeekam, and Kambhampati [2022](https://arxiv.org/html/2412.07207v2#bib.bib19)). PRESCA learns state-based abstract concepts to be avoided, while RBA learns temporally extended concepts with two variants: global (eliciting preference weights directly from humans) and local (tuning weights using binary search). Our approach also leverages temporally extended concepts but learns preference functions from natural language feedback using active learning. Unlike RBA, which relies on direct preference weights from humans or binary search, our method uses LLM-guided active learning for more expressive and informative preference elicitation, thereby reducing human effort.

|

| 43 |

+

|

| 44 |

+

Some works use offline behavior datasets or demonstrations to learn diverse skills(Lee and Popović [2010](https://arxiv.org/html/2412.07207v2#bib.bib24); Wang et al. [2017](https://arxiv.org/html/2412.07207v2#bib.bib46); Zhou and Dragan [2018](https://arxiv.org/html/2412.07207v2#bib.bib49); Peng et al. [2018](https://arxiv.org/html/2412.07207v2#bib.bib34); Luo et al. [2020](https://arxiv.org/html/2412.07207v2#bib.bib28); Chebotar et al. [2021](https://arxiv.org/html/2412.07207v2#bib.bib13); Peng et al. [2021](https://arxiv.org/html/2412.07207v2#bib.bib35)), which complement our approach. While MAPLE can also utilize such datasets in pre-training, the focus of MAPLE is to encode human preference in terms of these concepts using natural language.

|

| 45 |

+

|

| 46 |

+

#### Alignment auditing

|

| 47 |

+

|

| 48 |

+

Alignment auditing ensures that an agent’s behavior aligns with human intentions by verifying that the agent has learned the correct preference function. While some works focus on alignment verification with minimal queries(Brown, Schneider, and Niekum [2021](https://arxiv.org/html/2412.07207v2#bib.bib11)), they often rely on function weights, value weights, or trajectory rankings, which are difficult to interpret. In contrast, our approach leverages natural language to communicate with humans, facilitating validation and serving as a stopping criterion for the active learning process. Mahmud, Saisubramanian, and Zilberstein ([2023](https://arxiv.org/html/2412.07207v2#bib.bib31)) presents a notable alignment auditing approach related to our method, using explanations to detect misalignment and update distributions over preferences. While they employ a feature attribution method, we use natural language explanations. Additionally, they use human-selected or randomly sampled data points from an offline dataset for auditing, whereas we employ active learning to enhance efficiency.

|

| 49 |

+

|

| 50 |

+

#### Active learning

|

| 51 |

+

|

| 52 |

+

Previous works have explored different acquisition functions for active learning, typically focusing on selecting queries that maximize certain uncertainty quantization metrics. These metrics include predictive entropy(Gal and Ghahramani [2016](https://arxiv.org/html/2412.07207v2#bib.bib17)), uncertainty volume reduction(Sadigh et al. [2017](https://arxiv.org/html/2412.07207v2#bib.bib38)), mutual information maximization(Biyik et al. [2019](https://arxiv.org/html/2412.07207v2#bib.bib5)), and maximizing variation ratios(Gal and Ghahramani [2016](https://arxiv.org/html/2412.07207v2#bib.bib17)). Our approach complements these methods by integrating language-conditioned query selection to reduce user burden. While any of these methods can be paired with MAPLE, we opt for variation ratio due to its ease of calculation and high effectiveness.

|

| 53 |

+

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

Figure 1: Application of MAPLE to the Natural Language Vehicle Routing Task.

|

| 57 |

+

|

| 58 |

+

Background

|

| 59 |

+

----------

|

| 60 |

+

|

| 61 |

+

#### Markov decision process (MDP)

|

| 62 |

+

|

| 63 |

+

A Markov Decision Process (MDP) M 𝑀 M italic_M is represented by the tuple M=(S,A,T,S 0,R,γ)𝑀 𝑆 𝐴 𝑇 subscript 𝑆 0 𝑅 𝛾 M=(S,A,T,S_{0},R,\gamma)italic_M = ( italic_S , italic_A , italic_T , italic_S start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT , italic_R , italic_γ ), where S 𝑆 S italic_S is the set of states, A 𝐴 A italic_A is the set of actions, T:S×A×S→[0,1]:𝑇→𝑆 𝐴 𝑆 0 1 T:S\times A\times S\rightarrow[0,1]italic_T : italic_S × italic_A × italic_S → [ 0 , 1 ] is the transition function, S 0 subscript 𝑆 0 S_{0}italic_S start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT is the initial state distribution, and γ∈[0,1)𝛾 0 1\gamma\in[0,1)italic_γ ∈ [ 0 , 1 ) is the discount factor. A history h t subscript ℎ 𝑡 h_{t}italic_h start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT is a sequence of states up to time t 𝑡 t italic_t, (s 0,…,s t)subscript 𝑠 0…subscript 𝑠 𝑡(s_{0},\dots,s_{t})( italic_s start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT , … , italic_s start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ). The reward function R:H×A→[−R max,R max]:𝑅→𝐻 𝐴 subscript 𝑅 max subscript 𝑅 max R:H\times A\rightarrow[-R_{\text{max}},R_{\text{max}}]italic_R : italic_H × italic_A → [ - italic_R start_POSTSUBSCRIPT max end_POSTSUBSCRIPT , italic_R start_POSTSUBSCRIPT max end_POSTSUBSCRIPT ] maps histories and actions to rewards. For some problems, a goal function G:H→[0,1]:𝐺→𝐻 0 1 G:H\rightarrow[0,1]italic_G : italic_H → [ 0 , 1 ] is provided that maps histories to goal achievements. In such problems, the reward function is typically R:H×A→[−R max,0]:𝑅→𝐻 𝐴 subscript 𝑅 max 0 R:H\times A\rightarrow[-R_{\text{max}},0]italic_R : italic_H × italic_A → [ - italic_R start_POSTSUBSCRIPT max end_POSTSUBSCRIPT , 0 ] and ∀a∈A for-all 𝑎 𝐴\forall a\in A∀ italic_a ∈ italic_A, T(s g,a,s g)=1 𝑇 subscript 𝑠 𝑔 𝑎 subscript 𝑠 𝑔 1 T(s_{g},a,s_{g})=1 italic_T ( italic_s start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT , italic_a , italic_s start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT ) = 1 and R(h t∪s g,a)=0 𝑅 subscript ℎ 𝑡 subscript 𝑠 𝑔 𝑎 0 R(h_{t}\cup s_{g},a)=0 italic_R ( italic_h start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT ∪ italic_s start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT , italic_a ) = 0 given the final state s g∈h t subscript 𝑠 𝑔 subscript ℎ 𝑡 s_{g}\in h_{t}italic_s start_POSTSUBSCRIPT italic_g end_POSTSUBSCRIPT ∈ italic_h start_POSTSUBSCRIPT italic_t end_POSTSUBSCRIPT. A policy π:H×A→[0,1]:𝜋→𝐻 𝐴 0 1\pi:H\times A\rightarrow[0,1]italic_π : italic_H × italic_A → [ 0 , 1 ] is a mapping from histories to a distribution over actions. The policy π 𝜋\pi italic_π induces a value function V π:S→ℝ:superscript 𝑉 𝜋→𝑆 ℝ V^{\pi}:S\rightarrow\mathbb{R}italic_V start_POSTSUPERSCRIPT italic_π end_POSTSUPERSCRIPT : italic_S → blackboard_R, which represents the expected cumulative return V π(s)superscript 𝑉 𝜋 𝑠 V^{\pi}(s)italic_V start_POSTSUPERSCRIPT italic_π end_POSTSUPERSCRIPT ( italic_s ) that the agent can achieve from state s 𝑠 s italic_s when following policy π 𝜋\pi italic_π. An optimal policy π∗superscript 𝜋\pi^{*}italic_π start_POSTSUPERSCRIPT ∗ end_POSTSUPERSCRIPT maximizes the expected cumulative return V∗(s)superscript 𝑉 𝑠 V^{*}(s)italic_V start_POSTSUPERSCRIPT ∗ end_POSTSUPERSCRIPT ( italic_s ) from any state s 𝑠 s italic_s, particularly from the initial state s 0 subscript 𝑠 0 s_{0}italic_s start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT.

|

| 64 |

+

|

| 65 |

+

#### Bayesian preference learning

|

| 66 |

+

|

| 67 |

+

A preference function ω 𝜔\omega italic_ω maps a trajectory τ 𝜏\tau italic_τ to a real number reflecting the alignment of the trajectory with the human’s objective. The goal of preference learning is to infer this function from various types of human feedback. A common approach involves learning this function from a pairwise preference dataset, denoted by 𝒟={(τ 1 1≻τ 1 2),(τ 2 1≻τ 2 2),…,(τ n 1≻τ n 2)}𝒟 succeeds subscript superscript 𝜏 1 1 subscript superscript 𝜏 2 1 succeeds subscript superscript 𝜏 1 2 subscript superscript 𝜏 2 2…succeeds subscript superscript 𝜏 1 𝑛 subscript superscript 𝜏 2 𝑛\mathcal{D}=\{(\tau^{1}_{1}\succ\tau^{2}_{1}),(\tau^{1}_{2}\succ\tau^{2}_{2}),% \ldots,(\tau^{1}_{n}\succ\tau^{2}_{n})\}caligraphic_D = { ( italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT ≻ italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT ) , ( italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT ≻ italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT ) , … , ( italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_n end_POSTSUBSCRIPT ≻ italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_n end_POSTSUBSCRIPT ) }, where τ i 1 subscript superscript 𝜏 1 𝑖\tau^{1}_{i}italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT and τ i 2 subscript superscript 𝜏 2 𝑖\tau^{2}_{i}italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT are two different trajectories, and τ i 1≻τ i 2 succeeds subscript superscript 𝜏 1 𝑖 subscript superscript 𝜏 2 𝑖\tau^{1}_{i}\succ\tau^{2}_{i}italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ≻ italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT indicates that τ i 1 subscript superscript 𝜏 1 𝑖\tau^{1}_{i}italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT is preferred to τ i 2 subscript superscript 𝜏 2 𝑖\tau^{2}_{i}italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT. A Bayesian framework for preference learning, as described in Ramachandran and Amir ([2007](https://arxiv.org/html/2412.07207v2#bib.bib37)), defines a probability distribution over preference functions given a trajectory dataset 𝒟 𝒟\mathcal{D}caligraphic_D using Bayes’ rule: P(ω∣𝒟)∝P(𝒟∣ω)P(ω)proportional-to 𝑃 conditional 𝜔 𝒟 𝑃 conditional 𝒟 𝜔 𝑃 𝜔 P(\omega\mid\mathcal{D})\propto P(\mathcal{D}\mid\omega)P(\omega)italic_P ( italic_ω ∣ caligraphic_D ) ∝ italic_P ( caligraphic_D ∣ italic_ω ) italic_P ( italic_ω ). Various algorithms define P(𝒟∣ω)𝑃 conditional 𝒟 𝜔 P(\mathcal{D}\mid\omega)italic_P ( caligraphic_D ∣ italic_ω ) differently, but we adopt the definition from BREX (Brown et al. [2020](https://arxiv.org/html/2412.07207v2#bib.bib10)) using the Bradley–Terry model(Bradley and Terry [1952](https://arxiv.org/html/2412.07207v2#bib.bib7)):

|

| 68 |

+

|

| 69 |

+

P(𝒟∣ω)=∏(τ i 1≻τ i 2)∈𝒟 e βω(τ i 1)e βω(τ i 1)+e βω(τ i 2).𝑃 conditional 𝒟 𝜔 subscript product succeeds subscript superscript 𝜏 1 𝑖 subscript superscript 𝜏 2 𝑖 𝒟 superscript 𝑒 𝛽 𝜔 subscript superscript 𝜏 1 𝑖 superscript 𝑒 𝛽 𝜔 subscript superscript 𝜏 1 𝑖 superscript 𝑒 𝛽 𝜔 subscript superscript 𝜏 2 𝑖 P(\mathcal{D}\mid\omega)=\prod_{(\tau^{1}_{i}\succ\tau^{2}_{i})\in\mathcal{D}}% \frac{e^{\beta\omega(\tau^{1}_{i})}}{e^{\beta\omega(\tau^{1}_{i})}+e^{\beta% \omega(\tau^{2}_{i})}}.italic_P ( caligraphic_D ∣ italic_ω ) = ∏ start_POSTSUBSCRIPT ( italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ≻ italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) ∈ caligraphic_D end_POSTSUBSCRIPT divide start_ARG italic_e start_POSTSUPERSCRIPT italic_β italic_ω ( italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) end_POSTSUPERSCRIPT end_ARG start_ARG italic_e start_POSTSUPERSCRIPT italic_β italic_ω ( italic_τ start_POSTSUPERSCRIPT 1 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) end_POSTSUPERSCRIPT + italic_e start_POSTSUPERSCRIPT italic_β italic_ω ( italic_τ start_POSTSUPERSCRIPT 2 end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) end_POSTSUPERSCRIPT end_ARG .(1)

|

| 70 |

+

|

| 71 |

+

Here, β∈[0,∞)𝛽 0\beta\in[0,\infty)italic_β ∈ [ 0 , ∞ ) is the inverse-temperature parameter.

|

| 72 |

+

|

| 73 |

+

#### Variance ratio

|

| 74 |

+

|

| 75 |

+

Given a conditional probability distribution P(⋅∣X)P(\cdot\mid X)italic_P ( ⋅ ∣ italic_X ) over {y i}i=0 k superscript subscript subscript 𝑦 𝑖 𝑖 0 𝑘\{y_{i}\}_{i=0}^{k}{ italic_y start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT } start_POSTSUBSCRIPT italic_i = 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_k end_POSTSUPERSCRIPT, the variance ratio of an input X 𝑋 X italic_X is defined as follows:

|

| 76 |

+

|

| 77 |

+

Variance_Ratio(X)=1−argmax y iP(y i∣X)Variance_Ratio 𝑋 1 subscript arg max subscript 𝑦 𝑖 𝑃 conditional subscript 𝑦 𝑖 𝑋\text{Variance\_Ratio}(X)=1-\operatorname*{arg\,max}_{y_{i}}P(y_{i}\mid X)Variance_Ratio ( italic_X ) = 1 - start_OPERATOR roman_arg roman_max end_OPERATOR start_POSTSUBSCRIPT italic_y start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT italic_P ( italic_y start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ∣ italic_X )

|

| 78 |

+

|

| 79 |

+

Problem Formulation

|

| 80 |

+

-------------------

|

| 81 |

+

|

| 82 |

+

#### MAPLE

|

| 83 |

+

|

| 84 |

+

We define a MAPLE problem instance as the tuple (M−R,C,Ω,D τ,ℋ,𝕃)subscript 𝑀 𝑅 𝐶 Ω subscript 𝐷 𝜏 ℋ 𝕃(M_{-R},C,\Omega,D_{\tau},\mathcal{H},\mathbb{L})( italic_M start_POSTSUBSCRIPT - italic_R end_POSTSUBSCRIPT , italic_C , roman_Ω , italic_D start_POSTSUBSCRIPT italic_τ end_POSTSUBSCRIPT , caligraphic_H , blackboard_L ), where:

|

| 85 |

+

|

| 86 |

+

* •M−R subscript 𝑀 𝑅 M_{-R}italic_M start_POSTSUBSCRIPT - italic_R end_POSTSUBSCRIPT is an MDP with an undefined reward function R 𝑅 R italic_R.

|

| 87 |

+

* •ℋ ℋ\mathcal{H}caligraphic_H is the human interaction function that acts as the interface between the human and the MAPLE framework. Humans provide their feedback, preferences, and explanations in response to natural language queries posed by MAPLE.

|

| 88 |

+

* •𝕃 𝕃\mathbb{L}blackboard_L is the LLM interaction function that generates natural language queries to the LLM and returns structured output in text files, such as JSON format.

|

| 89 |

+

* •C 𝐶 C italic_C is an expanding set of natural language concepts {c 1,c 2,…,c n}subscript 𝑐 1 subscript 𝑐 2…subscript 𝑐 𝑛\{c_{1},c_{2},\ldots,c_{n}\}{ italic_c start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT , italic_c start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT , … , italic_c start_POSTSUBSCRIPT italic_n end_POSTSUBSCRIPT }. We also use C(⋅)𝐶⋅C(\cdot)italic_C ( ⋅ ) to refer to a mapping model that takes a trajectory embedding ϕ(τ)italic-ϕ 𝜏\phi(\tau)italic_ϕ ( italic_τ ) and a natural language concept embedding ψ(c i)𝜓 subscript 𝑐 𝑖\psi(c_{i})italic_ψ ( italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) and maps them to a numeric value indicating the degree to which the trajectory τ 𝜏\tau italic_τ satisfies the concept c i subscript 𝑐 𝑖 c_{i}italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT. For non-Markovian concepts, ϕ(⋅)italic-ϕ⋅\phi(\cdot)italic_ϕ ( ⋅ ) may be a sequence model such as a transformer. For Markovian concepts, we can define C(ϕ(τ),ψ(c i))=∑s∈τ C(ϕ(s),ψ(c i))𝐶 italic-ϕ 𝜏 𝜓 subscript 𝑐 𝑖 subscript 𝑠 𝜏 𝐶 italic-ϕ 𝑠 𝜓 subscript 𝑐 𝑖 C(\phi(\tau),\psi(c_{i}))=\sum_{\displaystyle s\in\tau}C(\phi(s),\psi(c_{i}))italic_C ( italic_ϕ ( italic_τ ) , italic_ψ ( italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) ) = ∑ start_POSTSUBSCRIPT italic_s ∈ italic_τ end_POSTSUBSCRIPT italic_C ( italic_ϕ ( italic_s ) , italic_ψ ( italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) ), where ϕ(s)italic-ϕ 𝑠\phi(s)italic_ϕ ( italic_s ) is the state embedding.

|

| 90 |

+

* •Ω Ω\Omega roman_Ω is the space of all preference functions. In MAPLE, the preference functions ω 𝜔\omega italic_ω over a trajectory τ 𝜏\tau italic_τ are modeled as a linear combination of the concepts and their associated weights:

|

| 91 |

+

|

| 92 |

+

ω(τ)=∑c i∈C ω c i⋅C(ϕ(τ),ψ(c i))𝜔 𝜏 subscript subscript 𝑐 𝑖 𝐶⋅subscript 𝜔 subscript 𝑐 𝑖 𝐶 italic-ϕ 𝜏 𝜓 subscript 𝑐 𝑖\omega(\tau)=\sum_{\displaystyle c_{i}\in C}\omega_{c_{i}}\cdot C(\phi(\tau),% \psi(c_{i}))italic_ω ( italic_τ ) = ∑ start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ∈ italic_C end_POSTSUBSCRIPT italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT ⋅ italic_C ( italic_ϕ ( italic_τ ) , italic_ψ ( italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ) )(2)

|

| 93 |

+

* •D τ subscript 𝐷 𝜏 D_{\tau}italic_D start_POSTSUBSCRIPT italic_τ end_POSTSUBSCRIPT is a dataset of unlabeled trajectories {τ 1,τ 2,…,τ m}subscript 𝜏 1 subscript 𝜏 2…subscript 𝜏 𝑚\{\tau_{1},\tau_{2},\ldots,\tau_{m}\}{ italic_τ start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT , italic_τ start_POSTSUBSCRIPT 2 end_POSTSUBSCRIPT , … , italic_τ start_POSTSUBSCRIPT italic_m end_POSTSUBSCRIPT }.

|

| 94 |

+

|

| 95 |

+

The objective of MAPLE is to model the repeated interaction between a human and an agent, where the human communicates their task objective 𝒜 𝒯 ℋ subscript superscript 𝒜 ℋ 𝒯\mathcal{A}^{\mathcal{H}}_{\mathcal{T}}caligraphic_A start_POSTSUPERSCRIPT caligraphic_H end_POSTSUPERSCRIPT start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT in natural language, and the agent is responsible for completing the task in alignment with that objective. MAPLE accomplishes this by actively learning a symbolic preference function ω 𝜔\omega italic_ω using large language models (LLMs), enabling the agent to optimize its behavior according to this function to ensure its actions align with human preferences.

|

| 96 |

+

|

| 97 |

+

#### Motivating example

|

| 98 |

+

|

| 99 |

+

Consider an intelligent route planning system that takes a source, a destination, and user preferences about the route in natural language, as illustrated in Figure [1](https://arxiv.org/html/2412.07207v2#Sx2.F1 "Figure 1 ‣ Active learning ‣ Related Work ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models"). Datasets for several preference-defining concepts such as speed, safety, battery friendliness, smoothness, autopilot friendliness, and scenic view can be easily obtained and used to pre-train the concept mapping function C(⋅)𝐶⋅C(\cdot)italic_C ( ⋅ ). The goal of MAPLE is to take natural language instructions from a human and map them to a preference function ω 𝜔\omega italic_ω interactively so that a search algorithm can optimize it to find the preferred route. MAPLE incorporates preference feedback on top of natural language feedback to address issues like hallucination and calibration associated with directly using LLMs. Additionally, MAPLE allows the human to skip difficult queries and learns in-context which query to present, making the system more human-friendly. Furthermore, the preference function inference process in MAPLE is fully interpretable, enabling a human to audit the process thoroughly and provide the necessary feedback for improvement. Finally, the interaction with the human is repeated, allowing MAPLE to acquire new concepts over time and become more efficient for future tasks.

|

| 100 |

+

|

| 101 |

+

Detailed Description of the Proposed Method

|

| 102 |

+

-------------------------------------------

|

| 103 |

+

|

| 104 |

+

A key innovation of MAPLE is the integration of conventional feedback from the preference learning literature with more expressive linguistic feedback, formally captured within a Bayesian framework introduced in REVEALE(Mahmud, Saisubramanian, and Zilberstein [2023](https://arxiv.org/html/2412.07207v2#bib.bib31)):

|

| 105 |

+

|

| 106 |

+

P(ω∣F h,F l)∝P(F h∣ω)P(F l∣ω)P(ω)proportional-to 𝑃 conditional 𝜔 subscript 𝐹 ℎ subscript 𝐹 𝑙 𝑃 conditional subscript 𝐹 ℎ 𝜔 𝑃 conditional subscript 𝐹 𝑙 𝜔 𝑃 𝜔 P(\omega\mid F_{h},F_{l})\propto P(F_{h}\mid\omega)P(F_{l}\mid\omega)P(\omega)italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT , italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) ∝ italic_P ( italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ∣ italic_ω ) italic_P ( italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ∣ italic_ω ) italic_P ( italic_ω )(3)

|

| 107 |

+

|

| 108 |

+

Above, F h subscript 𝐹 ℎ F_{h}italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT represents the set of feedback observed in conventional preference learning algorithms, specifically in the context of this paper pairwise trajectory ranking.1 1 1 MAPLE can handle any conventional feedback for which P(F h∣ω)𝑃 conditional subscript 𝐹 ℎ 𝜔 P(F_{h}\mid\omega)italic_P ( italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ∣ italic_ω ) is defined.F l subscript 𝐹 𝑙 F_{l}italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT denotes the set of linguistic feedback. We can rewrite the equation as:

|

| 109 |

+

|

| 110 |

+

P(ω∣F h,F l)𝑃 conditional 𝜔 subscript 𝐹 ℎ subscript 𝐹 𝑙\displaystyle P(\omega\mid F_{h},F_{l})italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT , italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT )∝P(F h∣ω)⏟Bradley-Terry ModelP(ω∣F l)⏟LLMP(F l)⏟Uniform proportional-to absent subscript⏟𝑃 conditional subscript 𝐹 ℎ 𝜔 Bradley-Terry Model subscript⏟𝑃 conditional 𝜔 subscript 𝐹 𝑙 LLM subscript⏟𝑃 subscript 𝐹 𝑙 Uniform\displaystyle\propto\underbrace{P(F_{h}\mid\omega)}_{\text{Bradley-Terry Model% }}\underbrace{P(\omega\mid F_{l})}_{\text{LLM}}\underbrace{P(F_{l})}_{\text{% Uniform}}∝ under⏟ start_ARG italic_P ( italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ∣ italic_ω ) end_ARG start_POSTSUBSCRIPT Bradley-Terry Model end_POSTSUBSCRIPT under⏟ start_ARG italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) end_ARG start_POSTSUBSCRIPT LLM end_POSTSUBSCRIPT under⏟ start_ARG italic_P ( italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) end_ARG start_POSTSUBSCRIPT Uniform end_POSTSUBSCRIPT(4)

|

| 111 |

+

∝P(F h∣ω)⏟Bradley-Terry ModelP(ω∣F l)⏟LLM proportional-to absent subscript⏟𝑃 conditional subscript 𝐹 ℎ 𝜔 Bradley-Terry Model subscript⏟𝑃 conditional 𝜔 subscript 𝐹 𝑙 LLM\displaystyle\propto\underbrace{P(F_{h}\mid\omega)}_{\text{Bradley-Terry Model% }}\underbrace{P(\omega\mid F_{l})}_{\text{LLM}}∝ under⏟ start_ARG italic_P ( italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ∣ italic_ω ) end_ARG start_POSTSUBSCRIPT Bradley-Terry Model end_POSTSUBSCRIPT under⏟ start_ARG italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) end_ARG start_POSTSUBSCRIPT LLM end_POSTSUBSCRIPT(5)

|

| 112 |

+

|

| 113 |

+

Here, the likelihood of F h subscript 𝐹 ℎ F_{h}italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT given ω 𝜔\omega italic_ω is defined using the Bradley-Terry Model. The likelihood of ω 𝜔\omega italic_ω given F l subscript 𝐹 𝑙 F_{l}italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT is estimated using an LLM. Beyond incorporating linguistic feedback via LLMs, MAPLE advances conventional active learning methods. Conventional active learning typically focuses on selecting queries that reduce the maximum uncertainty of the posterior but lacks a flexible mechanism to account for human capability in responding to certain types of queries. MAPLE’s Oracle-guided active query selection enhances any conventional acquisition function by leveraging linguistic feedback to alleviate the human burden associated with difficult queries. In the rest of this section, we provide more details on MAPLE, particularly Algorithms[1](https://arxiv.org/html/2412.07207v2#alg1 "Algorithm 1 ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models") and [2](https://arxiv.org/html/2412.07207v2#alg2 "Algorithm 2 ‣ Stopping criteria ‣ LLM-Guided Active Preference Learning ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models").

|

| 114 |

+

|

| 115 |

+

Algorithm 1 MAPLE

|

| 116 |

+

|

| 117 |

+

0:Human instruction

|

| 118 |

+

|

| 119 |

+

𝒜 𝒯 ℋ subscript superscript 𝒜 ℋ 𝒯\mathcal{A}^{\mathcal{H}}_{\mathcal{T}}caligraphic_A start_POSTSUPERSCRIPT caligraphic_H end_POSTSUPERSCRIPT start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT

|

| 120 |

+

, Acquisition function

|

| 121 |

+

|

| 122 |

+

𝒜 f subscript 𝒜 𝑓\mathcal{A}_{f}caligraphic_A start_POSTSUBSCRIPT italic_f end_POSTSUBSCRIPT

|

| 123 |

+

, # of LLM query

|

| 124 |

+

|

| 125 |

+

K 𝐾 K italic_K

|

| 126 |

+

|

| 127 |

+

1:

|

| 128 |

+

|

| 129 |

+

F h,F q←∅,∅formulae-sequence←subscript 𝐹 ℎ subscript 𝐹 𝑞 F_{h},F_{q}\leftarrow\emptyset,\emptyset italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT , italic_F start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT ← ∅ , ∅

|

| 130 |

+

|

| 131 |

+

2:

|

| 132 |

+

|

| 133 |

+

F l←{𝒯}←subscript 𝐹 𝑙 𝒯 F_{l}\leftarrow\{\mathcal{T}\}italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ← { caligraphic_T }

|

| 134 |

+

|

| 135 |

+

3:

|

| 136 |

+

|

| 137 |

+

Ω 𝒯←{ω i}i=0 n∼𝕃(ω∣F l)←subscript Ω 𝒯 superscript subscript subscript 𝜔 𝑖 𝑖 0 𝑛 similar-to 𝕃 conditional 𝜔 subscript 𝐹 𝑙\Omega_{\mathcal{T}}\leftarrow\{\omega_{i}\}_{i=0}^{n}\sim\mathbb{L}(\omega% \mid F_{l})roman_Ω start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT ← { italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT } start_POSTSUBSCRIPT italic_i = 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_n end_POSTSUPERSCRIPT ∼ blackboard_L ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT )

|

| 138 |

+

|

| 139 |

+

4:while condition not met do

|

| 140 |

+

|

| 141 |

+

5:

|

| 142 |

+

|

| 143 |

+

Q←{(τ i,τ j):τ i,τ j∈𝒟 τ∧(τ i,τ j)∉F h}←𝑄 conditional-set subscript 𝜏 𝑖 subscript 𝜏 𝑗 subscript 𝜏 𝑖 subscript 𝜏 𝑗 subscript 𝒟 𝜏 subscript 𝜏 𝑖 subscript 𝜏 𝑗 subscript 𝐹 ℎ Q\leftarrow\{(\tau_{i},\tau_{j}):\tau_{i},\tau_{j}\in\mathcal{D}_{\tau}\land(% \tau_{i},\tau_{j})\not\in F_{h}\}italic_Q ← { ( italic_τ start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT , italic_τ start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ) : italic_τ start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT , italic_τ start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ∈ caligraphic_D start_POSTSUBSCRIPT italic_τ end_POSTSUBSCRIPT ∧ ( italic_τ start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT , italic_τ start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ) ∉ italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT }

|

| 144 |

+

|

| 145 |

+

6:

|

| 146 |

+

|

| 147 |

+

q←←𝑞 absent q\leftarrow italic_q ←

|

| 148 |

+

Query Selection(

|

| 149 |

+

|

| 150 |

+

𝒜 f subscript 𝒜 𝑓\mathcal{A}_{f}caligraphic_A start_POSTSUBSCRIPT italic_f end_POSTSUBSCRIPT

|

| 151 |

+

,

|

| 152 |

+

|

| 153 |

+

Q 𝑄 Q italic_Q

|

| 154 |

+

,

|

| 155 |

+

|

| 156 |

+

F q subscript 𝐹 𝑞 F_{q}italic_F start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT

|

| 157 |

+

,

|

| 158 |

+

|

| 159 |

+

Ω 𝒯 subscript Ω 𝒯\Omega_{\mathcal{T}}roman_Ω start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT

|

| 160 |

+

,

|

| 161 |

+

|

| 162 |

+

𝕃 𝕃\mathbb{L}blackboard_L

|

| 163 |

+

,

|

| 164 |

+

|

| 165 |

+

K 𝐾 K italic_K

|

| 166 |

+

)

|

| 167 |

+

|

| 168 |

+

7:

|

| 169 |

+

|

| 170 |

+

(f h,f l,f q)←ℋ(q)←subscript 𝑓 ℎ subscript 𝑓 𝑙 subscript 𝑓 𝑞 ℋ 𝑞(f_{h},f_{l},f_{q})\leftarrow\mathcal{H}(q)( italic_f start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT ) ← caligraphic_H ( italic_q )

|

| 171 |

+

|

| 172 |

+

8:

|

| 173 |

+

|

| 174 |

+

F h,F l,F q←F h∪{f h},F l∪{f l},F q∪{f q}formulae-sequence←subscript 𝐹 ℎ subscript 𝐹 𝑙 subscript 𝐹 𝑞 subscript 𝐹 ℎ subscript 𝑓 ℎ subscript 𝐹 𝑙 subscript 𝑓 𝑙 subscript 𝐹 𝑞 subscript 𝑓 𝑞 F_{h},F_{l},F_{q}\leftarrow F_{h}\cup\{f_{h}\},F_{l}\cup\{f_{l}\},F_{q}\cup\{f% _{q}\}italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT , italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT , italic_F start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT ← italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ∪ { italic_f start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT } , italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ∪ { italic_f start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT } , italic_F start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT ∪ { italic_f start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT }

|

| 175 |

+

|

| 176 |

+

9:

|

| 177 |

+

|

| 178 |

+

Ω 𝒯←{ω i}i=0 n∼P(F h∣ω)P(ω∣F l)←subscript Ω 𝒯 superscript subscript subscript 𝜔 𝑖 𝑖 0 𝑛 similar-to 𝑃 conditional subscript 𝐹 ℎ 𝜔 𝑃 conditional 𝜔 subscript 𝐹 𝑙\Omega_{\mathcal{T}}\leftarrow\{\omega_{i}\}_{i=0}^{n}\sim P(F_{h}\mid\omega)P% (\omega\mid F_{l})roman_Ω start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT ← { italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT } start_POSTSUBSCRIPT italic_i = 0 end_POSTSUBSCRIPT start_POSTSUPERSCRIPT italic_n end_POSTSUPERSCRIPT ∼ italic_P ( italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT ∣ italic_ω ) italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT )

|

| 179 |

+

|

| 180 |

+

10:end while

|

| 181 |

+

|

| 182 |

+

11:return

|

| 183 |

+

|

| 184 |

+

Ω 𝒯 subscript Ω 𝒯\Omega_{\mathcal{T}}roman_Ω start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT

|

| 185 |

+

|

| 186 |

+

### Initialization

|

| 187 |

+

|

| 188 |

+

MAPLE starts by taking natural language instruction about task preference 𝒜 𝒯 ℋ subscript superscript 𝒜 ℋ 𝒯\mathcal{A}^{\mathcal{H}}_{\mathcal{T}}caligraphic_A start_POSTSUPERSCRIPT caligraphic_H end_POSTSUPERSCRIPT start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT and initializes the pairwise preference feedback set F h subscript 𝐹 ℎ F_{h}italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT, linguistic feedback F l subscript 𝐹 𝑙 F_{l}italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT, and feedback about query difficulty F q subscript 𝐹 𝑞 F_{q}italic_F start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT (lines 1-2, Algorithm[1](https://arxiv.org/html/2412.07207v2#alg1 "Algorithm 1 ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models")). After that, the initial set of weights is sampled using the LLM from the distribution P(ω∣F l)𝑃 conditional 𝜔 subscript 𝐹 𝑙 P(\omega\mid F_{l})italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) as F h subscript 𝐹 ℎ F_{h}italic_F start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT is still empty (line 3, Algorithm[1](https://arxiv.org/html/2412.07207v2#alg1 "Algorithm 1 ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models")). To sample ω 𝜔\omega italic_ω from P(ω∣F l)𝑃 conditional 𝜔 subscript 𝐹 𝑙 P(\omega\mid F_{l})italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) we explore two sampling strategies described below.

|

| 189 |

+

|

| 190 |

+

#### Preference weight sampling from LLM

|

| 191 |

+

|

| 192 |

+

We directly prompt the LLM 𝕃 𝕃\mathbb{L}blackboard_L to provide linear weights ω 𝜔\omega italic_ω over the abstract concepts. Specifically, we provide 𝕃 𝕃\mathbb{L}blackboard_L with a prompt containing the task description 𝒯 𝒯\mathcal{T}caligraphic_T, a list of known concepts C 𝐶 C italic_C, human preference 𝒜 𝒯 ℋ subscript superscript 𝒜 ℋ 𝒯\mathcal{A}^{\mathcal{H}}_{\mathcal{T}}caligraphic_A start_POSTSUPERSCRIPT caligraphic_H end_POSTSUPERSCRIPT start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT, and examples of instruction weight pairs, along with additional answer generation instructions G 𝐺 G italic_G (see Appendix for details). The LLM processes this prompt and returns an answer 𝒜 ω i 𝕃 subscript superscript 𝒜 𝕃 subscript 𝜔 𝑖\mathcal{A}^{\mathbb{L}}_{\omega_{i}}caligraphic_A start_POSTSUPERSCRIPT blackboard_L end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT:

|

| 193 |

+

|

| 194 |

+

𝒜 ω i 𝕃←𝕃(prompt(𝒯,C,𝒜 𝒯 ℋ,D ℐ,G))←subscript superscript 𝒜 𝕃 subscript 𝜔 𝑖 𝕃 prompt 𝒯 𝐶 subscript superscript 𝒜 ℋ 𝒯 subscript 𝐷 ℐ 𝐺\mathcal{A}^{\mathbb{L}}_{\omega_{i}}\leftarrow\mathbb{L}(\text{prompt}(% \mathcal{T},C,\mathcal{A}^{\mathcal{H}}_{\mathcal{T}},D_{\mathcal{I}},G))caligraphic_A start_POSTSUPERSCRIPT blackboard_L end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT ← blackboard_L ( prompt ( caligraphic_T , italic_C , caligraphic_A start_POSTSUPERSCRIPT caligraphic_H end_POSTSUPERSCRIPT start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT , italic_D start_POSTSUBSCRIPT caligraphic_I end_POSTSUBSCRIPT , italic_G ) )

|

| 195 |

+

|

| 196 |

+

We can take advantage of text generation temperature to collect a diverse set of samples. We define the set of all generated weights as 𝒜 ω 𝕃 subscript superscript 𝒜 𝕃 𝜔\mathcal{A}^{\mathbb{L}}_{\omega}caligraphic_A start_POSTSUPERSCRIPT blackboard_L end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_ω end_POSTSUBSCRIPT. Then P(ω j∣F l)𝑃 conditional subscript 𝜔 𝑗 subscript 𝐹 𝑙 P(\omega_{j}\mid F_{l})italic_P ( italic_ω start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) can be modeled for any arbitrary ω j subscript 𝜔 𝑗\omega_{j}italic_ω start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT as follows:

|

| 197 |

+

|

| 198 |

+

P(ω j∣F l)=exp(−β l𝔼 ω i∈𝒜 ω 𝕃[Distance(ω i,ω j)])𝑃 conditional subscript 𝜔 𝑗 subscript 𝐹 𝑙 subscript 𝛽 𝑙 subscript 𝔼 subscript 𝜔 𝑖 subscript superscript 𝒜 𝕃 𝜔 delimited-[]Distance subscript 𝜔 𝑖 subscript 𝜔 𝑗 P(\omega_{j}\mid F_{l})=\exp\left(-\beta_{l}\mathbb{E}_{\omega_{i}\in\mathcal{% A}^{\mathbb{L}}_{\omega}}[\text{Distance}(\omega_{i},\omega_{j})]\right)italic_P ( italic_ω start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) = roman_exp ( - italic_β start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT blackboard_E start_POSTSUBSCRIPT italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ∈ caligraphic_A start_POSTSUPERSCRIPT blackboard_L end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_ω end_POSTSUBSCRIPT end_POSTSUBSCRIPT [ Distance ( italic_ω start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT , italic_ω start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ) ] )(6)

|

| 199 |

+

|

| 200 |

+

In this case, Euclidean or Cosine distance can be applied.

|

| 201 |

+

|

| 202 |

+

#### Distribution weight sampling using LLM

|

| 203 |

+

|

| 204 |

+

The second approach we explore is distribution modeling using an LLM. Here, we use similar prompts as in the previous approach; however, we instruct the LLM to generate parameters for P(ω∣F l)𝑃 conditional 𝜔 subscript 𝐹 𝑙 P(\omega\mid F_{l})italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ). For example, for the weight of each concept ω c i∈ω subscript 𝜔 subscript 𝑐 𝑖 𝜔\omega_{c_{i}}\in\omega italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∈ italic_ω we prompt 𝕃 𝕃\mathbb{L}blackboard_L to generate a range ω c i range=[ω c i min,ω c i max]superscript subscript 𝜔 subscript 𝑐 𝑖 range superscript subscript 𝜔 subscript 𝑐 𝑖 min superscript subscript 𝜔 subscript 𝑐 𝑖 max\omega_{c_{i}}^{\text{range}}=[\omega_{c_{i}}^{\text{min}},\omega_{c_{i}}^{% \text{max}}]italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT start_POSTSUPERSCRIPT range end_POSTSUPERSCRIPT = [ italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT start_POSTSUPERSCRIPT min end_POSTSUPERSCRIPT , italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT start_POSTSUPERSCRIPT max end_POSTSUPERSCRIPT ]. Then we can define P(ω∣F l)𝑃 conditional 𝜔 subscript 𝐹 𝑙 P(\omega\mid F_{l})italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) as follows:

|

| 205 |

+

|

| 206 |

+

P(ω∣F l)={1,ifω c i∈ω c i range,∀ω c i∈ω 0,otherwise.𝑃 conditional 𝜔 subscript 𝐹 𝑙 cases 1 formulae-sequence if subscript 𝜔 subscript 𝑐 𝑖 superscript subscript 𝜔 subscript 𝑐 𝑖 range for-all subscript 𝜔 subscript 𝑐 𝑖 𝜔 0 otherwise P(\omega\mid F_{l})=\begin{cases}1,&\text{if }\omega_{c_{i}}\in\omega_{c_{i}}^% {\text{range}},\forall\omega_{c_{i}}\in\omega\\ 0,&\text{otherwise}.\end{cases}italic_P ( italic_ω ∣ italic_F start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT ) = { start_ROW start_CELL 1 , end_CELL start_CELL if italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∈ italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT start_POSTSUPERSCRIPT range end_POSTSUPERSCRIPT , ∀ italic_ω start_POSTSUBSCRIPT italic_c start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT end_POSTSUBSCRIPT ∈ italic_ω end_CELL end_ROW start_ROW start_CELL 0 , end_CELL start_CELL otherwise . end_CELL end_ROW(7)

|

| 207 |

+

|

| 208 |

+

We can similarly model this for other forms of distributions, such as the Gaussian distribution. Once the initialization process is complete, MAPLE iteratively reduces its uncertainty using human feedback.

|

| 209 |

+

|

| 210 |

+

### LLM-Guided Active Preference Learning

|

| 211 |

+

|

| 212 |

+

After initialization, MAPLE iteratively follows three steps: 1) query selection, 2) human feedback collection, and 3) preference posterior update, discussed below.

|

| 213 |

+

|

| 214 |

+

#### Oracle-guided active query selection (OAQS)

|

| 215 |

+

|

| 216 |

+

At the beginning of each iteration, MAPLE selects a query q 𝑞 q italic_q (a pair of trajectories) (lines 5-6, Algorithm [1](https://arxiv.org/html/2412.07207v2#alg1 "Algorithm 1 ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models")) from 𝒟 τ subscript 𝒟 𝜏\mathcal{D}_{\tau}caligraphic_D start_POSTSUBSCRIPT italic_τ end_POSTSUBSCRIPT that would reduce uncertainty the most while mitigating query difficulty based on human feedback. The query selection process is described in Algorithm [2](https://arxiv.org/html/2412.07207v2#alg2 "Algorithm 2 ‣ Stopping criteria ‣ LLM-Guided Active Preference Learning ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models"), which starts by sorting all the queries based on an acquisition function 𝒜 f subscript 𝒜 𝑓\mathcal{A}_{f}caligraphic_A start_POSTSUBSCRIPT italic_f end_POSTSUBSCRIPT. In this paper, we use the variance ratio for its flexibility and high efficacy. In particular, for trajectory ranking queries, the score for (τ i,τ j)subscript 𝜏 𝑖 subscript 𝜏 𝑗(\tau_{i},\tau_{j})( italic_τ start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT , italic_τ start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ) is calculated as 𝔼 ω∼Ω 𝒯[1−max(P(τ i≻τ j∣ω),P(τ j≻τ i∣ω))]subscript 𝔼 similar-to 𝜔 subscript Ω 𝒯 delimited-[]1 𝑃 succeeds subscript 𝜏 𝑖 conditional subscript 𝜏 𝑗 𝜔 𝑃 succeeds subscript 𝜏 𝑗 conditional subscript 𝜏 𝑖 𝜔\mathbb{E}_{\omega\sim\Omega_{\mathcal{T}}}[1-\max(P(\tau_{i}\succ\tau_{j}\mid% \omega),P(\tau_{j}\succ\tau_{i}\mid\omega))]blackboard_E start_POSTSUBSCRIPT italic_ω ∼ roman_Ω start_POSTSUBSCRIPT caligraphic_T end_POSTSUBSCRIPT end_POSTSUBSCRIPT [ 1 - roman_max ( italic_P ( italic_τ start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ≻ italic_τ start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ∣ italic_ω ) , italic_P ( italic_τ start_POSTSUBSCRIPT italic_j end_POSTSUBSCRIPT ≻ italic_τ start_POSTSUBSCRIPT italic_i end_POSTSUBSCRIPT ∣ italic_ω ) ) ]. Note that other acquisition functions can also be used. Once sorted, OAQS iterates over the top K 𝐾 K italic_K queries and selects the first query that the oracle (in our case an LLM) evaluates to be answerable by the human (lines 2-11). Finally, Algorithm [2](https://arxiv.org/html/2412.07207v2#alg2 "Algorithm 2 ‣ Stopping criteria ‣ LLM-Guided Active Preference Learning ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models") returns the least difficult query q 𝑞 q italic_q among the top K query selected by 𝒜 f subscript 𝒜 𝑓\mathcal{A}_{f}caligraphic_A start_POSTSUBSCRIPT italic_f end_POSTSUBSCRIPT. We now analyze the performance of OAQS based on the characterization of the oracle.2 2 2 Proofs are in the Appendix.

|

| 217 |

+

|

| 218 |

+

###### Definition 1

|

| 219 |

+

|

| 220 |

+

Let Q 𝑄 Q italic_Q denote the set of all possible queries, and Q 𝒜⊆Q subscript 𝑄 𝒜 𝑄 Q_{\mathcal{A}}\subseteq Q italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ⊆ italic_Q represent the subset of queries answerable by ℋ ℋ\mathcal{H}caligraphic_H. The Absolute Query Success Rate (AQSR) is defined as the probability that a randomly selected query q 𝑞 q italic_q belongs to the intersection Q∩Q 𝒜 𝑄 subscript 𝑄 𝒜 Q\cap Q_{\mathcal{A}}italic_Q ∩ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT, i.e., P(q∈Q 𝒜)𝑃 𝑞 subscript 𝑄 𝒜 P(q\in Q_{\mathcal{A}})italic_P ( italic_q ∈ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ).

|

| 221 |

+

|

| 222 |

+

###### Definition 2

|

| 223 |

+

|

| 224 |

+

The Query Success Rate (QSR) of a query selection strategy is defined as the probability that a query q 𝑞 q italic_q, selected by the strategy, belongs to Q 𝒜 subscript 𝑄 𝒜 Q_{\mathcal{A}}italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT, i.e., P(q∈Q 𝒜∣strategy)𝑃 𝑞 conditional subscript 𝑄 𝒜 strategy P(q\in Q_{\mathcal{A}}\mid\text{strategy})italic_P ( italic_q ∈ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ∣ strategy ).

|

| 225 |

+

|

| 226 |

+

###### Proposition 1

|

| 227 |

+

|

| 228 |

+

Assuming the independence of AQSR from acquisition function ranking, the QSR of a random query selection strategy: P(q∈Q 𝒜∣random)=AQSR 𝑃 𝑞 conditional subscript 𝑄 𝒜 random 𝐴 𝑄 𝑆 𝑅 P(q\in Q_{\mathcal{A}}\mid\text{random})=AQSR italic_P ( italic_q ∈ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ∣ random ) = italic_A italic_Q italic_S italic_R

|

| 229 |

+

|

| 230 |

+

###### Proposition 2

|

| 231 |

+

|

| 232 |

+

Under the same assumption of proposition 1, the QSR of a top-query selection strategy, which always selects the highest-rated query by 𝒜 f subscript 𝒜 𝑓\mathcal{A}_{f}caligraphic_A start_POSTSUBSCRIPT italic_f end_POSTSUBSCRIPT, P(q∈Q 𝒜∣top)=AQSR 𝑃 𝑞 conditional subscript 𝑄 𝒜 top 𝐴 𝑄 𝑆 𝑅 P(q\in Q_{\mathcal{A}}\mid\text{top})=AQSR italic_P ( italic_q ∈ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ∣ top ) = italic_A italic_Q italic_S italic_R.

|

| 233 |

+

|

| 234 |

+

###### Proposition 3

|

| 235 |

+

|

| 236 |

+

The QSR of the OAQS strategy is given by

|

| 237 |

+

|

| 238 |

+

AQSR⋅Y 1⋅1−[AQSR⋅(1−Y 0−Y 1)+Y 0]K 1−[AQSR⋅(1−Y 0−Y 1)+Y 0],⋅𝐴 𝑄 𝑆 𝑅 subscript 𝑌 1 1 superscript delimited-[]⋅AQSR 1 subscript 𝑌 0 subscript 𝑌 1 subscript 𝑌 0 𝐾 1 delimited-[]⋅AQSR 1 subscript 𝑌 0 subscript 𝑌 1 subscript 𝑌 0 AQSR\cdot Y_{1}\cdot\frac{1-\left[\text{AQSR}\cdot(1-Y_{0}-Y_{1})+Y_{0}\right]% ^{K}}{1-\left[\text{AQSR}\cdot(1-Y_{0}-Y_{1})+Y_{0}\right]},italic_A italic_Q italic_S italic_R ⋅ italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT ⋅ divide start_ARG 1 - [ AQSR ⋅ ( 1 - italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT - italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT ) + italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT ] start_POSTSUPERSCRIPT italic_K end_POSTSUPERSCRIPT end_ARG start_ARG 1 - [ AQSR ⋅ ( 1 - italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT - italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT ) + italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT ] end_ARG ,

|

| 239 |

+

|

| 240 |

+

where Y 0=P(𝕃(F q,q∉Q 𝒜)=False)subscript 𝑌 0 𝑃 𝕃 subscript 𝐹 𝑞 𝑞 subscript 𝑄 𝒜 False Y_{0}=P(\mathbb{L}(F_{q},q\notin Q_{\mathcal{A}})=\text{False})italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT = italic_P ( blackboard_L ( italic_F start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT , italic_q ∉ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ) = False ) and

|

| 241 |

+

|

| 242 |

+

Y 1=P(𝕃(F q,q∈Q 𝒜)=True)subscript 𝑌 1 𝑃 𝕃 subscript 𝐹 𝑞 𝑞 subscript 𝑄 𝒜 True Y_{1}=P(\mathbb{L}(F_{q},q\in Q_{\mathcal{A}})=\text{True})italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT = italic_P ( blackboard_L ( italic_F start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT , italic_q ∈ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ) = True ). Here, we assume independence of AQSR, Y 0 subscript 𝑌 0 Y_{0}italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT, and Y 1 subscript 𝑌 1 Y_{1}italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT from acquisition function ranking.

|

| 243 |

+

|

| 244 |

+

###### Corollary 1

|

| 245 |

+

|

| 246 |

+

Based on Proposition [3](https://arxiv.org/html/2412.07207v2#Thmproposition3 "Proposition 3 ‣ Oracle-guided active query selection (OAQS) ‣ LLM-Guided Active Preference Learning ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models"), the OAQS will have a higher QSR than the random query selection strategy and top-query selection strategy iff, Y 0+Y 1>1 subscript 𝑌 0 subscript 𝑌 1 1 Y_{0}+Y_{1}>1 italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT + italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT > 1 as K→∞→𝐾 K\rightarrow\infty italic_K → ∞.

|

| 247 |

+

|

| 248 |

+

###### Definition 3

|

| 249 |

+

|

| 250 |

+

The Optimal Query Success Rate (OQSR) of a strategy is defined as the probability that the strategy returns the query q∗superscript 𝑞 q^{*}italic_q start_POSTSUPERSCRIPT ∗ end_POSTSUPERSCRIPT with the highest value according to an acquisition function 𝒜 f subscript 𝒜 𝑓\mathcal{A}_{f}caligraphic_A start_POSTSUBSCRIPT italic_f end_POSTSUBSCRIPT, among all answerable queries, i.e.,

|

| 251 |

+

|

| 252 |

+

P(q∗=argmax q∈Q𝒜 f(q)𝕀(q∈Q 𝒜)),𝑃 superscript 𝑞 subscript 𝑞 𝑄 subscript 𝒜 𝑓 𝑞 𝕀 𝑞 subscript 𝑄 𝒜 P(q^{*}=\arg\max_{q\in Q}\mathcal{A}_{f}(q)\mathbb{I}(q\in Q_{\mathcal{A}})),italic_P ( italic_q start_POSTSUPERSCRIPT ∗ end_POSTSUPERSCRIPT = roman_arg roman_max start_POSTSUBSCRIPT italic_q ∈ italic_Q end_POSTSUBSCRIPT caligraphic_A start_POSTSUBSCRIPT italic_f end_POSTSUBSCRIPT ( italic_q ) blackboard_I ( italic_q ∈ italic_Q start_POSTSUBSCRIPT caligraphic_A end_POSTSUBSCRIPT ) ) ,

|

| 253 |

+

|

| 254 |

+

where q∗superscript 𝑞 q^{*}italic_q start_POSTSUPERSCRIPT ∗ end_POSTSUPERSCRIPT is the query returned by the strategy.

|

| 255 |

+

|

| 256 |

+

###### Proposition 4

|

| 257 |

+

|

| 258 |

+

Under the similar assumption of proposition 1. assumption, the OQSR of a random query selection strategy is equal to 1/|Q|1 𝑄 1/|Q|1 / | italic_Q |.

|

| 259 |

+

|

| 260 |

+

###### Proposition 5

|

| 261 |

+

|

| 262 |

+

Under the similar assumption of proposition 1, the OQSR of a Top-Query Selection Strategy is equal to the AQSR.

|

| 263 |

+

|

| 264 |

+

###### Proposition 6

|

| 265 |

+

|

| 266 |

+

Under the same assumption of Proposition 3, the OQSR of the OAQS strategy is given by

|

| 267 |

+

|

| 268 |

+

OQSR=AQSR⋅Y 1⋅1−[(1−AQSR)Y 0]K 1−(1−AQSR)Y 0.OQSR⋅𝐴 𝑄 𝑆 𝑅 subscript 𝑌 1 1 superscript delimited-[]1 𝐴 𝑄 𝑆 𝑅 subscript 𝑌 0 𝐾 1 1 𝐴 𝑄 𝑆 𝑅 subscript 𝑌 0\text{OQSR}=AQSR\cdot Y_{1}\cdot\frac{1-[(1-AQSR)Y_{0}]^{K}}{1-(1-AQSR)Y_{0}}.OQSR = italic_A italic_Q italic_S italic_R ⋅ italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT ⋅ divide start_ARG 1 - [ ( 1 - italic_A italic_Q italic_S italic_R ) italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT ] start_POSTSUPERSCRIPT italic_K end_POSTSUPERSCRIPT end_ARG start_ARG 1 - ( 1 - italic_A italic_Q italic_S italic_R ) italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT end_ARG .

|

| 269 |

+

|

| 270 |

+

###### Corollary 2

|

| 271 |

+

|

| 272 |

+

Based on Proposition [6](https://arxiv.org/html/2412.07207v2#Sx5.Ex5 "Proposition 6 ‣ Oracle-guided active query selection (OAQS) ‣ LLM-Guided Active Preference Learning ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models"), the OAQS strategy will have a higher OQSR than the top-query selection strategy if (1−AQSR)Y 0+Y 1>1 1 𝐴 𝑄 𝑆 𝑅 subscript 𝑌 0 subscript 𝑌 1 1(1-AQSR)Y_{0}+Y_{1}>1( 1 - italic_A italic_Q italic_S italic_R ) italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT + italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT > 1 as K→∞→𝐾 K\rightarrow\infty italic_K → ∞, and then random query selection strategy if AQSR⋅Y 1>1−(1−AQSR)Y 0|Q|⋅AQSR subscript 𝑌 1 1 1 AQSR subscript 𝑌 0 𝑄\mathrm{AQSR}\cdot Y_{1}>\frac{1-(1-\mathrm{AQSR})Y_{0}}{|Q|}roman_AQSR ⋅ italic_Y start_POSTSUBSCRIPT 1 end_POSTSUBSCRIPT > divide start_ARG 1 - ( 1 - roman_AQSR ) italic_Y start_POSTSUBSCRIPT 0 end_POSTSUBSCRIPT end_ARG start_ARG | italic_Q | end_ARG as K→∞→𝐾 K\rightarrow\infty italic_K → ∞.

|

| 273 |

+

|

| 274 |

+

#### Human feedback collection

|

| 275 |

+

|

| 276 |

+

MAPLE queries the human ℋ ℋ\mathcal{H}caligraphic_H using the query q 𝑞 q italic_q returned by Algorithm [2](https://arxiv.org/html/2412.07207v2#alg2 "Algorithm 2 ‣ Stopping criteria ‣ LLM-Guided Active Preference Learning ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models") to collect feedback. For each query q 𝑞 q italic_q, MAPLE provides a pair of trajectories, and ℋ ℋ\mathcal{H}caligraphic_H returns an answer 𝒜 τ ℋ=(f h,f l,f q)subscript superscript 𝒜 ℋ 𝜏 subscript 𝑓 ℎ subscript 𝑓 𝑙 subscript 𝑓 𝑞\mathcal{A}^{\mathcal{H}}_{\tau}=(f_{h},f_{l},f_{q})caligraphic_A start_POSTSUPERSCRIPT caligraphic_H end_POSTSUPERSCRIPT start_POSTSUBSCRIPT italic_τ end_POSTSUBSCRIPT = ( italic_f start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT , italic_f start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT ), where f h subscript 𝑓 ℎ f_{h}italic_f start_POSTSUBSCRIPT italic_h end_POSTSUBSCRIPT is binary feedback, f l subscript 𝑓 𝑙 f_{l}italic_f start_POSTSUBSCRIPT italic_l end_POSTSUBSCRIPT is an optional natural language explanation associated with that feedback—possibly empty if the human does not provide an explanation—and f q subscript 𝑓 𝑞 f_{q}italic_f start_POSTSUBSCRIPT italic_q end_POSTSUBSCRIPT is a optional natural language feedback about the difficulty of the query. Each piece of feedback is then added to the corresponding feedback set (lines 7-8, Algorithm [1](https://arxiv.org/html/2412.07207v2#alg1 "Algorithm 1 ‣ Detailed Description of the Proposed Method ‣ MAPLE: A Framework for Active Preference Learning Guided by Large Language Models")).

|

| 277 |

+

|

| 278 |

+

#### LLM-guided posterior update

|

| 279 |

+

|

| 280 |

+