Add 1 files

- 2412/2412.11599.md +245 -0

2412/2412.11599.md

ADDED

@@ -0,0 +1,245 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

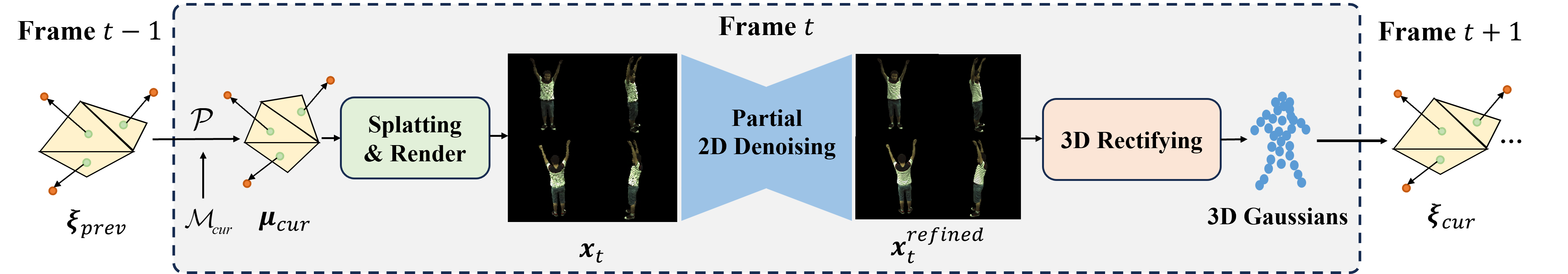

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Title: 3D²-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling

URL Source: https://arxiv.org/html/2412.11599

Markdown Content:

###### Abstract

Advancements in neural implicit representations and differentiable rendering have markedly improved the ability to learn animatable 3D avatars from sparse multi-view RGB videos. However, current methods that map observation space to canonical space often face challenges in capturing pose-dependent details and generalizing to novel poses. While diffusion models have demonstrated remarkable zero-shot capabilities in 2D image generation, their potential for creating animatable 3D avatars from 2D inputs remains underexplored. In this work, we introduce 3D²-Actor, a novel approach featuring a pose-conditioned 3D-aware human modeling pipeline that integrates iterative 2D denoising and 3D rectifying steps. The 2D denoiser, guided by pose cues, generates detailed multi-view images that provide the rich feature set necessary for high-fidelity 3D reconstruction and pose rendering. Complementing this, our Gaussian-based 3D rectifier renders images with enhanced 3D consistency through a two-stage projection strategy and a novel local coordinate representation. Additionally, we propose an innovative sampling strategy to ensure smooth temporal continuity across frames in video synthesis. Our method effectively addresses the limitations of traditional numerical solutions in handling ill-posed mappings, producing realistic and animatable 3D human avatars. Experimental results demonstrate that 3D²-Actor excels in high-fidelity avatar modeling and robustly generalizes to novel poses. Code is available at: https://github.com/silence-tang/GaussianActor.

Introduction
------------

Reconstructing animatable 3D human avatars is essential for applications in VR/AR, the Metaverse, and gaming. However, the task is challenging due to factors like non-rigid complex motions and the stochastic nature of subtle clothing wrinkles, which complicate realistic human actor modeling.

Traditional methods (Collet et al. [2015](https://arxiv.org/html/2412.11599v1#bib.bib4); Dou et al. [2016](https://arxiv.org/html/2412.11599v1#bib.bib5); Bogo et al. [2015](https://arxiv.org/html/2412.11599v1#bib.bib2); Shapiro et al. [2014](https://arxiv.org/html/2412.11599v1#bib.bib37)) are often hindered by labor-intensive manual design and the difficulty of acquiring high-quality data, limiting their applicability in real-world scenarios. Recent advances in neural implicit representations and differentiable neural rendering (Sitzmann et al. [2020](https://arxiv.org/html/2412.11599v1#bib.bib38); Wang et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib43); Lombardi et al. [2019](https://arxiv.org/html/2412.11599v1#bib.bib23); Gao et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib6); Park et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib28); Pumarola et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib32)) have opened new avenues for character reconstruction and animation from sparse multi-view RGB videos. While techniques like Neural Radiance Field (NeRF) (Mildenhall et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib25)) excel in synthesizing static scenes, achieving high-fidelity results for dynamic human avatars remains a significant challenge.

One prominent approach involves using a deformation field to map the observation space to a canonical space, as demonstrated in methods like (Su et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib41); Peng et al. [2021a](https://arxiv.org/html/2412.11599v1#bib.bib29)). Although learning this backward mapping is relatively straightforward, its generalization to novel poses is often limited due to its reliance on the observation state. Alternatives that employ forward mapping (Wang et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib44); Li et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib17)), utilizing techniques like differentiable root-finding, have been proposed to address these generalization challenges. Additionally, Neural Body (Peng et al. [2021b](https://arxiv.org/html/2412.11599v1#bib.bib30)) introduces a conditional NeRF approach that anchors local features on SMPL (Loper et al. [2015](https://arxiv.org/html/2412.11599v1#bib.bib24)) vertices, which serve as a scaffold for the model.

Despite these advancements, current methods face limitations in handling the complex dynamics of human bodies. For instance, the high stochasticity of clothing, characterized by delicate wrinkles that appear and disappear, poses a significant challenge. Approaches such as (Peng et al. [2021b](https://arxiv.org/html/2412.11599v1#bib.bib30), [a](https://arxiv.org/html/2412.11599v1#bib.bib29); Wang et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib44)) optimize per-frame latent codes to capture this variability but struggle to adapt to novel poses due to the limited expressivity of these latent codes. More recent works, such as (Hu and Liu [2023](https://arxiv.org/html/2412.11599v1#bib.bib10); Qian et al. [2023a](https://arxiv.org/html/2412.11599v1#bib.bib33); Li et al. [2024](https://arxiv.org/html/2412.11599v1#bib.bib19)), have integrated 3D Gaussian Splatting (3DGS) (Kerbl et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib15)) into their pipelines, delivering significantly improved results in terms of both rendering efficiency and fidelity. However, these approaches do not fully consider finer visual details during novel pose synthesis.

In contrast to these 3D-based approaches, recent 2D generative diffusion models (Ho, Jain, and Abbeel [2020](https://arxiv.org/html/2412.11599v1#bib.bib9); Rombach et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib35)) have demonstrated significant advantages in terms of visual quality. However, the absence of a 3D representation presents a challenge when extending 2D diffusion models to maintain spatial and temporal consistency, particularly in human-centric scenarios. Several works have attempted to achieve 3D-consistent generation by incorporating additional control inputs (Liu et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib20)) or integrating a 3D representation into the workflow (Liu et al. [2024b](https://arxiv.org/html/2412.11599v1#bib.bib22); Anciukevičius et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib1); Karnewar et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib14)). These methods mainly focus on single-scene generation for general objects, often neglecting temporal consistency, which makes them inadequate for dynamic human modeling.

In this work, we tackle the challenge of reconstructing and animating high-fidelity 3D human avatars with controllable poses by introducing 3D²-Actor, a novel approach featuring a 3D-aware denoiser composed of interleaved pose-conditioned 2D denoising and 3D rectifying steps. Our method combines the strengths of 3DGS and 2D diffusion models to achieve superior performance in human-centric tasks. Specifically, the 2D denoiser is conditioned on pose cues to generate detailed multi-view images, which provide the rich features needed by the subsequent high-fidelity 3D reconstruction and rendering process. Additionally, the 2D denoiser enhances intricate details from preceding 2D or 3D steps, thereby improving the overall fidelity of the avatar. Complementing the 2D denoiser, our 3D rectifier employs a novel two-stage projection strategy combined with a mesh-based local coordinate representation. The rectifier queries positional offsets and other 3D Gaussian attributes from input images to produce structurally refined multi-view renderings. The integration of 3D Gaussian Splatting ensures high morphological integrity and consistent 3D modeling across various views. To address temporal discontinuity in animated avatar videos, we propose a Gaussian consistency sampling strategy. This technique utilizes Gaussian local coordinates from previous frames to determine current positions, enabling smooth inter-frame transitions without the need for additional temporal smoothing modules. Our key contributions include:

* Novel 3D-Aware Denoiser: We propose a 3D-aware denoiser tailored for reconstructing animatable human avatars from multi-view RGB videos. This method integrates the generative capabilities of 2D diffusion models with the efficient rendering of 3D Gaussian Splatting.

* Advanced 3D Rectifier: Our 3D rectifier incorporates a two-stage projection module and a novel local coordinate representation to render structurally refined frames with high multi-view consistency.

* Gaussian Consistency Sampling Strategy: We propose a simple yet effective sampling strategy that ensures inter-frame continuity in generated avatar videos. This approach preserves temporal consistency and enhances the overall quality of animated sequences.

Related Work
------------

### Animatable 3D Human Avatars

In recent years, significant advancements in neural scene representations and differentiable neural rendering techniques have demonstrated high effectiveness in synthesizing novel views for both static (Mildenhall et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib25); Sitzmann et al. [2020](https://arxiv.org/html/2412.11599v1#bib.bib38)) and dynamic scenes (Gao et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib6); Park et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib28); Pumarola et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib32)). Building upon these studies, various methods attempt to realize 3D human reconstruction from sparse-view RGB videos.

Among these approaches, a common line of work involves learning a backward mapping to project points from the observation space to the canonical space. A-NeRF (Su et al. [2021](https://arxiv.org/html/2412.11599v1#bib.bib41)) constructs a deterministic backward mapping using bone-relative embeddings. Animatable NeRF (Peng et al. [2021a](https://arxiv.org/html/2412.11599v1#bib.bib29)) trains a backward LBS network, yet it struggles to generalize to out-of-distribution poses. ARAH (Wang et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib44)) and TAVA (Li et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib17)), in contrast, utilize a forward mapping to transfer features from the canonical space to the observation space. While these methods improve generalization to novel poses, their differentiable root-finding algorithms are computationally expensive.

Figure 1: Illustration of the 3D-aware denoising process. (a) The 3D-aware denoising pipeline consists of interlaced 2D and 3D steps. It begins with pure noise input, progressively generating realistic multi-view images of the human avatar with the assistance of pose information. (b) Guided by body segmentation maps as pose cues, the 2D denoiser (blue box in (a)) transforms noised images from the previous 2D or 3D steps into clean ones with enhanced intricate details. It also provides clean images for the subsequent 3D rectifier to achieve accurate 3D human avatar modeling. (c) Given clean images from $N$ anchor views, the 3D rectifier (green box in (a)) performs a two-stage projection leveraging a mesh-based Gaussian local coordinate representation to reconstruct 3D Gaussians, enabling the rendering of multi-view human images with high 3D consistency.

Another line of work focuses on creating a conditional NeRF for modeling dynamic human bodies. Neural Body (Peng et al. [2021b](https://arxiv.org/html/2412.11599v1#bib.bib30)) attaches structured latent codes to posed SMPL vertices and diffuses them into the adjacent 3D space. Despite its capability for high-quality view synthesis, this method performs suboptimally with novel poses. NPC (Su, Bagautdinov, and Rhodin [2023](https://arxiv.org/html/2412.11599v1#bib.bib40)) employs points to store high-frequency details and utilizes a graph neural network to model pose-dependent deformation based on skeleton poses.

A key focus of human avatar animation lies in how to transform input poses into changes in appearance. PoseVocab (Li et al. [2023b](https://arxiv.org/html/2412.11599v1#bib.bib18)) proposes joint-structured pose embeddings to encode dynamic human appearance, successfully mapping low-frequency SMPL-derived attributes to high-frequency dynamic human appearances. However, it neglects the fact that identical poses in different motions can result in varying appearances. Some methods (Peng et al. [2021b](https://arxiv.org/html/2412.11599v1#bib.bib30), [a](https://arxiv.org/html/2412.11599v1#bib.bib29); Wang et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib44)) employ a per-frame global latent vector to encode stochastic information but this representation cannot generalize well to novel poses. In contrast, our method directly models the distribution of appearances under various poses in image space, enabling a more effective capture of high-frequency visual details.

The advent of 3D Gaussian splatting (Kerbl et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib15)) has unlocked new possibilities for high-fidelity avatar reconstruction with real-time rendering. A number of concurrent methods (Hu and Liu [2023](https://arxiv.org/html/2412.11599v1#bib.bib10); Jung et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib13); Li et al. [2023a](https://arxiv.org/html/2412.11599v1#bib.bib16); Qian et al. [2023a](https://arxiv.org/html/2412.11599v1#bib.bib33), [b](https://arxiv.org/html/2412.11599v1#bib.bib34); Liu et al. [2024a](https://arxiv.org/html/2412.11599v1#bib.bib21)) have investigated the integration of 3D Gaussian with SMPL models for constructing a 3D Gaussian avatar. While the majority of them try to improve rendering efficiency by substituting the neural implicit radiance field with 3D Gaussian representation, our work focuses more on improving the modeling of detailed appearances to enhance image quality.

### 3D Diffusion Models

Diffusion models (Ho, Jain, and Abbeel [2020](https://arxiv.org/html/2412.11599v1#bib.bib9)) have demonstrated superior performance in 2D image generation. Due to the absence of a standardized 3D data representation, the expansion of 2D diffusion models into the 3D domain remains an unresolved issue. Some studies (Nichol et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib27); Gupta et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib7); Müller et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib26)) employ 3D supervision to achieve direct generation of 3D content. However, their practical effectiveness is constrained by the limited size and diversity of the available training data (Po et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib31)).

Inspired by 3D GANs, various approaches (Anciukevičius et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib1); Karnewar et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib14); Chen et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib3); Szymanowicz, Rupprecht, and Vedaldi [2023](https://arxiv.org/html/2412.11599v1#bib.bib42)) have been proposed to directly train a diffusion model using 2D image datasets. RenderDiffusion (Anciukevičius et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib1)) builds a 3D-aware denoiser by incorporating tri-plane representation and neural rendering, predicting a clean image from the noised 2D image. Building upon this research, Viewset Diffusion (Szymanowicz, Rupprecht, and Vedaldi [2023](https://arxiv.org/html/2412.11599v1#bib.bib42)) extends it to multi-view settings. In contrast to these approaches, we take a step further to achieve pose-conditioned human-centric generation. We also present a meticulously designed sampling strategy, enabling the smooth generation of dynamic human videos without introducing extra temporal modules, which is not achieved by current works.

Method
------

Problem statement. Given multi-view RGB videos of a single human actor as training data, the goal is to train a model that reconstructs a realistic 3D avatar of the actor and generates high-fidelity, temporally smooth videos when animating the avatar. In the following sections, we first present the preliminaries. Next, we introduce our 3D-aware denoising process, which combines a 2D denoiser and a 3D rectifier. We then detail a simple yet effective inter-frame sampling strategy. Finally, we describe the training objectives of our proposed 3D and 2D modules.

### Preliminary

The diffusion and denoising processes are the core of diffusion models. In this work, we extend them to our multi-view setting, where the data $\boldsymbol{x}:=\boldsymbol{x}^{(1:N)}$ represents a set of $N$ images that consistently depict a 3D human avatar. To establish the correlation between the noise distribution and the data distribution, a hierarchy of variables is defined as $\boldsymbol{x}_{t}^{(1:N)}$, $t=0,\dots,T$, where $\boldsymbol{x}_{T}^{(1:N)}\sim\mathcal{N}(0,\mathbf{I})$ and $\boldsymbol{x}_{0}^{(1:N)}$ is the set of generated multi-view images. Leveraging the properties of the Gaussian distribution, the forward diffusion process that gradually adds Gaussian noise to the clean data $\boldsymbol{x}_{0}^{(1:N)}$ can be written as:

$$q(\boldsymbol{x}_{t}^{(1:N)}|\boldsymbol{x}_{0}^{(1:N)})=\mathcal{N}(\boldsymbol{x}_{t}^{(1:N)};\sqrt{\bar{\alpha}_{t}}\boldsymbol{x}_{0}^{(1:N)},(1-\bar{\alpha}_{t})\mathbf{I}), \tag{1}$$

where $\bar{\alpha}_{t}=\prod_{i=1}^{t}\alpha_{i}$ and $\alpha_{i}$ denotes the predefined schedule constant. Correspondingly, the reverse process can be formulated as:

$$p(\boldsymbol{x}_{t-1}^{(1:N)}|\boldsymbol{x}_{t}^{(1:N)})=\mathcal{N}(\boldsymbol{x}_{t-1}^{(1:N)};\mu_{\theta}(\boldsymbol{x}_{t}^{(1:N)},t),\Sigma_{\theta}(\boldsymbol{x}_{t}^{(1:N)},t)), \tag{2}$$

where the mean and variance can be estimated through a U-Net $D_{\theta}$ trained with loss $L$ to reconstruct the clean data $\boldsymbol{x}_{0}^{(1:N)}$ from its noised counterpart $\boldsymbol{x}_{t}^{(1:N)}$:

$$L=\|D_{\theta}(\boldsymbol{x}_{t}^{(1:N)},t)-\boldsymbol{x}_{0}^{(1:N)}\|^{2}. \tag{3}$$
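
As a minimal sketch of Eqs. (1) and (3), the snippet below draws a noised sample from the closed-form forward process and evaluates the clean-data reconstruction loss. The linear beta schedule, the value of `T`, and the helper names (`alpha_bar`, `q_sample`, `x0_loss`) are assumptions made for illustration; the text only states that the schedule constants are predefined. A flat list of floats stands in for the stacked $N$-view images.

```python
import math
import random


def alpha_bar(alphas, t):
    """Cumulative product alpha_1 * ... * alpha_t (t is 1-indexed; t = 0 gives 1.0)."""
    prod = 1.0
    for a in alphas[:t]:
        prod *= a
    return prod


def q_sample(x0, alphas, t, rng):
    """Draw x_t ~ q(x_t | x_0) as in Eq. (1), element-wise on a flat list."""
    ab = alpha_bar(alphas, t)
    return [math.sqrt(ab) * v + math.sqrt(1.0 - ab) * rng.gauss(0.0, 1.0) for v in x0]


def x0_loss(pred_x0, x0):
    """Squared L2 distance between predicted and true clean data, as in Eq. (3)."""
    return sum((p - v) ** 2 for p, v in zip(pred_x0, x0))


# Hypothetical linear beta schedule (an assumption, not taken from the paper).
T = 1000
betas = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]
alphas = [1.0 - b for b in betas]

rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]               # stand-in for the multi-view image set
xt = q_sample(x0, alphas, 500, rng)  # noised data at timestep t = 500
loss = x0_loss(xt, x0)               # loss of a trivial "denoiser" that returns x_t
```

In a real training loop, `pred_x0` would come from the network $D_{\theta}(\boldsymbol{x}_{t}^{(1:N)},t)$ rather than from `xt` itself.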

3D Gaussian Splatting (Kerbl et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib15)) is an effective point-based representation consisting of a set of anisotropic Gaussians. Each 3D Gaussian is parameterized by its center position $\boldsymbol{\mu}\in\mathbb{R}^{3}$, covariance matrix $\boldsymbol{\Sigma}\in\mathbb{R}^{7}$, opacity $\alpha\in\mathbb{R}$ and color $\boldsymbol{c}\in\mathbb{R}^{3}$. By splatting 3D Gaussians onto 2D image planes, we can perform point-based rendering:

$$\begin{gathered}G(\boldsymbol{p},\boldsymbol{\mu}_{i},\boldsymbol{\Sigma}_{i})=\exp\left(-\frac{1}{2}(\boldsymbol{p}-\boldsymbol{\mu}_{i})^{\intercal}\boldsymbol{\Sigma}_{i}^{-1}(\boldsymbol{p}-\boldsymbol{\mu}_{i})\right),\\ \boldsymbol{c}(\boldsymbol{p})=\sum_{i\in\mathcal{K}}\boldsymbol{c}_{i}\alpha_{i}^{\prime}\prod_{j=1}^{i-1}(1-\alpha_{j}^{\prime}),\quad\alpha_{i}^{\prime}=\alpha_{i}G(\boldsymbol{p},\boldsymbol{\mu}_{i},\boldsymbol{\Sigma}_{i}).\end{gathered} \tag{4}$$

Here, $\boldsymbol{p}$ is the coordinate of the queried point. $\boldsymbol{\mu}_{i}$, $\boldsymbol{\Sigma}_{i}$, $\boldsymbol{c}_{i}$, $\alpha_{i}$, and $\alpha_{i}^{\prime}$ denote the center, covariance, color, opacity, and density of the $i$-th Gaussian, respectively. $G(\boldsymbol{p},\boldsymbol{\mu}_{i},\boldsymbol{\Sigma}_{i})$ represents the value of the $i$-th Gaussian at position $\boldsymbol{p}$. $\mathcal{K}$ is the sorted list of Gaussians in the current tile.
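
The compositing rule in Eq. (4) can be illustrated for a single pixel as follows. This is a sketch under simplifying assumptions: the Gaussians are taken to be already projected to 2D (2D means and 2x2 covariances) and already sorted front to back, and the dictionary keys and function names are hypothetical, not part of any 3DGS library.

```python
import math


def gaussian_2d(p, mu, cov):
    """Evaluate exp(-0.5 (p - mu)^T cov^{-1} (p - mu)) for a 2x2 covariance."""
    (a, b), (c, d) = cov
    det = a * d - b * c
    inv = ((d / det, -b / det), (-c / det, a / det))
    dx, dy = p[0] - mu[0], p[1] - mu[1]
    m = dx * (inv[0][0] * dx + inv[0][1] * dy) + dy * (inv[1][0] * dx + inv[1][1] * dy)
    return math.exp(-0.5 * m)


def composite(p, gaussians):
    """Front-to-back alpha blending of sorted Gaussians at pixel p, following Eq. (4)."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # running product of (1 - alpha'_j) for Gaussians in front
    for g in gaussians:
        a = g["opacity"] * gaussian_2d(p, g["mu"], g["cov"])  # alpha'_i
        for ch in range(3):
            color[ch] += g["color"][ch] * a * transmittance
        transmittance *= 1.0 - a
    return color


identity = ((1.0, 0.0), (0.0, 1.0))
gaussians = [
    {"mu": (0.0, 0.0), "cov": identity, "opacity": 0.5, "color": (1.0, 0.0, 0.0)},  # front, red
    {"mu": (0.0, 0.0), "cov": identity, "opacity": 1.0, "color": (0.0, 0.0, 1.0)},  # back, blue
]
print(composite((0.0, 0.0), gaussians))  # [0.5, 0.0, 0.5]
```

At the shared center both Gaussians evaluate to 1, so the half-opaque red Gaussian contributes 0.5 and lets half of the blue one through.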

### 3D-aware Denoising Process

To facilitate human avatar reconstruction (given seen poses) and animation (given novel poses), we propose a generative 3D-aware denoising process that takes as input pure noise from $N$ anchor views together with SMPL (Loper et al. [2015](https://arxiv.org/html/2412.11599v1#bib.bib24)) pose information and outputs high-quality clean images of the clothed human body. Because the 2D denoiser processes images from different views independently at each step, it cannot by itself ensure consistent human geometry and texture across views. To address this issue, $k$ 3D rectifying steps are inserted between the 2D denoising steps to maintain the 3D consistency of the generated images. Since the overall denoising process generates large-scale global structure at early stages and finer details at later stages (Huang et al. [2023](https://arxiv.org/html/2412.11599v1#bib.bib11)), and since 3D consistency among multi-view images is mainly reflected in large-scale features, we insert 3D steps only in the early stages. This approach aims to improve 3D consistency without jeopardizing the quality of fine texture generation. As illustrated in Fig. [1](https://arxiv.org/html/2412.11599v1#Sx2.F1 "Figure 1 ‣ Animatable 3D Human Avatars ‣ Related Work ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling"), we first apply the initial 3D rectifying step after the first 2D denoising step. Then, we select a timestep $t_{split}$ as the split point between the early and later stages of denoising and insert the final 3D rectifying step at this point. The remaining $k-2$ 3D rectifying steps are evenly inserted among the 2D steps between these two. Finally, a few 2D steps are appended to the overall denoising process to further refine delicate local textures.
The details of the 2D denoiser and the 3D rectifier are introduced below.
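
The placement rule for the 3D rectifying steps can be sketched as a small scheduling routine. This is a minimal illustration under assumptions that are not stated in the text: steps are indexed by their position in the sequence of 2D steps rather than by diffusion timestep, `split_index` is a hypothetical index standing in for $t_{split}$, and "evenly inserted" is read as evenly spaced positions rounded down to whole steps.

```python
def rectifier_positions(split_index: int, k: int) -> list[int]:
    """Return the 1-based indices of the 2D steps that are followed by a 3D step.

    Assumptions (hypothetical, for illustration only):
      * 2D steps are numbered 1, 2, ... in execution order;
      * the first 3D step follows 2D step 1, the last follows 2D step `split_index`;
      * the remaining k - 2 steps are spread evenly in between.
    """
    if k < 2:
        raise ValueError("need at least the first and the final 3D step (k >= 2)")
    span = split_index - 1
    if span < k - 1:
        raise ValueError("not enough 2D steps before the split point for k 3D steps")
    # k evenly spaced positions from 1 to split_index, rounded down.
    return [1 + (i * span) // (k - 1) for i in range(k)]


def build_schedule(total_2d_steps: int, split_index: int, k: int) -> list[str]:
    """Interleave '2D' and '3D' labels for the whole denoising process."""
    positions = set(rectifier_positions(split_index, k))
    schedule = []
    for step in range(1, total_2d_steps + 1):
        schedule.append("2D")
        if step in positions:
            schedule.append("3D")
    return schedule


if __name__ == "__main__":
    print(build_schedule(total_2d_steps=12, split_index=10, k=4))
```

With these toy values the schedule starts with a 2D step followed by a 3D step and ends with two plain 2D steps after the split point, mirroring the "few 2D steps appended" at the end of the process.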


### Pixel-level 2D Denoiser


The 2D denoiser is a fundamental component of our 3D-aware denoising process. It functions as a refiner that enhances local details in the output images of prior 2D or 3D steps, and it also provides clean images for the subsequent 3D rectifying step. Our 2D denoiser adopts a U-Net-style architecture (Ronneberger, Fischer, and Brox [2015](https://arxiv.org/html/2412.11599v1#bib.bib36)) that takes noisy images, human body segmentation maps and the denoising timestep $t$ as inputs and predicts the denoised clean image at each step. To effectively incorporate pose cues, we draw inspiration from SFTGAN (Wang et al. [2018](https://arxiv.org/html/2412.11599v1#bib.bib45)) and introduce an SFT layer into each U-Net block to modulate the output of the 2D convolution layer. Given that the 3D rectifier can render images from any camera view, our 2D denoiser is trained on frames with varying views to ensure robustness.
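
For intuition, the SFT modulation introduced by SFTGAN applies a spatially varying affine transform, $\gamma \odot F + \beta$, to a feature map $F$, with $\gamma$ and $\beta$ predicted from the condition (here, the segmentation map). The sketch below shows only this elementwise operation on nested lists. How $\gamma$ and $\beta$ are predicted and where exactly the layer sits inside each block are not specified in the text, so the values used here are made-up placeholders.

```python
def sft_modulate(features, gamma, beta):
    """Apply the SFT affine modulation gamma * F + beta elementwise.

    All three arguments are H x W nested lists of floats for one channel.
    In a real network gamma and beta would be produced by small conv layers
    applied to the condition map; here they are passed in directly.
    """
    return [
        [g * f + b for f, g, b in zip(f_row, g_row, b_row)]
        for f_row, g_row, b_row in zip(features, gamma, beta)
    ]


# Toy 2x2 example with placeholder values.
features = [[1.0, 2.0], [3.0, 4.0]]
gamma = [[1.0, 0.5], [2.0, 0.0]]   # per-pixel scale
beta = [[0.0, 1.0], [-1.0, 0.5]]   # per-pixel shift
modulated = sft_modulate(features, gamma, beta)
```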


### Gaussian-based 3D Rectifier


The 3D rectifier plays an essential role in our 3D-aware denoising process. It takes in clean images $\boldsymbol{I}^{(1:N)}$ from $N$ anchor views produced by the previous 2D denoiser and reconstructs the current 3D Gaussians of the avatar. Structure-aligned multi-view images with higher 3D consistency than those of the previous 2D step can then be rendered in real time. Note that the 3D rectifier outputs clean images, which can be regarded as “$\boldsymbol{x}_{0}$”. Therefore, we can naturally integrate it with the next denoising step using the DDIM (Song, Meng, and Ermon [2020](https://arxiv.org/html/2412.11599v1#bib.bib39)) sampling trick:

$$\boldsymbol{x}_{t-1}^{(1:N)}=\sqrt{\alpha_{t-1}}\,\hat{\boldsymbol{x}}_{0}^{(1:N)}+c_{t}\,\hat{\epsilon}_{t}^{(1:N)}+\sigma_{t}\,\epsilon_{t}^{(1:N)}, \tag{5}$$

where $c_{t}=\sqrt{1-\alpha_{t-1}-\sigma_{t}^{2}}$ and $\sigma_{t}$ are coefficients, $\hat{\boldsymbol{x}}_{0}^{(1:N)}$ is the output of the 3D rectifier and $\epsilon_{t}^{(1:N)}$ is sampled random noise.
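
As a rough illustration, the update in Eq. (5) can be written for a flat list of pixel values as follows. This is a sketch under assumptions: $\alpha_{t-1}$ is taken to be the cumulative signal coefficient in DDIM notation, the predicted noise $\hat{\epsilon}_t$ is supplied by the caller because the text does not say how it is obtained, and the function name and arguments are hypothetical.

```python
import math
import random


def ddim_step(x0_hat, eps_hat, alpha_prev, sigma_t, rng=None):
    """One update of Eq. (5): x_{t-1} = sqrt(a_{t-1}) x0_hat + c_t eps_hat + sigma_t eps.

    x0_hat, eps_hat: flat lists of floats (e.g. flattened pixels of all N views).
    alpha_prev:      alpha_{t-1}, assumed to be the cumulative DDIM coefficient.
    sigma_t:         noise scale; sigma_t = 0 gives the deterministic DDIM update.
    """
    radicand = 1.0 - alpha_prev - sigma_t ** 2
    if radicand < 0.0:
        raise ValueError("1 - alpha_{t-1} - sigma_t^2 must be non-negative")
    c_t = math.sqrt(radicand)
    rng = rng or random.Random()
    return [
        math.sqrt(alpha_prev) * x0 + c_t * e_hat + sigma_t * rng.gauss(0.0, 1.0)
        for x0, e_hat in zip(x0_hat, eps_hat)
    ]
```

Setting `sigma_t=0.0` removes the random term, which makes the step reproducible and easy to check by hand.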


Specifically, we start by rendering body segmentation maps $\boldsymbol{S}^{(1:N)}=\mathcal{R}(\mathcal{M},\boldsymbol{c}^{(1:N)})$, where $\mathcal{M}$ and $\boldsymbol{c}^{(1:N)}$ denote the current posed SMPL model and the camera poses, respectively, and $\mathcal{R}$ is the mesh rasterizer. These maps serve as pose conditions that help the neural network $f_{ext}$ extract features for perceiving the 3D actor. As in the 2D denoiser, we insert SFT layers into each U-Net block to leverage the pose guidance. The entire process of extracting pixel-aligned features can be formulated as:

$$\boldsymbol{F}_{pix}^{(1:N)}=f_{ext}(\boldsymbol{I}^{(1:N)},\boldsymbol{S}^{(1:N)},t). \tag{6}$$


Figure 2: An illustration of the inter-frame Gaussian consistency sampling strategy for improving temporal continuity.


After pixel-aligned features are fetched, a key question is how to build the 3D representation of the avatar. Considering the flexibility and efficiency of 3D Gaussian Splatting, we choose it as our 3D representation. Different from current works (Li et al. [2024](https://arxiv.org/html/2412.11599v1#bib.bib19); Jiang et al. [2024](https://arxiv.org/html/2412.11599v1#bib.bib12)) which use regressed 2D maps to store Gaussian attributes, we seek to predict these attributes with a two-stage projection strategy which fully exploits the 3D spatial information.


Stage 1: query Gaussian local coordinates. For any Gaussian $P$ in 3D space, its projection $T$ onto the nearest mesh triangle can be represented by barycentric coordinates $(\lambda_{1},\lambda_{2},\lambda_{3})$, where $\lambda_{1}+\lambda_{2}+\lambda_{3}=1$. Therefore, $P$ can be described by a four-component local coordinate $\boldsymbol{\xi}=(\lambda_{1},\lambda_{2},\lambda_{3},m)$, where $m=|\boldsymbol{TP}|$, and the actual position of $P$ is recovered as $P=\lambda_{1}A+\lambda_{2}B+\lambda_{3}C+m\boldsymbol{n}$, where $A,B,C$ and $\boldsymbol{n}$ are the vertex positions and the normal vector of the triangle, respectively.

After Gaussian positions are initialized by sampling uniformly on the SMPL mesh, the pixel-aligned features $\boldsymbol{F}_{pix}^{(1:N)}$ are used to query how each Gaussian should be displaced. Rather than directly querying displacements in observation space, we query the local coordinates instead. This trick constrains the Gaussian movement within a reasonable range, helps model subtle clothes wrinkles and facilitates our inter-frame sampling strategy. Specifically, we project each Gaussian onto $\boldsymbol{F}_{pix}^{(1:N)}$, apply bilinear interpolation to obtain a feature vector for each view, and concatenate these vectors along the feature dimension. If a Gaussian is not visible under a certain view, the corresponding vector is set to zero. Afterwards, a light-weight MLP takes the Gaussian positions in the canonical pose space and the projected features as input to predict the Gaussian local coordinates.

Stage 2: query other Gaussian attributes. After deriving the actual Gaussian positions from the local coordinates, we apply another projection to fetch the offsets of the remaining Gaussian attributes. Finally, clean multi-view images with higher 3D consistency can be rendered rapidly.
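
The local coordinate of Stage 1 can be sketched as an encode/decode pair for a single triangle. This is an illustrative sketch rather than the authors' implementation: it assumes the nearest triangle is already known, uses a unit normal, and treats $m$ as a signed offset along that normal (the text defines $m$ as a length), so that points on either side of the triangle round-trip correctly. The function names are hypothetical.

```python
import math


def _sub(a, b):
    return [a[i] - b[i] for i in range(3)]


def _dot(a, b):
    return sum(a[i] * b[i] for i in range(3))


def _cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def unit_normal(A, B, C):
    """Unit normal of triangle ABC (right-hand rule)."""
    n = _cross(_sub(B, A), _sub(C, A))
    length = math.sqrt(_dot(n, n))
    return [c / length for c in n]


def to_local(P, A, B, C):
    """Encode point P as xi = (l1, l2, l3, m) relative to triangle ABC."""
    n = unit_normal(A, B, C)
    m = _dot(_sub(P, A), n)                     # signed offset along the normal
    T = [P[i] - m * n[i] for i in range(3)]     # projection of P onto the plane
    # Barycentric coordinates of T with respect to A, B, C.
    v0, v1, v2 = _sub(B, A), _sub(C, A), _sub(T, A)
    d00, d01, d11 = _dot(v0, v0), _dot(v0, v1), _dot(v1, v1)
    d20, d21 = _dot(v2, v0), _dot(v2, v1)
    denom = d00 * d11 - d01 * d01
    l2 = (d11 * d20 - d01 * d21) / denom
    l3 = (d00 * d21 - d01 * d20) / denom
    return (1.0 - l2 - l3, l2, l3, m)


def to_world(xi, A, B, C):
    """Decode xi back to a position: P = l1*A + l2*B + l3*C + m*n."""
    l1, l2, l3, m = xi
    n = unit_normal(A, B, C)
    return [l1 * A[i] + l2 * B[i] + l3 * C[i] + m * n[i] for i in range(3)]
```

Because the decode step depends only on the triangle's current vertices, the same $\boldsymbol{\xi}$ yields a new position whenever the underlying mesh is re-posed.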


### Inter-frame Gaussian Consistency Sampling


As discussed above, the 3D-aware denoising process can generate highly realistic single-frame renderings from pure noise. However, applying it independently to each frame in video generation can cause noticeable inconsistencies that severely affect visual quality. Adding a temporal module is one possible remedy, but it increases the computational cost of training. Instead, we design a novel inter-frame Gaussian consistency sampling strategy, applied at inference time, to ensure seamless inter-frame transitions when synthesizing videos. The core idea is to use information from the previous frame to generate a rough image of the current frame, and then perform several late-stage 2D denoising steps to correct visual artifacts. To obtain the current frame’s Gaussians, we need to propagate the SMPL pose change to the Gaussian positions. This can be achieved easily using our mesh-based local coordinates. As depicted in Fig. [2](https://arxiv.org/html/2412.11599v1#Sx3.F2 "Figure 2 ‣ Gaussian-based 3D Rectifier ‣ Method ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling"), given the Gaussian local coordinates $\boldsymbol{\xi}_{prev}^{(1:n)}$ of the previous frame and the current frame’s SMPL mesh $\mathcal{M}_{cur}$, the current Gaussian positions $\boldsymbol{\mu}_{cur}^{(1:n)}$ can be derived by:

$$\boldsymbol{\mu}_{cur}^{(1:n)}=\mathcal{P}(\boldsymbol{\xi}_{prev}^{(1:n)},\mathcal{M}_{cur}), \tag{7}$$

where $n$ is the number of Gaussians and $\mathcal{P}$ is an operation that transforms Gaussian local coordinates to their actual positions in the observation space. However, rendering images directly from these Gaussians may lead to noticeable artifacts. To mitigate this, we add slight noise to the rendered images and perform several 2D denoising steps starting from a smaller timestep, yielding more plausible results. Finally, we apply an additional 3D step to obtain the 3D Gaussians of the current frame. Compared with generating each frame separately, this sampling strategy offers clear advantages in inter-frame continuity. It is also computationally efficient, since denoising is performed only partially, from a relatively small timestep.
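
The operation $\mathcal{P}$ in Eq. (7) can be sketched as a loop over Gaussians that re-evaluates each local coordinate on the current mesh. The sketch assumes, beyond what the text states, that every Gaussian keeps a fixed face index between frames and that the mesh is given as a vertex list plus a list of vertex-index triples. The names `propagate`, `face_ids`, `vertices_cur` and `faces` are hypothetical.

```python
import math


def _unit_normal(A, B, C):
    """Unit normal of triangle ABC (right-hand rule)."""
    u = [B[i] - A[i] for i in range(3)]
    v = [C[i] - A[i] for i in range(3)]
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    length = math.sqrt(sum(c * c for c in n))
    return [c / length for c in n]


def propagate(xi_prev, face_ids, vertices_cur, faces):
    """Map previous-frame local coordinates onto the current-frame mesh.

    xi_prev:      list of (l1, l2, l3, m) tuples, one per Gaussian.
    face_ids:     index of the triangle each Gaussian is attached to (assumed fixed).
    vertices_cur: vertex positions of the current-frame mesh.
    faces:        list of (i, j, k) vertex-index triples.
    """
    positions = []
    for (l1, l2, l3, m), f in zip(xi_prev, face_ids):
        A, B, C = (vertices_cur[j] for j in faces[f])
        n = _unit_normal(A, B, C)
        positions.append(
            [l1 * A[i] + l2 * B[i] + l3 * C[i] + m * n[i] for i in range(3)]
        )
    return positions


# Toy check: translating the mesh by +2 along z carries the Gaussian with it.
faces = [(0, 1, 2)]
vertices_cur = [[0.0, 0.0, 2.0], [1.0, 0.0, 2.0], [0.0, 1.0, 2.0]]
mu_cur = propagate([(0.5, 0.25, 0.25, 0.1)], [0], vertices_cur, faces)
```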


### Training objective


The complete training process includes two separate training workflows for the 3D rectifier $G_{3D}$ and the 2D denoiser $D_{2D}$. $G_{3D}$ is trained with a loss function that includes both a photometric loss and a mask loss. Given clean video frames $\boldsymbol{I}_{f}^{(1:N)}$ from $N$ anchor views and conditional SMPL segmentation maps $\boldsymbol{S}_{f}^{(1:N)}$ of frame $f$, the training objective is to reconstruct accurate 3D Gaussians from the given input to achieve consistent 3D rendering from $M$ specified views $\boldsymbol{c}^{(1:M)}$. The loss function measures the discrepancy between the rendered multi-view images and the ground-truth images, including the $L_{2}$ loss for the RGB images:

$$L_{rgb}=\|G_{3D}(\boldsymbol{I}_{f}^{(1:N)},\boldsymbol{S}_{f}^{(1:N)},\boldsymbol{c}^{(1:M)})-\boldsymbol{I}_{f}^{(1:M)}\|^{2}, \tag{8}$$

and the $L_{2}$ loss $L_{mask}$ for the masks, which is omitted for brevity. The overall loss of $G_{3D}$ can be represented as:

$$L_{3D}=\lambda_{rgb}L_{rgb}+\lambda_{mask}L_{mask}. \tag{9}$$
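
A minimal sketch of Eqs. (8) and (9) on flattened images is given below. The mask term is assumed to take the same squared $L_2$ form as the RGB term, since its definition is omitted in the text, and the default weights are placeholders rather than the values used in training.

```python
def squared_l2(pred, target):
    """Squared L2 distance between two equally sized flat lists."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    return sum((p - t) ** 2 for p, t in zip(pred, target))


def rectifier_loss(rgb_pred, rgb_gt, mask_pred, mask_gt,
                   lambda_rgb=1.0, lambda_mask=1.0):
    """L_3D = lambda_rgb * L_rgb + lambda_mask * L_mask (weights are placeholders)."""
    l_rgb = squared_l2(rgb_pred, rgb_gt)
    l_mask = squared_l2(mask_pred, mask_gt)   # assumed form of the omitted mask loss
    return lambda_rgb * l_rgb + lambda_mask * l_mask
```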


For the 2D denoiser $D_{2D}$, given a clean video frame $\boldsymbol{I}_{f}$, the corresponding SMPL segmentation map $\boldsymbol{S}_{f}$ and timestep $t$, we apply only an RGB loss to train the model:

$$L_{2D}=\|D_{2D}(\boldsymbol{I}_{f},\boldsymbol{S}_{f},t)-\boldsymbol{I}_{f}\|^{2}. \tag{10}$$


Figure 3: Qualitative comparison of single-frame novel pose synthesis results against ARAH (Wang et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib44)) and PoseVocab (Li et al. [2023b](https://arxiv.org/html/2412.11599v1#bib.bib18)) on sequences 313 and 315 of the ZJU-MoCap dataset. Please zoom in for better observation.


Table 1: Quantitative comparison of single-frame novel pose synthesis against ARAH (Wang et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib44)) and PoseVocab (Li et al. [2023b](https://arxiv.org/html/2412.11599v1#bib.bib18)) on 4 sequences of the ZJU-MoCap dataset. Bold indicates the best, while underline denotes the second-best.


Experiments
-----------


### Implementation Details


The 3D rectifier takes clean images at a resolution of 512×512 from $N=4$ anchor views as input, reconstructs 3D avatar Gaussians, and renders $M=8$ multi-view images at the same resolution. The number of Gaussians sampled on the SMPL (Loper et al. [2015](https://arxiv.org/html/2412.11599v1#bib.bib24)) mesh is $n=373056$. We train this model with a learning rate of $5\times 10^{-5}$. The 2D denoiser operates at a resolution of 512×512, consistent with the resolution of the ground-truth images. This model is trained with a learning rate of $4\times 10^{-4}$. For single-frame novel pose synthesis, our 3D-aware denoising process has 20 denoising steps in total. All experiments are conducted on NVIDIA RTX 3080 Ti GPUs.


Dataset. Our experiments are conducted on the ZJU-MoCap (Peng et al. [2021b](https://arxiv.org/html/2412.11599v1#bib.bib30)) dataset, which includes 9 sequences captured with 23 calibrated cameras. Each sequence features a video of an individual performing a specific action. We use 80% of the frames from each sequence for training and leave the remaining frames for testing.


Metrics. We adopt Peak Signal-to-Noise Ratio (PSNR), Learned Perceptual Image Patch Similarity (LPIPS) (Zhang et al. [2018](https://arxiv.org/html/2412.11599v1#bib.bib46)), and Fréchet Inception Distance (FID) (Heusel et al. [2017](https://arxiv.org/html/2412.11599v1#bib.bib8)) for quantitative evaluation.
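
Of the three metrics, PSNR is the only one with a closed form that fits in a few lines; LPIPS and FID rely on pretrained networks and are not sketched here. The function below is a generic PSNR on flattened images and assumes pixel values in $[0, 1]$ (`max_value=1.0`); the evaluation code used for the reported numbers may differ in details such as masking or value range.

```python
import math


def psnr(pred, target, max_value=1.0):
    """Peak Signal-to-Noise Ratio in dB between two equally sized flat lists."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0.0:
        return math.inf   # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```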


Figure 4: Consecutive frame generation results. Top row shows results using the proposed sampling strategy; bottom row displays results from independent sampling.


Baselines. We compare our method with two state-of-the-art counterparts that are applicable to the ZJU-MoCap dataset: ARAH (Wang et al. [2022](https://arxiv.org/html/2412.11599v1#bib.bib44)) and PoseVocab (Li et al. [2023b](https://arxiv.org/html/2412.11599v1#bib.bib18)). We retrained the baseline methods using their officially released code so that all methods share the same training/test split.


### Single-Frame Novel Pose Synthesis


To evaluate the visual quality of the generated frames and the pose generalization performance of our method, testing is specifically performed on novel poses that are not included in the training dataset. It is important to note that due to the stochasticity introduced by factors such as clothing wrinkles, for the unseen poses, ground-truth images represent only _one possible scenario_. Therefore, metrics emphasizing pixel-level correspondence, such as PSNR, may not comprehensively evaluate the fidelity of the generated images. In our evaluation, we primarily employ LPIPS and FID, metrics describing perceptual similarity, while we also provide experimental results with PSNR (in light font).


Quantitative Results. When performing novel pose synthesis on different IDs, we search for the best $(t_{split}, k)$ pair for each ID to account for their differing clothes wrinkles and action dynamics. Tab. [1](https://arxiv.org/html/2412.11599v1#Sx3.T1 "Table 1 ‣ Training objective ‣ Method ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling") presents a quantitative comparison among ARAH, PoseVocab and our method across four sequences of the ZJU-MoCap dataset; we report the average values of these metrics across all test frames. Our method achieves the best or second-best results across the four sequences. While ARAH achieves the highest PSNR, our approach offers a balanced performance, excelling in LPIPS and FID, which indicates that our method has good generalizability and generative capability given novel poses.


Qualitative Results. The qualitative results are shown in Fig. [3](https://arxiv.org/html/2412.11599v1#Sx3.F3 "Figure 3 ‣ Training objective ‣ Method ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling"). ARAH tends to produce relatively blurry images in these scenarios. Images generated by PoseVocab exhibit relatively clear texture details, albeit with some issues: for instance, the stripes on the T-shirt appear somewhat blurry, and there is color “bleeding” from the green clothing onto the arms. By contrast, the images produced by our method show clearer fine details and more realistic clothing wrinkles.


### Continuous Video Synthesis


Fig. [4](https://arxiv.org/html/2412.11599v1#Sx4.F4 "Figure 4 ‣ Implementation Details ‣ Experiments ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling") illustrates the experimental results of generating a sequence of consecutive frames using our method, which is also performed on novel poses. When the Gaussian consistency sampling strategy is not utilized, and instead, sampling begins with pure Gaussian noise for each frame, the resulting frames experience pronounced inter-frame inconsistency. On the contrary, deriving the 3D Gaussians for the current frame with the Gaussian local coordinates from the previous frame first and then proceeding with subsequent 2D denoising processes significantly enhances the continuity between the output frames.


Table 2: Quantitative ablation study on our 3D-aware denoising process. Ours-2D uses only 2D denoisers, while Ours-3D retains the initial 3D rectifier but omits later 2D or 3D steps.


Figure 5: Novel pose synthesis results with different designs of our 3D-aware denoiser.


### Ablation Studies


3D-aware Denoising Process. The complete 3D-aware denoising process consists of two submodules: the 3D rectifier and the 2D denoiser. The LPIPS and FID metrics in Tab. [2](https://arxiv.org/html/2412.11599v1#Sx4.T2 "Table 2 ‣ Continuous Video Synthesis ‣ Experiments ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling") indicate that the 2D-only process performs relatively well, while images obtained solely through the 3D counterpart are the least satisfactory. The results in Fig. [5](https://arxiv.org/html/2412.11599v1#Sx4.F5 "Figure 5 ‣ Continuous Video Synthesis ‣ Experiments ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling") reveal that images generated solely through 2D denoising display richer local details but have limitations in overall modeling. In contrast, the 3D process excels in producing reasonable global attributes but fails to model wrinkles and occlusions. Performing the two processes consecutively combines their strengths, as depicted in “ours”. We further analyze the impact of the split point $t_{split}$ and the number of 3D rectifier insertions $k$ on the result. As presented in Tab. [3](https://arxiv.org/html/2412.11599v1#Sx4.T3 "Table 3 ‣ Ablation Studies ‣ Experiments ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling"), as $t_{split}$ increases, the 2D denoiser applies stronger corrections, resulting in lower LPIPS and FID values, which indicates improved image realism. Moreover, the number of 3D rectifying steps has little impact on image quality when $t_{split}$ is fixed.


Table 3: Comparison of image generation quality under varying $t_{split}$ and $k$.


Figure 6: Novel pose synthesis results without (w/o) and with (w/) our mesh-based local coordinate representation.


Gaussian Local Coordinate Representation. We conduct an ablation study comparing the image generation quality of our framework with and without our mesh-based Gaussian local coordinate representation. From Fig. [6](https://arxiv.org/html/2412.11599v1#Sx4.F6 "Figure 6 ‣ Ablation Studies ‣ Experiments ‣ 3D2-Actor: Learning Pose-Conditioned 3D-Aware Denoiser for Realistic Gaussian Avatar Modeling"), we can see that this representation allows 3D Gaussians to move flexibly within a reasonable range, resulting in more realistic modeling of body and clothes details, such as wrinkles and occlusions. In contrast, a 3D-aware denoiser without this representation lacks the ability to express details reasonably. As a consequence, the generated images tend to exhibit unnatural clothing wrinkles and fail to accurately model limbs, leading to a significant decline in visual quality.


Conclusion
----------


We present 3D²-Actor, a pose-conditioned 3D-aware denoiser designed for the high-fidelity reconstruction and animation of 3D human avatars. Our approach employs a 2D denoiser to refine the intricate details of noisy images from the previous step, generating high-quality clean images that facilitate the 3D reconstruction process in the subsequent 3D rectifier. Complementing this, our 3D rectifier employs a two-stage projection strategy with a novel local coordinate representation to render multi-view images with enhanced 3D consistency by incorporating 3DGS-based techniques. Additionally, we introduce a Gaussian consistency sampling strategy that improves inter-frame continuity in video synthesis without additional training overhead. Our method achieves realistic human animation and high-quality dynamic video generation with novel poses.


Acknowledgments
---------------


This work is partly supported by the National Key R&D Program of China (2022ZD0161902), Beijing Municipal Natural Science Foundation (No. 4222049), the National Natural Science Foundation of China (No. 62202031), and the Fundamental Research Funds for the Central Universities.


References
----------


* Anciukevičius et al. (2023) Anciukevičius, T.; Xu, Z.; Fisher, M.; Henderson, P.; Bilen, H.; Mitra, N.J.; and Guerrero, P. 2023. Renderdiffusion: Image diffusion for 3d reconstruction, inpainting and generation. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 12608–12618.

|

| 201 |

+

* Bogo et al. (2015) Bogo, F.; Black, M.J.; Loper, M.; and Romero, J. 2015. Detailed full-body reconstructions of moving people from monocular RGB-D sequences. In _Proceedings of the IEEE international conference on computer vision_, 2300–2308.

|

| 202 |

+

* Chen et al. (2023) Chen, H.; Gu, J.; Chen, A.; Tian, W.; Tu, Z.; Liu, L.; and Su, H. 2023. Single-Stage Diffusion NeRF: A Unified Approach to 3D Generation and Reconstruction. In _ICCV_.

|

| 203 |

+

* Collet et al. (2015) Collet, A.; Chuang, M.; Sweeney, P.; Gillett, D.; Evseev, D.; Calabrese, D.; Hoppe, H.; Kirk, A.; and Sullivan, S. 2015. High-quality streamable free-viewpoint video. _ACM Transactions on Graphics (ToG)_, 34(4): 1–13.

|

| 204 |

+

* Dou et al. (2016) Dou, M.; Khamis, S.; Degtyarev, Y.; Davidson, P.; Fanello, S.R.; Kowdle, A.; Escolano, S.O.; Rhemann, C.; Kim, D.; Taylor, J.; et al. 2016. Fusion4d: Real-time performance capture of challenging scenes. _ACM Transactions on Graphics (ToG)_, 35(4): 1–13.

|

| 205 |

+

* Gao et al. (2021) Gao, C.; Saraf, A.; Kopf, J.; and Huang, J.-B. 2021. Dynamic view synthesis from dynamic monocular video. In _Proceedings of the IEEE/CVF International Conference on Computer Vision_, 5712–5721.

|

| 206 |

+

* Gupta et al. (2023) Gupta, A.; Xiong, W.; Nie, Y.; Jones, I.; and Oğuz, B. 2023. 3dgen: Triplane latent diffusion for textured mesh generation. _arXiv preprint arXiv:2303.05371_.

|

| 207 |

+

* Heusel et al. (2017) Heusel, M.; Ramsauer, H.; Unterthiner, T.; Nessler, B.; and Hochreiter, S. 2017. Gans trained by a two time-scale update rule converge to a local nash equilibrium. _Advances in neural information processing systems_, 30.

* Ho, Jain, and Abbeel (2020) Ho, J.; Jain, A.; and Abbeel, P. 2020. Denoising diffusion probabilistic models. _Advances in Neural Information Processing Systems_, 33: 6840–6851.

* Hu and Liu (2023) Hu, S.; and Liu, Z. 2023. GauHuman: Articulated Gaussian splatting from monocular human videos. _arXiv preprint arXiv:2312.02973_.

* Huang et al. (2023) Huang, Y.; Wang, J.; Shi, Y.; Tang, B.; Qi, X.; and Zhang, L. 2023. Dreamtime: An Improved Optimization Strategy for Diffusion-guided 3D Generation. In _The Twelfth International Conference on Learning Representations_.

* Jiang et al. (2024) Jiang, Y.; Liao, Q.; Li, X.; Ma, L.; Zhang, Q.; Zhang, C.; Lu, Z.; and Shan, Y. 2024. UV Gaussians: Joint Learning of Mesh Deformation and Gaussian Textures for Human Avatar Modeling. _arXiv preprint arXiv:2403.11589_.

* Jung et al. (2023) Jung, H.; Brasch, N.; Song, J.; Perez-Pellitero, E.; Zhou, Y.; Li, Z.; Navab, N.; and Busam, B. 2023. Deformable 3D Gaussian splatting for animatable human avatars. _arXiv preprint arXiv:2312.15059_.

* Karnewar et al. (2023) Karnewar, A.; Vedaldi, A.; Novotny, D.; and Mitra, N.J. 2023. HoloDiffusion: Training a 3D diffusion model using 2D images. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 18423–18433.

* Kerbl et al. (2023) Kerbl, B.; Kopanas, G.; Leimkühler, T.; and Drettakis, G. 2023. 3D Gaussian Splatting for Real-Time Radiance Field Rendering. _ACM Transactions on Graphics_, 42(4).

* Li et al. (2023a) Li, M.; Tao, J.; Yang, Z.; and Yang, Y. 2023a. Human101: Training 100+ FPS human Gaussians in 100s from 1 view. _arXiv preprint arXiv:2312.15258_.

* Li et al. (2022) Li, R.; Tanke, J.; Vo, M.; Zollhöfer, M.; Gall, J.; Kanazawa, A.; and Lassner, C. 2022. TAVA: Template-free animatable volumetric actors. In _European Conference on Computer Vision_, 419–436. Springer.

* Li et al. (2023b) Li, Z.; Zheng, Z.; Liu, Y.; Zhou, B.; and Liu, Y. 2023b. PoseVocab: Learning Joint-structured Pose Embeddings for Human Avatar Modeling. In _ACM SIGGRAPH Conference Proceedings_.

* Li et al. (2024) Li, Z.; Zheng, Z.; Wang, L.; and Liu, Y. 2024. Animatable Gaussians: Learning pose-dependent Gaussian maps for high-fidelity human avatar modeling. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 19711–19722.

* Liu et al. (2023) Liu, R.; Wu, R.; Van Hoorick, B.; Tokmakov, P.; Zakharov, S.; and Vondrick, C. 2023. Zero-1-to-3: Zero-shot one image to 3D object. In _Proceedings of the IEEE/CVF International Conference on Computer Vision_, 9298–9309.

* Liu et al. (2024a) Liu, X.; Wu, C.; Liu, X.; Liu, J.; Wu, J.; Zhao, C.; Feng, H.; Ding, E.; and Wang, J. 2024a. GEA: Reconstructing Expressive 3D Gaussian Avatar from Monocular Video. _arXiv preprint arXiv:2402.16607_.

* Liu et al. (2024b) Liu, Y.; Lin, C.; Zeng, Z.; Long, X.; Liu, L.; Komura, T.; and Wang, W. 2024b. SyncDreamer: Generating Multiview-consistent Images from a Single-view Image. In _The Twelfth International Conference on Learning Representations_.

* Lombardi et al. (2019) Lombardi, S.; Simon, T.; Saragih, J.; Schwartz, G.; Lehrmann, A.; and Sheikh, Y. 2019. Neural volumes: Learning dynamic renderable volumes from images. _arXiv preprint arXiv:1906.07751_.

* Loper et al. (2015) Loper, M.; Mahmood, N.; Romero, J.; Pons-Moll, G.; and Black, M.J. 2015. SMPL: A Skinned Multi-Person Linear Model. _ACM Transactions on Graphics_, 34(6).

* Mildenhall et al. (2021) Mildenhall, B.; Srinivasan, P.P.; Tancik, M.; Barron, J.T.; Ramamoorthi, R.; and Ng, R. 2021. NeRF: Representing scenes as neural radiance fields for view synthesis. _Communications of the ACM_, 65(1): 99–106.

* Müller et al. (2023) Müller, N.; Siddiqui, Y.; Porzi, L.; Bulo, S.R.; Kontschieder, P.; and Nießner, M. 2023. DiffRF: Rendering-guided 3D radiance field diffusion. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 4328–4338.

* Nichol et al. (2022) Nichol, A.; Jun, H.; Dhariwal, P.; Mishkin, P.; and Chen, M. 2022. Point-E: A system for generating 3D point clouds from complex prompts. _arXiv preprint arXiv:2212.08751_.

* Park et al. (2021) Park, K.; Sinha, U.; Hedman, P.; Barron, J.T.; Bouaziz, S.; Goldman, D.B.; Martin-Brualla, R.; and Seitz, S.M. 2021. HyperNeRF: A higher-dimensional representation for topologically varying neural radiance fields. _arXiv preprint arXiv:2106.13228_.

* Peng et al. (2021a) Peng, S.; Dong, J.; Wang, Q.; Zhang, S.; Shuai, Q.; Zhou, X.; and Bao, H. 2021a. Animatable neural radiance fields for modeling dynamic human bodies. In _Proceedings of the IEEE/CVF International Conference on Computer Vision_, 14314–14323.

* Peng et al. (2021b) Peng, S.; Zhang, Y.; Xu, Y.; Wang, Q.; Shuai, Q.; Bao, H.; and Zhou, X. 2021b. Neural body: Implicit neural representations with structured latent codes for novel view synthesis of dynamic humans. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 9054–9063.

* Po et al. (2023) Po, R.; Yifan, W.; Golyanik, V.; Aberman, K.; Barron, J.T.; Bermano, A.H.; Chan, E.R.; Dekel, T.; Holynski, A.; Kanazawa, A.; et al. 2023. State of the Art on Diffusion Models for Visual Computing. _arXiv preprint arXiv:2310.07204_.

* Pumarola et al. (2021) Pumarola, A.; Corona, E.; Pons-Moll, G.; and Moreno-Noguer, F. 2021. D-NeRF: Neural radiance fields for dynamic scenes. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 10318–10327.

* Qian et al. (2023a) Qian, S.; Kirschstein, T.; Schoneveld, L.; Davoli, D.; Giebenhain, S.; and Nießner, M. 2023a. GaussianAvatars: Photorealistic head avatars with rigged 3D Gaussians. _arXiv preprint arXiv:2312.02069_.

* Qian et al. (2023b) Qian, Z.; Wang, S.; Mihajlovic, M.; Geiger, A.; and Tang, S. 2023b. 3DGS-Avatar: Animatable avatars via deformable 3D Gaussian splatting. _arXiv preprint arXiv:2312.09228_.

* Rombach et al. (2022) Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; and Ommer, B. 2022. High-resolution image synthesis with latent diffusion models. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition_, 10684–10695.

* Ronneberger, Fischer, and Brox (2015) Ronneberger, O.; Fischer, P.; and Brox, T. 2015. U-Net: Convolutional networks for biomedical image segmentation. In _Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015: 18th International Conference, Munich, Germany, October 5-9, 2015, Proceedings, Part III_, 234–241. Springer.

* Shapiro et al. (2014) Shapiro, A.; Feng, A.; Wang, R.; Li, H.; Bolas, M.; Medioni, G.; and Suma, E. 2014. Rapid avatar capture and simulation using commodity depth sensors. _Computer Animation and Virtual Worlds_, 25(3-4): 201–211.
