Datasets:

Tasks:

Image Segmentation

Formats:

parquet

Sub-tasks:

instance-segmentation

Languages:

English

Size:

10K - 100K

ArXiv:

License:

Duplicate from 1aurent/ADE20K

Browse filesCo-authored-by: Laureηt Fainsin <1aurent@users.noreply.huggingface.co>

- .gitattributes +55 -0

- README.md +170 -0

- data/train-00000-of-00010.parquet +3 -0

- data/train-00001-of-00010.parquet +3 -0

- data/train-00002-of-00010.parquet +3 -0

- data/train-00003-of-00010.parquet +3 -0

- data/train-00004-of-00010.parquet +3 -0

- data/train-00005-of-00010.parquet +3 -0

- data/train-00006-of-00010.parquet +3 -0

- data/train-00007-of-00010.parquet +3 -0

- data/train-00008-of-00010.parquet +3 -0

- data/train-00009-of-00010.parquet +3 -0

- data/validation-00000-of-00001.parquet +3 -0

- gen_script.py +229 -0

.gitattributes

ADDED

|

@@ -0,0 +1,55 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

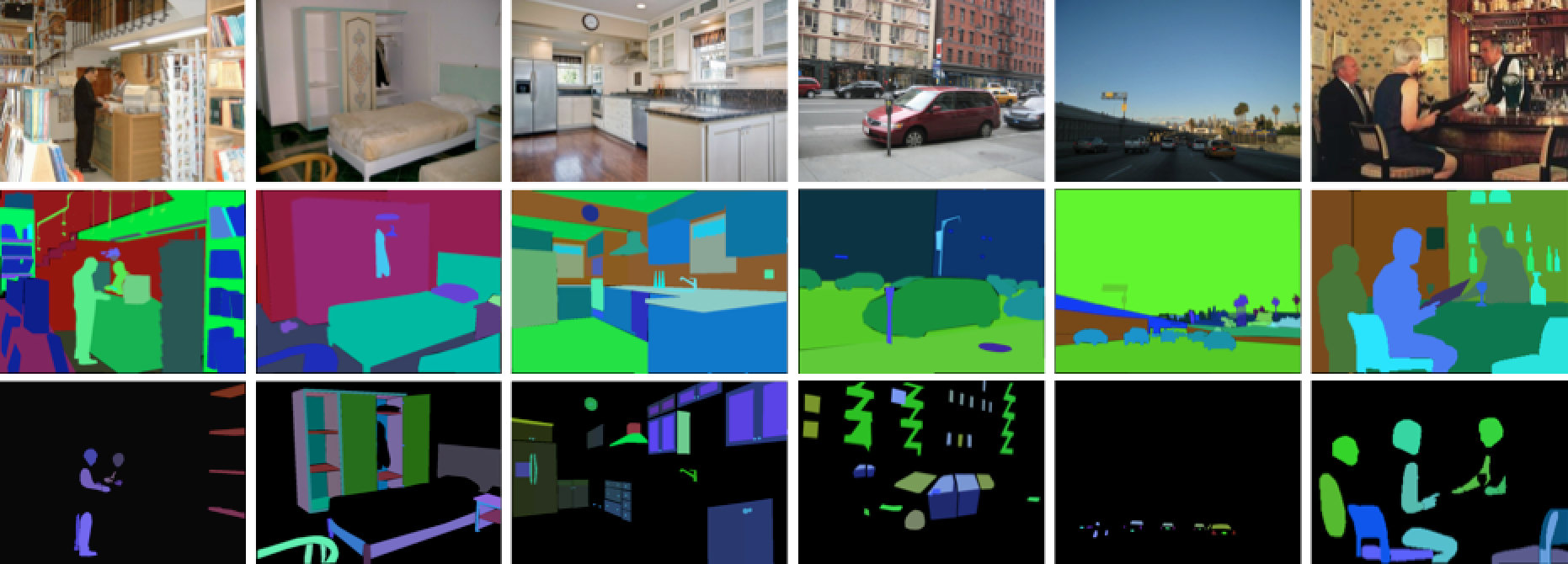

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.lz4 filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

# Audio files - uncompressed

|

| 38 |

+

*.pcm filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

*.sam filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

*.raw filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

# Audio files - compressed

|

| 42 |

+

*.aac filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

*.flac filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

*.mp3 filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

*.ogg filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

*.wav filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

# Image files - uncompressed

|

| 48 |

+

*.bmp filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

*.gif filter=lfs diff=lfs merge=lfs -text

|

| 50 |

+

*.png filter=lfs diff=lfs merge=lfs -text

|

| 51 |

+

*.tiff filter=lfs diff=lfs merge=lfs -text

|

| 52 |

+

# Image files - compressed

|

| 53 |

+

*.jpg filter=lfs diff=lfs merge=lfs -text

|

| 54 |

+

*.jpeg filter=lfs diff=lfs merge=lfs -text

|

| 55 |

+

*.webp filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,170 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

dataset_info:

|

| 3 |

+

features:

|

| 4 |

+

- name: image

|

| 5 |

+

dtype:

|

| 6 |

+

image:

|

| 7 |

+

mode: RGB

|

| 8 |

+

- name: segmentations

|

| 9 |

+

sequence:

|

| 10 |

+

image:

|

| 11 |

+

mode: RGB

|

| 12 |

+

- name: instances

|

| 13 |

+

sequence:

|

| 14 |

+

image:

|

| 15 |

+

mode: L

|

| 16 |

+

- name: filename

|

| 17 |

+

dtype: string

|

| 18 |

+

- name: folder

|

| 19 |

+

dtype: string

|

| 20 |

+

- name: source

|

| 21 |

+

struct:

|

| 22 |

+

- name: folder

|

| 23 |

+

dtype: string

|

| 24 |

+

- name: filename

|

| 25 |

+

dtype: string

|

| 26 |

+

- name: origin

|

| 27 |

+

dtype: string

|

| 28 |

+

- name: scene

|

| 29 |

+

sequence: string

|

| 30 |

+

- name: objects

|

| 31 |

+

list:

|

| 32 |

+

- name: id

|

| 33 |

+

dtype: uint16

|

| 34 |

+

- name: name

|

| 35 |

+

dtype: string

|

| 36 |

+

- name: name_ndx

|

| 37 |

+

dtype: uint16

|

| 38 |

+

- name: hypernym

|

| 39 |

+

sequence: string

|

| 40 |

+

- name: raw_name

|

| 41 |

+

dtype: string

|

| 42 |

+

- name: attributes

|

| 43 |

+

dtype: string

|

| 44 |

+

- name: depth_ordering_rank

|

| 45 |

+

dtype: uint16

|

| 46 |

+

- name: occluded

|

| 47 |

+

dtype: bool

|

| 48 |

+

- name: crop

|

| 49 |

+

dtype: bool

|

| 50 |

+

- name: parts

|

| 51 |

+

struct:

|

| 52 |

+

- name: is_part_of

|

| 53 |

+

dtype: uint16

|

| 54 |

+

- name: part_level

|

| 55 |

+

dtype: uint8

|

| 56 |

+

- name: has_parts

|

| 57 |

+

sequence: uint16

|

| 58 |

+

- name: polygon

|

| 59 |

+

struct:

|

| 60 |

+

- name: x

|

| 61 |

+

sequence: uint16

|

| 62 |

+

- name: 'y'

|

| 63 |

+

sequence: uint16

|

| 64 |

+

- name: click_date

|

| 65 |

+

sequence: timestamp[us]

|

| 66 |

+

- name: saved_date

|

| 67 |

+

dtype: timestamp[us]

|

| 68 |

+

splits:

|

| 69 |

+

- name: train

|

| 70 |

+

num_bytes: 4812448179.314

|

| 71 |

+

num_examples: 25574

|

| 72 |

+

- name: validation

|

| 73 |

+

num_bytes: 464280715

|

| 74 |

+

num_examples: 2000

|

| 75 |

+

download_size: 5935251309

|

| 76 |

+

dataset_size: 5276728894.314

|

| 77 |

+

configs:

|

| 78 |

+

- config_name: default

|

| 79 |

+

data_files:

|

| 80 |

+

- split: train

|

| 81 |

+

path: data/train-*

|

| 82 |

+

- split: validation

|

| 83 |

+

path: data/validation-*

|

| 84 |

+

license: bsd

|

| 85 |

+

task_categories:

|

| 86 |

+

- image-segmentation

|

| 87 |

+

task_ids:

|

| 88 |

+

- instance-segmentation

|

| 89 |

+

language:

|

| 90 |

+

- en

|

| 91 |

+

tags:

|

| 92 |

+

- MIT

|

| 93 |

+

- CSAIL

|

| 94 |

+

- panoptic

|

| 95 |

+

pretty_name: ADE20K

|

| 96 |

+

size_categories:

|

| 97 |

+

- 10K<n<100K

|

| 98 |

+

paperswithcode_id: ade20k

|

| 99 |

+

multilinguality:

|

| 100 |

+

- monolingual

|

| 101 |

+

annotations_creators:

|

| 102 |

+

- crowdsourced

|

| 103 |

+

- expert-generated

|

| 104 |

+

language_creators:

|

| 105 |

+

- found

|

| 106 |

+

---

|

| 107 |

+

|

| 108 |

+

# ADE20K Dataset

|

| 109 |

+

|

| 110 |

+

[](https://groups.csail.mit.edu/vision/datasets/ADE20K/)

|

| 111 |

+

|

| 112 |

+

## Dataset Description

|

| 113 |

+

|

| 114 |

+

- **Homepage:** [MIT CSAIL ADE20K Dataset](https://groups.csail.mit.edu/vision/datasets/ADE20K/)

|

| 115 |

+

- **Repository:** [github:CSAILVision/ADE20K](https://github.com/CSAILVision/ADE20K)

|

| 116 |

+

|

| 117 |

+

## Description

|

| 118 |

+

|

| 119 |

+

ADE20K is composed of more than 27K images from the SUN and Places databases.

|

| 120 |

+

Images are fully annotated with objects, spanning over 3K object categories.

|

| 121 |

+

Many of the images also contain object parts, and parts of parts.

|

| 122 |

+

We also provide the original annotated polygons, as well as object instances for amodal segmentation.

|

| 123 |

+

Images are also anonymized, blurring faces and license plates.

|

| 124 |

+

|

| 125 |

+

## Images

|

| 126 |

+

|

| 127 |

+

MIT, CSAIL does not own the copyright of the images. If you are a researcher or educator who wish to have a copy of the original images for non-commercial research and/or educational use, we may provide you access by filling a request in our site. You may use the images under the following terms:

|

| 128 |

+

1. Researcher shall use the Database only for non-commercial research and educational purposes. MIT makes no representations or warranties regarding the Database, including but not limited to warranties of non-infringement or fitness for a particular purpose.

|

| 129 |

+

2. Researcher accepts full responsibility for his or her use of the Database and shall defend and indemnify MIT, including their employees, Trustees, officers and agents, against any and all claims arising from Researcher's use of the Database, including but not limited to Researcher's use of any copies of copyrighted images that he or she may create from the Database.

|

| 130 |

+

3. Researcher may provide research associates and colleagues with access to the Database provided that they first agree to be bound by these terms and conditions.

|

| 131 |

+

4. MIT reserves the right to terminate Researcher's access to the Database at any time.

|

| 132 |

+

5. If Researcher is employed by a for-profit, commercial entity, Researcher's employer shall also be bound by these terms and conditions, and Researcher hereby represents that he or she is fully authorized to enter into this agreement on behalf of such employer.

|

| 133 |

+

|

| 134 |

+

## Software and Annotations

|

| 135 |

+

|

| 136 |

+

The MIT CSAIL website, image annotations and the software provided belongs to MIT CSAIL and is licensed under a [Creative Commons BSD-3 License Agreement](https://opensource.org/licenses/BSD-3-Clause).

|

| 137 |

+

|

| 138 |

+

Copyright 2019 MIT, CSAIL

|

| 139 |

+

Redistribution and use in source and binary forms, with or without modification, are permitted provided that the following conditions are met:

|

| 140 |

+

1. Redistributions of source code must retain the above copyright notice, this list of conditions and the following disclaimer.

|

| 141 |

+

2. Redistributions in binary form must reproduce the above copyright notice, this list of conditions and the following disclaimer in the documentation and/or other materials provided with the distribution.

|

| 142 |

+

3. Neither the name of the copyright holder nor the names of its contributors may be used to endorse or promote products derived from this software without specific prior written permission.

|

| 143 |

+

|

| 144 |

+

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED.

|

| 145 |

+

IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES;

|

| 146 |

+

LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

|

| 147 |

+

|

| 148 |

+

## Citations

|

| 149 |

+

|

| 150 |

+

```bibtex

|

| 151 |

+

@inproceedings{8100027,

|

| 152 |

+

title = {Scene Parsing through ADE20K Dataset},

|

| 153 |

+

author = {Zhou, Bolei and Zhao, Hang and Puig, Xavier and Fidler, Sanja and Barriuso, Adela and Torralba, Antonio},

|

| 154 |

+

year = 2017,

|

| 155 |

+

booktitle = {2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

|

| 156 |

+

volume = {},

|

| 157 |

+

number = {},

|

| 158 |

+

pages = {5122--5130},

|

| 159 |

+

doi = {10.1109/CVPR.2017.544},

|

| 160 |

+

keywords = {Image segmentation;Semantics;Sun;Labeling;Visualization;Neural networks;Computer vision}

|

| 161 |

+

}

|

| 162 |

+

@misc{zhou2018semantic,

|

| 163 |

+

title = {Semantic Understanding of Scenes through the ADE20K Dataset},

|

| 164 |

+

author = {Bolei Zhou and Hang Zhao and Xavier Puig and Tete Xiao and Sanja Fidler and Adela Barriuso and Antonio Torralba},

|

| 165 |

+

year = 2018,

|

| 166 |

+

eprint = {1608.05442},

|

| 167 |

+

archiveprefix = {arXiv},

|

| 168 |

+

primaryclass = {cs.CV}

|

| 169 |

+

}

|

| 170 |

+

```

|

data/train-00000-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:20ab4443522379f08a0f6ae27f35374d98b6f4854f92067882c79188e5307671

|

| 3 |

+

size 435840180

|

data/train-00001-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:72110d0636d700e64a16f587deb7c900eab199a0a24ad3a17405644465c2f9c8

|

| 3 |

+

size 478247834

|

data/train-00002-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ef3dbd70ab4d6ae6ce3d24f74a012203b9fe5062d0f09aed6c650bb3ad304f73

|

| 3 |

+

size 487858602

|

data/train-00003-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a7bbfc479c48fe36bda67437949c5c3d478befaba94dccef5aa3eeb916666fe1

|

| 3 |

+

size 292858164

|

data/train-00004-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bba48e38693f910877e5a02e14eb1b949923bbcafc4103aade9a120179df0a85

|

| 3 |

+

size 441335341

|

data/train-00005-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2ad42ce28d8c1808c9d5ffe0a62e83a8d84f7d27101107b2b8b6ac70157b8c61

|

| 3 |

+

size 679431576

|

data/train-00006-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:64a6634a9f45b9ba964679f192f68b2499909eb30074e48ad2d1563d442c26b5

|

| 3 |

+

size 299296029

|

data/train-00007-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2ae8306de3a08b3621b93f7080e93949a1b6fae7966d1f82aa717fb62074b454

|

| 3 |

+

size 464210673

|

data/train-00008-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e3028ae600df8b8a2a5367c0d8e930fe4d4cffe3348c77e0eaf5a40d96ad9fe2

|

| 3 |

+

size 1327521213

|

data/train-00009-of-00010.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:fc4681c7c692fda8453fe7546514b126ef4938471f438fb4a90030e3d66c14e2

|

| 3 |

+

size 554985718

|

data/validation-00000-of-00001.parquet

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3da40ee1eb5d7988f62f58abbe10f69fb1684a95bed613332833e6a4fe08b50a

|

| 3 |

+

size 473665979

|

gen_script.py

ADDED

|

@@ -0,0 +1,229 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from pathlib import Path

|

| 2 |

+

|

| 3 |

+

import datasets

|

| 4 |

+

import json

|

| 5 |

+

from datetime import datetime

|

| 6 |

+

|

| 7 |

+

_VERSION = "0.1.0"

|

| 8 |

+

|

| 9 |

+

_CITATION = """

|

| 10 |

+

@inproceedings{8100027,

|

| 11 |

+

title = {Scene Parsing through ADE20K Dataset},

|

| 12 |

+

author = {Zhou, Bolei and Zhao, Hang and Puig, Xavier and Fidler, Sanja and Barriuso, Adela and Torralba, Antonio},

|

| 13 |

+

year = 2017,

|

| 14 |

+

booktitle = {2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

|

| 15 |

+

volume = {},

|

| 16 |

+

number = {},

|

| 17 |

+

pages = {5122--5130},

|

| 18 |

+

doi = {10.1109/CVPR.2017.544},

|

| 19 |

+

keywords = {Image segmentation;Semantics;Sun;Labeling;Visualization;Neural networks;Computer vision}

|

| 20 |

+

}

|

| 21 |

+

@misc{zhou2018semantic,

|

| 22 |

+

title = {Semantic Understanding of Scenes through the ADE20K Dataset},

|

| 23 |

+

author = {Bolei Zhou and Hang Zhao and Xavier Puig and Tete Xiao and Sanja Fidler and Adela Barriuso and Antonio Torralba},

|

| 24 |

+

year = 2018,

|

| 25 |

+

eprint = {1608.05442},

|

| 26 |

+

archiveprefix = {arXiv},

|

| 27 |

+

primaryclass = {cs.CV}

|

| 28 |

+

}

|

| 29 |

+

"""

|

| 30 |

+

|

| 31 |

+

_DESCRIPTION = """

|

| 32 |

+

ADE20K is composed of more than 27K images from the SUN and Places databases.

|

| 33 |

+

Images are fully annotated with objects, spanning over 3K object categories.

|

| 34 |

+

Many of the images also contain object parts, and parts of parts.

|

| 35 |

+

We also provide the original annotated polygons, as well as object instances for amodal segmentation.

|

| 36 |

+

Images are also anonymized, blurring faces and license plates.

|

| 37 |

+

"""

|

| 38 |

+

|

| 39 |

+

_HOMEPAGE = "https://groups.csail.mit.edu/vision/datasets/ADE20K/"

|

| 40 |

+

|

| 41 |

+

_LICENSE = "Creative Commons BSD-3 License Agreement"

|

| 42 |

+

|

| 43 |

+

_FEATURES = datasets.Features(

|

| 44 |

+

{

|

| 45 |

+

"image": datasets.Image(mode="RGB"),

|

| 46 |

+

"segmentations": datasets.Sequence(datasets.Image(mode="RGB")),

|

| 47 |

+

"instances": datasets.Sequence(datasets.Image(mode="L")),

|

| 48 |

+

"filename": datasets.Value("string"),

|

| 49 |

+

"folder": datasets.Value("string"),

|

| 50 |

+

"source": datasets.Features(

|

| 51 |

+

{

|

| 52 |

+

"folder": datasets.Value("string"),

|

| 53 |

+

"filename": datasets.Value("string"),

|

| 54 |

+

"origin": datasets.Value("string"),

|

| 55 |

+

}

|

| 56 |

+

),

|

| 57 |

+

"scene": datasets.Sequence(datasets.Value("string")),

|

| 58 |

+

"objects": [

|

| 59 |

+

{

|

| 60 |

+

"id": datasets.Value("uint16"),

|

| 61 |

+

"name": datasets.Value("string"),

|

| 62 |

+

"name_ndx": datasets.Value("uint16"),

|

| 63 |

+

"hypernym": datasets.Sequence(datasets.Value("string")),

|

| 64 |

+

"raw_name": datasets.Value("string"),

|

| 65 |

+

"attributes": datasets.Value("string"),

|

| 66 |

+

"depth_ordering_rank": datasets.Value("uint16"),

|

| 67 |

+

"occluded": datasets.Value("bool"),

|

| 68 |

+

"crop": datasets.Value(dtype="bool"),

|

| 69 |

+

"parts": {

|

| 70 |

+

"is_part_of": datasets.Value("uint16"),

|

| 71 |

+

"part_level": datasets.Value("uint8"),

|

| 72 |

+

"has_parts": datasets.Sequence(datasets.Value("uint16")),

|

| 73 |

+

},

|

| 74 |

+

"polygon": {

|

| 75 |

+

"x": datasets.Sequence(datasets.Value("uint16")),

|

| 76 |

+

"y": datasets.Sequence(datasets.Value("uint16")),

|

| 77 |

+

"click_date": datasets.Sequence(datasets.Value("timestamp[us]")),

|

| 78 |

+

},

|

| 79 |

+

"saved_date": datasets.Value("timestamp[us]"),

|

| 80 |

+

}

|

| 81 |

+

],

|

| 82 |

+

}

|

| 83 |

+

)

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

class ADE20K(datasets.GeneratorBasedBuilder):

|

| 87 |

+

DEFAULT_WRITER_BATCH_SIZE = 1000

|

| 88 |

+

|

| 89 |

+

def _info(self):

|

| 90 |

+

return datasets.DatasetInfo(

|

| 91 |

+

features=_FEATURES,

|

| 92 |

+

supervised_keys=None,

|

| 93 |

+

description=_DESCRIPTION,

|

| 94 |

+

homepage=_HOMEPAGE,

|

| 95 |

+

license=_LICENSE,

|

| 96 |

+

version=_VERSION,

|

| 97 |

+

citation=_CITATION,

|

| 98 |

+

)

|

| 99 |

+

|

| 100 |

+

def _split_generators(self, dl_manager: datasets.DownloadManager):

|

| 101 |

+

archive_training = Path("ADE20K_2021_17_01/images/ADE/training")

|

| 102 |

+

archive_validation = Path("ADE20K_2021_17_01/images/ADE/validation")

|

| 103 |

+

|

| 104 |

+

jsons_training = sorted(list(archive_training.rglob("*.json")))

|

| 105 |

+

jsons_validation = sorted(list(archive_validation.rglob("*.json")))

|

| 106 |

+

|

| 107 |

+

return [

|

| 108 |

+

datasets.SplitGenerator(

|

| 109 |

+

name=datasets.Split.TRAIN,

|

| 110 |

+

gen_kwargs={"jsons": jsons_training},

|

| 111 |

+

),

|

| 112 |

+

datasets.SplitGenerator(

|

| 113 |

+

name=datasets.Split.VALIDATION,

|

| 114 |

+

gen_kwargs={"jsons": jsons_validation},

|

| 115 |

+

),

|

| 116 |

+

]

|

| 117 |

+

|

| 118 |

+

def parse_date(self, date: str) -> datetime:

|

| 119 |

+

if date == []:

|

| 120 |

+

return None

|

| 121 |

+

|

| 122 |

+

try:

|

| 123 |

+

timestamp = datetime.strptime(date, "%d-%m-%y %H:%M:%S:%f")

|

| 124 |

+

return timestamp

|

| 125 |

+

except:

|

| 126 |

+

pass

|

| 127 |

+

|

| 128 |

+

try:

|

| 129 |

+

timestamp = datetime.strptime(date, "%d-%b-%Y %H:%M:%S:%f")

|

| 130 |

+

return timestamp

|

| 131 |

+

except:

|

| 132 |

+

pass

|

| 133 |

+

|

| 134 |

+

try:

|

| 135 |

+

timestamp = datetime.strptime(date, "%d-%m-%y %H:%M:%S")

|

| 136 |

+

return timestamp

|

| 137 |

+

except:

|

| 138 |

+

pass

|

| 139 |

+

|

| 140 |

+

try:

|

| 141 |

+

timestamp = datetime.strptime(date, "%d-%b-%Y %H:%M:%S")

|

| 142 |

+

return timestamp

|

| 143 |

+

except:

|

| 144 |

+

pass

|

| 145 |

+

|

| 146 |

+

raise ValueError(f"Could not parse date: {date}")

|

| 147 |

+

|

| 148 |

+

def parse_imsize(self, imsize: list[int]) -> list[int]:

|

| 149 |

+

if len(imsize) == 2:

|

| 150 |

+

return imsize + [3]

|

| 151 |

+

return imsize

|

| 152 |

+

|

| 153 |

+

def parse_json(self, json_path: Path):

|

| 154 |

+

with json_path.open("r", encoding="ISO-8859-1") as f:

|

| 155 |

+

data = json.load(f)

|

| 156 |

+

annotation = data["annotation"]

|

| 157 |

+

objects = annotation["object"]

|

| 158 |

+

|

| 159 |

+

segmentations = list(

|

| 160 |

+

json_path.parent.glob(

|

| 161 |

+

f"{annotation['filename'].removesuffix(".jpg")}_parts*"

|

| 162 |

+

)

|

| 163 |

+

)

|

| 164 |

+

segmentations = [str(part) for part in segmentations]

|

| 165 |

+

main_mask = json_path.parent / annotation["filename"]

|

| 166 |

+

main_mask = str(main_mask.with_suffix("")) + "_seg.png"

|

| 167 |

+

segmentations.insert(0, main_mask)

|

| 168 |

+

|

| 169 |

+

instances = [

|

| 170 |

+

json_path.parent / object["instance_mask"] for object in objects

|

| 171 |

+

]

|

| 172 |

+

instances = [str(instance) for instance in instances]

|

| 173 |

+

|

| 174 |

+

return {

|

| 175 |

+

"image": str(json_path.parent / annotation["filename"]),

|

| 176 |

+

"segmentations": segmentations,

|

| 177 |

+

"instances": instances,

|

| 178 |

+

"filename": annotation["filename"],

|

| 179 |

+

"folder": annotation["folder"],

|

| 180 |

+

"source": {

|

| 181 |

+

"folder": annotation["source"]["folder"],

|

| 182 |

+

"filename": annotation["source"]["filename"],

|

| 183 |

+

"origin": annotation["source"]["origin"],

|

| 184 |

+

},

|

| 185 |

+

"scene": annotation["scene"],

|

| 186 |

+

"objects": [

|

| 187 |

+

{

|

| 188 |

+

"id": object["id"],

|

| 189 |

+

"name": object["name"],

|

| 190 |

+

"name_ndx": object["name_ndx"],

|

| 191 |

+

"hypernym": object["hypernym"],

|

| 192 |

+

"raw_name": object["raw_name"],

|

| 193 |

+

"attributes": ""

|

| 194 |

+

if object["attributes"] == []

|

| 195 |

+

else object["attributes"],

|

| 196 |

+

"depth_ordering_rank": object["depth_ordering_rank"],

|

| 197 |

+

"occluded": object["occluded"] == "yes",

|

| 198 |

+

"crop": object["crop"] == "1",

|

| 199 |

+

"parts": {

|

| 200 |

+

"part_level": object["parts"]["part_level"],

|

| 201 |

+

"is_part_of": None

|

| 202 |

+

if object["parts"]["ispartof"] == []

|

| 203 |

+

else object["parts"]["ispartof"],

|

| 204 |

+

"has_parts": [object["parts"]["hasparts"]]

|

| 205 |

+

if isinstance(object["parts"]["hasparts"], int)

|

| 206 |

+

else object["parts"]["hasparts"],

|

| 207 |

+

},

|

| 208 |

+

"polygon": {

|

| 209 |

+

"x": list(

|

| 210 |

+

map(lambda x: int(max(0, x)), object["polygon"]["x"])

|

| 211 |

+

),

|

| 212 |

+

"y": list(

|

| 213 |

+

map(lambda y: int(max(0, y)), object["polygon"]["y"])

|

| 214 |

+

),

|

| 215 |

+

"click_date": []

|

| 216 |

+

if "click_date" not in object["polygon"]

|

| 217 |

+

else list(

|

| 218 |

+

map(self.parse_date, object["polygon"]["click_date"])

|

| 219 |

+

),

|

| 220 |

+

},

|

| 221 |

+

"saved_date": self.parse_date(object["saved_date"]),

|

| 222 |

+

}

|

| 223 |

+

for object in objects

|

| 224 |

+

],

|

| 225 |

+

}

|

| 226 |

+

|

| 227 |

+

def _generate_examples(self, jsons: list[Path]):

|

| 228 |

+

for i, json_path in enumerate(jsons):

|

| 229 |

+

yield i, self.parse_json(json_path)

|